2024/10/21: We release the Mini-InternVL series. These models achieve impressive performance with minimal size: the 4B model achieves 90% of the performance with just 5% of the model size. For more details, please check our project page and document.

2024/08/01: The ChartMimic team evaluated the InternVL2 series models on their benchmark. The InternVL2-26B and InternVL2-76B models achieved the top two results among open-source models, with InternVL2-76B surpassing GeminiProVision and performing comparably to Claude-3-Opus.

2024/08/01: InternVL2-Pro achieved the SOTA performance among open-source models on the CharXiv dataset, surpassing many closed-source models such as GPT-4V, Gemini 1.5 Flash, and Claude 3 Sonnet.

2024/07/24: The MLVU team evaluated InternVL-1.5 on their benchmark. The average performance on the multiple-choice task was 50.4%, while the performance on the generative tasks was 4.02. The performance on the multiple-choice task ranked #1 among all open-source MLLMs.

2024/07/18: InternVL2-40B achieved SOTA performance among open-source models on the Video-MME dataset, scoring 61.2 when inputting 16 frames and 64.4 when inputting 32 frames. It significantly outperforms other open-source models and is the closest open-source model to GPT-4o mini.

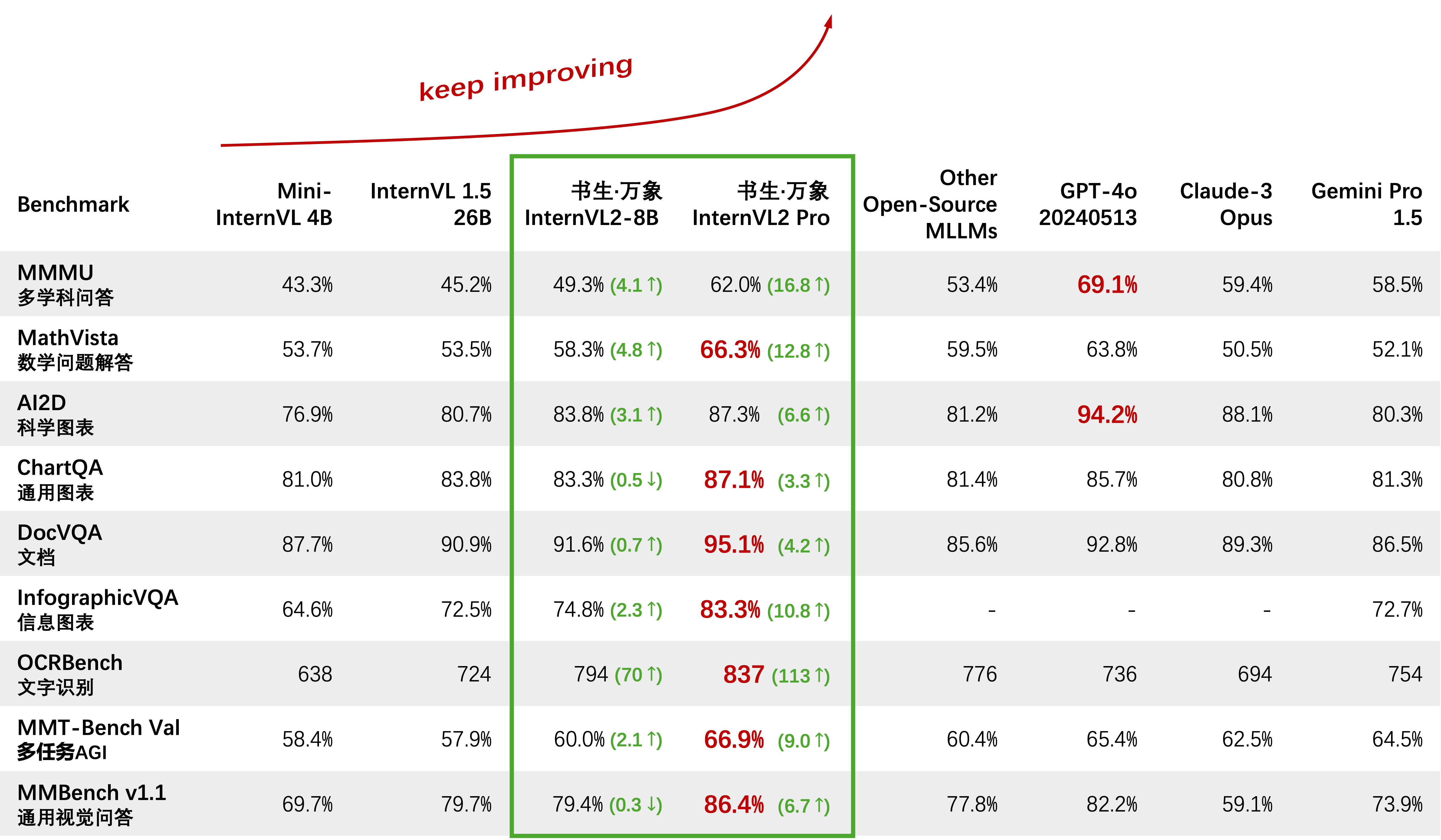

2024/07/18: InternVL2-Pro achieved the SOTA performance on the DocVQA and InfoVQA benchmarks.

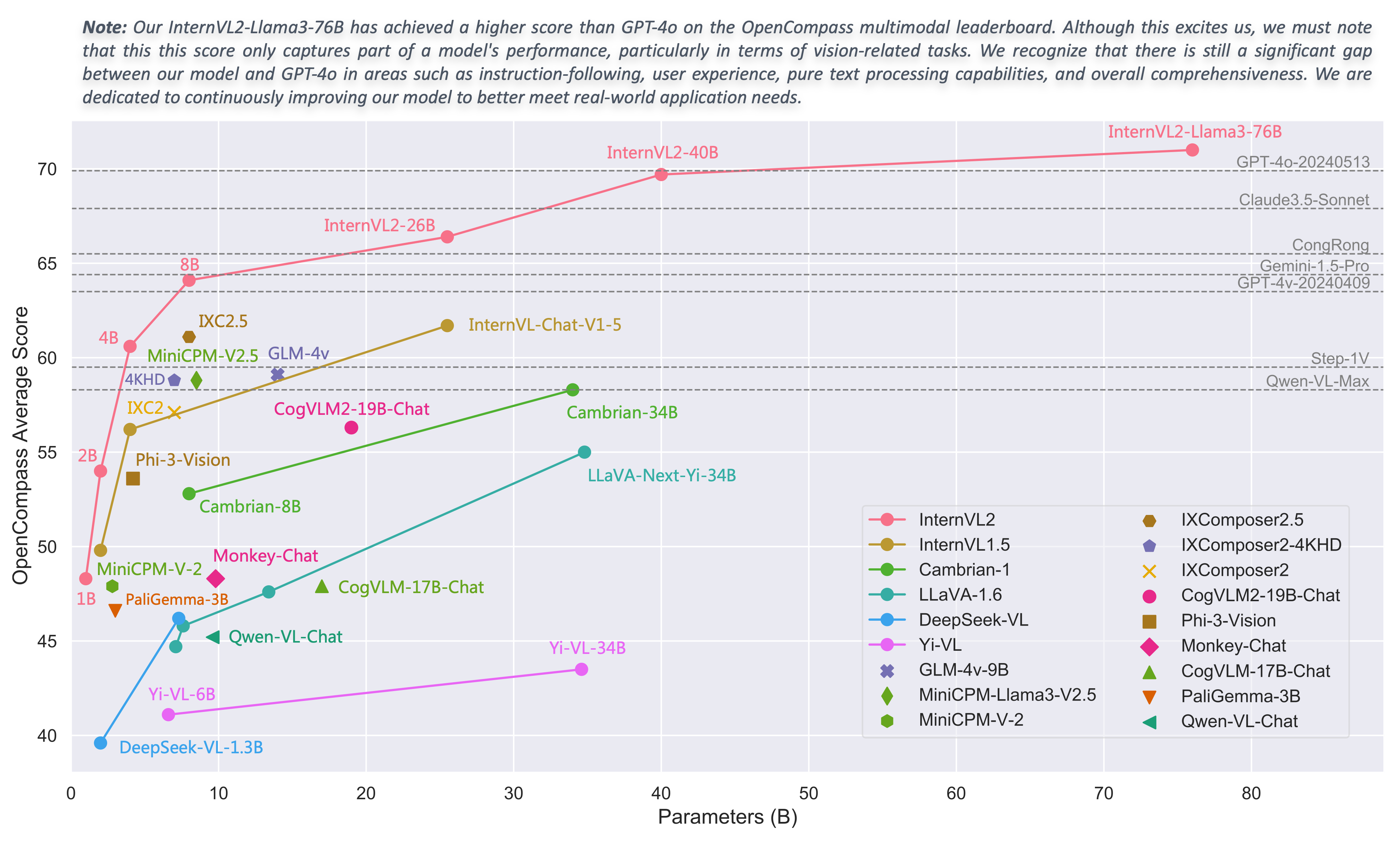

2024/07/04: We release the InternVL2 series. InternVL2-Pro achieved 62.0% accuracy on the MMMU benchmark, matching the performance of leading closed-source commercial models like GPT-4o. To apply for free API access to this model, fill out the (application form) / (申请表). Other models are available at HF link.

2024/06/19: We propose Needle In A Multimodal Haystack (MM-NIAH), the first benchmark designed to systematically evaluate the capability of existing MLLMs to comprehend long multimodal documents.

2024/05/30: We release ShareGPT-4o, a large-scale dataset that we plan to open-source, containing 200K images, 10K videos, and 10K audio clips, all with detailed descriptions.

2024/05/28: Thanks to the lmdeploy team for providing AWQ quantization support. The 4-bit model is available at OpenGVLab/InternVL-Chat-V1-5-AWQ.

2024/05/13: InternVL 1.0 can now be used as the text encoder for diffusion models to support multilingual generation natively in over 110 languages worldwide. See MuLan for more details.

2024/04/18: InternVL-Chat-V1-5 has been released at HF link, approaching the performance of GPT-4V and Gemini Pro on various benchmarks like MMMU, DocVQA, ChartQA, MathVista, etc.

2024/02/27: InternVL is accepted by CVPR 2024 (Oral)!

2024/02/21: InternVL-Chat-V1-2-Plus achieved SOTA performance on MathVista (59.9), MMBench (83.8), and MMVP (58.7). See our blog for more details.

2024/02/12: InternVL-Chat-V1-2 has been released. It achieves 51.6 on MMMU val and 82.3 on MMBench test. For more details, please refer to our blog and SFT data. The model is now available on HuggingFace, and both training / evaluation data and scripts are open-sourced.

2024/01/24: InternVL-Chat-V1-1 is released. It supports Chinese and has stronger OCR capability; see here.

2024/01/16: We release our customized mmcv/mmsegmentation/mmdetection code, integrated with DeepSpeed, which can be used for training large-scale detection and segmentation models.

Support vLLM and Ollama

Rebuild documents using readthedocs

Support fine-tuning different LLMs with LoRA

Support video and PDF input in online demo

Release InternVL2 with VisionLLMv2 integration

Release requirements.txt for InternVL2

Release training / evaluation code for InternVL2 series

Release Streamlit web UI for InternVL1.5 and InternVL2

Get Started

Installation: [Environment] [requirements.txt]

Evaluation Data Preparation: [InternVL Evaluation]

Chat Data Format: [Meta File] [Pure Text] [Single-Image] [Multi-Image] [Video]

InternVL-Chat API: [InternVL2-Pro]

Local Chat Demo: [Streamlit Demo] [Gradio Demo] [LMDeploy Demo]

Tutorials: [Enhancing InternVL2 on COCO Caption Using LoRA Fine-Tuning]

InternVL Family

InternVL 2.0: [Introduction] [Quick Start] [Finetune] [Evaluation] [Deployment]

InternVL 1.5: [Introduction] [Quick Start] [Finetune] [Evaluation] [Deployment]

InternVL 1.2: [Introduction] [Quick Start] [Finetune] [Evaluation]

InternVL 1.1: [Introduction] [Quick Start] [Evaluation]

InternVL 1.0: [Classification] [CLIP-Benchmark] [Segmentation] [InternVL-Chat-LLaVA] [InternVL-G]

| Model Name | Vision Part | Language Part | HF Link | MS Link | Document |
|---|---|---|---|---|---|
| InternVL2‑1B | InternViT‑300M‑448px | Qwen2‑0.5B‑Instruct | link | link | doc |
| InternVL2‑2B | InternViT‑300M‑448px | internlm2‑chat‑1‑8b | link | link | doc |
| InternVL2‑4B | InternViT‑300M‑448px | Phi‑3‑mini‑128k‑instruct | link | link | doc |
| InternVL2‑8B | InternViT‑300M‑448px | internlm2_5‑7b‑chat | link | link | doc |
| InternVL2‑26B | InternViT‑6B‑448px‑V1‑5 | internlm2‑chat‑20b | link | link | doc |
| InternVL2‑40B | InternViT‑6B‑448px‑V1‑5 | Nous‑Hermes‑2‑Yi‑34B | link | link | doc |
| InternVL2-Llama3-76B | InternViT‑6B‑448px‑V1‑5 | Hermes‑2‑Theta‑Llama‑3‑70B | link | link | doc |

We welcome everyone to use our API for research. For better management, please submit (application form) / (申请表) to obtain free API access.

| Model | Date | HF Link | MS Link | Note |
|---|---|---|---|---|
| Mini‑InternVL‑Chat‑4B‑V1‑5 | 2024.05.28 | link | link | 16% of the model size, 90% of the performance |
| Mini‑InternVL‑Chat‑2B‑V1‑5 | 2024.05.19 | link | link | 8% of the model size, 80% of the performance |
| InternVL‑Chat‑V1‑5 | 2024.04.18 | link | link | support 4K image; super strong OCR; approaching the performance of GPT-4V and Gemini Pro on various benchmarks like MMMU, DocVQA, ChartQA, MathVista, etc. |
| InternVL‑Chat‑V1‑2‑Plus | 2024.02.21 | link | link | more SFT data and stronger |
| InternVL‑Chat‑V1‑2 | 2024.02.11 | link | link | scaling up LLM to 34B |
| InternVL‑Chat‑V1‑1 | 2024.01.24 | link | link | support Chinese and stronger OCR |
| InternVL‑Chat‑19B | 2023.12.25 | link | link | English multimodal dialogue |
| InternVL‑Chat‑13B | 2023.12.25 | link | link | English multimodal dialogue |

| Model | Date | HF Link | MS Link | Note |
|---|---|---|---|---|
| InternViT‑300M‑448px | 2024.05.25 | link | link | distilled small vision foundation model with 300M parameters (new) |
| InternViT‑6B‑448px‑V1‑5 | 2024.04.20 | link | link | support dynamic resolution and super strong OCR feature extraction capability by incremental pre-training (new) |
| InternViT‑6B‑448px‑V1‑2 | 2024.02.11 | link | link | support 448 resolution by incremental pre-training |
| InternViT‑6B‑448px‑V1‑0 | 2024.01.30 | link | link | support 448 resolution by incremental pre-training |
| InternViT‑6B‑224px | 2023.12.22 | link | link | the first version of InternViT-6B, extracted from InternVL‑14B‑224px |

| Model | Date | HF Link | MS Link | Note |
|---|---|---|---|---|
| InternVL‑14B‑224px | 2023.12.22 | link | link | vision-language foundation model, InternViT-6B + QLLaMA, can be used for image-text retrieval like CLIP |

Linear-Probe Image Classification [see details]

ViT-22B (marked with an asterisk in the tables) uses the private JFT-3B dataset.

| method | #param | IN-1K | IN-ReaL | IN-V2 | IN-A | IN-R | IN-Sketch |
|---|---|---|---|---|---|---|---|
| OpenCLIP-G | 1.8B | 86.2 | 89.4 | 77.2 | 63.8 | 87.8 | 66.4 |
| DINOv2-g | 1.1B | 86.5 | 89.6 | 78.4 | 75.9 | 78.8 | 62.5 |
| EVA-01-CLIP-g | 1.1B | 86.5 | 89.3 | 77.4 | 70.5 | 87.7 | 63.1 |
| MAWS-ViT-6.5B | 6.5B | 87.8 | - | - | - | - | - |
| ViT-22B* | 21.7B | 89.5 | 90.9 | 83.2 | 83.8 | 87.4 | - |
| InternViT-6B (ours) | 5.9B | 88.2 | 90.4 | 79.9 | 77.5 | 89.8 | 69.1 |

Semantic Segmentation [see details]

| method | decoder | #param (train/total) | crop size | mIoU |
|---|---|---|---|---|
| OpenCLIP-G (frozen) | Linear | 0.3M / 1.8B | 512 | 39.3 |
| ViT-22B (frozen) | Linear | 0.9M / 21.7B | 504 | 34.6 |
| InternViT-6B (frozen) | Linear | 0.5M / 5.9B | 504 | 47.2 (+12.6) |
| ViT-22B (frozen) | UperNet | 0.8B / 22.5B | 504 | 52.7 |
| InternViT-6B (frozen) | UperNet | 0.4B / 6.3B | 504 | 54.9 (+2.2) |
| ViT-22B | UperNet | 22.5B / 22.5B | 504 | 55.3 |
| InternViT-6B | UperNet | 6.3B / 6.3B | 504 | 58.9 (+3.6) |
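The gains in parentheses in the segmentation table appear to be measured against ViT-22B under the same decoder and training setting. That reading is inferred from the numbers rather than stated in the source, and the setting labels and helper name below are illustrative. A minimal sketch that reproduces the three deltas:

```python
# (ViT-22B mIoU, InternViT-6B mIoU) per setting, copied from the table above.
# The setting labels are descriptive names chosen for this sketch.
results = {
    "frozen backbone, linear head": (34.6, 47.2),
    "frozen backbone, UperNet": (52.7, 54.9),
    "trainable backbone, UperNet": (55.3, 58.9),
}


def miou_gain(baseline, ours):
    """Difference in mIoU points, rounded to one decimal as in the table."""
    return round(ours - baseline, 1)


gains = {setting: miou_gain(*pair) for setting, pair in results.items()}
for setting, gain in gains.items():
    print(f"{setting}: +{gain}")
```

Under this assumption the computed gains match the table's +12.6, +2.2, and +3.6.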

Zero-Shot Image Classification [see details]

| method | IN-1K | IN-A | IN-R | IN-V2 | IN-Sketch | ObjectNet |
|---|---|---|---|---|---|---|
| OpenCLIP-G | 80.1 | 69.3 | 92.1 | 73.6 | 68.9 | 73.0 |
| EVA-02-CLIP-E+ | 82.0 | 82.1 | 94.5 | 75.7 | 71.6 | 79.6 |
| ViT-22B* | 85.9 | 90.1 | 96.0 | 80.9 | - | 87.6 |
| InternVL-C (ours) | 83.2 | 83.8 | 95.5 | 77.3 | 73.9 | 80.6 |

Multilingual Zero-Shot Image Classification [see details]

EN: English, ZH: Chinese, JP: Japanese, AR: Arabic, IT: Italian

| method | IN-1K (EN) | IN-1K (ZH) | IN-1K (JP) | IN-1K (AR) | IN-1K (IT) |
|---|---|---|---|---|---|
| Taiyi-CLIP-ViT-H | - | 54.4 | - | - | - |
| WuKong-ViT-L-G | - | 57.5 | - | - | - |
| CN-CLIP-ViT-H | - | 59.6 | - | - | - |
| AltCLIP-ViT-L | 74.5 | 59.6 | - | - | - |
| EVA-02-CLIP-E+ | 82.0 | - | - | - | 41.2 |
| OpenCLIP-XLM-R-H | 77.0 | 55.7 | 53.1 | 37.0 | 56.8 |
| InternVL-C (ours) | 83.2 | 64.5 | 61.5 | 44.9 | 65.7 |

Zero-Shot Video Classification

| method | #frame | K400 | K600 | K700 |
|---|---|---|---|---|
| OpenCLIP-G | 1 | 65.9 | 66.1 | 59.2 |
| EVA-02-CLIP-E+ | 1 | 69.8 | 69.3 | 63.4 |
| InternVL-C (ours) | 1 | 71.0 | 71.3 | 65.7 |
| ViCLIP | 8 | 75.7 | 73.5 | 66.4 |
| InternVL-C (ours) | 8 | 79.4 | 78.8 | 71.5 |

English Zero-Shot Image-Text Retrieval [see details]

I2T: image-to-text retrieval, T2I: text-to-image retrieval.

| model | Flickr30K I2T R@1 | Flickr30K I2T R@5 | Flickr30K I2T R@10 | Flickr30K T2I R@1 | Flickr30K T2I R@5 | Flickr30K T2I R@10 | COCO I2T R@1 | COCO I2T R@5 | COCO I2T R@10 | COCO T2I R@1 | COCO T2I R@5 | COCO T2I R@10 | avg |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OpenCLIP-G | 92.9 | 99.3 | 99.8 | 79.5 | 95.0 | 97.1 | 67.3 | 86.9 | 92.6 | 51.4 | 74.9 | 83.0 | 85.0 |
| EVA-02-CLIP-E+ | 93.9 | 99.4 | 99.8 | 78.8 | 94.2 | 96.8 | 68.8 | 87.8 | 92.8 | 51.1 | 75.0 | 82.7 | 85.1 |
| EVA-CLIP-8B | 95.6 | 99.6 | 99.9 | 80.8 | 95.5 | 97.6 | 70.3 | 89.3 | 93.9 | 53.0 | 76.0 | 83.4 | 86.2 |
| InternVL-C (ours) | 94.7 | 99.6 | 99.9 | 81.7 | 96.0 | 98.2 | 70.6 | 89.0 | 93.5 | 54.1 | 77.3 | 84.6 | 86.6 |
| InternVL-G (ours) | 95.7 | 99.7 | 99.9 | 85.0 | 97.0 | 98.6 | 74.9 | 91.3 | 95.2 | 58.6 | 81.3 | 88.0 | 88.8 |
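The avg column in the retrieval table above appears to be the plain mean of the twelve recall values in each row (R@1, R@5, R@10 for both retrieval directions on both datasets). That definition is inferred from the numbers, not stated in the source, and the helper name is illustrative. A minimal sketch that checks it on two rows:

```python
from statistics import mean

# Recall values copied from the table above, in column order:
# Flickr30K image-to-text, Flickr30K text-to-image,
# COCO image-to-text, COCO text-to-image (R@1, R@5, R@10 each).
recalls = {
    "OpenCLIP-G": [92.9, 99.3, 99.8, 79.5, 95.0, 97.1,
                   67.3, 86.9, 92.6, 51.4, 74.9, 83.0],
    "InternVL-G (ours)": [95.7, 99.7, 99.9, 85.0, 97.0, 98.6,
                          74.9, 91.3, 95.2, 58.6, 81.3, 88.0],
}


def average_recall(values):
    """Mean of the recall values, rounded to one decimal (assumed definition of avg)."""
    return round(mean(values), 1)


for name, values in recalls.items():
    print(name, average_recall(values))
```

For these two rows the result agrees with the reported averages of 85.0 and 88.8.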

Chinese Zero-Shot Image-Text Retrieval [see details]

I2T: image-to-text retrieval, T2I: text-to-image retrieval.

| model | Flickr30K-CN I2T R@1 | Flickr30K-CN I2T R@5 | Flickr30K-CN I2T R@10 | Flickr30K-CN T2I R@1 | Flickr30K-CN T2I R@5 | Flickr30K-CN T2I R@10 | COCO-CN I2T R@1 | COCO-CN I2T R@5 | COCO-CN I2T R@10 | COCO-CN T2I R@1 | COCO-CN T2I R@5 | COCO-CN T2I R@10 | avg |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| CN-CLIP-ViT-H | 81.6 | 97.5 | 98.8 | 71.2 | 91.4 | 95.5 | 63.0 | 86.6 | 92.9 | 69.2 | 89.9 | 96.1 | 86.1 |
| OpenCLIP-XLM-R-H | 86.1 | 97.5 | 99.2 | 71.0 | 90.5 | 94.9 | 70.0 | 91.5 | 97.0 | 66.1 | 90.8 | 96.0 | 87.6 |
| InternVL-C (ours) | 90.3 | 98.8 | 99.7 | 75.1 | 92.9 | 96.4 | 68.8 | 92.0 | 96.7 | 68.9 | 91.9 | 96.5 | 89.0 |
| InternVL-G (ours) | 92.9 | 99.4 | 99.8 | 77.7 | 94.8 | 97.3 | 71.4 | 93.9 | 97.7 | 73.8 | 94.4 | 98.1 | 90.9 |

Multilingual Zero-Shot Image-Text Retrieval on XTD [see details]

| method | EN | ES | FR | ZH | IT | KO | RU | JP | average |
|---|---|---|---|---|---|---|---|---|---|
| AltCLIP | 95.4 | 94.1 | 92.9 | 95.1 | 94.2 | 94.4 | 91.8 | 91.7 | 93.7 |
| OpenCLIP-XLM-R-H | 97.3 | 96.1 | 94.5 | 94.7 | 96.0 | 90.2 | 93.9 | 94.0 | 94.6 |
| InternVL-C (ours) | 97.3 | 95.7 | 95.1 | 95.6 | 96.0 | 92.2 | 93.3 | 95.5 | 95.1 |
| InternVL-G (ours) | 98.6 | 97.7 | 96.5 | 96.7 | 96.9 | 95.1 | 94.8 | 96.1 | 96.6 |

See the "Compared with SOTA VLLMs" section.

```python
import torch
from PIL import Image
from transformers import AutoModel, CLIPImageProcessor

model = AutoModel.from_pretrained(
    'OpenGVLab/InternViT-6B-448px-V1-5',
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    trust_remote_code=True).cuda().eval()

image = Image.open('./examples/image1.jpg').convert('RGB')

image_processor = CLIPImageProcessor.from_pretrained('OpenGVLab/InternViT-6B-448px-V1-5')

pixel_values = image_processor(images=image, return_tensors='pt').pixel_values
pixel_values = pixel_values.to(torch.bfloat16).cuda()

outputs = model(pixel_values)
```

```python
import torch
from PIL import Image
from transformers import AutoModel, CLIPImageProcessor
from transformers import AutoTokenizer

model = AutoModel.from_pretrained(
    'OpenGVLab/InternVL-14B-224px',
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    trust_remote_code=True).cuda().eval()

image_processor = CLIPImageProcessor.from_pretrained('OpenGVLab/InternVL-14B-224px')

tokenizer = AutoTokenizer.from_pretrained(
    'OpenGVLab/InternVL-14B-224px', use_fast=False, add_eos_token=True)
tokenizer.pad_token_id = 0  # set pad_token_id to 0

images = [
    Image.open('./examples/image1.jpg').convert('RGB'),
    Image.open('./examples/image2.jpg').convert('RGB'),
    Image.open('./examples/image3.jpg').convert('RGB')
]
prefix = 'summarize:'
texts = [
    prefix + 'a photo of a red panda',  # English
    prefix + '一张熊猫的照片',  # Chinese
    prefix + '二匹の猫の写真'  # Japanese
]

pixel_values = image_processor(images=images, return_tensors='pt').pixel_values
pixel_values = pixel_values.to(torch.bfloat16).cuda()
input_ids = tokenizer(texts, return_tensors='pt', max_length=80,
                      truncation=True, padding='max_length').input_ids.cuda()

# InternVL-C
logits_per_image, logits_per_text = model(
    image=pixel_values, text=input_ids, mode='InternVL-C')
probs = logits_per_image.softmax(dim=-1)
# tensor([[9.9609e-01, 5.2185e-03, 6.0070e-08],
#         [2.2949e-02, 9.7656e-01, 5.9903e-06],
#         [3.2932e-06, 7.4863e-05, 1.0000e+00]], device='cuda:0',
#        dtype=torch.bfloat16, grad_fn=<SoftmaxBackward0>)

# InternVL-G
logits_per_image, logits_per_text = model(
    image=pixel_values, text=input_ids, mode='InternVL-G')
probs = logits_per_image.softmax(dim=-1)
# tensor([[9.9609e-01, 3.1738e-03, 3.6322e-08],
#         [8.6060e-03, 9.9219e-01, 2.8759e-06],
#         [1.7583e-06, 3.1233e-05, 1.0000e+00]], device='cuda:0',
#        dtype=torch.bfloat16, grad_fn=<SoftmaxBackward0>)

# please set add_eos_token to False for generation
tokenizer.add_eos_token = False
image = Image.open('./examples/image1.jpg').convert('RGB')
pixel_values = image_processor(images=image, return_tensors='pt').pixel_values
pixel_values = pixel_values.to(torch.bfloat16).cuda()

tokenized = tokenizer("English caption:", return_tensors='pt')
pred = model.generate(
    pixel_values=pixel_values,
    input_ids=tokenized.input_ids.cuda(),
    attention_mask=tokenized.attention_mask.cuda(),
    num_beams=5,
    min_new_tokens=8,
)
caption = tokenizer.decode(pred[0].cpu(), skip_special_tokens=True).strip()
# English caption: a red panda sitting on top of a wooden platform
```

Here, we take the smaller OpenGVLab/InternVL2-8B as an example:

```python
import numpy as np
import torch
import torchvision.transforms as T
from decord import VideoReader, cpu
from PIL import Image
from torchvision.transforms.functional import InterpolationMode
from transformers import AutoModel, AutoTokenizer

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def build_transform(input_size):
    MEAN, STD = IMAGENET_MEAN, IMAGENET_STD
    transform = T.Compose([
        T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),
        T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),
        T.ToTensor(),
        T.Normalize(mean=MEAN, std=STD)
    ])
    return transform


def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):
    best_ratio_diff = float('inf')
    best_ratio = (1, 1)
    area = width * height
    for ratio in target_ratios:
        target_aspect_ratio = ratio[0] / ratio[1]
        ratio_diff = abs(aspect_ratio - target_aspect_ratio)
        if ratio_diff < best_ratio_diff:
            best_ratio_diff = ratio_diff
            best_ratio = ratio
        elif ratio_diff == best_ratio_diff:
            if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:
                best_ratio = ratio
    return best_ratio


def dynamic_preprocess(image, min_num=1, max_num=12, image_size=448, use_thumbnail=False):
    orig_width, orig_height = image.size
    aspect_ratio = orig_width / orig_height

    # calculate the existing image aspect ratio
    target_ratios = set(
        (i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if
        i * j <= max_num and i * j >= min_num)
    target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

    # find the closest aspect ratio to the target
    target_aspect_ratio = find_closest_aspect_ratio(
        aspect_ratio, target_ratios, orig_width, orig_height, image_size)

    # calculate the target width and height
    target_width = image_size * target_aspect_ratio[0]
    target_height = image_size * target_aspect_ratio[1]
    blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

    # resize the image
    resized_img = image.resize((target_width, target_height))
    processed_images = []
    for i in range(blocks):
        box = (
            (i % (target_width // image_size)) * image_size,
            (i // (target_width // image_size)) * image_size,
            ((i % (target_width // image_size)) + 1) * image_size,
            ((i // (target_width // image_size)) + 1) * image_size
        )
        # split the image
        split_img = resized_img.crop(box)
        processed_images.append(split_img)
    assert <sp
```