Kai Li1, Wendi Sang1, Chang Zeng2, Runxuan Yang1, Guo Chen1, Xiaolin Hu1

1Tsinghua University, China

2National Institute of Informatics, Japan

Paper | Demo

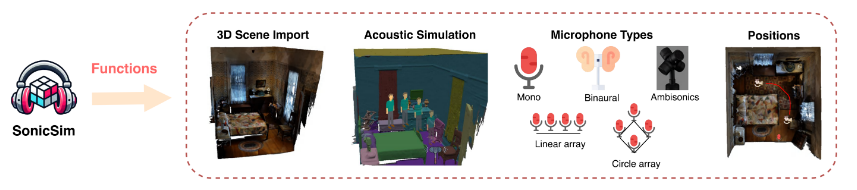

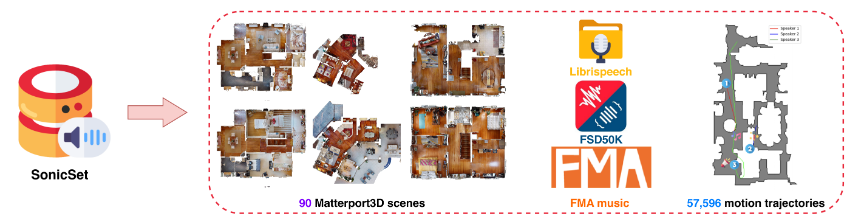

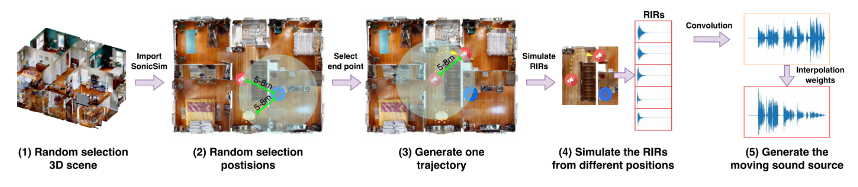

We introduce SonicSim, a synthetic toolkit for generating highly customizable data for moving sound sources. SonicSim is built on the embodied AI simulation platform Habitat-sim and supports parameter adjustments at three levels (scene, microphone, and source), which lets it generate more diverse synthetic data. Using SonicSim, we constructed SonicSet, a benchmark dataset for moving sound sources, from the LibriSpeech dataset, the Freesound Dataset 50k (FSD50K), the Free Music Archive (FMA), and 90 scenes from Matterport3D, in order to evaluate speech separation and enhancement models.

[2024-10-30] We fixed bugs in the environment installation and updated the training code for speech separation and enhancement models on the SonicSet dataset.

[2024-10-23] We release the training code for speech separation and enhancement models on the SonicSet dataset.

[2024-10-03] We release the paper on arXiv.

[2024-10-01] We release the Real-world speech separation dataset, which aims to evaluate the performance of speech separation models in real-world scenarios.

[2024-07-31] We release the SonicSim dataset, which includes speech separation and enhancement tasks.

[2024-07-24] We release the scripts for dataset construction and the pre-trained models for speech separation and enhancement.

3D Scene Import: Supports importing a variety of 3D assets from datasets like Matterport3D, enabling efficient and scalable generation of complex acoustic environments.

Acoustic Environment Simulation:

Simulates sound reflections within room geometries using indoor acoustic modeling and bidirectional path tracing algorithms.

Maps semantic labels of 3D scenes to material properties, setting the absorption, scattering, and transmission coefficients of surfaces.

Synthesizes moving sound source data based on source paths, ensuring high fidelity to real-world conditions.
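One common way to approximate a moving source is to convolve consecutive blocks of the dry signal with the room impulse response (RIR) simulated at the matching point on the source path, then overlap-add the results. The sketch below illustrates that idea only; it is not SonicSim's implementation. The function names, the `block` parameter, and the hard switch between RIRs are all assumptions made for the example.

```python
def convolve(signal, kernel):
    """Direct-form linear convolution of two sample sequences."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out


def render_moving_source(dry, rirs, block):
    """Convolve block b of `dry` with rirs[b] and overlap-add the tails.

    `rirs` holds one impulse response per path position (hypothetical
    input; in practice these would come from the acoustic simulator).
    If there are more blocks than RIRs, the last RIR is reused.
    """
    if block <= 0 or not rirs:
        raise ValueError("block must be positive and rirs must be non-empty")
    longest = max(len(r) for r in rirs)
    out = [0.0] * (len(dry) + longest - 1)
    for b, start in enumerate(range(0, len(dry), block)):
        rir = rirs[min(b, len(rirs) - 1)]
        wet = convolve(dry[start:start + block], rir)
        for n, value in enumerate(wet):
            out[start + n] += value  # reverberant tail spills into later blocks
    return out
```

Switching RIRs abruptly at block boundaries can produce audible discontinuities, so a practical renderer would typically crossfade or interpolate between neighbouring responses.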

Microphone Configurations: Offers a wide range of microphone setups, including mono, binaural, and Ambisonics, along with support for custom linear and circular microphone arrays.
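To make the custom array options concrete, the sketch below computes microphone coordinates for a uniform linear array and a uniform circular array. It is a geometric illustration only: the function names, the choice of the x-axis and the horizontal plane, and the origin-centred layout are assumptions, not SonicSim's configuration API.

```python
import math


def linear_array(num_mics, spacing):
    """Positions (x, y, z) in metres, evenly spaced on the x-axis and centred on the origin."""
    offset = (num_mics - 1) / 2
    return [((i - offset) * spacing, 0.0, 0.0) for i in range(num_mics)]


def circular_array(num_mics, radius):
    """Positions (x, y, z) in metres, evenly spaced on a horizontal circle around the origin."""
    step = 2 * math.pi / num_mics
    return [
        (radius * math.cos(i * step), radius * math.sin(i * step), 0.0)
        for i in range(num_mics)
    ]
```

For example, `linear_array(3, 0.1)` places three microphones 10 cm apart, and `circular_array(4, 0.05)` places four on a ring of 5 cm radius.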

Source and Microphone Positioning: Provides customization or randomization of sound source and microphone positions. Supports motion trajectories for moving sound source simulations, adding realism to dynamic acoustic scenarios.
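A motion trajectory can be thought of as a polyline through the scene that is sampled at evenly spaced points, with one simulated source position per sample. The following sketch shows that sampling step under simple assumptions: `sample_path` and its arguments are hypothetical names, the waypoints are given directly rather than found by a navigation planner, and the source moves at constant speed.

```python
import math


def sample_path(waypoints, num_points):
    """Return `num_points` positions evenly spaced by arc length along a polyline."""
    if len(waypoints) < 2 or num_points < 2:
        raise ValueError("need at least two waypoints and two samples")
    segments = list(zip(waypoints, waypoints[1:]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    samples = []
    for k in range(num_points):
        remaining = total * k / (num_points - 1)  # distance travelled so far
        for (a, b), length in zip(segments, lengths):
            if length > 0 and remaining <= length:
                t = remaining / length
                samples.append(tuple(p + t * (q - p) for p, q in zip(a, b)))
                break
            remaining -= length
        else:
            # Rounding pushed us past the end (or the path has zero length).
            samples.append(tuple(waypoints[-1]))
    return samples
```

Each sampled position would then be paired with the impulse response simulated at that point.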

You can download the pre-constructed dataset from the following links:

| Dataset Name | OneDrive | Baidu Disk |
|---|---|---|
| train folder (40 split rar files, 377G) | [Download Link] | [Download Link] |
| val.rar (4.9G) | [Download Link] | [Download Link] |
| test.rar (2.2G) | [Download Link] | [Download Link] |
| sep-benchmark data (8.57G) | [Download Link] | [Download Link] |
| enh-benchmark data (7.70G) | [Download Link] | [Download Link] |
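Because the train split is distributed as 40 split rar files, an incomplete download is easy to miss until extraction fails. The sketch below counts the archive parts in a folder before extraction. It assumes every part ends in `.rar`, which the table does not confirm; the function names and the folder argument are hypothetical.

```python
from pathlib import Path

EXPECTED_TRAIN_PARTS = 40  # count taken from the download table


def count_rar_parts(folder):
    """Count files with a .rar suffix directly inside `folder` (naming is an assumption)."""
    return sum(
        1 for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() == ".rar"
    )


def missing_parts(folder, expected=EXPECTED_TRAIN_PARTS):
    """Return how many parts still appear to be missing (0 if none)."""
    return max(0, expected - count_rar_parts(folder))
```

A non-zero result from `missing_parts("train")` suggests re-checking the download before extracting.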

| Dataset Name | OneDrive | Baidu Disk |
|---|---|---|
| Real-world dataset (1.0G) | [Download Link] | [Download Link] |

RealMAN dataset: RealMAN

To construct the dataset yourself, please refer to the README in the SonicSim-SonicSet/data-script folder. This document provides detailed instructions on how to use the scripts provided to generate the dataset.

To set up the environment for training and inference, run the following commands:

    conda create -n SonicSim-Train python=3.10
    conda activate SonicSim-Train
    pip install Cython==3.0.10 numpy==1.26.4
    pip install torch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 --index-url https://download.pytorch.org/whl/cu118
    pip install -r requirements.txt -i https://mirrors.tuna.tsinghua.edu.cn/pypi/web/simple
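After installation, it can help to confirm that the pinned versions from the commands above are the ones actually installed. The sketch below uses the standard library's `importlib.metadata` for that check. The `PINS` table simply restates the versions listed above; `check_pins` and `installed_version` are hypothetical helper names, not part of this repository.

```python
from importlib import metadata

# Versions copied from the installation commands above.
PINS = {
    "Cython": "3.0.10",
    "numpy": "1.26.4",
    "torch": "2.0.1",
    "torchvision": "0.15.2",
    "torchaudio": "2.0.2",
}


def installed_version(package):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_pins(pins, lookup=installed_version):
    """Map each mismatched or missing package to the version found (None if missing)."""
    problems = {}
    for package, wanted in pins.items():
        found = lookup(package)
        # CUDA wheels carry a local suffix such as "+cu118"; ignore it when comparing.
        if found is None or found.split("+")[0] != wanted:
            problems[package] = found
    return problems


if __name__ == "__main__":
    for package, found in check_pins(PINS).items():
        print(f"{package}: expected {PINS[package]}, found {found}")
```

Running the script inside the `SonicSim-Train` environment prints nothing when every pin matches.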

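Navigate to the separation directory and run the following scripts to generate the fixed validation and test sets. The second command uses an absolute `--raw_dir` from the authors' machine; replace it with the path to your own test split.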

    cd separation
    python generate_fixed_validation.py --raw_dir=../SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/val --save_dir=../SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/val-sep-2 --is_mono
    python generate_fixed_test.py --raw_dir=/home/pod/SonicSim/SonicSim/SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/test --is_mono

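Navigate to the enhancement directory and run the following scripts to generate the fixed validation and test sets. As above, replace the absolute `--raw_dir` in the second command with the path to your own test split.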

    cd enhancement
    python generate_fixed_validation.py --raw_dir=../SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/val --save_dir=../SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/val-enh-noise --is_mono
    python generate_fixed_test.py --raw_dir=/home/pod/SonicSim/SonicSim/SonicSim-SonicSet/SonicSet/scene_datasets/mp3d/test --is_mono
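The separation and enhancement steps use the same scripts and flags and differ only in the save directory name, so the command lines can be built from a single dataset root instead of a machine-specific absolute path. The sketch below does that with only the script names, flags, and directory names shown above. The helper `fixed_set_commands` and the `mp3d_root` argument are hypothetical, and it assumes your test split sits next to `val` under the same root.

```python
from pathlib import PurePosixPath

# Save-directory names taken from the commands above.
SAVE_DIR_NAMES = {"separation": "val-sep-2", "enhancement": "val-enh-noise"}


def fixed_set_commands(task, mp3d_root):
    """Build argv lists for the validation and test generation scripts of `task`."""
    root = PurePosixPath(mp3d_root)
    save_dir = root / SAVE_DIR_NAMES[task]
    return [
        [
            "python", "generate_fixed_validation.py",
            f"--raw_dir={root / 'val'}",
            f"--save_dir={save_dir}",
            "--is_mono",
        ],
        [
            "python", "generate_fixed_test.py",
            f"--raw_dir={root / 'test'}",
            "--is_mono",
        ],
    ]
```

Each returned list could be passed to `subprocess.run(cmd, cwd=task, check=True)` so that the script runs from inside the matching directory.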

Navigate to the separation directory and run the training script with the specified configuration file:

    cd separation
    python train.py --conf_dir=configs/afrcnn.yaml

Navigate to the enhancement directory and run the training script with the specified configuration file:

    cd enhancement
    python train.py --conf_dir=config/dccrn.yaml

Check the README.md files in the sep-checkpoints and enh-checkpoints folders, download the corresponding pre-trained models from Release, and unzip each one into its matching folder.

Navigate to the separation directory and run the inference script with the specified configuration file:

    cd separation
    python inference.py --conf_dir=../sep-checkpoints/TFGNet-Noise/config.yaml

Navigate to the enhancement directory and run the inference script with the specified configuration file:

    cd enhancement
    python inference.py --conf_dir=../enh-checkpoints/TaylorSENet-Noise/config.yaml

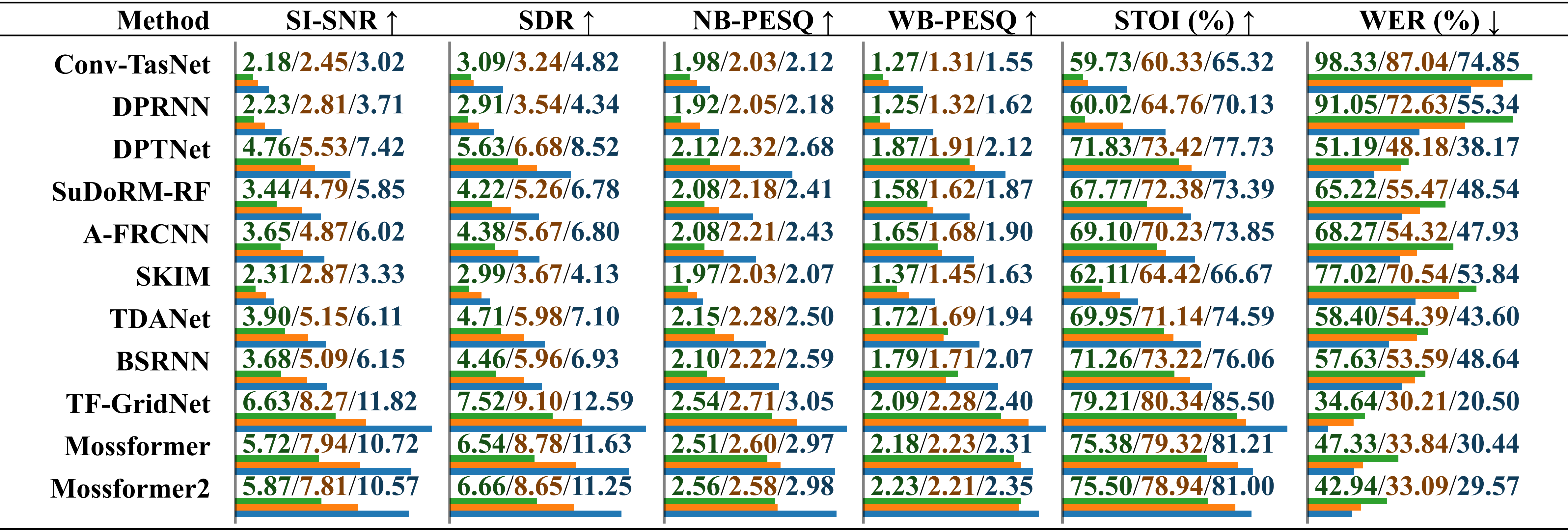

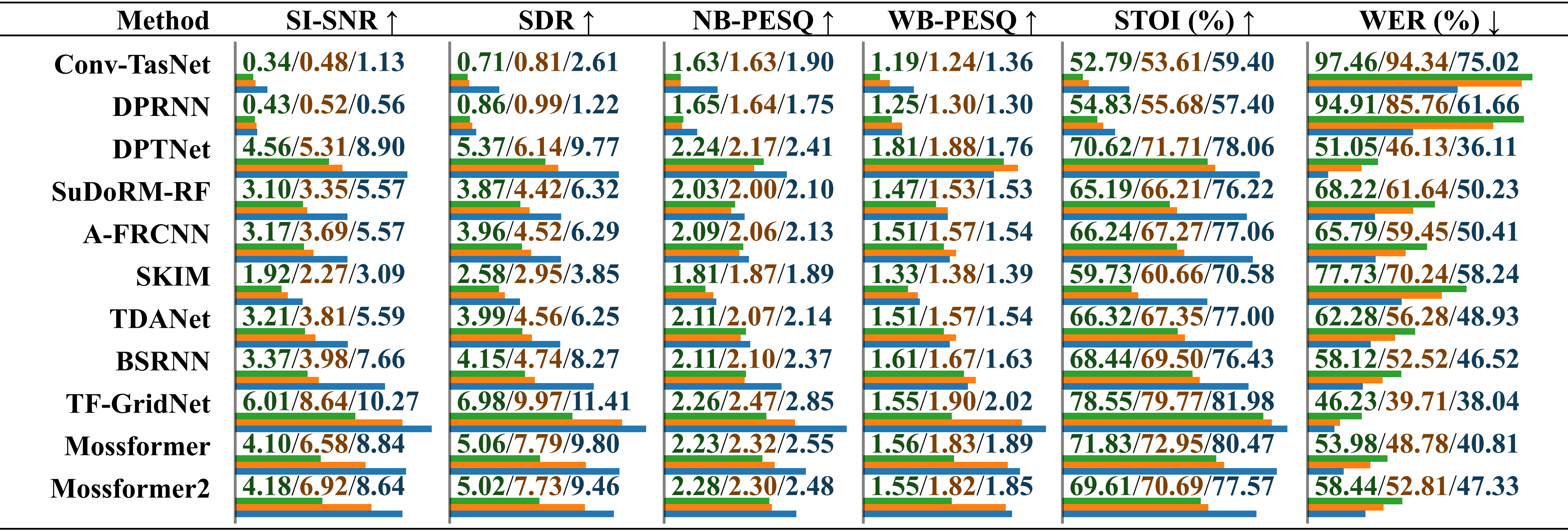

Comparative performance evaluation of models trained on different datasets using real-recorded audio with environmental noise. The results are reported separately for “trained on LRS2-2Mix”, “trained on Libri2Mix” and “trained on SonicSet”, distinguished by a slash. Horizontal bars below each value indicate its relative magnitude.

Comparative performance evaluation of models trained on different datasets using real-recorded audio with musical noise. The results are reported separately for “trained on LRS2-2Mix”, “trained on Libri2Mix” and “trained on SonicSet”, distinguished by a slash.

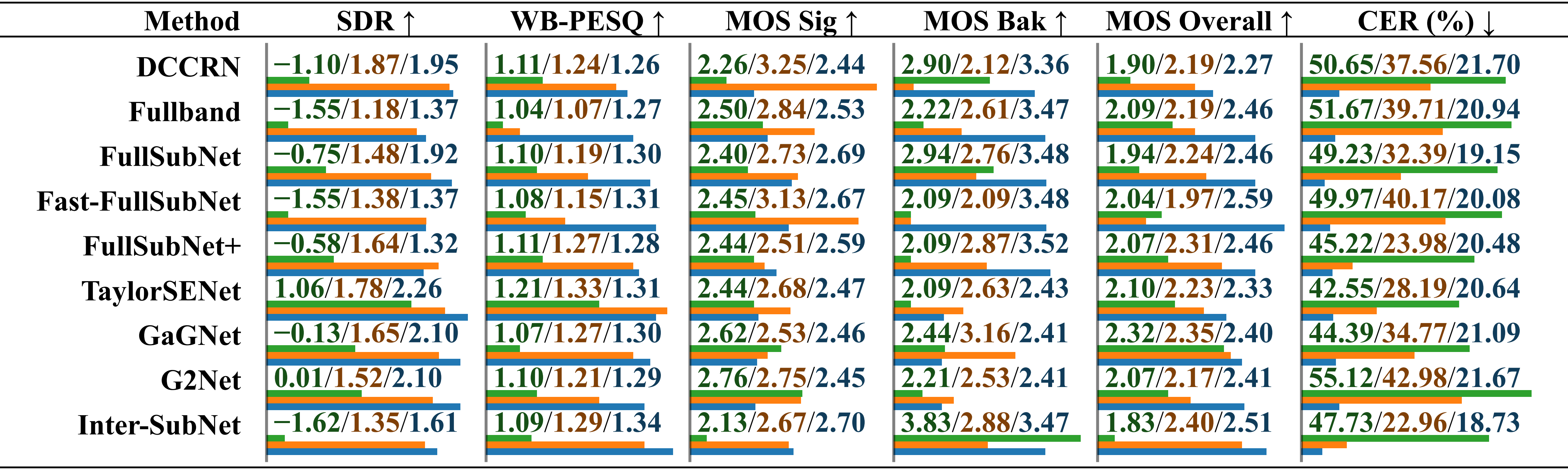

Comparative performance evaluation of models trained on different datasets using the RealMAN dataset. The results are reported separately for “trained on VoiceBank+DEMAND”, “trained on DNS Challenge” and “trained on SonicSet”, distinguished by a slash.

We have trained separation and enhancement models on the SonicSet dataset. The results are as follows:

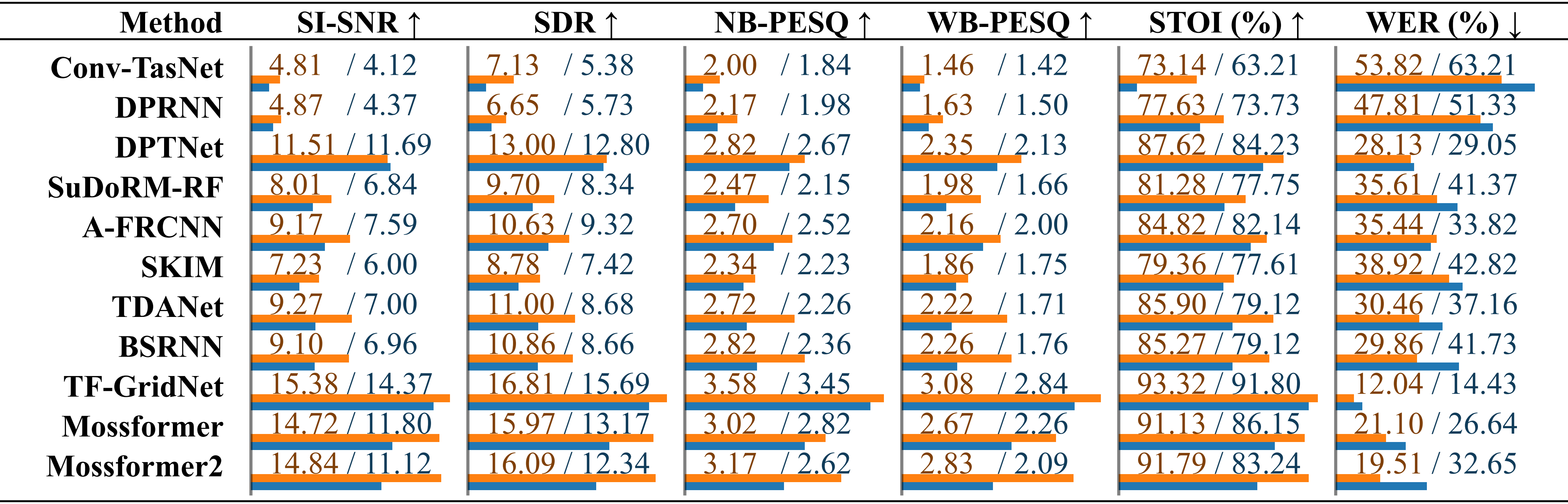

Comparison of existing speech separation methods on the SonicSet dataset. The performance of each model is listed separately for results under “environmental noise” and “musical noise”, distinguished by a slash.

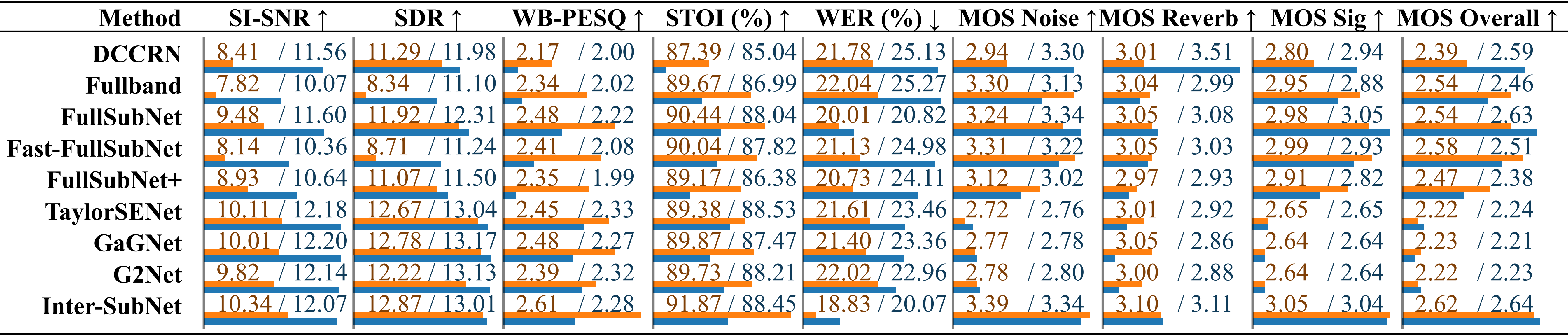

Comparison of existing speech enhancement methods on the SonicSet test set. The metrics are listed separately under “environmental noise” and “musical noise”, distinguished by a slash.

We would like to express our gratitude to the following:

LibriSpeech for providing the speech data.

SoundSpaces for the simulation environment.

Apple for providing dynamic audio synthesis scripts.

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.