Author/Maintainer: Ignacio Heredia (CSIC)

Project: This work is part of the DEEP Hybrid-DataCloud project that has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 777435.

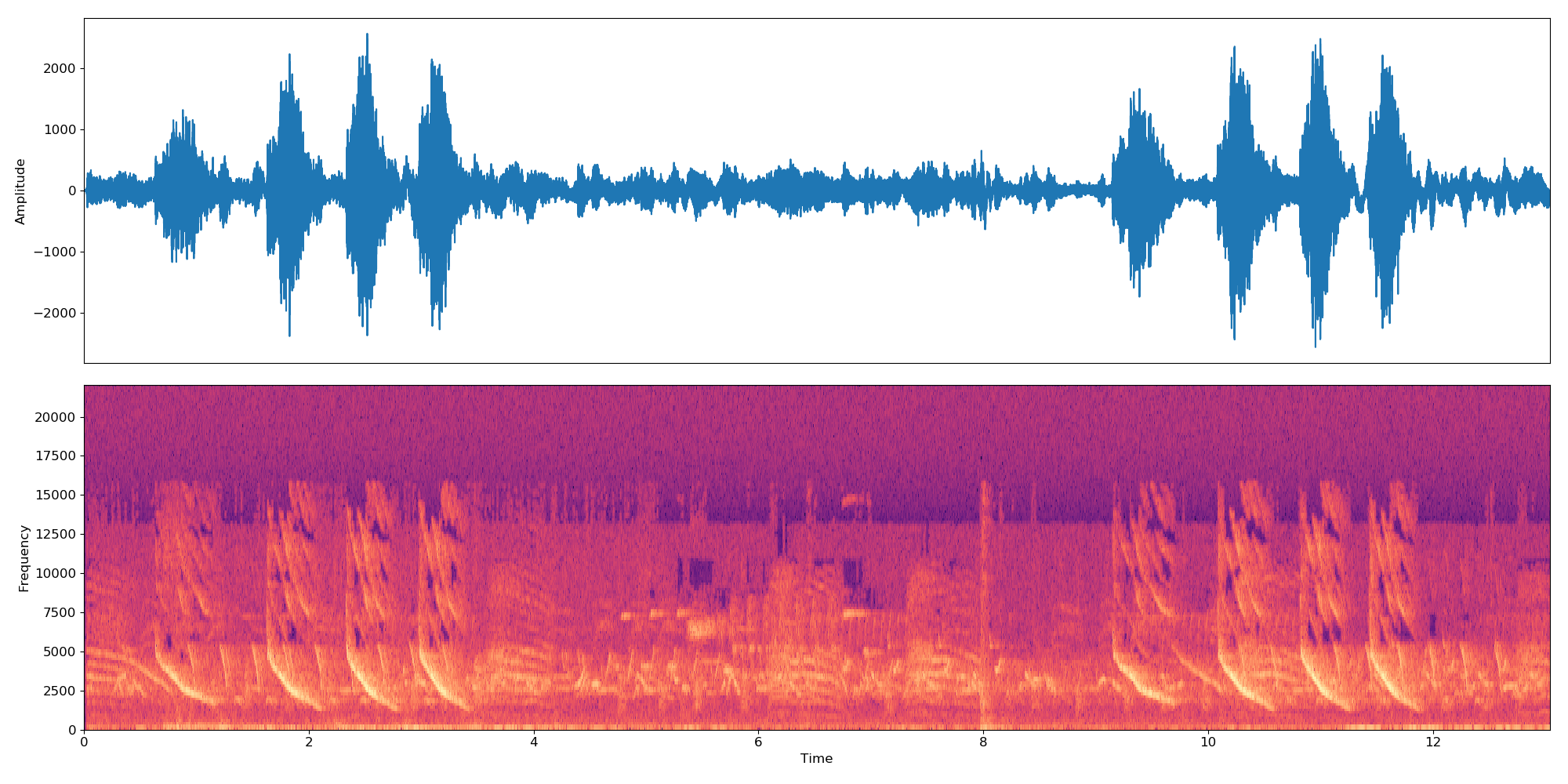

This is a plug-and-play tool to perform audio classification with Deep Learning. It allows users to classify their own audio samples as well as to train their own classifier for a custom problem. The classifier is currently pretrained on the 527 high-level classes from the AudioSet dataset.

You can find more information about it in the DEEP Marketplace.


Requirements

This project has been tested in Ubuntu 18.04 with Python 3.6.5. Further package requirements are described in the requirements.txt file.

- To support a wide range of audio formats we need to make use of the FFmpeg library. To install it in Linux please run:

  ```bash
  apt-get install ffmpeg libavcodec-extra
  ```

- It is a requirement to have TensorFlow>=1.14.0 installed (either in GPU or CPU mode). It is not listed in the requirements.txt file because doing so breaks GPU support.
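Before going further, both requirements can be checked from Python. This is a minimal sketch, not part of the repo: the helper names `version_tuple` and `check_environment` are hypothetical, and the script only looks for an `ffmpeg` binary on the PATH and for a TensorFlow version of at least 1.14.0, as stated above. It does not verify that GPU support actually works.

```python
import shutil


def version_tuple(version: str) -> tuple:
    """Turn '1.14.0' or '2.3.0rc1' into (major, minor, patch) ints, ignoring suffixes."""
    parts = []
    for piece in version.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break  # stop at suffixes such as 'rc1' or '-dev'
            digits += ch
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)  # pad '1.14' to (1, 14, 0)
    return tuple(parts)


def check_environment(min_tf: str = "1.14.0") -> list:
    """Return human-readable problems; an empty list means both checks passed."""
    problems = []
    if shutil.which("ffmpeg") is None:
        problems.append("ffmpeg not found on PATH (apt-get install ffmpeg libavcodec-extra)")
    try:
        import tensorflow as tf  # GPU or CPU build, installed separately from requirements.txt
    except ImportError:
        problems.append(f"TensorFlow not installed (need >= {min_tf})")
    else:
        if version_tuple(tf.__version__) < version_tuple(min_tf):
            problems.append(f"TensorFlow {tf.__version__} found, need >= {min_tf}")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("WARNING:", problem)
```

Versions are compared as integer tuples rather than strings, since a plain string comparison would wrongly rank "1.9" above "1.14".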

To start using this framework, clone the repo and download the default weights:

```bash
git clone https://github.com/deephdc/audio-classification-tf
cd audio-classification-tf
pip install -e .
curl -o ./models/default.tar.gz https://api.cloud.ifca.es:8080/swift/v1/audio-classification-tf/default.tar.gz
cd models && tar -zxvf default.tar.gz && rm default.tar.gz
```

Now run DEEPaaS:

```bash
deepaas-run --listen-ip 0.0.0.0
```

and open http://0.0.0.0:5000/ui and look for the methods belonging to the audioclas module.

We have also prepared a ready-to-use Docker container to run this module. To run it:

```bash
docker search deephdc
docker run -ti -p 5000:5000 -p 6006:6006 -p 8888:8888 deephdc/deep-oc-audio-classification-tf
```

Now open http://0.0.0.0:5000/ui and look for the methods belonging to the audioclas module.

You can train your own audio classifier with your custom dataset. For that you have to:

Put your audio files in the ./data/audios folder. If your data is somewhere else, you can use that location by setting the dataset_directory parameter in the training args.

Please use a standard audio format (like .mp3 or .wav).

Note: The classifier works on 10 s samples, so if an audio file is longer or shorter than 10 s, it will be looped (concatenated with itself) until its length is a multiple of 10 s. For example, a 22-second audio file will create 3 embeddings: `*-0.npy`, `*-1.npy` and `*-2.npy`.
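The looping rule can be made concrete with a little arithmetic. The sketch below assumes that the number of embeddings is the duration rounded up to the next multiple of 10 s (which matches the 22 s to 3 embeddings example) and that the signal is repeated cyclically from its start. The function names are hypothetical, and the repo's actual preprocessing (resampling, feature extraction) is not reproduced here.

```python
import math

CHUNK_SECONDS = 10  # the classifier works on 10 s samples


def n_embeddings(duration_s: float, chunk_s: float = CHUNK_SECONDS) -> int:
    """Number of chunks (one .npy embedding each) once the audio is looped up to a multiple of chunk_s."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return math.ceil(duration_s / chunk_s)


def loop_to_multiple(samples: list, sample_rate: int, chunk_s: int = CHUNK_SECONDS) -> list:
    """Repeat the signal cyclically from its start until it fills a whole number of chunks."""
    if not samples:
        raise ValueError("empty signal")
    chunk_len = sample_rate * chunk_s  # samples per 10 s chunk
    n_chunks = -(-len(samples) // chunk_len)  # integer ceiling division
    return [samples[i % len(samples)] for i in range(n_chunks * chunk_len)]


def embedding_names(stem: str, duration_s: float) -> list:
    """Names following the *-0.npy, *-1.npy, ... pattern, one per chunk."""
    return [f"{stem}-{i}.npy" for i in range(n_embeddings(duration_s))]


if __name__ == "__main__":
    print(n_embeddings(22))              # 3
    print(embedding_names("siren", 22))  # ['siren-0.npy', 'siren-1.npy', 'siren-2.npy']
```

Under these assumptions a 5 s clip also yields a single embedding, because it is looped up to one full 10 s chunk.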

First you need to add the following files to the ./data/dataset_files directory:

| Mandatory files | Optional files |
|---|---|
| classes.txt, train.txt | val.txt, test.txt, info.txt |

The train.txt, val.txt and test.txt files associate an audio file name (or relative path) with a label number (label numbers have to start at zero).

The classes.txt file translates those label numbers to label names.

Finally, the info.txt file allows you to provide information (like the number of audio files in the database) about each class.
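If your audio files are already sorted into one folder per class, the mandatory files can be generated with a short script. This is a hypothetical helper, not part of the repo. It assumes that each line of a split file is `<relative path> <label>` separated by a single space, and that classes.txt holds one class name per line, where line i names label i; compare the output with the examples in ./data/demo-dataset_files before relying on it. It also checks that labels start at zero and stay below the number of classes.

```python
from pathlib import Path

# Assumption: extensions treated as audio; extend to whatever FFmpeg can decode.
AUDIO_EXTS = {".wav", ".mp3", ".flac", ".ogg"}


def write_split_files(audio_dir, out_dir, split_name="train"):
    """Write classes.txt and <split_name>.txt for an assumed audio_dir/<class>/<file> layout."""
    audio_dir, out_dir = Path(audio_dir), Path(out_dir)
    class_names = sorted(p.name for p in audio_dir.iterdir() if p.is_dir())
    label_of = {name: idx for idx, name in enumerate(class_names)}  # labels start at 0
    lines = []
    for name in class_names:
        for f in sorted((audio_dir / name).iterdir()):
            if f.is_file() and f.suffix.lower() in AUDIO_EXTS:
                rel = f.relative_to(audio_dir).as_posix()  # path relative to dataset_directory
                lines.append(f"{rel} {label_of[name]}")
    out_dir.mkdir(parents=True, exist_ok=True)
    (out_dir / "classes.txt").write_text("\n".join(class_names) + "\n")
    (out_dir / f"{split_name}.txt").write_text("\n".join(lines) + "\n")
    return class_names, lines


def check_split_file(split_path, classes_path):
    """Raise ValueError if any label is not an integer in [0, number of classes)."""
    n_classes = len([c for c in Path(classes_path).read_text().splitlines() if c.strip()])
    for line in Path(split_path).read_text().splitlines():
        if not line.strip():
            continue  # tolerate blank lines
        path, label = line.rsplit(" ", 1)
        if not 0 <= int(label) < n_classes:
            raise ValueError(f"label {label} out of range for {path}")
```

For instance, `write_split_files("./data/audios", "./data/dataset_files")` followed by `check_split_file("./data/dataset_files/train.txt", "./data/dataset_files/classes.txt")` would produce and validate a train.txt covering every file. Splitting the files into val.txt and test.txt is deliberately left out of this sketch.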

If you are using the option compute_embeddings=False, then the file path should point to the .npy path of the embedding instead of the original audio file, and the dataset_directory parameter should point to the folder containing the embeddings.
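With compute_embeddings=False, an existing list of audio entries has to be rewritten in terms of embedding files. The sketch below assumes, hypothetically, that the embeddings folder mirrors the audio folder structure, that chunk i of an audio file is saved as `<audio stem>-<i>.npy` (following the `*-0.npy` pattern), and that each chunk gets its own line carrying the label of its source audio file. Whether the repo expects one line per chunk is an assumption to verify against your own setup.

```python
from pathlib import Path


def to_embedding_entries(split_lines, embedding_dir):
    """Map '<audio path> <label>' lines to one '<npy path> <label>' line per chunk.

    Hypothetical layout: embedding_dir mirrors the audio folders and chunk i of
    dog/bark.wav is stored as dog/bark-<i>.npy.
    """
    embedding_dir = Path(embedding_dir)
    entries = []
    for line in split_lines:
        if not line.strip():
            continue
        audio_path, label = line.rsplit(" ", 1)
        rel = Path(audio_path)
        folder = embedding_dir / rel.parent
        chunks = []
        if folder.is_dir():
            for p in folder.iterdir():
                base, sep, idx = p.stem.rpartition("-")
                # keep only <stem>-<digits>.npy, so 'bark-extra-0.npy' is not taken for 'bark'
                if p.suffix == ".npy" and sep and base == rel.stem and idx.isdigit():
                    chunks.append((int(idx), p))
        for _, npy in sorted(chunks):  # numeric order: -0, -1, -2, ..., -10
            entries.append(f"{npy.relative_to(embedding_dir).as_posix()} {label}")
    return entries
```

Sorting on the integer index, rather than on the file name, keeps `-10.npy` after `-2.npy`. The returned lines could then be written to train.txt, with dataset_directory pointing at the embeddings folder.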

You can find examples of these files at ./data/demo-dataset_files.

Go to http://0.0.0.0:5000/ui and look for the TRAIN POST method. Click on 'Try it out', change whatever training args you want and click 'Execute'. The training will be launched, and you will be able to follow its status by executing the TRAIN GET method, which will also give a history of all previously executed trainings.

If the module has some sort of training monitoring configured (like TensorBoard), you will be able to follow it at http://0.0.0.0:6006.

Go to http://0.0.0.0:5000/ui and look for the PREDICT POST method. Click on 'Try it out', change whatever test args you want and click 'Execute'. You can supply either:

- a data argument with a path pointing to an audio file or a compressed file (e.g. zip, tar, ...) containing audio files, OR
- a url argument with a URL pointing to an audio file or a compressed file (e.g. zip, tar, ...) containing audio files. Here is an example of such a URL that you can use for testing purposes.

The code in this project is based on the original repo by IBM, and implements the paper 'Multi-level Attention Model for Weakly Supervised Audio Classification' by Yu et al.

The main changes with respect to the original repo are that:

If you consider this project to be useful, please consider citing the DEEP Hybrid DataCloud project:

García, Álvaro López, et al. A Cloud-Based Framework for Machine Learning Workloads and Applications. IEEE Access 8 (2020): 18681-18692.

along with any of the references below:

- Jort F. Gemmeke, et al., Audio Set: An ontology and human-labeled dataset for audio events. IEEE ICASSP (2017).

- Qiuqiang Kong, et al., Audio Set classification with attention model: A probabilistic perspective. arXiv preprint arXiv:1711.00927 (2017).

- Changsong Yu, et al., Multi-level Attention Model for Weakly Supervised Audio Classification. arXiv preprint arXiv:1803.02353 (2018).

- S. Hershey, et al., CNN architectures for large-scale audio classification. arXiv preprint arXiv:1609.09430 (2016).