KeyBERT is a minimal and easy-to-use keyword extraction technique that leverages BERT embeddings to create keywords and keyphrases that are most similar to a document.

The corresponding Medium post can be found here.


Although there are already many methods available for keyword generation (e.g., Rake, YAKE!, TF-IDF, etc.), I wanted to create a very basic, but powerful method for extracting keywords and keyphrases. This is where KeyBERT comes in! It uses BERT-embeddings and simple cosine similarity to find the sub-phrases in a document that are the most similar to the document itself.

First, document embeddings are extracted with BERT to get a document-level representation. Then, word embeddings are extracted for N-gram words/phrases. Finally, we use cosine similarity to find the words/phrases that are the most similar to the document. The most similar words could then be identified as the words that best describe the entire document.
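
The three steps above can be sketched in a few lines. This is a minimal illustration, not KeyBERT's implementation: `toy_embed` is a hypothetical bag-of-words stand-in for the BERT embeddings, and `tokenize`, `candidates`, and `rank_keywords` are names invented for this sketch. Only the overall flow (embed the document, embed the candidate n-grams, rank by cosine similarity) follows the description.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def candidates(text, ngram_range=(1, 2)):
    """Return the unique word n-grams of the text, in order of first appearance."""
    tokens = tokenize(text)
    low, high = ngram_range
    grams = [
        " ".join(tokens[i:i + n])
        for n in range(low, high + 1)
        for i in range(len(tokens) - n + 1)
    ]
    return list(dict.fromkeys(grams))


def toy_embed(text):
    """ASSUMPTION: a sparse word-count vector standing in for a BERT embedding."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_keywords(doc, ngram_range=(1, 2), top_n=5):
    """Score every candidate against the document and keep the top_n."""
    doc_vec = toy_embed(doc)                      # step 1: document embedding
    scored = [
        (cand, cosine(doc_vec, toy_embed(cand)))  # steps 2 and 3: embed and compare
        for cand in candidates(doc, ngram_range)
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_n]


print(rank_keywords("Supervised learning is machine learning", ngram_range=(1, 1), top_n=3))
```

With real sentence embeddings the scores reflect meaning rather than word overlap, which is what makes the same ranking step useful in practice.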

KeyBERT is by no means unique and is created as a quick and easy method for creating keywords and keyphrases. Although there are many great papers and solutions out there that use BERT-embeddings (e.g., 1, 2, 3), I could not find a BERT-based solution that did not have to be trained from scratch and could be used by beginners (correct me if I'm wrong!). Thus, the goal was a pip install keybert and at most 3 lines of code in usage.


Installation can be done using PyPI:

pip install keybert

You may want to install more depending on the transformers and language backends that you will be using. The possible installations are:

pip install keybert[flair]

pip install keybert[gensim]

pip install keybert[spacy]

pip install keybert[use]

The most minimal example can be seen below for the extraction of keywords:

from keybert import KeyBERT

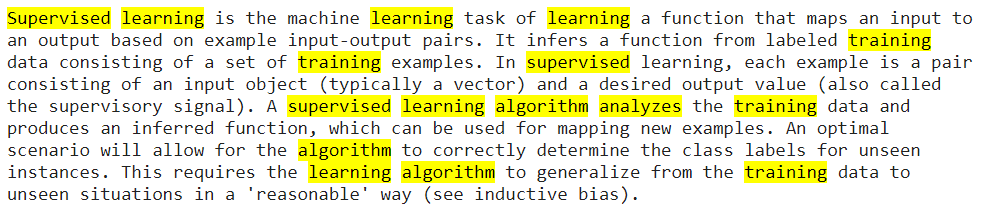

doc = """

Supervised learning is the machine learning task of learning a function that

maps an input to an output based on example input-output pairs. It infers a

function from labeled training data consisting of a set of training examples.

In supervised learning, each example is a pair consisting of an input object

(typically a vector) and a desired output value (also called the supervisory signal).

A supervised learning algorithm analyzes the training data and produces an inferred function,

which can be used for mapping new examples. An optimal scenario will allow for the

algorithm to correctly determine the class labels for unseen instances. This requires

the learning algorithm to generalize from the training data to unseen situations in a

'reasonable' way (see inductive bias).

"""

kw_model = KeyBERT()

keywords = kw_model.extract_keywords(doc)

You can set keyphrase_ngram_range to set the length of the resulting keywords/keyphrases:

>>> kw_model.extract_keywords(doc, keyphrase_ngram_range=(1, 1), stop_words=None)

[('learning', 0.4604),

('algorithm', 0.4556),

('training', 0.4487),

('class', 0.4086),

('mapping', 0.3700)]

To extract keyphrases, simply set keyphrase_ngram_range to (1, 2) or higher depending on the number of words you would like in the resulting keyphrases:

>>> kw_model.extract_keywords(doc, keyphrase_ngram_range=(1, 2), stop_words=None)

[('learning algorithm', 0.6978),

('machine learning', 0.6305),

('supervised learning', 0.5985),

('algorithm analyzes', 0.5860),

('learning function', 0.5850)]

We can highlight the keywords in the document by simply setting highlight:

keywords = kw_model.extract_keywords(doc, highlight=True)

NOTE: For a full overview of all possible transformer models see sentence-transformers. I would advise either "all-MiniLM-L6-v2" for English documents or "paraphrase-multilingual-MiniLM-L12-v2" for multi-lingual documents or any other language.

To diversify the results, we take the 2 x top_n most similar words/phrases to the document. Then, we take all top_n combinations from the 2 x top_n words and extract the combination whose members are the least similar to each other by cosine similarity.
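
The selection just described can be sketched as follows. This is a hedged illustration rather than the library's code: `max_sum_selection` and its arguments are hypothetical names, and the similarities are assumed to be precomputed. The text describes the pool as 2 x top_n, while the example call passes the pool size explicitly through nr_candidates, so the sketch takes it as a parameter.

```python
from itertools import combinations


def max_sum_selection(doc_sims, pair_sims, top_n, nr_candidates):
    """Pick top_n candidate indices that are relevant yet mutually dissimilar.

    doc_sims[i]     : similarity of candidate i to the document
    pair_sims[i][j] : similarity between candidates i and j
    """
    # Step 1: keep only the nr_candidates most document-similar candidates.
    ranked = sorted(range(len(doc_sims)), key=lambda i: doc_sims[i], reverse=True)
    pool = ranked[:nr_candidates]
    if top_n > len(pool):
        raise ValueError("top_n must not exceed the size of the candidate pool")

    # Step 2: among all top_n-sized subsets of the pool, choose the one whose
    # members have the lowest total pairwise similarity.
    def total_pairwise(combo):
        return sum(pair_sims[i][j] for i, j in combinations(combo, 2))

    return list(min(combinations(pool, top_n), key=total_pairwise))


# Candidates 0 and 1 are near-duplicates; candidate 3 is irrelevant to the document.
doc_sims = [0.9, 0.8, 0.7, 0.1]
pair_sims = [
    [1.0, 0.9, 0.2, 0.0],
    [0.9, 1.0, 0.3, 0.0],
    [0.2, 0.3, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
print(max_sum_selection(doc_sims, pair_sims, top_n=2, nr_candidates=3))
```

Because every subset of the pool is enumerated, the cost grows quickly with the pool size, which is one reason to keep nr_candidates small.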

>>> kw_model.extract_keywords(doc, keyphrase_ngram_range=(3, 3), stop_words='english',
...                           use_maxsum=True, nr_candidates=20, top_n=5)

[('set training examples', 0.7504),

('generalize training data', 0.7727),

('requires learning algorithm', 0.5050),

('supervised learning algorithm', 0.3779),

('learning machine learning', 0.2891)]

To diversify the results, we can use Maximal Marginal Relevance (MMR) to create keywords / keyphrases, which is also based on cosine similarity. The results with high diversity:

>>> kw_model.extract_keywords(doc, keyphrase_ngram_range=(3, 3), stop_words='english',
...                           use_mmr=True, diversity=0.7)

[('algorithm generalize training', 0.7727),

('labels unseen instances', 0.1649),

('new examples optimal', 0.4185),

('determine class labels', 0.4774),

('supervised learning algorithm', 0.7502)]

The results with low diversity:

>>> kw_model.extract_keywords(doc, keyphrase_ngram_range=(3, 3), stop_words='english',
...                           use_mmr=True, diversity=0.2)

[('algorithm generalize training', 0.7727),

('supervised learning algorithm', 0.7502),

('learning machine learning', 0.7577),

('learning algorithm analyzes', 0.7587),

('learning algorithm generalize', 0.7514)]

KeyBERT supports many embedding models that can be used to embed the documents and words:

Click here for a full overview of all supported embedding models.

Sentence-Transformers

You can select any model from sentence-transformers here and pass it through KeyBERT with model:

from keybert import KeyBERT

kw_model = KeyBERT(model='all-MiniLM-L6-v2')

Or select a SentenceTransformer model with your own parameters:

from keybert import KeyBERT

from sentence_transformers import SentenceTransformer

sentence_model = SentenceTransformer("all-MiniLM-L6-v2")

kw_model = KeyBERT(model=sentence_model)

Flair

Flair allows you to choose almost any embedding model that is publicly available. Flair can be used as follows:

from keybert import KeyBERT

from flair.embeddings import TransformerDocumentEmbeddings

roberta = TransformerDocumentEmbeddings('roberta-base')

kw_model = KeyBERT(model=roberta)

You can select any 🤗 transformers model here.


With KeyLLM you can now perform keyword extraction with Large Language Models (LLMs). You can find the full documentation here, but there are two examples that are common with this new method. Make sure to install the OpenAI package through pip install openai before you start.

First, we can ask OpenAI directly to extract keywords:

import openai

from keybert.llm import OpenAI

from keybert import KeyLLM

# Create your LLM

client = openai.OpenAI(api_key=MY_API_KEY)

llm = OpenAI(client)

# Load it in KeyLLM

kw_model = KeyLLM(llm)

This will query any ChatGPT model and ask it to extract keywords from text.

Second, we can find documents that are likely to have the same keywords and only extract keywords for those. This is much more efficient than asking for the keywords of every single document, since there are likely documents that have the exact same keywords. Doing so is straightforward:

import openai

from keybert.llm import OpenAI

from keybert import KeyLLM

from sentence_transformers import SentenceTransformer

# Extract embeddings

model = SentenceTransformer('all-MiniLM-L6-v2')

embeddings = model.encode(MY_DOCUMENTS, convert_to_tensor=True)

# Create your LLM

client = openai.OpenAI(api_key=MY_API_KEY)

llm = OpenAI(client)

# Load it in KeyLLM

kw_model = KeyLLM(llm)

# Extract keywords

keywords = kw_model.extract_keywords(MY_DOCUMENTS, embeddings=embeddings, threshold=.75)

You can use the threshold parameter to decide how similar documents need to be in order to receive the same keywords.
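
To make the role of the threshold concrete, here is a small sketch of one way documents could be grouped by embedding similarity so that an LLM is queried only once per group. It is an assumption made for illustration, not a description of how KeyLLM groups documents internally: `group_by_threshold` is a hypothetical helper using a simple greedy strategy on plain Python lists.

```python
import math


def cosine(a, b):
    """Cosine similarity between two dense vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def group_by_threshold(embeddings, threshold=0.75):
    """Greedy grouping (ASSUMPTION, for illustration only).

    Each document joins the first group whose representative (its first member)
    is at least `threshold` similar; otherwise it starts a new group.
    Returns a list of groups, each a list of document indices.
    """
    groups = []
    for idx, emb in enumerate(embeddings):
        for group in groups:
            if cosine(embeddings[group[0]], emb) >= threshold:
                group.append(idx)
                break
        else:
            groups.append([idx])
    return groups


# Documents 0 and 1 point in nearly the same direction; document 2 does not.
toy_embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
print(group_by_threshold(toy_embeddings, threshold=0.75))
print(group_by_threshold(toy_embeddings, threshold=0.999))
```

A higher threshold yields more and smaller groups, so more documents get their own LLM call; a lower threshold shares keywords more aggressively at the risk of grouping documents that differ.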

To cite KeyBERT in your work, please use the following bibtex reference:

@misc{grootendorst2020keybert,

author = {Maarten Grootendorst},

title = {KeyBERT: Minimal keyword extraction with BERT.},

year = 2020,

publisher = {Zenodo},

version = {v0.3.0},

doi = {10.5281/zenodo.4461265},

url = {https://doi.org/10.5281/zenodo.4461265}

}

Below, you can find several resources that were used for the creation of KeyBERT but, most importantly, these are amazing resources for creating impressive keyword extraction models:

Papers:

Github Repos:

MMR: The selection of keywords/keyphrases was modeled after:

NOTE: If you find a paper or github repo that has an easy-to-use implementation of BERT-embeddings for keyword/keyphrase extraction, let me know! I'll make sure to add a reference to this repo.