Keywords: Python, TensorFlow, Deep Learning, Time Series classification

This project was designed for:

$ cd /path/to/project/

$ git clone https://github.com/filippogiruzzi/voice_activity_detection.git

$ cd voice_activity_detection/

$ pyenv install 3.7.3

$ pyenv virtualenv 3.7.3 vad-venv

$ pyenv activate vad-venv

$ pip install -r requirements.txt

$ pip install -e .

You can pull the latest image from DockerHub and run Python commands inside the container:

$ docker pull filippogrz/tf-vad:latest

$ docker run --rm --gpus all -v /var/run/docker.sock:/var/run/docker.sock -it --entrypoint /bin/bash -e TF_FORCE_GPU_ALLOW_GROWTH=true filippogrz/tf-vad

If you want to build the docker image and run the container from scratch, run the following commands.

Build the docker image:

$ make build

(This might take a while.)

Run the docker image:

$ make local-nobuild

The purpose of this project is to design and implement a real-time Voice Activity Detection algorithm based on Deep Learning.

The designed solution is based on MFCC feature extraction and a 1D-Resnet model that classifies whether an audio signal is speech or noise.
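
To make the feature-extraction step more concrete, here is a minimal NumPy sketch of MFCC computation (framing, windowing, power spectrum, mel filterbank, log, DCT). It is not the project's actual code: the function names and every parameter value (25 ms frames, 10 ms step, 512-point FFT, 26 filters, 13 coefficients) are assumptions chosen for illustration, and the signal is assumed to be at least one frame long.

```python
import numpy as np


def hz_to_mel(hz):
    return 2595.0 * np.log10(1.0 + hz / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sample_rate):
    """Triangular filters equally spaced on the mel scale."""
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sample_rate / 2.0), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_points) / sample_rate).astype(int)
    fbank = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, center, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, center):      # rising slope
            fbank[i - 1, k] = (k - left) / (center - left)
        for k in range(center, right):     # falling slope
            fbank[i - 1, k] = (right - k) / (right - center)
    return fbank


def dct_ii(x, n_out):
    """Orthonormal DCT-II along the last axis, keeping the first n_out coefficients."""
    n = x.shape[-1]
    k = np.arange(n_out)[:, None]
    m = np.arange(n)[None, :]
    basis = np.cos(np.pi * k * (2 * m + 1) / (2 * n))
    scale = np.full(n_out, np.sqrt(2.0 / n))
    scale[0] = np.sqrt(1.0 / n)
    return (x @ basis.T) * scale


def mfcc(signal, sample_rate=16000, frame_len=0.025, frame_step=0.010,
         n_fft=512, n_filters=26, n_coeffs=13):
    """Return an array of shape (n_frames, n_coeffs). All defaults are illustrative."""
    frame_size = int(round(frame_len * sample_rate))
    hop = int(round(frame_step * sample_rate))
    n_frames = 1 + (len(signal) - frame_size) // hop
    # Index matrix that slices the signal into overlapping frames.
    idx = np.arange(frame_size)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = signal[idx] * np.hamming(frame_size)
    power = np.abs(np.fft.rfft(frames, n=n_fft, axis=1)) ** 2 / n_fft
    energies = power @ mel_filterbank(n_filters, n_fft, sample_rate).T
    return dct_ii(np.log(energies + 1e-10), n_coeffs)
```

Calling `mfcc` on one second of 16 kHz audio with these defaults yields one 13-dimensional feature vector per 10 ms step, which is the kind of time series a 1D convolutional classifier can consume.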

| Model | Train acc. | Val acc. | Test acc. |
|---|---|---|---|
| 1D-Resnet | 99 % | 98 % | 97 % |

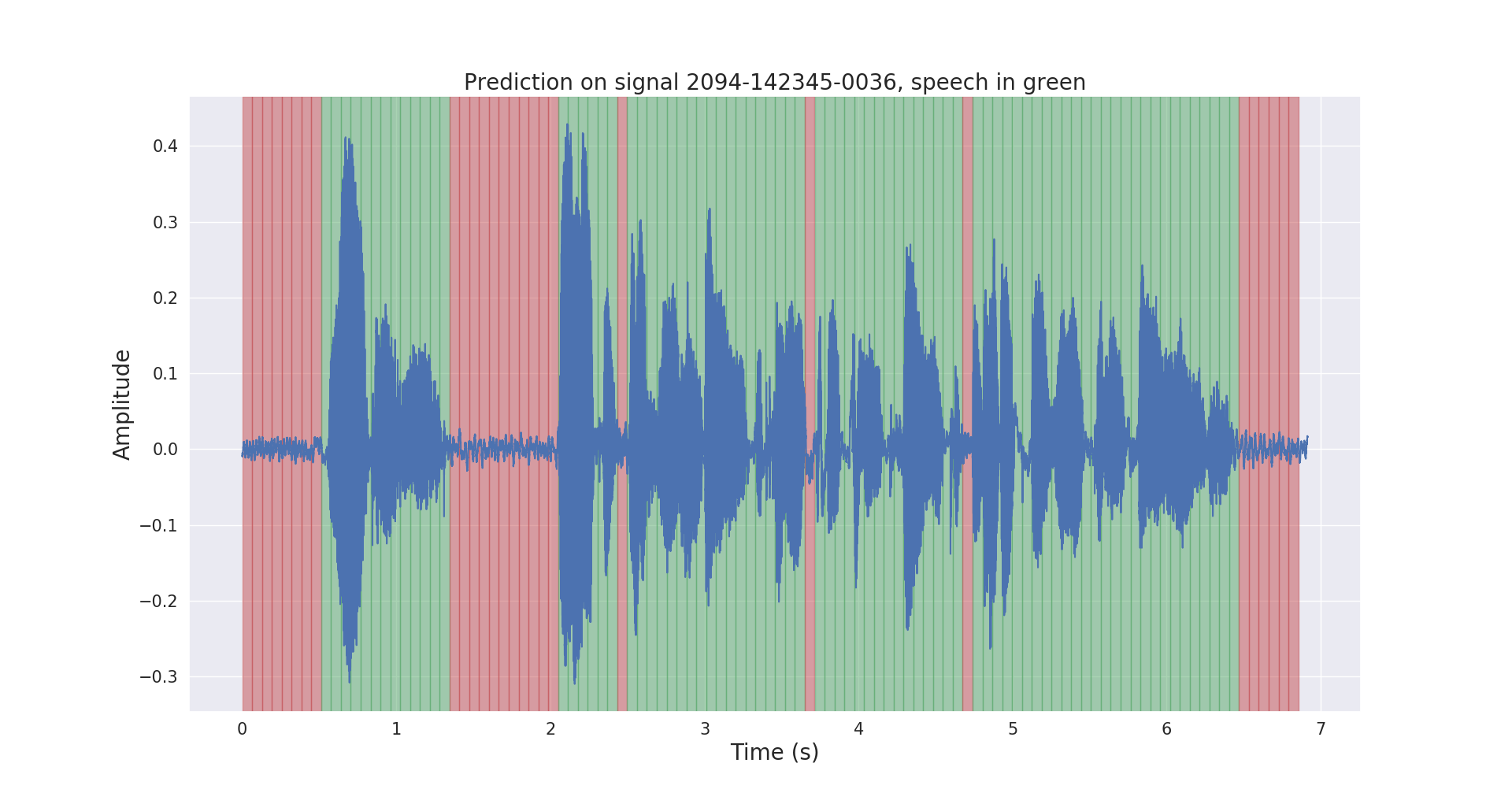

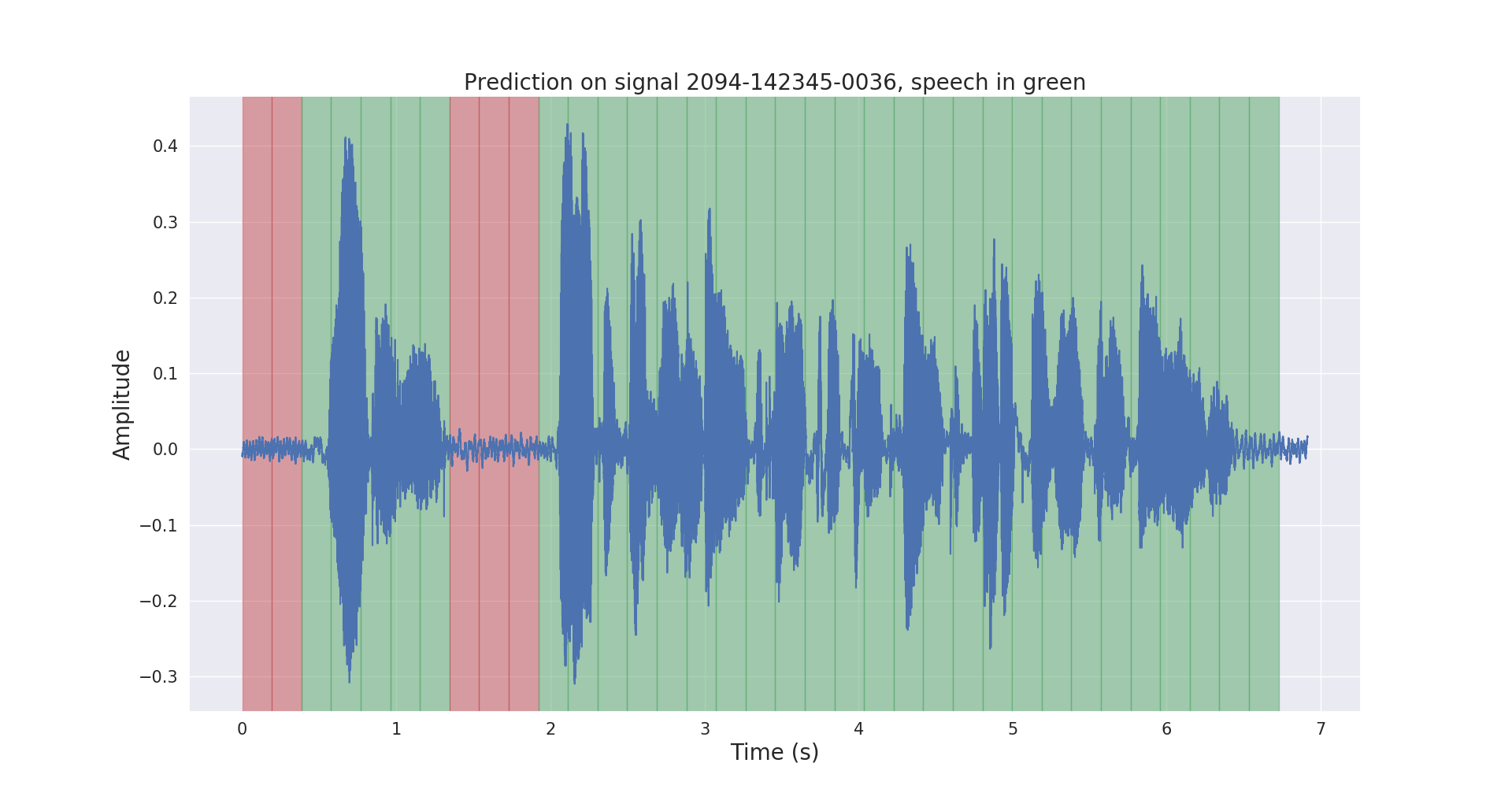

Raw and post-processed inference results on a test audio signal are shown below.

The project voice_activity_detection/ has the following structure:

vad/data_processing/: raw data labeling, processing, recording & visualization

vad/training/: data, input pipeline, model & training / evaluation / prediction

vad/inference/: exporting trained model & inference

Please download the LibriSpeech ASR corpus dataset from https://openslr.org/12/, and extract all files to: /path/to/LibriSpeech/.

The dataset contains approximately 1000 hours of 16kHz read English speech from audiobooks, and is well suited for Voice Activity Detection.

I automatically annotated the test-clean set of the dataset with a pre-trained VAD model.

Please feel free to use the labels/ folder and the pre-trained VAD model (only for inference) from this link.

$ cd /path/to/project/voice_activity_detection/vad/

Skip this subsection if you already have the labels/ folder, which contains annotations from a different pre-trained model.

$ python data_processing/librispeech_label_data.py --data-dir /path/to/LibriSpeech/test-clean/ --exported-model /path/to/pretrained/model/

This will record the annotations into /path/to/LibriSpeech/labels/ as .json files.
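
As an illustration of what such an annotation step might involve, the sketch below groups frame-level speech/noise labels into time segments and serialises them to JSON. The JSON layout (`speech_segments`, `start_time`, `end_time`), the function names, and the 10 ms frame step are all hypothetical; the actual schema written by `librispeech_label_data.py` may differ.

```python
import json


def frames_to_segments(frame_labels, frame_step=0.010):
    """Group consecutive speech frames (label 1) into segments, in seconds.

    The segment keys used here are hypothetical, not the project's schema.
    """
    segments = []
    start = None
    for i, label in enumerate(frame_labels):
        if label == 1 and start is None:
            start = i  # a speech run begins
        elif label != 1 and start is not None:
            segments.append({"start_time": round(start * frame_step, 3),
                             "end_time": round(i * frame_step, 3)})
            start = None
    if start is not None:  # speech run reaches the end of the signal
        segments.append({"start_time": round(start * frame_step, 3),
                         "end_time": round(len(frame_labels) * frame_step, 3)})
    return segments


def segments_to_json(segments):
    """Serialise segments under a hypothetical top-level key."""
    return json.dumps({"speech_segments": segments}, indent=2)
```

Storing segments rather than per-frame labels keeps the files small and independent of the frame rate used later in the input pipeline.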

$ python data_processing/data_to_tfrecords.py --data-dir /path/to/LibriSpeech/

This will record the split data in .tfrecord format in /path/to/LibriSpeech/tfrecords/.

$ python training/train.py --data-dir /path/to/LibriSpeech/tfrecords/

The trained model will be recorded in /path/to/LibriSpeech/tfrecords/models/resnet1d/.

$ python inference/export_model.py --model-dir /path/to/trained/model/dir/

The exported model will be recorded inside this directory.

$ python inference/inference.py --data-dir /path/to/LibriSpeech/ --exported-model /path/to/exported/model/ --smoothing
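
The `--smoothing` flag indicates that the raw frame-level predictions are post-processed at inference time. The project's exact method is not described here, so the following is only a sketch of one common approach, a sliding-window majority vote over binary predictions; the function name and the window size are assumptions.

```python
import numpy as np


def smooth_predictions(preds, window=5):
    """Majority-vote smoothing of binary frame predictions (1 = speech, 0 = noise).

    Illustrative only: not necessarily what the --smoothing flag does.
    """
    if window % 2 == 0:
        raise ValueError("window must be odd so that votes cannot tie")
    preds = np.asarray(preds, dtype=float)
    pad = window // 2
    # Repeat the edge values so the output has the same length as the input.
    padded = np.pad(preds, pad, mode="edge")
    averaged = np.convolve(padded, np.ones(window) / window, mode="valid")
    return (averaged > 0.5).astype(int)
```

A vote like this removes isolated one-frame flips in either direction, at the cost of blurring segment boundaries by up to half a window.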