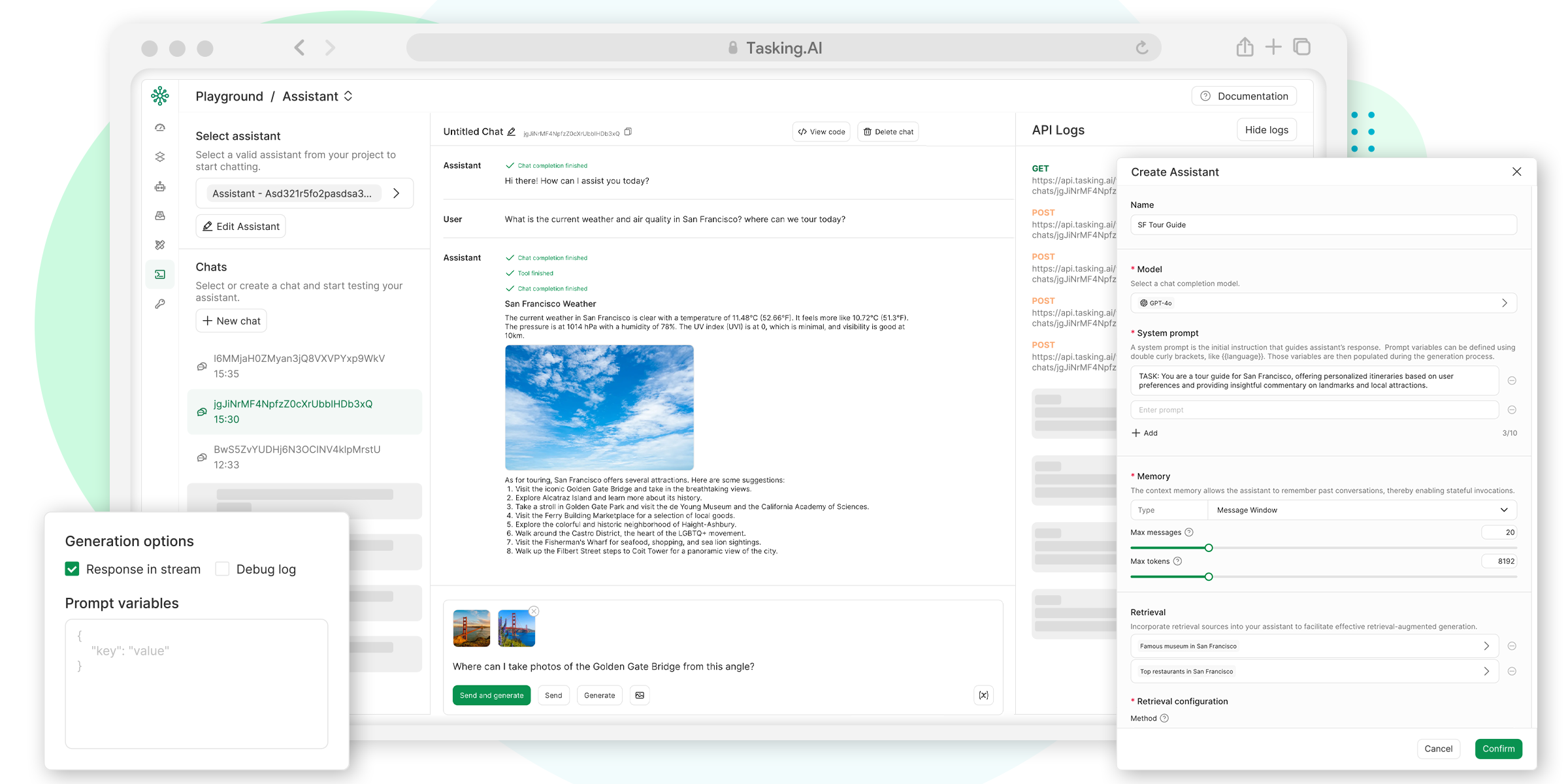

TaskingAI is a BaaS (Backend as a Service) platform for developing and deploying LLM-based agents. It unifies the integration of hundreds of LLMs and provides an intuitive user interface for managing your LLM application's functional modules, including tools, RAG systems, assistants, conversation history, and more.

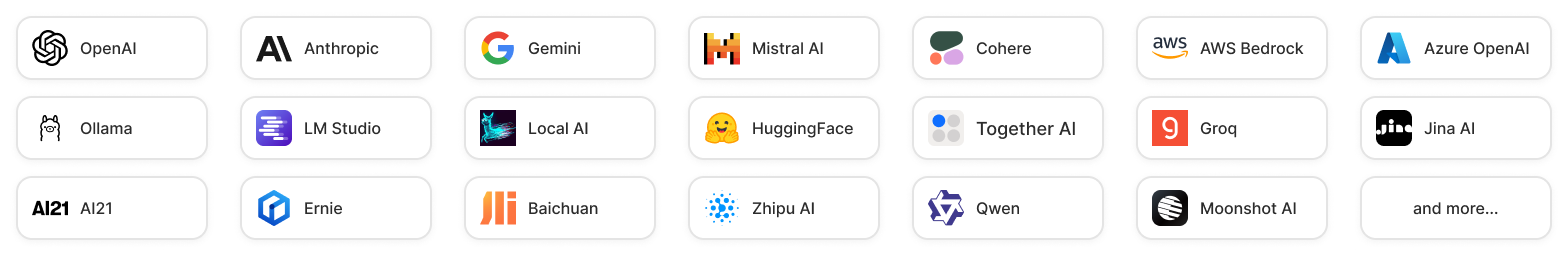

Models: TaskingAI connects with hundreds of LLMs from various providers, including OpenAI, Anthropic, and more. Users can also integrate locally hosted models through Ollama, LM Studio, and Local AI.

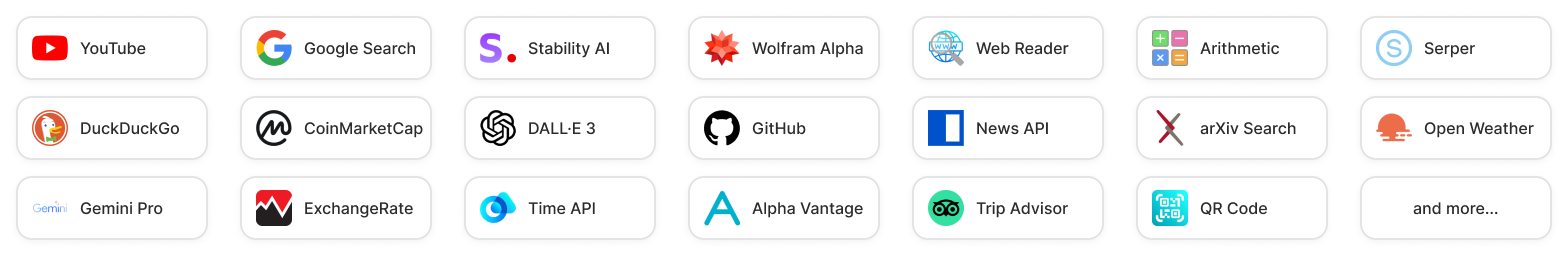

Plugins: TaskingAI supports a wide range of built-in plugins to empower your AI agents, including Google search, website reader, stock market retrieval, and more. Users can also create custom tools to meet their specific needs.

LangChain is a tool framework for LLM application development, but it faces practical limitations:

OpenAI's Assistant API excels in delivering GPTs-like functionalities but comes with its own constraints:

Please give us a FREE STAR if you find it helpful!

The simplest way to start a self-hosted TaskingAI community edition is with Docker.

First, clone the TaskingAI (community edition) repository from GitHub:

```bash
git clone https://github.com/taskingai/taskingai.git
cd taskingai
```

Inside the cloned repository, go to the `docker` directory:

```bash
cd docker
```

Copy `.env.example` to `.env`:

```bash
cp .env.example .env
```

Edit the `.env` file:

Open the .env file in your favorite text editor and update the necessary configurations. Ensure all required environment variables are set correctly.
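Before starting the services, it can help to confirm that every variable listed in `.env.example` also has a value in `.env`. The helper below is a hypothetical sketch, not part of TaskingAI: `parse_env`, `missing_keys`, and `check_env_files` are illustrative names, and it assumes both files use simple `KEY=value` lines with full-line `#` comments (no inline comments, `export` prefixes, or multi-line values). It treats every key in the example file as required, which may be stricter than TaskingAI actually needs.

```python
from pathlib import Path
from typing import Dict, List


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=value lines, skipping blank lines and # comments (assumed format)."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        value = value.strip()
        # Drop one pair of matching surrounding quotes, e.g. KEY="value".
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        values[key.strip()] = value
    return values


def missing_keys(example_text: str, env_text: str) -> List[str]:
    """Return keys declared in the example file that are absent or empty in .env."""
    example = parse_env(example_text)
    actual = parse_env(env_text)
    return [key for key in example if not actual.get(key)]


def check_env_files(directory: str = ".") -> List[str]:
    """Compare .env.example with .env inside `directory` (e.g. the docker directory)."""
    base = Path(directory)
    return missing_keys(
        (base / ".env.example").read_text(encoding="utf-8"),
        (base / ".env").read_text(encoding="utf-8"),
    )
```

Run from the `docker` directory, `print(check_env_files())` lists the variables that still need a value; an empty list means every key from the example file is set.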

Start Docker Compose: Run the following command to start all services:

```bash
docker-compose -p taskingai --env-file .env up -d
```

Once the service is up, open the TaskingAI console in your browser at http://localhost:8080. The default username and password are `admin` and `TaskingAI321`.
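When the containers are first created, the console may take a little while to respond. The hypothetical helper below (not part of TaskingAI; `wait_for_console` and its defaults are assumptions) uses only the Python standard library to poll the console URL until the server answers or a timeout expires.

```python
import time
import urllib.error
import urllib.request


def wait_for_console(url: str = "http://localhost:8080",
                     timeout: float = 120.0,
                     interval: float = 2.0) -> bool:
    """Poll `url` until the server answers, or return False after `timeout` seconds."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with urllib.request.urlopen(url, timeout=interval):
                return True  # 2xx response (redirects are followed): the console is serving.
        except urllib.error.HTTPError as err:
            if err.code < 500:
                return True  # The server answered, e.g. with a 404; it is up.
            # 5xx: a proxy may be up while the backend is still starting; keep waiting.
        except OSError:
            pass  # Connection refused/reset or timed out: containers still starting.
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

For example, `wait_for_console()` returns `True` once http://localhost:8080 responds, or `False` after two minutes. Treating 5xx responses as "not ready yet" is a guess about how the stack behaves during startup.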

If you already have an earlier version of TaskingAI installed and want to upgrade to the latest version, first update the repository:

```bash
git pull origin master
```

Then stop the current Docker services, pull the latest images, and restart the services:

```bash
cd docker
docker-compose -p taskingai down
docker-compose -p taskingai pull
docker-compose -p taskingai --env-file .env up -d
```

Don't worry about data loss; your data will be automatically migrated to the latest version schema if needed.
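If you upgrade often, the same steps can be scripted. The sketch below is hypothetical (`upgrade_commands`, `run_upgrade`, and the `dry_run` switch are not part of TaskingAI); it only wraps the upgrade commands from this section with `subprocess.run`, assuming the `docker` directory sits at the repository root. All commands run from that directory, which works for `git pull` because Git accepts any path inside the working tree.

```python
import subprocess
from typing import List


def upgrade_commands(project: str = "taskingai", env_file: str = ".env") -> List[List[str]]:
    """Return the upgrade steps as argv lists, in the order they must run."""
    return [
        ["git", "pull", "origin", "master"],
        ["docker-compose", "-p", project, "down"],
        ["docker-compose", "-p", project, "pull"],
        ["docker-compose", "-p", project, "--env-file", env_file, "up", "-d"],
    ]


def run_upgrade(docker_dir: str = "docker", dry_run: bool = True) -> List[List[str]]:
    """Print each step; unless dry_run is True, also execute it inside `docker_dir`."""
    commands = upgrade_commands()
    for argv in commands:
        print("$", " ".join(argv))
        if not dry_run:
            # check=True raises CalledProcessError on failure, so later steps are skipped.
            subprocess.run(argv, cwd=docker_dir, check=True)
    return commands
```

Call `run_upgrade()` first to review the steps, then `run_upgrade(dry_run=False)` from the repository root to execute them. On installations that provide only the Compose V2 plugin, replace `"docker-compose"` with the two arguments `"docker", "compose"`.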

Click the image above to view the TaskingAI Console Demo Video.

Once the console is up, you can programmatically interact with the TaskingAI server using the TaskingAI client SDK.

Ensure you have Python 3.8 or above installed, and set up a virtual environment (optional but recommended). Install the TaskingAI Python client SDK using pip.

```bash
pip install taskingai
```

Here is a client code example:

```python
import taskingai

taskingai.init(api_key='YOUR_API_KEY', host='http://localhost:8080')

# Create a new assistant
assistant = taskingai.assistant.create_assistant(
    model_id="YOUR_MODEL_ID",
    memory="naive",
)

# Create a new chat
chat = taskingai.assistant.create_chat(
    assistant_id=assistant.assistant_id,
)

# Send a user message
taskingai.assistant.create_message(
    assistant_id=assistant.assistant_id,
    chat_id=chat.chat_id,
    text="Hello!",
)

# Generate the assistant's response
assistant_message = taskingai.assistant.generate_message(
    assistant_id=assistant.assistant_id,
    chat_id=chat.chat_id,
)

print(assistant_message)
```

Replace `YOUR_API_KEY` and `YOUR_MODEL_ID` with the API key and the chat completion model ID you created in the console.
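Rather than pasting the key and model ID into source files, you may prefer to read them from the environment. In this sketch the variable names `TASKINGAI_API_KEY`, `TASKINGAI_MODEL_ID`, and `TASKINGAI_HOST`, and the helper `load_taskingai_settings`, are hypothetical choices for illustration; the SDK does not read them on its own. The returned values are simply passed to the `taskingai.init` and `create_assistant` calls from the example.

```python
import os
from typing import Dict, Mapping, Optional

# Hypothetical variable names; choose whatever fits your deployment.
REQUIRED = ("TASKINGAI_API_KEY", "TASKINGAI_MODEL_ID")


def load_taskingai_settings(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Collect SDK settings from environment variables, failing fast if any are unset."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise RuntimeError("Set these environment variables first: " + ", ".join(missing))
    return {
        "api_key": env["TASKINGAI_API_KEY"],
        # Default to the local console URL used throughout this README.
        "host": env.get("TASKINGAI_HOST", "http://localhost:8080"),
        "model_id": env["TASKINGAI_MODEL_ID"],
    }


# Usage with the client example (requires the taskingai package and a running server):
#   settings = load_taskingai_settings()
#   taskingai.init(api_key=settings["api_key"], host=settings["host"])
#   assistant = taskingai.assistant.create_assistant(model_id=settings["model_id"], memory="naive")
```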

You can learn more in the documentation.

Please see our contribution guidelines for how to contribute to the project.

Also, we’re excited to announce that TaskingAI now has an official Discord community!

Join our Discord server to:

• Engage in discussions about TaskingAI, share ideas, and provide feedback.

• Get support, tips, and best practices from other users and our team.

• Stay updated on the latest news, updates, and feature releases.

• Network with like-minded individuals who are passionate about AI and task automation.

TaskingAI is released under a specific TaskingAI Open Source License. By contributing to this project, you agree to abide by its terms.

For support, please refer to our documentation or contact us at [email protected].