
"Keep learning, or risk becoming irrelevant."

In this first volume, I purposely present a coherent, cumulative, and content-specific core curriculum of the data science field, including topics such as information theory, Bayesian statistics, algorithmic differentiation, logistic regression, perceptrons, and convolutional neural networks. I hope you will find this book stimulating.

It is my belief that you, the postgraduate students and job seekers for whom the book is primarily meant, will benefit from reading it; it is also my hope that even the most experienced researchers will find it fascinating.

Contact Amir:

https://www.linkedin.com/in/amirivry/

https://scholar.google.com.mx/citations?user=rQCVwksAAAAJ&hl=iw

Contact Shlomo:

https://www.linkedin.com/in/quantscientist/

https://scholar.google.com.mx/citations?user=bM0LGgcAAAAJ&hl

This book is available for purchase through Amazon and other standard distribution channels. Please see the publisher's web page to order the book or to obtain further details on its publication. A manuscript of the book is linked below; it has been made available for personal use only and must not be sold.

https://amazon.com/author/quantscientist

https://arxiv.org/abs/2201.00650

@misc{kashani2021deep,
  title={Deep Learning Interviews: Hundreds of fully solved job interview questions from a wide range of key topics in AI},
  author={Shlomo Kashani and Amir Ivry},
  year={2021},
  eprint={2201.00650},
  note={ISBN 13: 978-1-9162435-4-5},
  url={https://www.interviews.ai},
  archivePrefix={arXiv},
  primaryClass={cs.LG}
}

SELLING OR COMMERCIAL USE IS STRICTLY PROHIBITED. The user rights of this e-resource are specified in a licence agreement below. You may only use this e-resource for the purpose of private study. Any selling or reselling of its content is strictly prohibited.

This book (www.interviews.ai) was written for you: an aspiring data scientist with a quantitative background, facing down the gauntlet of the interview process in an increasingly competitive field. For most of you, the interview process is the most significant hurdle between you and a dream job. Even though you have the ability, the background, and the motivation to excel in your target position, you might need some guidance on how to get your foot in the door.

The second edition of Deep Learning Interviews (the Amazon softcover is printed in black and white) is home to hundreds of fully solved problems from a wide range of key topics in AI. It is designed both to help readers rehearse interview- or exam-specific topics and to give machine learning M.Sc./Ph.D. students, and those awaiting an interview, a well-organized overview of the field. The problems it poses are tough enough to cut your teeth on and to dramatically improve your skills, but they're framed within thought-provoking questions and engaging stories.

That is what makes the volume so specifically valuable to students and job seekers: it provides them with the ability to speak confidently and quickly on any relevant topic, to answer technical questions clearly and correctly, and to fully understand the purpose and meaning of interview questions and answers. Those are powerful, indispensable advantages to have when walking into the interview room.

The book's contents form a large inventory of topics relevant to DL job interviews and graduate-level exams. That places this work at the forefront of a growing trend in science education: teaching a core set of practical mathematical and computational skills. It is widely accepted that the training of every computer scientist must include the fundamental theorems of ML, and AI appears in the curriculum of nearly every university. This volume is designed as an excellent reference for graduates of such programs.

The book spans almost 400 pages

Hundreds of fully-solved problems

Problems from numerous areas of deep learning

Clear diagrams and illustrations

A comprehensive index

Step-by-step solutions to problems

Not just the answers given, but the work shown

Not just the work shown, but reasoning given where appropriate

Your curiosity will pull you through the book's problem sets, formulas, and instructions, and as you progress, you'll deepen your understanding of deep learning. There are intricate connections between calculus, logistic regression, entropy, and deep learning theory; work through the book, and those connections will feel intuitive.

VOLUME-I of the book focuses on statistical perspectives and blends background fundamentals with core ideas and practical knowledge. There are dedicated chapters on:

Information Theory

Calculus & Algorithmic Differentiation

Bayesian Deep Learning & Probabilistic Programming

Logistic Regression

Ensemble Learning

Feature Extraction

Deep Learning: expanded chapter (100+ pages)

These chapters appear alongside numerous in-depth treatments of topics in Deep Learning with code examples in PyTorch, Python and C++.

"PyTorch" is a trademark of Facebook.

Copyright © Shlomo Kashani, author of the book "Deep Learning Interviews", www.interviews.ai

Thank you to all the readers who pointed out these issues.

Errata for the version 03/12/2020 printing and reflected in the online version:

Question number PRB-267 (CH.PRB-8.91) was removed due to lack of clarity.

Question number PRB-115 (CH.PRB-5.16) was removed due to lack of clarity.

Errata for the version 05/12/2020 printing and reflected in the online version:

Page 230, Question number PRB-178 amend “startified scross validation” to “stratified cross validation.”

Page 231, Question number PRB-181 added a “.” after “data-folds”

Page 231, Question number PRB-191 amend “an” to “a”

Page 234, Question number PRB-192 “in” repeated twice

Page 236, Question number PRB-194 amend “approached” to “approaches”, “arr” to “arr001”

Page 247, Question number PRB-210 amend “an” to “a”

Page 258, Question number PRB-227 amend “A confusion metrics” to “A confusion matrix”

Page 271, Question number PRB-240 amend “MaxPool2D(4,4,)” to “MaxPool2D(4,4)”

Page 273, Question number PRB-243 amend “identity” to “identify”

Page 281, Question number PRB-254 amend “suggest” to “suggests”

Page 283, Question number PRB-256 “happening” misspelled

Page 286, “L1, L2” amended to “Norms”

Page 288, Question number SOL-184 amend “the full” to “is the full”

Page 298, Question number SOL-208 amend “ou1” to “out”

Page 319, Question number SOL-240 amend “torch.size([1, 32, 222, 222]).” to “torch.Size([1, 32, 222, 222]).”


Errata for the version 07/12/2020 printing and reflected in the online version:

Page 187, Question number PRB-140: two plots (6.3 and 6.4) did not render correctly in the print version.

Figures 6.3 and 6.4 (PRB-140)

Errata for the version 09/21/2020 printing and reflected in the online version:

Page 34, Solution number SOL-19: 0.21886 should be 0.21305, and 0.21886 ± 1.95 × 0.21886 should be 0.21305 ± 1.95 × 0.21886.

Pages 36-37, Solution number SOL-21: 4.8792/0.0258 = 189.116, not 57.3, and π(33) = 0.01748, not π(33) = 0.211868.
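The corrected SOL-21 numbers can be sanity-checked in a few lines of Python. This is a minimal sketch that assumes, without confirmation from the erratum, that SOL-21 uses a logistic model π(x) = 1 / (1 + exp(−(β0 + β1·x))) with β0 = −4.8792 and β1 = 0.0258. Under that assumption, −β0/β1 is the value of x at which π(x) = 0.5. The names `B0`, `B1`, `logistic`, and `pi` are illustrative, not taken from the book.

```python
import math

# ASSUMPTION: coefficients of a logistic model pi(x) = 1 / (1 + exp(-(B0 + B1 * x))),
# inferred from the numbers in the erratum, not taken from the book itself.
B0 = -4.8792
B1 = 0.0258


def logistic(z: float) -> float:
    """Standard logistic (sigmoid) function."""
    return 1.0 / (1.0 + math.exp(-z))


def pi(x: float) -> float:
    """Predicted probability at x under the assumed logistic model."""
    return logistic(B0 + B1 * x)


# The x at which B0 + B1 * x = 0, i.e. where the predicted probability is 0.5.
midpoint = -B0 / B1

print(f"4.8792 / 0.0258 = {midpoint:.3f}")  # 189.116
print(f"pi(33) = {pi(33):.5f}")
print(f"pi(midpoint) = {pi(midpoint):.3f}")  # 0.500
```

Under these assumptions the script reproduces the ratio 189.116 exactly and gives π(33) ≈ 0.0175, which matches the corrected 0.01748 up to rounding of the printed coefficients. If SOL-21 uses a different model, only the ratio check applies.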

Page 49, PRB-47: “What is the probability that the expert is a monkey” should be “What is the probability that the expert is a human”

Errata for the version 09/22/2020 printing and reflected in the online version:

Page 73, Solution number SOL-56 should read “The Hessian is generated by differentiating”

Page 57, Problem number PRB-65 should read “two neurons”

Errata for the version 09/24/2020 printing and reflected in the online version:

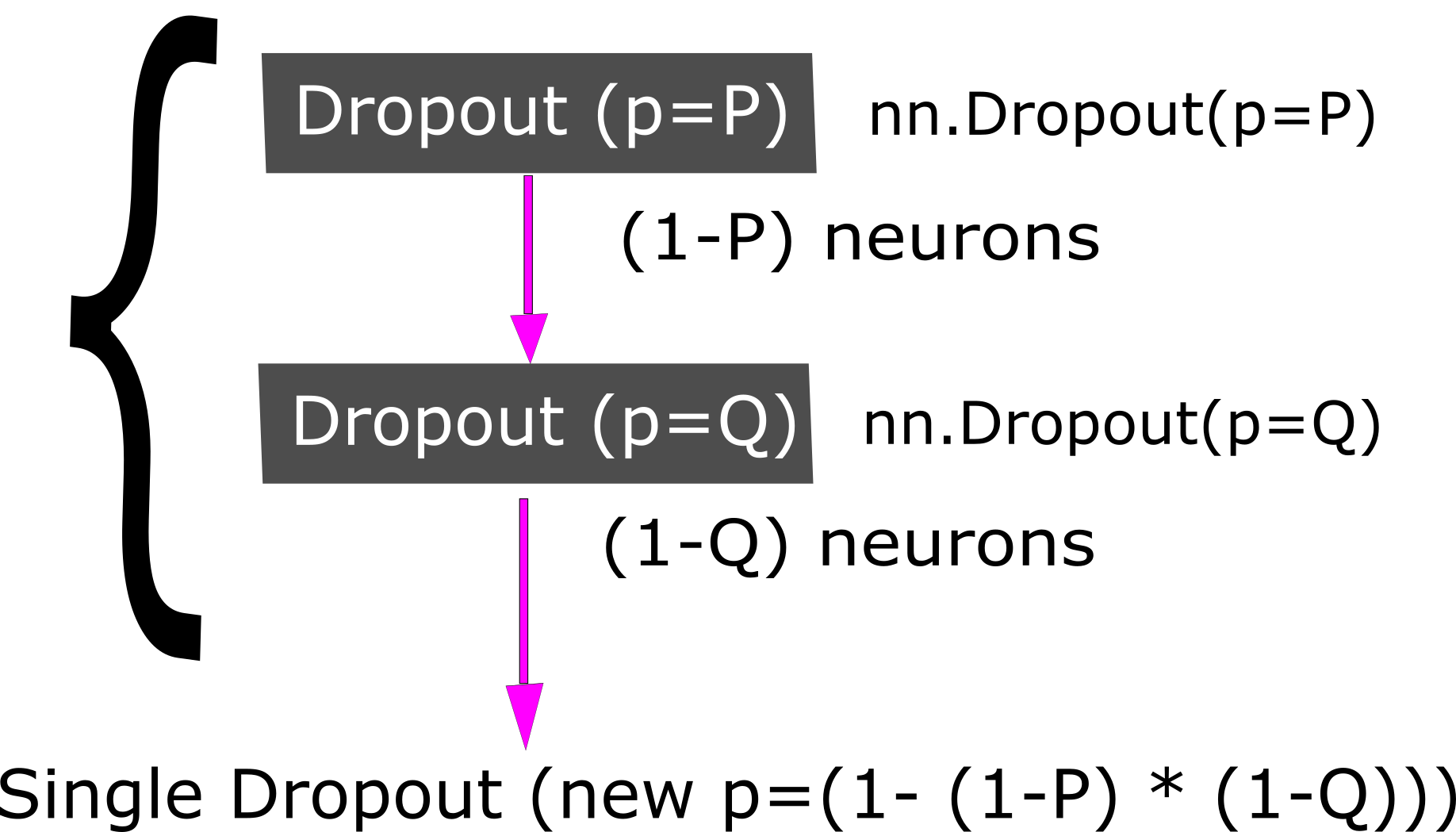

Page 78, Solution number SOL-64: the OnOffLayer is off only if at least 150 of its 200 neurons are off. Therefore, the number of off neurons may be represented by a binomial distribution, and the probability that the layer is off is: