This is the repo for the ChatPLUG project, which aims to build and share a Chinese open-domain dialogue system.

| Xiaowan, a cute girl who loves emoji | A wise, enlightened monk | Guan Yu, a Three Kingdoms NPC who speaks classical Chinese |
|---|---|---|

Role-Play-Chat

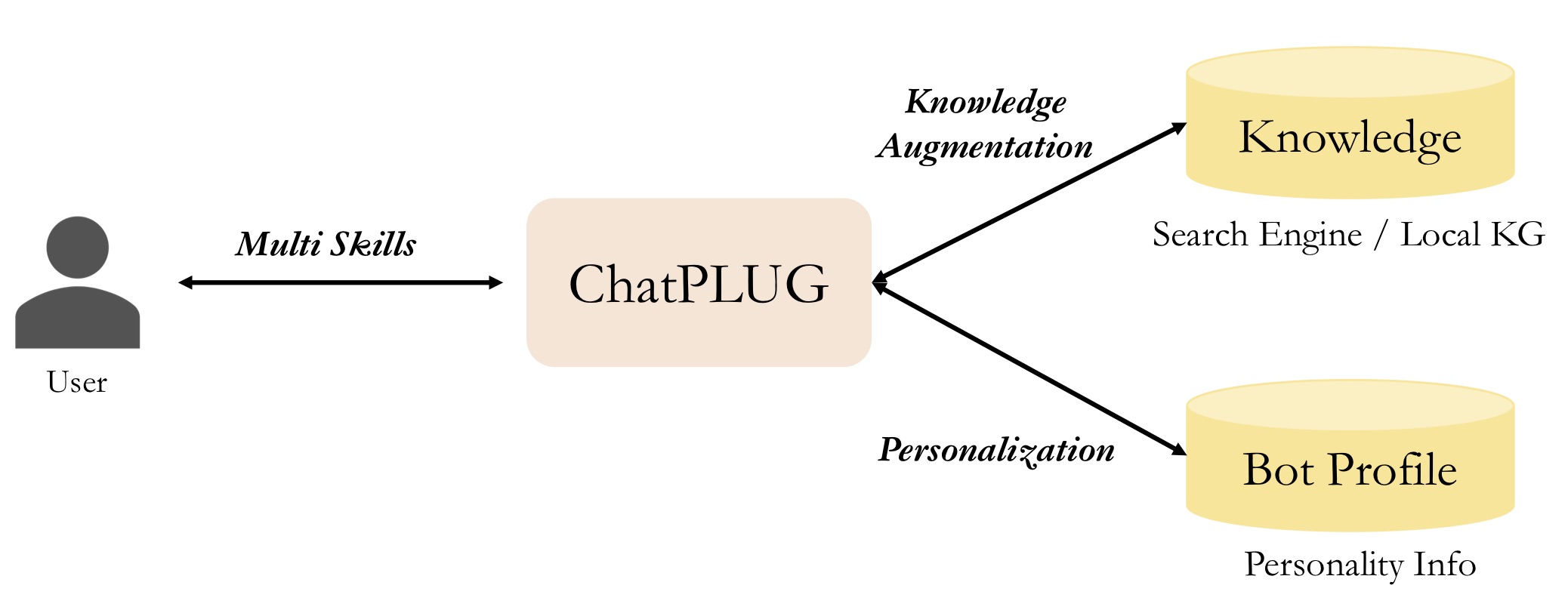

Compared with existing open-source models, ChatPLUG has three distinguishing features:

- It can flexibly integrate external knowledge during inference as an optional input. You can use a search engine to acquire up-to-date information, or a local knowledge base to obtain domain knowledge.
- It is easy to customize the style of conversations and characters by setting bot profiles or using role-play instructions.
- It demonstrates proficiency in open-domain dialogue through multi-turn conversation, while also displaying strong multi-task abilities on a wide range of NLP tasks.
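The first two features both come down to optional inputs supplied alongside the dialogue history. The sketch below shows one way such a request could be assembled. The field names (`history`, `knowledge`, `bot_profile`) and the `[SEP]` separator are assumptions made for illustration, not a confirmed ChatPLUG input schema, so check the model card before relying on them.

```python
from typing import Optional, Sequence


def build_dialogue_input(
    history: Sequence[str],
    knowledge: Optional[Sequence[str]] = None,
    bot_profile: Optional[str] = None,
    sep: str = "[SEP]",  # hypothetical separator, not confirmed by the source
) -> dict:
    """Assemble a request dict; knowledge and bot_profile are optional."""
    if not history:
        raise ValueError("history must contain at least one utterance")
    # Hypothetical field names, chosen only for this illustration.
    payload = {"history": sep.join(history)}
    if knowledge:
        # Snippets could come from a search engine or a local knowledge base.
        payload["knowledge"] = sep.join(knowledge)
    if bot_profile:
        # A short persona description used to steer the reply style.
        payload["bot_profile"] = bot_profile
    return payload


if __name__ == "__main__":
    print(build_dialogue_input(["Hello", "Hi, how can I help?", "Who are you?"]))
```

Leaving `knowledge` and `bot_profile` unset yields a plain multi-turn request, which mirrors the "optional input" behaviour described above.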

We offer three methods to use or continue developing ChatPLUG as follows:

| | Getting Started | Inference | Train | Deploy |
|---|---|---|---|---|
| ModelScope | Easy | ✔️ CLI | Not Ready | Not Ready |
| HuggingFace | Medium | ✔️ CLI | Not Ready | Not Ready |
| XDPX | Hard | ✔️ CLI | ✔️ Support | ✔️ Serving |

You can download and use ChatPLUG models from ModelScope.

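| Model Name | ModelScope Page |
|---|---|
| ChatPLUG-240M | ChatPLUG-开放域对话模型-240M (ChatPLUG Open-Domain Dialogue Model, 240M) |
| ChatPLUG-3.7B | ChatPLUG-开放域对话模型-3.7B (ChatPLUG Open-Domain Dialogue Model, 3.7B) |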

HuggingFace support is coming soon.

XDPX is an easy-to-use library that lets researchers and developers train custom models and build their own chatbots in a streamlined manner. Its all-in-one design provides a one-stop solution that simplifies complex workflows. See the XDPX quick start for details.

When using ChatPLUG-3.7B, you can set `core_chat_half_precision: true` to save memory.
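As a rough illustration of why half precision helps, the sketch below estimates the memory taken by the model weights alone. It assumes a nominal 3.7 billion parameters, 4 bytes per parameter in full precision, and 2 bytes in half precision. Activations, caches, and framework overhead are not counted, so real usage will be higher.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights only, in GiB."""
    return num_params * bytes_per_param / 2**30


# Assumption: "3.7B" is treated as exactly 3.7e9 parameters.
NUM_PARAMS = 3.7e9

full_precision = weight_memory_gib(NUM_PARAMS, 4)  # 32-bit floats
half_precision = weight_memory_gib(NUM_PARAMS, 2)  # 16-bit floats

print(f"32-bit weights: {full_precision:.1f} GiB")
print(f"16-bit weights: {half_precision:.1f} GiB")
```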

```shell
# Install requirements
# Run inside the XDPX directory
cd XDPX
pip install -e .

# Download checkpoints
# Run from the directory that contains download.sh
cd ..
sh download.sh

# Inference
# Run inside the XDPX directory
cd XDPX
CUDA_VISIBLE_DEVICES=0 x-script fidchat_new chat_pipeline/chatplug_3.7B_sftv2.6.0_instruction.hjson
# Enter `#exit` to quit the interactive session
```

When training, if your GPU (e.g. A100, A10) supports bf16, set `deepspeed_bf16: true` and `deepspeed_fp16: false`; otherwise set `deepspeed_bf16: false` and `deepspeed_fp16: true`.
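If you are unsure whether your GPU supports bf16, a small helper can make the choice for you. This is a minimal sketch rather than part of XDPX: the function names are hypothetical, and it assumes PyTorch is installed so that `torch.cuda.is_bf16_supported()` can be queried. Without PyTorch or a CUDA device it falls back to the fp16 settings.

```python
def precision_flags(bf16_supported: bool) -> dict:
    """Map bf16 availability to the two mutually exclusive config flags."""
    return {
        "deepspeed_bf16": bf16_supported,
        "deepspeed_fp16": not bf16_supported,
    }


def detect_bf16() -> bool:
    """Best-effort check; returns False when PyTorch or CUDA is unavailable."""
    try:
        import torch
    except ImportError:
        return False
    return bool(torch.cuda.is_available() and torch.cuda.is_bf16_supported())


if __name__ == "__main__":
    # Print the flags in the same `key: value` form used in the hjson config.
    for key, value in precision_flags(detect_bf16()).items():
        print(f"{key}: {str(value).lower()}")
```

Copy the two printed lines into your training `.hjson` config before launching training.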

```shell
# 1. Download the dataset from BELLE
# Run inside the ChatPLUG/data/belle directory
cd data/belle
git lfs install
git clone https://huggingface.co/datasets/BelleGroup/train_0.5M_CN
python process_belle_0.5M.py
# $ ls data/belle
# train_0.jsonl dev.jsonl ...

# 2. Preprocess the data
# Run inside the XDPX directory
x-prepro chat_pipeline/chatplug_prepro_sft_instruction.hjson
# $ ls data/dialogue/sft/chatplug/belle_instruction
# train_0.pt dev.pt

# 3. Train
# Run inside the XDPX directory
x-train chat_pipeline/chatplug_3.7B_train_sftv2.6.0_instruction.hjson
```

Deployment instructions are coming soon.

Please refer to Installation for installation instructions.

For detailed user guides, please refer to our documentation:

- User Guides
- Advanced Guides

If you find our project useful in your work, please cite:

```bibtex
@misc{tian2023chatplug,
  title={ChatPLUG: Open-Domain Generative Dialogue System with Internet-Augmented Instruction Tuning for Digital Human},
  author={Junfeng Tian and Hehong Chen and Guohai Xu and Ming Yan and Xing Gao and Jianhai Zhang and Chenliang Li and Jiayi Liu and Wenshen Xu and Haiyang Xu and Qi Qian and Wei Wang and Qinghao Ye and Jiejing Zhang and Ji Zhang and Fei Huang and Jingren Zhou},
  year={2023},
  eprint={2304.07849},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}

@misc{plug2021,
  title = {{PLUG: Pre-training for Language Understanding and Generation}},
  author = {ModelScope},
  publisher = {ModelScope},
  journal = {ModelScope repository},
  year = {2021},
  howpublished = {\url{https://modelscope.cn/models/damo/nlp_plug_text-generation_27B/summary}},
}
```

This code is licensed under the Apache License (Version 2.0).