pi-zero-pytorch

0.1.5

Implementation of π₀, the robotic foundation model architecture proposed by Physical Intelligence

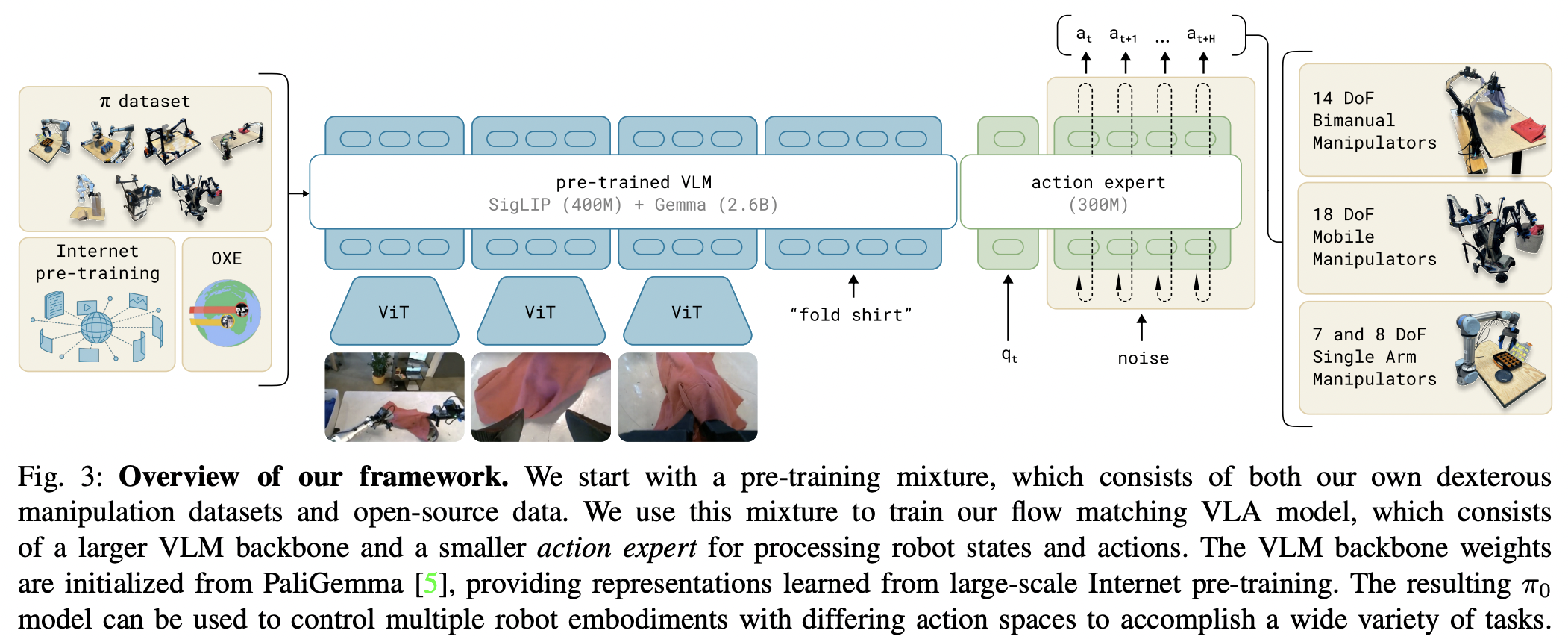

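In summary, this work is a simplified Transfusion (Zhou et al.) with influence from Stable Diffusion 3 (Esser et al.): mainly the adoption of flow matching instead of diffusion for policy generation, as well as the separation of parameters (Joint Attention from mmDiT). It builds on a pretrained vision language model, PaliGemma 2B.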
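To make the flow matching idea concrete, here is a minimal, framework-free sketch. It is not the code used in this repository. It assumes one common convention (noise at `t = 0`, the action at `t = 1`, and a straight-line path between them), and all function names (`interpolate`, `target_velocity`, `flow_matching_loss`, `euler_sample`, `oracle_velocity`) are hypothetical, chosen for illustration only. In a real policy the velocity would be predicted by the network rather than by the oracle used here.

```python
import random

def interpolate(noise, action, t):
    """Point on the straight path from noise (t=0) to the action (t=1)."""
    return [(1.0 - t) * n + t * a for n, a in zip(noise, action)]

def target_velocity(noise, action):
    """Velocity of the straight path; it does not depend on t."""
    return [a - n for n, a in zip(noise, action)]

def flow_matching_loss(predict_velocity, noise, action, t):
    """Mean squared error between predicted and target velocity at time t."""
    x_t = interpolate(noise, action, t)
    pred = predict_velocity(x_t, t)
    target = target_velocity(noise, action)
    return sum((p - u) ** 2 for p, u in zip(pred, target)) / len(target)

def euler_sample(predict_velocity, noise, num_steps=10):
    """Integrate dx/dt = v(x, t) from t=0 to t=1 with fixed Euler steps."""
    x, dt = list(noise), 1.0 / num_steps
    for step in range(num_steps):
        v = predict_velocity(x, step * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

# Toy setup: a single known action and a seeded noise sample (assumed values).
rng = random.Random(0)
action = [0.5, -1.0, 2.0]
noise = [rng.gauss(0.0, 1.0) for _ in action]

def oracle_velocity(x, t):
    # Stand-in for a trained network: the exact velocity toward one known action.
    return [(a - xi) / (1.0 - t) for a, xi in zip(action, x)]

loss = flow_matching_loss(oracle_velocity, noise, action, t=0.3)
sampled = euler_sample(oracle_velocity, noise, num_steps=10)
```

With the oracle in place of a model, the loss is zero up to floating-point error and the Euler integration lands on the action. Training replaces the oracle with a learned predictor and minimizes the same loss over random noise samples and times.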

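Thanks to:

- Einops, for the amazing pack and unpack, used extensively here to manage the various token sets

- Flex Attention, for making it easy to mix autoregressive and bidirectional attention

- @Wonder1905, for the code review and for identifying issues

- You? Maybe a PhD student who wants to contribute to the latest SOTA architecture for behavioral cloning?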
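The mix of autoregressive and bidirectional attention mentioned above can be pictured as a single mask predicate. The sketch below is plain Python and only illustrative: it assumes a layout where the first `prefix_len` positions (for example vision and language tokens) attend causally, while the remaining positions (for example action tokens) also attend to each other in both directions. The names `mixed_attention_mask`, `build_mask`, and `prefix_len` are hypothetical and are not taken from this repository.

```python
def mixed_attention_mask(q_idx, kv_idx, prefix_len):
    """Return True if query position q_idx may attend to key position kv_idx."""
    causal = kv_idx <= q_idx
    # Positions at or beyond prefix_len form one fully bidirectional block.
    both_in_block = q_idx >= prefix_len and kv_idx >= prefix_len
    return causal or both_in_block

def build_mask(seq_len, prefix_len):
    """Materialize the predicate as a seq_len x seq_len boolean grid."""
    return [
        [mixed_attention_mask(q, k, prefix_len) for k in range(seq_len)]
        for q in range(seq_len)
    ]

mask = build_mask(seq_len=5, prefix_len=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# x....
# xx...
# xxx..
# xxxxx
# xxxxx
```

With Flex Attention, a predicate of this shape would typically be written over index tensors (using `&` and `|` instead of `and` and `or`) as a `mask_mod(b, h, q_idx, kv_idx)` function and passed to `create_block_mask`, so the mask never has to be materialized densely.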

```bash
$ pip install pi-zero-pytorch
```

```python
import torch
from pi_zero_pytorch import π0

model = π0(
    dim = 512,
    dim_action_input = 6,
    dim_joint_state = 12,
    num_tokens = 20_000
)

vision = torch.randn(1, 1024, 512)
commands = torch.randint(0, 20_000, (1, 1024))
joint_state = torch.randn(1, 12)
actions = torch.randn(1, 32, 6)

loss, _ = model(vision, commands, joint_state, actions)
loss.backward()

# after much training

sampled_actions = model(vision, commands, joint_state, trajectory_length = 32) # (1, 32, 6)
```

At the project root, run

```bash
$ pip install '.[test]' # or `uv pip install '.[test]'`
```

Then add your tests to `tests/test_pi_zero.py` and run

```bash
$ pytest tests/
```

That's it

```bibtex
@misc{Black2024,
    author  = {Kevin Black, Noah Brown, Danny Driess, Adnan Esmail, Michael Equi, Chelsea Finn, Niccolo Fusai, Lachy Groom, Karol Hausman, Brian Ichter, Szymon Jakubczak, Tim Jones, Liyiming Ke, Sergey Levine, Adrian Li-Bell, Mohith Mothukuri, Suraj Nair, Karl Pertsch, Lucy Xiaoyang Shi, James Tanner, Quan Vuong, Anna Walling, Haohuan Wang, Ury Zhilinsky},
    url     = {https://www.physicalintelligence.company/download/pi0.pdf}
}
```

```bibtex
@inproceedings{Zhou2024ValueRL,
    title   = {Value Residual Learning For Alleviating Attention Concentration In Transformers},
    author  = {Zhanchao Zhou and Tianyi Wu and Zhiyun Jiang and Zhenzhong Lan},
    year    = {2024},
    url     = {https://api.semanticscholar.org/CorpusID:273532030}
}
```

```bibtex
@inproceedings{Darcet2023VisionTN,
    title   = {Vision Transformers Need Registers},
    author  = {Timoth{\'e}e Darcet and Maxime Oquab and Julien Mairal and Piotr Bojanowski},
    year    = {2023},
    url     = {https://api.semanticscholar.org/CorpusID:263134283}
}
```

```bibtex
@article{Li2024ImmiscibleDA,
    title   = {Immiscible Diffusion: Accelerating Diffusion Training with Noise Assignment},
    author  = {Yiheng Li and Heyang Jiang and Akio Kodaira and Masayoshi Tomizuka and Kurt Keutzer and Chenfeng Xu},
    journal = {ArXiv},
    year    = {2024},
    volume  = {abs/2406.12303},
    url     = {https://api.semanticscholar.org/CorpusID:270562607}
}
```

```bibtex
@inproceedings{Sadat2024EliminatingOA,
    title   = {Eliminating Oversaturation and Artifacts of High Guidance Scales in Diffusion Models},
    author  = {Seyedmorteza Sadat and Otmar Hilliges and Romann M. Weber},
    year    = {2024},
    url     = {https://api.semanticscholar.org/CorpusID:273098845}
}
```

```bibtex
@article{Bulatov2022RecurrentMT,
    title   = {Recurrent Memory Transformer},
    author  = {Aydar Bulatov and Yuri Kuratov and Mikhail S. Burtsev},
    journal = {ArXiv},
    year    = {2022},
    volume  = {abs/2207.06881},
    url     = {https://api.semanticscholar.org/CorpusID:250526424}
}
```

```bibtex
@inproceedings{Bessonov2023RecurrentAT,
    title   = {Recurrent Action Transformer with Memory},
    author  = {A. B. Bessonov and Alexey Staroverov and Huzhenyu Zhang and Alexey K. Kovalev and D. Yudin and Aleksandr I. Panov},
    year    = {2023},
    url     = {https://api.semanticscholar.org/CorpusID:259188030}
}
```

```bibtex
@article{Zhu2024HyperConnections,
    title   = {Hyper-Connections},
    author  = {Defa Zhu and Hongzhi Huang and Zihao Huang and Yutao Zeng and Yunyao Mao and Banggu Wu and Qiyang Min and Xun Zhou},
    journal = {ArXiv},
    year    = {2024},
    volume  = {abs/2409.19606},
    url     = {https://api.semanticscholar.org/CorpusID:272987528}
}
```

Dear Alice