This repository contains the code used for our paper "Grounding Large Language Models with Online Reinforcement Learning".

You can find more information on our website.

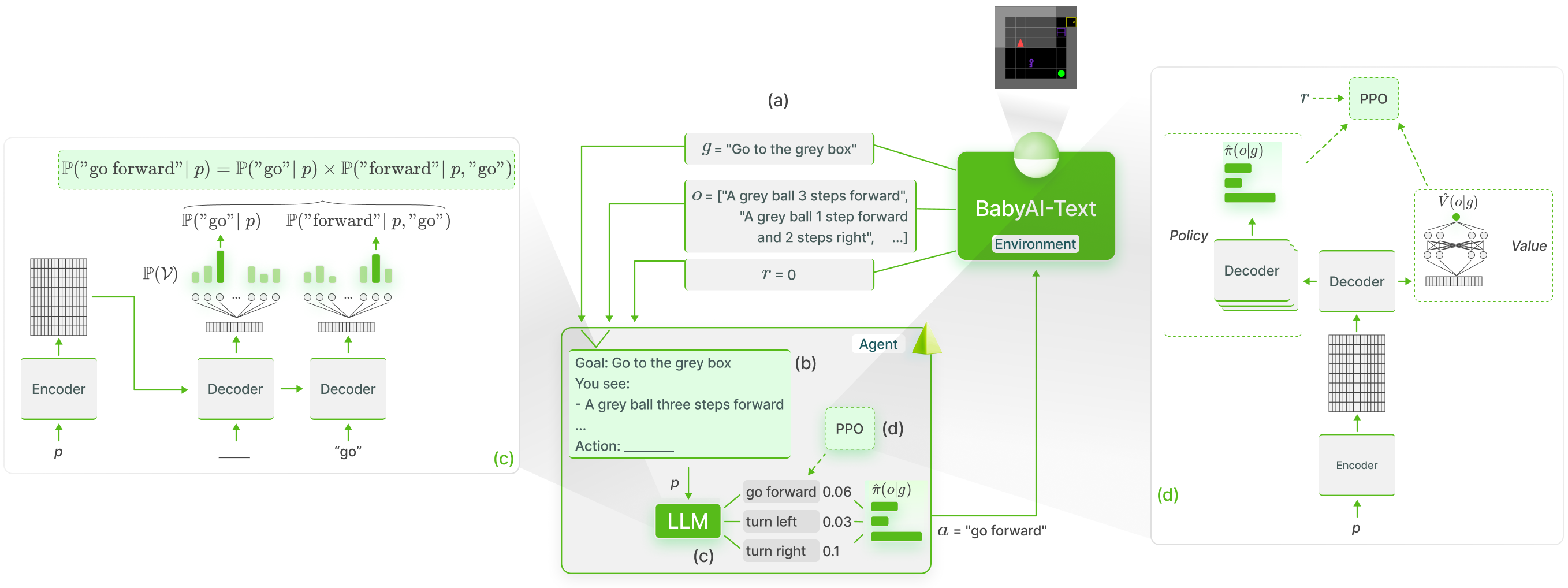

We perform functional grounding of LLMs' knowledge in BabyAI-Text using the GLAM method.

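We release our BabyAI-Text environment along with the code to perform our experiments (both training agents and evaluating their performance). We rely on the Lamorel library to use LLMs.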

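Our repository is structured as follows:

    Grounding_LLMs_with_online_RL
    ┣ babyai-text -- our BabyAI-Text environment
    ┣ experiments -- code for our experiments
    ┃ ┣ agents -- implementation of all our agents
    ┃ ┃ ┣ bot -- bot agent leveraging BabyAI's bot
    ┃ ┃ ┣ random_agent -- agent playing uniformly at random
    ┃ ┃ ┣ drrn -- DRRN agent, taken from an external repository
    ┃ ┃ ┣ ppo -- agents using PPO
    ┃ ┃ ┃ ┣ symbolic_ppo_agent.py -- SymbolicPPO adapted from BabyAI's PPO
    ┃ ┃ ┃ ┗ llm_ppo_agent.py -- our LLM agent, based on PPO
    ┃ ┣ configs -- Lamorel configs for our experiments
    ┃ ┣ slurm -- utility scripts to launch our experiments on a SLURM cluster
    ┃ ┣ campaign -- SLURM scripts used to launch our experiments
    ┃ ┣ train_language_agent.py -- train agents using BabyAI-Text (LLMs and DRRN); contains our implementation of the PPO loss for LLMs as well as additional heads on top of LLMs
    ┃ ┣ train_symbolic_ppo.py -- train SymbolicPPO on BabyAI (with BabyAI-Text's tasks)
    ┃ ┣ post-training_tests.py -- generalization tests of trained agents
    ┃ ┣ test_results.py -- utilities to format results
    ┃ ┗ clm_behavioral-cloning.py -- code to perform Behavioral Cloning on an LLM using trajectories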

conda create -n dlp python=3.10.8; conda activate dlp

conda install pytorch==1.12.1 torchvision==0.13.1 torchaudio==0.12.1 cudatoolkit=11.3 -c pytorch

pip install -r requirements.txt

Install BabyAI-Text: see the installation details in the babyai-text package.

Install Lamorel

git clone https://github.com/flowersteam/lamorel.git; cd lamorel/lamorel; pip install -e .; cd ../..
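
After these steps, a quick import check can confirm that the environment is usable. The snippet below is a minimal sketch: `missing_modules` is a hypothetical helper, and the module names `torch`, `lamorel`, and `babyai_text` are assumed import names for the packages installed above, so they may need adjusting.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Assumed import names for PyTorch, Lamorel, and BabyAI-Text (not confirmed by this README).
REQUIRED = ["torch", "lamorel", "babyai_text"]

missing = missing_modules(REQUIRED)
if missing:
    print("Missing modules:", ", ".join(missing))
else:
    print("All required modules were found.")
```

The check only looks up module specs and does not import the packages, so it runs quickly even when PyTorch is installed.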

Please use Lamorel along with our configs. You can find examples of our training scripts in campaign.

To train a language model on a BabyAI-Text environment, use the train_language_agent.py file. This script (launched with Lamorel) uses the following config entries:

    rl_script_args:
      seed: 1
      number_envs: 2 # Number of parallel envs to launch (steps will be synchronized, i.e. a step call will return number_envs observations)
      num_steps: 1000 # Total number of training steps
      max_episode_steps: 3 # Maximum number of steps in a single episode
      frames_per_proc: 40 # The number of collected transitions to perform a PPO update will be frames_per_proc*number_envs
      discount: 0.99 # Discount factor used in PPO
      lr: 1e-6 # Learning rate used to finetune the LLM
      beta1: 0.9 # Adam's beta1 hyperparameter
      beta2: 0.999 # Adam's beta2 hyperparameter
      gae_lambda: 0.99 # PPO's hyperparameter
      entropy_coef: 0.01 # PPO's hyperparameter
      value_loss_coef: 0.5 # PPO's hyperparameter
      max_grad_norm: 0.5 # Maximum grad norm when updating the LLM's parameters
      adam_eps: 1e-5 # Adam's hyperparameter
      clip_eps: 0.2 # Epsilon used to clip PPO's losses
      epochs: 4 # Number of PPO epochs performed on each set of collected trajectories
      batch_size: 16 # Minibatch size
      action_space: ["turn_left","turn_right","go_forward","pick_up","drop","toggle"] # Possible actions for the agent
      saving_path_logs: ??? # Where to store logs
      name_experiment: 'llm_mtrl' # Useful for logging
      name_model: 'T5small' # Useful for logging
      saving_path_model: ??? # Where to store the finetuned model
      name_environment: 'BabyAI-MixedTestLocal-v0' # BabyAI-Text's environment
      load_embedding: true # Whether trained embedding layers should be loaded (useful when lm_args.pretrained=False). Setting both this and use_action_heads to True (lm_args.pretrained=False) creates our NPAE agent.
      use_action_heads: false # Whether action heads should be used instead of scoring. Setting both this and load_embedding to True (lm_args.pretrained=False) creates our NPAE agent.
      template_test: 1 # Which prompt template to use to log the evolution of actions' probability (Section C of our paper). Choices are [1, 2].
      nbr_obs: 3 # Number of past observations used in the prompt

For config entries related to the language model itself, see Lamorel.
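
To make the interplay between some of these entries concrete, the sketch below derives the size of one PPO update from `number_envs`, `frames_per_proc`, `batch_size`, and `epochs`, and shows how `clip_eps` enters the standard PPO clipped surrogate. It is an illustration under stated assumptions, not the code of train_language_agent.py: `update_sizes` and `clipped_policy_loss` are hypothetical helpers, and the sketch assumes that every epoch visits all collected transitions once in minibatches of `batch_size`.

```python
import math

# Values copied from the config entries listed above.
number_envs = 2
frames_per_proc = 40
batch_size = 16
epochs = 4
clip_eps = 0.2


def update_sizes(number_envs, frames_per_proc, batch_size, epochs):
    """Derive how much data and how many minibatch passes one PPO update involves."""
    transitions = frames_per_proc * number_envs
    # Assumption: the last minibatch may be smaller than batch_size.
    minibatches = math.ceil(transitions / batch_size)
    return transitions, minibatches, epochs * minibatches


def clipped_policy_loss(log_prob, old_log_prob, advantage, clip_eps):
    """Standard PPO clipped surrogate for a single transition (to be minimized)."""
    ratio = math.exp(log_prob - old_log_prob)
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return -min(ratio * advantage, clipped_ratio * advantage)


transitions, minibatches, passes = update_sizes(
    number_envs, frames_per_proc, batch_size, epochs
)
print(transitions, minibatches, passes)  # 80 5 20
```

With the values above, each update uses 80 transitions, split into 5 minibatches and visited over 4 epochs, for 20 minibatch passes in total. Whether the actual script performs one optimizer step per minibatch is not stated here and should be checked in the code.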

To evaluate the performance of an agent (e.g. a trained LLM, BabyAI's bot...) on test tasks, use post-training_tests.py and set the following config entries:

    rl_script_args:
      seed: 1
      number_envs: 2 # Number of parallel envs to launch (steps will be synchronized, i.e. a step call will return number_envs observations)
      max_episode_steps: 3 # Maximum number of steps in a single episode
      action_space: ["turn_left","turn_right","go_forward","pick_up","drop","toggle"] # Possible actions for the agent
      saving_path_logs: ??? # Where to store logs
      name_experiment: 'llm_mtrl' # Useful for logging
      name_model: 'T5small' # Useful for logging
      saving_path_model: ??? # Where to store the finetuned model
      name_environment: 'BabyAI-MixedTestLocal-v0' # BabyAI-Text's environment
      load_embedding: true # Whether trained embedding layers should be loaded (useful when lm_args.pretrained=False). Setting both this and use_action_heads to True (lm_args.pretrained=False) creates our NPAE agent.
      use_action_heads: false # Whether action heads should be used instead of scoring. Setting both this and load_embedding to True (lm_args.pretrained=False) creates our NPAE agent.
      nbr_obs: 3 # Number of past observations used in the prompt
      number_episodes: 10 # Number of test episodes
      language: 'english' # Useful to perform the French experiment (Section H4)
      zero_shot: true # Whether the zero-shot LLM (i.e. without finetuning) should be used
      modified_action_space: false # Whether a modified action space (e.g. different from the one seen during training) should be used
      new_action_space: # ["rotate_left","rotate_right","move_ahead","take","release","switch"] # Modified action space
      im_learning: false # Whether an LLM produced with Behavioral Cloning should be used
      im_path: "" # Path to the LLM learned with Behavioral Cloning
      bot: false # Whether BabyAI's bot agent should be used
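
The `modified_action_space` and `new_action_space` entries can be read as a switch over the action vocabulary shown to the agent at test time. The sketch below illustrates that reading only; `resolve_action_space` is a hypothetical helper, not a function from this repository, and post-training_tests.py may handle these entries differently.

```python
# Action names copied from the config entries above.
TRAIN_ACTIONS = ["turn_left", "turn_right", "go_forward", "pick_up", "drop", "toggle"]
RENAMED_ACTIONS = ["rotate_left", "rotate_right", "move_ahead", "take", "release", "switch"]


def resolve_action_space(action_space, modified_action_space, new_action_space=None):
    """Pick the action vocabulary to use for evaluation (hypothetical helper)."""
    if not modified_action_space:
        # Default case: evaluate with the actions seen during training.
        return list(action_space)
    if not new_action_space:
        # new_action_space is commented out in the config, so it may be unset.
        raise ValueError("modified_action_space is true but new_action_space is empty")
    return list(new_action_space)


print(resolve_action_space(TRAIN_ACTIONS, False))
print(resolve_action_space(TRAIN_ACTIONS, True, RENAMED_ACTIONS))
```

Failing early when `new_action_space` is missing avoids silently evaluating with the training vocabulary when a modified one was requested.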