customer support chatbot

1.0.0

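A chatbot is a computer program that conducts a conversation in a human-like way. This project implements chatbots that try to answer users' questions as a customer support agent. The following customer support chatbots were implemented: AppleSupport, AmazonHelp, Uber_Support, Delta, and SpotifyCares. The chatbots were trained on public conversations between customer support accounts and users on Twitter.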

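The chatbots are implemented as sequence-to-sequence deep learning models with attention. The project is mostly based on Bahdanau et al., 2014; Luong et al., 2015; and Vinyals et al., 2015.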
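To make the attention idea concrete, here is a minimal pure-Python sketch of one decoder step of global attention using the dot score from Luong et al. (one of the `--attention-score` choices listed in the `train.py` help). The function names and toy vectors are illustrative assumptions; the project's own PyTorch implementation is not shown in this README and may differ.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot_attention(decoder_state, encoder_states):
    """Return (weights, context) for one decoder step using the dot score.

    score(h_t, h_s) = h_t . h_s for every encoder state h_s; the weights are
    the softmax of the scores and the context is the weighted sum of the
    encoder states.
    """
    scores = [
        sum(d * h for d, h in zip(decoder_state, enc)) for enc in encoder_states
    ]
    weights = softmax(scores)
    size = len(decoder_state)
    context = [
        sum(w * enc[i] for w, enc in zip(weights, encoder_states))
        for i in range(size)
    ]
    return weights, context


# Toy example: three encoder states of size 2 (values are made up).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [2.0, 0.0]
weights, context = dot_attention(decoder_state, encoder_states)
print(weights, context)
```

The encoder states that align best with the current decoder state receive the largest weights, so the context vector leans toward them.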

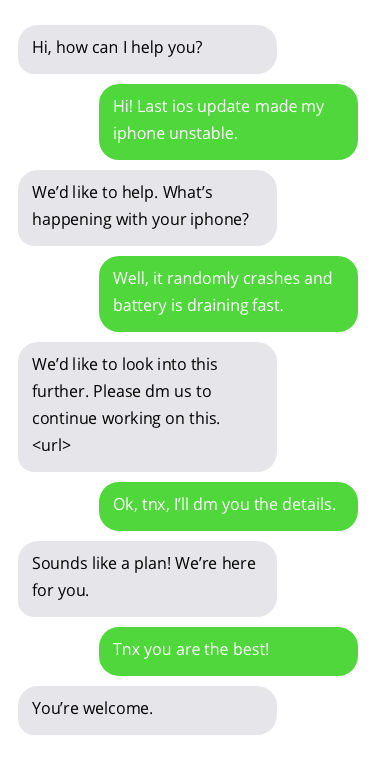

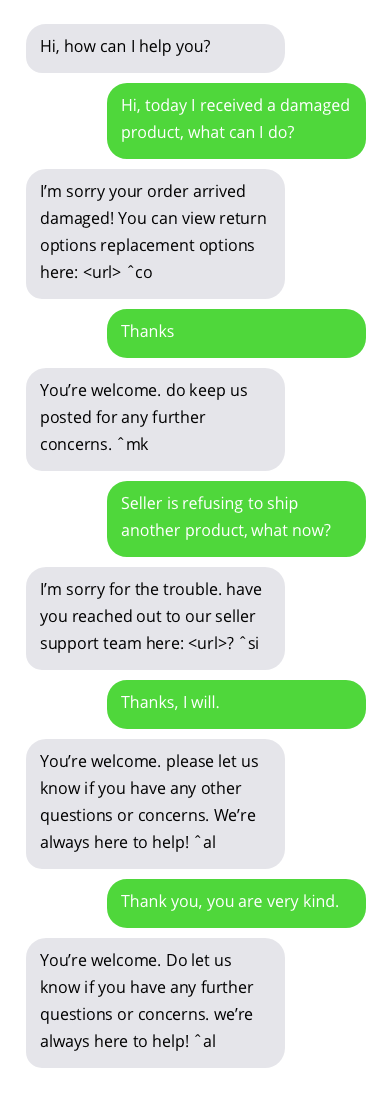

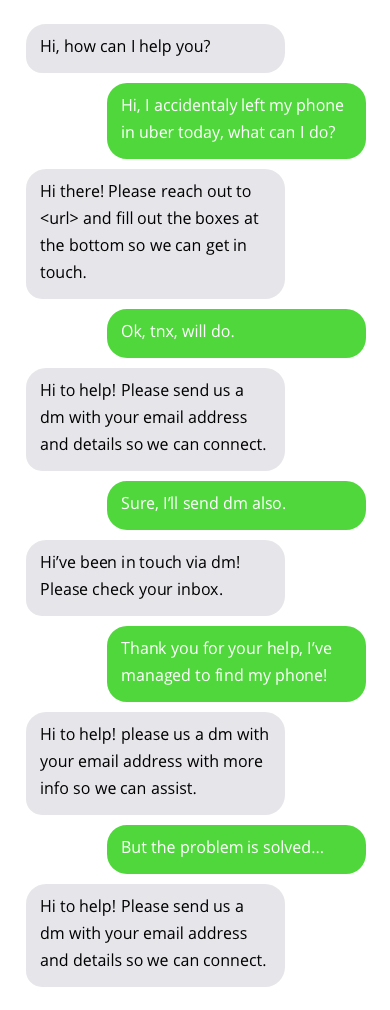

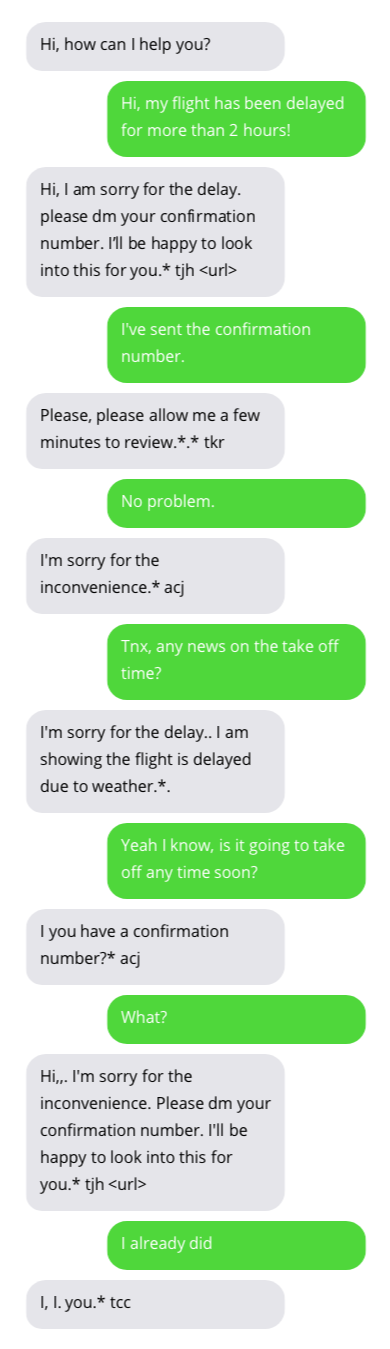

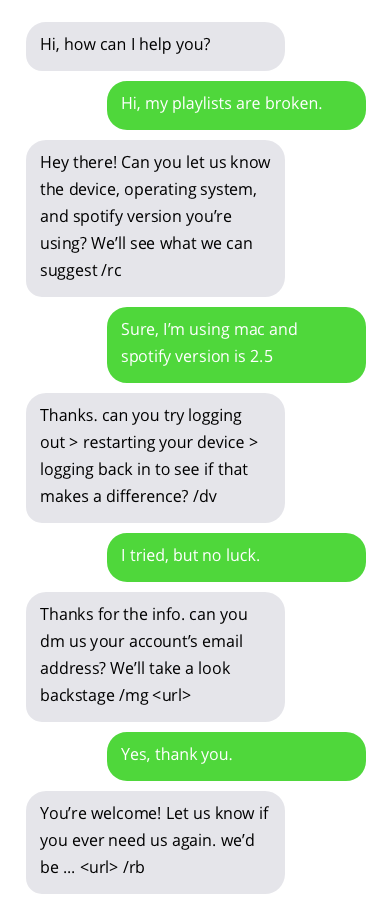

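Example conversations with the customer support chatbots. The conversations are not ideal, but they show promising results. Chatbot answers are in the gray bubbles.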

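The dataset used to train the chatbots can be found here. It was created by collecting public conversations between customer support accounts and users on Twitter. Many thanks to the authors of the dataset!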

You can try the chatbots either by using the pre-trained models or by training your own.

pip3 install -r requirements.txt

python3 -m spacy download en

Run the following commands in the root of this repository to download the pre-trained customer service chatbots.

wget https://www.dropbox.com/s/ibm49gx1gefpqju/pretrained-models.zip

unzip pretrained-models.zip

rm pretrained-models.zip

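sudo chmod +x predict.py

Now you can "talk" with the customer service chatbots using the predict.py script. The following customer service chatbots are available: apple, amazon, uber, delta, spotify. The following example shows how to run the apple customer service chatbot: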

./predict.py -cs apple

You can also train the chatbots yourself. Run the following commands to download and format the Twitter dataset used in this project:

wget https://www.dropbox.com/s/nmnlcncn7jtb7i9/twcs.zip

unzip twcs.zip

mkdir data

mv twcs.csv data

rm twcs.zip

python3 datasets/twitter_customer_support/format.py # this runs for a couple of hours

sudo chmod +x train.py

Warning: the commands above run for a couple of hours!

Now you can use train.py to train the chatbot.
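As a rough illustration, the sketch below assembles a `train.py` command line in Python from flags that appear in the script's help output. The helper name `build_train_command`, the save path `models/apple`, and the epoch and batch-size values are hypothetical choices made only for this example, not recommended settings.

```python
import shlex


def build_train_command(dataset, save_path, max_epochs, cuda=False, extra=()):
    """Build an argv list for train.py (hypothetical helper, not part of the project)."""
    cmd = [
        "./train.py",
        "--dataset", dataset,
        "--save-path", save_path,
        "--max-epochs", str(max_epochs),
    ]
    if cuda:
        cmd.append("--cuda")  # the help text says: use cuda if available
    cmd.extend(extra)
    return cmd


# Assumed values: the save path, epoch count and batch size are placeholders.
cmd = build_train_command(
    "twitter-applesupport",
    "models/apple",
    10,
    cuda=True,
    extra=["--batch-size", "32"],
)
print(shlex.join(cmd))
# To actually start training, pass the list to subprocess.run(cmd, check=True).
```

Building the command as a list avoids shell-quoting mistakes and makes it easy to loop over several datasets.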

train.py is used to train the seq2seq chatbot.

usage: train.py [-h] [--max-epochs MAX_EPOCHS] [--gradient-clip GRADIENT_CLIP]

[--batch-size BATCH_SIZE] [--learning-rate LEARNING_RATE]

[--train-embeddings] [--save-path SAVE_PATH]

[--save-every-epoch]

[--dataset {twitter-applesupport,twitter-amazonhelp,twitter-delta,twitter-spotifycares,twitter-uber_support,twitter-all,twitter-small}]

[--teacher-forcing-ratio TEACHER_FORCING_RATIO] [--cuda]

[--multi-gpu]

[--embedding-type {glove.42B.300d,glove.840B.300d,glove.twitter.27B.25d,glove.twitter.27B.50d,glove.twitter.27B.100d,glove.twitter.27B.200d,glove.6B.50d,glove.6B.100d,glove.6B.200d,glove.6B.300d} | --embedding-size EMBEDDING_SIZE]

[--encoder-rnn-cell {LSTM,GRU}]

[--encoder-hidden-size ENCODER_HIDDEN_SIZE]

[--encoder-num-layers ENCODER_NUM_LAYERS]

[--encoder-rnn-dropout ENCODER_RNN_DROPOUT]

[--encoder-bidirectional] [--decoder-type {bahdanau,luong}]

[--decoder-rnn-cell {LSTM,GRU}]

[--decoder-hidden-size DECODER_HIDDEN_SIZE]

[--decoder-num-layers DECODER_NUM_LAYERS]

[--decoder-rnn-dropout DECODER_RNN_DROPOUT]

[--luong-attn-hidden-size LUONG_ATTN_HIDDEN_SIZE]

[--luong-input-feed]

[--decoder-init-type {zeros,bahdanau,adjust_pad,adjust_all}]

[--attention-type {none,global,local-m,local-p}]

[--attention-score {dot,general,concat}]

[--half-window-size HALF_WINDOW_SIZE]

[--local-p-hidden-size LOCAL_P_HIDDEN_SIZE]

[--concat-attention-hidden-size CONCAT_ATTENTION_HIDDEN_SIZE]

Script for training seq2seq chatbot.

optional arguments:

-h, --help show this help message and exit

--max-epochs MAX_EPOCHS

Max number of epochs models will be trained.

--gradient-clip GRADIENT_CLIP

Gradient clip value.

--batch-size BATCH_SIZE

Batch size.

--learning-rate LEARNING_RATE

Initial learning rate.

--train-embeddings Should gradients be propagated to word embeddings.

--save-path SAVE_PATH

Folder where models (and other configs) will be saved during training.

--save-every-epoch Save model every epoch regardless of validation loss.

--dataset {twitter-applesupport,twitter-amazonhelp,twitter-delta,twitter-spotifycares,twitter-uber_support,twitter-all,twitter-small}

Dataset for training model.

--teacher-forcing-ratio TEACHER_FORCING_RATIO

Teacher forcing ratio used in seq2seq models. [0-1]

--embedding-type {glove.42B.300d,glove.840B.300d,glove.twitter.27B.25d,glove.twitter.27B.50d,glove.twitter.27B.100d,glove.twitter.27B.200d,glove.6B.50d,glove.6B.100d,glove.6B.200d,glove.6B.300d}

Pre-trained embeddings type.

--embedding-size EMBEDDING_SIZE

Dimensionality of word embeddings.

GPU:

GPU related settings.

--cuda Use cuda if available.

--multi-gpu Use multiple GPUs if available.

Encoder:

Encoder hyperparameters.

--encoder-rnn-cell {LSTM,GRU}

Encoder RNN cell type.

--encoder-hidden-size ENCODER_HIDDEN_SIZE

Encoder RNN hidden size.

--encoder-num-layers ENCODER_NUM_LAYERS

Encoder RNN number of layers.

--encoder-rnn-dropout ENCODER_RNN_DROPOUT

Encoder RNN dropout probability.

--encoder-bidirectional

Use bidirectional encoder.

Decoder:

Decoder hyperparameters.

--decoder-type {bahdanau,luong}

Type of the decoder.

--decoder-rnn-cell {LSTM,GRU}

Decoder RNN cell type.

--decoder-hidden-size DECODER_HIDDEN_SIZE

Decoder RNN hidden size.

--decoder-num-layers DECODER_NUM_LAYERS

Decoder RNN number of layers.

--decoder-rnn-dropout DECODER_RNN_DROPOUT

Decoder RNN dropout probability.

--luong-attn-hidden-size LUONG_ATTN_HIDDEN_SIZE

Luong decoder attention hidden projection size

--luong-input-feed Whether Luong decoder should use input feeding approach.

--decoder-init-type {zeros,bahdanau,adjust_pad,adjust_all}

Decoder initial RNN hidden state initialization.

Attention:

Attention hyperparameters.

--attention-type {none,global,local-m,local-p}

Attention type.

--attention-score {dot,general,concat}

Attention score function type.

--half-window-size HALF_WINDOW_SIZE

D parameter from Luong et al. paper. Used only for local attention.

--local-p-hidden-size LOCAL_P_HIDDEN_SIZE

Local-p attention hidden size (used when predicting window position).

--concat-attention-hidden-size CONCAT_ATTENTION_HIDDEN_SIZE

Attention layer hidden size. Used only with concat score function.
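The `--teacher-forcing-ratio` option above takes a value in [0, 1]. A common way to interpret it, sketched below in plain Python, is as the probability that the decoder is fed the ground-truth previous token instead of its own previous prediction. Whether train.py draws this decision per step or per batch is not stated in this README, so the per-step choice here is an assumption, and the fixed `predictions` list stands in for model outputs that would normally depend on the chosen inputs.

```python
import random


def choose_decoder_inputs(targets, predictions, teacher_forcing_ratio, rng):
    """Pick the decoder input for every step.

    targets:     ground-truth decoder inputs, starting with the start token.
    predictions: stand-in model outputs; predictions[t - 1] is the model's
                 guess for the token at position t.
    """
    inputs = [targets[0]]  # the first input is always the start token
    for step in range(1, len(targets)):
        use_teacher = rng.random() < teacher_forcing_ratio
        inputs.append(targets[step] if use_teacher else predictions[step - 1])
    return inputs


# Made-up tokens for illustration only.
targets = ["<sos>", "how", "can", "we", "help"]
predictions = ["hi", "can", "i", "help", "?"]

always_teacher = choose_decoder_inputs(targets, predictions, 1.0, random.Random(0))
never_teacher = choose_decoder_inputs(targets, predictions, 0.0, random.Random(0))
print(always_teacher)
print(never_teacher)
```

A ratio of 1.0 always feeds the ground truth, while 0.0 always feeds the model's own output; values in between mix the two.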

predict.py is used to "talk" with the seq2seq chatbot.

usage: predict.py [-h] [-cs {apple,amazon,uber,delta,spotify}] [-p MODEL_PATH]

[-e EPOCH] [--sampling-strategy {greedy,random,beam_search}]

[--max-seq-len MAX_SEQ_LEN] [--cuda]

Script for "talking" with pre-trained chatbot.

optional arguments:

-h, --help show this help message and exit

-cs {apple,amazon,uber,delta,spotify}, --customer-service {apple,amazon,uber,delta,spotify}

-p MODEL_PATH, --model-path MODEL_PATH

Path to directory with model args, vocabulary and pre-trained pytorch models.

-e EPOCH, --epoch EPOCH

Model from this epoch will be loaded.

--sampling-strategy {greedy,random,beam_search}

Strategy for sampling output sequence.

--max-seq-len MAX_SEQ_LEN

Maximum length for output sequence.

--cuda Use cuda if available.
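The `--sampling-strategy` option offers `greedy`, `random`, and `beam_search`. The sketch below contrasts the first two for a single decoding step, assuming the model produces one score (logit) per vocabulary entry. The function names are illustrative and are not taken from predict.py; beam search, which keeps several candidate sequences alive at once, is not shown.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """Greedy strategy: always take the highest-scoring token index."""
    return max(range(len(logits)), key=lambda i: logits[i])


def random_pick(logits, rng):
    """Random strategy: sample a token index from the softmax distribution."""
    probs = softmax(logits)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Made-up logits for a three-token vocabulary.
logits = [0.1, 2.0, -1.0]
rng = random.Random(0)
greedy_index = greedy_pick(logits)
sampled_index = random_pick(logits, rng)
print(greedy_index, sampled_index)
```

Greedy decoding is deterministic, whereas random sampling can give different answers to the same question on different runs.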