在 Pytorch 中實現 MusicLM,這是 Google 使用注意力網路生成音樂的新 SOTA 模型。

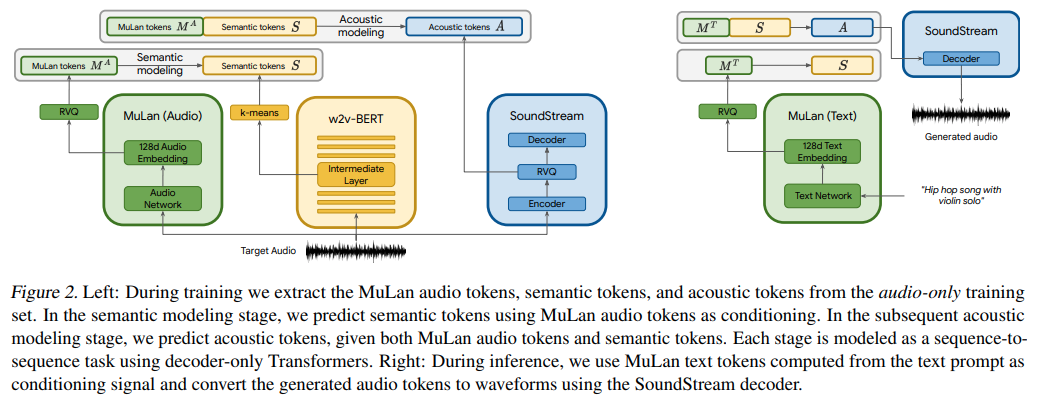

他們基本上使用文字條件的 AudioLM,但令人驚訝的是使用名為 MuLan 的文字音訊對比學習模型的嵌入。 MuLan 是在此儲存庫中建立的內容,AudioLM 是從其他儲存庫修改而來的,以支援此處的音樂生成需求。

如果您有興趣幫助 LAION 社區進行複製,請加入

路易斯‧布沙爾 (Louis Bouchard) 的《什麼是人工智慧》

Stability.ai 慷慨贊助工作與開源尖端人工智慧研究

? Huggingface 的加速訓練庫

$ pip install musiclm-pytorch

MuLaN首先需要訓練

import torch

from musiclm_pytorch import MuLaN , AudioSpectrogramTransformer , TextTransformer

audio_transformer = AudioSpectrogramTransformer (

dim = 512 ,

depth = 6 ,

heads = 8 ,

dim_head = 64 ,

spec_n_fft = 128 ,

spec_win_length = 24 ,

spec_aug_stretch_factor = 0.8

)

text_transformer = TextTransformer (

dim = 512 ,

depth = 6 ,

heads = 8 ,

dim_head = 64

)

mulan = MuLaN (

audio_transformer = audio_transformer ,

text_transformer = text_transformer

)

# get a ton of <sound, text> pairs and train

wavs = torch . randn ( 2 , 1024 )

texts = torch . randint ( 0 , 20000 , ( 2 , 256 ))

loss = mulan ( wavs , texts )

loss . backward ()

# after much training, you can embed sounds and text into a joint embedding space

# for conditioning the audio LM

embeds = mulan . get_audio_latents ( wavs ) # during training

embeds = mulan . get_text_latents ( texts ) # during inference要獲得作為AudioLM一部分的三個變壓器的條件嵌入,您必須使用MuLaNEmbedQuantizer ,如下所示

from musiclm_pytorch import MuLaNEmbedQuantizer

# setup the quantizer with the namespaced conditioning embeddings, unique per quantizer as well as namespace (per transformer)

quantizer = MuLaNEmbedQuantizer (

mulan = mulan , # pass in trained mulan from above

conditioning_dims = ( 1024 , 1024 , 1024 ), # say all three transformers have model dimensions of 1024

namespaces = ( 'semantic' , 'coarse' , 'fine' )

)

# now say you want the conditioning embeddings for semantic transformer

wavs = torch . randn ( 2 , 1024 )

conds = quantizer ( wavs = wavs , namespace = 'semantic' ) # (2, 8, 1024) - 8 is number of quantizers要訓練(或微調)作為AudioLM一部分的三個變壓器,您只需按照audiolm-pytorch上的說明進行訓練,但將MulanEmbedQuantizer實例傳遞到關鍵字audio_conditioner下的訓練類

前任。 SemanticTransformerTrainer

import torch

from audiolm_pytorch import HubertWithKmeans , SemanticTransformer , SemanticTransformerTrainer

wav2vec = HubertWithKmeans (

checkpoint_path = './hubert/hubert_base_ls960.pt' ,

kmeans_path = './hubert/hubert_base_ls960_L9_km500.bin'

)

semantic_transformer = SemanticTransformer (

num_semantic_tokens = wav2vec . codebook_size ,

dim = 1024 ,

depth = 6 ,

audio_text_condition = True # this must be set to True (same for CoarseTransformer and FineTransformers)

). cuda ()

trainer = SemanticTransformerTrainer (

transformer = semantic_transformer ,

wav2vec = wav2vec ,

audio_conditioner = quantizer , # pass in the MulanEmbedQuantizer instance above

folder = '/path/to/audio/files' ,

batch_size = 1 ,

data_max_length = 320 * 32 ,

num_train_steps = 1

)

trainer . train ()在對所有三個變壓器(語義、粗略、精細)進行大量訓練後,您將把經過微調或從頭開始訓練的AudioLM和MuLaN傳遞給MuLaNEmbedQuantizer中的MusicLM

# you need the trained AudioLM (audio_lm) from above

# with the MulanEmbedQuantizer (mulan_embed_quantizer)

from musiclm_pytorch import MusicLM

musiclm = MusicLM (

audio_lm = audio_lm , # `AudioLM` from https://github.com/lucidrains/audiolm-pytorch

mulan_embed_quantizer = quantizer # the `MuLaNEmbedQuantizer` from above

)

music = musiclm ( 'the crystalline sounds of the piano in a ballroom' , num_samples = 4 ) # sample 4 and pick the top match with mulan 木蘭似乎正在使用解耦對比學習,將其作為一種選擇

用 mulan 包裝器包裝 mulan 並量化輸出,投影到 audiolm 維度

修改audiolm以接受條件嵌入,可以選擇透過單獨的投影處理不同的維度

audiolm 和 mulan 進入 musiclm 並使用 mulan 產生、過濾

為 AST 中的自註意力提供動態位置偏差

實現 MusicLM 產生多個樣本並選擇與 MuLaN 的最佳匹配

支援可變長度音頻,並在音頻轉換器中進行掩蔽

添加花木蘭版本打開剪輯

設定所有正確的頻譜圖超參數

@inproceedings { Agostinelli2023MusicLMGM ,

title = { MusicLM: Generating Music From Text } ,

author = { Andrea Agostinelli and Timo I. Denk and Zal{'a}n Borsos and Jesse Engel and Mauro Verzetti and Antoine Caillon and Qingqing Huang and Aren Jansen and Adam Roberts and Marco Tagliasacchi and Matthew Sharifi and Neil Zeghidour and C. Frank } ,

year = { 2023 }

} @article { Huang2022MuLanAJ ,

title = { MuLan: A Joint Embedding of Music Audio and Natural Language } ,

author = { Qingqing Huang and Aren Jansen and Joonseok Lee and Ravi Ganti and Judith Yue Li and Daniel P. W. Ellis } ,

journal = { ArXiv } ,

year = { 2022 } ,

volume = { abs/2208.12415 }

} @misc { https://doi.org/10.48550/arxiv.2302.01327 ,

doi = { 10.48550/ARXIV.2302.01327 } ,

url = { https://arxiv.org/abs/2302.01327 } ,

author = { Kumar, Manoj and Dehghani, Mostafa and Houlsby, Neil } ,

title = { Dual PatchNorm } ,

publisher = { arXiv } ,

year = { 2023 } ,

copyright = { Creative Commons Attribution 4.0 International }

} @article { Liu2022PatchDropoutEV ,

title = { PatchDropout: Economizing Vision Transformers Using Patch Dropout } ,

author = { Yue Liu and Christos Matsoukas and Fredrik Strand and Hossein Azizpour and Kevin Smith } ,

journal = { ArXiv } ,

year = { 2022 } ,

volume = { abs/2208.07220 }

} @misc { liu2021swin ,

title = { Swin Transformer V2: Scaling Up Capacity and Resolution } ,

author = { Ze Liu and Han Hu and Yutong Lin and Zhuliang Yao and Zhenda Xie and Yixuan Wei and Jia Ning and Yue Cao and Zheng Zhang and Li Dong and Furu Wei and Baining Guo } ,

year = { 2021 } ,

eprint = { 2111.09883 } ,

archivePrefix = { arXiv } ,

primaryClass = { cs.CV }

} @misc { gilmer2023intriguing

title = { Intriguing Properties of Transformer Training Instabilities } ,

author = { Justin Gilmer, Andrea Schioppa, and Jeremy Cohen } ,

year = { 2023 } ,

status = { to be published - one attention stabilization technique is circulating within Google Brain, being used by multiple teams }

} @inproceedings { Shukor2022EfficientVP ,

title = { Efficient Vision-Language Pretraining with Visual Concepts and Hierarchical Alignment } ,

author = { Mustafa Shukor and Guillaume Couairon and Matthieu Cord } ,

booktitle = { British Machine Vision Conference } ,

year = { 2022 }

} @inproceedings { Zhai2023SigmoidLF ,

title = { Sigmoid Loss for Language Image Pre-Training } ,

author = { Xiaohua Zhai and Basil Mustafa and Alexander Kolesnikov and Lucas Beyer } ,

year = { 2023 }

}唯一的真理就是音樂。 ——傑克‧凱魯亞克

音樂是人類的通用語言。 ——亨利·沃茲沃斯·朗費羅