Easily compute clip embeddings and build a clip retrieval system with them. 100M text+image embeddings can be processed in 20h using a 3080.

End to end this make it possible to build a simple semantic search system. Interested to learn about semantic search in general ? You can read my medium post on the topic.

Also see laion5B and semantic search at billions scale to read more on how to make this scale to billion of samples.

If you believe in making reusable tools to make data easy to use for ML and you would like to contribute, please join the DataToML chat.

pip install clip-retrieval

If your interest it to run the laion5B index, see this doc

ClipClient allows remote querying of a clip-retrieval backend via python.

See ClipClient - Getting Started Notebook for a jupyter notebook example.

During initialization you can specify a few parameters:

backend_url: the url of the backend. (required)indice_name: specify the name of the index you want to use. (required)aesthetic_score: the aesthetic score as rated by aesthetic-predictor. Default is 9.use_mclip: whether to use a multi-lingual version of CLIP. Default is False.aesthetic_weight: the weight of the aesthetic score. Default is 0.5

modality: search over image or text in the index, one of Multimodal.IMAGE or Multimodal.TEXT. Default is Multimodal.IMAGE.num_images: the number of images to return from the API. Default is 40.deduplicate: Whether to deduplicate the result by image embedding. Default is true.use_safety_model: Whether to remove unsafe images. Default is true.use_violence_detector: Whether to remove images with violence. Default is true.For instance, to query the hosted backend for Laion5B with the default parameters:

from clip_retrieval.clip_client import ClipClient, Modality

client = ClipClient(url="https://knn.laion.ai/knn-service", indice_name="laion5B-L-14")You can find captioned images similar to the text you provide.

results = client.query(text="an image of a cat")

results[0]

> {'url': 'https://example.com/kitten.jpg', 'caption': 'an image of a kitten', 'id': 14, 'similarity': 0.2367108941078186}You can also find captioned images similar to the image you provide. Images can be passed via local path or url.

cat_results = client.query(image="cat.jpg")

dog_results = client.query(image="https://example.com/dog.jpg")You can also find captioned images similar to a clip embedding you provide.

cat_results = client.query(embedding_input=cat_embedding)To enhance an existing dataset with similar text/image pairs, you can query a directory of images and combine the results.

all_results = [result for result in [client.query(image=image) for image in os.listdir("my-images")]]

with open("search-results.json", "w") as f:

json.dump(all_results, f)You can create a dataset using the saved json results and the tool img2dataset.

img2dataset "search-results.json"

--input_format="json"

--output_folder="knn_search_dataset"

--caption_col="caption"First pick a dataset of image urls and captions (examples) then run:

You may want to run export CUDA_VISIBLE_DEVICES= to avoid using your GPU if it doesn't have enough VRAM.

wget https://github.com/rom1504/img2dataset/raw/main/tests/test_files/test_1000.parquet

clip-retrieval end2end test_1000.parquet /tmp/my_output

Then go to http://localhost:1234 and enjoy searching among your pictures

Use --run_back False if you don't want to run the backend

Get some images in an example_folder, for example by doing:

pip install img2dataset

echo 'https://placekitten.com/200/305' >> myimglist.txt

echo 'https://placekitten.com/200/304' >> myimglist.txt

echo 'https://placekitten.com/200/303' >> myimglist.txt

img2dataset --url_list=myimglist.txt --output_folder=image_folder --thread_count=64 --image_size=256

You can also put text files with the same names as the images in that folder, to get the text embeddings.

Then run clip-retrieval inference --input_dataset image_folder --output_folder embeddings_folder

Output folder will contain:

This scales to million of samples. At 1400 sample/s of a 3080, 10M samples can be processed in 2h.

clip_inference turn a set of text+image into clip embeddings

"open_clip:ViT-B-32/laion2b_s34b_b79k" to use the open_clip or "hf_clip:patrickjohncyh/fashion-clip" to use the hugging face clip model.DeepSparse is an inference runtime for fast sparse model inference on CPUs. There is a backend available within clip-retrieval by installing it with pip install deepsparse-nightly[clip], and specifying a clip_model with a prepended "nm:", such as "nm:neuralmagic/CLIP-ViT-B-32-256x256-DataComp-s34B-b86K-quant-ds" or "nm:mgoin/CLIP-ViT-B-32-laion2b_s34b_b79k-ds".

If you wish to have more control over how inference is run, you can create and call workers directly using clip-retrieval inference.worker

Example Usage:

clip-retrieval inference.worker

--tasks="[0]"

--input_dataset="input/folder/{000000..000100}.tar"

--output_folder="example/path"

--input_format="webdataset"

--output_partition_count="1"Doing so will invoke a single worker that can be instructed to focus on a specific subset of the input_dataset. That worker will sequentially process the tasks passed to it. Here, tasks is a lists of partition_id's that this worker will be responsible for.

To manually compute the number of tasks, use the following formula: number_samples / wds_number_file_per_input_file.

The API is very similar to clip-retrieval inference with some minor changes:

partition_id's that this worker is responsible for computing. (required)"open_clip:ViT-B-32-quickgelu" to use the open_clip or "hf_clip:patrickjohncyh/fashion-clip" to use the hugging face clip model.Note: The worker does not accept the following arguments

- write_batch_size Write batch size (default 10**6)

- distribution_strategy choose how to distribute the job, see distribution section for details (default sequential)

- wds_number_file_per_input_file estimation of the number of sample per tar if using wds and not specifying output_partition_count (default 10000)

- any of the SLURM arguments

Example of hdfs query using webdataset format: `clip_inference --input_dataset "pipe:hdfs dfs -cat /myfolder/webdataset/{00000..00010}.tar" --output_folder "hdfs://myfolder/embeddings" --input_format webdataset

`clip_inference --input_dataset "pipe:aws s3 cp --quiet s3://myfolder/webdataset/{00000..00010}.tar -" --output_folder "s3://myfolder/embeddings" --input_format webdataset

To run this on multiple nodes (and multiple gpus), see tutorial at docs/distributed_clip_inference.md

Clip index takes as input the output of clip inference and makes an index out of it using autofaiss

clip-retrieval index --embeddings_folder embeddings_folder --index_folder index_folder

--max_index_memory_usage "16G" option allow configuring the amount of ram the index will consume. More ram, better knn recall (Default 4G).--current_memory_available 24G allows controlling how much ram is used during the creation process (Default 16G).--image_subfolder "img_emb" allows to specify a subfolder for the image embeddings which is concatenated to the --embeddings_folder option (Default img_emb).--text_subfolder "text_emb" allows to specify a subfolder for the text embeddings which is concatenated to the --embeddings_folder option (Default text_emb).--copy_metadata True makes it possible to choose whether to copy metadata or not at the end of the process (Default True).--nb_cores 8 allows controlling the number of threads (Default None, which will use all cores).The output is a folder containing:

Thanks to autofaiss and faiss, this scales to hundred of million of samples in a few hours.

You may want to carefully pick how much memory to use for your index in order to maximize the knn recall.

autofaiss index selection colab can help along with autofaiss score_index command to check the recall of your index. In general indices using more memory get a better recall and hence are closer to a naive (slow) knn

Once the embeddings are computed, you may want to filter out the data by a specific query.

For that you can run clip-retrieval filter --query "cat" --output_folder "cat/" --indice_folder "indice_folder"

It will copy the 100 best images for this query in the output folder.

Using the --num_results or --threshold may be helpful to refine the filter

Thanks to fast knn index, this can run in real time (<10ms) for large K values (100000), and in minutes for very large K values.

This scripts works for small datasets. For larger ones, please check [notebook/simple_filter.ipynb].

Clip back is a simple knn service backend. If using both hdf5 and faiss memory mapping, it uses only the memory used by clip which is 4GB.

Run (output_folder is the output of clip index)

echo '{"example_index": "output_folder"}' > indices_paths.json

clip-retrieval back --port 1234 --indices-paths indices_paths.jsonOptions:

--use_jit True uses jit for the clip model--clip_model "ViT-B/32" allows choosing the clip model to use. Prefix with "open_clip:" to use an open_clip model.--enable_mclip_option True loads the mclip model, making it possible to search in any language.--columns_to_return='["url", "image_path", "caption", "NSFW"] allows you to specify which columns should be fetched from the metadata and returned by the backend. It's useful to specify less in case of hdf5 caching to speed up the queries.--enable_faiss_memory_mapping=True option can be passed to use an index with memory mapping.

That decreases the memory usage to zero.--enable_hdf5 True option can be passed to enable hdf5 caching for the metadata.

HDF5 caching makes it possible to use the metadata with almost no memory usage.--use_arrow True allows using arrow instead of hdf5. Should be used along with clip_back_prepro for very large datasets (billions)--reorder_metadata_by_ivf_index True option takes advantage of the data locality property of results of a knn ivf indices: it orders the metadata collection in order of the IVF clusters. That makes it possible to have much faster metadata retrieval as the reads are then accessing a few mostly sequential parts of the metadata instead of many non sequential parts. In practice that means being able to retrieve 1M items in 1s whereas only 1000 items can be retrieved in 1s without this method. This will order the metadata using the first image index.--provide_safety_model True will automatically download and load a safety model. You need to pip install autokeras optional dependency for this to work.--provide_violence_detector True will load a violence detector, paper--provide_aesthetic_embeddings True will load the aesthetic embeddings and allow users to make the query move towards a nicer point of the clip spaceThese options can also be provided in the config file to have different options for each index. Example:

{

"laion5B": {

"indice_folder": "/mnt/laion5B/prepared_data",

"provide_safety_model": true,

"enable_faiss_memory_mapping": true,

"use_arrow": true,

"enable_hdf5": false,

"reorder_metadata_by_ivf_index": false,

"columns_to_return": ["url", "caption"],

"clip_model": "ViT-L/14",

"enable_mclip_option": false

},

"laion_400m": {

"indice_folder": "/mnt/laion400M/index100",

"provide_safety_model": true,

"enable_faiss_memory_mapping": true,

"enable_hdf5": true,

"use_arrow": false,

"reorder_metadata_by_ivf_index": true,

"enable_mclip_option": true,

"clip_model": "ViT-B/32"

}

}hdf5 or arrow caching is a good idea to use if:

At this point you have a simple flask server running on port 1234 and that can answer these queries:

/indices-list -> return a list of indices/knn-service that takes as input:{

"text": "a text query",

"image": "a base64 image",

"image_url": "http://some-url.com/a.jpg",

"modality": "image", // image or text index to use

"num_images": 4, // number of output images

"indice_name": "example_index",

"num_result_ids": 4 // optional, if specified fetch this number of results in total but only num_images with metadata

}text, image and image_url are mutually exclusive and returns:

[

{

"image": "base 64 of an image",

"text": "some result text",

"id": 543

},

{

"image": "base 64 of an image",

"text": "some result text",

"id": 782

}

]Each object may also contain an url field if the metadata provides it.

The id is the position of the item in the index. It may be used to query metadata with the /metadata endpoint:

{

"indice_name": "example_index",

"ids": [543, 782]

}which returns:

{

"image": "base 64 of an image",

"text": "some result text"

// any other key available in the metadata and specified in columns_to_return cli option

}num_result_ids argument of /knn-service and /metadata can be used together to do large knn queries and then fetch the metadata only when needed. It makes sense to do that as knn search can be very efficient thanks to strong locality of reference of knn IVF index making it fast to do knn with a large K, whereas the current on-disk implementation of metadata (hdf5) does not have that property and hence cannot handle retrieving a large amount of random items fast.

In particular this can be used to implement infinite scroll in a front end.

By default the backend will also expose a front end. That front end will by default hit this backend, however you may need to specify whether this is happening over http or https, in this case use the option --default_backend to specify the backend url. --url_column allows specifying the name of the column url for the front

This backends has a 50ms latency if using memory mapped indices and metadata. Throughput is about 20 query/s. For high throughput, using a grpc server is required as well as a GPU for fast clip inference, turning off memory mapping options can also speed up requests, at the cost of high ram usage.

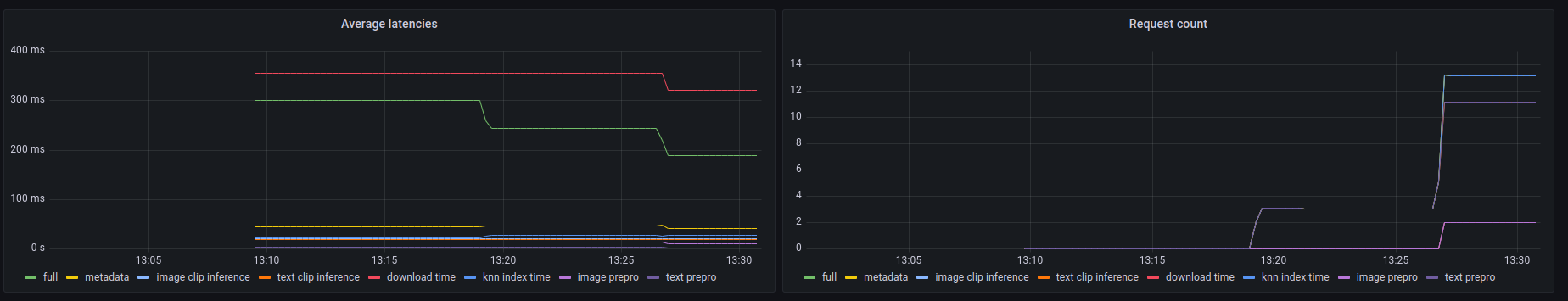

This backends also exposes a prometheus /metrics endpoint as well as an human readable summary at /metrics-summary.

This can (optionally) be used to setup a grafana dashboard for monitoring:

It can be seen on this dashboard that the slowest part of any call is fetching the image by its url in case of image url search, taking up to 300ms. For text queries or image queries, the latency is about 50ms. Here is an example of output in the metrics summary:

Among 20.0 calls to the knn end point with an average latency of 0.1889s per request, the step costs are (in order):

name description calls average proportion

0 download_time Time spent downloading an url 6 0.3215s 170.2%

1 metadata_get_time Time spent retrieving metadata 20 0.0415s 21.9%

2 knn_index_time Time spent doing a knn on the index 20 0.0267s 14.1%

3 image_clip_inference_time Time spent doing a image clip inference 6 0.0206s 10.9%

4 text_clip_inference_time Time spent doing a text clip inference 14 0.0186s 9.8%

5 image_prepro_time Time spent doing the image preprocessing 6 0.0097s 5.2%

6 text_prepro_time Time spent doing the text preprocessing 14 0.0020s 1.0%

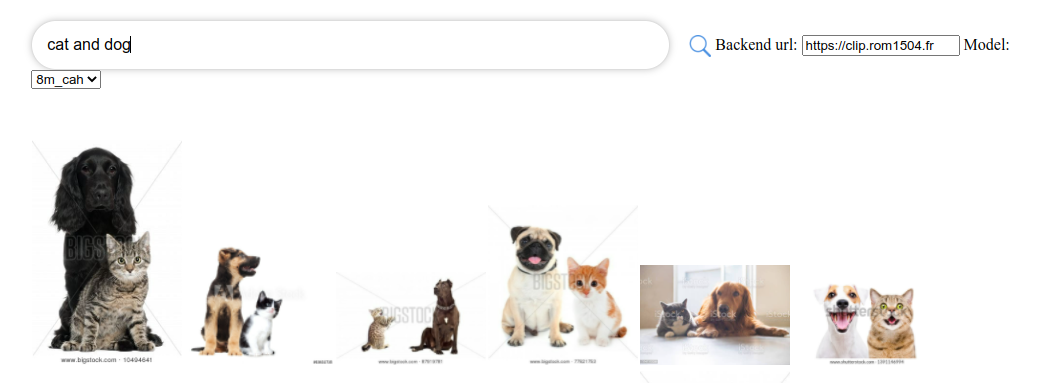

Clip front is a simple UI that connects to clip back and display the results. You can use it at clip-retrieval ui

Or you can run it yourself with:

npm install -g clip-retrieval-front

clip-retrieval-front 3005

You can also run it with clip-retrieval front or back from the python package.

For development it, go to front and run npm install then npm start.

Either locally, or in gitpod (do export PIP_USER=false there)

Setup a virtualenv:

python3 -m venv .env

source .env/bin/activate

pip install -e .

to run tests:

pip install -r requirements-test.txt

then

make lint

make test

You can use make black to reformat the code

python -m pytest -x -s -v tests -k "test_runner" to run a specific test

If you want to use the front through the python backend or frontend, run

cd front

npm install

npm run build

cd ..

pip install -e .

@misc{beaumont-2022-clip-retrieval,

author = {Romain Beaumont},

title = {clip retrieval: Easily compute clip embeddings and build a clip retrieval system with them},

year = {2022},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {url{https://github.com/rom1504/clip-retrieval}}

}