If you need a deep dive into the performance recipes exposed in this repository, then I am sure you will love my book "Spring Boot Persistence Best Practices". If you need tips and illustrations for 100+ Java persistence performance issues, then "Java Persistence Performance Illustrated Guide" is for you.

Hibernate & Spring Boot Samples

Description: This application is a sample of how to store dates, times, and timestamps in the UTC time zone. The second setting, `useLegacyDatetimeCode`, is needed only for MySQL. Otherwise, set only `hibernate.jdbc.time_zone`.
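To picture what "storing in UTC" means for a value, here is a minimal plain-Java sketch that re-expresses a local date-time from some zone as the same instant in UTC. It only illustrates the idea; it is not what Hibernate or the JDBC driver runs internally, and the class name `UtcNormalizer` is hypothetical.

```java
import java.time.LocalDateTime;
import java.time.ZoneId;
import java.time.ZoneOffset;
import java.time.ZonedDateTime;

// Hypothetical helper, for illustration only.
final class UtcNormalizer {

    private UtcNormalizer() {
    }

    // Interprets 'local' as wall-clock time in 'zone' and returns
    // the wall-clock time of the same instant in UTC.
    static LocalDateTime toUtc(LocalDateTime local, ZoneId zone) {
        ZonedDateTime zoned = local.atZone(zone);
        return zoned.withZoneSameInstant(ZoneOffset.UTC).toLocalDateTime();
    }
}
```

For example, 12:00 in a zone that is two hours ahead of UTC becomes 10:00 of the same day.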

Key points:

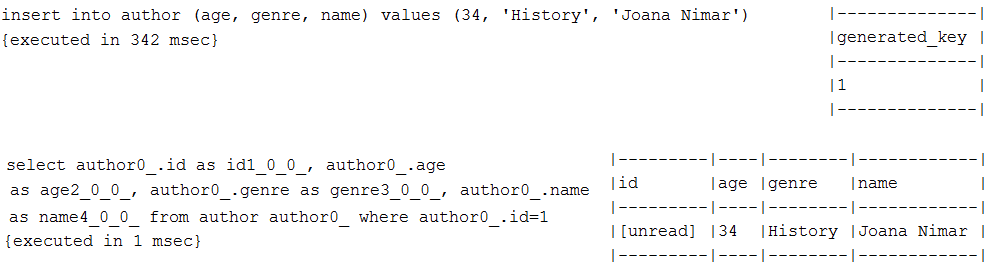

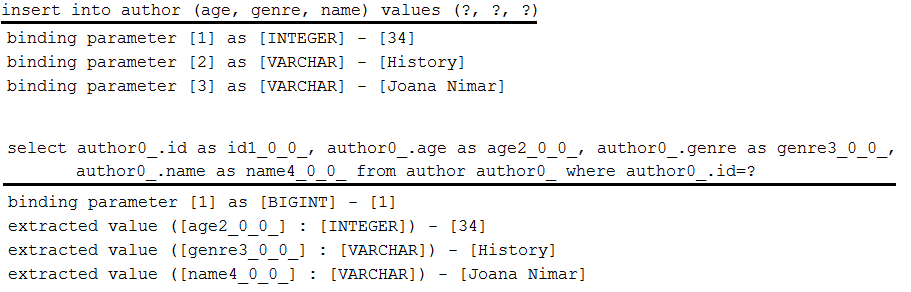

- `spring.jpa.properties.hibernate.jdbc.time_zone=UTC`
- `spring.datasource.url=jdbc:mysql://localhost:3306/screenshotdb?useLegacyDatetimeCode=false`

Description: View the prepared statement binding/extracted parameters via Log4J 2 logger settings.

Key points:

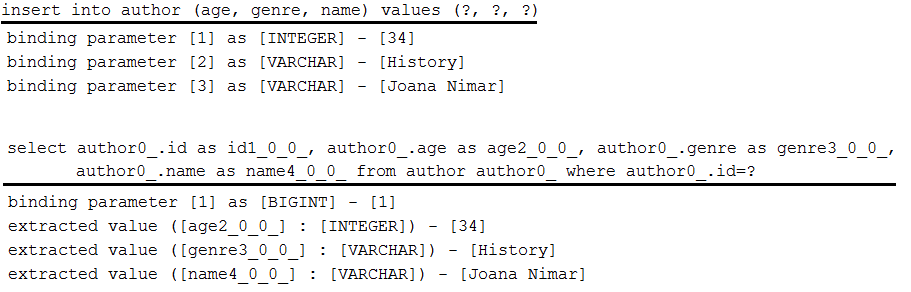

- In `pom.xml`, exclude Spring Boot's default logging
- In `pom.xml`, add the Log4j 2 dependency
- In `log4j2.xml`, add `<Logger name="org.hibernate.type.descriptor.sql" level="trace"/>`

Output example:

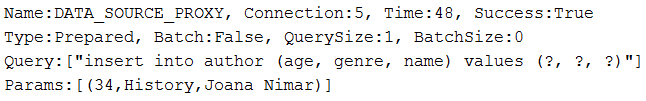

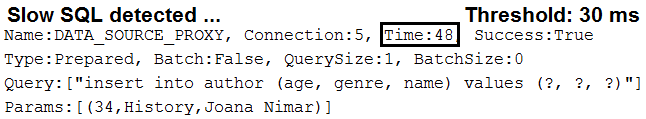

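Description: View the query details (query type, binding parameters, batch size, execution time, etc.) via DataSource-Proxy.

Key points:

- In `pom.xml`, add the `datasource-proxy` dependency
- Create a bean post processor to intercept the `DataSource` bean
- Wrap the `DataSource` bean via `ProxyFactory` and an implementation of `MethodInterceptor`

Output example: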

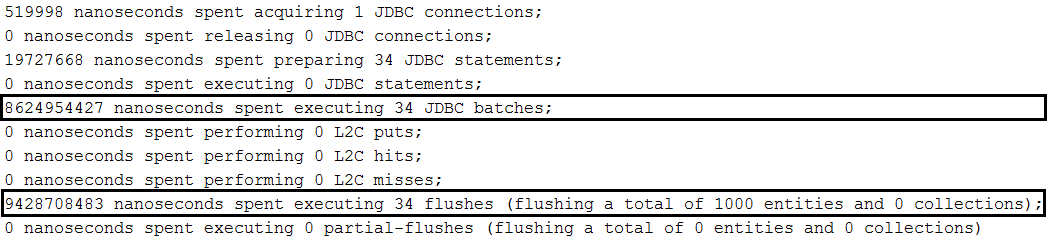

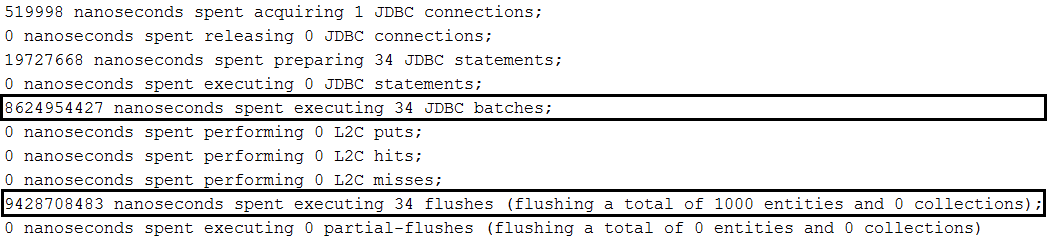

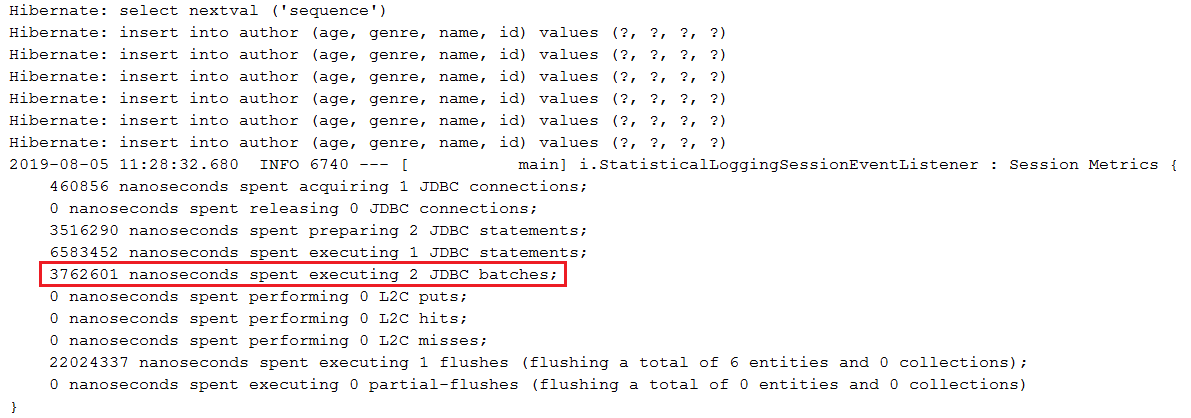

Batch Inserts Via `saveAll(Iterable<S> entities)`

Description: Batch inserts via the `SimpleJpaRepository#saveAll(Iterable<S> entities)` method in MySQL.

Key points:

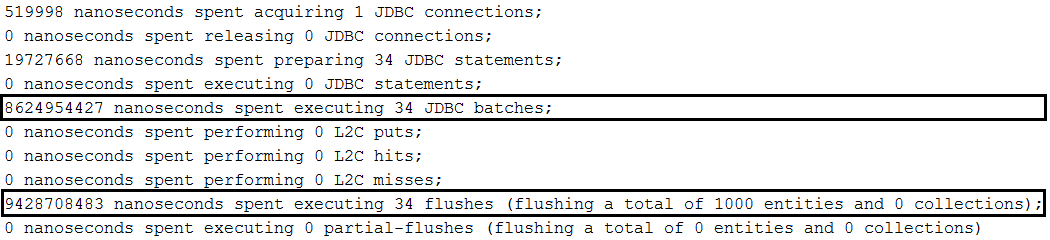

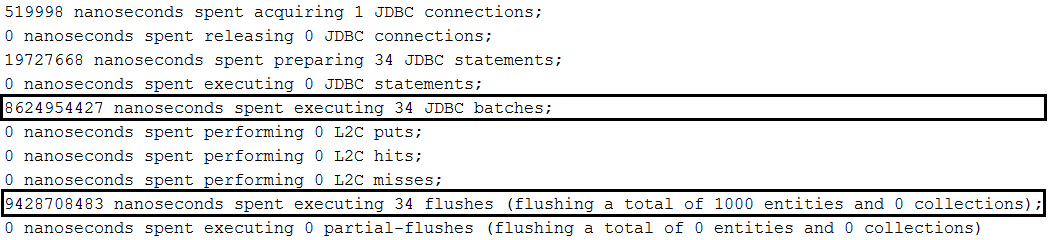

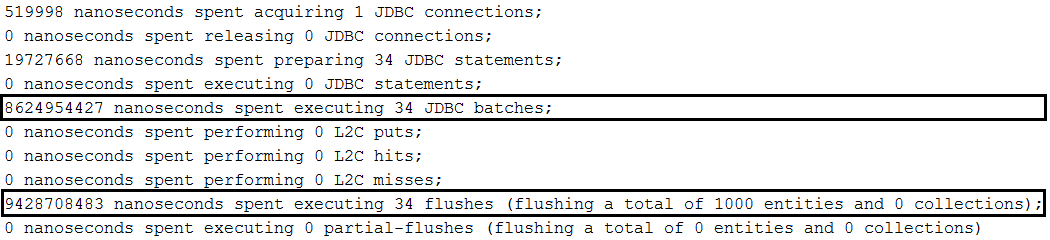

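- In `application.properties`, set `spring.jpa.properties.hibernate.jdbc.batch_size`
- In `application.properties`, set `spring.jpa.properties.hibernate.generate_statistics` (just to check that batching is working)
- In `application.properties`, set the JDBC URL with `rewriteBatchedStatements=true` (optimization for MySQL)
- In `application.properties`, set the JDBC URL with `cachePrepStmts=true` (this enables caching and is useful if you decide to set `prepStmtCacheSize`, `prepStmtCacheSqlLimit`, etc. as well; without this setting the cache is disabled)
- In `application.properties`, set the JDBC URL with `useServerPrepStmts=true` (this switches to server-side prepared statements, which may lead to a significant performance boost)
- Consider setting `spring.jpa.properties.hibernate.order_inserts=true` to optimize batching by ordering inserts
- Use the assigned generator, because `IDENTITY` disables insert batching
- Add a `@Version` property to avoid the extra `SELECT` statements fired before batching (it also prevents lost updates in multi-request transactions). The extra `SELECT` statements are the effect of using `merge()` instead of `persist()`. Behind the scenes, `saveAll()` uses `save()`, which, for entities that are not new (entities that already have an ID), calls `merge()`. This instructs Hibernate to fire a `SELECT` statement to make sure that there is no record in the database with the same identifier
- Pay attention to the number of inserts passed to `saveAll()`. Normally, the `EntityManager` should be flushed and cleared from time to time, but during the `saveAll()` execution you cannot do that. So, if `saveAll()` receives a list with a large amount of data, all that data hits the persistence context (first-level cache) and stays in memory until flush time. Using a relatively small amount of data is fine (in this example, each batch of 30 entities runs in a separate transaction and persistence context)
- The `saveAll()` method returns a `List<S>` containing the persisted entities; each persisted entity is added to this list. If you don't need this `List`, then it is created for nothing
- Make sure that the second-level cache is disabled via `spring.jpa.properties.hibernate.cache.use_second_level_cache=false`

Description: This application is a sample of batching inserts via `EntityManager` in MySQL. This way you can easily control the `flush()` and `clear()` cycles of the persistence context (first-level cache) inside the current transaction. This is not possible via Spring Boot's `saveAll(Iterable<S> entities)`, since that method executes a single flush per transaction. Another advantage is that you can call `persist()` instead of `merge()`, which is what Spring Boot's `saveAll(Iterable<S> entities)` and `save(S entity)` use behind the scenes.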

If you want to execute a batch per transaction (recommended), check this example.
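Whichever entry point is used, running a batch per transaction starts by slicing the input into fixed-size chunks. The following is a minimal plain-Java sketch of that slicing step only; `BatchPartitioner` is a hypothetical helper, and handing each chunk to a transactional method (not shown) is left to the application.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, for illustration only.
final class BatchPartitioner {

    private BatchPartitioner() {
    }

    // Splits 'items' into consecutive chunks of at most 'batchSize' elements.
    static <T> List<List<T>> partition(List<T> items, int batchSize) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        List<List<T>> batches = new ArrayList<>();
        for (int from = 0; from < items.size(); from += batchSize) {
            int to = Math.min(from + batchSize, items.size());
            // Copy the view so each chunk is independent of the source list
            batches.add(new ArrayList<>(items.subList(from, to)));
        }
        return batches;
    }
}
```

With 1000 items and a batch size of 30, this yields 34 chunks: 33 full ones and a last one holding 10 items.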

핵심 사항:

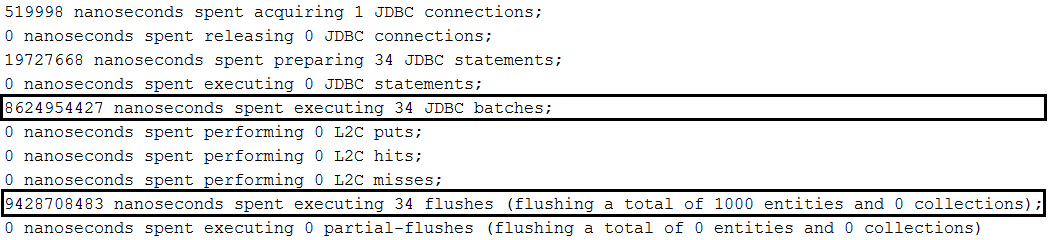

application.properties 에서 spring.jpa.properties.hibernate.jdbc.batch_size 설정하십시오.application.properties 에서 spring.jpa.properties.hibernate.generate_statistics 설정합니다(일괄 처리가 작동하는지 확인하기 위해).application.properties 에서 rewriteBatchedStatements=true 로 JDBC URL을 설정합니다(MySQL 최적화).application.properties 에서 JDBC URL을 cachePrepStmts=true 로 설정합니다(캐싱을 활성화하고 prepStmtCacheSize , prepStmtCacheSqlLimit 등도 설정하기로 결정한 경우 유용합니다. 이 설정이 없으면 캐시가 비활성화됩니다).application.properties 에서 useServerPrepStmts=true 로 JDBC URL을 설정합니다(이렇게 하면 서버 측 준비된 명령문으로 전환할 수 있습니다(상당한 성능 향상으로 이어질 수 있음)).spring.jpa.properties.hibernate.order_inserts=true 설정하여 삽입을 주문하여 일괄 처리를 최적화하는 것을 고려하십시오.IDENTITY 로 인해 삽입 일괄 처리가 비활성화되므로 할당된 생성기를 사용합니다.spring.jpa.properties.hibernate.cache.use_second_level_cache=false 를 통해 두 번째 수준 캐시가 비활성화되어 있는지 확인하십시오. 출력 예:

Description: Batch inserts via `JpaContext`/`EntityManager` in MySQL.

Key points:

- In `application.properties`, set `spring.jpa.properties.hibernate.jdbc.batch_size`
- In `application.properties`, set `spring.jpa.properties.hibernate.generate_statistics` (just to check that batching is working)
- In `application.properties`, set the JDBC URL with `rewriteBatchedStatements=true` (optimization for MySQL)
- In `application.properties`, set the JDBC URL with `cachePrepStmts=true` (this enables caching and is useful if you decide to set `prepStmtCacheSize`, `prepStmtCacheSqlLimit`, etc. as well; without this setting the cache is disabled)
- In `application.properties`, set the JDBC URL with `useServerPrepStmts=true` (this switches to server-side prepared statements, which may lead to a significant performance boost)
- Consider setting `spring.jpa.properties.hibernate.order_inserts=true` to optimize batching by ordering inserts
- Use the assigned generator, because `IDENTITY` disables insert batching
- The `EntityManager` is obtained per entity type via `JpaContext#getEntityManagerByManagedType(Class<?> entity)`
- Make sure that the second-level cache is disabled via `spring.jpa.properties.hibernate.cache.use_second_level_cache=false`

Output example:

Description: Batch inserts via Hibernate session-level batching (Hibernate 5.2 or higher) in MySQL.

Key points:

- In `application.properties`, set `spring.jpa.properties.hibernate.generate_statistics` (just to check that batching is working)
- In `application.properties`, set the JDBC URL with `rewriteBatchedStatements=true` (optimization for MySQL)
- In `application.properties`, set the JDBC URL with `cachePrepStmts=true` (this enables caching and is useful if you decide to set `prepStmtCacheSize`, `prepStmtCacheSqlLimit`, etc. as well; without this setting the cache is disabled)
- In `application.properties`, set the JDBC URL with `useServerPrepStmts=true` (this switches to server-side prepared statements, which may lead to a significant performance boost)
- Consider setting `spring.jpa.properties.hibernate.order_inserts=true` to optimize batching by ordering inserts
- Use the assigned generator, because `IDENTITY` disables insert batching
- The Hibernate `Session` is obtained by unwrapping it via `EntityManager#unwrap(Session.class)`
- The batch size is set via `Session#setJdbcBatchSize(Integer size)` and read via `Session#getJdbcBatchSize()`
- Make sure that the second-level cache is disabled via `spring.jpa.properties.hibernate.cache.use_second_level_cache=false`

Output example:

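Direct Fetching Via `findById()`, JPA `EntityManager`, And Hibernate `Session`

Description: Direct fetching via Spring Data, `EntityManager`, and Hibernate `Session` examples.

Key points:

- Direct fetching via Spring Data uses `findById()`
- Direct fetching via `EntityManager` uses `find()`
- Direct fetching via `Session` uses `get()`

Note: You may also like to read the recipe "How To Enrich DTOs With Virtual Properties Via Spring Projections".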

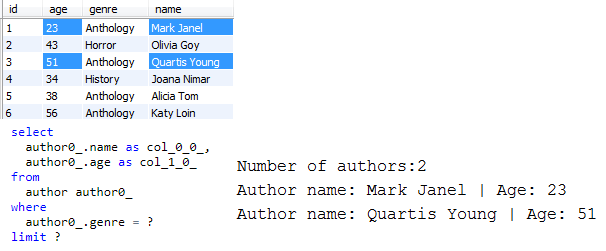

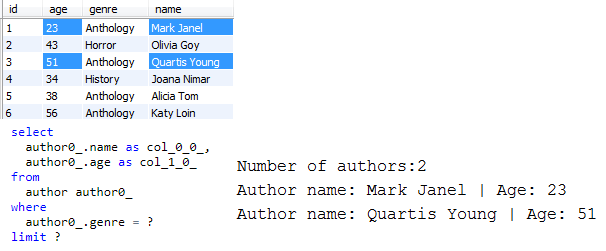

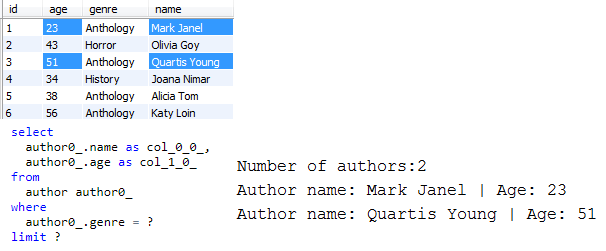

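Description: Fetch only the needed data from the database via DTOs (Spring Data Projections).

Key points:

- Write the proper query returning a `List<projection>`
- (... via `LIMIT`)

Note: Using projections is not limited to the query builder mechanism built into the Spring Data repository infrastructure. Projections can be fetched via JPQL or native queries as well. For example, in this application we use JPQL.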

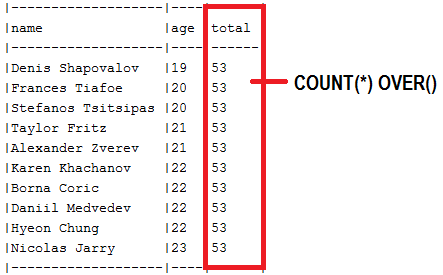

Output example (select the first 2 rows; select only "name" and "age"):


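Description: By default, the attributes of an entity are loaded eagerly (all at once), but they can be loaded lazily as well. This is useful for column types that store large amounts of data (`CLOB`, `BLOB`, `VARBINARY`, etc.) or for details that should be loaded on demand. In this application, we have an entity named `Author`. Its properties are `id`, `name`, `genre`, `avatar`, and `age`. We want to load `avatar` lazily, so `avatar` should be loaded on demand.

Key points:

- In `pom.xml`, activate Hibernate bytecode enhancement (e.g., use the Maven bytecode enhancement plugin)
- Annotate the attributes that should be loaded lazily with `@Basic(fetch = FetchType.LAZY)`
- In `application.properties`, disable Open Session in View

Check as well:

- Default values for lazy loaded attributes

- Attribute lazy loading and Jackson serialization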

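Description: A Hibernate proxy can be useful when a child entity can be persisted with a reference to its parent (`@ManyToOne` or `@OneToOne` association). In such cases, fetching the parent entity from the database (executing a `SELECT` statement) is a performance penalty and a pointless action, because Hibernate can set the underlying foreign key value for an uninitialized proxy.

Key points:

- Rely on `EntityManager#getReference()`
- Use `JpaRepository#getOne()`
- Use `load()`
- Assume two entities, `Author` and `Book`, involved in a `@ManyToOne` association (`Author` is the parent side)
- (... no `SELECT` is triggered), create a new book, set the proxy as the author of this book, and save the book (this triggers an `INSERT` in the `book` table)

Output example: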

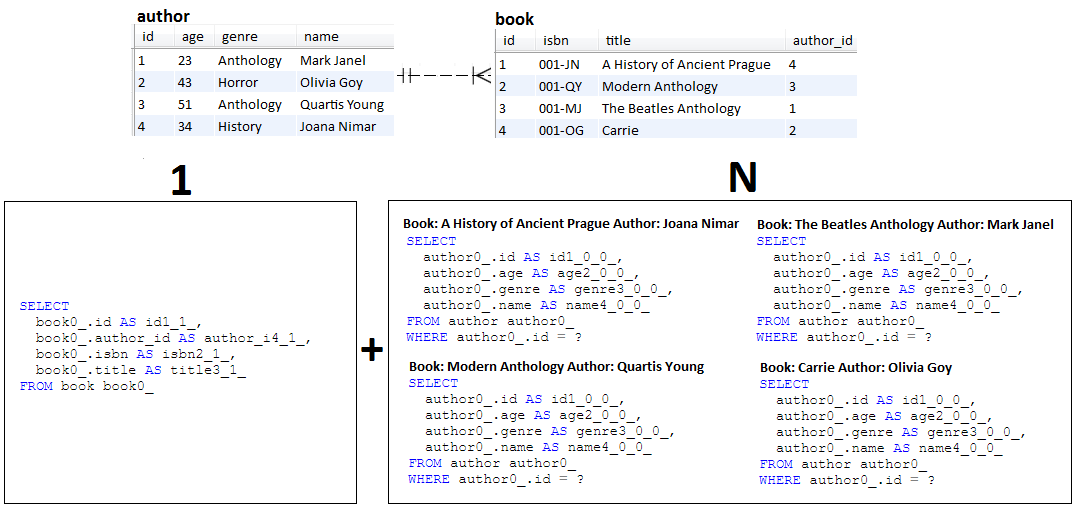

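The output shows that only an `INSERT` is triggered, and no `SELECT`.

Description: N+1 is an issue of lazy fetching (but eager fetching is not exempt). This application reproduces the N+1 behavior.

Key points:

- Define two entities, `Author` and `Book`, in a `@OneToMany` association
- Fetch all `Book` lazily, so without `Author` (results in 1 query)
- Loop the fetched `Book` collection and, for each entry, fetch the corresponding `Author` (results in N queries)
- Fetch all `Author` lazily, so without `Book` (results in 1 query)
- Loop the fetched `Author` collection and, for each entry, fetch the corresponding `Book` (results in N queries)

Output example: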

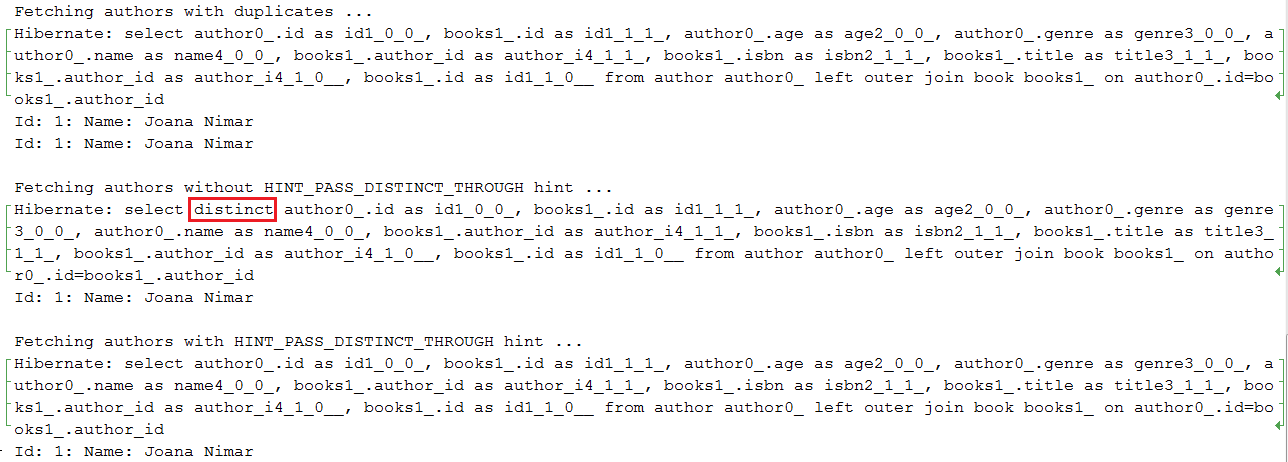
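The 1 + N arithmetic can be reproduced without a database. The sketch below is a plain-Java simulation under stated assumptions: `FakeDb` is a hypothetical in-memory stand-in that merely counts the "queries" it serves, and none of these names come from the sample application.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical simulation, for illustration only.
final class NPlusOneDemo {

    // In-memory stand-in for a database that counts every "query" it serves.
    static final class FakeDb {
        private final Map<Long, List<String>> booksByAuthor = new HashMap<>();
        int queryCount = 0;

        void addAuthor(long authorId, List<String> titles) {
            booksByAuthor.put(authorId, titles);
        }

        // The single query that loads all authors, without their books
        List<Long> findAllAuthorIds() {
            queryCount++;
            return new ArrayList<>(booksByAuthor.keySet());
        }

        // One additional query per author, as a lazy association would fire
        List<String> findBooksByAuthorId(long authorId) {
            queryCount++;
            return booksByAuthor.get(authorId);
        }
    }

    // Loads the authors (1 query), then the books of each author (N queries).
    static int loadLazily(FakeDb db) {
        int books = 0;
        for (long authorId : db.findAllAuthorIds()) {
            books += db.findBooksByAuthorId(authorId).size();
        }
        return books;
    }
}
```

With three authors registered, `loadLazily` leaves `queryCount` at 4: one query for the authors plus one per author.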

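Optimizing `SELECT DISTINCT` Via The `HINT_PASS_DISTINCT_THROUGH` Hint

Description: Starting with Hibernate 5.2.2, we can optimize JPQL (HQL) query entities of type `SELECT DISTINCT` via the `HINT_PASS_DISTINCT_THROUGH` hint. Keep in mind that this hint is useful only for JPQL (HQL) `JOIN FETCH` queries. It is not useful for scalar queries (e.g., `List<Integer>`), DTOs, or HHH-13280. In such cases, the `DISTINCT` JPQL keyword needs to be passed to the underlying SQL query. This instructs the database to remove duplicates from the result set.

Key points:

- Use `@QueryHints(value = @QueryHint(name = HINT_PASS_DISTINCT_THROUGH, value = "false"))`

Output example:

Note: The Hibernate Dirty Checking mechanism is responsible for identifying entity modifications at flush time and for triggering the corresponding `UPDATE` statements on our behalf.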

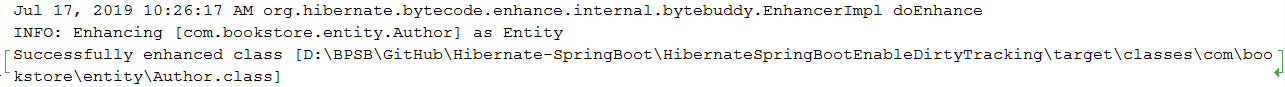

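Description: Prior to Hibernate version 5, the Dirty Checking mechanism relied on the Java Reflection API to check every property of every managed entity. Starting with Hibernate version 5, the Dirty Checking mechanism can rely on the Dirty Tracking mechanism (the capability of an entity to track changes to its own attributes), which requires Hibernate Bytecode Enhancement to be present in the application. The Dirty Tracking mechanism sustains better performance, especially when you have a relatively large number of entities.

For Dirty Tracking, during the Bytecode Enhancement process, the entity class bytecode is instrumented by Hibernate by adding a tracker, `$$_hibernate_tracker`. At flush time, Hibernate uses this tracker to discover the entity changes (each entity's tracker reports the changes). This is better than checking every property of every managed entity.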
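The idea of an entity tracking its own changes can be pictured with a hand-written analogue. The sketch below is only a simplified illustration in plain Java: `TrackedAuthor` and its methods are hypothetical, and the code that Hibernate actually generates during bytecode enhancement looks different.

```java
import java.util.LinkedHashSet;
import java.util.Objects;
import java.util.Set;

// Hypothetical hand-written analogue of a self-tracking entity.
final class TrackedAuthor {

    private String name;
    private int age;

    // Names of the attributes modified since the last (simulated) flush
    private final Set<String> dirtyAttributes = new LinkedHashSet<>();

    TrackedAuthor(String name, int age) {
        this.name = name;
        this.age = age;
    }

    void setName(String name) {
        // Record the change only if the value really differs
        if (!Objects.equals(this.name, name)) {
            this.name = name;
            dirtyAttributes.add("name");
        }
    }

    void setAge(int age) {
        if (this.age != age) {
            this.age = age;
            dirtyAttributes.add("age");
        }
    }

    String getName() {
        return name;
    }

    int getAge() {
        return age;
    }

    boolean isDirty() {
        return !dirtyAttributes.isEmpty();
    }

    // Returns a copy, so callers cannot alter the tracker state
    Set<String> getDirtyAttributes() {
        return new LinkedHashSet<>(dirtyAttributes);
    }

    // Would be called after a flush has written the changes
    void clearDirtyAttributes() {
        dirtyAttributes.clear();
    }
}
```

At "flush time", a caller only has to ask each entity for `getDirtyAttributes()` instead of comparing every property against a snapshot.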

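Commonly (by default), the instrumentation takes place at build time, but it can be configured to take place at runtime or deploy time as well. It is preferable to do it at build time, to avoid overhead at runtime.

Adding Bytecode Enhancement and enabling Dirty Tracking can be done via a plugin added through Maven or Gradle (Ant can be used as well). We use Maven, so we add it in `pom.xml`.

Key points:

- Add the Bytecode Enhancement plugin to the `pom.xml` file

Output example:

The effect of Bytecode Enhancement can be seen in `Author.class` here. Notice how the bytecode was instrumented with `$$_hibernate_tracker`.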

Using `Optional`

Description: This application is an example of how to correctly use the Java 8 `Optional` in entities and queries.
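As a minimal plain-Java sketch of the pattern, the lookup below returns an `Optional` instead of `null`, and the caller chooses the fallback. `InMemoryAuthorRepository` is a hypothetical in-memory stand-in, not a Spring Data repository.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical in-memory stand-in for a repository.
final class InMemoryAuthorRepository {

    private final Map<Long, String> namesById = new HashMap<>();

    void save(long id, String name) {
        namesById.put(id, name);
    }

    // A missing author is an empty Optional, never null
    Optional<String> findNameById(long id) {
        return Optional.ofNullable(namesById.get(id));
    }

    // The caller decides what a missing value means
    String displayName(long id) {
        return findNameById(id)
                .map(String::toUpperCase)
                .orElse("(unknown author)");
    }
}
```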

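Key points:

- Use the Spring Data built-in query methods that return `Optional` (e.g., `findById()`)
- Write your own queries that return `Optional`
- Use `Optional` in entities
- Check the `data-mysql.sql` file

The Best Way To Map The `@OneToMany` Bidirectional Association

Description: This application is a proof of concept of how to correctly implement the bidirectional `@OneToMany` association from the performance perspective.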

핵심 사항:

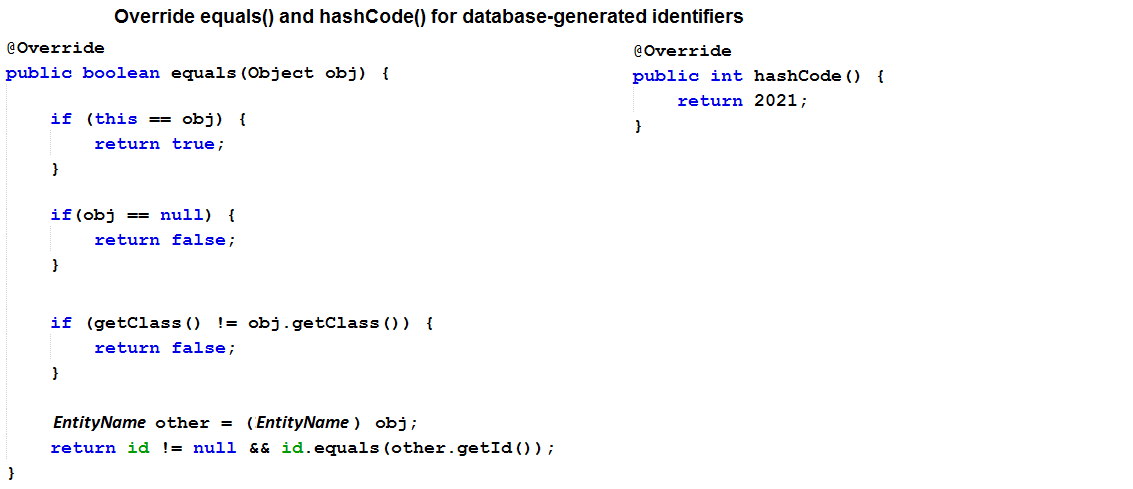

mappedBy 사용하세요.orphanRemoval 사용하세요.@NaturalId )) 및/또는 데이터베이스 생성 식별자를 사용하고 여기에서와 같이 equals() 및 hashCode() 메서드를 (자식 측에서) 적절하게 재정의합니다.toString() 재정의해야 하는 경우 엔터티가 데이터베이스에서 로드될 때 가져온 기본 속성만 포함하도록 주의하세요. 참고: 제거 작업, 특히 하위 엔터티 제거에 주의하세요. CascadeType.REMOVE 및 orphanRemoval=true 너무 많은 쿼리를 생성할 수 있습니다. 이러한 시나리오에서는 대량 작업에 의존하는 것이 대부분 삭제를 위한 가장 좋은 방법입니다.

설명: 이 애플리케이션은 JpaRepository , EntityManager 및 Session 통해 쿼리를 작성하는 방법의 예입니다.

핵심 사항:

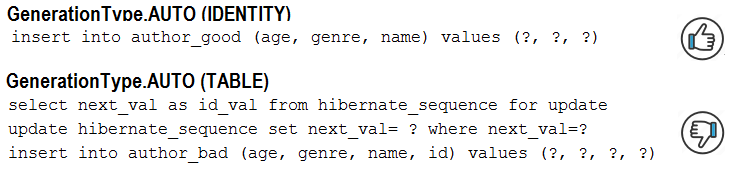

JpaRepository 의 경우 @Query 또는 Spring 데이터 쿼리 생성을 사용하세요.EntityManager 및 Session 의 경우 createQuery() 메소드를 사용하십시오.AUTO 생성기 유형을 피하는 이유와 방법 설명: MySQL 및 Hibernate 5에서 GenerationType.AUTO 생성기 유형은 TABLE 생성기를 사용하게 됩니다. 이는 상당한 성능 저하를 추가합니다. GenerationType.IDENTITY 또는 기본 생성기를 사용하여 이 동작을 IDENTITY 생성기로 전환할 수 있습니다.

핵심 사항:

GenerationType.AUTO 대신 GenerationType.IDENTITY 사용하세요. 출력 예:

Description: This application is an example of when calling `save()` for an entity is redundant (not necessary).

Key points:

- Hibernate triggers the corresponding `UPDATE` statements automatically, without the need to explicitly call the `save()` method
- The redundant `save()` call does not affect the number of triggered queries, but it implies a performance penalty in the underlying Hibernate processes


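Why To Avoid `(BIG)SERIAL`

Description: In PostgreSQL, using `GenerationType.IDENTITY` disables insert batching. `(BIG)SERIAL` acts "almost" like MySQL's `AUTO_INCREMENT`. In this application, we use `GenerationType.SEQUENCE`, which permits insert batching, and we optimize it via the hi/lo optimization algorithm.

Key points:

- Use `GenerationType.SEQUENCE` instead of `GenerationType.IDENTITY`
- Rely on the hi/lo algorithm to fetch a hi value in a database round trip (the hi value is used to generate a certain/given number of identifiers in memory; until all the in-memory identifiers are used up, there is no need to fetch another hi)
- You can also use the `pooled` and `pooled-lo` identifier generators (these are optimizations of hi/lo that allow external services to use the database without causing duplicate key errors)
- Optimize batching via `spring.datasource.hikari.data-source-properties.reWriteBatchedInserts=true`

Output example: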

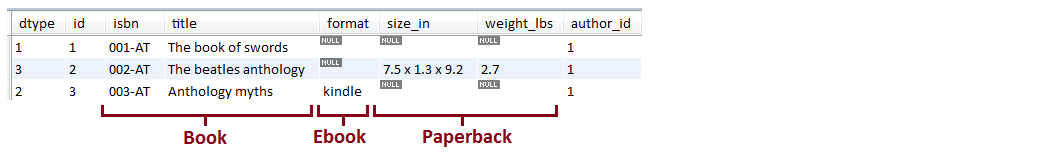

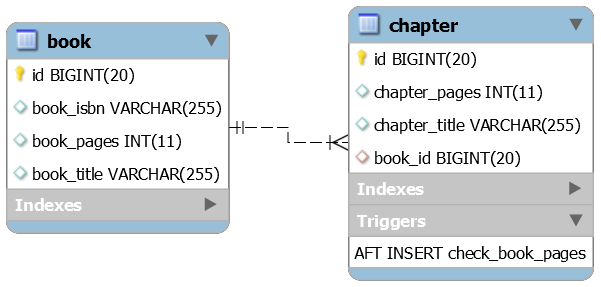

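`SINGLE_TABLE` Inheritance

Description: This application is a sample of using the JPA single table inheritance strategy (`SINGLE_TABLE`).

Key points:

- (... `@Inheritance(strategy=InheritanceType.SINGLE_TABLE)`)
- The non-nullability of subclass attributes is ensured via `@NotNull` and MySQL triggers
- (...) was optimized by declaring it of type `TINYINT`

Output example (below is the single table obtained from 3 entities):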

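Description: This application is a sample of counting and asserting the SQL statements triggered "behind the scenes". Counting the SQL statements is very useful to ensure that your code does not generate more SQL statements than you think it does (e.g., N+1 can be easily detected by asserting the number of expected statements).

Key points:

- In `pom.xml`, add the dependencies for the DataSource-Proxy library and Vlad Mihalcea's db-util library
- Create the `ProxyDataSourceBuilder` with `countQuery()`
- Reset the counter via `SQLStatementCountValidator.reset()`
- Assert `INSERT`, `UPDATE`, `DELETE`, and `SELECT` via `assertInsert/Update/Delete/Select/Count(long expectedNumberOfSql)`

Output example (when the number of expected SQL statements is not equal to the actual one, an exception is thrown):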

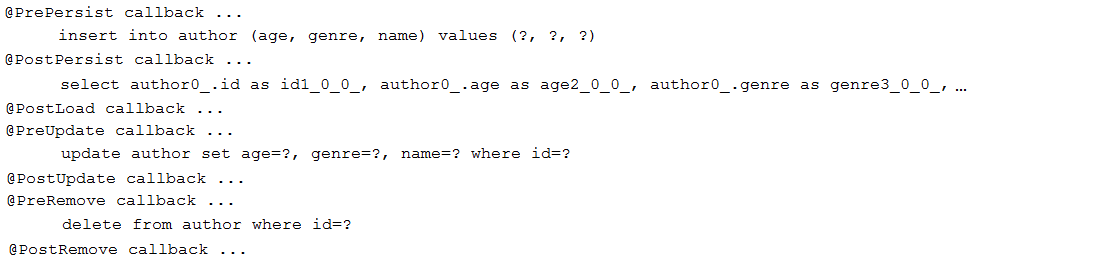

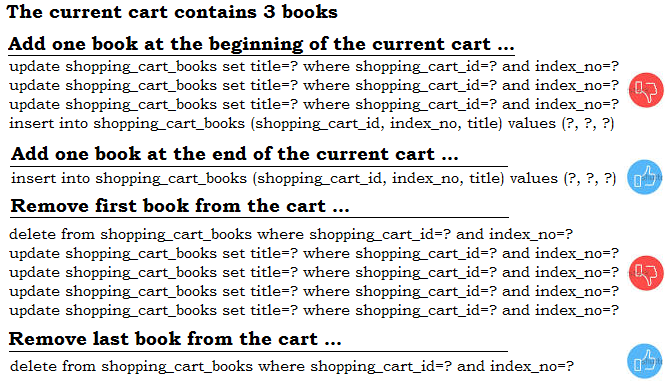

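Description: This application is a sample of setting up the JPA callbacks (`Pre/PostPersist`, `Pre/PostUpdate`, `Pre/PostRemove`, and `PostLoad`).

Key points:

- Callback methods must return `void` and take no arguments

Output example: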

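How To Use `@MapsId` For Sharing The Identifier In `@OneToOne` Relationships

Description: Instead of a regular unidirectional/bidirectional `@OneToOne`, it is better to rely on a unidirectional `@OneToOne` and `@MapsId`. This application is a proof of concept.

Key points:

- Use `@MapsId`
- Use `@JoinColumn`
- For `@OneToOne` associations, `@MapsId` shares the primary key with the parent table (the `id` property acts as both primary key and foreign key)

Note:

- `@MapsId` can be used for `@ManyToOne` as well

How To Fetch DTO Via `SqlResultSetMapping` And `EntityManager`

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on `SqlResultSetMapping` and `EntityManager`.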

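Key points:

- Use `SqlResultSetMapping` and `EntityManager`

How To Fetch DTO Via `SqlResultSetMapping` And `NamedNativeQuery`

Note: If you want to rely on the `{EntityName}.{RepositoryMethodName}` naming convention, to simply create in the repository interface methods with the same name as the native named query, then skip this application and check this one.

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on `SqlResultSetMapping` and `NamedNativeQuery`.

Key points:

- Use `SqlResultSetMapping` and `NamedNativeQuery`

How To Fetch DTO Via `javax.persistence.Tuple` And Native SQL

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on `javax.persistence.Tuple` and native SQL.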

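Key points:

- Use `javax.persistence.Tuple` and mark the query as `nativeQuery = true`

How To Fetch DTO Via `javax.persistence.Tuple` And JPQL

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on `javax.persistence.Tuple` and JPQL.

Key points:

- Use `javax.persistence.Tuple`

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on constructor expressions and JPQL.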

Key points:

- Use a query such as `SELECT new com.bookstore.dto.AuthorDto(a.name, a.age) FROM Author a`

See also:

- How To Fetch DTO Via Constructor And Spring Data Query Builder Mechanism


How To Fetch DTO Via `ResultTransformer` And Native SQL

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on Hibernate `ResultTransformer` and native SQL.

Key points:

- Use `AliasToBeanConstructorResultTransformer`
- Use `Transformers.aliasToBean()`
- Use `EntityManager.createNativeQuery()` and `unwrap(org.hibernate.query.NativeQuery.class)`
- `ResultTransformer` is deprecated, but it can be used until a replacement becomes available (probably in Hibernate 6.0) (read further)

How To Fetch DTO Via `ResultTransformer` And JPQL

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on Hibernate `ResultTransformer` and JPQL.

Key points:

- Use `AliasToBeanConstructorResultTransformer`
- Use `Transformers.aliasToBean()`
- Use `EntityManager.createQuery()` and `unwrap(org.hibernate.query.Query.class)`
- `ResultTransformer` is deprecated, but it can be used until a replacement becomes available (in Hibernate 6.0) (read further)

Description: Fetching more data than needed is prone to performance penalties. Using DTOs allows us to extract only the needed data. In this application, we rely on Blaze-Persistence entity views.

핵심 사항:

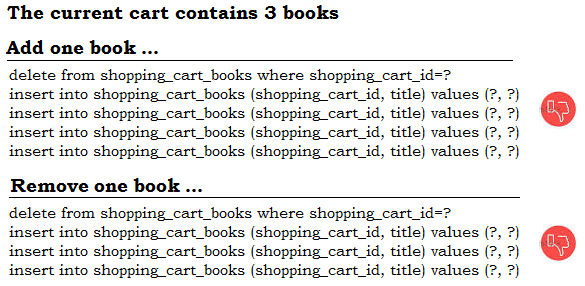

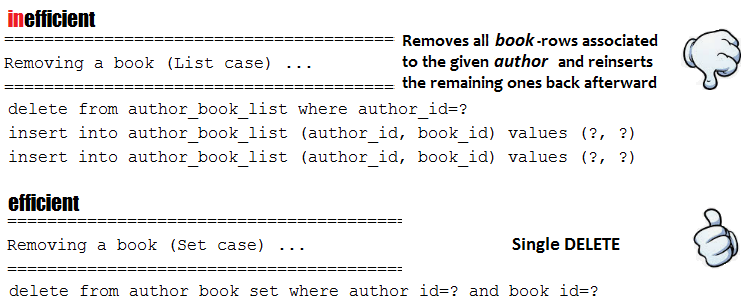

pom.xml 에 추가합니다.CriteriaBuilderFactory 및 EntityViewManager 를 통해 Blaze-Persistence 구성EntityViewRepository 확장하여 Spring 중심 저장소 작성findAll() , findOne() 등과 같은 이 저장소의 호출 메소드@ElementCollection ( @OrderColumn 제외)의 작동 방식 설명: 이 애플리케이션은 @ElementCollection 사용 시 발생할 수 있는 성능 저하를 보여줍니다. 이 경우에는 @OrderColumn 이 없습니다. 다음 항목(34)에서 볼 수 있듯이 @OrderColumn 추가하면 일부 성능 저하를 완화할 수 있습니다.

핵심 사항:

@ElementCollection 에는 기본 키가 없습니다.@ElementCollection 은 별도의 테이블에 매핑됩니다.@ElementCollection 사용하지 마세요. 삽입/삭제는 Hibernate가 기존 테이블 행을 모두 삭제하고, 메모리 내에서 컬렉션을 처리하고, 메모리에서 컬렉션을 미러링하기 위해 나머지 테이블 행을 다시 삽입하도록 합니다. 출력 예:

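How `@ElementCollection` With `@OrderColumn` Works

Description: This application reveals the performance penalties of using `@ElementCollection`. In this case, we use `@OrderColumn`. As you can see in this application (in comparison with item 33), adding `@OrderColumn` can mitigate some of the performance penalties when operations take place near the collection tail (e.g., add/remove at/from the end of the collection). Mainly, all the elements situated before the added/removed entry are left untouched, so the performance penalty can be neglected if the affected rows are close to the collection tail.

Key points:

- An `@ElementCollection` doesn't have a primary key
- An `@ElementCollection` is mapped in a separate table
- Prefer an `@ElementCollection` with `@OrderColumn`

Output example:

Note: Before reading this item, check whether Hibernate5Module is not what you are looking for.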

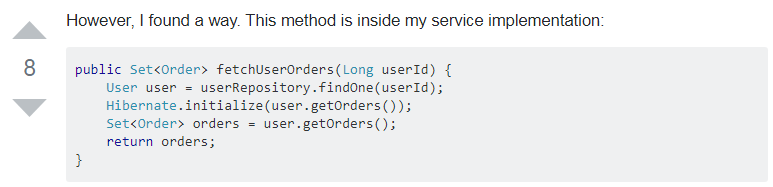

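Description: The Open Session in View (OSIV) anti-pattern is activated by default in Spring Boot. Now, imagine a lazy association (e.g., `@OneToMany`) between two entities, `Author` and `Book` (an author has more books associated). Next, a REST controller endpoint fetches an `Author` without the associated `Book`. But the View (more precisely, Jackson) forces the lazy loading of the associated `Book` as well. Since OSIV supplies the already opened `Session`, the proxy initialization takes place successfully. The solution to avoid this performance penalty starts by disabling OSIV. Further, explicitly initialize the un-fetched lazy associations. This way, the View will not force lazy loading.

Key points:

- Disable OSIV by adding this setting in `application.properties`: `spring.jpa.open-in-view=false`
- Fetch an `Author` entity and explicitly initialize its associated `Book` with (default) values (e.g., `null`)
- Set `@JsonInclude(Include.NON_EMPTY)` at the entity level to avoid rendering `null` or what is considered empty in the resulting JSON

Note: If OSIV is enabled, the developer can still initialize the un-fetched lazy associations manually, as long as this is done outside of a transaction to avoid flushing. But why does this work? Since the `Session` is open, why doesn't the manual initialization of the associations of a managed entity trigger a flush? The answer can be found in the documentation of `OpenSessionInViewFilter`, which specifies that: This filter will by default not flush the Hibernate `Session`, with the flush mode set to `FlushMode.NEVER`. It assumes to be used in combination with service layer transactions that care for the flushing: the active transaction manager will temporarily change the flush mode to `FlushMode.AUTO` during a read-write transaction, with the flush mode reset to `FlushMode.NEVER` at the end of each transaction. If you intend to use this filter without transactions, consider changing the default flush mode (through the "flushMode" property).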

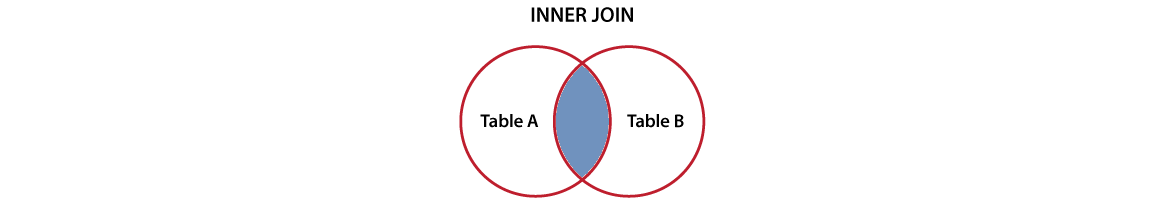

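Description: This application is a proof of concept for using Spring Projections (DTOs) and inner joins written via JPQL and native SQL (for MySQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)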

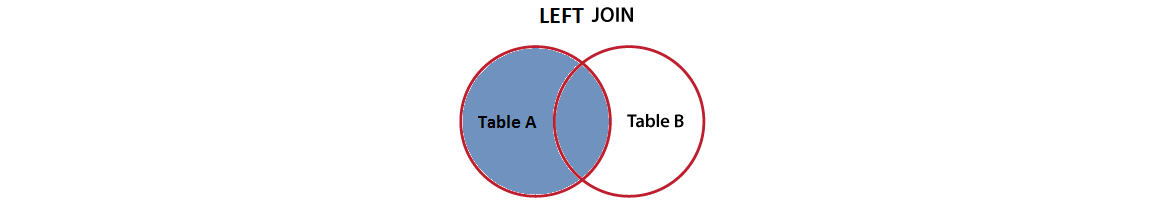

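Description: This application is a proof of concept for using Spring Projections (DTOs) and left joins written via JPQL and native SQL (for MySQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)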

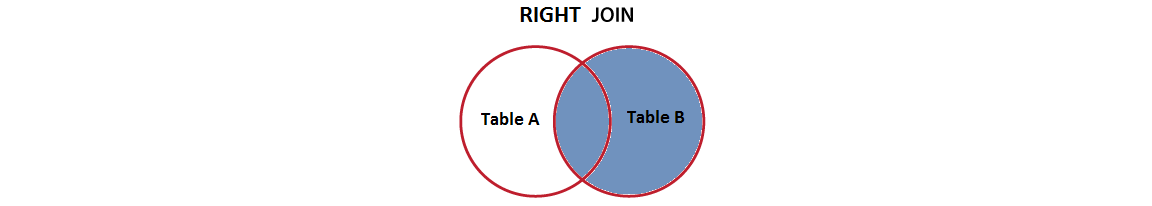

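Description: This application is a proof of concept for using Spring Projections (DTOs) and right joins written via JPQL and native SQL (for MySQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)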

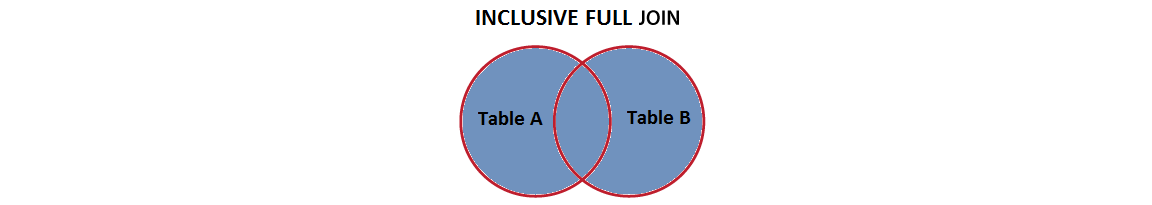

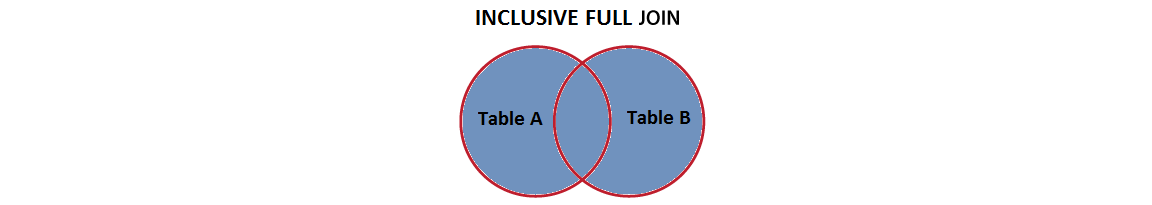

Description: This application is a proof of concept for using Spring Projections (DTOs) and inclusive full joins written via JPQL and native SQL (for PostgreSQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)


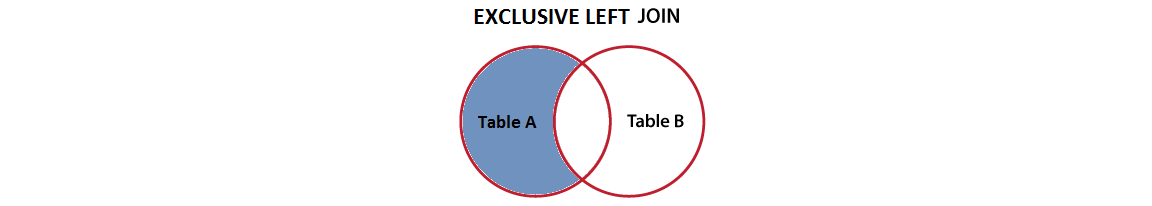

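Description: This application is a proof of concept for using Spring Projections (DTOs) and exclusive left joins written via JPQL and native SQL (for MySQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)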

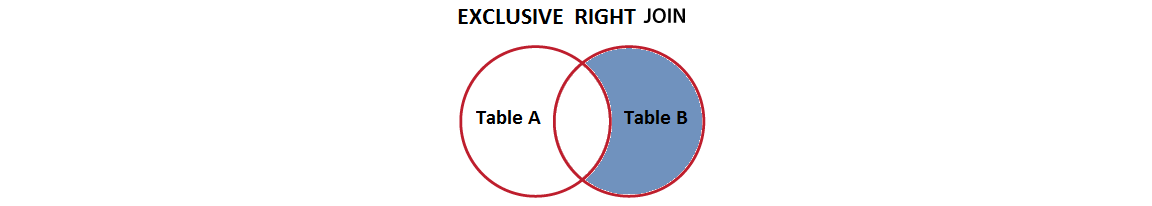

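Description: This application is a proof of concept for using Spring Projections (DTOs) and exclusive right joins written via JPQL and native SQL (for MySQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)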

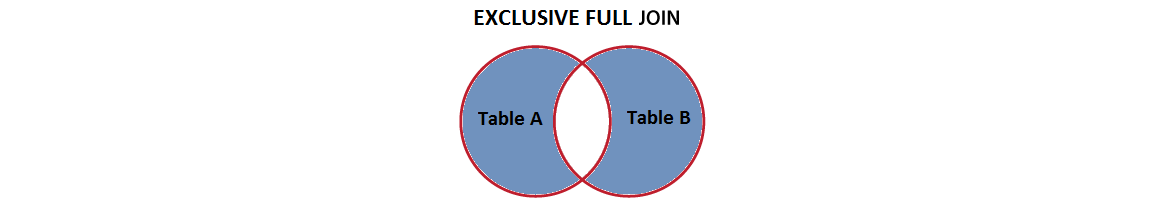

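Description: This application is a proof of concept for using Spring Projections (DTOs) and exclusive full joins written via JPQL and native SQL (for PostgreSQL).

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` association)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (check `AuthorNameBookTitle.java`)

Description: This application is a proof of concept for using Spring post-commit hooks and for how they may affect the persistence layer performance.

Key points:

Description: This application is a proof of concept for using Spring Projections (DTOs) and joining unrelated entities. Hibernate 5.1 introduced explicit joins on unrelated entities; the syntax and behavior are similar to SQL `JOIN` statements.

Key points:

- Define the entities (`Author` and `Book`, unrelated entities)
- Populate the database with test data (check the `resources/data-mysql.sql` file)
- Write the projection (`BookstoreDto`)

Why To Avoid Lombok `@EqualsAndHashCode` And `@Data` And How To Override `equals()` And `hashCode()`

Description: Entities should implement `equals()` and `hashCode()` as here. The main idea is that Hibernate requires that an entity is equal to itself across all its state transitions (transient, attached, detached, and removed). Using Lombok `@EqualsAndHashCode` (or `@Data`) will not respect this requirement.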

Key points:

Avoid these approaches:

- Using Lombok's default `@EqualsAndHashCode` behavior (entity: `LombokDefaultBook`, test: `LombokDefaultEqualsAndHashCodeTest`)
- Using Lombok `@EqualsAndHashCode` with the identifier only (entity: `LombokIdBook`, test: `LombokEqualsAndHashCodeWithIdOnlyTest`)
- Relying on the default `equals()` and `hashCode()` (entity: `DefaultBook`, test: `DefaultEqualsAndHashCodeTest`)
- Relying on `equals()` and `hashCode()` based only on the identifier (entity: `IdBook`, test: `IdEqualsAndHashCodeTest`)

Prefer these approaches:

- Relying on a business key (entity: `BusinessKeyBook`, test: `BusinessKeyEqualsAndHashCodeTest`)
- Relying on `@NaturalId` (entity: `NaturalIdBook`, test: `NaturalIdEqualsAndHashCodeTest`)
- Relying on manually assigned identifiers (entity: `IdManBook`, test: `IdManEqualsAndHashCodeTest`)
- Relying on database-generated identifiers (entity: `IdGenBook`, test: `IdGenEqualsAndHashCodeTest`)
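For the database-generated identifier case, one commonly used shape is sketched below in plain Java: `equals()` treats two objects as equal only when both carry the same non-null identifier, and `hashCode()` returns a constant so that the hash does not change when the identifier is assigned. This is a sketch under those assumptions, not a copy of the repository's `IdGenBook`; the class `GeneratedIdBook` is hypothetical and carries no JPA annotations.

```java
// Hypothetical class, for illustration only (no JPA annotations).
final class GeneratedIdBook {

    private Long id; // null until the database assigns it
    private final String title;

    GeneratedIdBook(String title) {
        this.title = title;
    }

    void setId(Long id) {
        this.id = id;
    }

    String getTitle() {
        return title;
    }

    @Override
    public boolean equals(Object obj) {
        if (this == obj) {
            return true;
        }
        if (!(obj instanceof GeneratedIdBook)) {
            return false;
        }
        GeneratedIdBook other = (GeneratedIdBook) obj;
        // Two distinct transient objects (id == null) are never equal
        return id != null && id.equals(other.id);
    }

    @Override
    public int hashCode() {
        // Constant on purpose: the hash must not change when the id is assigned
        return 31;
    }
}
```

The constant hash keeps an object findable in a `HashSet` before and after the identifier is assigned, at the cost of all instances sharing one bucket.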

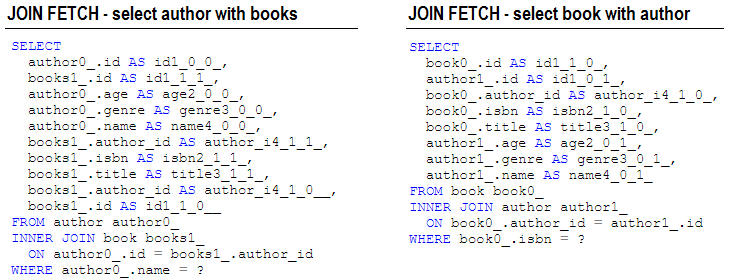

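How To Avoid `LazyInitializationException` Via `JOIN FETCH`

Description: Typically, when we get a `LazyInitializationException`, we tend to modify the association fetch type from `LAZY` to `EAGER`. That is very bad! This is a code smell. The best way to avoid this exception is to rely on `JOIN FETCH` (if you plan to modify the fetched entities) or on `JOIN` + DTO (if the fetched data is only read). `JOIN FETCH` allows initializing the association along with its parent object using a single `SELECT`. This is particularly useful for fetching associated collections.

This application is a `JOIN FETCH` example for avoiding `LazyInitializationException`.

Key points:

- Define the entities (`Author` and `Book` in a `@OneToMany` lazy bidirectional association)
- Write a `JOIN FETCH` to fetch an author including his books
- Write a `JOIN FETCH` (or `JOIN`) to fetch a book including its author

Output example: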

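Description: This is a Spring Boot example based on the following article. It is a functional implementation of Vlad's example. It is highly recommended to read that article.

Key points: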

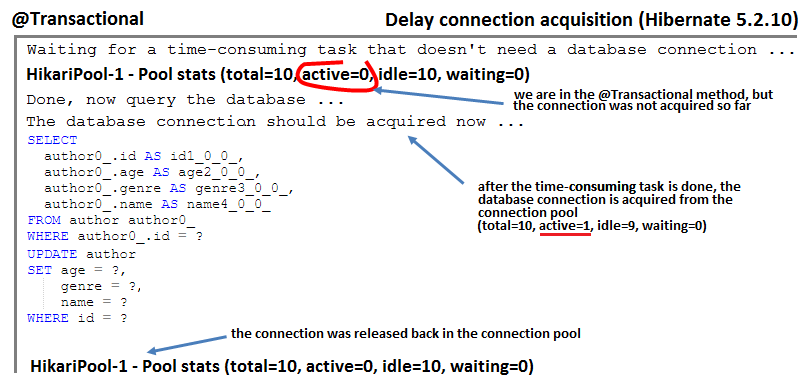

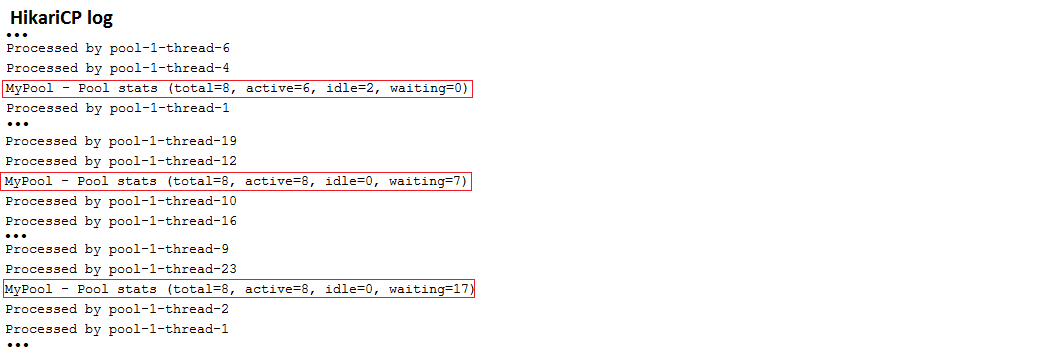

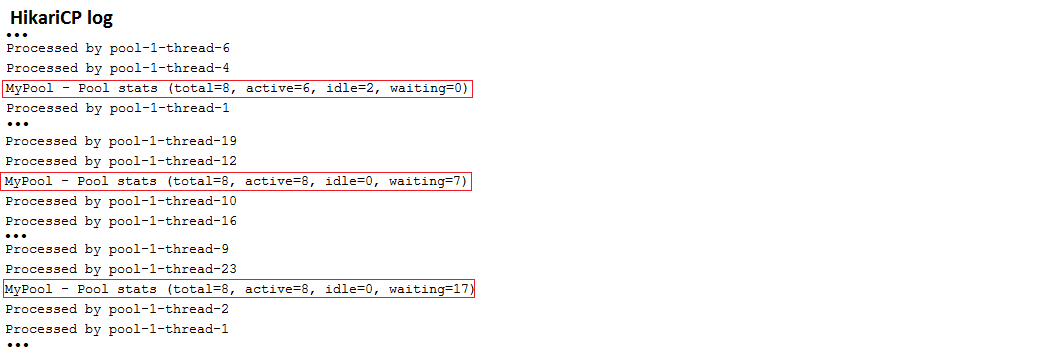

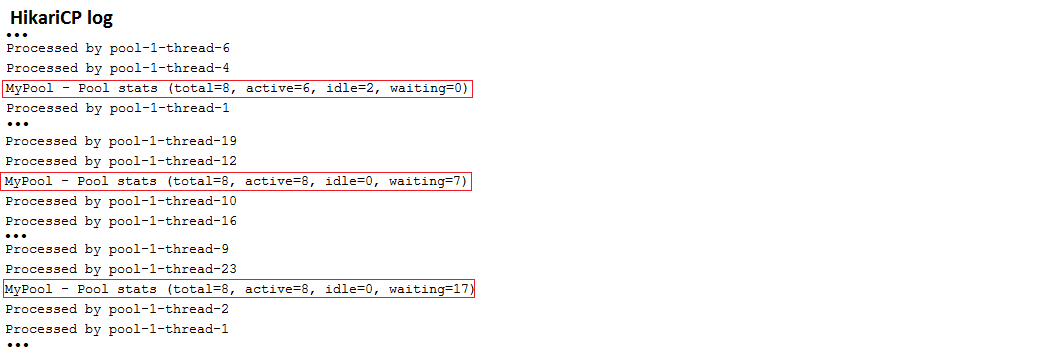

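Description: This is a Spring Boot example that exploits the Hibernate 5.2.10 capability of delaying the connection acquisition as needed. By default, in resource-local mode, a database connection is acquired immediately after calling a method annotated with `@Transactional`. If this method contains some time-consuming tasks before the first SQL statement, then the connection is held open for nothing. But Hibernate 5.2.10 allows us to delay the connection acquisition as needed. This example relies on HikariCP as the default connection pool for Spring Boot.

Key points:

- Set `spring.datasource.hikari.auto-commit=false`
- Set `spring.jpa.properties.hibernate.connection.provider_disables_autocommit=true` in `application.properties`

Output example: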

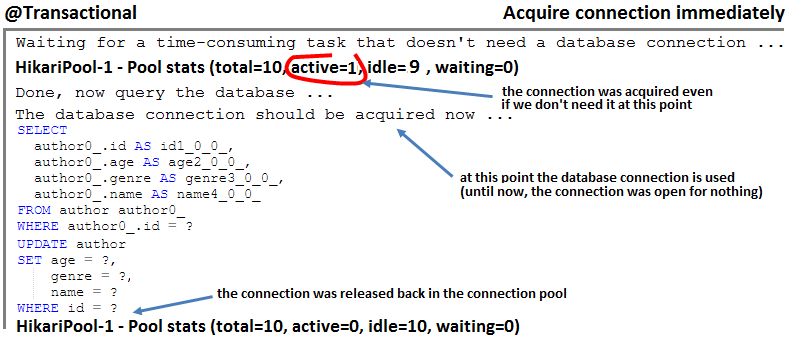

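How To Generate Sequences Of Identifiers Via The hi/lo Algorithm

Note: If systems external to your application need to insert rows in your tables, then don't rely on the hi/lo algorithm, since in such cases it may cause errors resulting from generating duplicated identifiers. Rely on the `pooled` or `pooled-lo` algorithms (optimizations of hi/lo).

Description: This is a Spring Boot example of using the hi/lo algorithm to generate 1000 identifiers in 10 database round trips, for batching 1000 inserts in batches of 30.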
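The arithmetic behind "1000 identifiers in 10 round trips" can be sketched in plain Java, assuming an increment size of 100 and an in-memory counter standing in for the database sequence. `HiLoSketch` is a hypothetical, simplified model of the algorithm; it is not Hibernate's optimizer code.

```java
// Hypothetical, simplified model of hi/lo, for illustration only.
final class HiLoSketch {

    private final int incrementSize;
    private long sequenceValue = 0; // stands in for the database sequence
    private int roundTrips = 0;     // how many times the "sequence" was called
    private long hi;
    private int lo;

    HiLoSketch(int incrementSize) {
        if (incrementSize <= 0) {
            throw new IllegalArgumentException("incrementSize must be positive");
        }
        this.incrementSize = incrementSize;
        this.lo = incrementSize; // forces a fetch on first use
    }

    // Simulates one database round trip that returns the next hi value
    private long fetchNextHi() {
        roundTrips++;
        return ++sequenceValue;
    }

    long nextId() {
        if (lo >= incrementSize) {
            // All in-memory identifiers are used up: fetch another hi
            hi = fetchNextHi();
            lo = 0;
        }
        lo++;
        return (hi - 1) * incrementSize + lo;
    }

    int getRoundTrips() {
        return roundTrips;
    }
}
```

Calling `nextId()` 1000 times on `new HiLoSketch(100)` produces the identifiers 1 to 1000 and leaves `getRoundTrips()` at 10.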

Key points:

- Use the `SEQUENCE` generator type (e.g., in PostgreSQL)
- Configure the hi/lo algorithm as in the `Author.java` entity

Output example:


@ManyToMany 협회를 구현하는 가장 좋은 방법 설명 : 이 응용 프로그램은 성능 관점에서 양방향 @ManyToMany 연관을 구현하는 것이 어떻게 올바른지에 대한 개념 증명입니다.

핵심 사항:

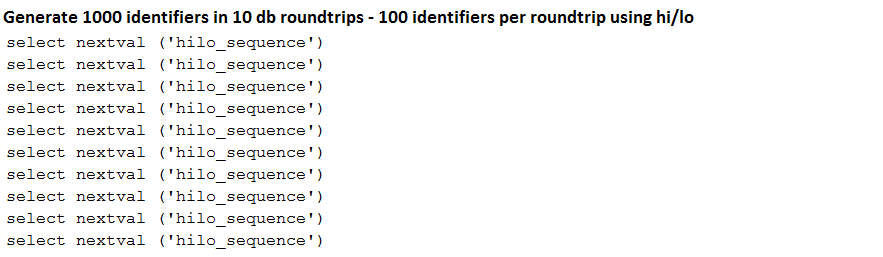

mappedBy 쪽을 선택하십시오Set 아닌 List 통해 관계 컬렉션을 실현하십시오CascadeType.PERSIST 및 CascadeType.MERGE 사용하지만 CascadeType.REMOVE/ALL 피하십시오.@ManyToMany 는 기본적으로 게으르다. 이렇게 유지하십시오!@NaturalId )) 및/또는 데이터베이스 생성 식별자를 사용하고 여기에서와 같이 equals() 및 hashCode() 메소드를 올바르게 재정의합니다.toString() 재정의 해야하는 경우 엔티티가 데이터베이스에서로드 될 때 가져온 기본 속성에 대해서만주의를 기울여야합니다.@ManyToMany Associations에서 List 대신 Set 선호합니다 설명 : 이것은 각각 List Set 사용하여 양방향 @ManyToMany 의 경우 행을 제거하는 스프링 부팅 예입니다. 결론은 Set 훨씬 더 좋다는 것입니다! 이것은 단방향에도 적용됩니다!

핵심 사항:

Set 사용하는 것은 List 보다 훨씬 효율적입니다 출력 예:

How To View Query Details Via `log4jdbc`

Description: View the query details via log4jdbc.

Key points:

- In `pom.xml` (for Maven), add the `log4jdbc` dependency

Output sample:

Description: View the prepared statement binding/extracted parameters via `TRACE`.

Key points:

- In `application.properties`, add `logging.level.org.hibernate.type.descriptor.sql=TRACE`

Output sample:

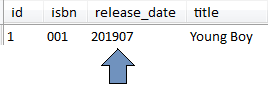

How To Store `java.time.YearMonth` As `Integer` Or `Date` Via The Hibernate Types Library

Description: Hibernate Types is a set of extra types not supported by default in Hibernate Core. One of these types is `java.time.YearMonth`. This is a Spring Boot application that uses Hibernate Types to store a `YearMonth` in a MySQL database as an integer or as a date.
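To make "store a `YearMonth` as an integer" concrete, here is a minimal plain-Java sketch of one possible encoding, `year * 100 + month`. The encoding and the class `YearMonthIntegerCodec` are assumptions for illustration (and only meant for positive years); check the library for the mapping it actually applies.

```java
import java.time.YearMonth;

// Hypothetical codec; the year * 100 + month encoding is an assumption.
final class YearMonthIntegerCodec {

    private YearMonthIntegerCodec() {
    }

    // Encodes, for example, November 2019 as 201911
    static int toInteger(YearMonth value) {
        return value.getYear() * 100 + value.getMonthValue();
    }

    // Reverses toInteger() for positive years
    static YearMonth fromInteger(int encoded) {
        return YearMonth.of(encoded / 100, encoded % 100);
    }
}
```

A side effect of this encoding is that the integer values sort in the same order as the year-month values they represent.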

Key points:

- Add Hibernate Types as a dependency in `pom.xml`
- Use `@TypeDef` to map `typeClass` to `defaultForType`

Output example:

Note: Using SQL functions in the `WHERE` part (not in the `SELECT` part) of a query in JPA 2.1 can be done via `function()`, as here.

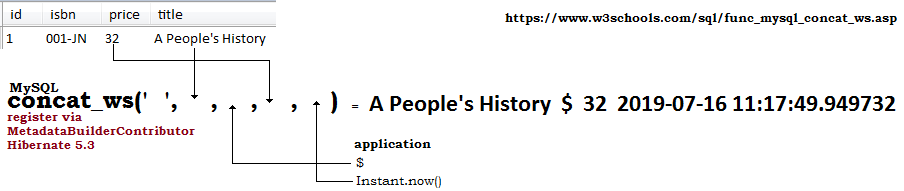

Description: Trying to use SQL functions (standard or user-defined) in JPQL queries may result in exceptions if Hibernate does not recognize them and cannot parse the JPQL query. For example, the MySQL `concat_ws` function is not recognized by Hibernate. This is a Spring Boot application based on Hibernate 5.3 that registers the `concat_ws` function via `MetadataBuilderContributor` and informs Hibernate about it via the `metadata_builder_contributor` property. This example uses `@Query` and `EntityManager` as well, so you can see two use cases.

Key points:

- Implement `MetadataBuilderContributor` and register the `concat_ws` MySQL function
- In `application.properties`, set `spring.jpa.properties.hibernate.metadata_builder_contributor` to point Hibernate to the `MetadataBuilderContributor` implementation

Output example:

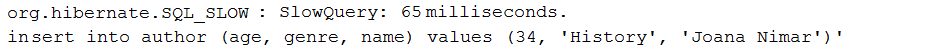

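Description: This application is a sample of logging only slow queries via DataSource-Proxy. A slow query is a query whose execution time is greater than a specified threshold in milliseconds.

Key points:

- In `pom.xml`, add the `datasource-proxy` dependency
- Create a bean post processor to intercept the `DataSource` bean
- Wrap the `DataSource` bean via `ProxyFactory` and an implementation of `MethodInterceptor`
- Override `afterQuery()`

Output example: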

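Offset Pagination: `SELECT COUNT` Subquery And `Page<dto>`

Description: This application fetches data as `Page<dto>` via Spring Boot offset pagination. Most of the time, the data that should be paginated is read-only data. Fetching the data into entities should be done only if we plan to modify that data; therefore, fetching read-only data as `Page<entity>` is not preferable, since it may end up in a significant performance penalty. The `SELECT COUNT` triggered for counting the total number of records is a subquery of the main `SELECT`. Therefore, there is a single database round trip instead of two (typically, one query is needed for fetching the data and one for counting the total number of records).

Key points:

- Write a repository that extends `PagingAndSortingRepository`
- Fetch the data as a `List<dto>`
- Create a `Page<dto>` using the `List<dto>` and a proper `Pageable`

Offset Pagination: `SELECT COUNT` Subquery And `List<dto>`

Description: This application fetches data as `List<dto>` via Spring Boot offset pagination. Most of the time, the data that should be paginated is read-only data. Fetching the data into entities should be done only if we plan to modify that data; therefore, fetching read-only data as `List<entity>` is not preferable, since it may end up in a significant performance penalty. The `SELECT COUNT` triggered for counting the total number of records is a subquery of the main `SELECT`. Therefore, there is a single database round trip instead of two (typically, one query is needed for fetching the data and one for counting the total number of records).

Key points:

- Write a repository that extends `PagingAndSortingRepository`
- Fetch the data as a `List<dto>`

If you use the `spring-boot-starter-jdbc` or `spring-boot-starter-data-jpa` "starters", you automatically get a dependency on HikariCP.

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up HikariCP via `application.properties`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users. Check the HikariCP report revealing the connection pool status.

Key points:

- In `application.properties`, rely on `spring.datasource.hikari.*` to configure HikariCP

Output sample: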


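How To Customize HikariCP Settings Via `DataSourceBuilder`

If you use the `spring-boot-starter-jdbc` or `spring-boot-starter-data-jpa` "starters", you automatically get a dependency on HikariCP.

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up HikariCP via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users. Check the HikariCP report revealing the connection pool status.

Key points:

- In `application.properties`, configure HikariCP via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

Output sample:

This application is detailed in this DZone article.

How To Customize BoneCP Settings Via `DataSourceBuilder`

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up BoneCP via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users.

Key points:

- In `pom.xml`, add the BoneCP dependency
- In `application.properties`, configure BoneCP via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

Output sample: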

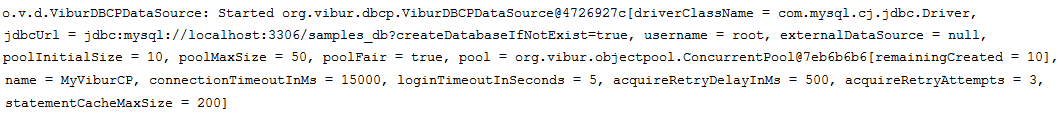

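How To Customize ViburDBCP Settings Via `DataSourceBuilder`

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up ViburDBCP via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users.

Key points:

- In `pom.xml`, add the ViburDBCP dependency
- In `application.properties`, configure ViburDBCP via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

Output sample: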

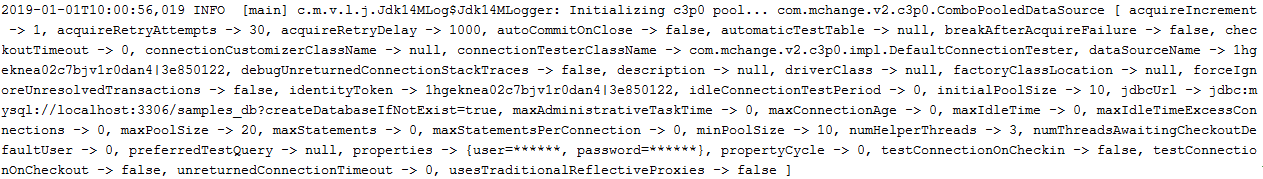

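How To Customize C3P0 Settings Via `DataSourceBuilder`

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up C3P0 via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users.

Key points:

- In `pom.xml`, add the C3P0 dependency
- In `application.properties`, configure C3P0 via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

Output sample:

How To Customize DBCP2 Settings Via `DataSourceBuilder`

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up DBCP2 via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users.

Key points:

- In `pom.xml`, add the DBCP2 dependency
- In `application.properties`, configure DBCP2 via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

How To Customize Tomcat Settings Via `DataSourceBuilder`

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.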

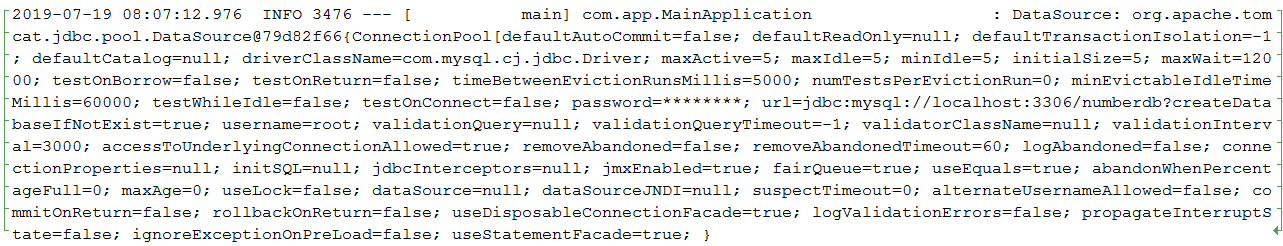

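Description: This is a kickoff application that sets up Tomcat via `DataSourceBuilder`. The `jdbcUrl` is set up for a MySQL database. For testing purposes, the application uses an `ExecutorService` for simulating concurrent users.

Key points:

- In `pom.xml`, add the Tomcat dependency
- In `application.properties`, configure Tomcat via a custom prefix (e.g., `app.datasource.*`)
- Write a `@Bean` that returns the `DataSource`

Output sample:

Note: The best way to tune the connection pool parameters consists of using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimum settings that sustain the high performance of your connection pool.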

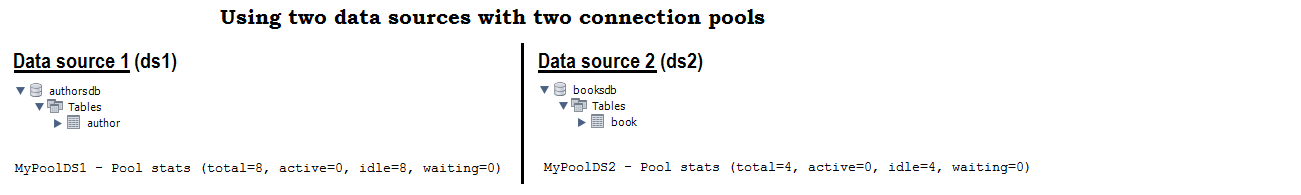

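Description: This is a kickoff application that uses two data sources (two MySQL databases, one named `authorsdb` and one named `booksdb`) with two connection pools (each database uses its own HikariCP connection pool with different settings). Based on the above items, it is pretty easy to configure two connection pools from two different providers as well.

Key points:

- In `application.properties`, configure two HikariCP connection pools via two custom prefixes (e.g., `app.datasource.ds1` and `app.datasource.ds2`)
- Write a `@Bean` that returns the first `DataSource` and mark it as `@Primary`
- Write another `@Bean` that returns the second `DataSource`
- Configure two `EntityManagerFactory` and point each of them to the packages to scan
- Put the domains and repositories for each `EntityManager` in the right packages

Output sample: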

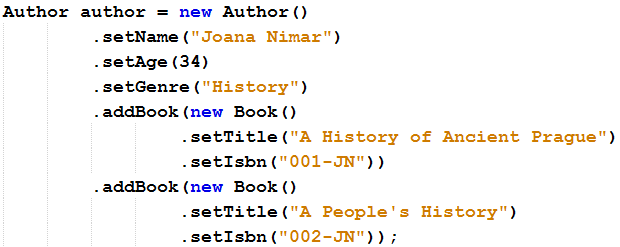

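Note: If you want to provide a fluent API without altering the setters, then consider this item.

Description: This is a sample application that alters the entity setter methods in order to empower a fluent API.

Key points:

- In the setters, return `this` instead of `void`

Fluent API example: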
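A minimal sketch of what such setters might look like, in plain Java without JPA annotations. The class `FluentSetterAuthor` and its attributes are hypothetical and only illustrate the shape of the change.

```java
// Hypothetical class, for illustration only (no JPA annotations).
final class FluentSetterAuthor {

    private String name;
    private String genre;
    private int age;

    // Each setter returns 'this' instead of void, so calls can be chained
    FluentSetterAuthor setName(String name) {
        this.name = name;
        return this;
    }

    FluentSetterAuthor setGenre(String genre) {
        this.genre = genre;
        return this;
    }

    FluentSetterAuthor setAge(int age) {
        this.age = age;
        return this;
    }

    String getName() {
        return name;
    }

    String getGenre() {
        return genre;
    }

    int getAge() {
        return age;
    }
}
```

This allows a chain such as `new FluentSetterAuthor().setName("Joana Nimar").setGenre("History").setAge(34)`. Keep in mind that setters returning a value no longer follow the classic JavaBeans convention, which some tools may expect.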

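Note: If you want to provide a fluent API by altering the setters, then consider this item.

Description: This is a sample application that adds additional methods to the entity (e.g., for `setName`, we add `name`) in order to empower a fluent API.

Key points:

- Add additional methods that return `this` instead of `void`

Fluent API example: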
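A minimal sketch of the additional-methods variant, again in plain Java without JPA annotations. The conventional `void` setters are kept untouched, and each fluent method delegates to its setter. The class `FluentMethodsAuthor` is hypothetical.

```java
// Hypothetical class, for illustration only (no JPA annotations).
final class FluentMethodsAuthor {

    private String name;
    private int age;

    // Conventional setters stay as they are
    void setName(String name) {
        this.name = name;
    }

    void setAge(int age) {
        this.age = age;
    }

    // Additional fluent methods delegate to the setters and return 'this'
    FluentMethodsAuthor name(String name) {
        setName(name);
        return this;
    }

    FluentMethodsAuthor age(int age) {
        setAge(age);
        return this;
    }

    String getName() {
        return name;
    }

    int getAge() {
        return age;
    }
}
```

Here a chain reads `new FluentMethodsAuthor().name("Joana Nimar").age(34)`, while code that relies on the standard setters keeps working.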


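`Slice<T> findAll()`

Most probably this is all you want: How To Fetch `Slice<entity>`/`Slice<dto>` Via `fetchAll`/`fetchAllDto`

Some implementations of `Slice<T> findAll()`:

- A minimalist implementation based on the hard-coded query `"SELECT e FROM " + entityClass.getSimpleName() + " e;"`
- Another minimalist implementation, based on `CriteriaBuilder`
- An implementation that allows us to provide a `Sort`, so the results can be sorted
- An implementation that allows us to provide a `Sort` and a Spring Data `Specification`
- An implementation that allows us to provide a `Sort`, a `LockModeType`, `QueryHints`, and a Spring Data `Specification`
- An implementation that allows us to provide a Spring Data `Pageable` and/or `Specification` by extending the Spring Data `SimpleJpaRepository`. Basically, this is the only implementation that returns `Page<T>` instead of `Slice<T>`, but it does not trigger the extra `SELECT COUNT`, since this was eliminated by overriding the `Page<T> readPage(...)` method of `SimpleJpaRepository`. The main drawback is that, by returning a `Page<T>`, you don't know whether there is a next page or the current one is the last. Nevertheless, there are workarounds for this as well. In this implementation, you cannot set `LockModeType` or query hints.

Story: Spring Boot provides an offset-based built-in paging mechanism that returns a `Page` or a `Slice`. Each of these APIs represents a page of data and some metadata. The main difference is that `Page` contains the total number of records, while `Slice` can only tell whether there is another page available. For `Page`, Spring Boot provides a `findAll()` method capable of taking as arguments a `Pageable` and/or a `Specification` or `Example`. In order to create a `Page` that contains the total number of records, this method triggers a `SELECT COUNT` extra query next to the query used to fetch the data of the current page. This can be a performance penalty, since the `SELECT COUNT` query is triggered every time we request a page. To avoid this extra query, Spring Boot provides a more relaxed API, the `Slice` API. Using `Slice` instead of `Page` removes the need for this extra `SELECT COUNT` query and returns the page (records) and some metadata without the total number of records. So, while `Slice` doesn't know the total number of records, it can still tell whether there is another page available after the current one or whether this is the last page. The problem is that `Slice` works fine for queries containing the SQL `WHERE` clause (including those that use the query builder mechanism built into Spring Data), but it doesn't work for `findAll()`. This method still returns a `Page` instead of a `Slice`, so the `SELECT COUNT` query is triggered for `Slice<T> findAll(...);`.

Description: This is a suite of sample applications that provide different versions of a `Slice<T> findAll(...)` method, ranging from a minimalist implementation that relies on a hard-coded query such as `"SELECT e FROM " + entityClass.getSimpleName() + " e";` to custom implementations that support sorting, specification, lock mode, and query hints, including one that extends `SimpleJpaRepository`.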

핵심 사항:

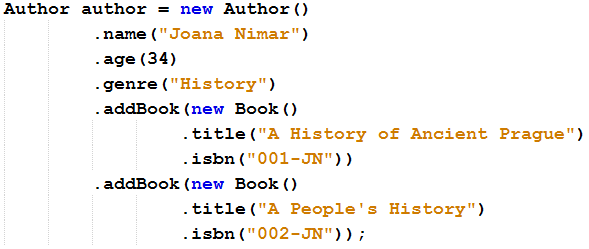

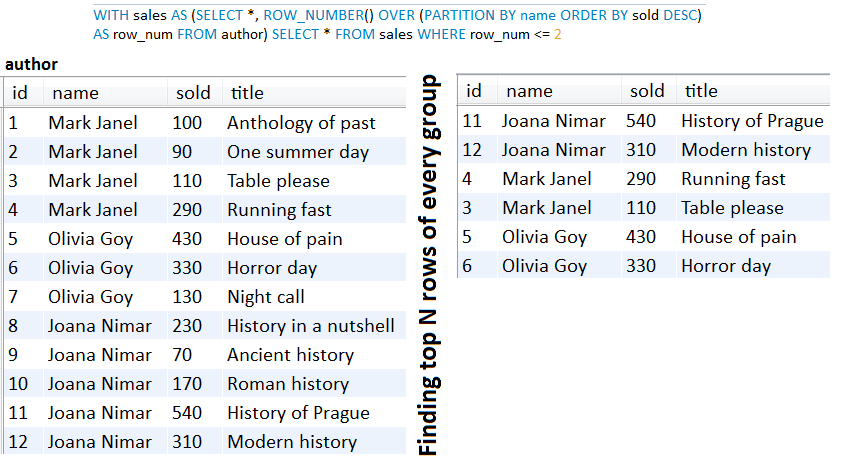

Slice<T> findAll(...) 메소드를 노출 SlicePagingRepositoryImplementation abstract 클래스 작성findAll() 메소드를 구현하여 Slice<T> (또는 Page<T> 반환하지만 총 요소 수는 없음)SliceImpl ( Slice<T> ) 또는 PageImpl ( Page<T> )을 반환합니다.readSlice() 메소드를 구현하거나 SimpleJpaRepository#readPage() 페이지를 재정의하여 SELECT COUNT 피하십시오.Author.class )를이 abstract 클래스로 전달하십시오 ( AuthorRepository )COUNT(*) OVER 및 리턴 List<dto> 설명 : 일반적으로 오프셋 페이지 매김에는 데이터를 가져 오는 데 필요한 쿼리가 하나 있고 총 레코드 수를 계산하는 데 하나가 있습니다. 그러나이 정보를 기본 SELECT 에 중첩 된 SELECT COUNT 하위 쿼리를 통해 단일 데이터베이스 Rountrip 에서이 정보를 가져올 수 있습니다. 더 좋은 점은 창 함수를 지원하는 데이터베이스 공급 업체 SELECT COUNT 경우이 응용 프로그램에서 MySQL 8에 대한 기본 쿼리 에서이 창 함수를 사용하는 COUNT(*) OVER() 에 의존하는 솔루션이 있습니다. .

Key points:

COUNT(*) OVER() example:

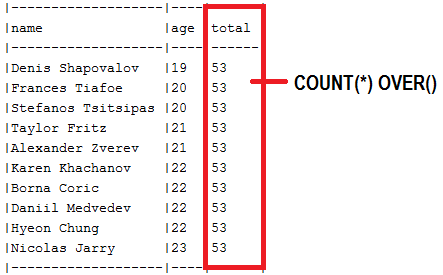

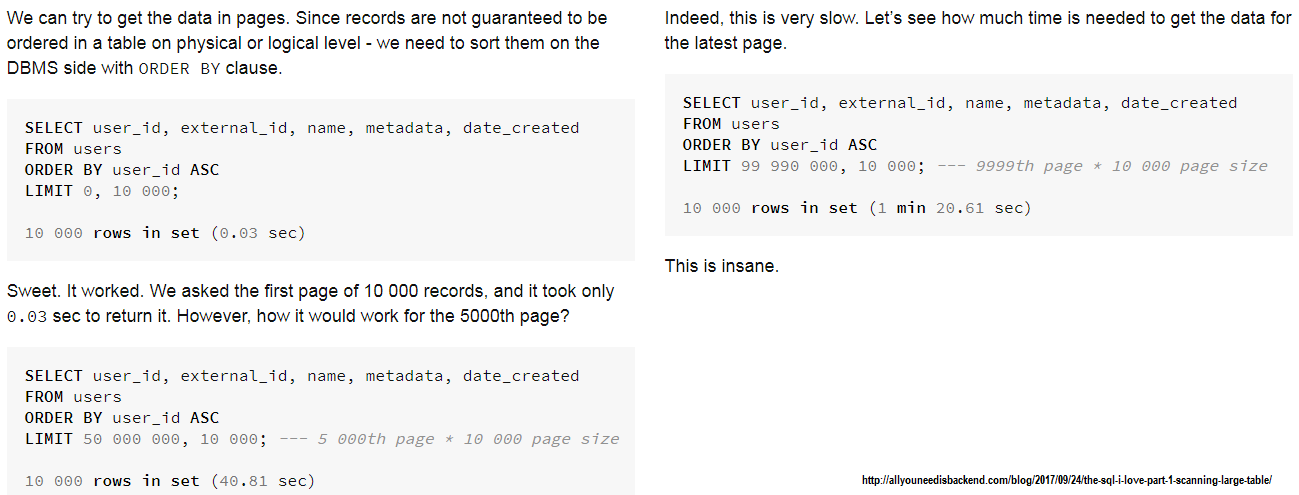
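To make the COUNT(*) OVER() idea more concrete, the following is a minimal sketch in plain Java. The query text, the column names (id, name), the author table and the classes AuthorRow, CountedPage and CountOverSketch are all assumptions made for illustration; they are not taken from the application. The sketch only shows how the rows of such a native query (each row assumed to arrive as an Object[] of id, name, total) could be split into page data and the total number of records.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical DTO holding the fetched columns; not part of the original application.
final class AuthorRow {
    final long id;
    final String name;

    AuthorRow(long id, String name) {
        this.id = id;
        this.name = name;
    }
}

// One page of rows plus the total read from the window-function column.
final class CountedPage {
    final List<AuthorRow> rows;
    final long total;

    CountedPage(List<AuthorRow> rows, long total) {
        this.rows = rows;
        this.total = total;
    }
}

final class CountOverSketch {

    // Assumed native query: every returned row carries the same total,
    // so no separate SELECT COUNT query is needed.
    static final String QUERY =
        "SELECT id, name, COUNT(*) OVER() AS total "
      + "FROM author ORDER BY id LIMIT ? OFFSET ?";

    // Each Object[] is assumed to be {id, name, total}.
    static CountedPage toPage(List<Object[]> resultSet) {
        List<AuthorRow> rows = new ArrayList<>();
        long total = 0; // stays 0 when the requested page is empty
        for (Object[] r : resultSet) {
            rows.add(new AuthorRow(((Number) r[0]).longValue(), (String) r[1]));
            total = ((Number) r[2]).longValue();
        }
        return new CountedPage(rows, total);
    }
}
```

One caveat of this design: when the requested offset lies beyond the last record, the query returns no rows, so the total cannot be read from the result set and the sketch reports 0.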

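Description: When we rely on offset paging, we pay a performance penalty for throwing away n records before reaching the desired offset. A larger n leads to a significant performance penalty. When n is large, it is better to rely on keyset pagination, which maintains a "constant" time for large datasets. To understand how badly offset can perform, check this article.

Screenshot from that article (offset pagination):

Need to know if there are more records?

By its nature, keyset doesn't use a SELECT COUNT to fetch the total number of records. But, with a little tweak, we can easily tell whether there are more records, and therefore show a button of type Next Page. If you need such a thing, then consider this application, whose climax is listed below: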

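```java
public AuthorView fetchNextPage(long id, int limit) {
    // Fetch one extra record to find out whether another page exists
    List<Author> authors = authorRepository.fetchAll(id, limit + 1);

    if (authors.size() == (limit + 1)) {
        // Drop the extra record; it only signals that a next page exists
        authors.remove(authors.size() - 1);
        return new AuthorView(authors, true);
    }

    return new AuthorView(authors, false);
}
```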

Or, like this (relying on the Author.toString() method):

```java
public Map<List<Author>, Boolean> fetchNextPage(long id, int limit) {
    // Fetch one extra record to find out whether another page exists
    List<Author> authors = authorRepository.fetchAll(id, limit + 1);

    if (authors.size() == (limit + 1)) {
        authors.remove(authors.size() - 1);
        return Collections.singletonMap(authors, true);
    }

    return Collections.singletonMap(authors, false);
}
```

A Previous Page button can be implemented easily based on the first record.
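The keyset idea itself (filter on the key column, order by it, then limit) can be sketched in plain Java over an in-memory list of ids. This is only an emulation for illustration: the class KeysetSketch, the method fetchPage and the descending order are assumptions, and a real application would push the same three steps into the SQL query.

```java
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;

final class KeysetSketch {

    // Emulates a query of the shape:
    //   SELECT ... FROM author WHERE id < ? ORDER BY id DESC LIMIT ?
    static List<Long> fetchPage(List<Long> ids, long lastSeenId, int limit) {
        return ids.stream()
                .filter(id -> id < lastSeenId)        // WHERE id < ?
                .sorted(Comparator.reverseOrder())    // ORDER BY id DESC
                .limit(limit)                         // LIMIT ?
                .collect(Collectors.toList());
    }
}
```

The next page is requested by passing the last id of the current page as lastSeenId, so no rows are counted and thrown away the way an offset would require.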

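Key points:

- Choose a column (e.g., id) to act as the pagination key
- Use this column in the WHERE and ORDER BY clauses

Description: This is a classical Spring Boot offset pagination example. However, using this approach in production is not advisable because of its performance penalties, explained below.

When we rely on offset pagination, we pay a performance penalty for throwing away n records before reaching the desired offset. A larger n leads to a significant performance penalty. Another penalty is the extra SELECT needed to count the total number of records. To understand how badly offset pagination can perform, check this article. A screenshot from that article is below:

Nevertheless, maybe this example is a little bit extreme. For relatively small datasets, offset pagination is not that bad (it is close in performance to keyset pagination), and, since Spring Boot provides built-in support for offset pagination via the Page API, it is very easy to use. However, depending on the case, we can optimize offset pagination a little, as in the following examples:

Fetch a page as a Page:

- COUNT(*) OVER and return Page<dto>
- COUNT(*) OVER and return Page<entity>
- SELECT COUNT and return Page<dto>
- SELECT COUNT and return Page<entity> via an extra column
- SELECT COUNT and return Page<projection> that maps the entity and the total number of records via a projection

Fetch a page as a List:

- COUNT(*) OVER and return List<dto>
- COUNT(*) OVER and return List<entity>
- SELECT COUNT and return List<dto>
- SELECT COUNT and return List<entity>
- SELECT COUNT and return List<projection> that maps the entity and the total number of records via a projection

But: If offset pagination is causing you performance issues and you decide to go with keyset pagination, then check here (keyset pagination).

Key points of classical offset pagination:

- Write a repository that extends PagingAndSortingRepository
- Call or write methods that return Page<entity>

Examples of classical offset pagination: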

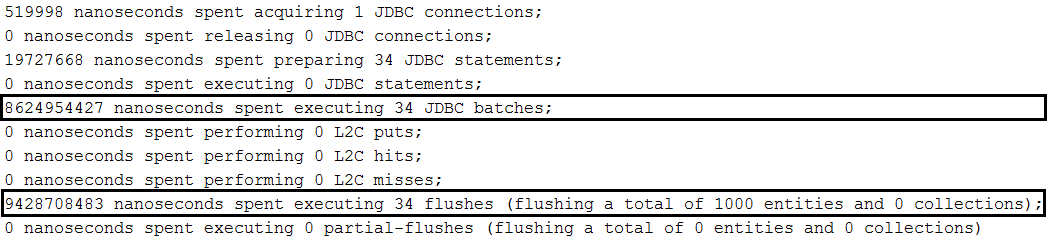

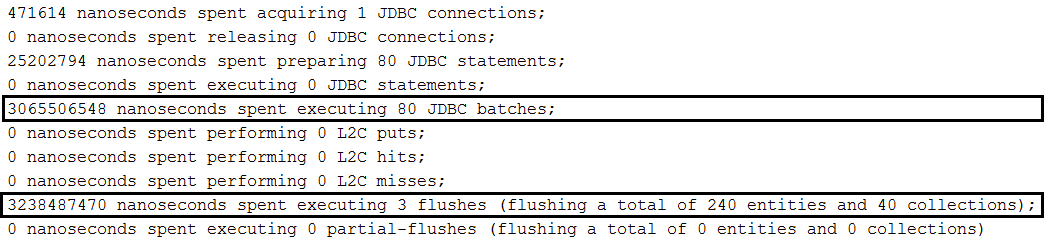

- Call findAll(Pageable): repository.findAll(PageRequest.of(page, size));
- Call findAll(Pageable) with sorting: repository.findAll(PageRequest.of(page, size, Sort.by(Sort.Direction.ASC, "name")));
- Page<Author> findByName(String name, Pageable pageable);
- Page<Author> queryFirst10ByName(String name, Pageable pageable);

Description: Let's suppose that we have a one-to-many relationship between the Author and Book entities. When we save an author, we save his books as well, thanks to cascading all/persist. We want to create a bunch of authors with books and save them in a database (e.g., a MySQL database) using the batch technique. By default, this results in batching each author and the books per author (one batch for the author and one batch for his books, another batch for the next author and another batch for his books, and so on). In order to batch authors and books, we need to order inserts as in this application.

Key points: Besides all the settings specific to batching inserts in MySQL, we need to set the following property in application.properties: spring.jpa.properties.hibernate.order_inserts=true

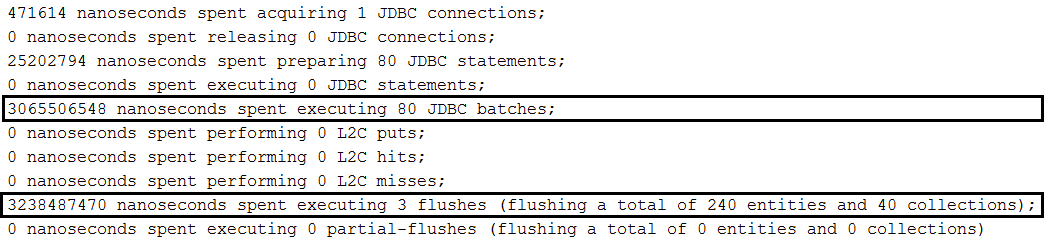

Example without ordered inserts:

Example with ordered inserts:

Implementations:

Description: Batch updates in MySQL.

핵심 사항:

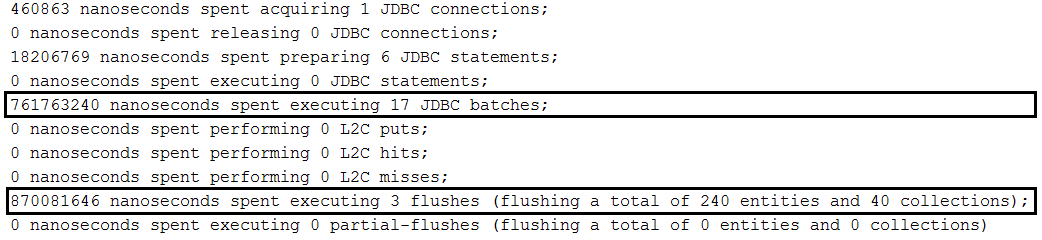

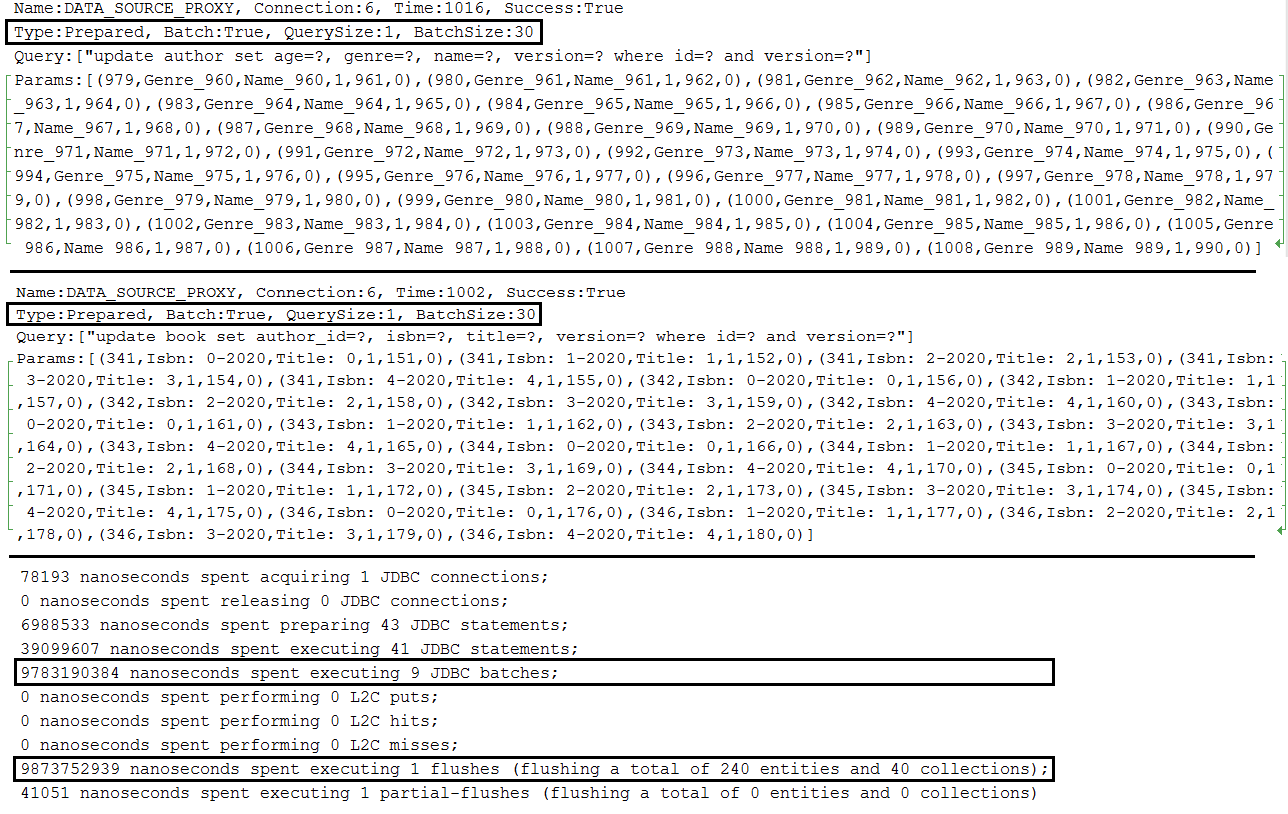

application.properties set spring.jpa.properties.hibernate.jdbc.batch_sizeapplication.properties set JDBC URL with rewriteBatchedStatements=true (optimization for MySQL, statements get rewritten into a single string buffer and sent in a single request)application.properties set JDBC URL with cachePrepStmts=true (enable caching and is useful if you decide to set prepStmtCacheSize , prepStmtCacheSqlLimit , etc as well; without this setting the cache is disabled)application.properties set JDBC URL with useServerPrepStmts=true (this way you switch to server-side prepared statements (may lead to signnificant performance boost))spring.jpa.properties.hibernate.order_updates=true to optimize the batching by ordering 업데이트application.properties a setting for enabling batching for versioned entities during update and delete operations (entities that contains @Version for implicit optimistic locking); this setting is: spring.jpa.properties.hibernate.jdbc.batch_versioned_data=true ; starting with Hibernate 5, this setting should be true by default Output example for single entity:

Output example for parent-child relationship:

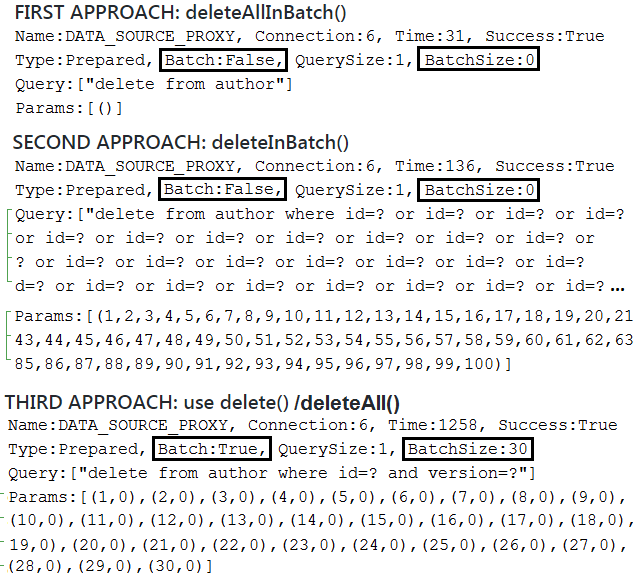

Description: Batch deletes that don't involve associations in MySQL.

Note: Spring deleteAllInBatch() and deleteInBatch() don't use delete batching and don't take advantage of the automatic optimistic locking mechanism to prevent lost updates (e.g., @Version is ignored). They rely on Query.executeUpdate() to trigger bulk operations. These operations are fast, but Hibernate doesn't know which entities are removed, therefore the Persistence Context is not updated accordingly (it's up to you to flush (before delete) and close/clear (after delete) the Persistence Context to avoid issues created by unflushed (if any) or outdated (if any) entities). The first one (deleteAllInBatch()) simply triggers a delete from entity_name statement and is very useful for deleting all records. The second one (deleteInBatch()) triggers a delete from entity_name where id=? or id=? or id=? ... statement, therefore it is prone to cause issues if the generated DELETE statement exceeds the maximum accepted size. This issue can be controlled by deleting the data in chunks, relying on the IN operator, and so on. Bulk operations are faster than batching, which can be achieved via the deleteAll(), deleteAll(Iterable<? extends T> entities) or delete() method. Behind the scenes, the two flavors of deleteAll() rely on delete(). The delete()/deleteAll() methods rely on EntityManager.remove(), therefore the Persistence Context is synchronized accordingly. Moreover, if the automatic optimistic locking mechanism (to prevent lost updates) is enabled, then it will be used.
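The note above mentions deleting the data in chunks as one way to keep a generated DELETE statement below the maximum accepted size. A minimal sketch of the chunking step in plain Java follows; the class ChunkSketch and the method chunks are hypothetical helpers written for this illustration, not part of Spring Data or of the application.

```java
import java.util.ArrayList;
import java.util.List;

final class ChunkSketch {

    // Splits the given list into consecutive sublists of at most chunkSize elements.
    static <T> List<List<T>> chunks(List<T> items, int chunkSize) {
        if (chunkSize <= 0) {
            throw new IllegalArgumentException("chunkSize must be positive");
        }
        List<List<T>> result = new ArrayList<>();
        for (int from = 0; from < items.size(); from += chunkSize) {
            int to = Math.min(from + chunkSize, items.size());
            // Copy the view so that each chunk is independent of the source list
            result.add(new ArrayList<>(items.subList(from, to)));
        }
        return result;
    }
}
```

Each chunk could then be handed to a separate bulk delete, so that no single statement carries more than chunkSize identifiers. A suitable chunk size depends on the database and driver limits, which this sketch does not try to guess.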

Key points for regular delete batching:

- Rely on the deleteAll(), deleteAll(Iterable<? extends T> entities) or delete() method
- In application.properties, set spring.jpa.properties.hibernate.jdbc.batch_size
- In application.properties, set the JDBC URL with rewriteBatchedStatements=true (optimization for MySQL; statements get rewritten into a single string buffer and sent in a single request)
- In application.properties, set the JDBC URL with cachePrepStmts=true (this enables caching and is useful if you decide to set prepStmtCacheSize, prepStmtCacheSqlLimit, etc. as well; without this setting the cache is disabled)
- In application.properties, set the JDBC URL with useServerPrepStmts=true (this way you switch to server-side prepared statements, which may lead to a significant performance boost)
- In application.properties, add a setting that enables batching for versioned entities during update and delete operations (entities that contain @Version for implicit optimistic locking): spring.jpa.properties.hibernate.jdbc.batch_versioned_data=true; starting with Hibernate 5, this setting should be true by default

Output example:

Description: Batch deletes in MySQL via orphanRemoval=true.

Note: Spring deleteAllInBatch() and deleteInBatch() don't use delete batching and don't take advantage of cascading removal, orphanRemoval and the automatic optimistic locking mechanism to prevent lost updates (e.g., @Version is ignored). They rely on Query.executeUpdate() to trigger bulk operations. These operations are fast, but Hibernate doesn't know which entities are removed, therefore the Persistence Context is not updated accordingly (it's up to you to flush (before delete) and close/clear (after delete) the Persistence Context to avoid issues created by unflushed (if any) or outdated (if any) entities). The first one (deleteAllInBatch()) simply triggers a delete from entity_name statement and is very useful for deleting all records. The second one (deleteInBatch()) triggers a delete from entity_name where id=? or id=? or id=? ... statement, therefore it is prone to cause issues if the generated DELETE statement exceeds the maximum accepted size. This issue can be controlled by deleting the data in chunks, relying on the IN operator, and so on. Bulk operations are faster than batching, which can be achieved via the deleteAll(), deleteAll(Iterable<? extends T> entities) or delete() method. Behind the scenes, the two flavors of deleteAll() rely on delete(). The delete()/deleteAll() methods rely on EntityManager.remove(), therefore the Persistence Context is synchronized accordingly. If the automatic optimistic locking mechanism (to prevent lost updates) is enabled, then it will be used. Moreover, cascading removals and orphanRemoval work as well.

Key points for using deleteAll()/delete():

- There is an Author entity, and each author can have several Book (one-to-many)
- Use orphanRemoval=true and CascadeType.ALL
- Dissociate all Book from the corresponding Author
- It is then time for orphanRemoval=true to enter the scene; thanks to this setting, all dissociated books will be deleted; the generated DELETE statements are batched (if orphanRemoval is set to false, a bunch of updates will be executed instead of deletes)
- Delete all Author via the deleteAll() or delete() method (since we have dissociated all Book, the Author deletion will take advantage of batching as well)

ON DELETE CASCADE

Description: Batch deletes in MySQL via ON DELETE CASCADE. The auto-generated database schema will contain the ON DELETE CASCADE directive.

Note: Spring deleteAllInBatch() and deleteInBatch() don't use delete batching and don't take advantage of cascading removal, orphanRemoval and the automatic optimistic locking mechanism to prevent lost updates (e.g., @Version is ignored), but both of them take advantage of ON DELETE CASCADE and are very efficient. They trigger bulk operations via Query.executeUpdate(), therefore the Persistence Context is not synchronized accordingly (it's up to you to flush (before delete) and close/clear (after delete) the Persistence Context to avoid issues created by unflushed (if any) or outdated (if any) entities). The first one simply triggers a delete from entity_name statement, while the second one triggers a delete from entity_name where id=? or id=? or id=? ... statement. For deleting in batches, rely on the deleteAll(), deleteAll(Iterable<? extends T> entities) or delete() method. Behind the scenes, the two flavors of deleteAll() rely on delete(). Mixing batching with database automatic actions (ON DELETE CASCADE) will result in a partially synchronized Persistence Context.

Key points:

- There is an Author entity, and each author can have several Book (one-to-many)
- Remove orphanRemoval or set it to false
- Use only CascadeType.PERSIST and CascadeType.MERGE
- Set @OnDelete(action = OnDeleteAction.CASCADE) next to @OneToMany
- Set spring.jpa.properties.hibernate.dialect to org.hibernate.dialect.MySQL5InnoDBDialect (or MySQL8Dialect)
- The deleteFoo() methods use bulk and batching deletes as well

Output example:

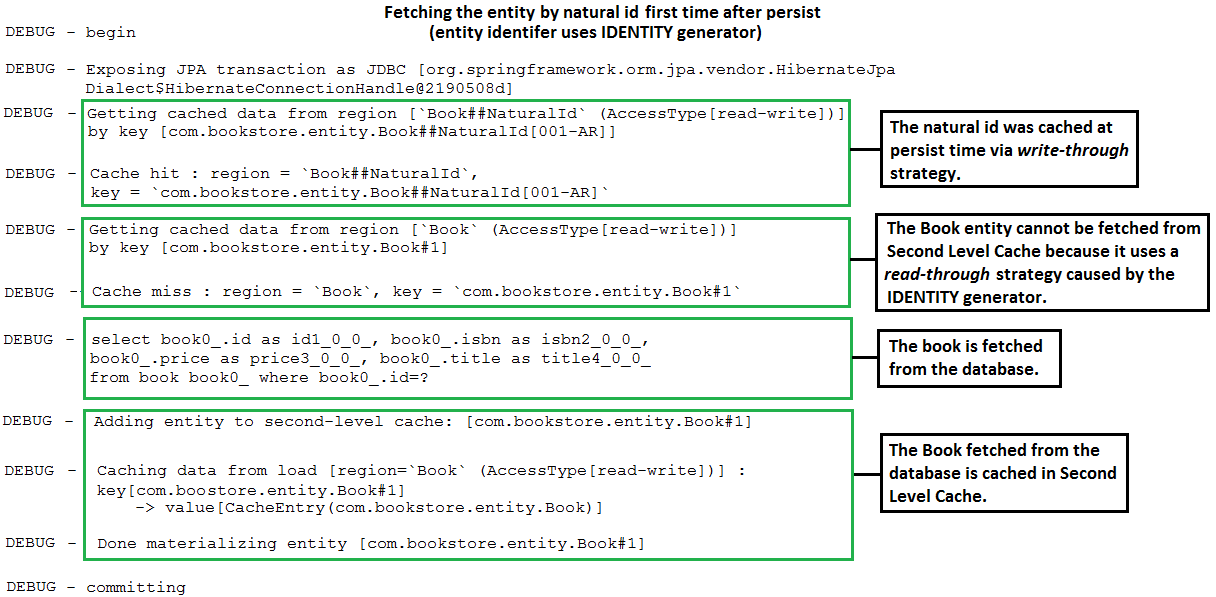

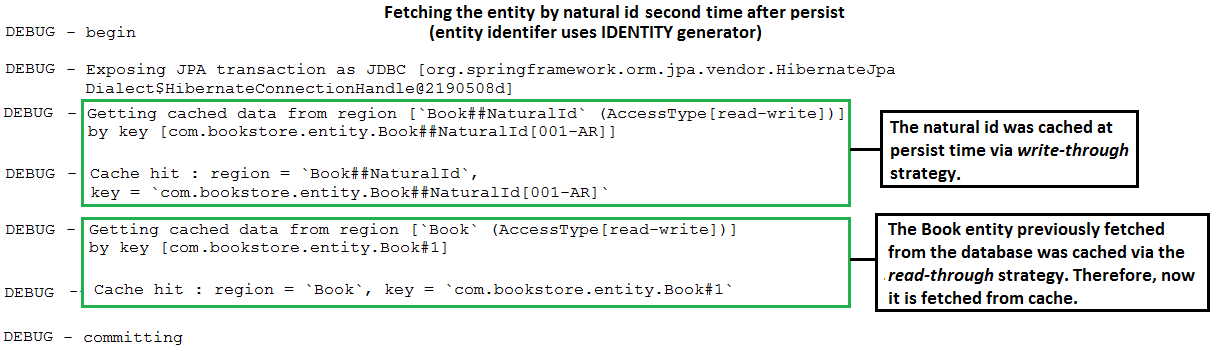

@NaturalId In Spring Boot Style

Alternative implementation: If you want to avoid extending SimpleJpaRepository, check this implementation.

Description: This is a Spring Boot application that maps a natural business key using Hibernate @NaturalId. This implementation allows us to use @NaturalId as if it were provided by Spring.

Key points:

- In the entity (e.g., Book), mark the properties (business keys) that should act as natural IDs with @NaturalId; commonly, there is a single such property, but multiple are supported as well, as here
- For immutable natural IDs, use @NaturalId(mutable = false) and @Column(nullable = false, updatable = false, unique = true, ...)
- For mutable natural IDs, use @NaturalId(mutable = true) and @Column(nullable = false, updatable = true, unique = true, ...)
- Override equals() and hashCode() using the natural id(s)
- Define a @NoRepositoryBean interface (NaturalRepository) with two methods, named findBySimpleNaturalId() and findByNaturalId()
- Provide an implementation (NaturalRepositoryImpl) relying on the Hibernate Session and its bySimpleNaturalId() and byNaturalId() methods
- Use @EnableJpaRepositories(repositoryBaseClass = NaturalRepositoryImpl.class) to register this implementation as the base class
- Call findBySimpleNaturalId() or findByNaturalId()

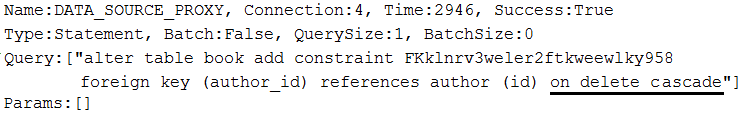

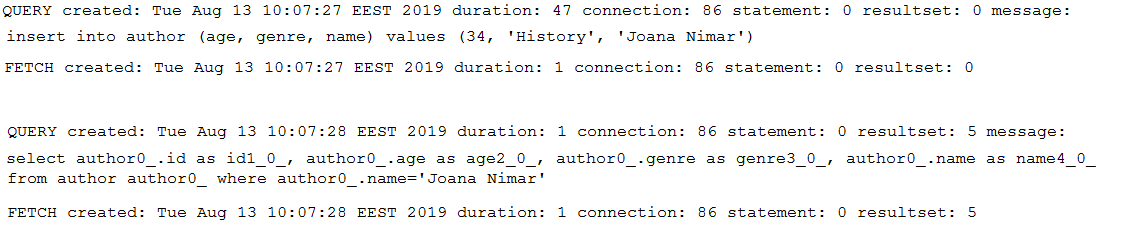

Description: This is a Spring Boot application that uses P6Spy. P6Spy is a framework that enables database data to be seamlessly intercepted and logged with no code changes to the application.

Key points:

- In pom.xml, add the P6Spy Maven dependency
- In application.properties, set up the JDBC URL as jdbc:p6spy:mysql://localhost:3306/db_users
- In application.properties, set up the driver class name as com.p6spy.engine.spy.P6SpyDriver
- Add the file spy.properties (this file contains the P6Spy configuration); in this application, the logs are output to the console, but you can easily switch to a file; more details about P6Spy configuration can be found in the documentation

Output sample:

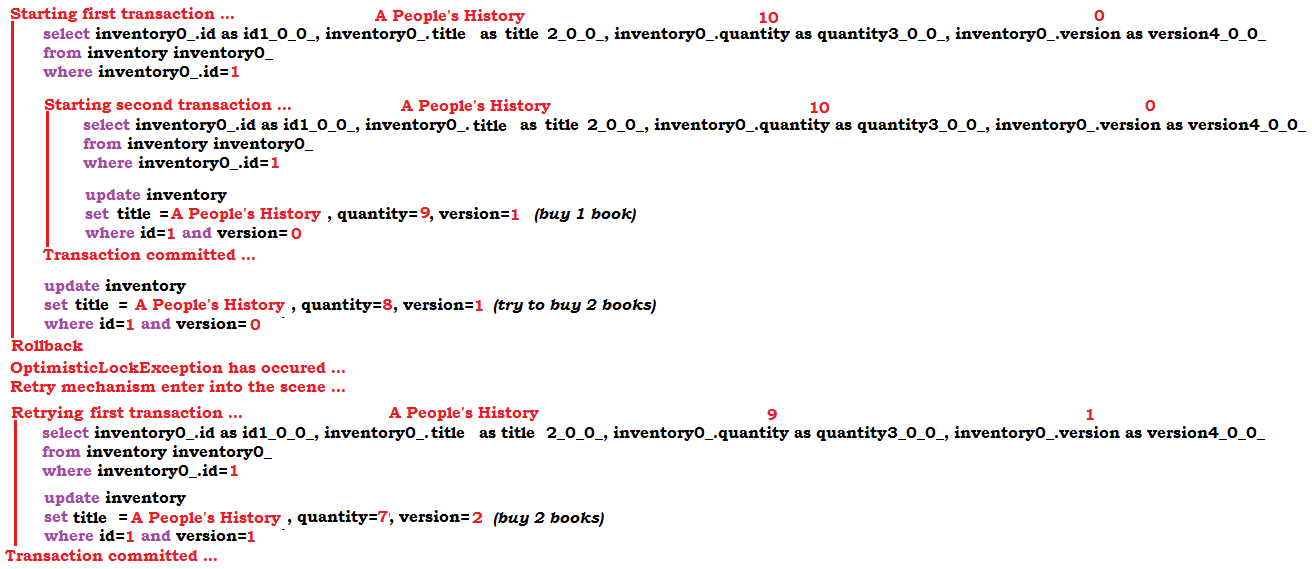

OptimisticLockException Exception (@Version)

Note: The optimistic locking mechanism via @Version works for detached entities as well.

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception. When such an exception occurs, the application retries the corresponding transaction via the db-util library developed by Vlad Mihalcea.
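To illustrate what retrying a failed operation means, here is a minimal plain-Java sketch of a retry loop. It is not how db-util implements its retry support: the class RetrySketch, the method retry and the exception VersionConflictException are hypothetical stand-ins, and the real application relies on an aspect and an annotation instead of an explicit loop.

```java
import java.util.function.Supplier;

// Hypothetical stand-in for an optimistic locking failure; not a Spring, Hibernate or db-util type.
class VersionConflictException extends RuntimeException {
    VersionConflictException(String message) {
        super(message);
    }
}

final class RetrySketch {

    // Runs the action and, if it fails with VersionConflictException,
    // runs it again, allowing at most `times` retries after the first attempt.
    static <T> T retry(int times, Supplier<T> action) {
        int retriesUsed = 0;
        while (true) {
            try {
                return action.get();
            } catch (VersionConflictException e) {
                if (retriesUsed++ >= times) {
                    throw e; // retries exhausted, give up
                }
            }
        }
    }
}
```

In a real application each attempt should run in its own transaction and reload the entity, so that the retried operation sees the latest version of the data rather than the stale state that caused the conflict.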

Key points:

- In pom.xml, add the db-util dependency
- Configure the OptimisticConcurrencyControlAspect bean
- Mark the method (not annotated with @Transactional) that is prone to throw (or that calls a method that is prone to throw (this method can be annotated with @Transactional)) an optimistic locking exception with @Retry(times = 10, on = OptimisticLockingFailureException.class)

Output sample:

OptimisticLockException Exception (Hibernate Version-less Optimistic Locking Mechanism)

Note: The Hibernate version-less optimistic locking mechanism doesn't work for detached entities (don't close the Persistence Context).

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception (e.g., in Spring Boot, OptimisticLockingFailureException) via Hibernate version-less optimistic locking. When such an exception occurs, the application retries the corresponding transaction via the db-util library developed by Vlad Mihalcea.

Key points:

- In pom.xml, add the db-util library dependency
- Configure the OptimisticConcurrencyControlAspect bean
- Annotate the corresponding entity (e.g., Inventory) with @DynamicUpdate and @OptimisticLocking(type = OptimisticLockType.DIRTY)
- Mark the method (not annotated with @Transactional) that is prone to throw (or that calls a method that is prone to throw (this method can be annotated with @Transactional)) an optimistic locking exception with @Retry(times = 10, on = OptimisticLockingFailureException.class)

Note: You may also like to read the recipe, "How To Create DTO Via Spring Data Projections"

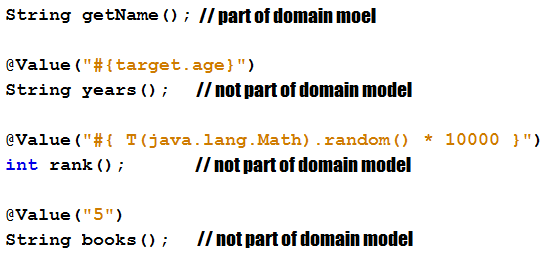

Description: This is a sample application that fetches only the needed columns from the database via Spring Data Projections (DTO) and enriches the result via virtual properties.

Key points:

- Fetch only name and age from the database
- In the projection, AuthorNameAge, use @Value and Spring SpEL to point to a backing property from the domain model (in this case, the domain model property age is exposed via the virtual property years)
- In the projection, AuthorNameAge, use @Value and Spring SpEL to enrich the result with two virtual properties that don't have a match in the domain model (in this case, rank and books)

Output example:

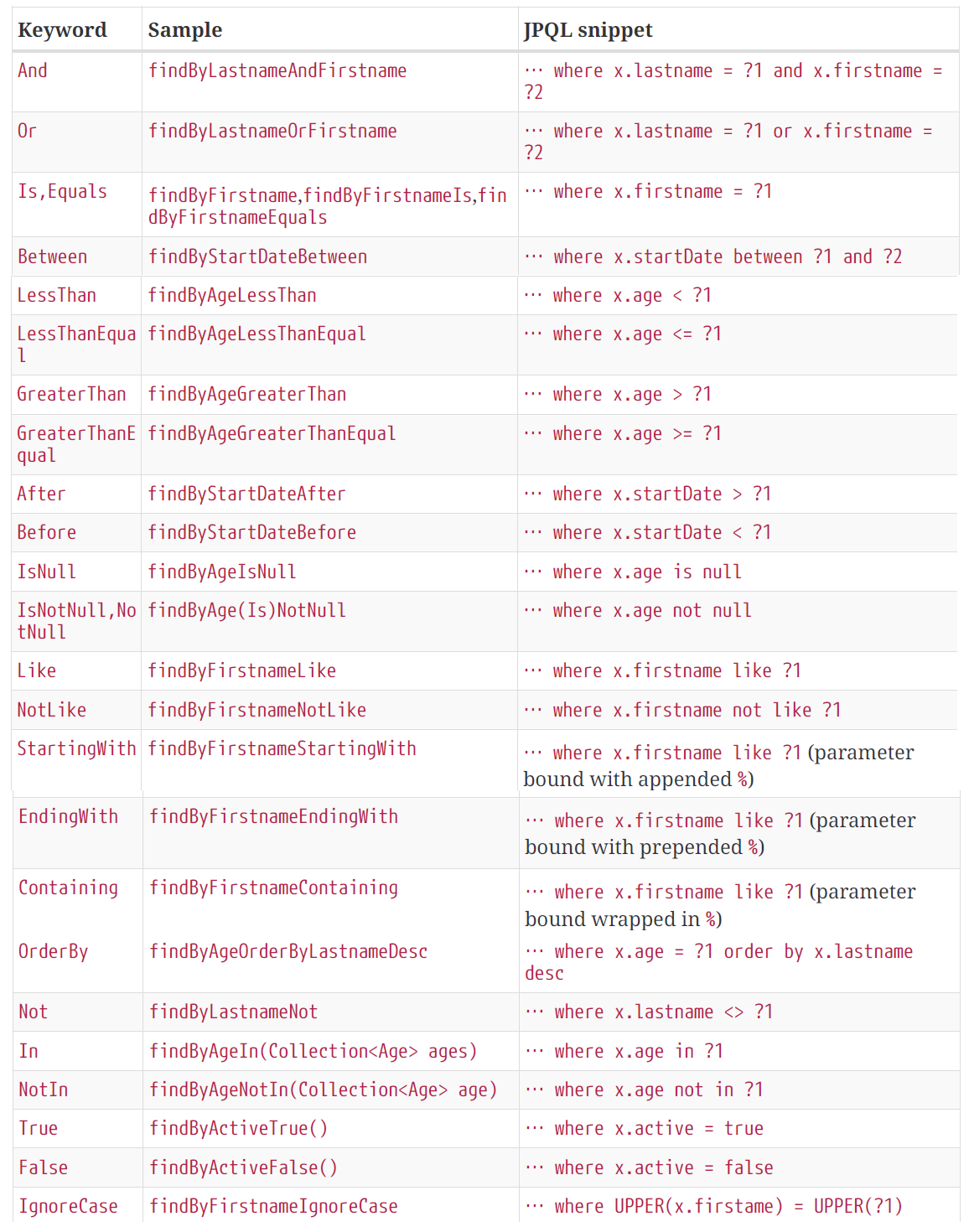

Description: Spring Data comes with a query creation mechanism for JPA that is capable of interpreting a query method name and converting it into an SQL query in the proper dialect. This is possible as long as we respect the naming conventions of this mechanism. This application exploits this mechanism to write queries that limit the result size. Basically, the name of the query method instructs Spring Data how to add the LIMIT clause (or a similar clause, depending on the RDBMS) to the generated SQL queries.

Key points:

- Write a repository (e.g., AuthorRepository)

Examples:

- List<Author> findFirst5ByAge(int age);

- List<Author> findFirst5ByAgeGreaterThanEqual(int age);

- List<Author> findFirst5ByAgeLessThan(int age);

- List<Author> findFirst5ByAgeOrderByNameDesc(int age);

- List<Author> findFirst5ByGenreOrderByAgeAsc(String genre);

- List<Author> findFirst5ByAgeGreaterThanEqualOrderByNameAsc(int age);

- List<Author> findFirst5ByGenreAndAgeLessThanOrderByNameDesc(String genre, int age);

- List<AuthorDto> findFirst5ByOrderByAgeAsc();

- Page<Author> queryFirst10ByName(String name, Pageable p);

- Slice<Author> findFirst10ByName(String name, Pageable p);

The supported keywords are listed below:

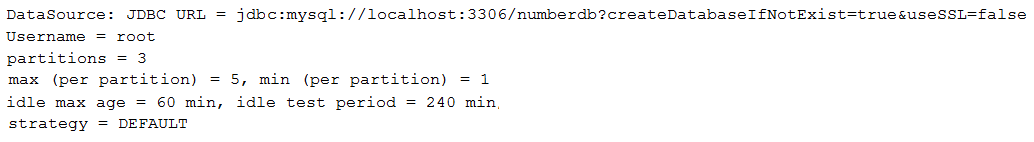

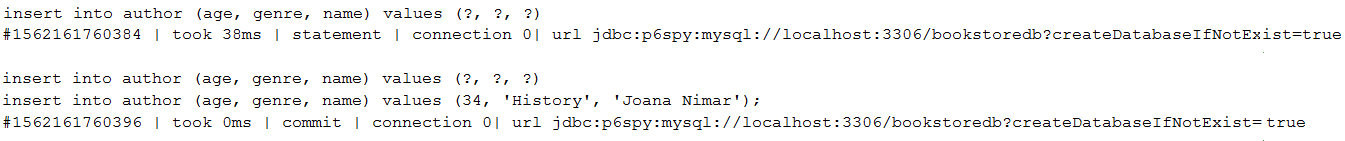

schema-*.sql In MySQL

Note: As a rule, in real applications avoid generating the schema via hibernate.ddl-auto, or set it to validate. Use a schema-*.sql file or, better, the Flyway or Liquibase migration tools.

Description: This application is an example of using schema-*.sql to generate a schema(database) in MySQL.

Key points:

- In application.properties, set the JDBC URL (e.g., spring.datasource.url=jdbc:mysql://localhost:3306/bookstoredb?createDatabaseIfNotExist=true)
- In application.properties, disable DDL auto (just don't add the hibernate.ddl-auto setting explicitly)
- In application.properties, instruct Spring Boot to initialize the schema from the schema-mysql.sql file

schema-*.sql And Match Entities To Them Via @Table In MySQL

Note: As a rule, in real applications avoid generating the schema via hibernate.ddl-auto, or set it to validate. Use a schema-*.sql file or, better, Flyway or Liquibase.

Description: This application is an example of using schema-*.sql to generate two databases in MySQL. The databases are matched at entity mapping via @Table .

Key points:

- In application.properties, set the JDBC URL without the database, e.g., spring.datasource.url=jdbc:mysql://localhost:3306
- In application.properties, disable DDL auto (just don't specify hibernate.ddl-auto)
- In application.properties, instruct Spring Boot to initialize the schema from the schema-mysql.sql file
- In the Author entity, specify that the corresponding table (author) is in the database authorsdb via @Table(schema="authorsdb")
- In the Book entity, specify that the corresponding table (book) is in the database booksdb via @Table(schema="booksdb")

Output example:

- Persisting an Author results in the following SQL: insert into authorsdb.author (age, genre, name) values (?, ?, ?)
- Persisting a Book results in the following SQL: insert into booksdb.book (isbn, title) values (?, ?)

Note: For web applications, pagination should be the way to go, not streaming. But, if you choose streaming, then keep in mind the golden rule: keep the result set as small as possible. Also, keep in mind that the execution plan might not be as efficient as when using SQL-level pagination.

Description: This application is an example of streaming the result set via Spring Data and MySQL. This example can be adapted for databases that fetch the entire result set in a single roundtrip, causing performance penalties.

Key points:

- Use a read-only transaction (e.g., via @Transactional(readOnly=true))
- Set the fetch size (e.g., Integer.MIN_VALUE, recommended in MySQL)
- For MySQL, set the Statement fetch-size to Integer.MIN_VALUE, or add useCursorFetch=true to the JDBC URL and set the Statement fetch-size to a positive integer (e.g., 30)

createDatabaseIfNotExist

Note: For production, don't rely on hibernate.ddl-auto (or counterparts) to export schema DDL to the database. Simply remove (disable) hibernate.ddl-auto or set it to validate. Rely on Flyway or Liquibase.

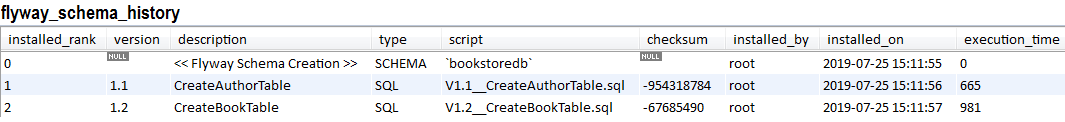

Description: This application is an example of migrating a MySQL database via Flyway when the database exists (it is created before migration via MySQL specific parameter, createDatabaseIfNotExist=true ).

Key points:

- In pom.xml, add the Flyway dependency
- Remove (disable) spring.jpa.hibernate.ddl-auto
- In application.properties, set the JDBC URL as follows: jdbc:mysql://localhost:3306/bookstoredb?createDatabaseIfNotExist=true
- Place each SQL migration file in classpath:db/migration
- Name the SQL files V1.1__Description.sql, V1.2__Description.sql, ...

spring.flyway.schemas

Note: For production, don't rely on hibernate.ddl-auto (or counterparts) to export schema DDL to the database. Simply remove (disable) hibernate.ddl-auto or set it to validate. Rely on Flyway or Liquibase.

Description: This application is an example of migrating a MySQL database when the database is created by Flyway via spring.flyway.schemas . In this case, the entities should be annotated with @Table(schema = "bookstoredb") or @Table(catalog = "bookstoredb") . Here, the database name is bookstoredb .

Key points:

- In pom.xml, add the Flyway dependency
- Remove (disable) spring.jpa.hibernate.ddl-auto
- In application.properties, set the JDBC URL as follows: jdbc:mysql://localhost:3306/
- In application.properties, add spring.flyway.schemas=bookstoredb, where bookstoredb is the database that should be created by Flyway (feel free to use your own database name)
- Annotate each entity with @Table(schema/catalog = "bookstoredb")
- Place each SQL migration file in classpath:db/migration
- Name the SQL files V1.1__Description.sql, V1.2__Description.sql, ...

Output of migration history example:

Note: For production don't rely on hibernate.ddl-auto to create your schema. Remove (disable) hibernate.ddl-auto or set it to validate . Rely on Flyway or Liquibase.

Description: This application is an example of auto-creating and migrating schemas for MySQL and PostgreSQL. In addition, each data source uses its own HikariCP connection pool. In the case of MySQL, where schema = database, we auto-create the schema (authorsdb) based on createDatabaseIfNotExist=true. In the case of PostgreSQL, where a database can have multiple schemas, we use the default postgres database and auto-create the schema booksdb in it. For this we rely on Flyway, which is capable of creating a missing schema.

Key points:

- In pom.xml, add the Flyway dependency
- Remove (disable) spring.jpa.hibernate.ddl-auto or set it to validate
- In application.properties, configure the JDBC URL for MySQL as jdbc:mysql://localhost:3306/authorsdb?createDatabaseIfNotExist=true and for PostgreSQL as jdbc:postgresql://localhost:5432/postgres?currentSchema=booksdb
- In application.properties, set spring.flyway.enabled=false to disable the default behavior
- Create a DataSource for MySQL and one for PostgreSQL
- Create a FlywayDataSource for MySQL and one for PostgreSQL
- Create an EntityManagerFactory for MySQL and one for PostgreSQL
- For MySQL, place the migration SQL files in db/migration/mysql
- For PostgreSQL, place the migration SQL files in db/migration/postgresql

Note: For production, don't rely on hibernate.ddl-auto (or counterparts) to export schema DDL to the database. Simply remove (disable) hibernate.ddl-auto or set it to validate. Rely on Flyway or Liquibase.

Description: This application is an example of auto-creating and migrating two schemas in PostgreSQL using Flyway. In addition, each data source uses its own HikariCP connection pool. In the case of PostgreSQL, where a database can have multiple schemas, we use the default postgres database and auto-create two schemas, authors and books. For this we rely on Flyway, which is capable of creating the missing schemas.

Key points:

- In pom.xml, add the Flyway dependency
- Remove (disable) spring.jpa.hibernate.ddl-auto or set it to validate
- In application.properties, configure the JDBC URL for books as jdbc:postgresql://localhost:5432/postgres?currentSchema=books and for authors as jdbc:postgresql://localhost:5432/postgres?currentSchema=authors
- In application.properties, set spring.flyway.enabled=false to disable the default behavior
- Create two DataSource, one for books and one for authors
- Create two FlywayDataSource, one for books and one for authors
- Create two EntityManagerFactory, one for books and one for authors
- For books, place the migration SQL files in db/migration/books
- For authors, place the migration SQL files in db/migration/authors

JOIN FETCH an @ElementCollection

Description: This application is an example of applying JOIN FETCH to fetch an @ElementCollection.

Key points:

- By default, @ElementCollection is loaded lazily; keep it lazy
- Use JOIN FETCH in the repository

@Subselect in a Spring Boot Application

Note: Consider using @Subselect only if using a DTO, a DTO plus extra queries, or mapping a database view to an entity is not a solution.

Description: This application is an example of mapping an entity to a query via Hibernate @Subselect. Mainly, we have two entities in a bidirectional one-to-many association. An Author has written several Book. The idea is to write a read-only query that fetches only some fields from Author (e.g., a DTO), but still have the possibility to call getBooks() and fetch the Book in a lazy manner as well. As you know, a classic DTO cannot be used, since such a DTO is not managed and we cannot navigate the associations (it doesn't support any managed associations to other entities). Via Hibernate @Subselect we can map a read-only, immutable entity to a query. This time, we can lazily navigate the associations.

핵심 사항:

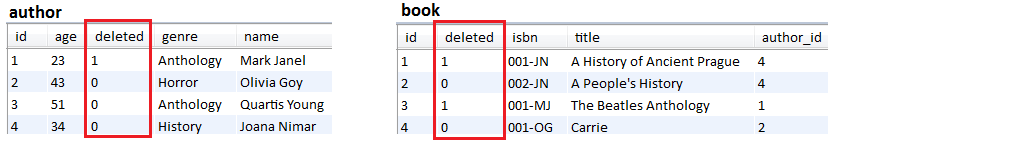

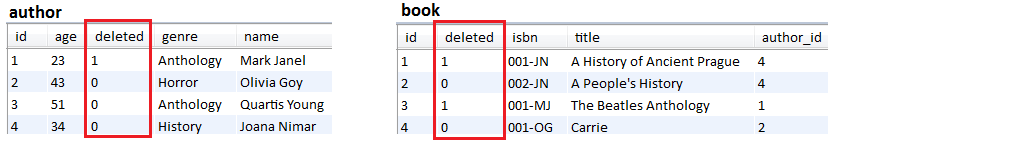

Author (including association to Book )@Immutable since no write operations are allowed@Synchronize@Subselect to write the needed query, map an entity to an SQL queryDescription: This application is an example of using Hibernate soft deletes in a Spring Boot application.

Key points:

- Define an abstract class BaseEntity with a field named deleted
- The entities (e.g., the Author and Book entities) that should take advantage of soft deletes should extend BaseEntity
- Mark these entities with the Hibernate @Where annotation like this: @Where(clause = "deleted = false")
- Mark these entities with the Hibernate @SQLDelete annotation to trigger UPDATE SQLs in place of DELETE SQLs, as follows: @SQLDelete(sql = "UPDATE author SET deleted = true WHERE id = ?")

Output example:

DataSourceBuilder

If you use the spring-boot-starter-jdbc or spring-boot-starter-data-jpa "starters", you automatically get a dependency on HikariCP.

Note: The best way to tune the connection pool parameters consists in using Flexy Pool by Vlad Mihalcea. Via Flexy Pool you can find the optimal settings that sustain the high performance of your connection pool.

Description: This is a kickoff application that sets up HikariCP via DataSourceBuilder. The jdbcUrl is set up for a MySQL database. For testing purposes, the application uses an ExecutorService for simulating concurrent users. Check the HikariCP report revealing the connection pool status.

Key points:

- Write a @Bean that returns the DataSource programmatically

Description: Auditing is useful for maintaining history records. This can later help us in tracking user activities.

Key points:

- Create an abstract base entity (e.g., BaseEntity) and annotate it with @MappedSuperclass and @EntityListeners({AuditingEntityListener.class})
- @CreatedDate protected LocalDateTime created;
- @LastModifiedDate protected LocalDateTime lastModified;
- @CreatedBy protected U createdBy;
- @LastModifiedBy protected U lastModifiedBy;
- Enable auditing via @EnableJpaAuditing(auditorAwareRef = "auditorAware")
- Provide an implementation of AuditorAware (this is needed for persisting the user that performed the modification; use Spring Security to return the currently logged-in user)
- Expose this implementation via @Bean
- spring.jpa.hibernate.ddl-auto=create

Description: Auditing is useful for maintaining history records. This can later help us in tracking user activities.

핵심 사항:

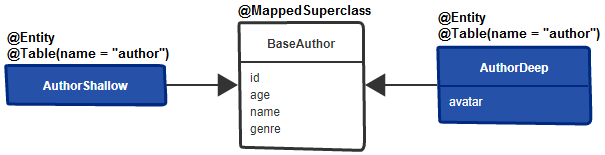

@Audited@AuditTable to rename the table used for auditingValidityAuditStrategy for fast database reads, but slower writes (slower than the default DefaultAuditStrategy )Description: By default, the attributes of an entity are loaded eager (all at once). This application is an alternative to How To Use Hibernate Attribute Lazy Loading from here. This application uses a base class to isolate the attributes that should be loaded eagerly and subentities (entities that extends the base class) for isolating the attributes that should be loaded on demand.

Key points:

- Create the base class, BaseAuthor, and annotate it with @MappedSuperclass
- Create the AuthorShallow subentity of BaseAuthor and don't add any attribute to it (it will inherit the attributes from the superclass)
- Create the AuthorDeep subentity of BaseAuthor and add to it the attributes that should be loaded on demand (e.g., avatar)
- Map both subentities to the same table via @Table(name = "author")
- Provide the repositories AuthorShallowRepository and AuthorDeepRepository

Run the following requests (via BookstoreController):

- localhost:8080/authors/shallow
- localhost:8080/authors/deep

Check as well:

Description: Fetching more data than needed is prone to performance penalties. Using a DTO allows us to extract only the needed data. In this application we rely on a constructor and the Spring Data Query Builder Mechanism.

Key points:

See also:

Dto Via Constructor Expression and JPQL


JOIN

Description: Using JOIN is very useful for fetching DTOs (data that is never modified, neither in the current request nor in subsequent ones). For example, consider two entities, Author and Book, in a lazy bidirectional @OneToMany association. We want to fetch a subset of columns from the parent table (author) and a subset of columns from the child table (book). This job is a perfect fit for JOIN, which can pick up columns from different tables and build a raw result set. This way we fetch only the needed data. Moreover, we may want to serve the result set in pages (e.g., via LIMIT). This application contains several approaches for accomplishing this task with offset pagination.

Key points:

- Pagination via Page (with SELECT COUNT and the COUNT(*) OVER() window function)
- Pagination via Slice and List
- Using DENSE_RANK() to avoid truncating the result set (otherwise an author can be fetched with only a subset of his books)

LEFT JOIN FETCH

See also:

Description: Let's assume that we have two entities engaged in a one-to-many (or many-to-many) lazy bidirectional (or unidirectional) relationship (e.g., Author has several Book). We want to trigger a single SELECT that fetches all Author and the corresponding Book. This is a job for JOIN FETCH, which is converted behind the scenes into an INNER JOIN. Being an INNER JOIN, the SQL will return only Author that have Book. If we want to return all Author, including those that don't have Book, then we can rely on LEFT JOIN FETCH. Similarly, we can fetch all Book, including those with no registered Author. This can be done via LEFT JOIN FETCH or LEFT JOIN.

Key points:

- Define the related entities (e.g., Author and Book in a one-to-many lazy bidirectional relationship)
- Write a JPQL LEFT JOIN FETCH to fetch all authors and books (fetch authors even if they don't have registered books)
- Write a JPQL LEFT JOIN FETCH to fetch all books and authors (fetch books even if they don't have registered authors)

JOIN VS. JOIN FETCH

See also:

Description: This application is meant to reveal the differences between JOIN and JOIN FETCH. The important thing to keep in mind is that, in the case of LAZY fetching, JOIN is not capable of initializing the associated collections along with their parent objects using a single SQL SELECT. On the other hand, JOIN FETCH is capable of accomplishing this kind of task. But don't underestimate JOIN: it is the proper choice when we need to combine the columns of two (or more) tables in the same query, but we don't need to initialize the associated collections on the returned entity (e.g., very useful for fetching DTOs).
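The difference in the number of statements can be illustrated with a small plain-Java simulation. This is a sketch, not Hibernate code: FakeBookstore is a hypothetical in-memory stand-in for the database that simply counts how many SELECT statements it serves, and the data is invented.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for a database that counts the SELECT statements it serves.
class FakeBookstore {
    private final Map<String, List<String>> booksByAuthor = new LinkedHashMap<>();
    int selectCount = 0;

    FakeBookstore() {
        booksByAuthor.put("Author A", Arrays.asList("Book A1", "Book A2"));
        booksByAuthor.put("Author B", Arrays.asList("Book B1"));
        booksByAuthor.put("Author C", Arrays.asList("Book C1", "Book C2"));
    }

    // One SELECT that returns only the parents (lazy collections stay uninitialized).
    List<String> selectAuthors() {
        selectCount++;
        return new ArrayList<>(booksByAuthor.keySet());
    }

    // One additional SELECT per parent, issued when a lazy collection is first accessed.
    List<String> selectBooksOf(String author) {
        selectCount++;
        return booksByAuthor.get(author);
    }

    // One SELECT that returns the parents together with their children.
    Map<String, List<String>> selectAuthorsWithBooks() {
        selectCount++;
        return new LinkedHashMap<>(booksByAuthor);
    }
}

class JoinVersusJoinFetch {

    // Parents first, then each collection on demand: 1 + N statements.
    static int statementsWithLazyInitialization() {
        FakeBookstore db = new FakeBookstore();
        for (String author : db.selectAuthors()) {
            db.selectBooksOf(author);
        }
        return db.selectCount;
    }

    // Parents and children together: a single statement.
    static int statementsWithJoinFetch() {
        FakeBookstore db = new FakeBookstore();
        db.selectAuthorsWithBooks();
        return db.selectCount;
    }

    public static void main(String[] args) {
        System.out.println("lazy initialization: " + statementsWithLazyInitialization());
        System.out.println("join fetch: " + statementsWithJoinFetch());
    }
}
```

With the three sample authors, the lazy path issues four statements and the fetch path issues one; the gap grows linearly with the number of parents.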

Key points:

- Two related entities (e.g., Author and Book in a one-to-many lazy bidirectional relationship)
- JOIN and JOIN FETCH to fetch an author including his books
- JOIN to fetch a book (1)
- JOIN to fetch a book including its author (2)
- JOIN FETCH to fetch a book including its author

Notice that:

- With JOIN, fetching the Book of an Author requires additional SELECT statements and is prone to the N+1 performance penalty
- With JOIN (1), fetching the Author of a Book requires additional SELECT statements and is prone to the N+1 performance penalty
- With JOIN (2), fetching the Author of a Book works exactly as JOIN FETCH (requires a single SELECT)
- With JOIN FETCH, fetching each Author of a Book requires a single SELECT

Description: If, for some reason, you need an entity in your Spring projection (DTO), then this application shows you how to do it via an example. In this case, there are two entities, Author and Book, involved in a lazy bidirectional one-to-many association (it can be another kind of association as well, or even no materialized association). We want to fetch in a Spring projection the authors as entities (Author) and the titles of the books.

Key points:

- Two related entities (e.g., Author and Book in a one-to-many lazy bidirectional relationship)
- A Spring projection exposing public Author getAuthor() and public String getTitle()

Description: If, for some reason, you need an entity in your Spring projection (DTO), then this application shows you how to do it via an example. In this case, there are two entities, Author and Book, that have no materialized association between them, but they share the genre attribute. We use this attribute to join authors with books via JPQL. We want to fetch in a Spring projection the authors as entities (Author) and the titles of the books.

Key points:

- Two unrelated entities (e.g., Author and Book)
- A Spring projection exposing public Author getAuthor() and public String getTitle()

Description: Let's assume that we have two entities, Author and Book. There is no materialized association between them, but both entities share an attribute named genre. We want to use this attribute to join the tables corresponding to Author and Book, and fetch the result in a DTO. The result should contain the Author entity and only the title attribute from Book. In a scenario like this one, it is strongly advisable to avoid fetching the DTO via a constructor expression. This approach cannot fetch the data in a single SELECT and is prone to N+1. Much better alternatives are Spring projections, JPA Tuple, or even Hibernate ResultTransformer. These approaches will fetch the data in a single SELECT. This application is a DON'T DO THIS example. Check the number of queries needed for fetching the data. Instead, do it as in: Entity Inside Spring Projection (no association).

@ElementCollection

Description: This application is an example of fetching a DTO that includes attributes from an @ElementCollection.

Key points:

- @ElementCollection is loaded lazily; keep it lazy
- JOIN in the repository

Set Of Associated Entities In @ManyToMany Association Via @OrderBy

Description: In the case of a @ManyToMany association, we should always rely on Set (not on List) for mapping the collection of associated entities (entities of the other parent side). Why? Well, please see Prefer Set Instead of List in @ManyToMany Relationships. But it is well known that HashSet doesn't have a predefined entry order of elements. If this is an issue, then this application relies on @OrderBy, which adds an ORDER BY clause to the SQL statement. The database will handle the ordering. Further, Hibernate will preserve the order via a LinkedHashSet.

This application uses two entities, Author and Book, involved in a lazy bidirectional many-to-many relationship. First, we fetch a Book by title. Then, we call getAuthors() to fetch the authors of this book. The fetched authors are sorted in descending order by name. The ordering is done by the database as a result of adding @OrderBy("name DESC"), and is preserved by Hibernate.
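The role of LinkedHashSet in preserving the database ordering can be sketched in plain Java. The author names below are hypothetical and are assumed to arrive already sorted by the database; the class and method names are illustrative only.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

class OrderPreservingSet {

    // Copies the rows into a LinkedHashSet, which iterates in insertion order,
    // so the order produced by ORDER BY survives inside the Set.
    static List<String> throughLinkedHashSet(List<String> rowsInDatabaseOrder) {
        Set<String> authors = new LinkedHashSet<>(rowsInDatabaseOrder);
        return new ArrayList<>(authors);
    }

    public static void main(String[] args) {
        // Hypothetical names, assumed to be already sorted by "ORDER BY name DESC".
        List<String> rows = Arrays.asList("Author C", "Author B", "Author A");
        System.out.println(throughLinkedHashSet(rows));
    }
}
```

A plain HashSet gives no such guarantee, because its iteration order depends on hash codes rather than on insertion order.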

Key points:

- @OrderBy
- Works with HashSet, but doesn't provide consistency across all transition states (e.g., transient state)
- Use LinkedHashSet instead of HashSet

Note: Alternatively, we can use @OrderColumn. This gets materialized in an additional column in the junction table. This is needed for maintaining a permanent ordering of the related data.

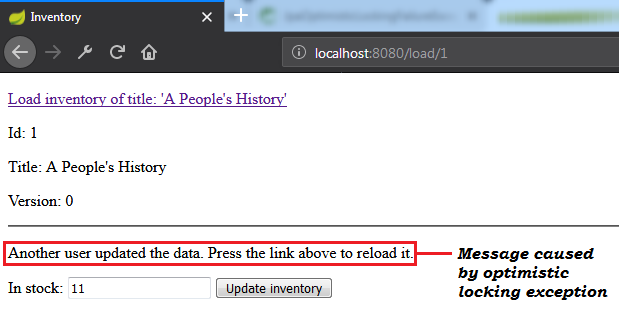

Description: This is a sample application that shows how versioned (@Version) optimistic locking works with detached entities. Running the application will result in an optimistic-locking-specific exception (e.g., the Spring Boot specific OptimisticLockingFailureException).
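The version check behind this behavior can be simulated in plain Java. The sketch below is an assumption-laden illustration, not Hibernate code: VersionedAuthor and InMemoryAuthorTable are hypothetical, and the update method only mimics a conditional UPDATE that matches on both the identifier and the version.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical immutable record; the version field plays the role of the @Version attribute.
final class VersionedAuthor {
    final long id;
    final String name;
    final int version;

    VersionedAuthor(long id, String name, int version) {
        this.id = id;
        this.name = name;
        this.version = version;
    }
}

// Hypothetical in-memory table standing in for the database.
class InMemoryAuthorTable {
    private final Map<Long, VersionedAuthor> rows = new HashMap<>();

    void insert(VersionedAuthor author) {
        rows.put(author.id, author);
    }

    // Returns an immutable snapshot, so the caller effectively holds a detached copy.
    VersionedAuthor findById(long id) {
        return rows.get(id);
    }

    // Mimics "UPDATE ... SET name = ?, version = version + 1 WHERE id = ? AND version = ?".
    // Returns false when no row matches, which is the situation reported as an
    // optimistic locking exception.
    boolean update(VersionedAuthor detached, String newName) {
        VersionedAuthor current = rows.get(detached.id);
        if (current == null || current.version != detached.version) {
            return false;
        }
        rows.put(detached.id, new VersionedAuthor(detached.id, newName, current.version + 1));
        return true;
    }
}

class VersionedLockingDemo {
    public static void main(String[] args) {
        InMemoryAuthorTable table = new InMemoryAuthorTable();
        table.insert(new VersionedAuthor(1L, "Initial name", 0));

        VersionedAuthor detached = table.findById(1L);    // first "transaction"
        VersionedAuthor loadedLater = table.findById(1L); // second "transaction"

        // The second transaction updates the row; the stored version becomes 1.
        System.out.println(table.update(loadedLater, "Updated in second transaction"));
        // The detached copy still carries version 0, so its update matches no row.
        System.out.println(table.update(detached, "Updated from detached copy"));
    }
}
```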

Key points:

- Fetch an entity via findById(1L); commit the transaction and close the Persistence Context
- Fetch the entity again via findById(1L) and update it; commit the transaction and close the Persistence Context
- Call save() and pass to it the detached entity; trying to merge (EntityManager.merge()) the entity will end up in an optimistic locking exception, since the versions of the detached entity and the just-loaded entity don't match

OptimisticLockException Shaped Via @Version

Note: Optimistic locking via @Version works for detached entities as well.

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception. Running the application should end with a Spring-specific ObjectOptimisticLockingFailureException.

Key points:

- @Transactional method used for updating data

TransactionTemplate After OptimisticLockException Exception (@Version)

Note: Optimistic locking via @Version works for detached entities as well.

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception. When such an exception occurs, the application retries the corresponding transaction via the db-util library developed by Vlad Mihalcea.
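The retry idea itself can be sketched with a hand-rolled loop in plain Java. This is not the db-util API: StaleVersionException is a hypothetical stand-in for an optimistic locking exception, and OptimisticRetry only illustrates re-running a unit of work a bounded number of times.

```java
import java.util.function.Supplier;

// Hypothetical stand-in for an optimistic locking exception.
class StaleVersionException extends RuntimeException {
    StaleVersionException(String message) {
        super(message);
    }
}

class OptimisticRetry {

    // Runs the task and re-runs it after a StaleVersionException,
    // for at most maxAttempts executions in total.
    static <T> T execute(Supplier<T> task, int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        StaleVersionException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return task.get();
            } catch (StaleVersionException e) {
                last = e; // another transaction won the race; try again
            }
        }
        throw last; // every attempt failed
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Simulated unit of work that fails twice before succeeding.
        String result = execute(() -> {
            if (++calls[0] < 3) {
                throw new StaleVersionException("version mismatch");
            }
            return "committed on attempt " + calls[0];
        }, 5);
        System.out.println(result);
    }
}
```

Each attempt should run in its own transaction and reload the data, otherwise the retry would see the same stale version again.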

Key points:

- In pom.xml, add the db-util dependency
- OptimisticConcurrencyControlAspect bean
- TransactionTemplate

OptimisticLockException In Version-less Optimistic Locking

Note: Version-less optimistic locking doesn't work for detached entities (do not close the Persistence Context).

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception. Running the application should end with a Spring-specific ObjectOptimisticLockingFailureException.

Key points:

- @Transactional method used for updating data

TransactionTemplate After OptimisticLockException Shaped Via Hibernate Version-less Optimistic Locking Mechanism

Note: Version-less optimistic locking doesn't work for detached entities (do not close the Persistence Context).

Description: This is a Spring Boot application that simulates a scenario that leads to an optimistic locking exception. When such an exception occurs, the application retries the corresponding transaction via the db-util library developed by Vlad Mihalcea.

Key points:

- In pom.xml, add the db-util dependency
- OptimisticConcurrencyControlAspect bean
- TransactionTemplate

Description: This is a sample application that shows how to take advantage of versioned optimistic locking and detached entities in long HTTP conversations. The key part consists of storing the detached entities across multiple HTTP requests. Commonly, this can be accomplished via the HTTP session.

Key points:

- @Version
- @SessionAttributes for storing the detached entities

When running the sample, check the message caused by the optimistic locking exception.

@Where

Note: Rely on this approach only if you simply cannot use JOIN FETCH WHERE or @NamedEntityGraph.

Description: This application is a sample of using Hibernate @Where for filtering associations.

Key points:

- @Where(clause = "condition to be met") in the entity (check the Author entity)

Description: Batch inserts (in MySQL) in Spring Boot style.

Key points:

- In application.properties, set spring.jpa.properties.hibernate.jdbc.batch_size
- In application.properties, set spring.jpa.properties.hibernate.generate_statistics (just to check that batching is working)
- In application.properties, set the JDBC URL with rewriteBatchedStatements=true (optimization for MySQL)
- In application.properties, set the JDBC URL with cachePrepStmts=true (this enables caching and is useful if you decide to set prepStmtCacheSize, prepStmtCacheSqlLimit, etc. as well; without this setting the cache is disabled)
- In application.properties, set the JDBC URL with useServerPrepStmts=true (this way you switch to server-side prepared statements, which may lead to a significant performance boost)
- Consider setting spring.jpa.properties.hibernate.order_inserts=true to optimize the batching by ordering inserts
- IDENTITY will cause insert batching to be disabled
- Make sure the second-level cache is disabled via spring.jpa.properties.hibernate.cache.use_second_level_cache=false

COUNT(*) OVER And Return Page<entity> Via Extra Column

Description: Typically, in offset pagination, one query is needed for fetching the data and one for counting the total number of records. But we can fetch this information in a single database roundtrip via a SELECT COUNT subquery nested in the main SELECT. Even better, for database vendors that support window functions, there is a solution relying on COUNT(*) OVER(), as in this application, which uses this window function in a native query against MySQL 8. So, prefer this one instead of the SELECT COUNT subquery. This application fetches data as Page<entity> via Spring Boot offset pagination, but if the fetched data is read-only, then rely on Page<dto> as here.
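The following plain-Java sketch shows how a page can be assembled once every fetched row carries the total in an extra column. It is a minimal illustration under stated assumptions: AuthorRow and SimplePage are hypothetical types (SimplePage is not Spring's Page), and the table and column names in the query string are invented.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical row: entity data plus the extra "total" column filled by COUNT(*) OVER().
final class AuthorRow {
    final String name;
    final long total;

    AuthorRow(String name, long total) {
        this.name = name;
        this.total = total;
    }
}

// Minimal stand-in for a page of results (not Spring's Page type).
final class SimplePage {
    final List<AuthorRow> content;
    final long totalElements;
    final int totalPages;

    SimplePage(List<AuthorRow> content, long totalElements, int totalPages) {
        this.content = content;
        this.totalElements = totalElements;
        this.totalPages = totalPages;
    }
}

class CountOverPaging {

    // Hypothetical native query; the table and column names are assumptions.
    static final String QUERY =
            "SELECT a.*, COUNT(*) OVER() AS total FROM author a ORDER BY a.name LIMIT ? OFFSET ?";

    // Builds a page from rows that all carry the same total.
    static SimplePage toPage(List<AuthorRow> rows, int pageSize) {
        if (pageSize < 1) {
            throw new IllegalArgumentException("pageSize must be at least 1");
        }
        // An empty page carries no total, so this sketch reports 0 in that case.
        long total = rows.isEmpty() ? 0 : rows.get(0).total;
        int totalPages = (int) ((total + pageSize - 1) / pageSize); // ceiling division
        return new SimplePage(new ArrayList<>(rows), total, totalPages);
    }

    public static void main(String[] args) {
        // Simulated first page of size 2 out of 7 records.
        List<AuthorRow> rows = Arrays.asList(new AuthorRow("Author A", 7), new AuthorRow("Author B", 7));
        SimplePage page = toPage(rows, 2);
        System.out.println(page.totalElements + " records in " + page.totalPages + " pages");
    }
}
```

In a Spring application, the same content and total would typically be passed to PageImpl together with the Pageable instead of a hand-written page type.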

Key points:

- A repository that extends PagingAndSortingRepository
- In the entity, add an extra column for representing the total number of records and annotate it as @Column(insertable = false, updatable = false)
- Fetch the data into a List<entity>
- Use the fetched List<entity> and Pageable to create a Page<entity>

SELECT COUNT Subquery And Return List<entity> Via Extra Column

Description: This application fetches data as List<entity> via Spring Boot offset pagination. The SELECT COUNT triggered for counting the total number of records is a subquery of the main SELECT. Therefore, there will be a single database roundtrip instead of two (typically, one query is needed for fetching the data and one for counting the total number of records).

Key points:

- A repository that extends PagingAndSortingRepository
- In the entity, add an extra column for representing the total number of records and annotate it as @Column(insertable = false, updatable = false)
- Fetch the data (via a query that includes the SELECT COUNT subquery) into a List<entity>

SELECT COUNT Subquery And Return List<projection> That Maps Entities And The Total Number Of Records Via Projection

Description: This application fetches data as List<projection> via Spring Boot offset pagination. The projection maps the entity and the total number of records. This information is fetched in a single database roundtrip instead of two, because the SELECT COUNT triggered for counting the total number of records is a subquery of the main SELECT (typically, one query is needed for fetching the data and one for counting the total number of records). Use this approach only if the fetched data is not read-only. Otherwise, prefer List<dto> as here.

Key points:

- A repository that extends PagingAndSortingRepository
- Fetch the data (via a query that includes the SELECT COUNT subquery) into a List<projection>

COUNT(*) OVER And Return List<entity> Via Extra Column

Description: Typically, in offset pagination, one query is needed for fetching the data and one for counting the total number of records. But we can fetch this information in a single database roundtrip via a SELECT COUNT subquery nested in the main SELECT. Even better, for database vendors that support window functions, there is a solution relying on COUNT(*) OVER(), as in this application, which uses this window function in a native query against MySQL 8. So, prefer this one instead of the SELECT COUNT subquery. This application fetches data as List<entity> via Spring Boot offset pagination, but if the fetched data is read-only, then rely on List<dto> as here.

Key points:

- A repository that extends PagingAndSortingRepository
- In the entity, add an extra column for representing the total number of records and annotate it as @Column(insertable = false, updatable = false)
- Fetch the data (via a query that includes the COUNT(*) OVER window function) into a List<entity>

SELECT COUNT Subquery And Return Page<entity> Via Extra Column

Description: This application fetches data as Page<entity> via Spring Boot offset pagination. Use this only if the fetched data will be modified. Otherwise, fetch Page<dto> as here. The SELECT COUNT triggered for counting the total number of records is a subquery of the main SELECT. Therefore, there will be a single database roundtrip instead of two (typically, one query is needed for fetching the data and one for counting the total number of records).

Key points:

- A repository that extends PagingAndSortingRepository
- In the entity, add an extra column for representing the total number of records and annotate it as @Column(insertable = false, updatable = false)
- Fetch the data into a List<entity>
- Use the fetched List<entity> and Pageable to create a Page<entity>

SELECT COUNT Subquery And Return Page<projection> That Maps Entities And The Total Number Of Records Via Projection

Description: This application fetches data as Page<projection> via Spring Boot offset pagination. The projection maps the entity and the total number of records. This information is fetched in a single database roundtrip because the SELECT COUNT triggered for counting the total number of records is a subquery of the main SELECT.

Key points: