ComfyUI Layer Style

Click here for the Chinese documentation

For business cooperation, please contact [email protected] by email.

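A set of nodes for ComfyUI that can composite layers and masks to achieve Photoshop-like functionality.

It migrates some of Photoshop's basic functions to ComfyUI, aiming to centralize the workflow and reduce how often you switch between software.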

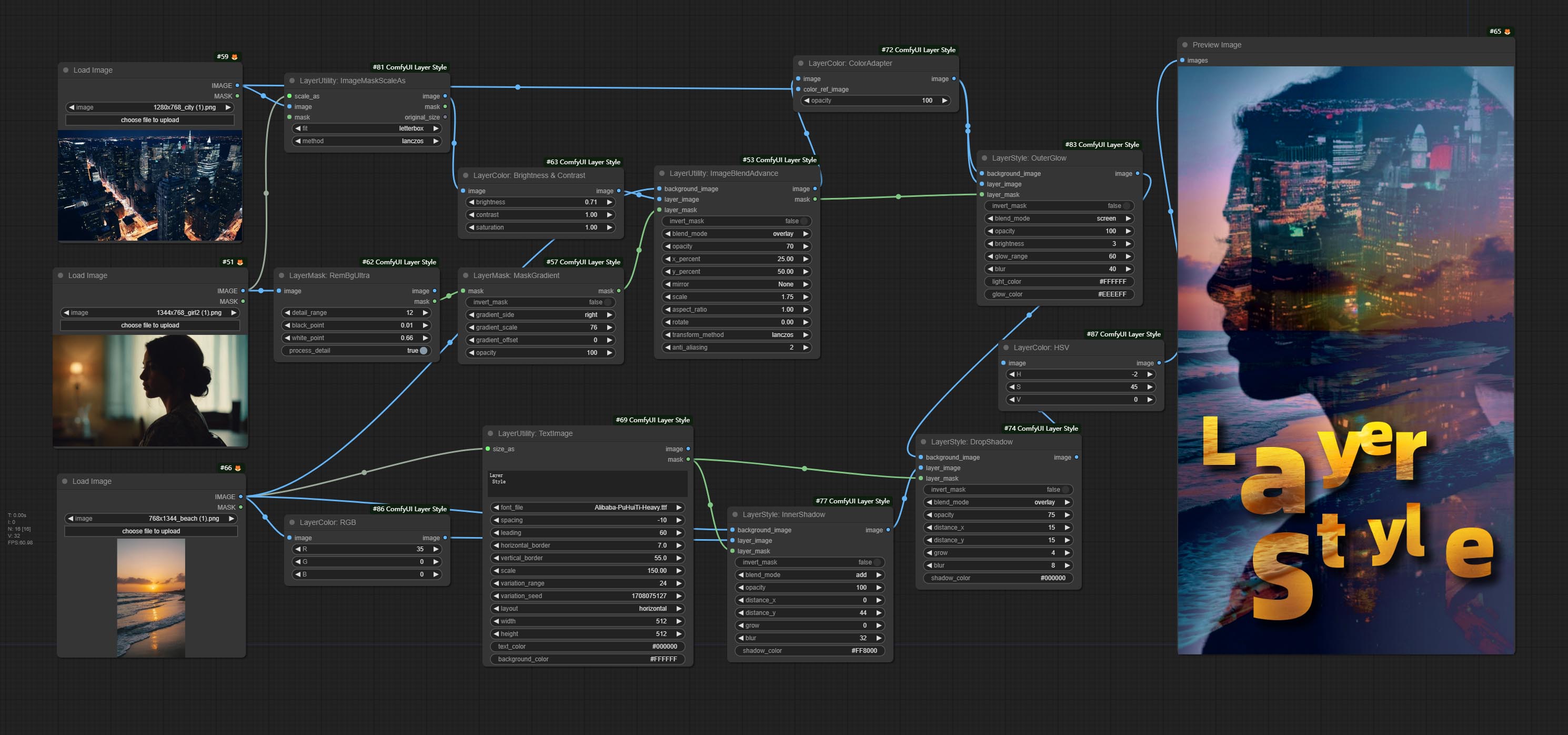

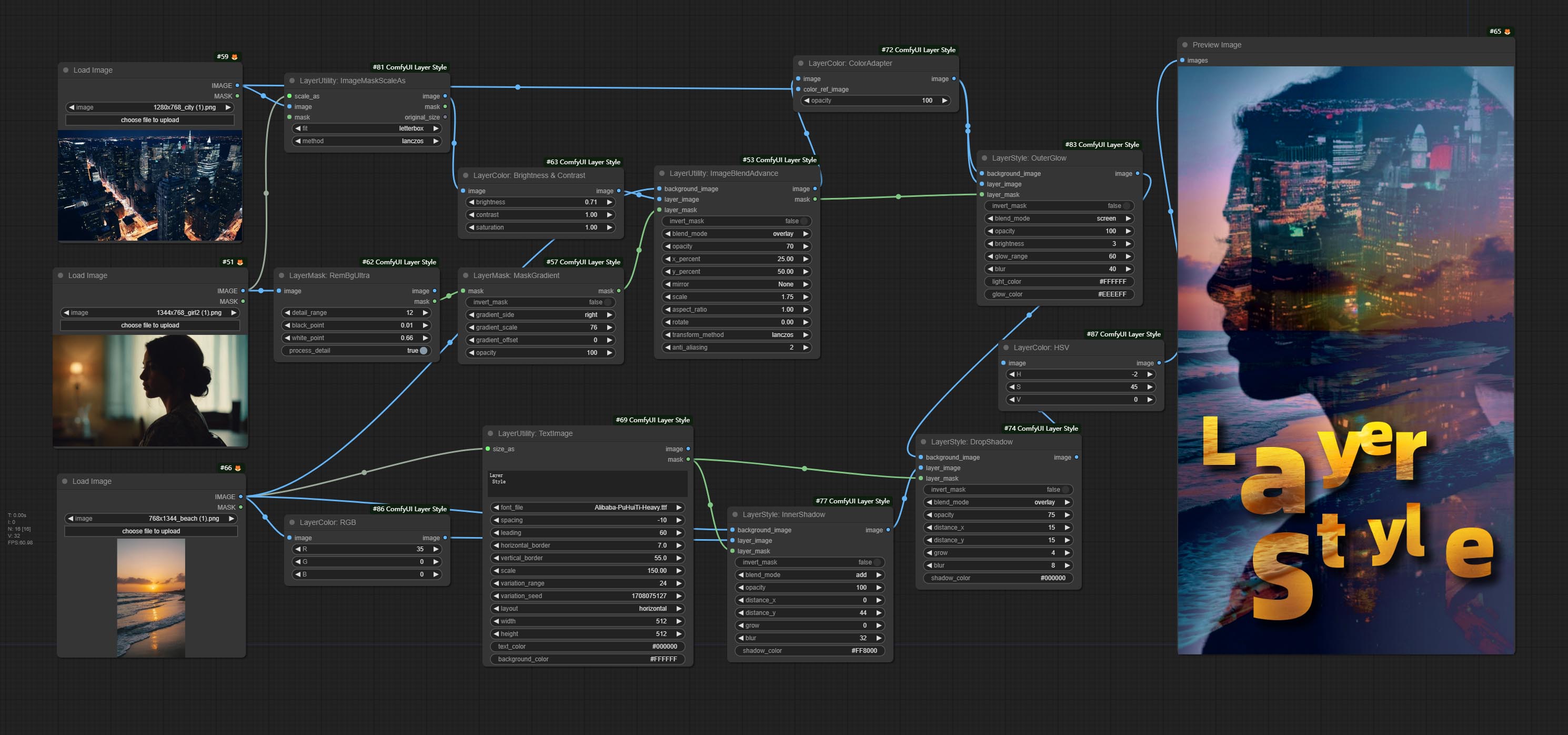

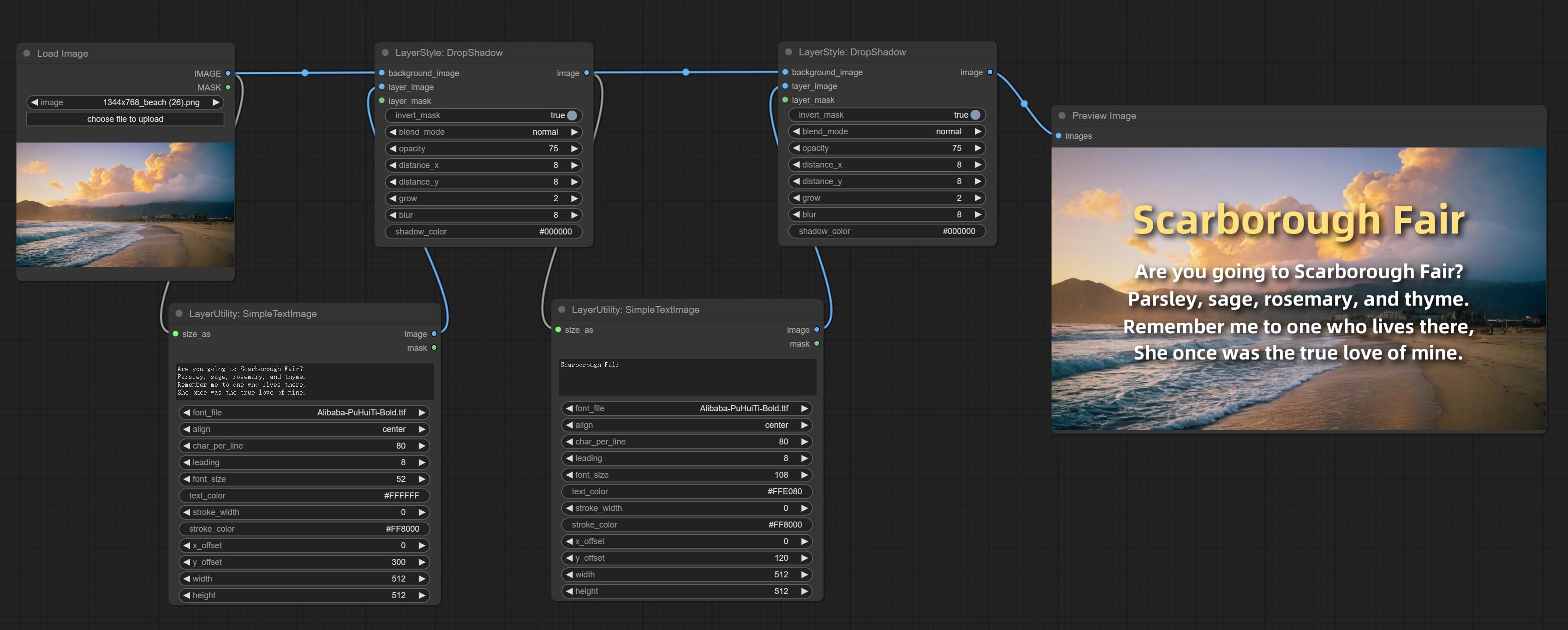

*This workflow (Title_example_WorkFlow.json) is in the workflow directory.

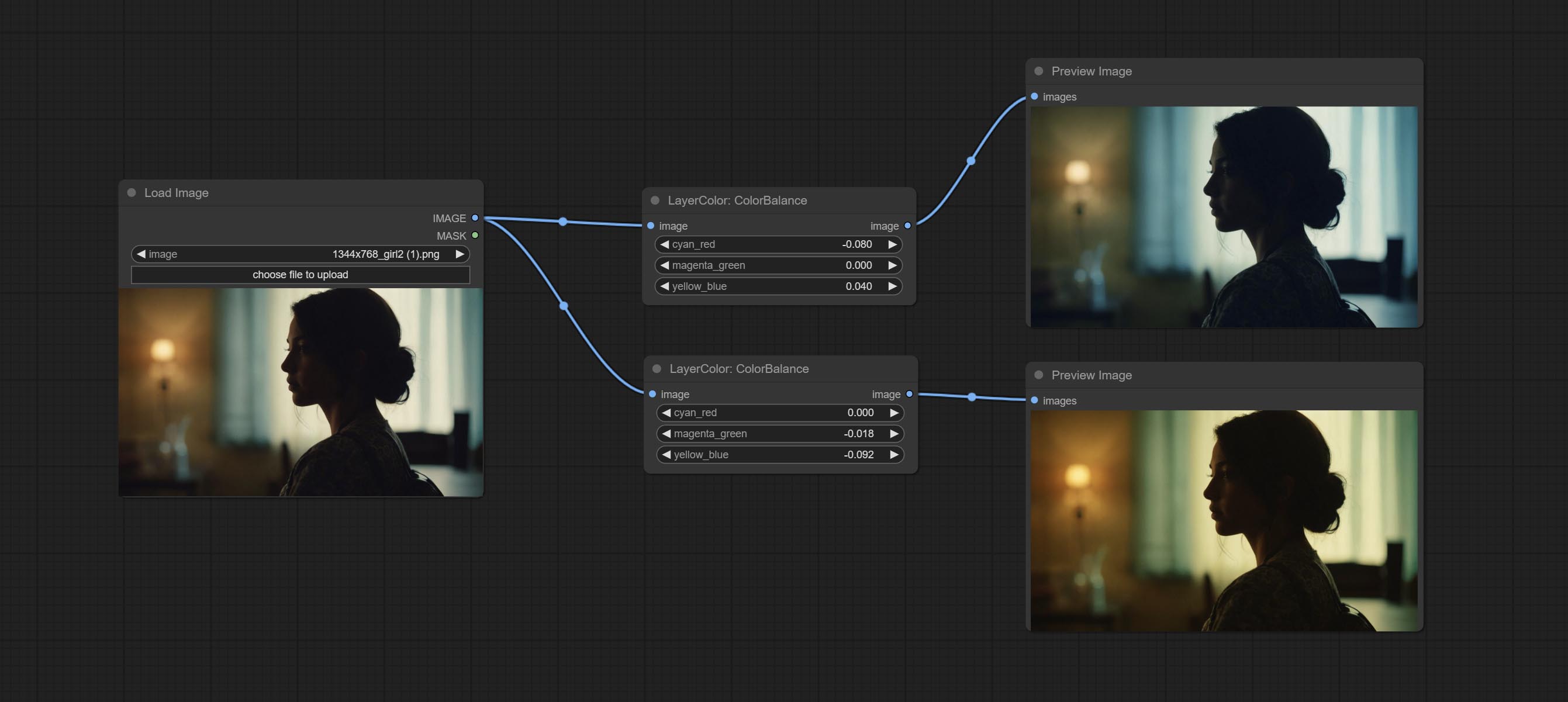

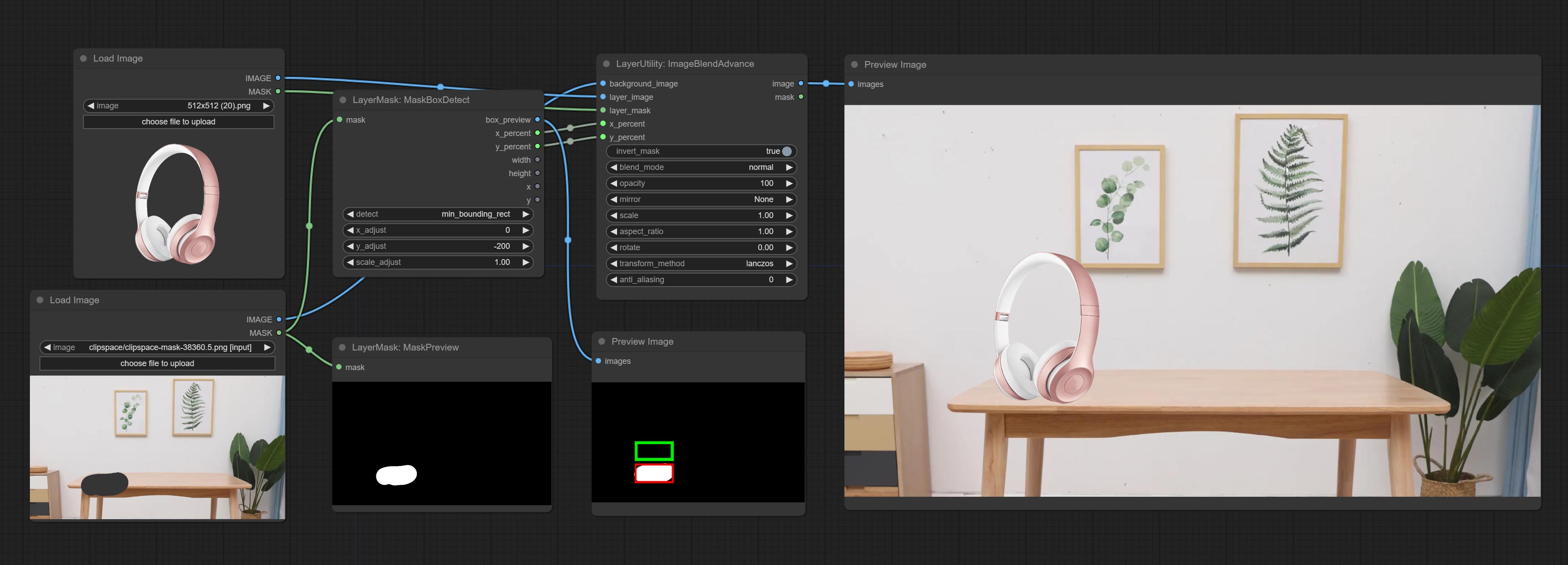

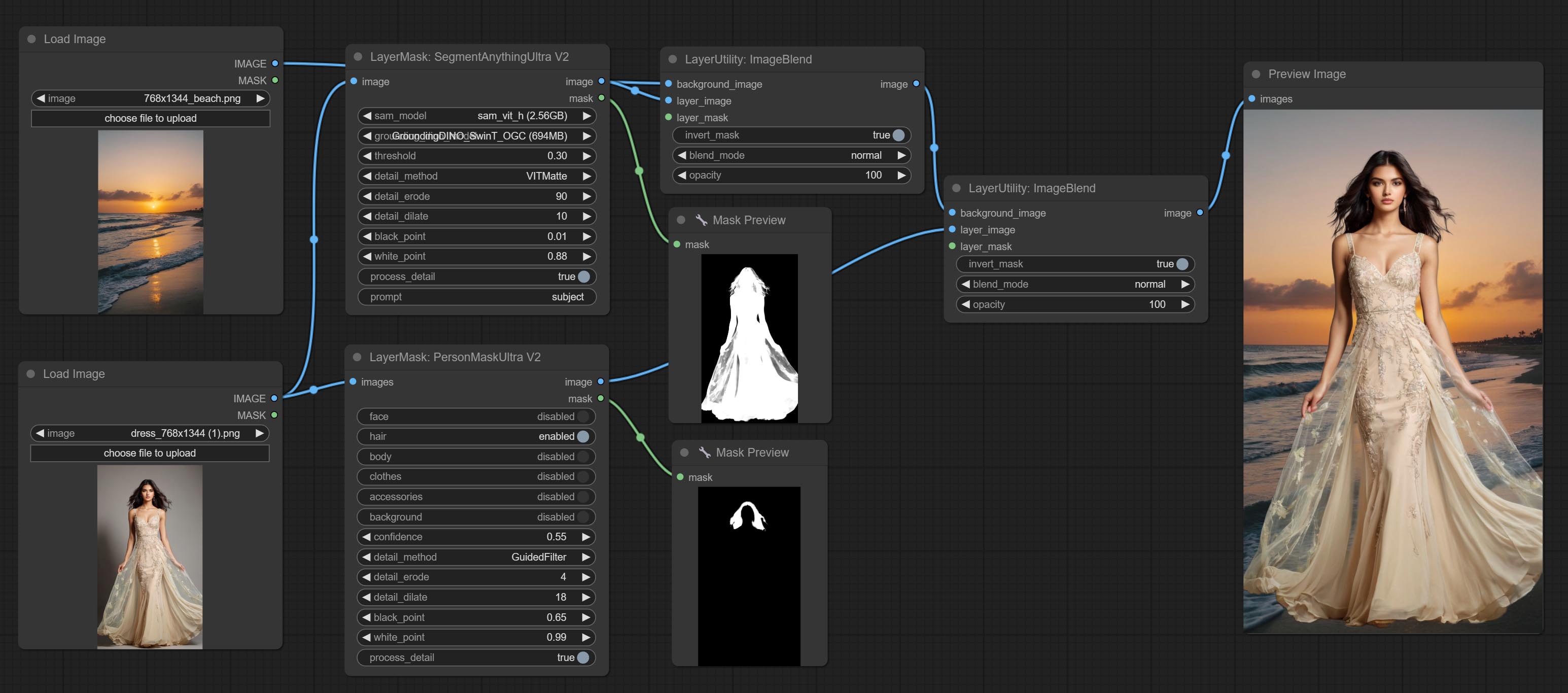

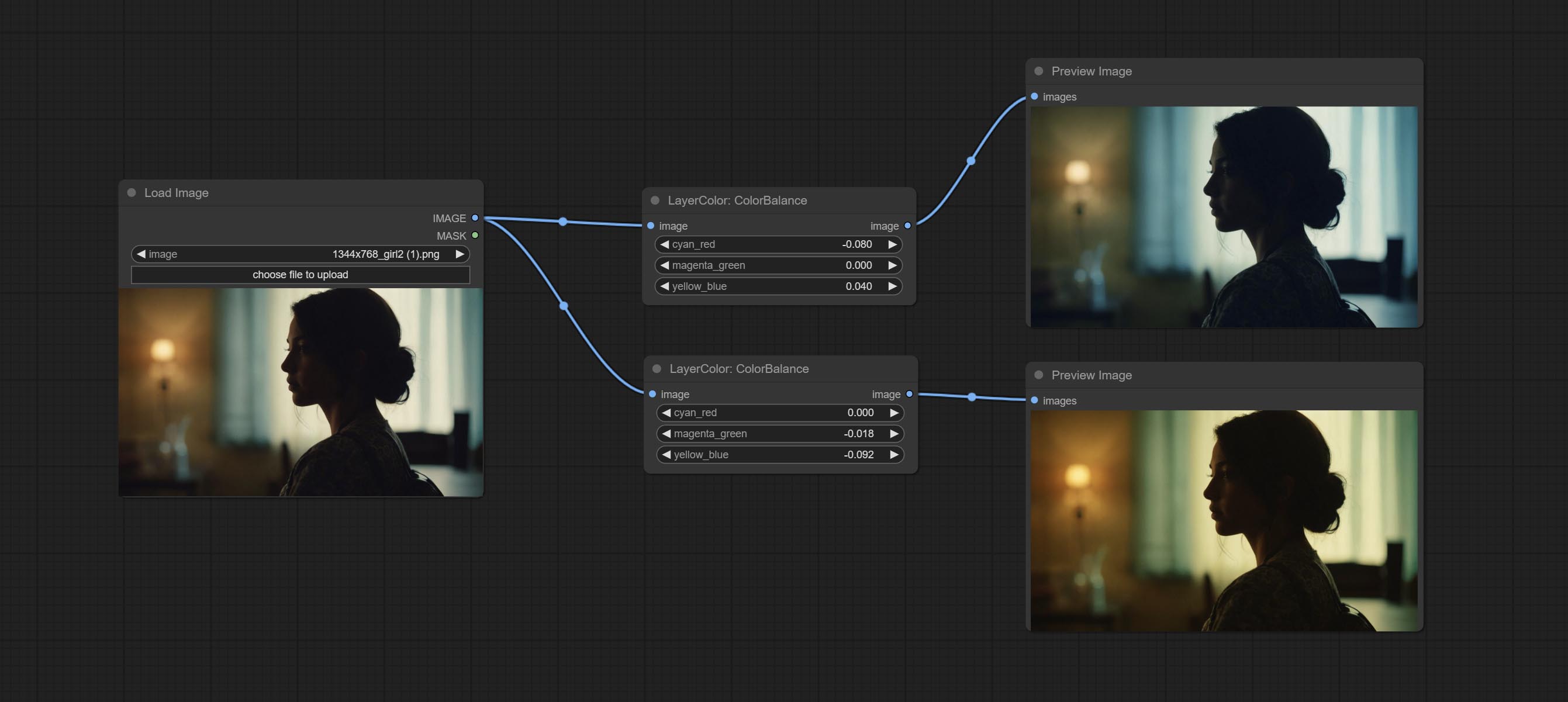

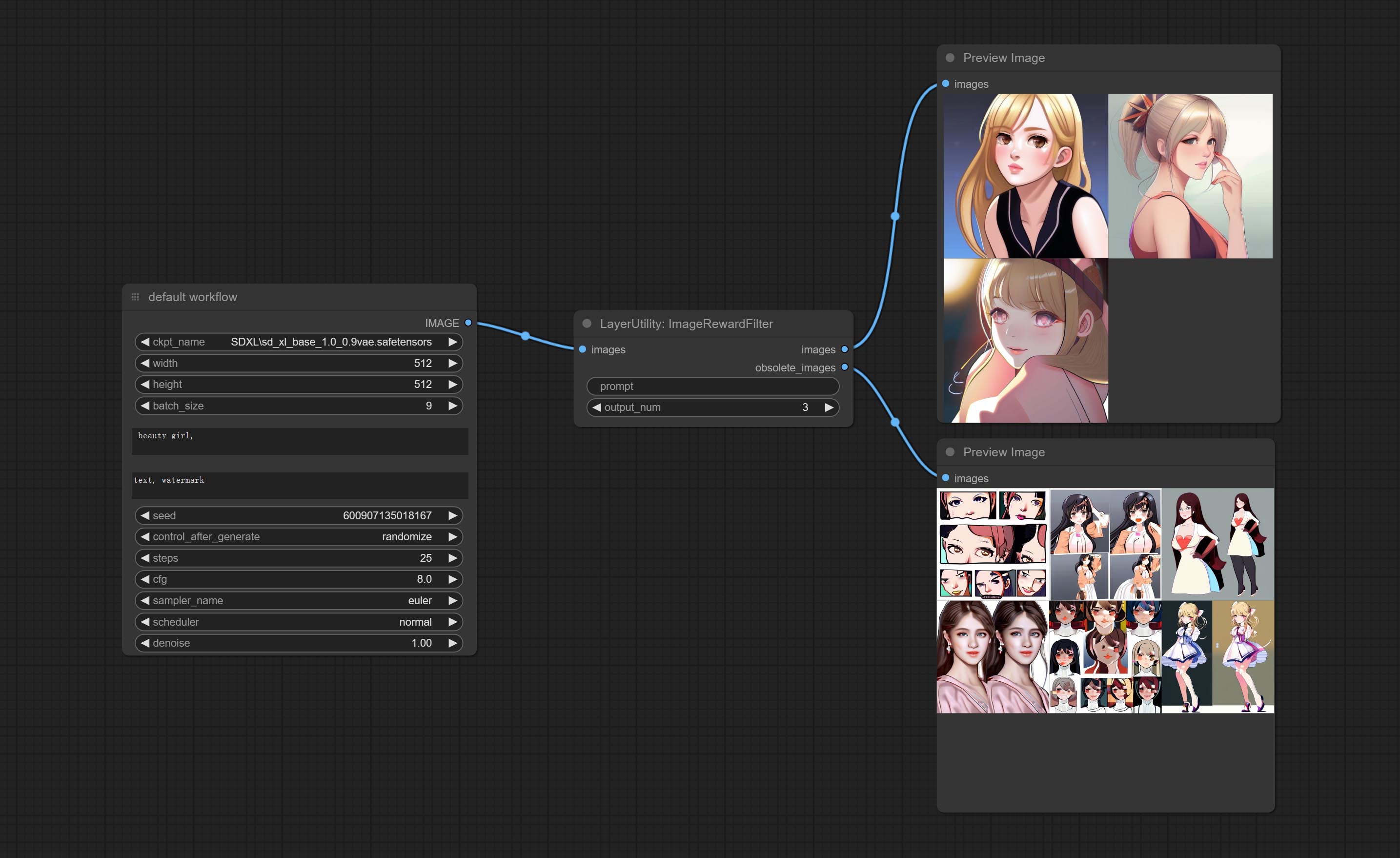

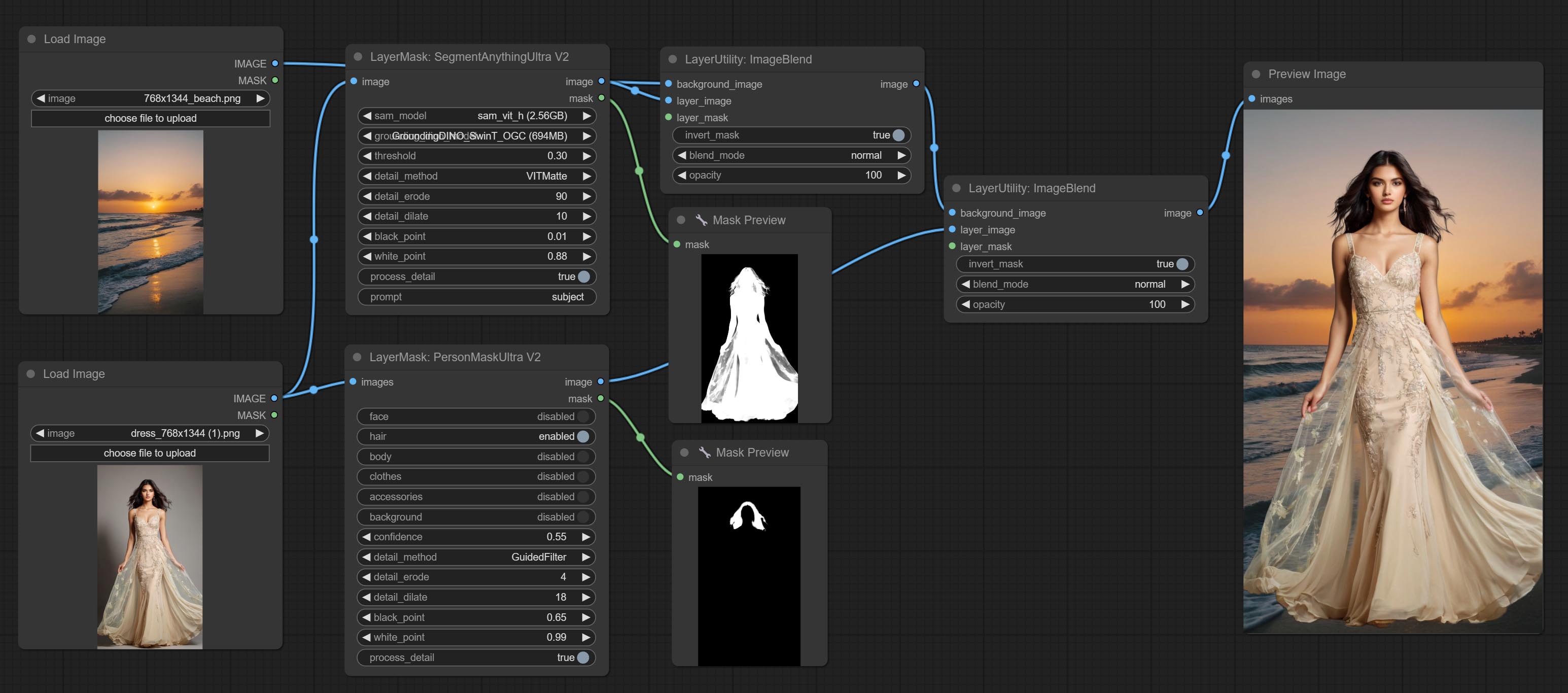

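Example workflows

Some JSON workflow files in the workflow directory are examples of how these nodes can be used in ComfyUI.

How to install

(The ComfyUI official portable package and the Aki ComfyUI package are used as examples. For other ComfyUI environments, modify the dependency environment directory accordingly.)

Install the plugin

Install dependency packages

For the ComfyUI official portable package, double-click install_requirements.bat in the plugin directory. For the Aki ComfyUI package, double-click install_requirements_aki.bat in the plugin directory. Then wait for the installation to complete.

Alternatively, install the dependency packages manually: open a CMD window in the ComfyUI\custom_nodes\ComfyUI_LayerStyle plugin directory and enter the following commands.

For the ComfyUI official portable package, enter: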

..\..\..\python_embeded\python.exe -s -m pip install .\whl\docopt-0.6.2-py2.py3-none-any.whl

..\..\..\python_embeded\python.exe -s -m pip install .\whl\hydra_core-1.3.2-py3-none-any.whl

..\..\..\python_embeded\python.exe -s -m pip install -r requirements.txt

.\repair_dependency.bat

For the Aki ComfyUI package, enter:

..\..\python\python.exe -s -m pip install .\whl\docopt-0.6.2-py2.py3-none-any.whl

..\..\python\python.exe -s -m pip install .\whl\hydra_core-1.3.2-py3-none-any.whl

..\..\python\python.exe -s -m pip install -r requirements.txt

.\repair_dependency.bat

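Download model files

Users in mainland China can download from BaiduNetdisk; other users can download from huggingface.co/chflame163/ComfyUI_LayerStyle.

Download all files and copy them to the ComfyUI\models folder. This link provides all the model files required by this plugin. Alternatively, download the model files according to the instructions of each node.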

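Common issues

If a node cannot be loaded properly or errors occur during use, check the error message in the ComfyUI terminal window. The following are common errors and their solutions.

Warning: xxxx.ini not found, use default xxxx..

This warning indicates that an ini file could not be found; it does not affect use. If you do not want to see these warnings, rename all *.ini.example files in the plugin directory to *.ini.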
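The renaming step can also be scripted. The following is a minimal sketch, not part of the plugin: the function name `activate_ini_examples` is hypothetical, and it copies each file instead of renaming it so the original examples remain available.

```python
import shutil
from pathlib import Path


def activate_ini_examples(plugin_dir):
    """Copy every *.ini.example in plugin_dir to *.ini, skipping existing files."""
    created = []
    for example in sorted(Path(plugin_dir).glob("*.ini.example")):
        target = example.with_suffix("")  # "foo.ini.example" -> "foo.ini"
        if target.exists():
            continue  # never overwrite settings that were already edited
        shutil.copyfile(example, target)
        created.append(target.name)
    return created
```

Call it with the path of the plugin directory, for example `activate_ini_examples("ComfyUI/custom_nodes/ComfyUI_LayerStyle")`; the return value lists the ini files that were created.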

ModuleNotFoundError: No module named 'psd_tools'

This error means that psd_tools is not installed correctly.

Solution:

- Close ComfyUI, open a terminal window in the plugin directory, and run ../../../python_embeded/python.exe -s -m pip install psd_tools

- If an error such as ModuleNotFoundError: No module named 'docopt' occurs while installing psd_tools, download the docopt WHL and install it manually. Run ../../../python_embeded/python.exe -s -m pip install path/docopt-0.6.2-py2.py3-none-any.whl in the terminal window, where path is the path of the WHL file.

Cannot import name 'guidedFilter' from 'cv2.ximgproc'

This error is caused by an incorrect version of the opencv-contrib-python package, or by this package being overwritten by another OpenCV package.

NameError: name 'guidedFilter' is not defined

The cause of this problem is the same as above.

Cannot import name 'VitMatteImageProcessor' from 'transformers'

This error is caused by a version of the transformers package that is too low.

insightface loads very slowly

This problem is caused by a version of the protobuf package that is too low.

For the problems with the three dependency packages above, double-click repair_dependency.bat (for the official ComfyUI Portable) or repair_dependency_aki.bat (for ComfyUI-Aki-v1.x) in the plugin folder to fix them.
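Before running the repair script, it can help to see which OpenCV distributions are installed side by side, since one of them overwriting opencv-contrib-python is the cause described above. This is a small diagnostic sketch using only the standard library; the list of distribution names is an assumption covering the four common OpenCV wheels on PyPI, and the function name is hypothetical.

```python
from importlib import metadata

# Assumed list: the four OpenCV wheels commonly published on PyPI.
OPENCV_DISTRIBUTIONS = (
    "opencv-python",
    "opencv-python-headless",
    "opencv-contrib-python",
    "opencv-contrib-python-headless",
)


def installed_opencv_packages():
    """Return {distribution name: version} for every OpenCV wheel that is installed."""
    found = {}
    for name in OPENCV_DISTRIBUTIONS:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            pass  # this distribution is not installed
    return found
```

Run it with the same Python interpreter that ComfyUI uses. If more than one entry is reported, the packages are likely conflicting.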

onnxruntime::python::CreateExecutionProviderInstance CUDA_PATH is set but CUDA wasn't able to be loaded. Please install the correct version of CUDA and cuDNN as mentioned in the GPU requirements page.

Solution: reinstall the onnxruntime dependency package.

Error loading model xxx: We couldn't connect to huggingface.co ...

Check the network environment. If you cannot access huggingface.co normally in China, try modifying the huggingface_hub package to force the use of hf_mirror.
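As an alternative to editing the huggingface_hub package itself, the library also reads the `HF_ENDPOINT` environment variable. The sketch below uses that variable instead of the package modification described above; the mirror URL `https://hf-mirror.com` and the function name `use_hf_mirror` are assumptions for illustration.

```python
import os


def use_hf_mirror(endpoint="https://hf-mirror.com"):
    """Point huggingface_hub at a mirror through the HF_ENDPOINT environment variable."""
    # Assumption: this runs before huggingface_hub is imported, because the
    # library reads the variable when it is first loaded.
    os.environ["HF_ENDPOINT"] = endpoint
    return os.environ["HF_ENDPOINT"]
```

Setting the same variable in the shell or in the ComfyUI startup script before launching has the same effect.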

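ValueError: Trimap did not contain foreground values (xxxx...)

This error occurs because the mask area is too large or too small when the PyMatting method is used to process the mask edges.

Solution:

- Adjust the parameters to change the effective area of the mask, or use another method to process the edges.

Requests.exceptions.ProxyError: HTTPSConnectionPool(xxxx...)

When this error occurs, check the network environment.

UnboundLocalError: local variable 'clip_processor' referenced before assignment

UnboundLocalError: local variable 'text_model' referenced before assignment

If this error occurs when running the JoyCaption2 node and you have confirmed that the model files are in the correct directory, check that the transformers dependency package version is 4.43.2 or higher. If the transformers version is 4.45.0 or higher and the following error message also appears: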

Error loading models: Descriptors cannot be created directly.

If this call came from a _pb2.py file, your generated code is out of date and must be regenerated with protoc >= 3.19.0.

......

downgrade the protobuf dependency package to 3.20.3, or set the environment variable PROTOCOL_BUFFERS_PYTHON_IMPLEMENTATION=python.
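The version thresholds and the environment variable mentioned above can be checked and set from Python. This is a rough sketch under stated assumptions: the helper names are hypothetical, and the version comparison only looks at the first three numeric components.

```python
import os
import re


def version_tuple(text):
    """Turn a version string such as "4.45.0" into a comparable tuple (4, 45, 0)."""
    return tuple(int(n) for n in re.findall(r"\d+", text)[:3])


def meets_minimum(installed, minimum):
    """True when the installed version is at least the minimum version."""
    return version_tuple(installed) >= version_tuple(minimum)


def enable_pure_python_protobuf():
    """Set the variable named in the text; must happen before protobuf is imported."""
    os.environ["PROTOCOL_BUFFERS_PYTHON_IMPLEMENTATION"] = "python"
```

For example, `meets_minimum(installed, "4.43.2")` can be called with the version string that `pip show transformers` reports.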

Update

If a dependency package error occurs after updating, double-click repair_dependency.bat (for the official ComfyUI Portable) or repair_dependency_aki.bat (for ComfyUI-Aki-v1.x) in the plugin folder to reinstall the dependency packages.

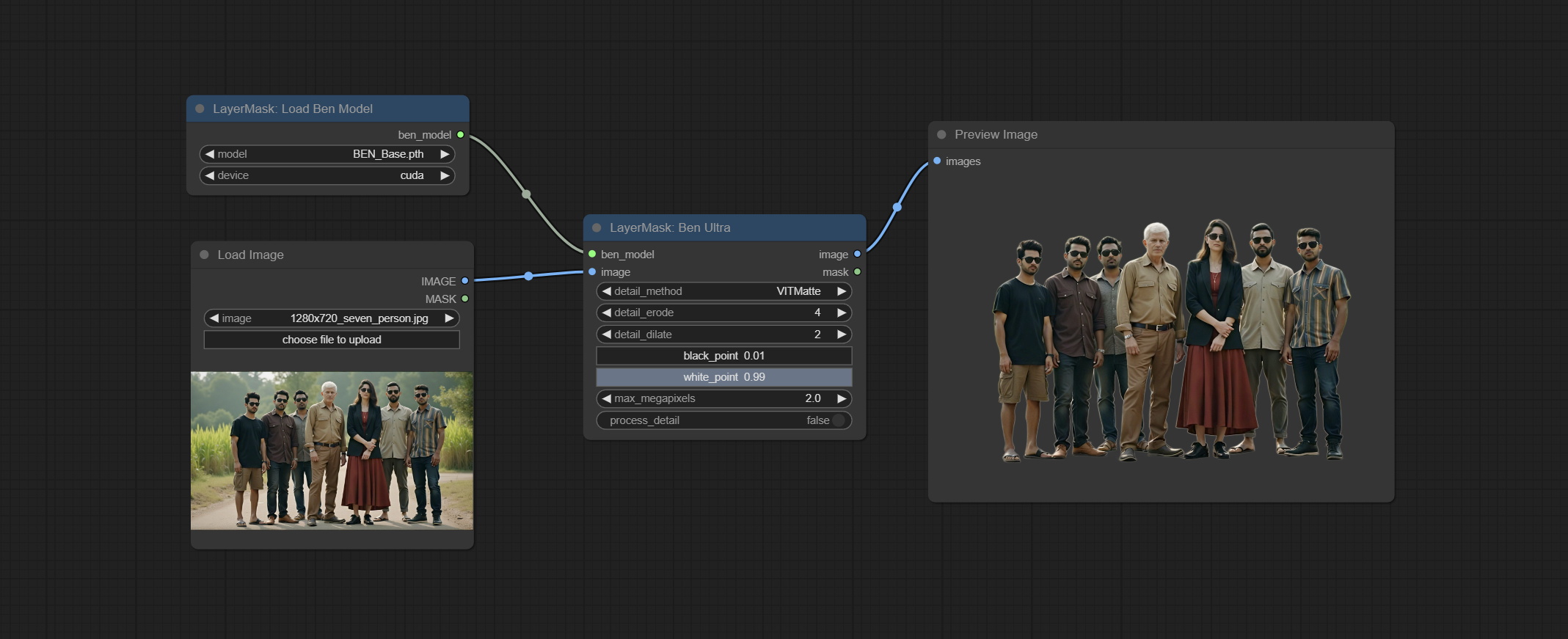

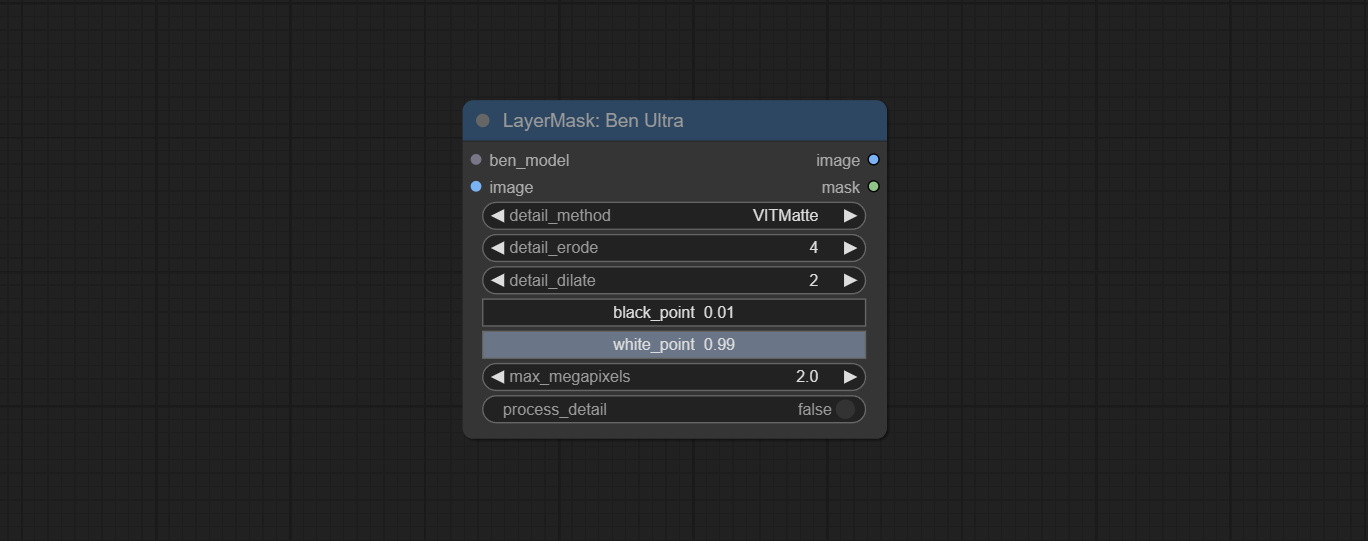

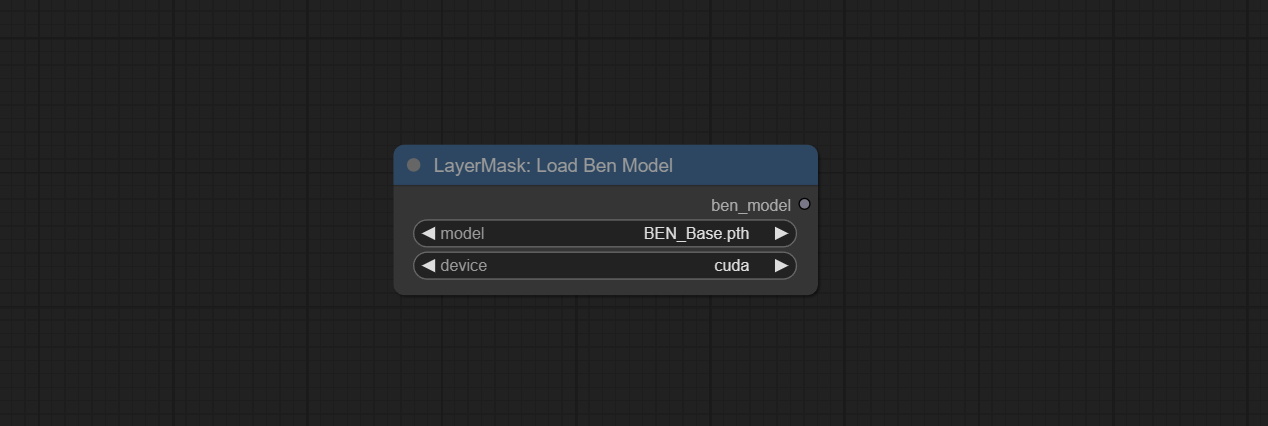

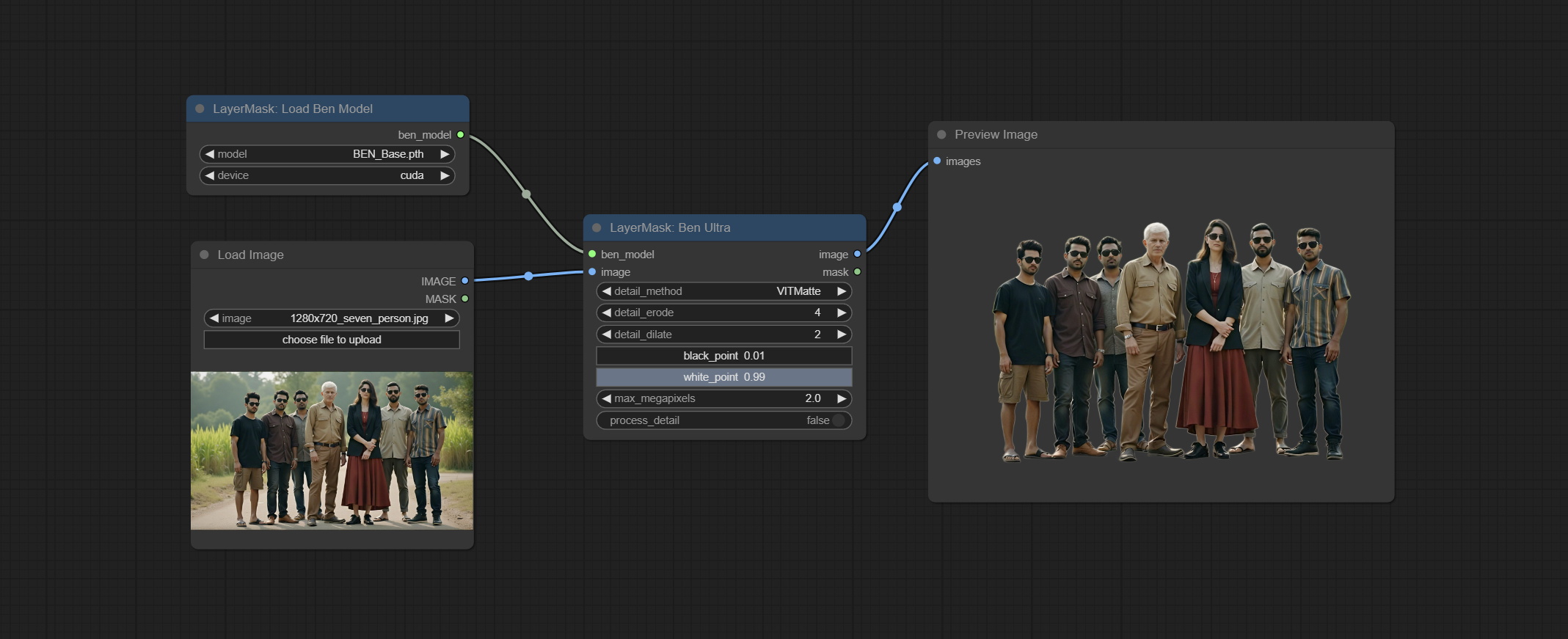

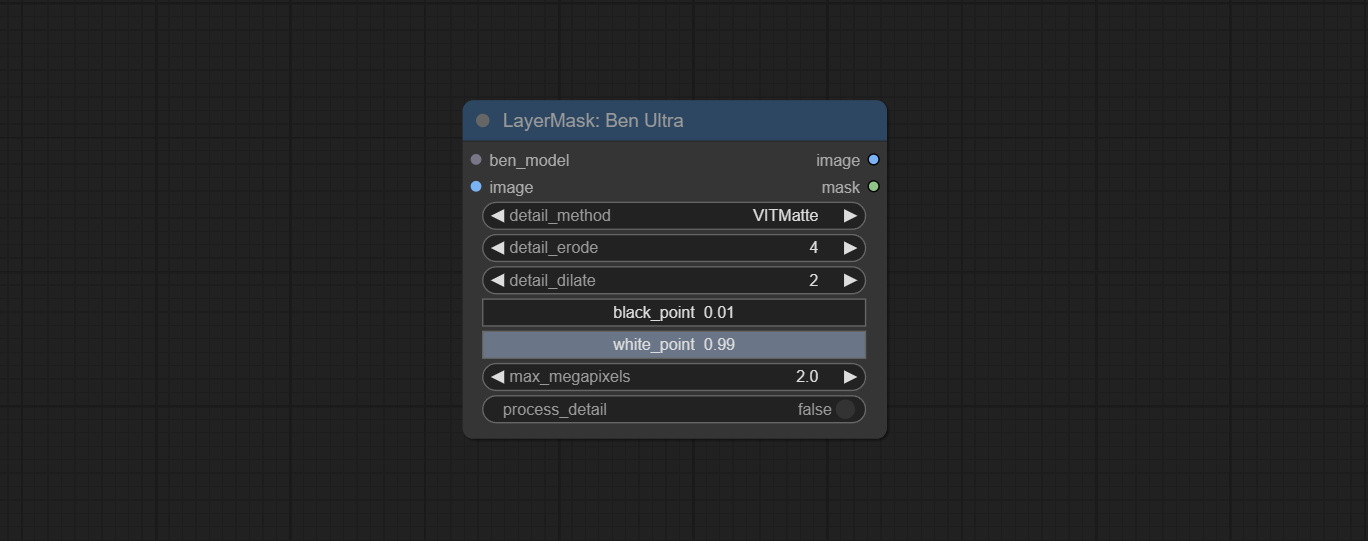

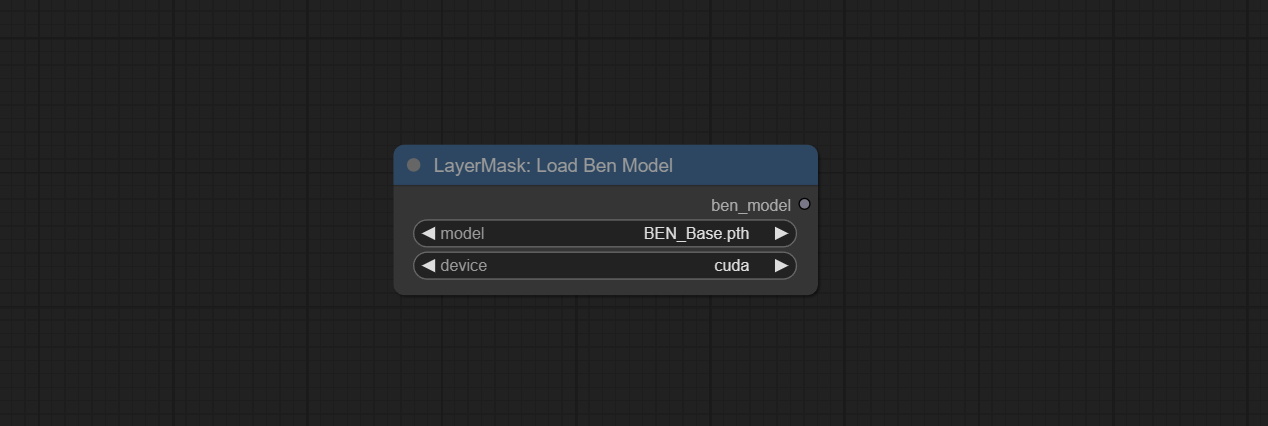

Commit the BenUltra and LoadBenModel nodes. These two nodes are the implementation of the PramaLLC/BEN project in ComfyUI.

Download BEN_Base.pth and config.json from Huggingface or BaiduNetdisk and copy them to the ComfyUI/models/BEN folder.

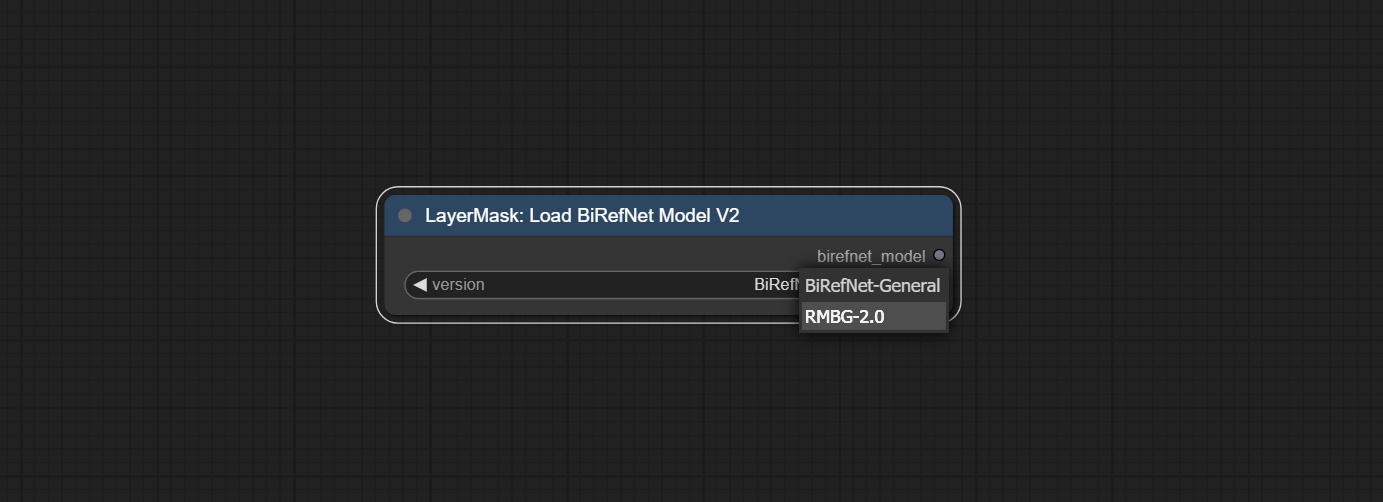

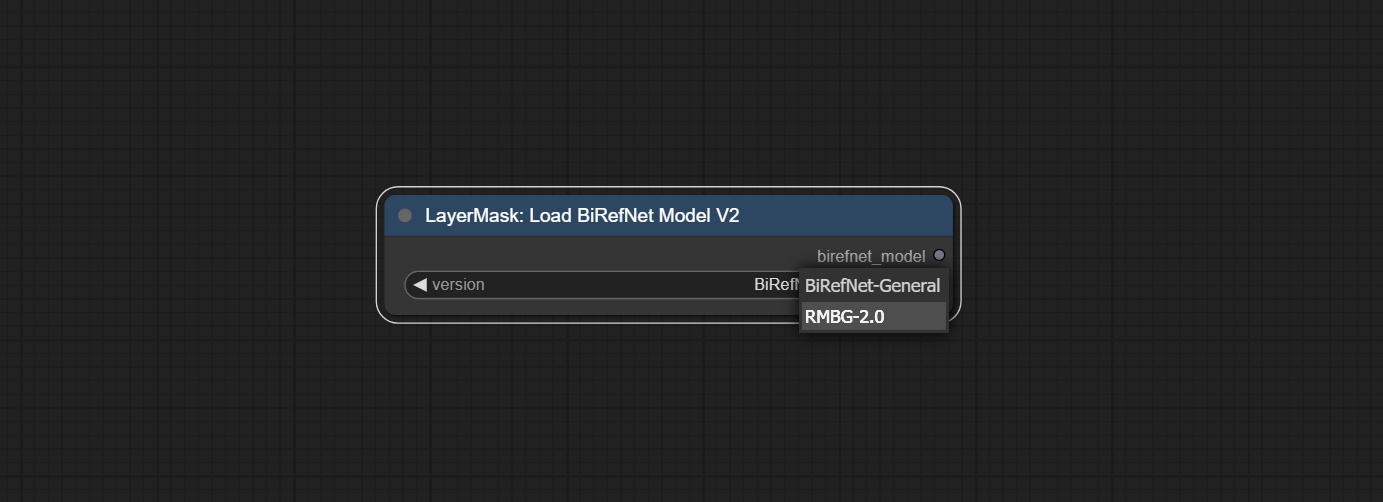

Merge the PR submitted by jimlee2048, which adds the LoadBiRefNetModelV2 node and support for loading the RMBG 2.0 model.

Download the model files from Huggingface or BaiduNetdisk and copy them to the ComfyUI/models/BiRefNet/RMBG-2.0 folder.

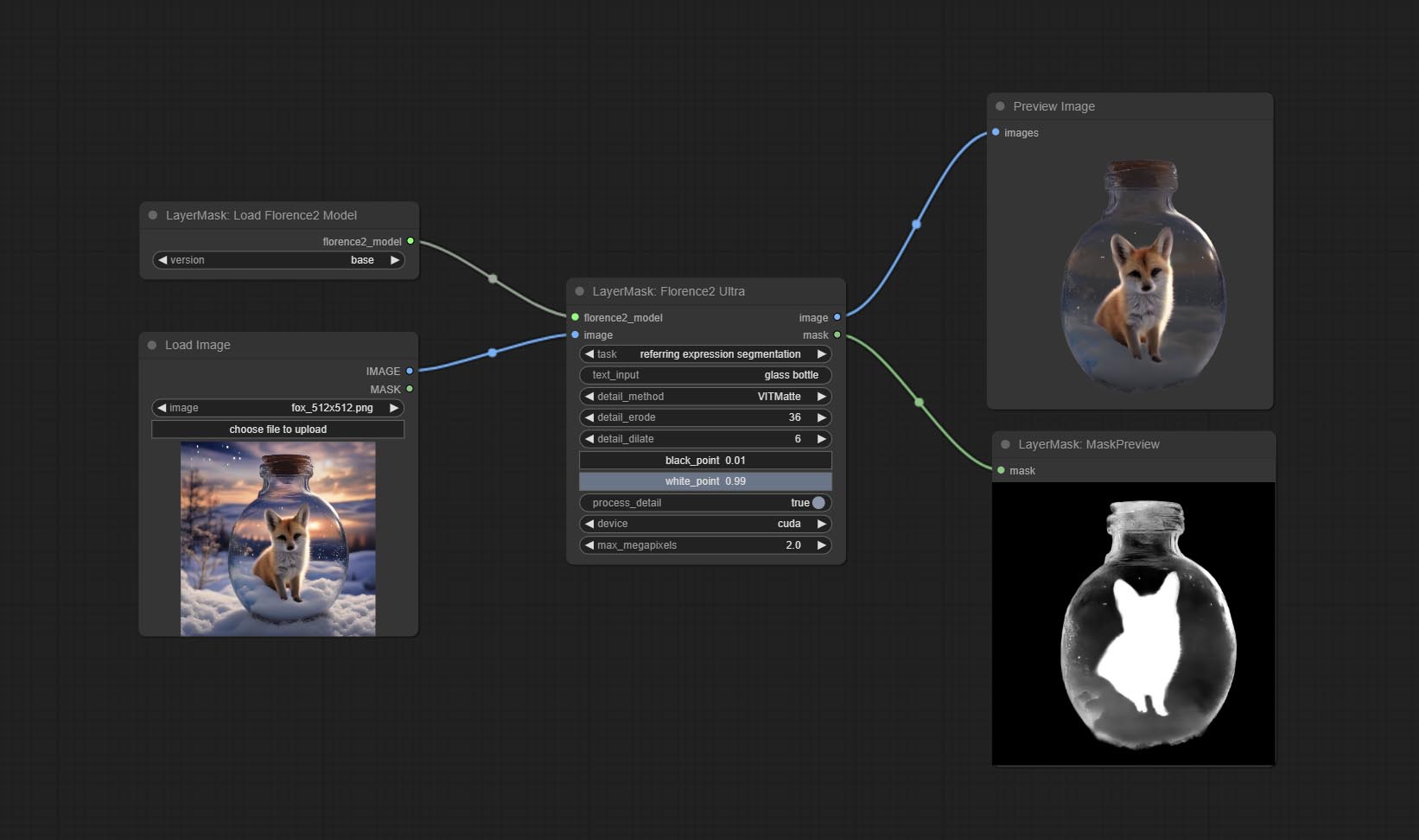

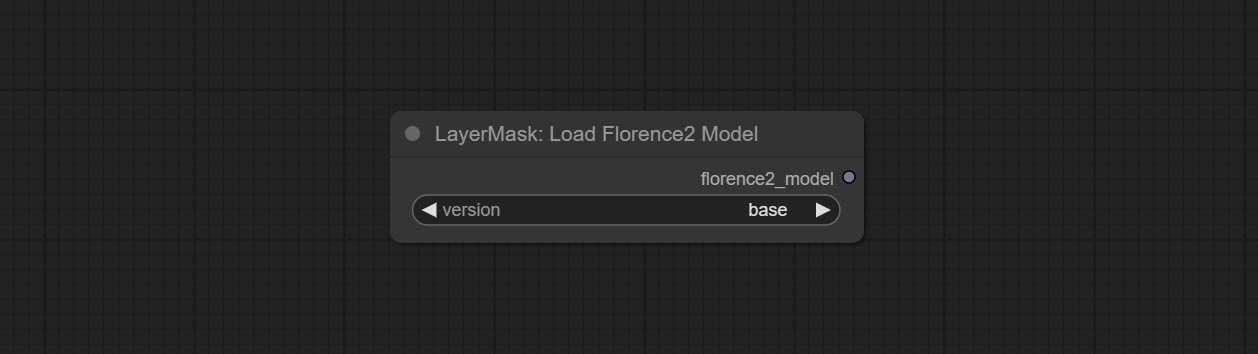

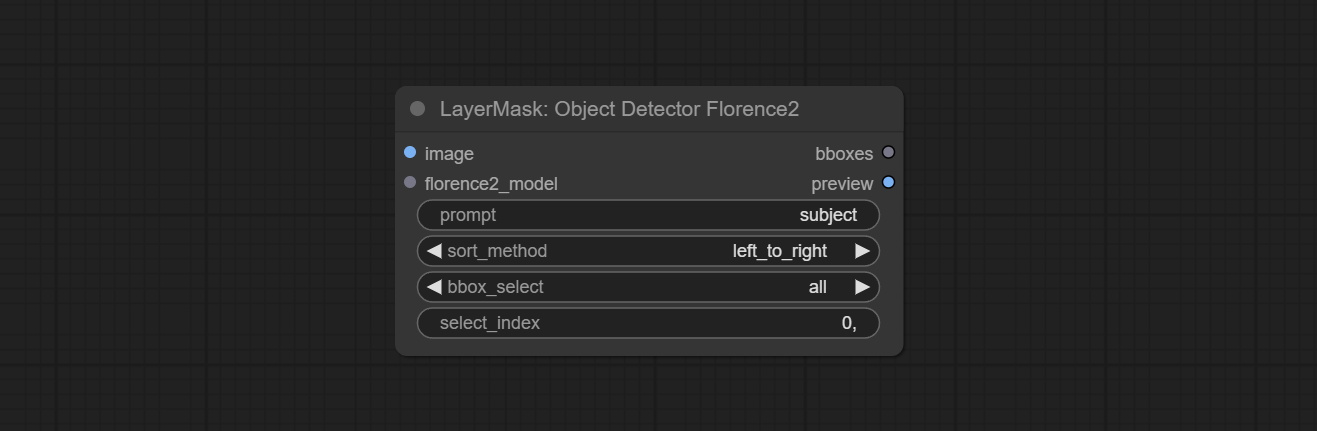

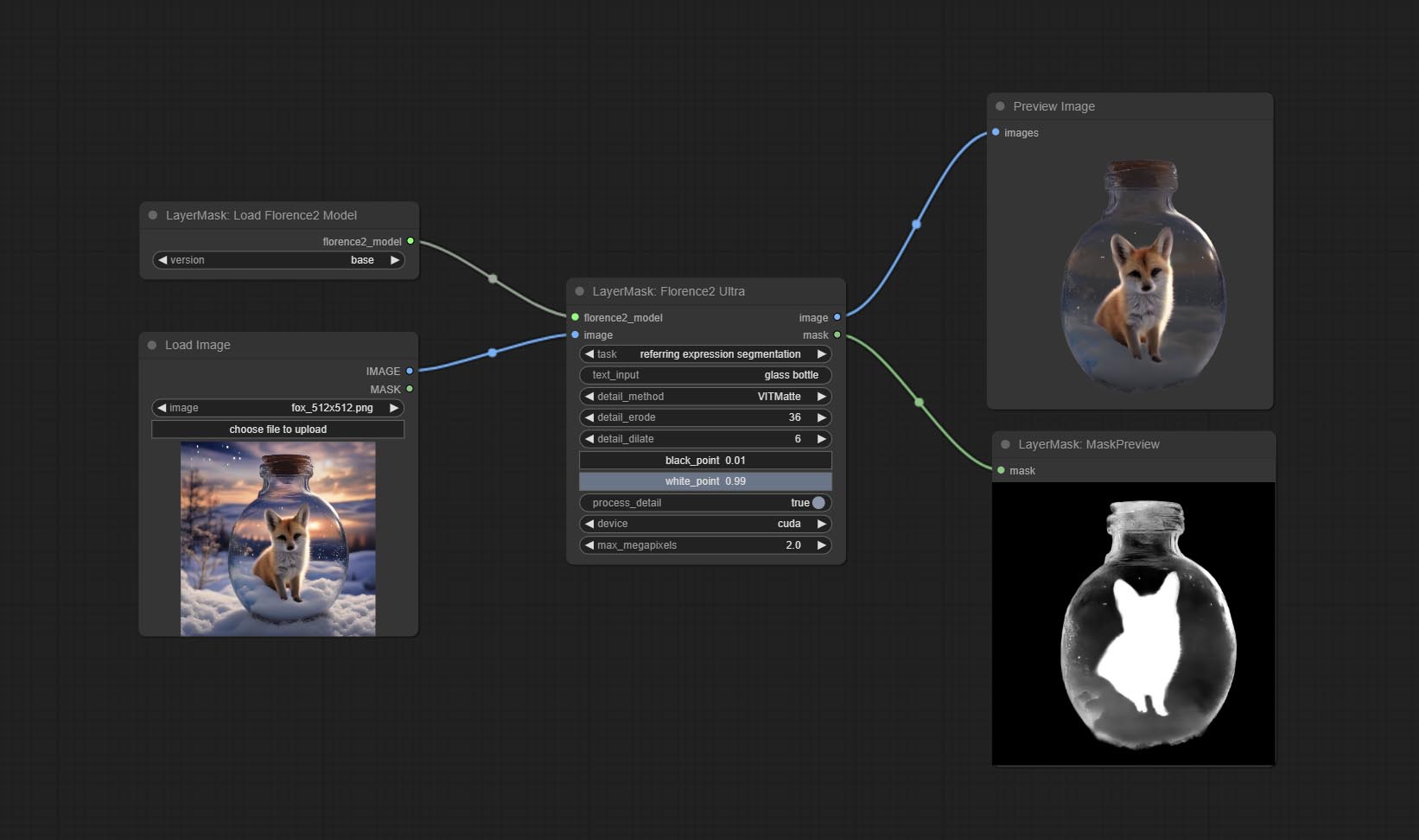

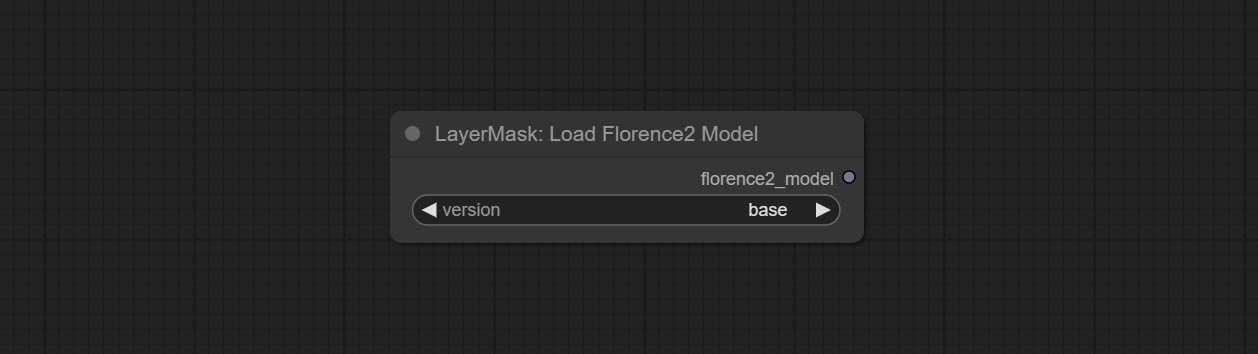

The Florence2 nodes support the base-PromptGen-v2.0 and large-PromptGen-v2.0 models. Download the two folders, base-PromptGen-v2.0 and large-PromptGen-v2.0, from Huggingface or BaiduNetdisk and copy them to the ComfyUI/models/florence2 folder.

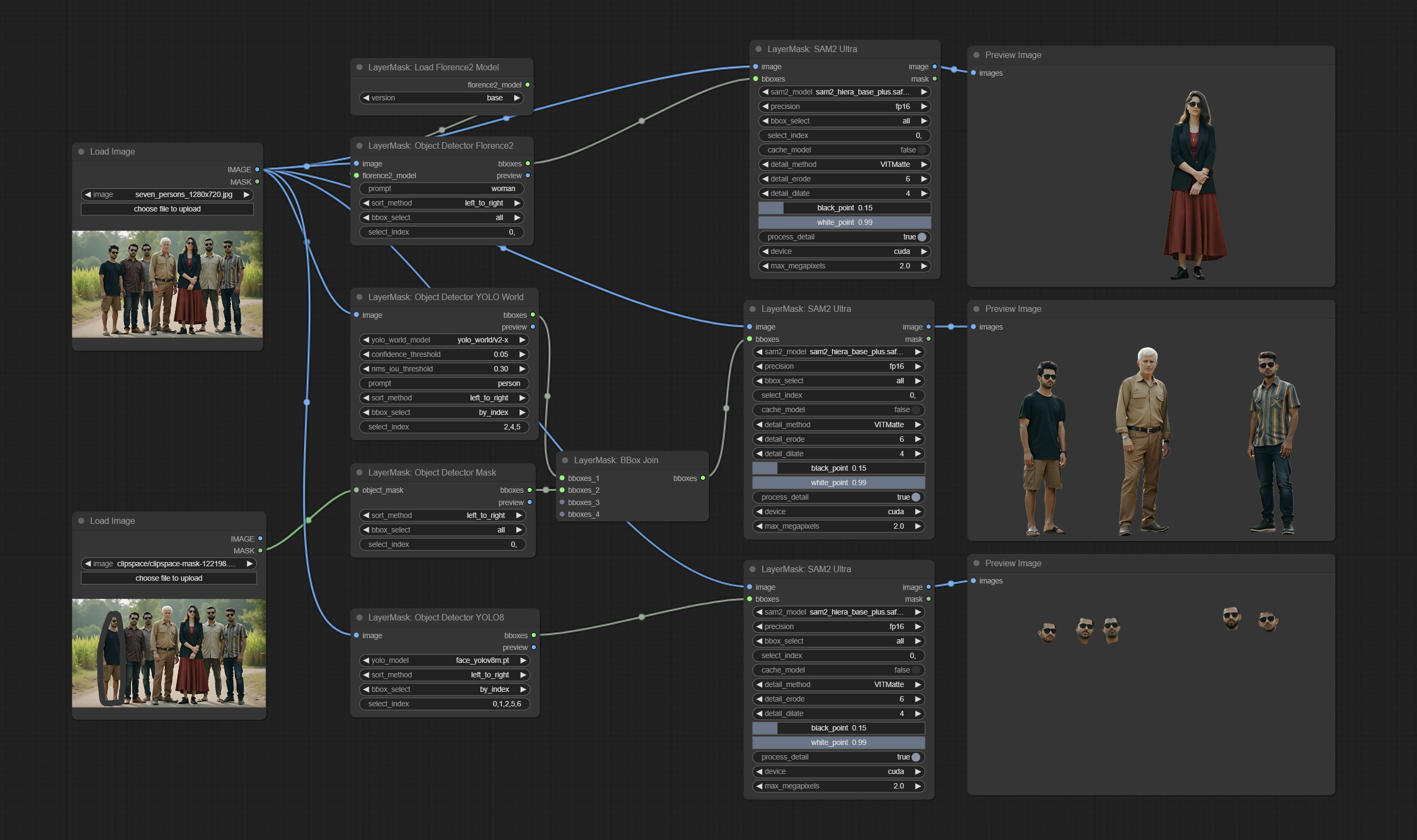

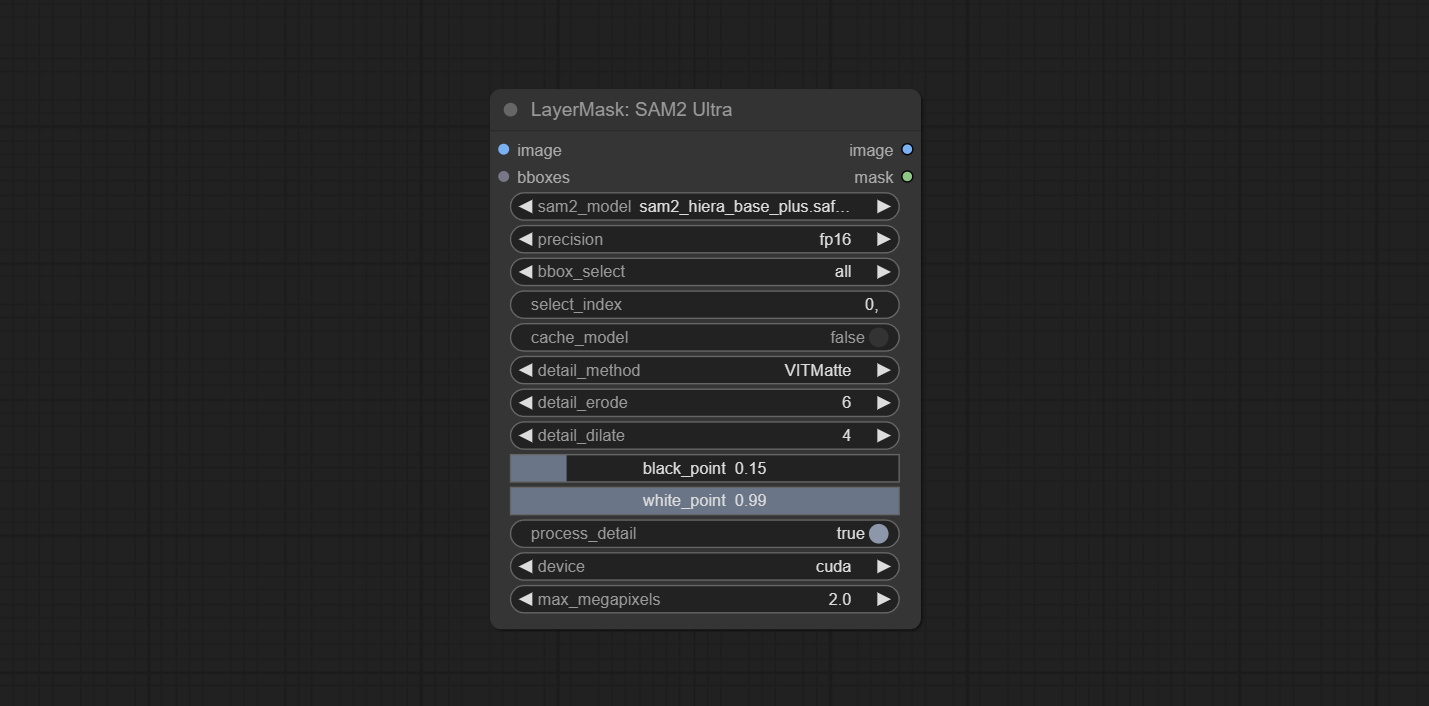

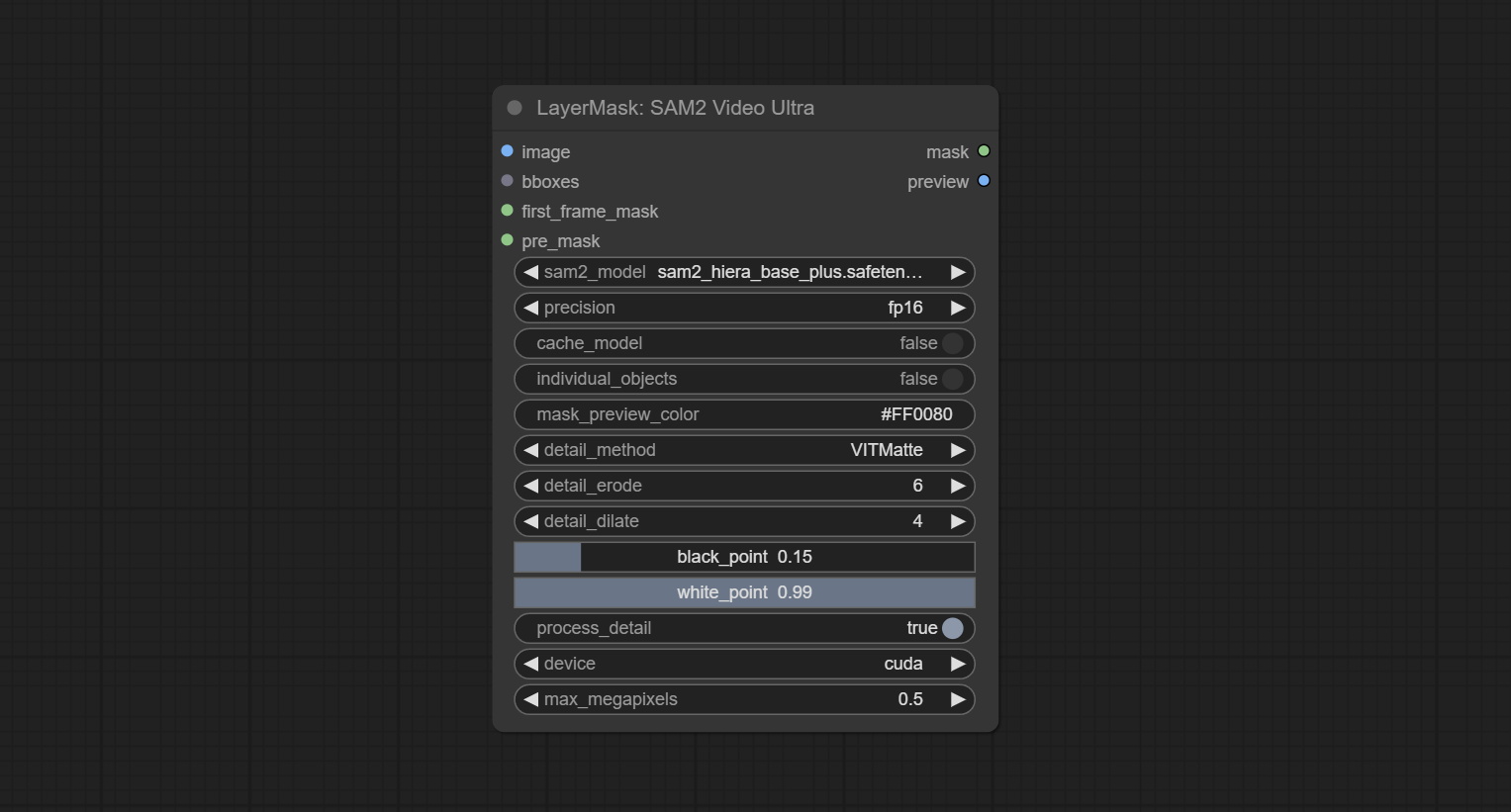

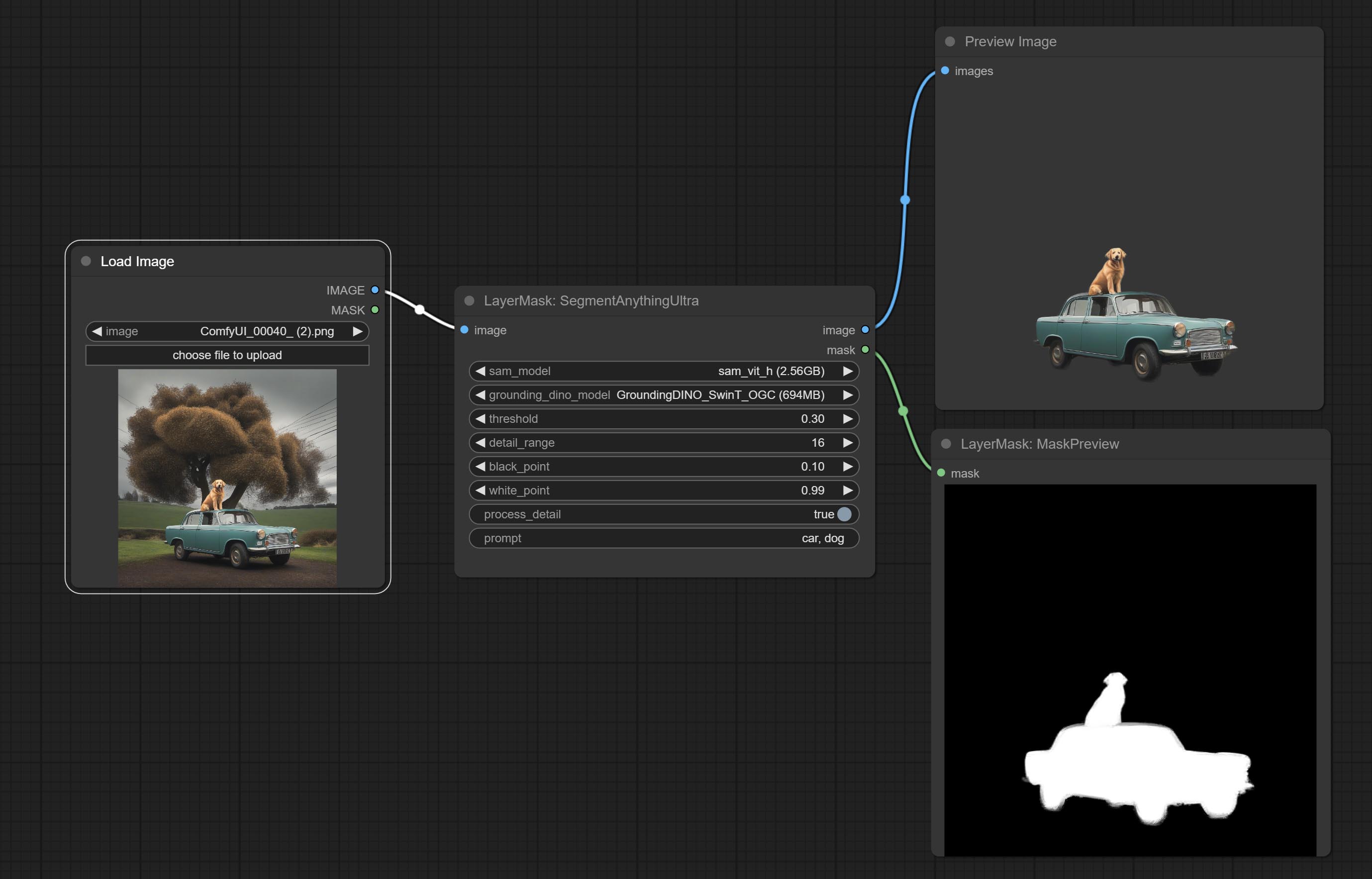

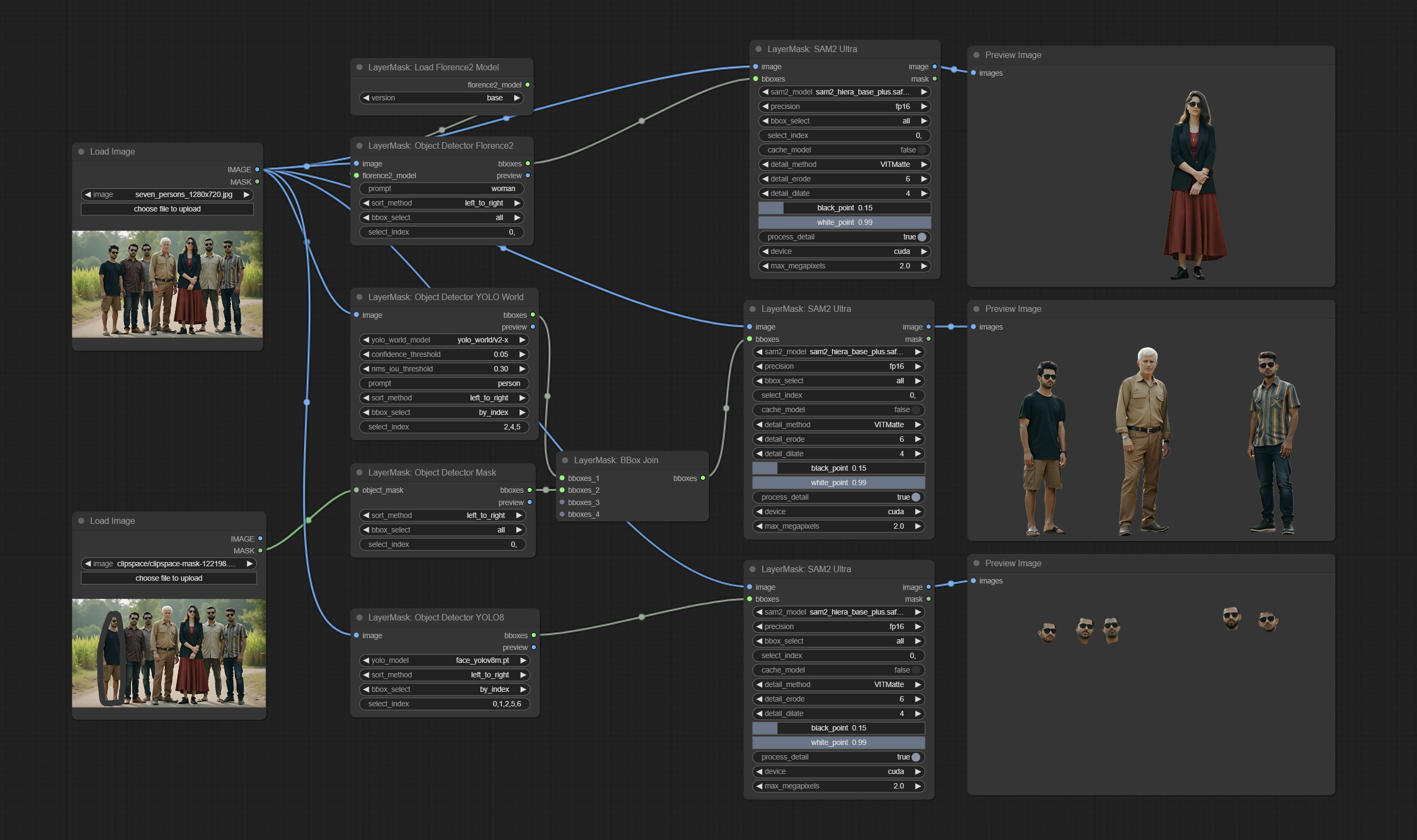

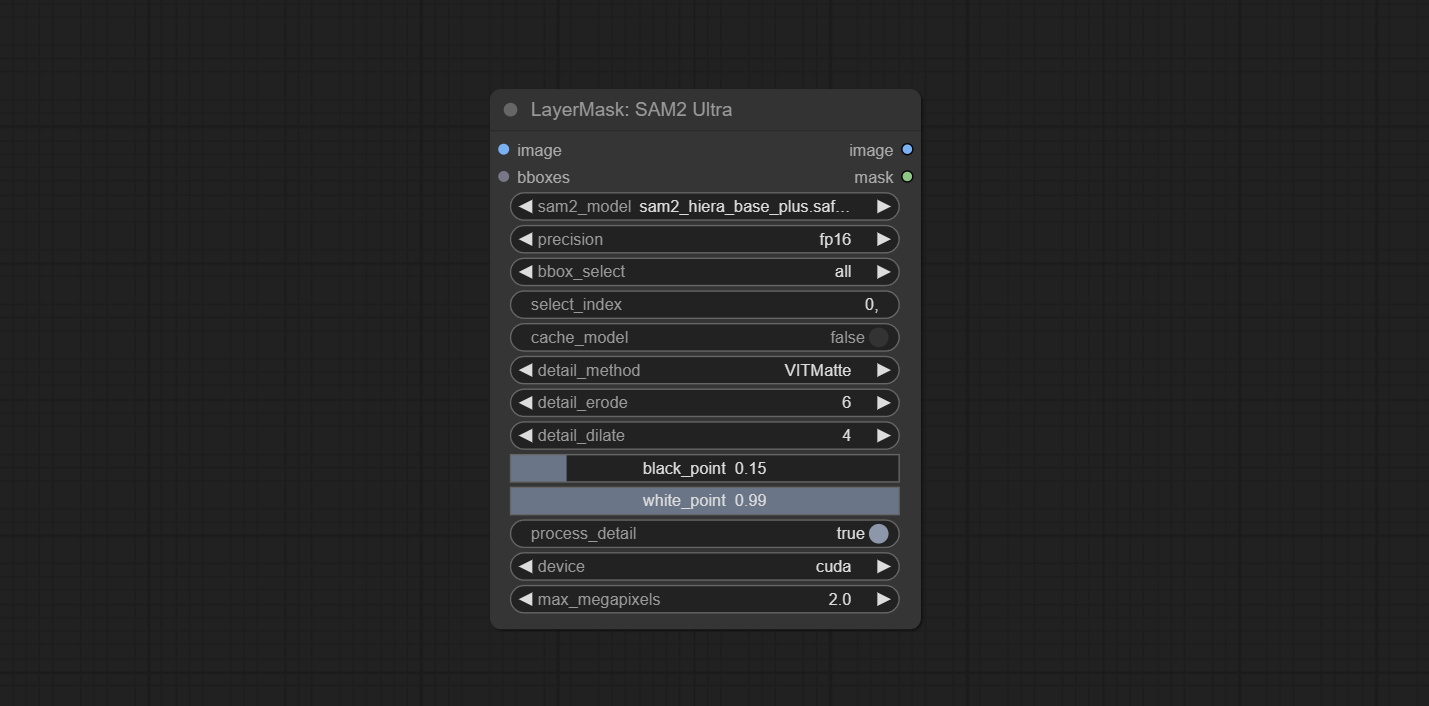

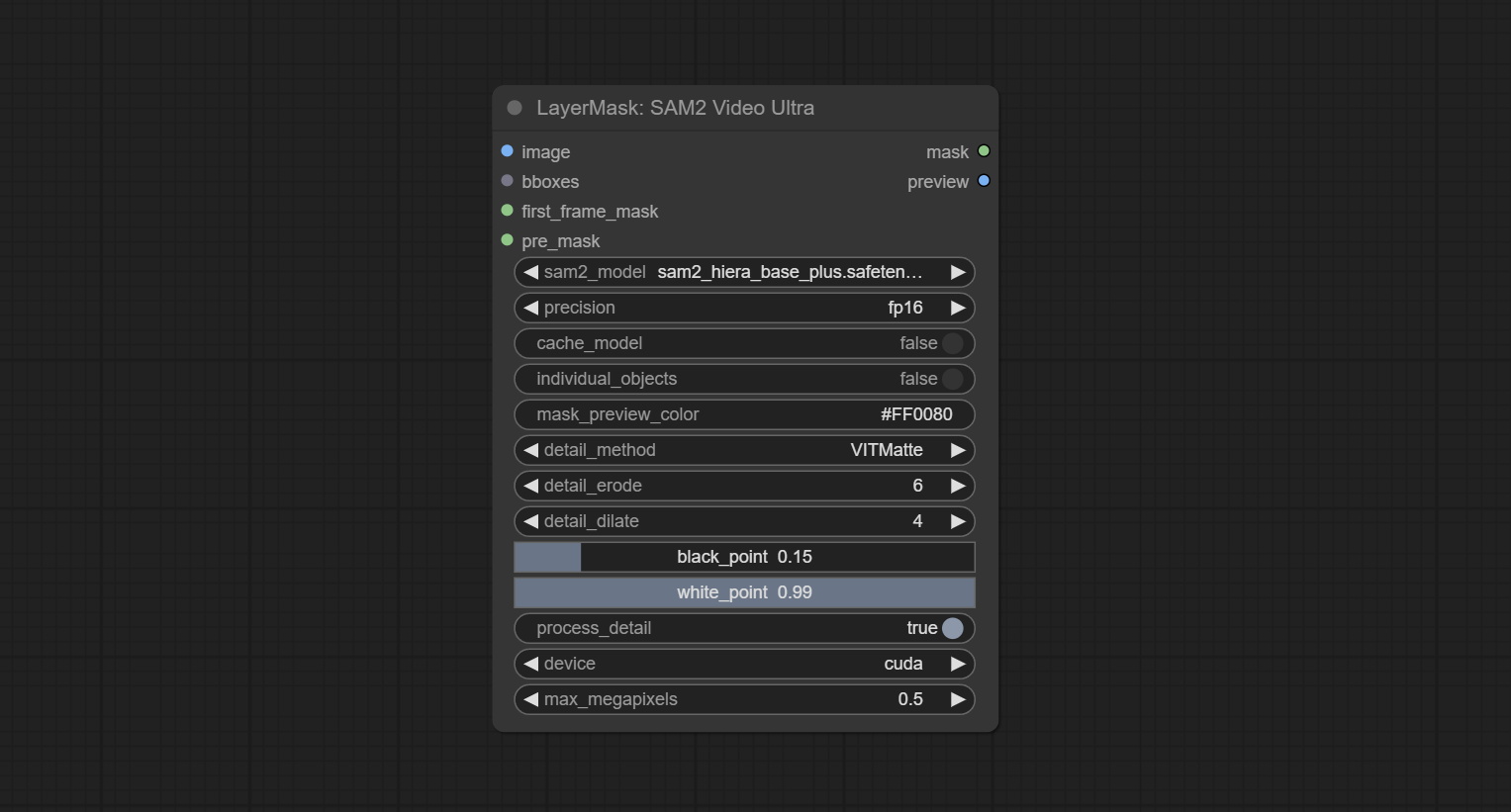

The SAM2Ultra and ObjectDetector nodes support image batches.

The SAM2Ultra and SAM2VideoUltra nodes add support for the SAM2.1 models, including kijai's FP16 models. Download the model files from BaiduNetdisk or huggingface.co/sam2-safetensors and copy them to the ComfyUI/models/sam2 folder.

Commit the JoyCaption2Split and LoadJoyCaption2Model nodes. Sharing one model across multiple JoyCaption2 nodes improves efficiency.

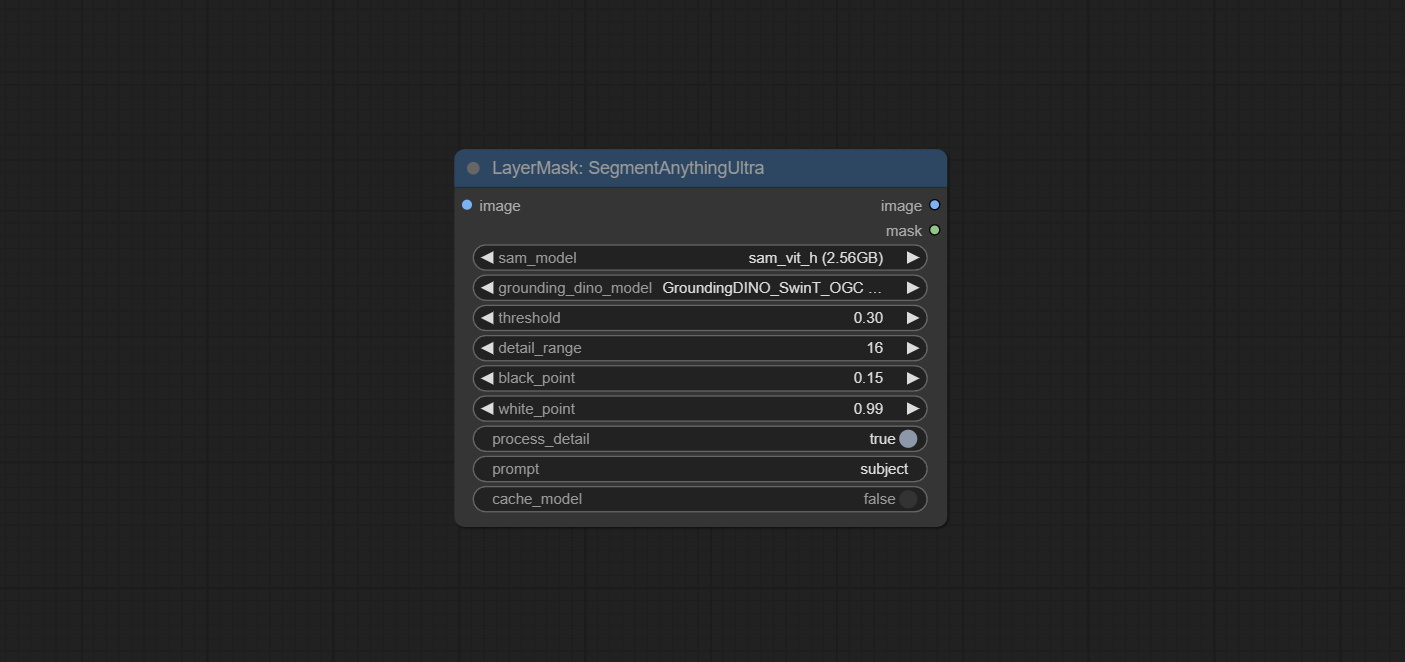

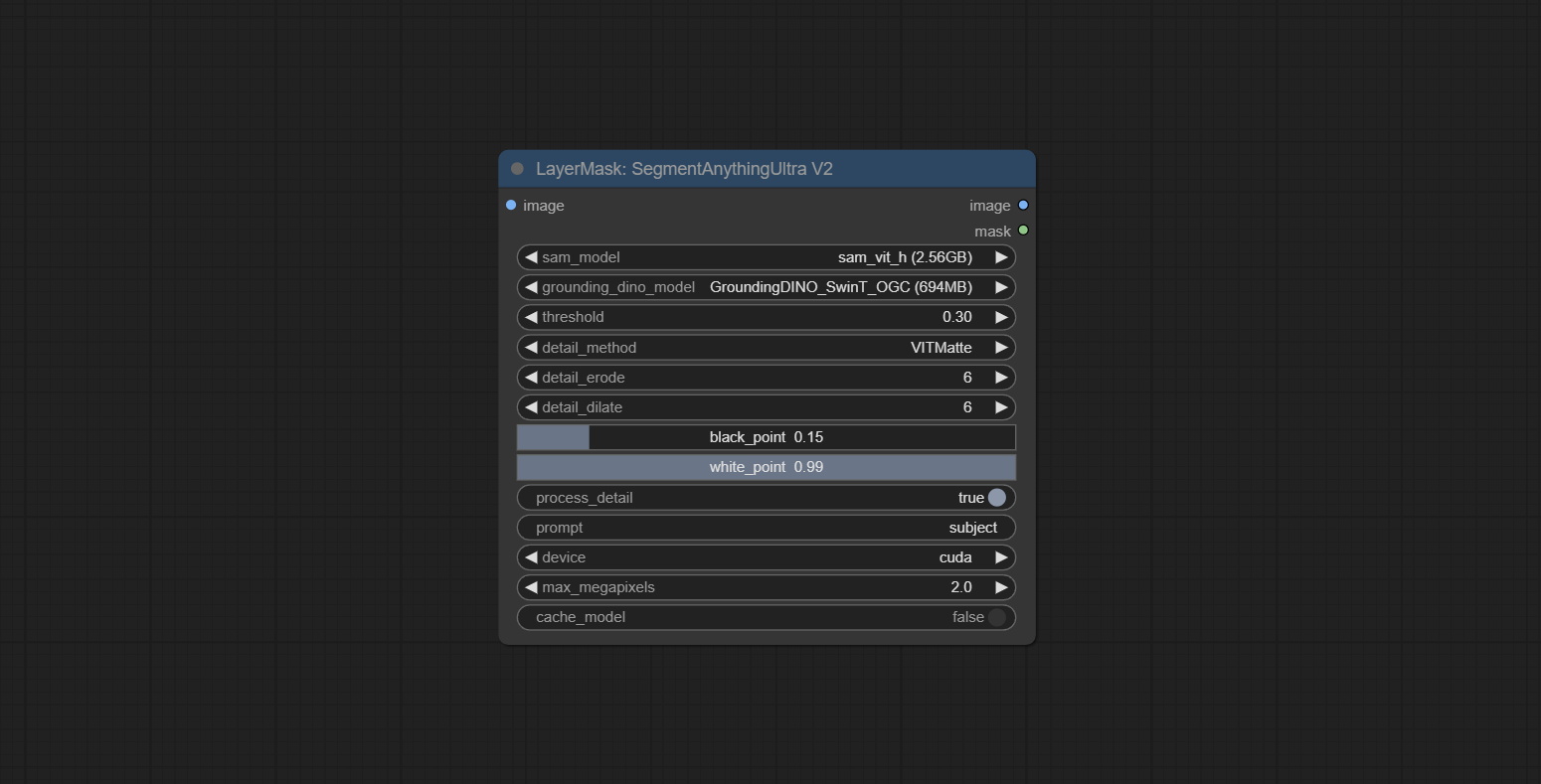

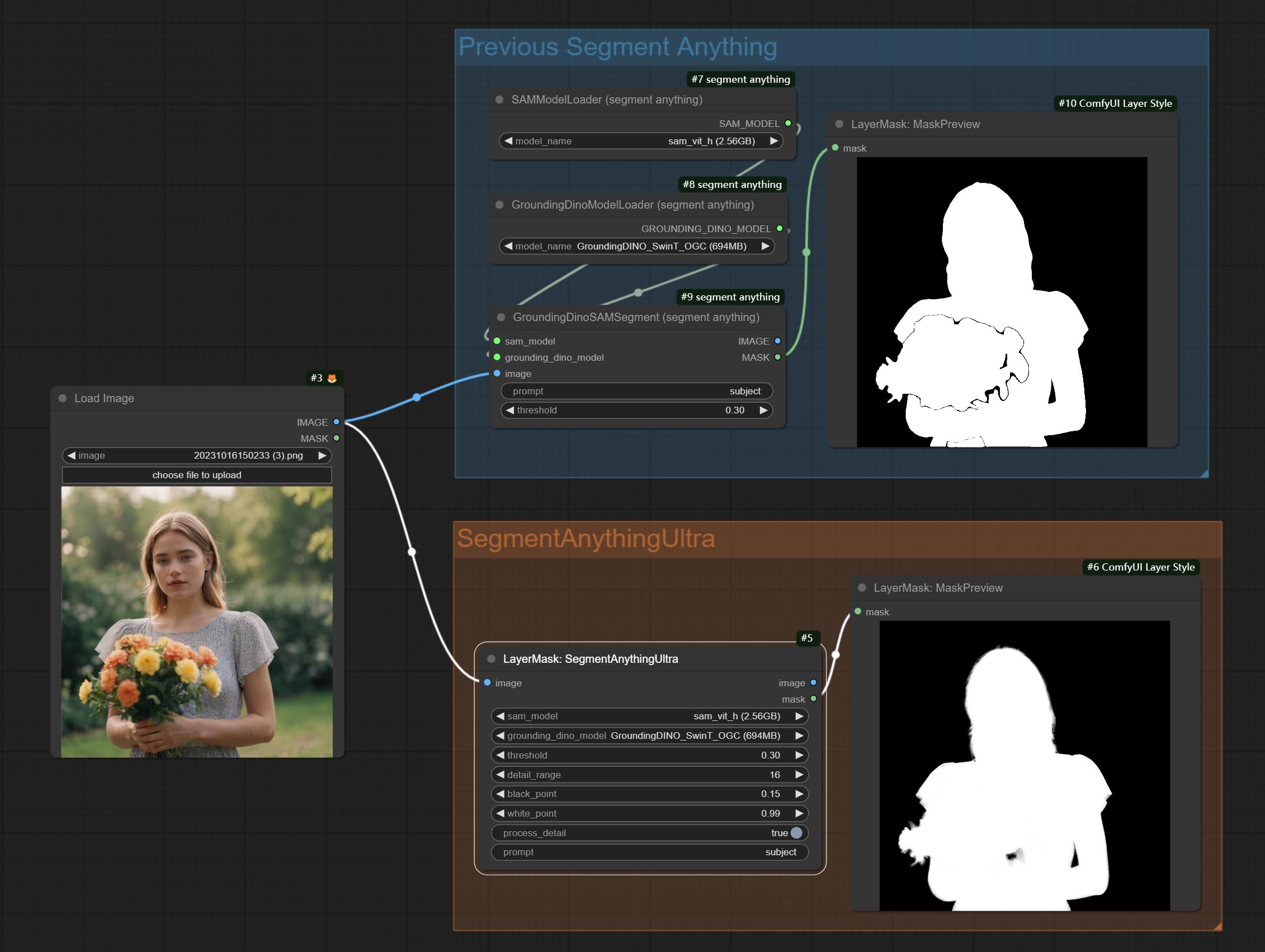

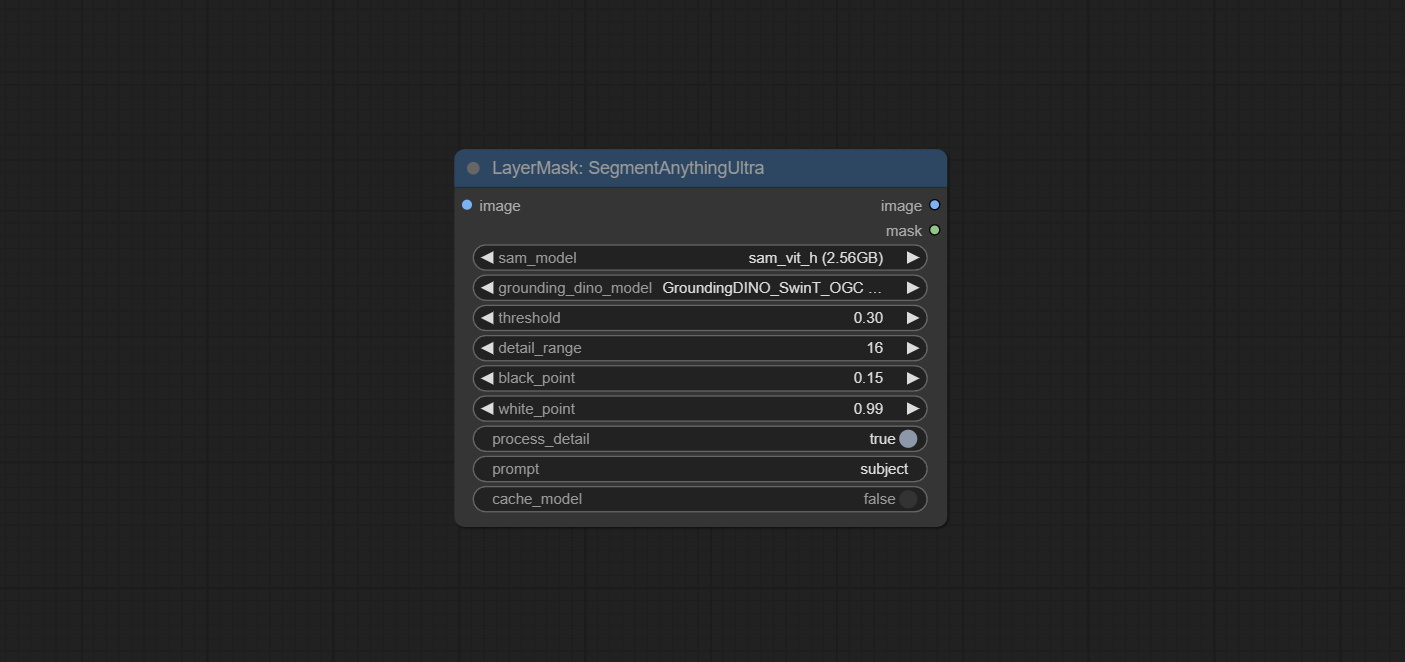

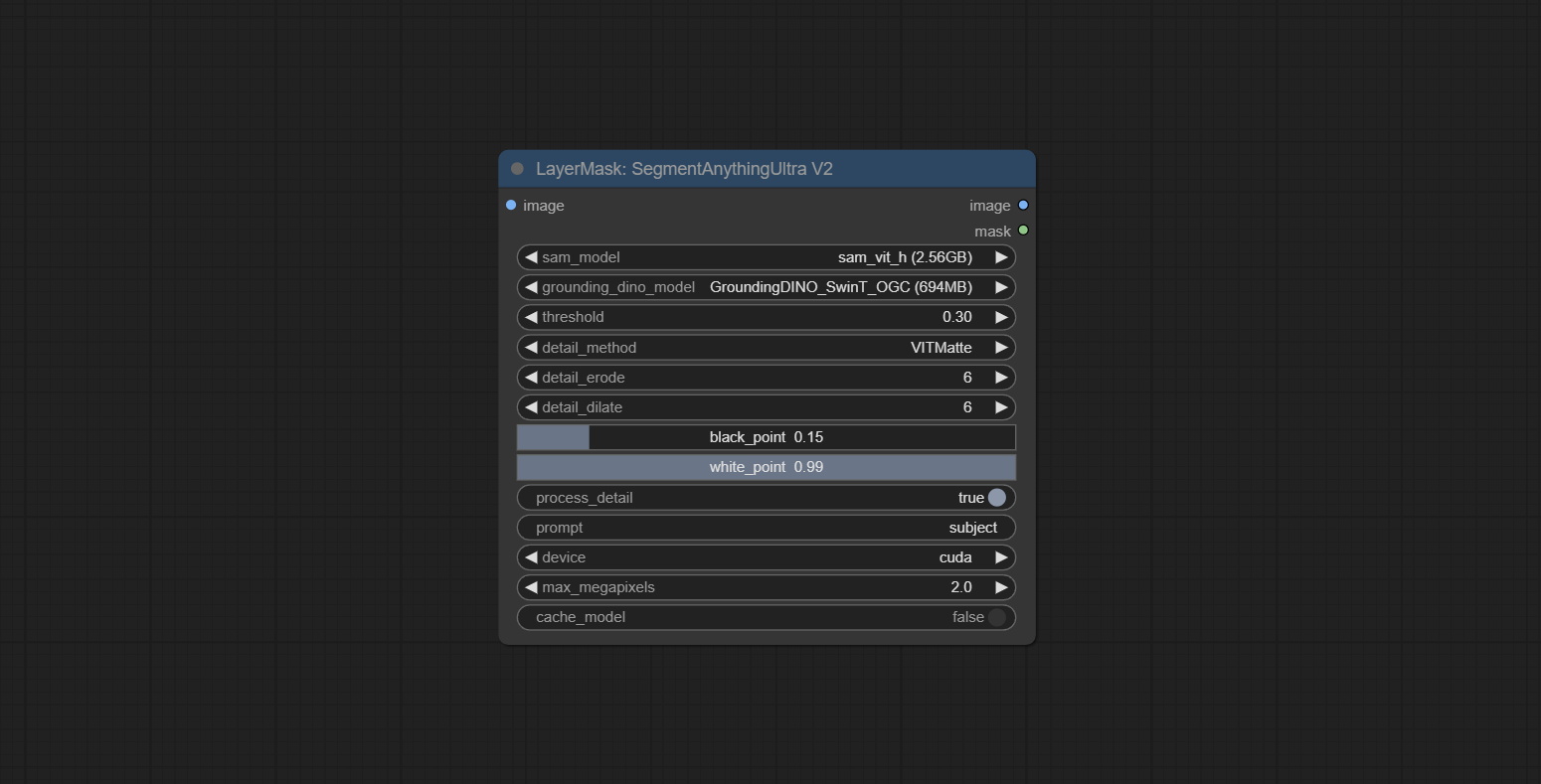

SegmentAnythingUltra and SegmentAnythingUltraV2 add the cache_model option, which makes VRAM usage easier to manage.

Because the LlamaVision node requires a high version of transformers, which affects the loading of some older third-party plugins, the LayerStyle plugin has lowered its default requirement to 4.43.2. If you need to run LlamaVision, upgrade to 4.45.0 or above yourself.

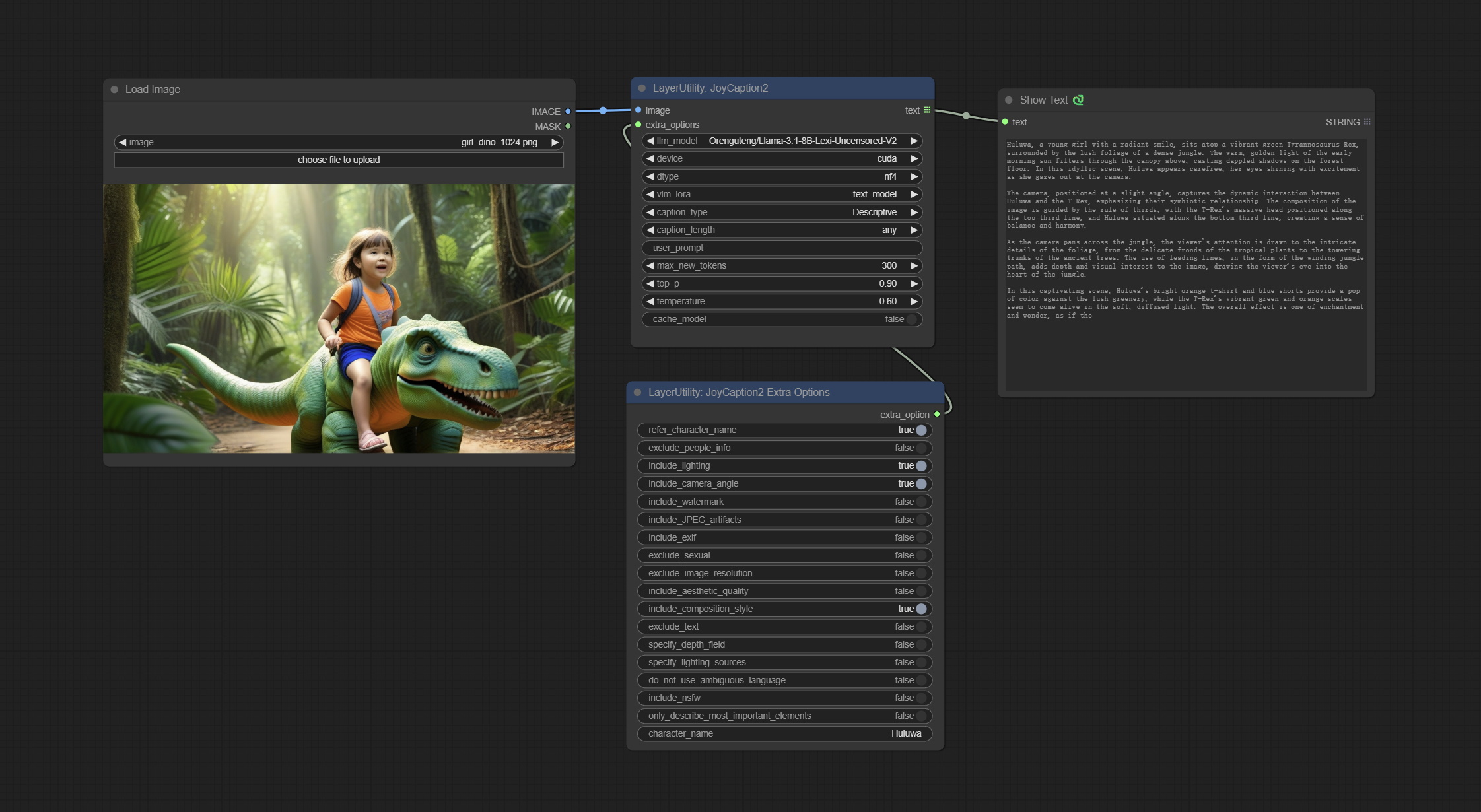

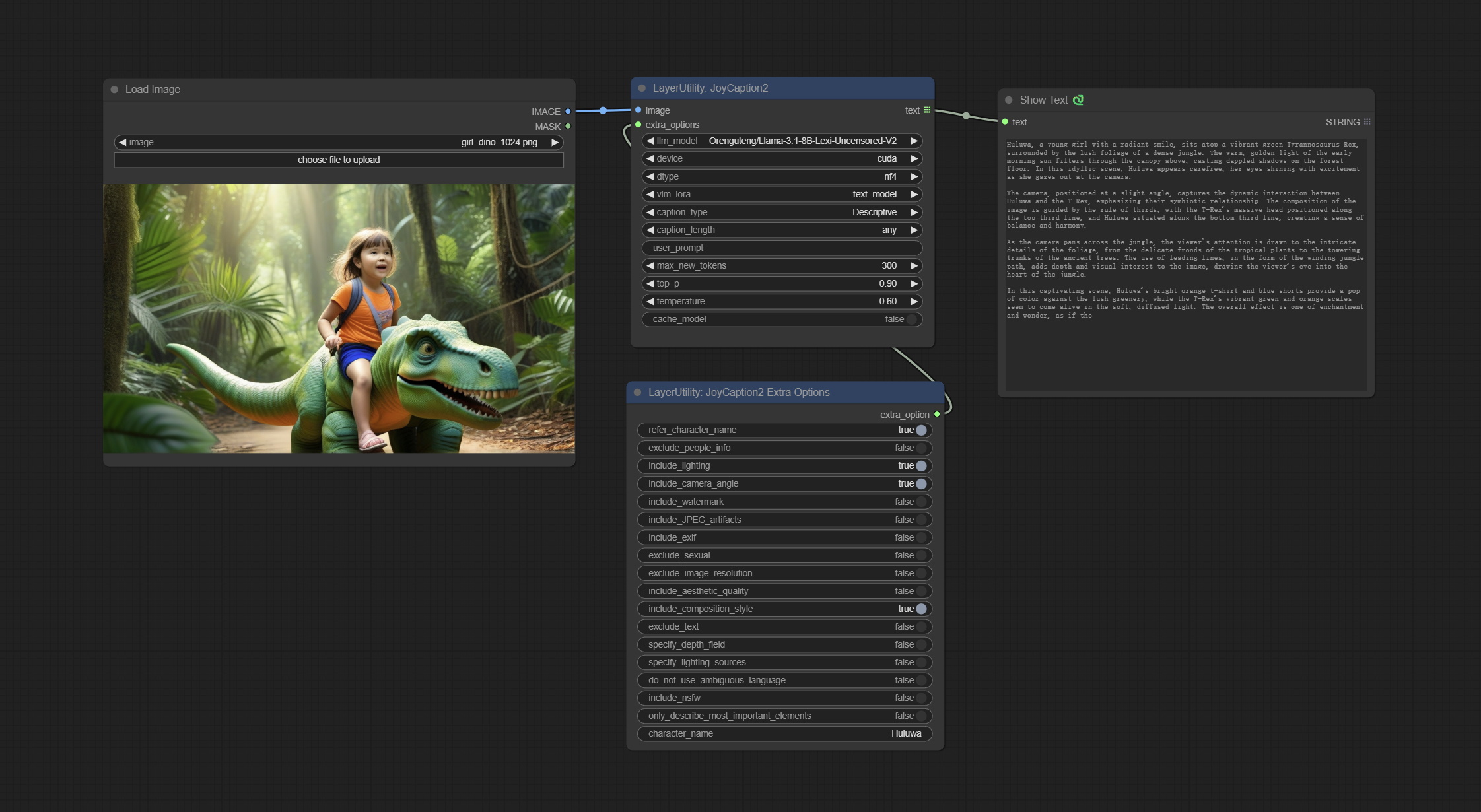

Commit the JoyCaption2 and JoyCaption2ExtraOptions nodes. New dependency packages need to be installed. These nodes use the JoyCaption-alpha-two model for local inference and can be used to generate prompt words. They are an implementation of https://huggingface.co/john6666/joy-caption-alpha-two-cli-mod in ComfyUI; thanks to the original author. Download the models from BaiduNetdisk and copy them to ComfyUI/models/LLM and ComfyUI/models/clip. Download the cgrkzexw-599808 folder from BaiduNetdisk or Huggingface/John6666 and copy it to ComfyUI/models/Joy_caption.

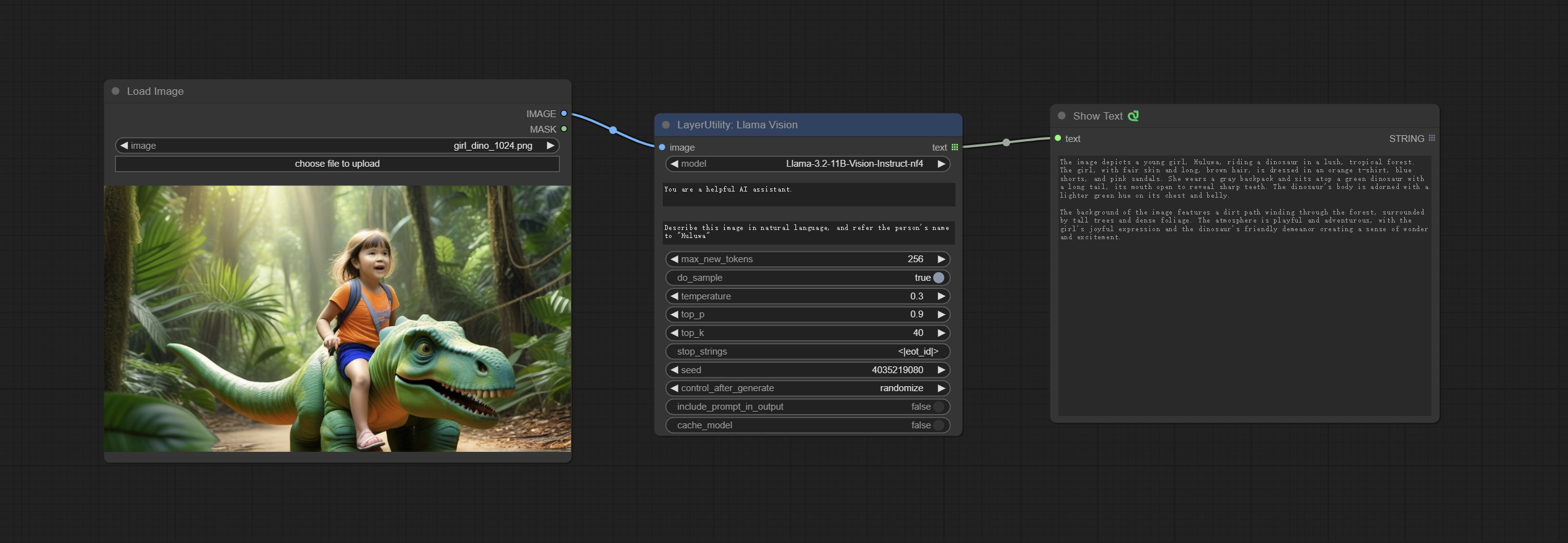

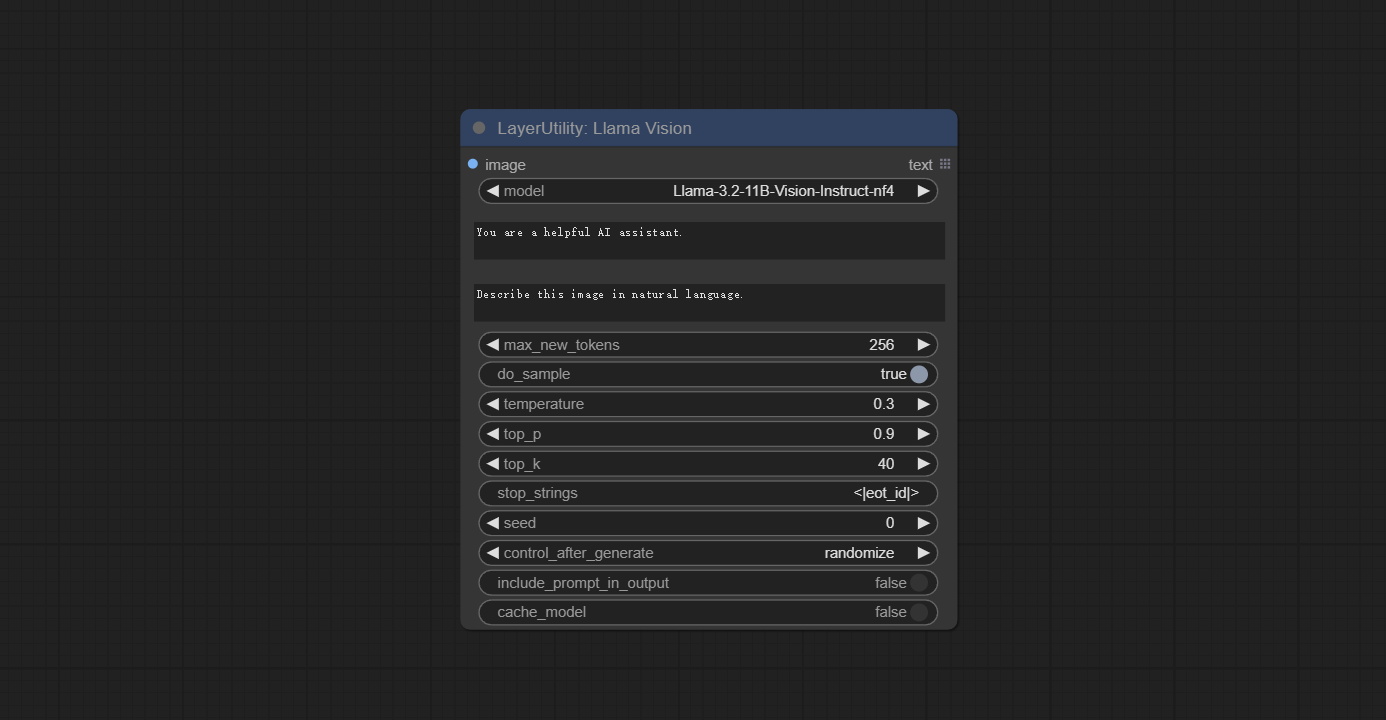

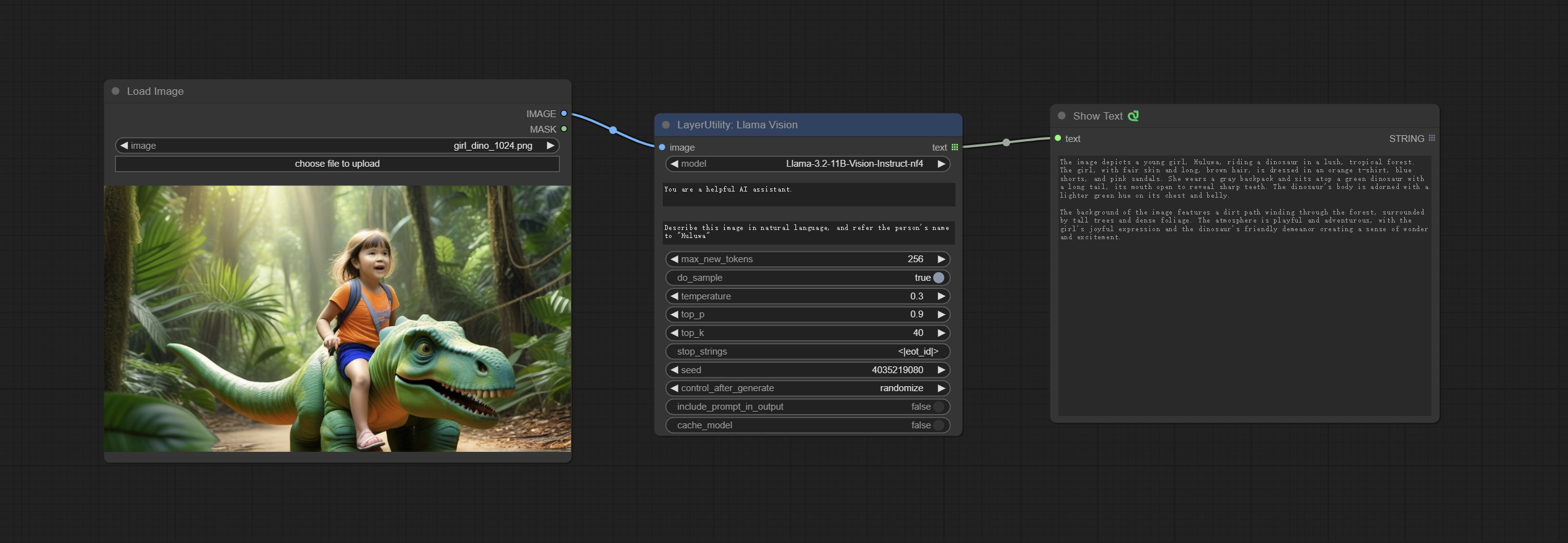

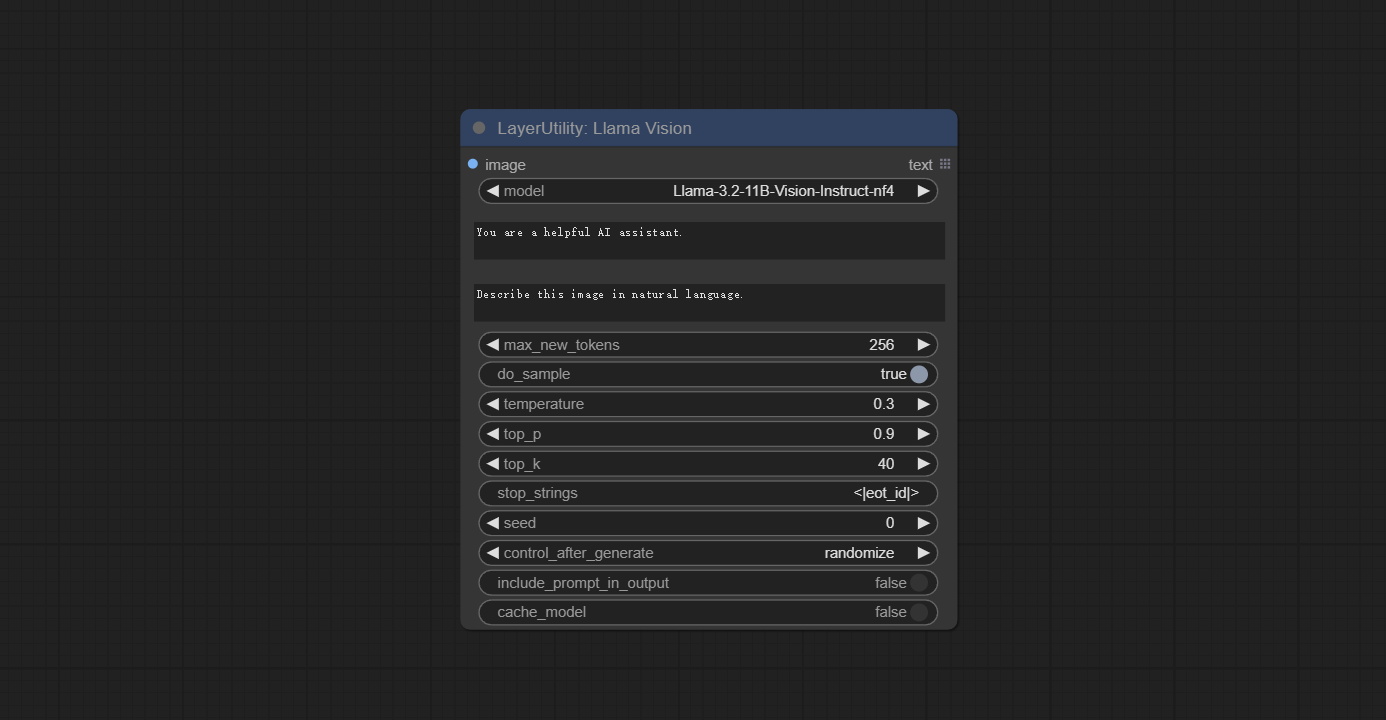

Commit the LlamaVision node, which uses the Llama 3.2 vision model for local inference. It can be used to generate prompt words. Part of this node's code comes from ComfyUI-PixtralLlamaMolmoVision; thanks to the original author. To use this node, transformers must be upgraded to 4.45.0 or higher. Download the model from BaiduNetdisk or Huggingface/SeanScripts and copy it to ComfyUI/models/LLM.

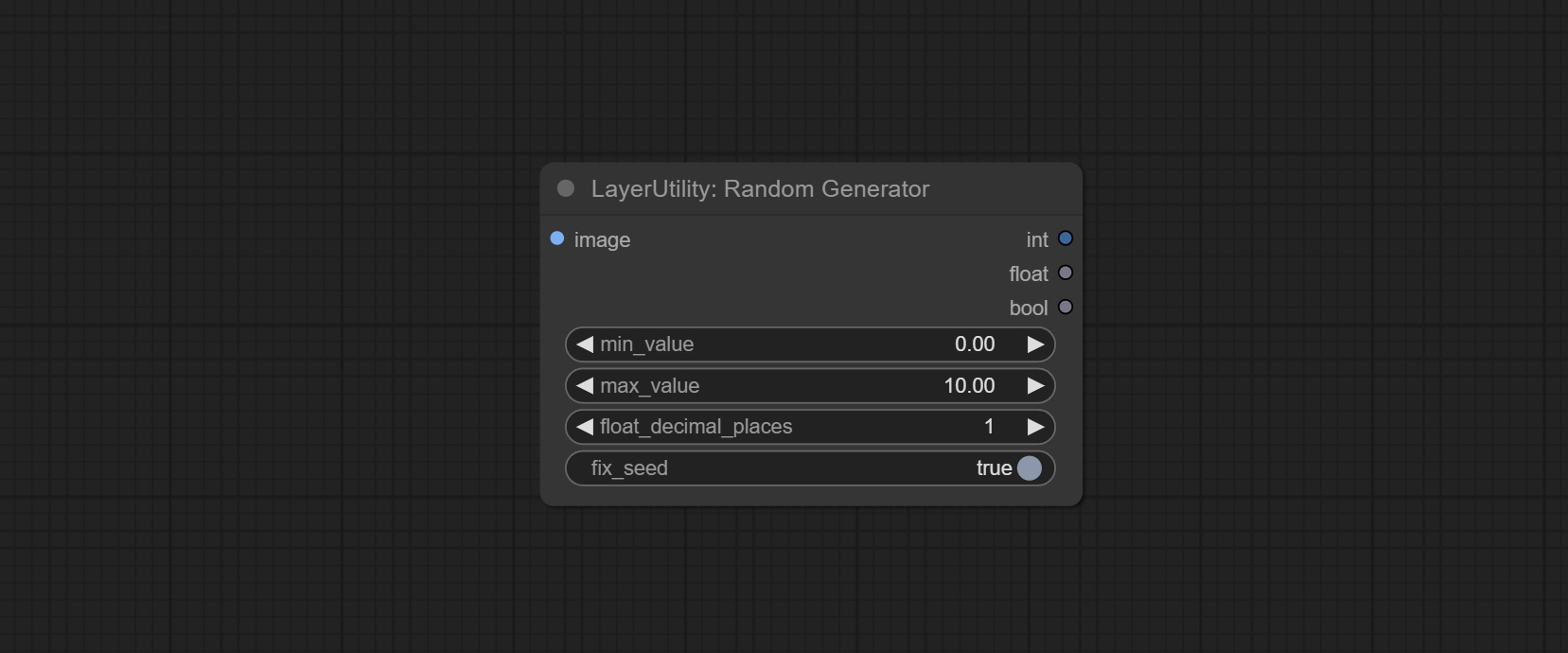

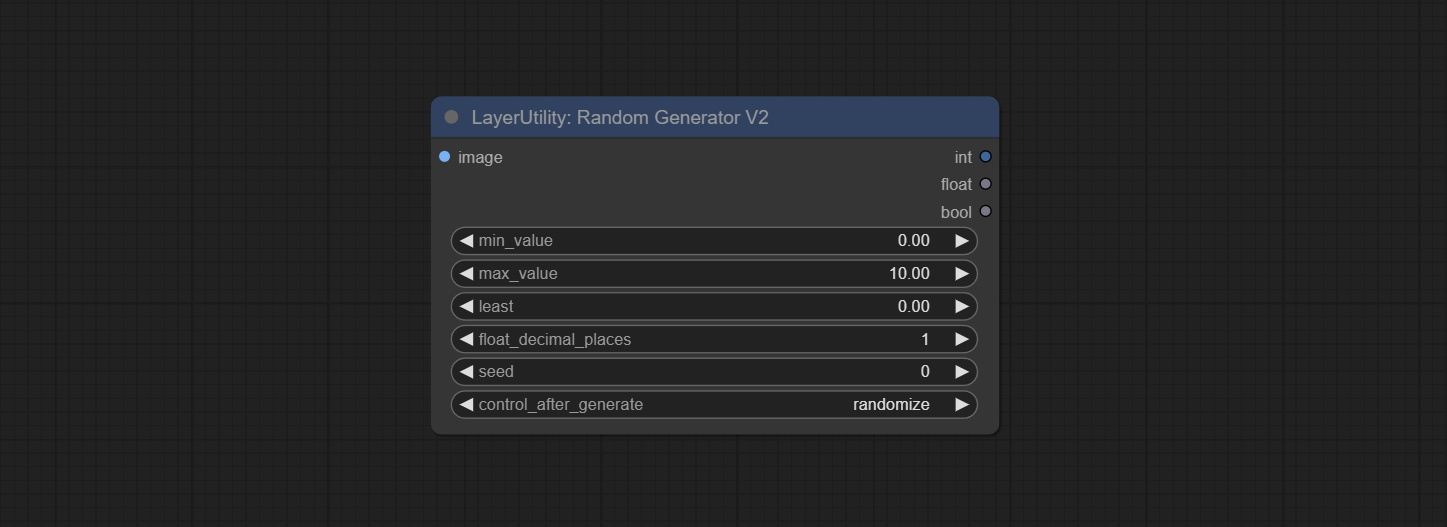

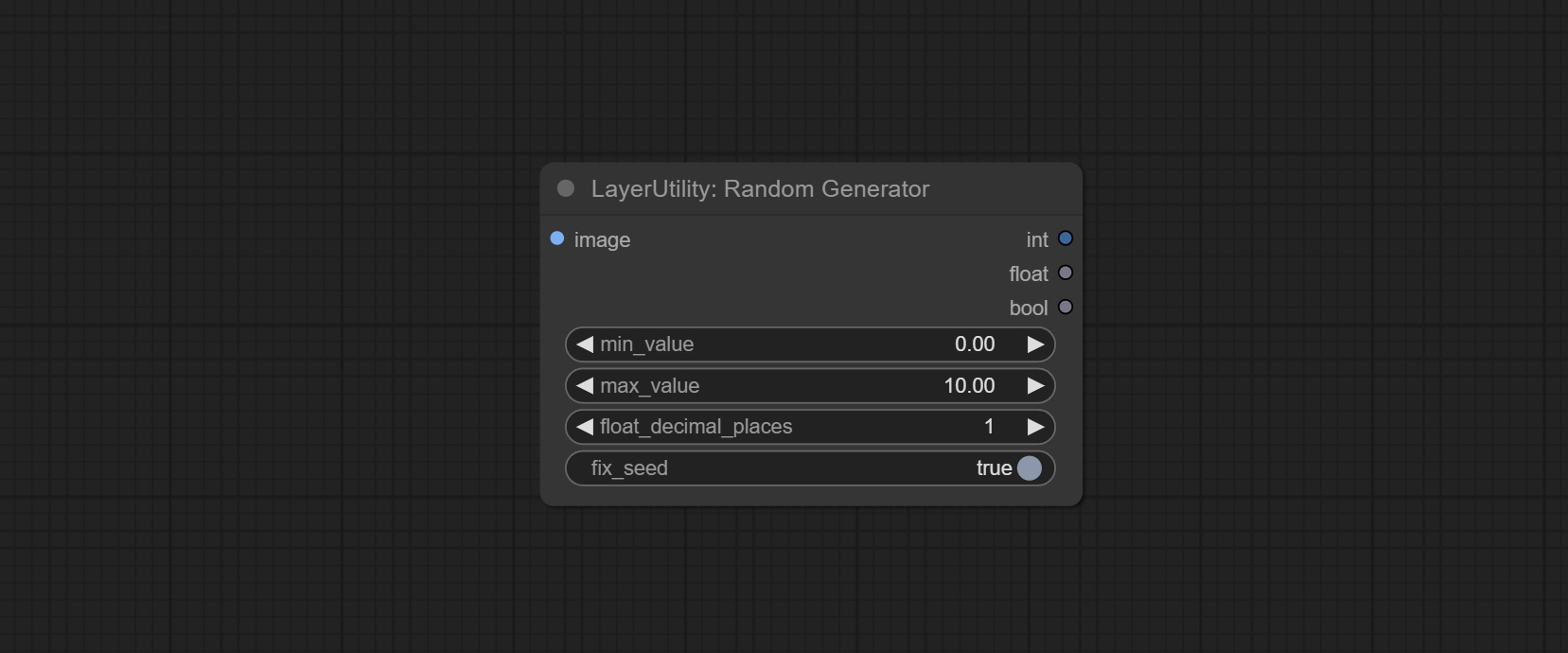

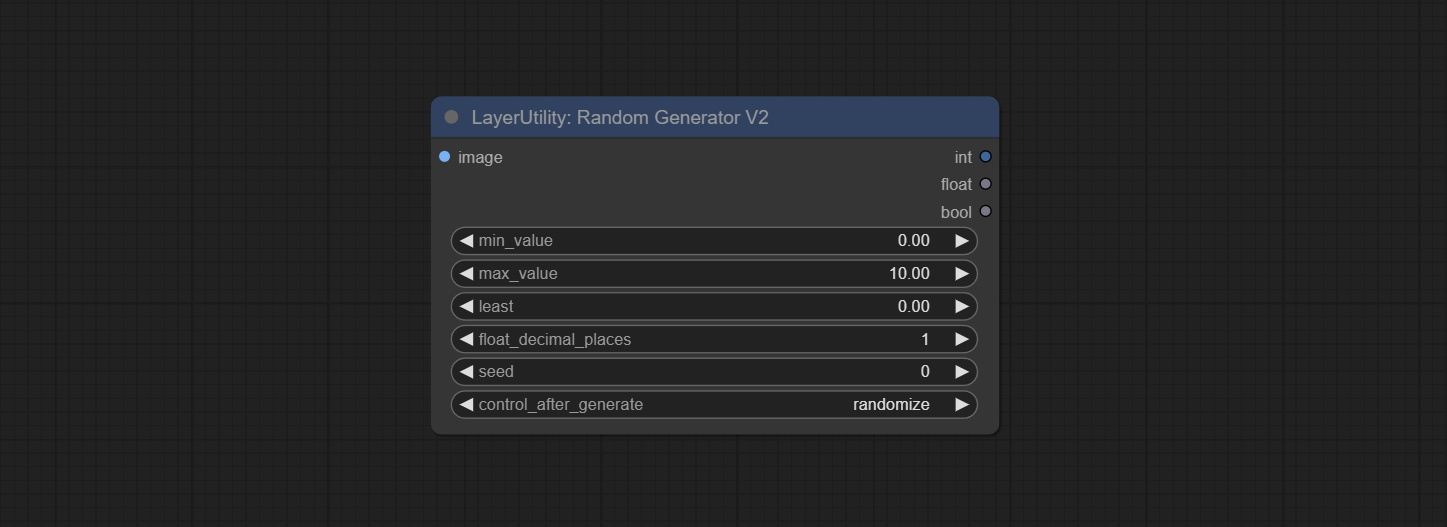

Commit the RandomGeneratorV2 node, which adds minimum random range and seed options.

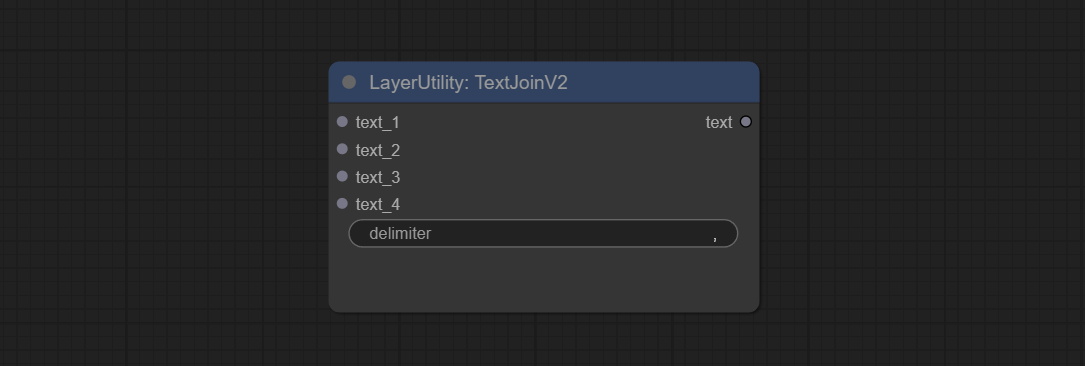

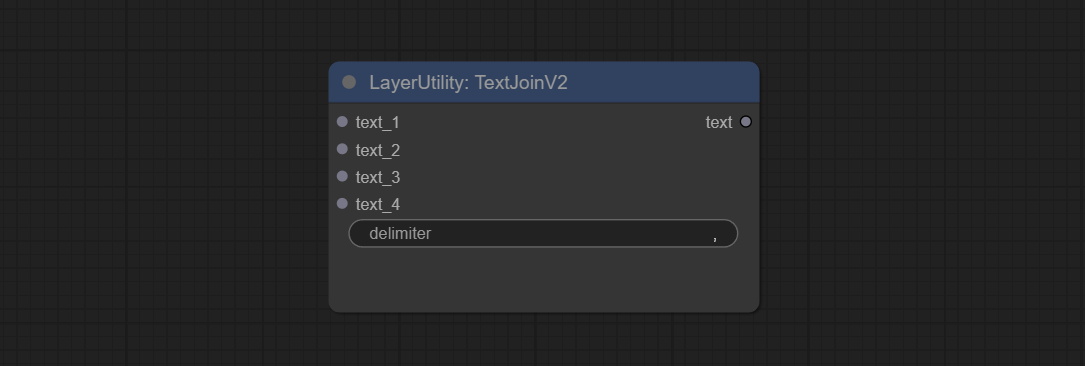

Commit the TextJoinV2 node, which adds a delimiter option on top of TextJoin.

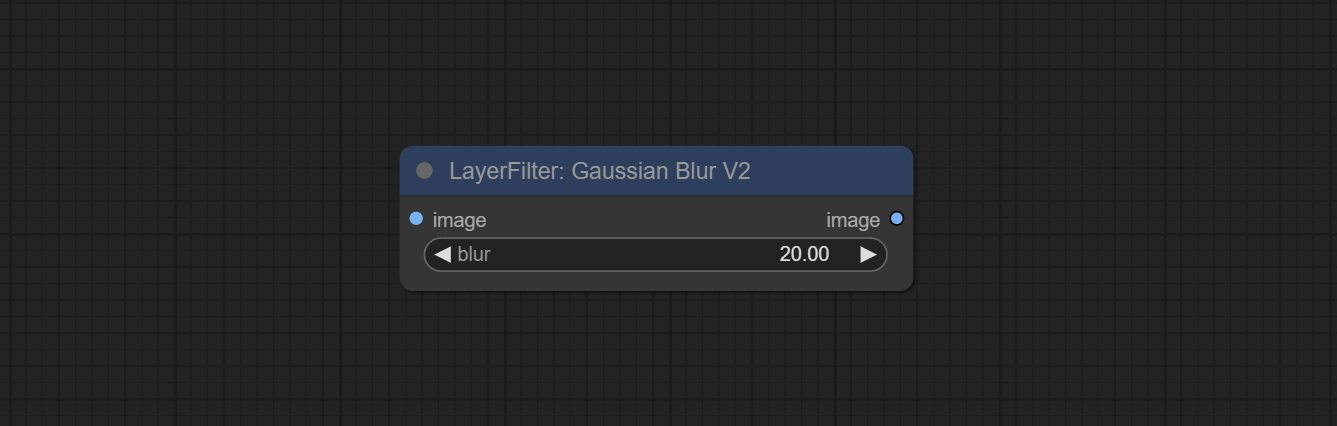

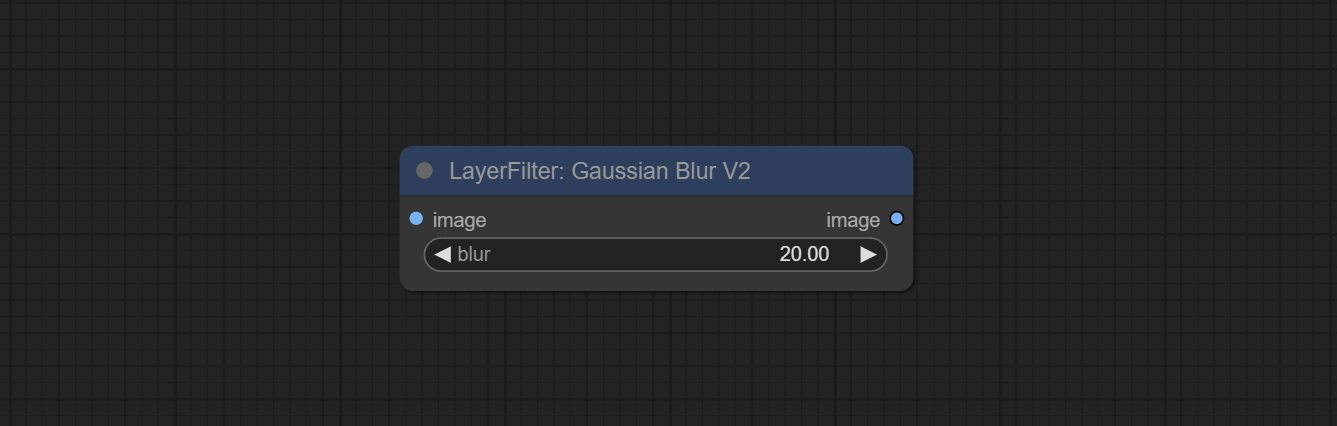

Commit the GaussianBlurV2 node; parameter precision has been improved to 0.01.

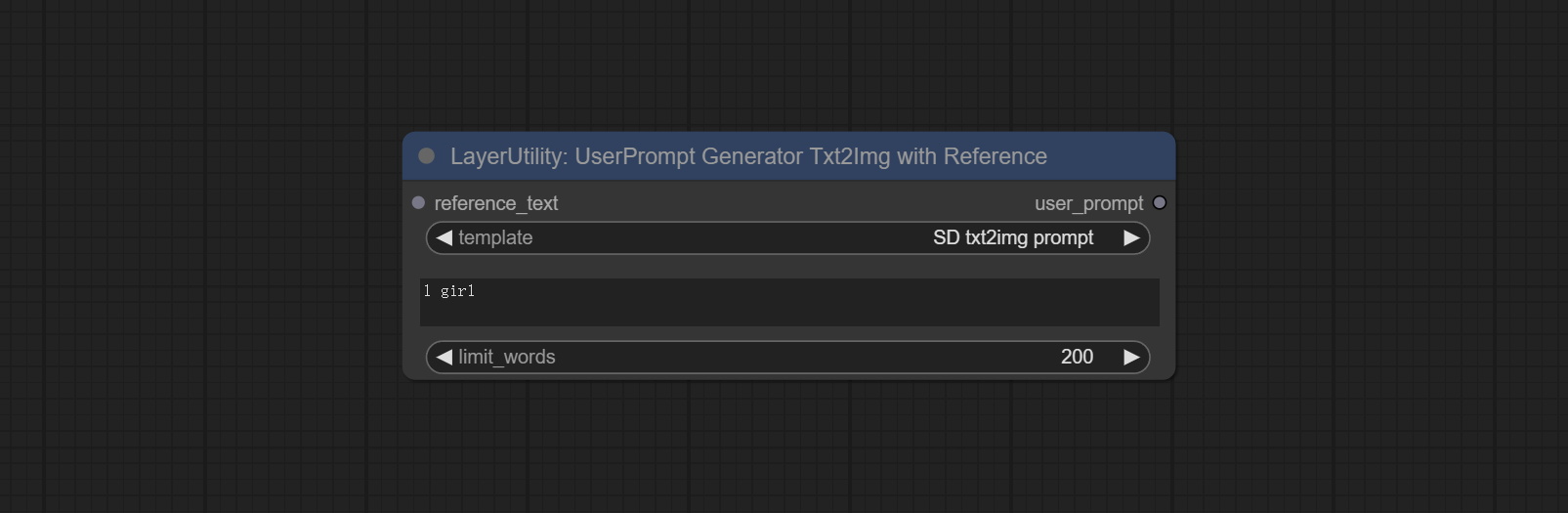

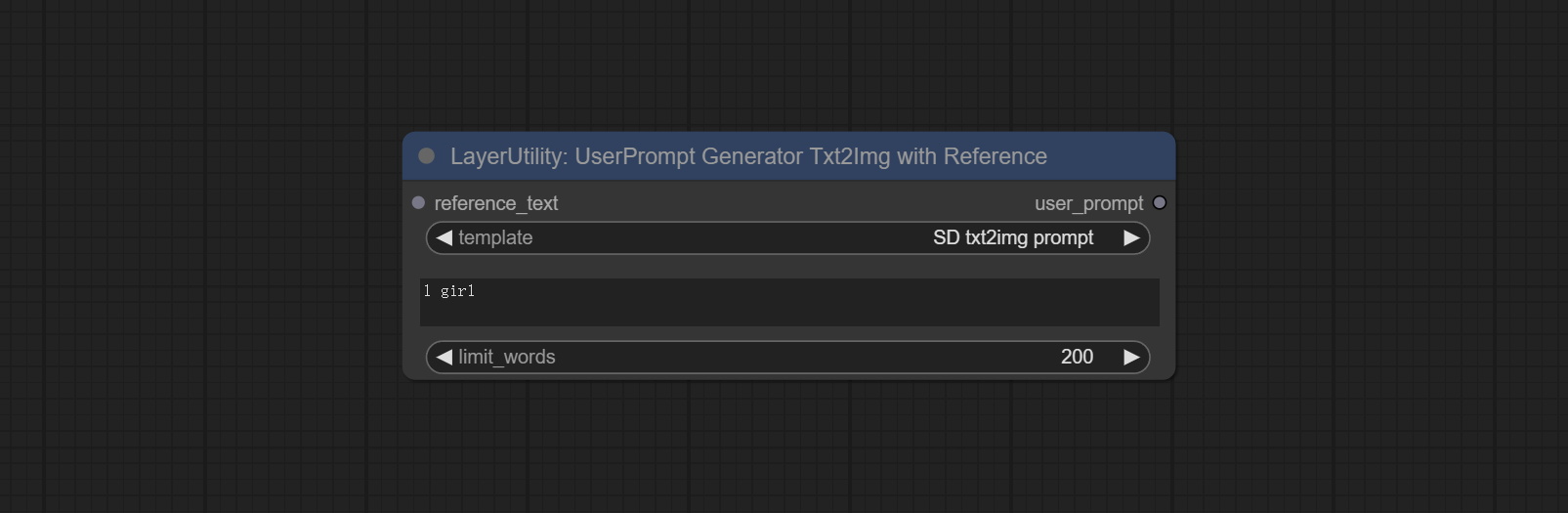

Commit the UserPromptGeneratorTxtImgWithReference node.

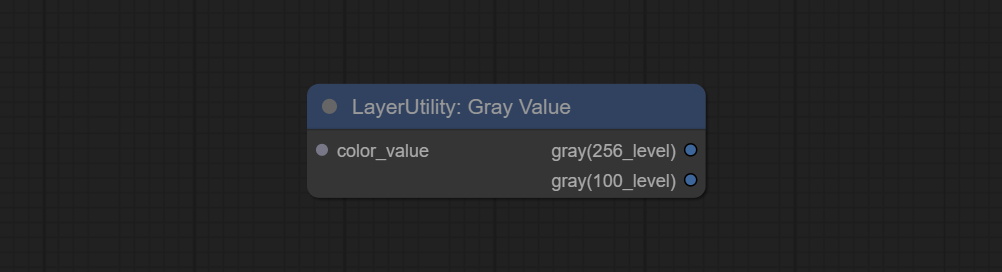

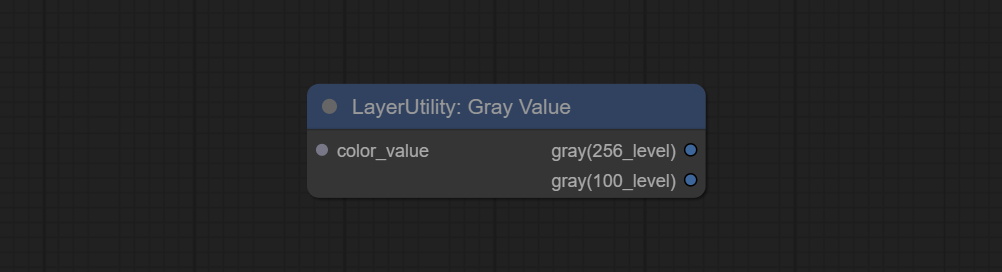

Commit the GrayValue node, which outputs the grayscale value corresponding to an RGB color value.
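The text does not say which formula the node uses. As an illustration only, the sketch below assumes the common ITU-R BT.601 luma weights (0.299, 0.587, 0.114); the function name `gray_value` is hypothetical and the plugin may compute the value differently.

```python
def gray_value(r, g, b):
    """Map an 8-bit RGB triple to one 8-bit gray level (assumed BT.601 weights)."""
    for channel in (r, g, b):
        if not 0 <= channel <= 255:
            raise ValueError("channels must be in the range 0..255")
    # Weighted sum: green contributes most, blue least, matching perceived brightness.
    return round(0.299 * r + 0.587 * g + 0.114 * b)
```

With these weights pure red maps to a fairly dark gray, while pure green maps to a much lighter one.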

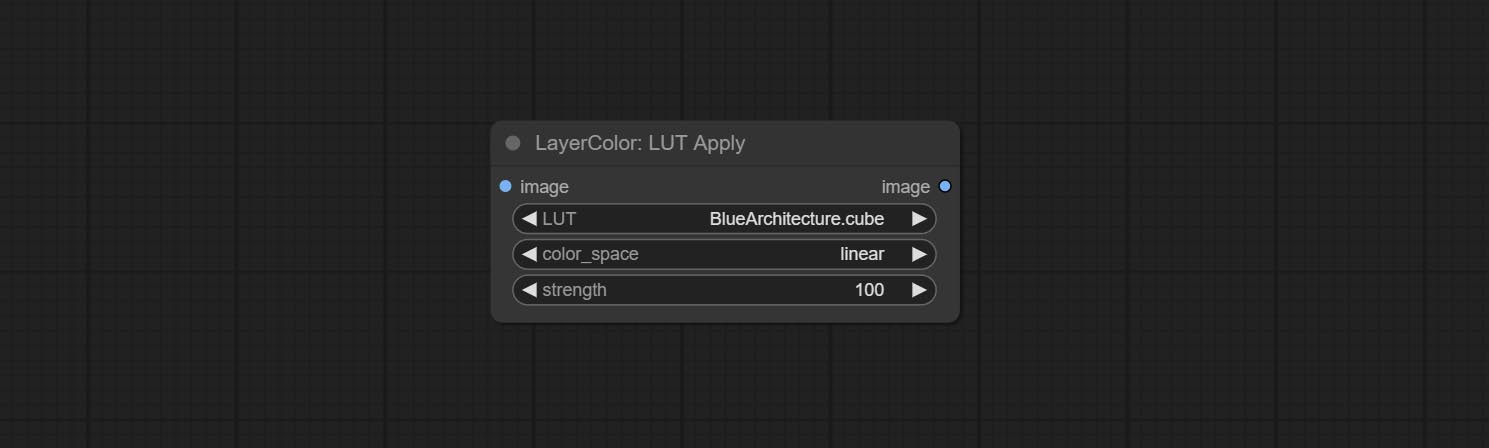

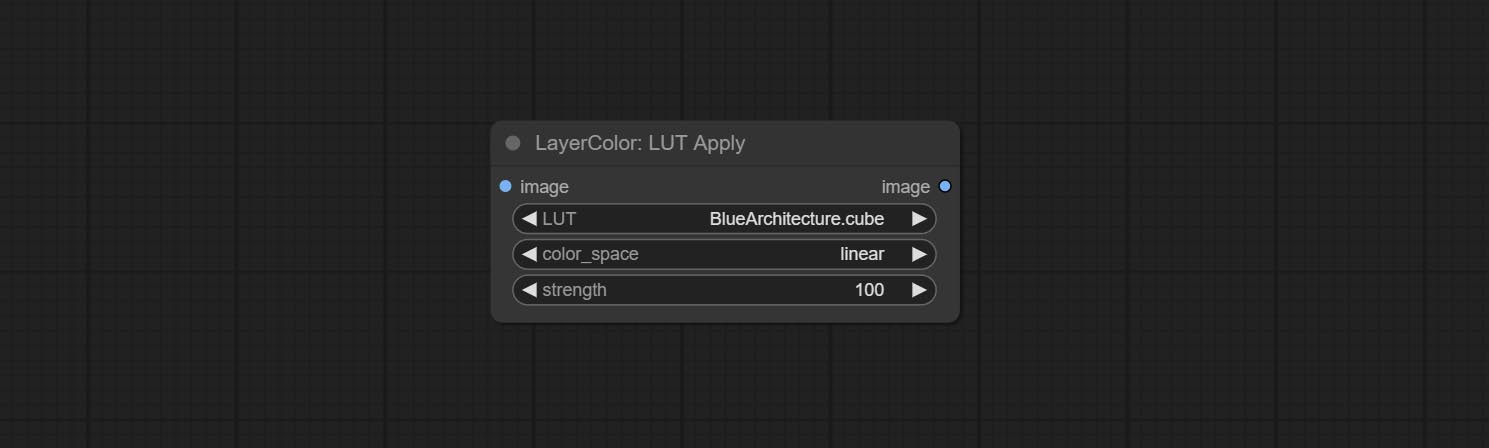

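The LUT Apply, TextImageV2, TextImage, and SimpleTextImage nodes support defining multiple folders in resource-dir.ini, separated by commas, semicolons, or spaces. They also support refreshing for real-time updates.

The LUT Apply, TextImageV2, TextImage, and SimpleTextImage nodes support defining multiple font and LUT directories, and support refreshing for real-time updates.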
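To make the separator rule concrete, here is a minimal sketch of how a multi-folder value could be split. It is not the plugin's parser: the function name `split_resource_dirs` is hypothetical, and it assumes the folder paths themselves contain no spaces, which follows from spaces being listed as a separator.

```python
import re


def split_resource_dirs(value):
    """Split a folder list on commas, semicolons, or whitespace, dropping empty items."""
    return [part for part in re.split(r"[,;\s]+", value.strip()) if part]
```

For example, `split_resource_dirs("C:/fonts, D:/fonts;E:/luts")` yields three separate folder entries.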

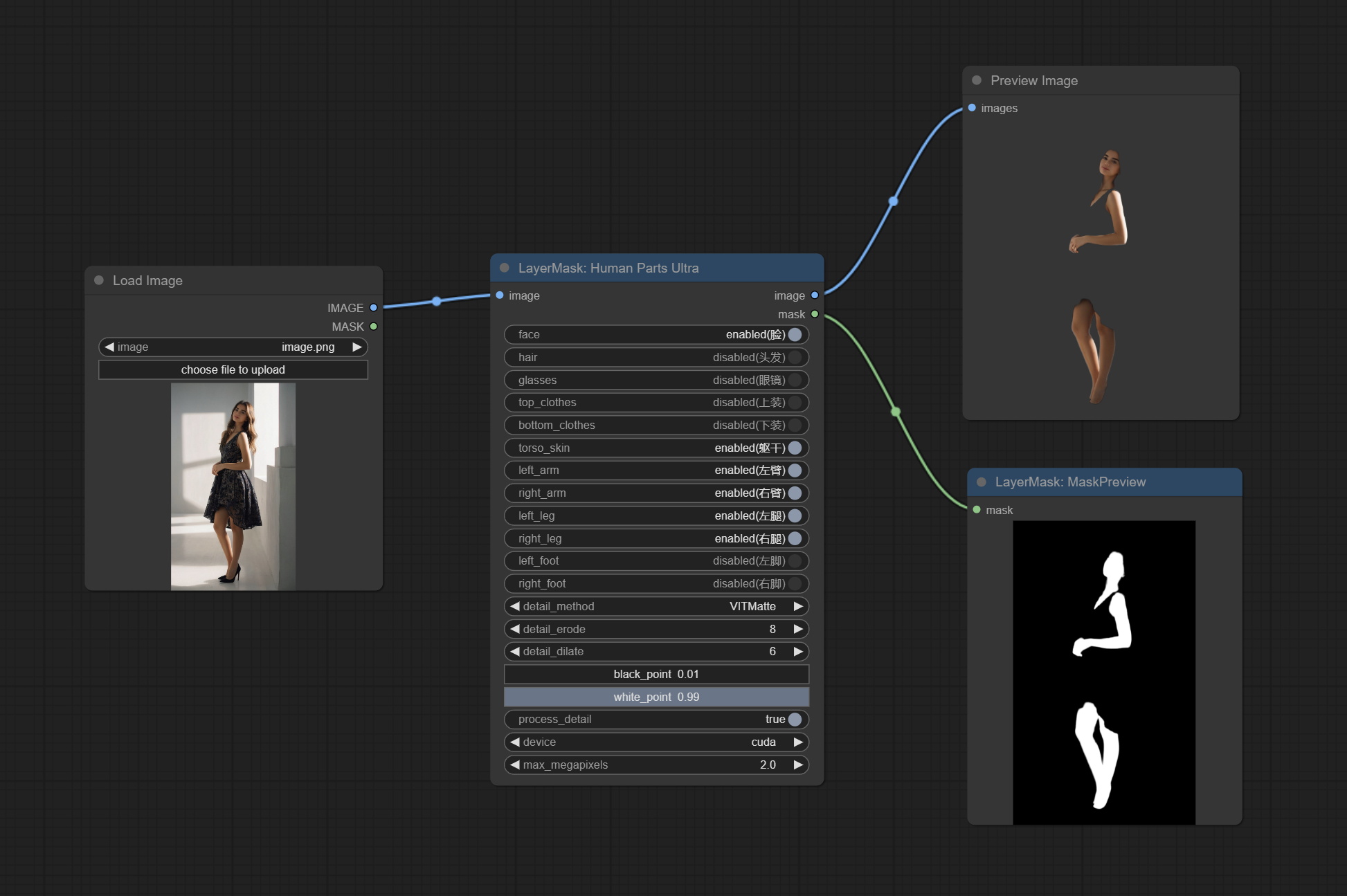

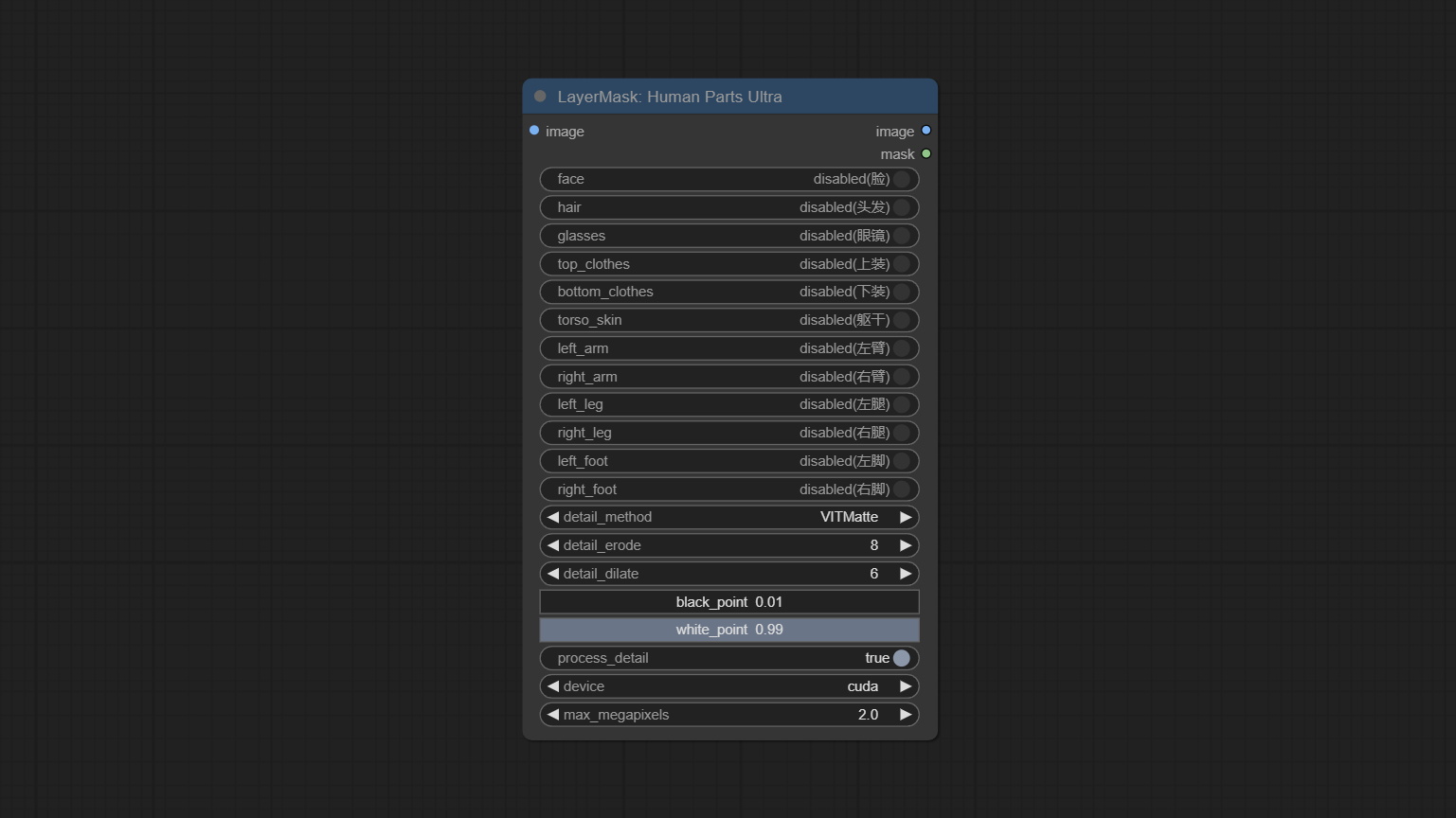

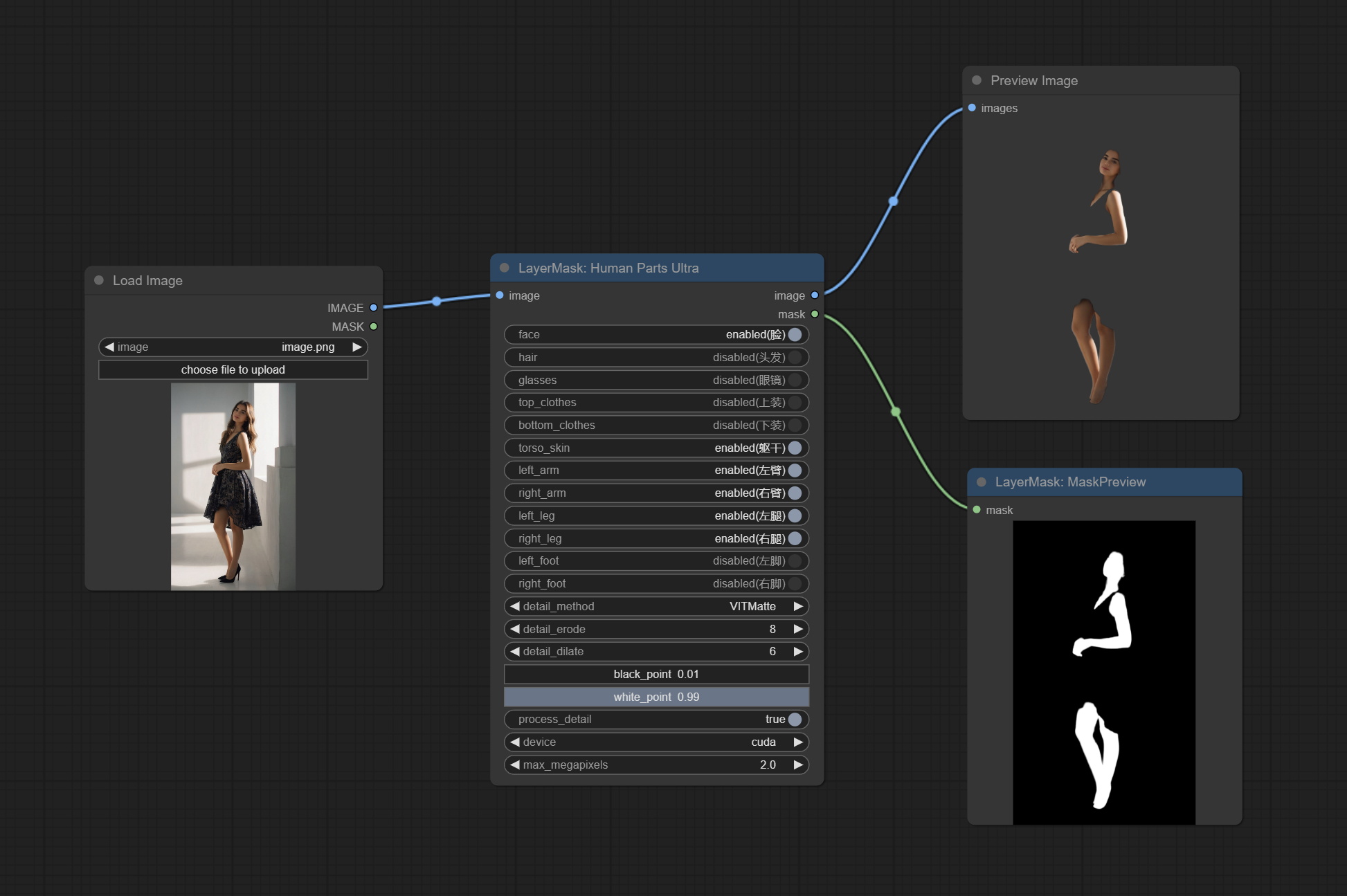

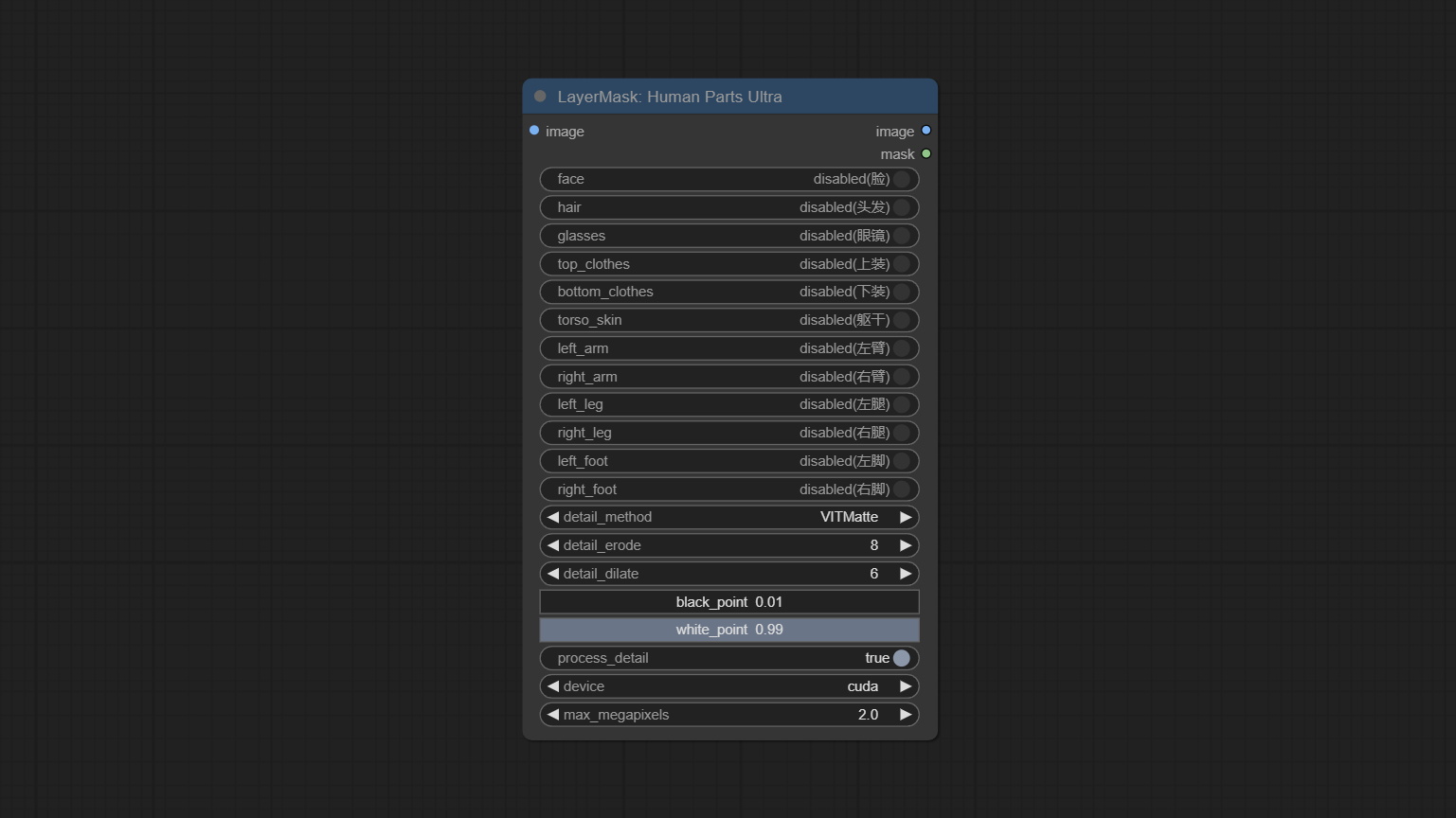

Commit the HumanPartsUltra node, used to generate masks of human body parts. It is based on the wrapper from metal3d/ComfyUI_Human_Parts; thanks to the original author. Download the model file from BaiduNetdisk or Huggingface and copy it to the ComfyUI\models\onnx\human-parts folder.

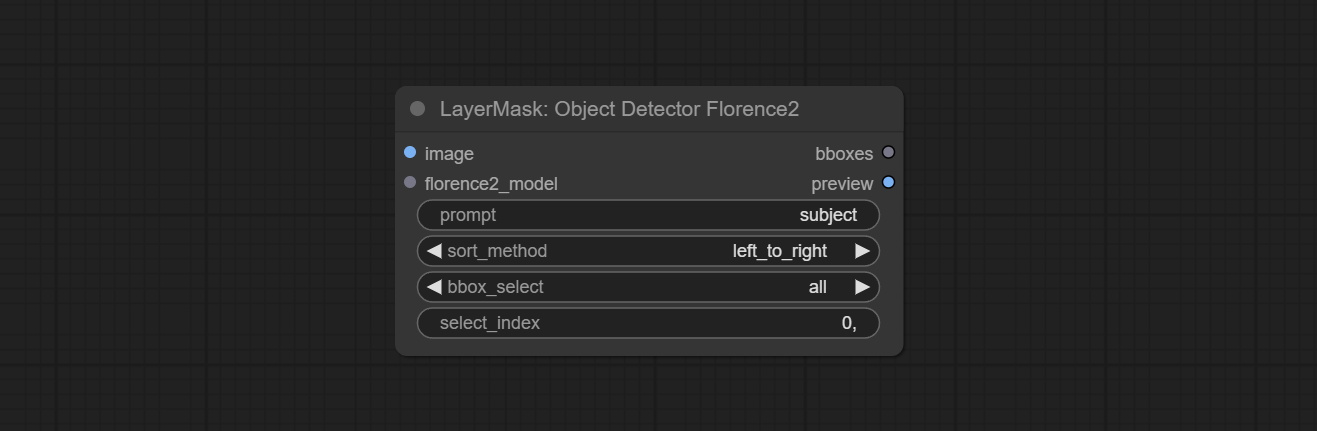

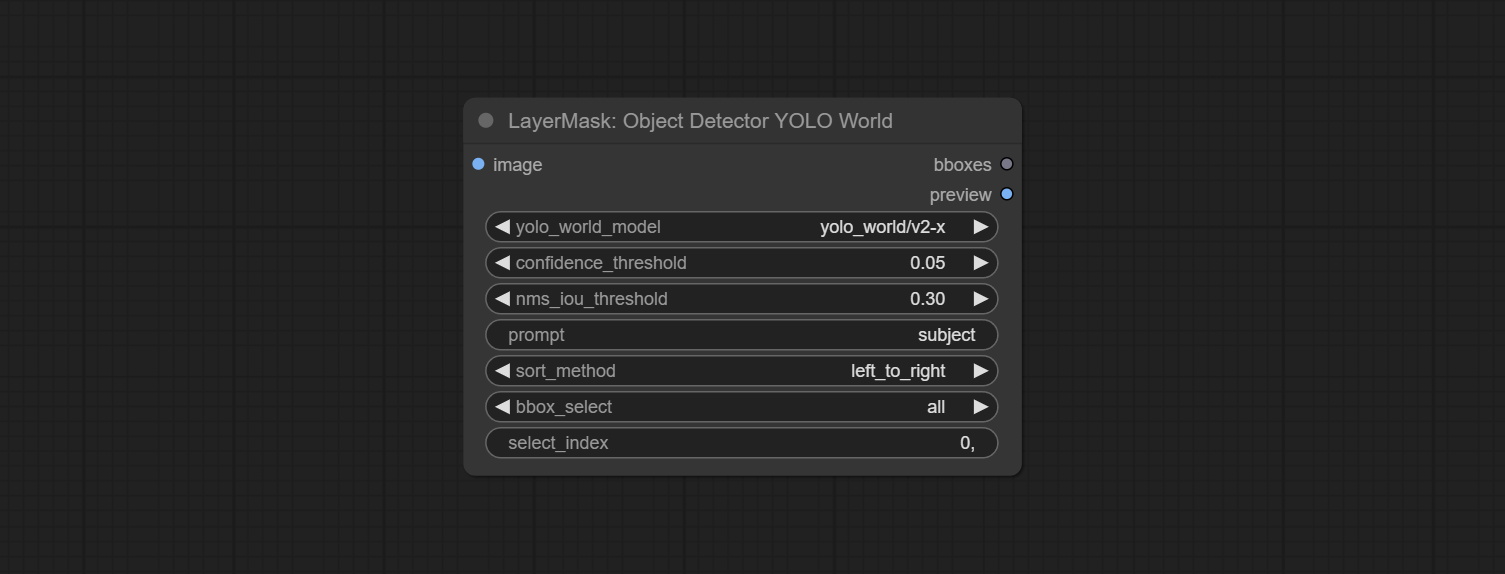

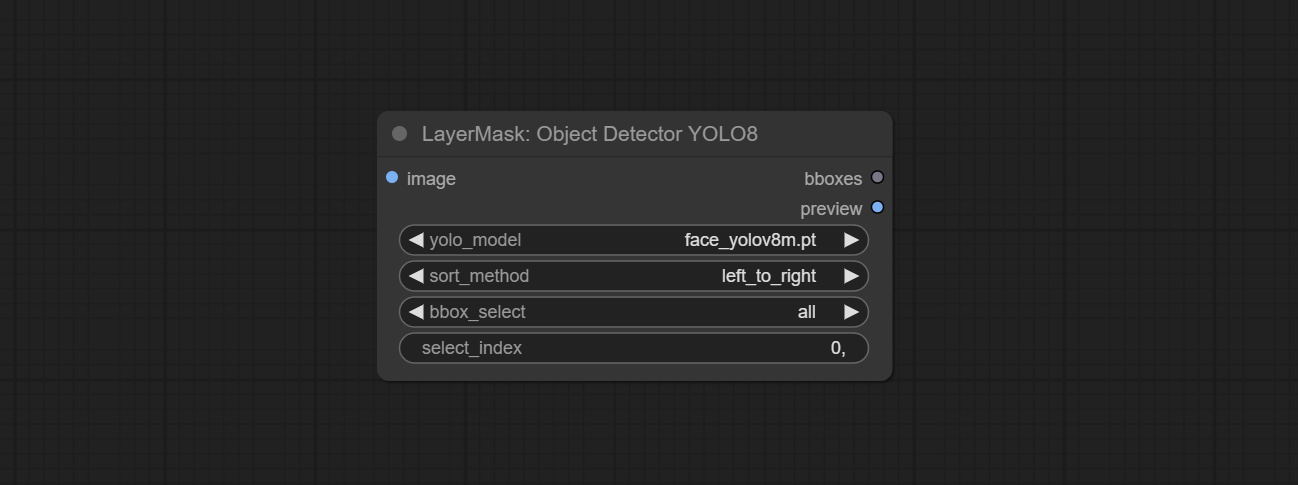

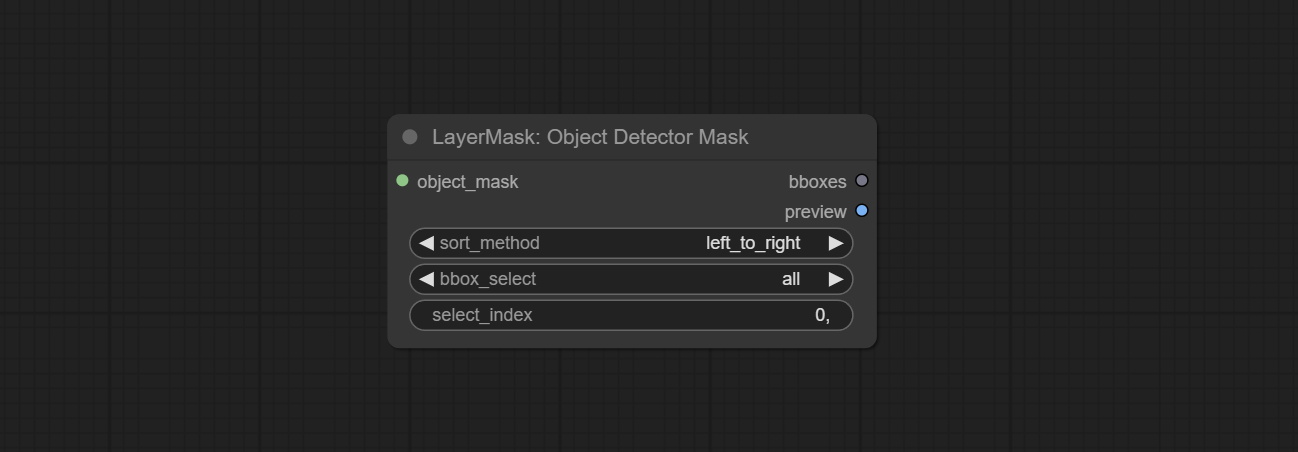

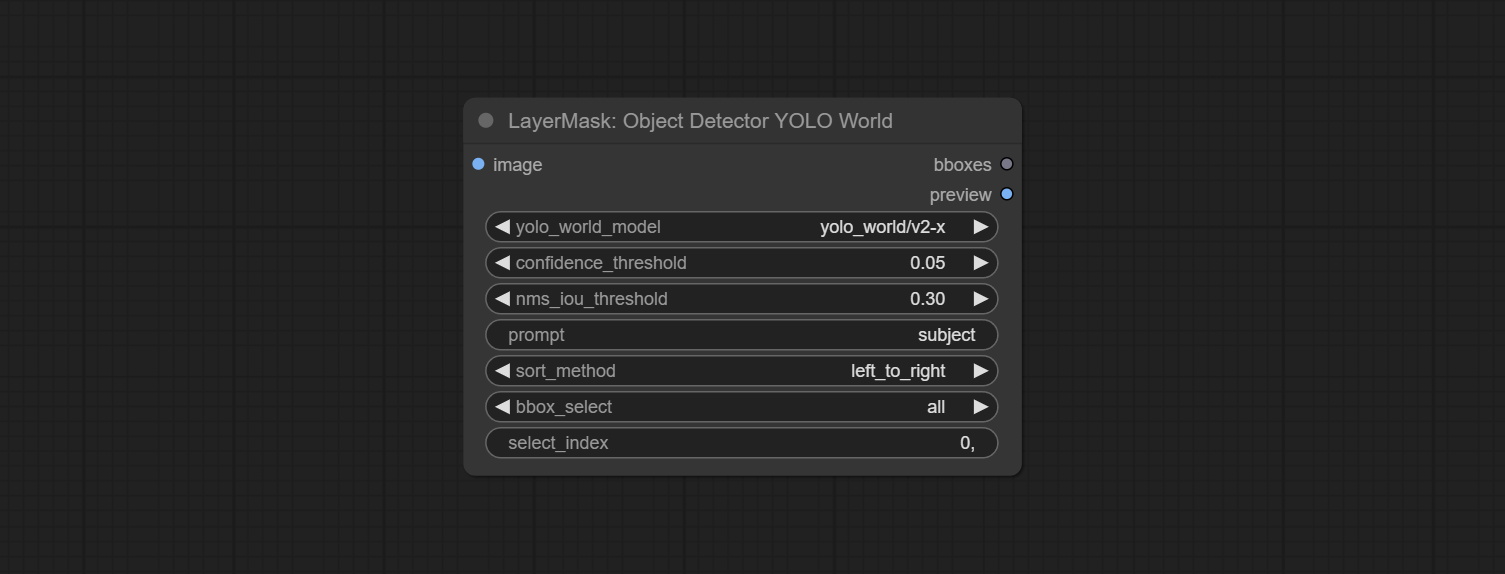

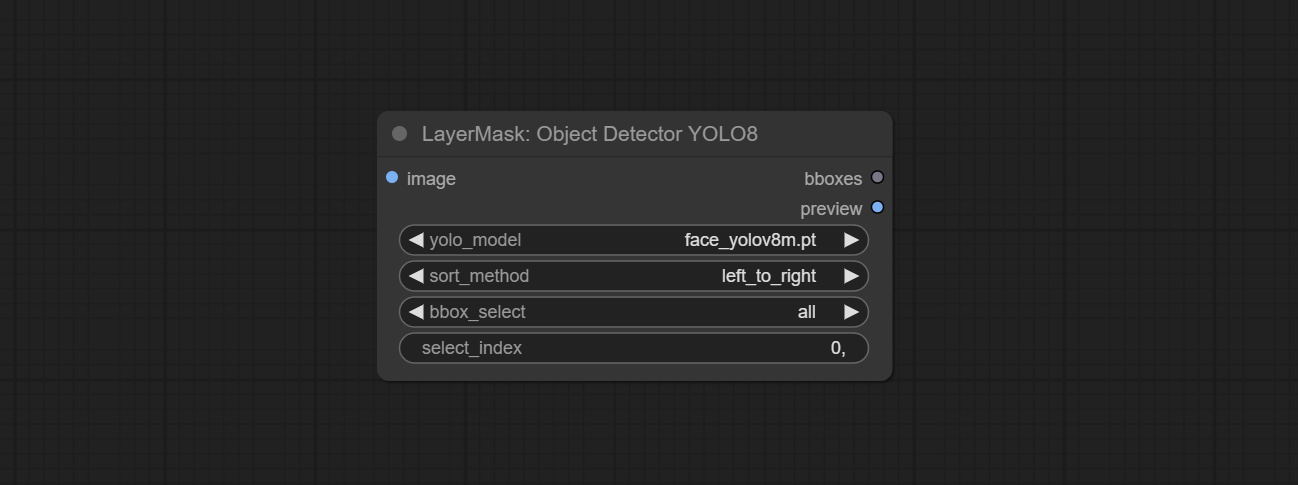

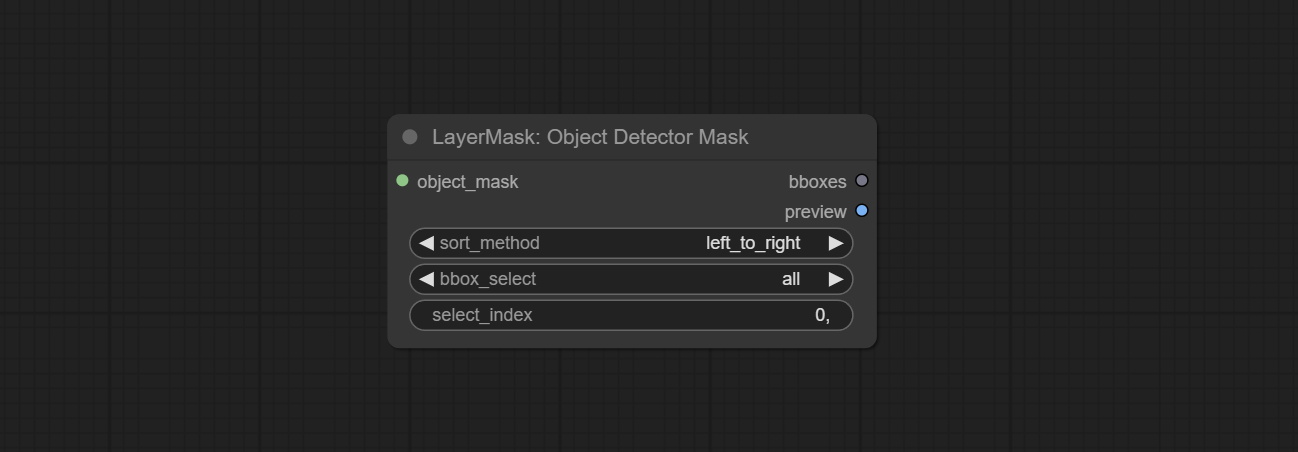

The ObjectDetector nodes add a sort-by-confidence option.

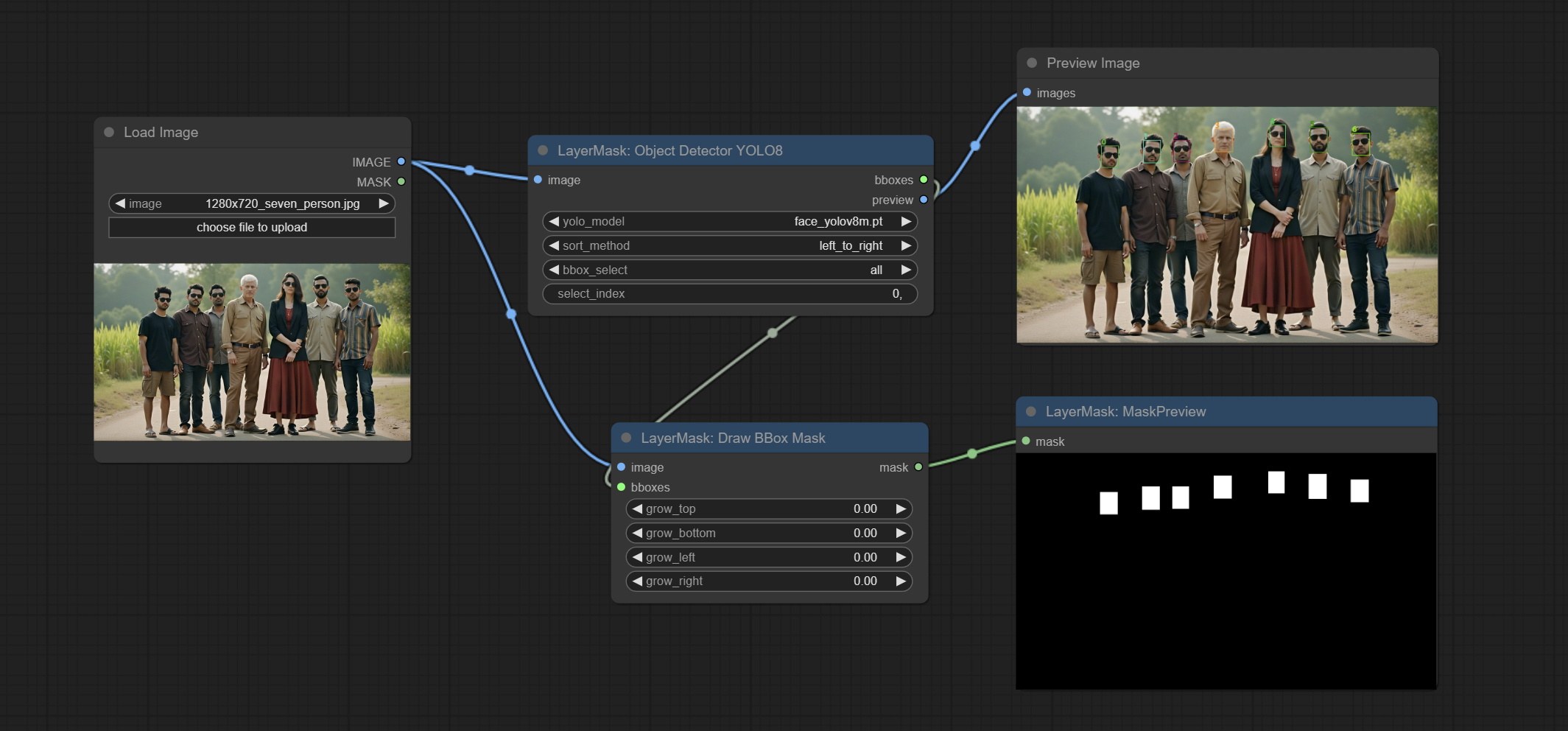

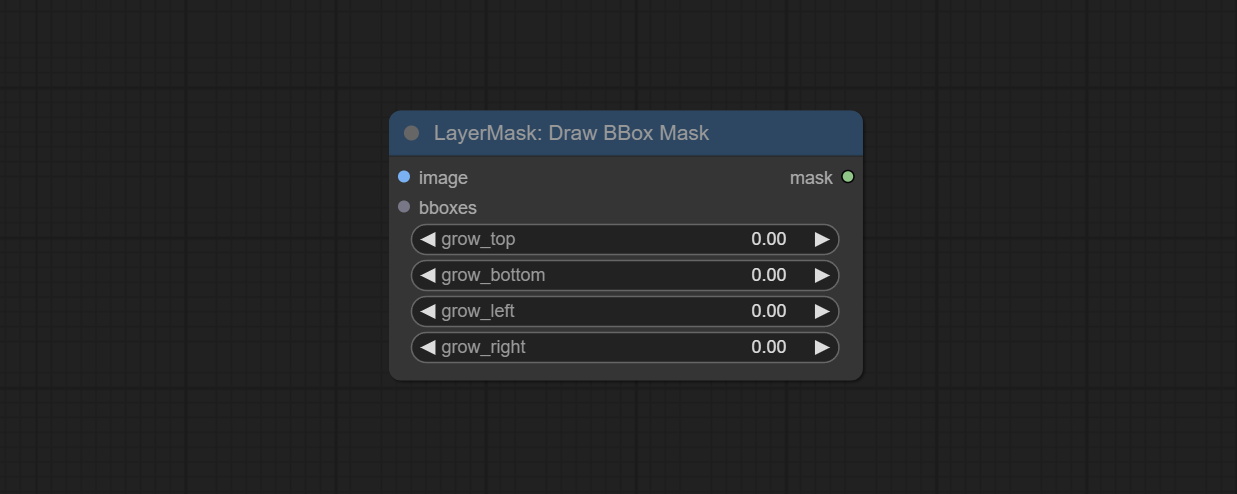

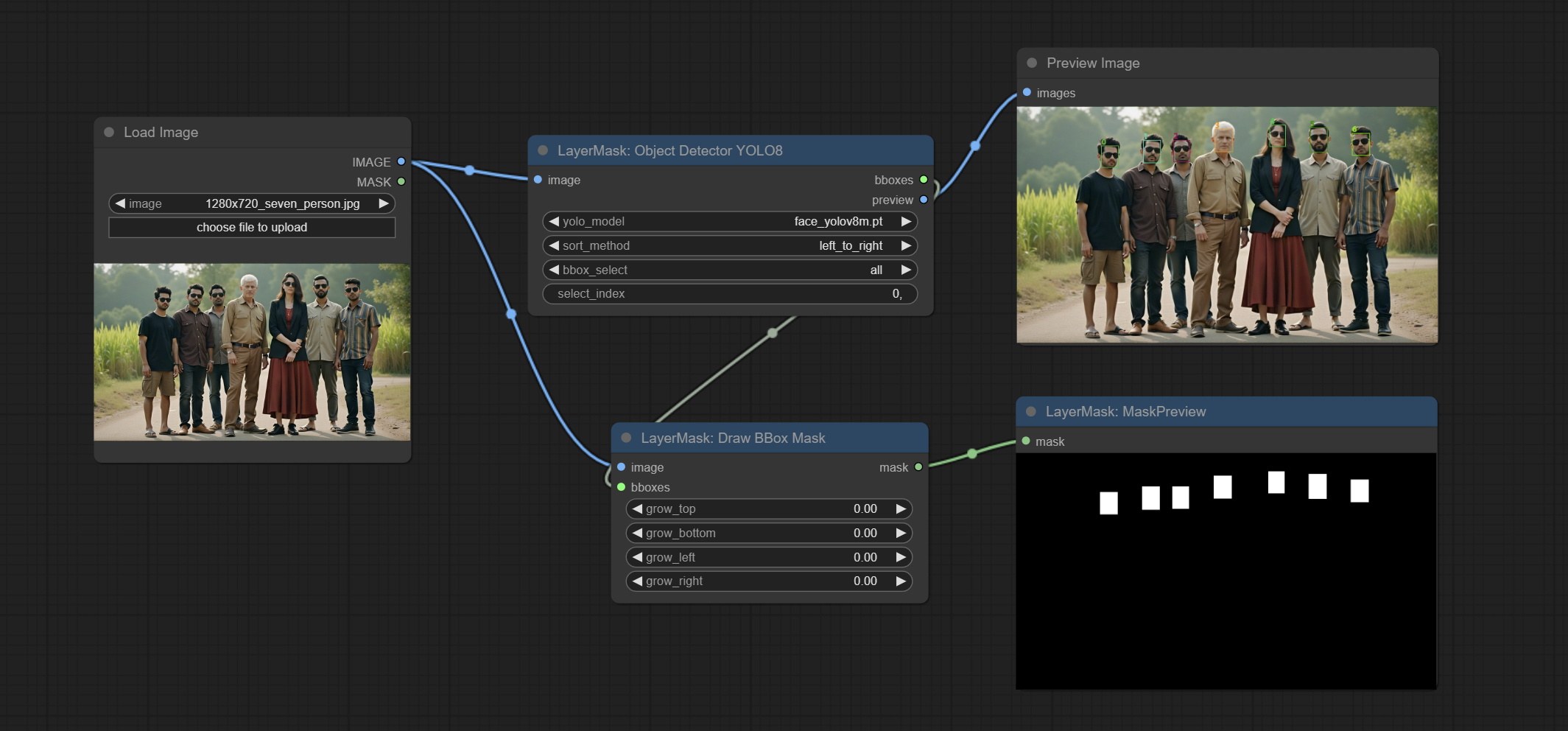

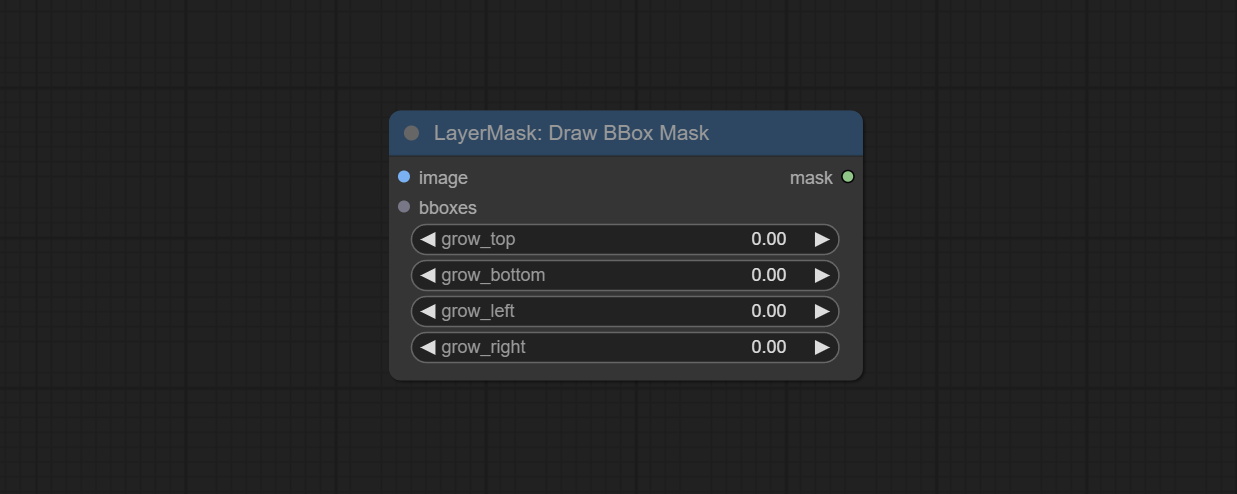

Commit the DrawBBoxMask node, used to convert the BBoxes output by the ObjectDetector nodes into a mask.

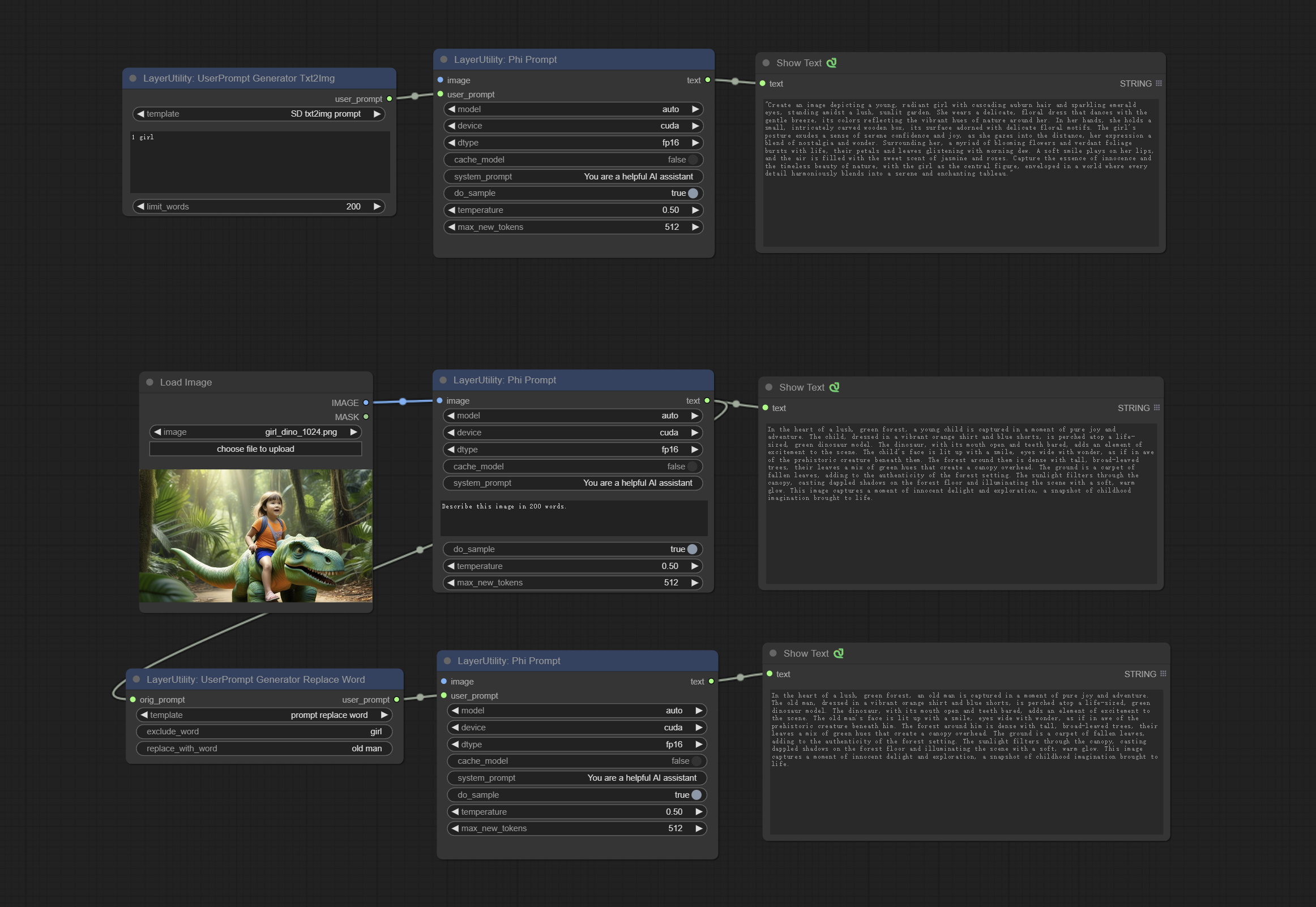

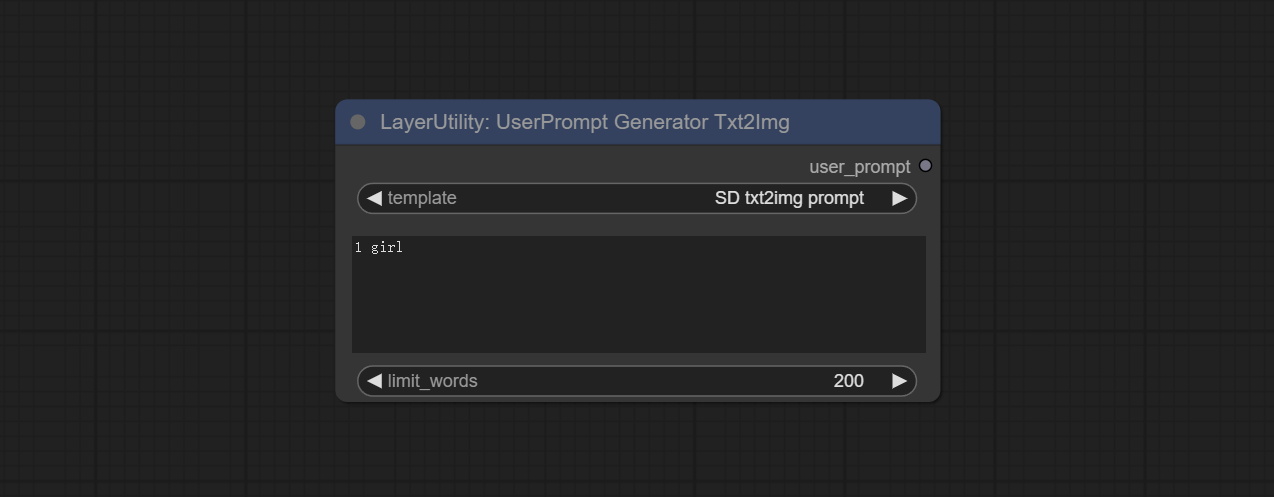

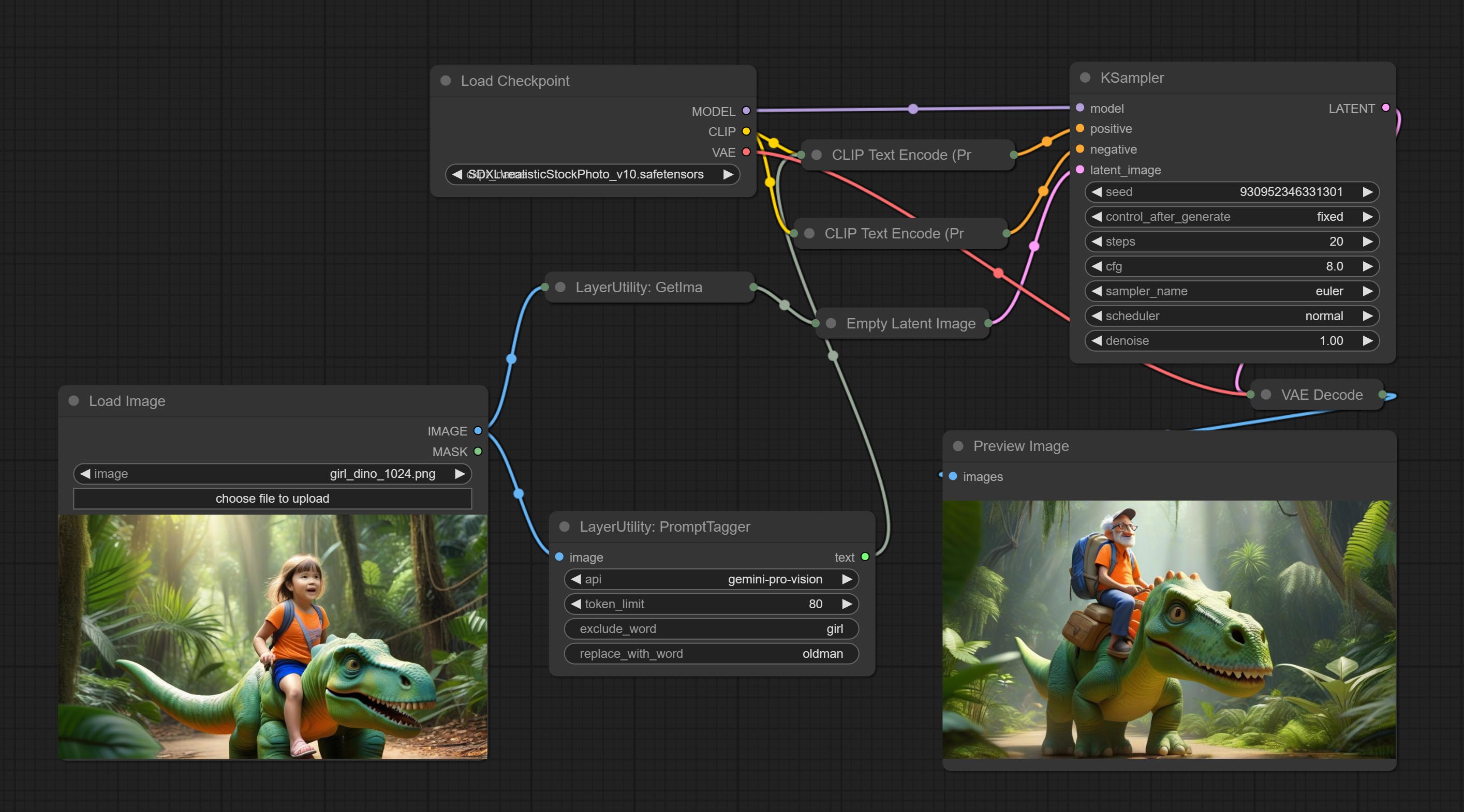

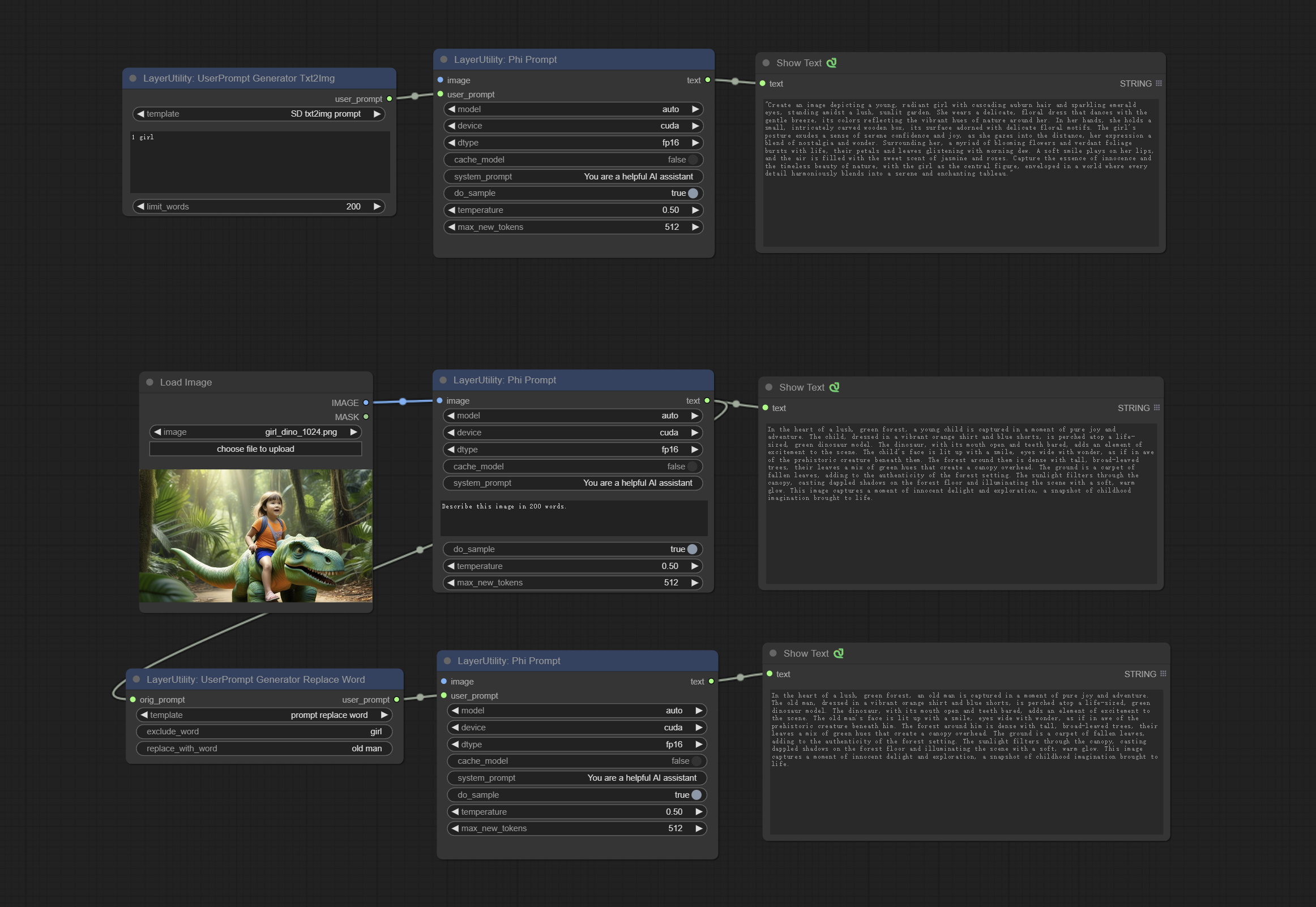

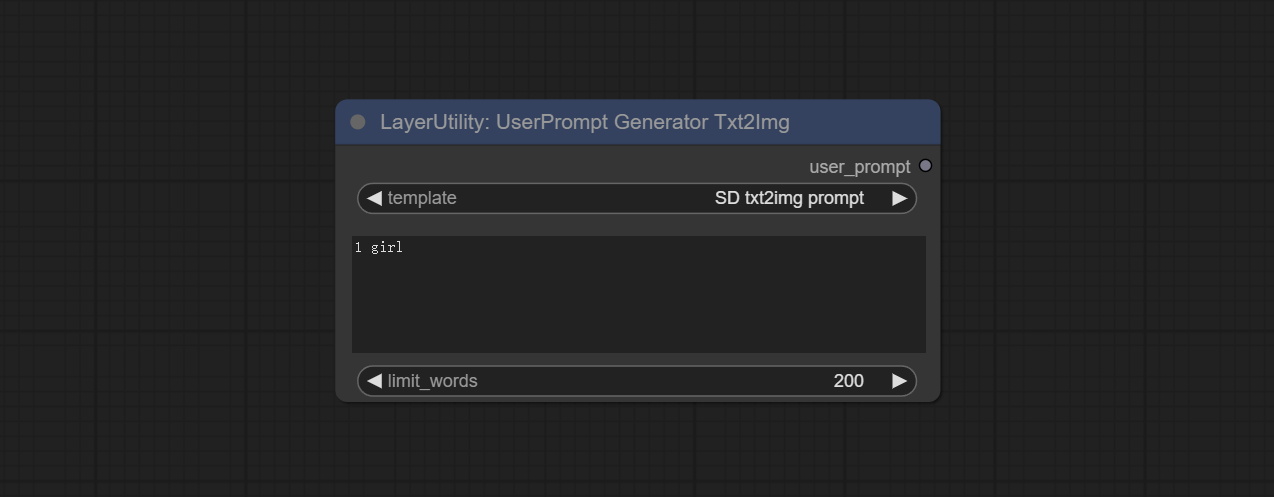

Commit the UserPromptGeneratorTxtImg and UserPromptGeneratorReplaceWord nodes, used to generate text and image prompts and to replace prompt content.

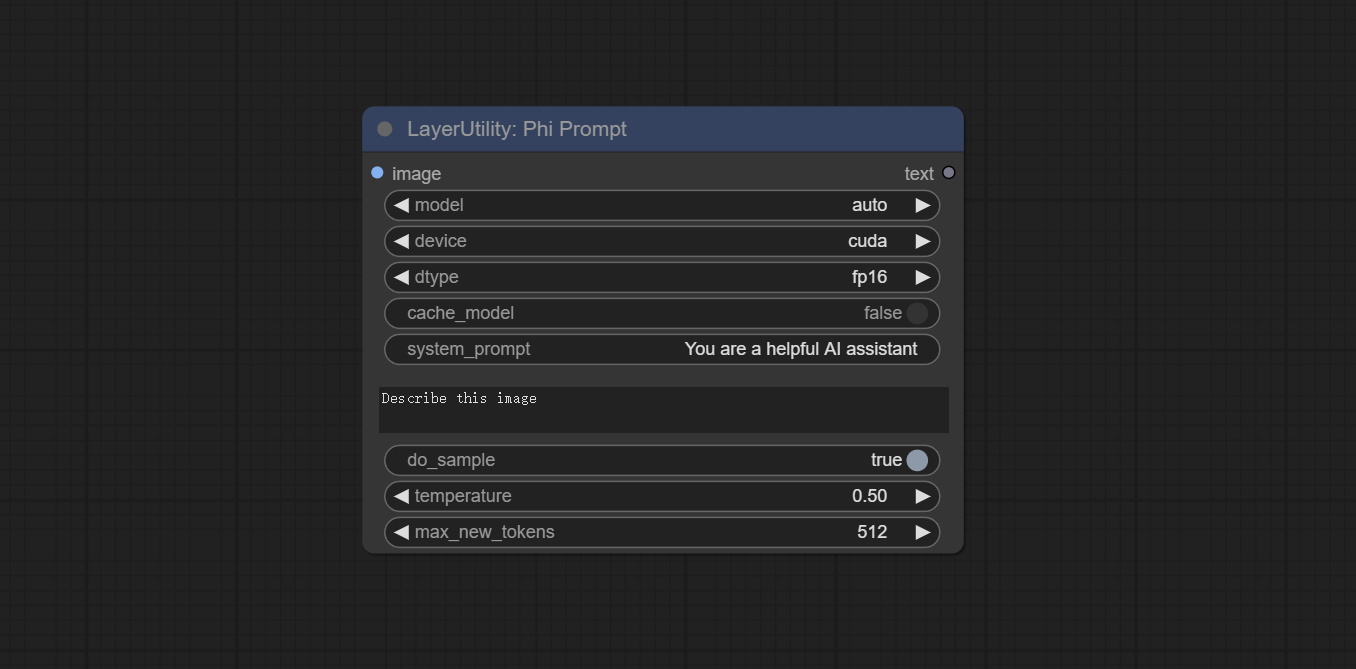

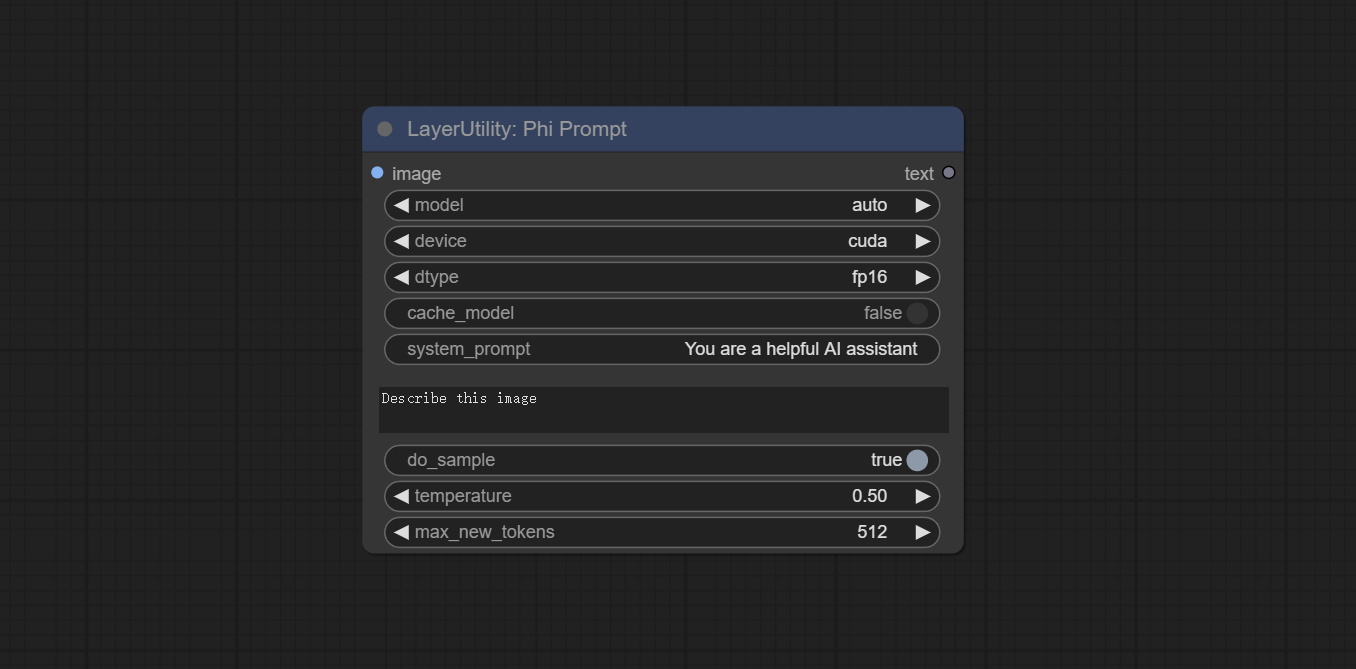

Commit the PhiPrompt node, which uses the Microsoft Phi 3.5 text and vision models for local inference. It can be used to generate prompt words, process prompt words, or infer prompt words from images. Running this model requires at least 16GB of video memory.

Download the model files from BaiduNetdisk, or from huggingface.co/microsoft and huggingface.co/microsoft/phi-3.5-mini-instruct, and copy them to the ComfyUI\models\LLM folder.

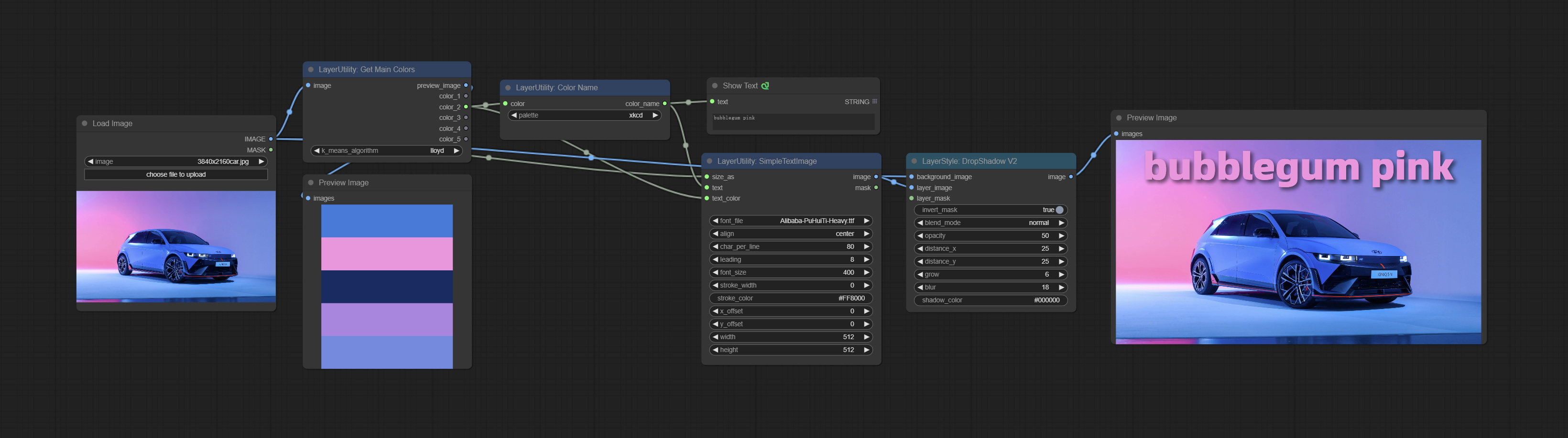

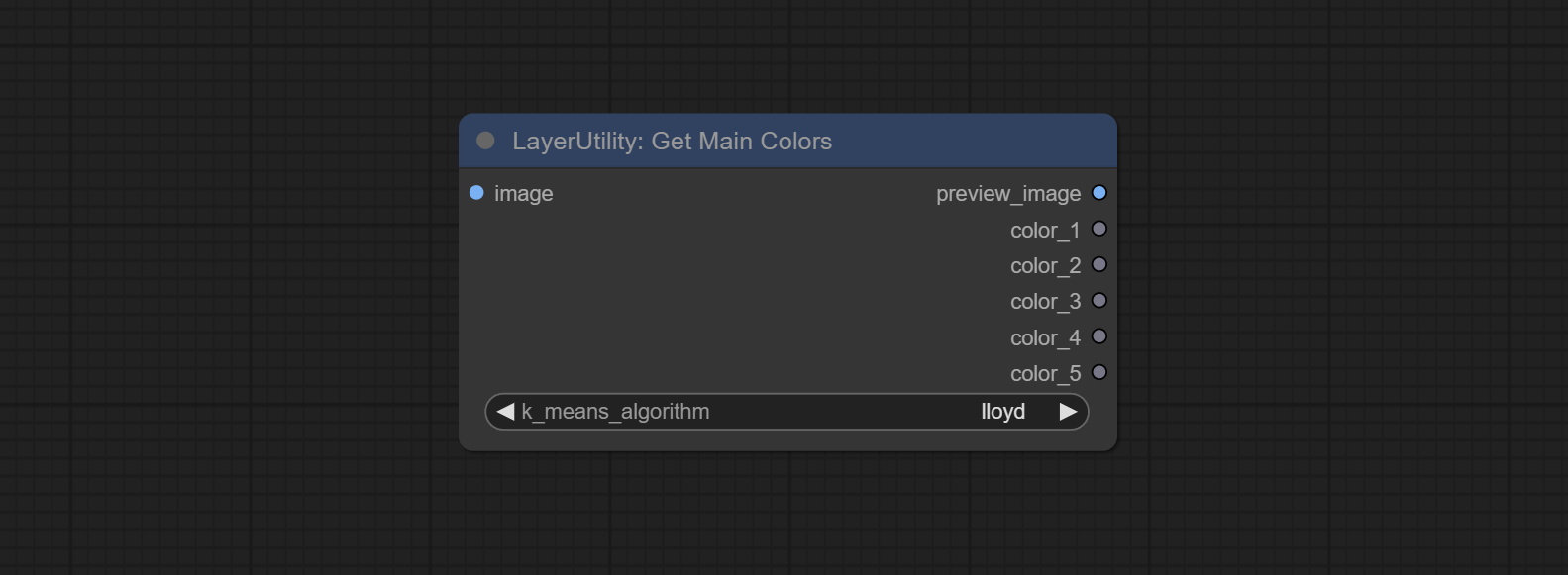

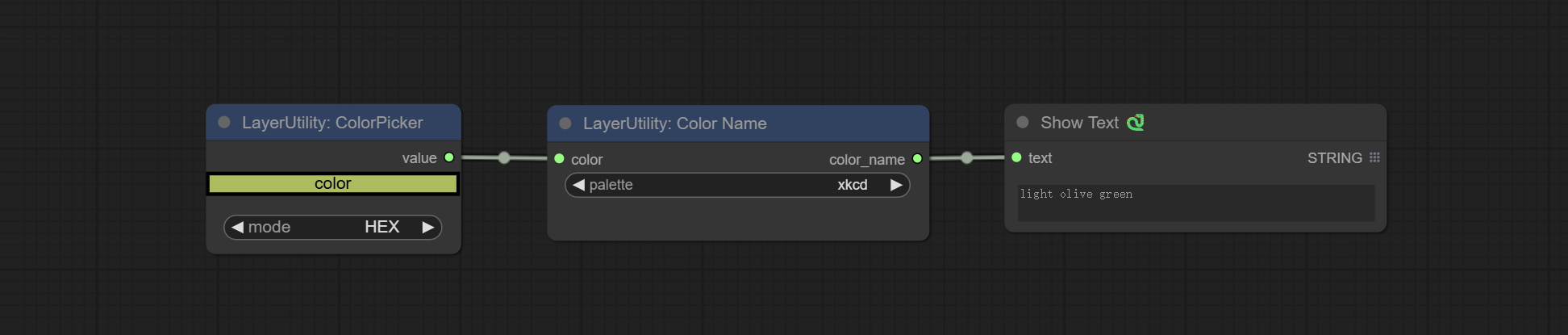

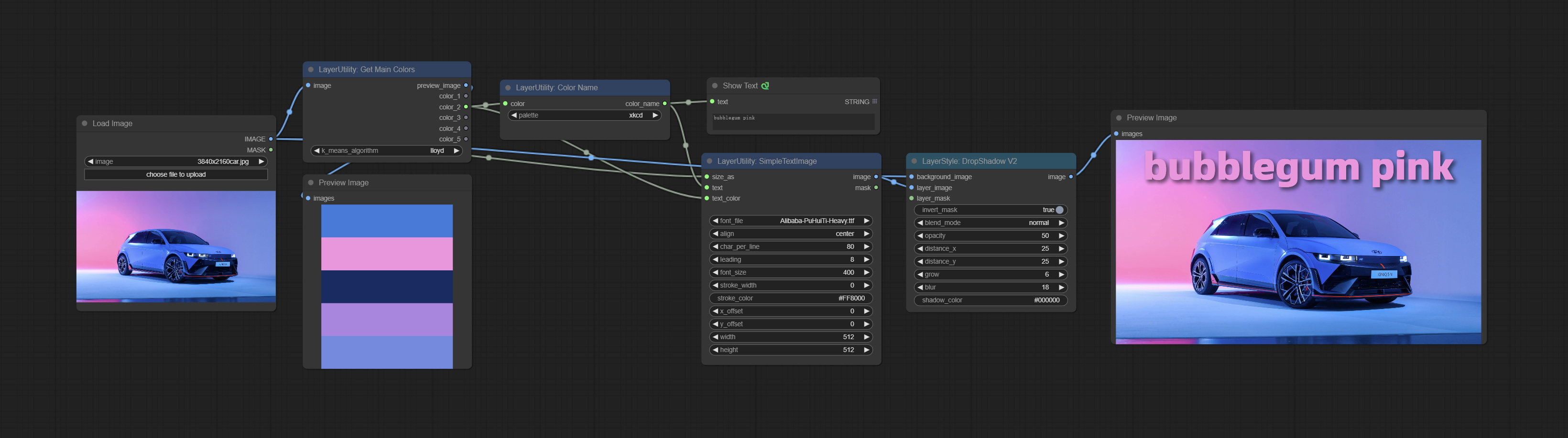

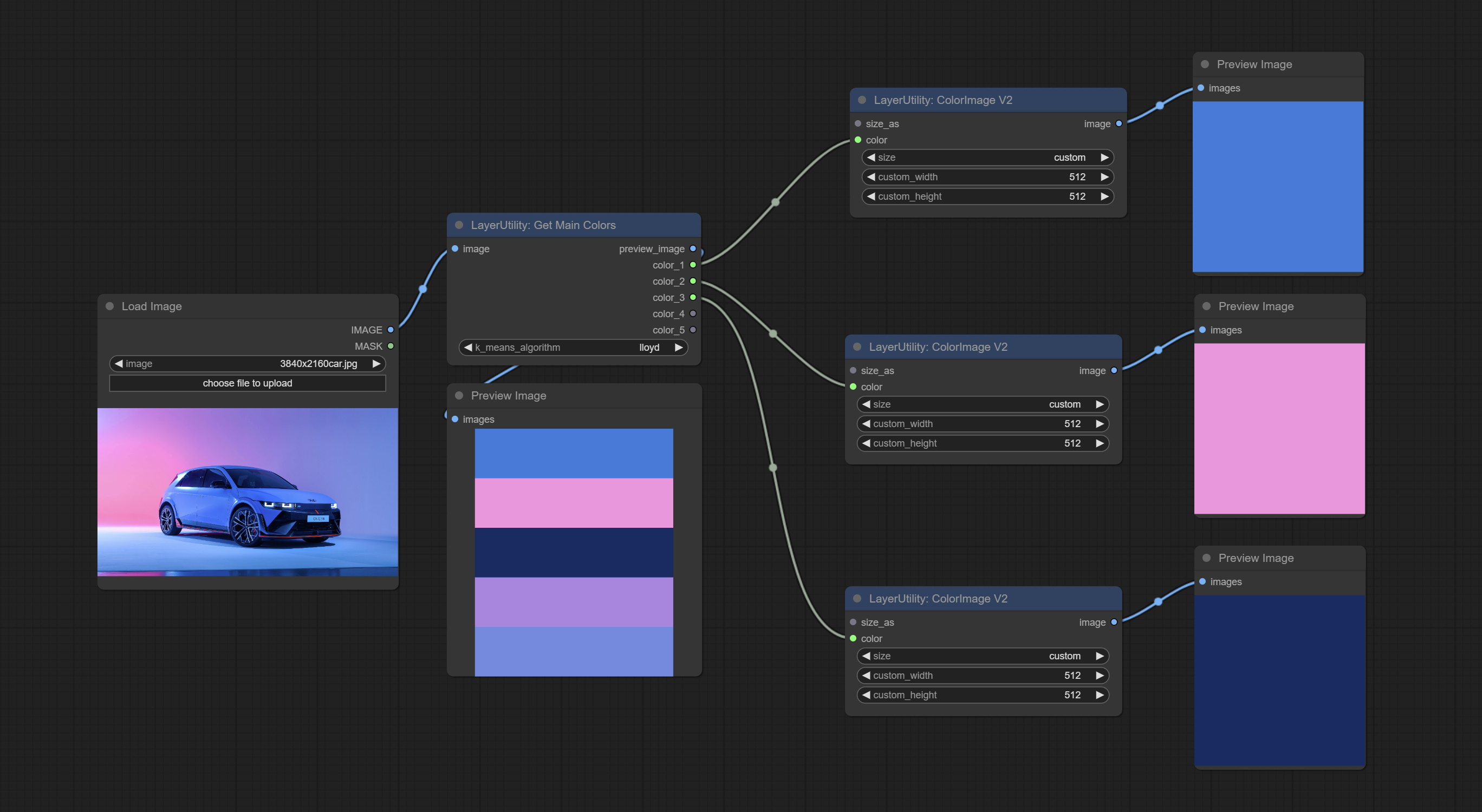

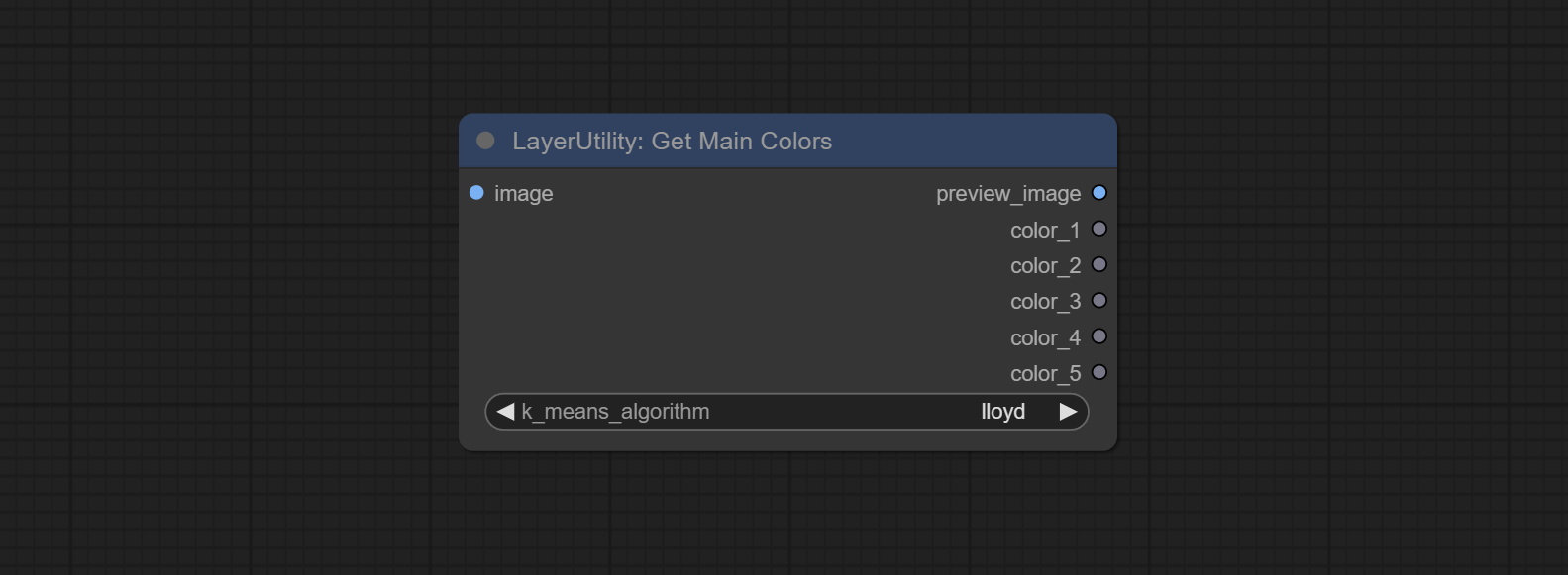

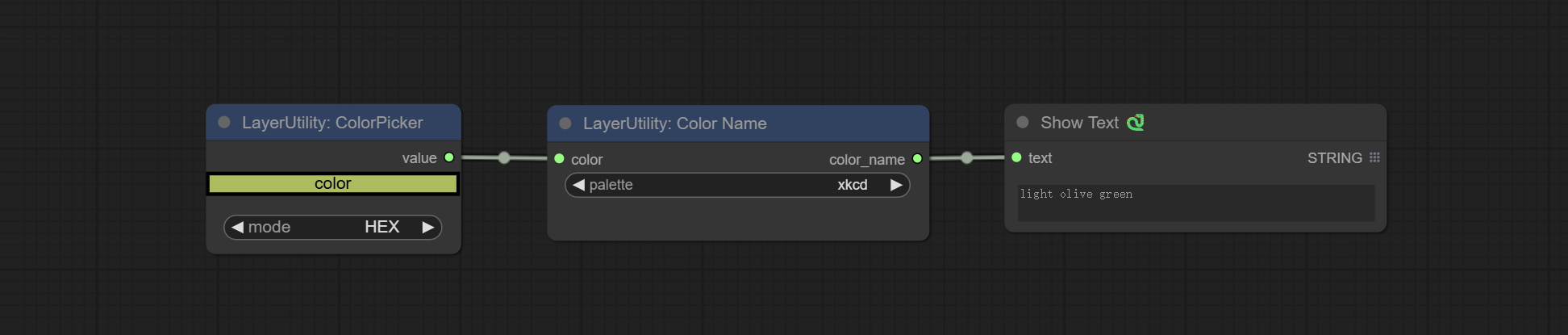

Commit the GetMainColors node, which obtains the 5 main colors of an image. Commit the ColorName node, which obtains the color name of the input color value.

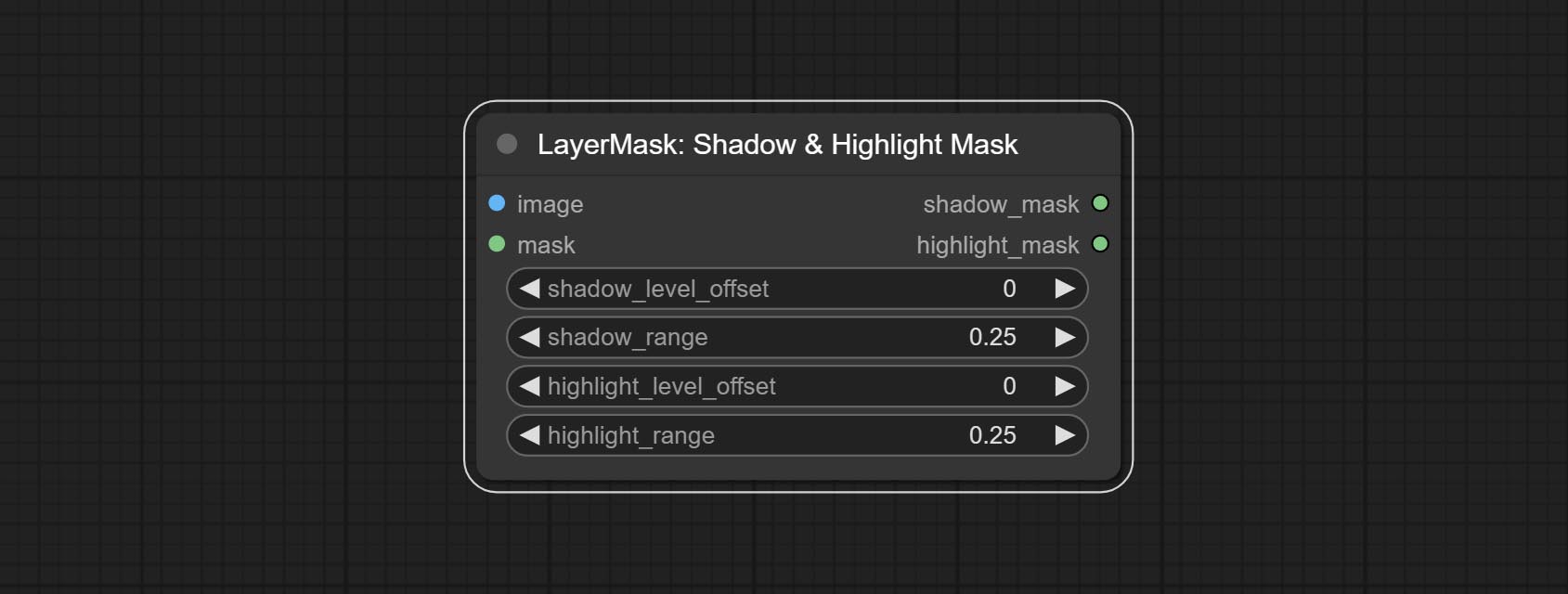

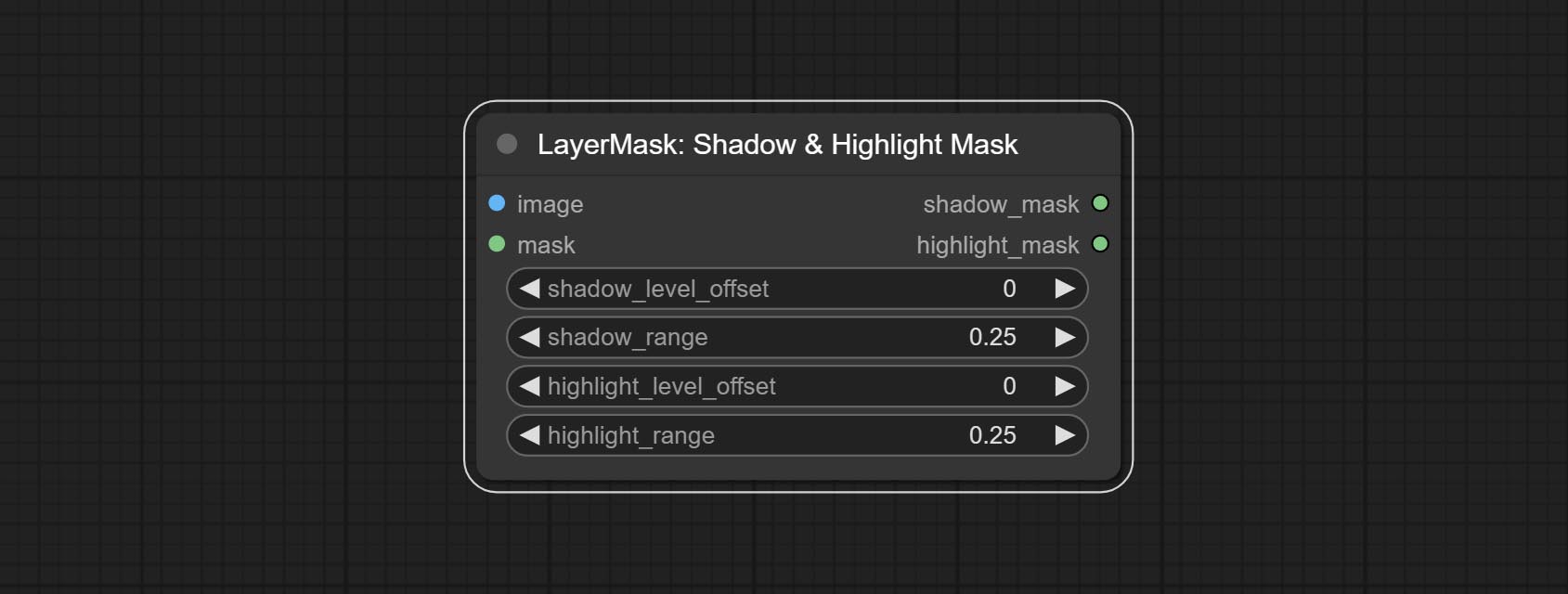

Replicate the Brightness & Contrast node as BrightnessContrastV2, the Color of Shadow & Highlight node as ColorofShadowHighlight, and the Shadow & Highlight Mask node as Shadow Highlight Mask V2, to avoid ComfyUI workflow parsing errors caused by the "&" character in node names.

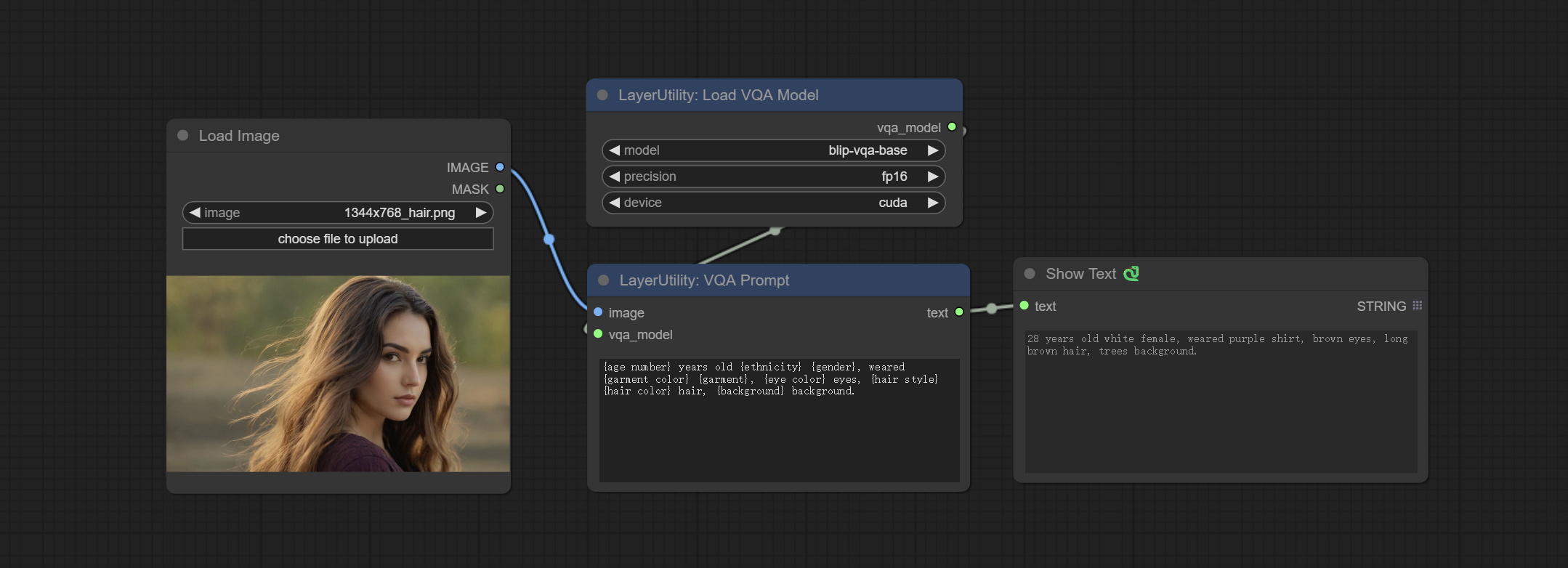

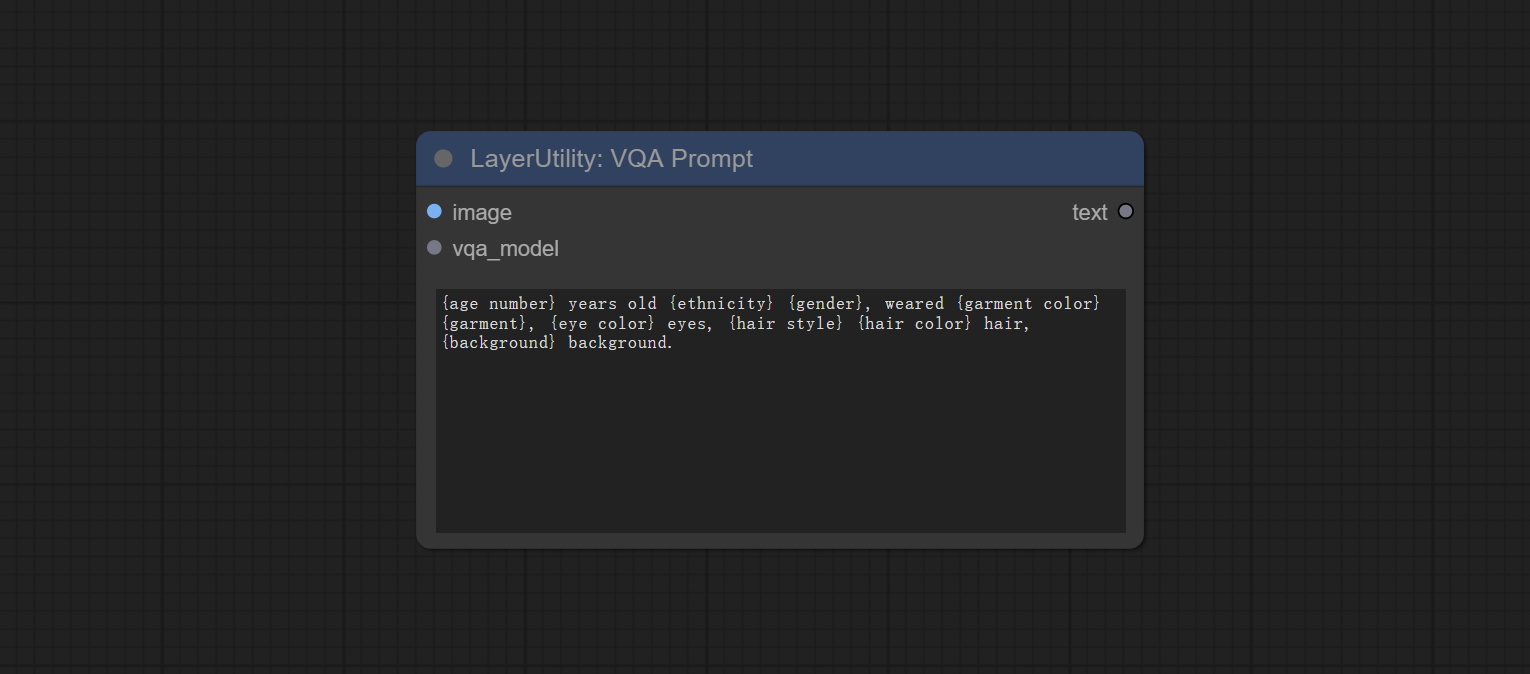

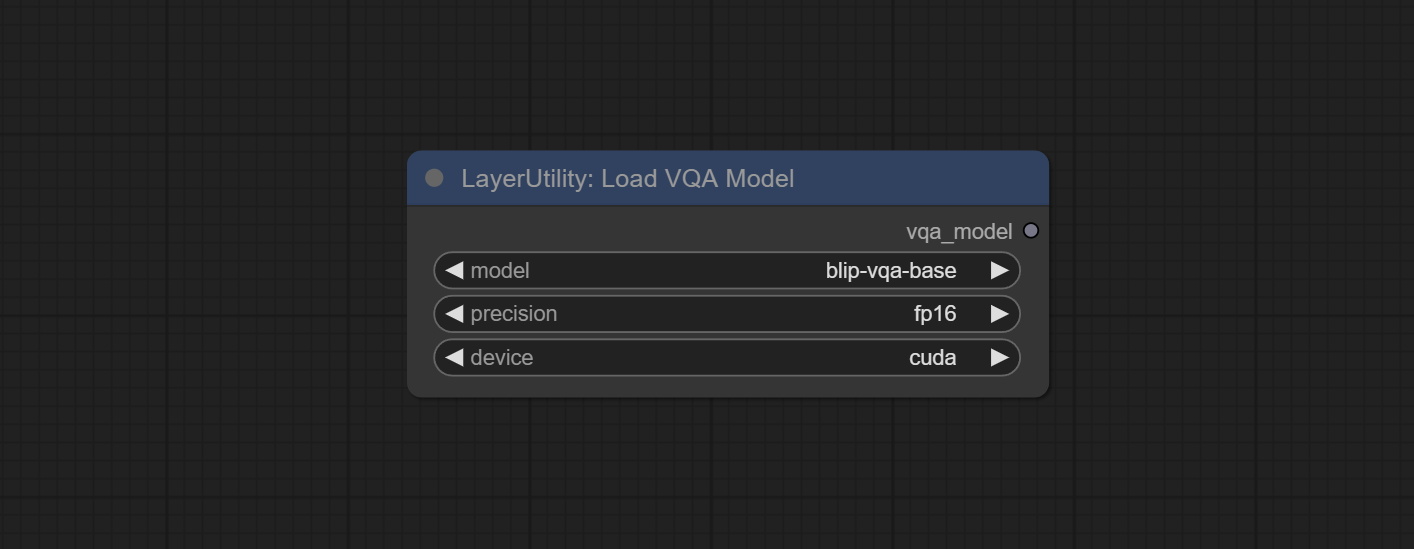

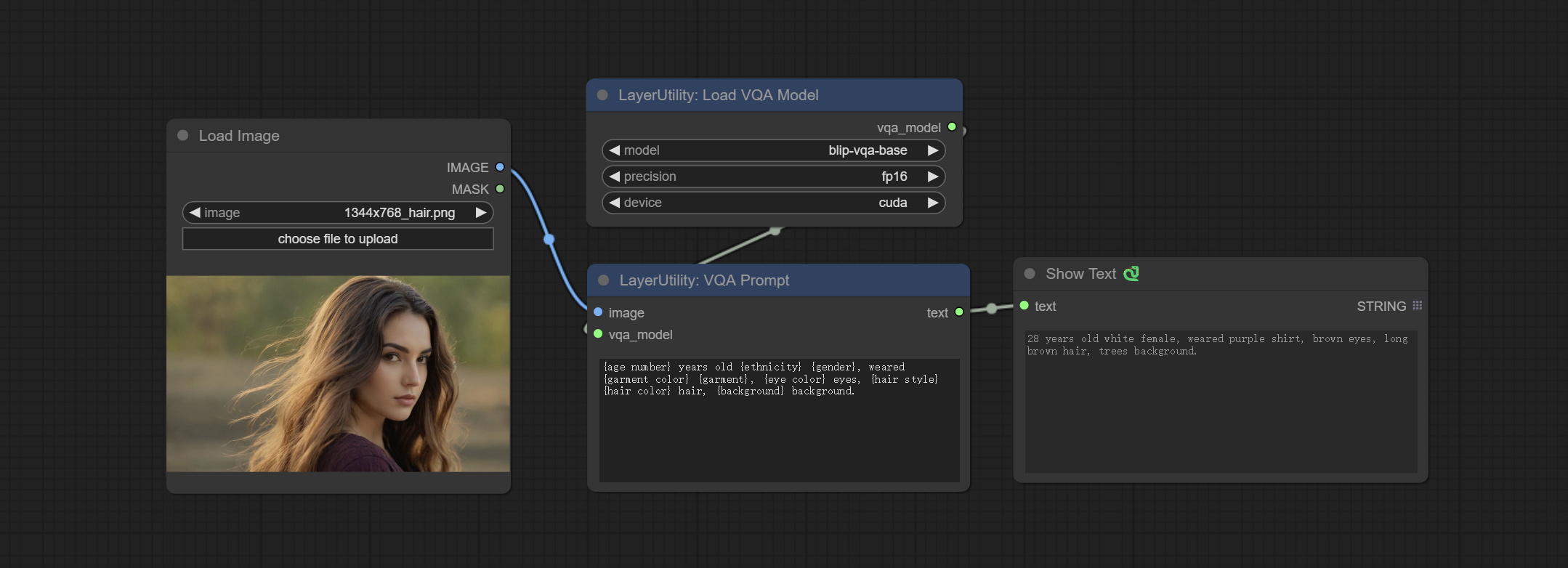

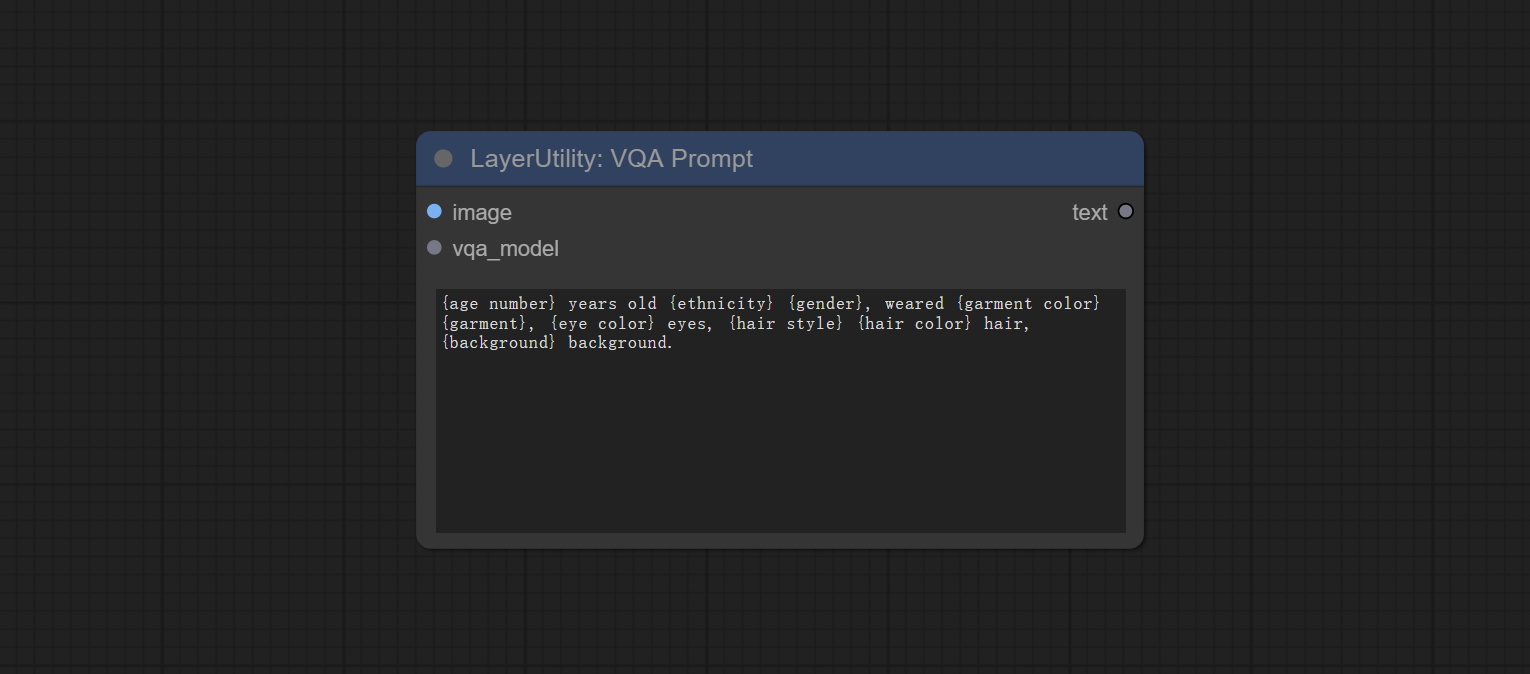

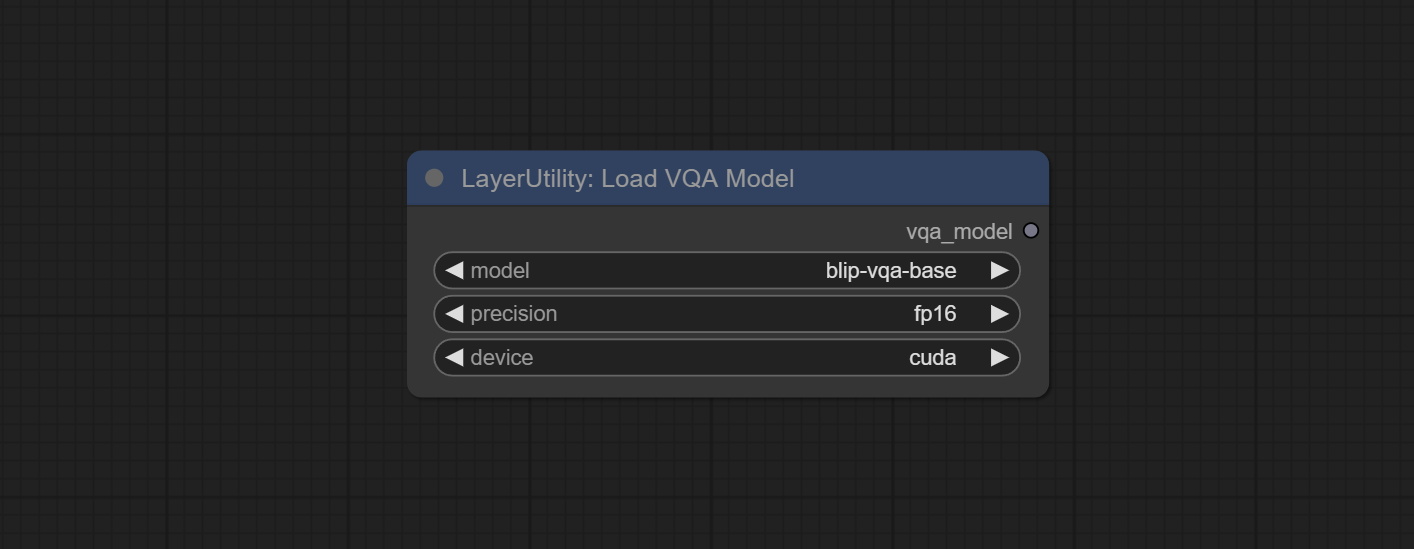

Commit the VQAPrompt and LoadVQAModel nodes.

Download the models from BaiduNetdisk, or from huggingface.co/salesforce/blip-vqa-capfilt-large and huggingface.co/salesforce/blip-vqa-base, and copy them to the ComfyUI\models\VQA folder.

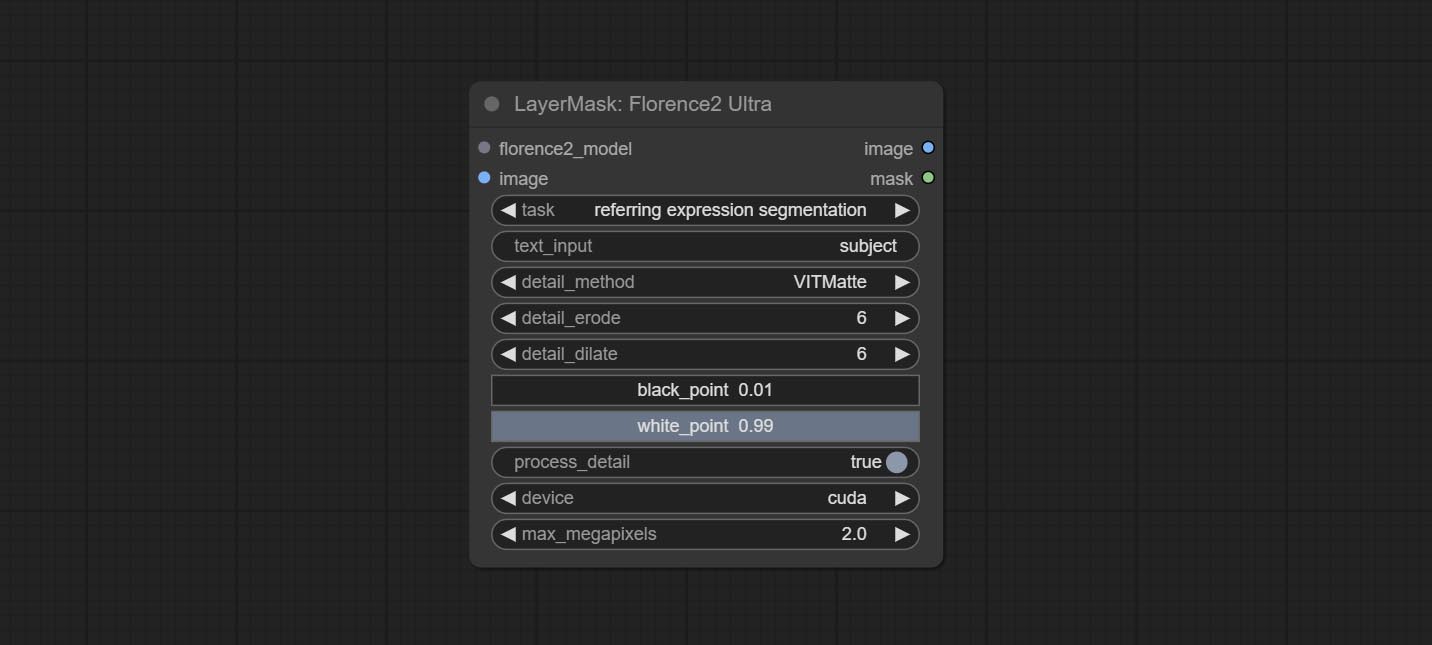

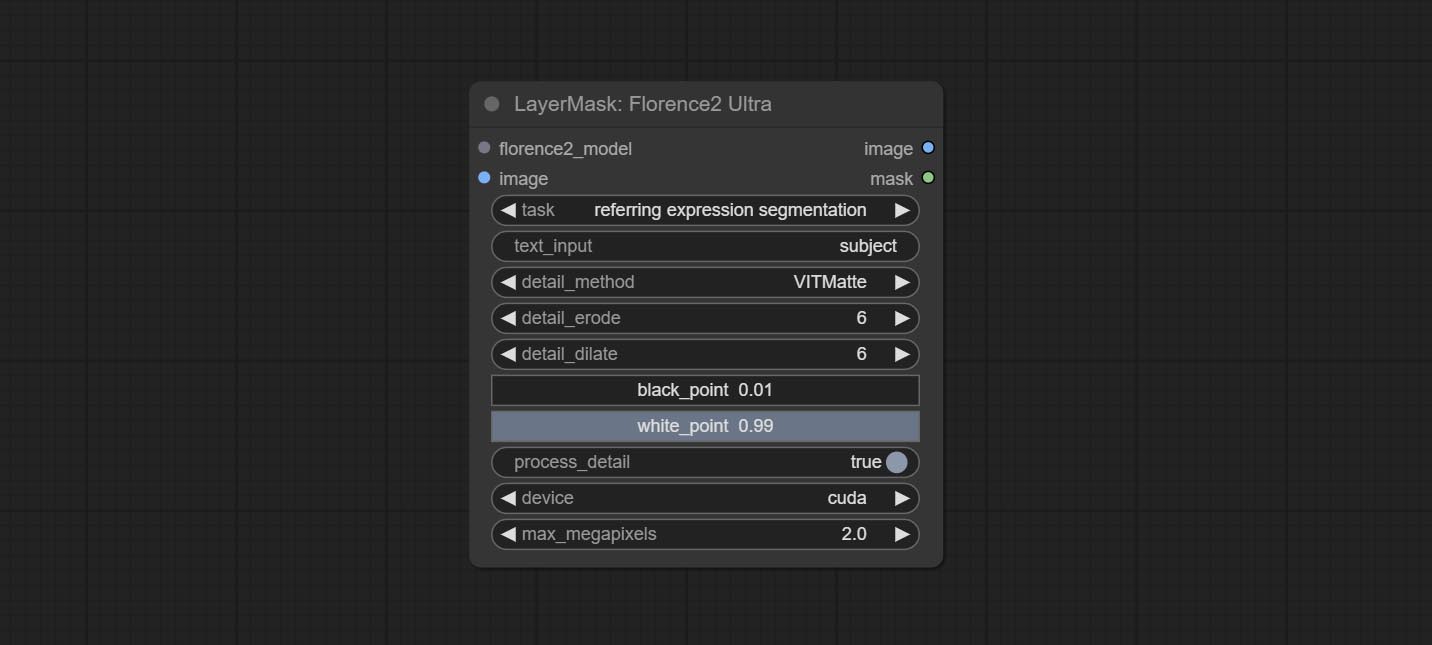

The Florence2Ultra, Florence2Image2Prompt, and LoadFlorence2Model nodes support the MiaoshouAI/Florence-2-large-PromptGen-v1.5 and MiaoshouAI/Florence-2-base-PromptGen-v1.5 models.

Download the model files from BaiduNetdisk, or from huggingface.co/miaoshouai/florence-2-large-promptgen-v1.5 and huggingface.co/miaoshouai/florence-2-base-promptgen-v1.5, and copy them to the ComfyUI\models\florence2 folder.

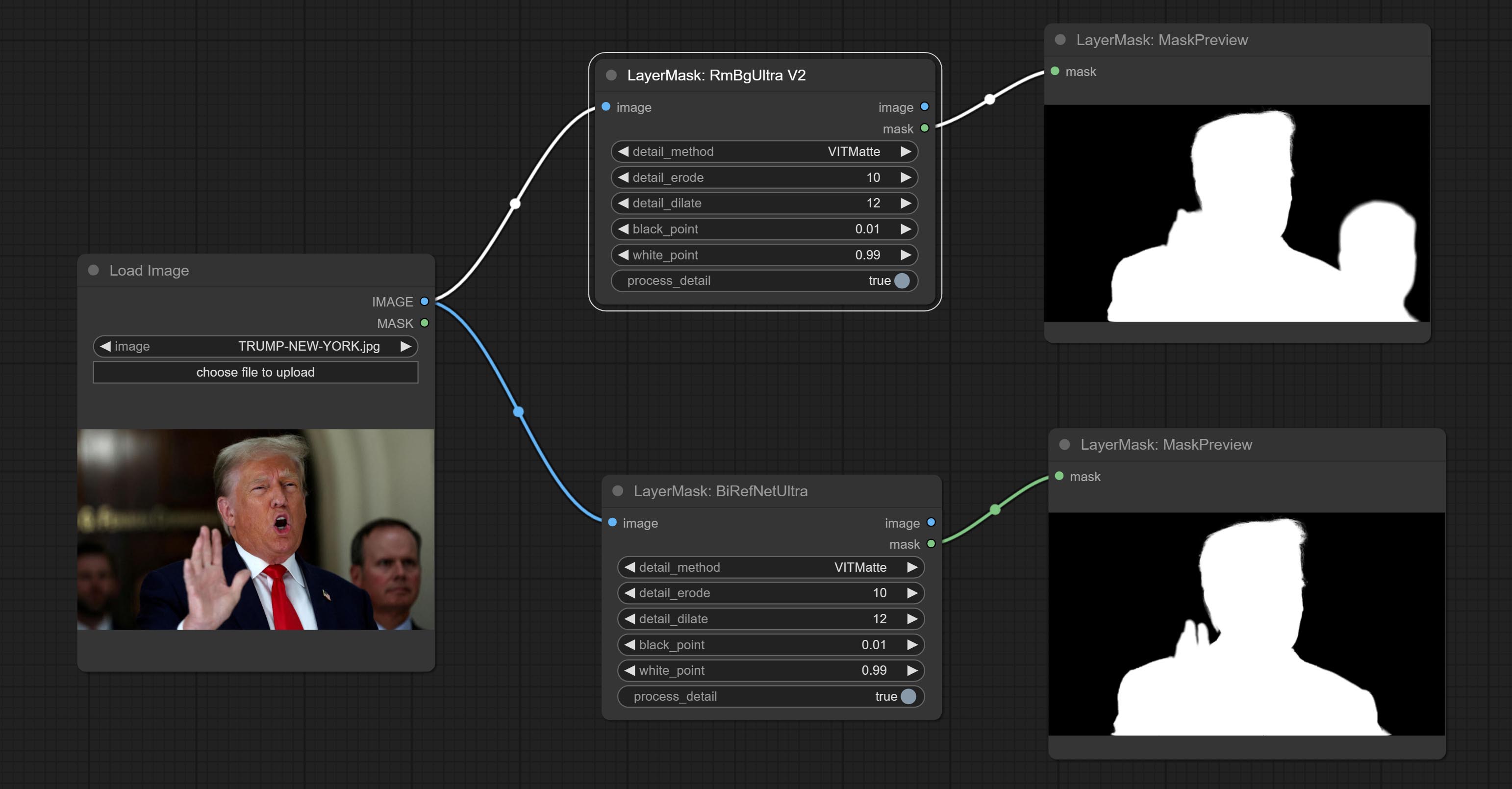

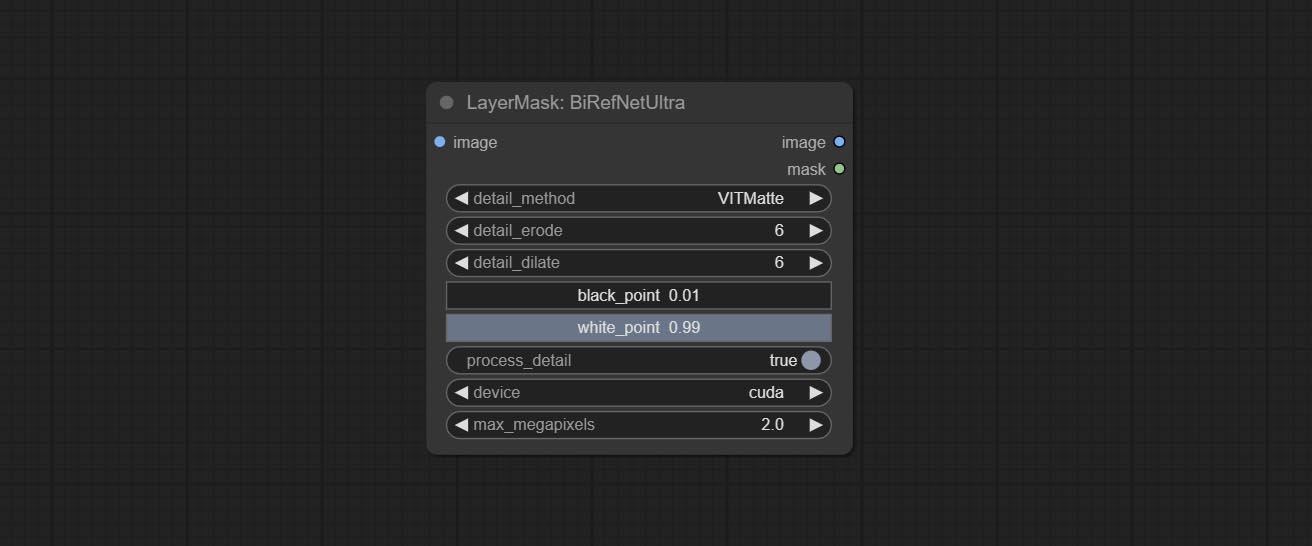

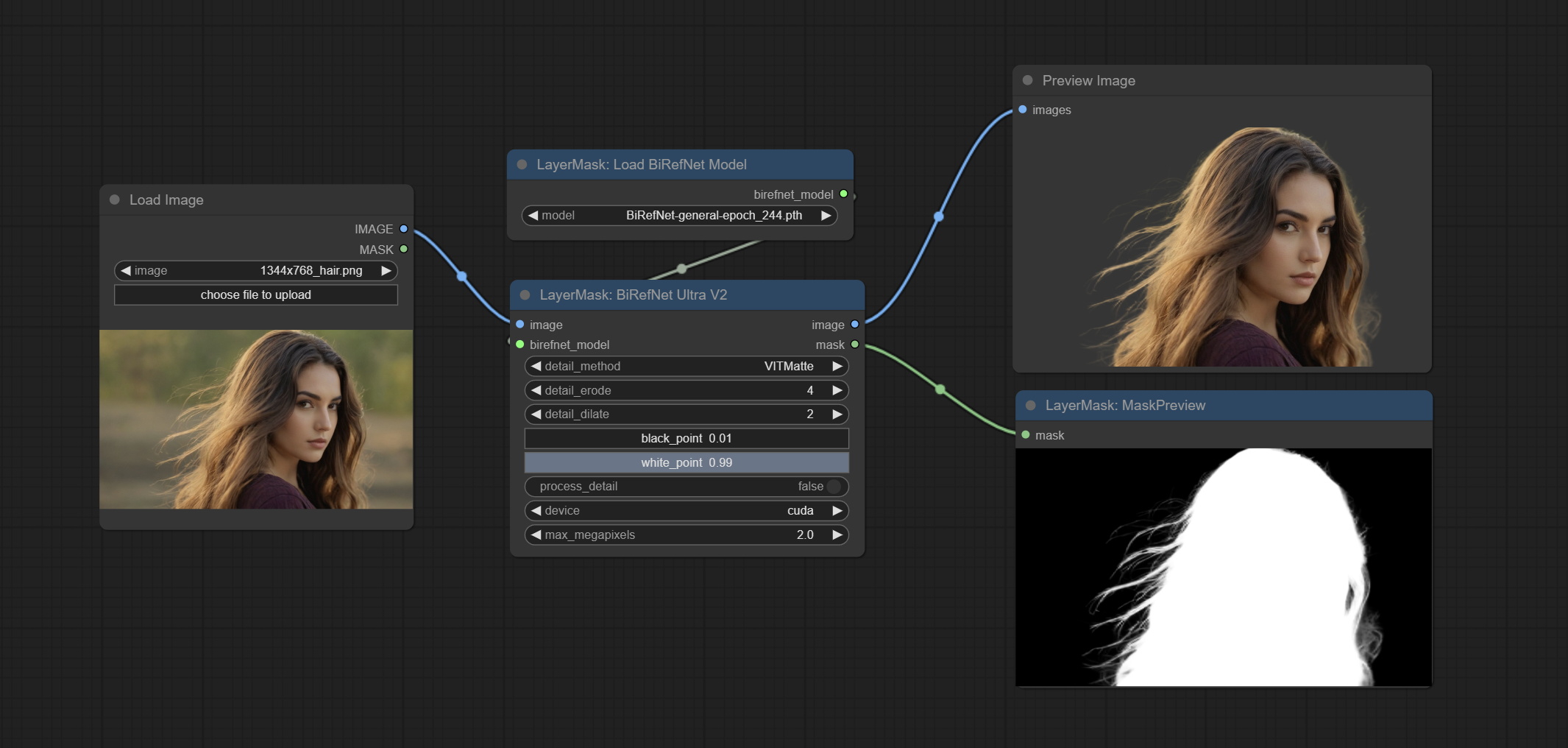

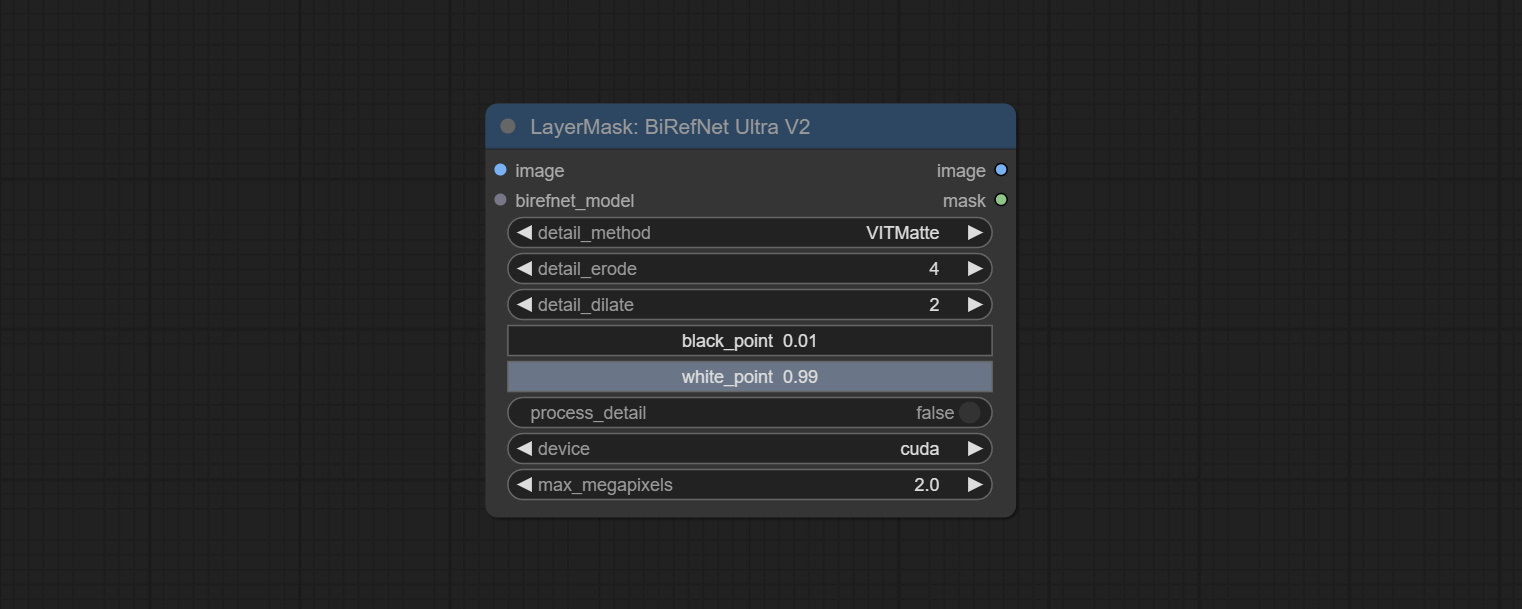

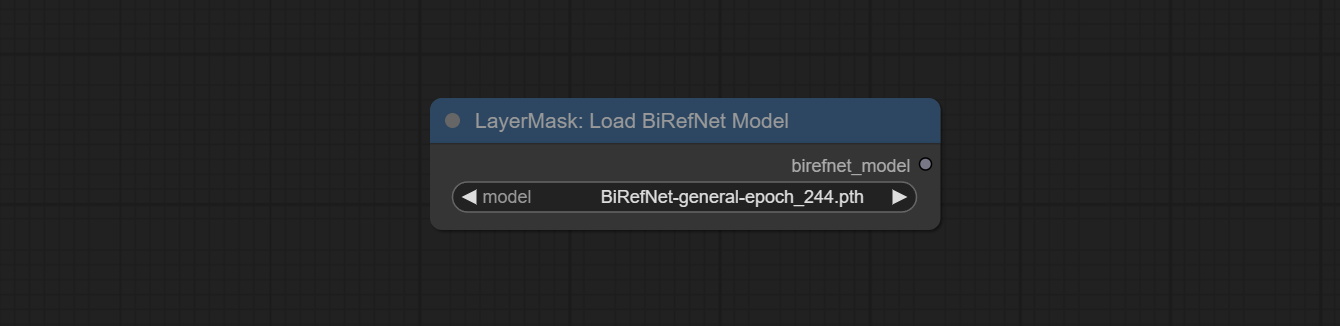

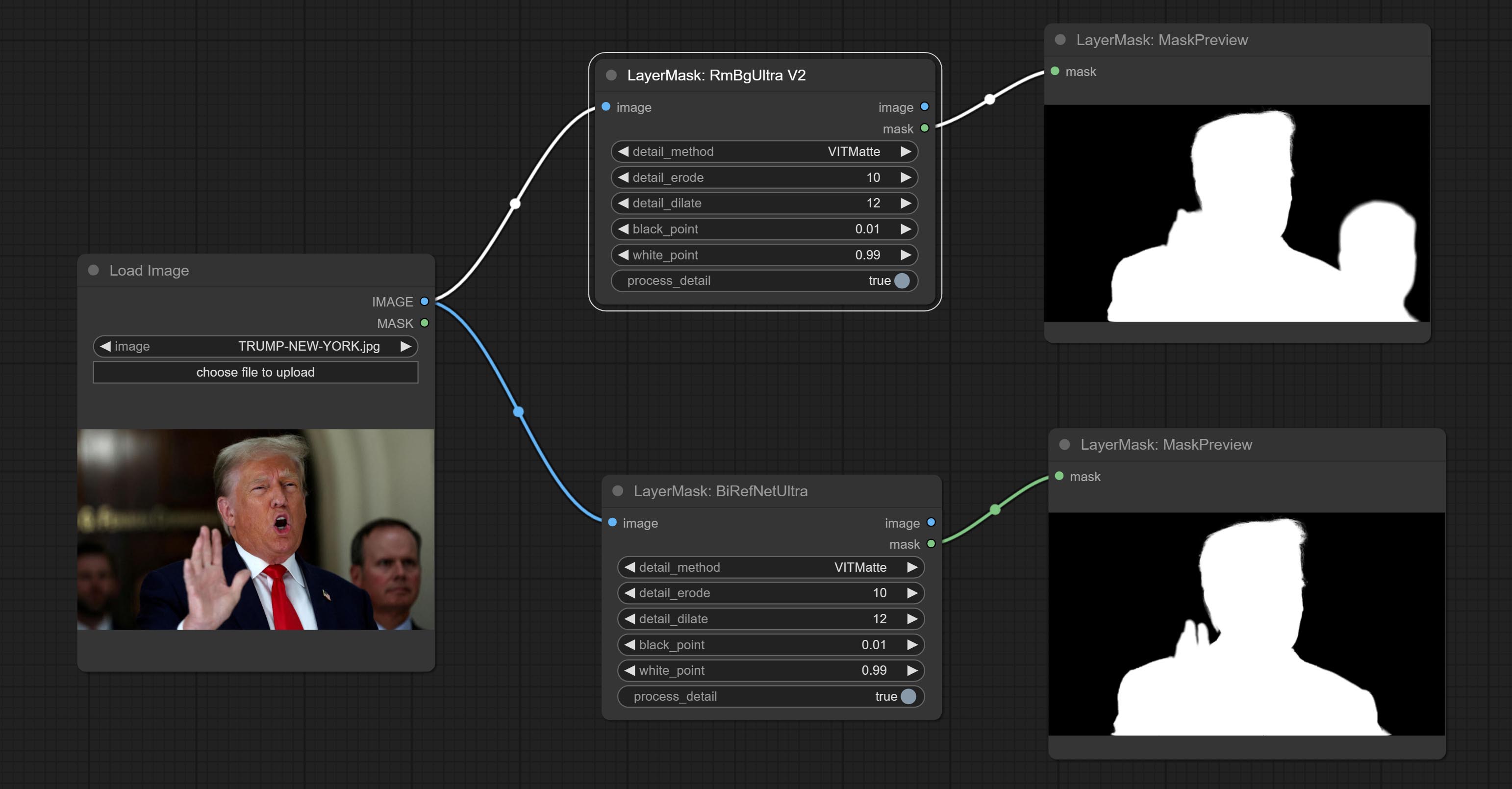

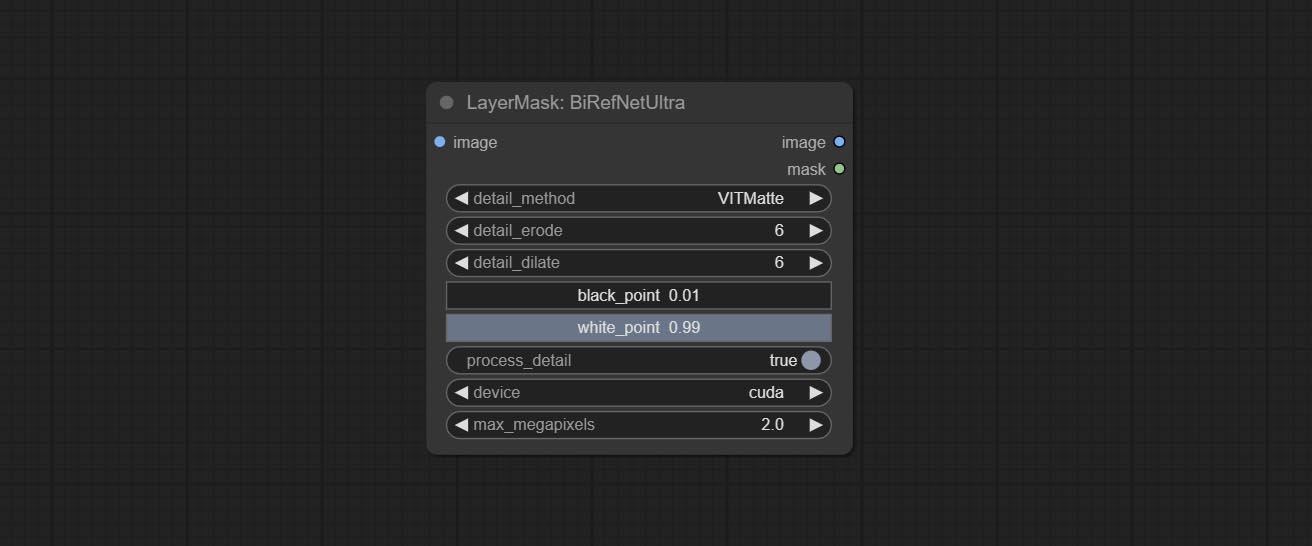

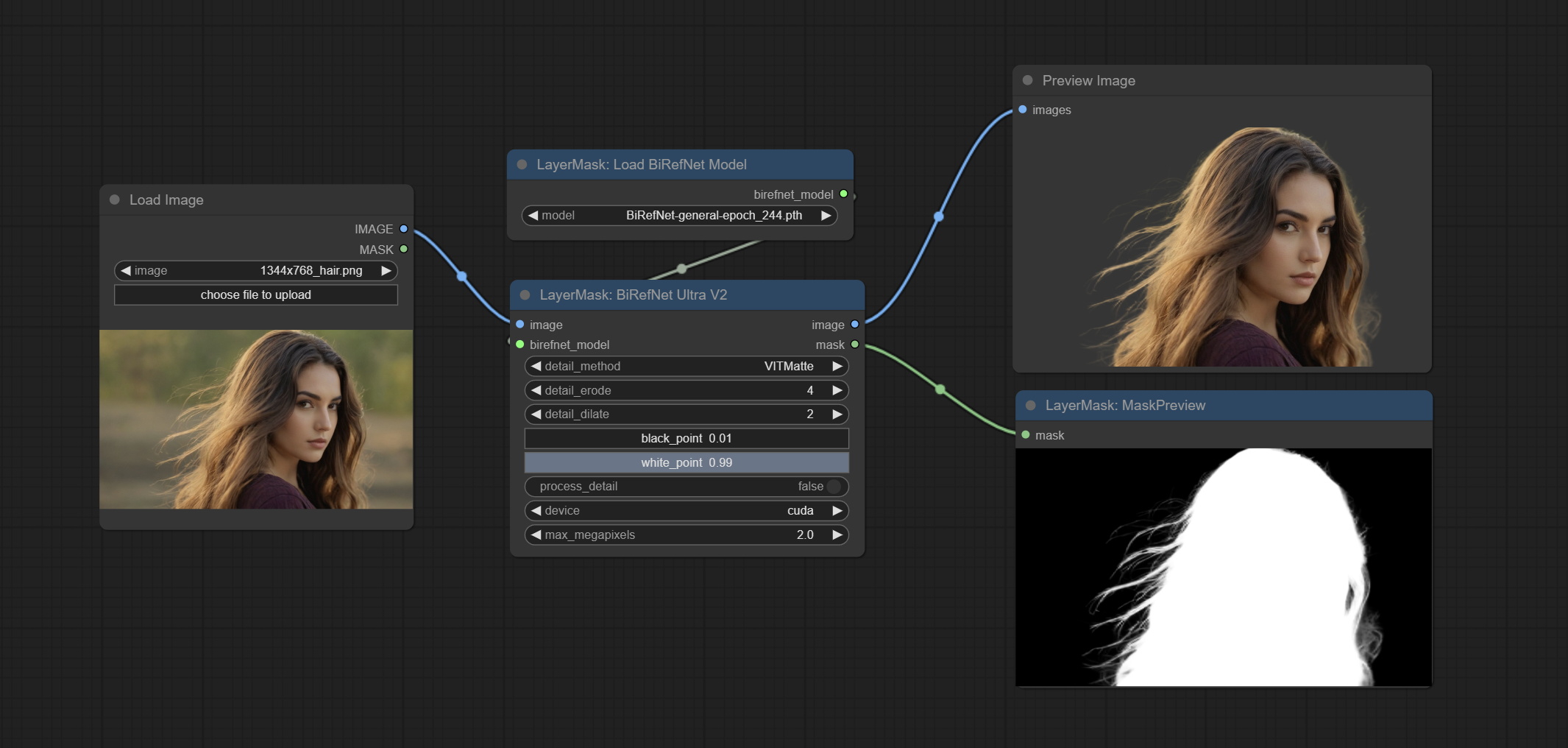

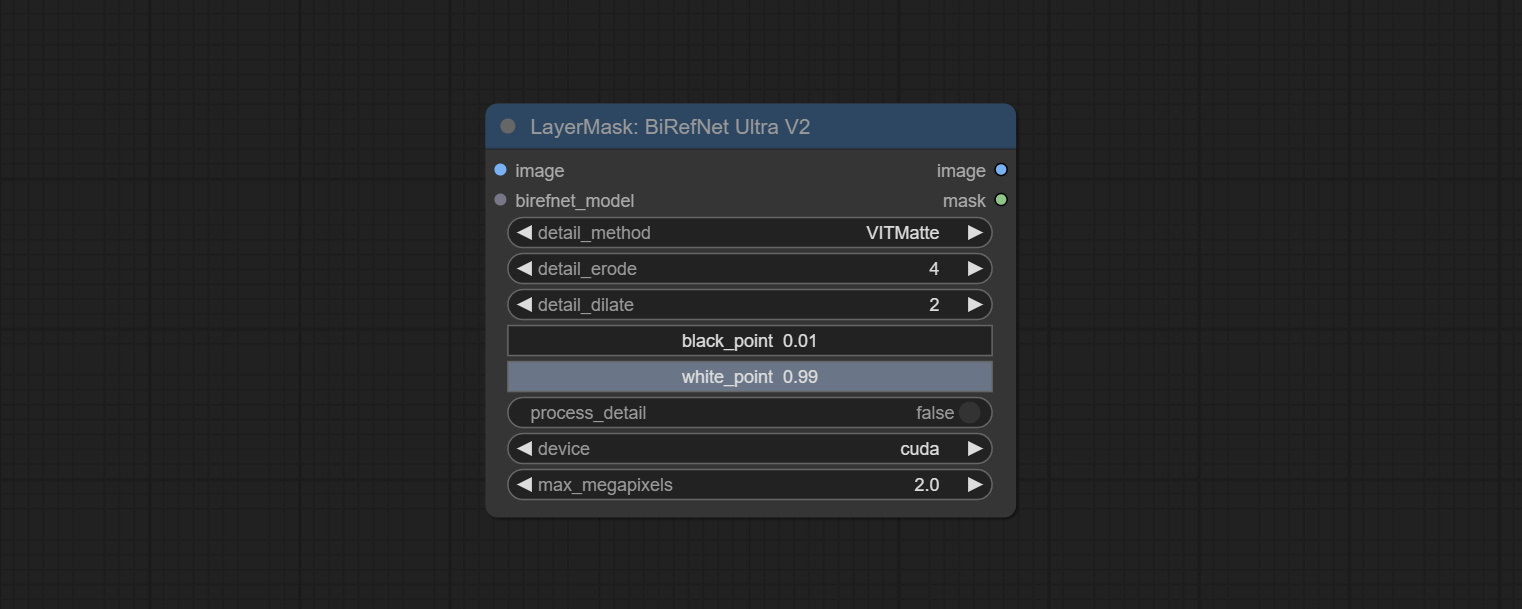

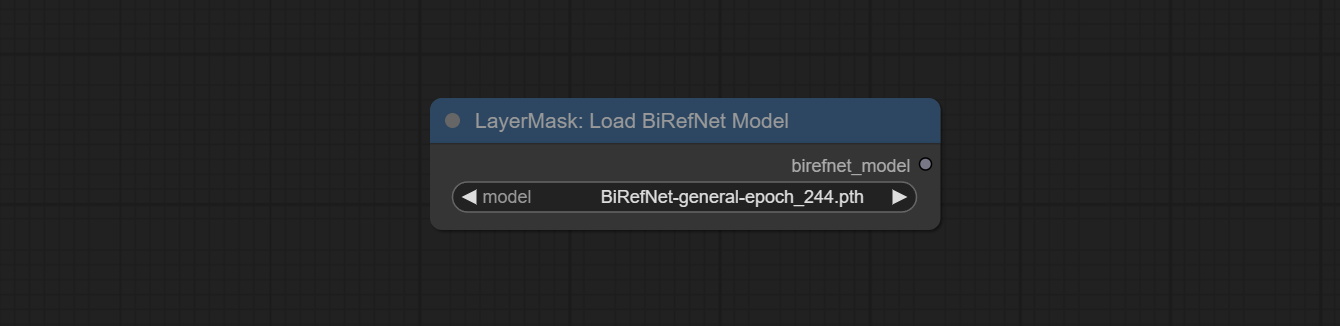

Commit the BiRefNetUltraV2 and LoadBiRefNetModel nodes, which support using the latest BiRefNet models. Download the model file named BiRefNet-general-epoch_244.pth from BaiduNetdisk or GoogleDrive to the ComfyUI/Models/BiRefNet/pth folder. You can also download more BiRefNet models and put them here.

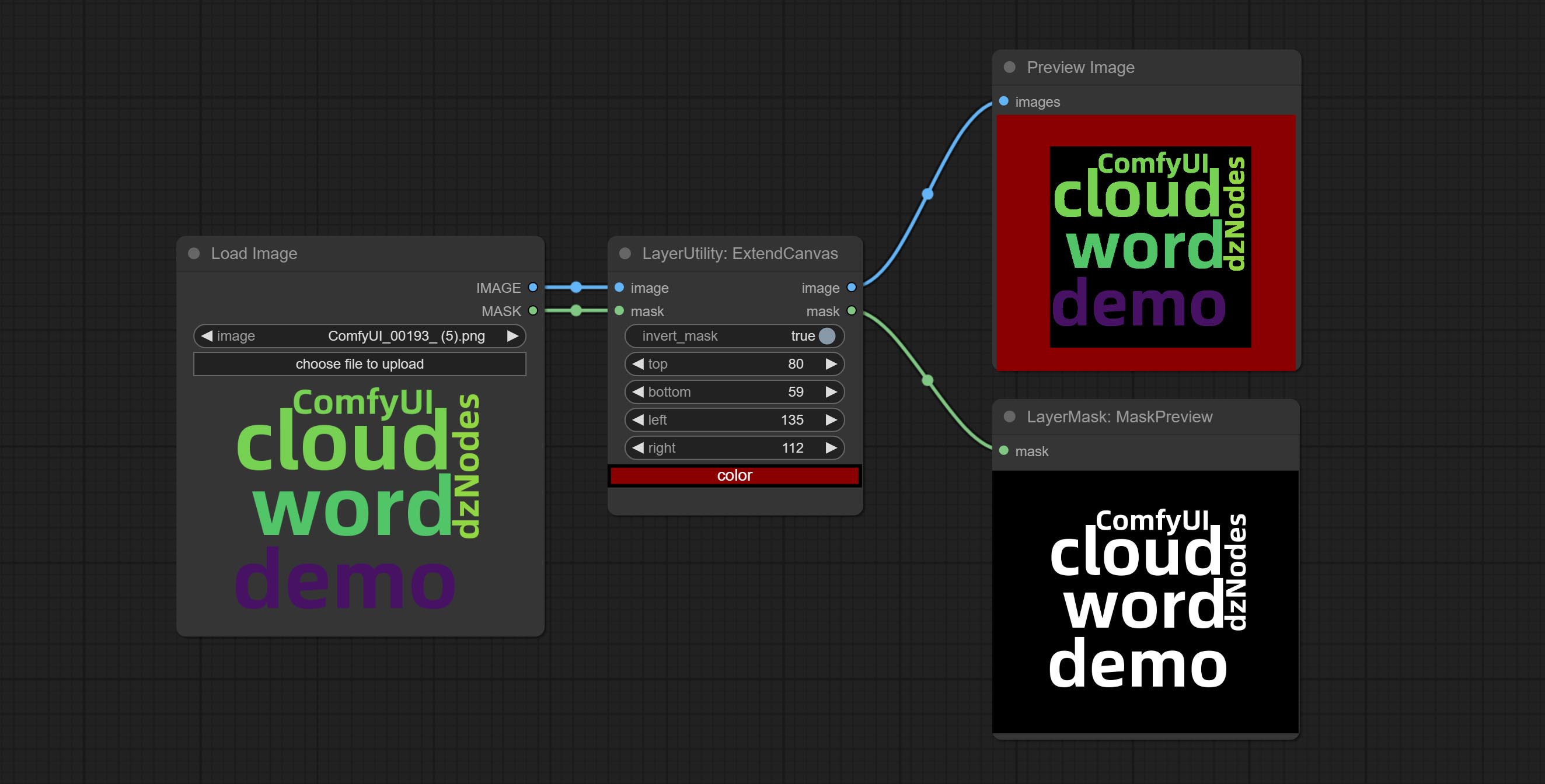

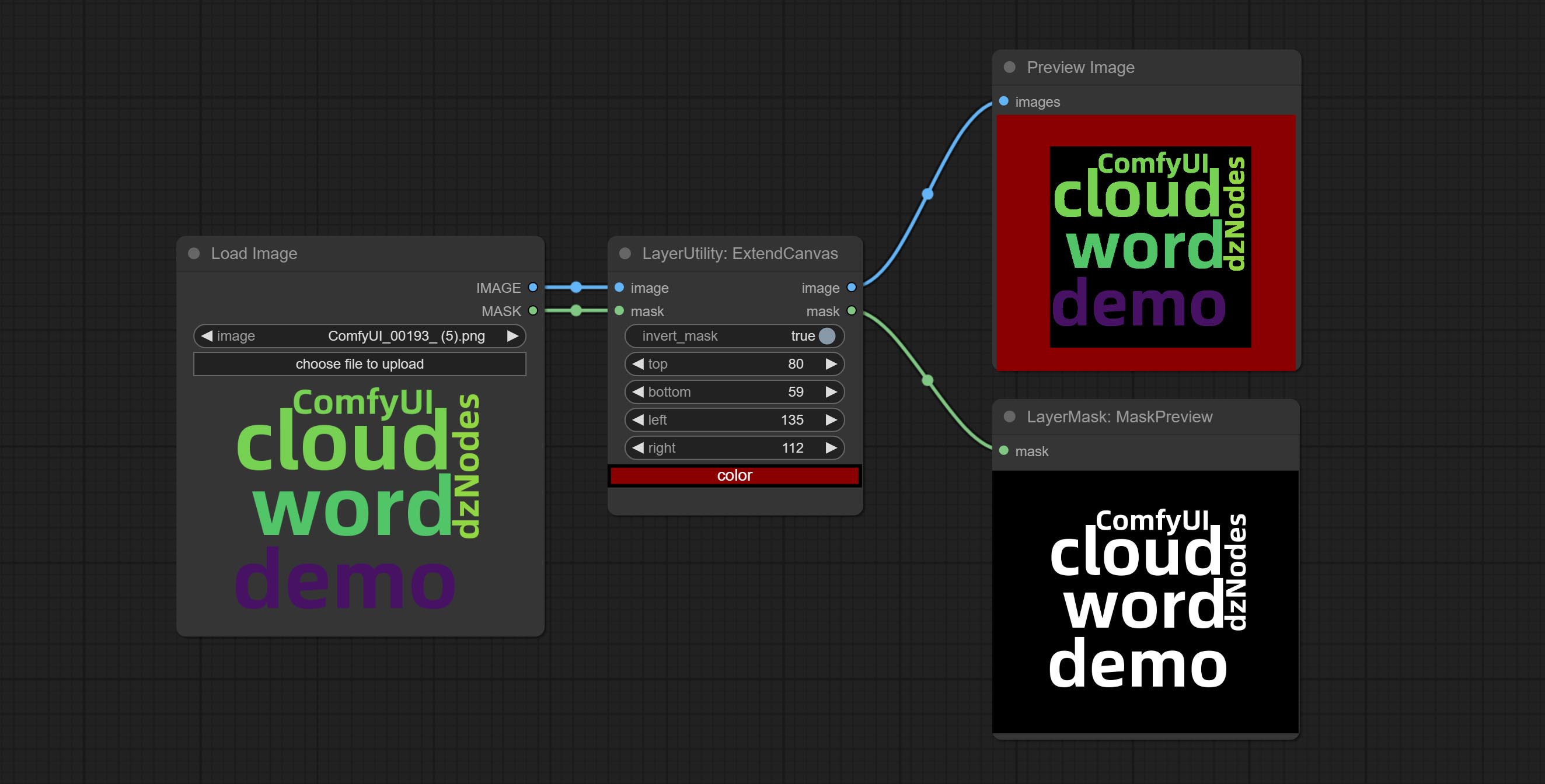

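The ExtendCanvasV2 node supports negative input values, which means the image will be cropped.

The default title color of the nodes is changed to blue-green, and nodes in LayerStyle, LayerColor, LayerMask, LayerUtility, and LayerFilter are distinguished by different colors.

The ObjectDetector nodes add a sort-BBox option, which allows sorting from left to right, top to bottom, and large to small, making object selection more intuitive and convenient. The nodes released yesterday have been abandoned; please manually replace them with the new version nodes (sorry).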

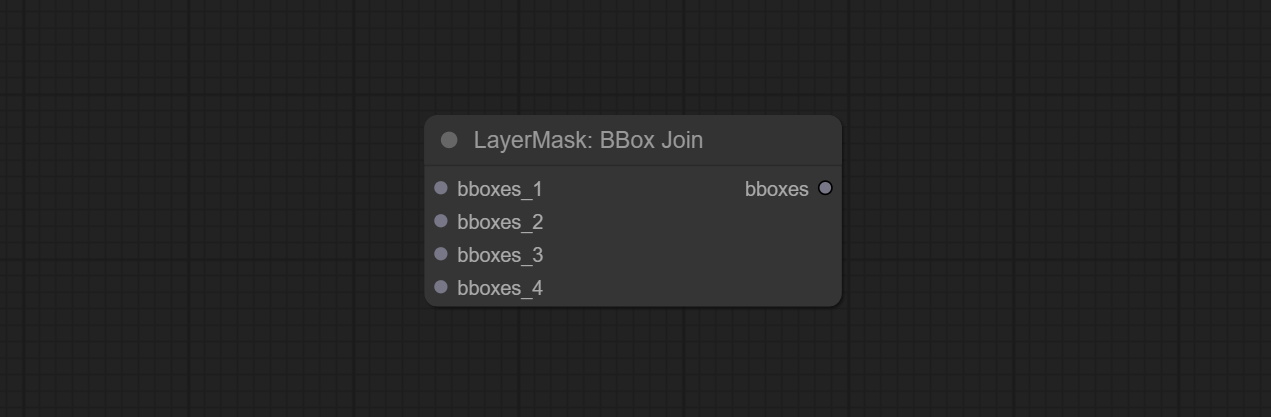

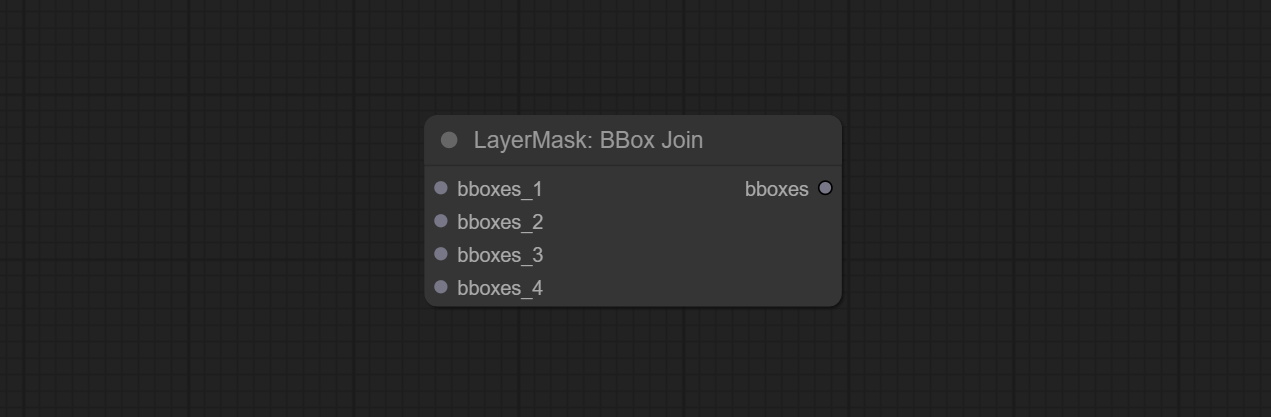

Commit the SAM2Ultra, SAM2VideoUltra, ObjectDetectorFL2, ObjectDetectorYOLOWorld, ObjectDetectorYOLO8, ObjectDetectorMask, and BBoxJoin nodes. Download the models from BaiduNetdisk or huggingface.co/kijai/sam2-safetensors and copy them to the ComfyUI/models/sam2 folder. Download the models from BaiduNetdisk or GoogleDrive and copy them to the ComfyUI/models/yolo-world folder. This update introduces new dependencies; please reinstall the dependency packages.

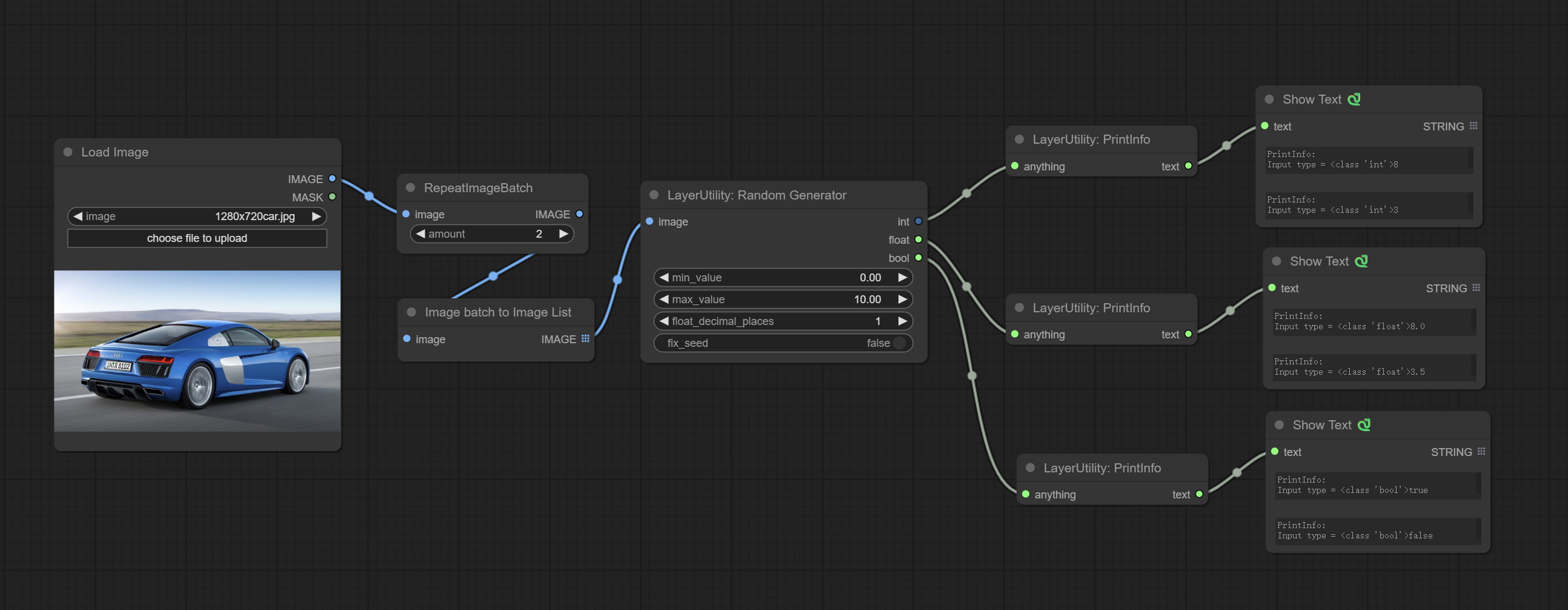

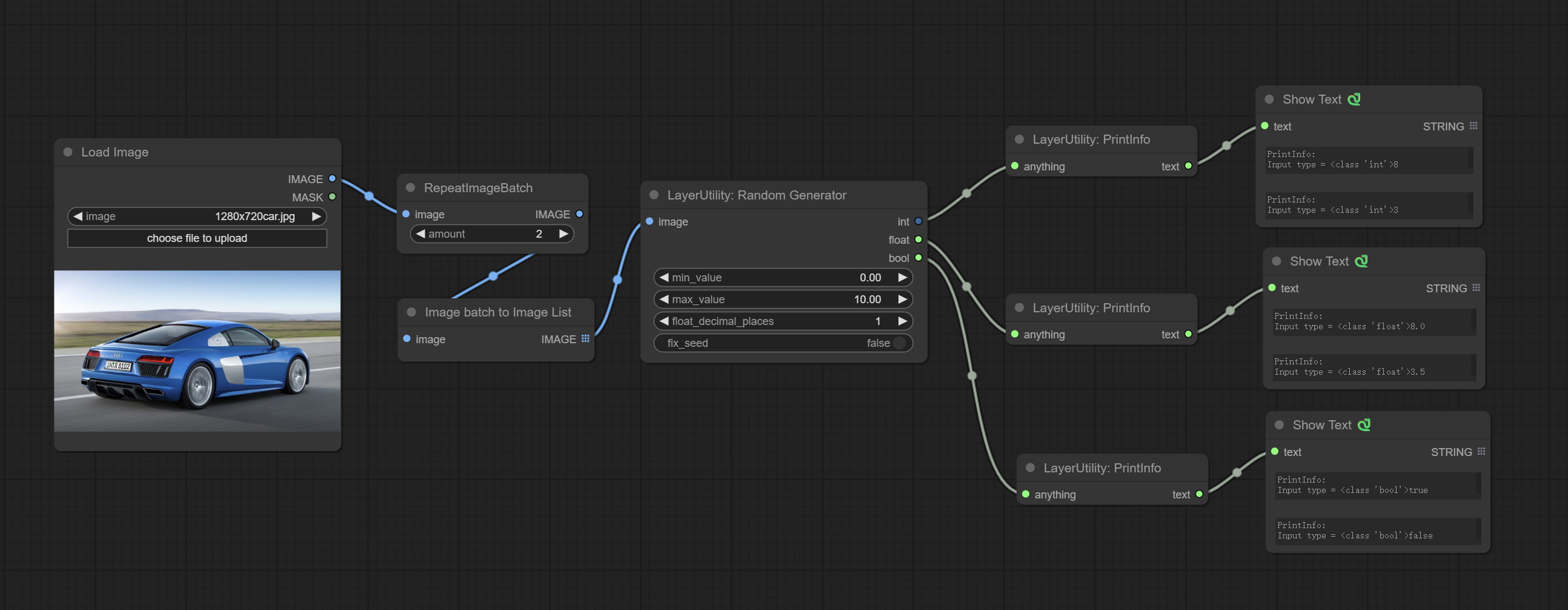

Commit the RandomGenerator node, used to generate random numbers within a specified range, with int, float, and boolean outputs. It supports generating a batch of different random numbers for an image batch.

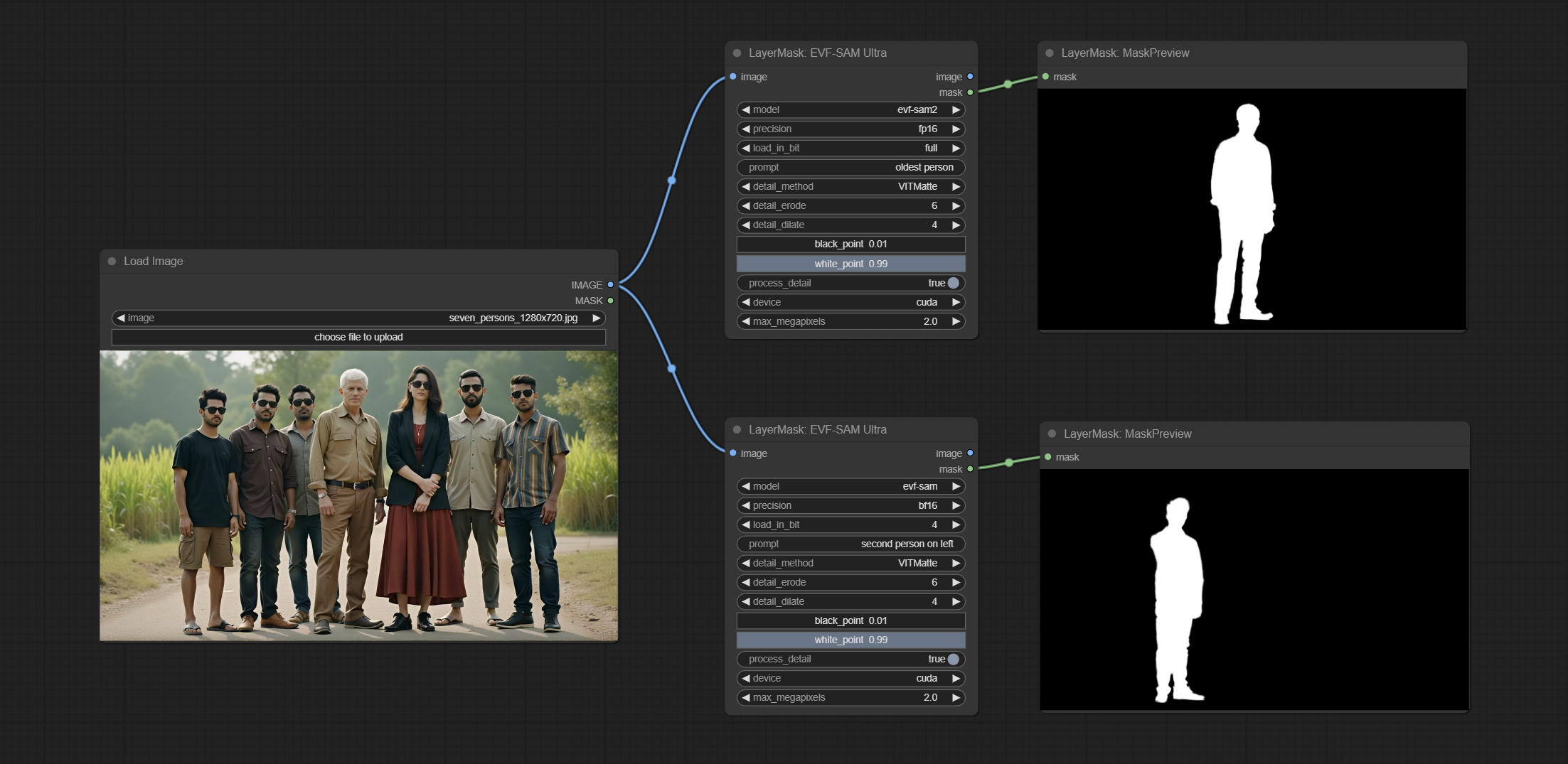

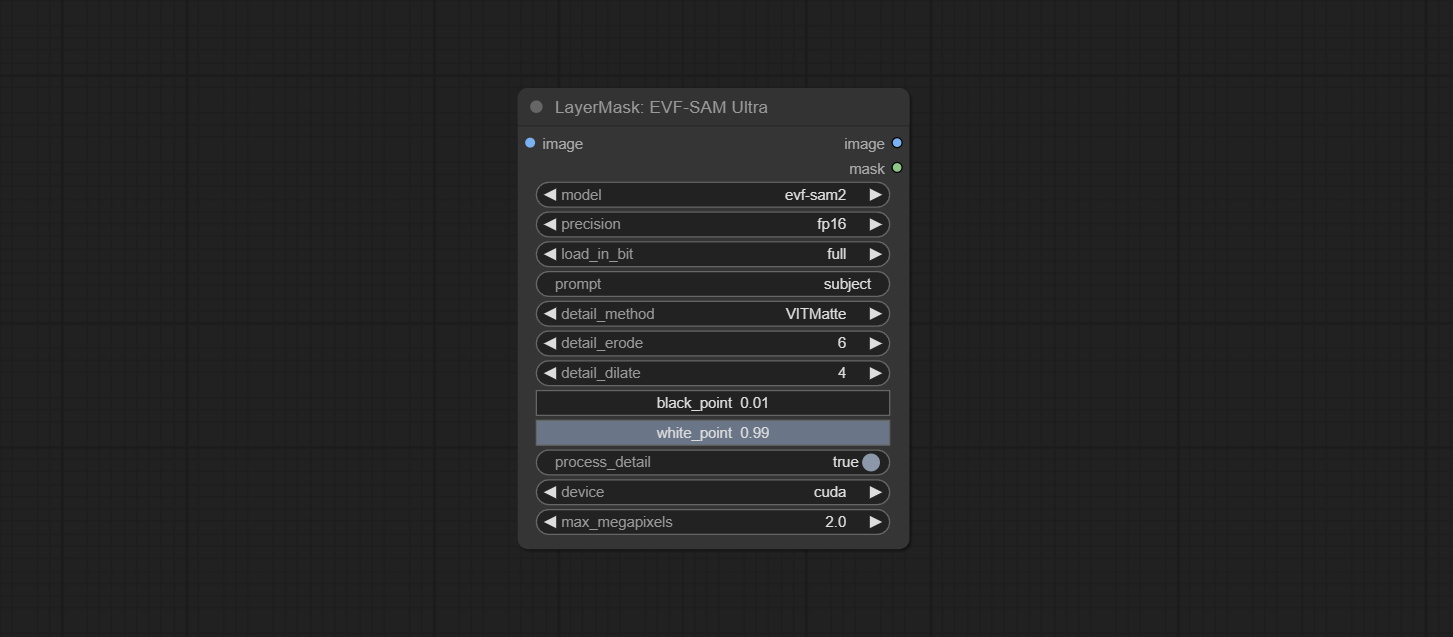

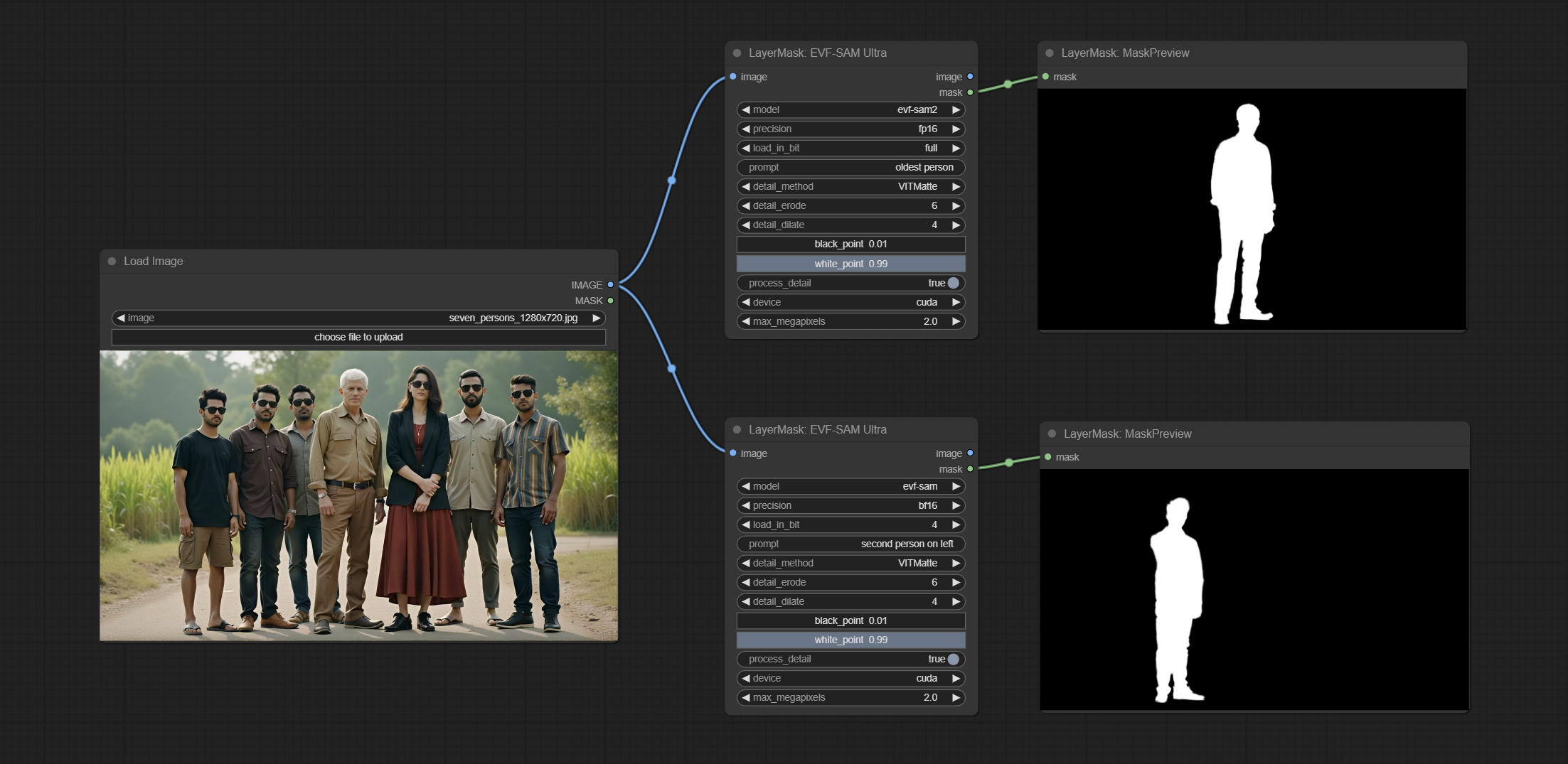

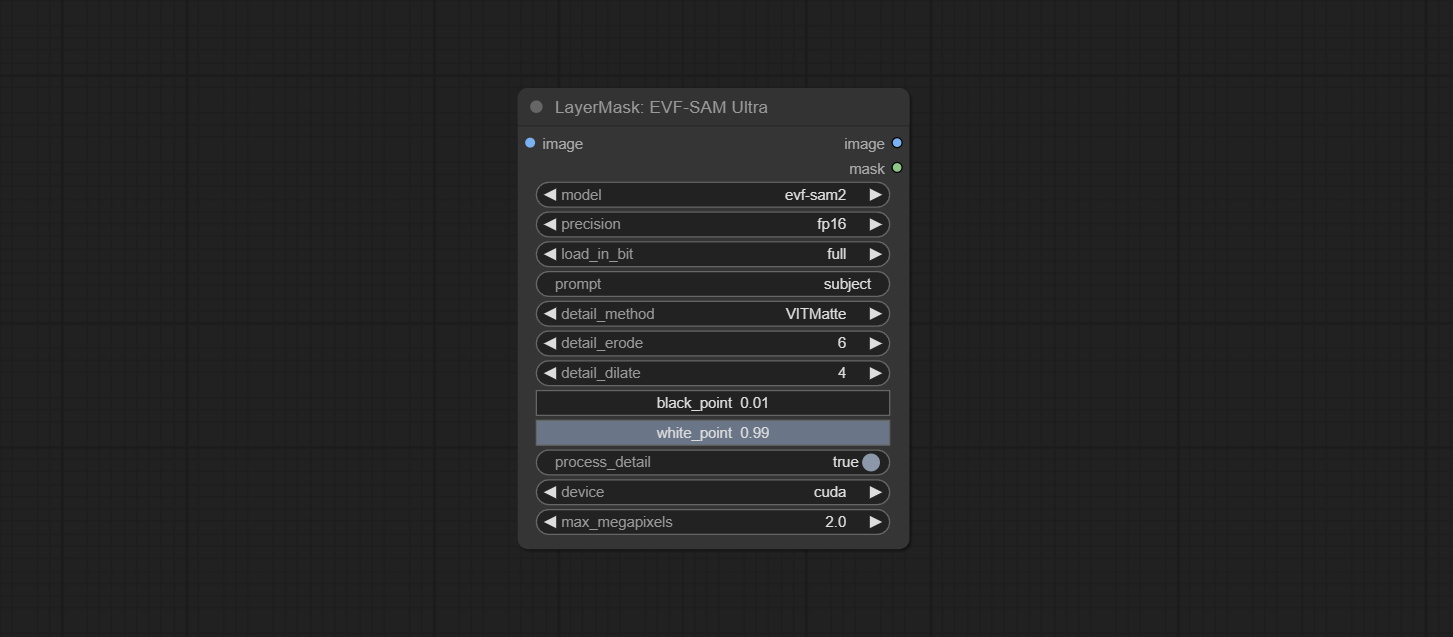

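Commit the EVF-SAMUltra node, an implementation of EVF-SAM in ComfyUI. Download the model files from BaiduNetdisk or from huggingface/EVF-SAM2 and huggingface/EVF-SAM to the ComfyUI/models/EVF-SAM folder (save each model in its own subdirectory). New dependency packages have been introduced; after upgrading the plugin, please reinstall the dependency packages.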

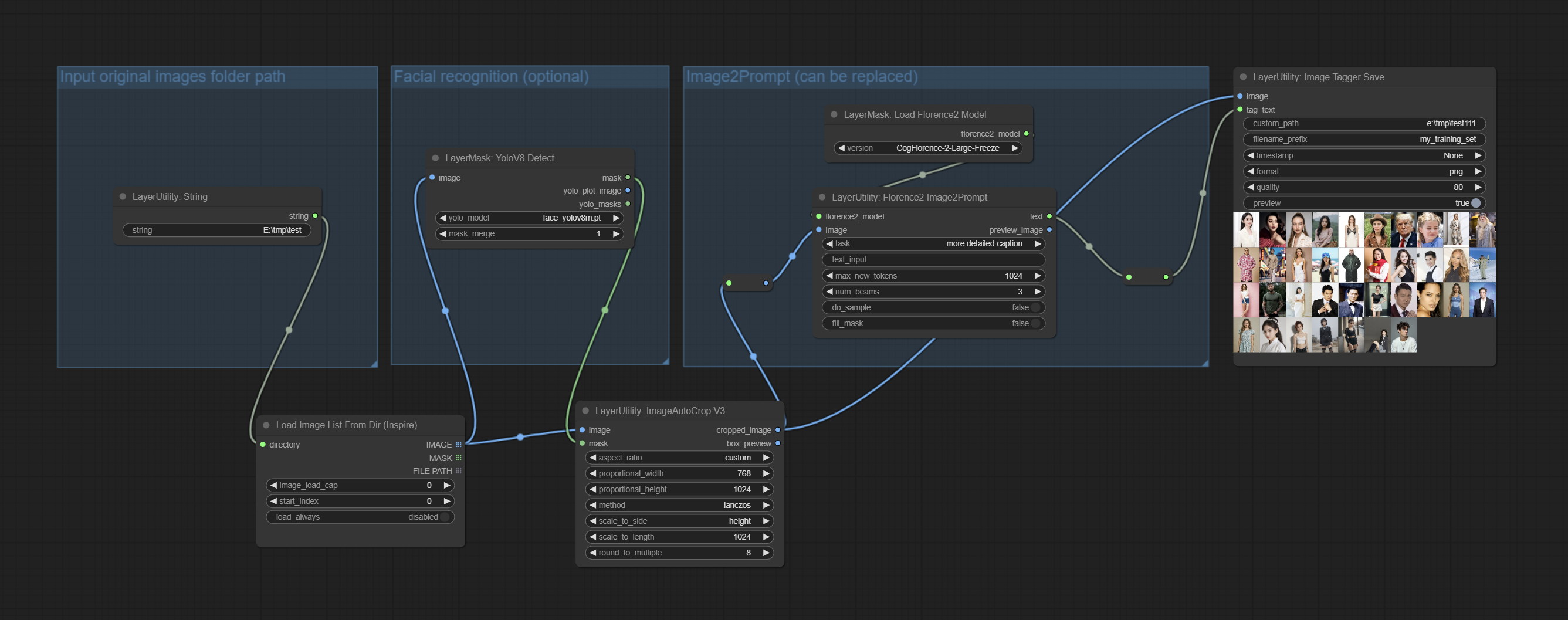

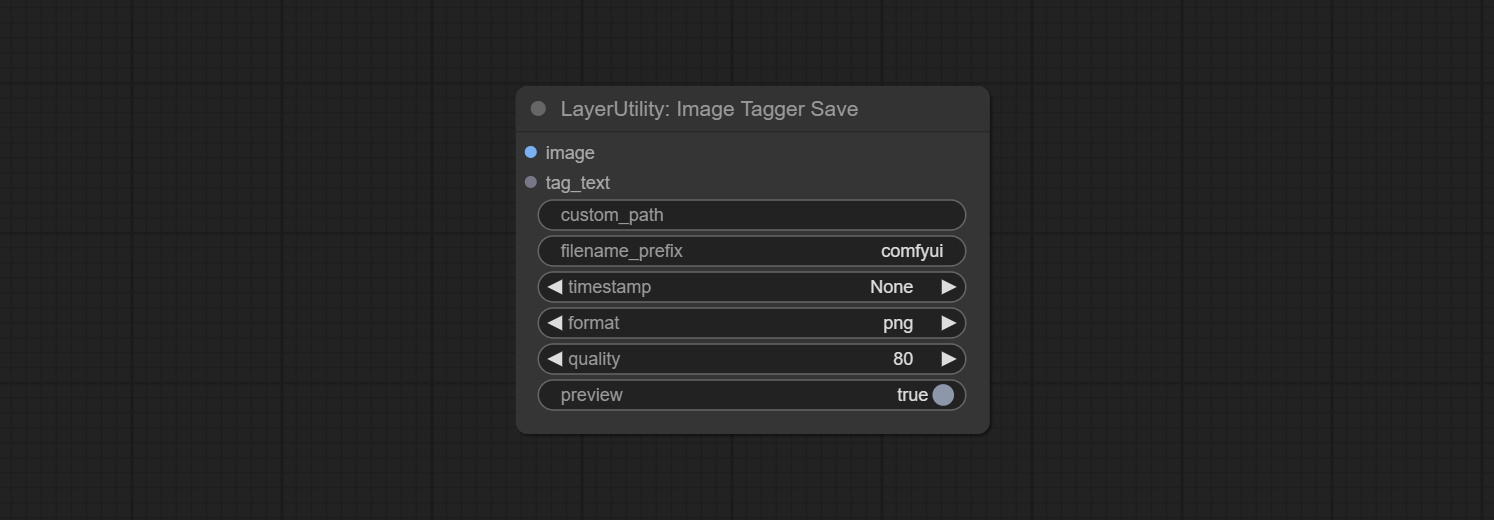

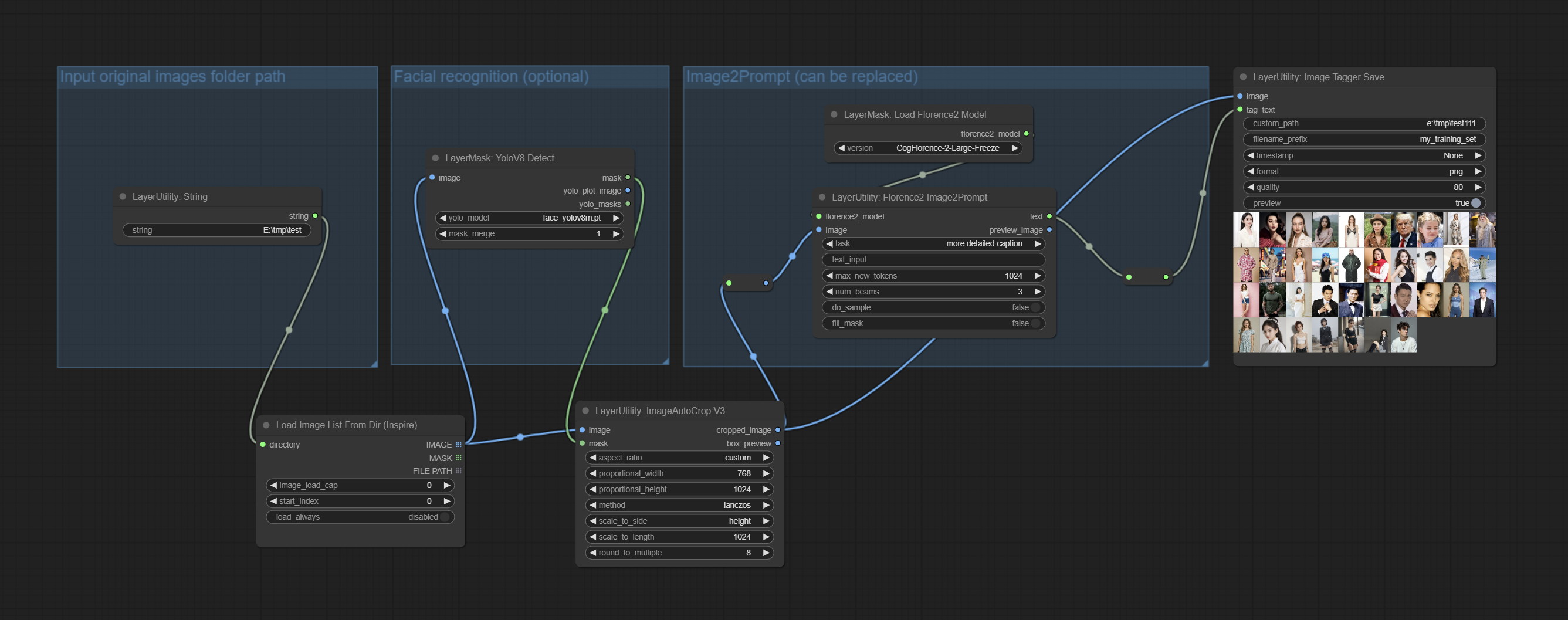

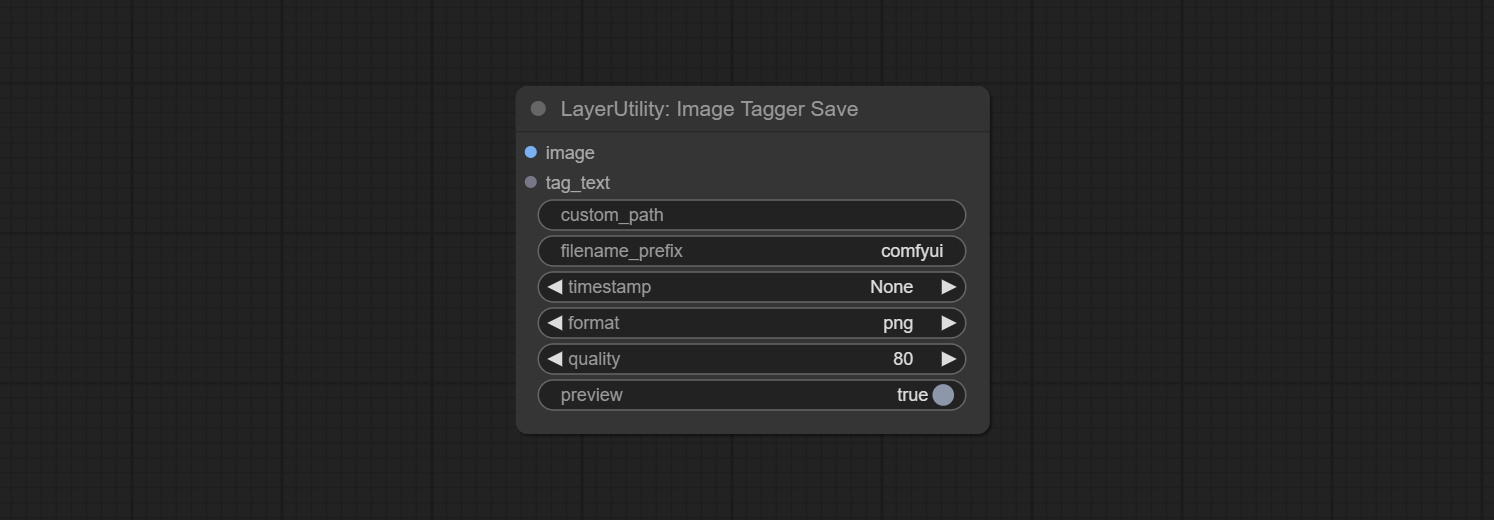

Commit the ImageTaggerSave and ImageAutoCropV3 nodes, used to implement an automatic cropping and tagging workflow for training sets (the workflow image_tagger_save.json is in the workflow directory).

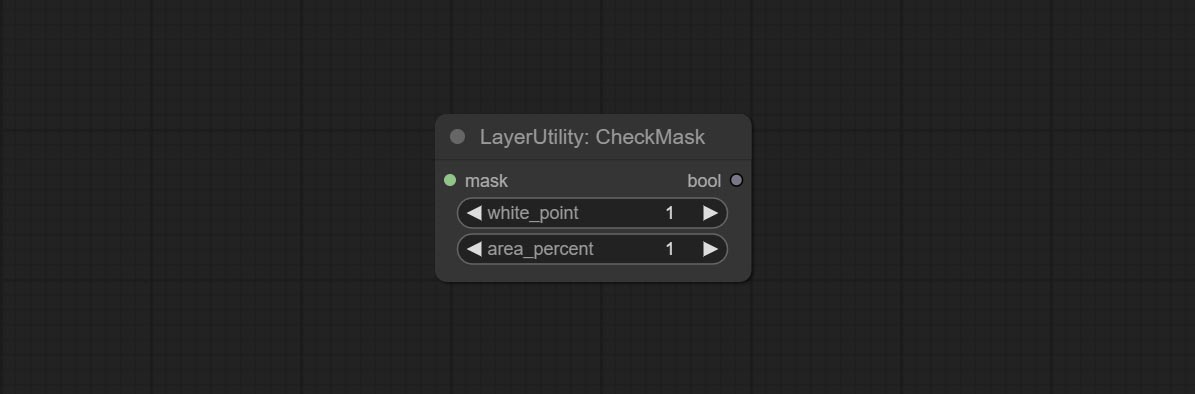

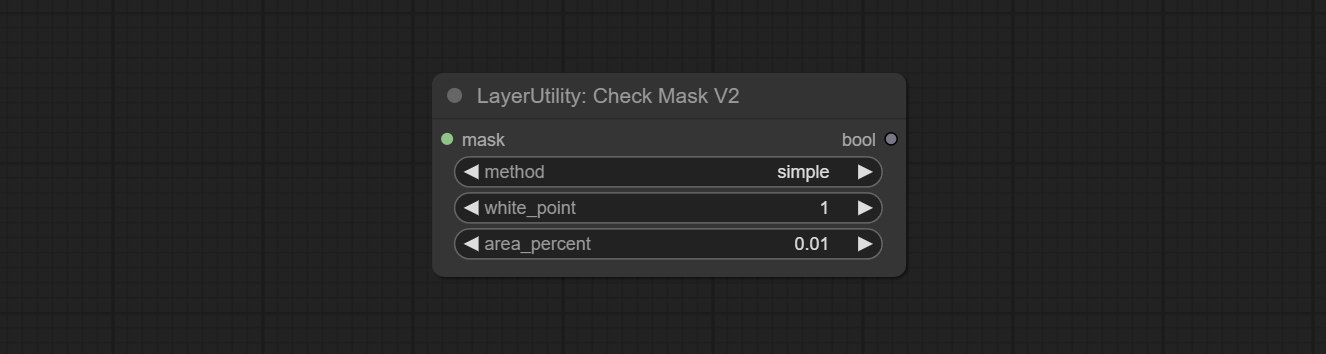

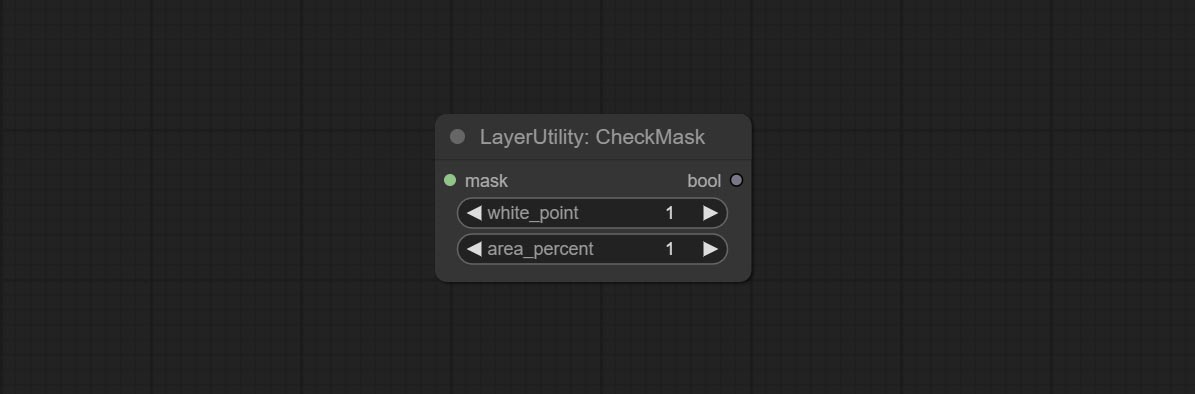

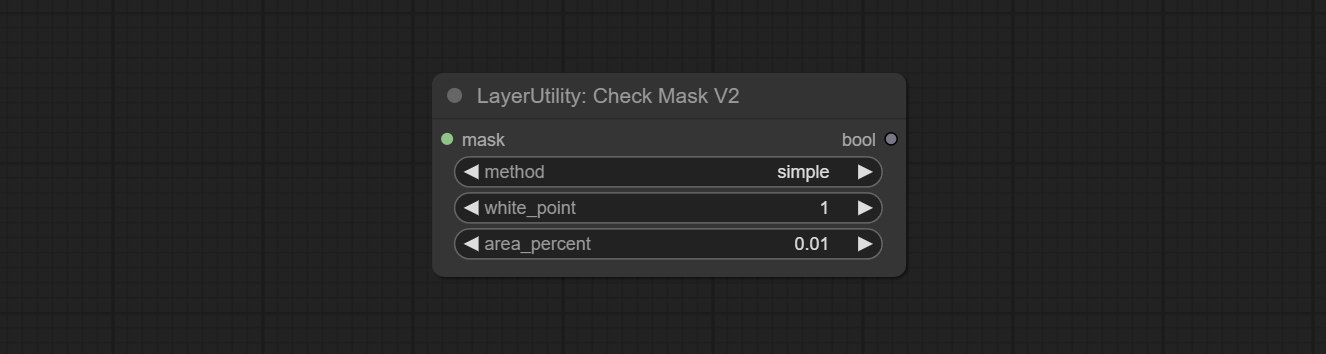

Commit the CheckMaskV2 node, which adds the simple method to detect masks more quickly.

Commit the ImageReel and ImageReelComposite nodes for compositing multiple images on one canvas.

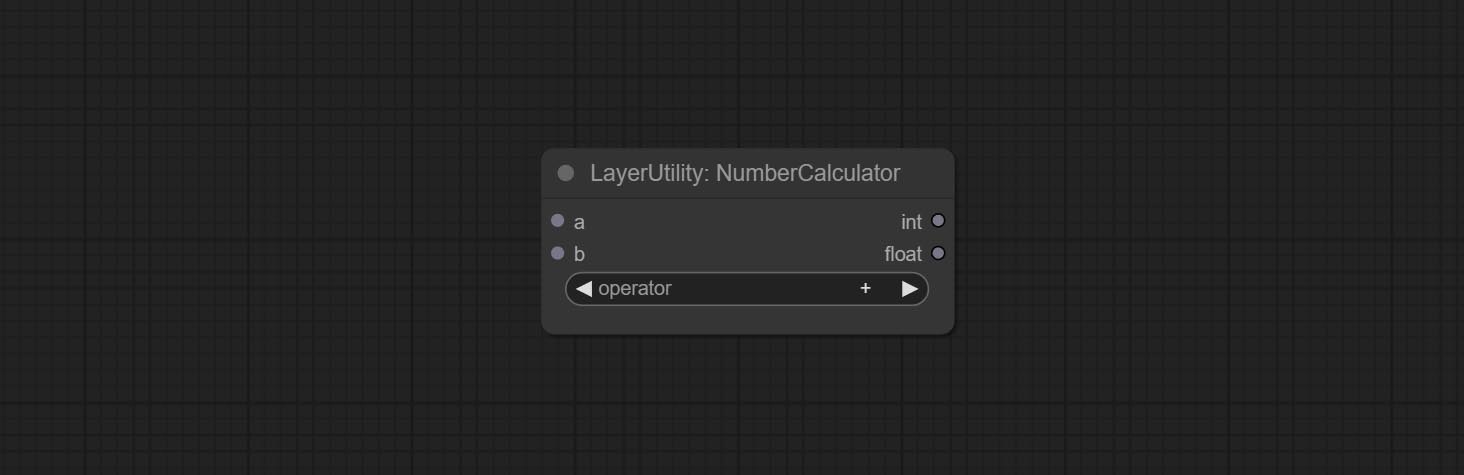

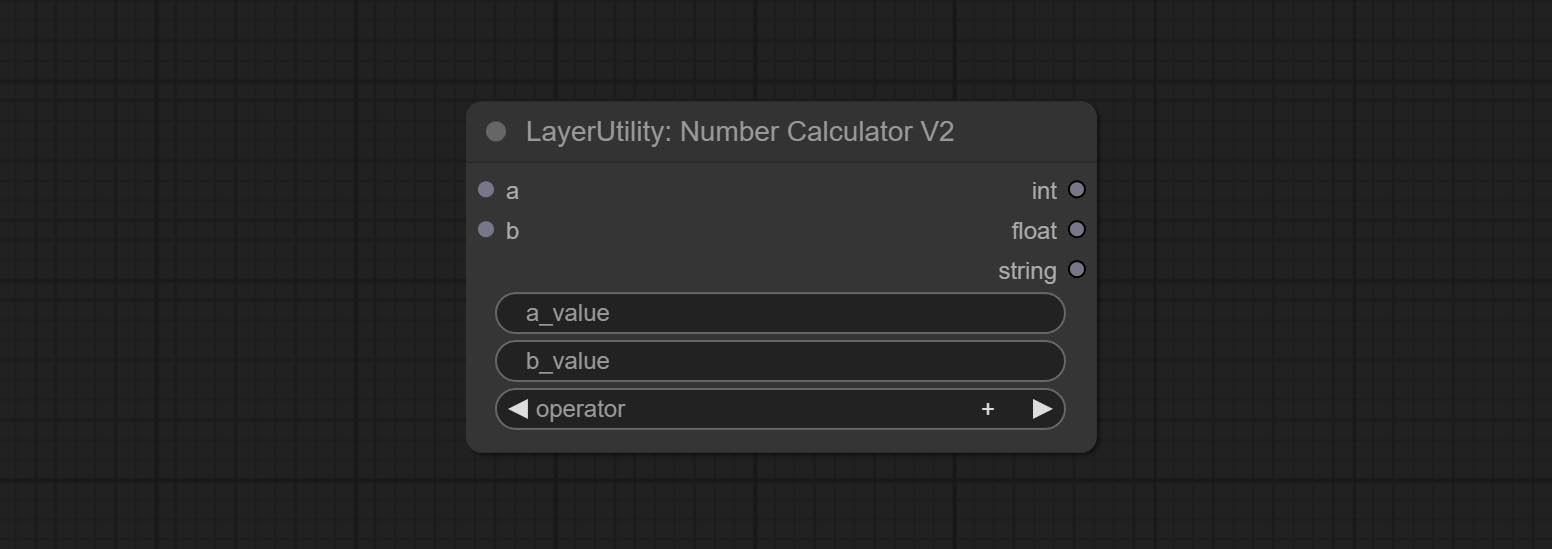

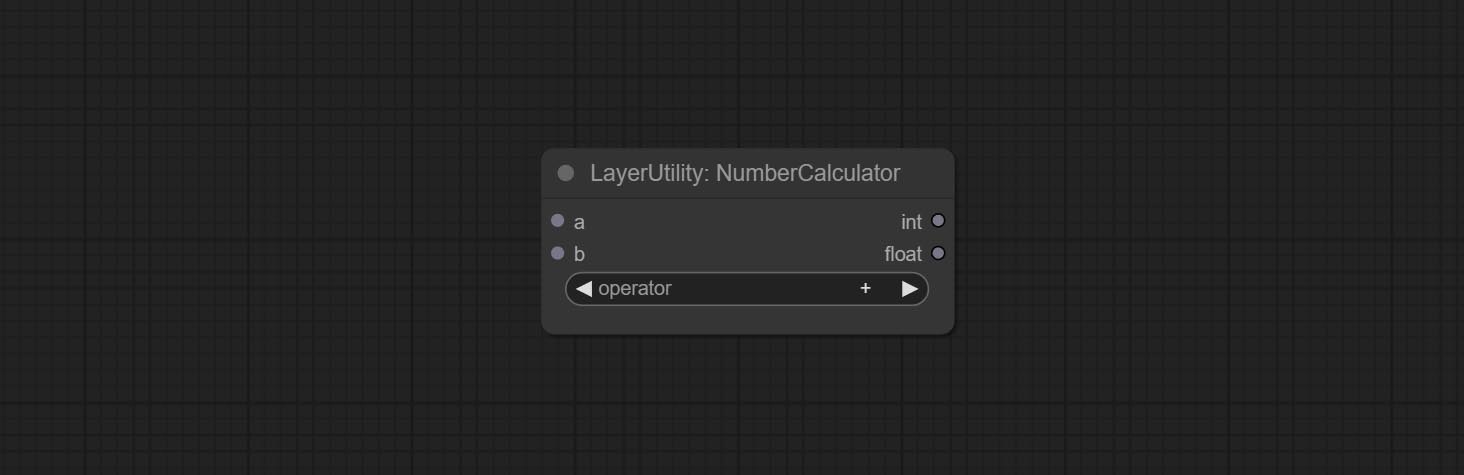

NumberCalculatorV2 and NumberCalculator add the min and max methods.

Optimize node loading speed.

Florence2Image2Prompt adds support for the thwri/CogFlorence-2-Large-Freeze and thwri/CogFlorence-2.1-Large models. Download the model files from BaiduNetdisk, or from huggingface/CogFlorence-2-Large-Freeze and huggingface/CogFlorence-2.1-Large, then copy them to the ComfyUI/models/florence2 folder.

Merge the branch from ClownsharkBatwing, "Use GPU for color blend mode", which makes some layer blends more than ten times faster.

Commit the Florence2Ultra, Florence2Image2Prompt, and LoadFlorence2Model nodes.

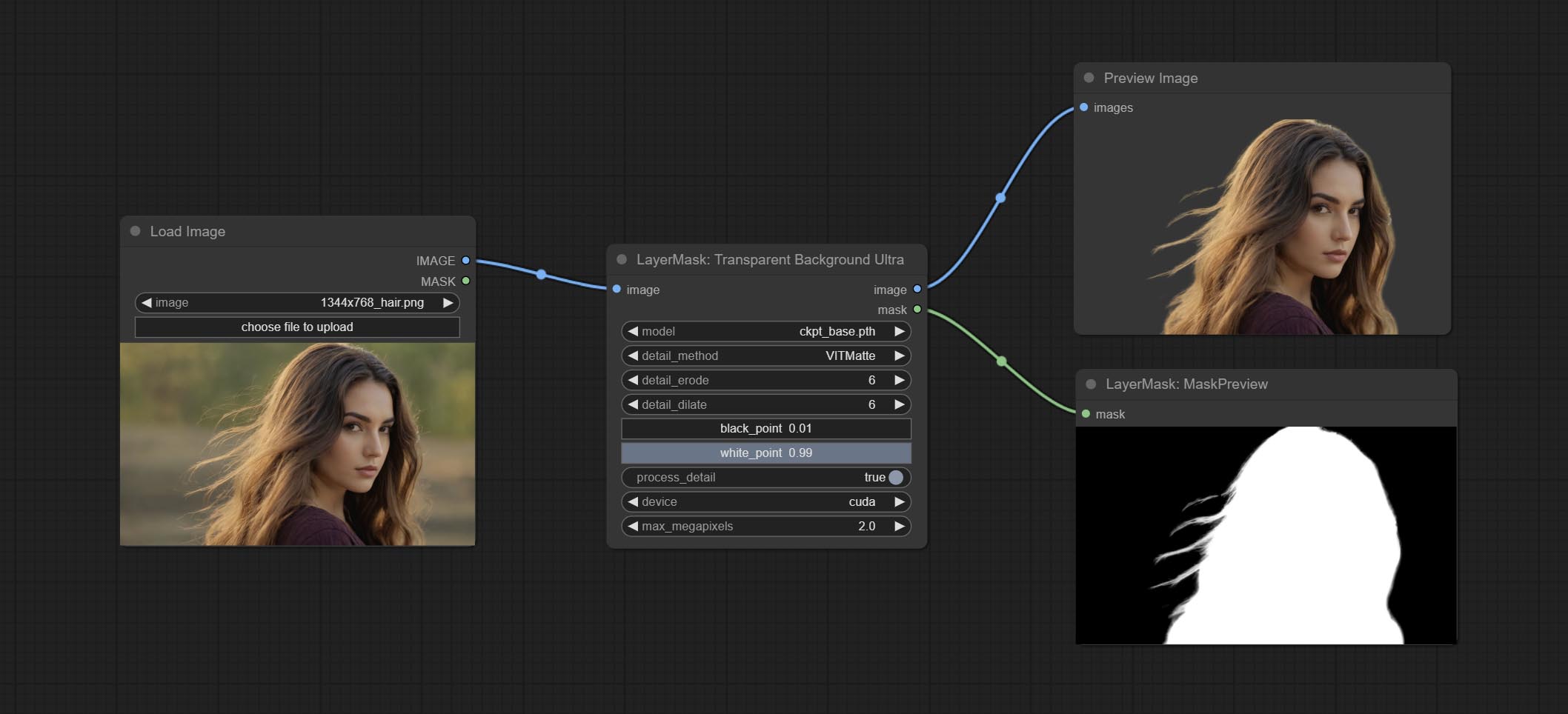

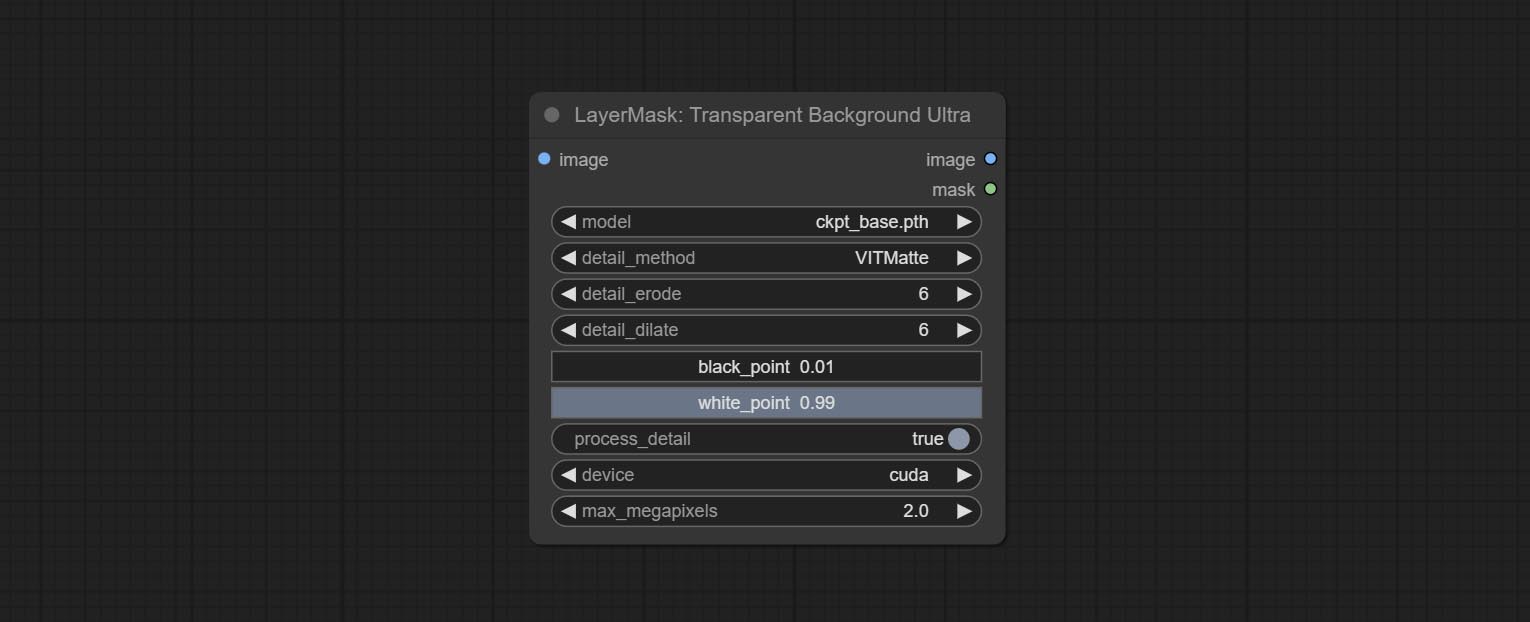

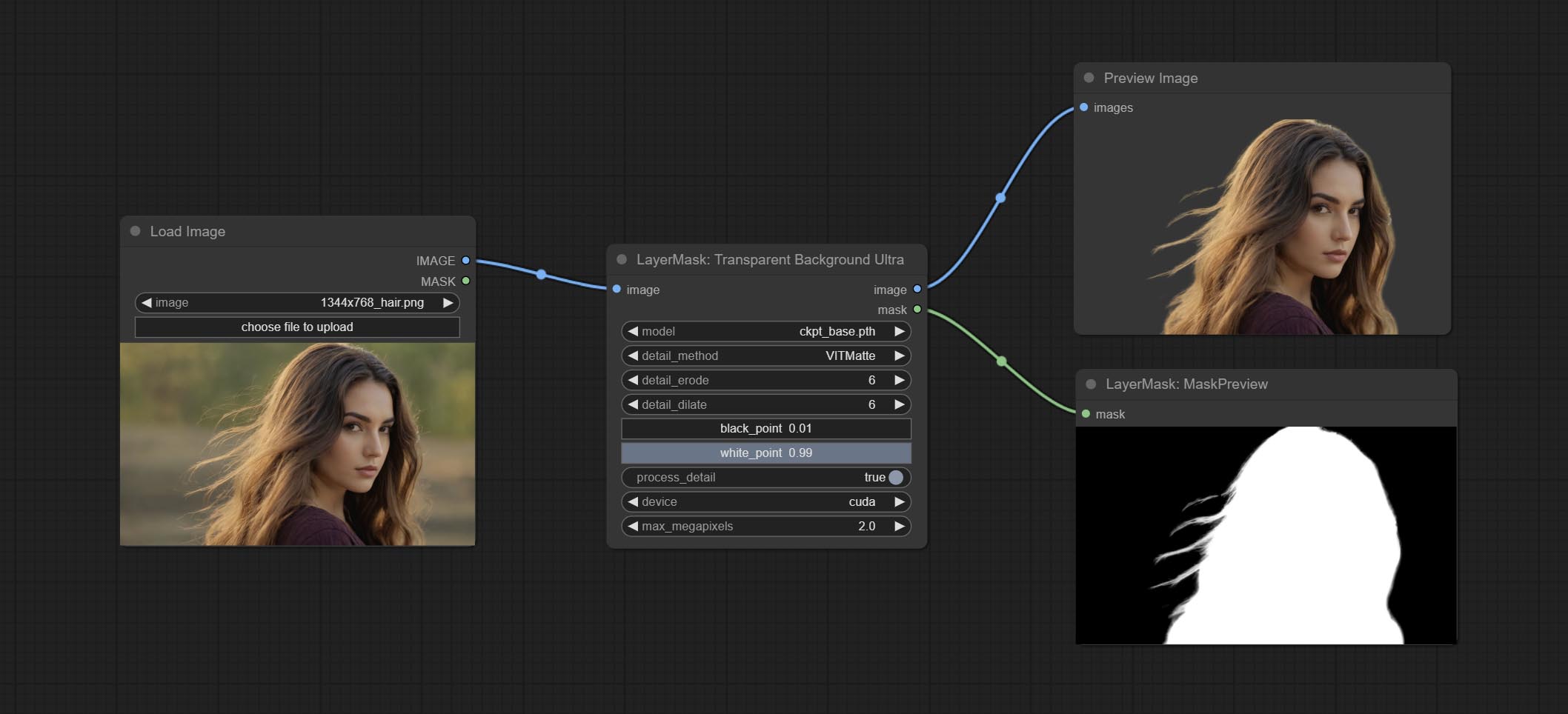

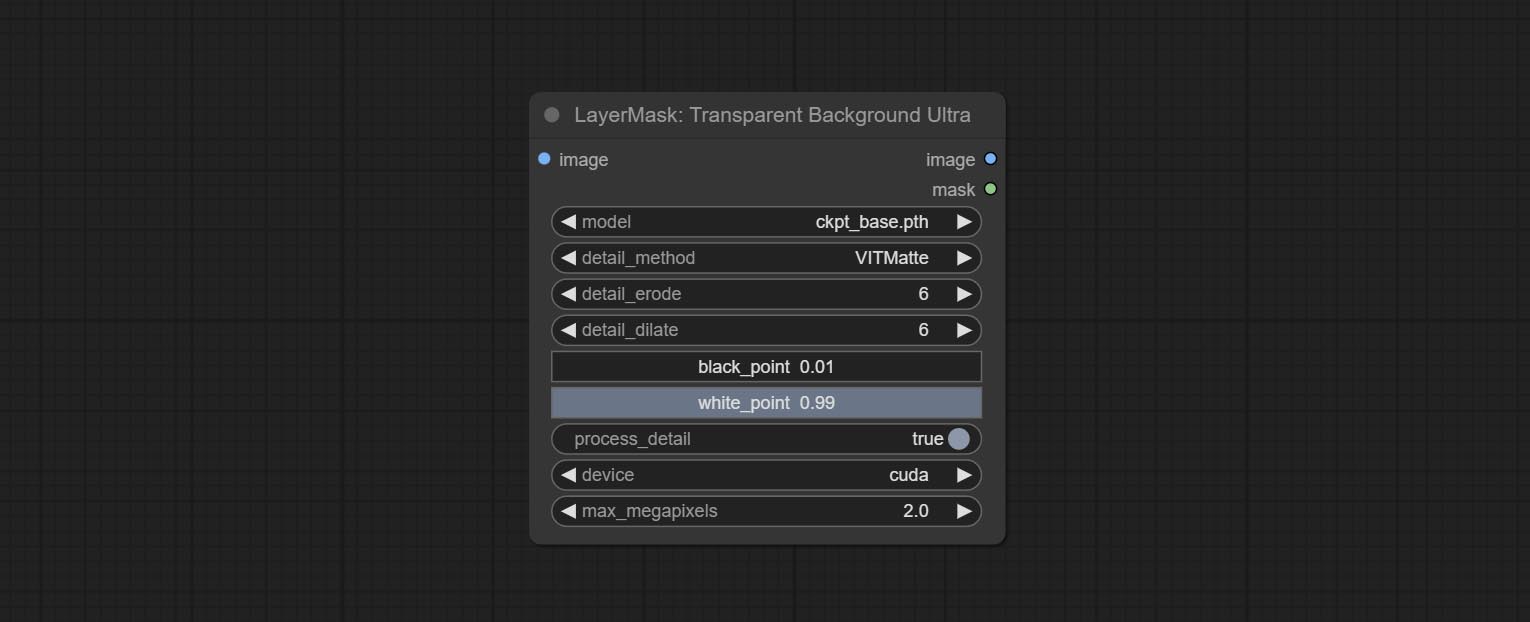

The TransparentBackgroundUltra node adds support for new models. Download the model files according to the instructions.

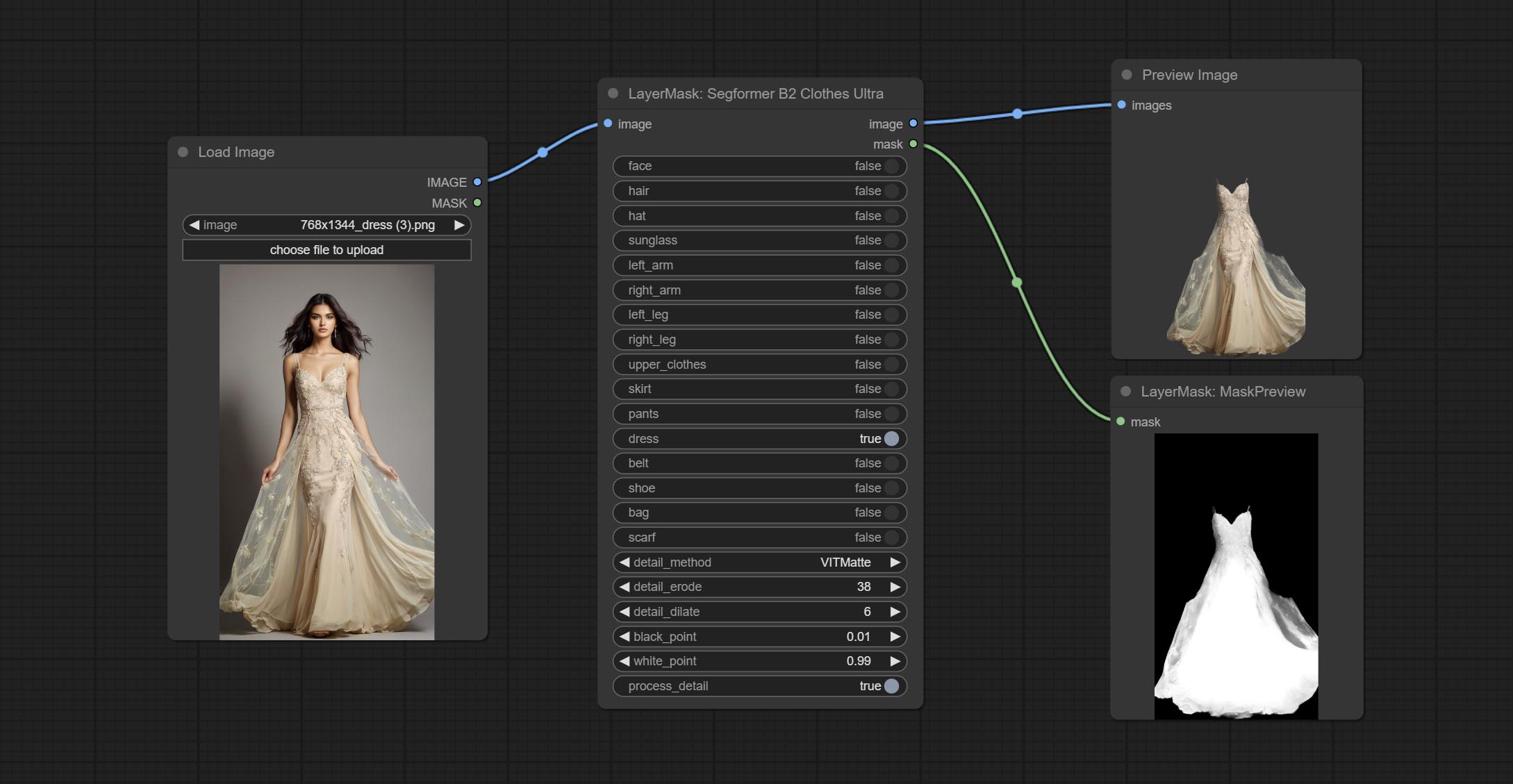

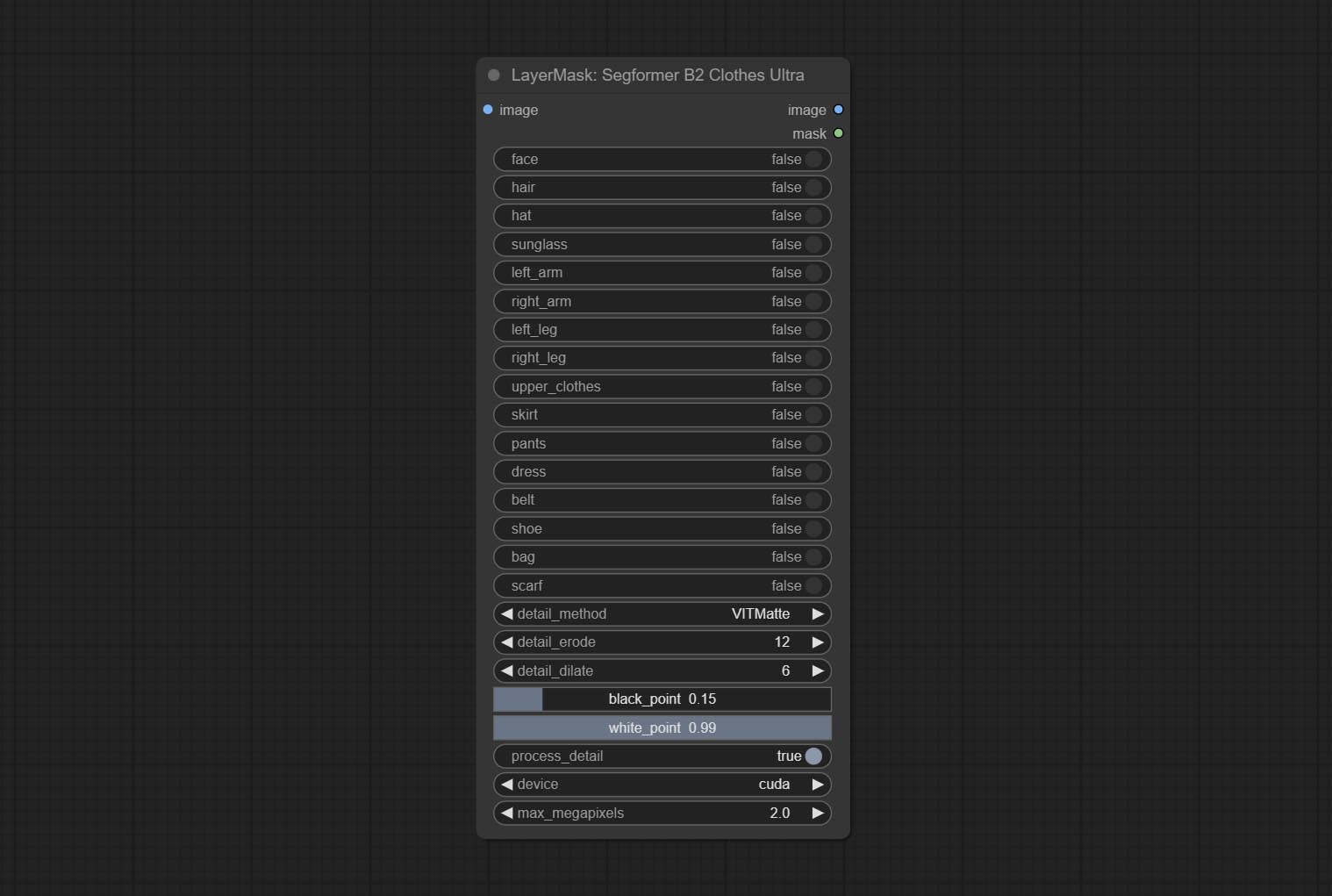

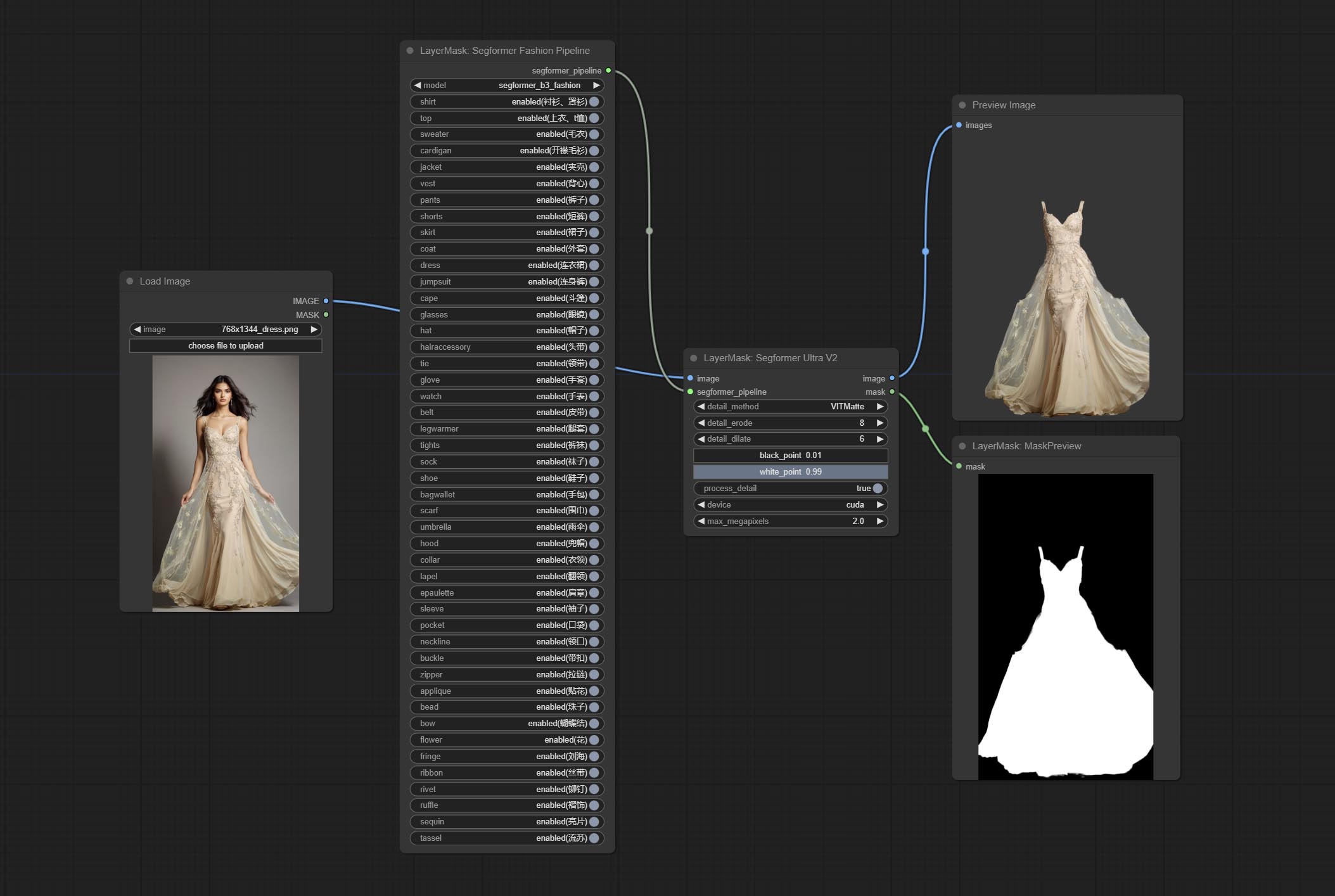

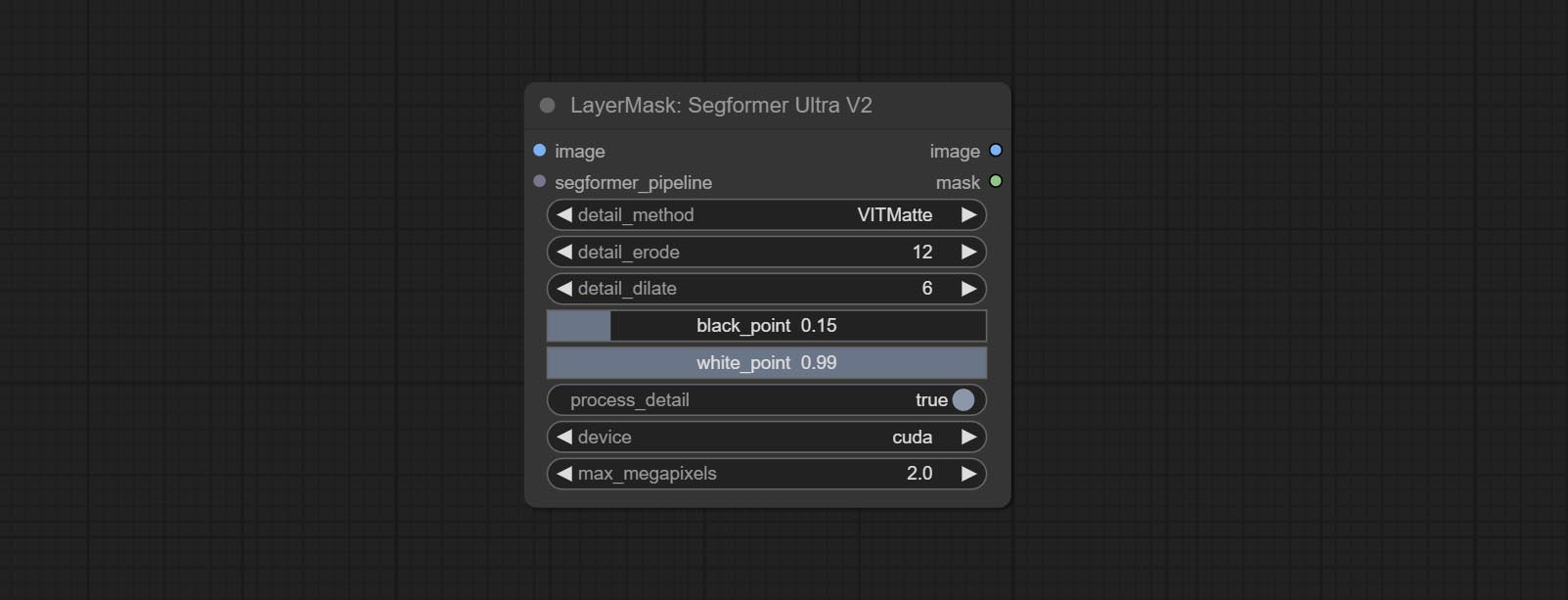

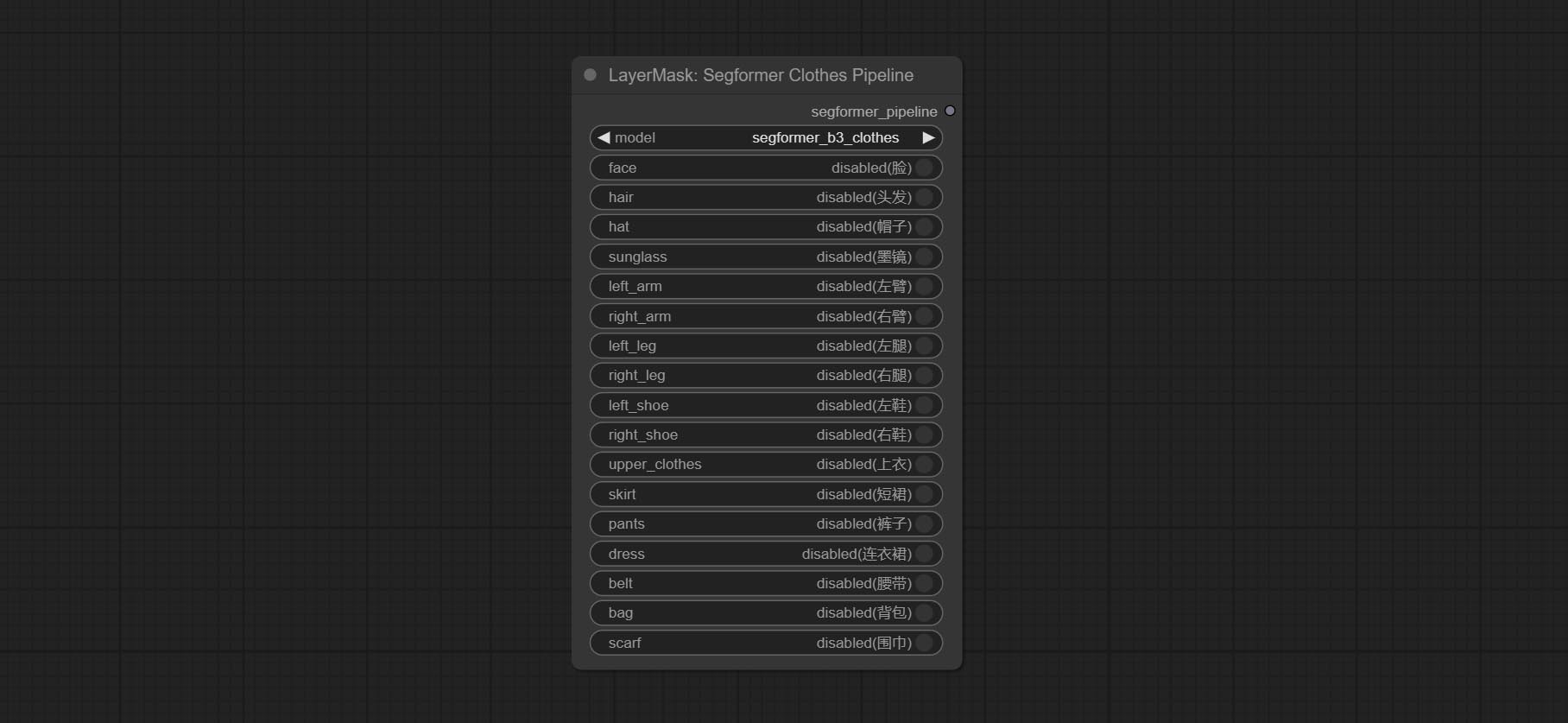

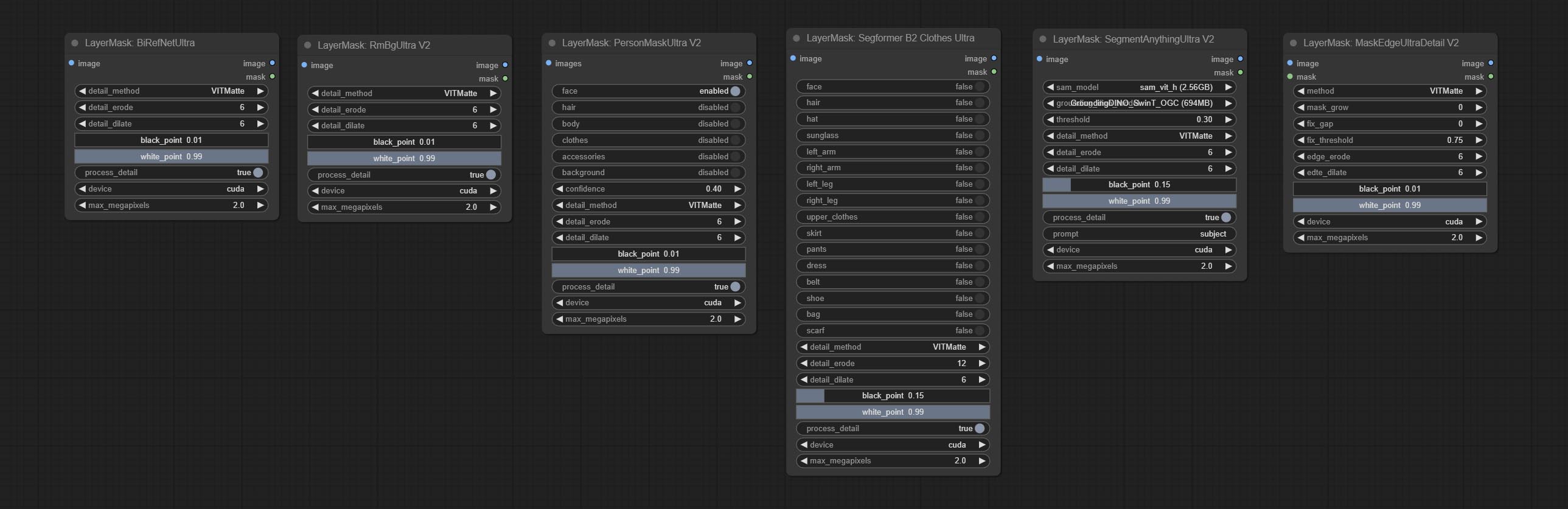

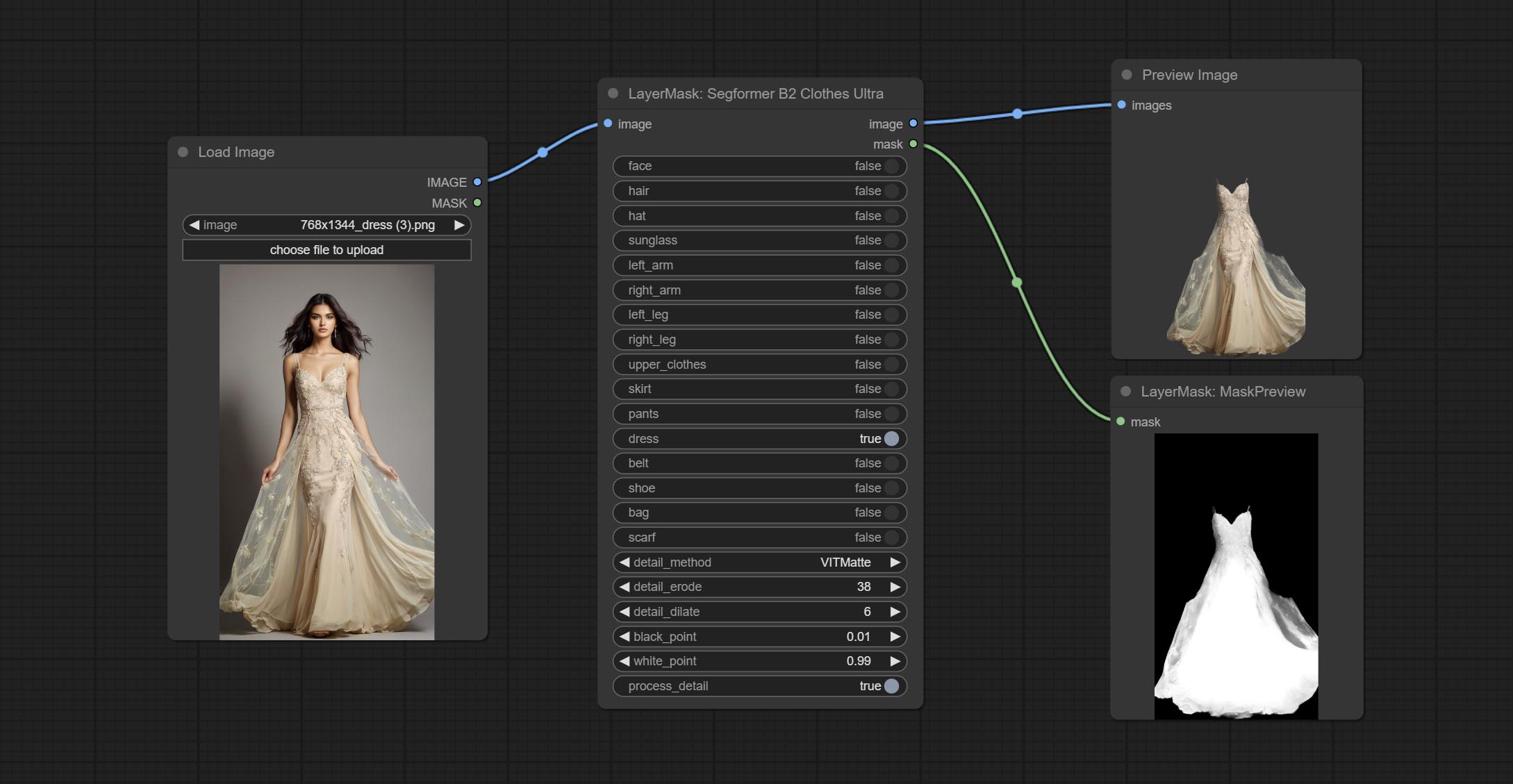

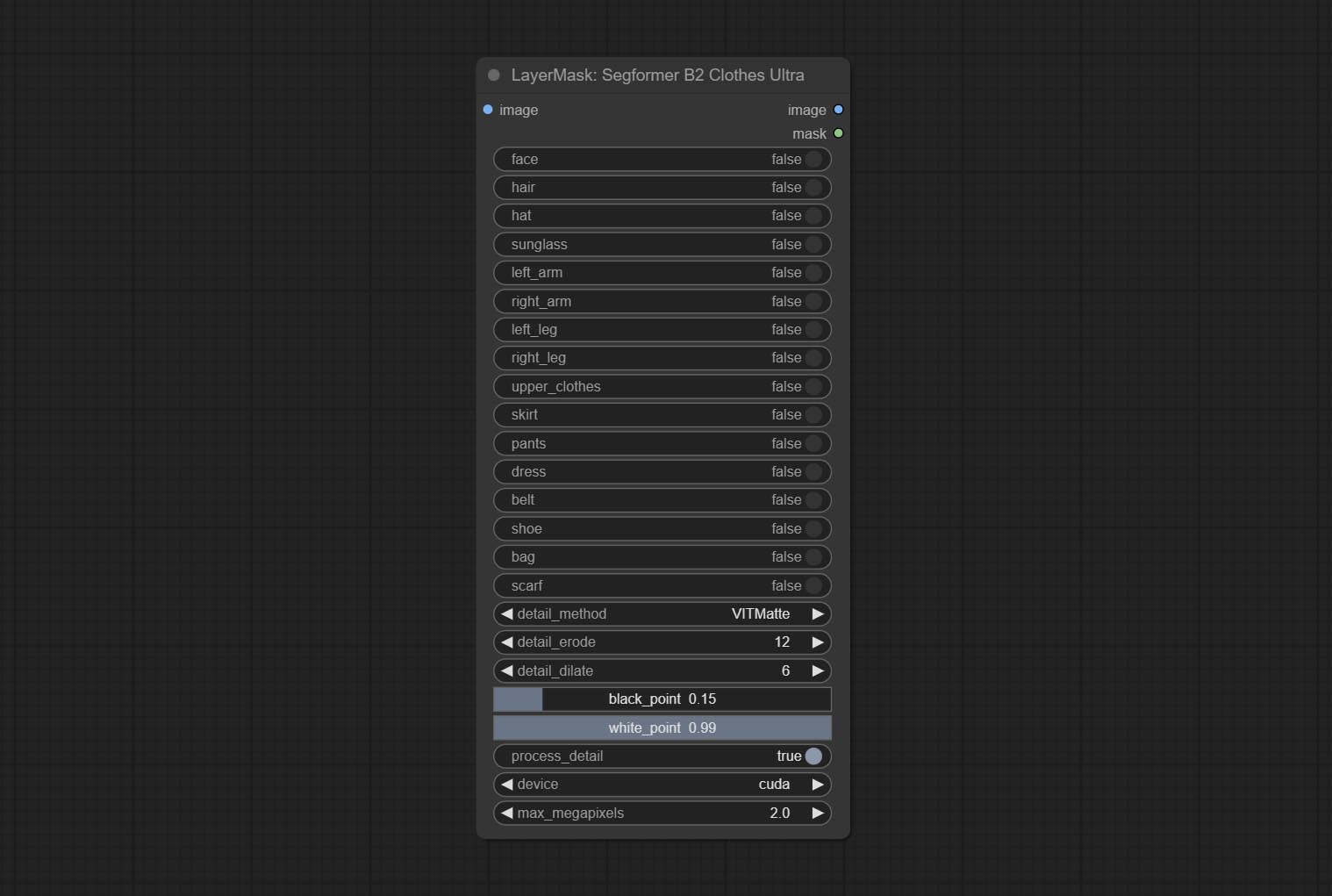

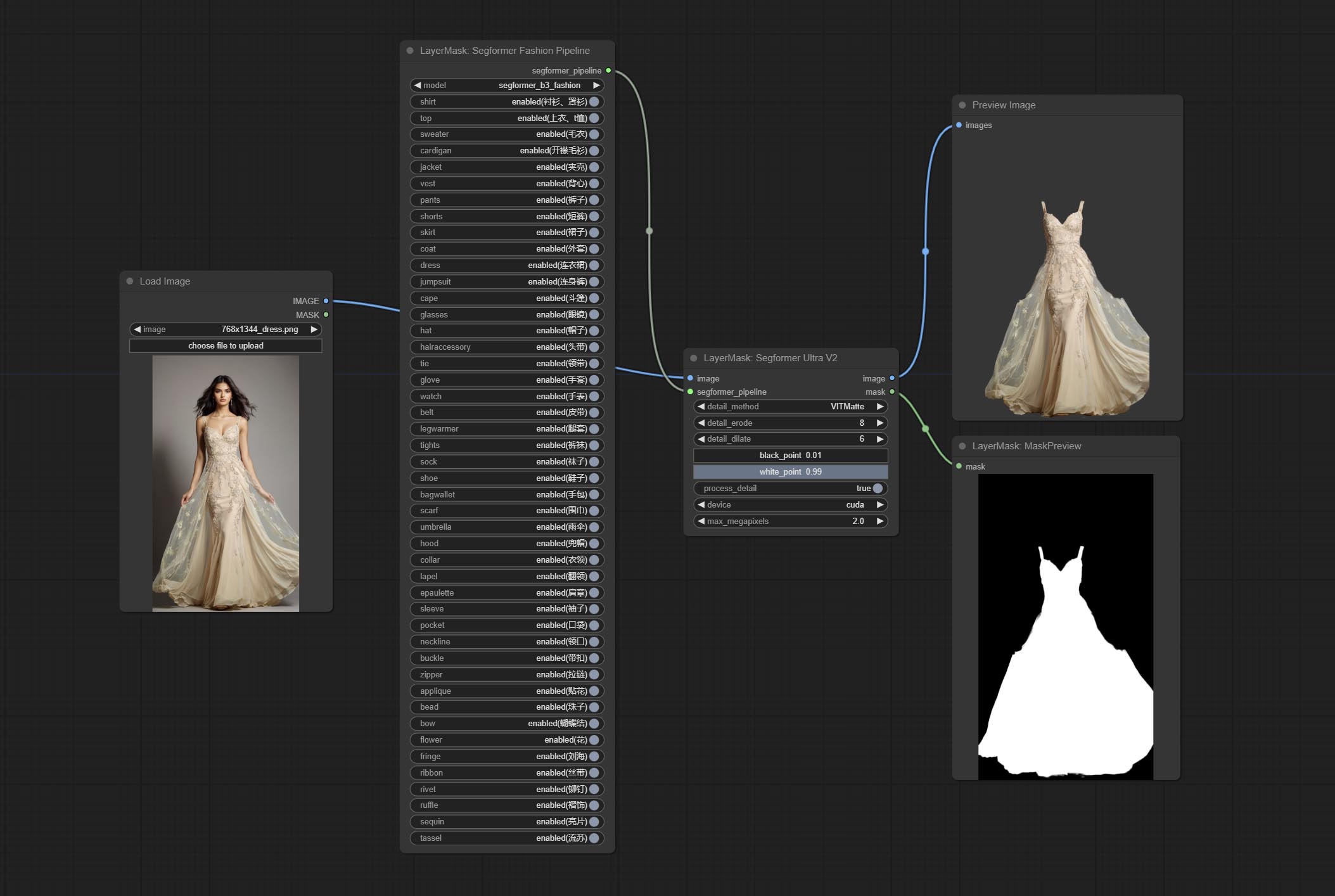

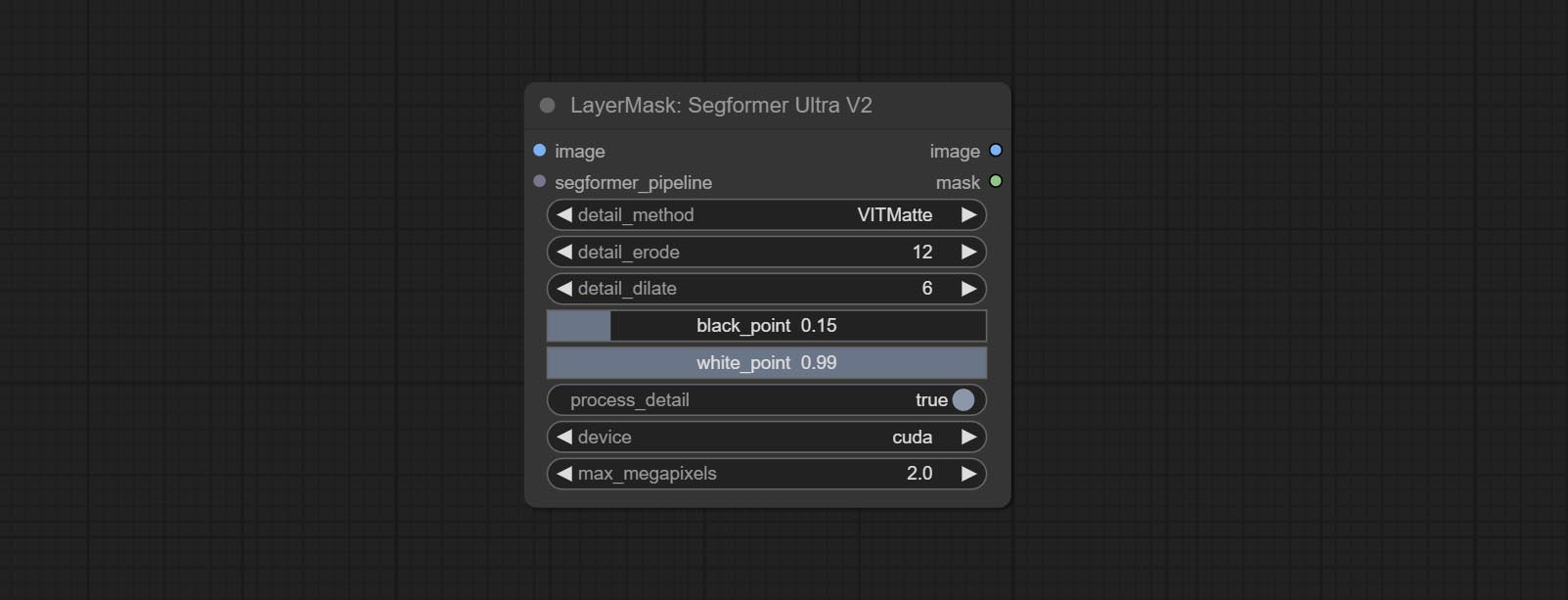

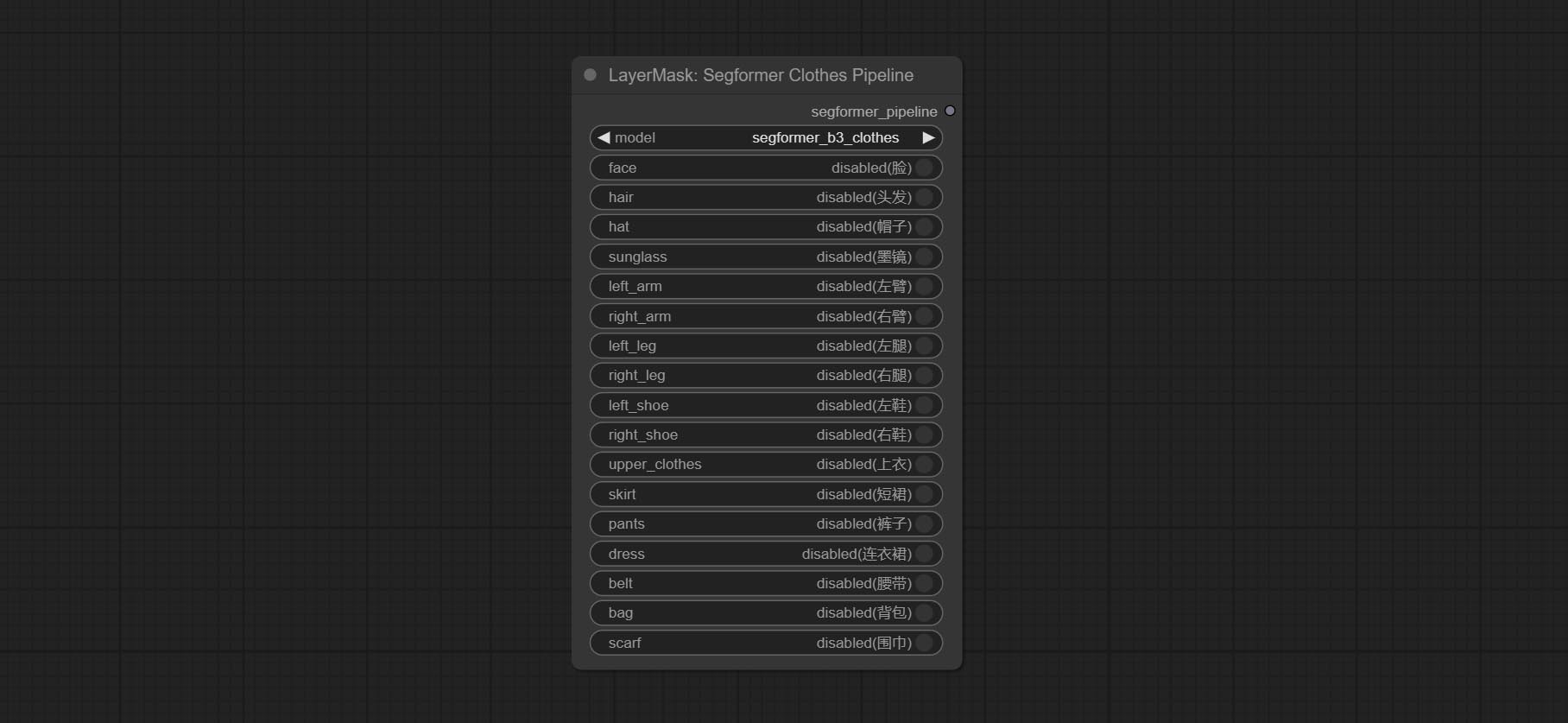

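Commit the SegformerUltraV2, SegfromerFashionPipeline, and SegformerClothesPipeline nodes, used for clothing segmentation. Download the model files according to the instructions.

Commit install_requirements.bat and install_requirements_aki.bat, a one-click solution for installing the dependency packages.

Commit the TransparentBackgroundUltra node, which removes backgrounds based on the transparent-background model.

Change the VitMatte model of the Ultra nodes to a local call. Download all files of the VitMatte model to the ComfyUI/models/vitmatte folder.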

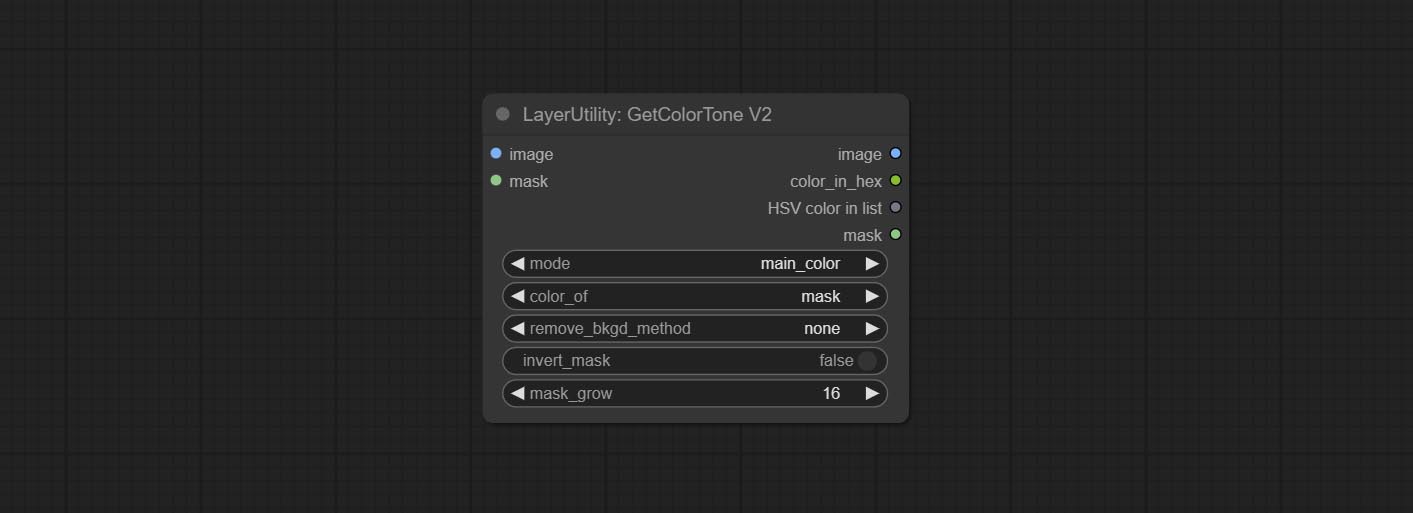

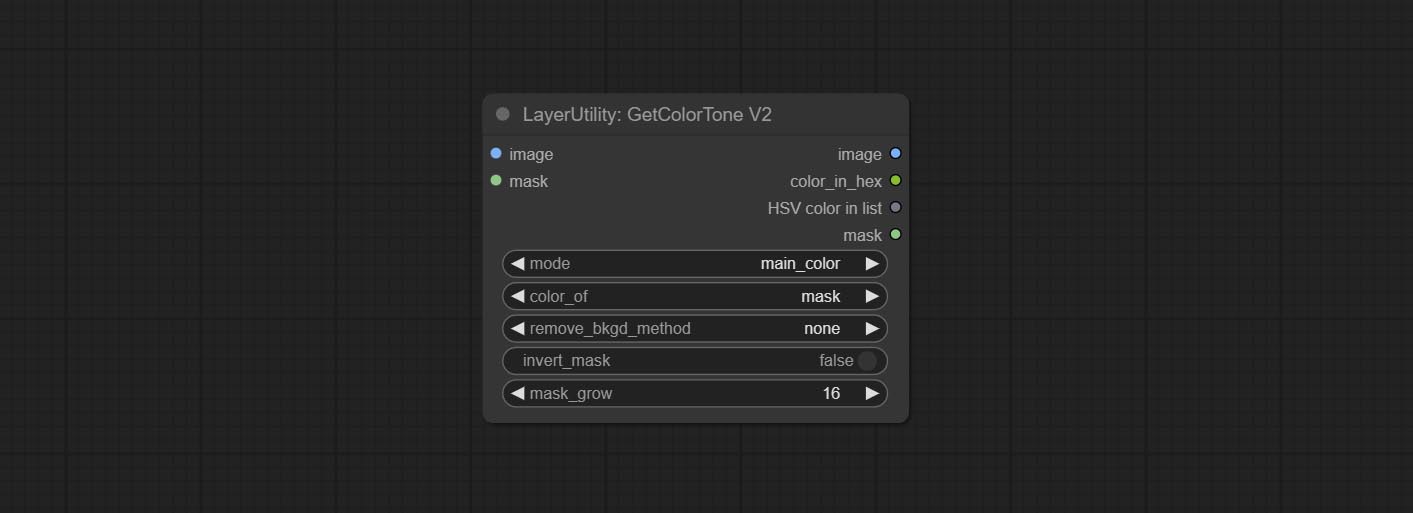

The GetColorToneV2 node adds the mask method to the color selection options, which accurately obtains the main color and average color within the mask.

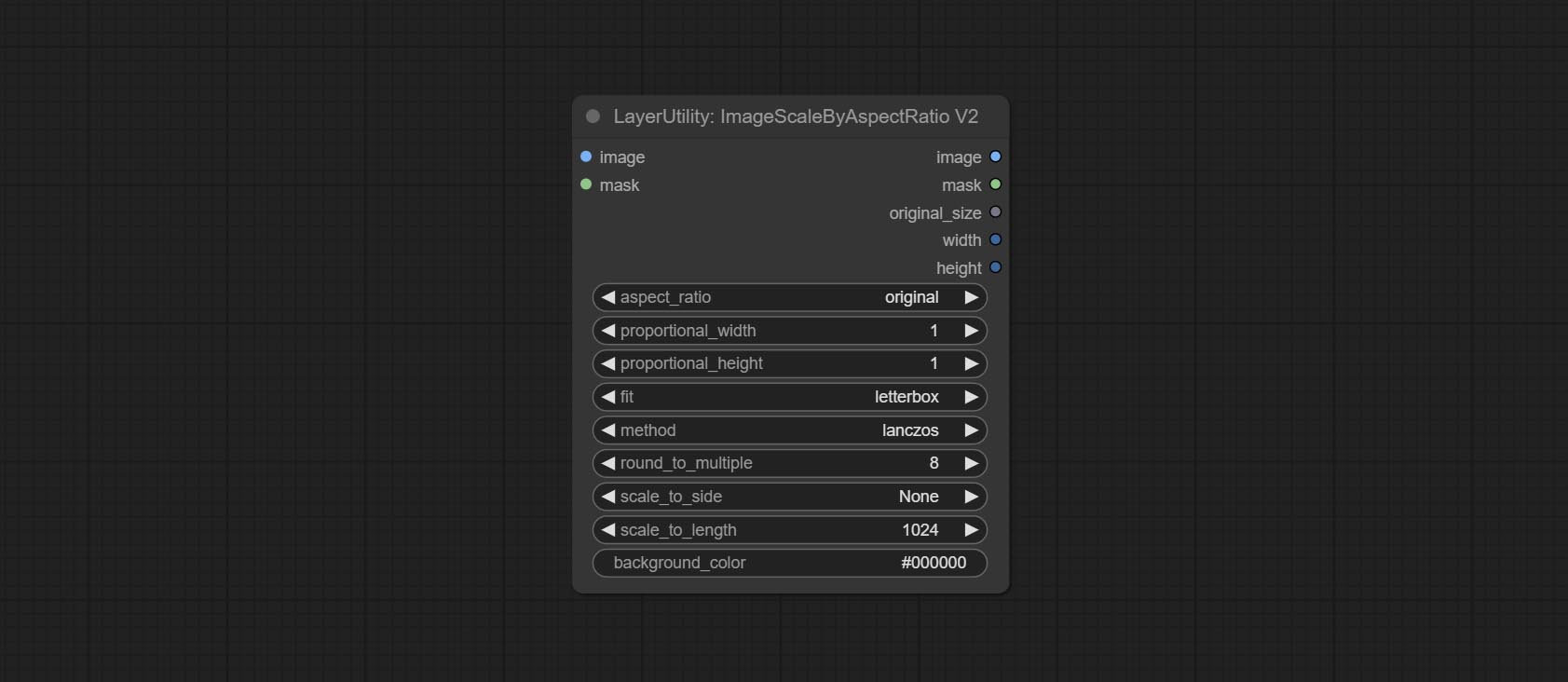

The ImageScaleByAspectRatioV2 node adds the "background_color" option.

LUT Apply adds the "strength" option.

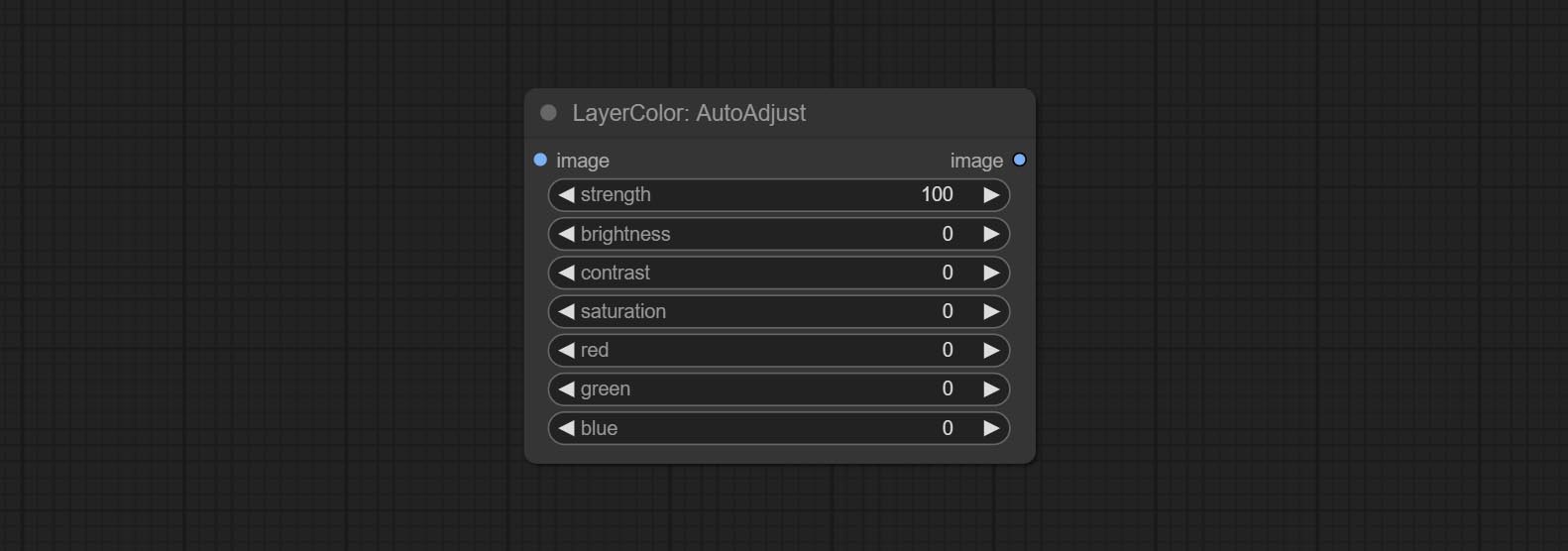

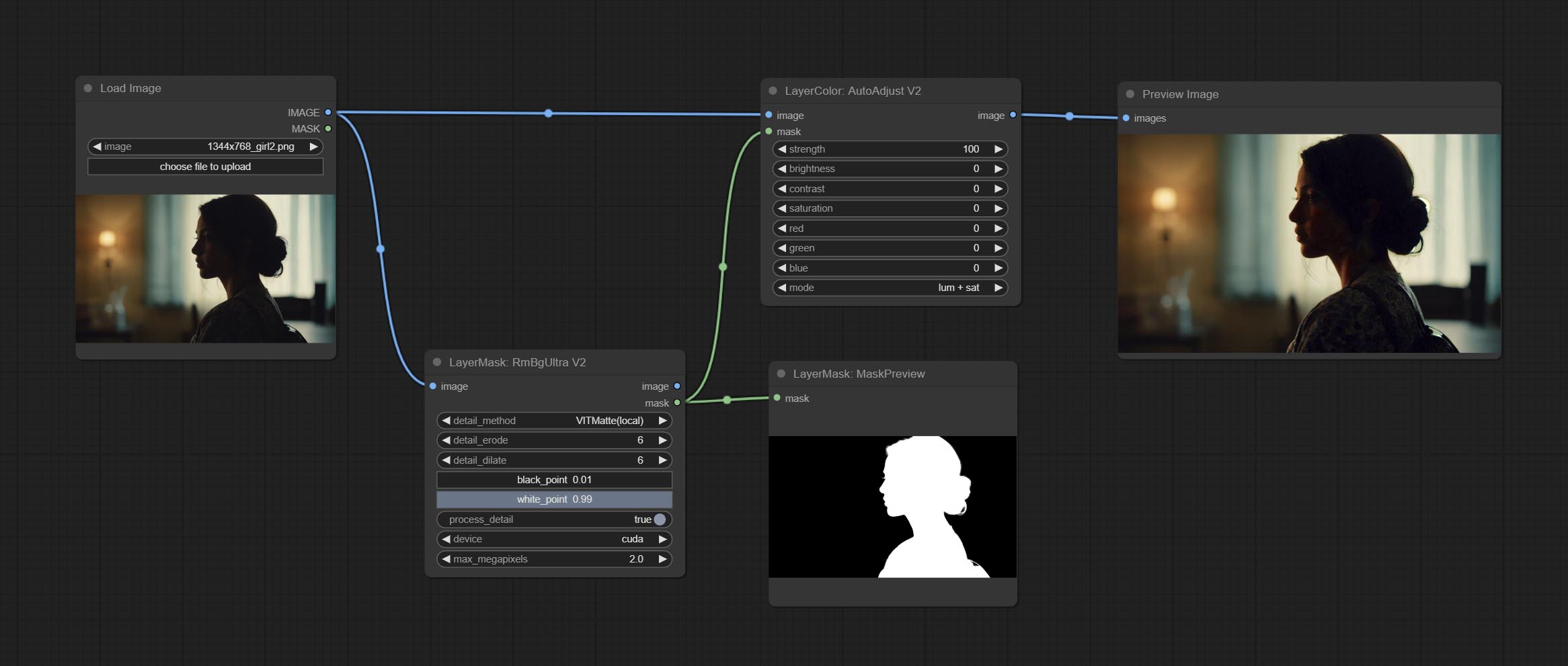

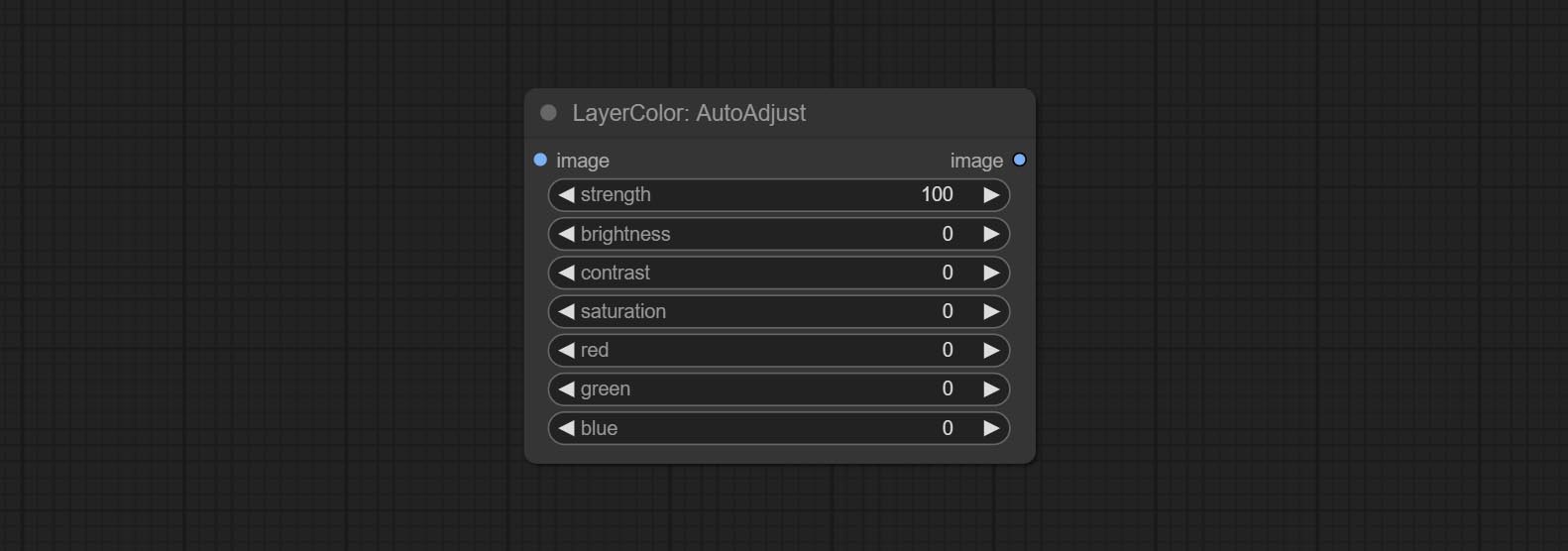

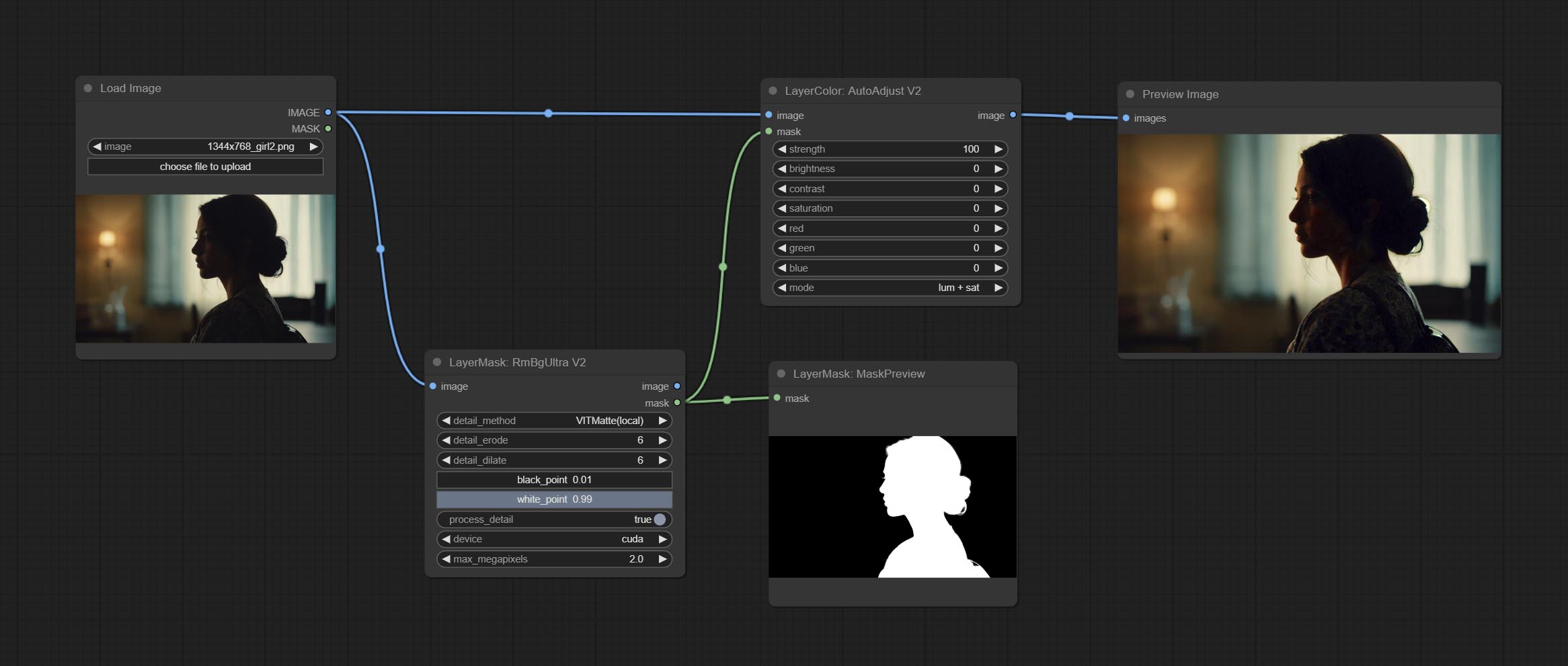

Commit the AutoAdjustV2 node, which adds an optional mask input and supports multiple automatic color adjustment modes.

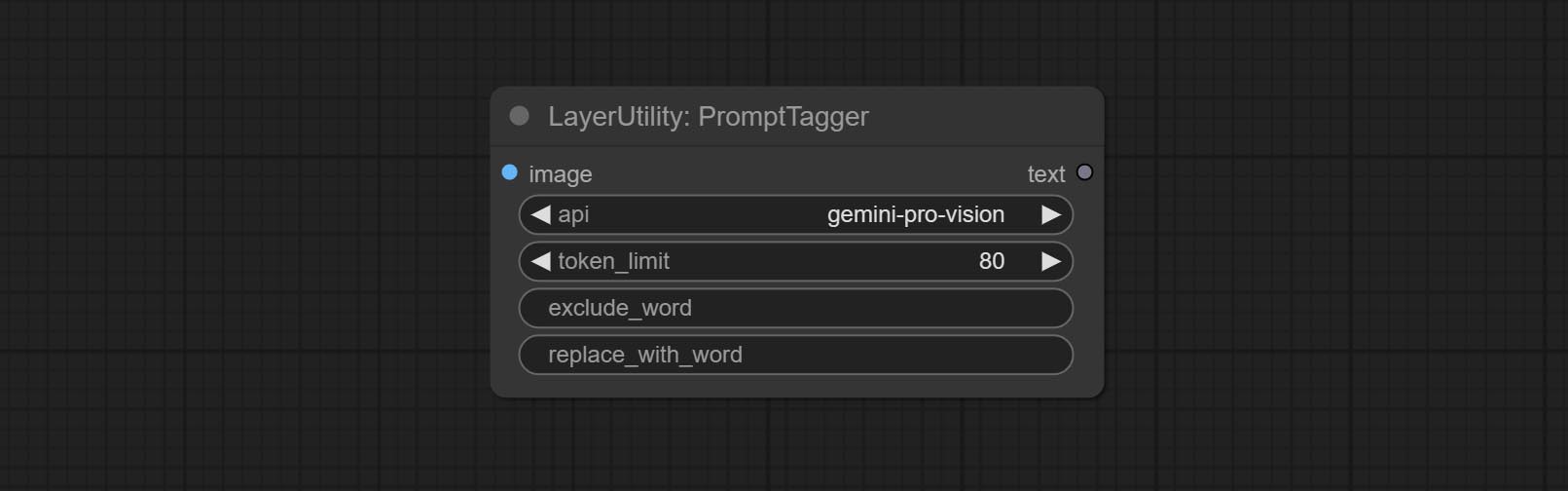

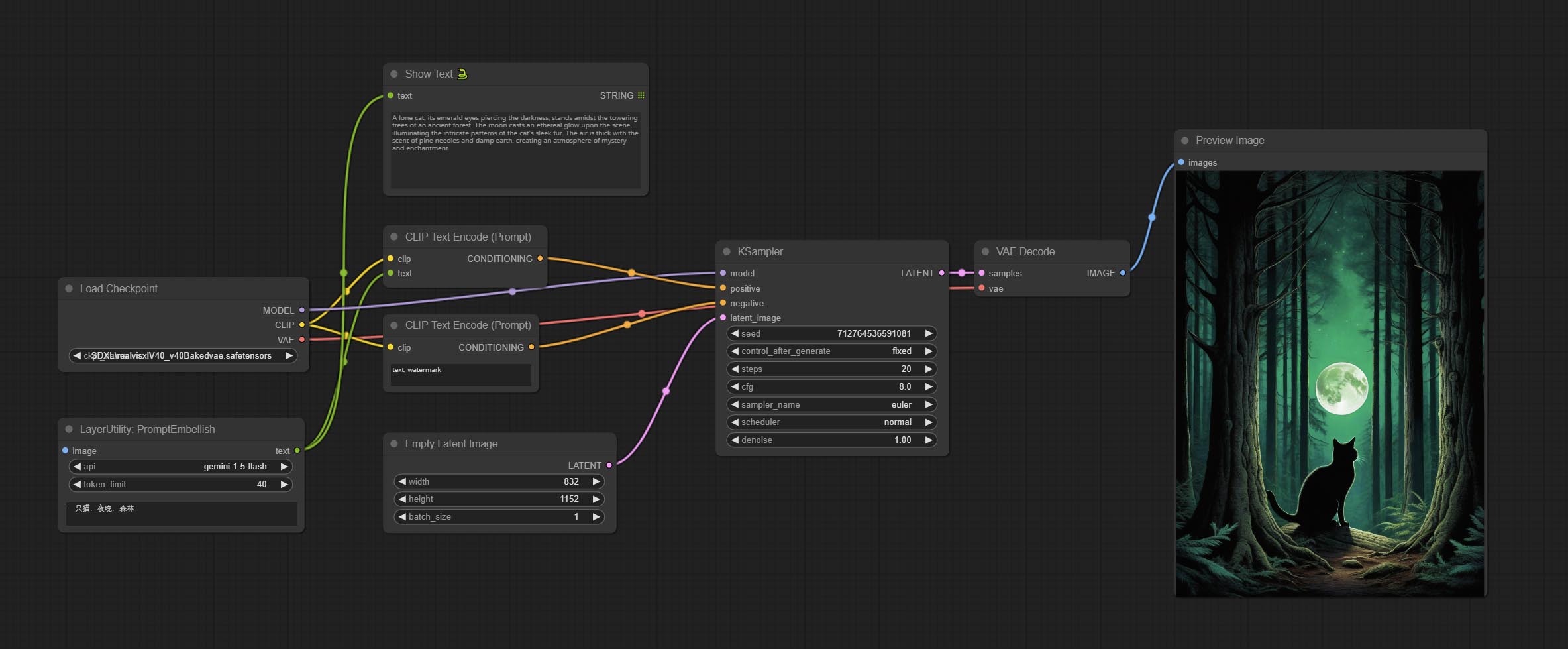

Because the gemini-pro vision service is about to be discontinued, PromptTagger and PromptEmbellish have added the "gemini-1.5-flash" API so they can continue to be used.

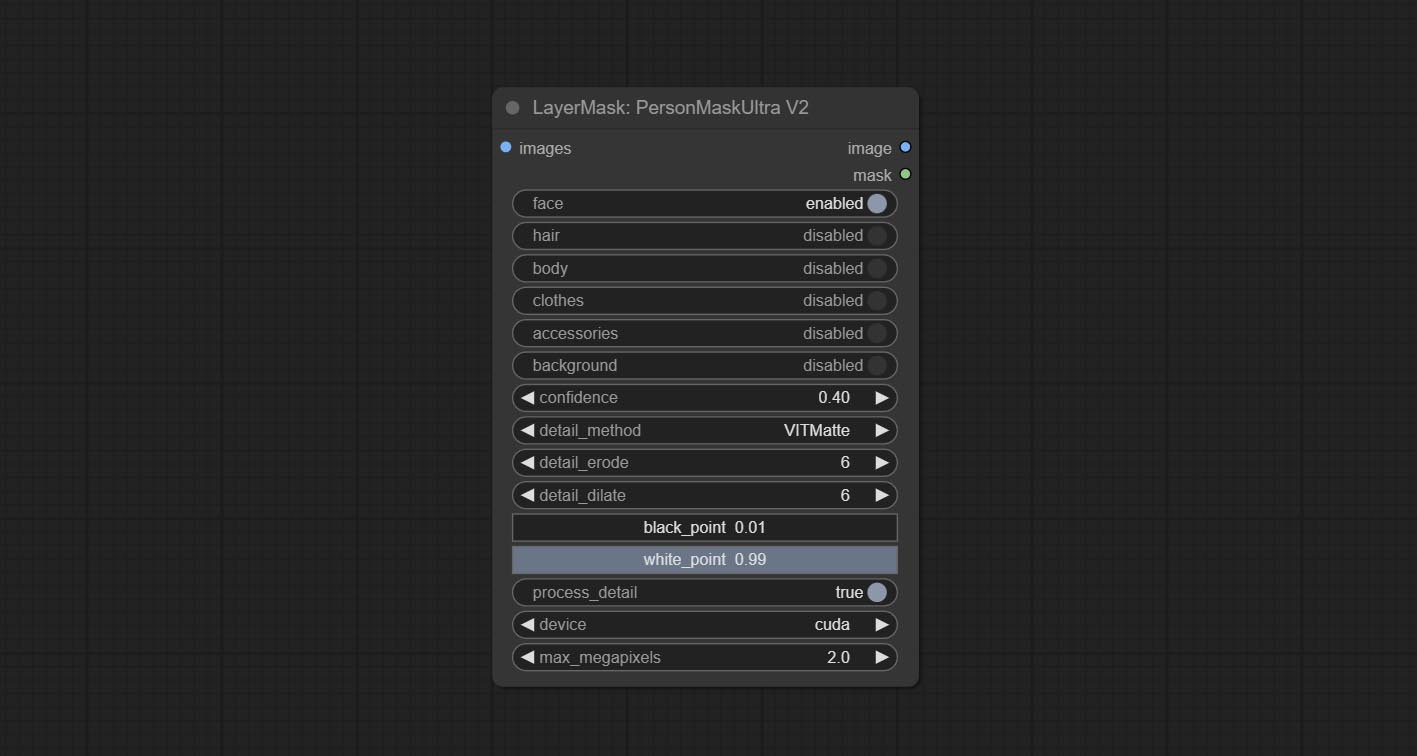

The Ultra nodes add an option to run VitMatte on the CUDA device, which increases running speed by 5 times.

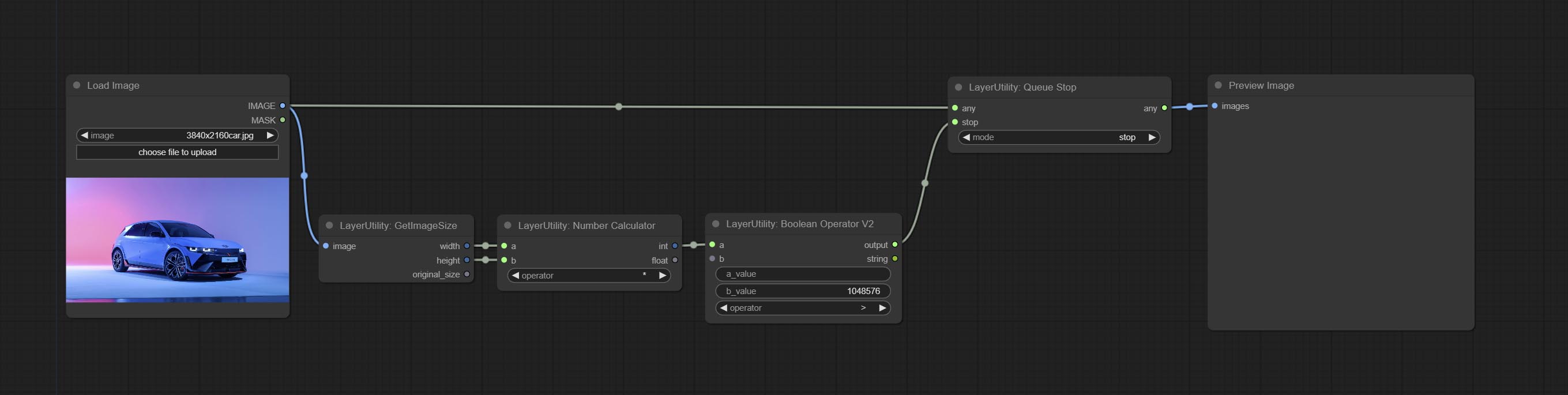

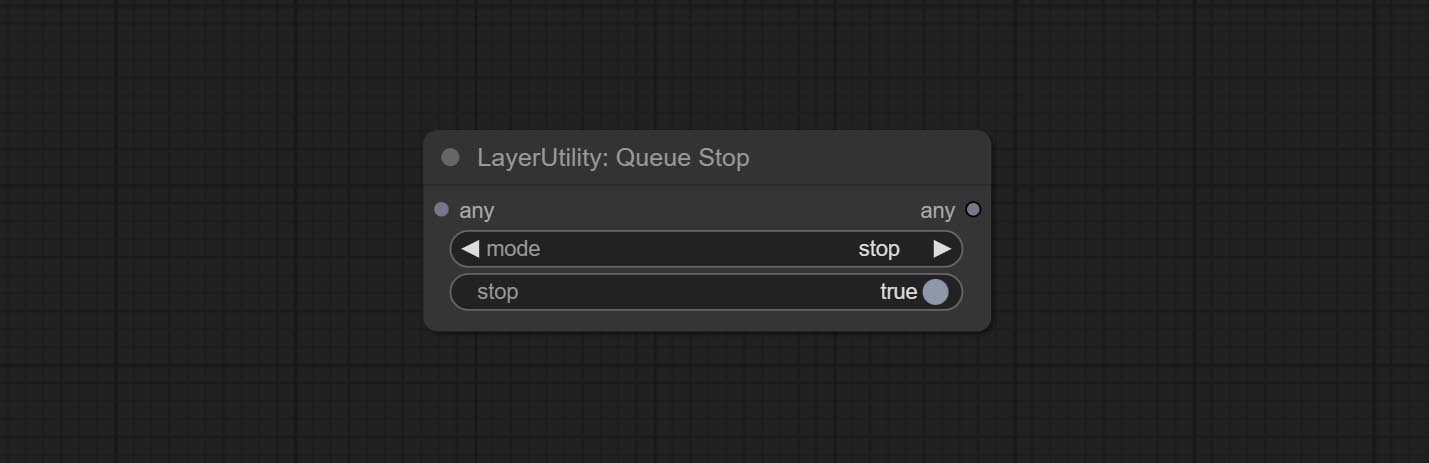

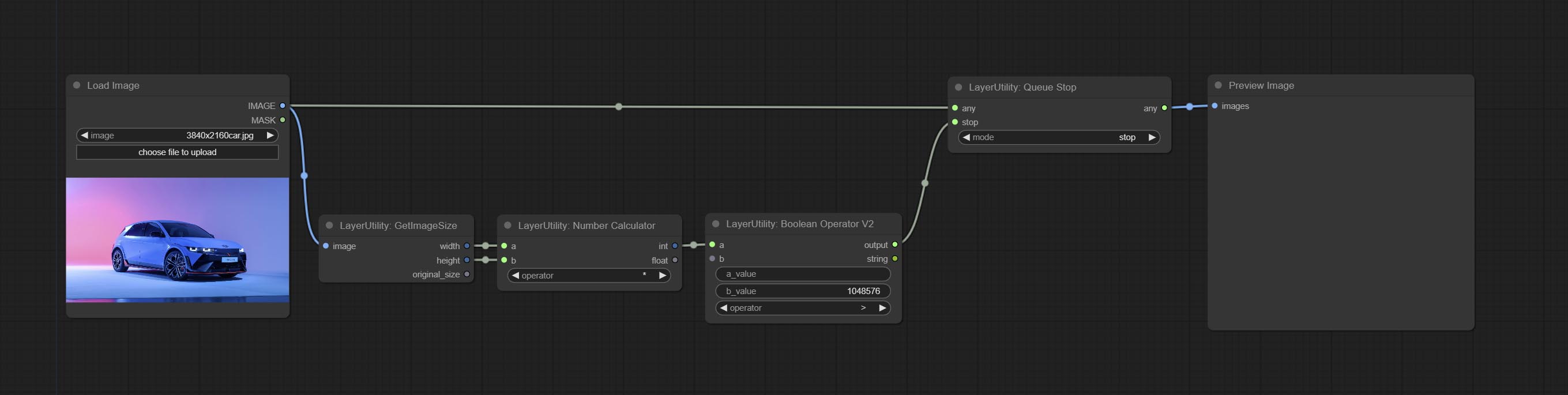

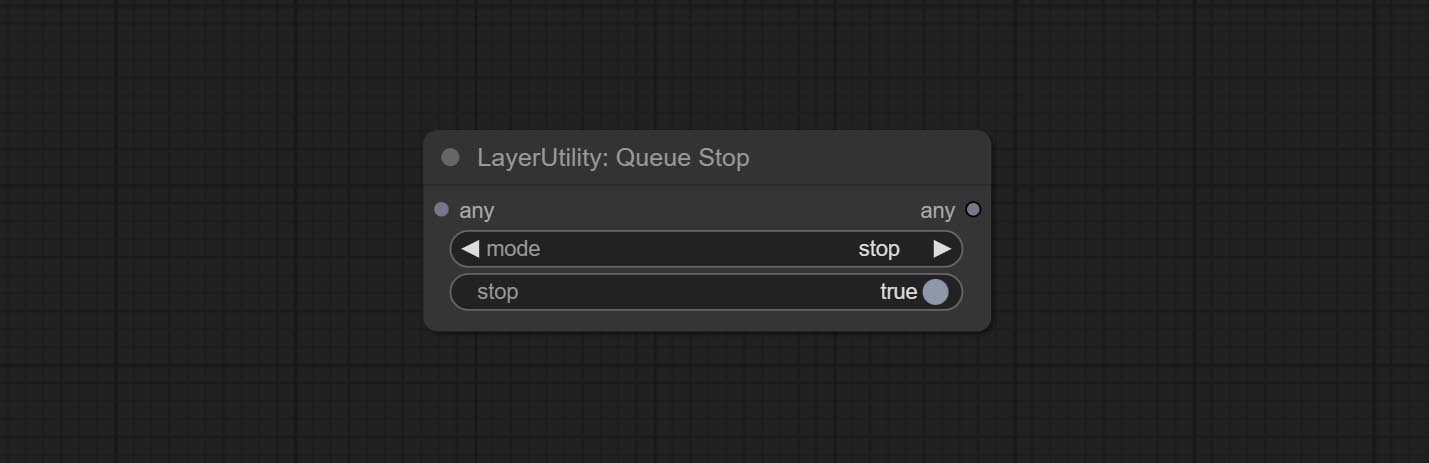

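Commit the QueueStop node, used to terminate the queue operation.

Optimize the performance of the VitMatte method of the Ultra nodes when processing large images.

CropByMaskV2 adds an option to round the cropped size to a multiple of a given number.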
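The rounding idea can be sketched as follows. The text does not say whether the node rounds up, down, or to the nearest multiple, so this example assumes rounding up (the crop never becomes smaller than requested); the function name is hypothetical.

```python
def round_up_to_multiple(size, multiple):
    """Return the smallest multiple of `multiple` that is >= size (assumed rounding rule)."""
    if multiple <= 0:
        raise ValueError("multiple must be a positive integer")
    # Ceiling division on integers, then scale back up.
    return -(-size // multiple) * multiple
```

Sizes that are already a multiple are returned unchanged, so a 512-pixel edge stays 512 when the multiple is 8.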

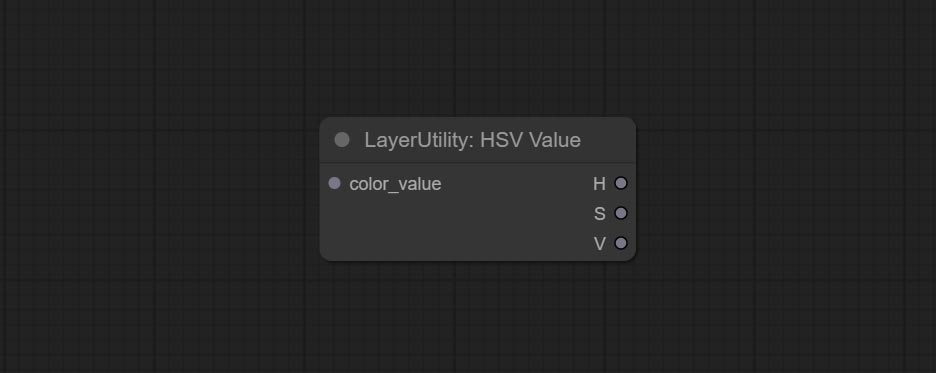

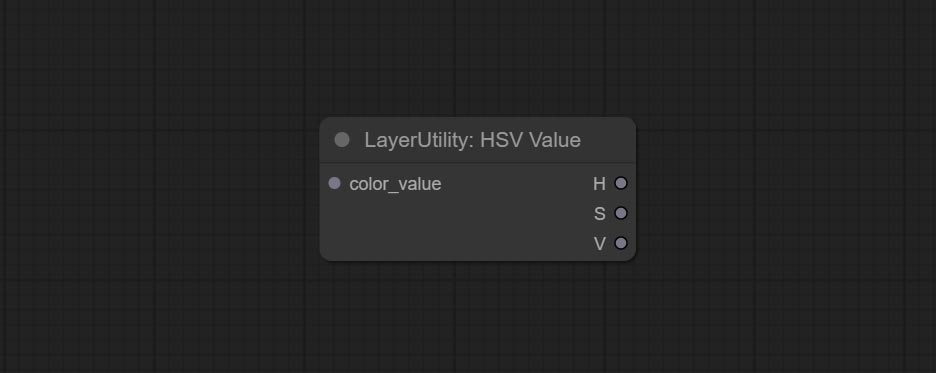

Commit the CheckMask node, which detects whether a mask contains a sufficient effective area. Commit the HSVValue node, which converts color values to HSV values.

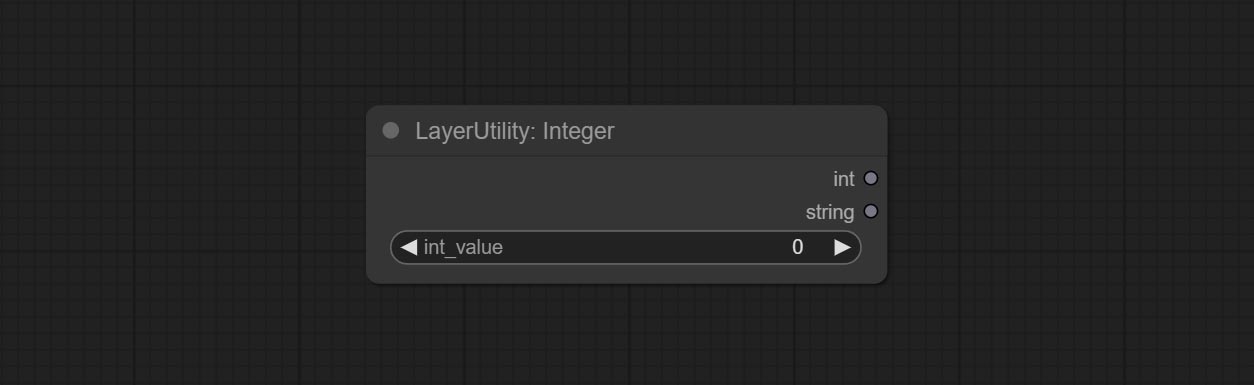

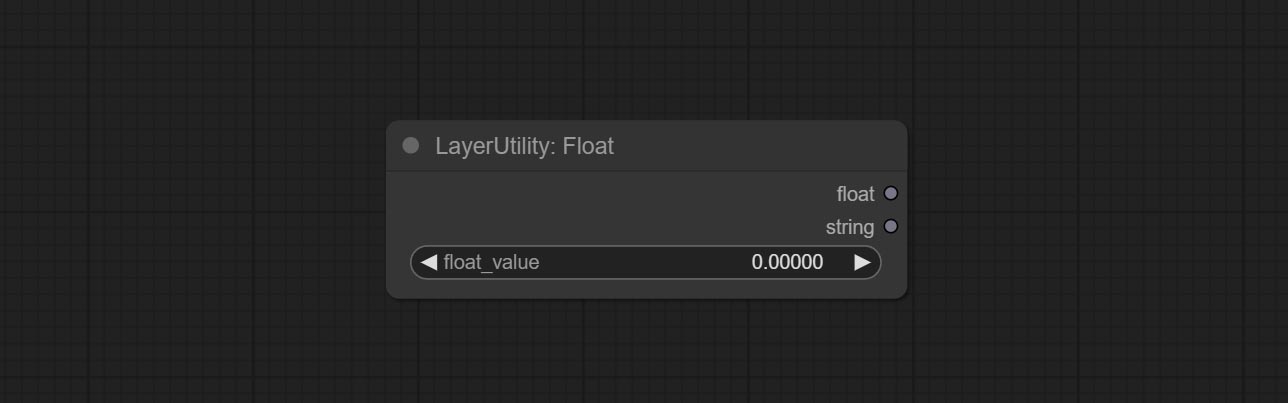

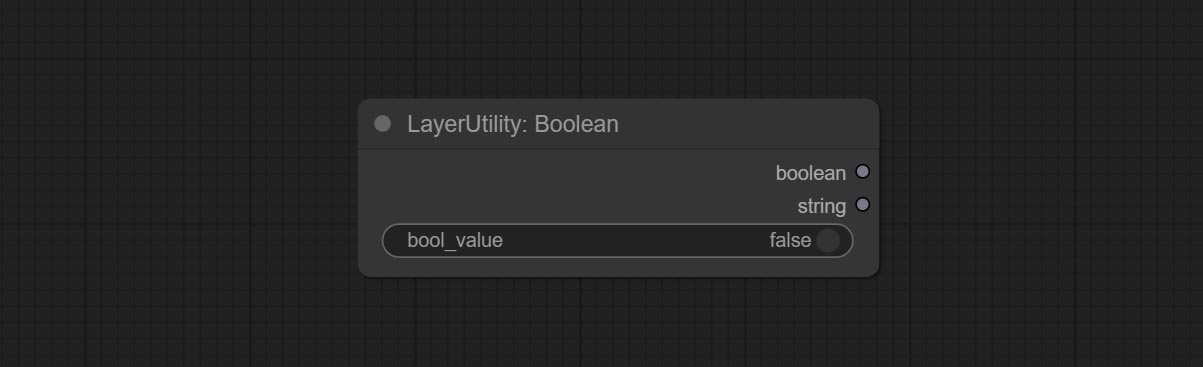

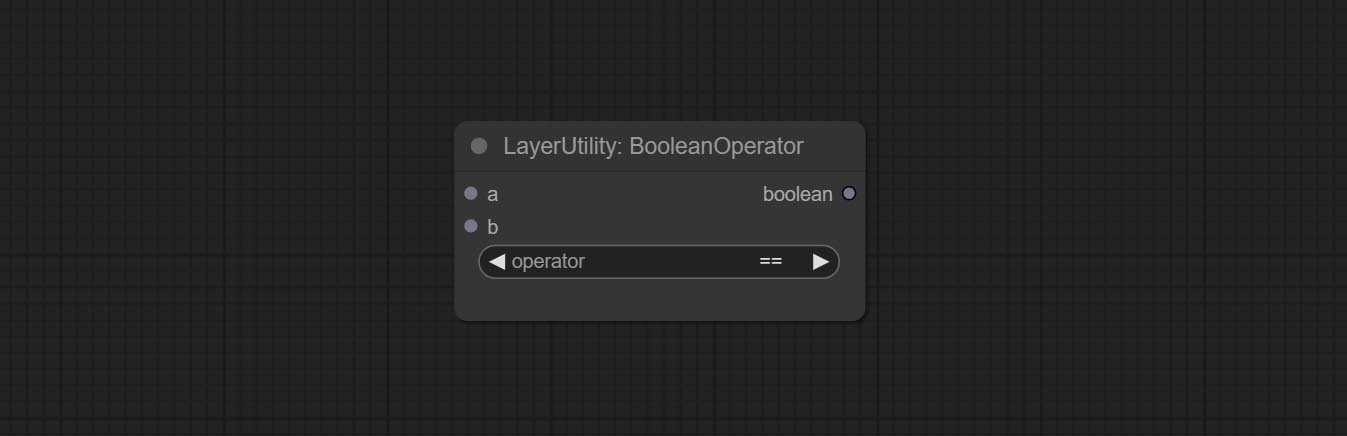

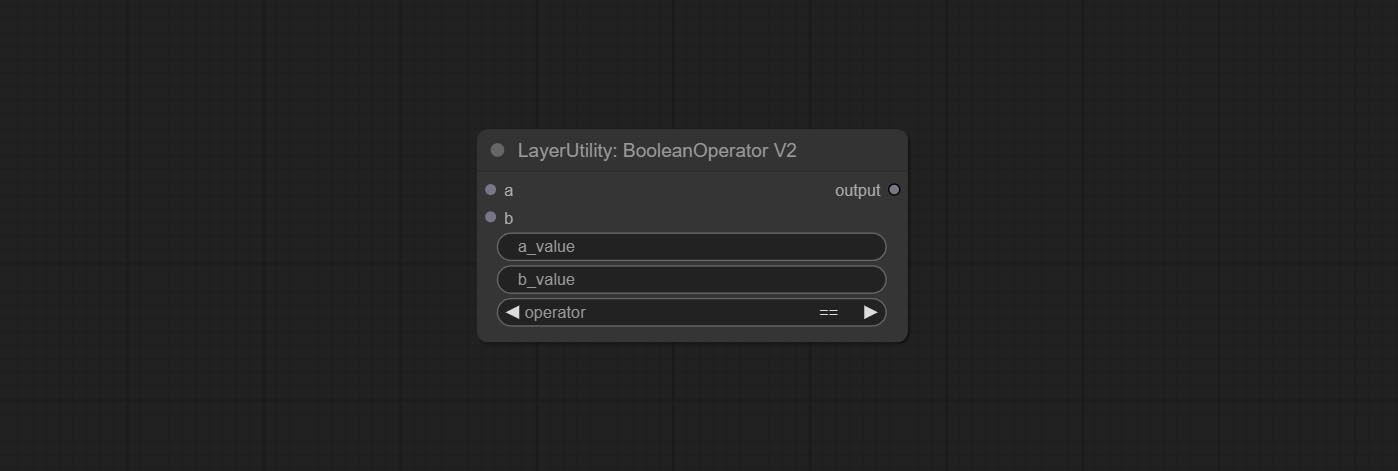

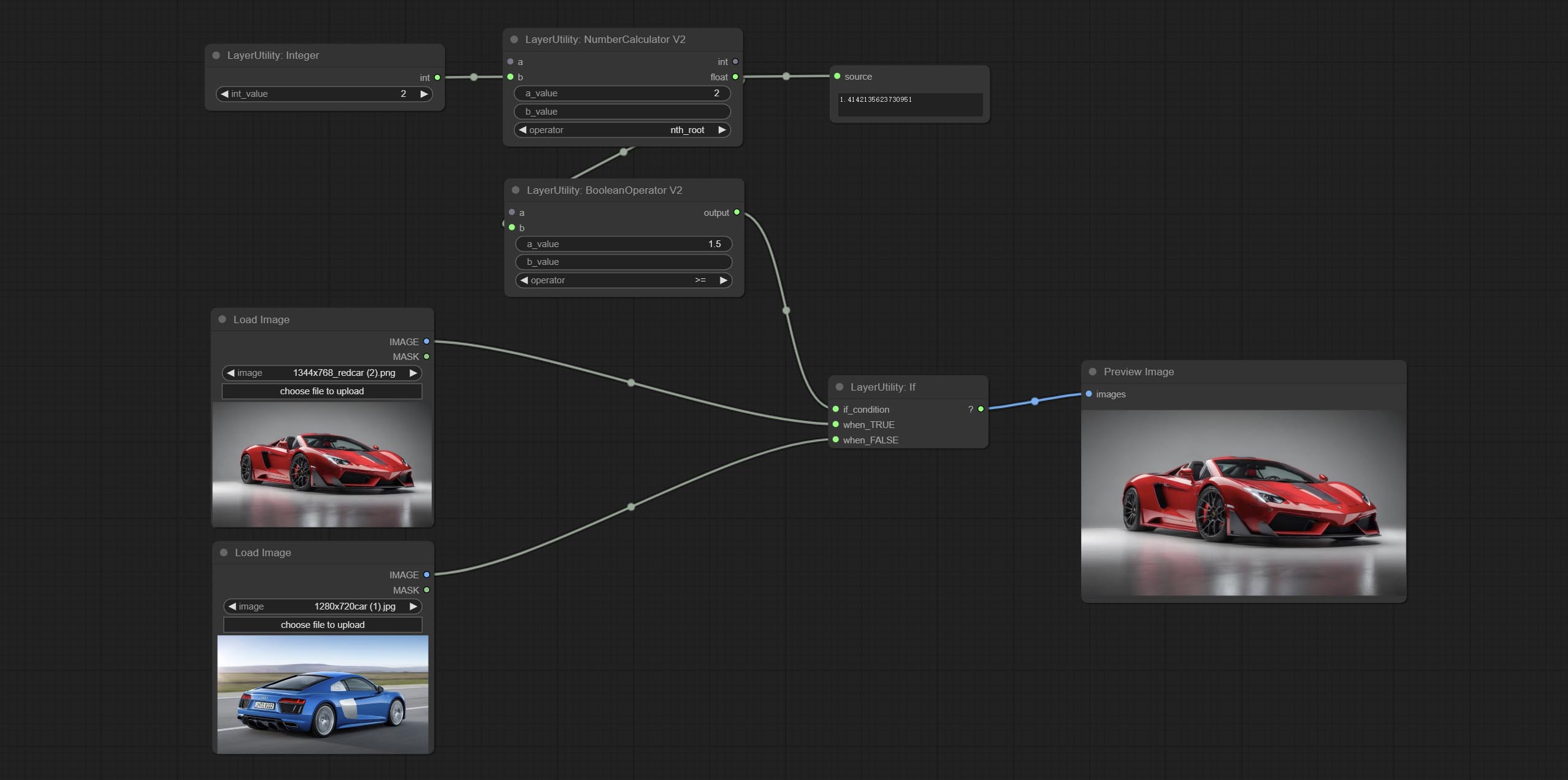

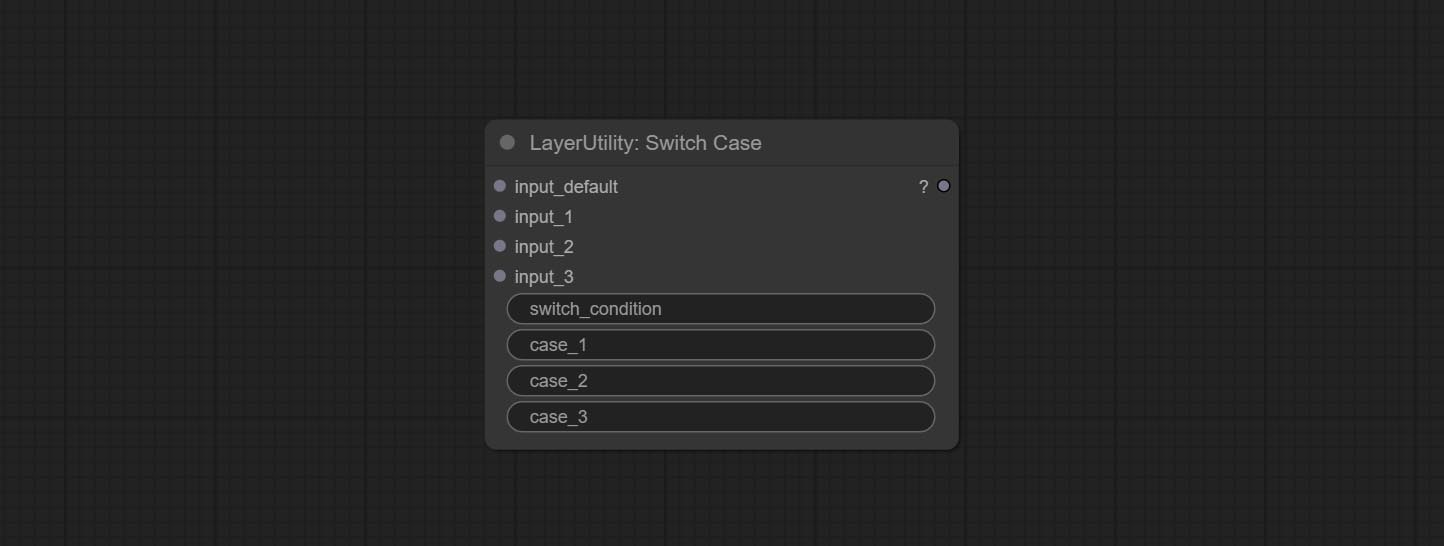

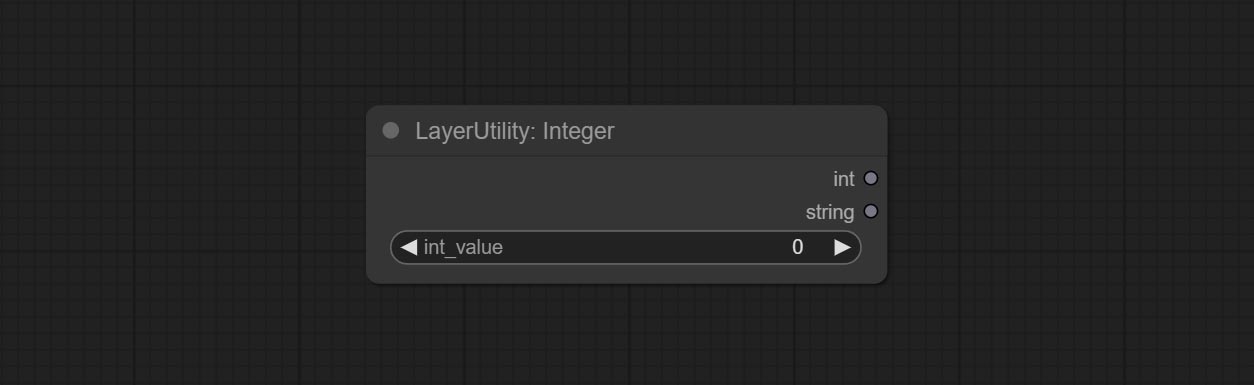

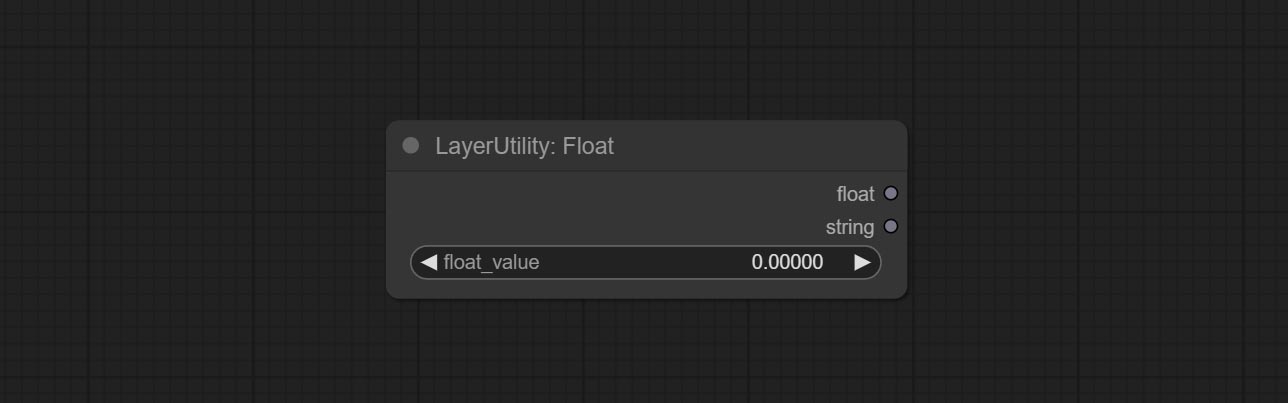

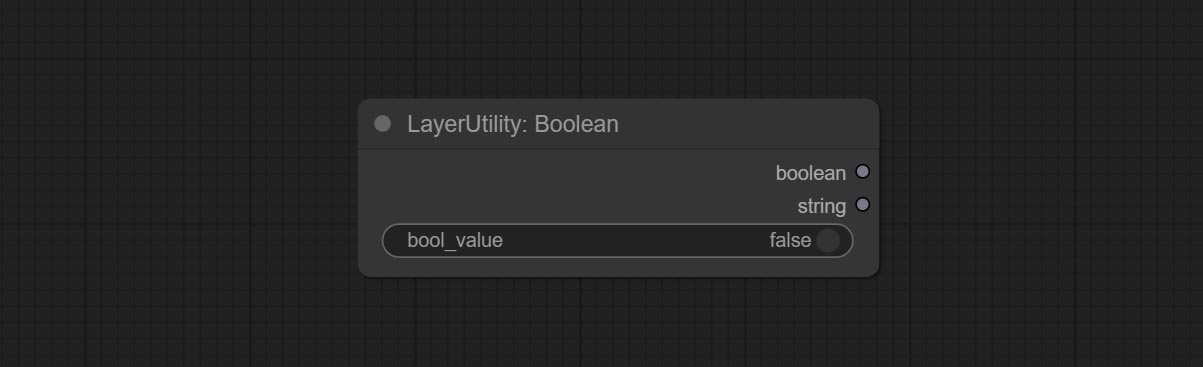

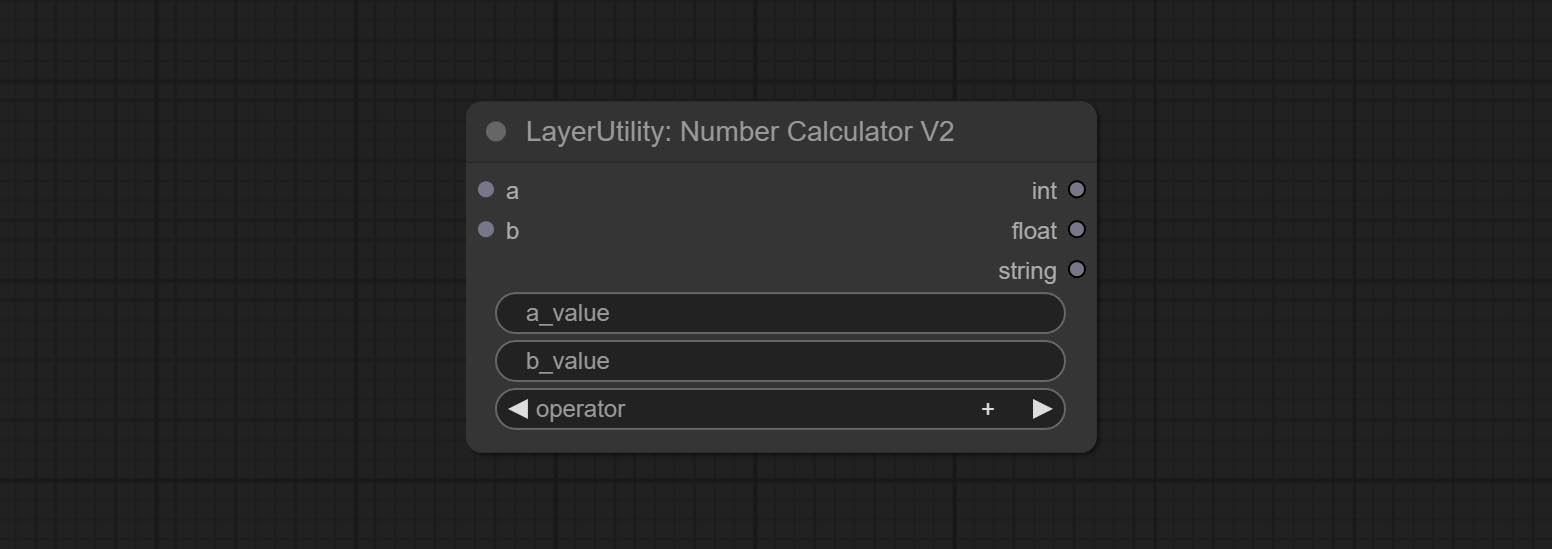

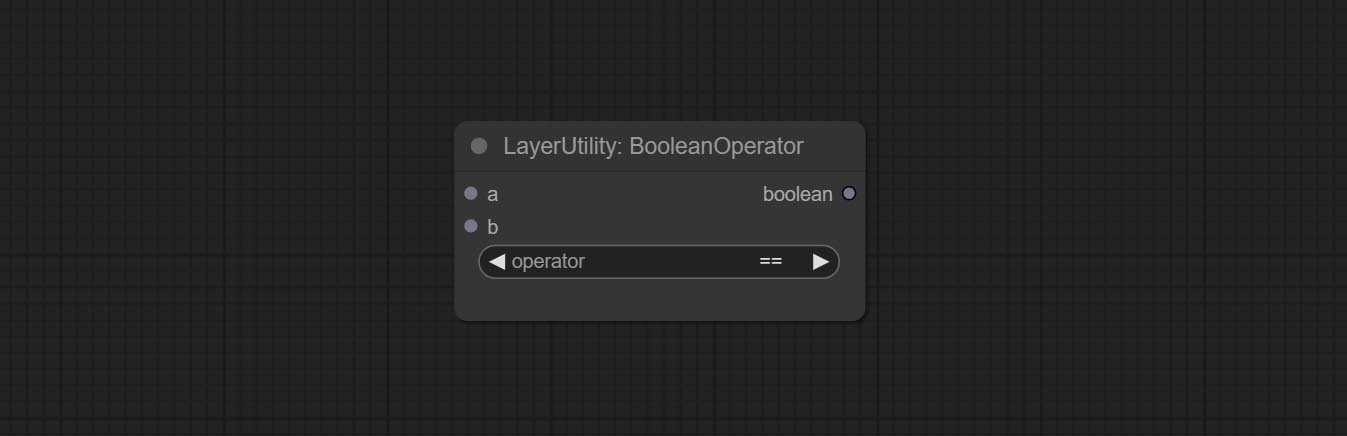

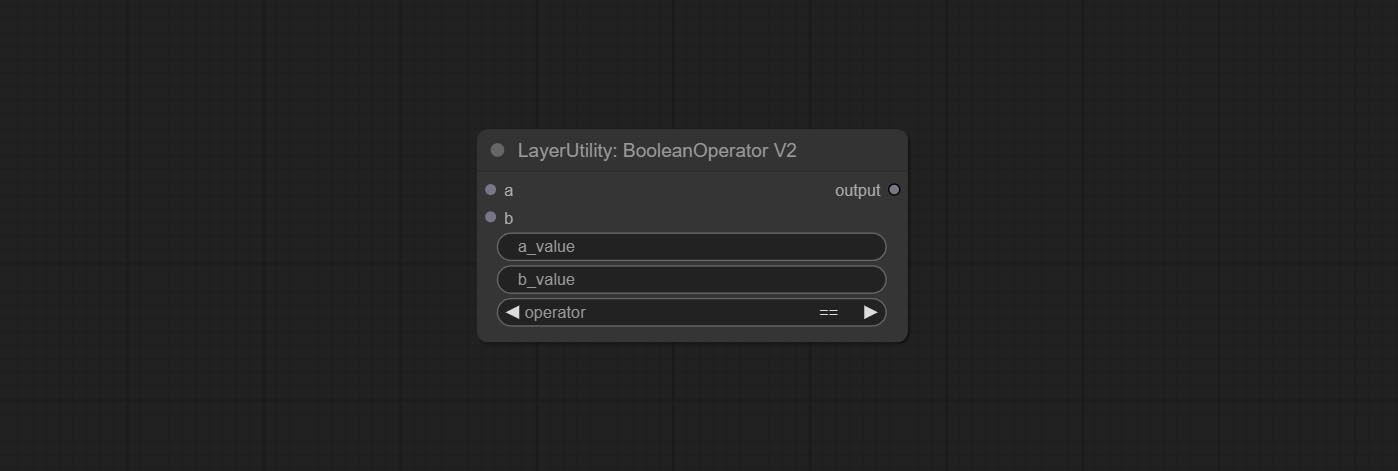

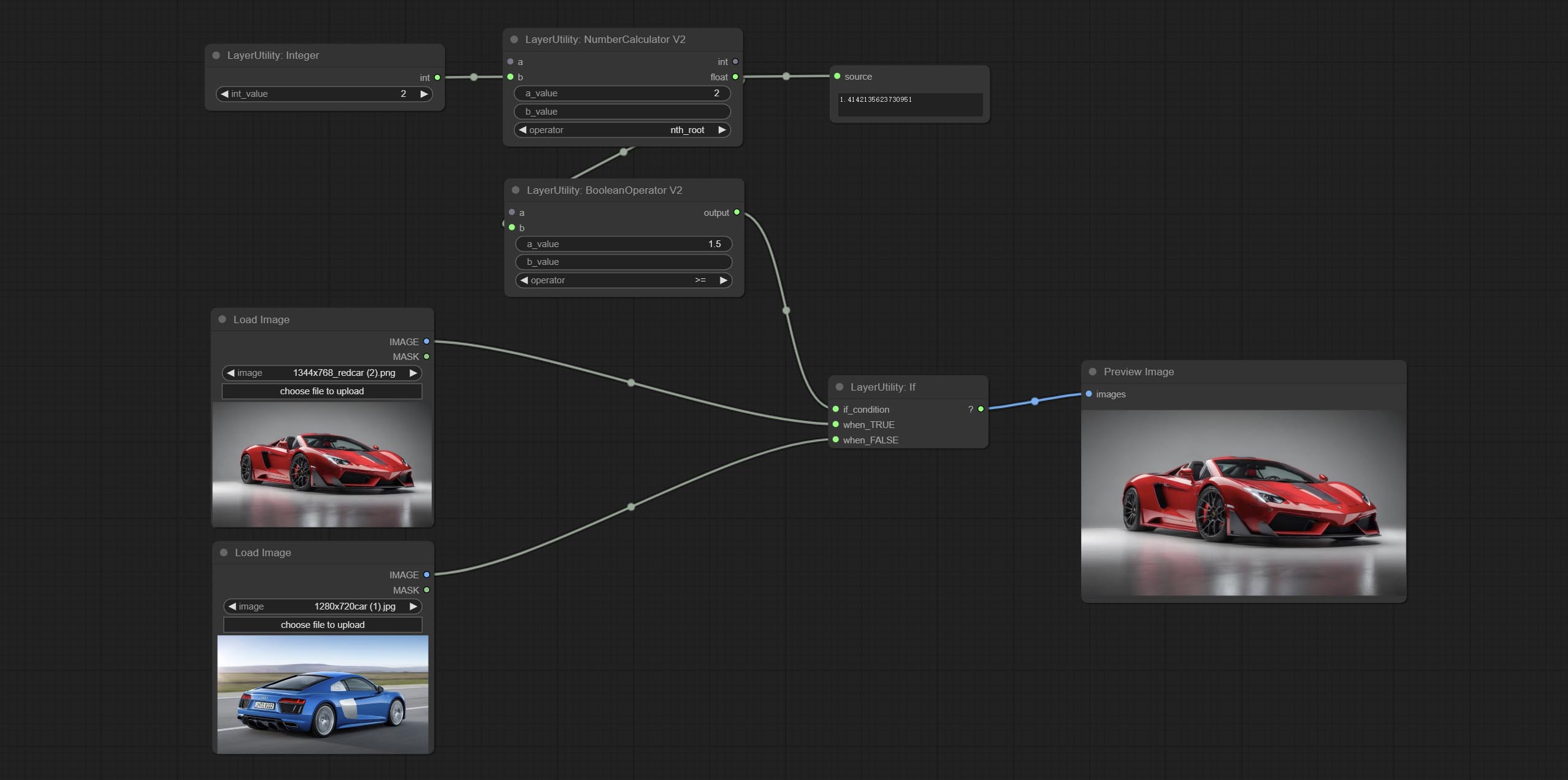

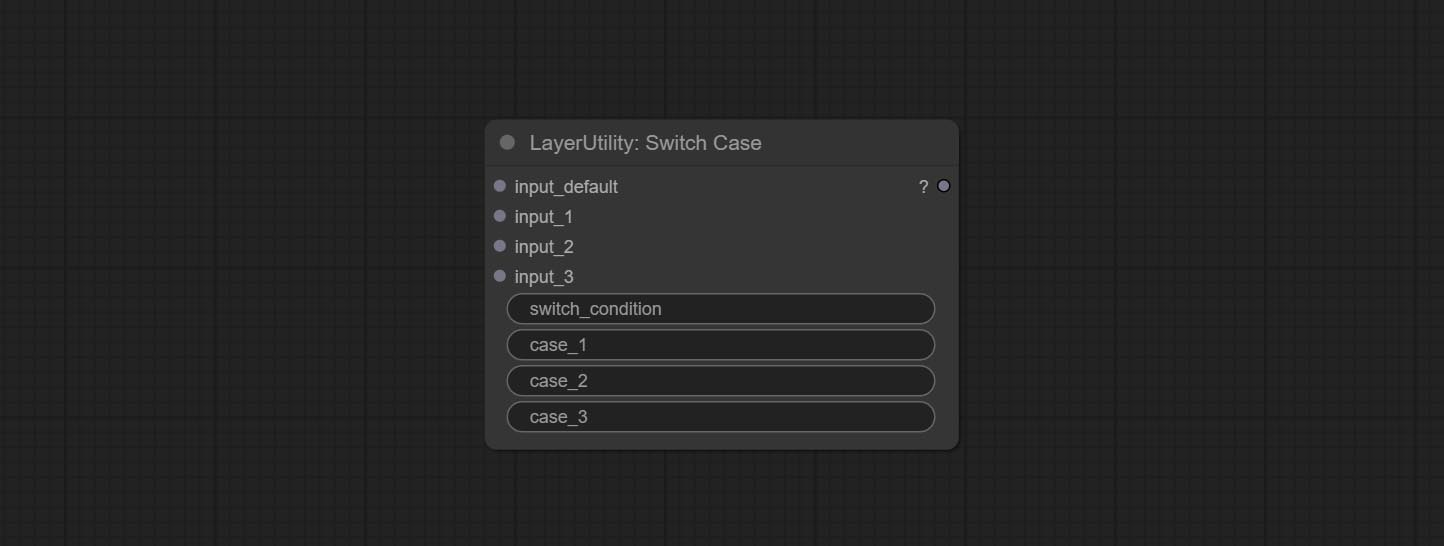

The BooleanOperatorV2, NumberCalculatorV2, Integer, Float, and Boolean nodes add a string output, which outputs the value as a string for use with SwitchCase.

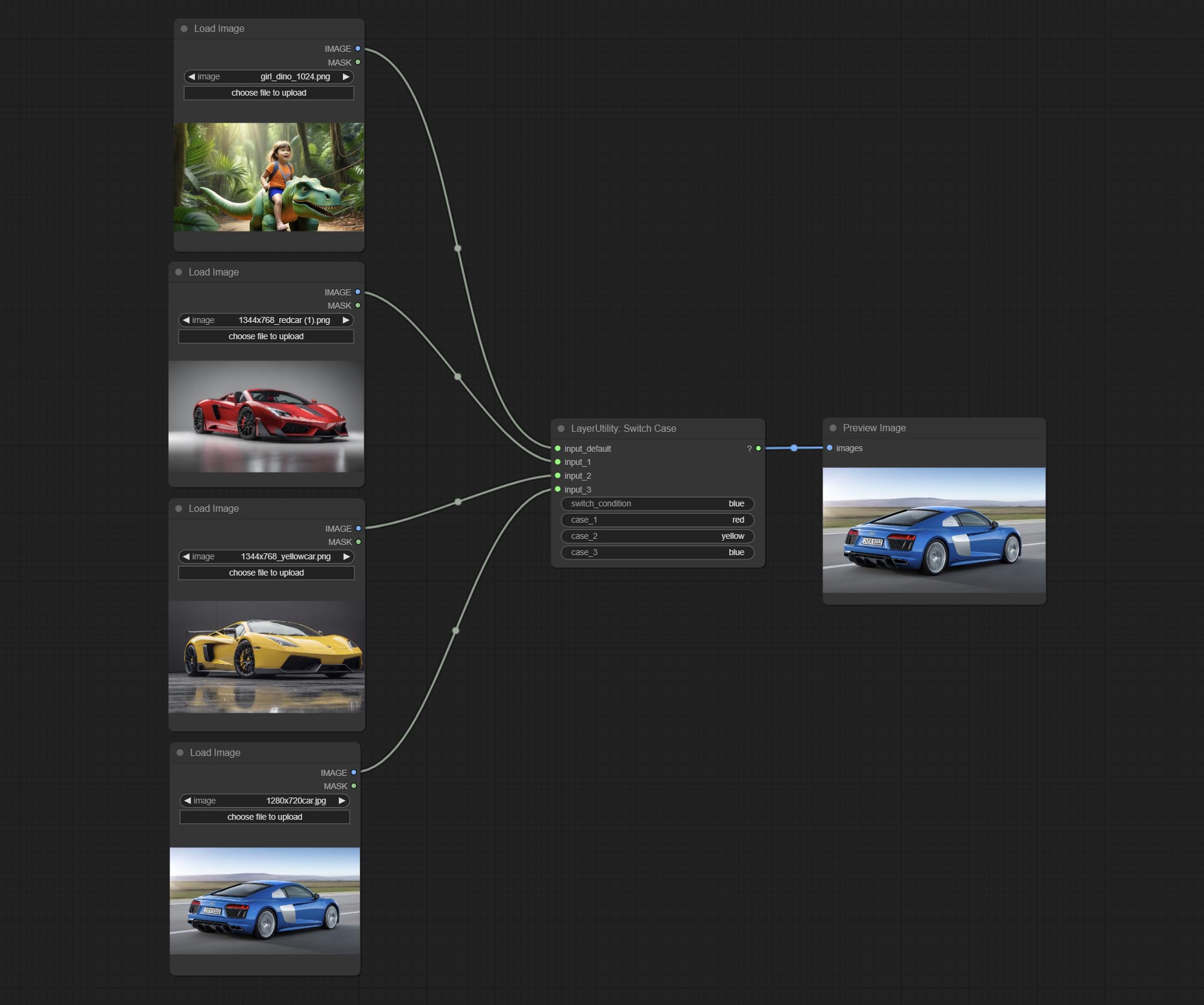

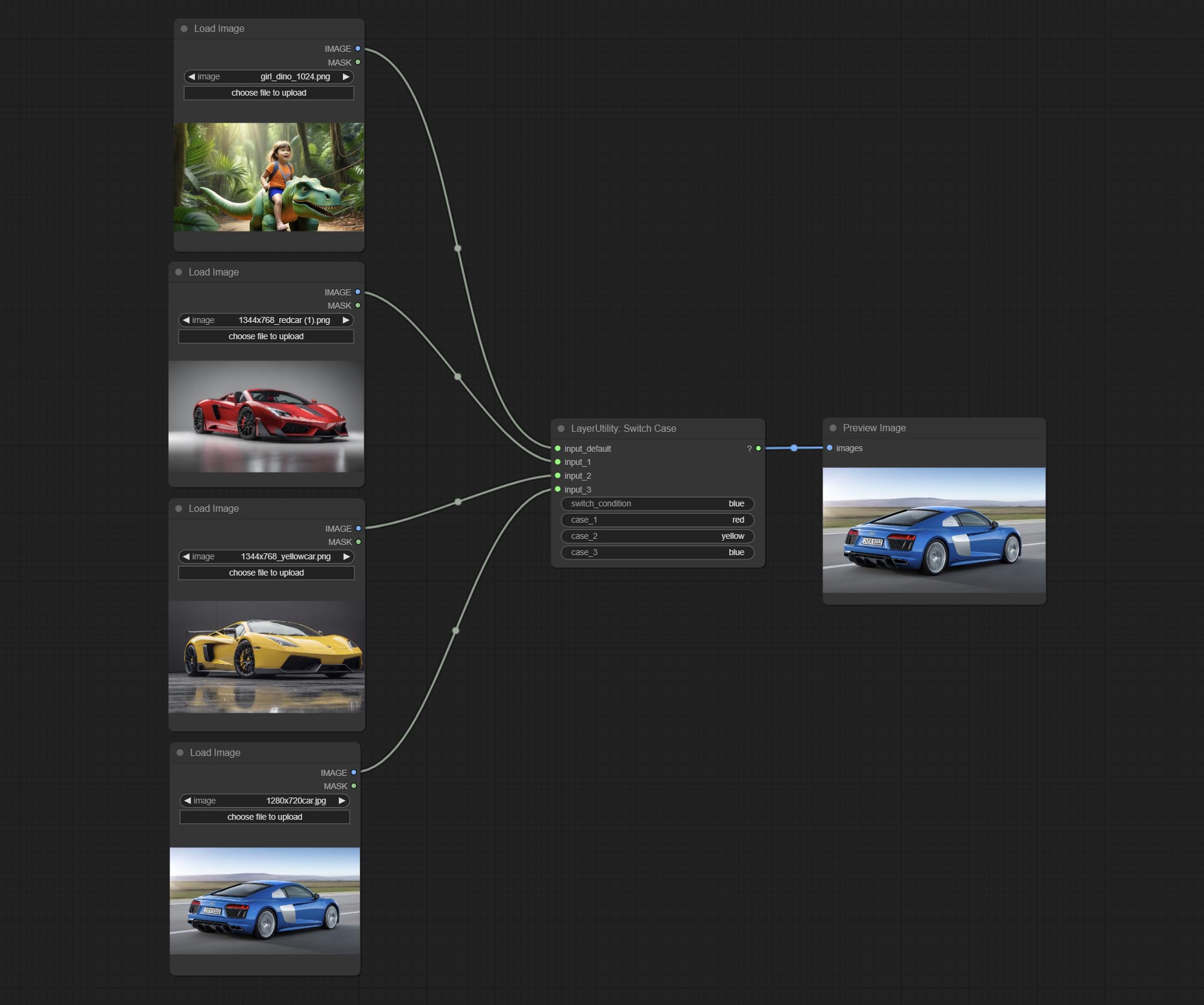

Commit the SwitchCase node, which switches the output based on a matching string. It can be used for switching any type of data.

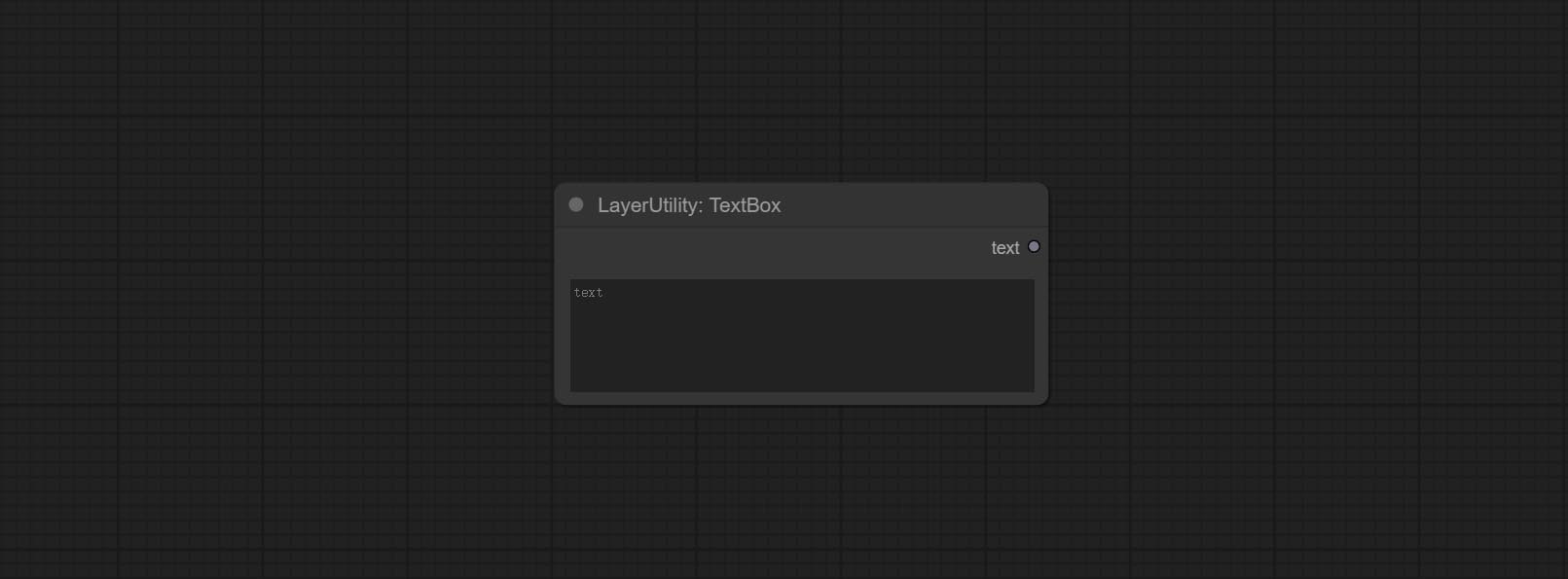

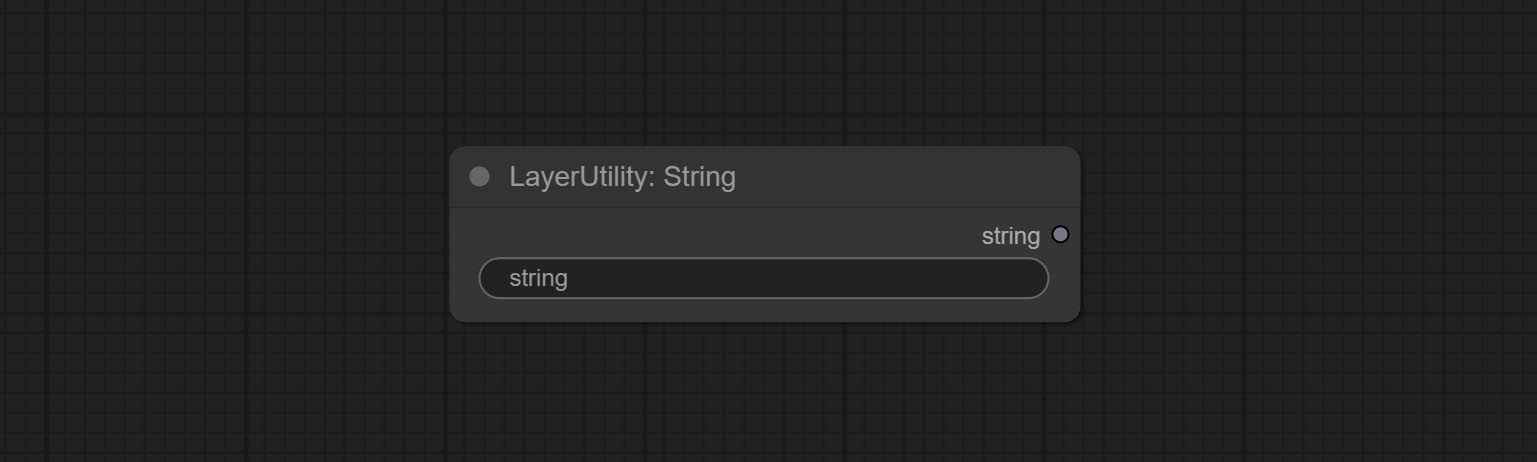

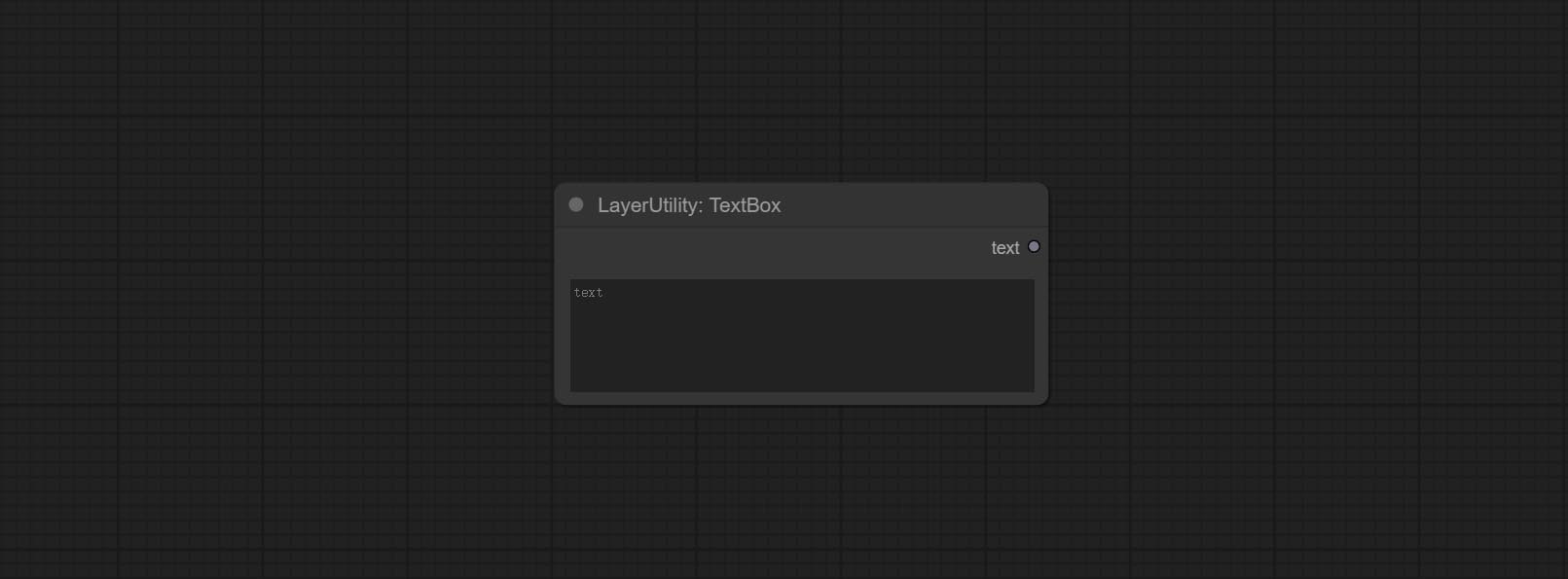

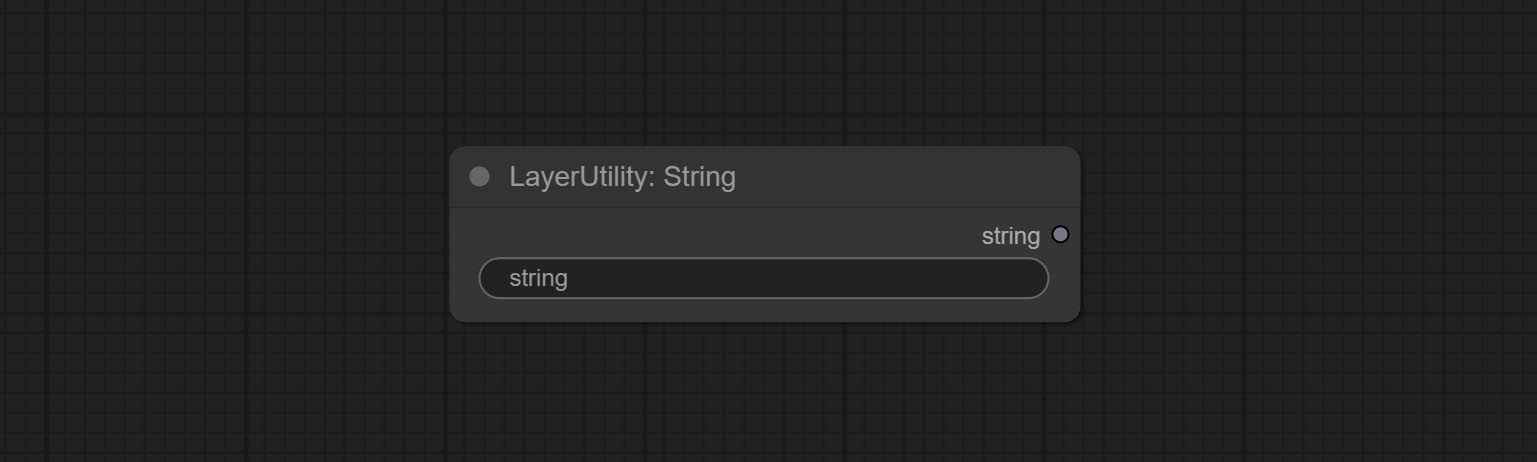

Commit the String node, used to output a string. It is a simplified version of the TextBox node.

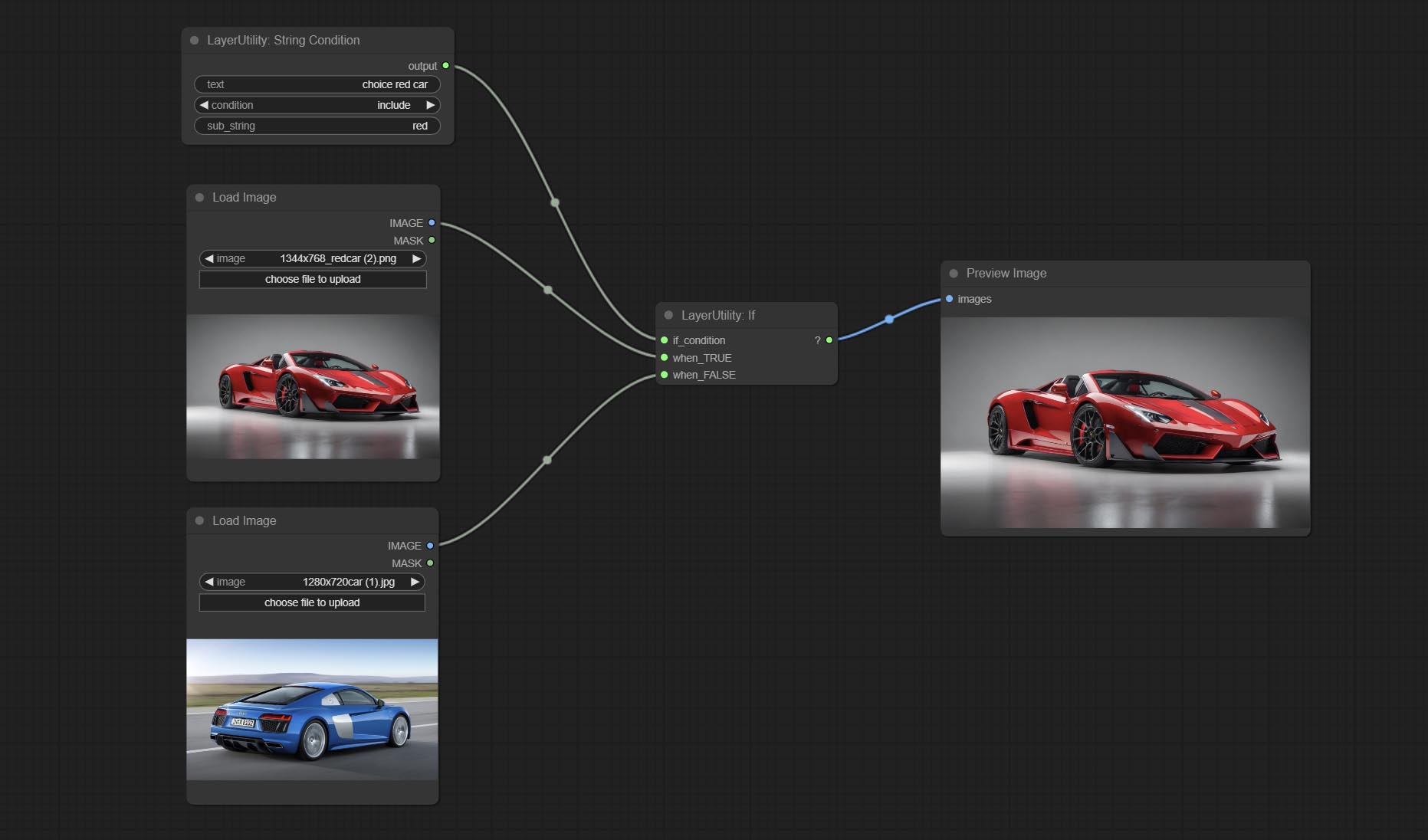

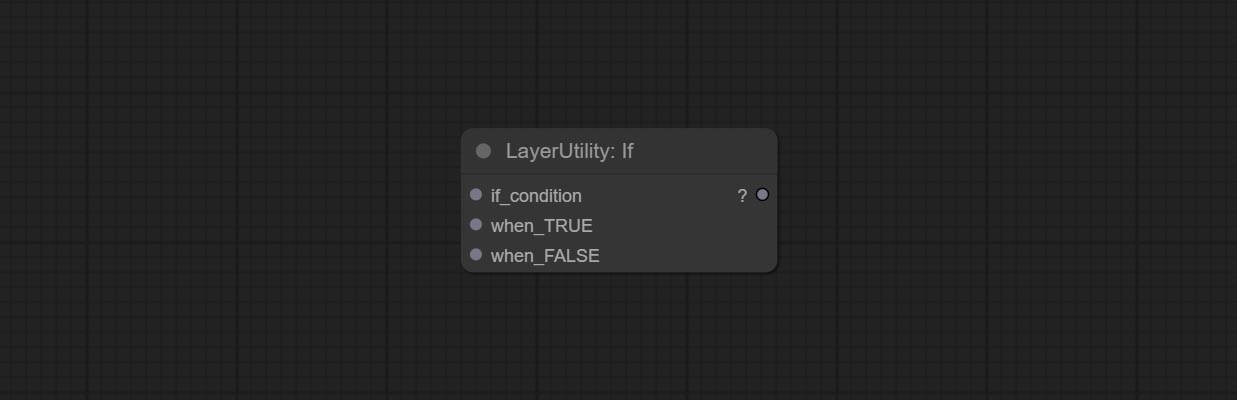

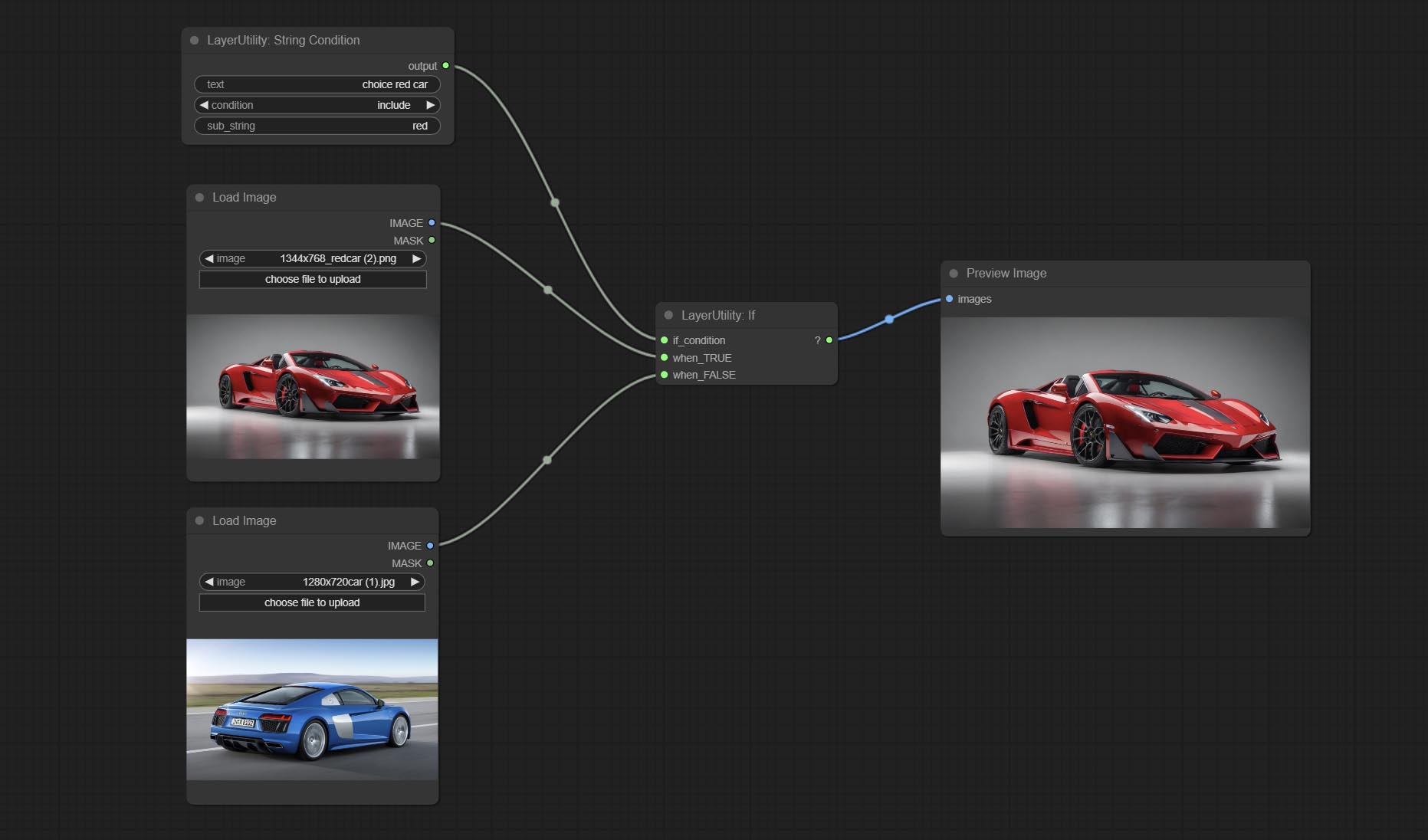

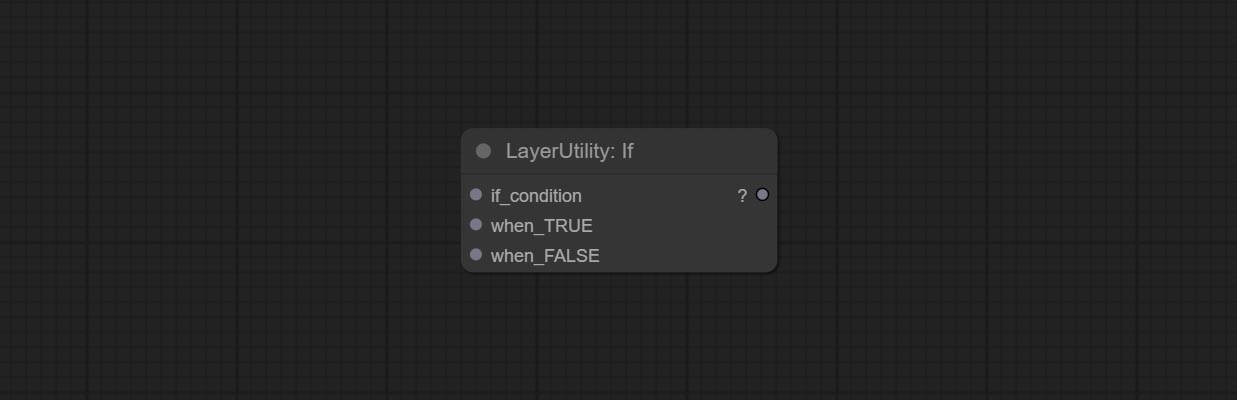

Commit the If node, which switches the output based on a Boolean conditional input. It can be used for switching any type of data.

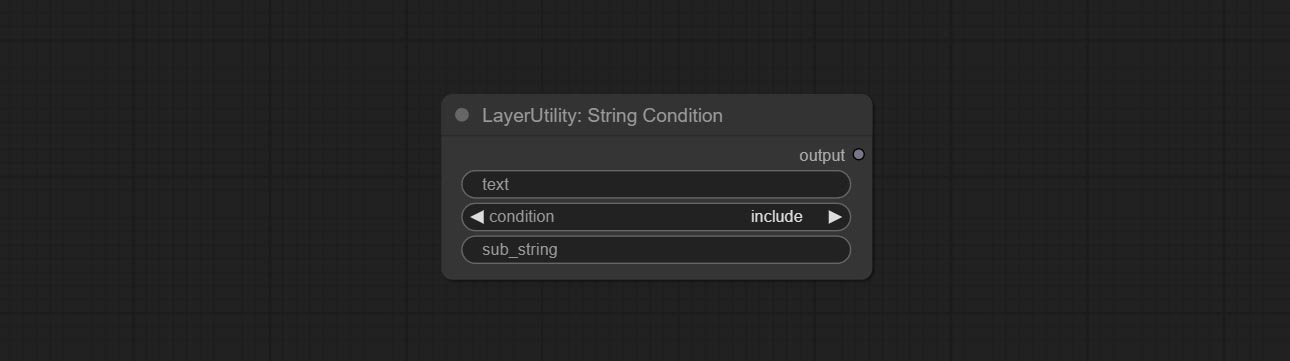

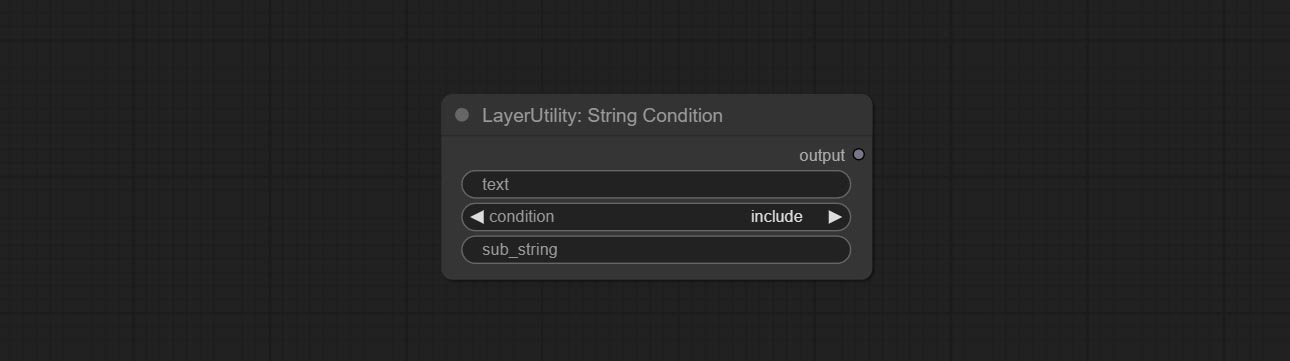

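Commit the StringCondition node, which determines whether the text contains or does not contain a substring.

Commit the NumberCalculatorV2 node, which adds the nth root operation. Commit the BooleanOperatorV2 node, which adds greater than, less than, greater than or equal to, and less than or equal to logical judgments. Both nodes can accept numeric inputs and also allow numeric values to be entered within the node. Note: the numeric input takes priority. When an input is connected, the value in the node is ignored.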
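The two behaviors described above, the nth root and the priority of a connected input over the value typed into the node, can be illustrated with a short sketch. The function names and the use of `None` to represent an unconnected input are assumptions for illustration, not the plugin's code.

```python
def nth_root(value, n):
    """Compute the real nth root of a non-negative number."""
    if n == 0:
        raise ValueError("the root degree must not be zero")
    if value < 0:
        raise ValueError("this sketch only handles non-negative values")
    return value ** (1.0 / n)


def resolve_input(connected_value, widget_value):
    """A connected input wins; the value entered in the node is only a fallback."""
    return widget_value if connected_value is None else connected_value
```

Because the result is a floating-point number, compare it with a tolerance rather than with exact equality.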

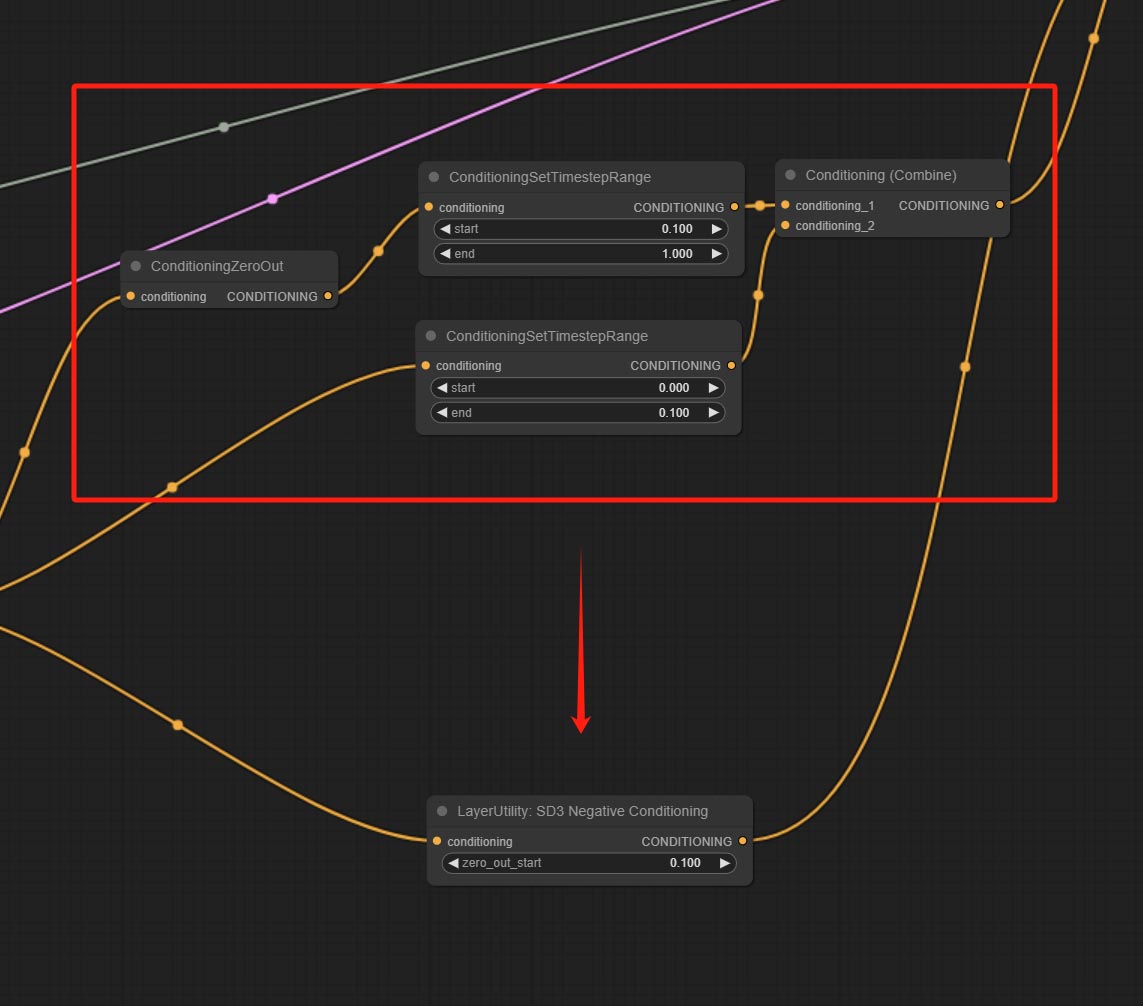

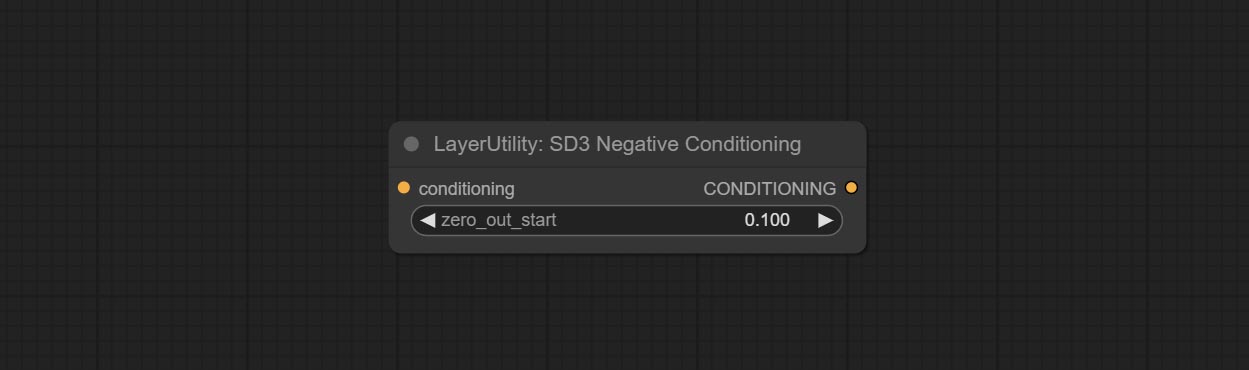

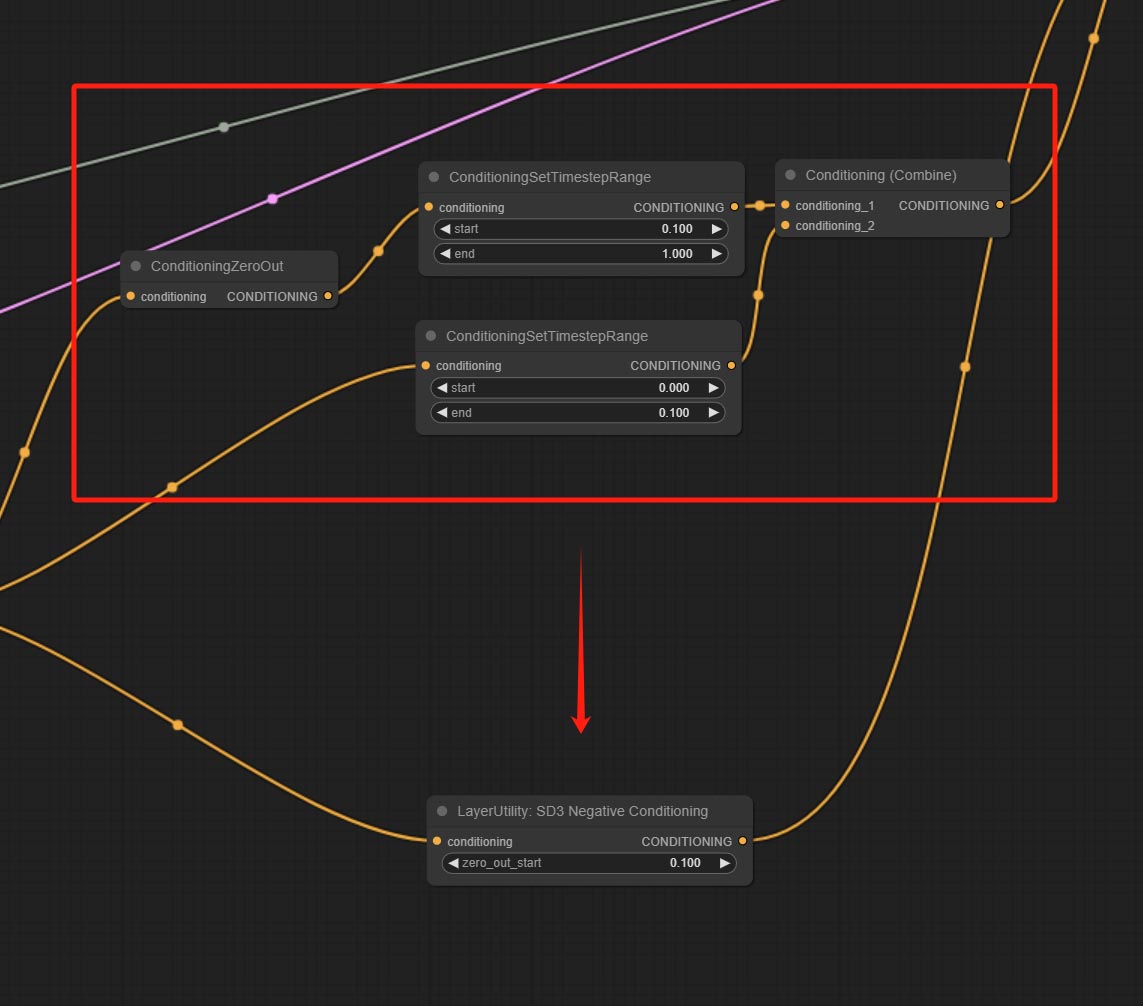

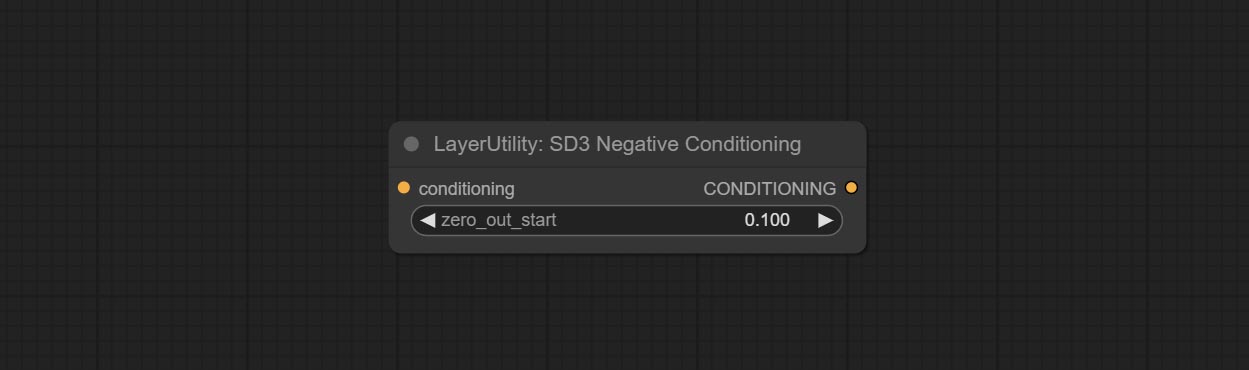

Commit the SD3NegativeConditioning node, which encapsulates the four nodes of SD3's negative conditioning into a single node.

The ImageRemoveAlpha node adds an optional mask input.

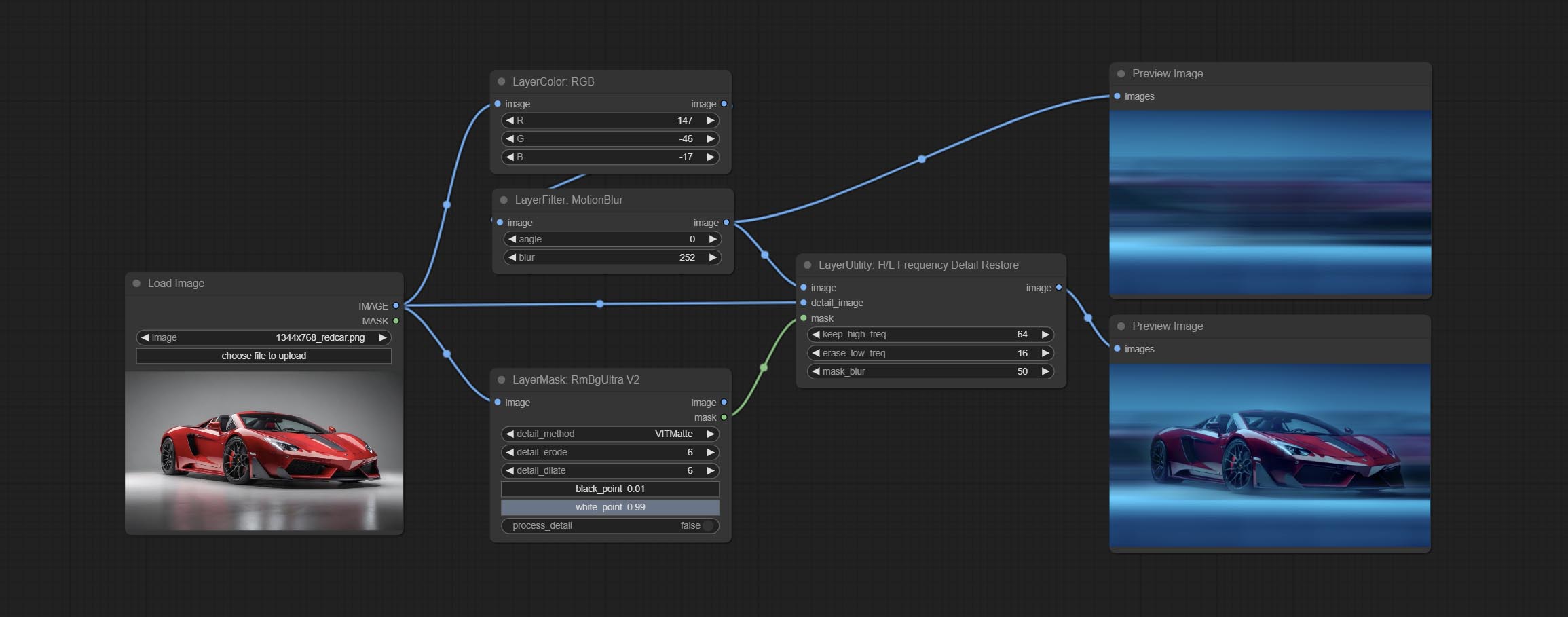

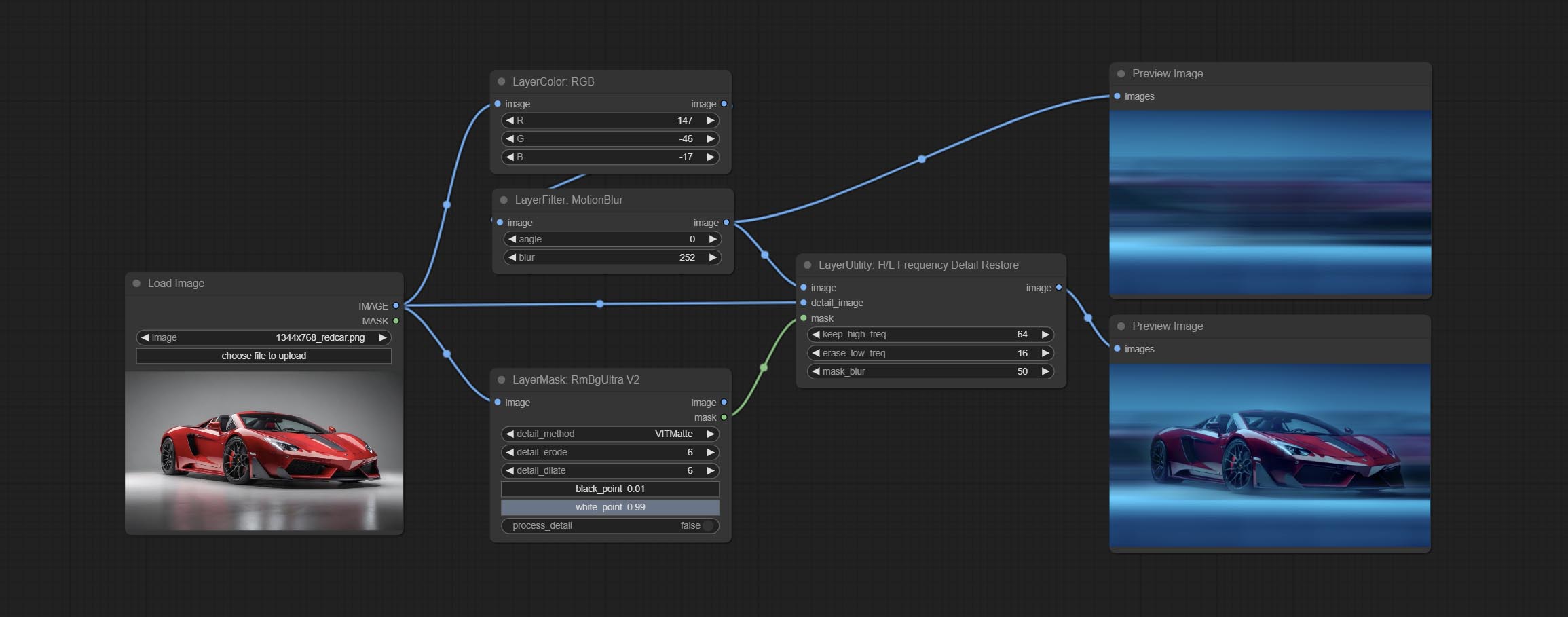

Commit the HLFrequencyDetailRestore node, which uses low-frequency filtering and high-frequency preservation to restore image details, producing better fusion.

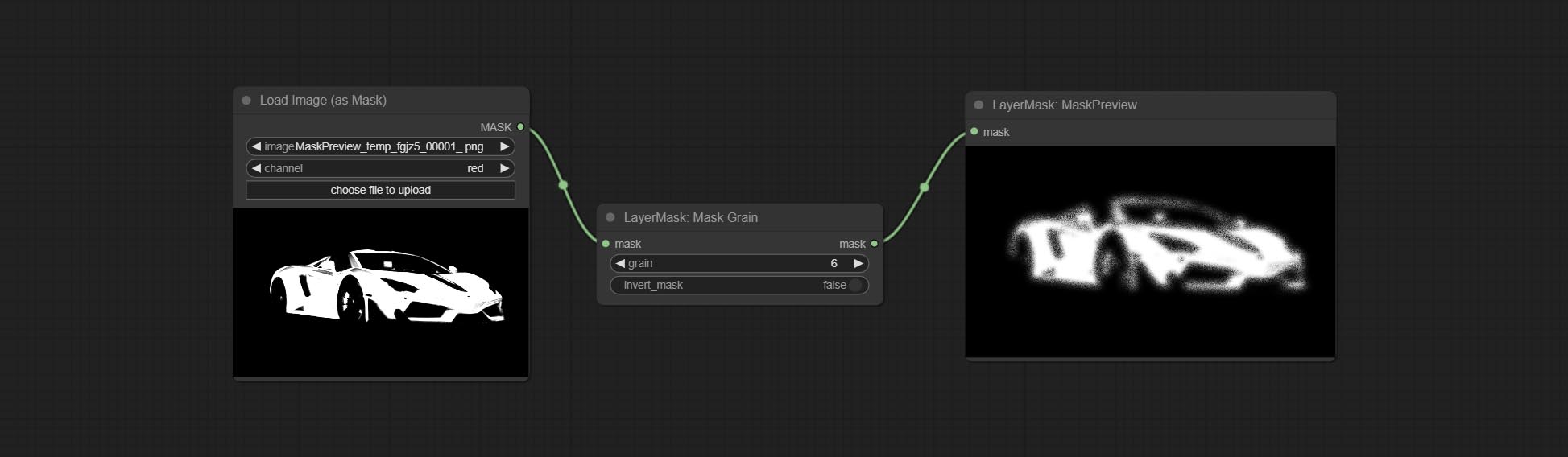

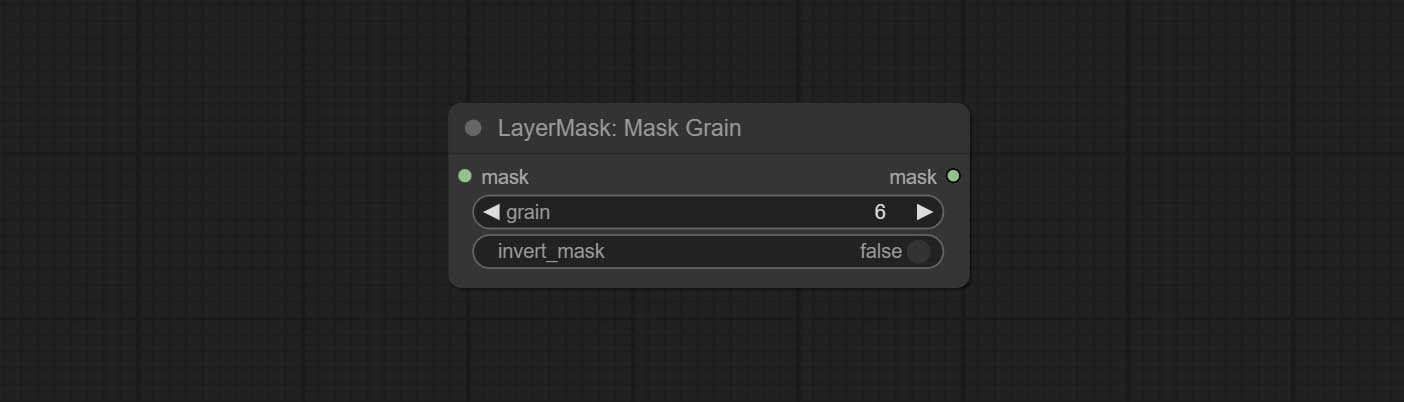

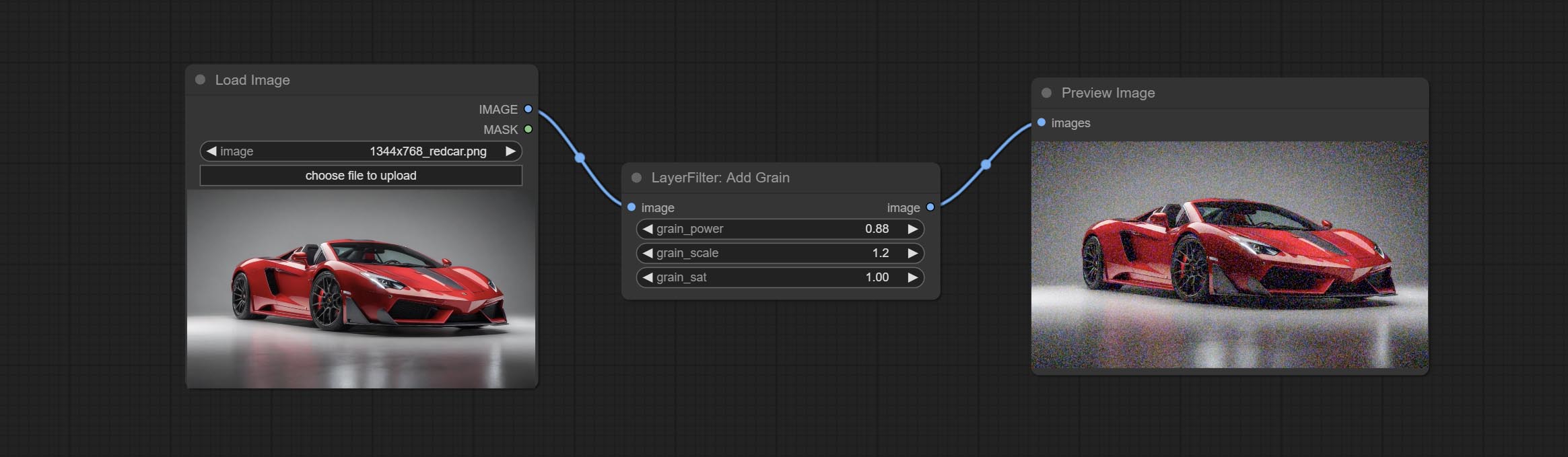

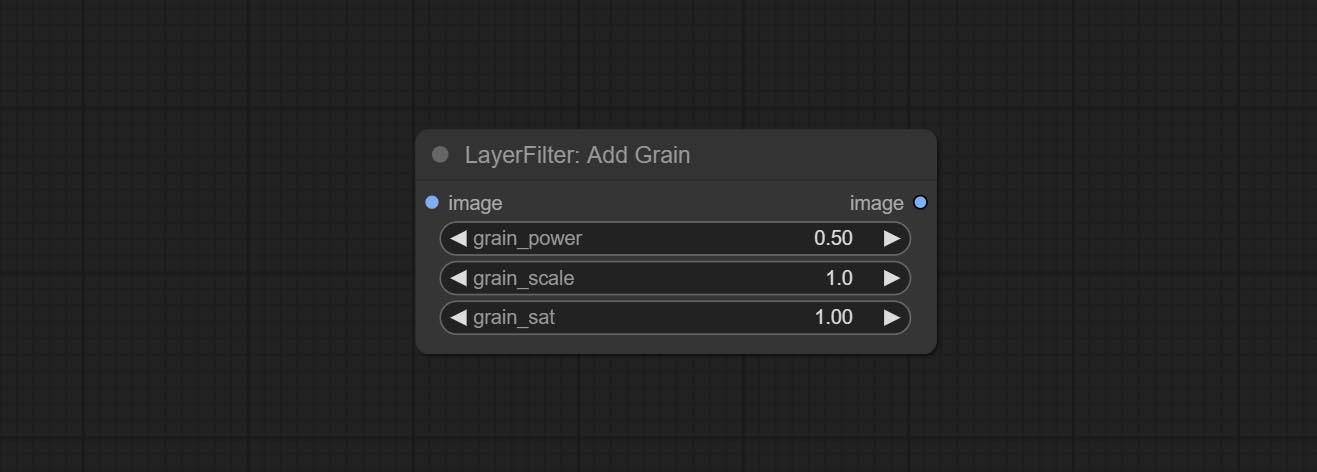

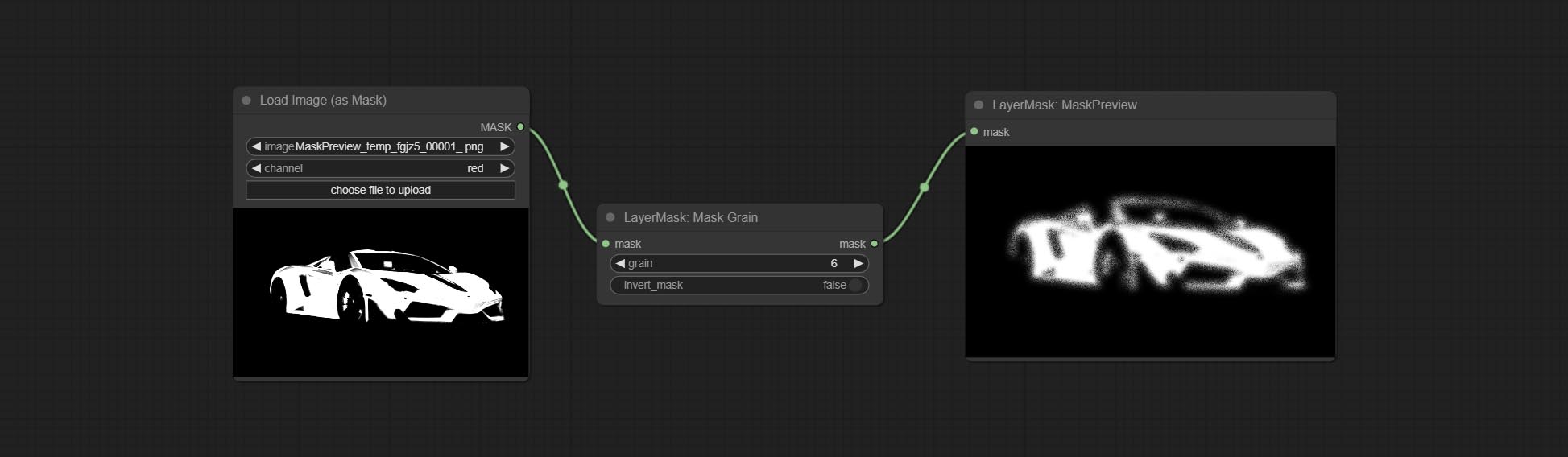

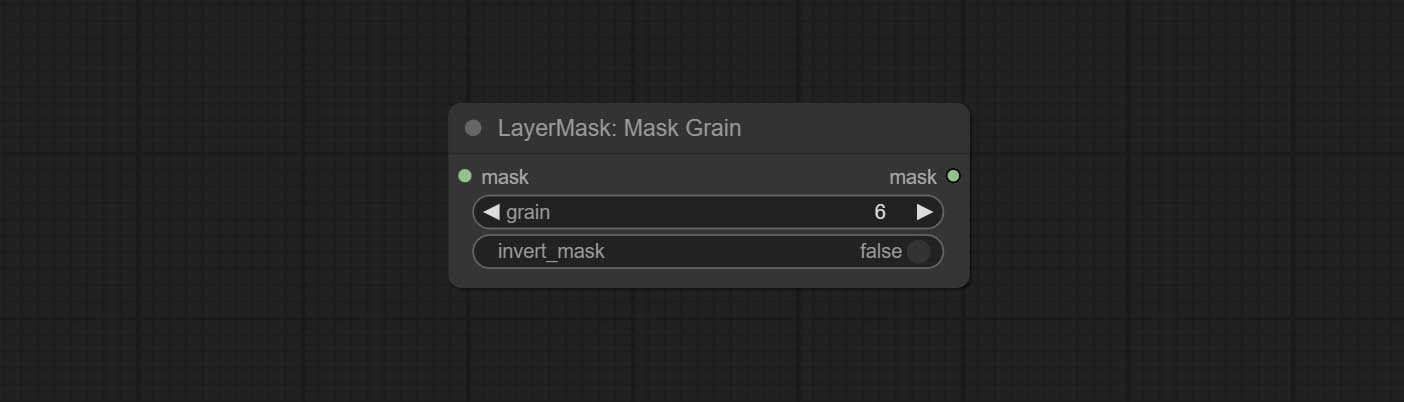

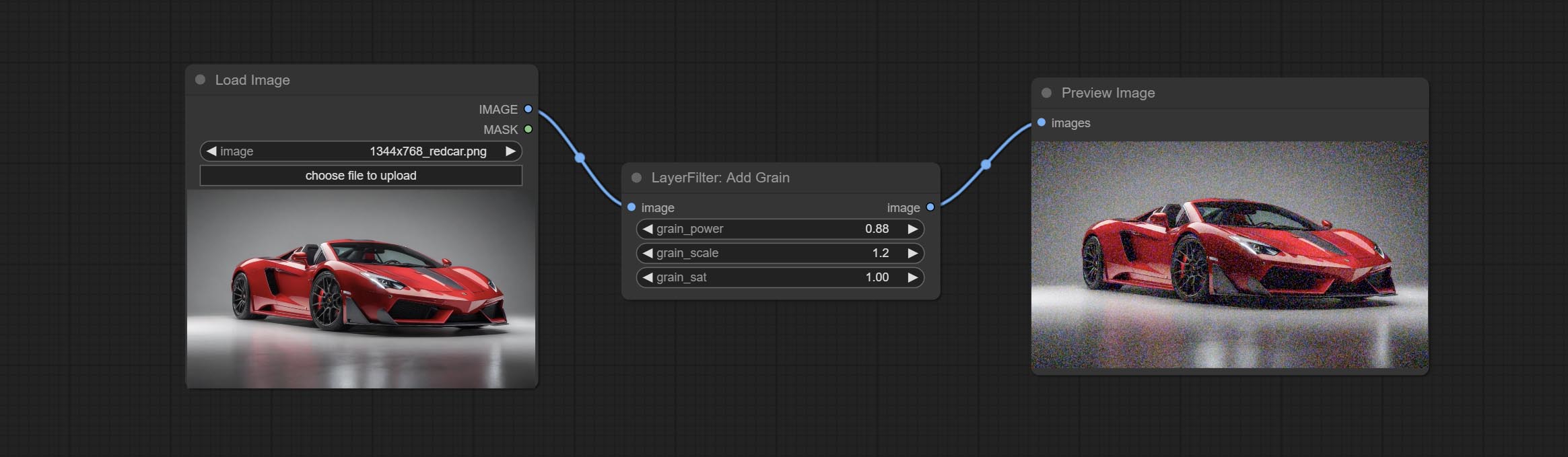

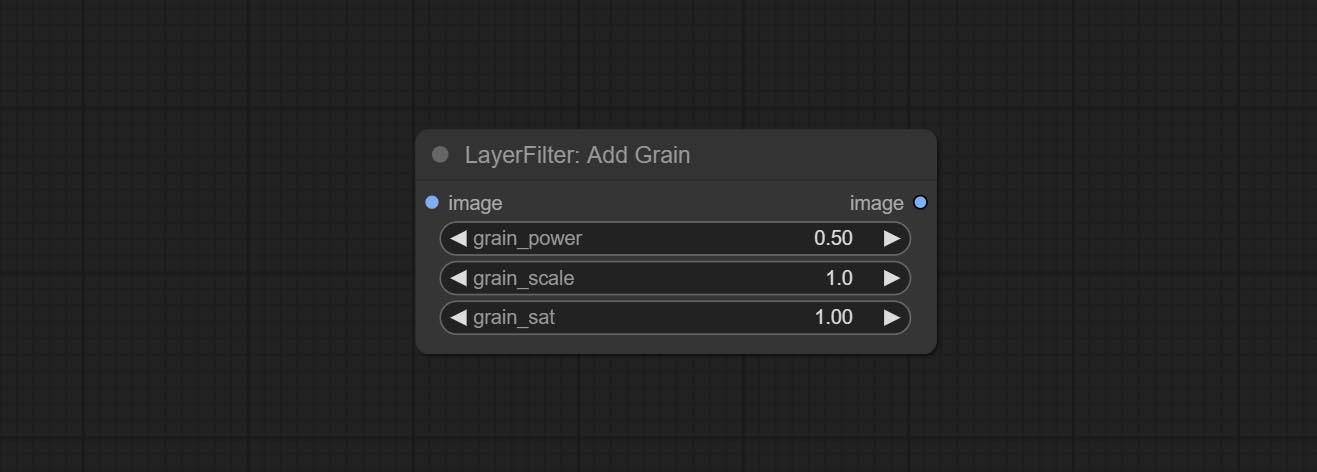

Commit the AddGrain and MaskGrain nodes, which add noise to an image or mask.

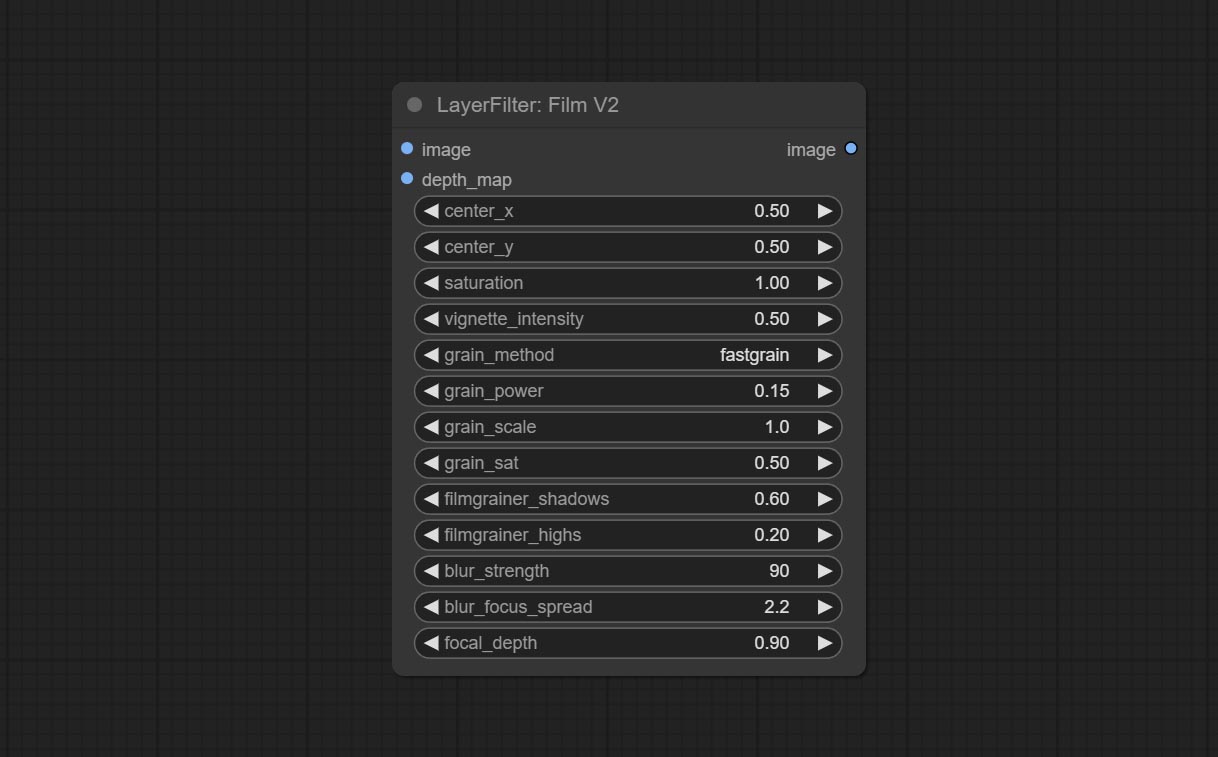

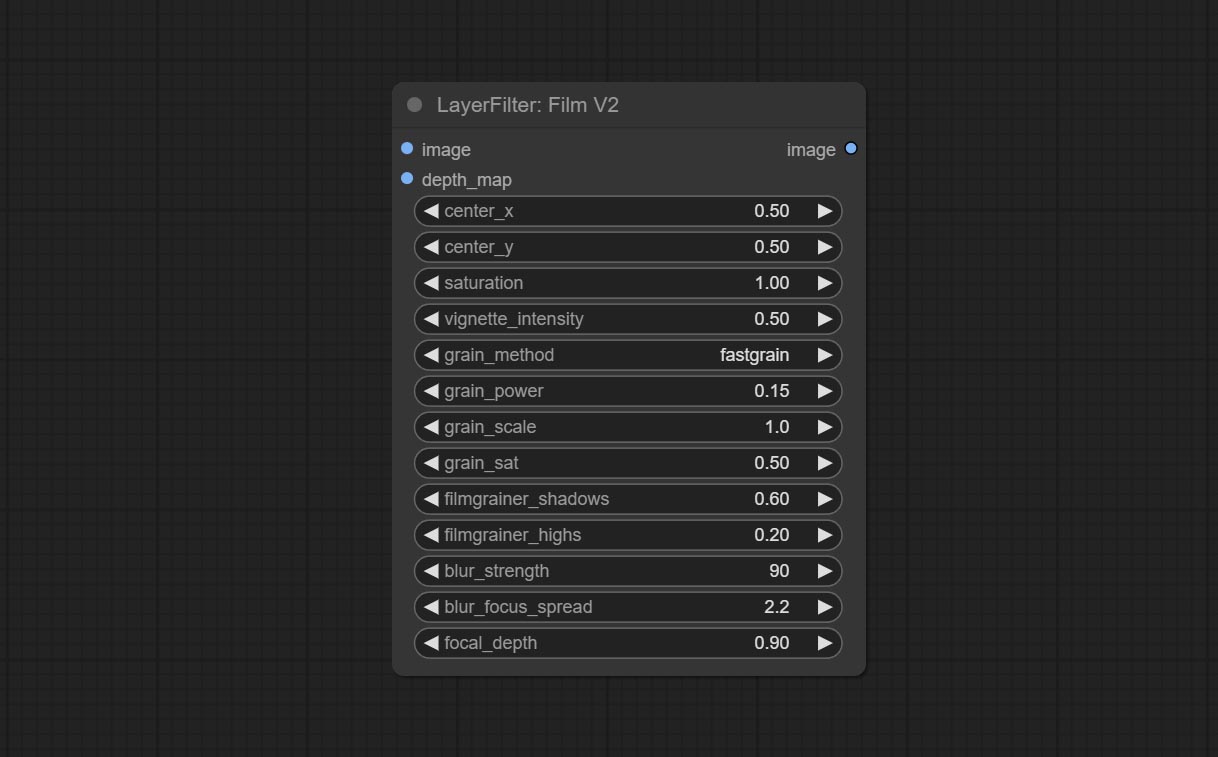

Commit the FilmV2 node, which adds the fastgrain method on top of the previous version; noise generation is 10 times faster.

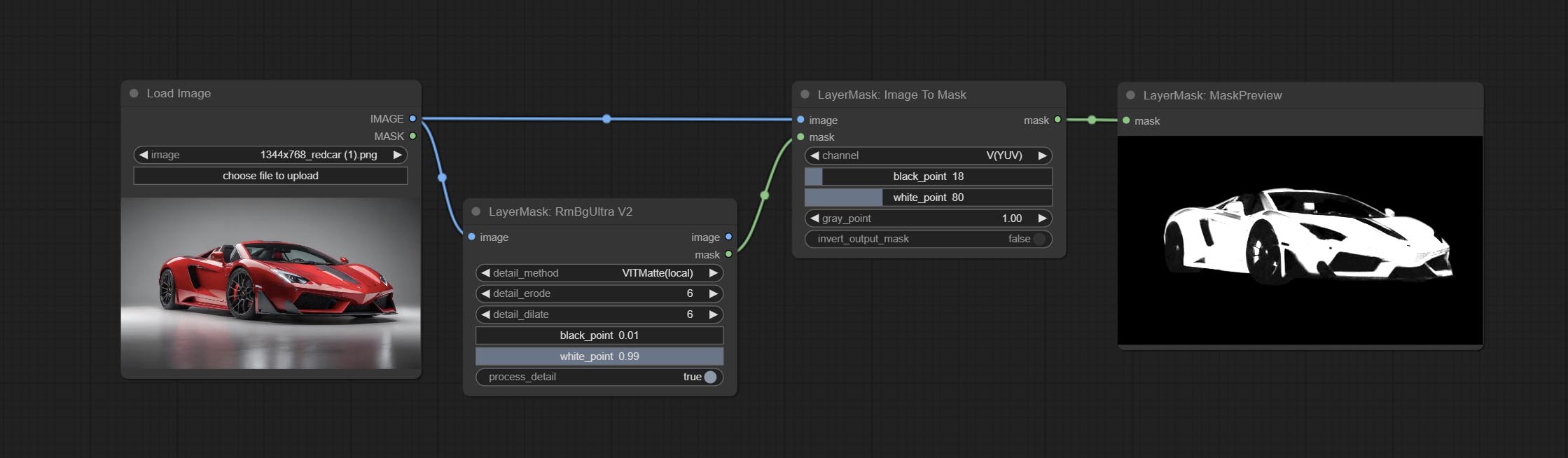

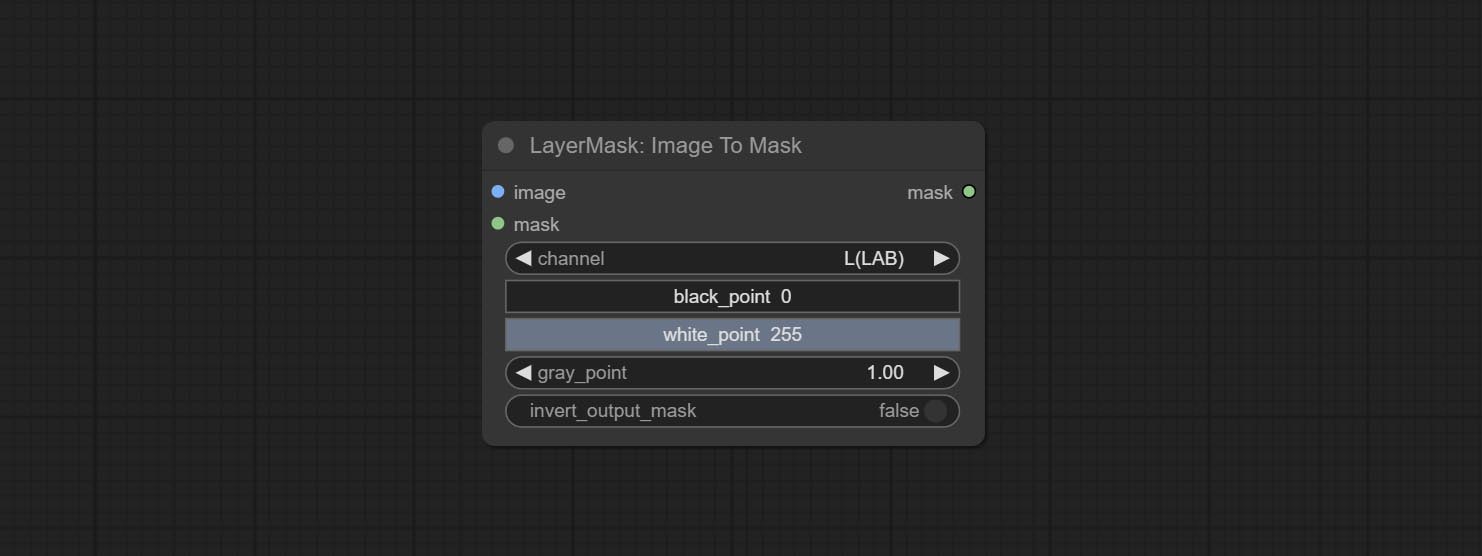

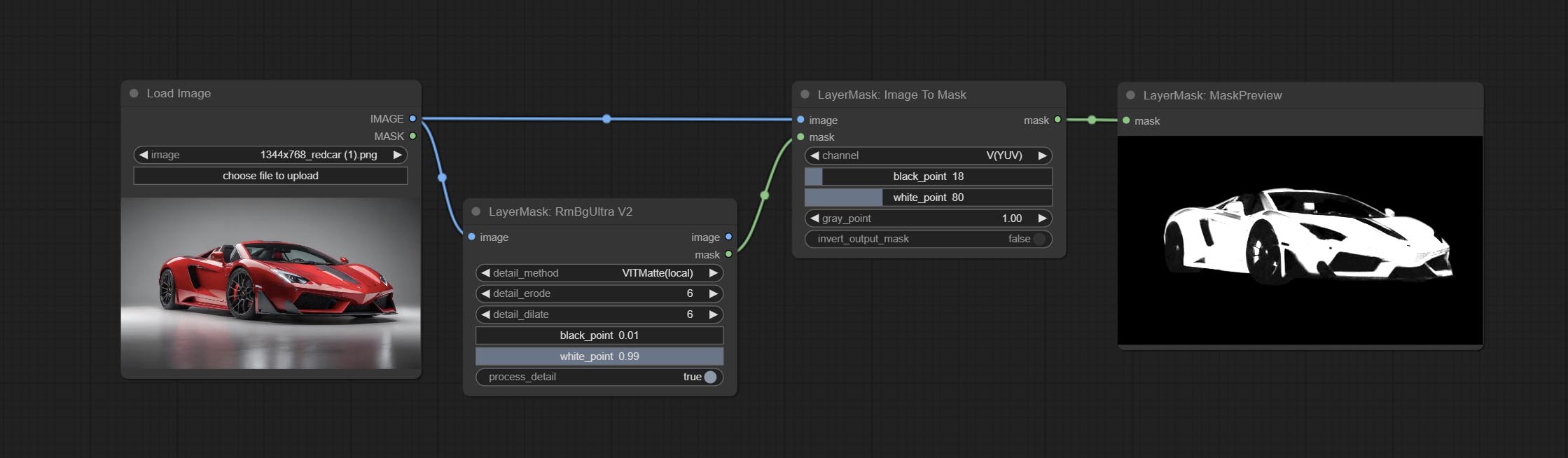

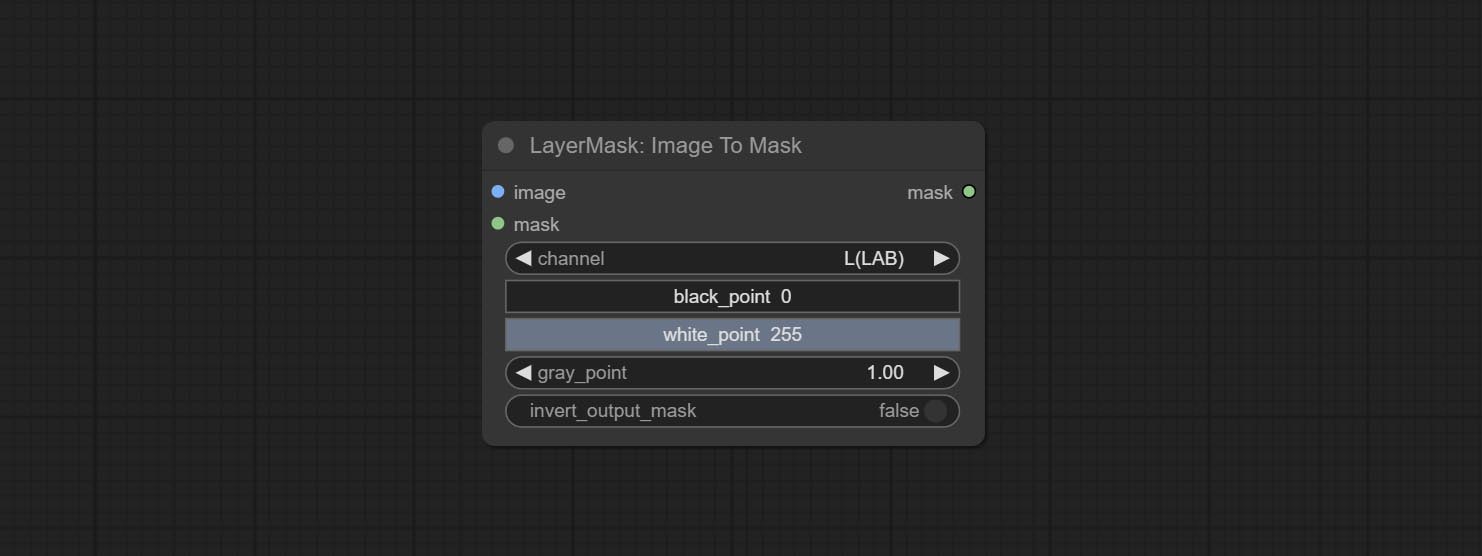

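Commit the ImageToMask node, which converts an image to a mask. It supports converting any channel of the LAB, RGBA, YUV, and HSV modes into a mask, and provides color scale adjustment. It supports an optional mask input to obtain a mask that contains only the valid parts.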

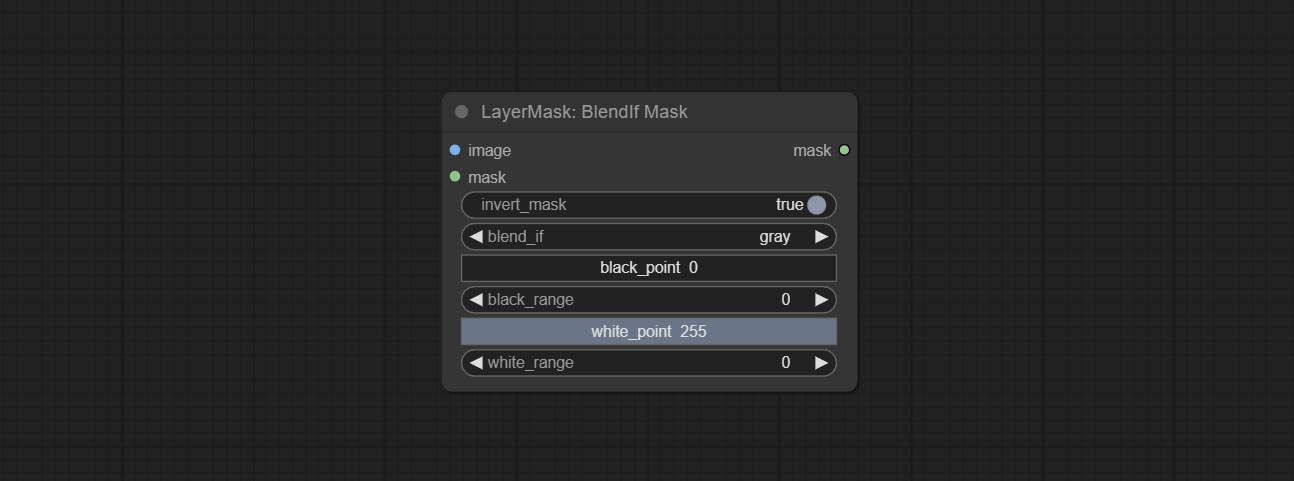

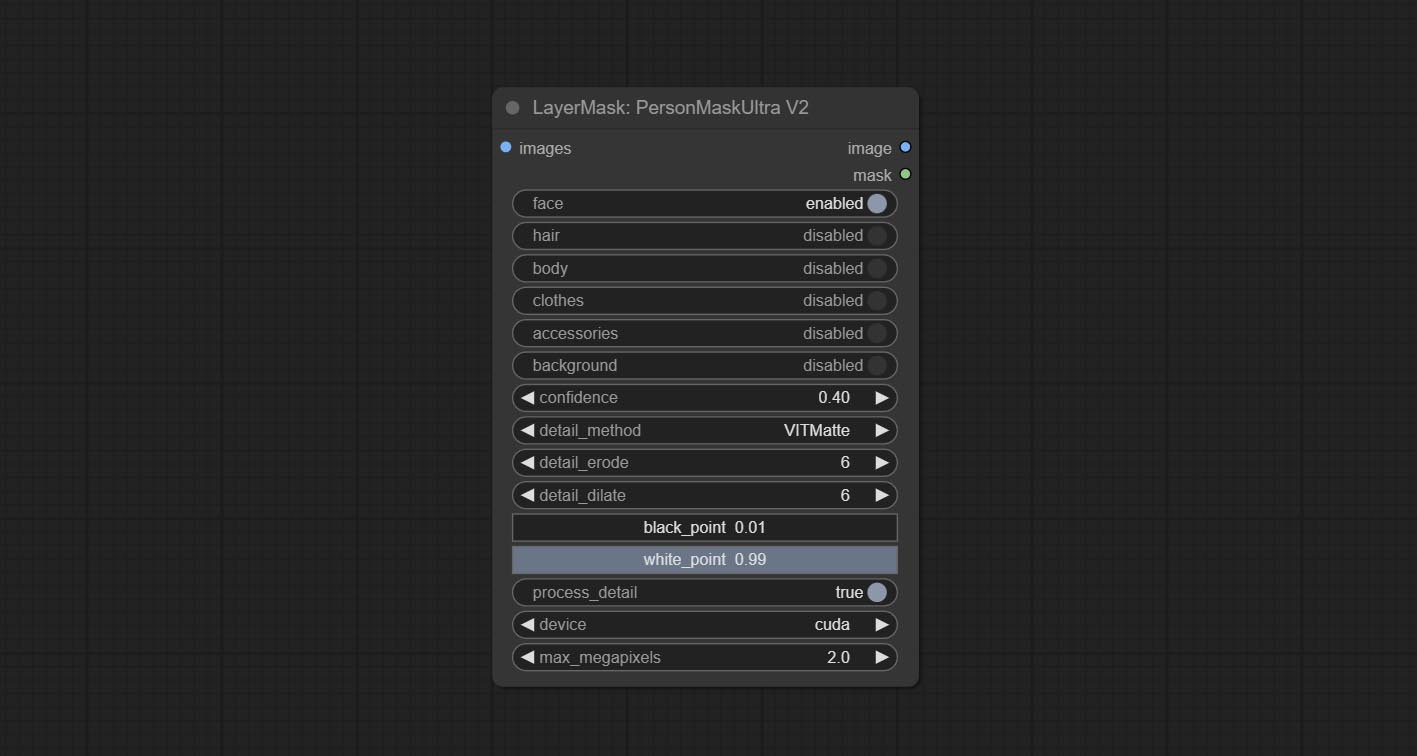

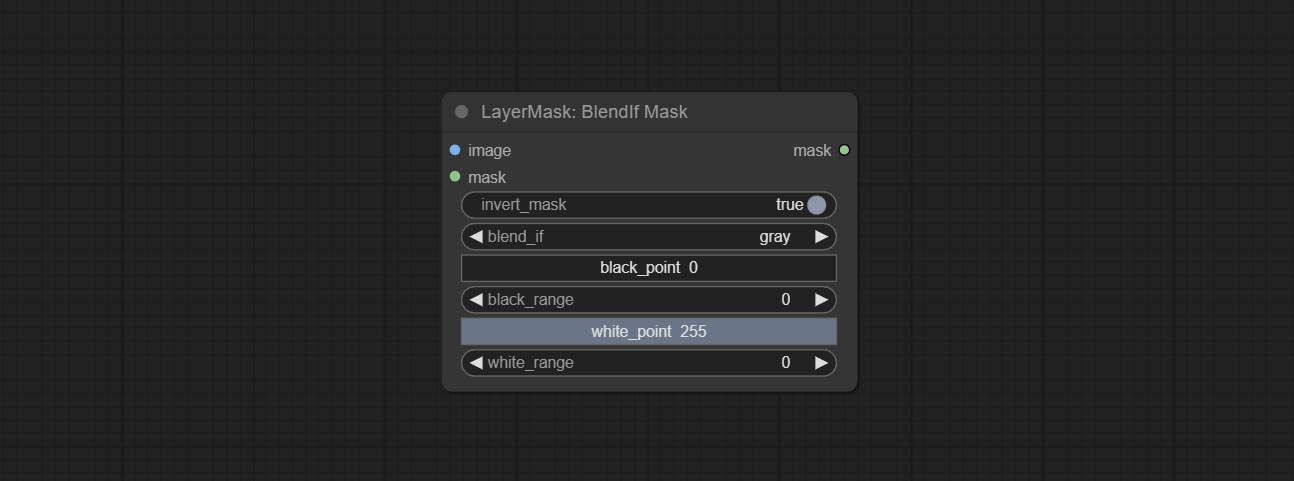

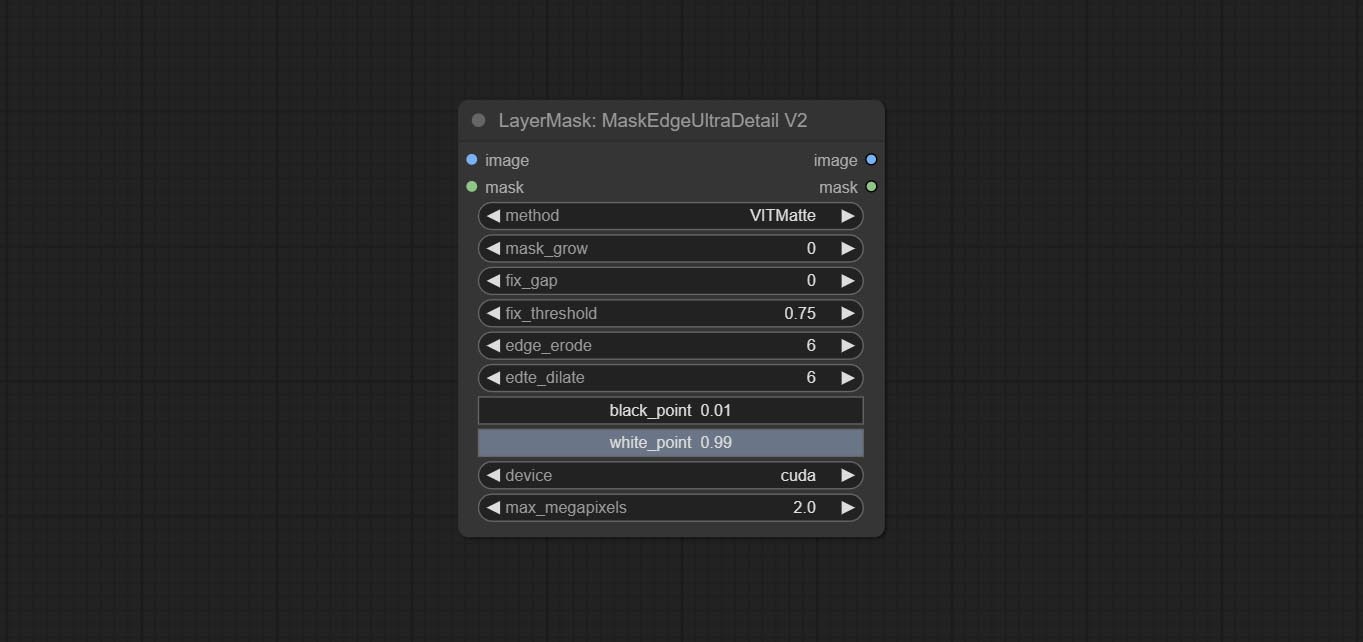

The blackpoint and whitepoint options in some nodes have been changed to slider adjustment for a more intuitive display. This includes MaskEdgeUltraDetailV2, SegmentAnythingUltraV2, RmBgUltraV2, PersonMaskUltraV2, BiRefNetUltra, SegformerB2ClothesUltra, BlendIfMask, and Levels.

The ImageScaleRestoreV2 and ImageScaleByAspectRatioV2 nodes add the total_pixel method for scaling images.
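Scaling by total pixel count means choosing a width and height that keep the aspect ratio while their product lands near a target. The sketch below shows the arithmetic; the function name is hypothetical, and it assumes the target is given as a plain pixel count, which may differ from the unit the node uses.

```python
import math


def size_for_total_pixels(aspect_ratio, total_pixels):
    """Return (width, height) with width / height ~= aspect_ratio and width * height ~= total_pixels."""
    if aspect_ratio <= 0 or total_pixels <= 0:
        raise ValueError("aspect_ratio and total_pixels must be positive")
    # From w * h = total and w / h = ratio: w = sqrt(total * ratio), h = sqrt(total / ratio).
    width = round(math.sqrt(total_pixels * aspect_ratio))
    height = round(math.sqrt(total_pixels / aspect_ratio))
    return width, height
```

A square image with a target of 1,048,576 pixels therefore becomes 1024 by 1024.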

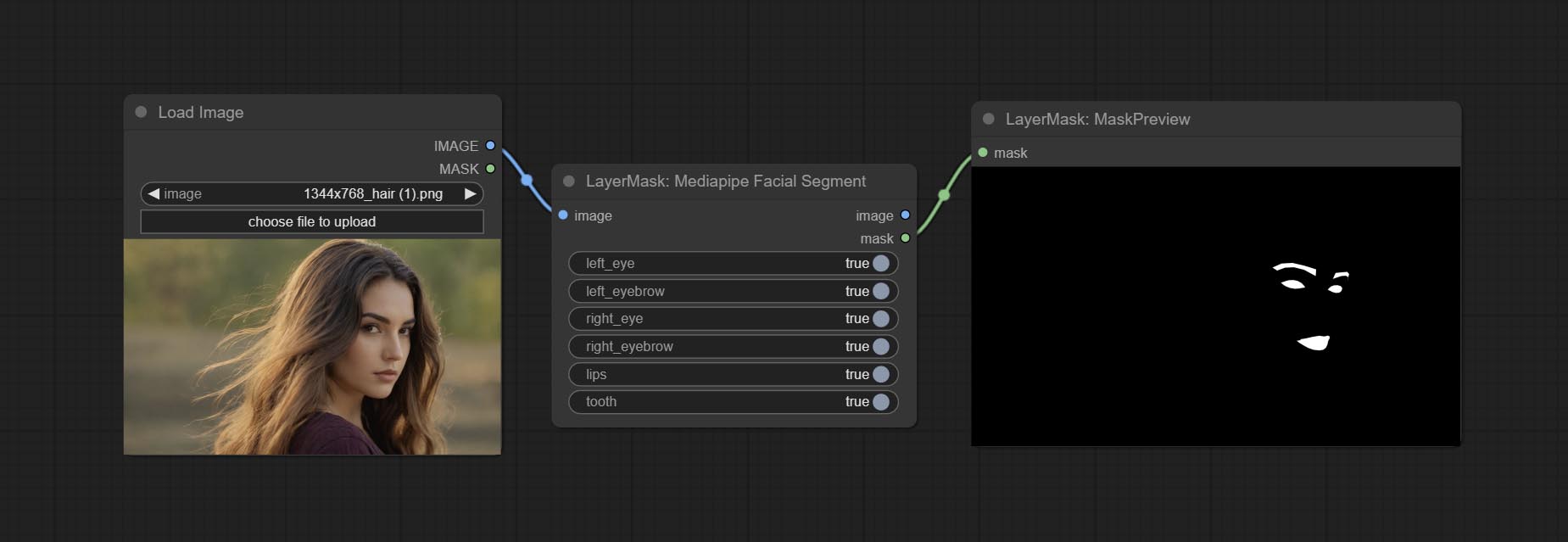

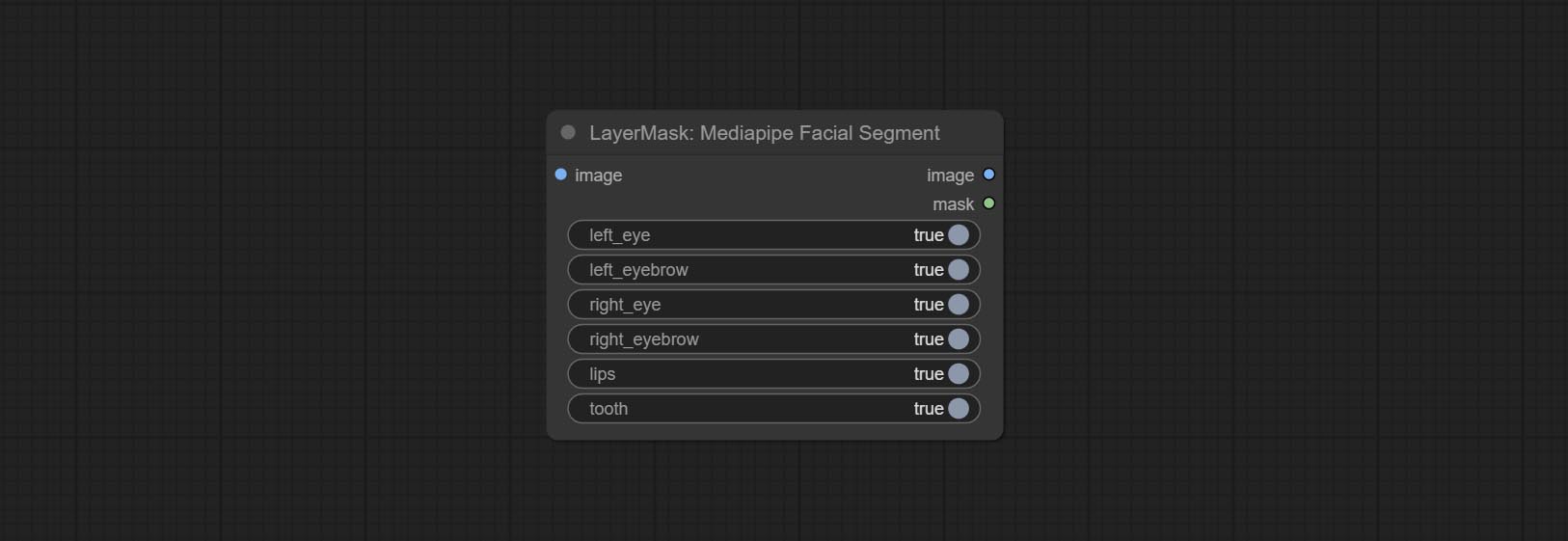

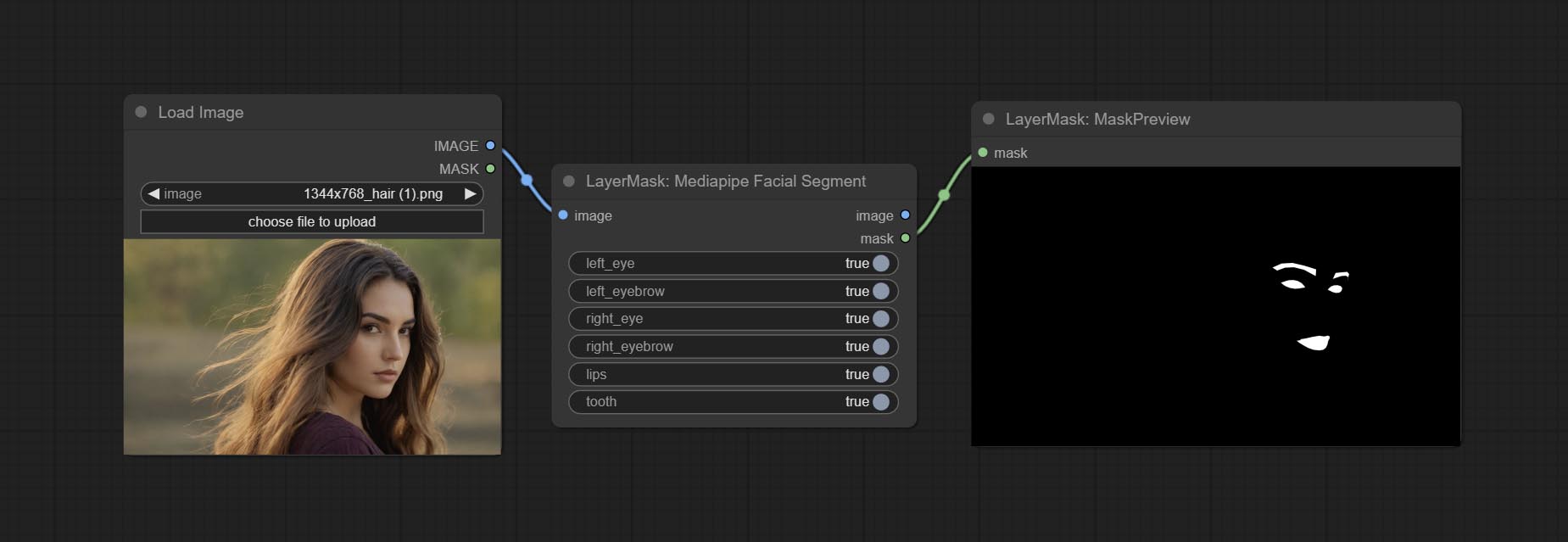

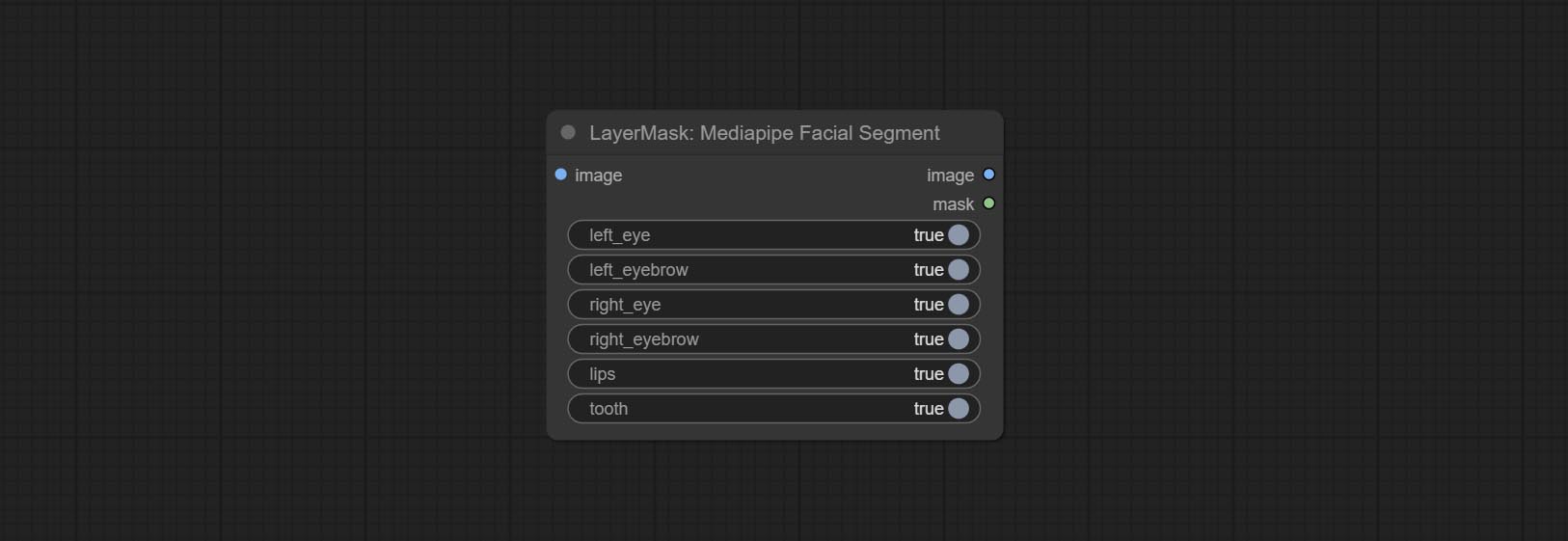

Commit the MediapipeFacialSegment node, used to segment facial features, including the left and right eyebrows, eyes, lips, and teeth.

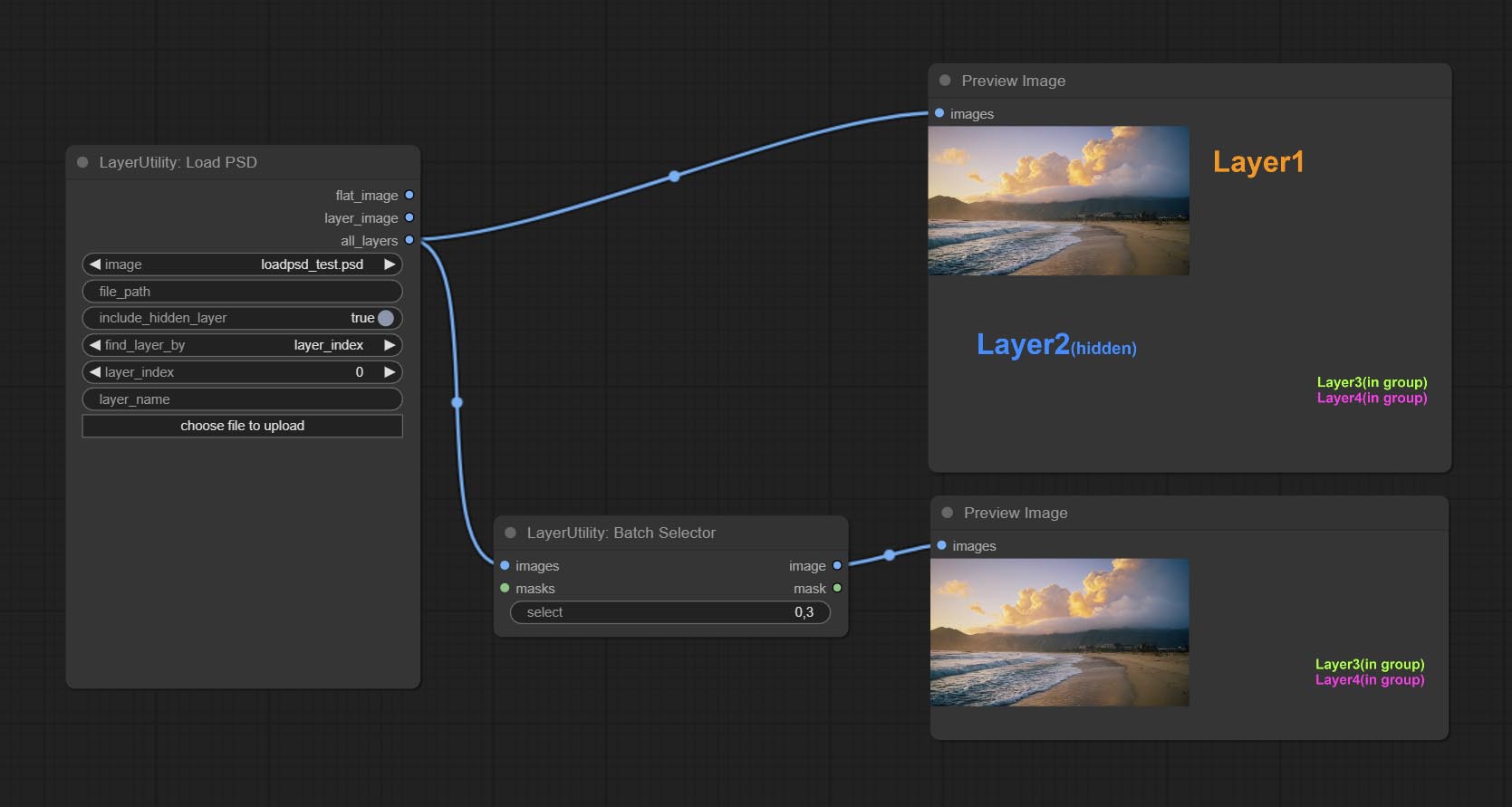

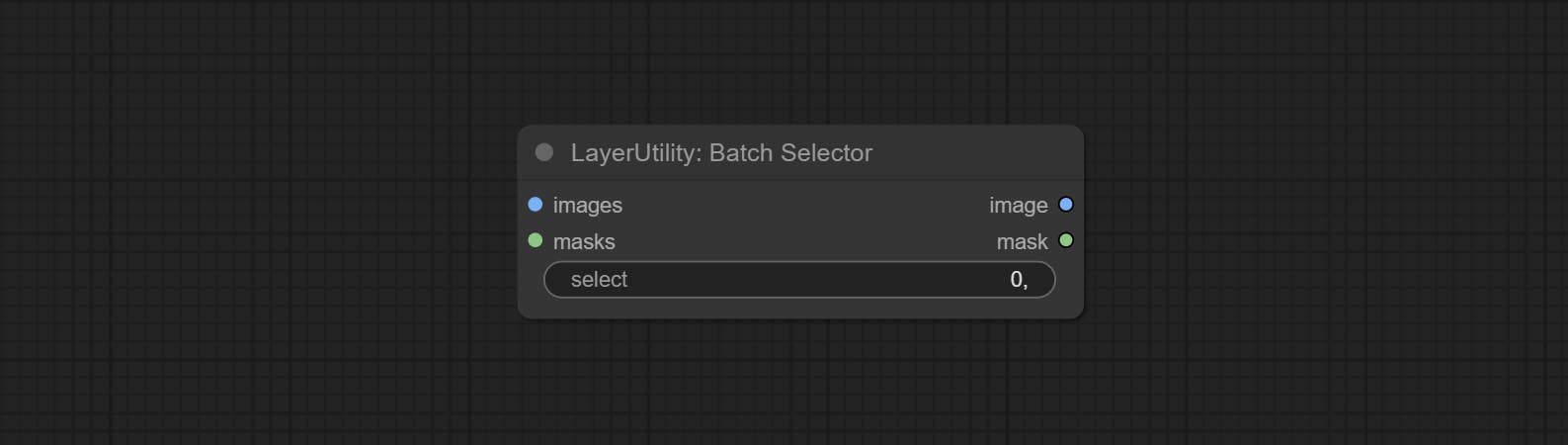

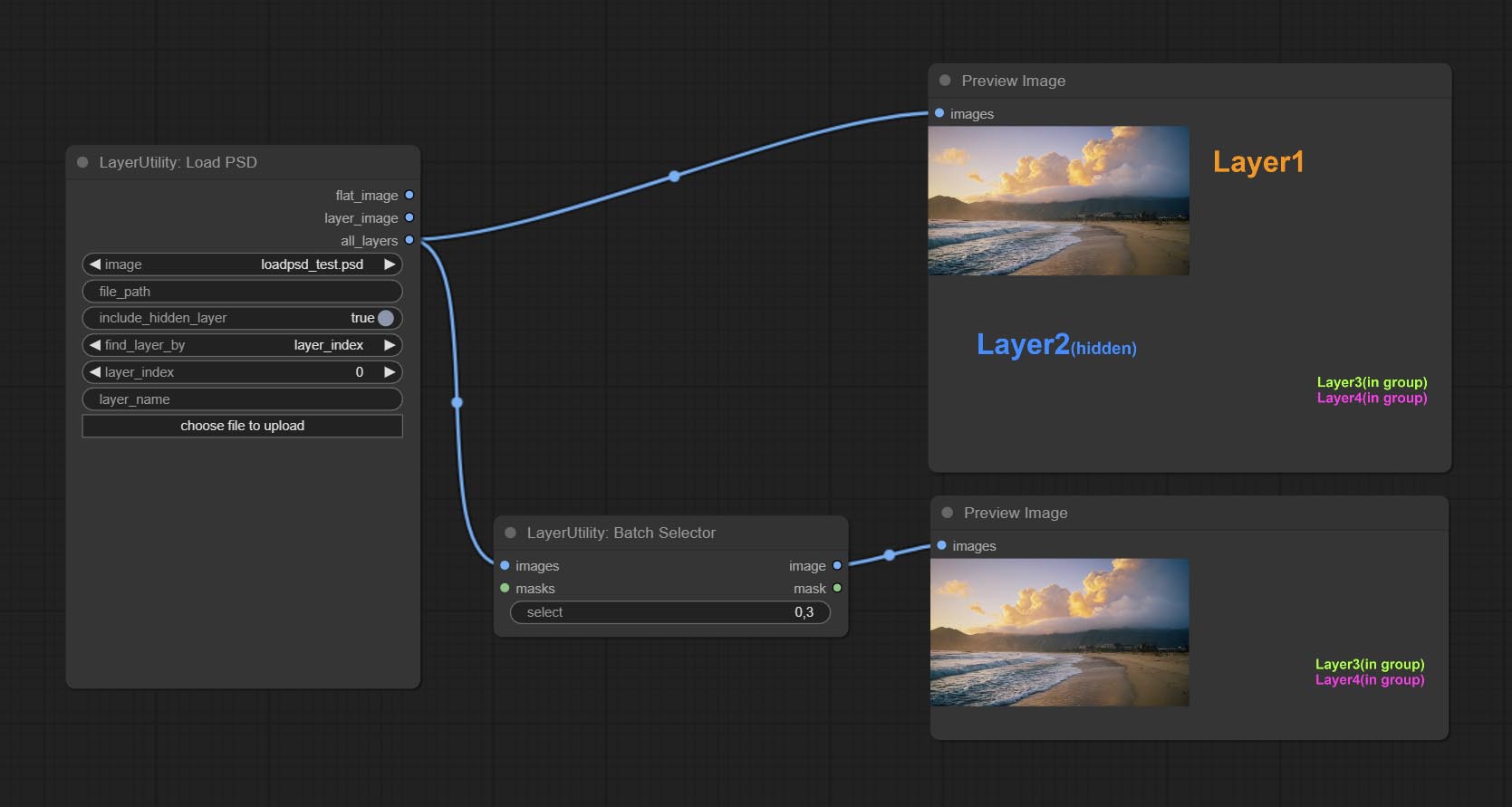

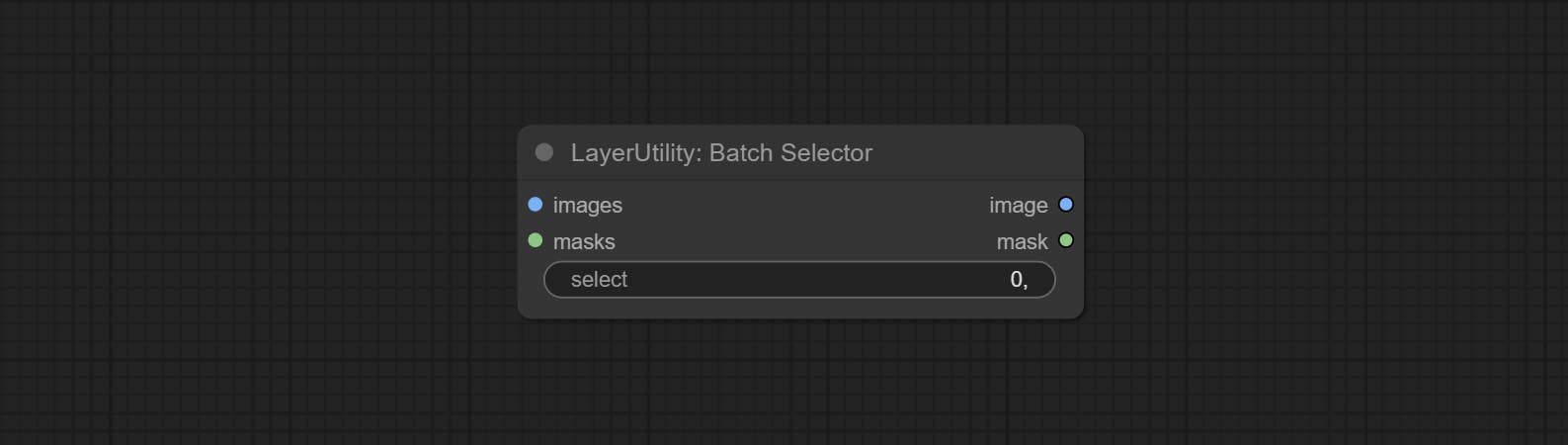

Commit the BatchSelector node, used to retrieve specified images or masks from a batch of images or masks.

LayerUtility creates new subdirectories such as SystemIO, Data, and Prompt. Some nodes are classified into these subdirectories.

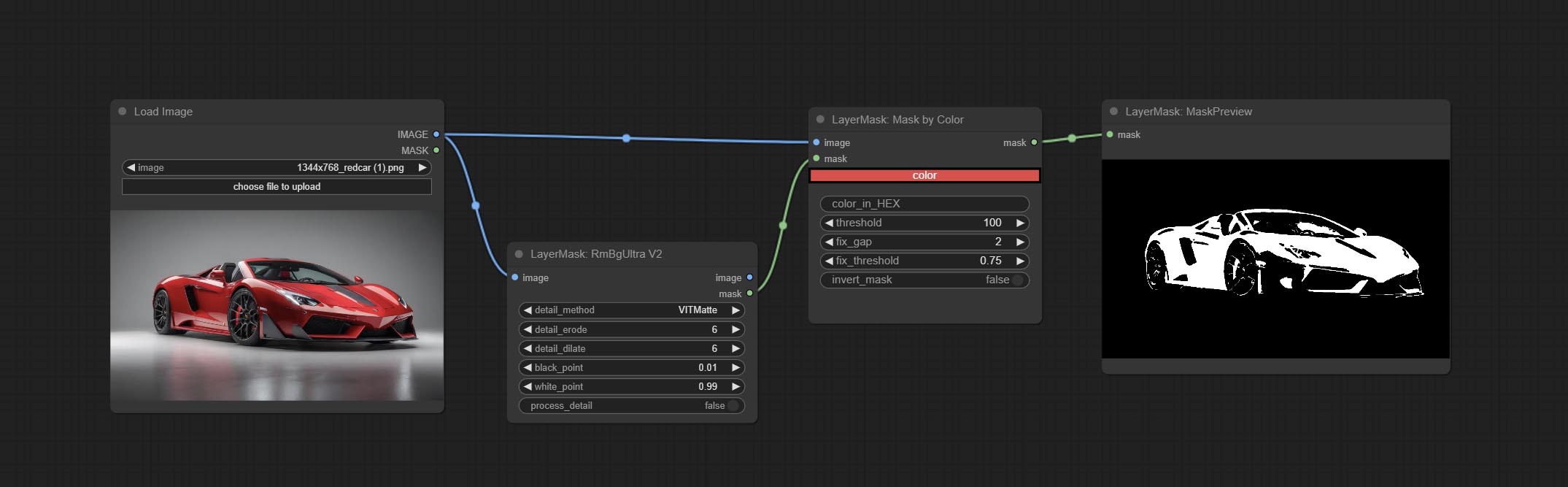

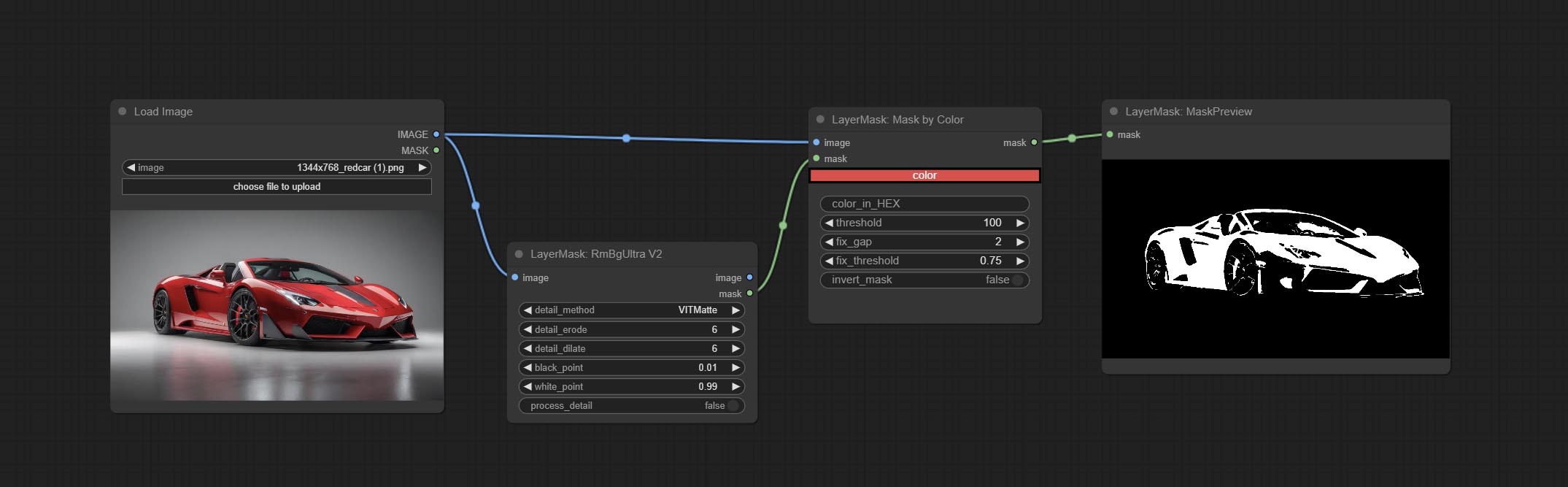

Commit the MaskByColor node, which generates a mask based on the selected color.

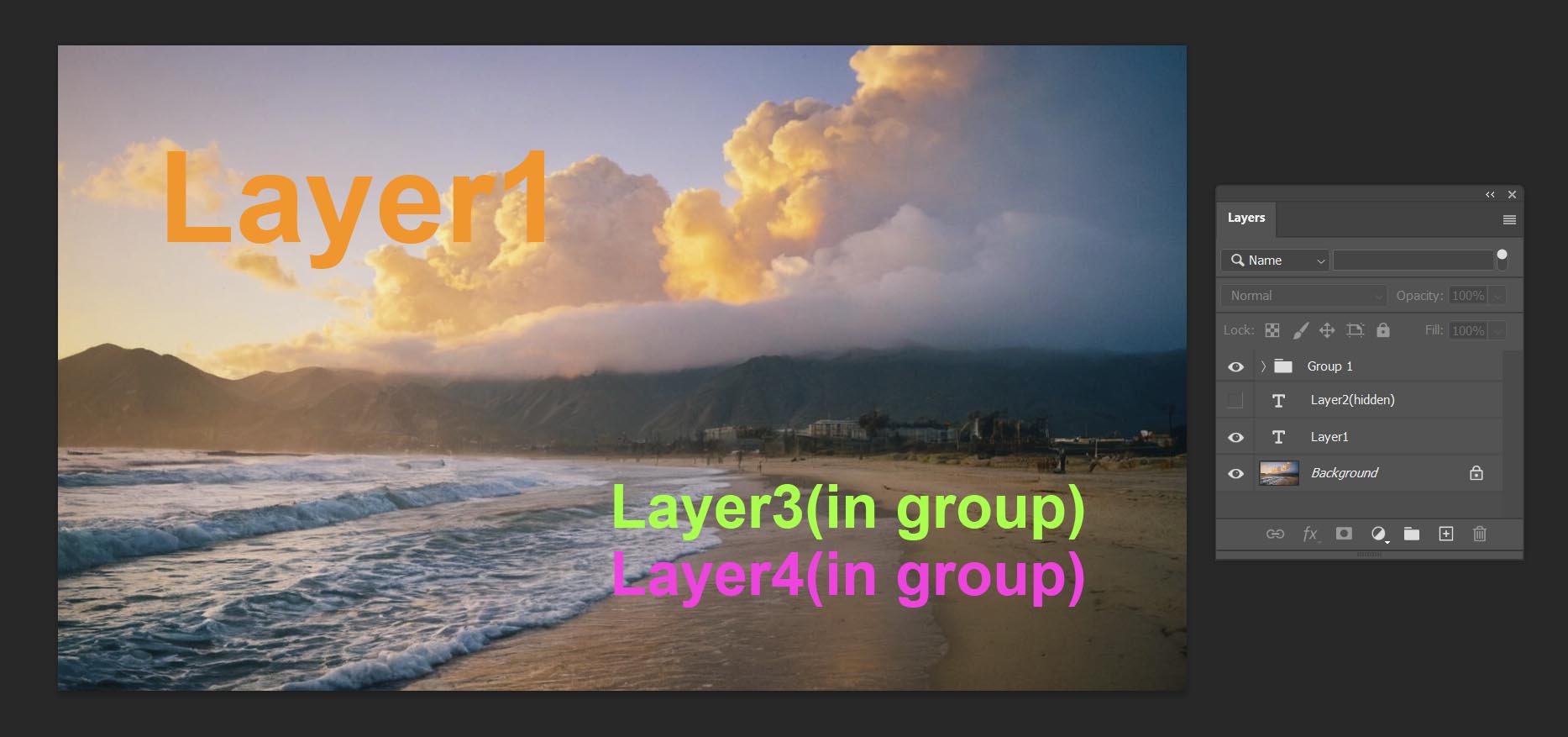

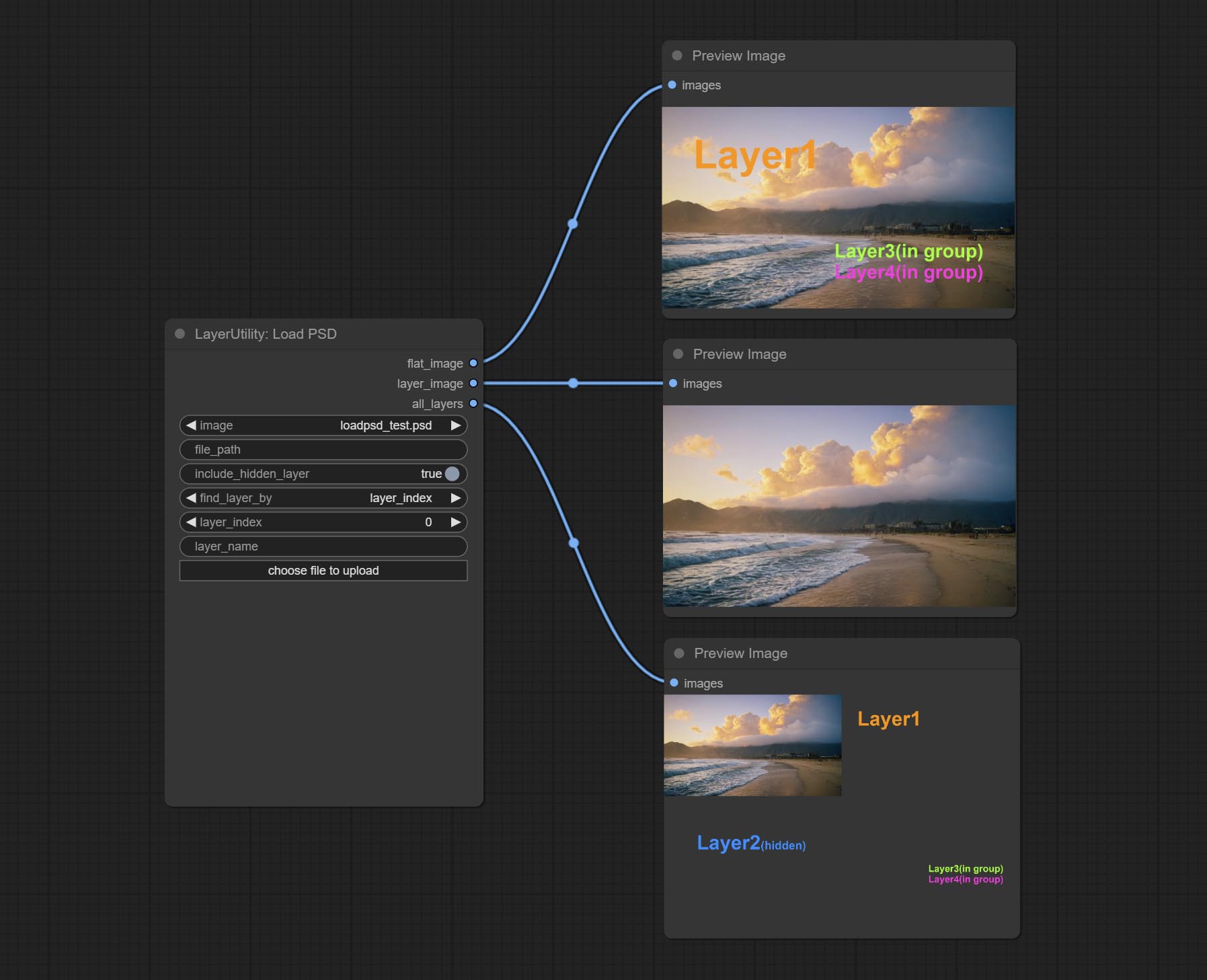

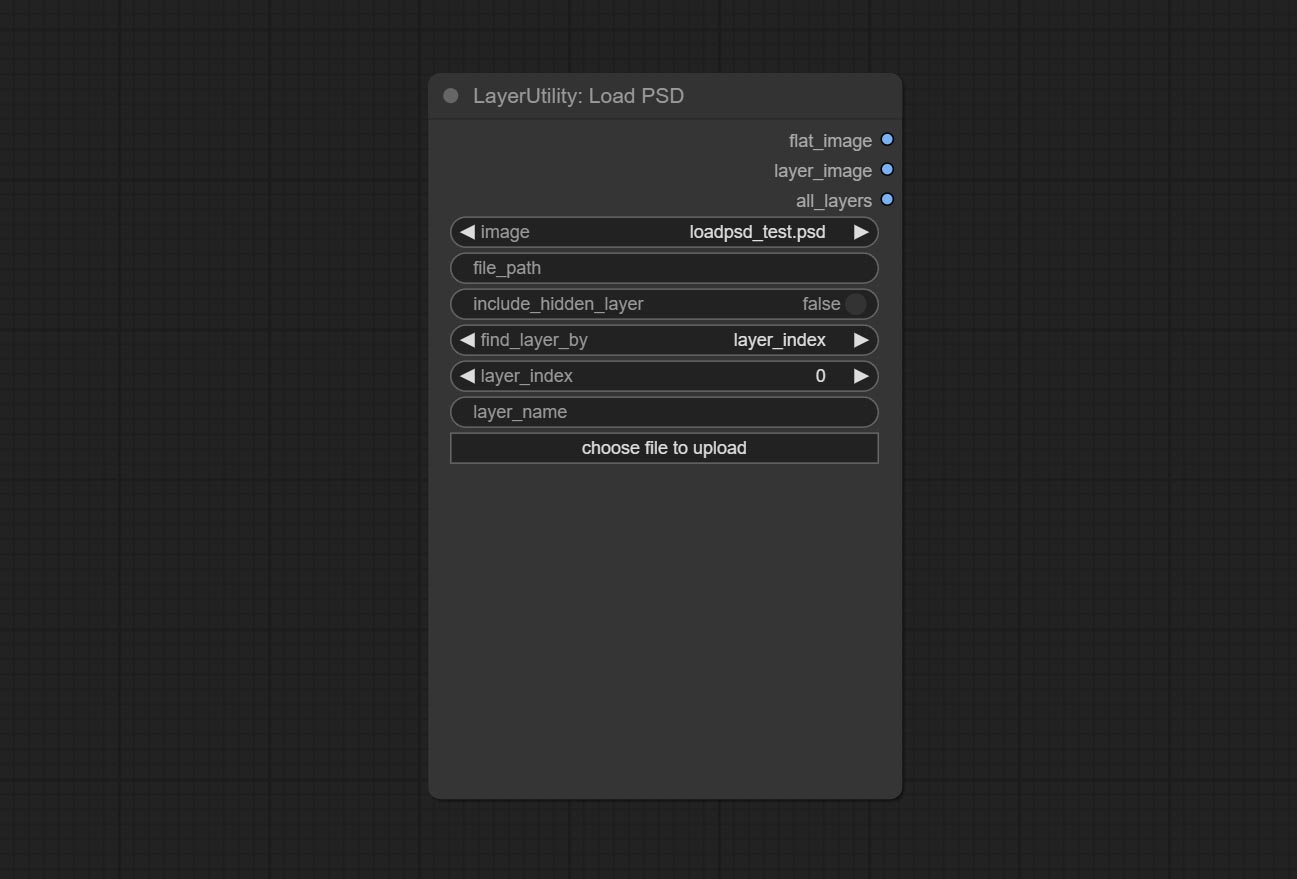

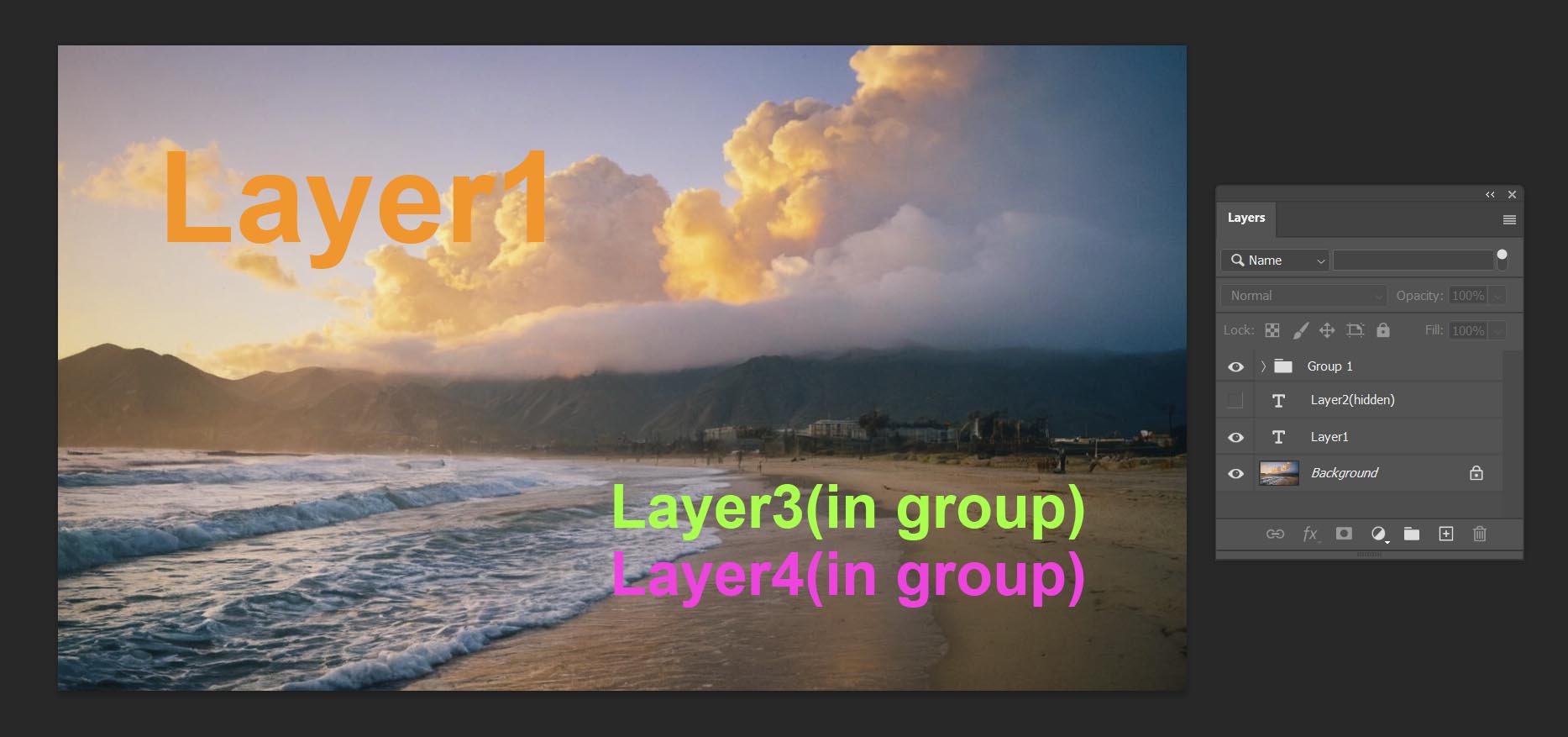

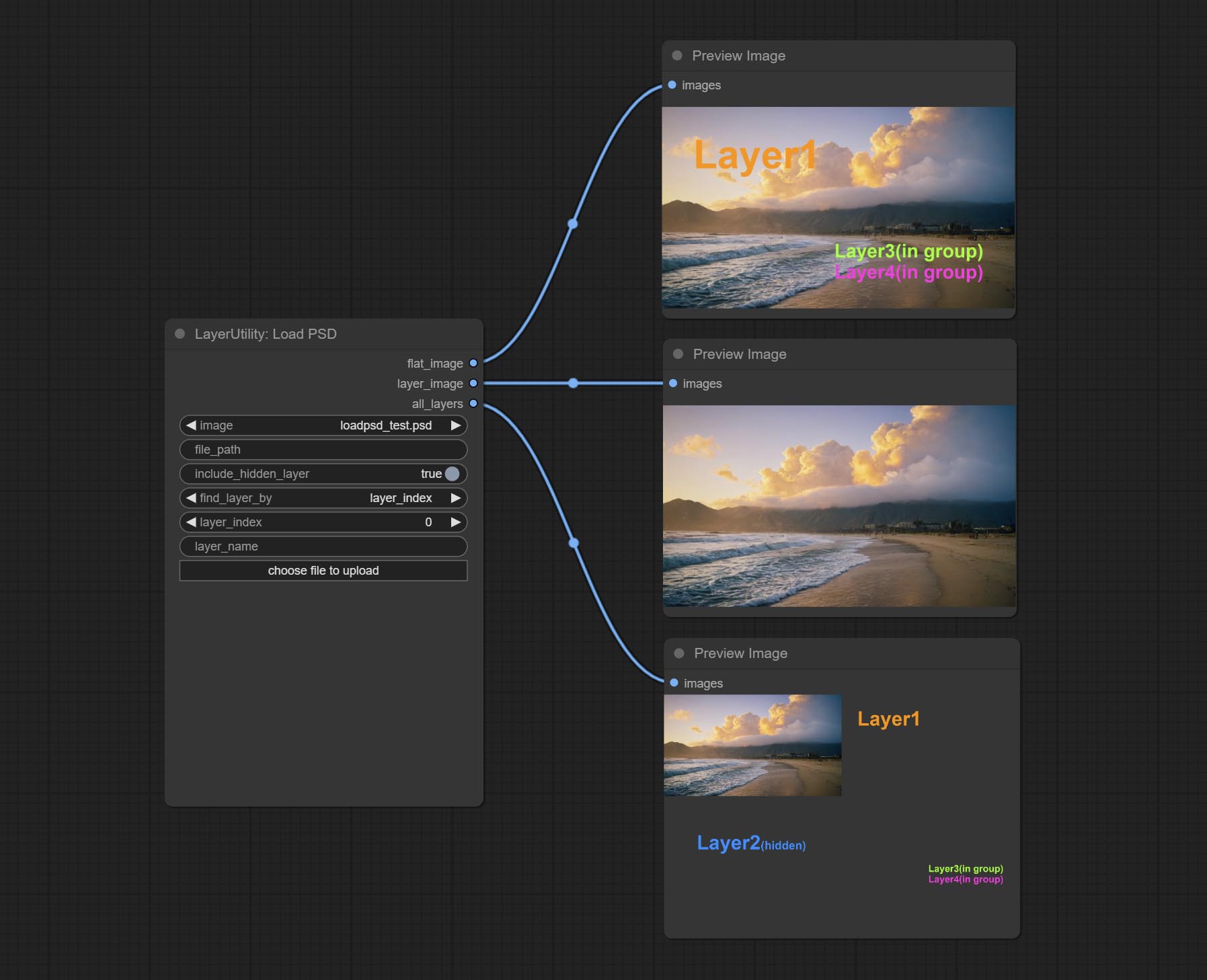

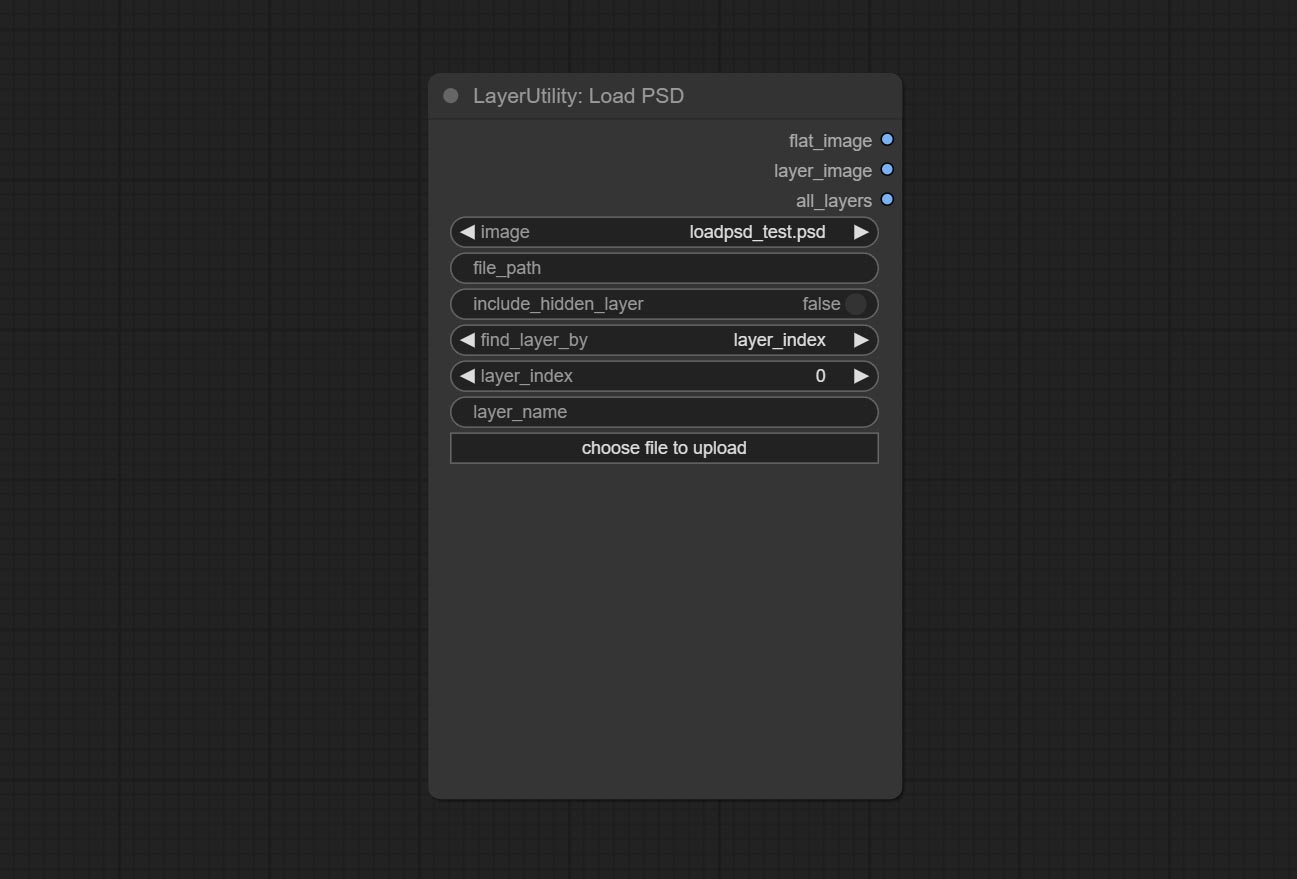

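Commit the LoadPSD node, which reads the PSD format and outputs layer images. This node requires the psd_tools dependency package to be installed. If an error such as ModuleNotFoundError: No module named 'docopt' occurs while installing psd_tools, download the docopt WHL and install it manually.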

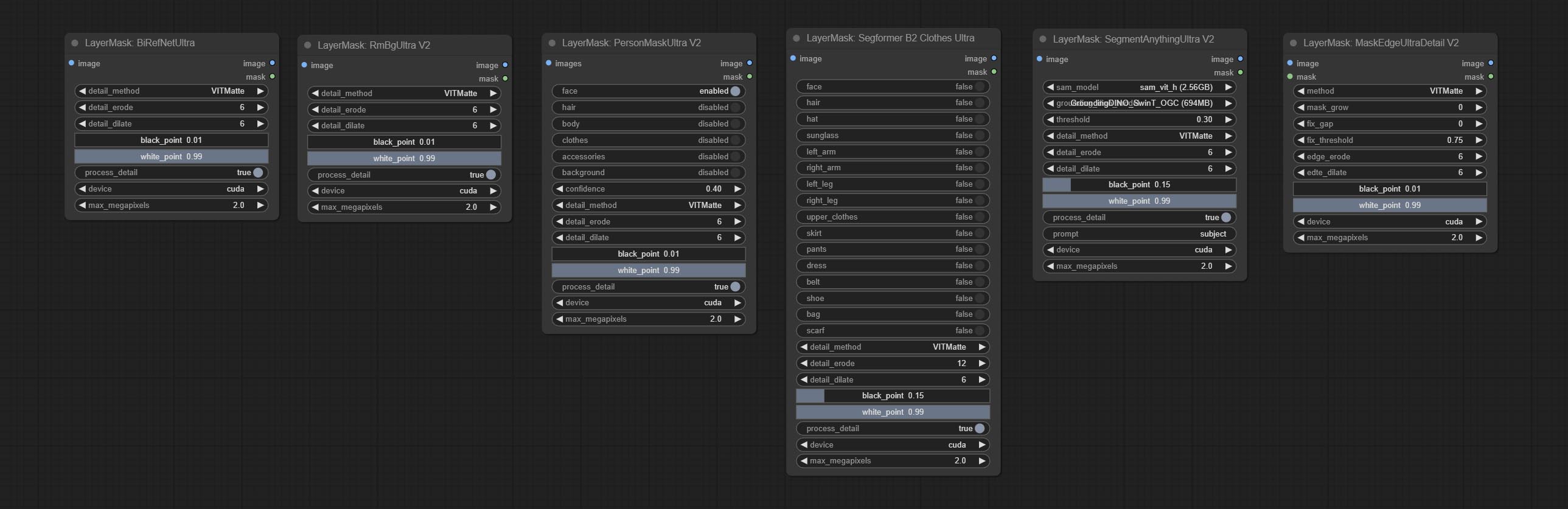

Commit the SegformerB2ClothesUltra node, used to segment a character's clothing. The model segmentation code is from StartHua; thanks to the original author.

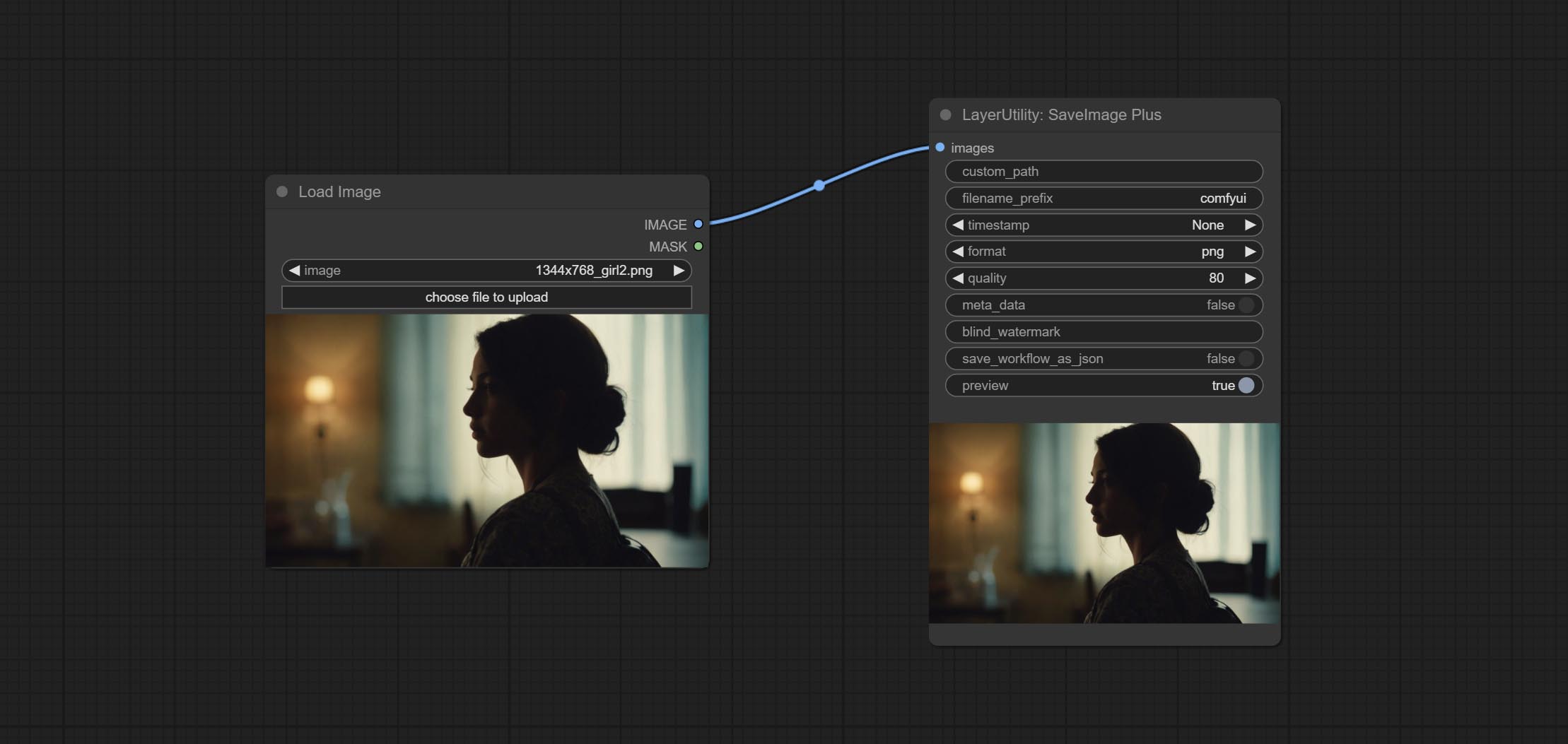

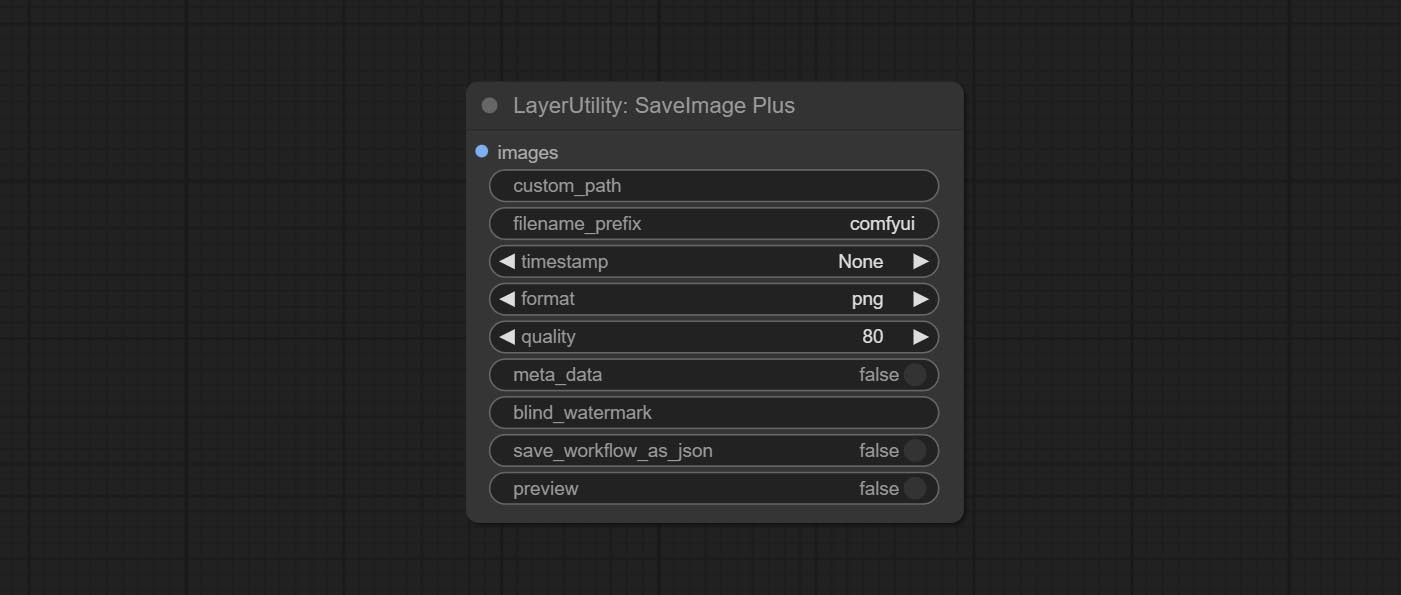

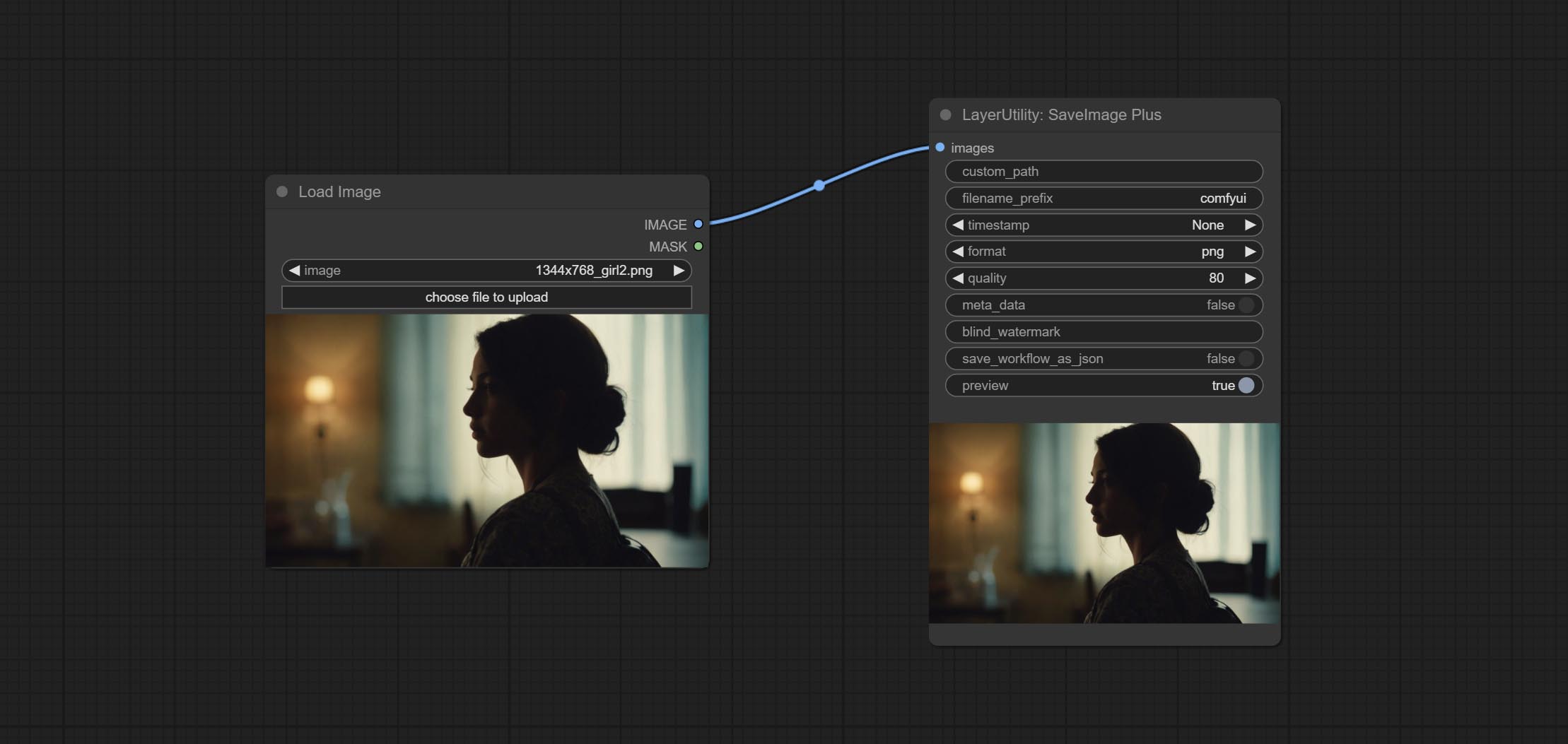

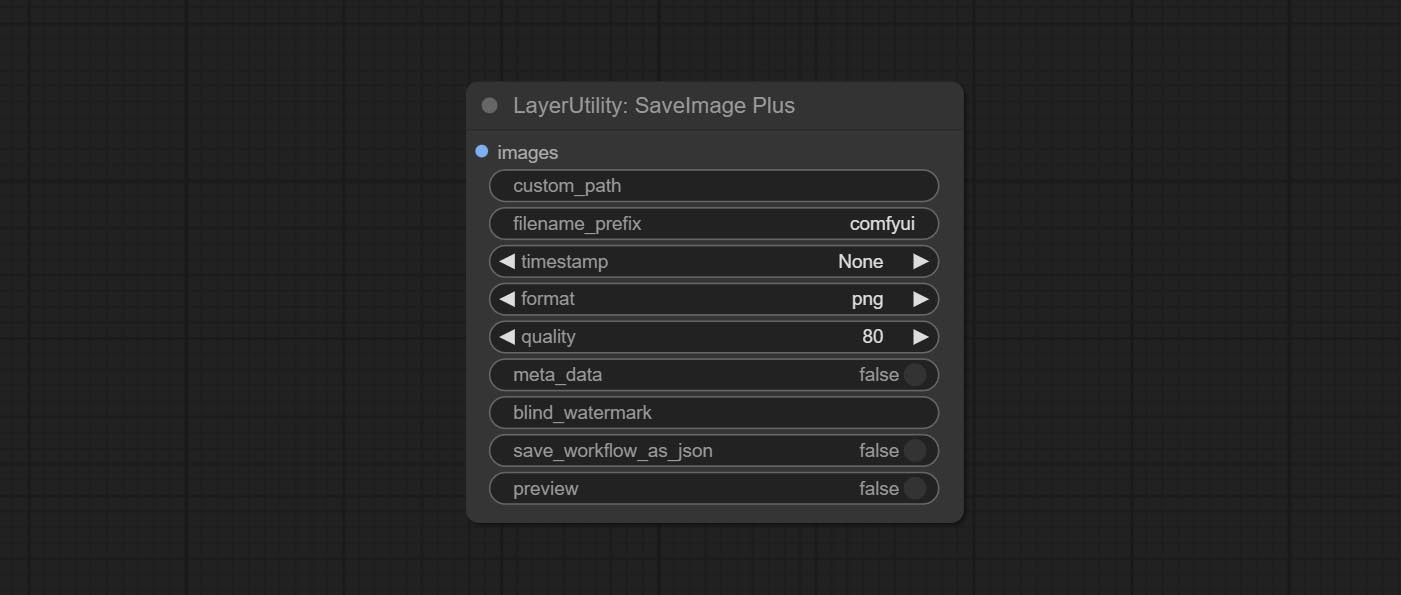

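The SaveImagePlus node adds the ability to output the workflow as JSON, supports %date and %time to embed the date or time in the path and file name, and adds a preview switch.

Commit the SaveImagePlus node, which can customize the directory where images are saved, add a timestamp to the file name, select the save format, set the image compression rate, set whether to save the workflow, and optionally add an invisible watermark to the image.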
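The %date and %time placeholders can be pictured as simple text substitution. The sketch below is illustrative only: the function name is hypothetical, and the output formats (YYYY-MM-DD and HH-MM-SS) are assumptions, since the text does not state which formats the node writes.

```python
from datetime import datetime


def expand_placeholders(template, now=None):
    """Replace %date and %time in a path or file name template."""
    now = now or datetime.now()
    # Assumed formats; hyphens keep the result valid as a file name on Windows.
    date_text = now.strftime("%Y-%m-%d")
    time_text = now.strftime("%H-%M-%S")
    return template.replace("%date", date_text).replace("%time", time_text)
```

Passing a fixed `datetime` makes the result reproducible, which is useful when testing a naming scheme.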

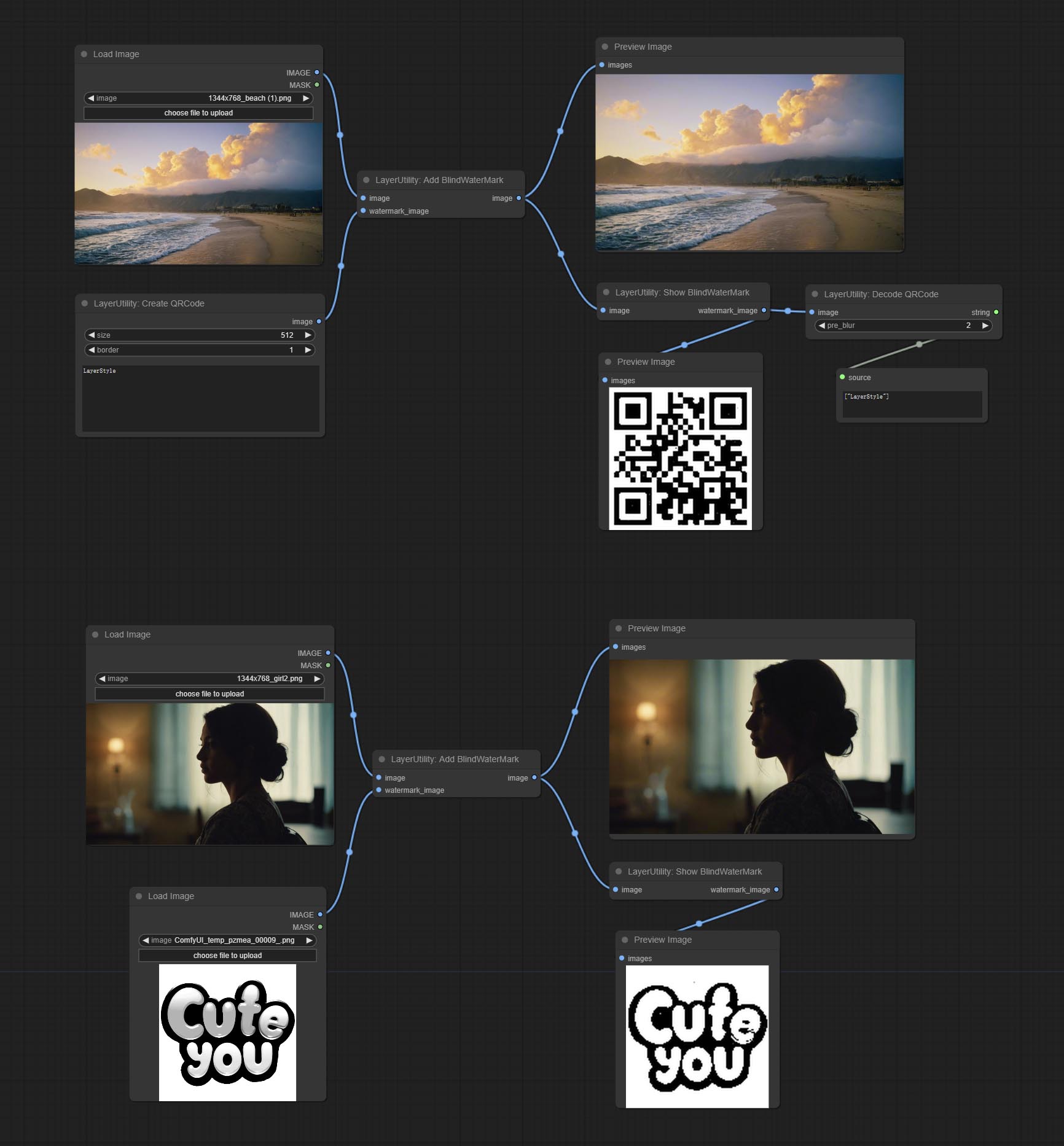

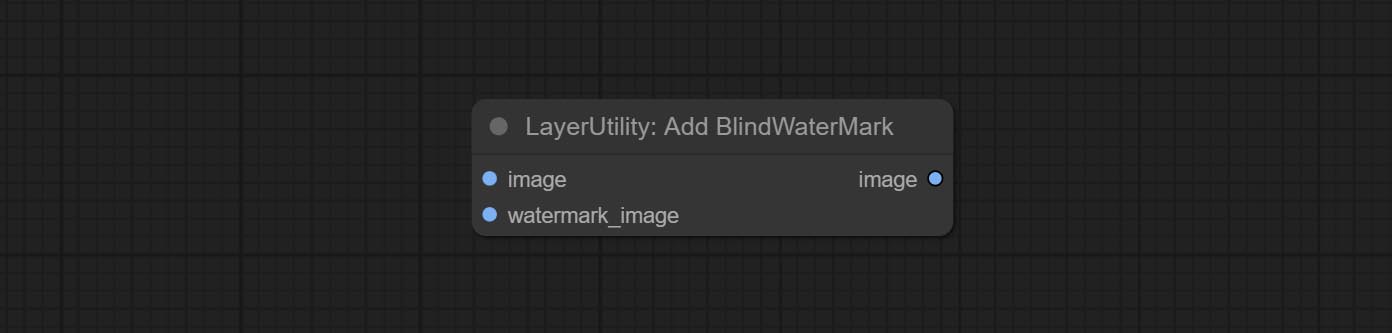

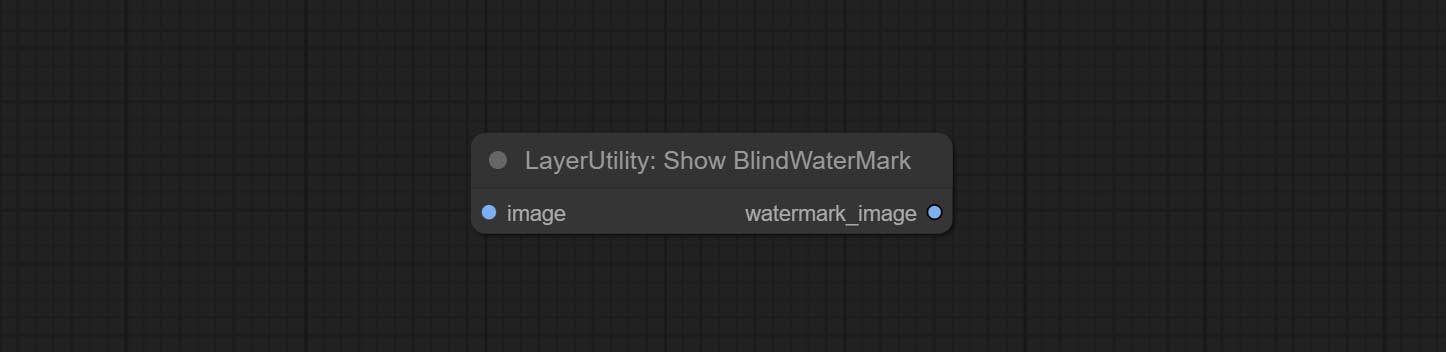

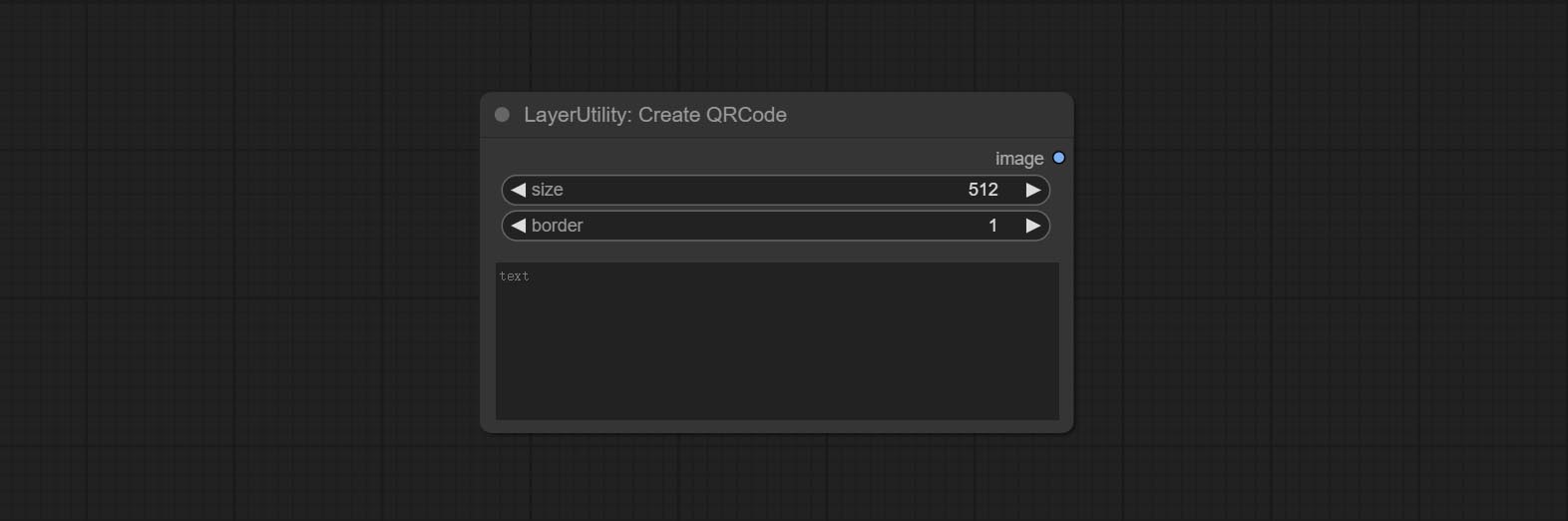

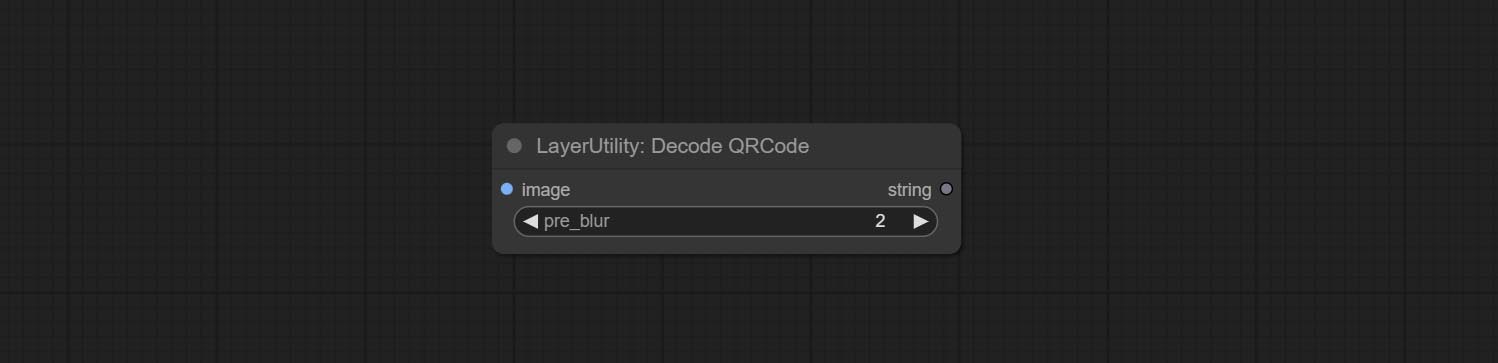

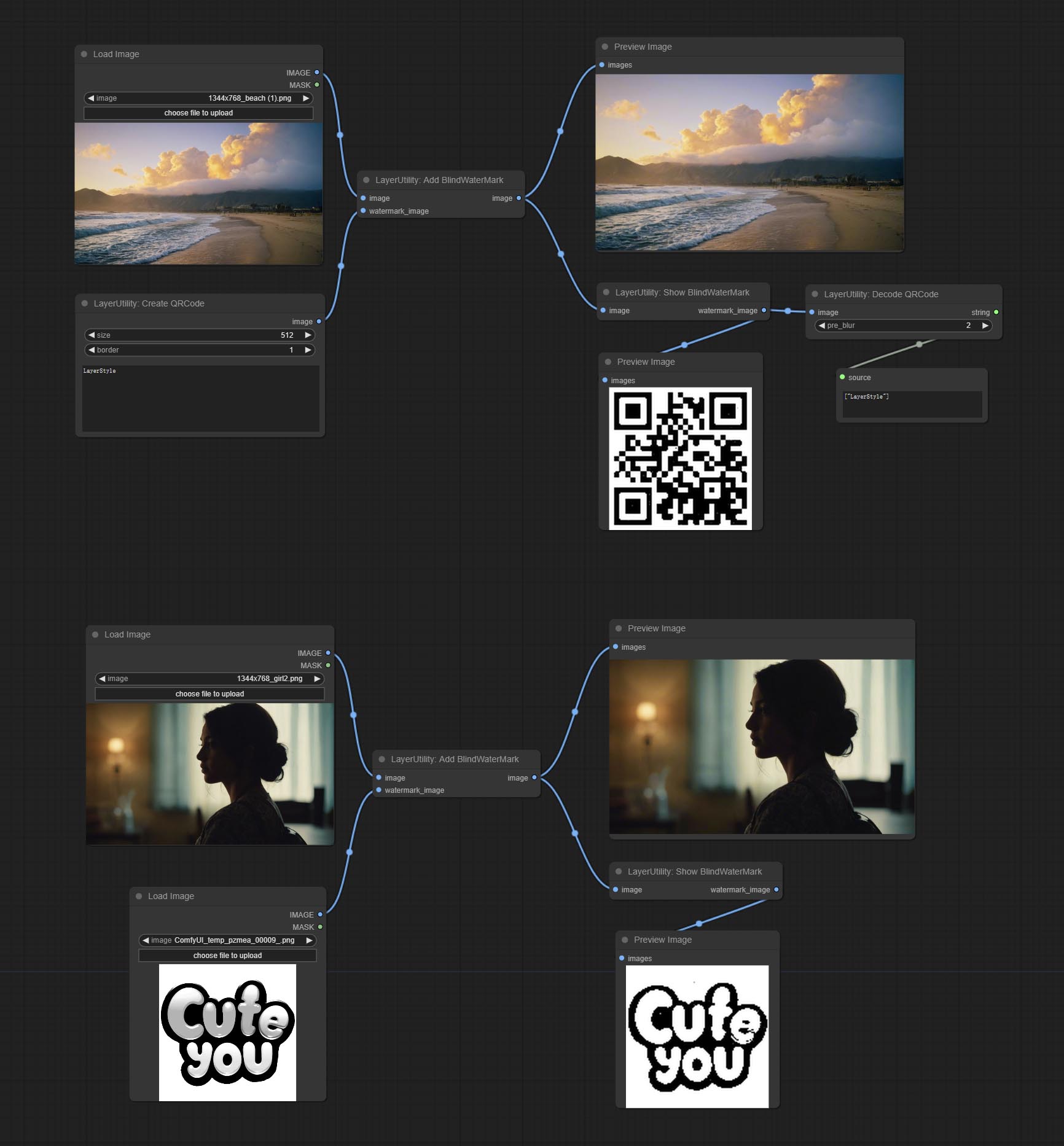

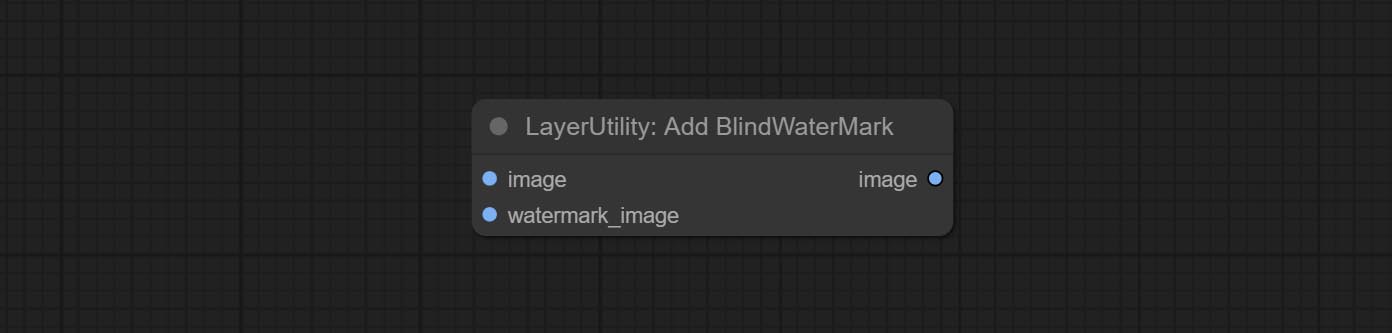

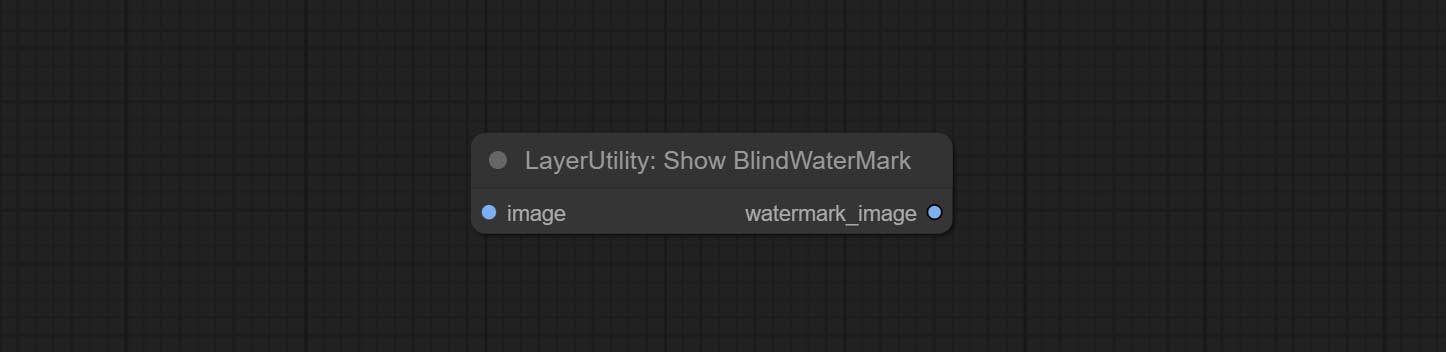

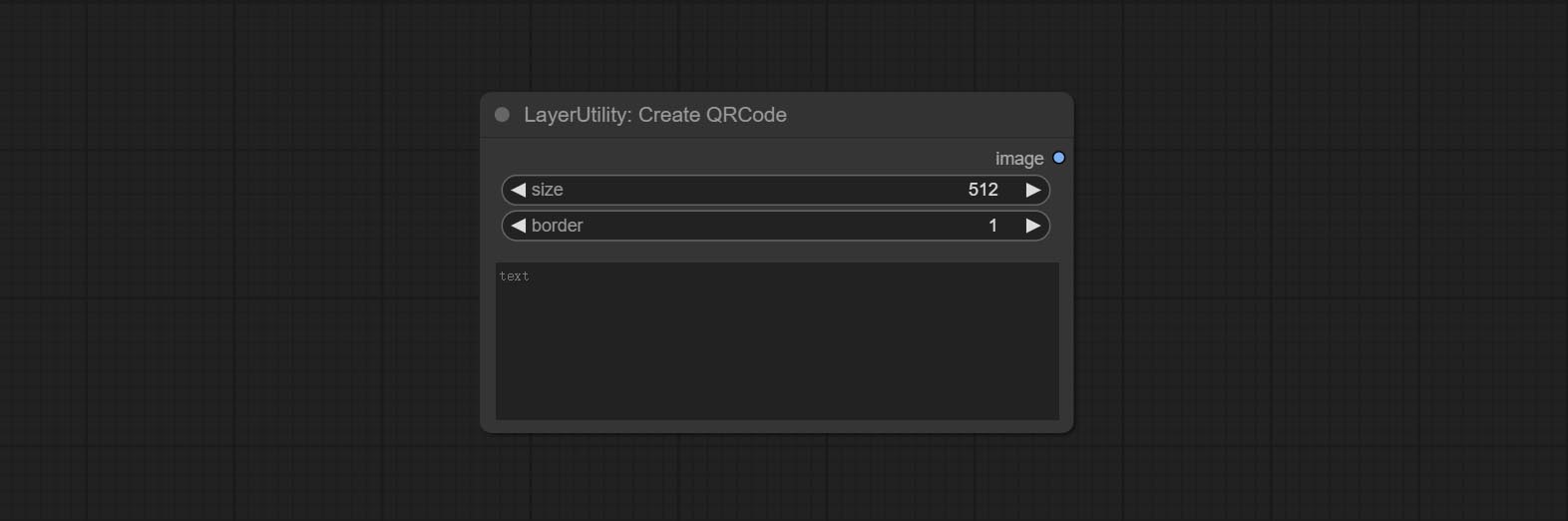

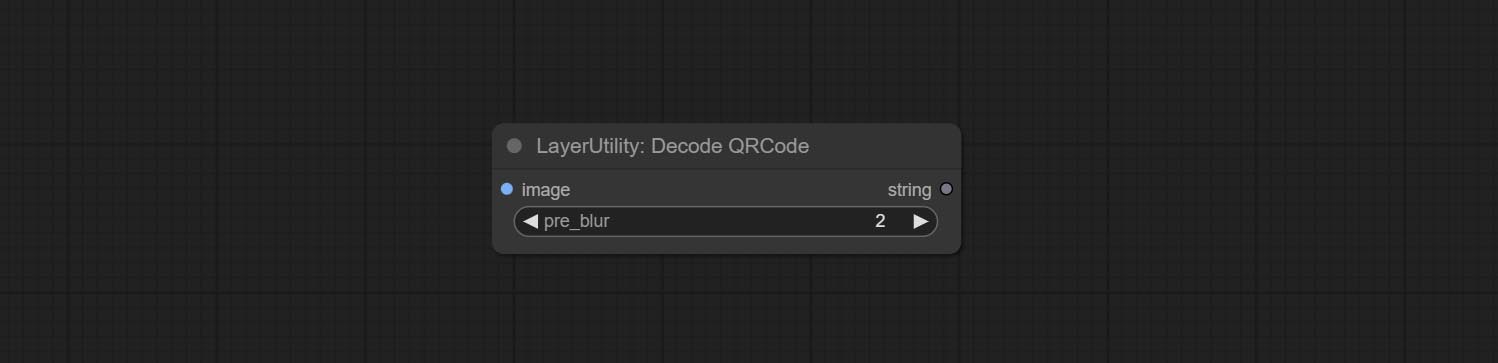

Commit the AddBlindWaterMark and ShowBlindWaterMark nodes, which add an invisible watermark to an image and decode the watermark from it. Commit the CreateQRCode and DecodeQRCode nodes, which generate QR code images and decode QR codes.

The ImageScaleRestoreV2, ImageScaleByAspectRatioV2, and ImageAutoCropV2 nodes add width and height options, which allow the width or height to be specified as a fixed value.

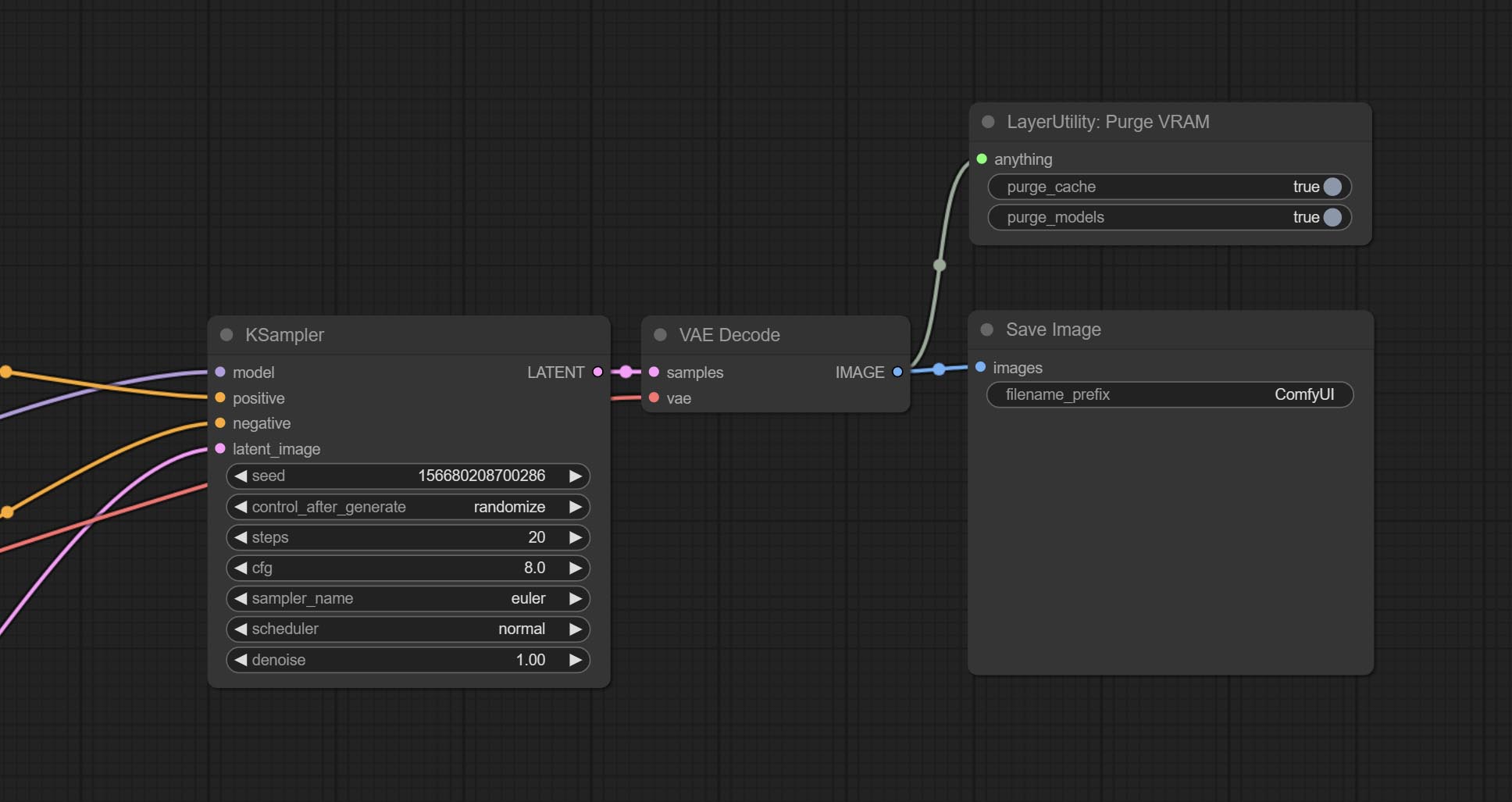

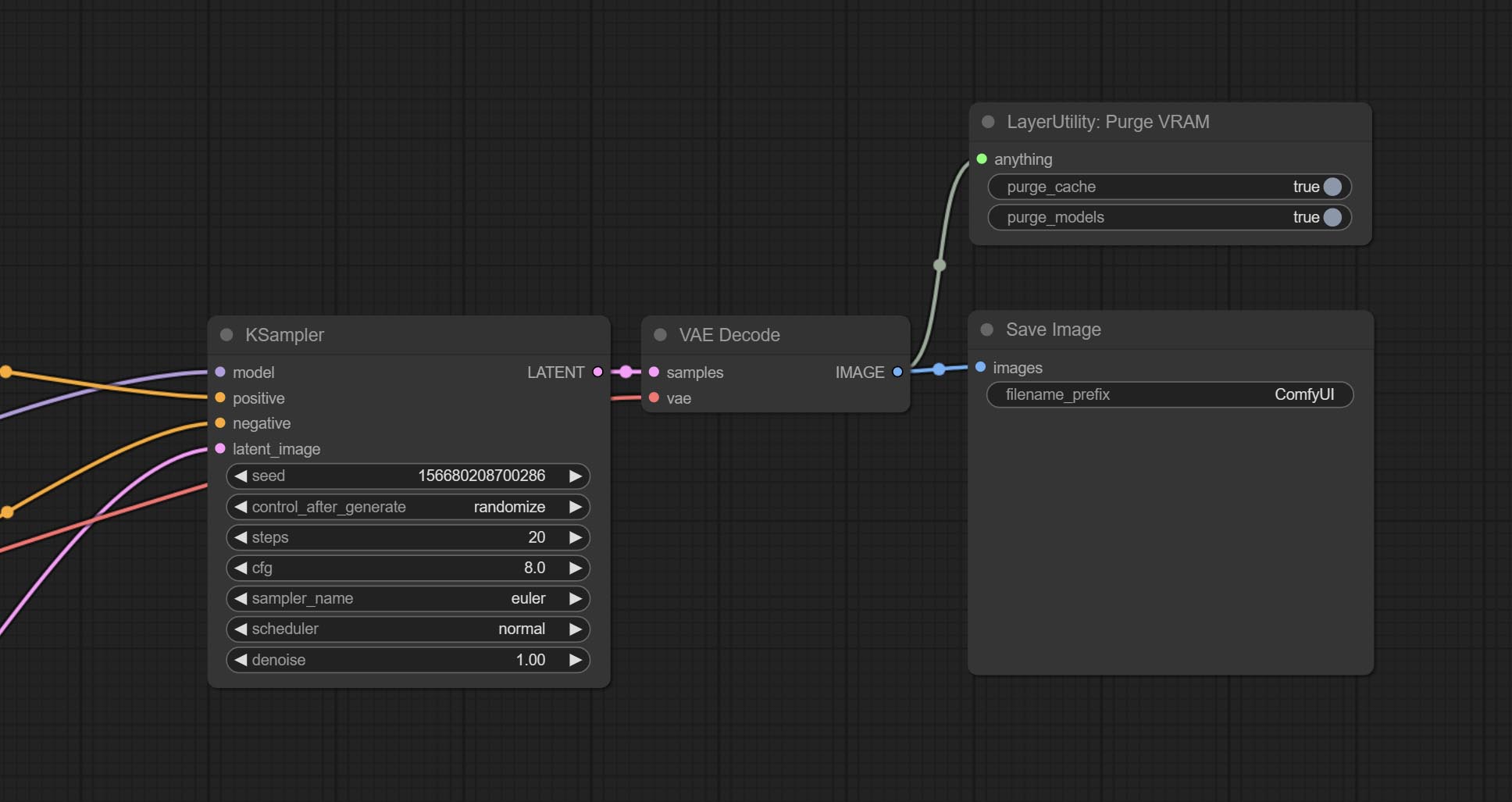

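Commit the PurgeVRAM node, which cleans up VRAM.

Commit the AutoAdjust node, which automatically adjusts image contrast and white balance.

Commit the RGBValue node, which outputs a color value as single decimal values of R, G, and B. This idea is from vxinhao.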
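Splitting a color value into decimal R, G, and B components looks like this in plain Python. The sketch assumes the color arrives as a six-digit hexadecimal string such as "#FFA500"; the function name `hex_to_rgb` is hypothetical and not the node's implementation.

```python
def hex_to_rgb(color):
    """Convert "#RRGGBB" (or "RRGGBB") into a tuple of three integers in 0..255."""
    digits = color.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a six-digit hexadecimal color")
    # Each pair of hex digits is one channel.
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))
```

The three numbers can then be routed separately, for example into a calculator node.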

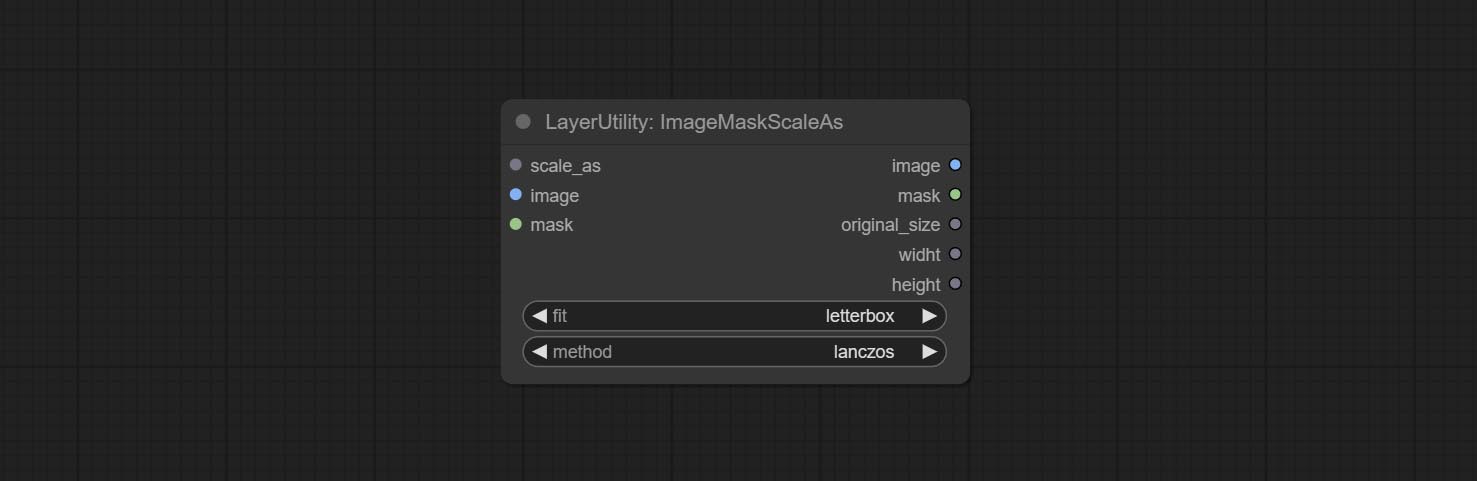

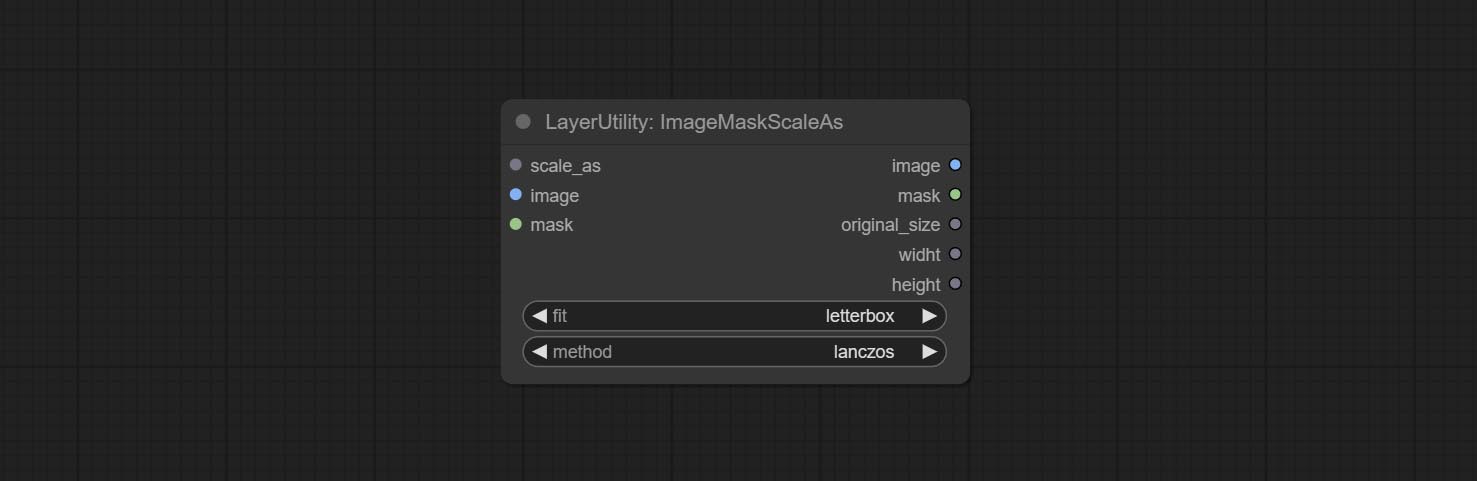

Commit the Seed node, which outputs the seed value. The ImageMaskScaleAs, ImageScaleByAspectRatio, ImageScaleByAspectRatioV2, ImageScaleRestore, and ImageScaleRestoreV2 nodes add width and height outputs.

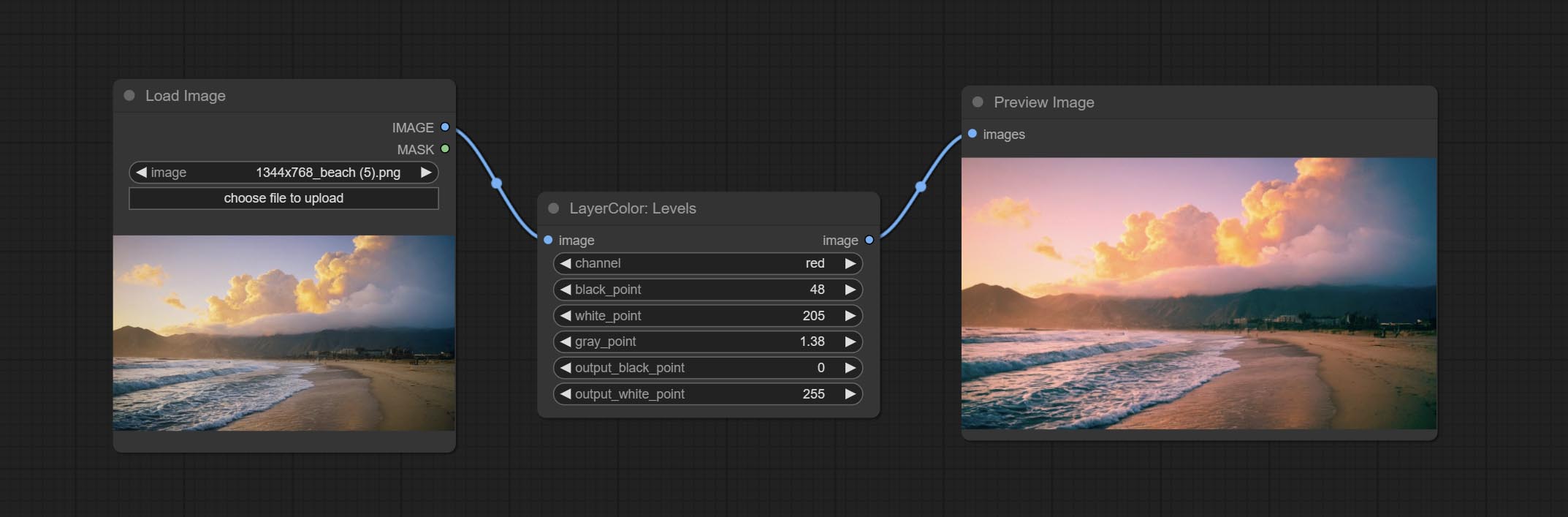

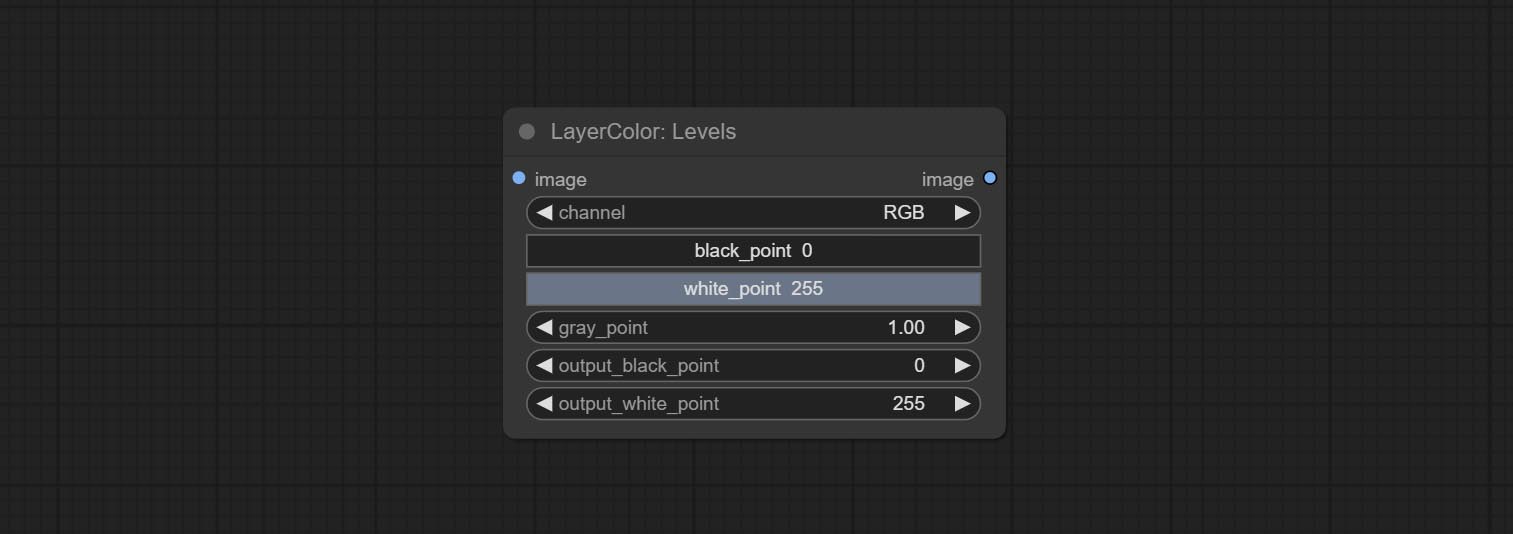

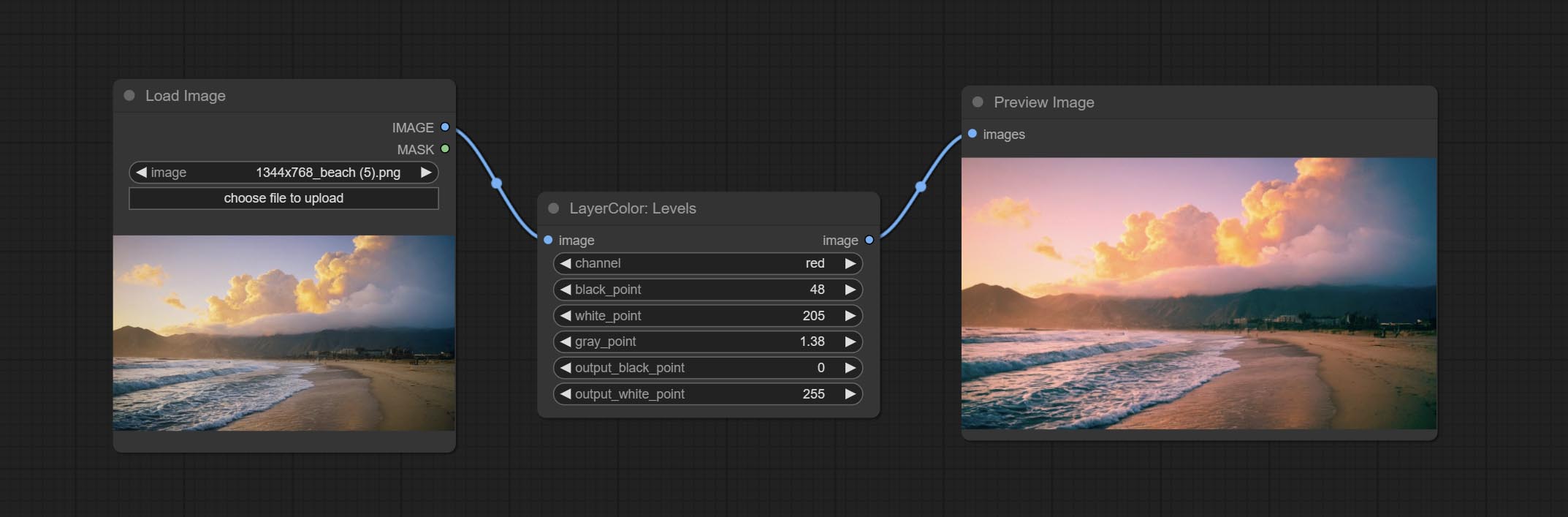

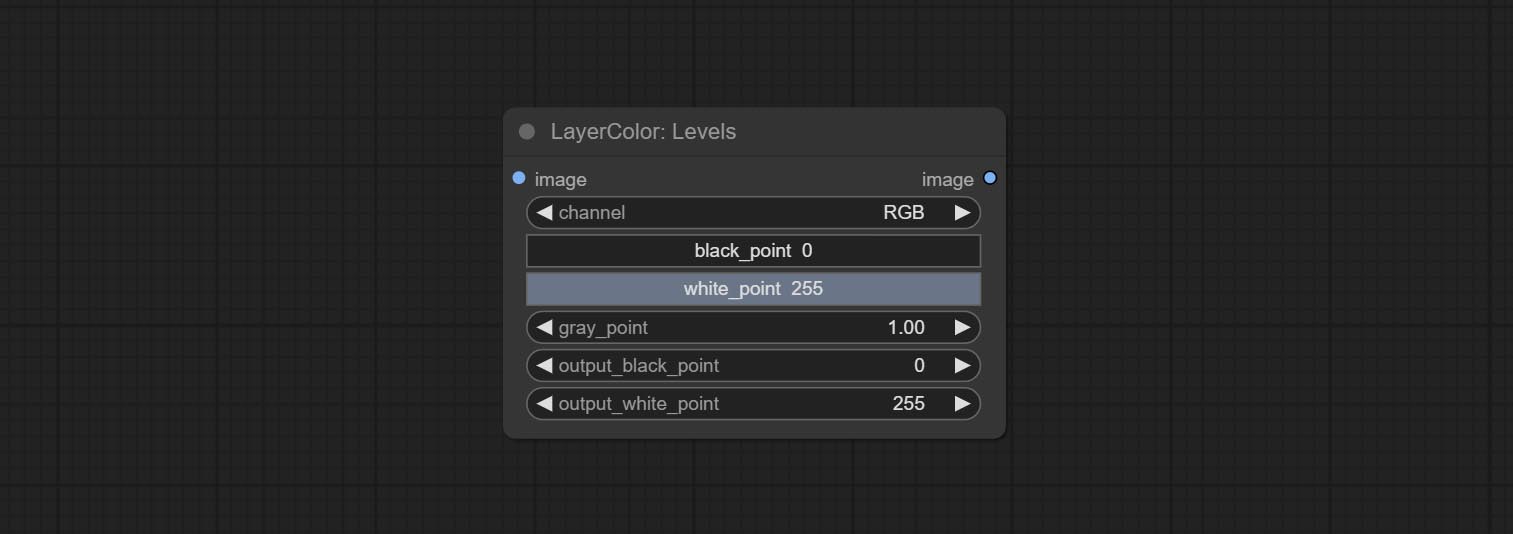

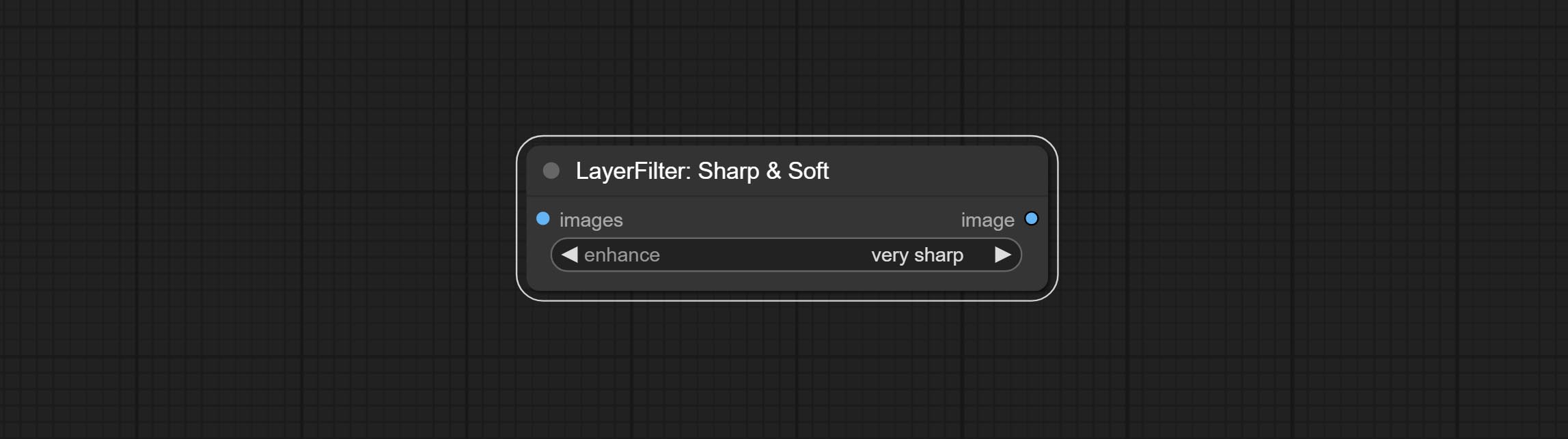

Commit the Levels node, which achieves the same color levels adjustment function as Photoshop.

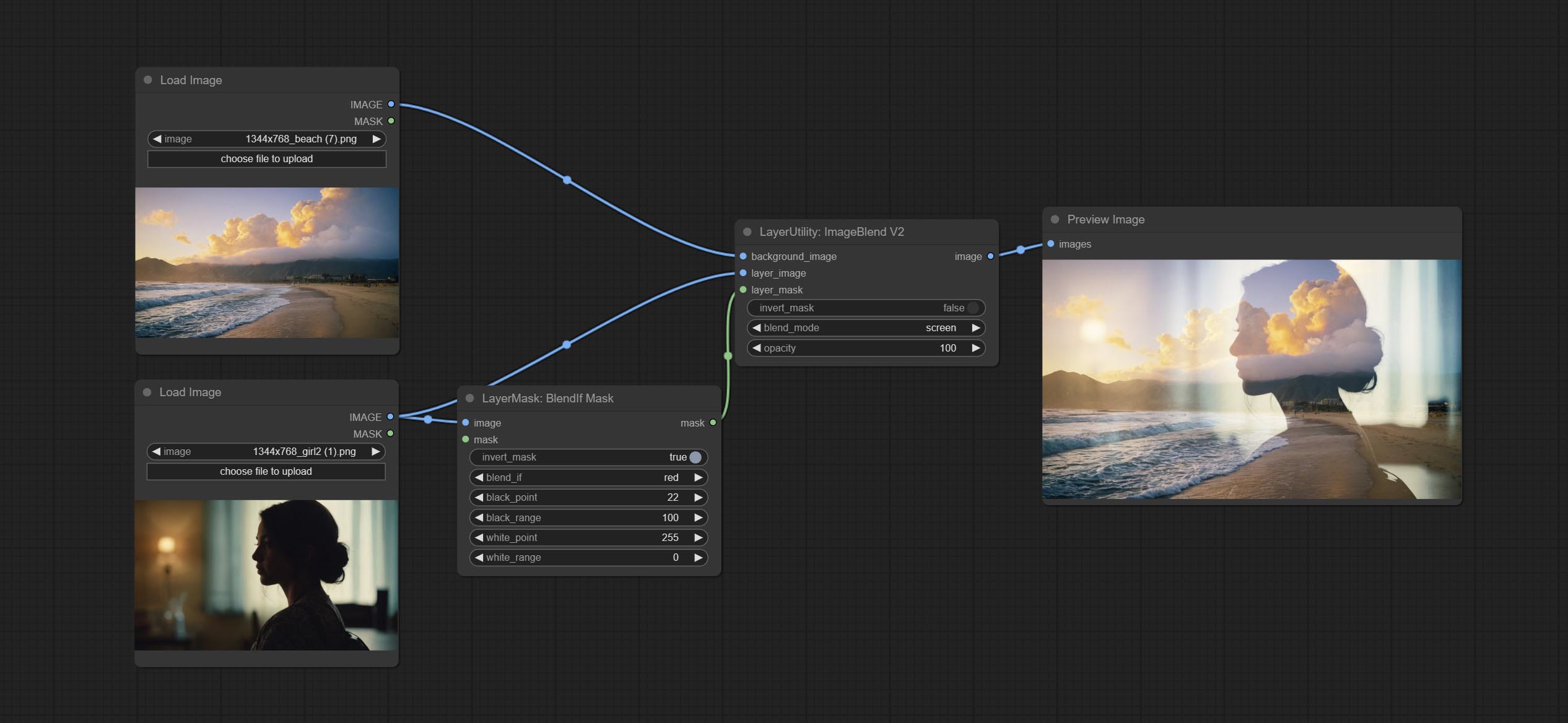

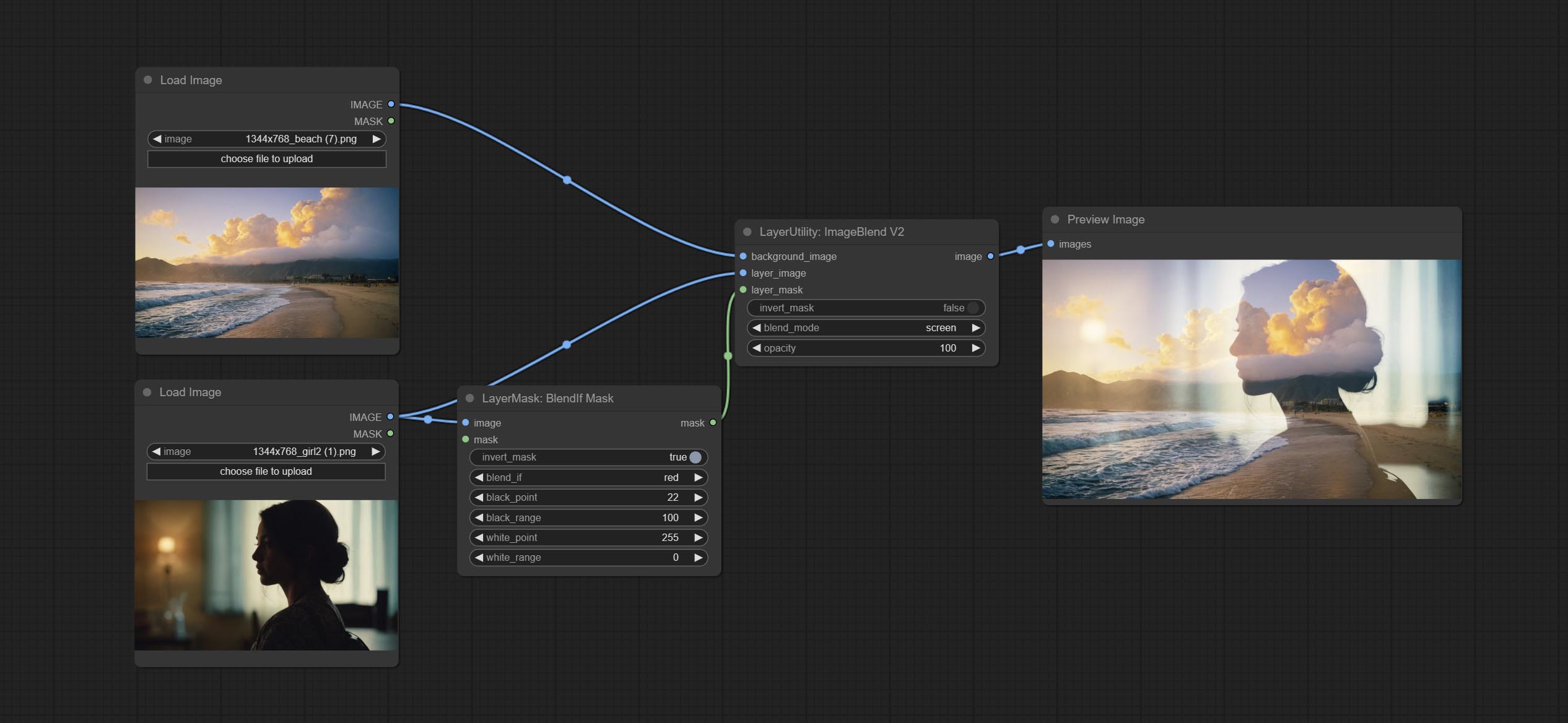

Commit the BlendIfMask node, which works with ImageBlendV2 or ImageBlendAdvanceV2 to achieve the same Blend If function as Photoshop.

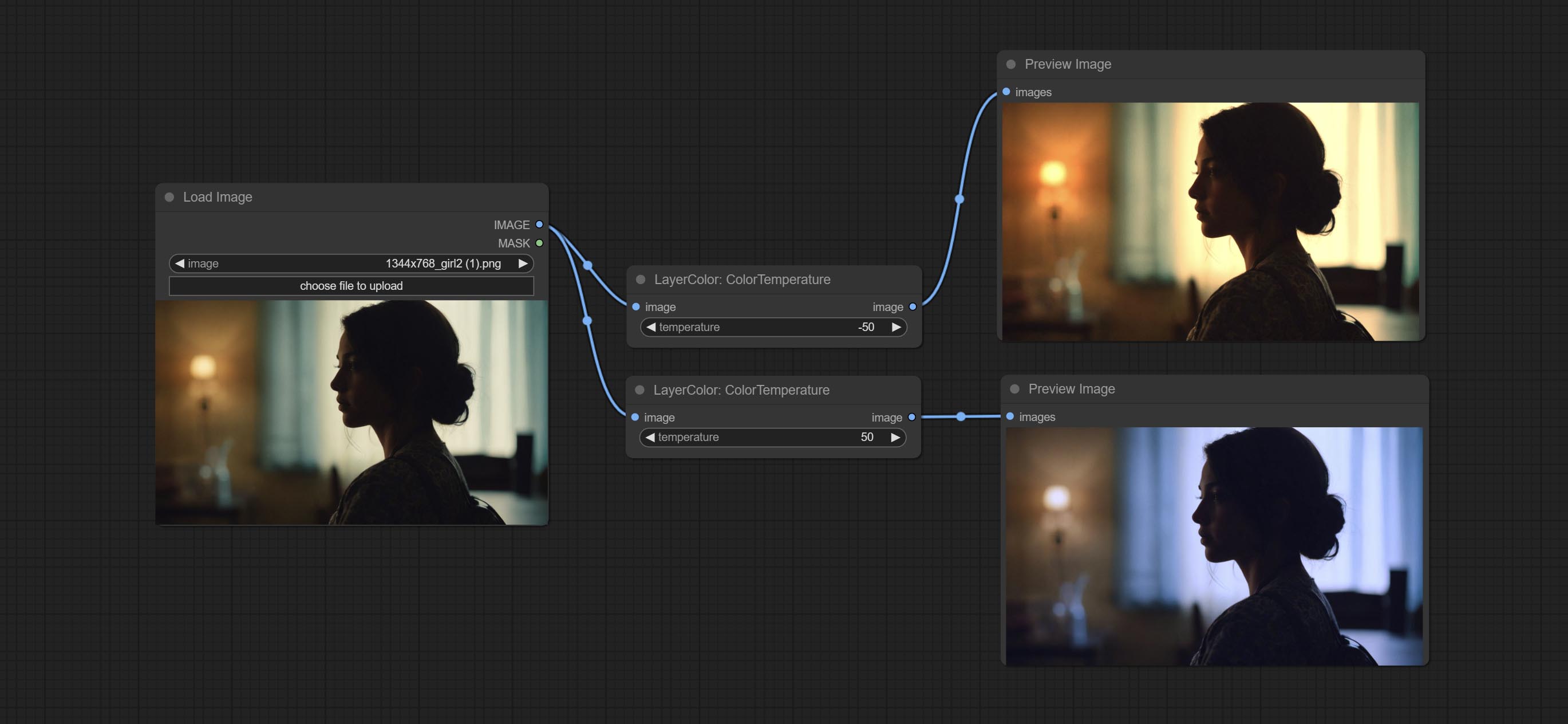

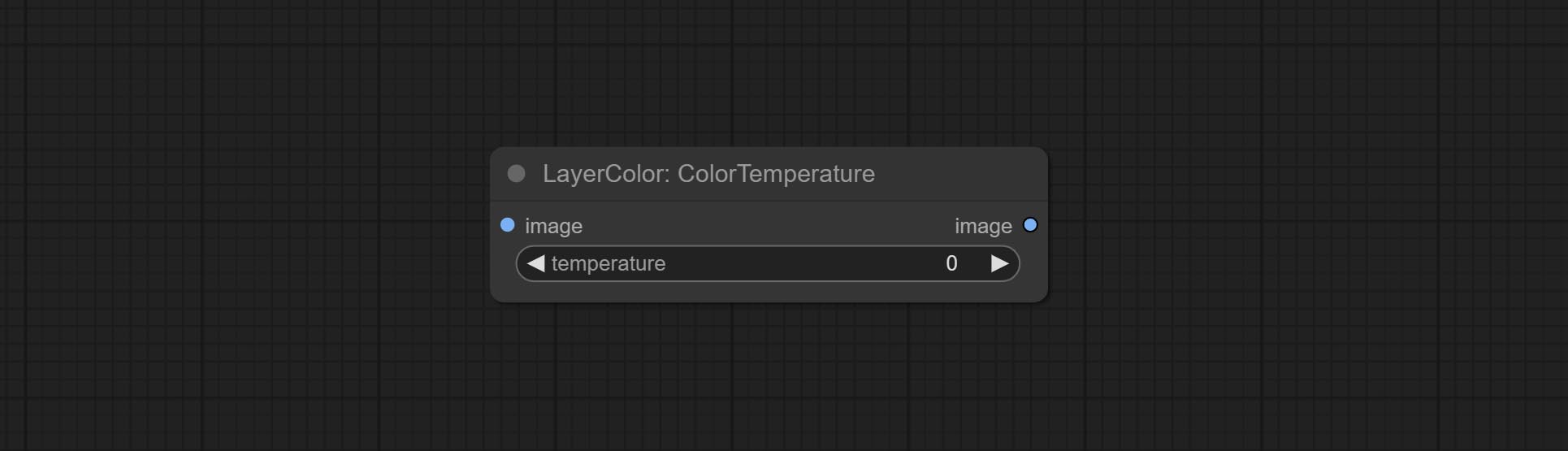

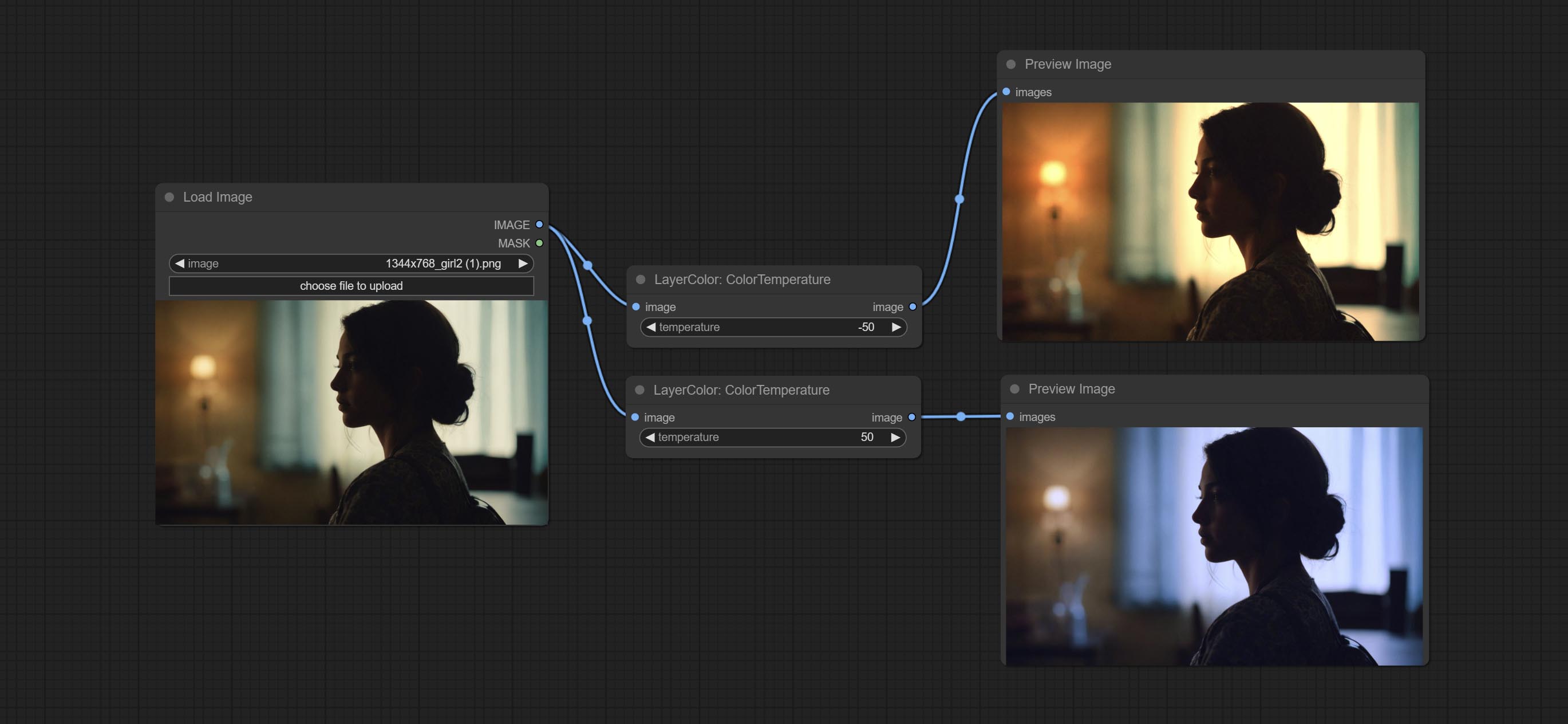

Commit the ColorTemperature and ColorBalance nodes, used to adjust the color temperature and color balance of an image.

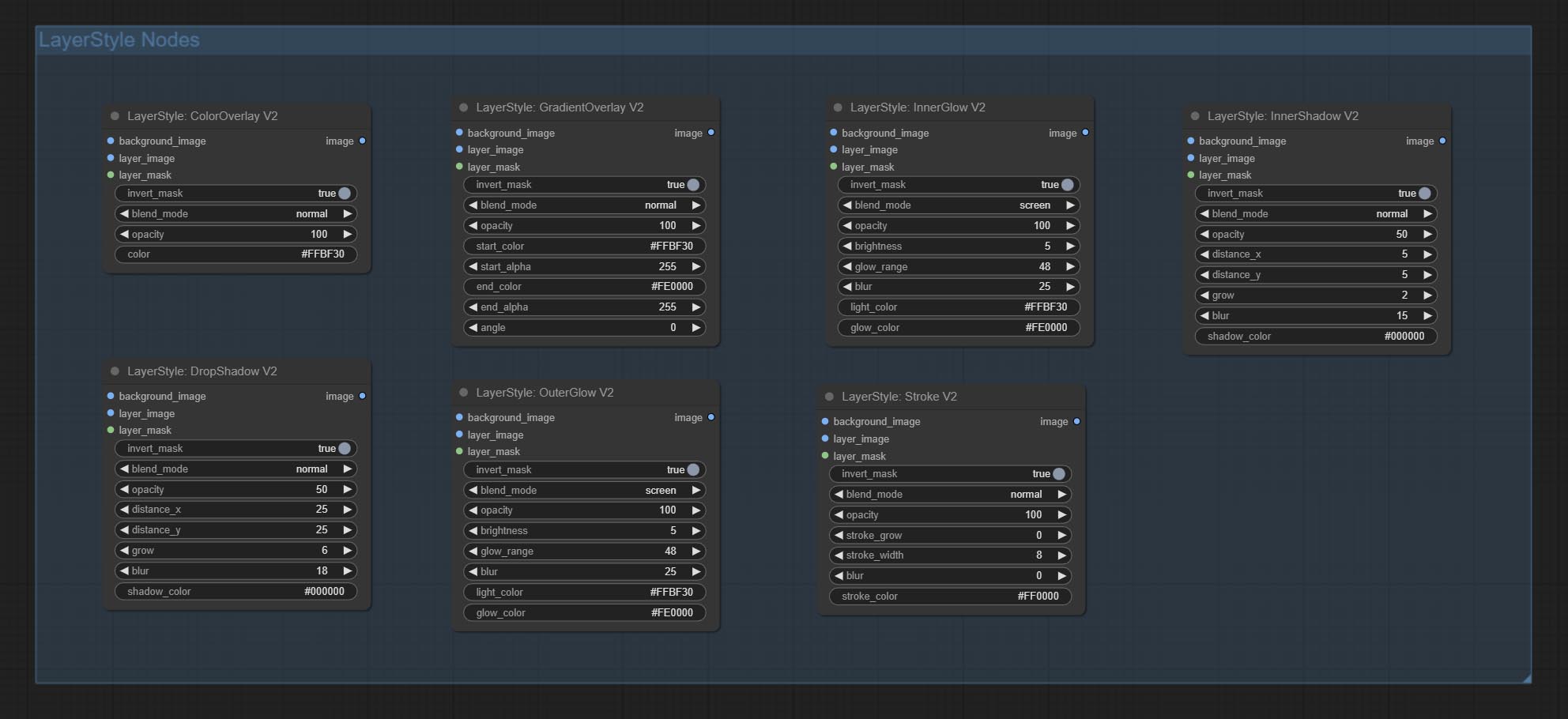

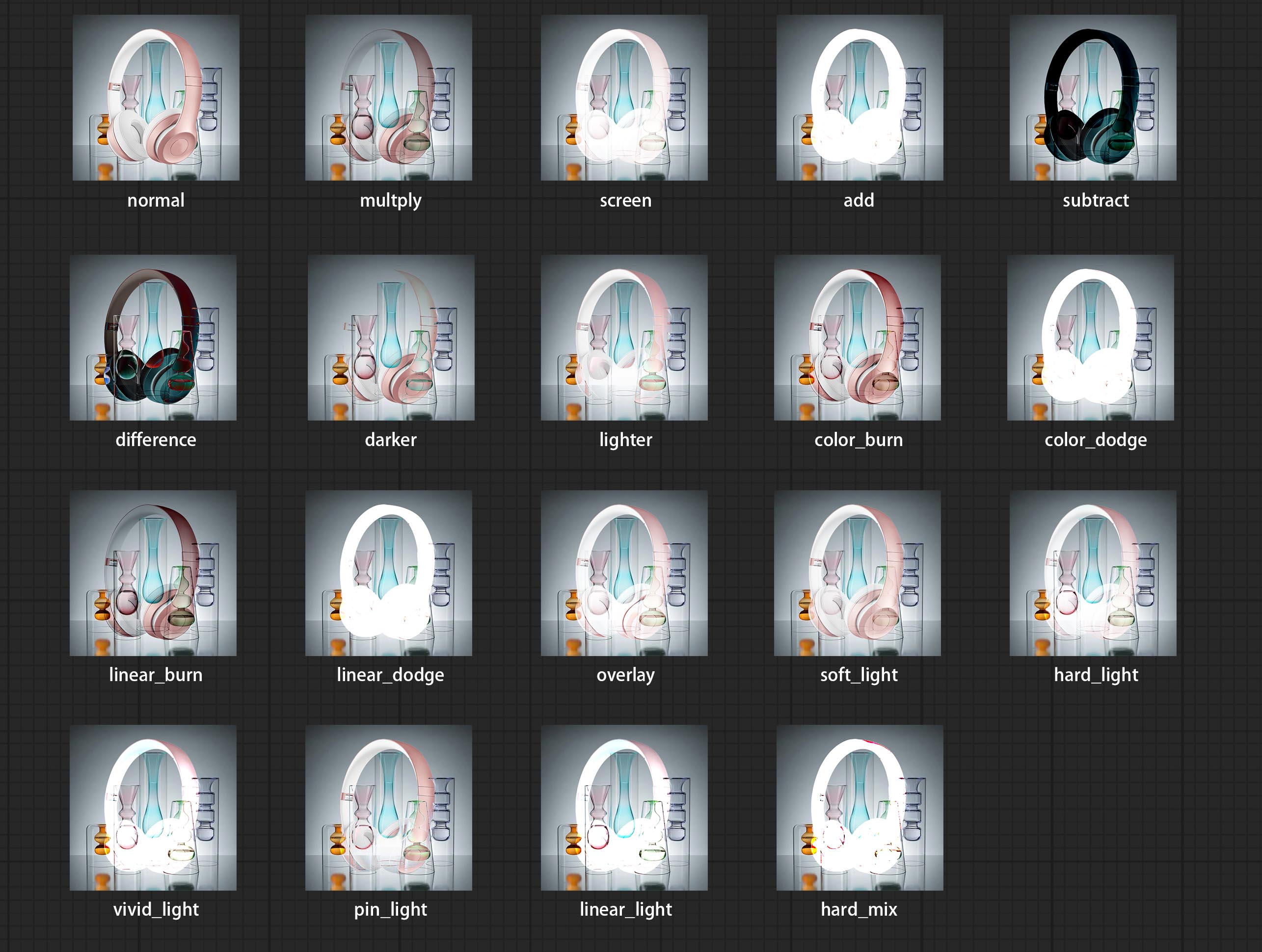

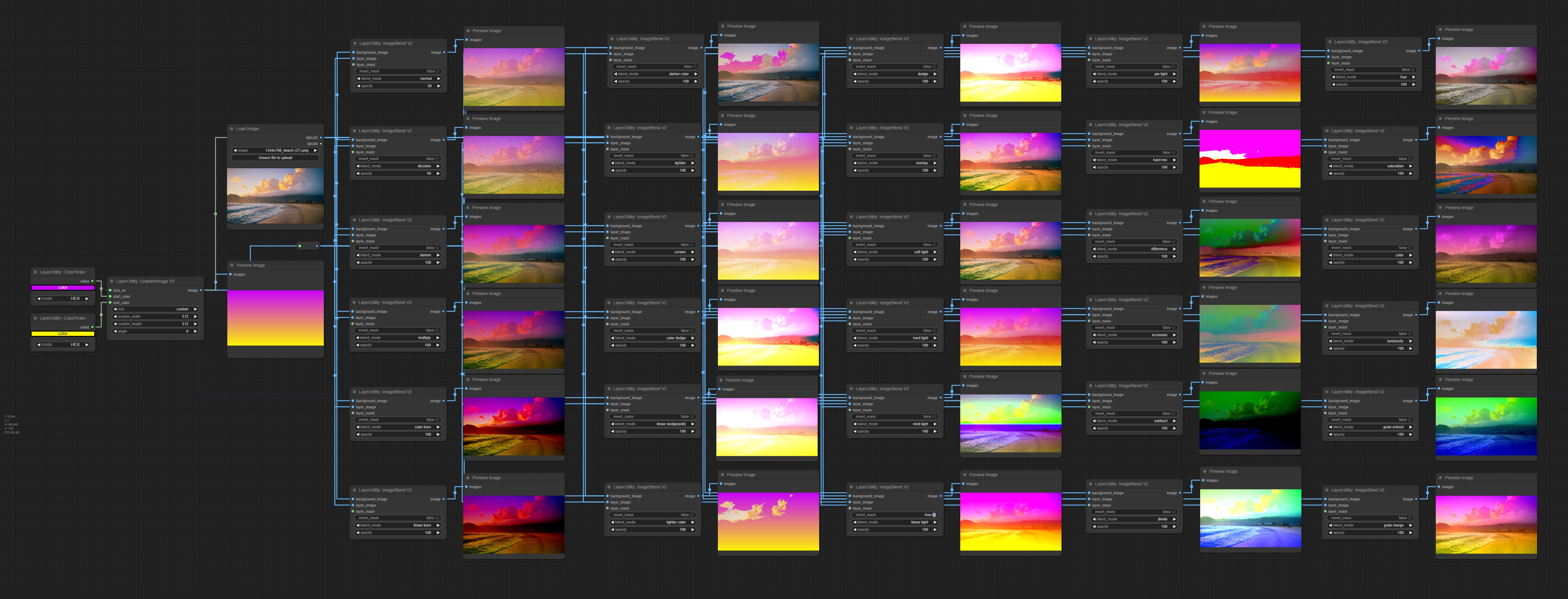

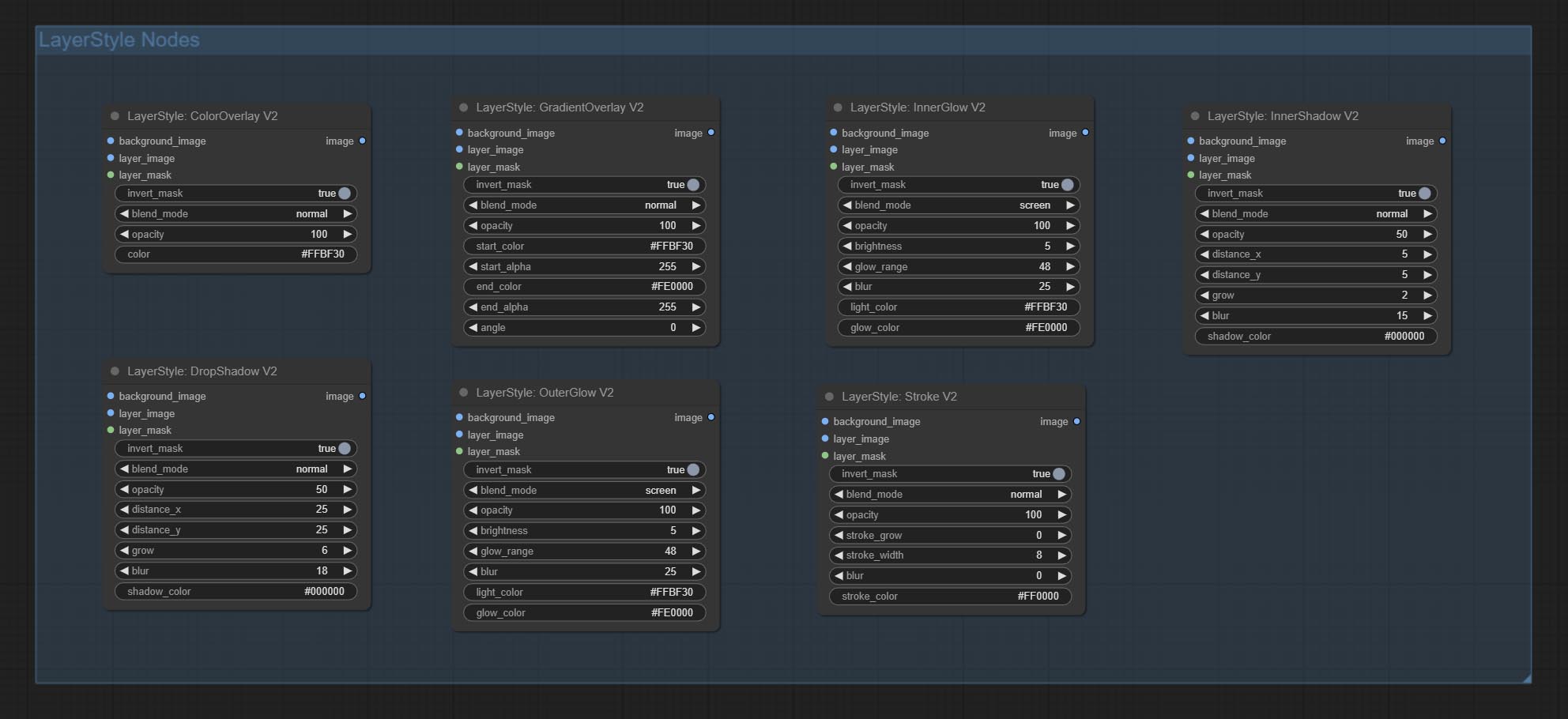

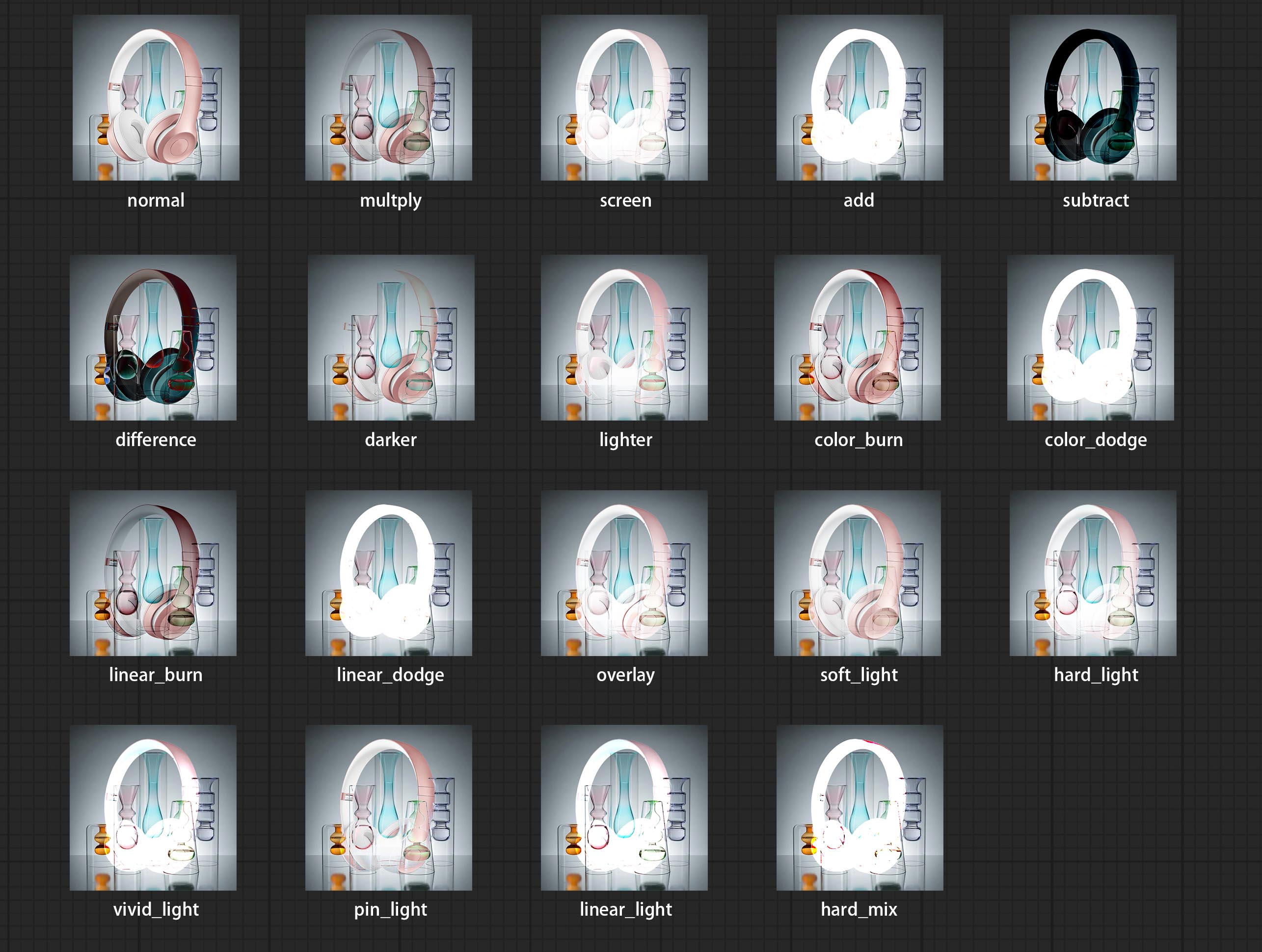

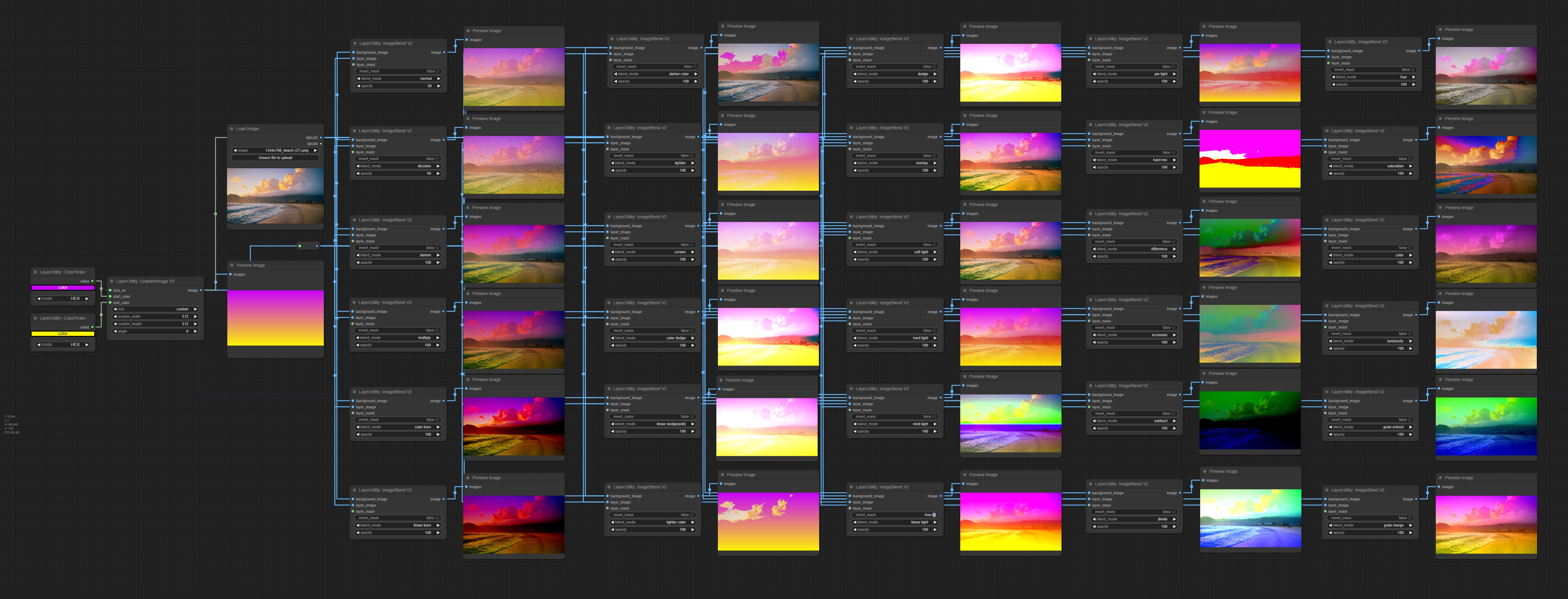

Add a new type of blend mode, V2, between images. Up to 30 blend modes are now supported. The new blend modes are available in all V2 nodes that support blend modes, including ImageBlend V2, ImageBlendAdvance V2, DropShadow V2, InnerShadow V2, OuterGlow V2, InnerGlow V2, Stroke V2, ColorOverlay V2, and GradientOverlay V2.

Part of the BlendMode V2 code comes from Virtuoso Nodes for ComfyUI. Thanks to the original authors.

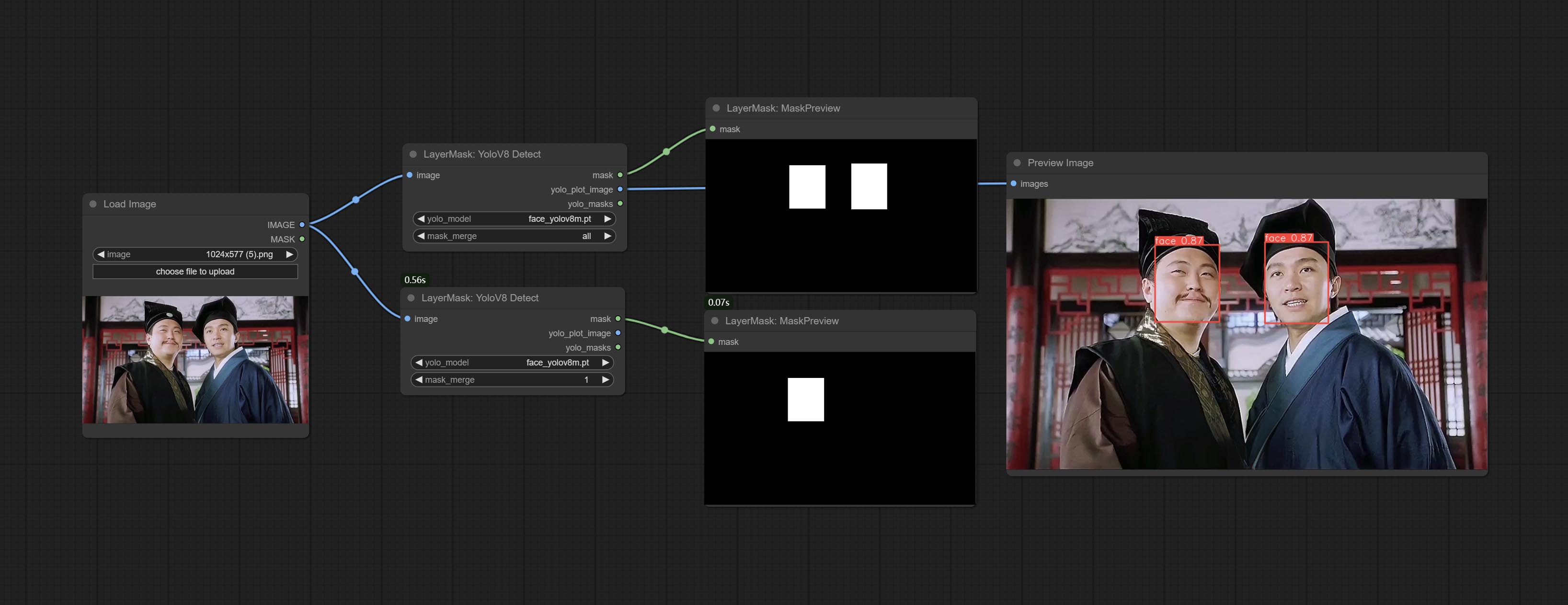

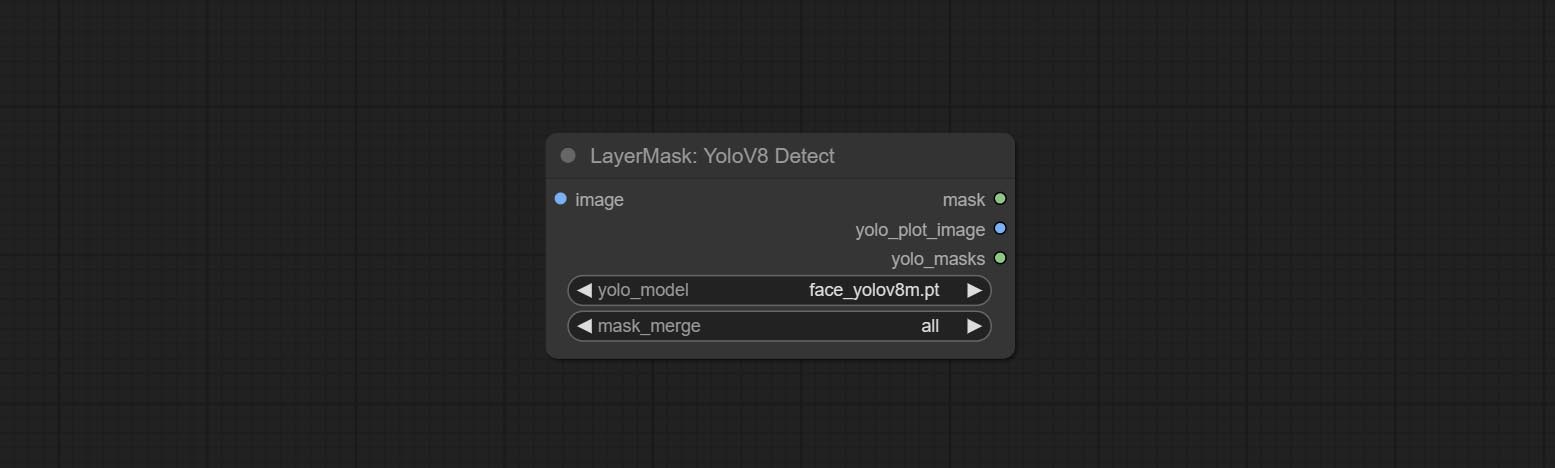

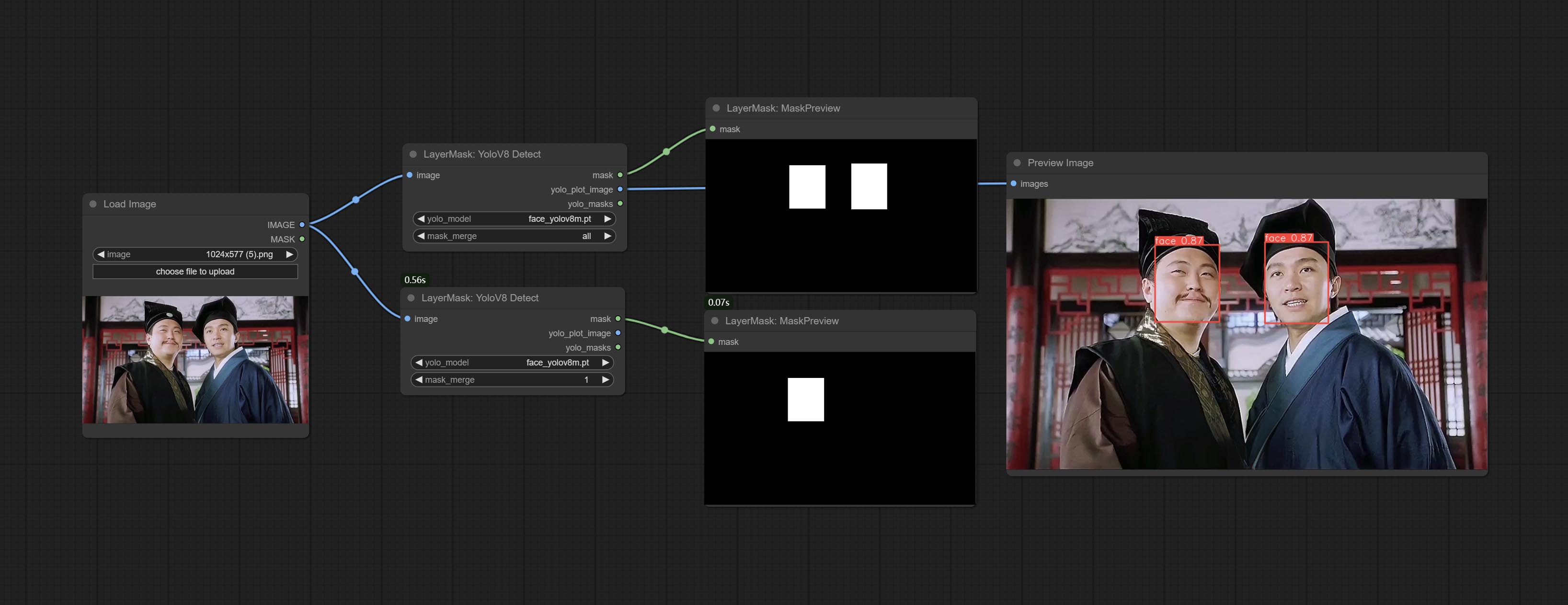

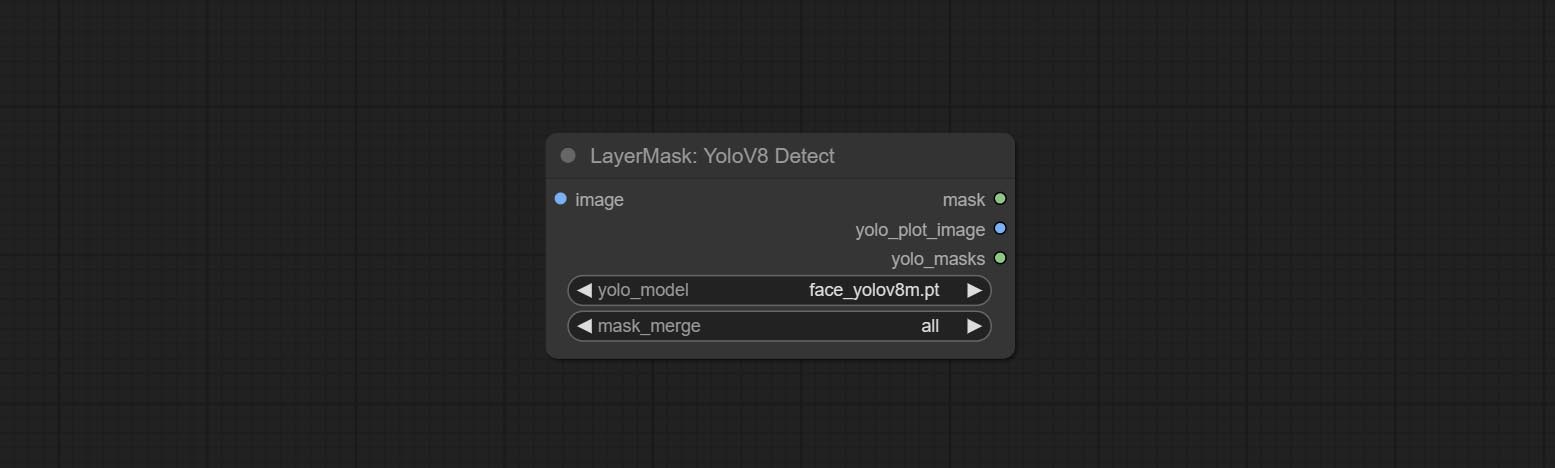

Commit the YoloV8Detect node.

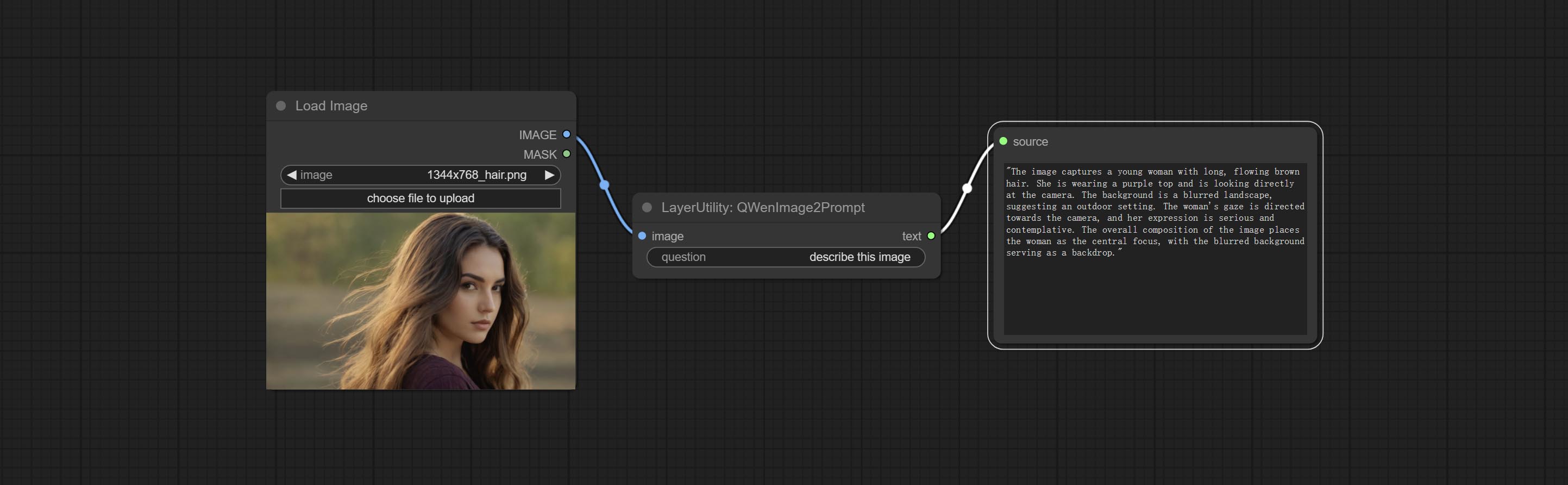

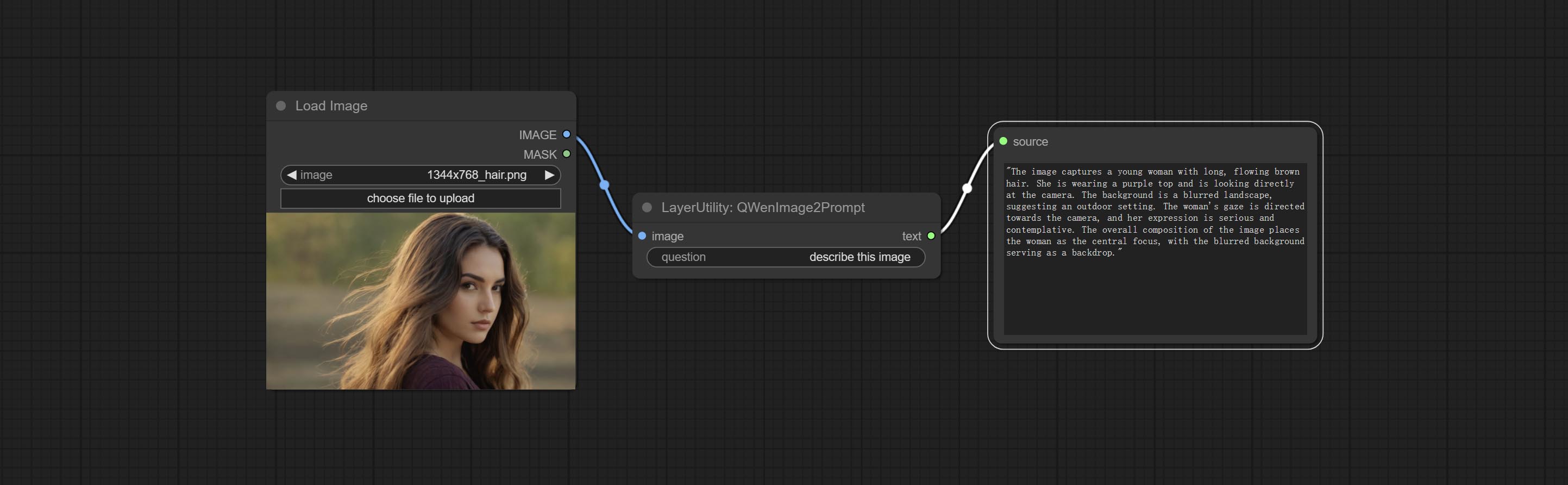

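Commit the QWenImage2Prompt node, a repackaging of the UForm-Gen2 Qwen Node from ComfyUI_VLM_nodes; thanks to the original author.

Commit the BooleanOperator, NumberCalculator, TextBox, Integer, Float, and Boolean nodes. These nodes can perform mathematical and logical operations.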

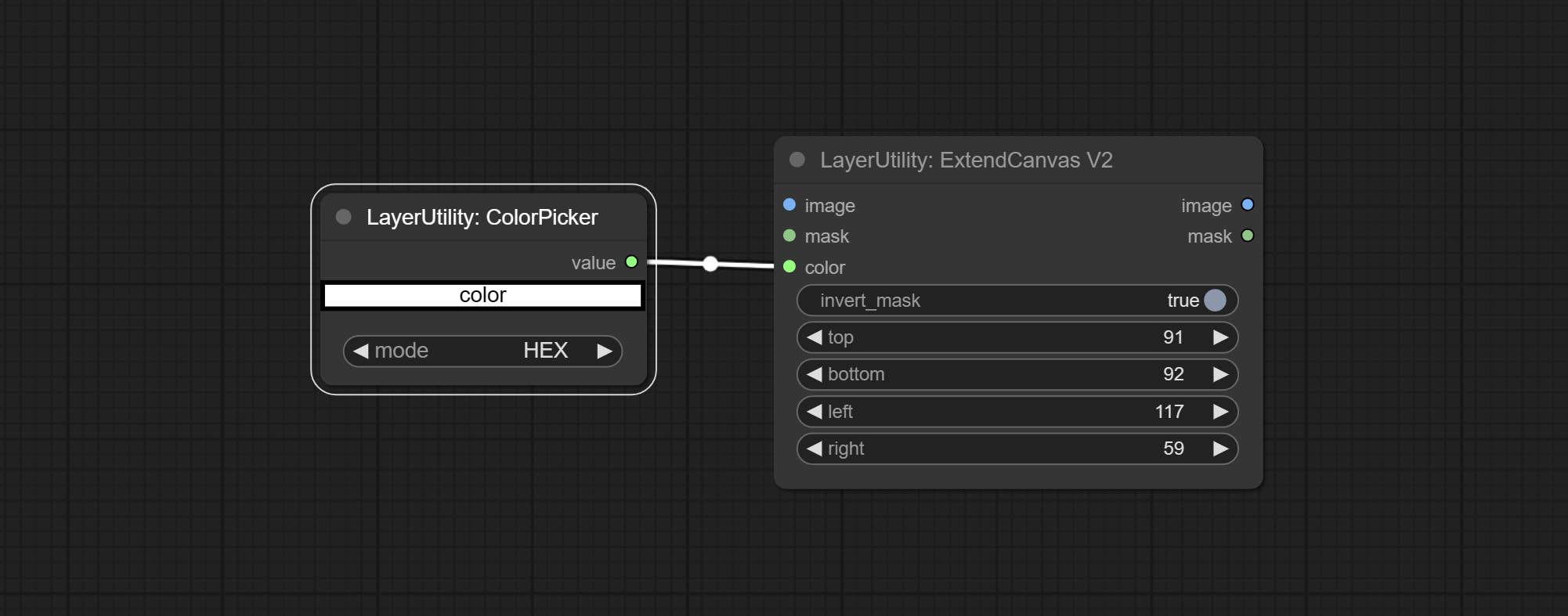

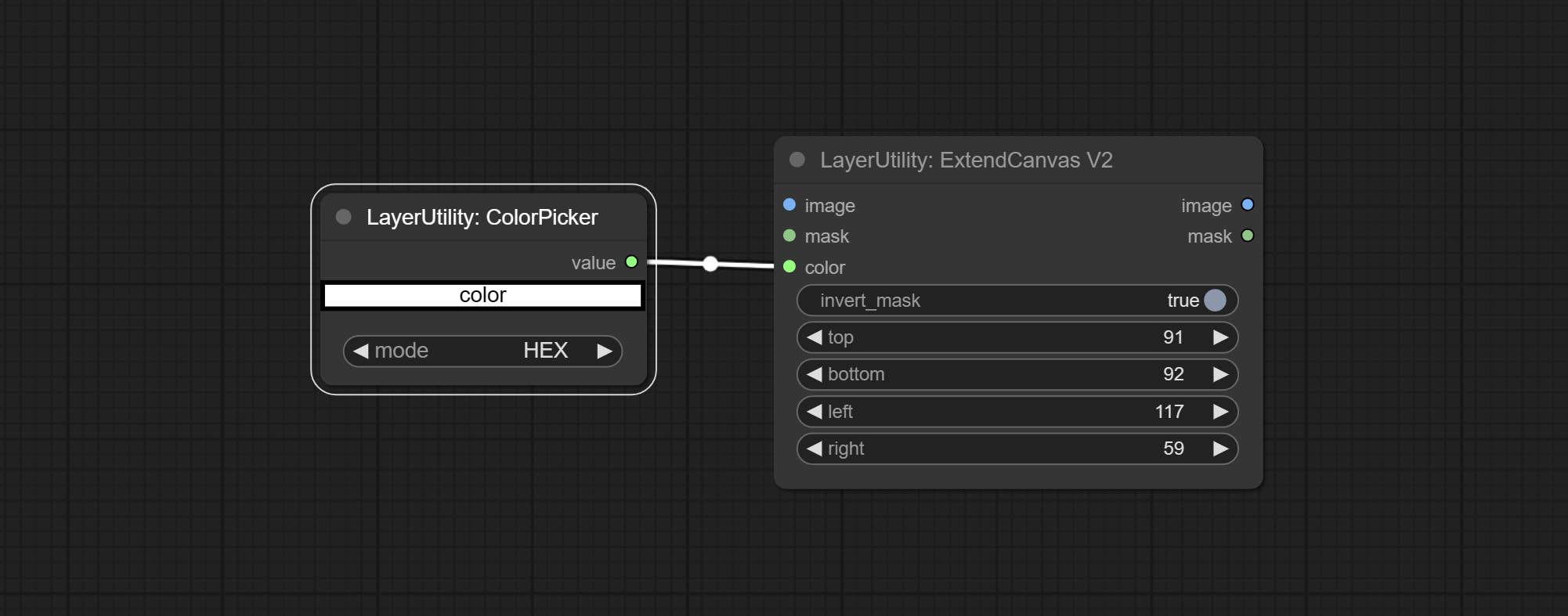

Commit the ExtendCanvasV2 node, which supports color value input.

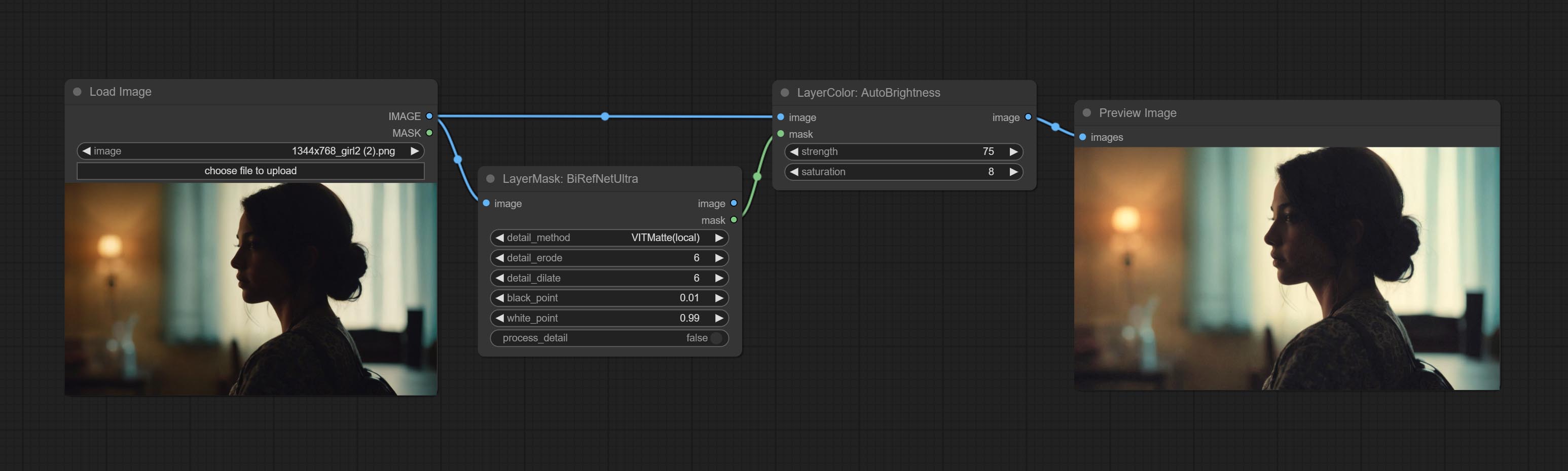

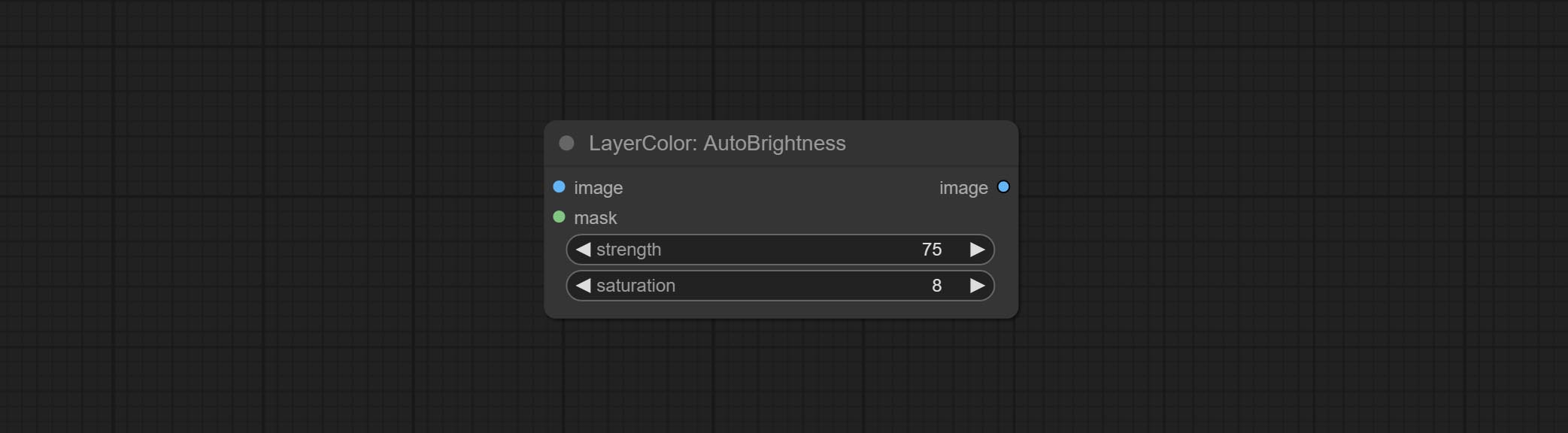

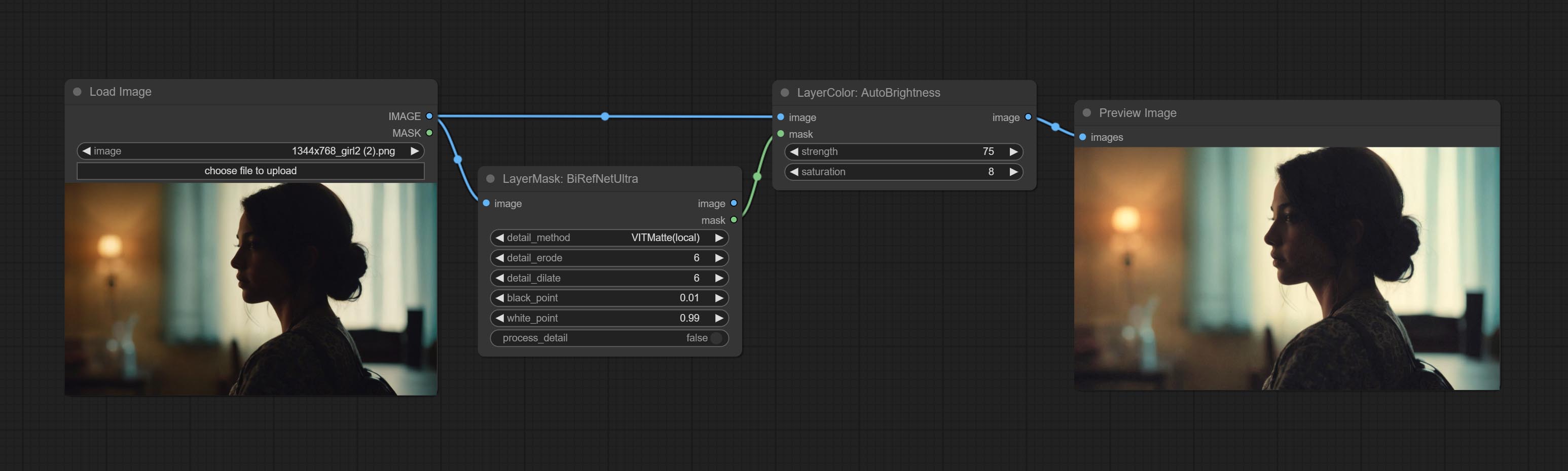

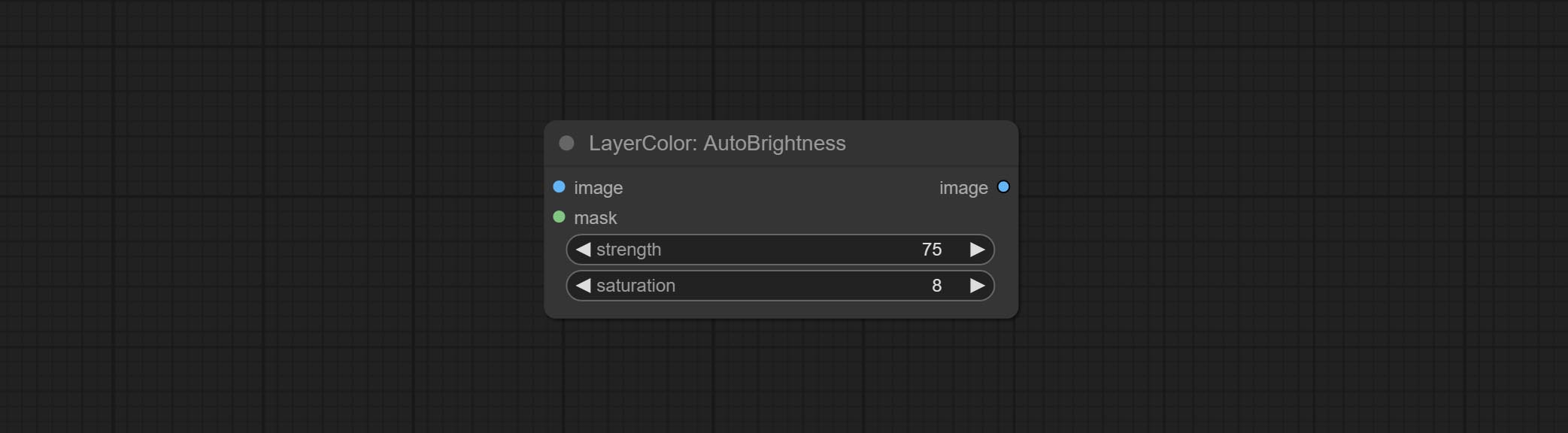

Commit the AutoBrightness node, which automatically adjusts the brightness of an image.

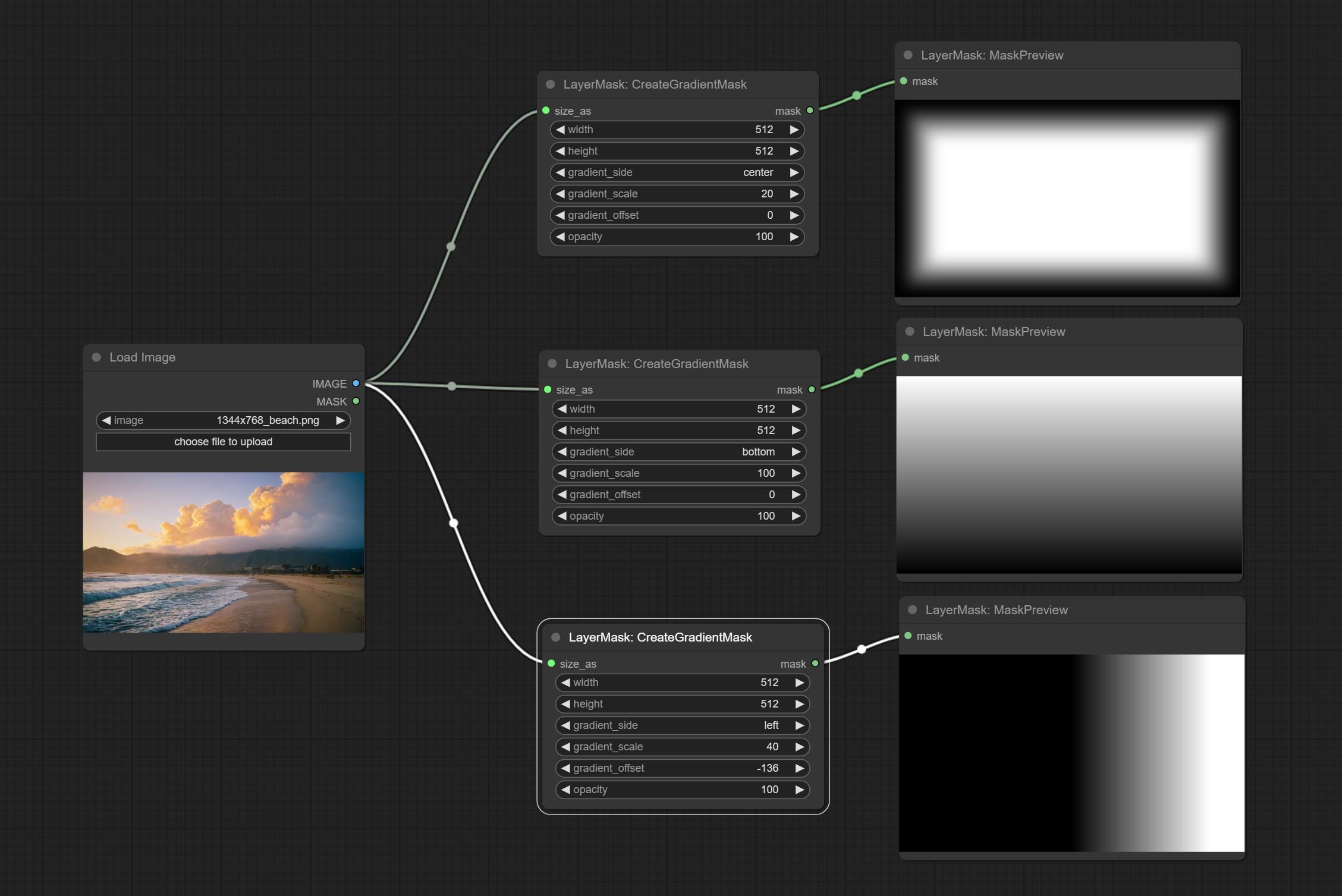

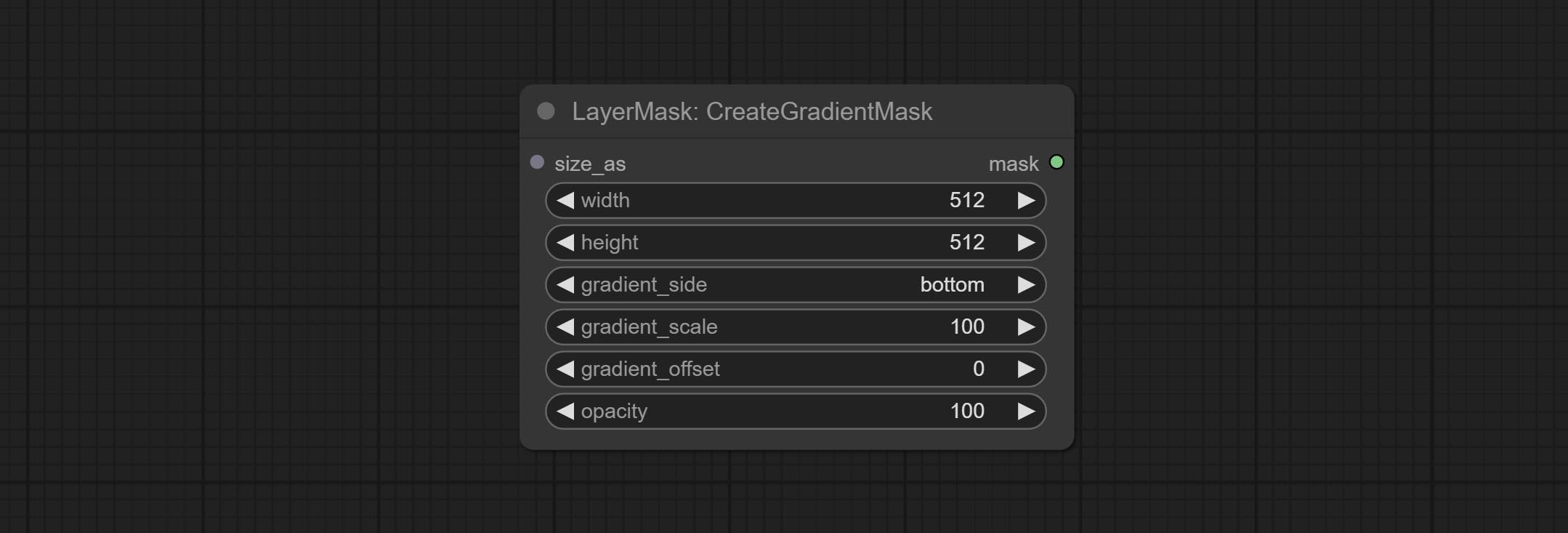

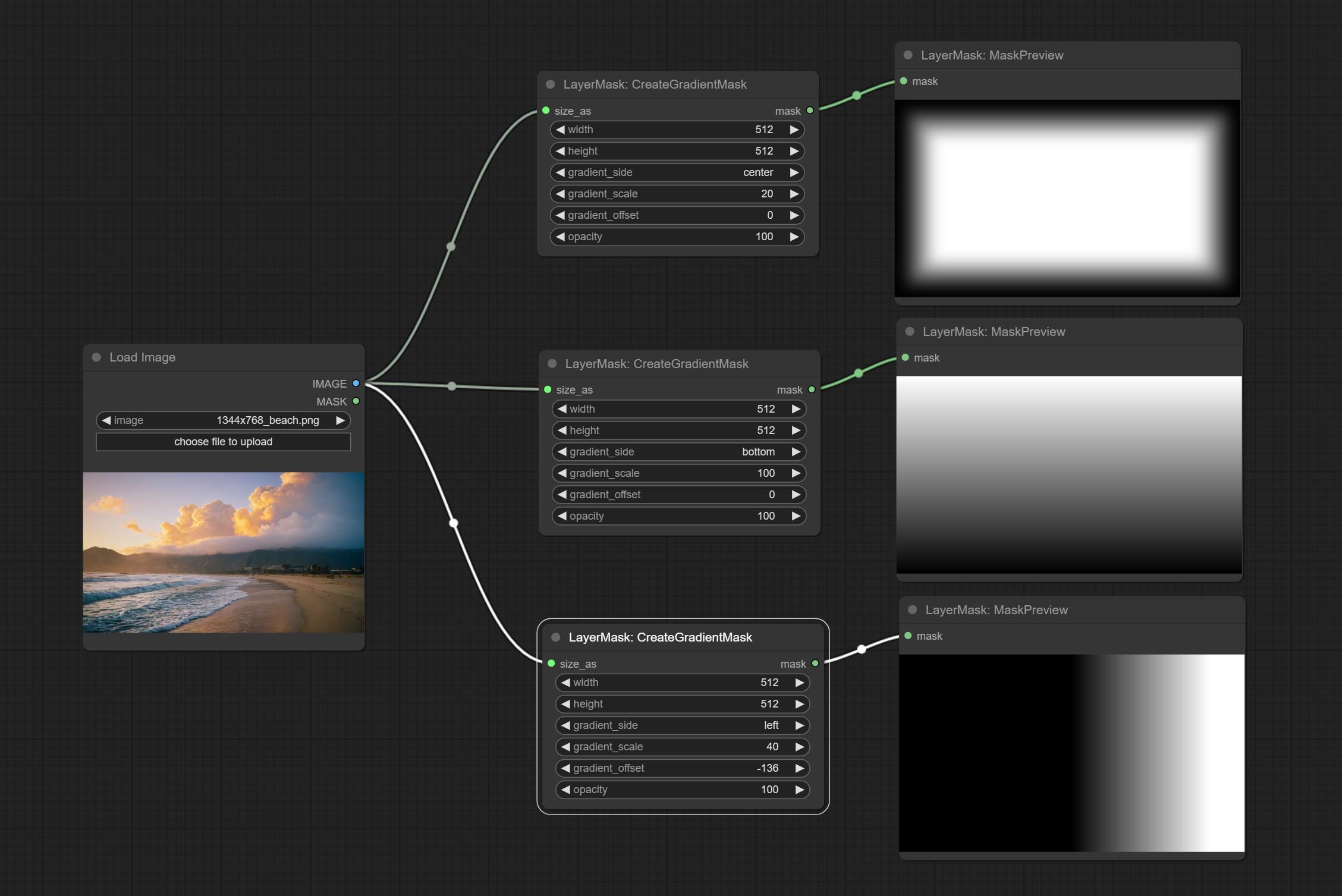

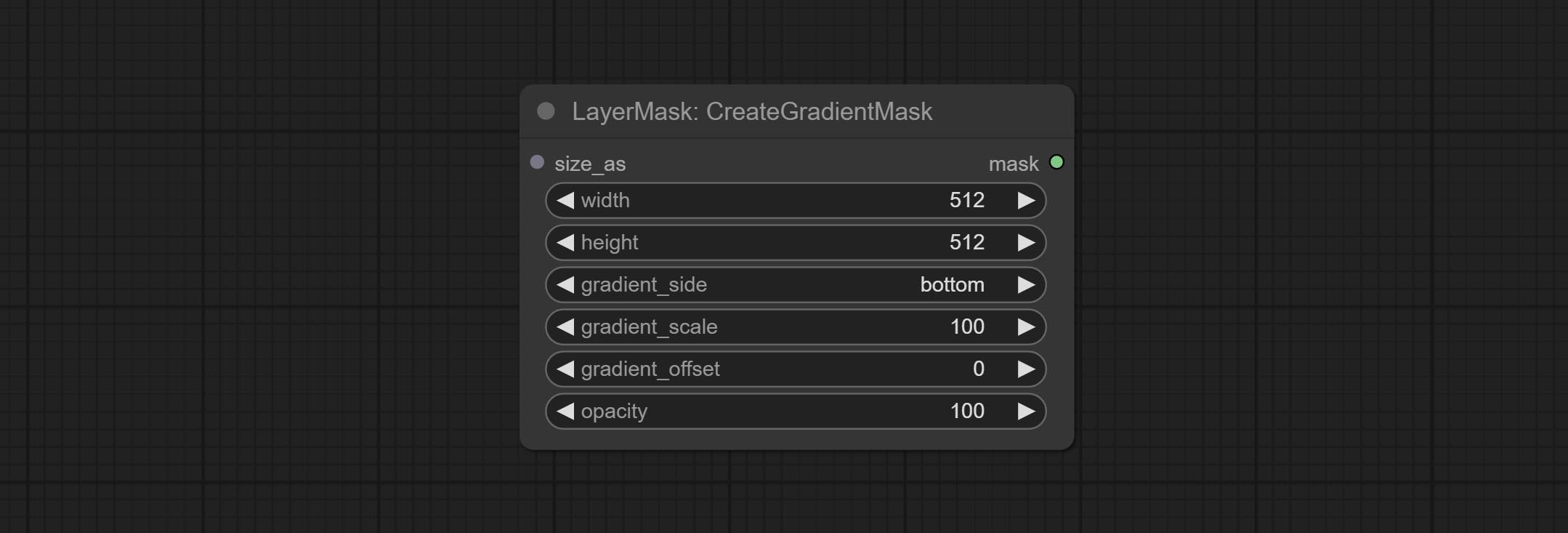

The CreateGradientMask node adds the center option.

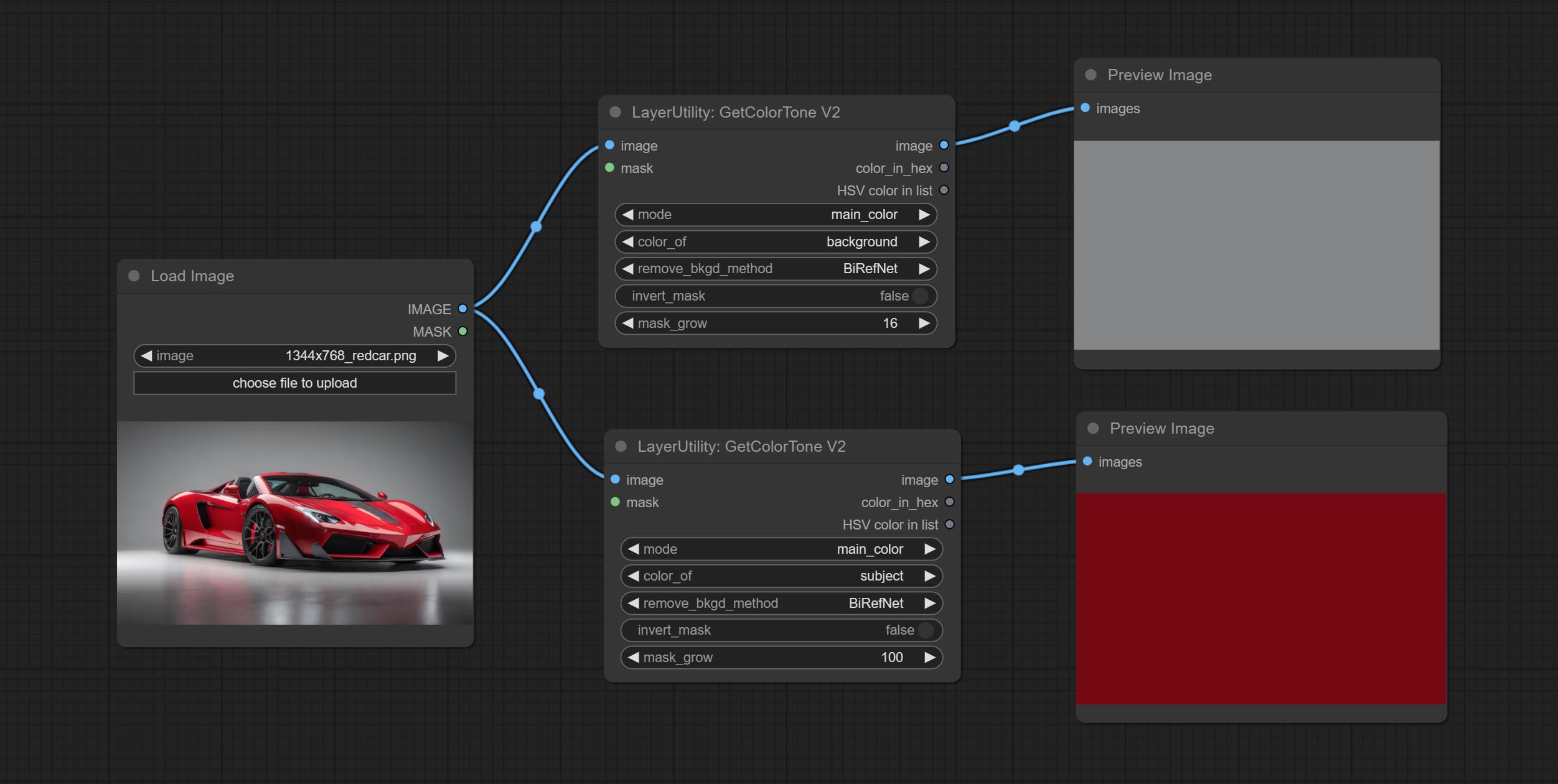

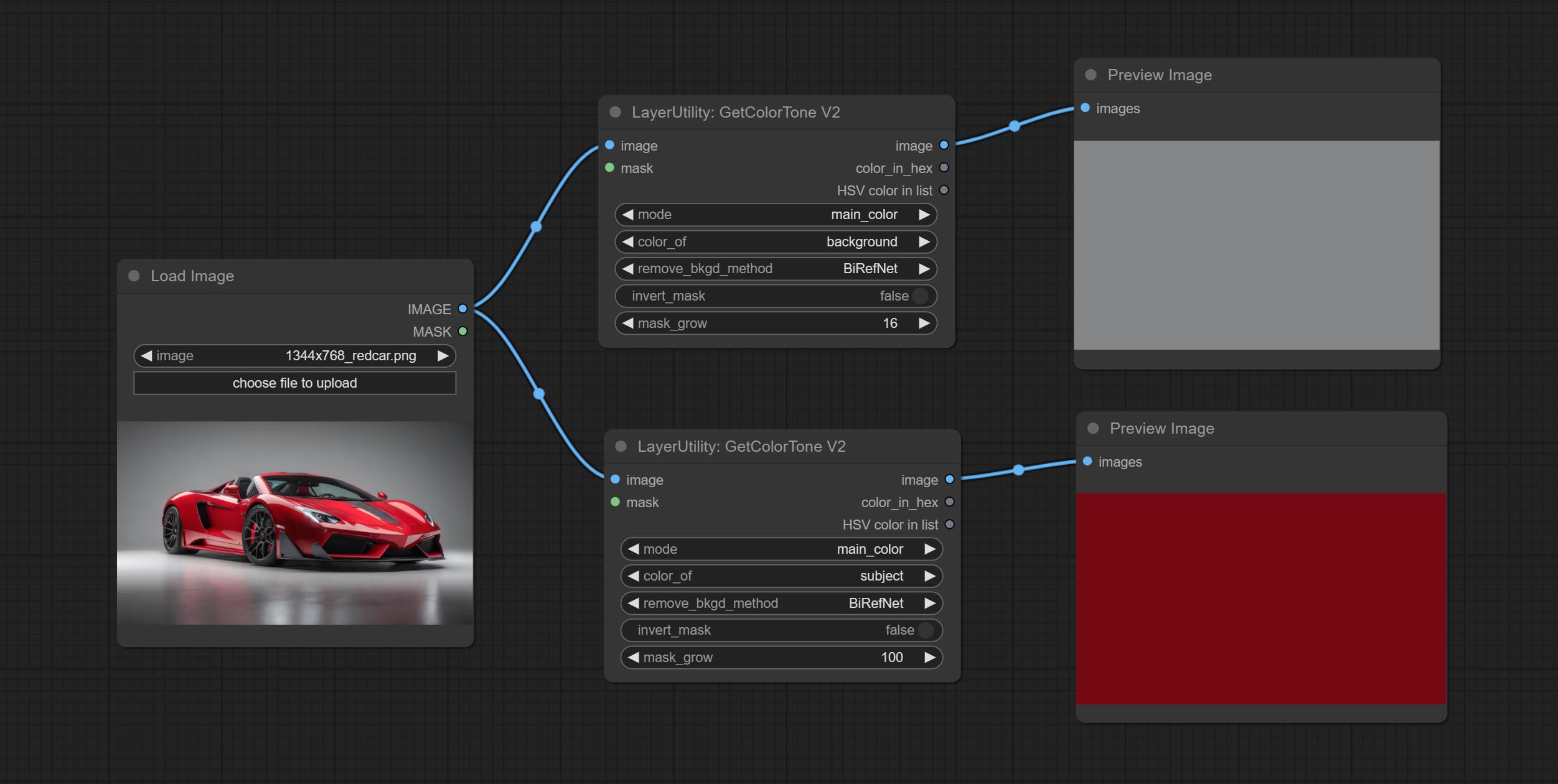

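Commit the GetColorToneV2 node, which can select the main and average colors of the background or the body.

Commit the ImageRewardFilter node, which can filter out poor-quality images.

The Ultra nodes add the VITMatte(local) method. If you have already downloaded the model, you can choose this method to avoid accessing huggingface.co.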

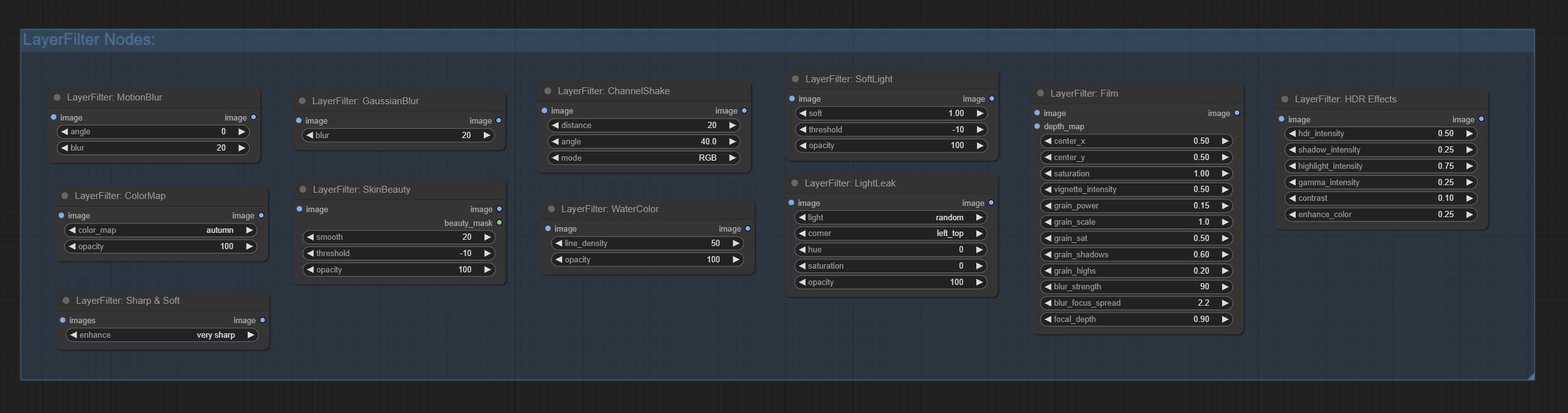

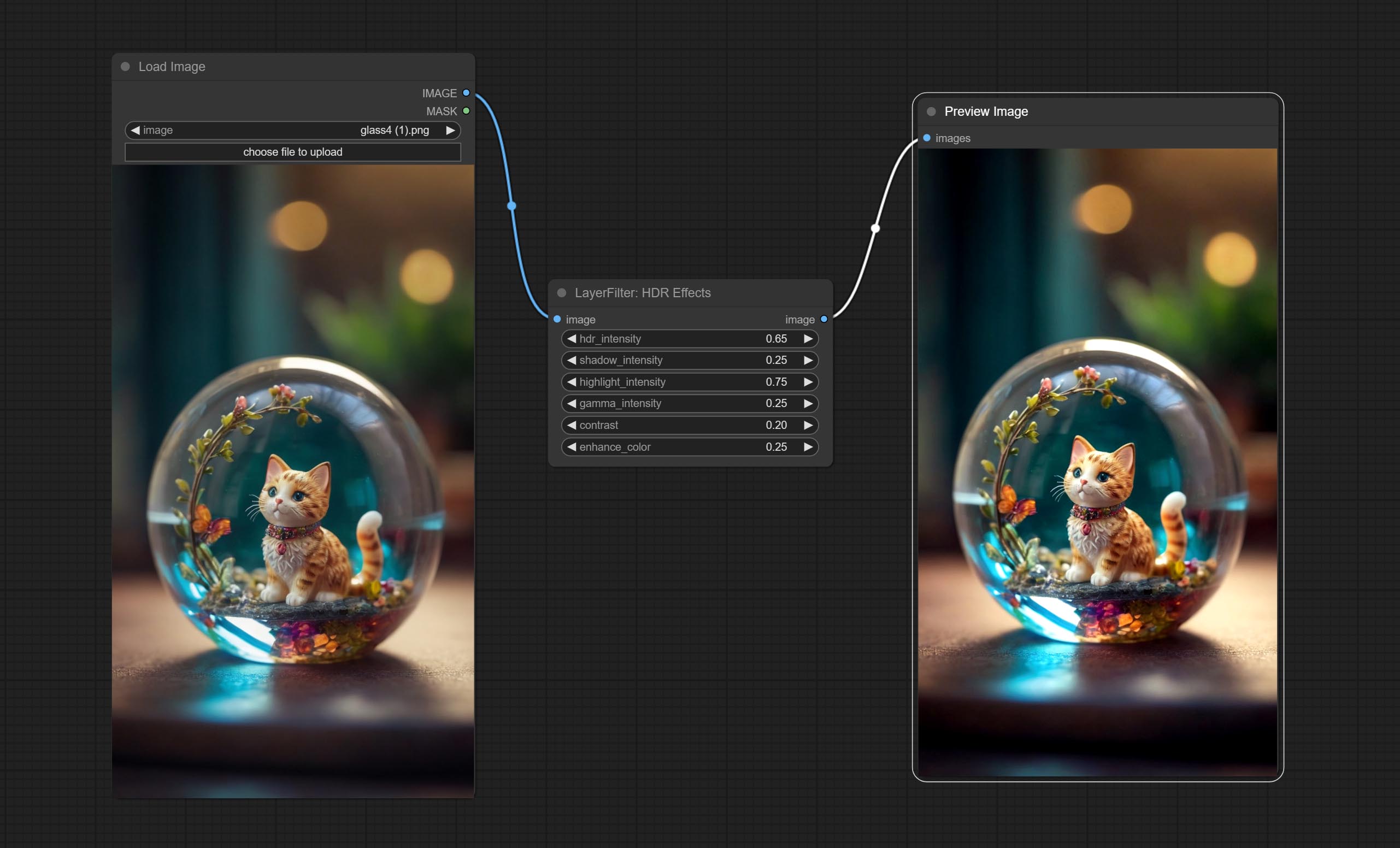

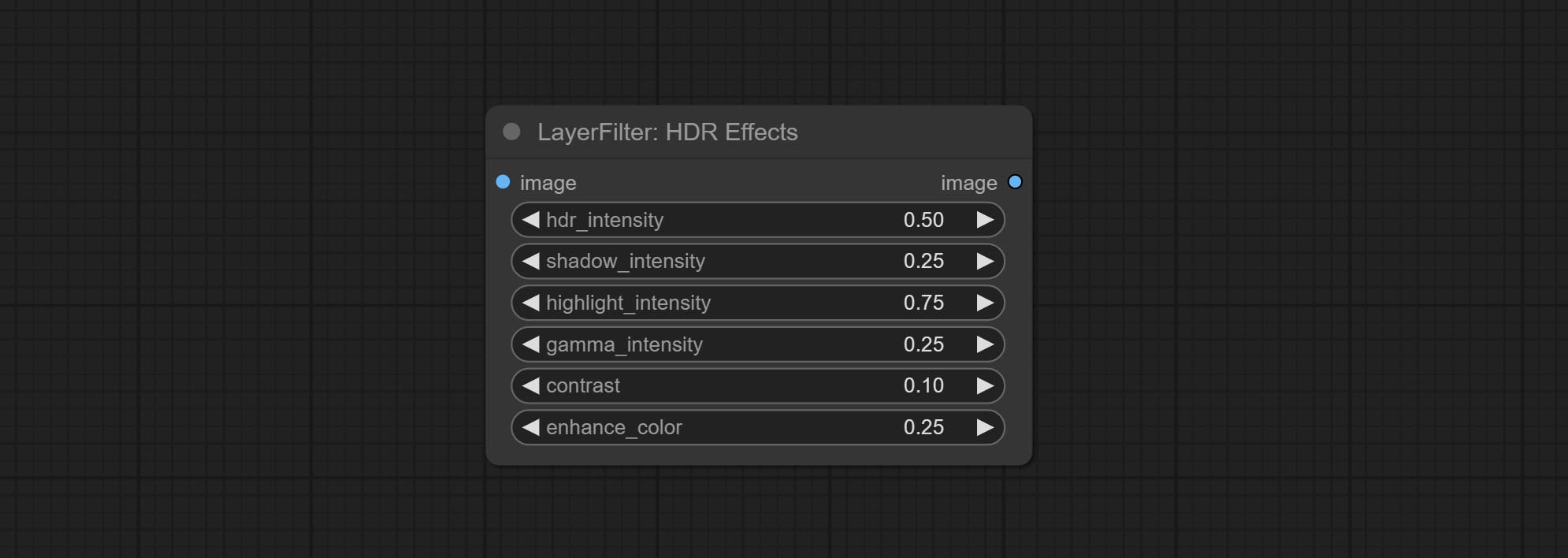

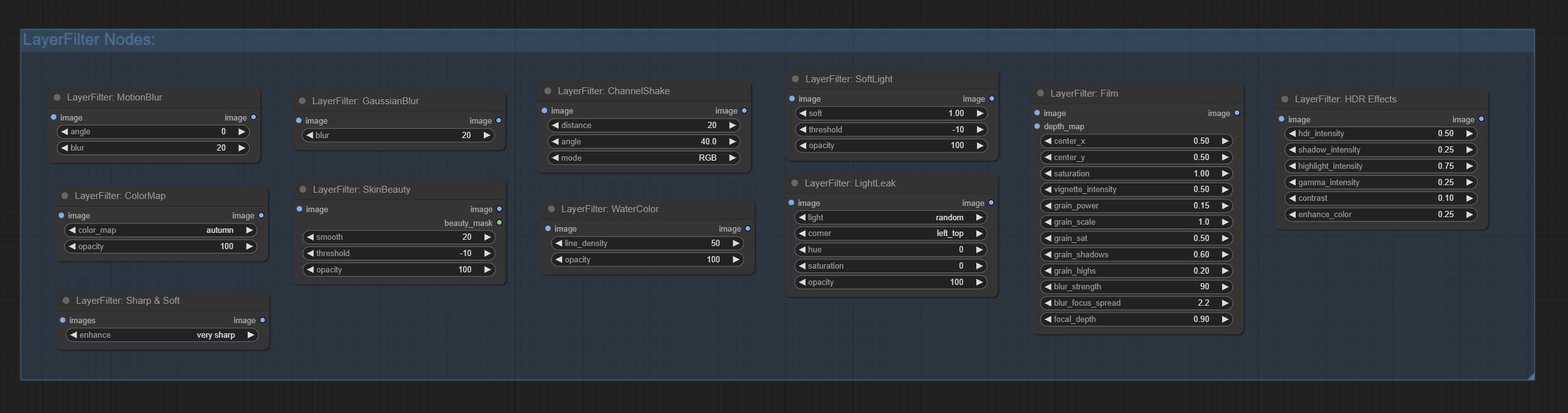

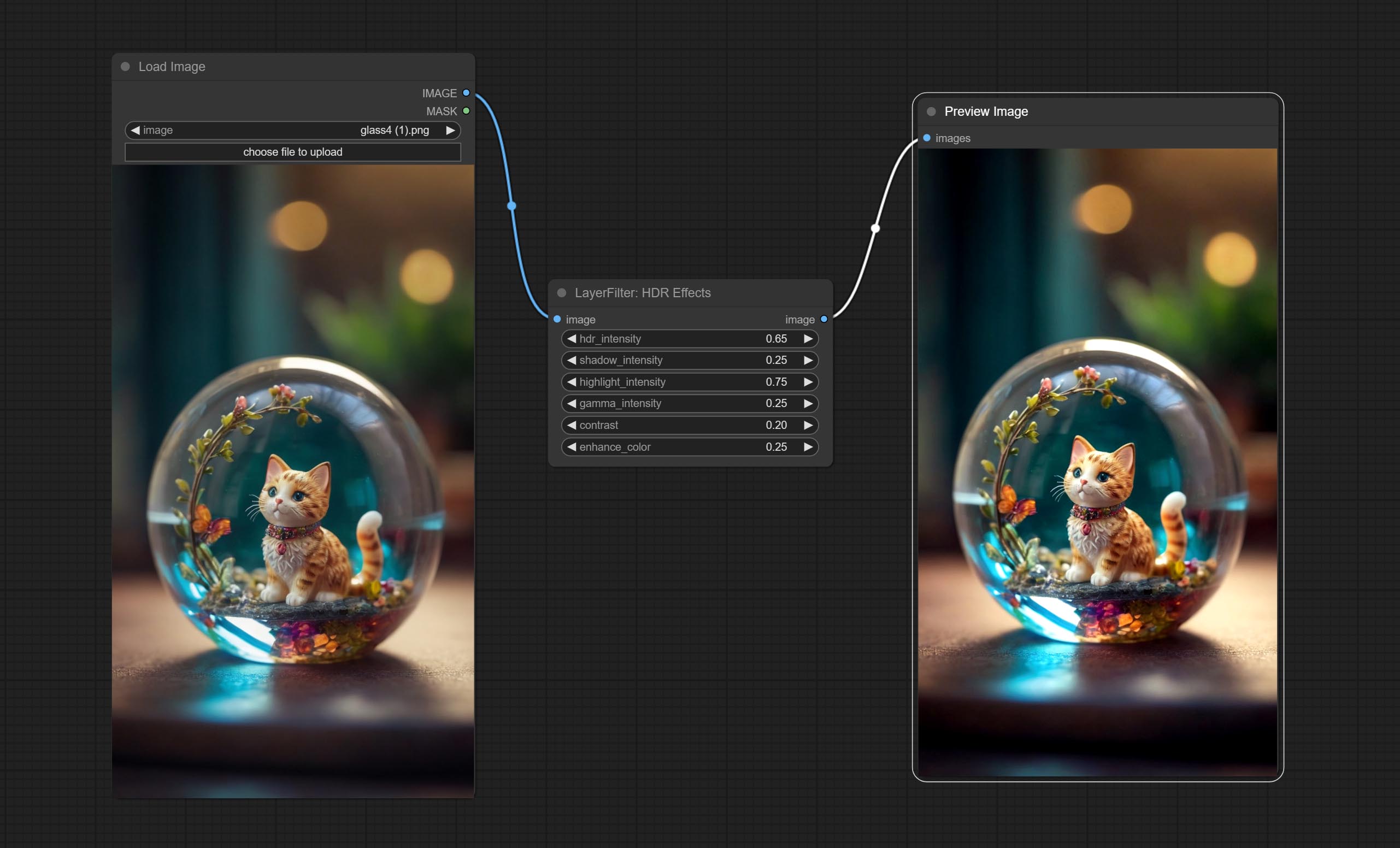

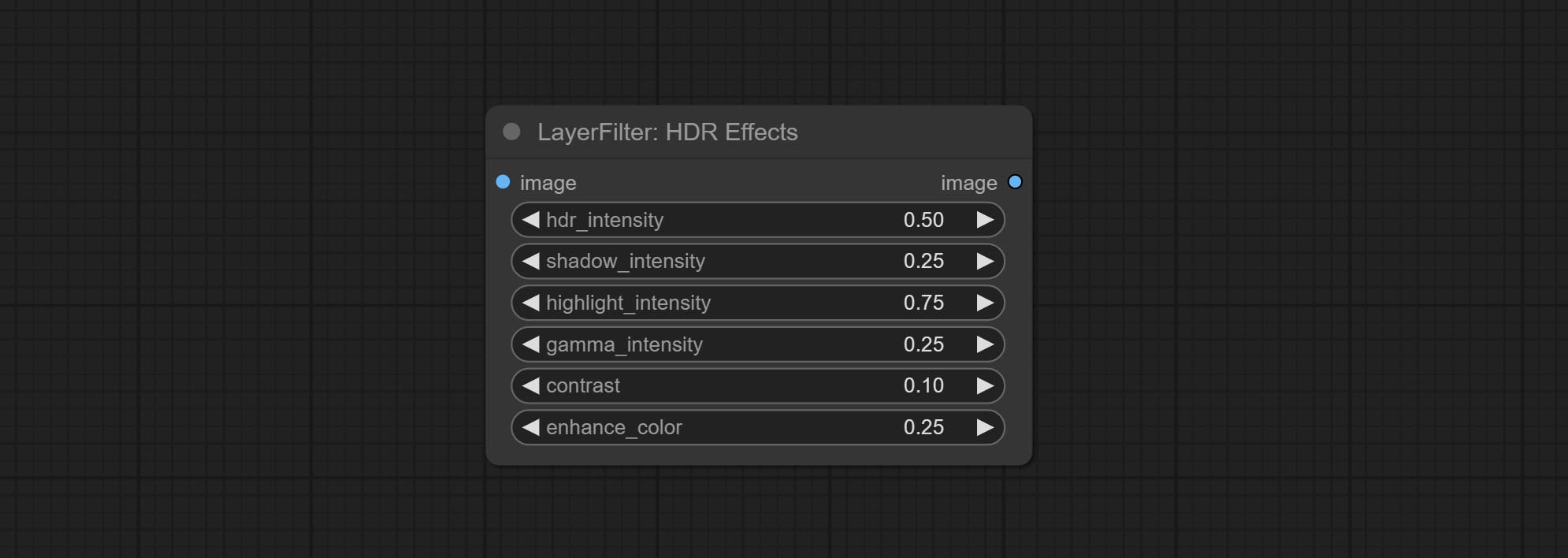

Commit the HDR Effect node, which enhances the dynamic range and visual appeal of the input image. This node is a repackaging of HDR Effects (SuperBeasts.AI).

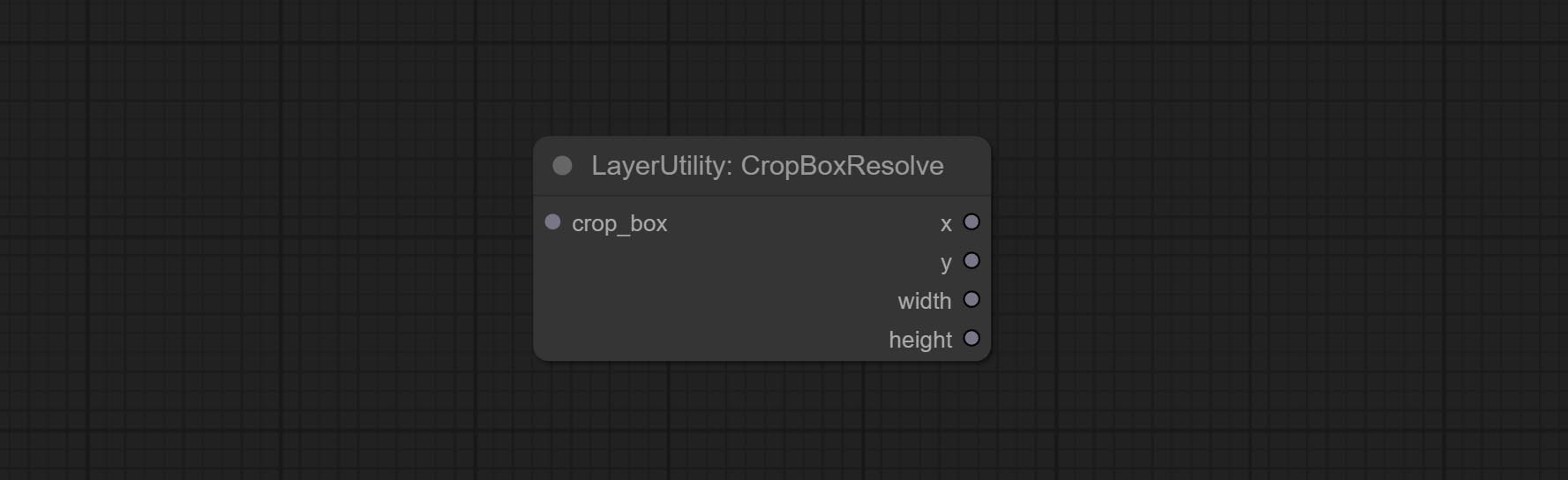

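Commit the CropBoxResolve node.

Commit the BiRefNetUltra node, which uses the BiRefNet model to remove backgrounds, with better recognition ability and ultra-high edge detail.

Commit the ImageAutoCropV2 node, which can choose not to remove the background, supports mask input, and can scale by the long or short side size.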

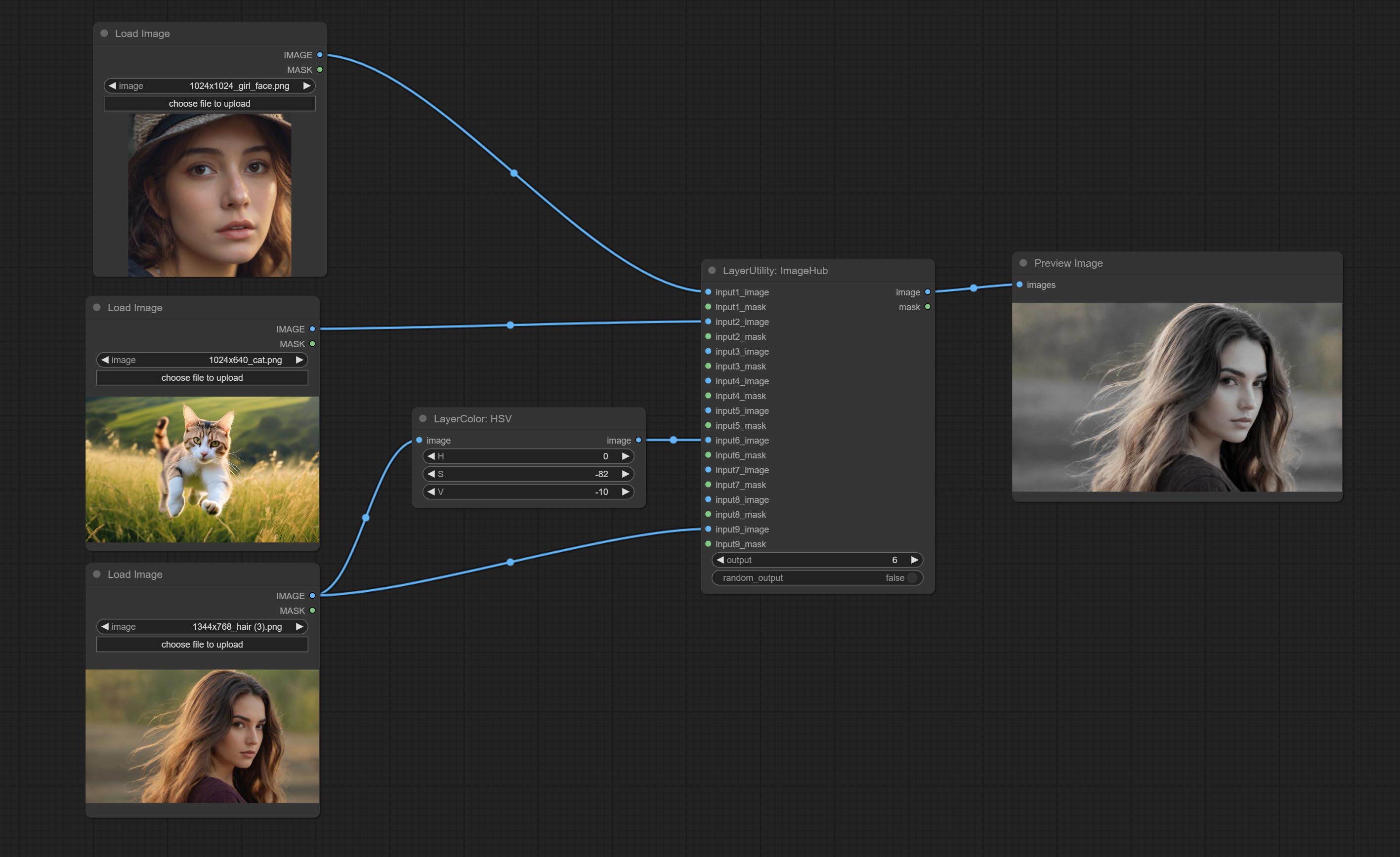

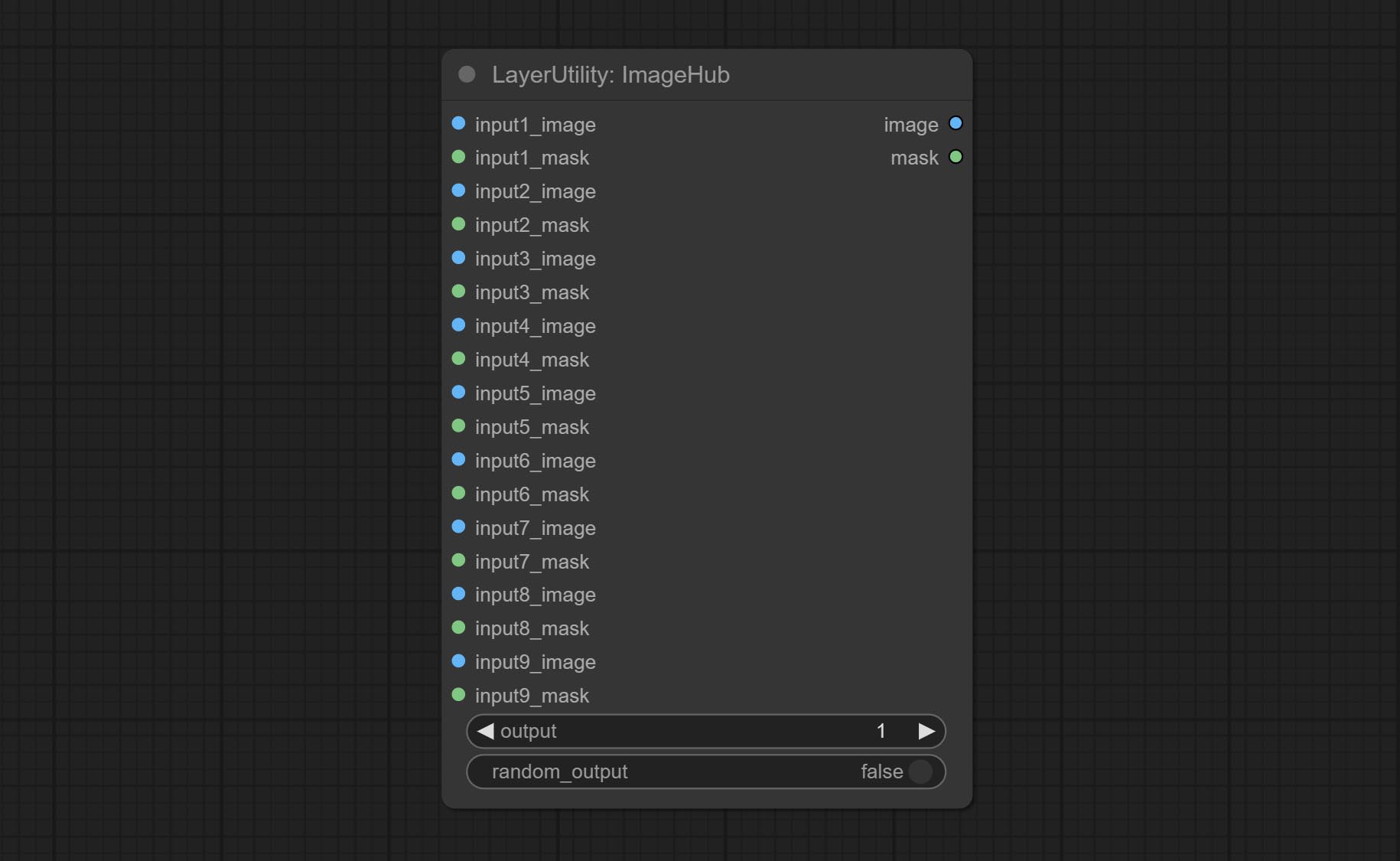

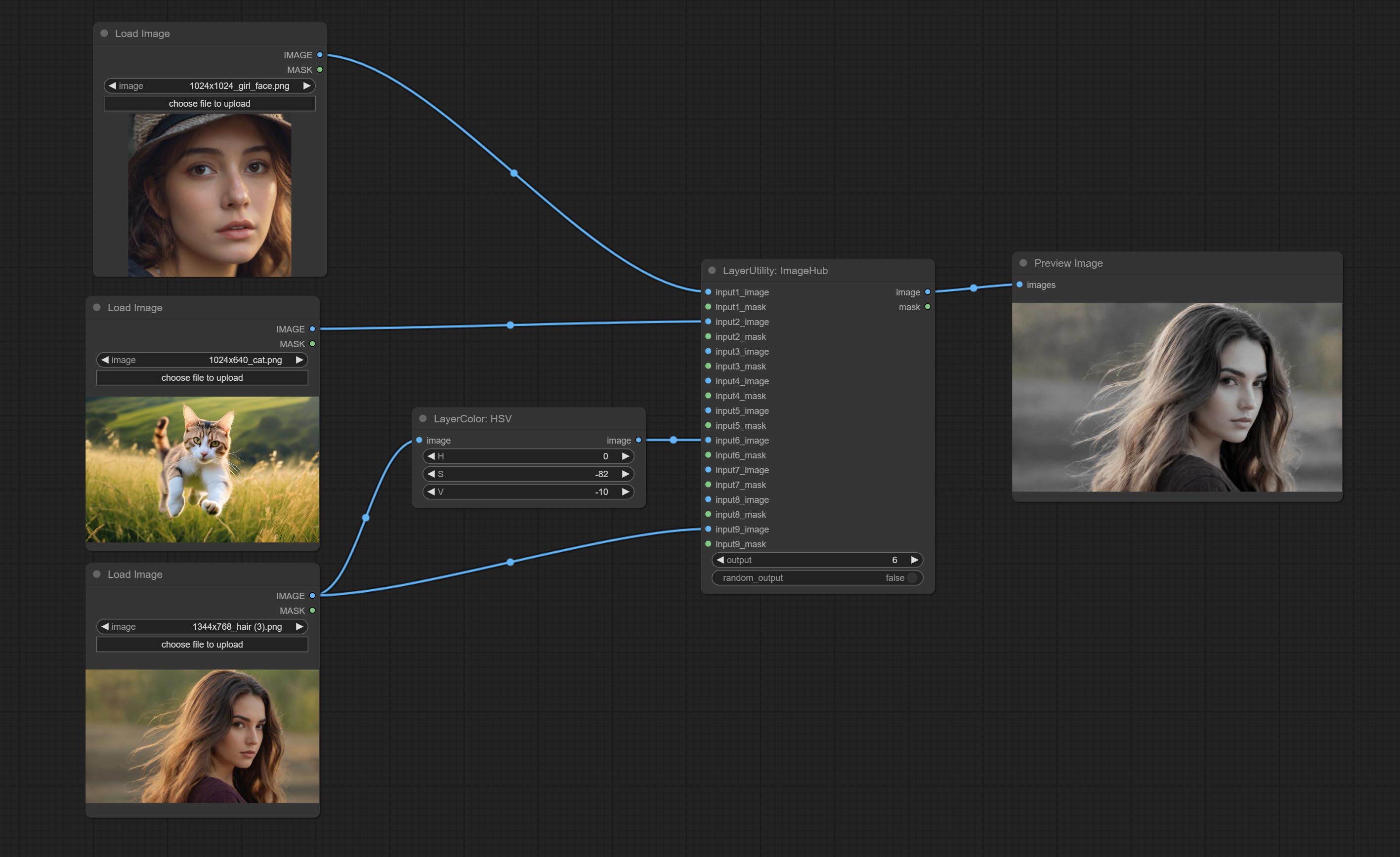

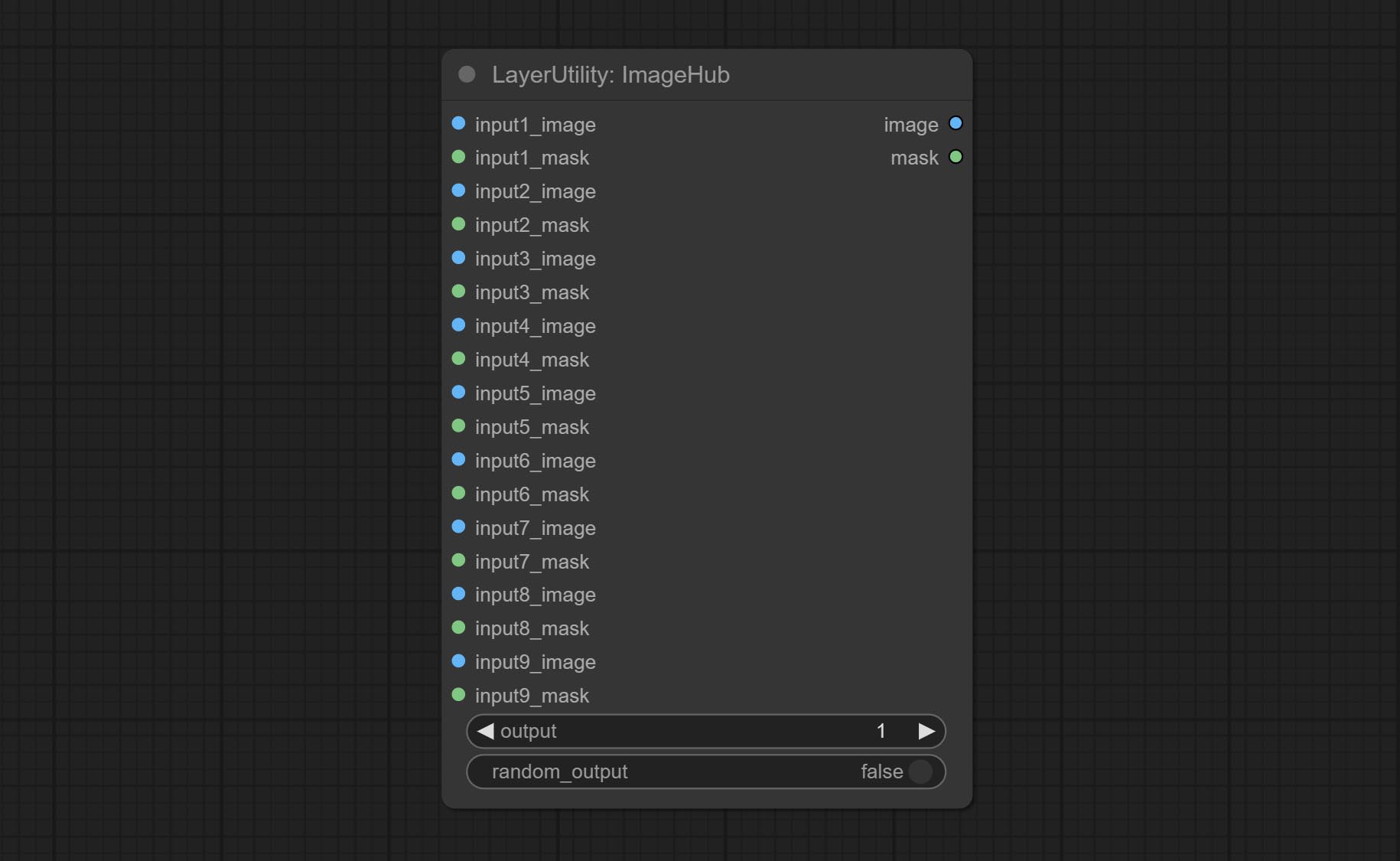

Commit the ImageHub node, which supports switching output among up to 9 sets of images and masks, and supports random output.

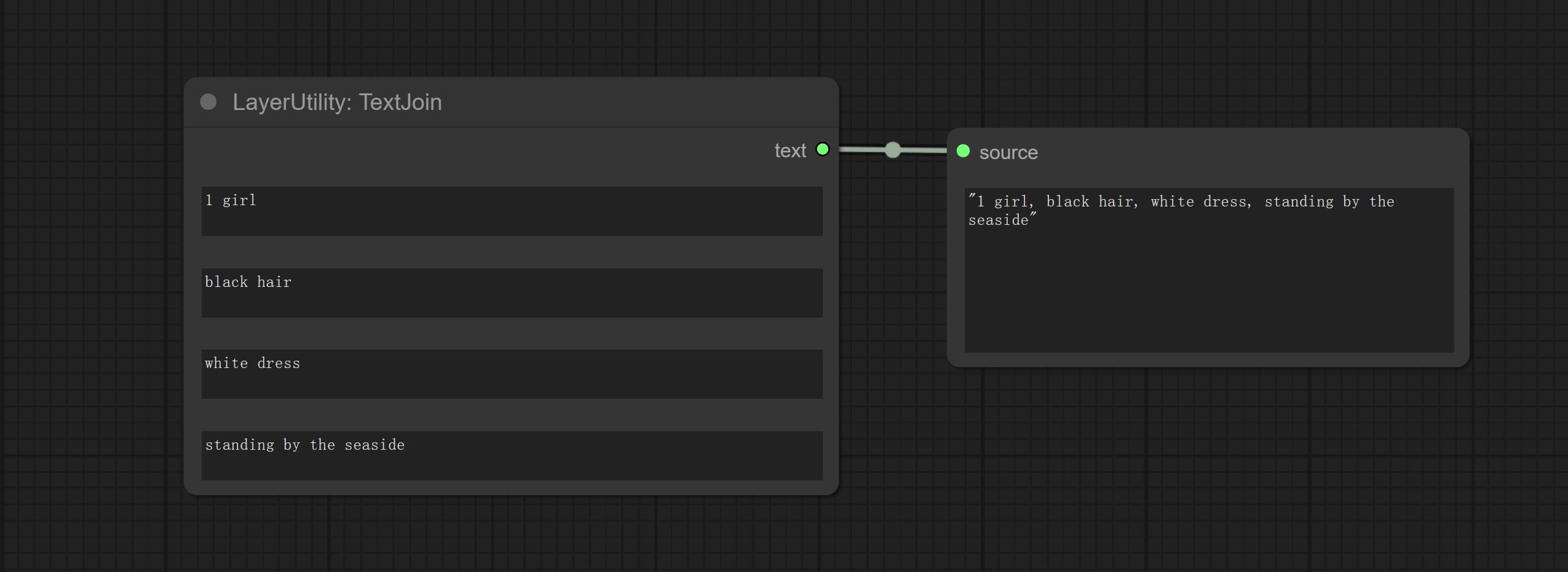

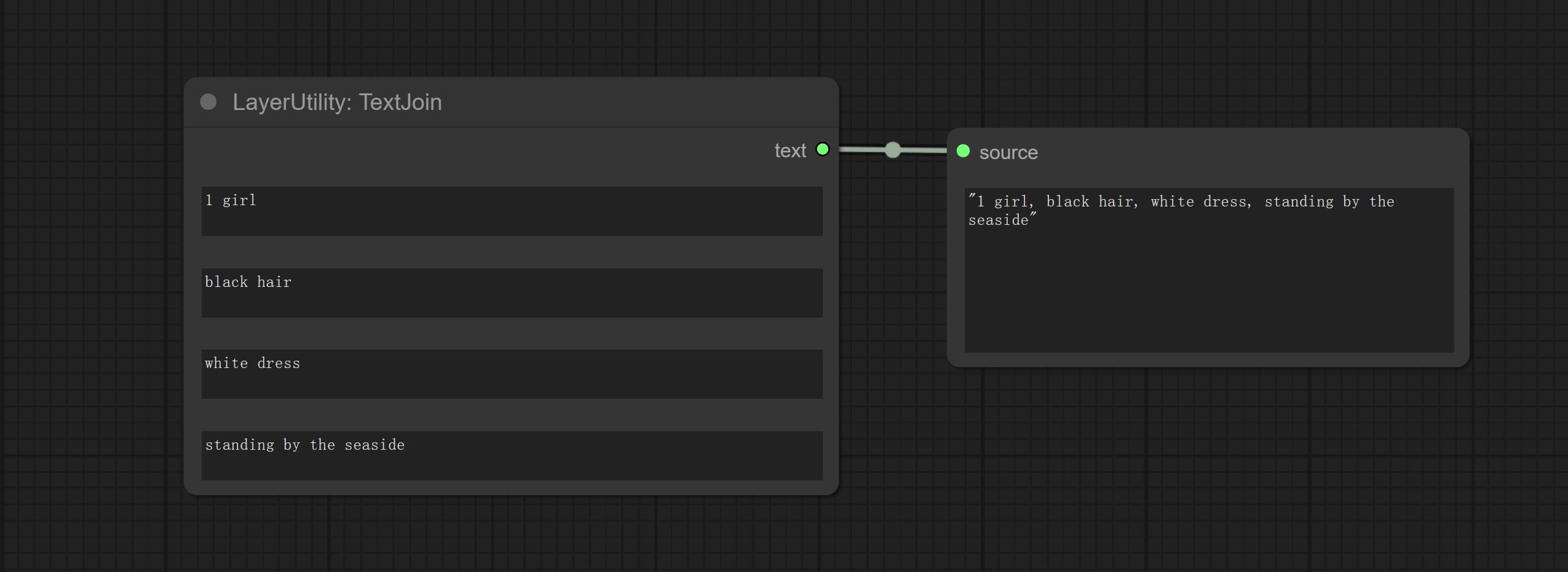

Commit the TextJoin node.

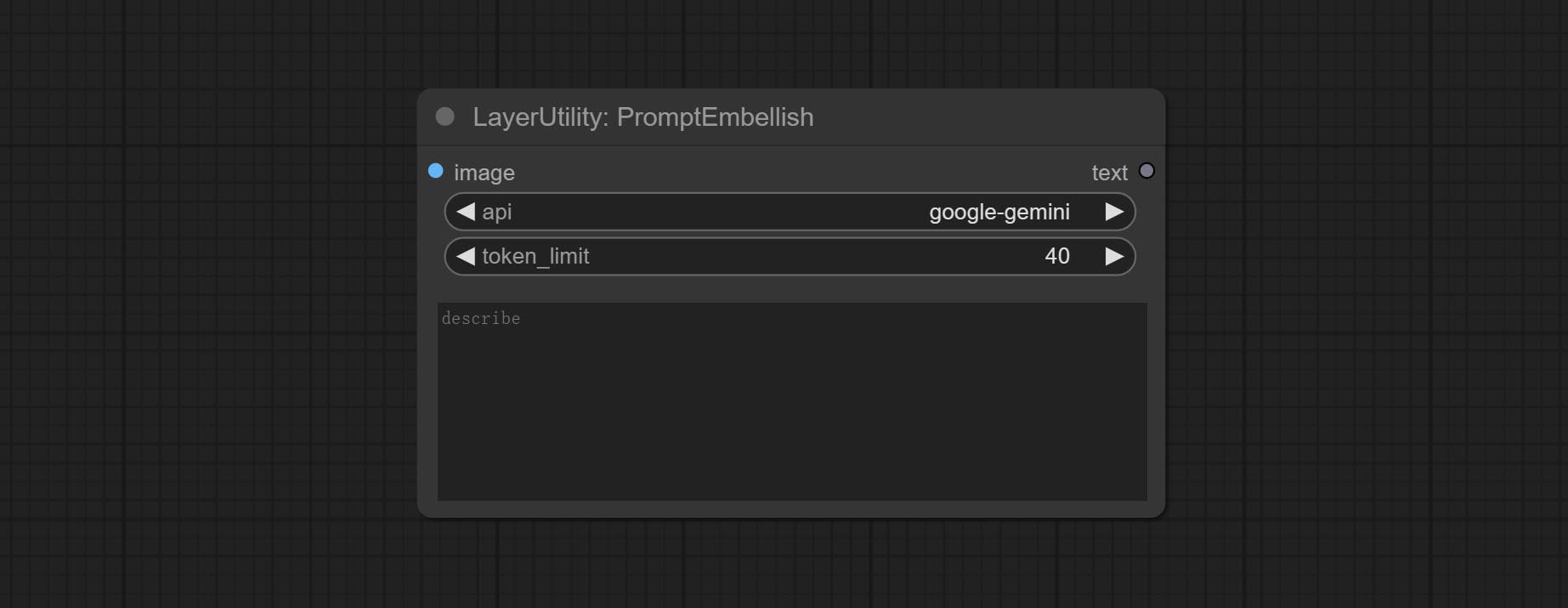

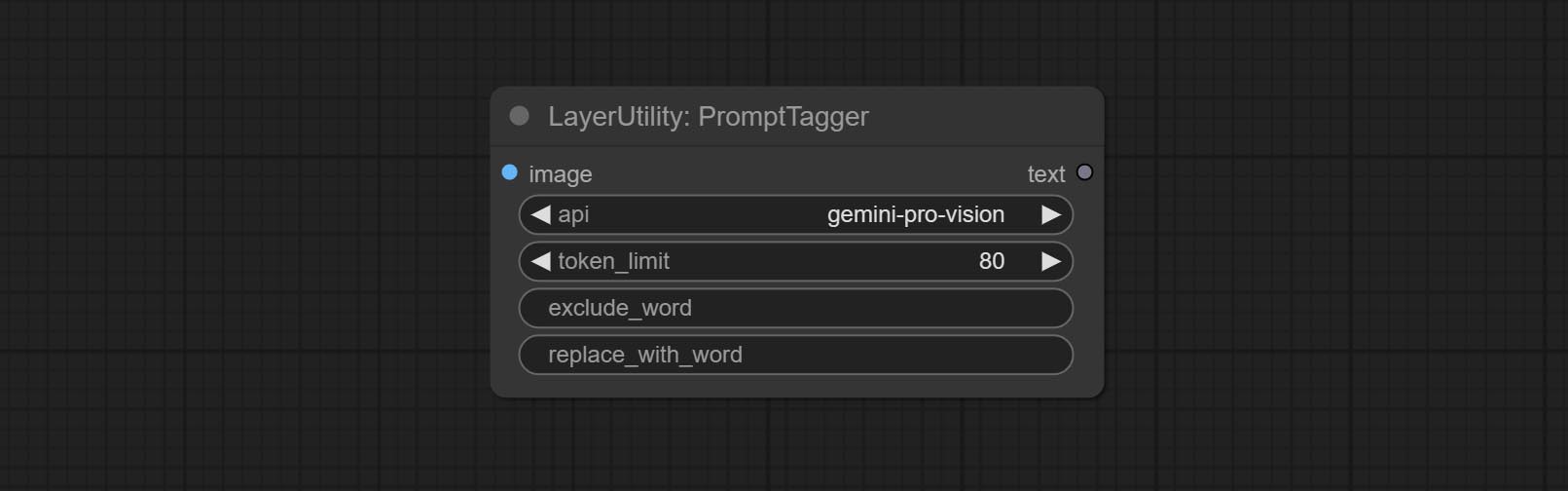

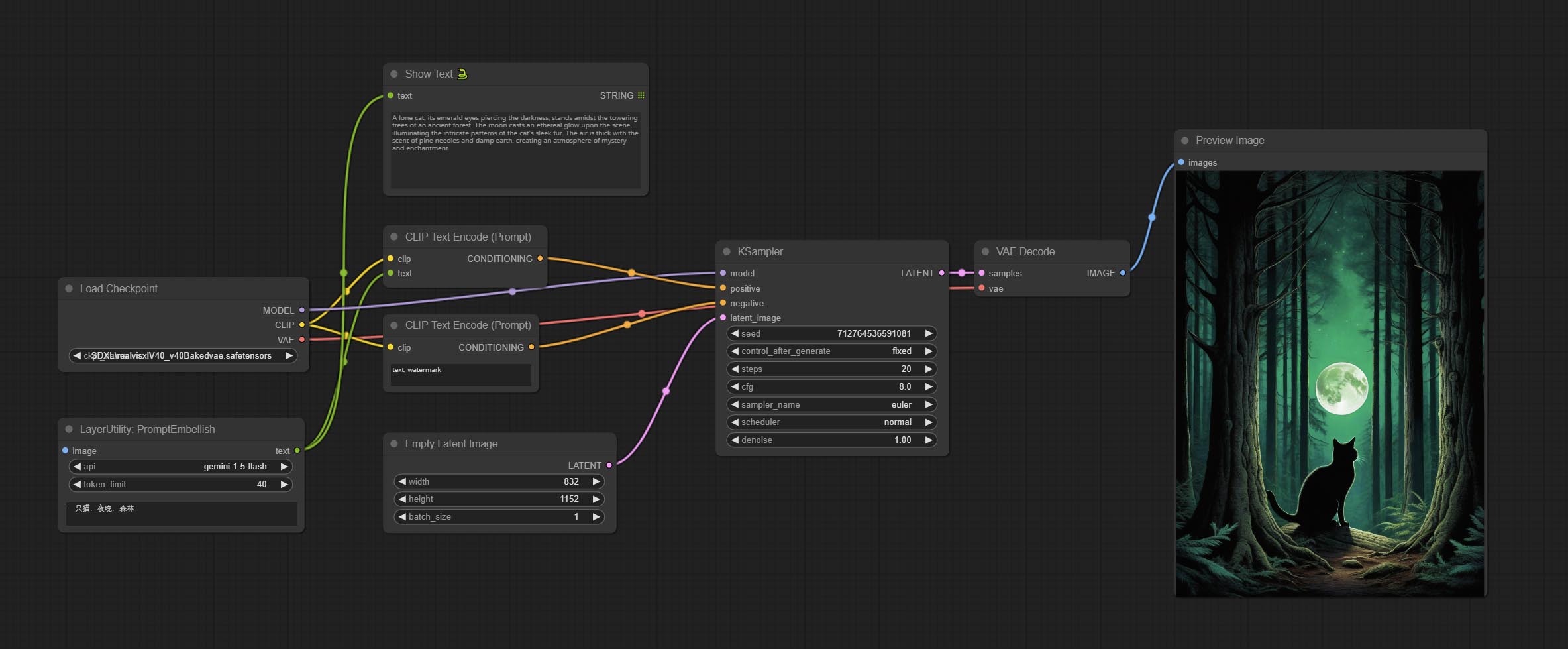

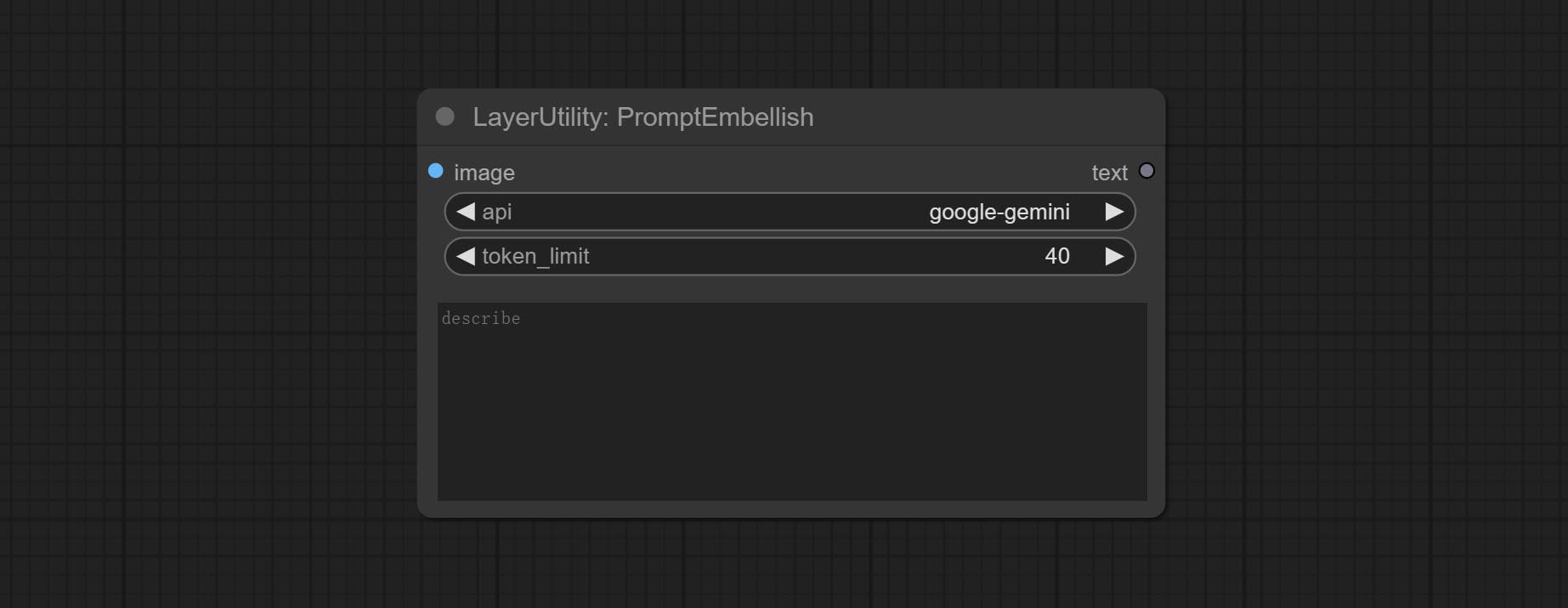

Commit the PromptEmbellish node, which outputs polished prompt words and supports images as reference input.

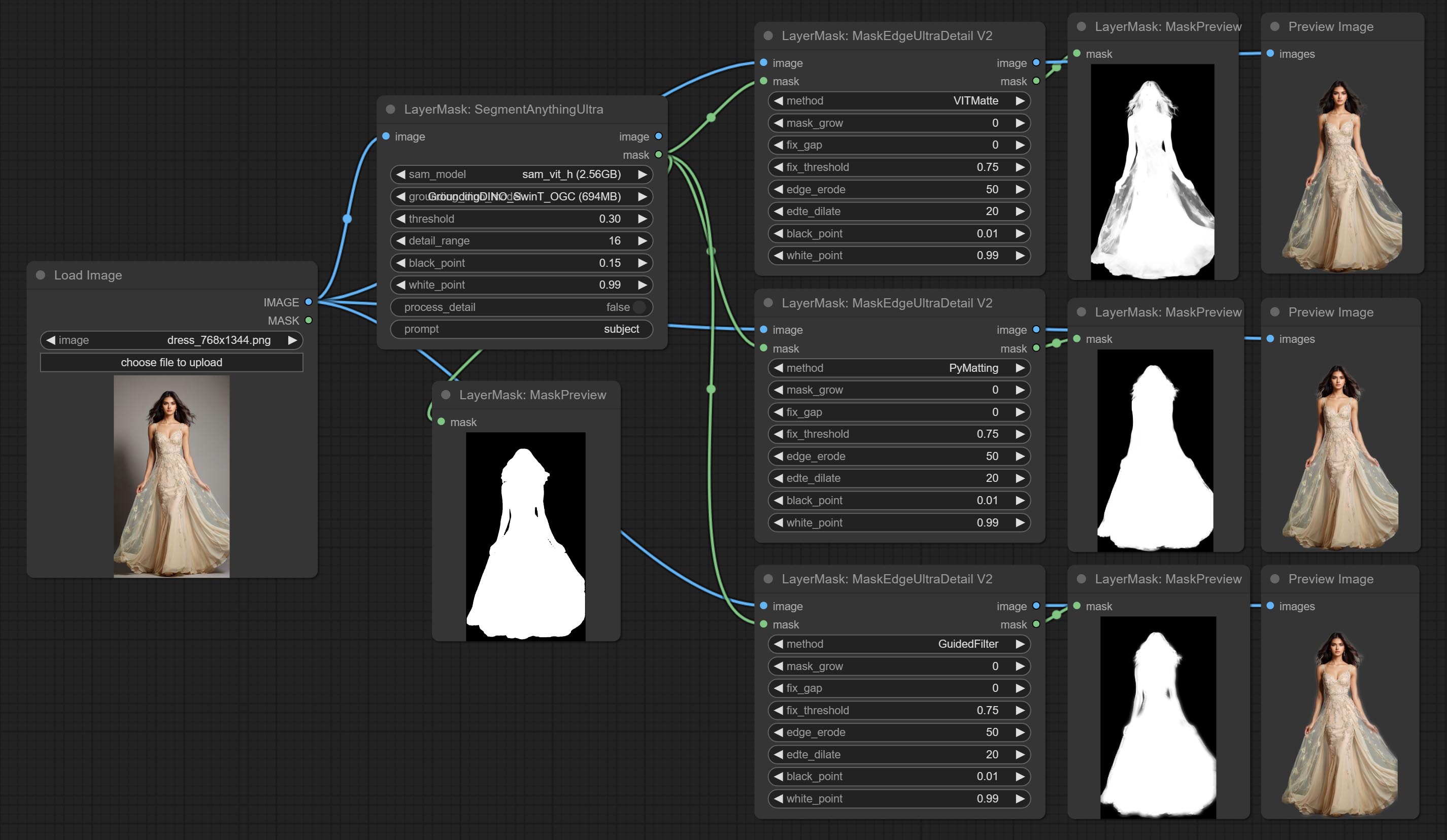

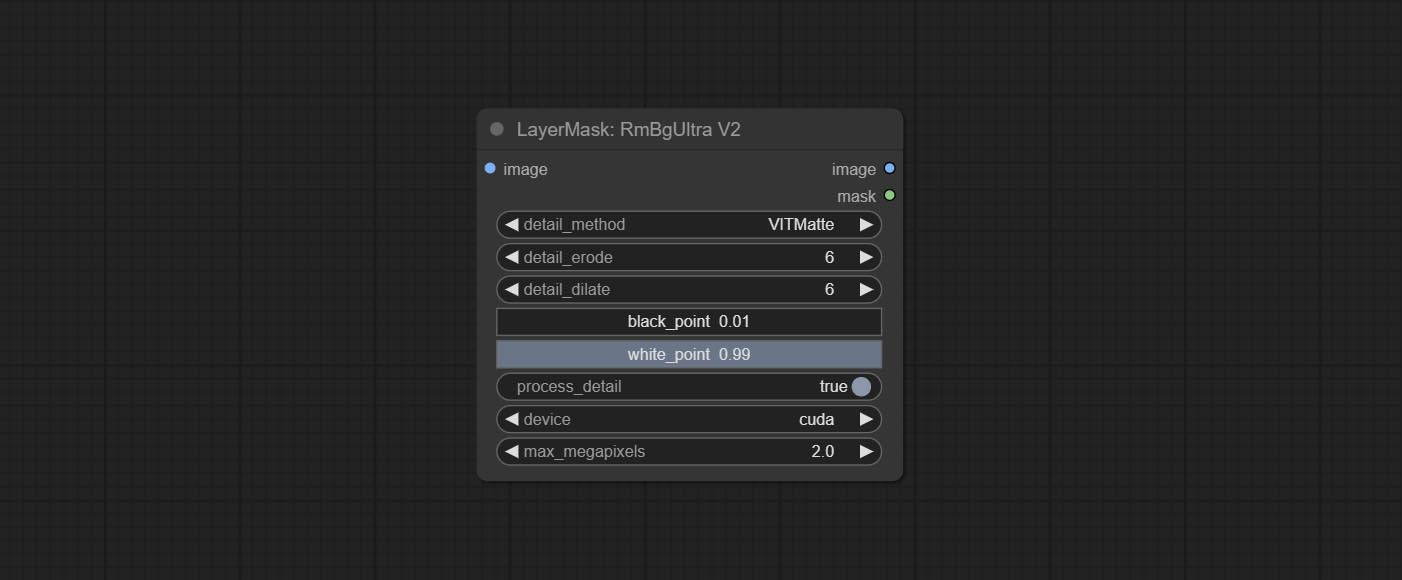

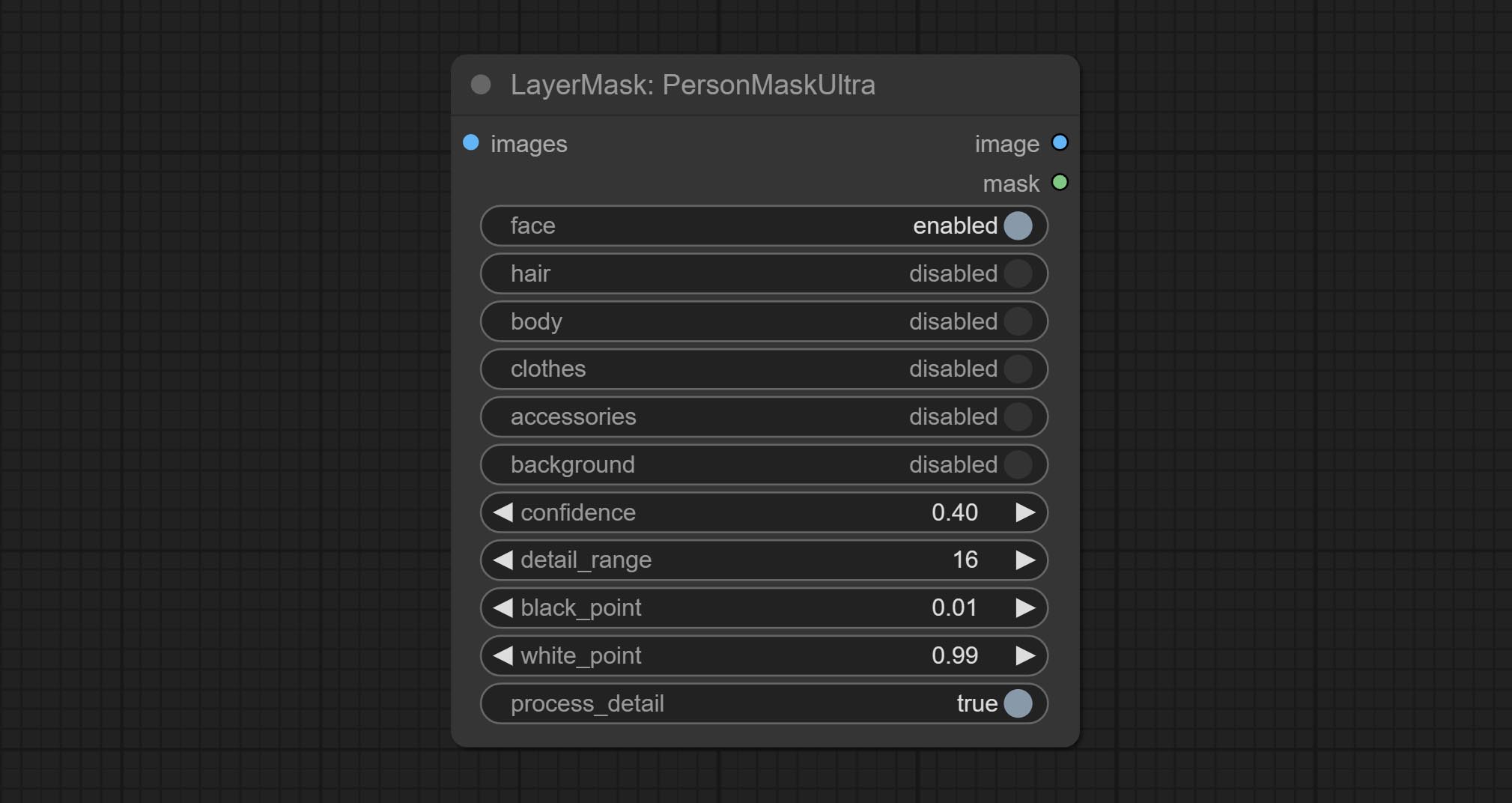

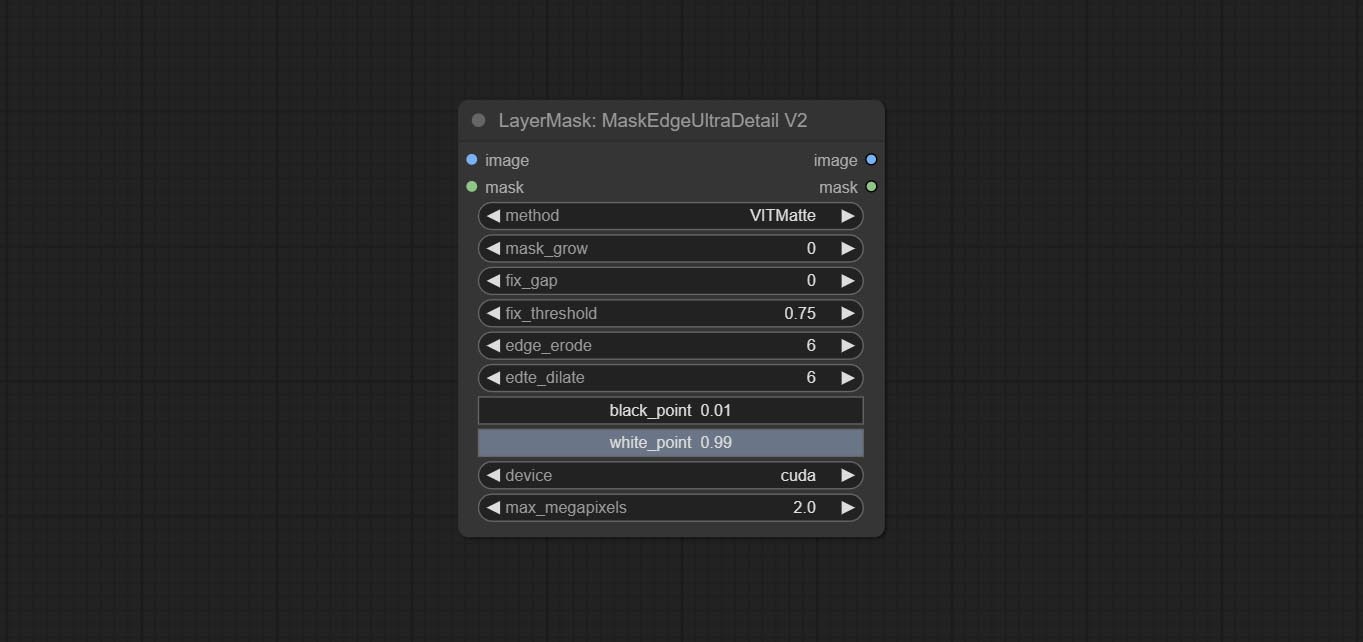

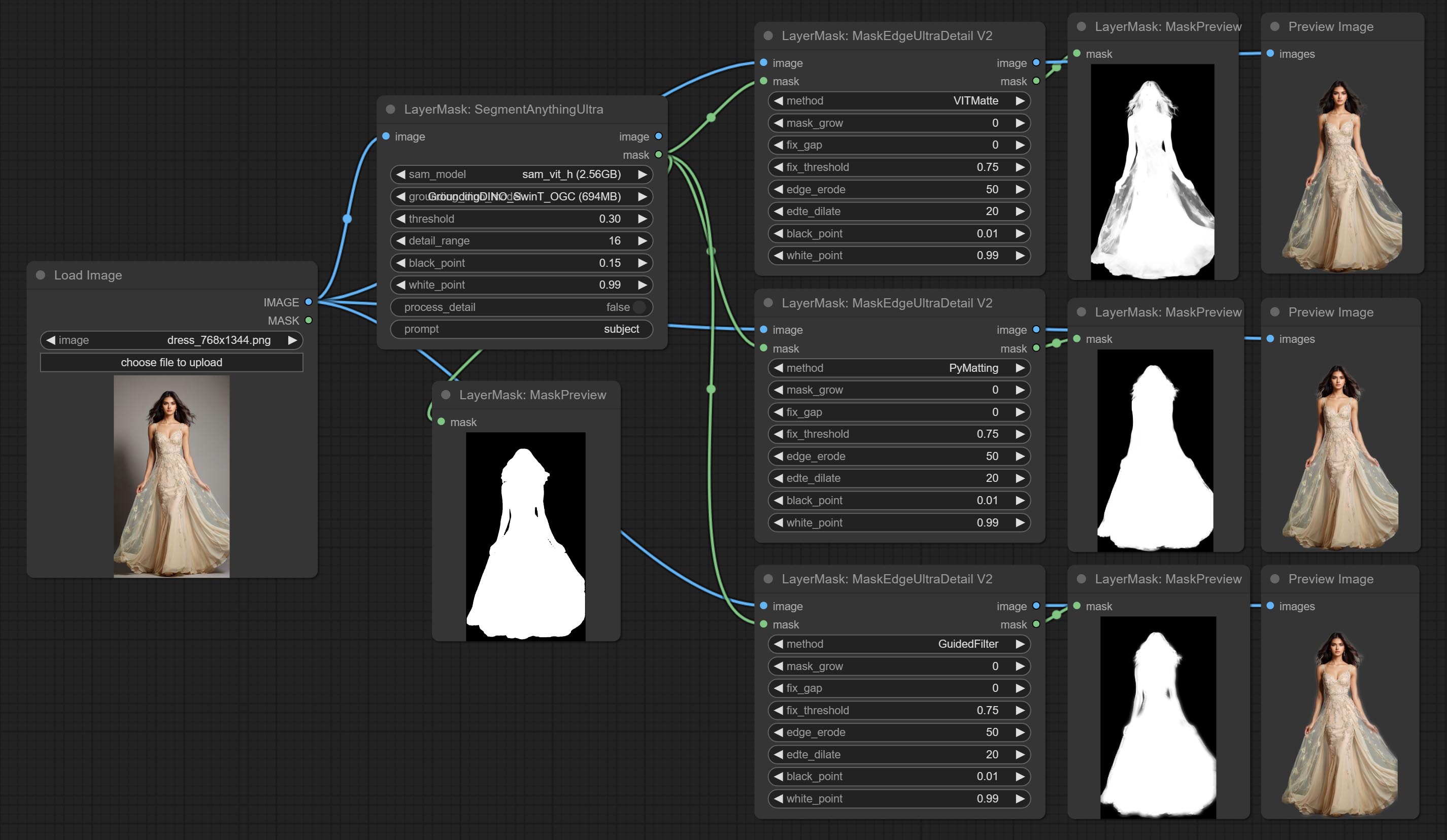

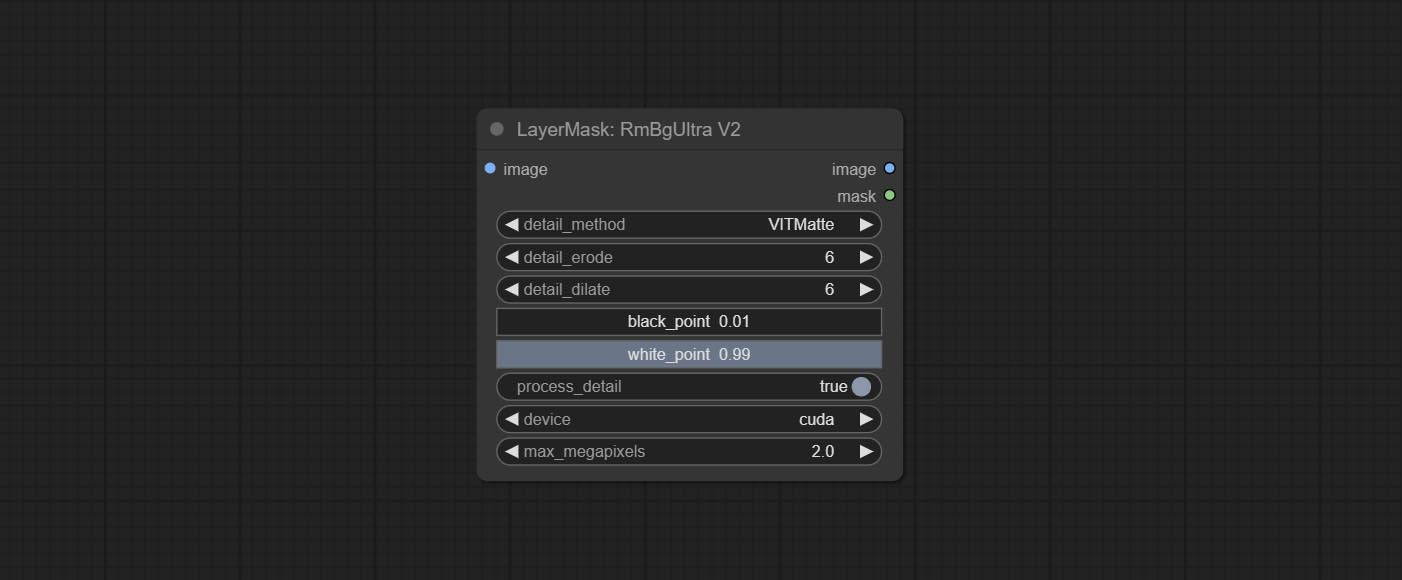

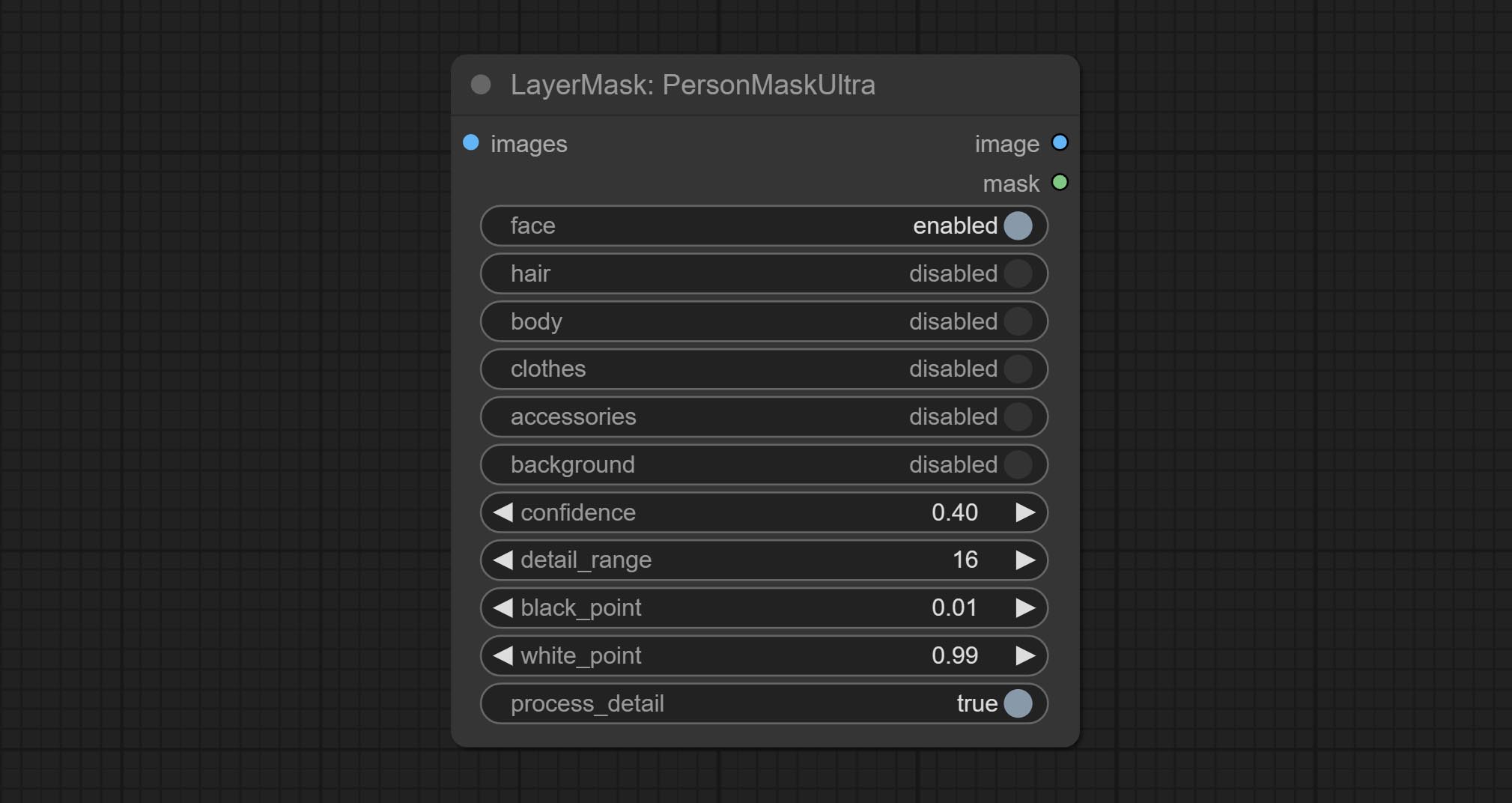

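The Ultra nodes have been fully upgraded to the V2 version with the addition of the VITMatte edge processing method, which is suitable for handling semi-transparent areas. This includes the MaskEdgeUltraDetailV2, SegmentAnythingUltraV2, RmBgUltraV2, and PersonMaskUltraV2 nodes.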

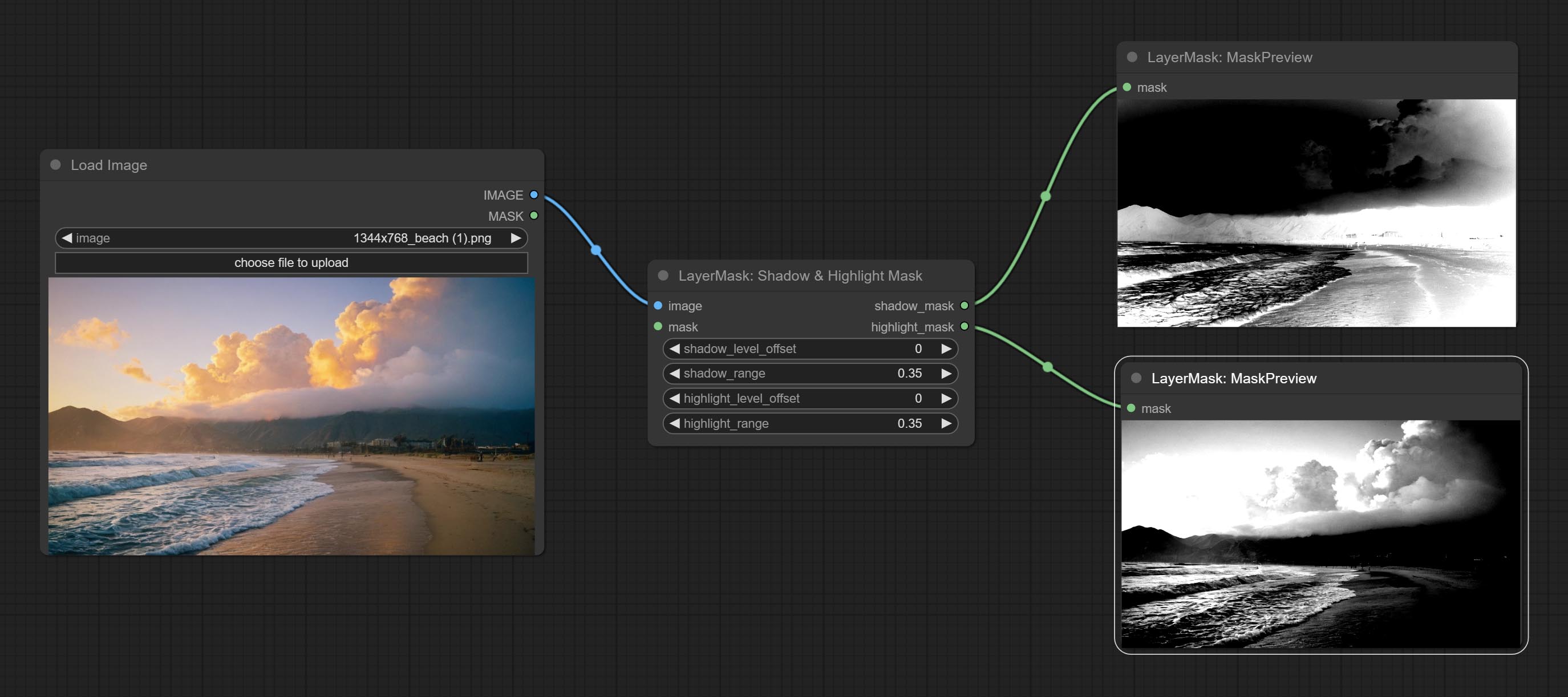

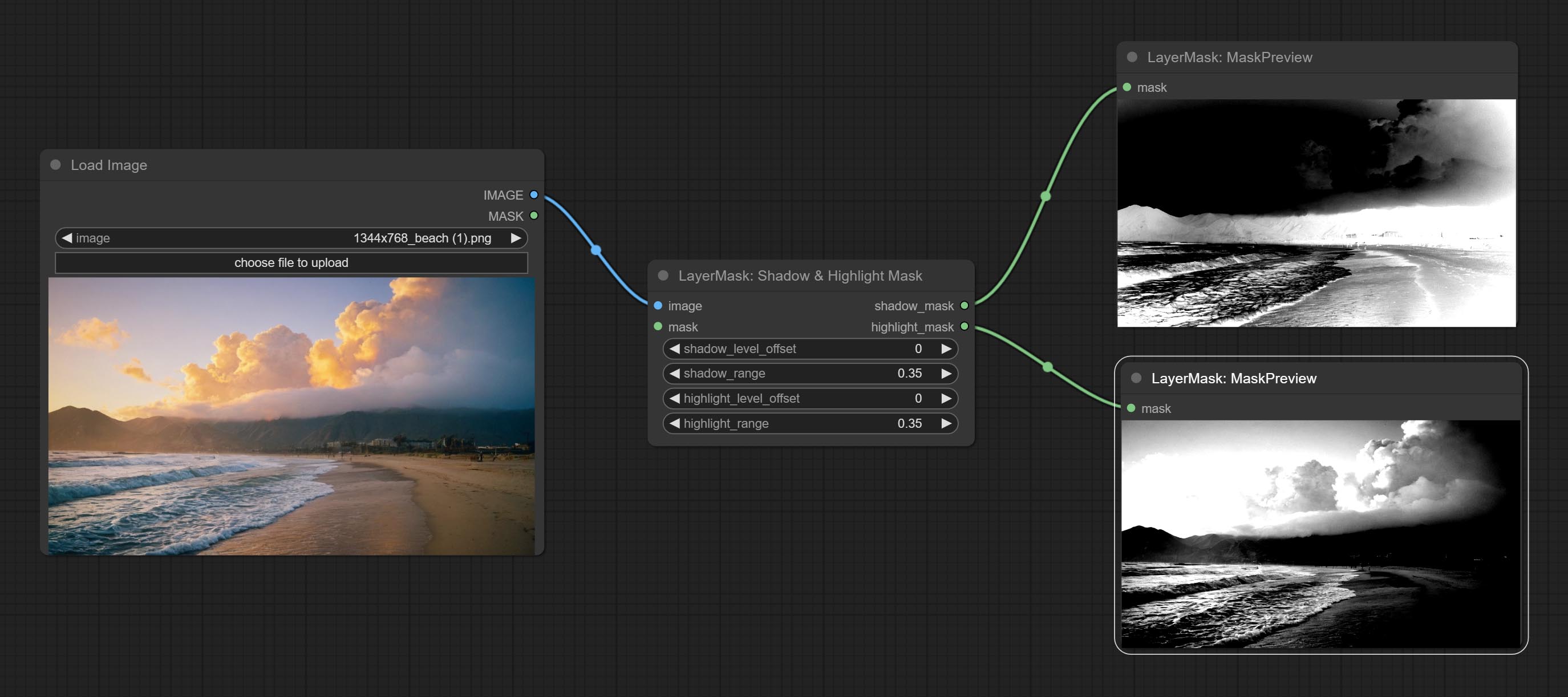

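Commit the Color of Shadow & Highlight node, which can adjust the colors of the dark and bright parts separately. Commit the Shadow & Highlight Mask node, which can output masks for the dark and bright areas.

Commit the CropByMaskV2 node, which supports crop_box input on top of the original node, making it convenient to cut layers of the same size.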

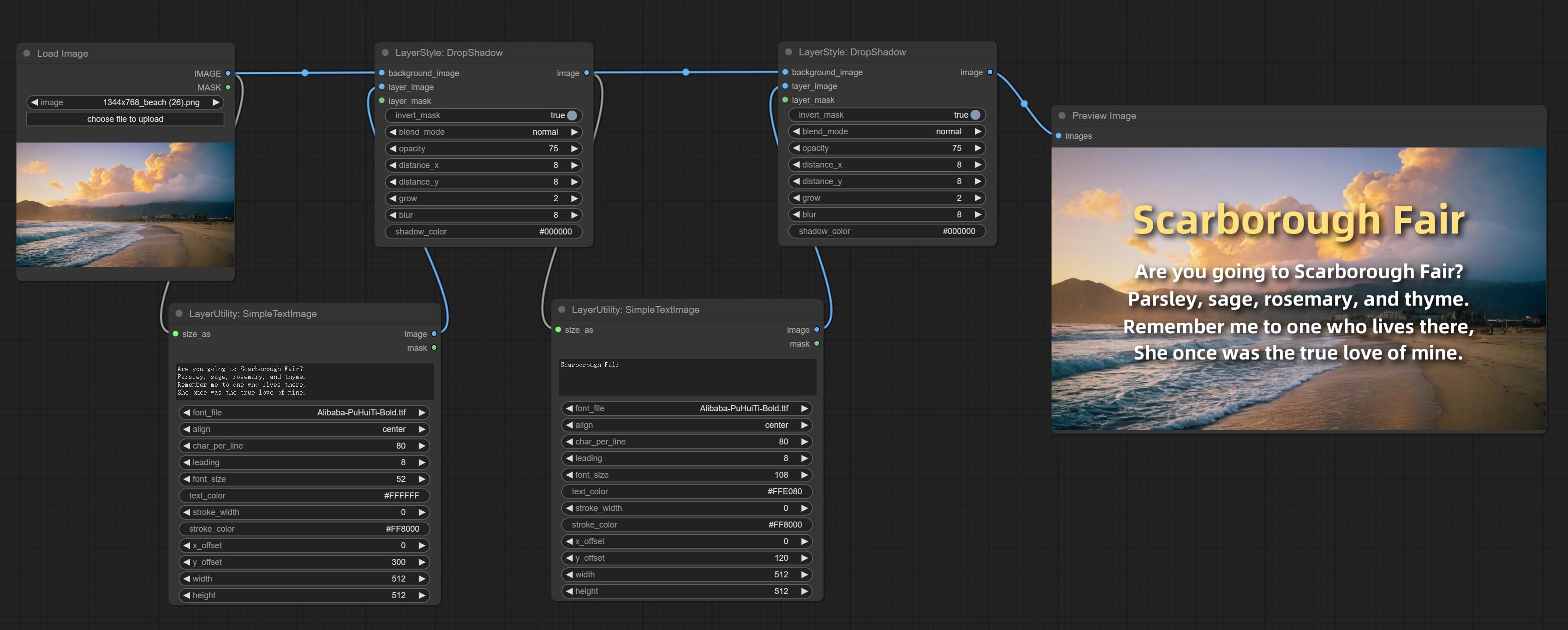

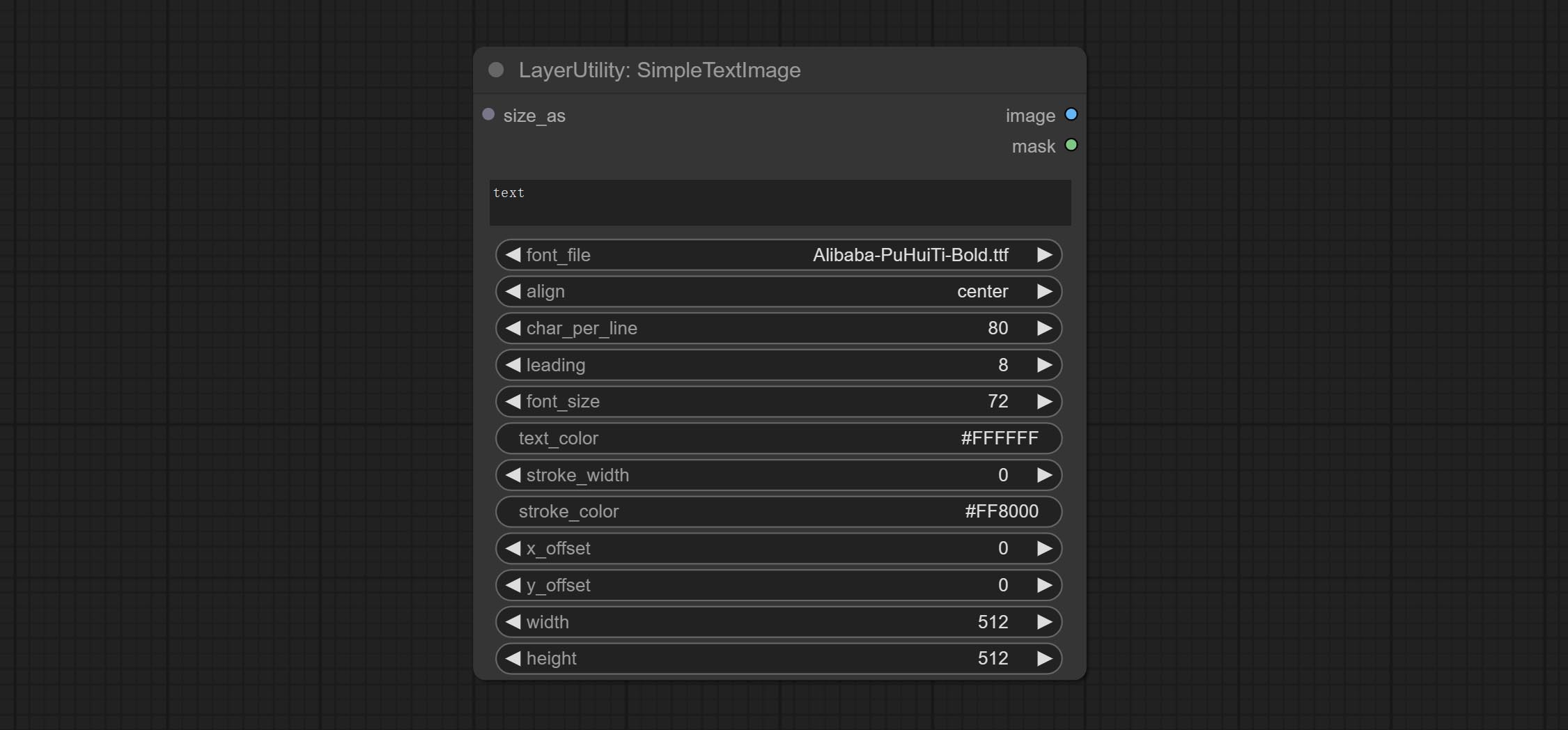

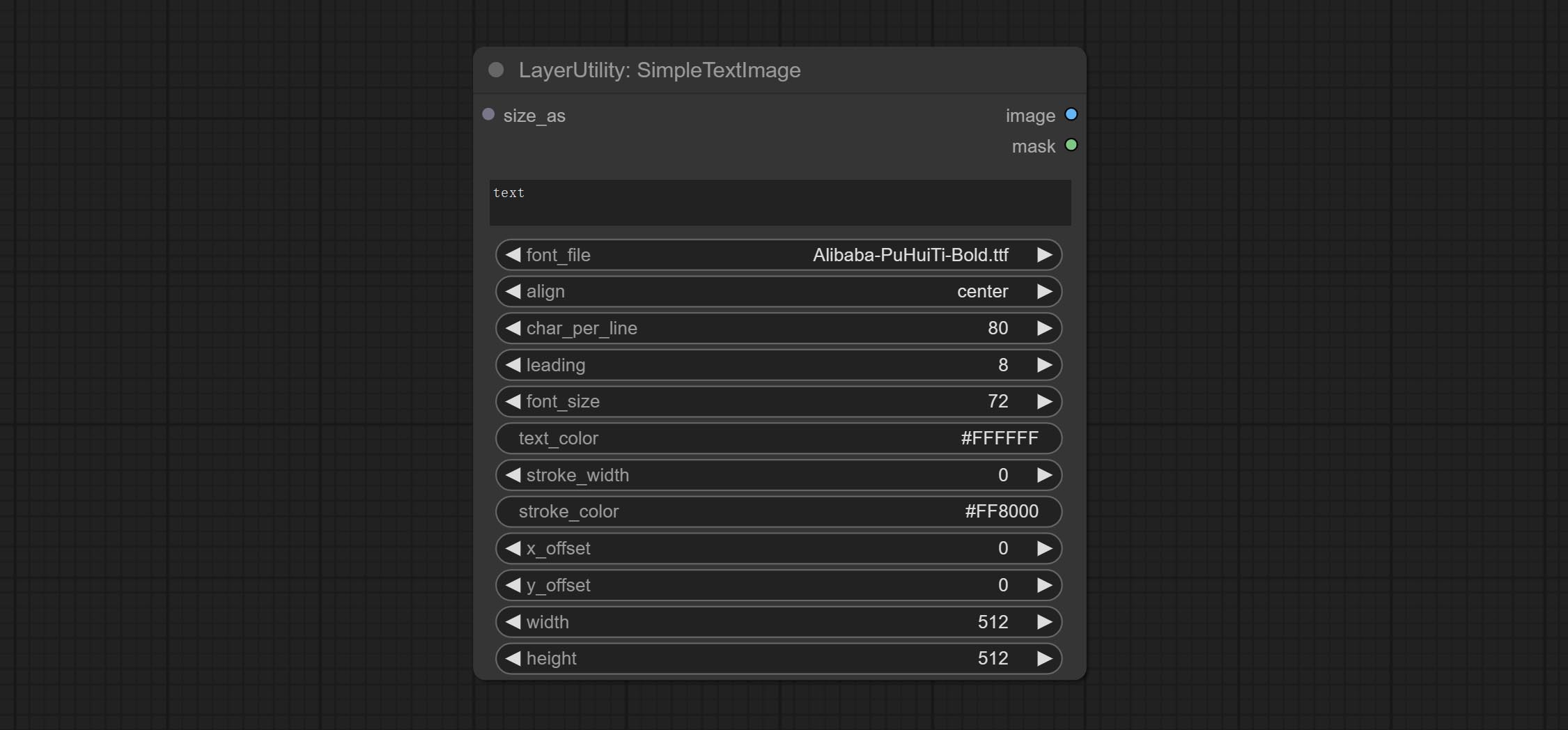

Commit the SimpleTextImage node, which generates simple typeset images and masks from text. This node references some of the functionality and code of ZHO-ZHO-ZHO/ComfyUI-Text_Image-Composite.

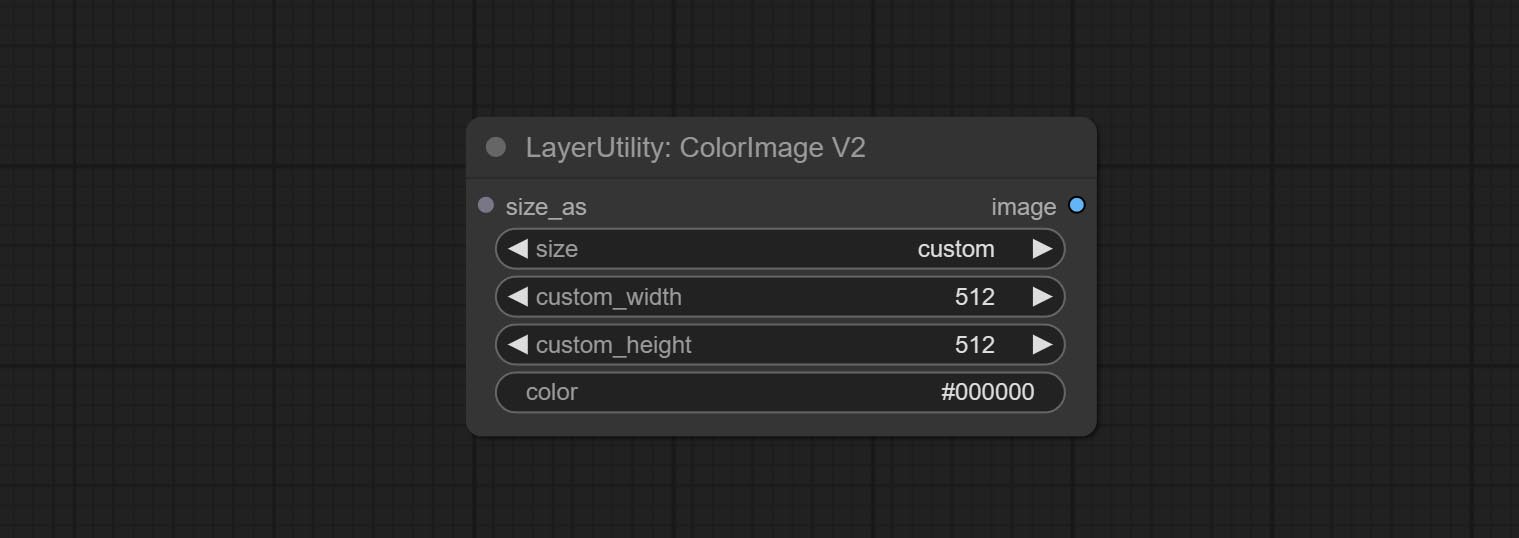

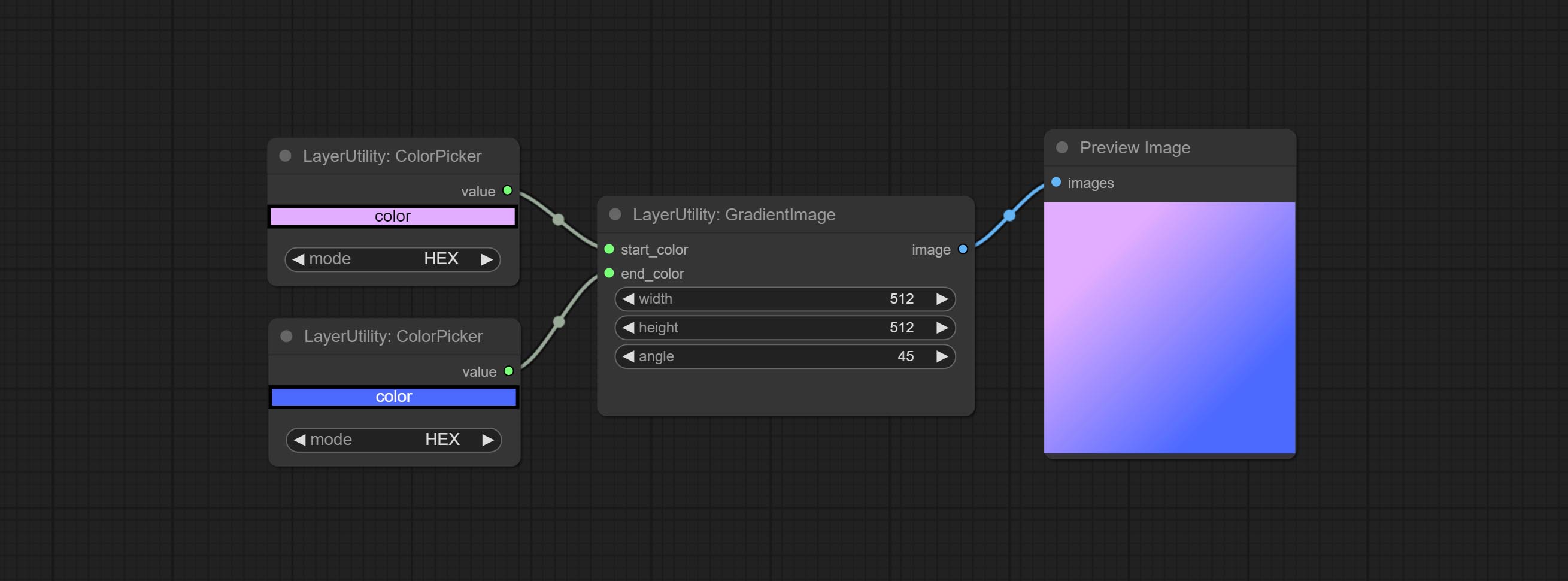

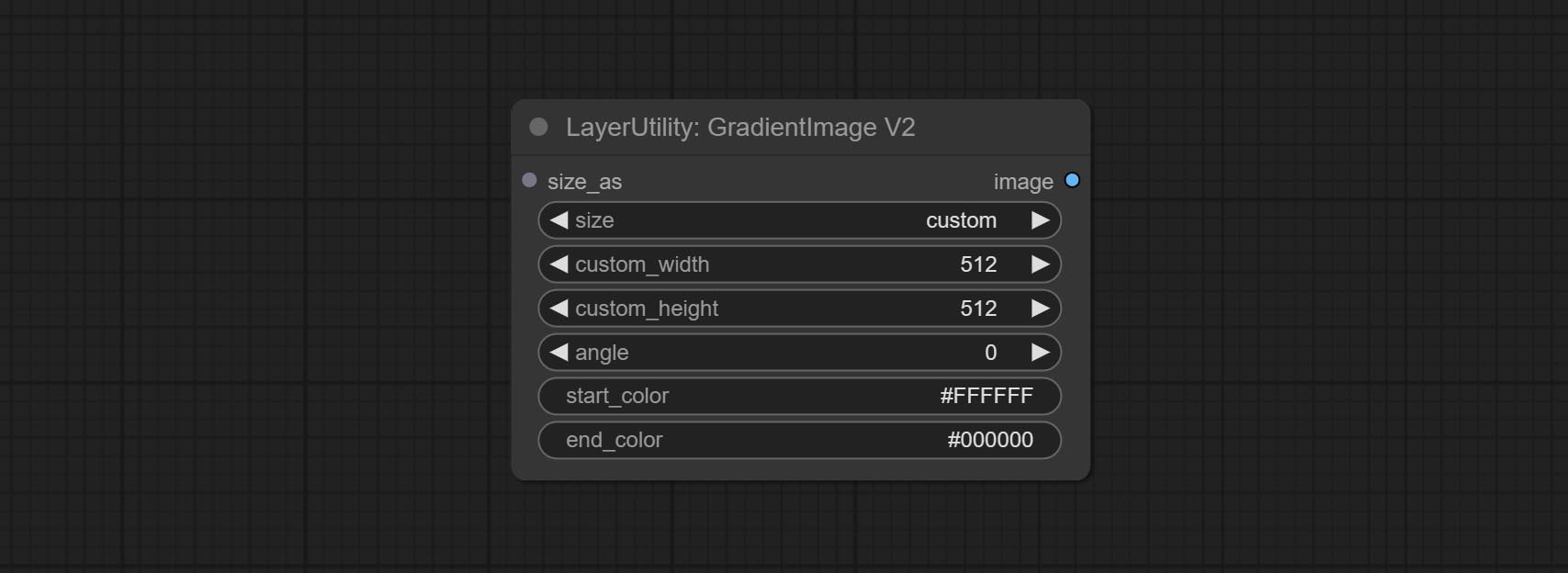

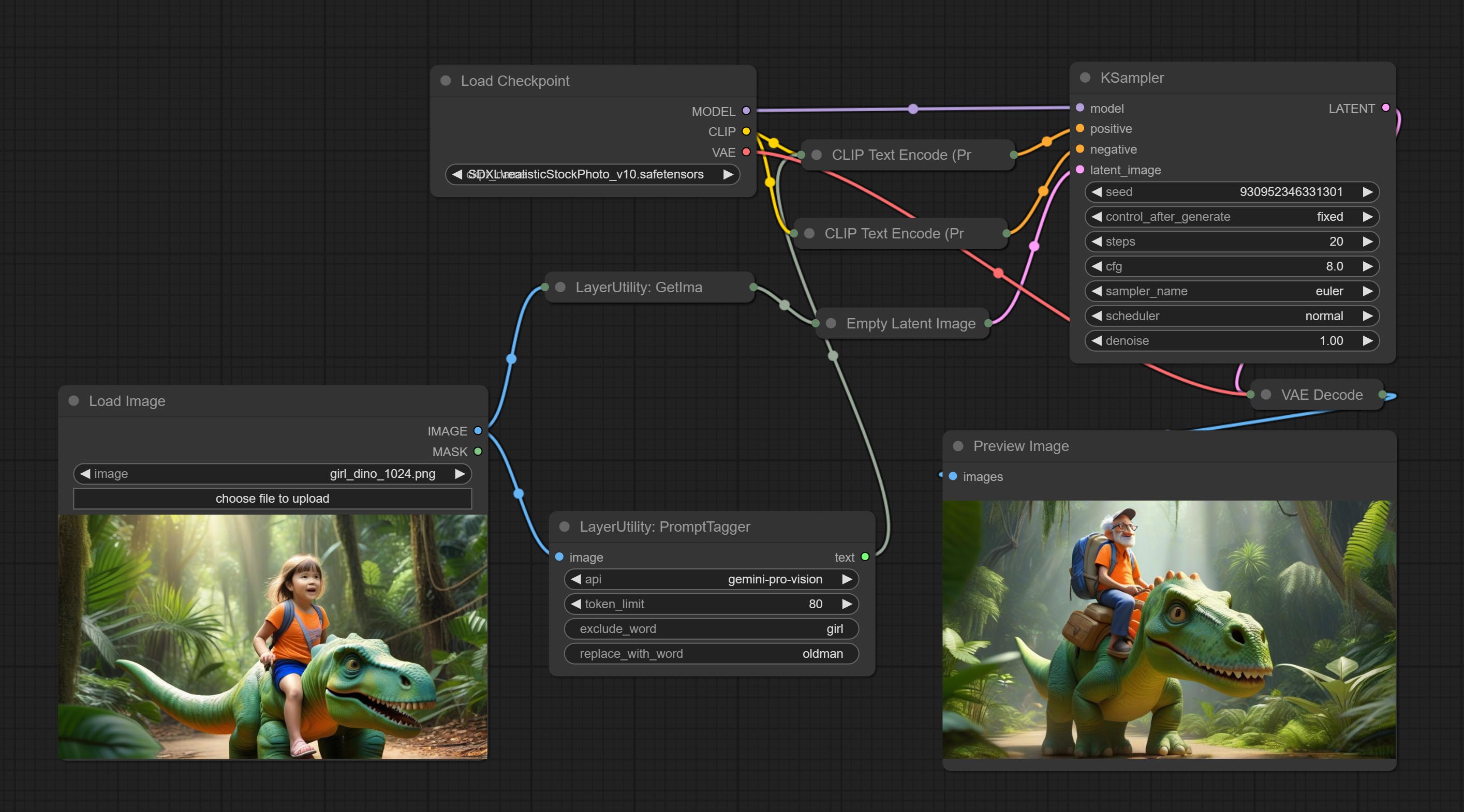

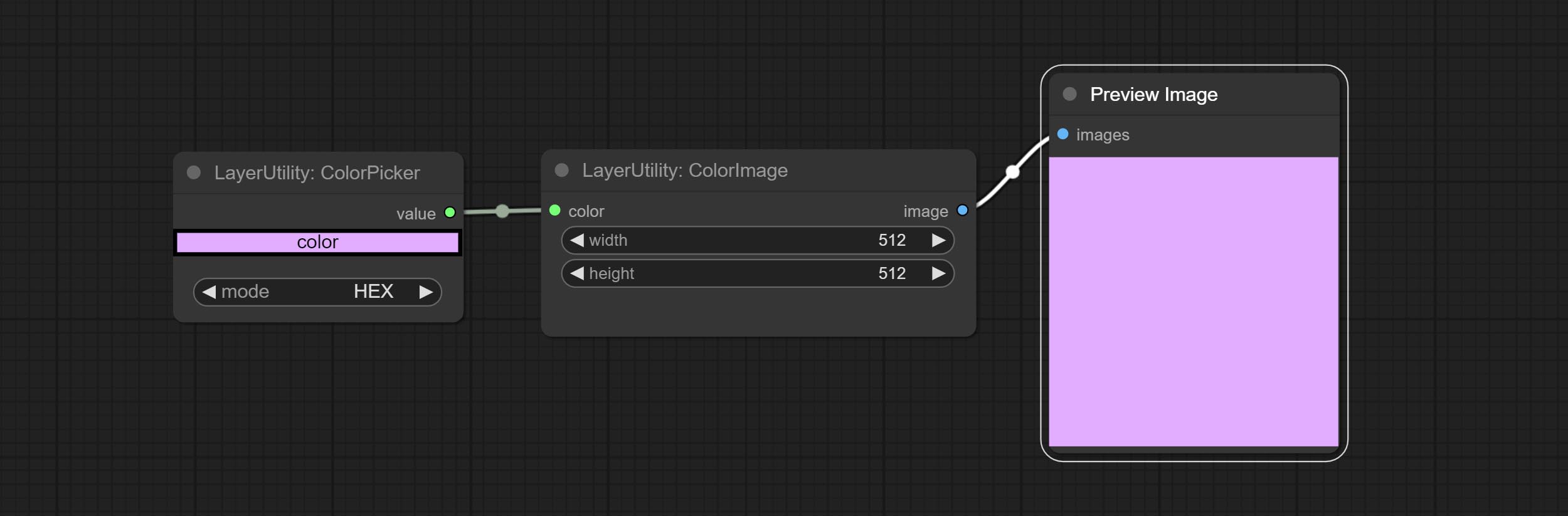

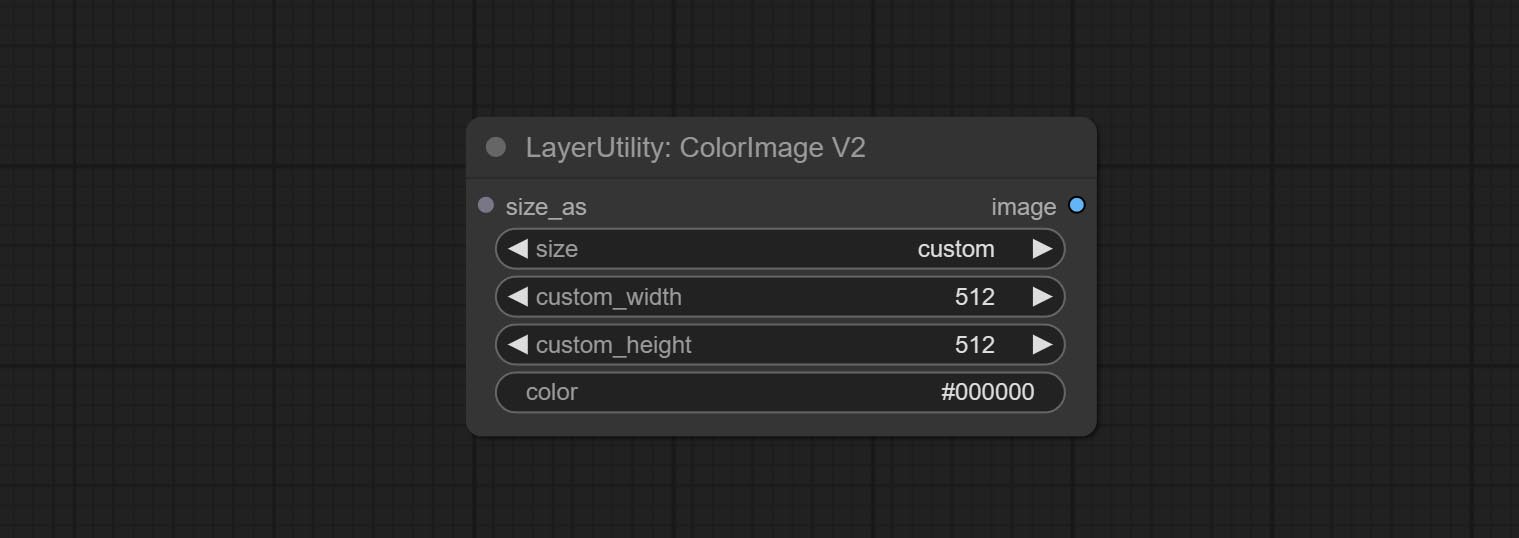

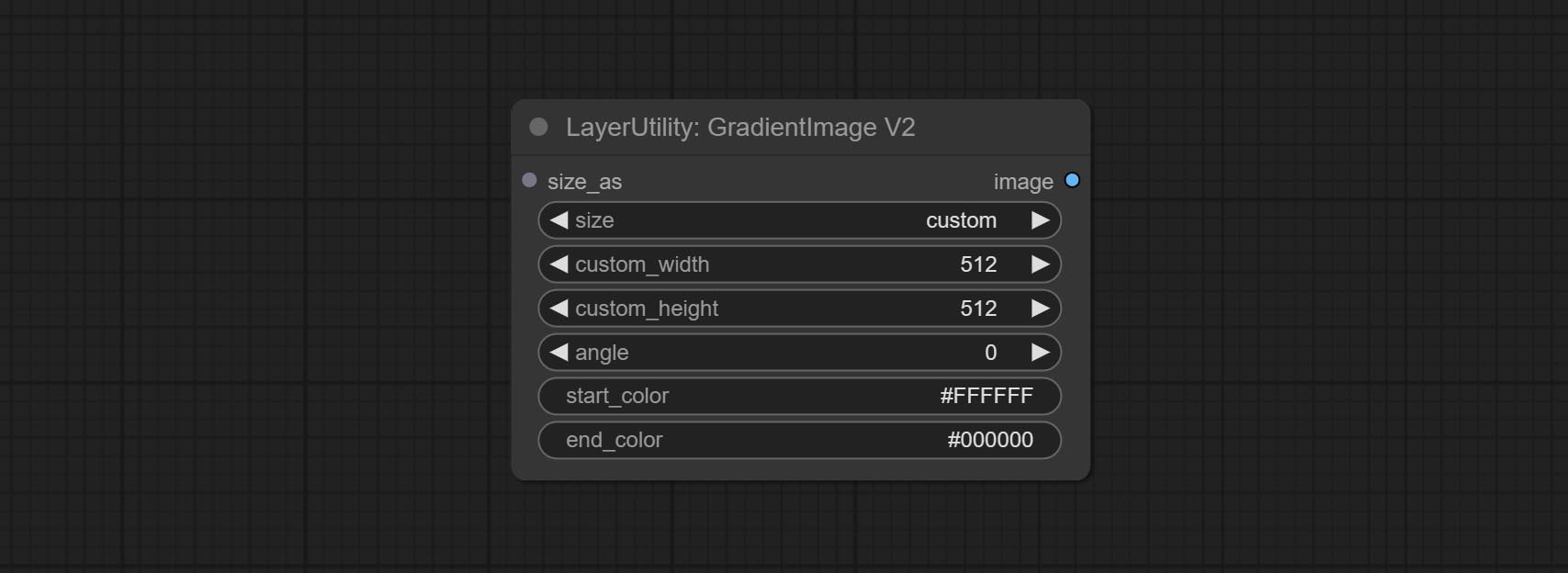

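Commit the PromptTagger node, which infers prompts based on images and can also replace keywords in the prompts (you need to apply for a Google Studio API key). Upgrade ColorImageV2 and GradientImageV2 to support user-customized preset sizes and size_as input.

Commit the LaMa node, which can erase objects from an image based on a mask. This node is a repackaging of IOPaint.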

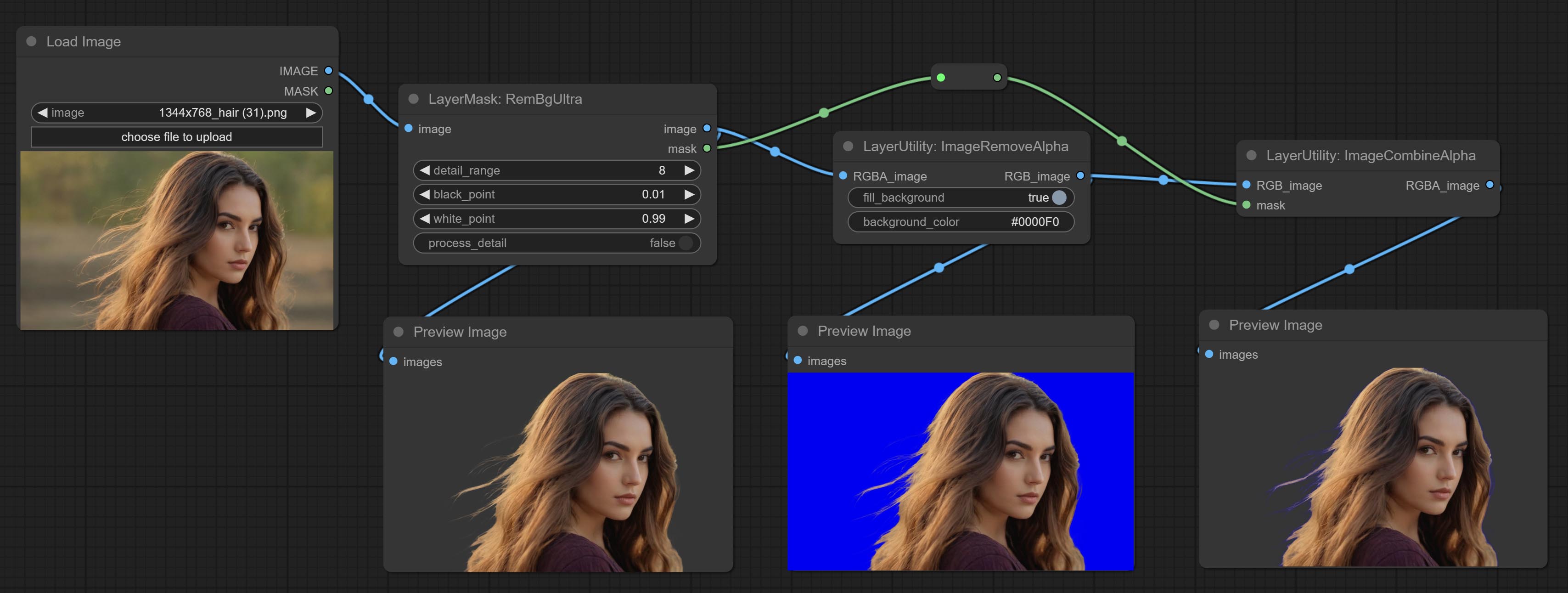

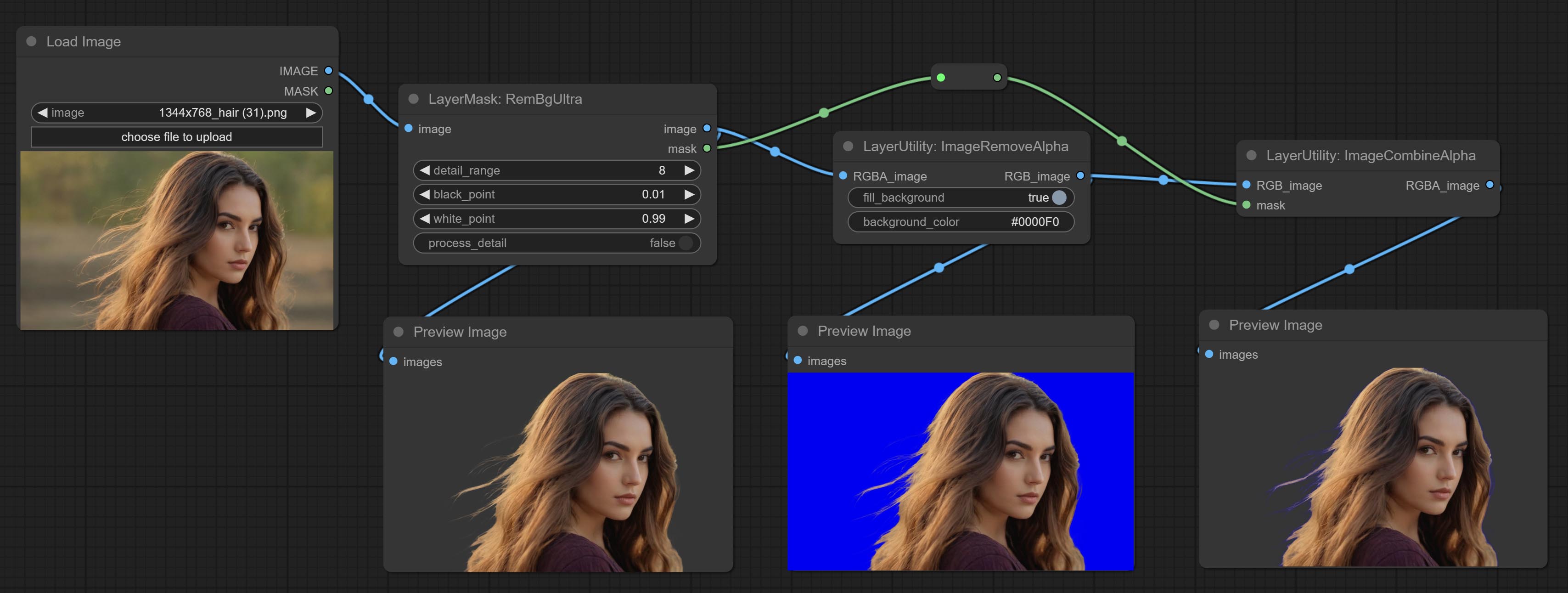

Commit the ImageRemoveAlpha and ImageCombineAlpha nodes, which can remove or merge the alpha channel of an image.

Commit the ImageScaleRestoreV2 and ImageScaleByAspectRatioV2 nodes, which support scaling images to a specified long or short edge size.

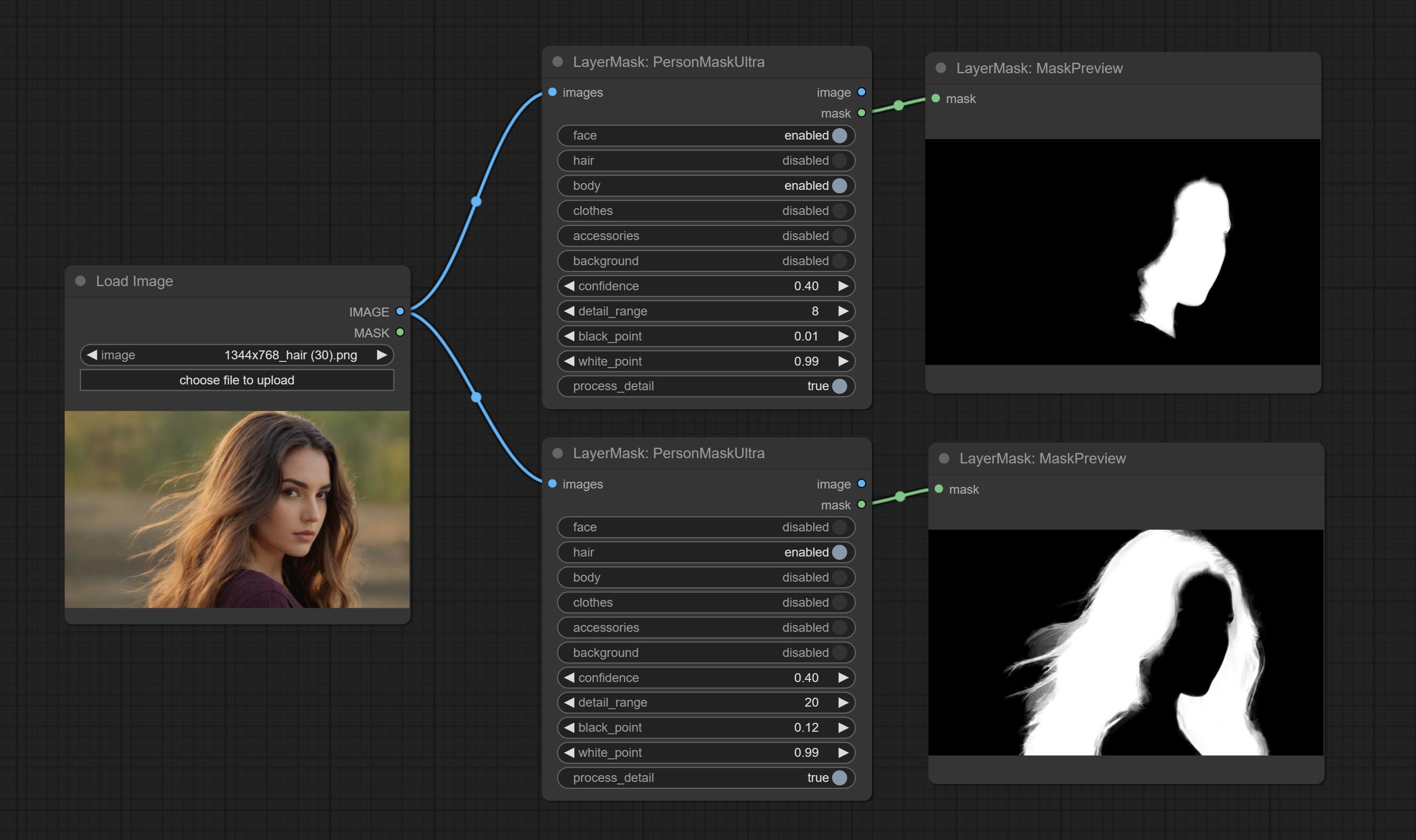

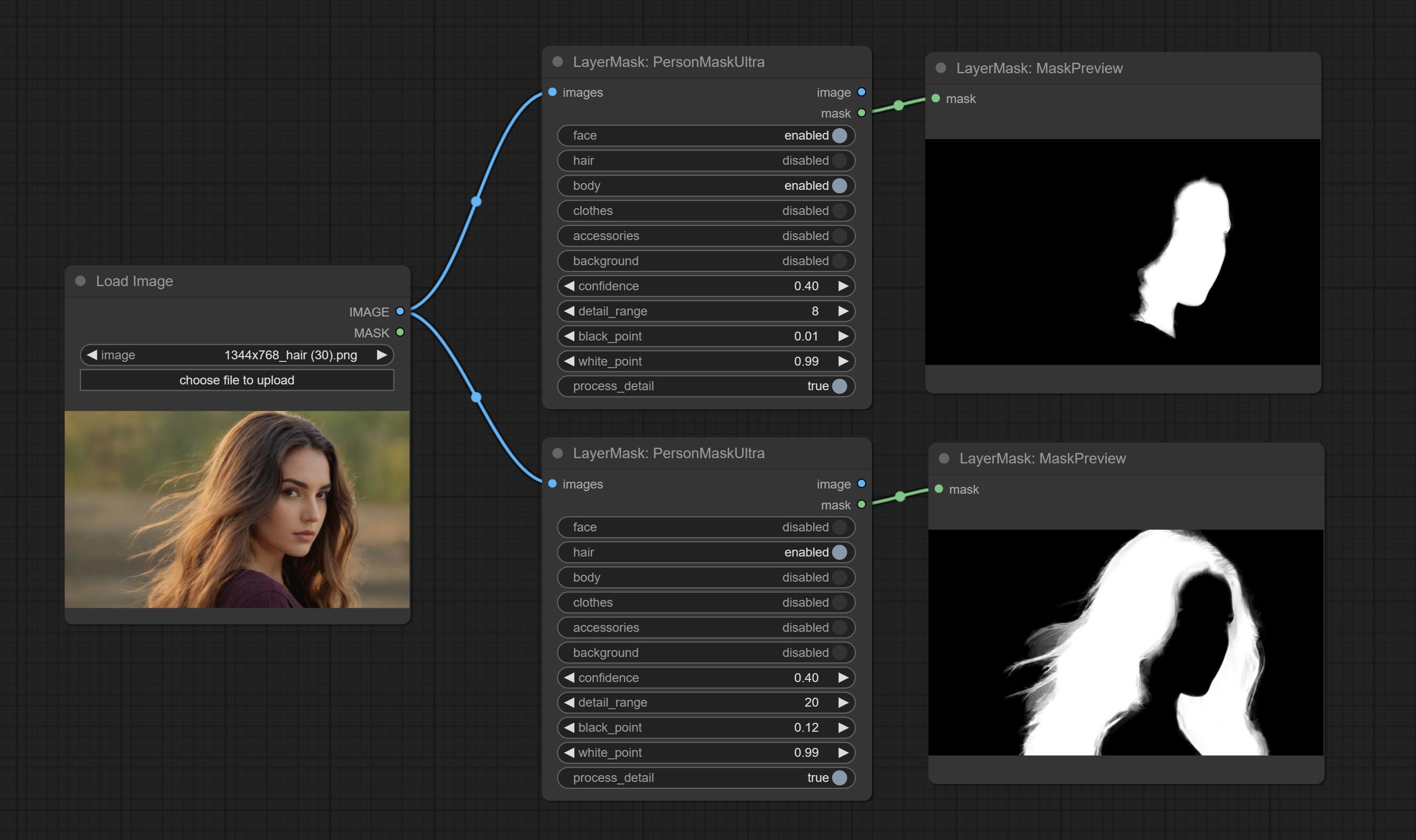

Commit the PersonMaskUltra node, which generates masks for the face, hair, body skin, clothing, or accessories of a portrait. The model code for this node comes from a-person-mask-generator.

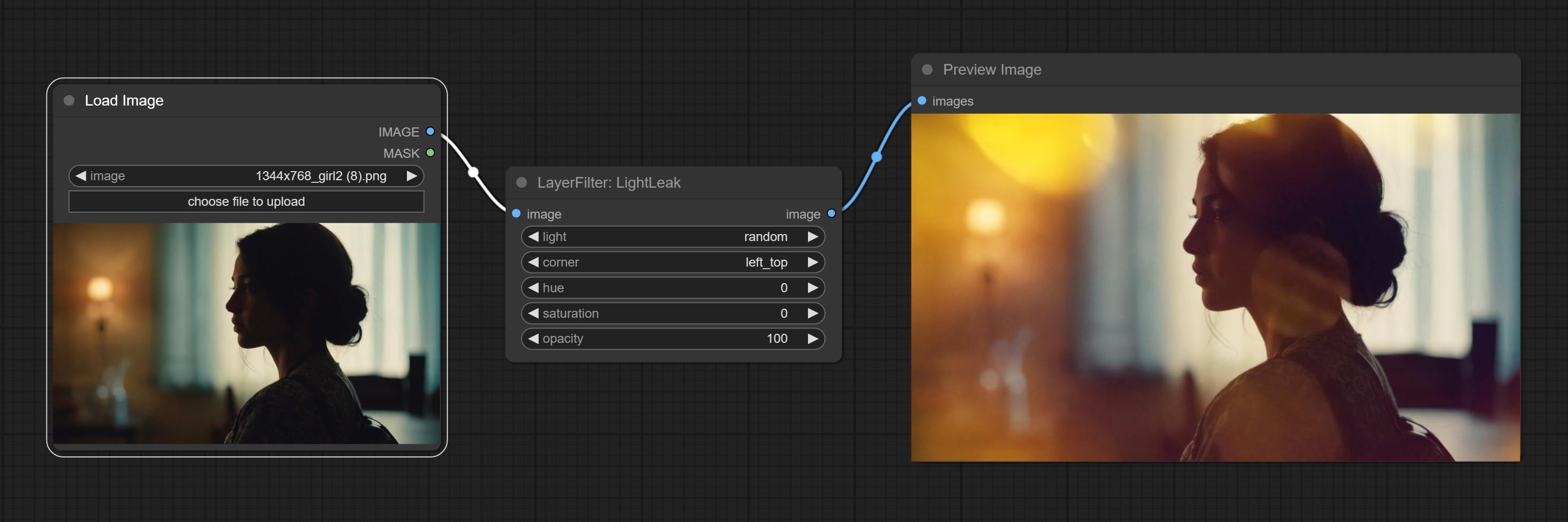

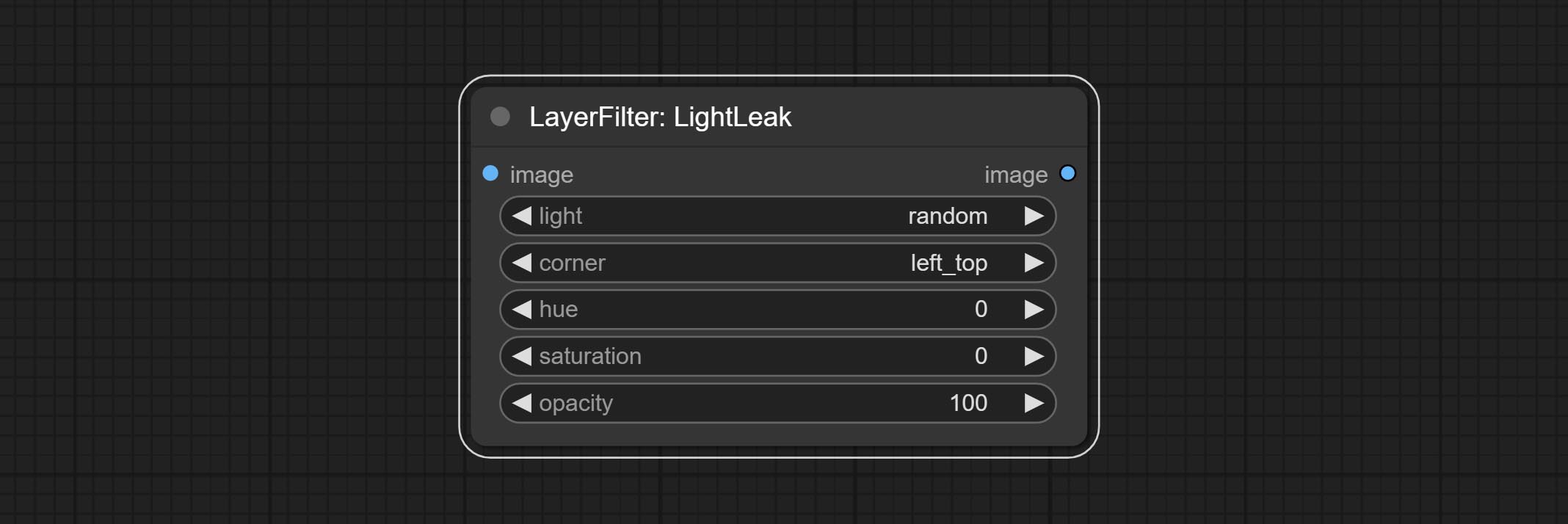

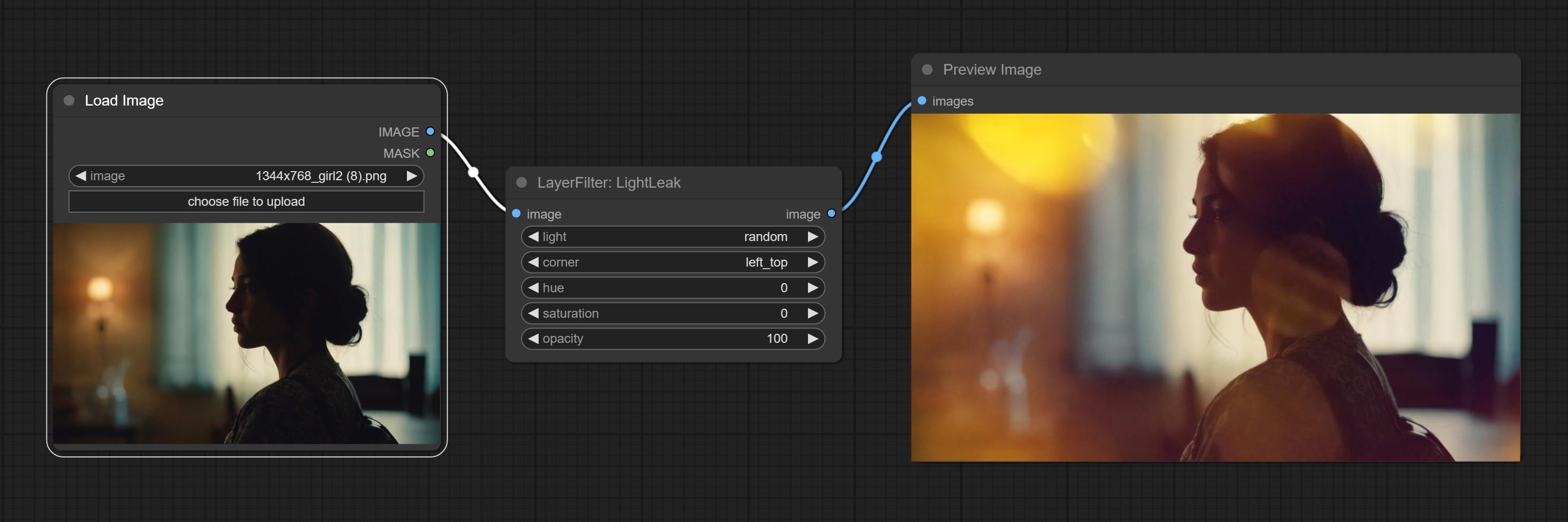

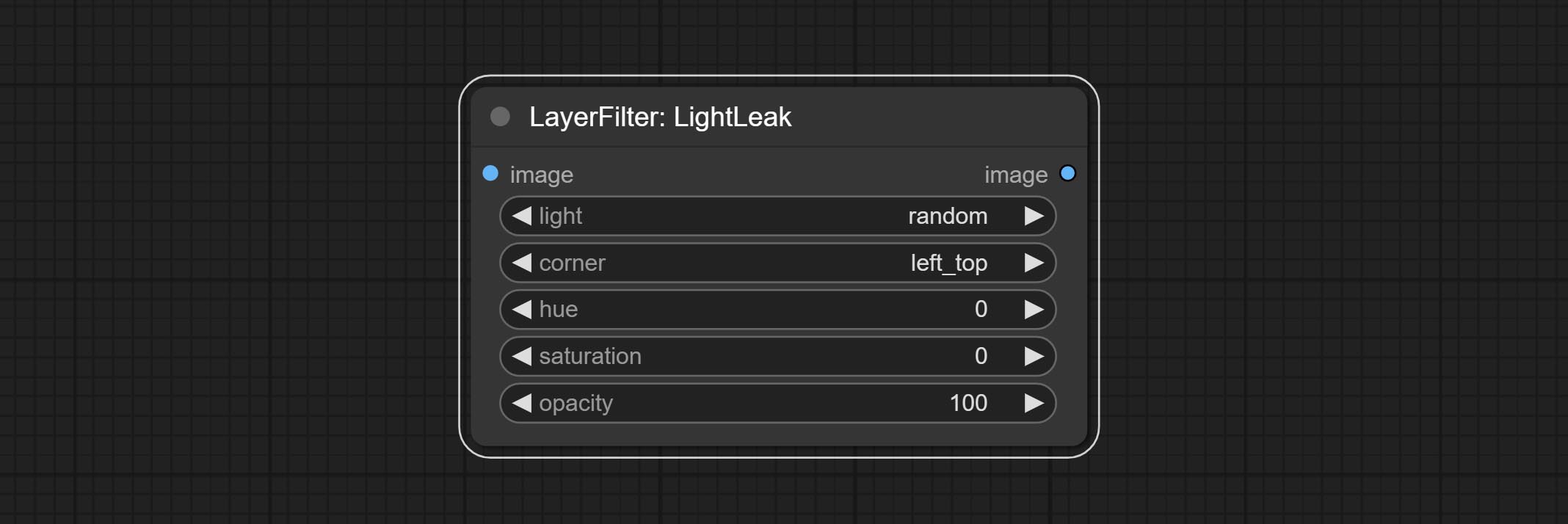

Commit the LightLeak node, a filter that simulates the light leak effect of film.

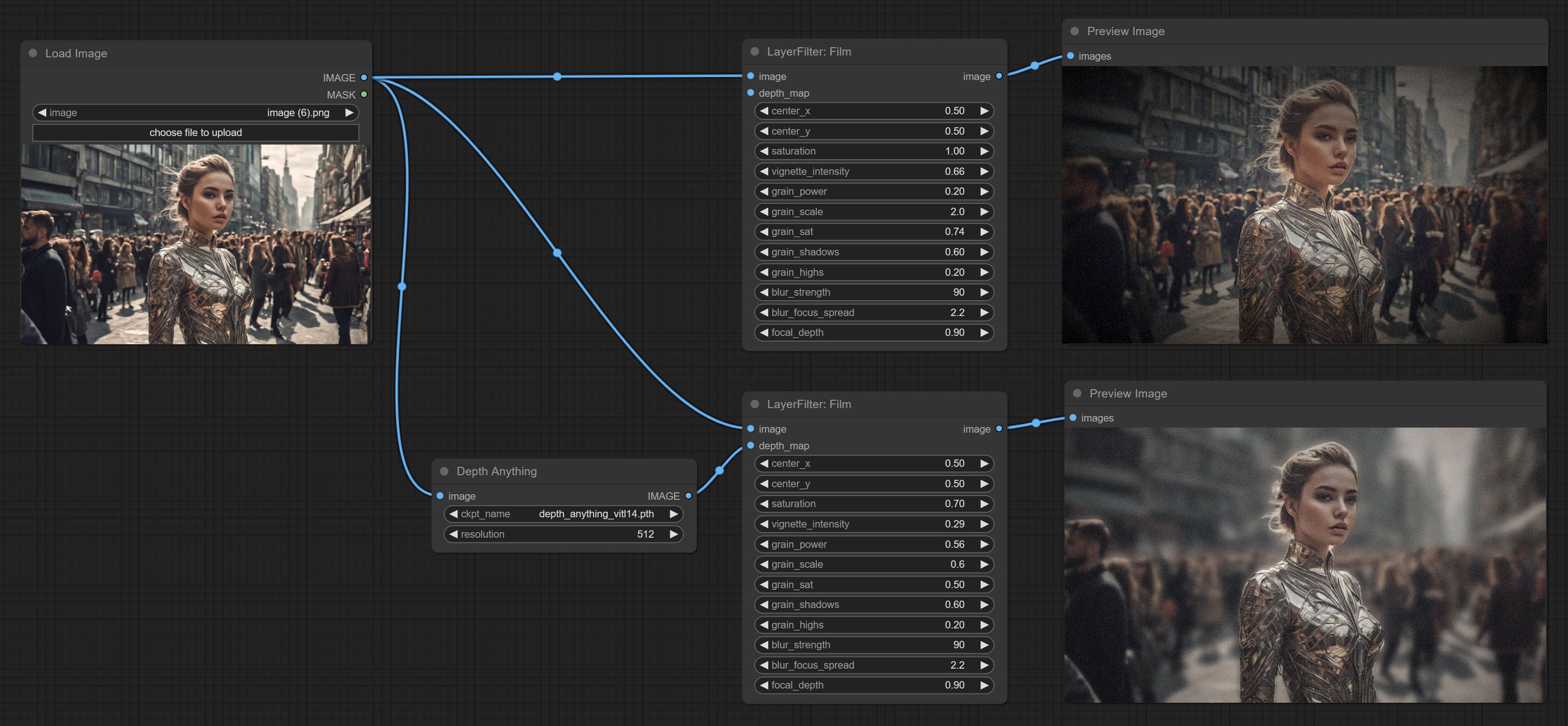

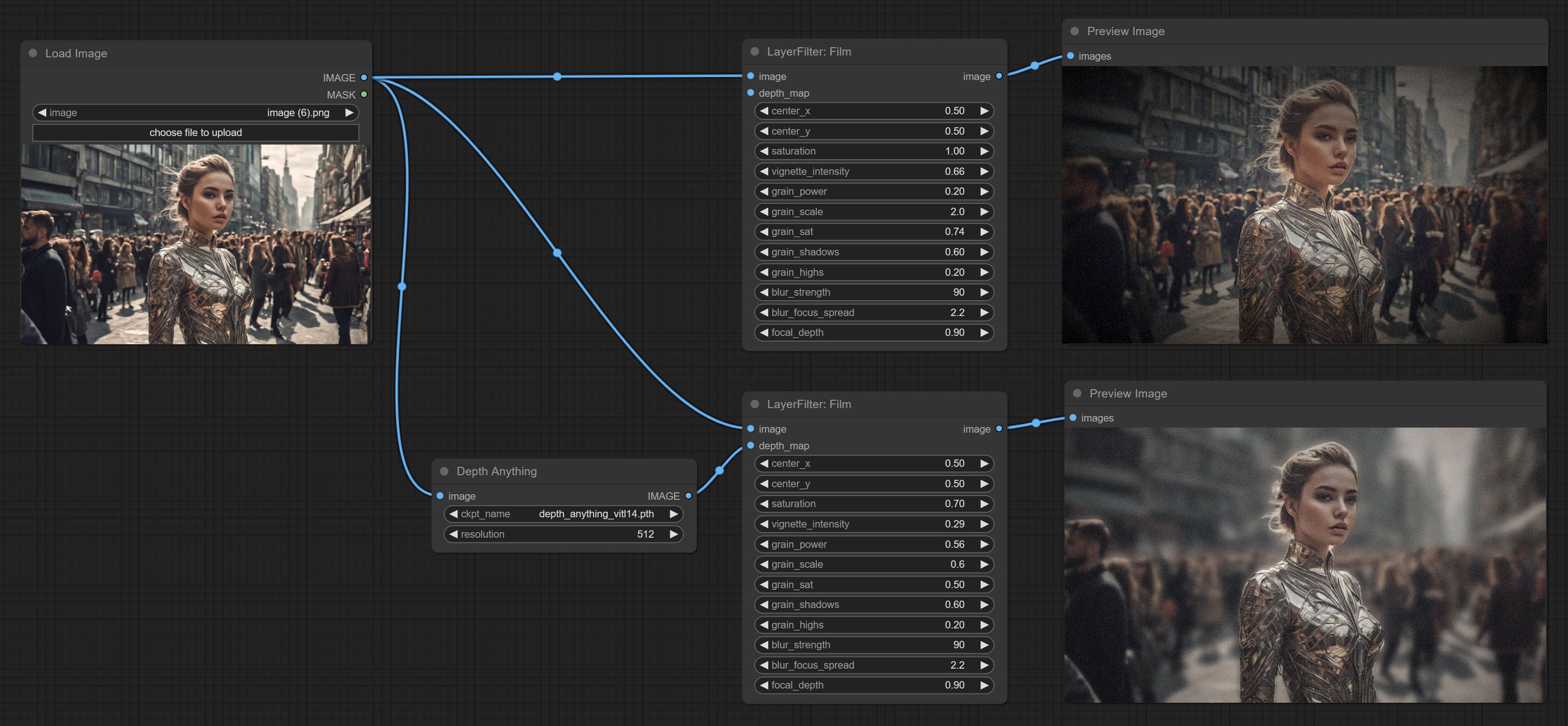

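Commit the Film node, a filter that simulates the grain, dark edges, and blurred edges of film, and supports a depth map input to simulate defocus. It is a reorganization and encapsulation of digitaljohn/comfyui-propost.

Commit the ImageAutoCrop node, designed to generate image material for training models.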

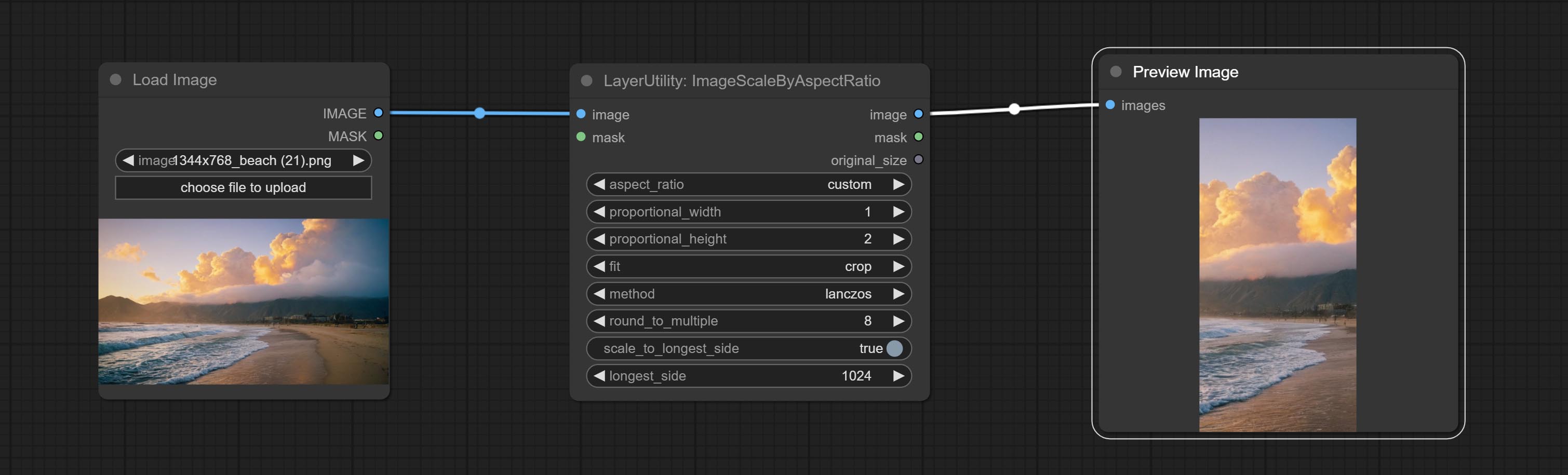

Commit the ImageScaleByAspectRatio node, which can scale an image or mask according to the frame ratio.

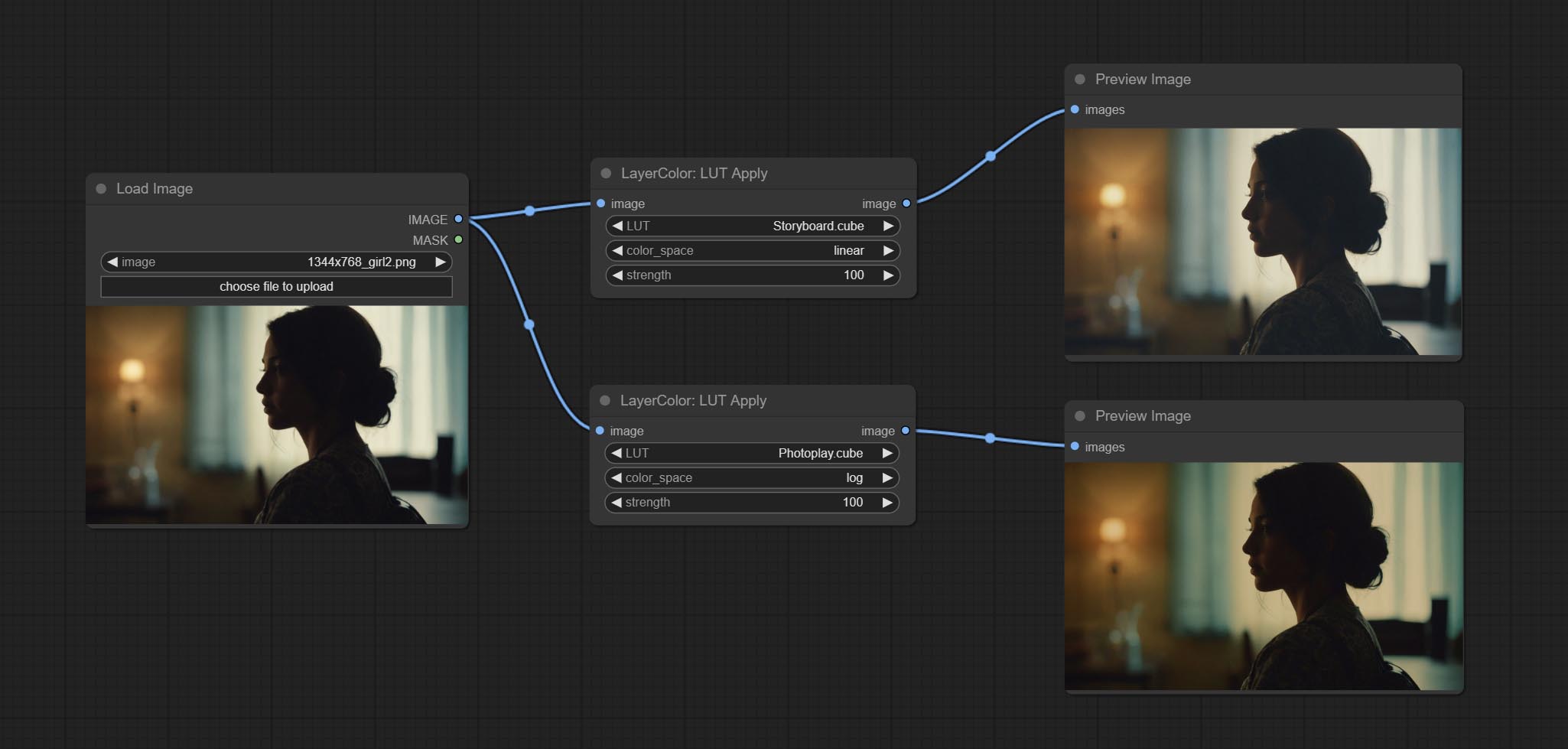

Fix the color gradation bug in LUT Apply node rendering; this node now supports the log color space. *Please load a dedicated log LUT file for log color space images.

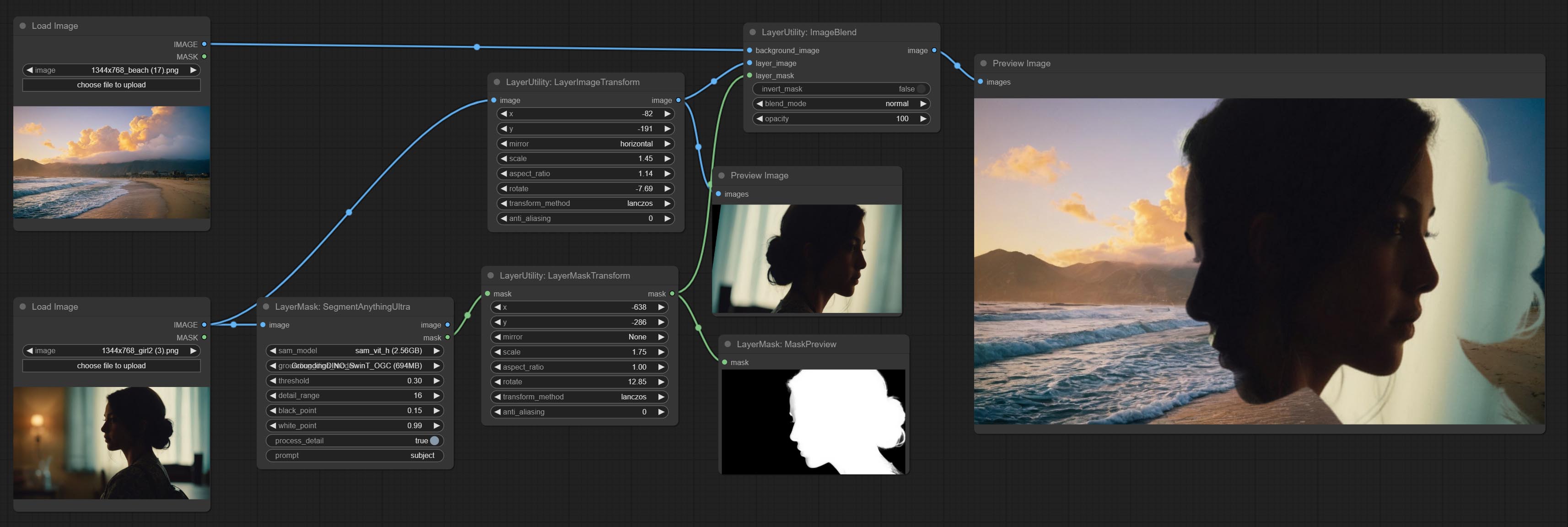

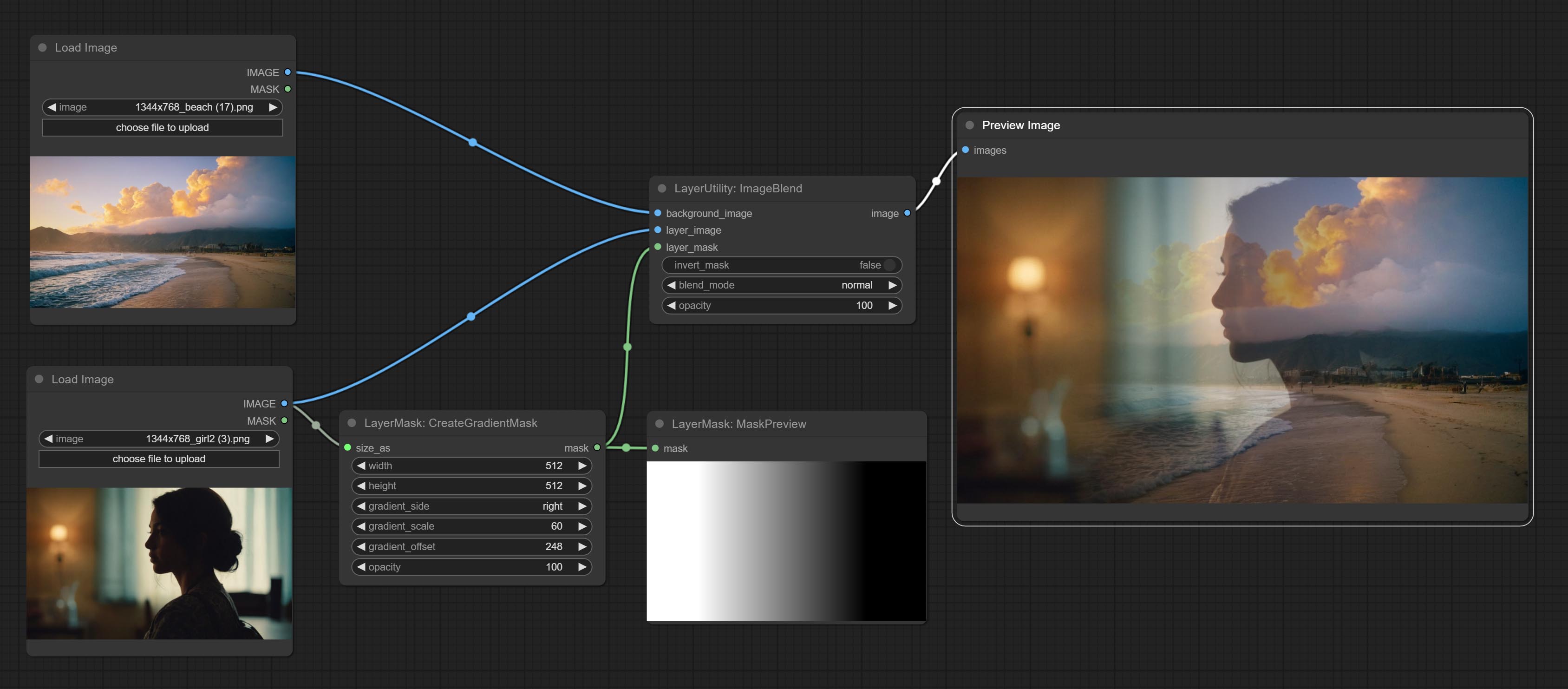

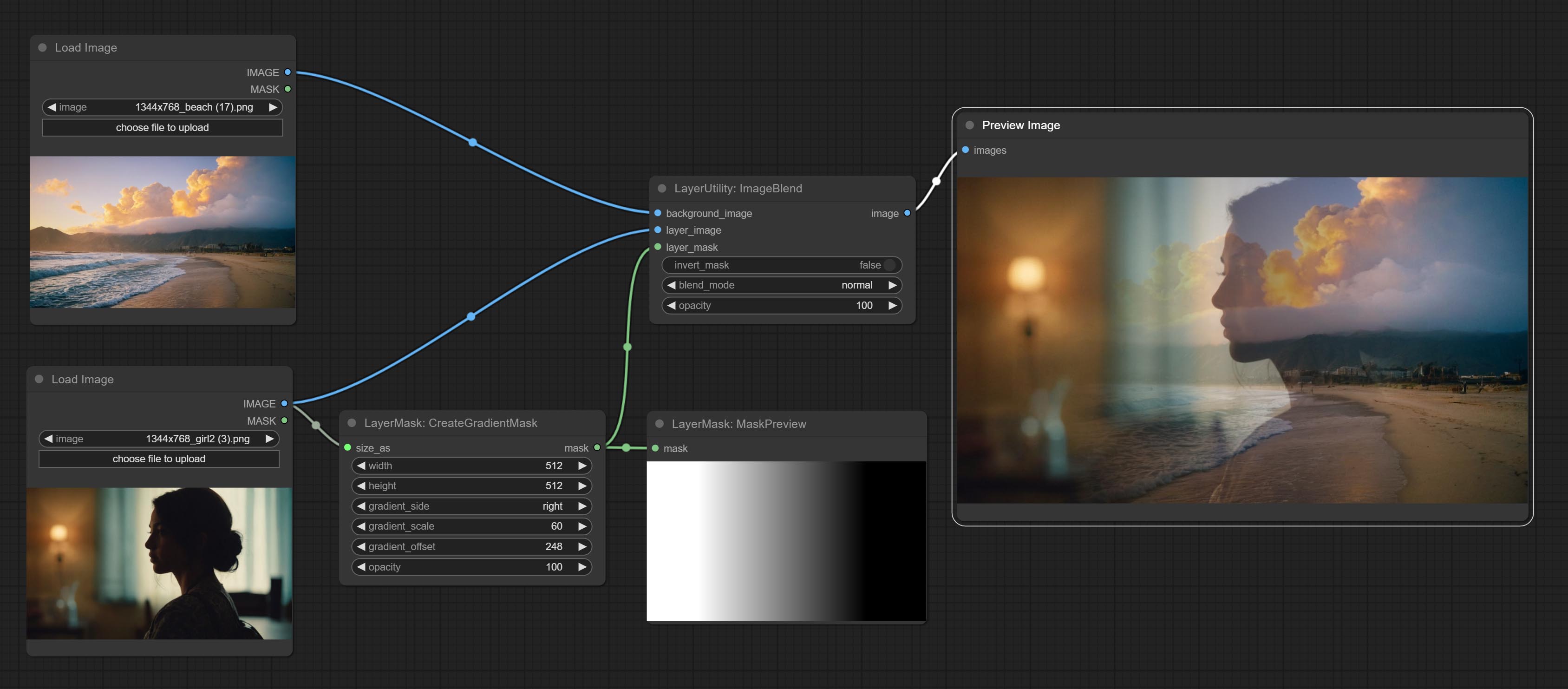

Commit the CreateGradientMask node. Commit the LayerImageTransform and LayerMaskTransform nodes.

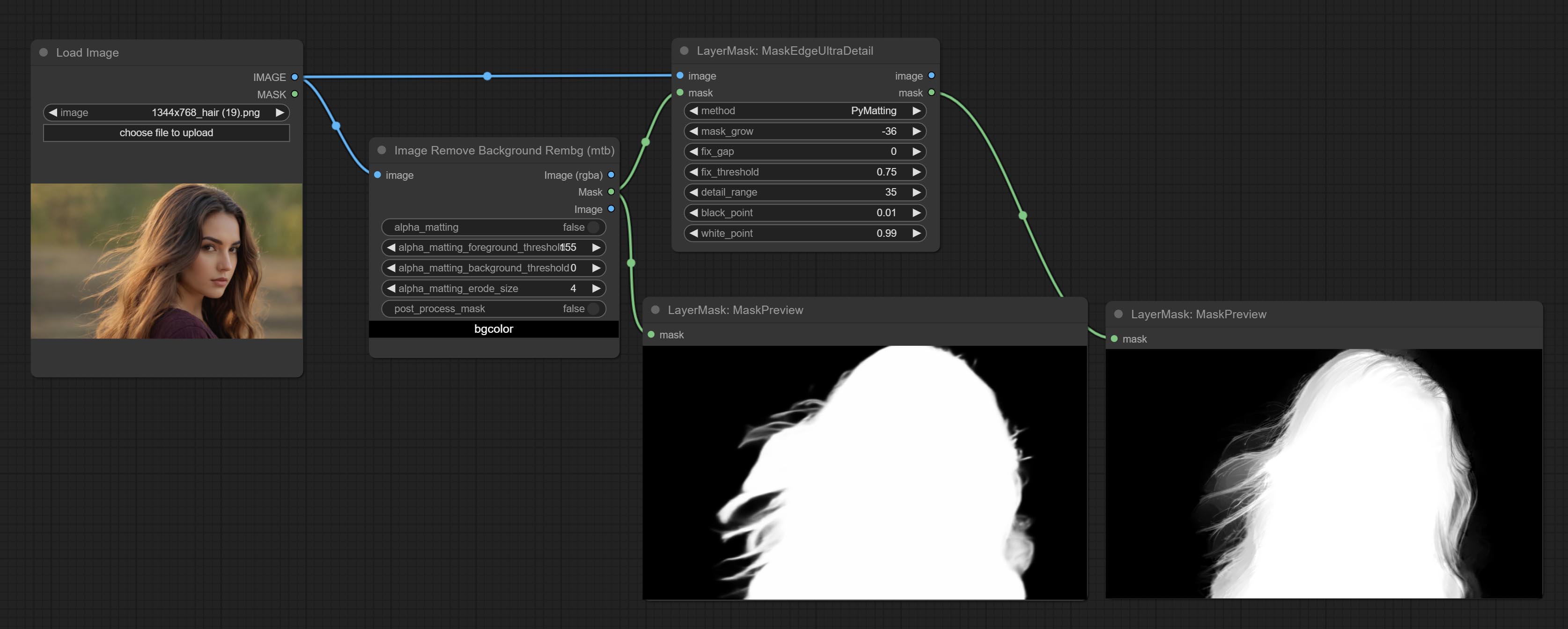

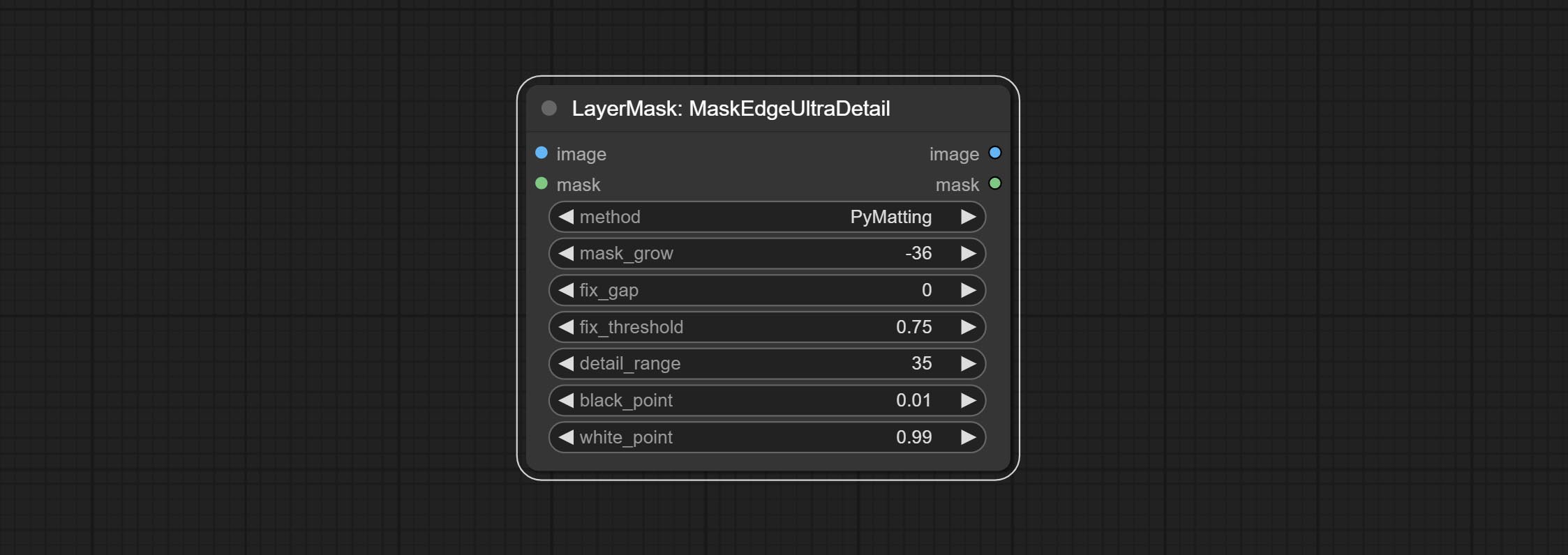

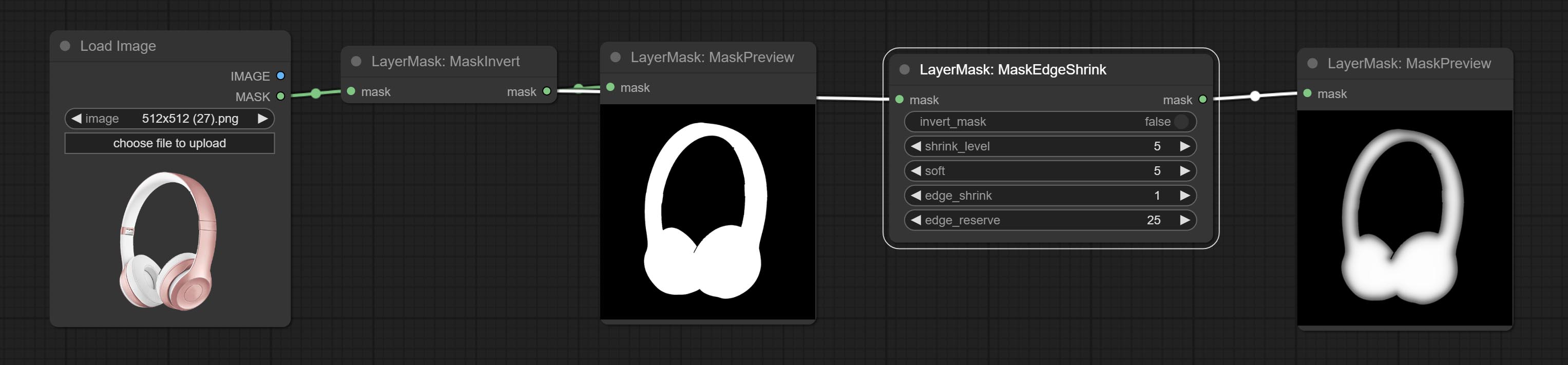

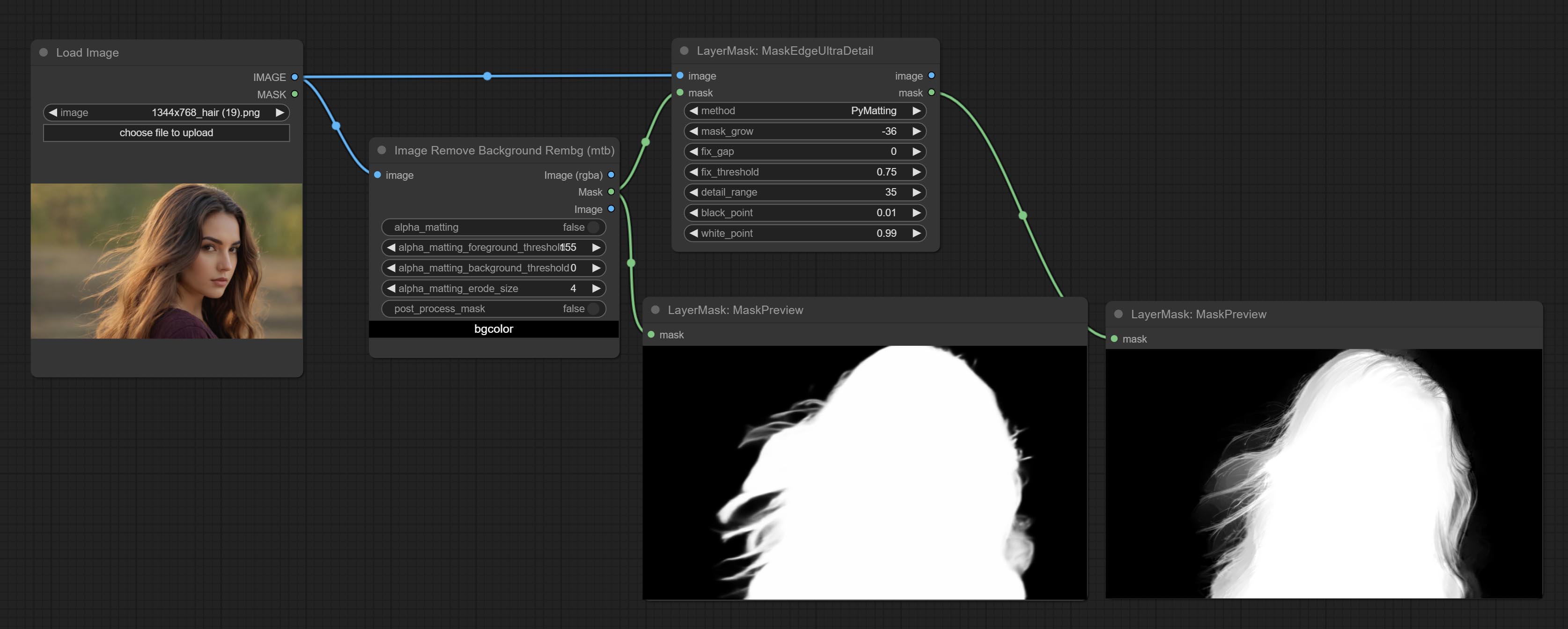

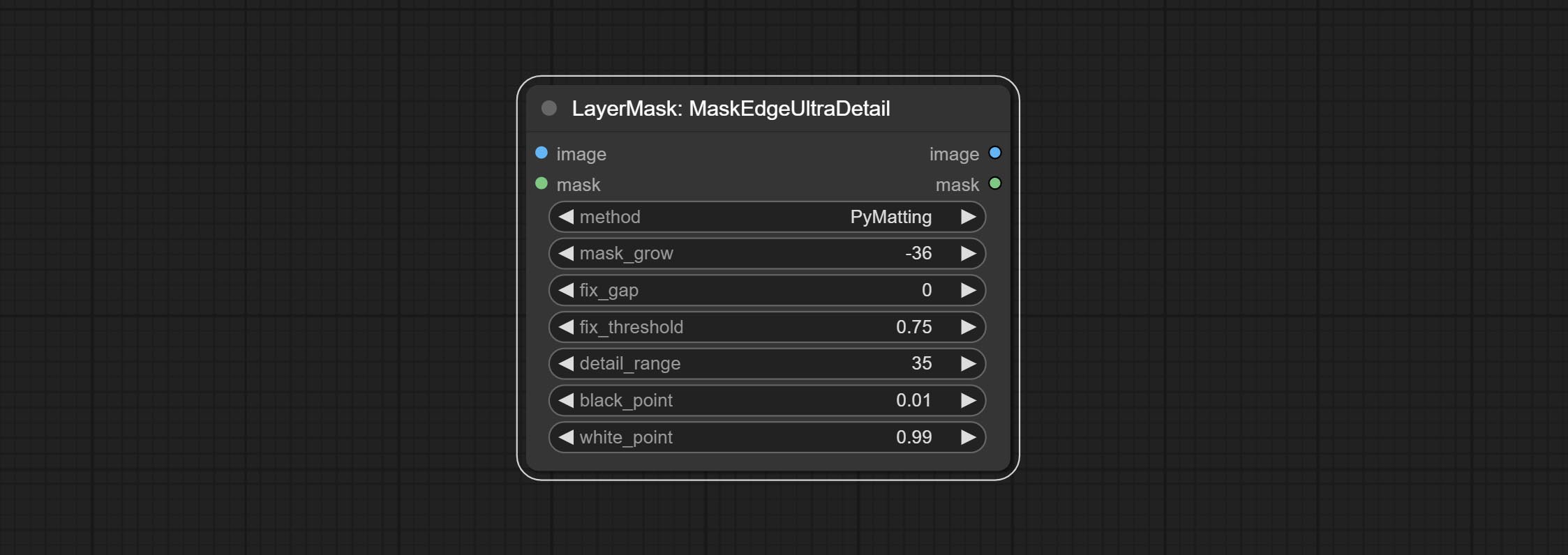

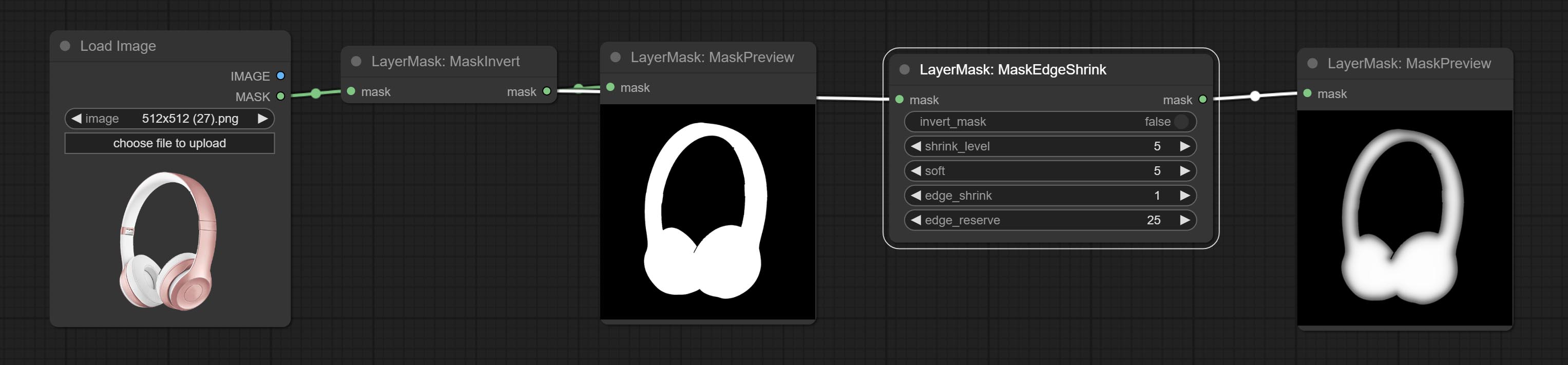

Commit the MaskEdgeUltraDetail node, which processes rough masks into ultra-fine edges.

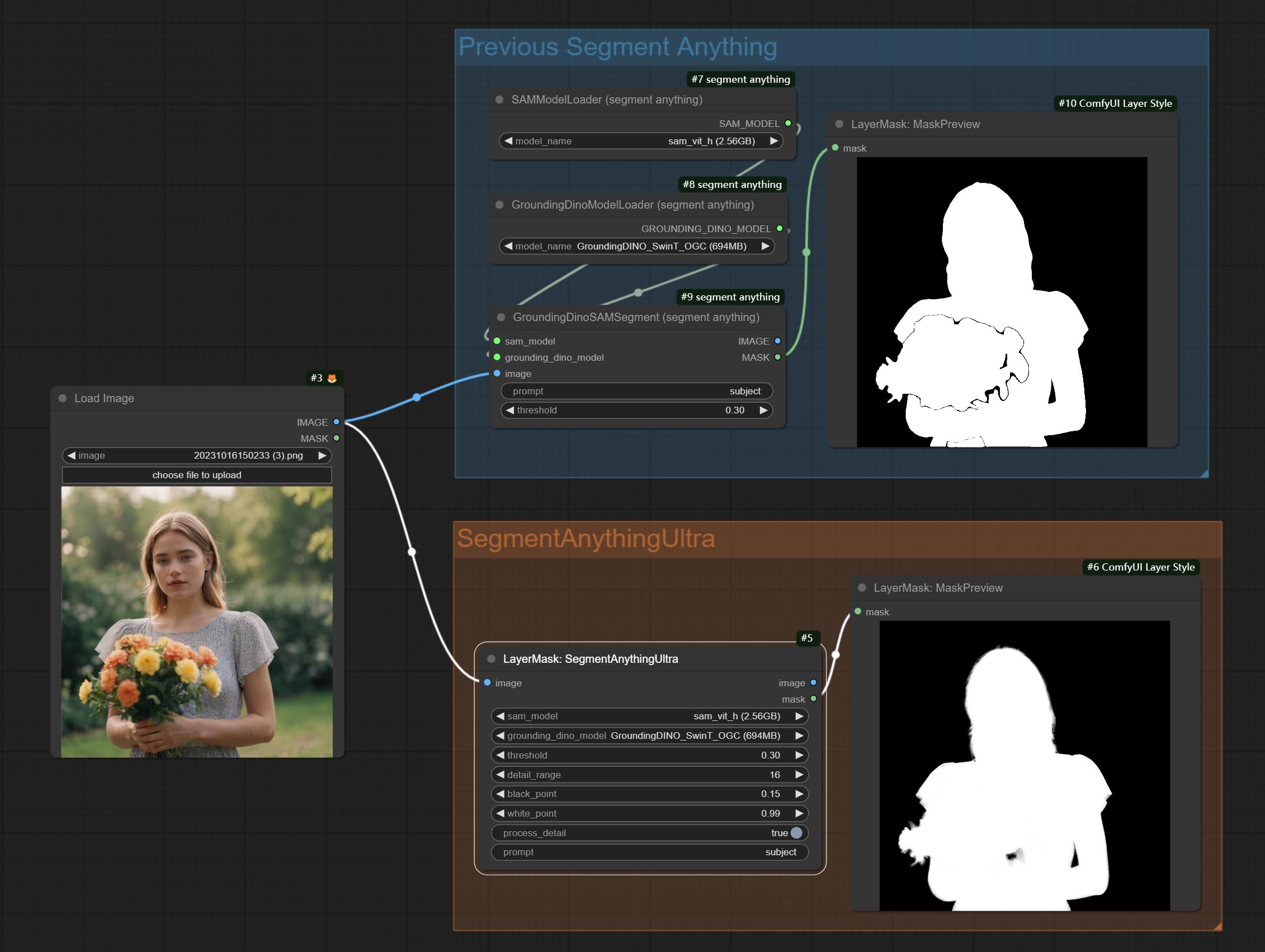

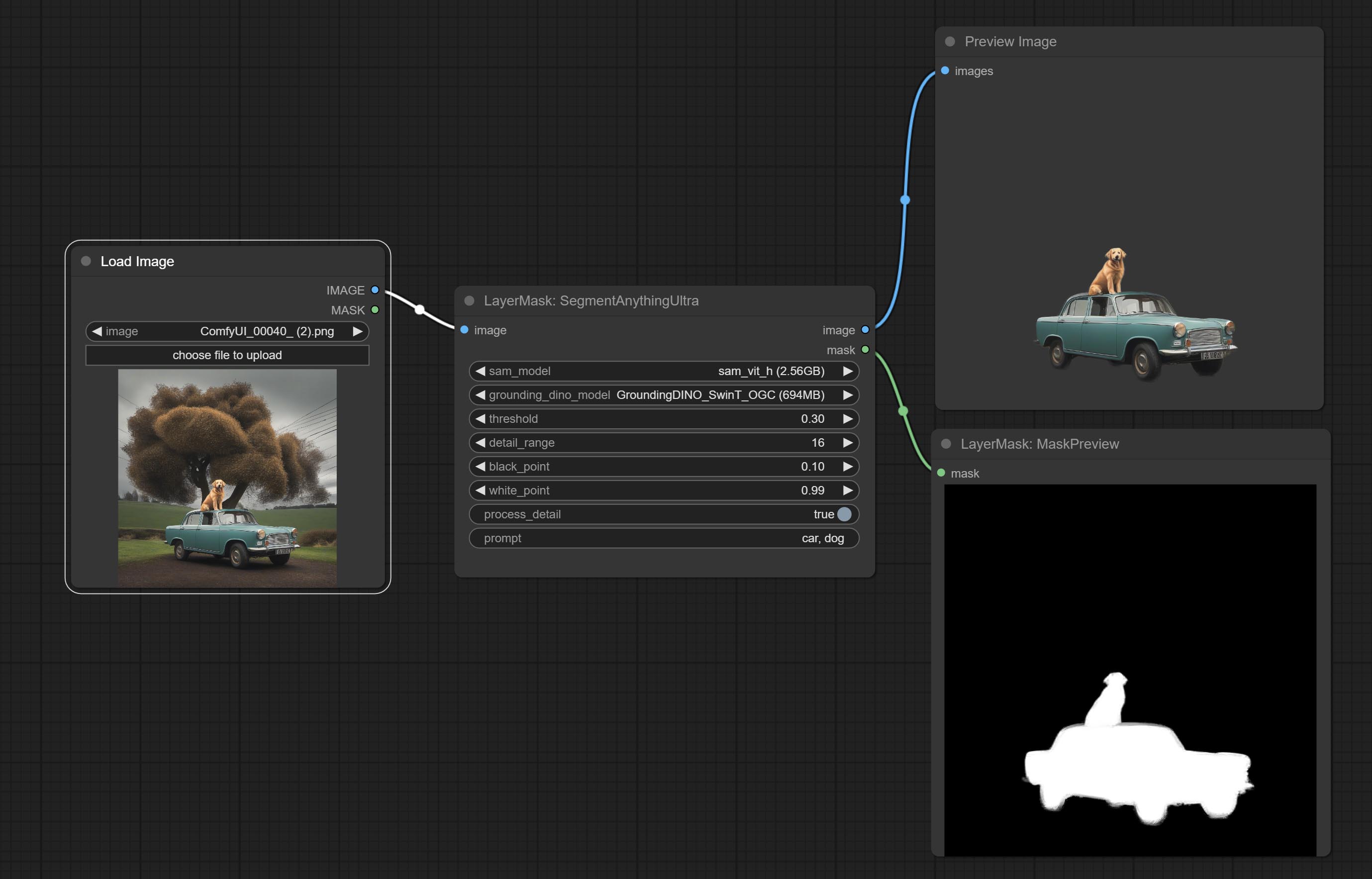

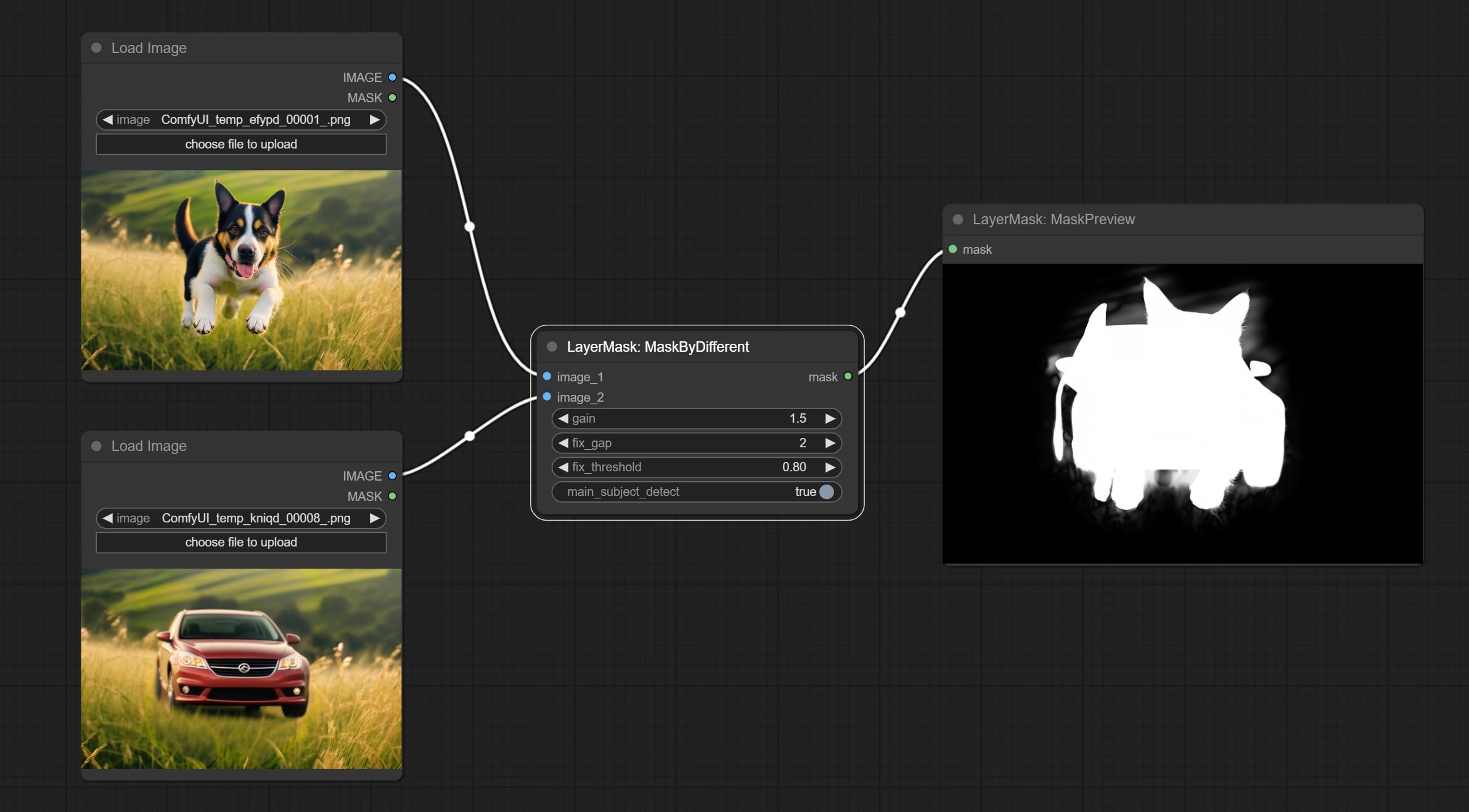

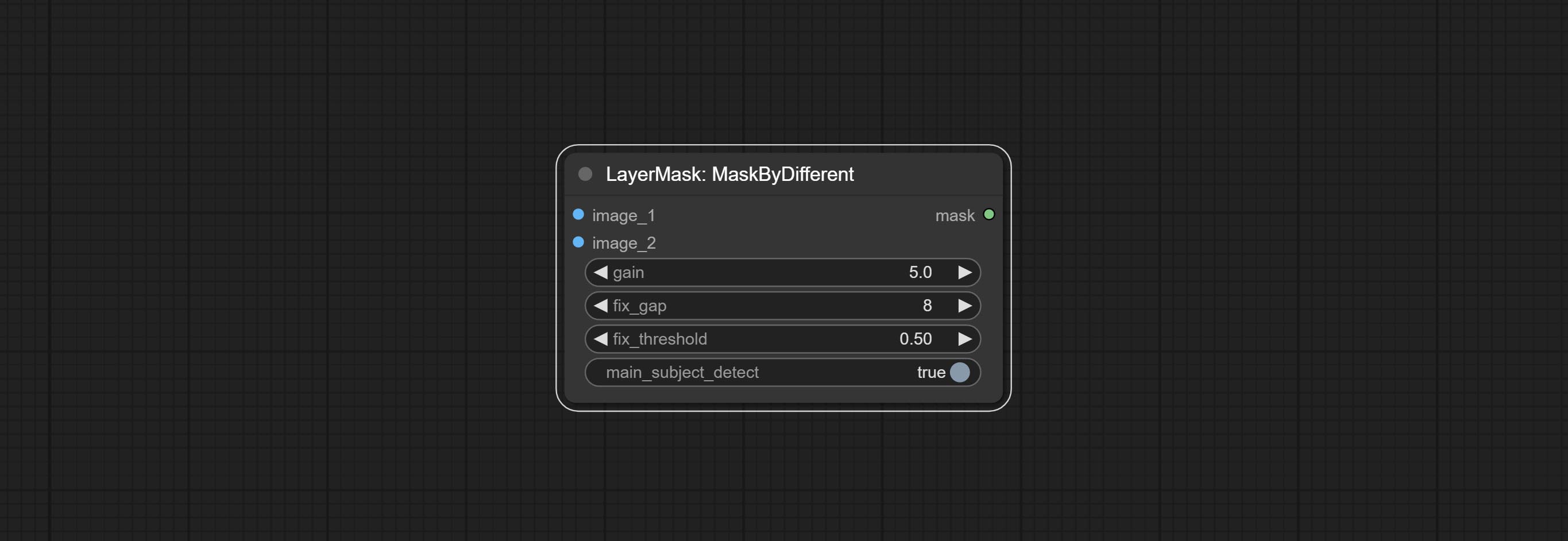

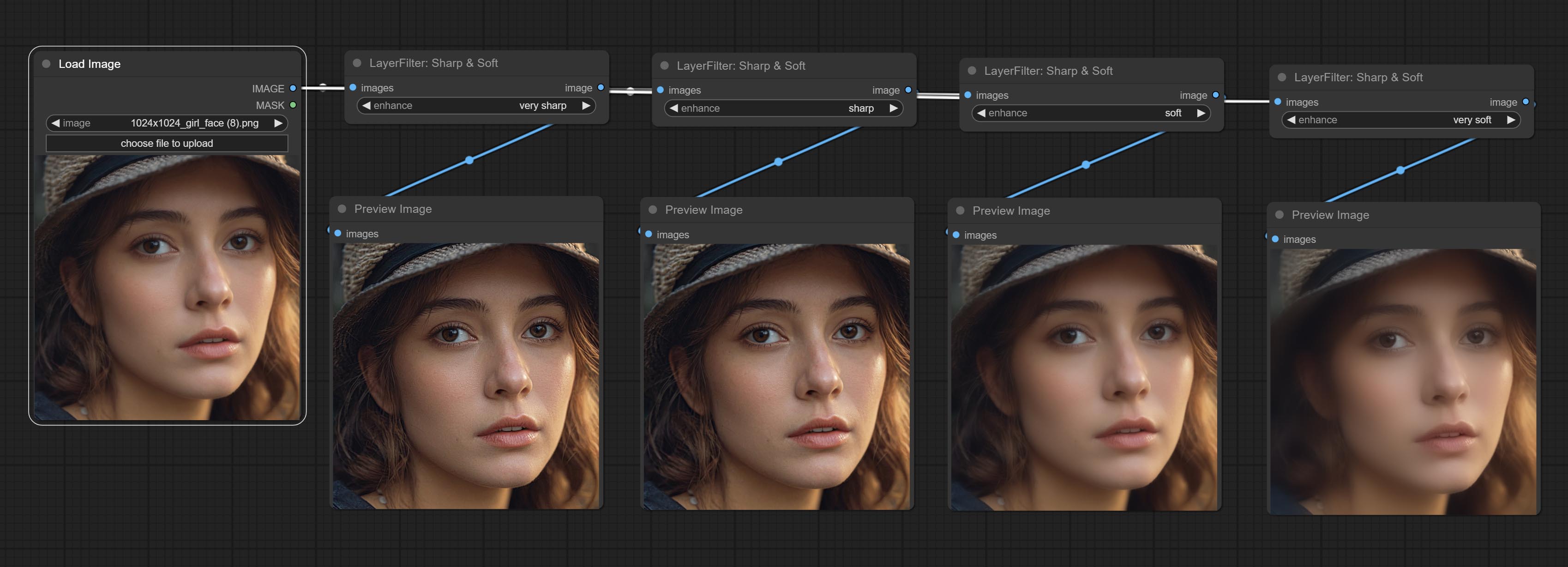

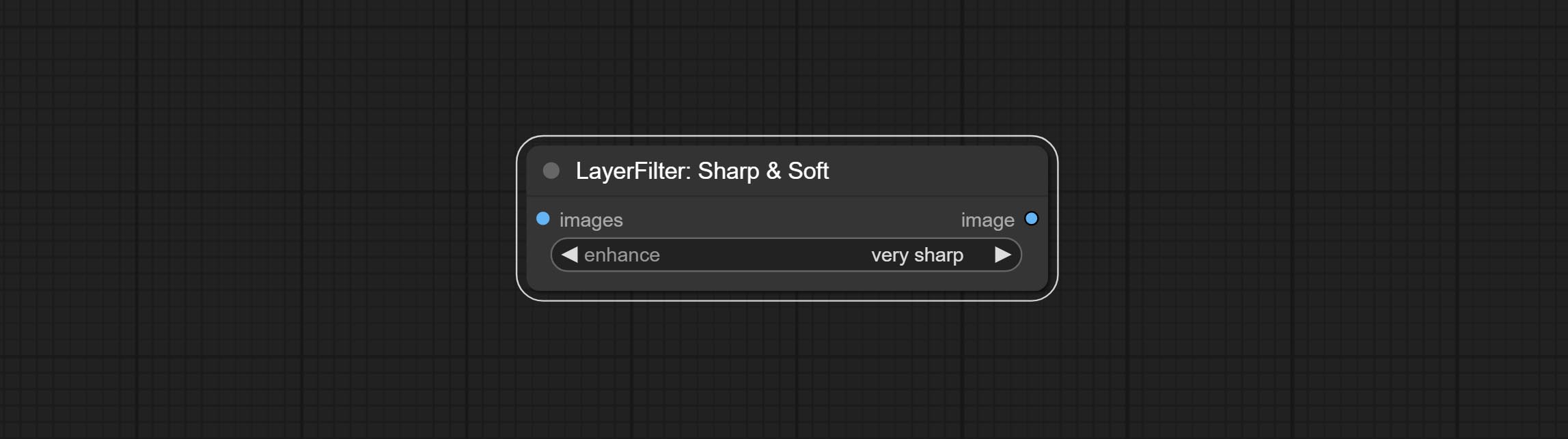

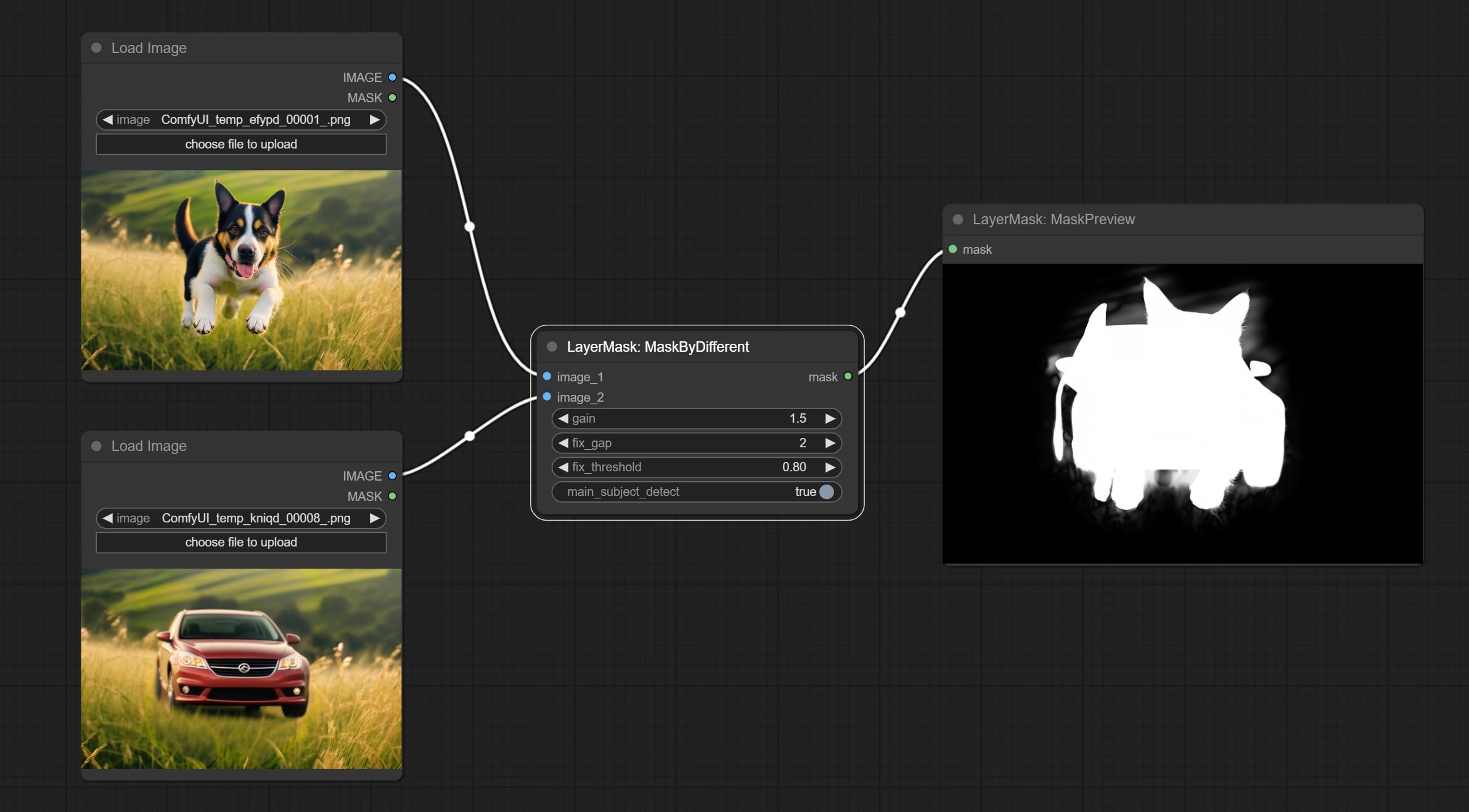

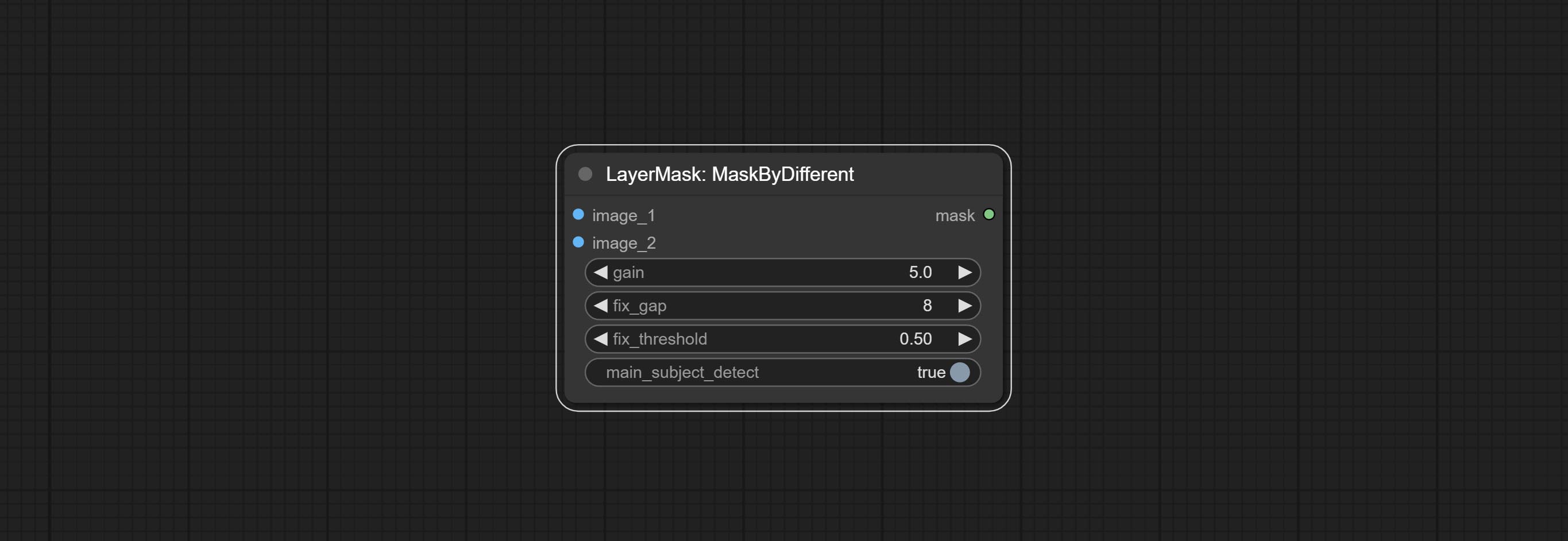

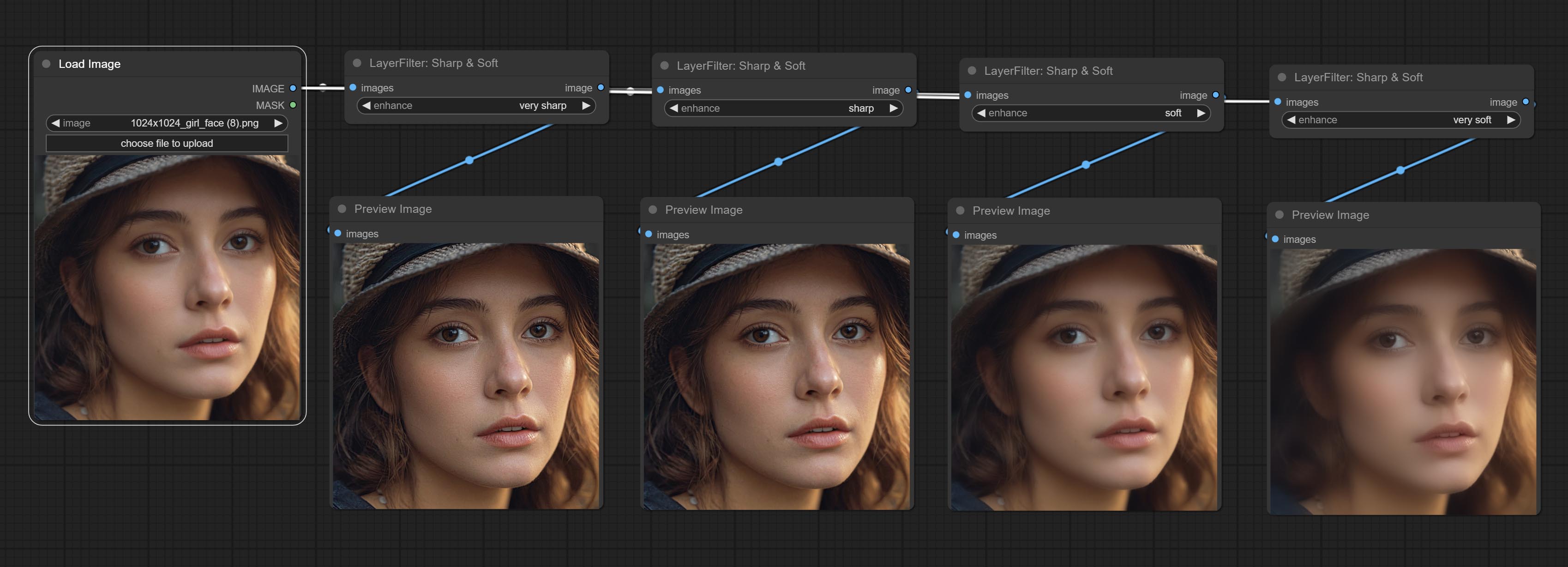

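Commit the Sharp & Soft node, which can enhance or smooth out image details. Commit the MaskByDifferent node, which compares two images and outputs a mask. Commit the SegmentAnythingUltra node, which improves the quality of mask edges. *If SegmentAnything is not installed, you will need to download the model manually.

All nodes now fully support batch images, which is convenient for video creation. (The CropByMask node only supports cuts of the same size. If a batch mask_for_crop is input, the data from the first sheet is used.)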

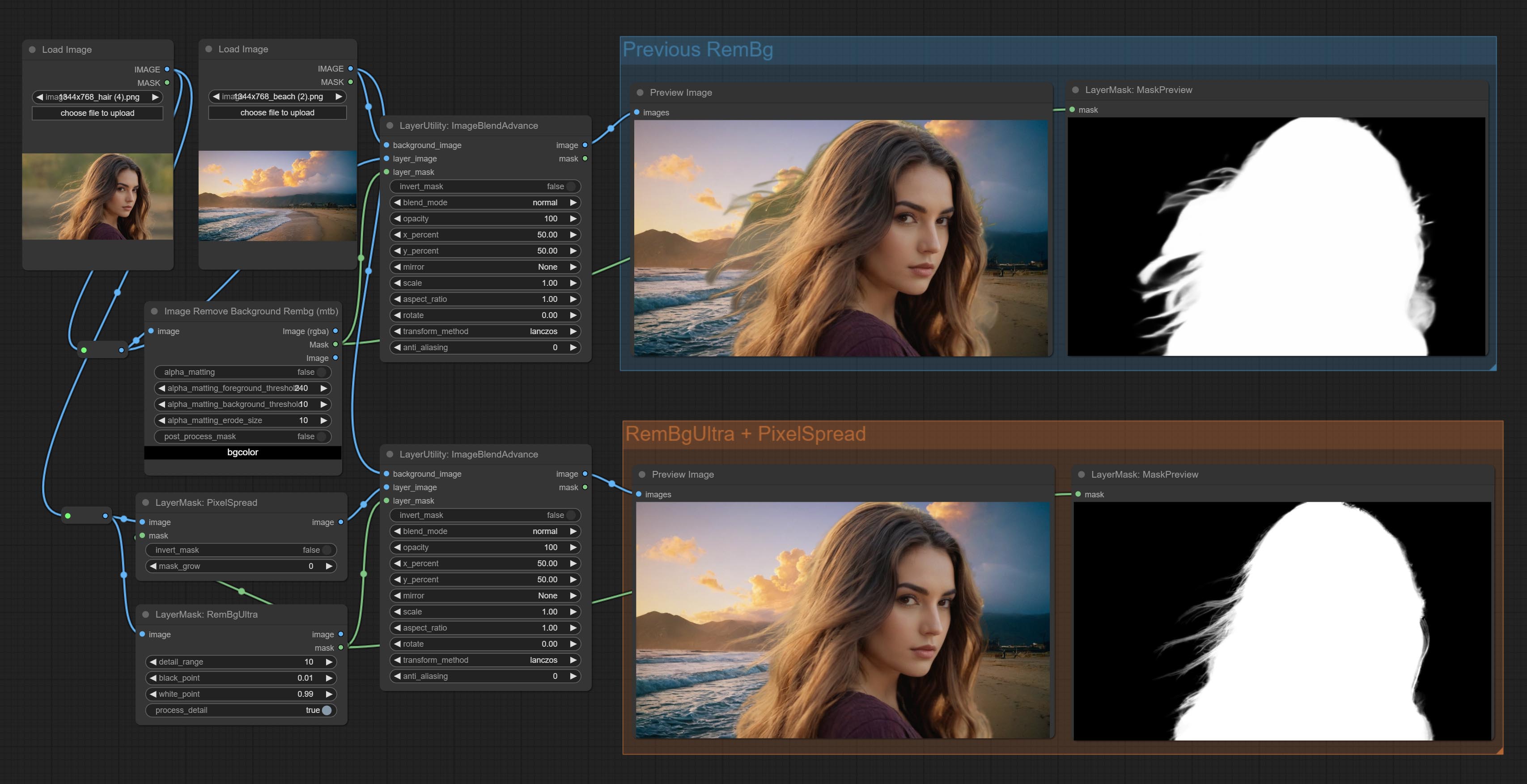

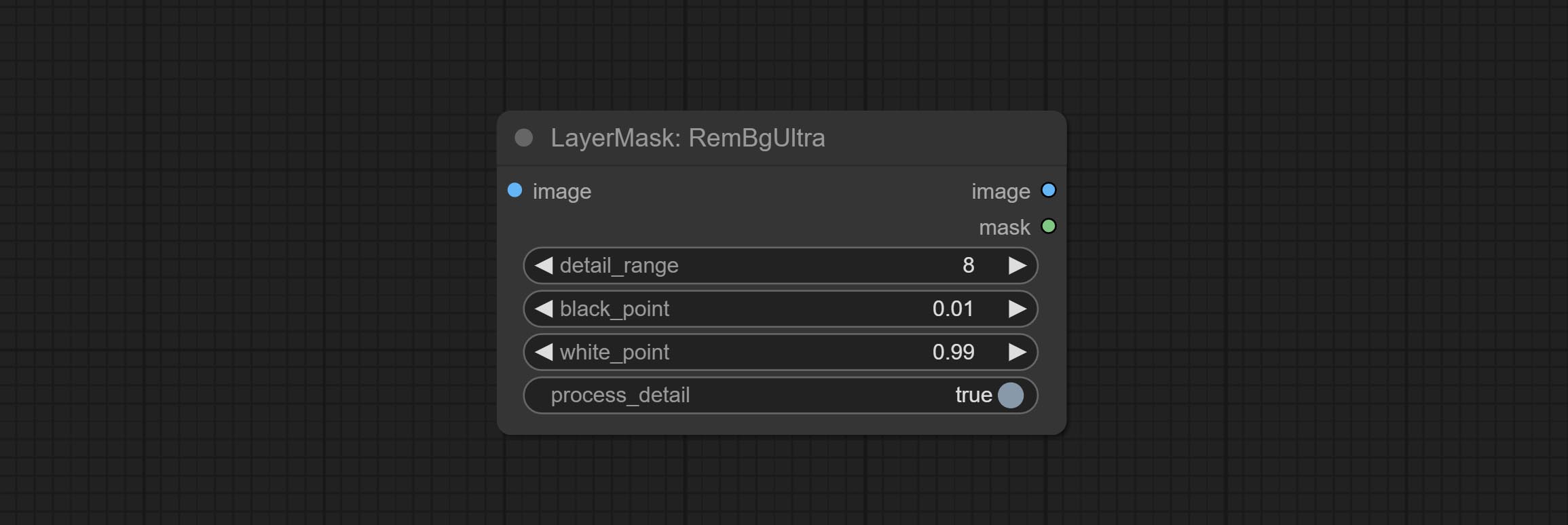

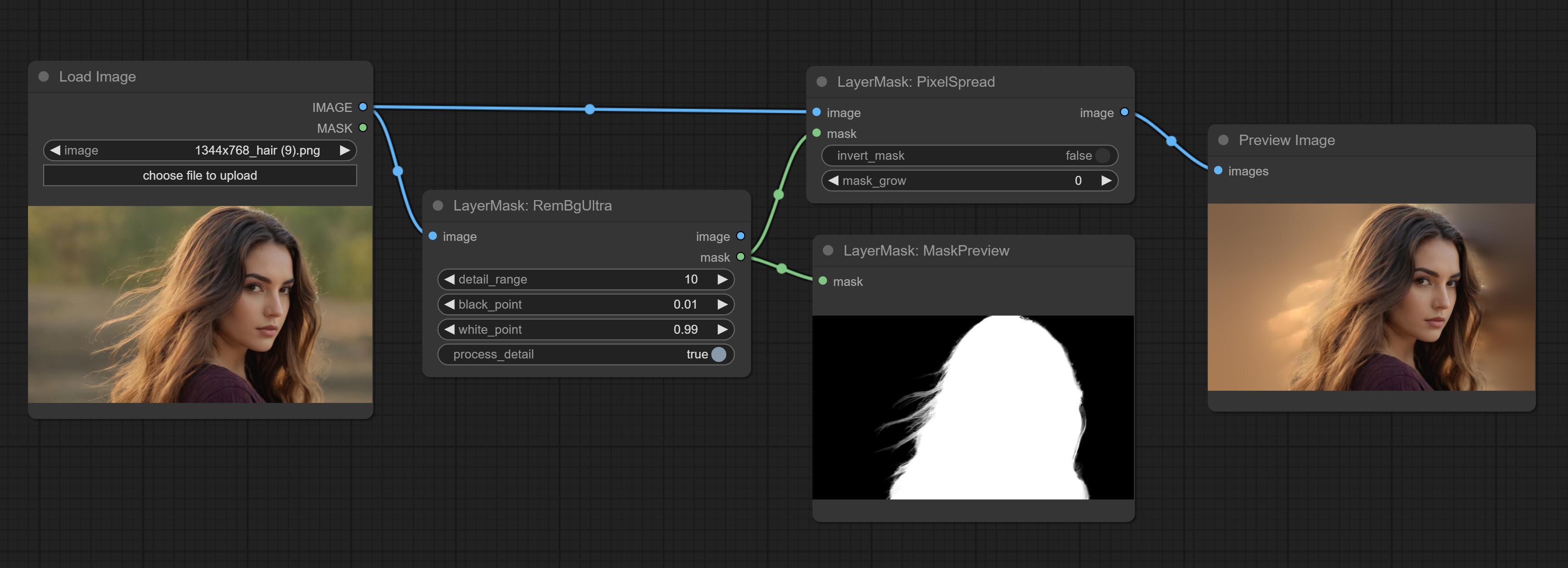

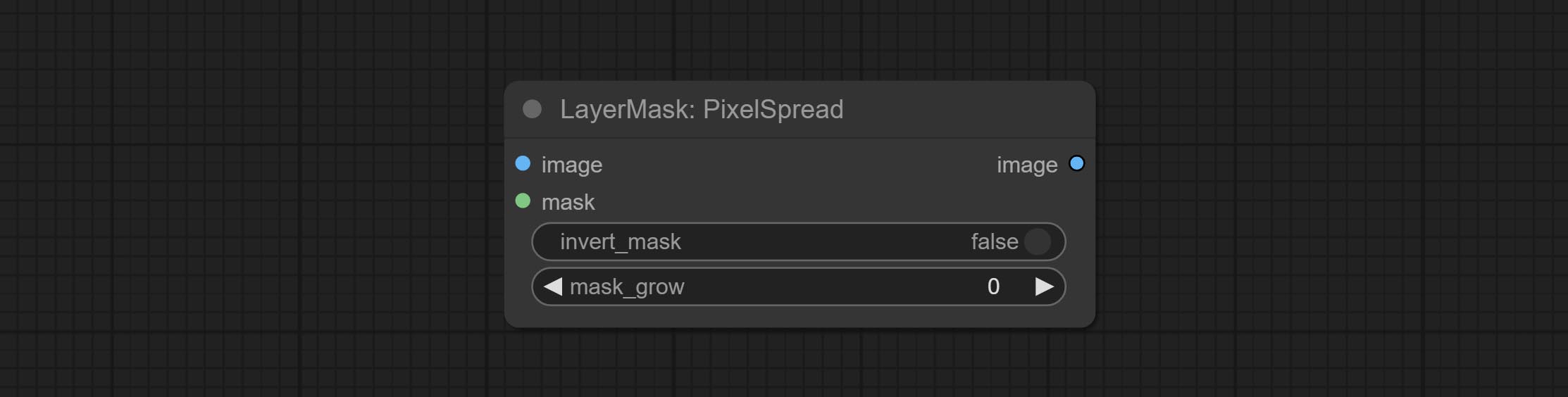

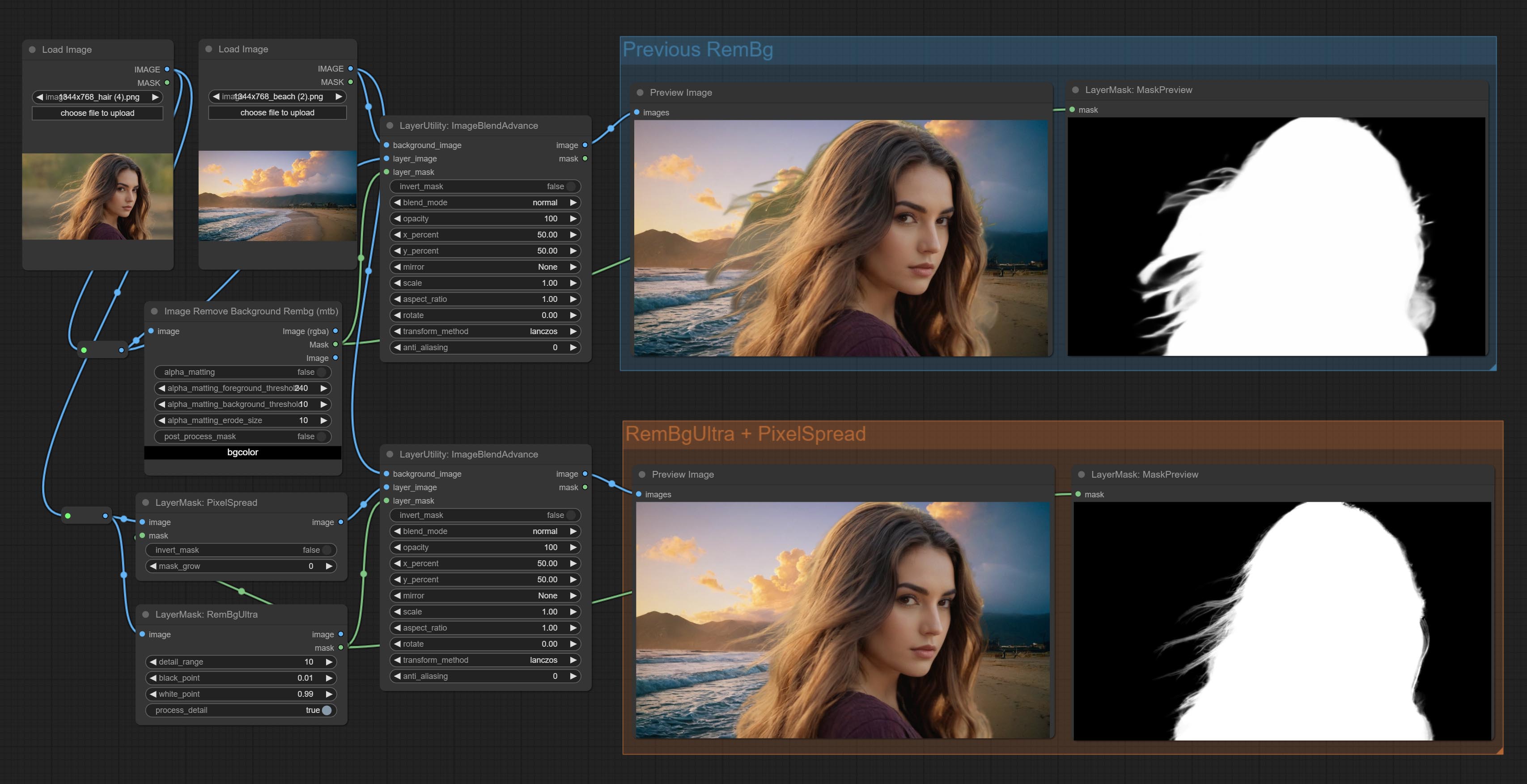

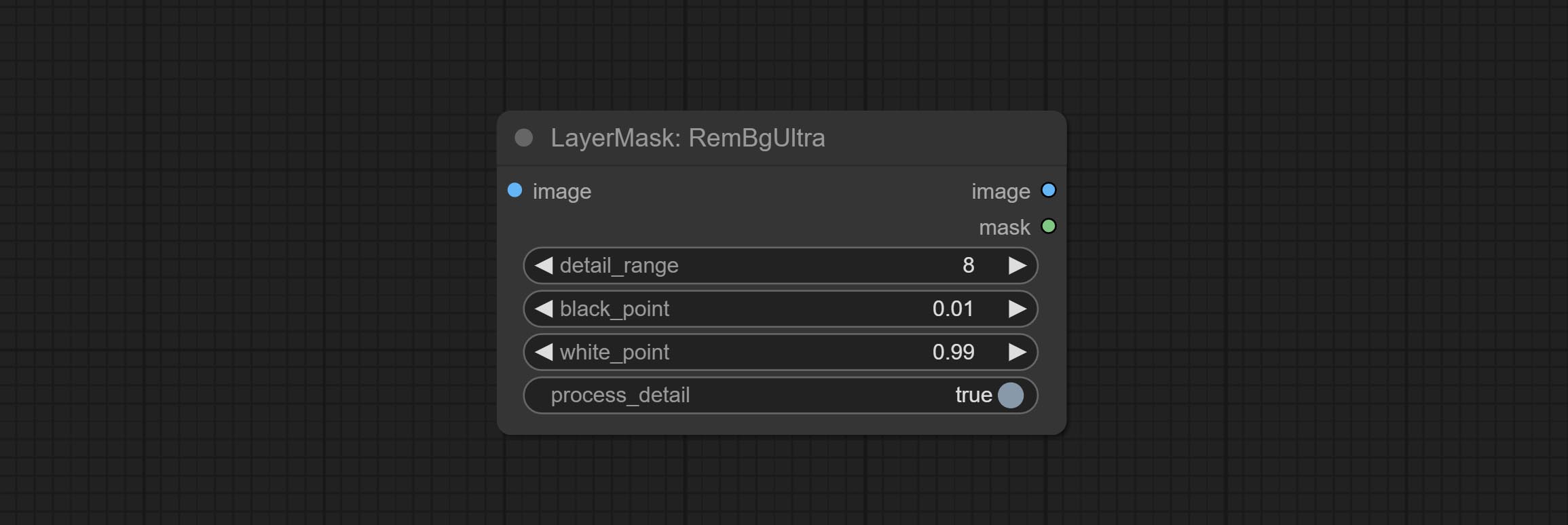

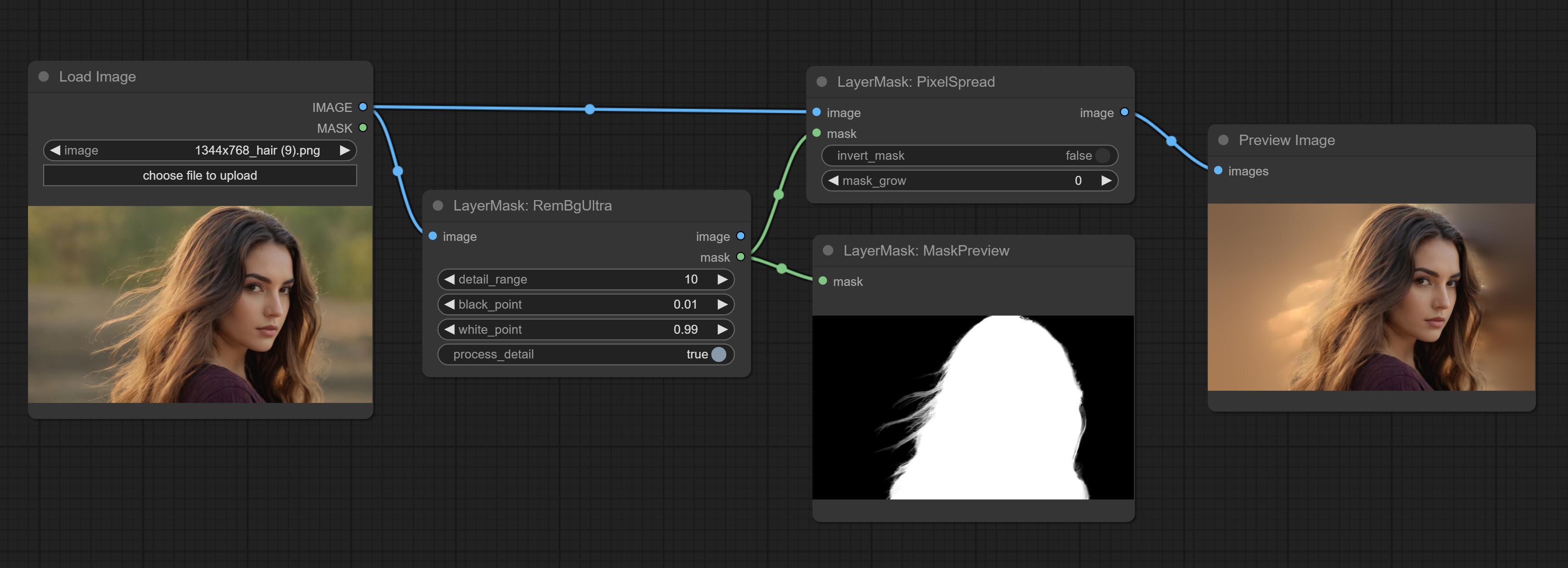

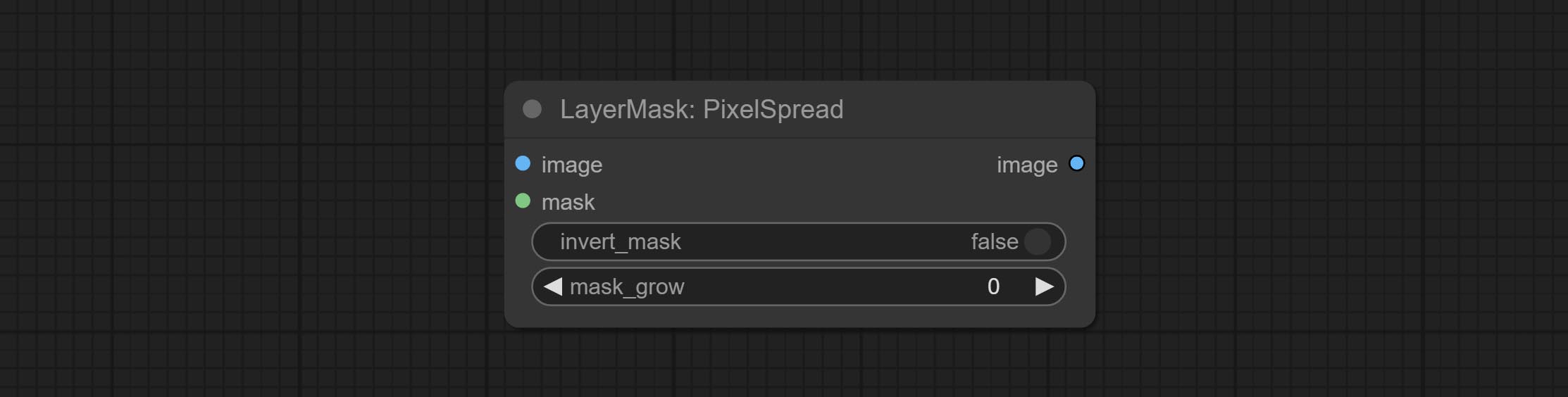

Commit the RemBgUltra and PixelSpread nodes, which significantly improve mask quality. *RemBgUltra requires manual model download.

Commit the TextImage node, which generates text images and masks.

Add new types of blend modes between images. Up to 19 blend modes are now supported. Added color_burn, color_dodge, linear_burn, linear_dodge, overlay, soft_light, hard_light, vivid_light, pin_light, linear_light, and hard_mix. The newly added blend modes apply to all nodes that support blend modes.
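To give a feel for what a few of these modes compute, here is a per-channel sketch using the commonly published textbook formulas on values normalized to the range 0..1. These are assumptions about the general technique, not the plugin's own code, and real implementations operate on whole image arrays.

```python
def overlay(base, layer):
    """Darken dark base areas (multiply) and lighten bright ones (screen)."""
    if base < 0.5:
        return 2.0 * base * layer
    return 1.0 - 2.0 * (1.0 - base) * (1.0 - layer)


def linear_burn(base, layer):
    """Add the two values and subtract white, clamped at black."""
    return max(0.0, base + layer - 1.0)


def color_dodge(base, layer):
    """Brighten the base by dividing it by the inverted layer, clamped at white."""
    if layer >= 1.0:
        return 1.0
    return min(1.0, base / (1.0 - layer))


def hard_mix(base, layer):
    """Push every channel to pure black or pure white."""
    return 1.0 if base + layer >= 1.0 else 0.0
```

Each function takes one channel of the background (`base`) and one of the layer (`layer`) and returns the blended channel.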

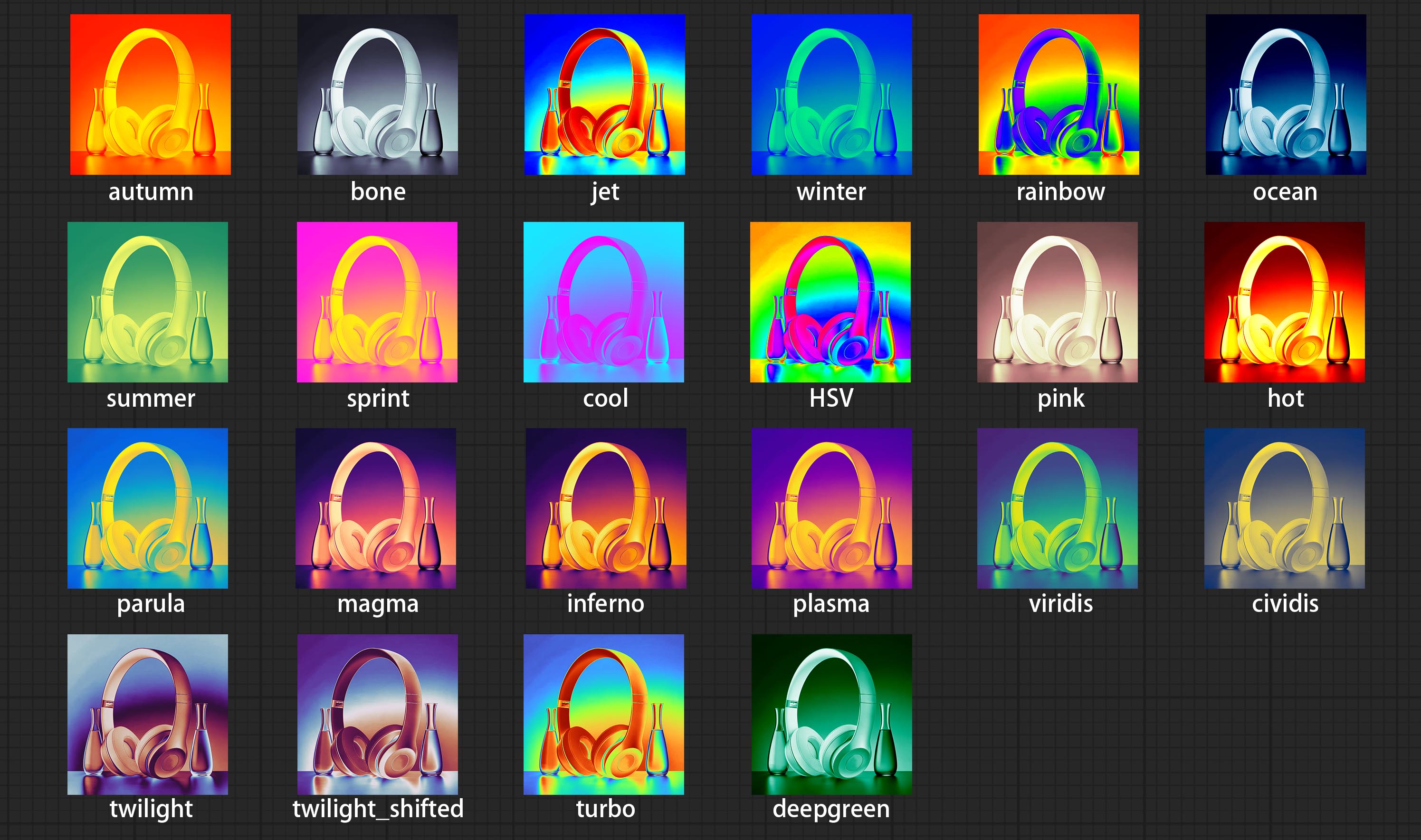

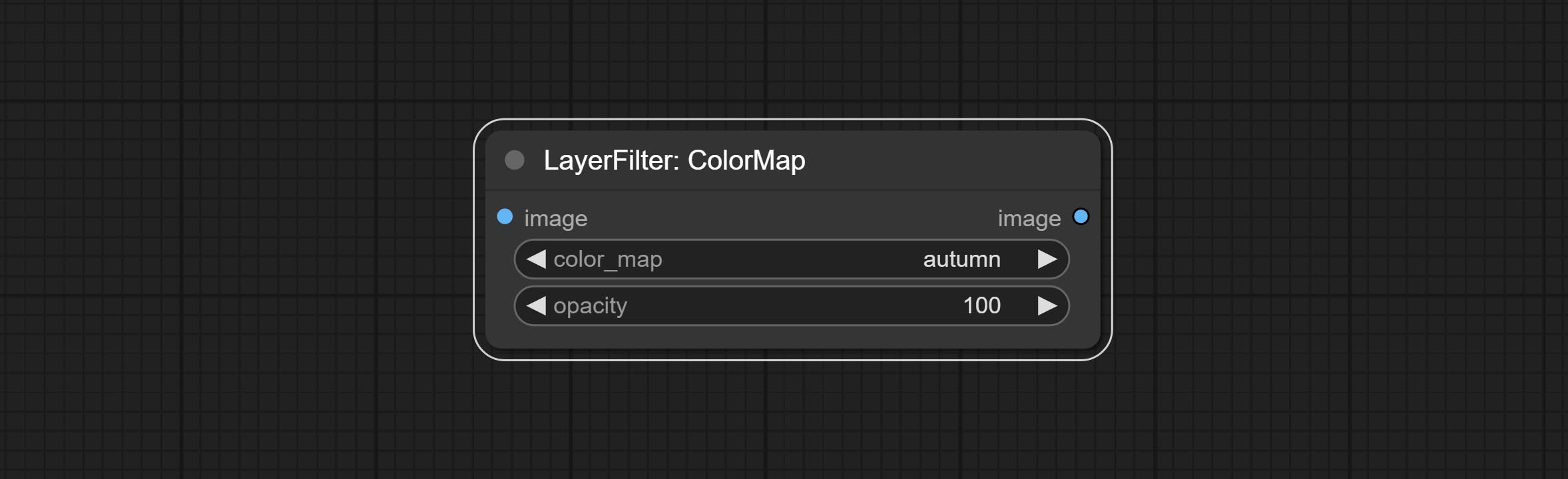

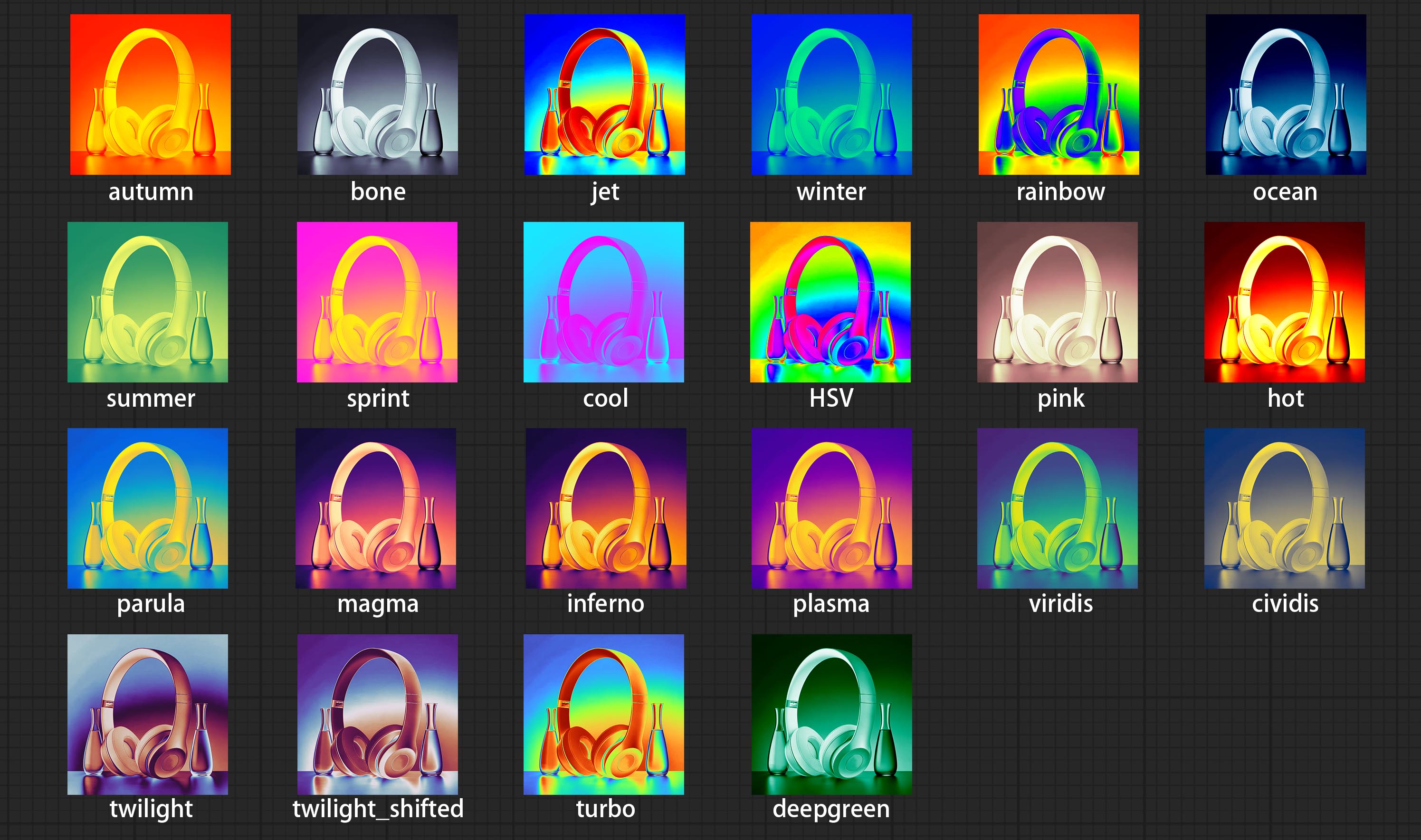

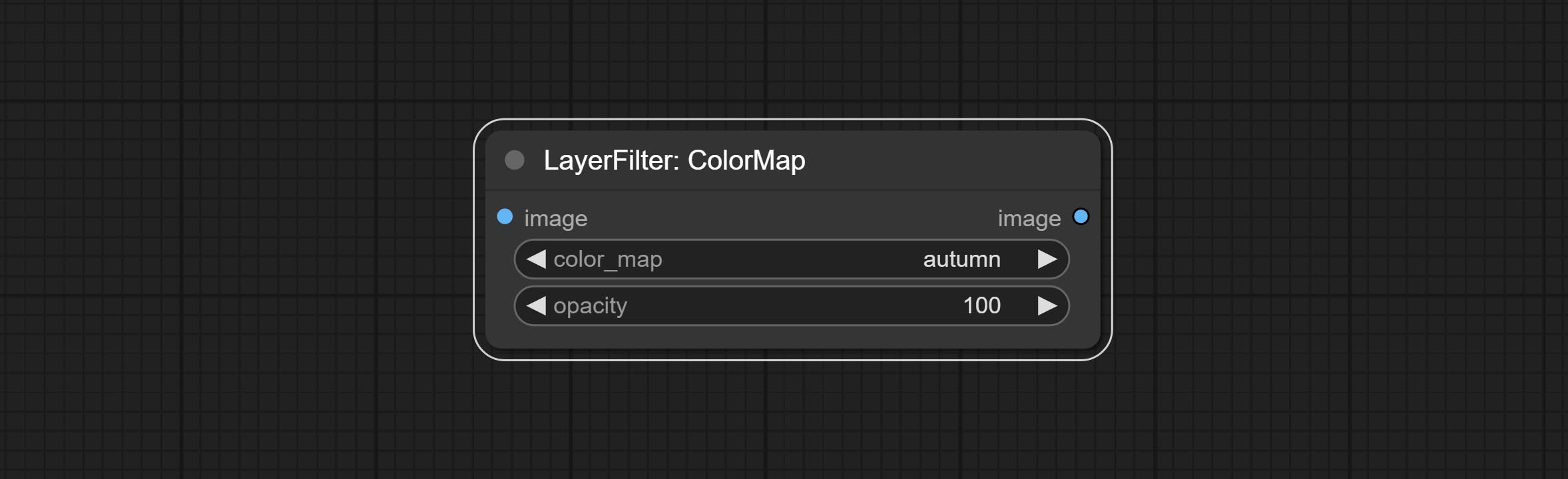

Commit the ColorMap filter node, which creates a pseudo-color heatmap effect.

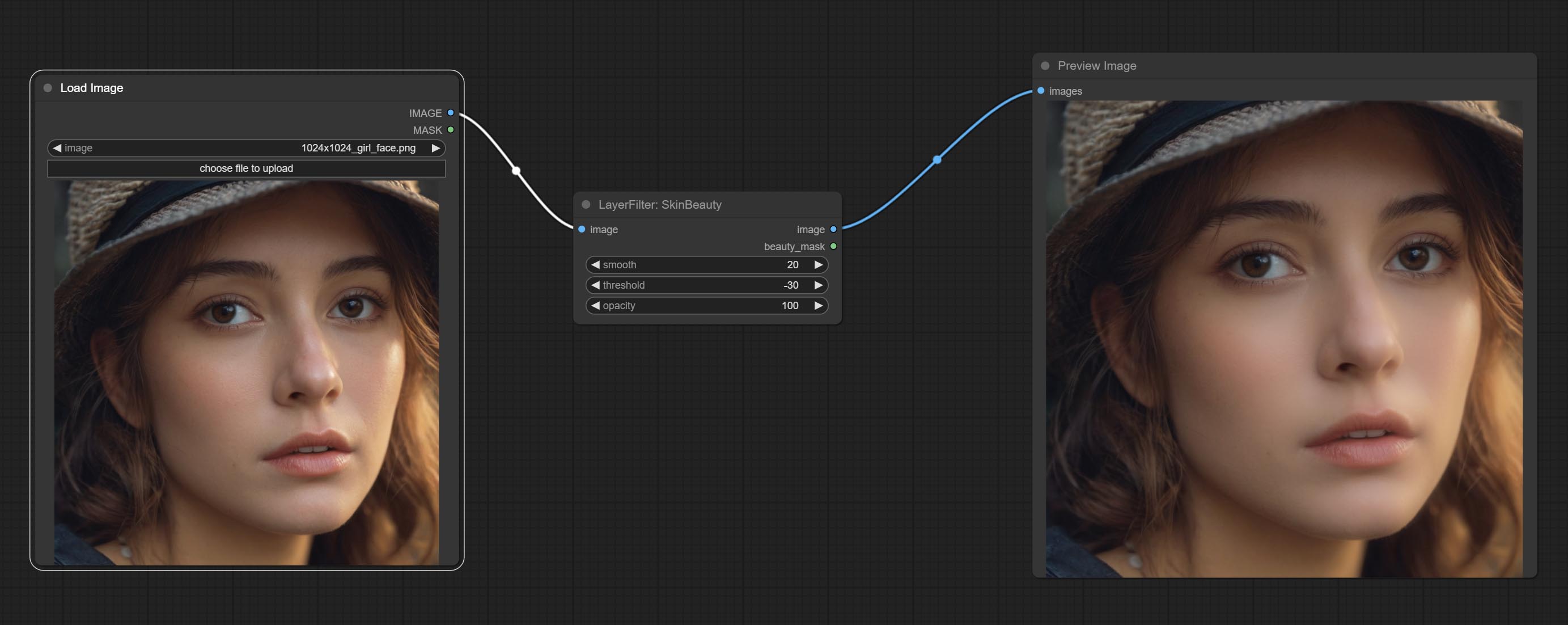

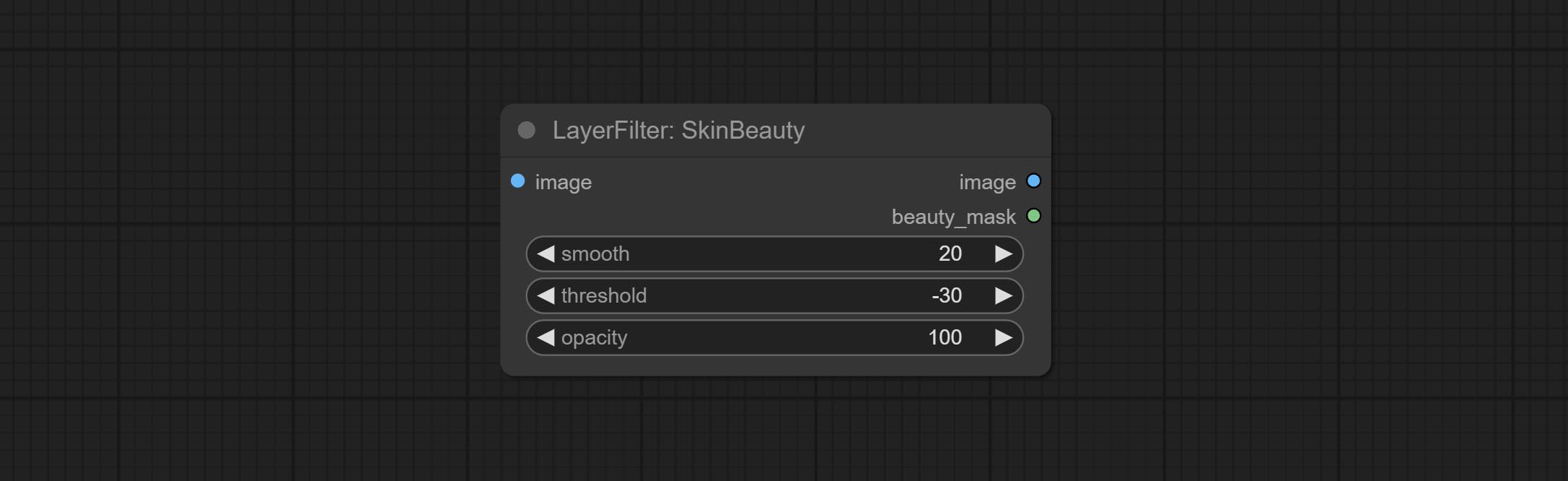

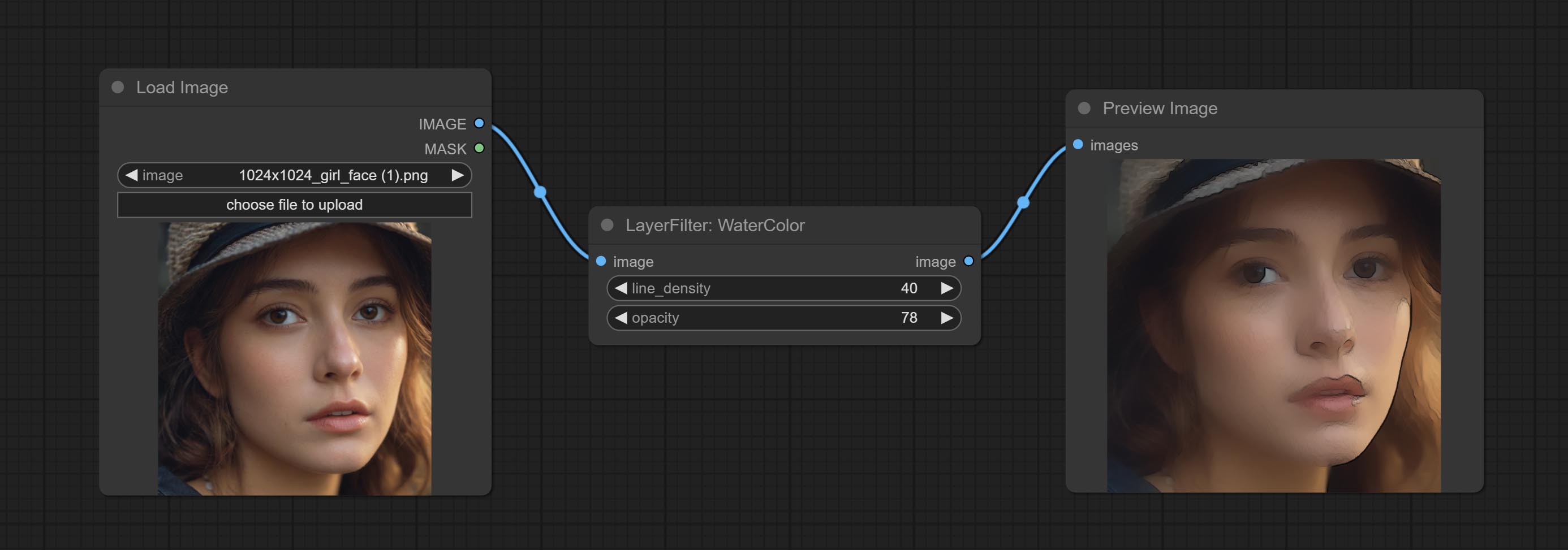

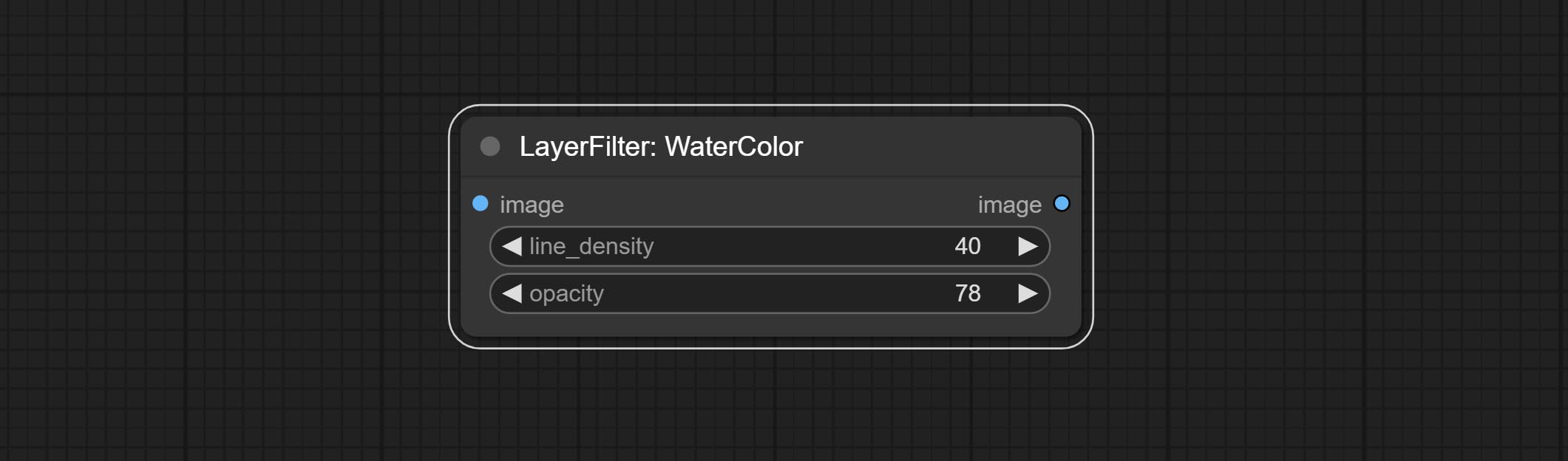

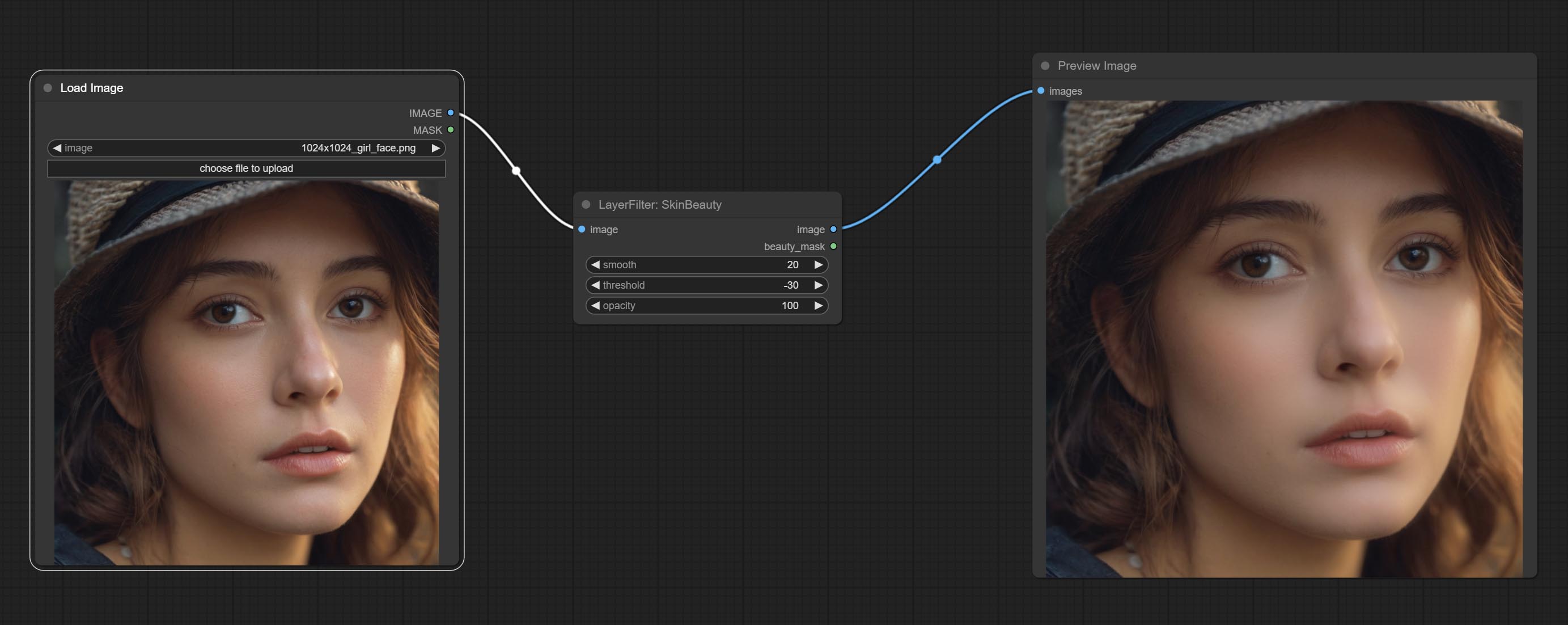

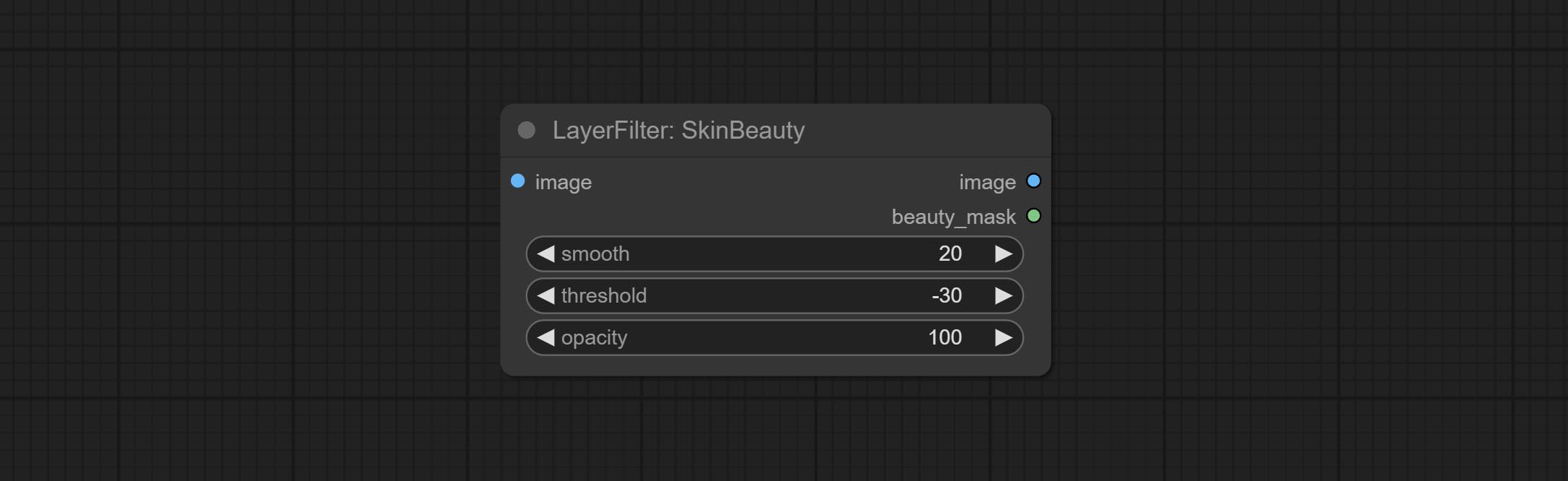

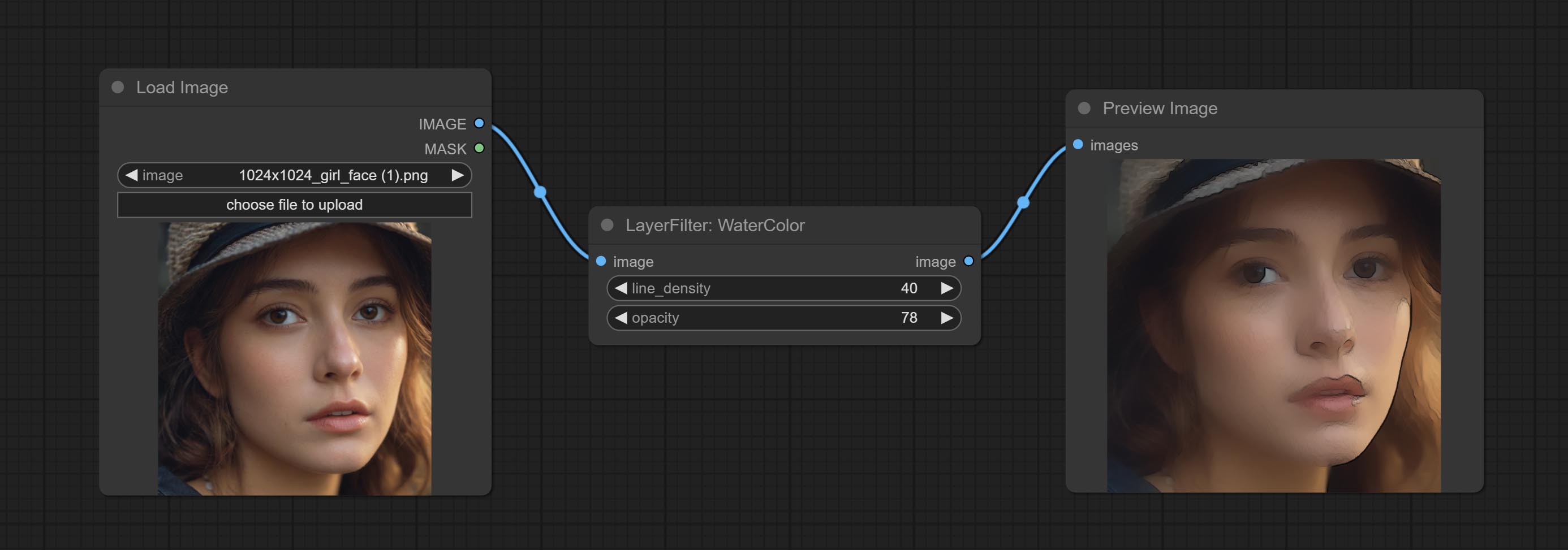

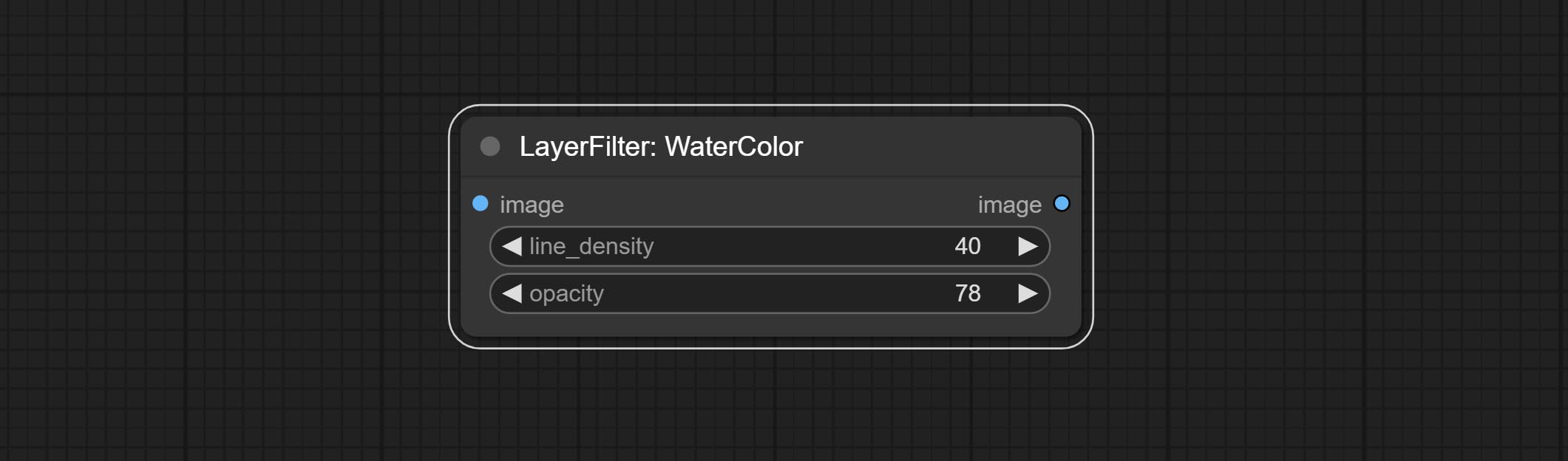

Commit the WaterColor and SkinBeauty nodes. These are image filters that generate watercolor and skin smoothing effects.

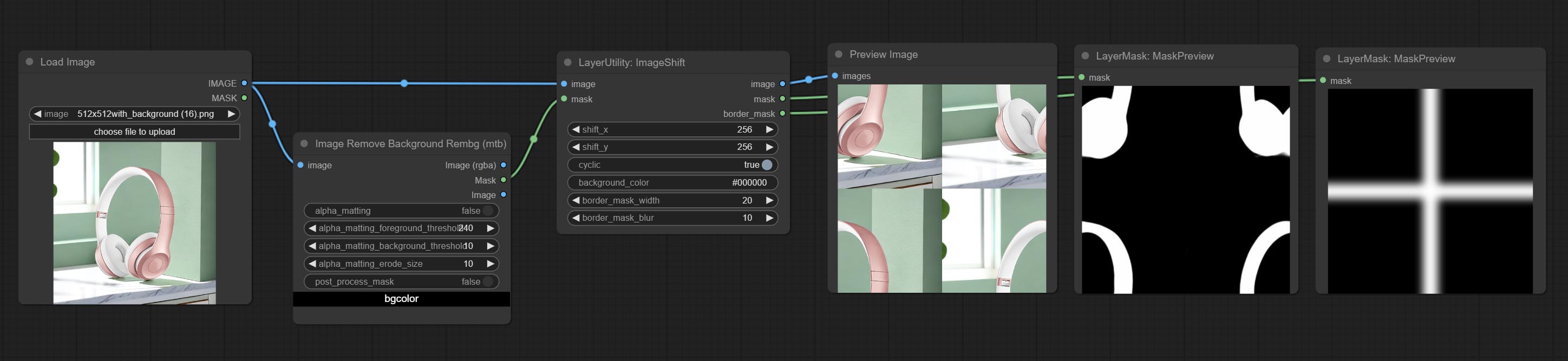

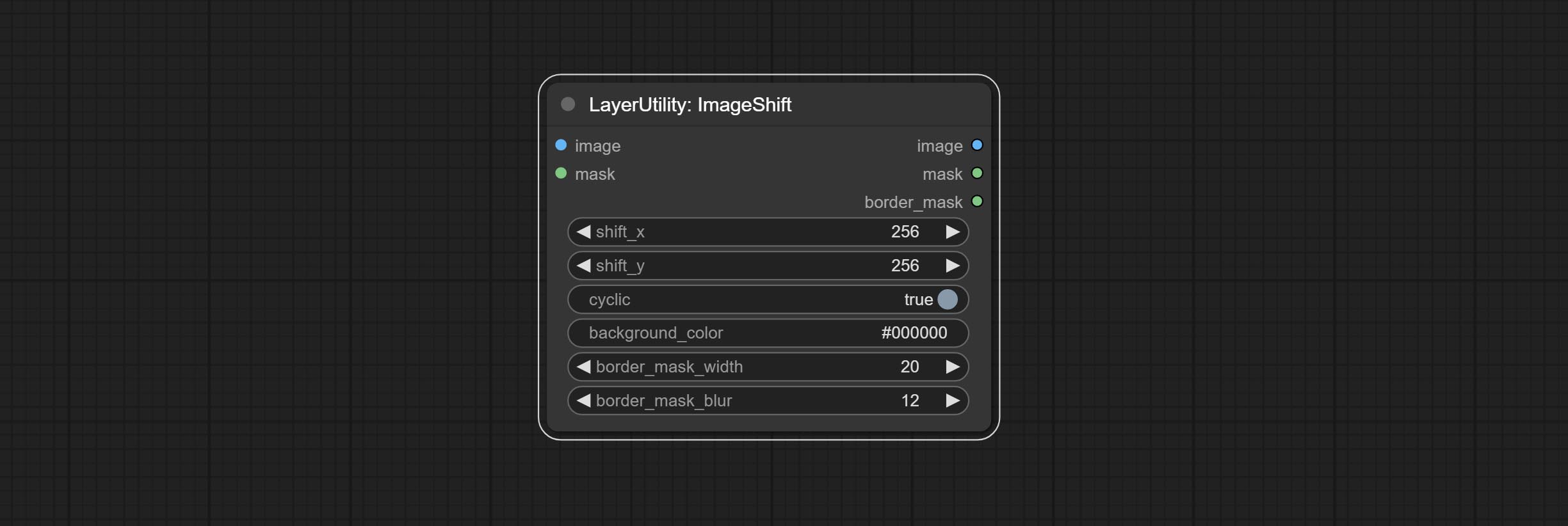

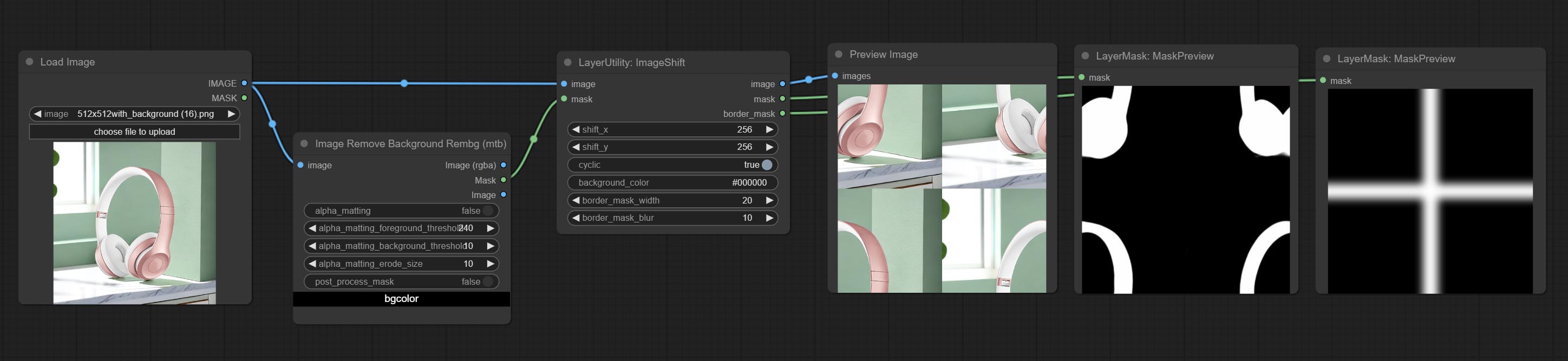

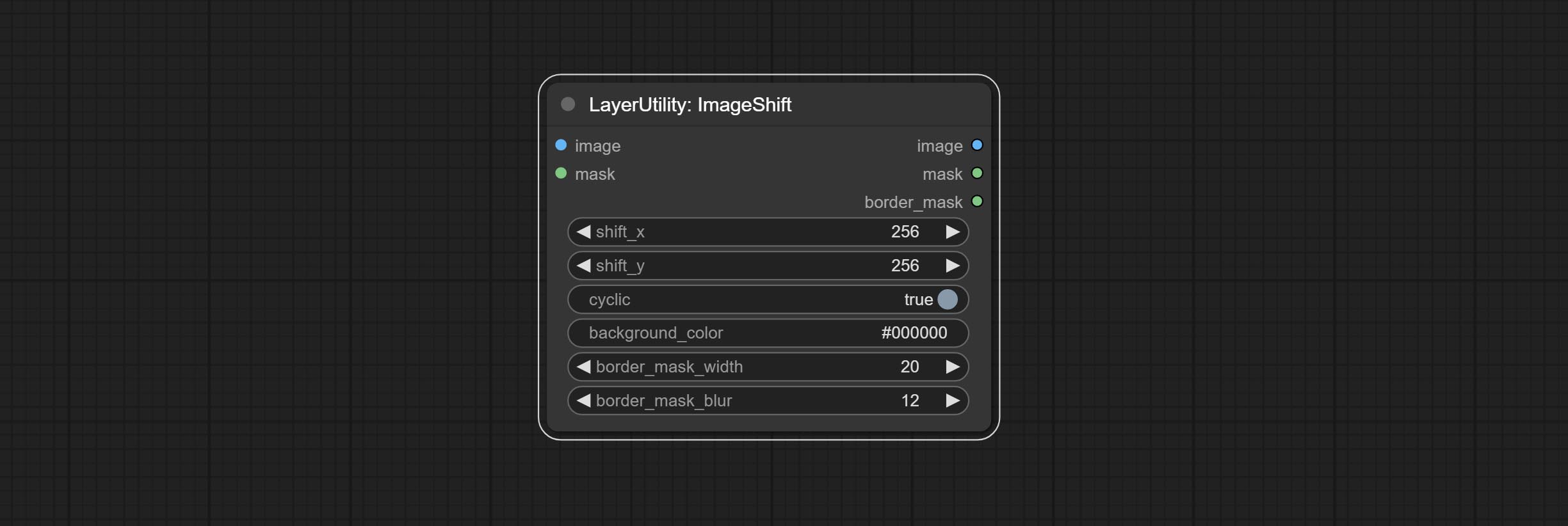

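Commit the ImageShift node, which shifts the image and outputs a displacement seam mask, making it convenient to create continuous textures.

Commit the ImageMaskScaleAs node, which adjusts the image or mask size based on a reference image.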

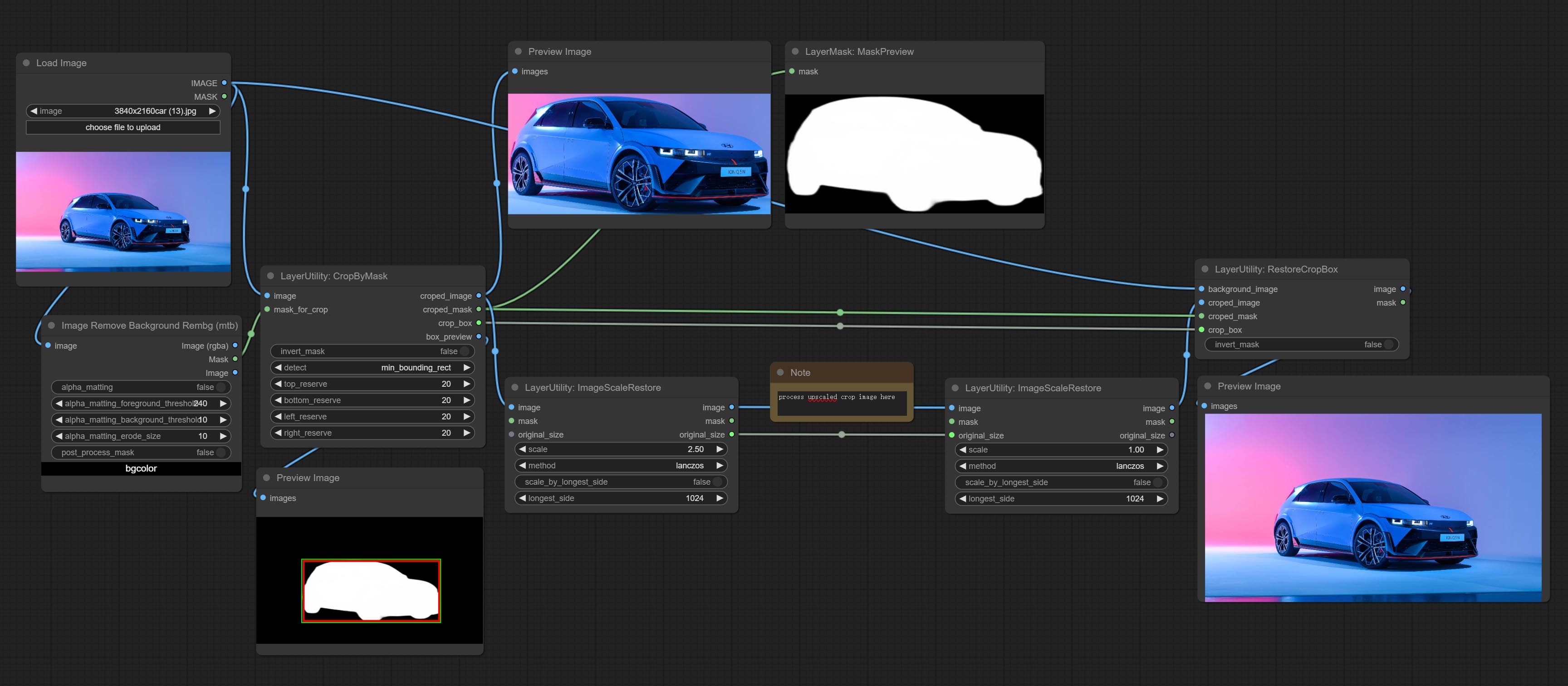

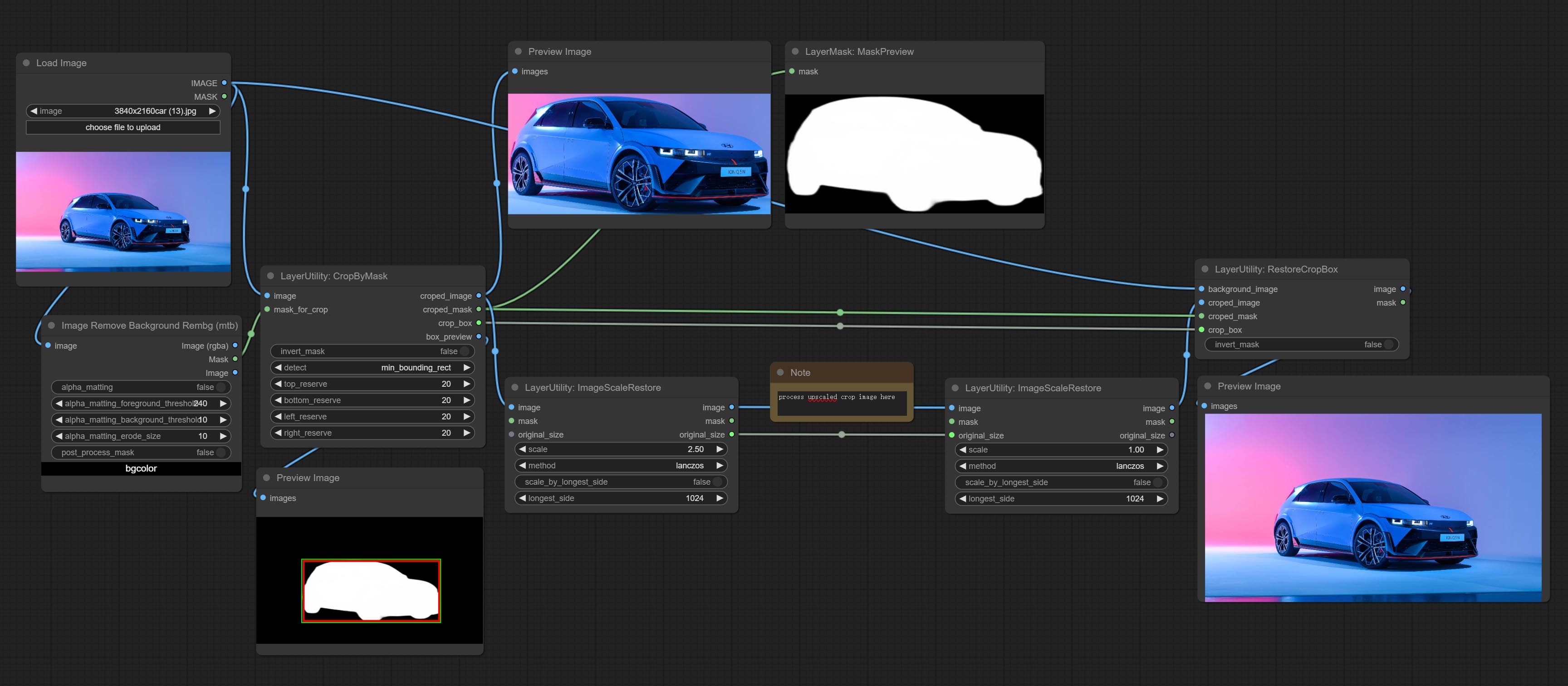

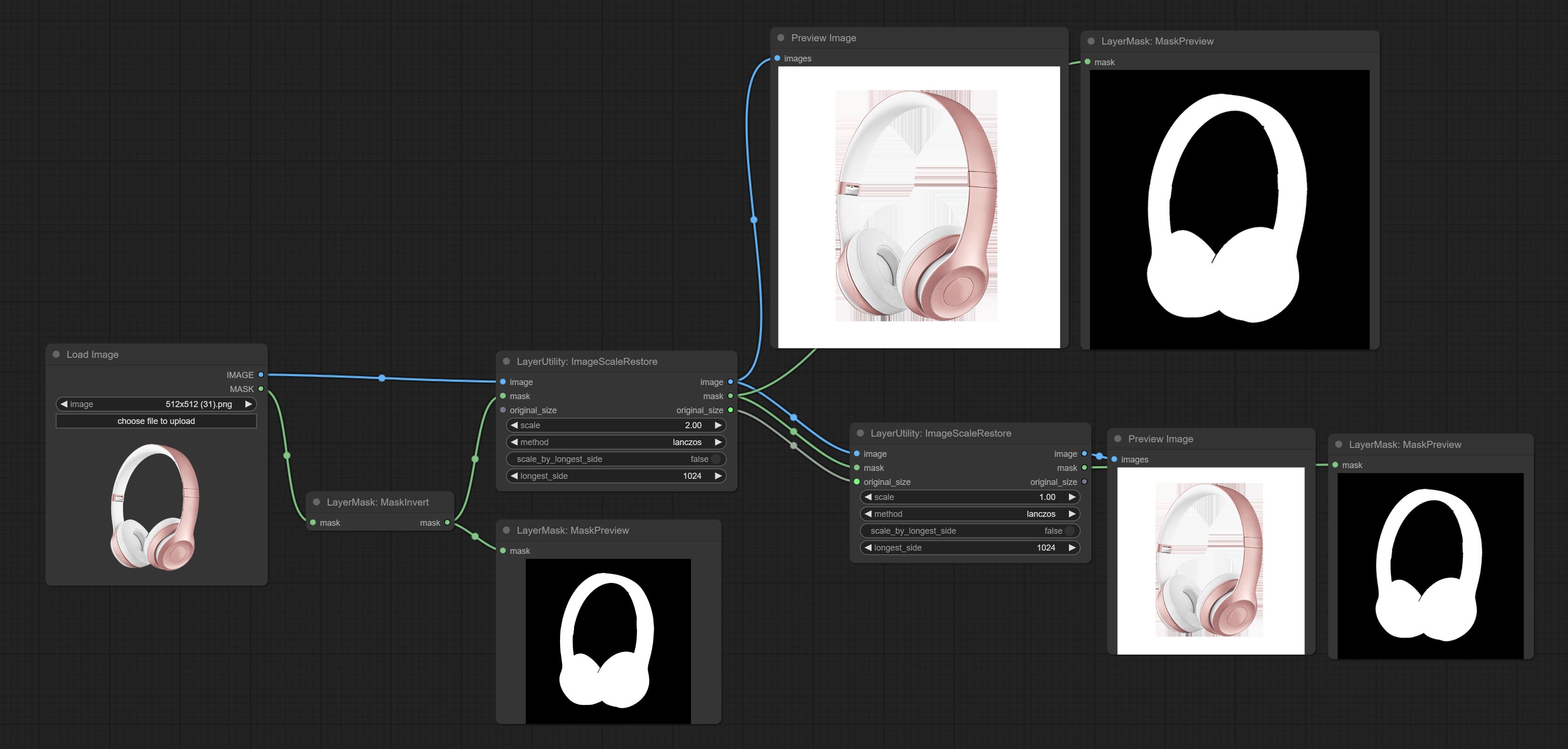

Commit the ImageScaleRestore node, which works with CropByMask for local upscaling and repair.

Commit the CropByMask and RestoreCropBox nodes. Combined, the two can partially crop and redraw an image before restoring it.

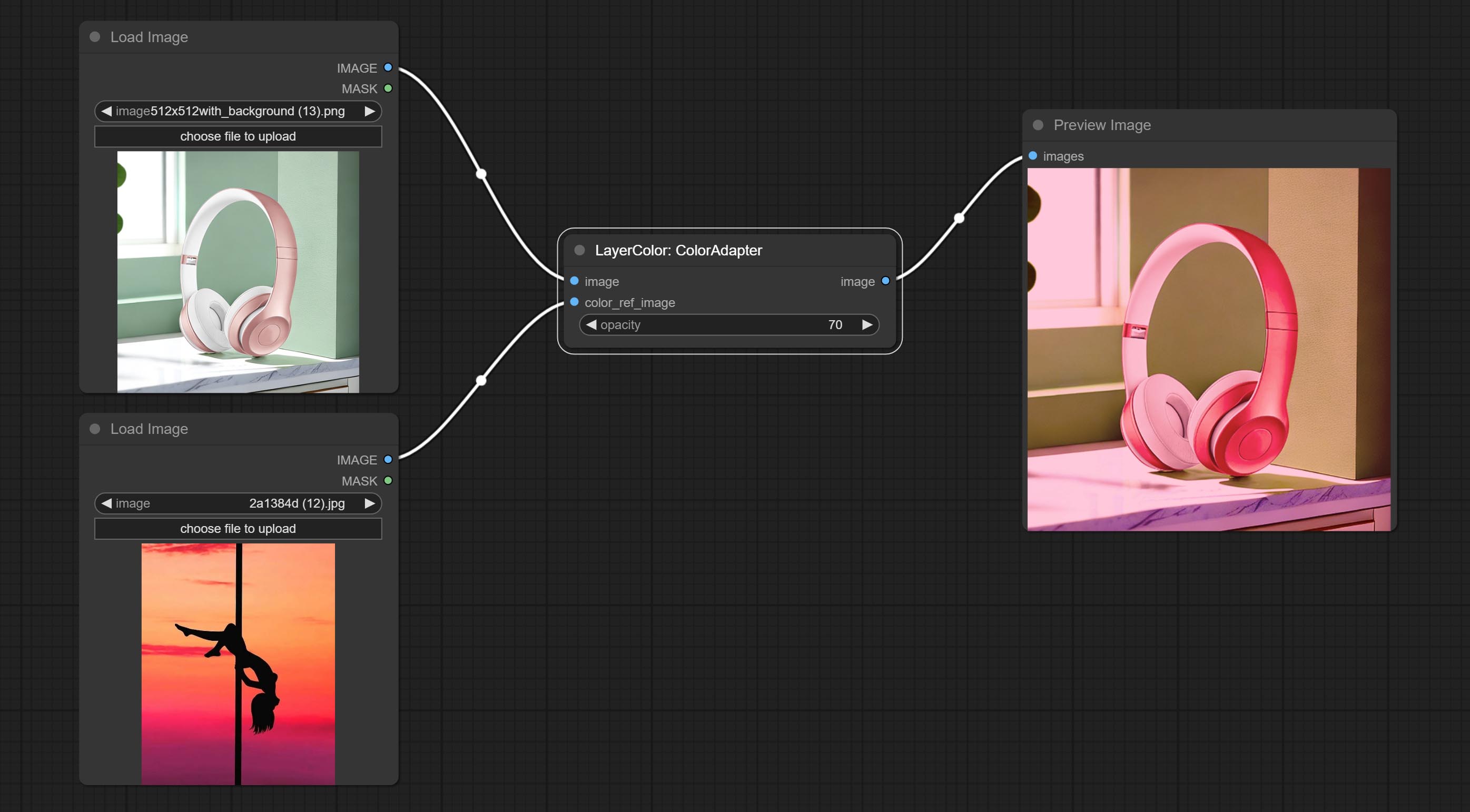

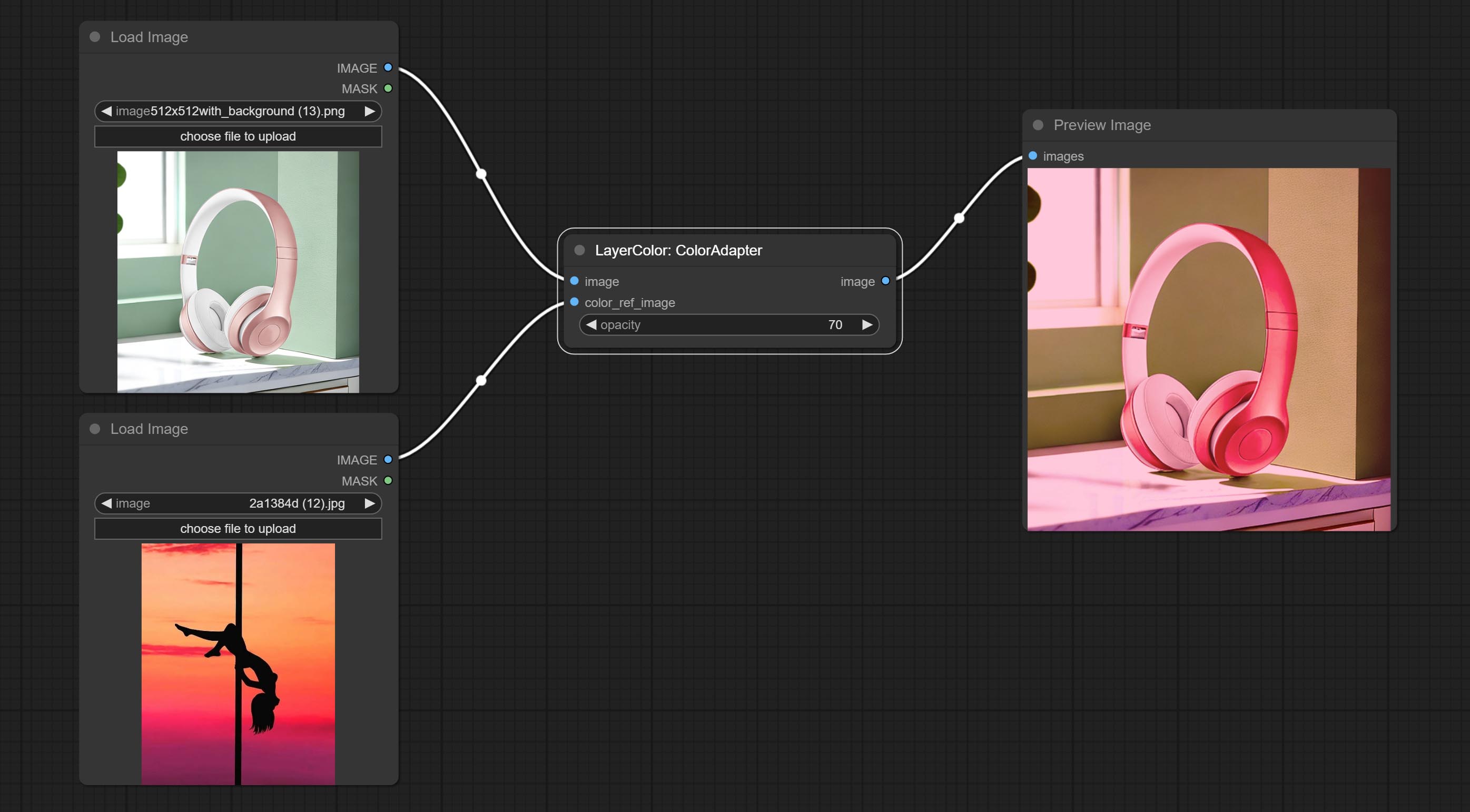

Commit the ColorAdapter node, which can automatically adjust the color tone of an image.

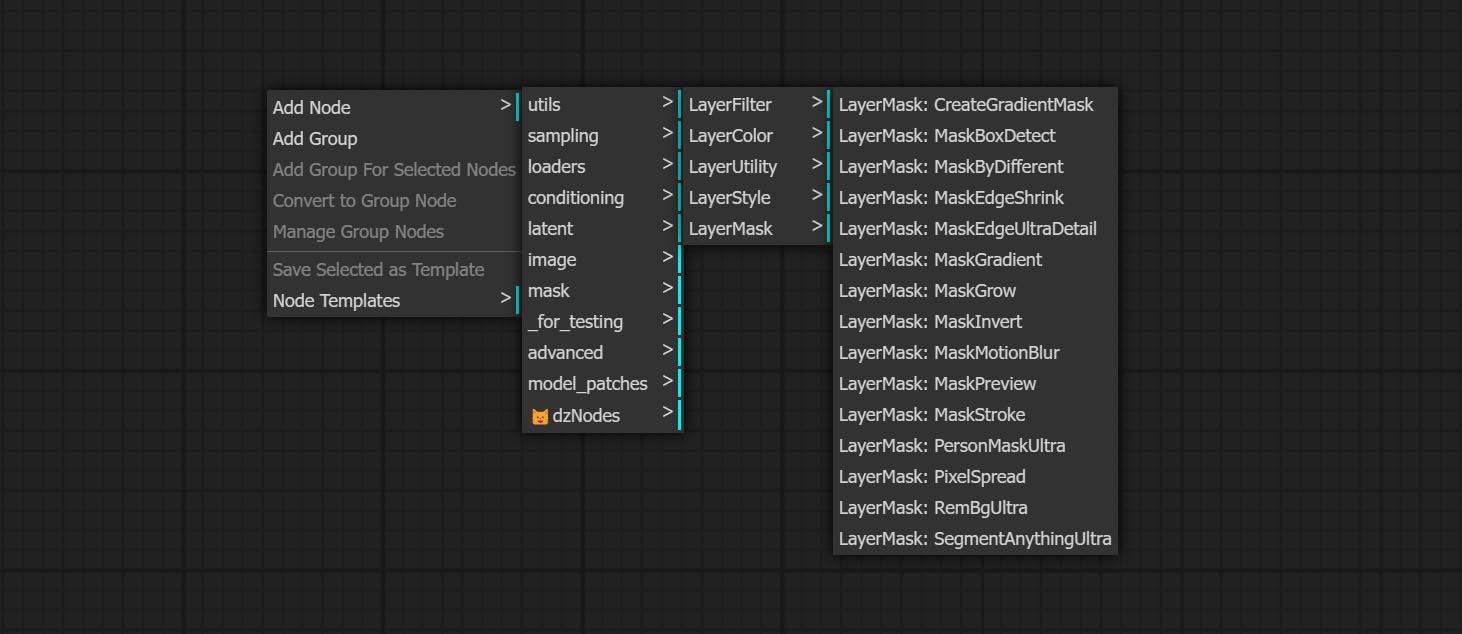

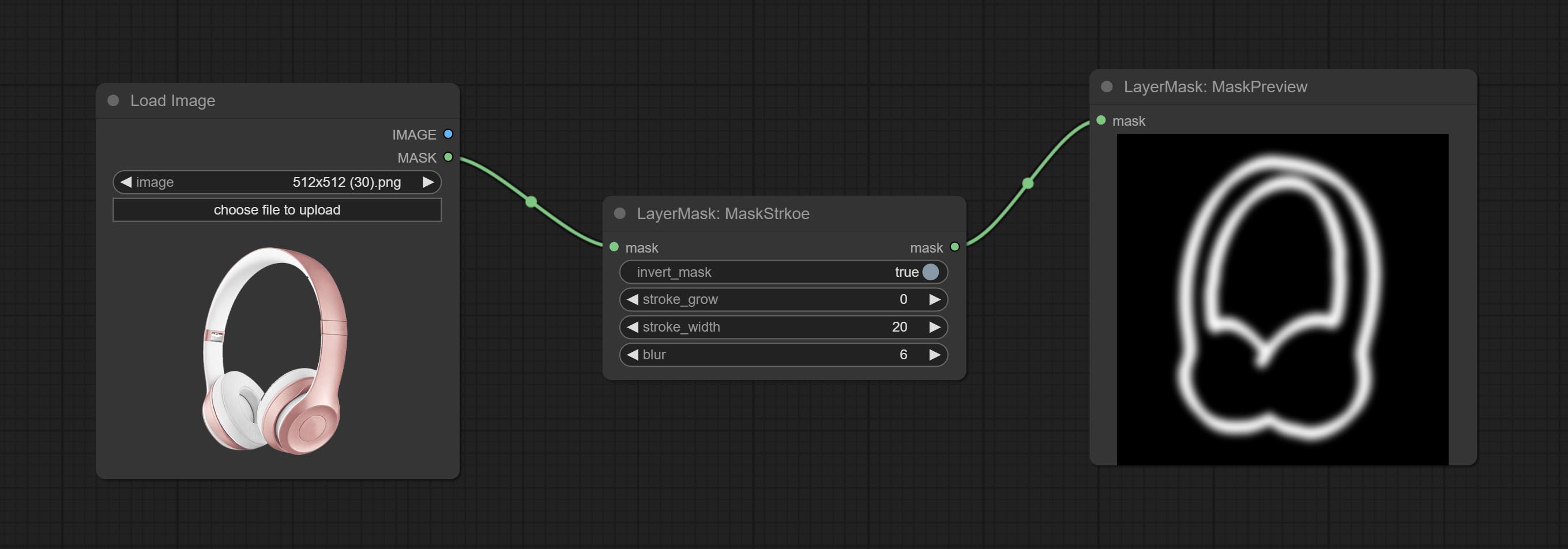

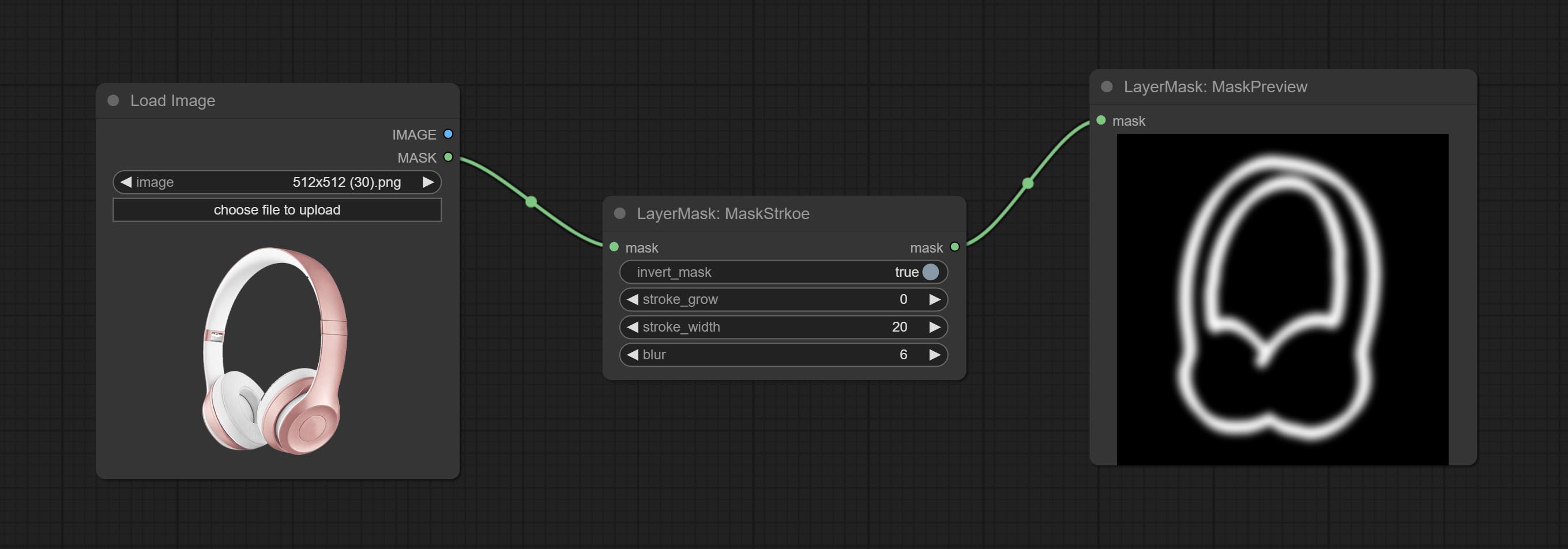

Commit the MaskStroke node, which can generate mask contour strokes.

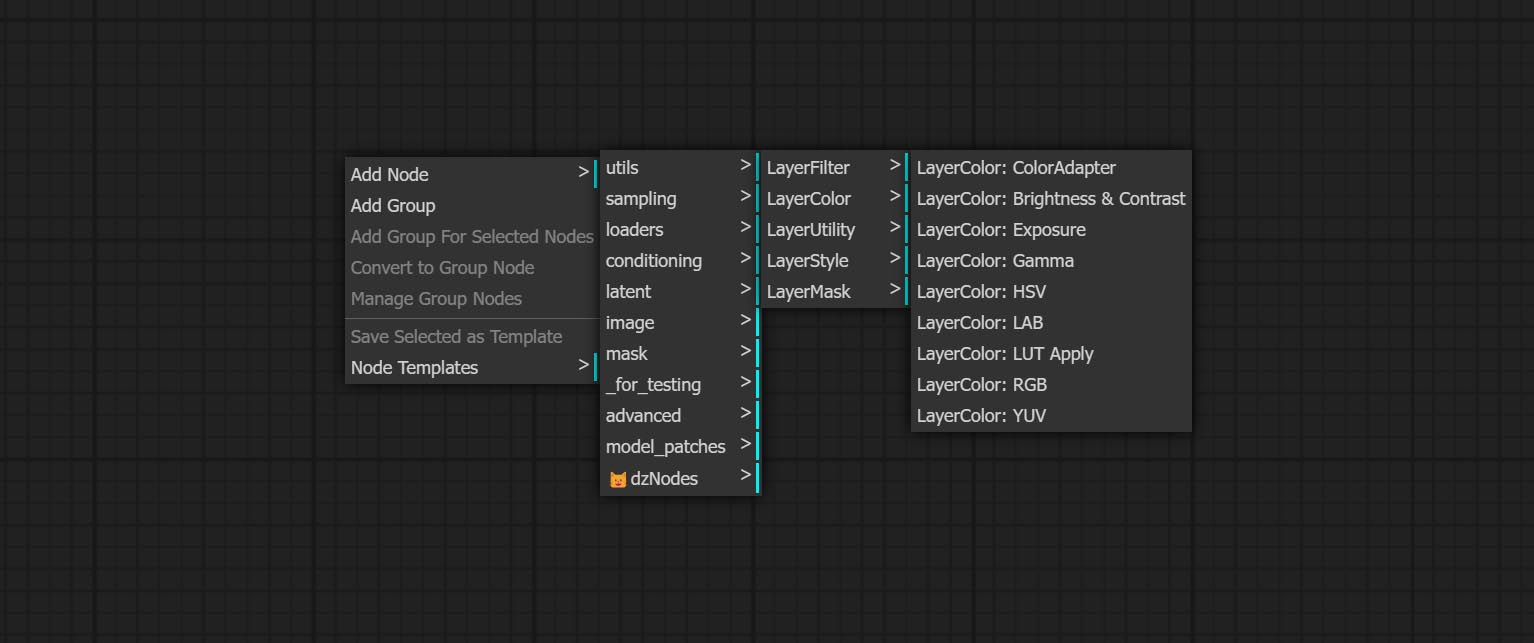

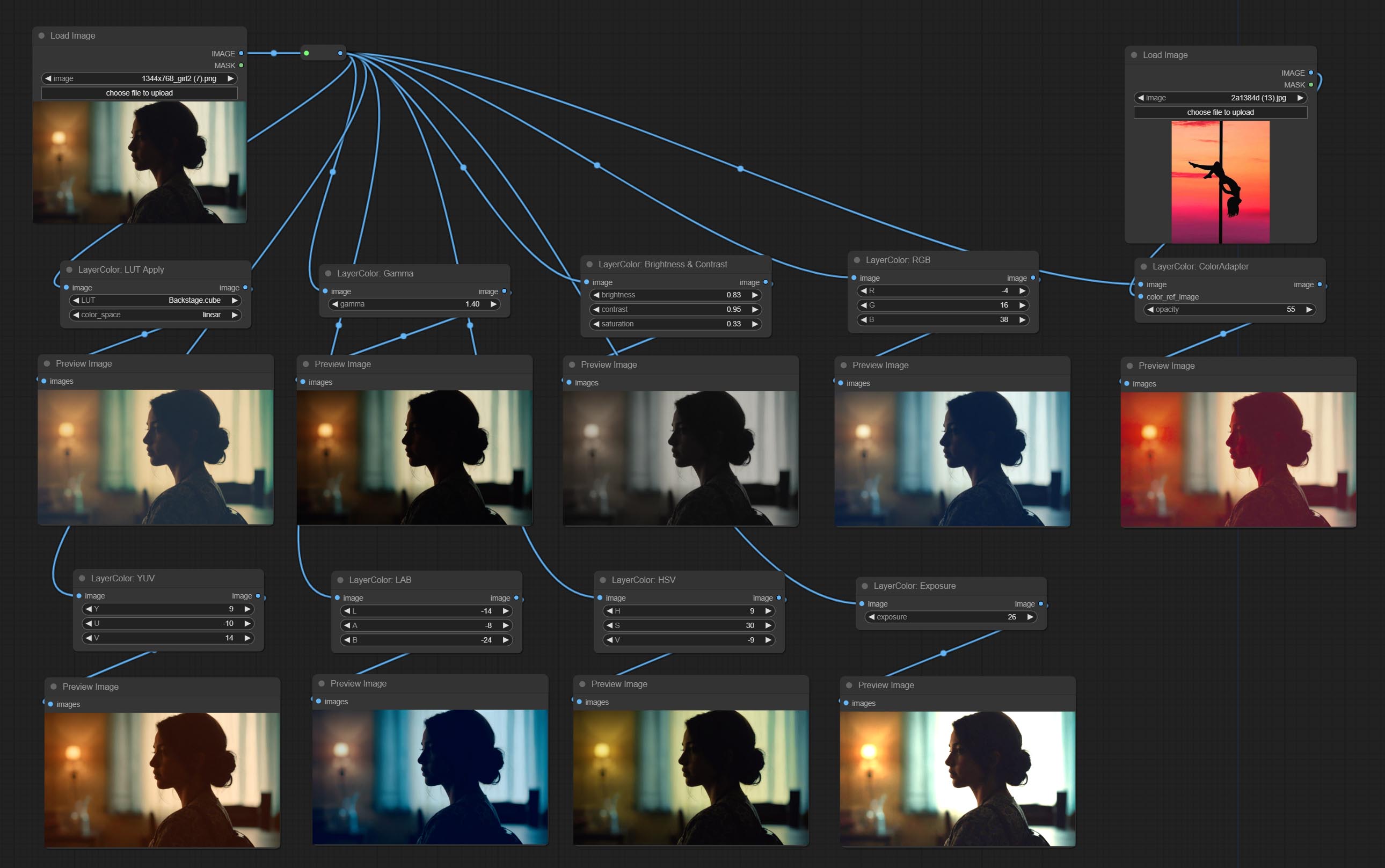

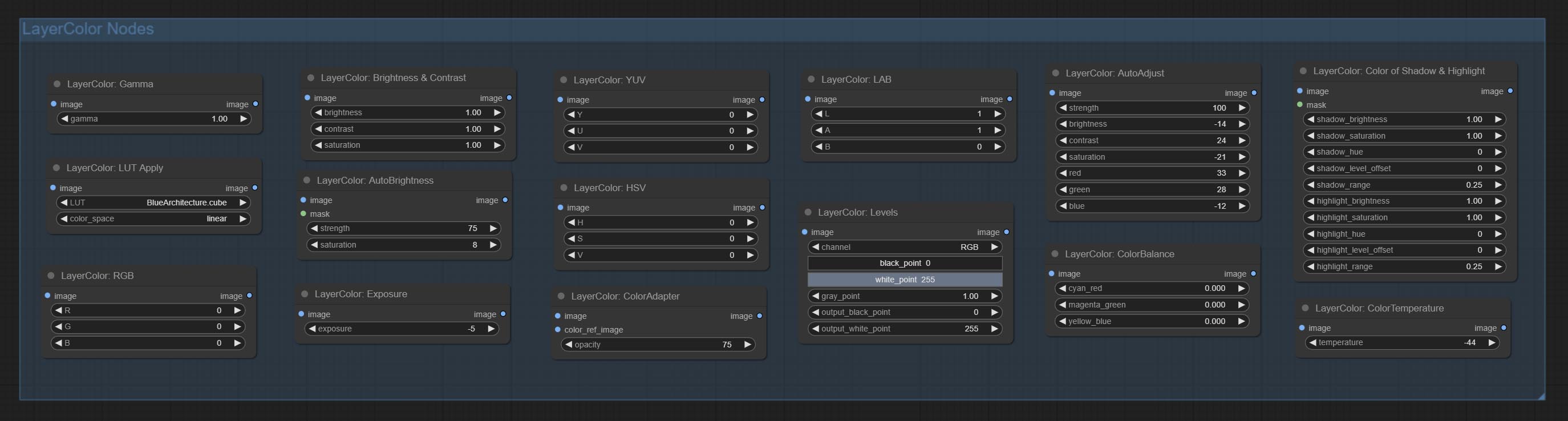

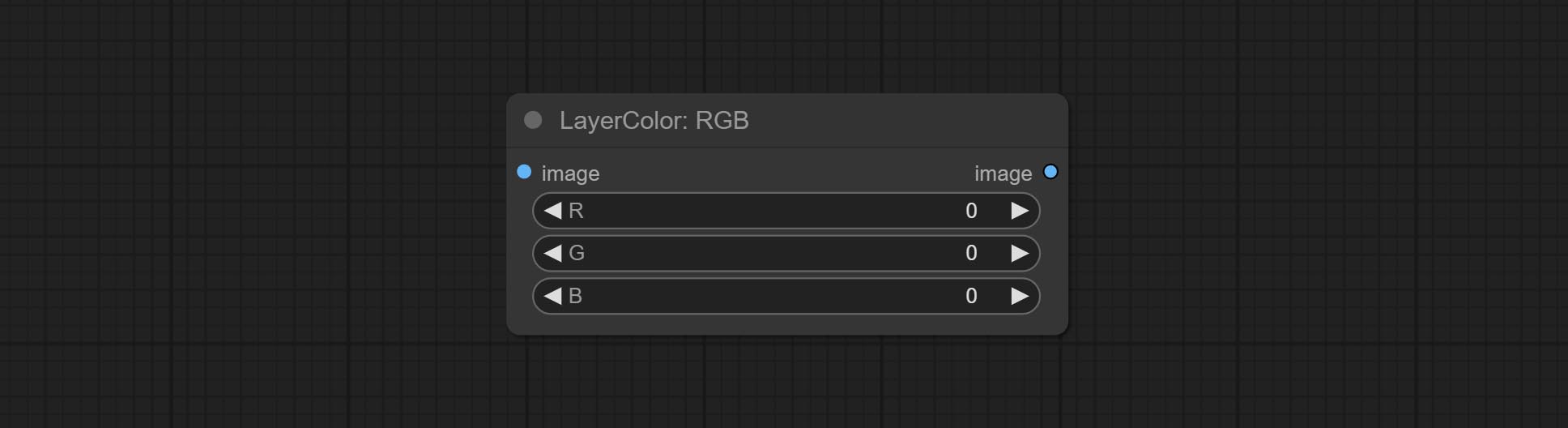

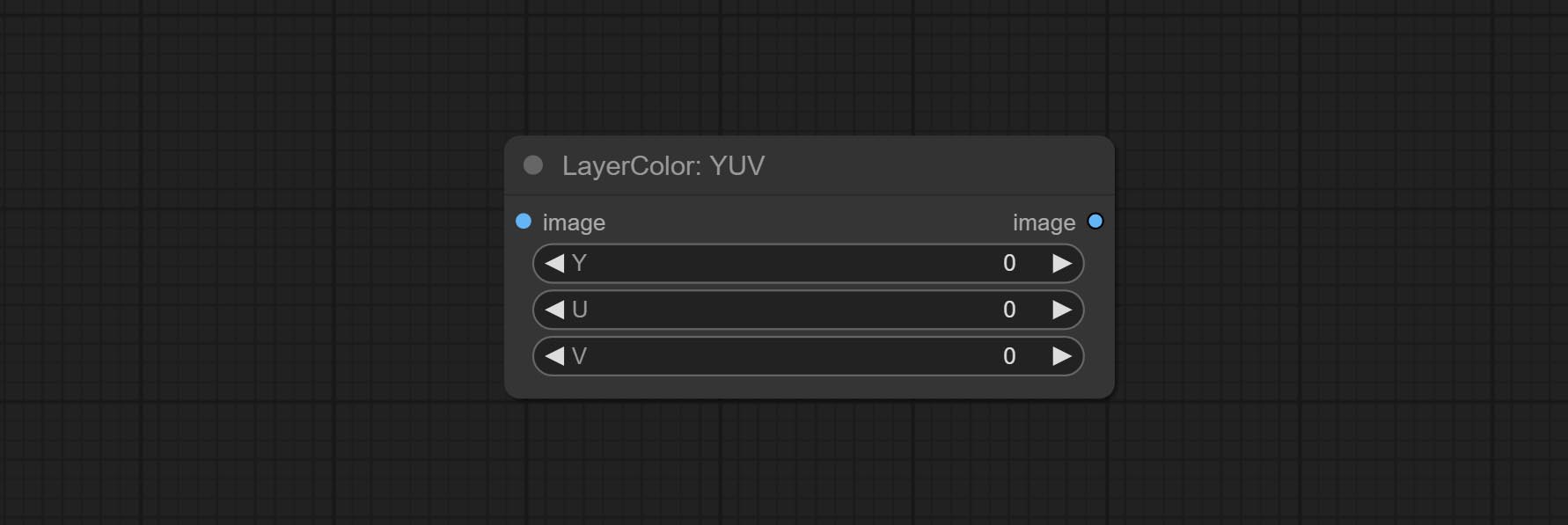

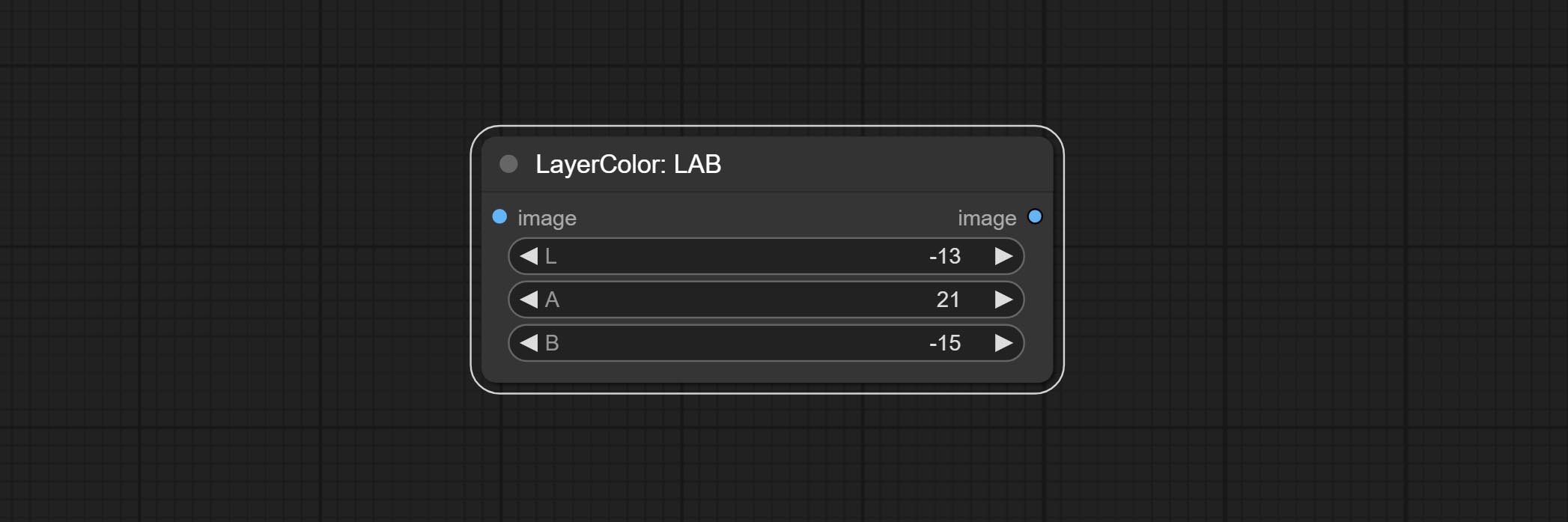

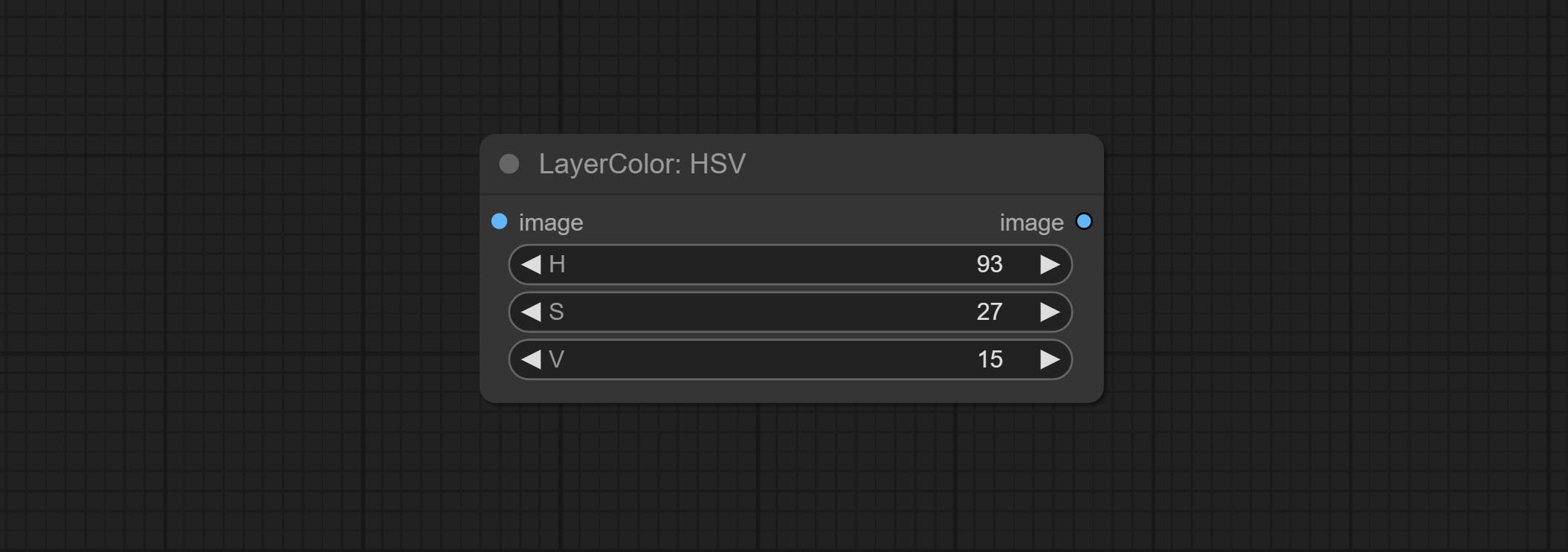

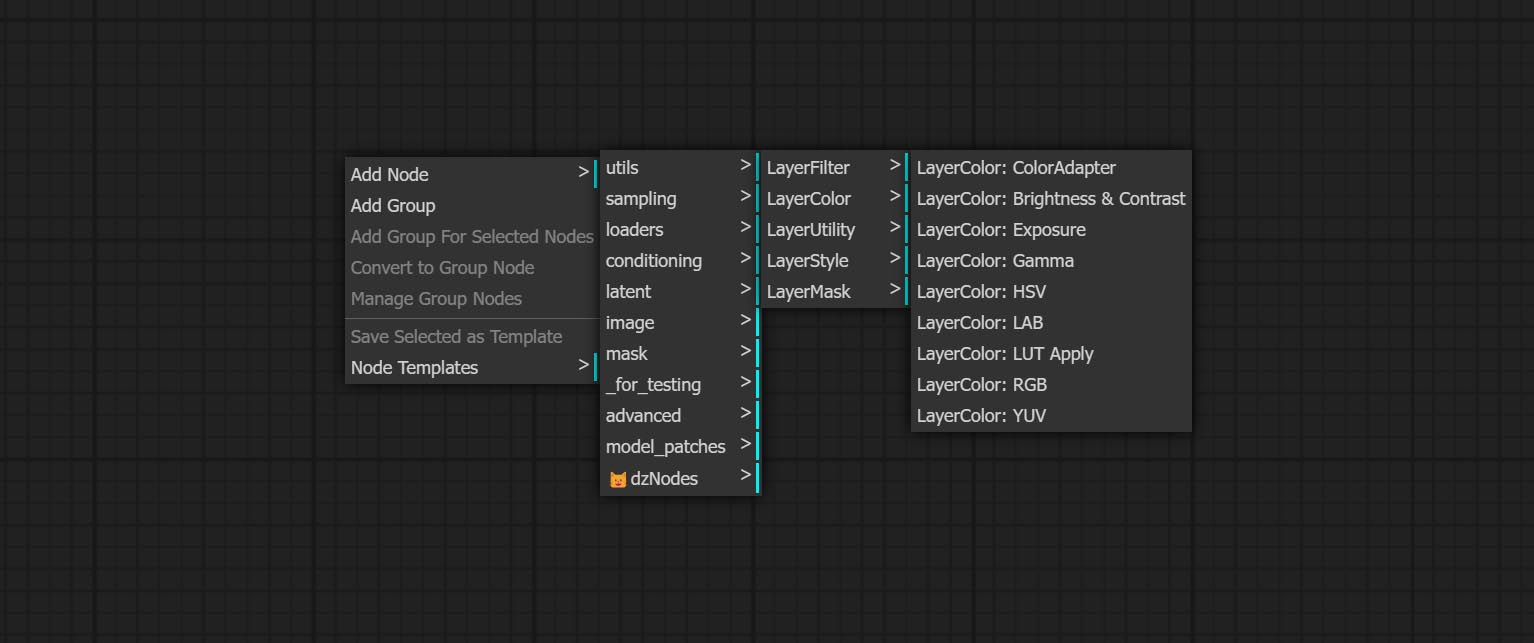

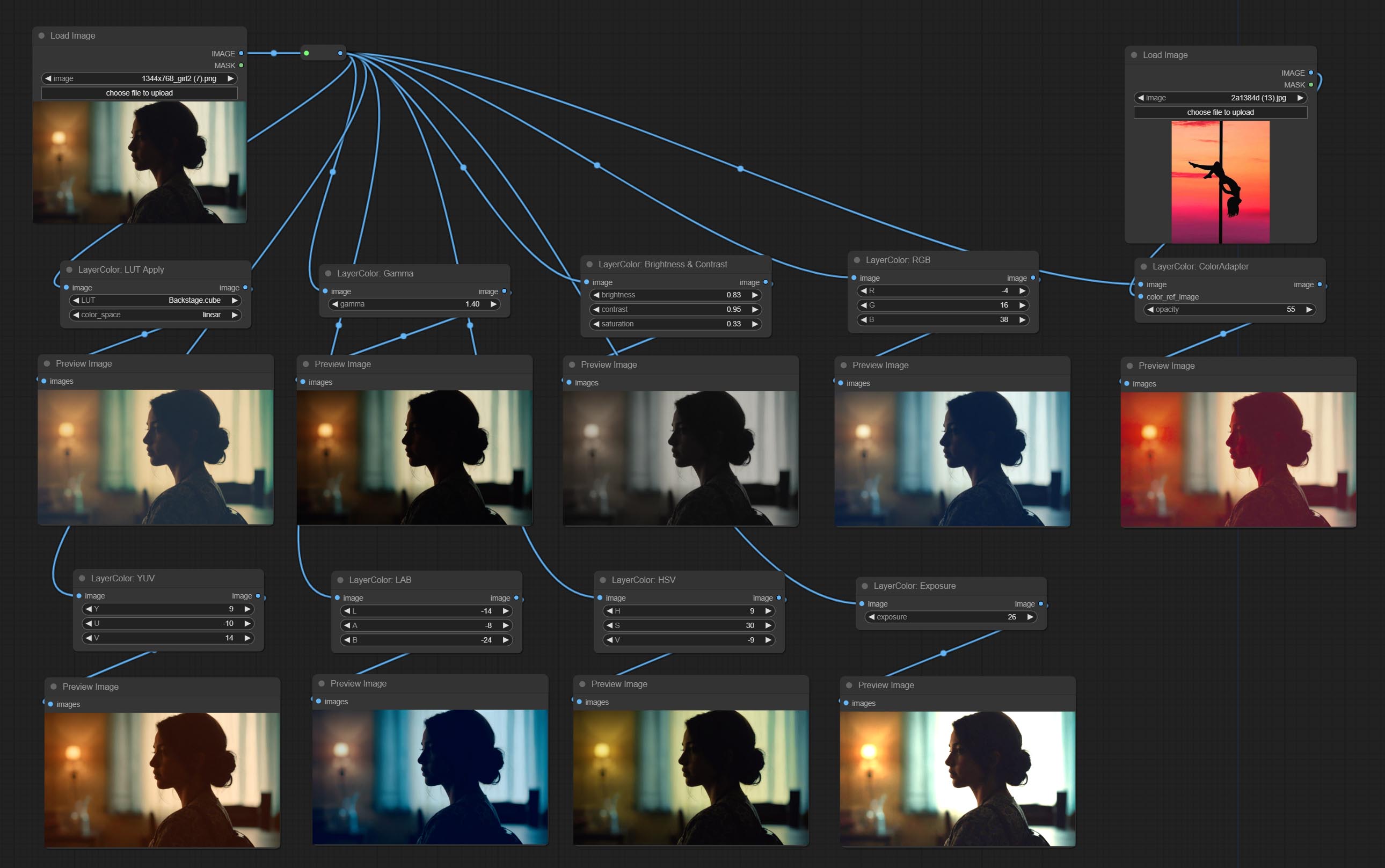

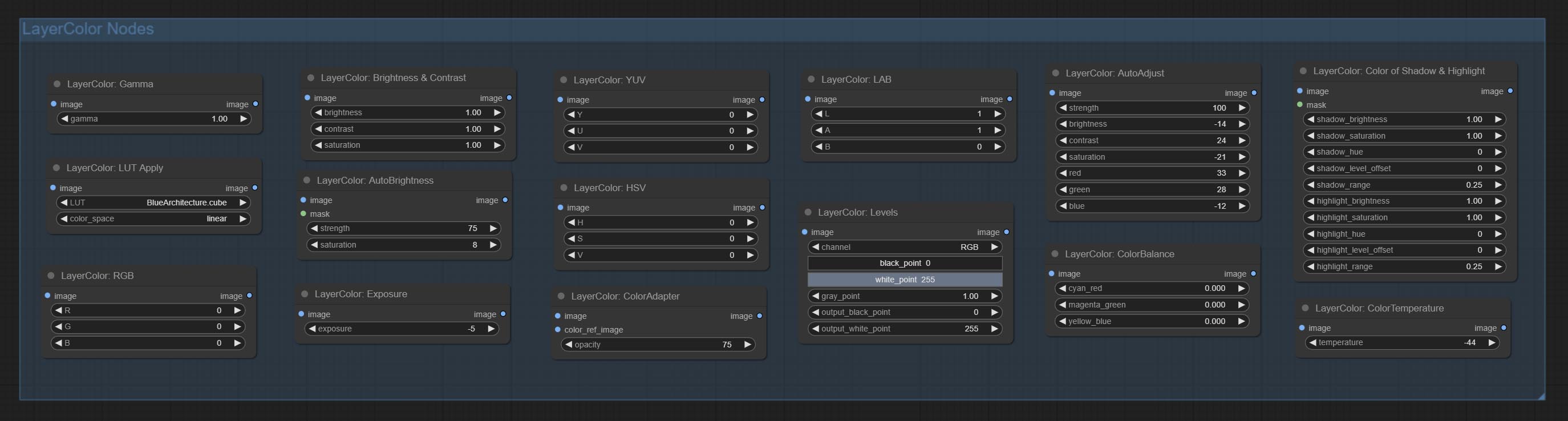

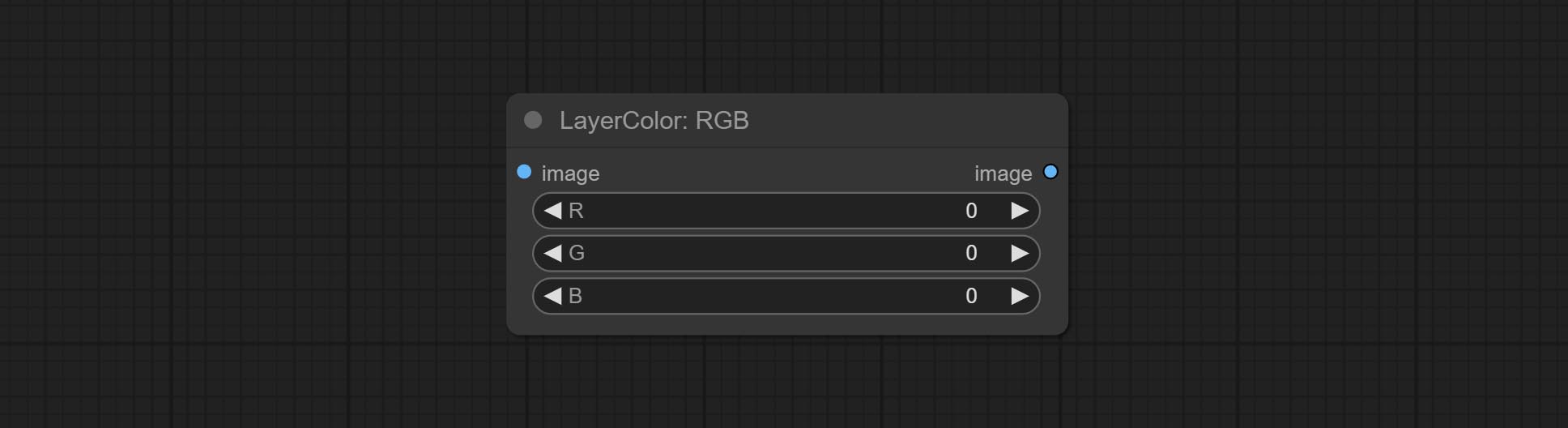

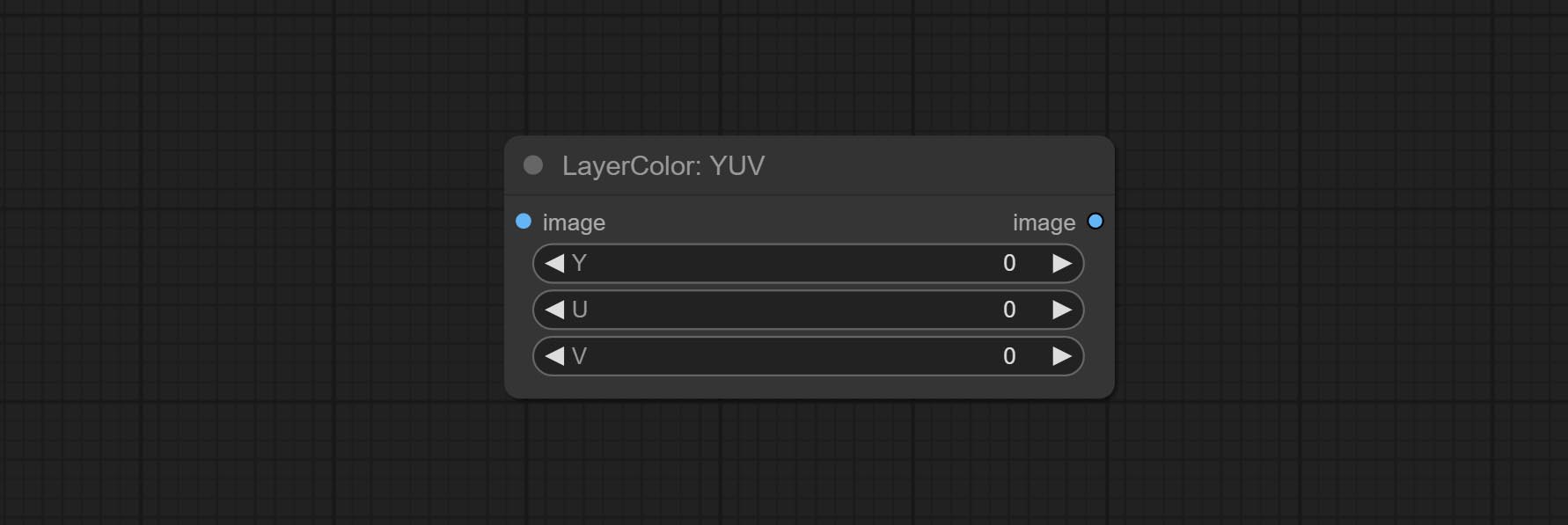

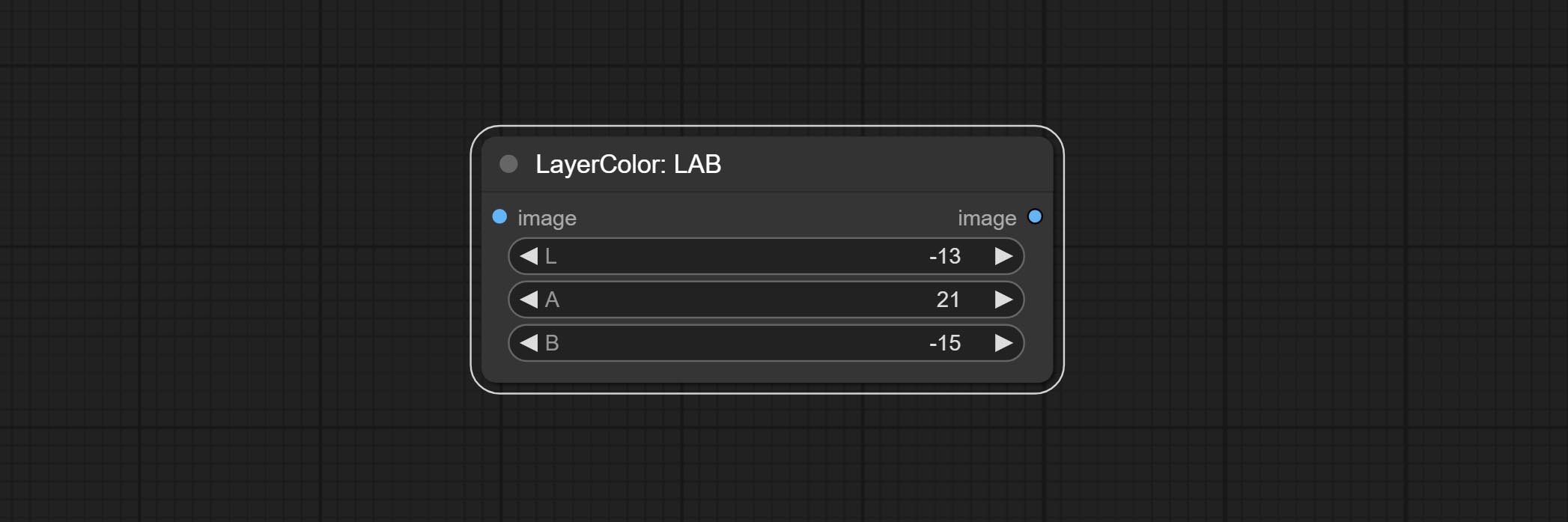

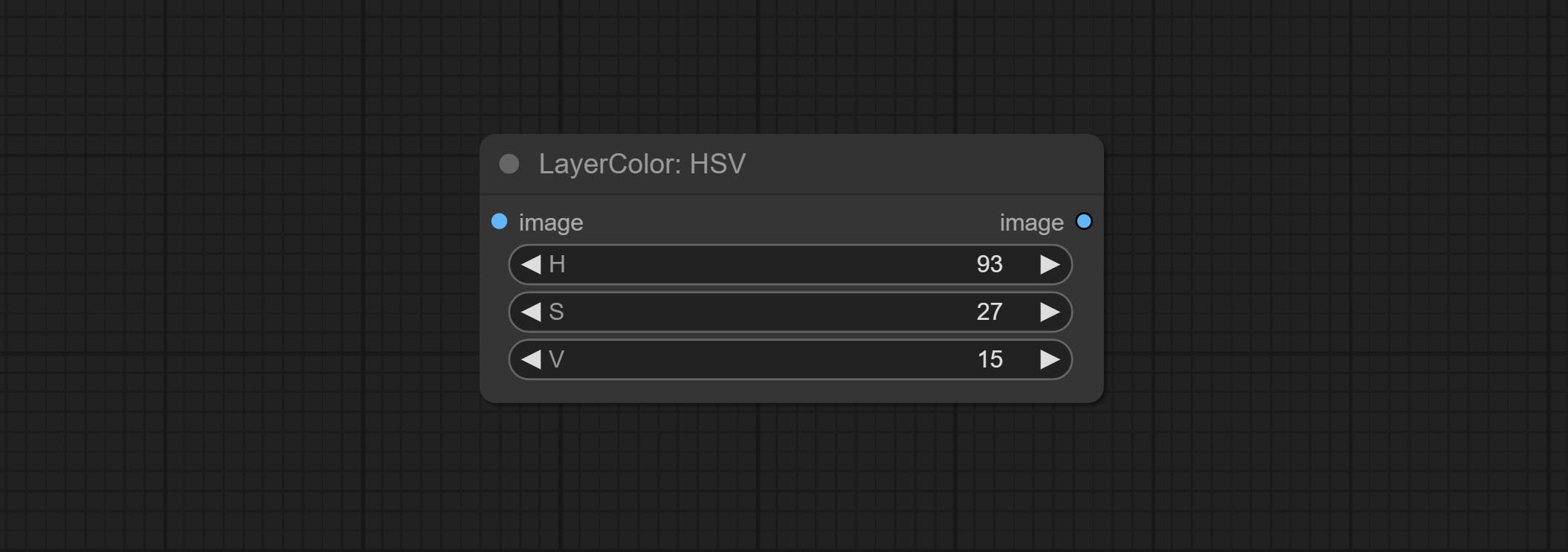

Add the LayerColor node group, used to adjust image color. It includes LUT Apply, Gamma, Brightness & Contrast, RGB, YUV, LAB, and HSV.

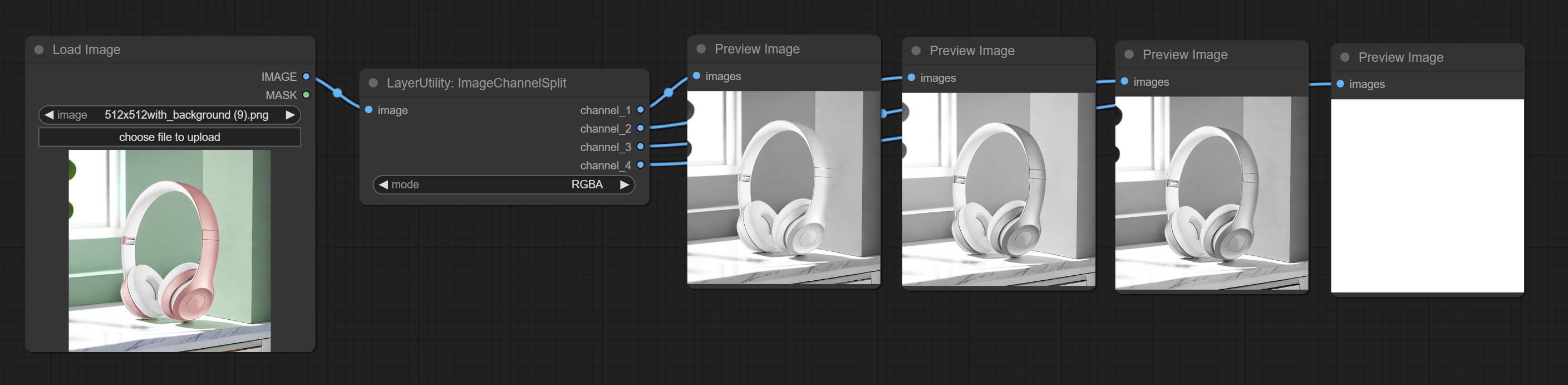

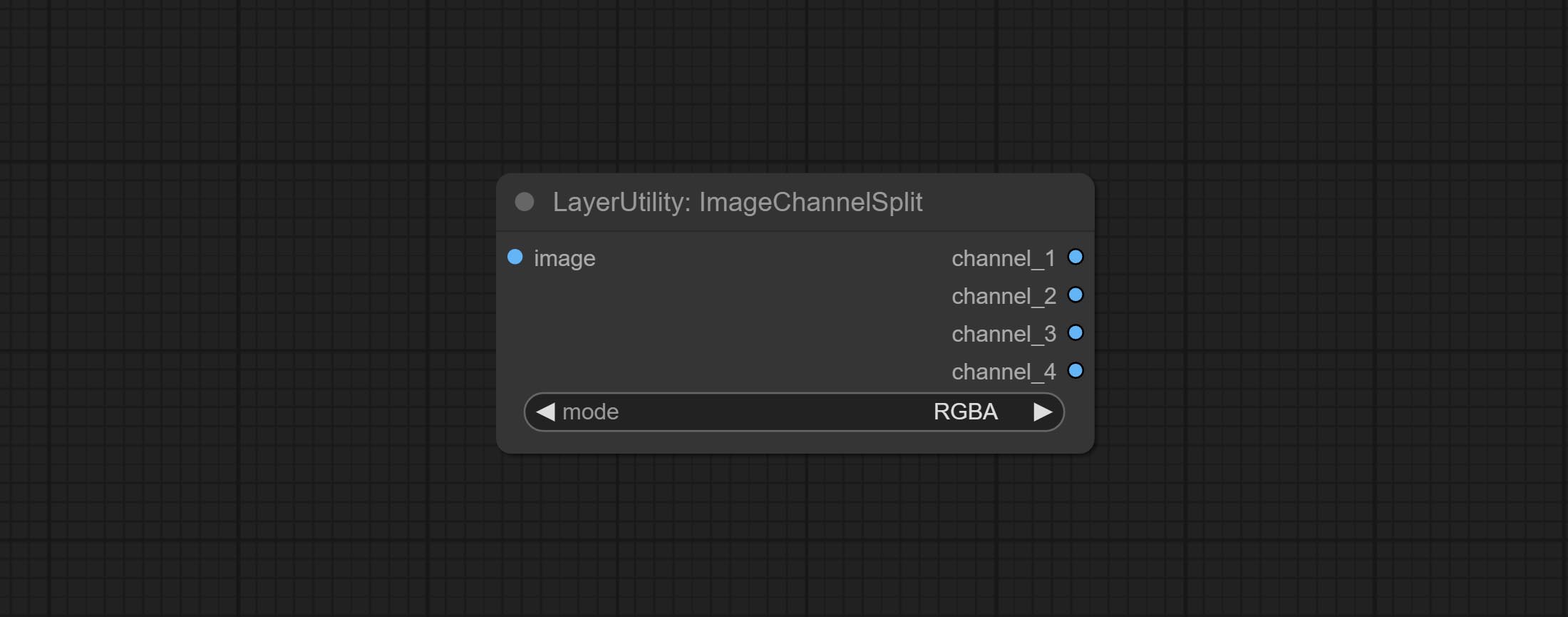

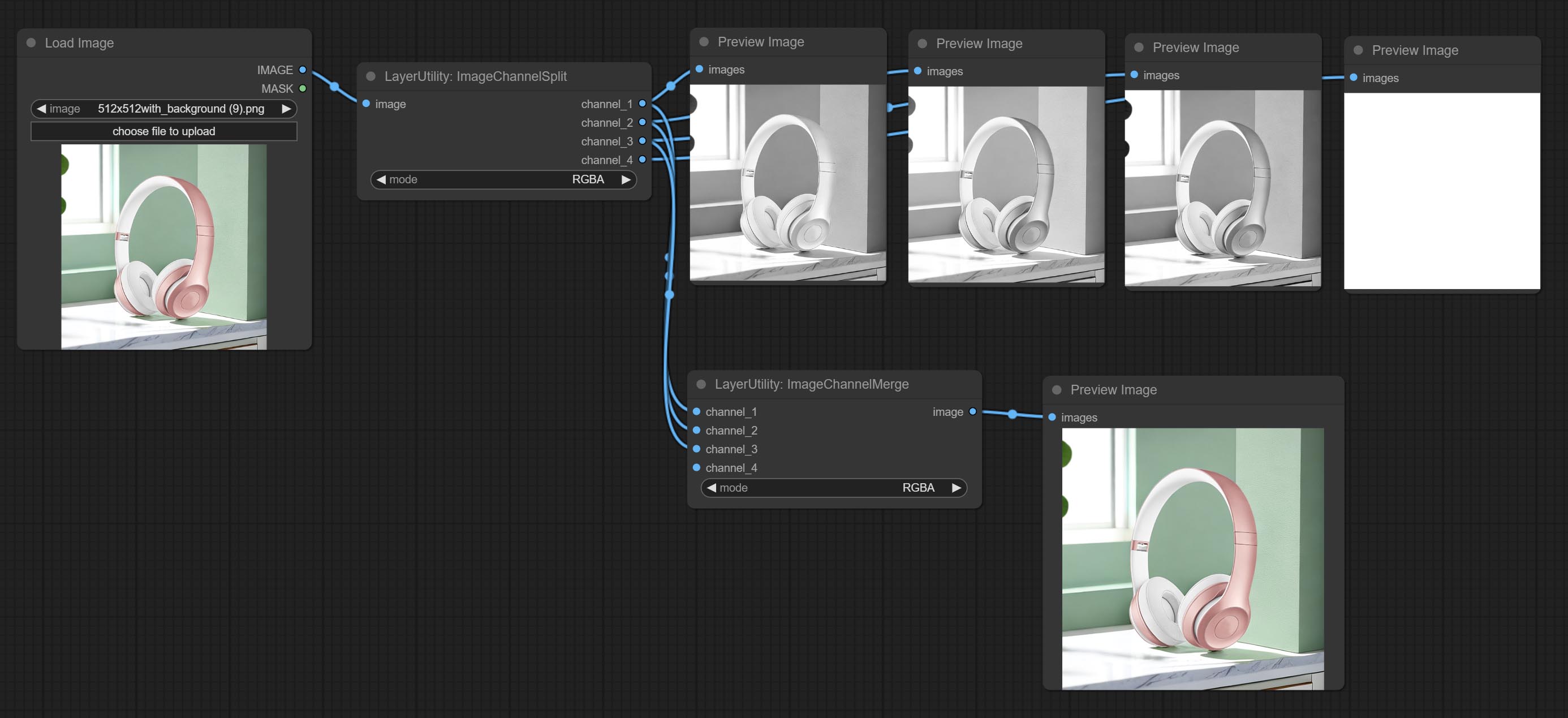

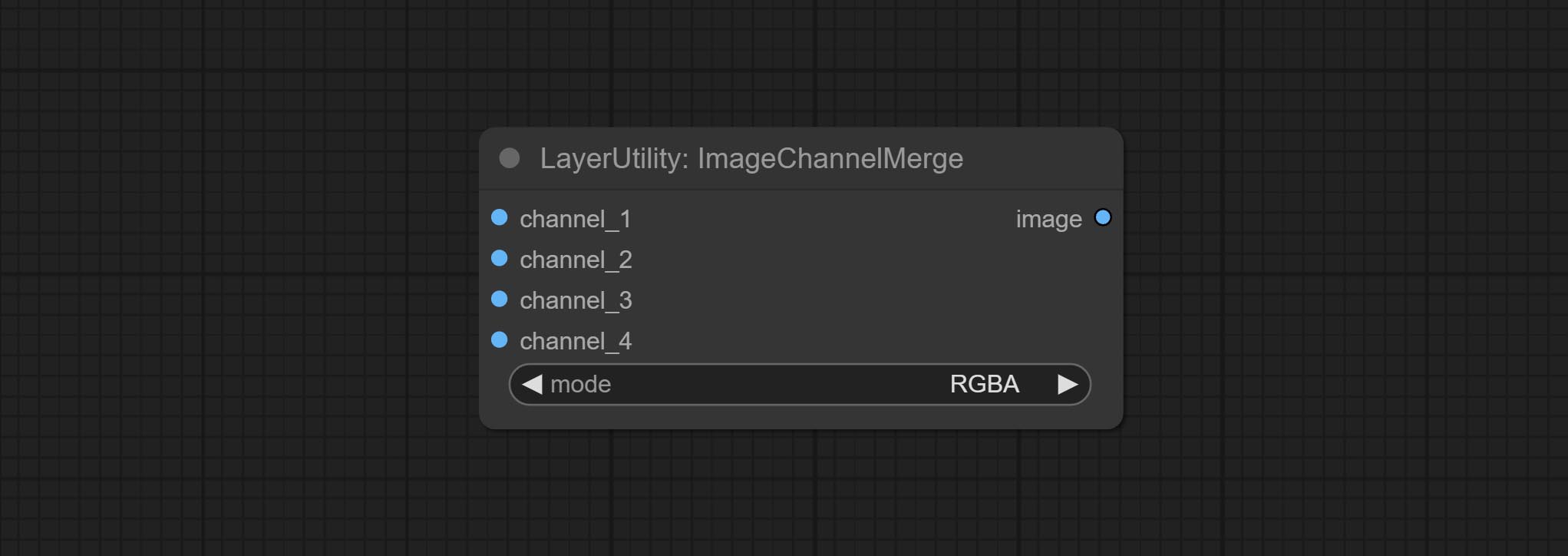

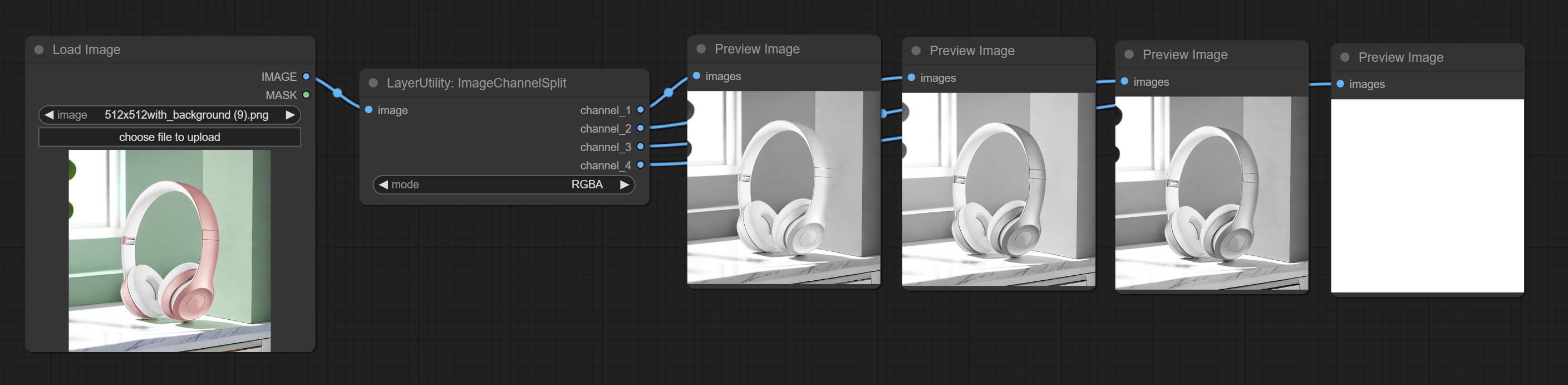

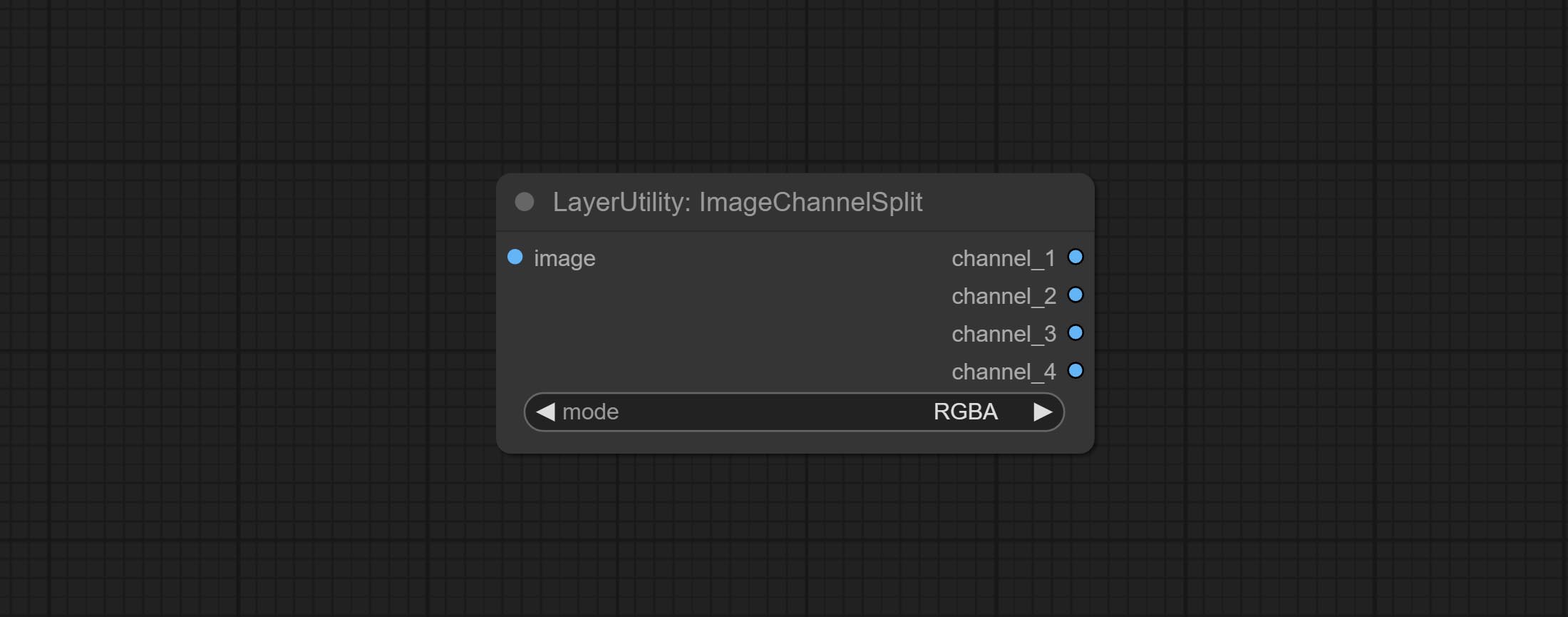

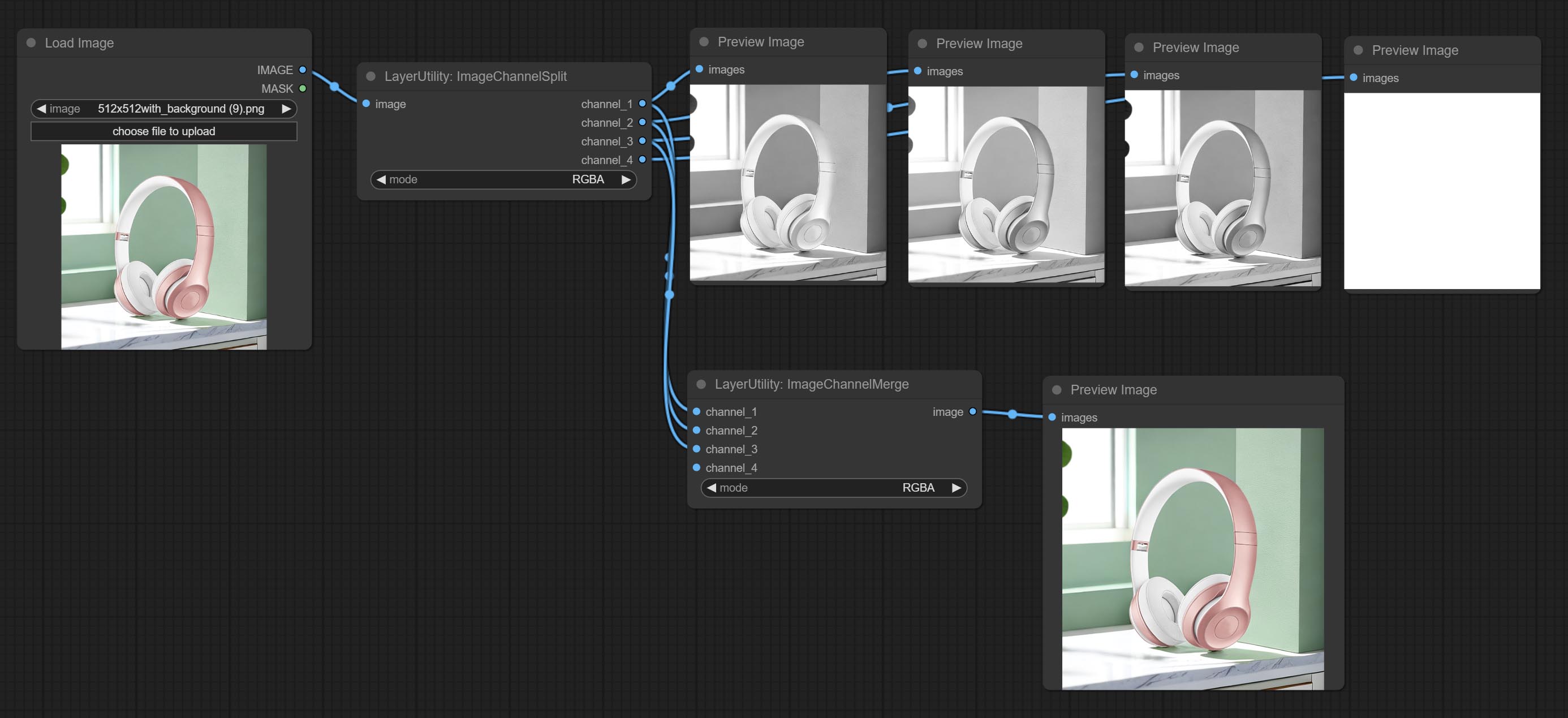

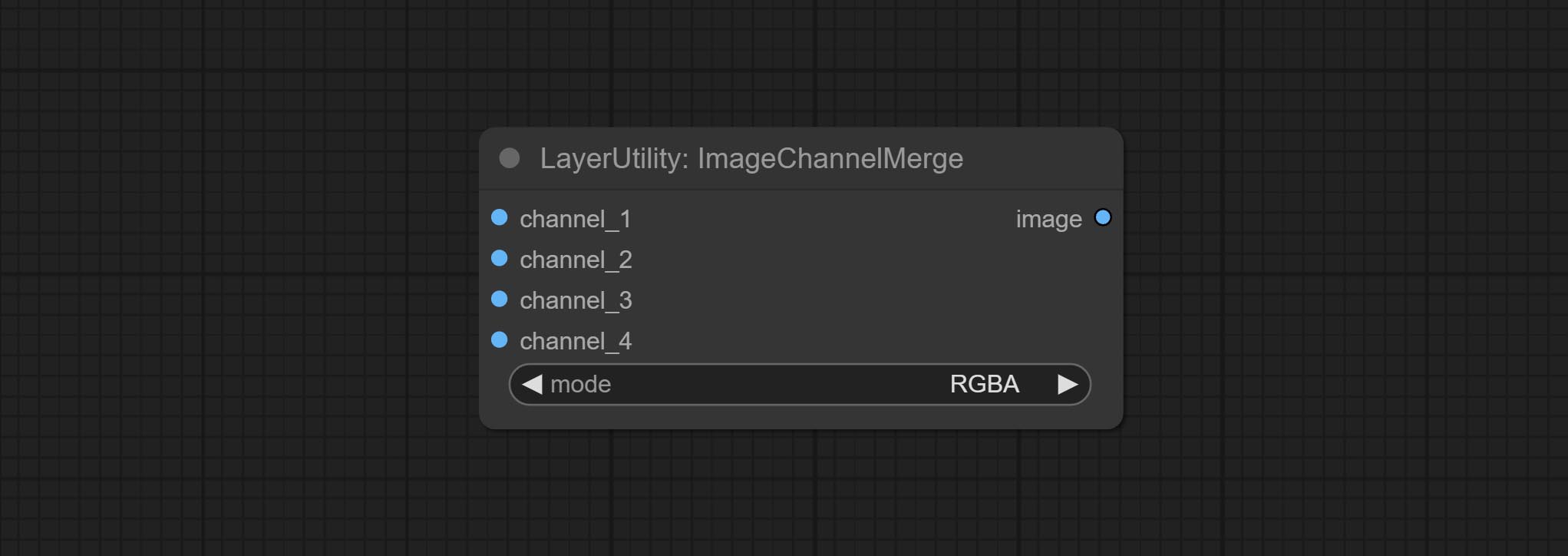

Commit the ImageChannelSplit and ImageChannelMerge nodes.

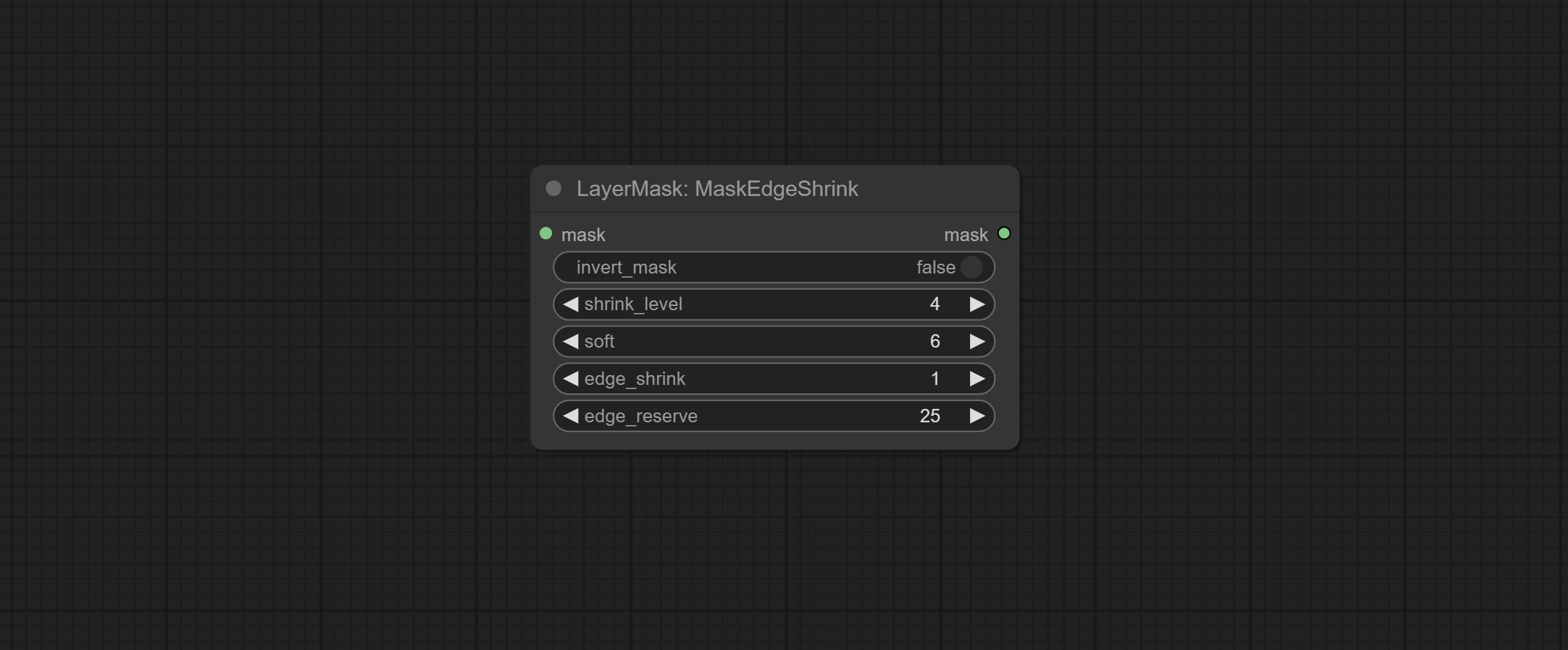

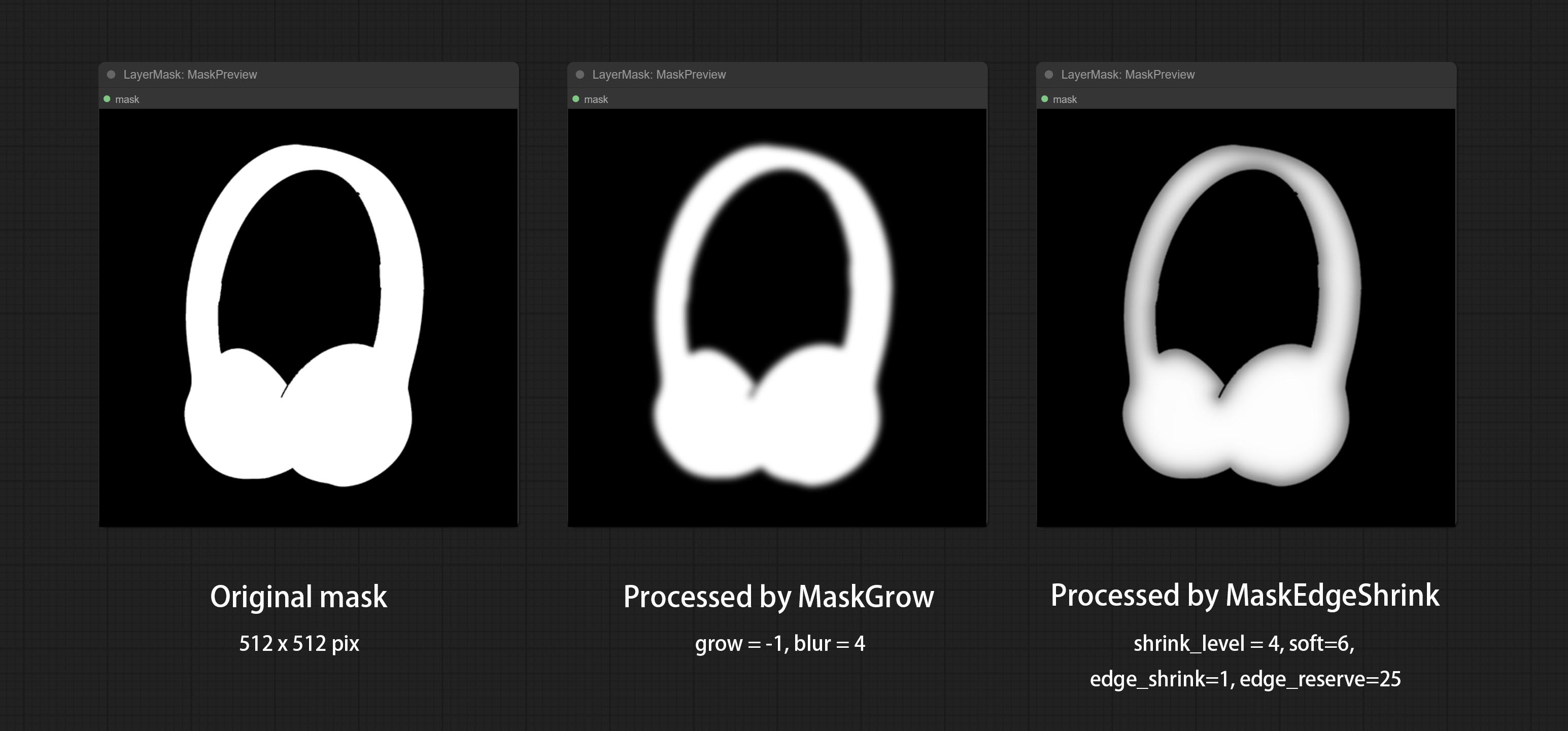

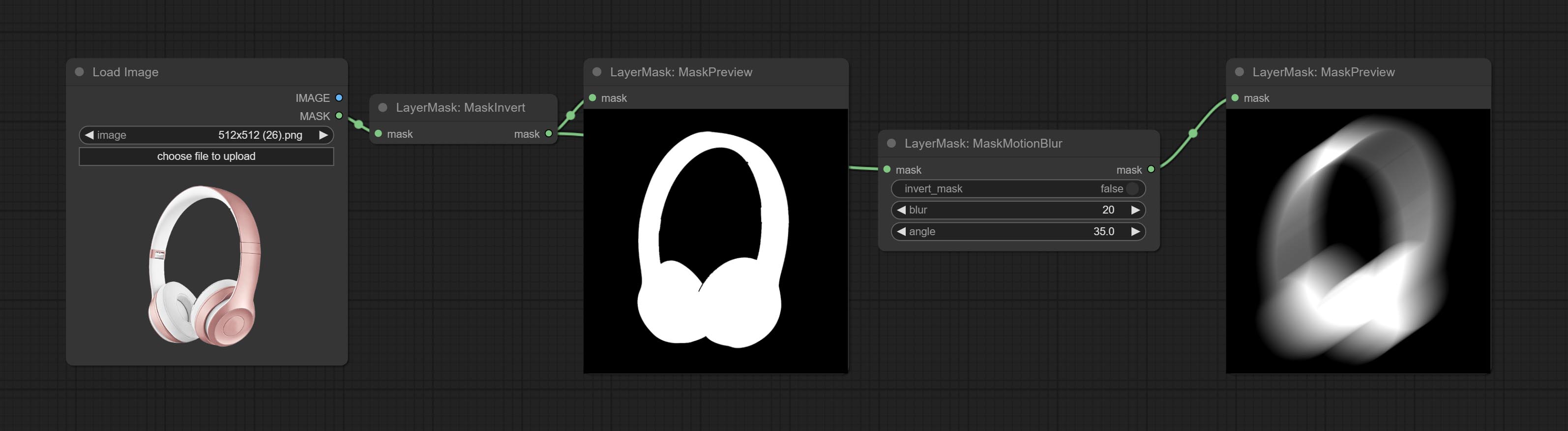

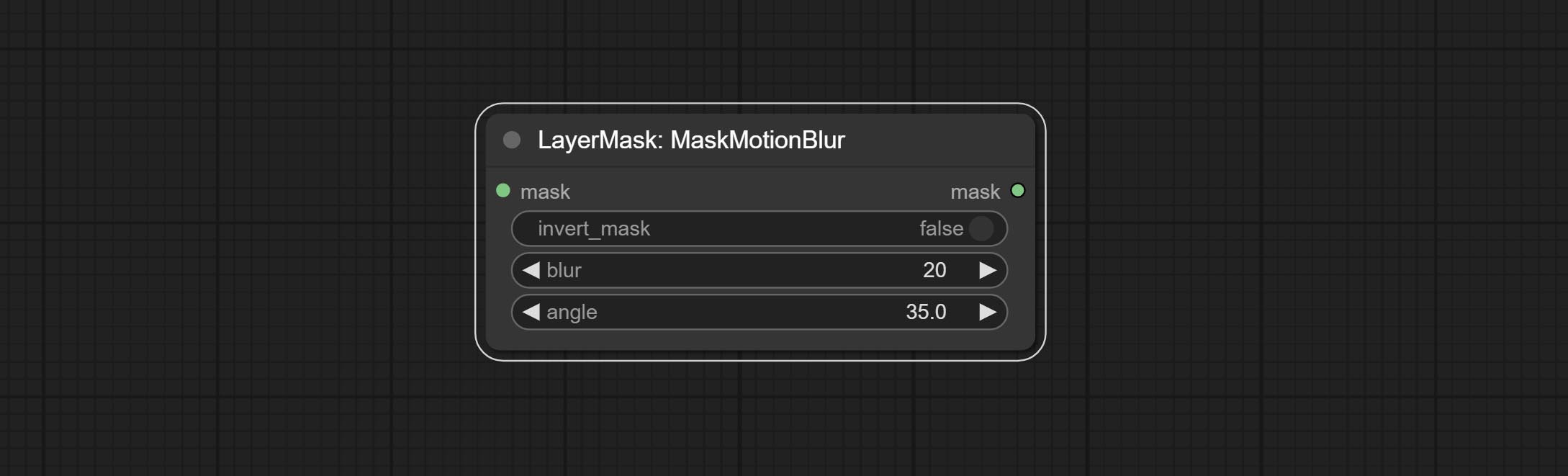

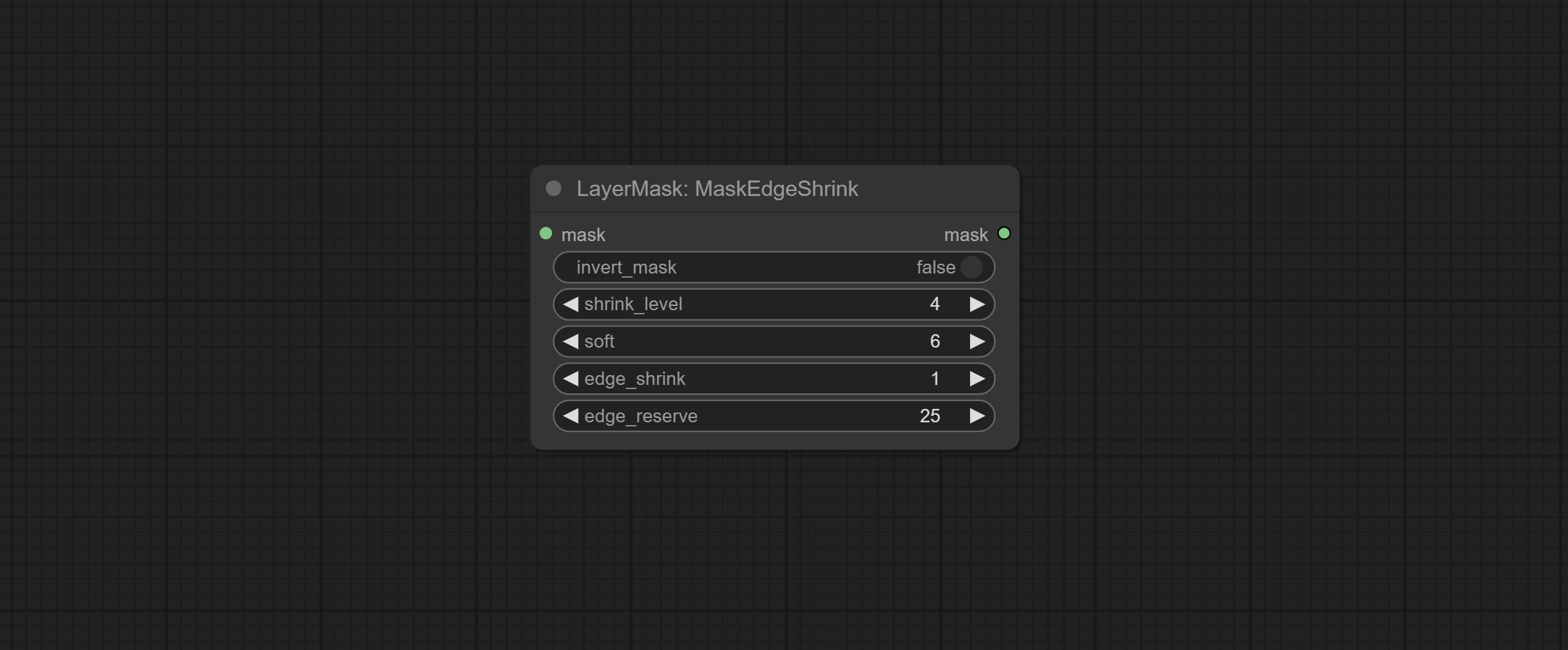

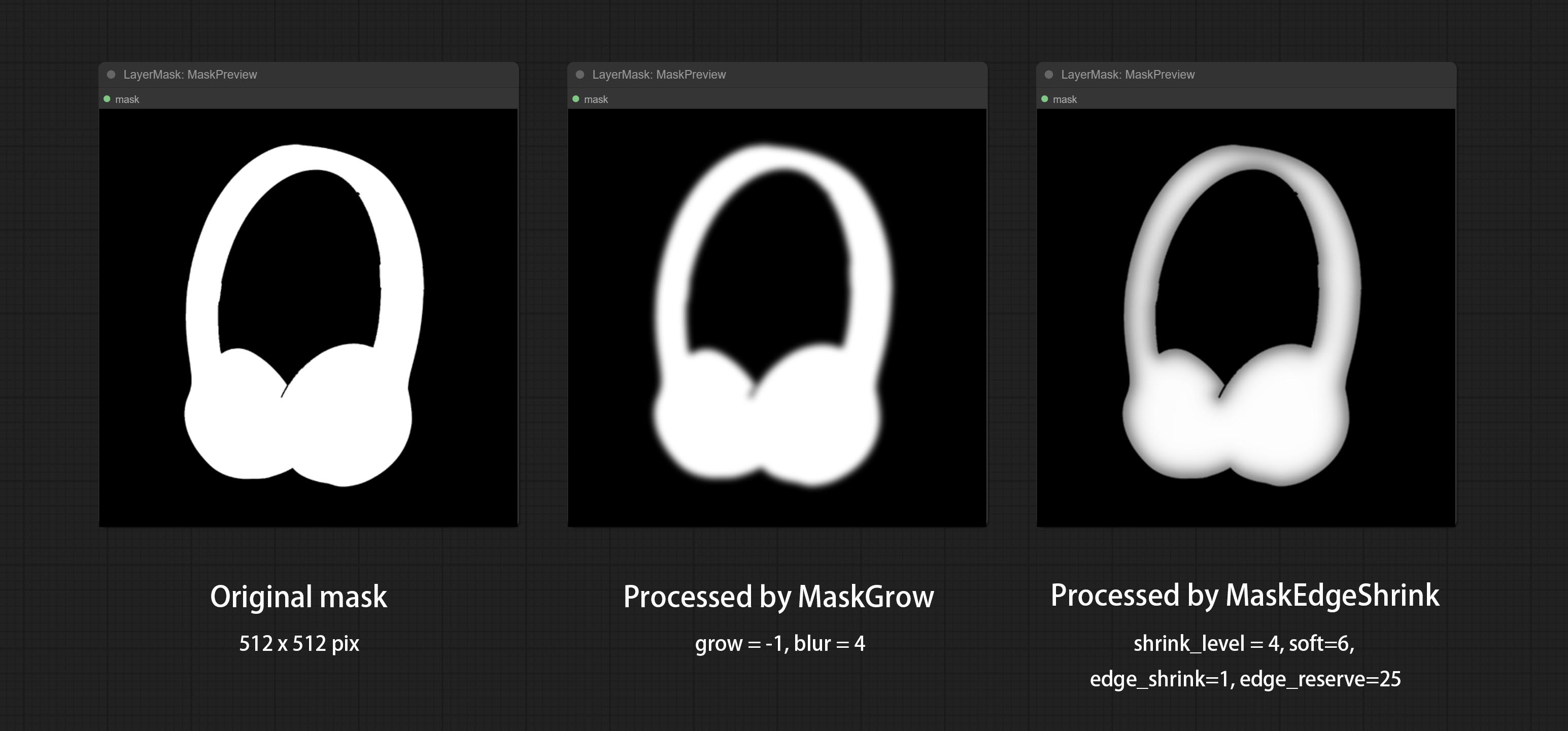

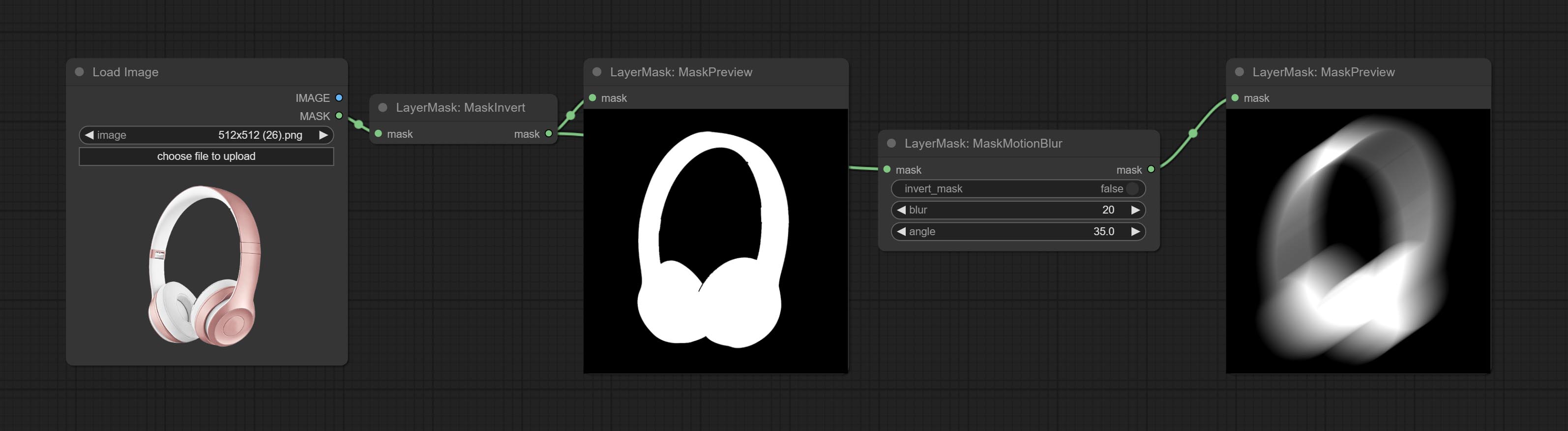

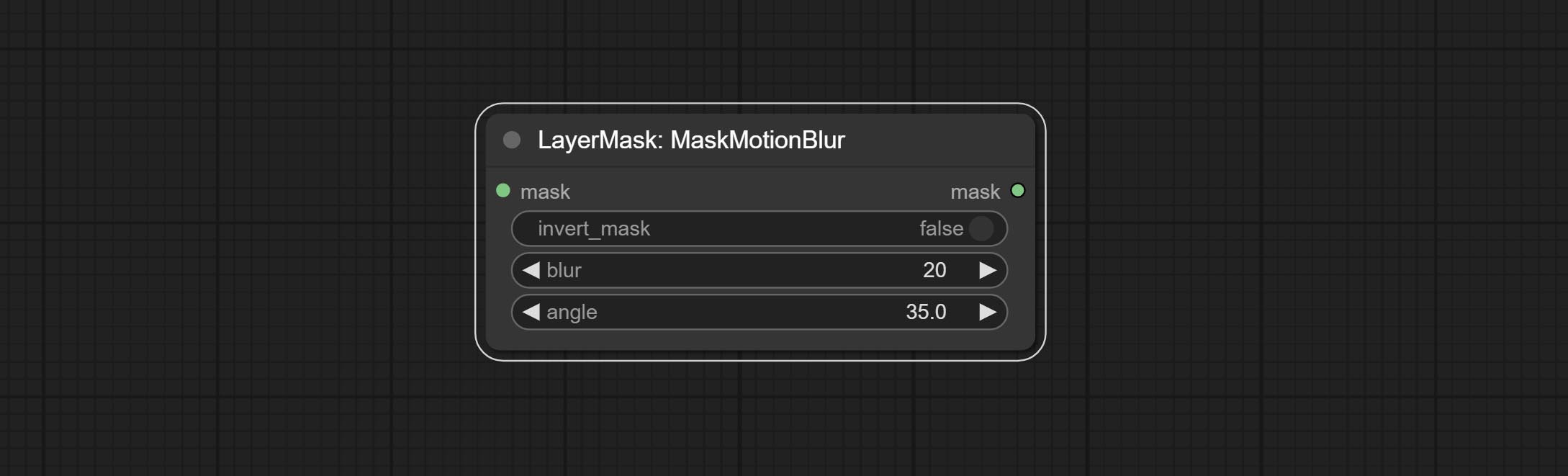

Commit the MaskMotionBlur node.

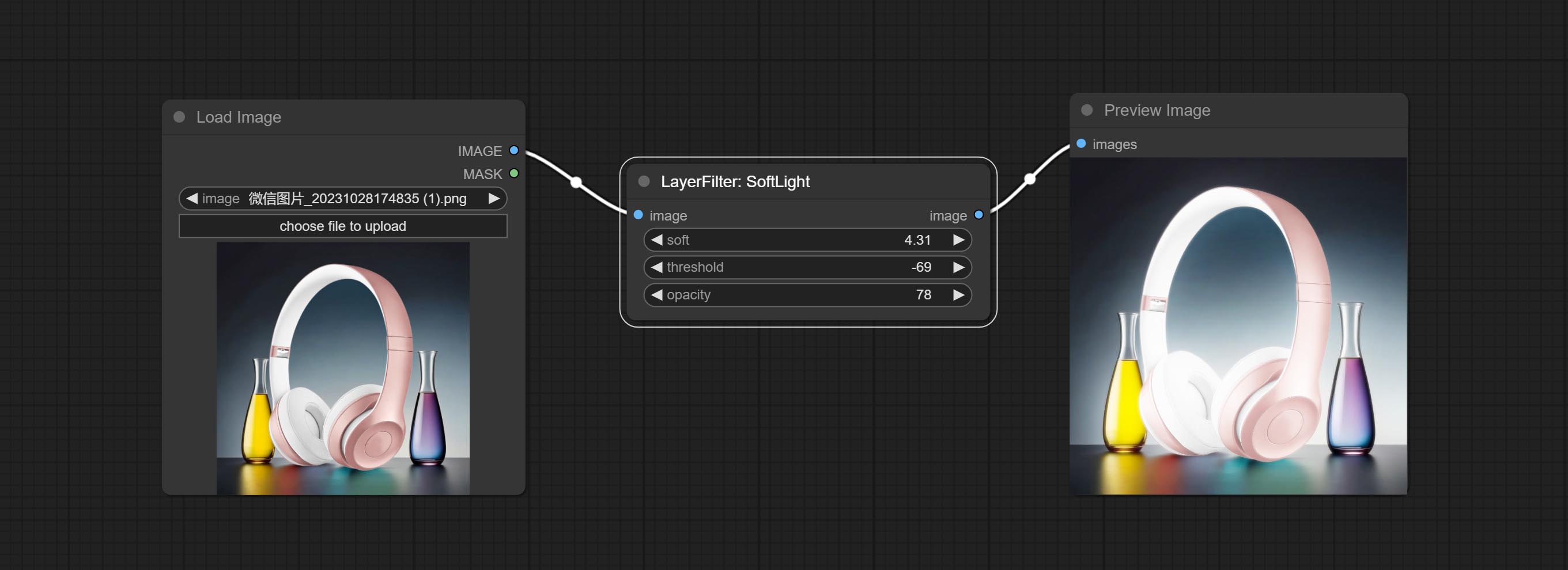

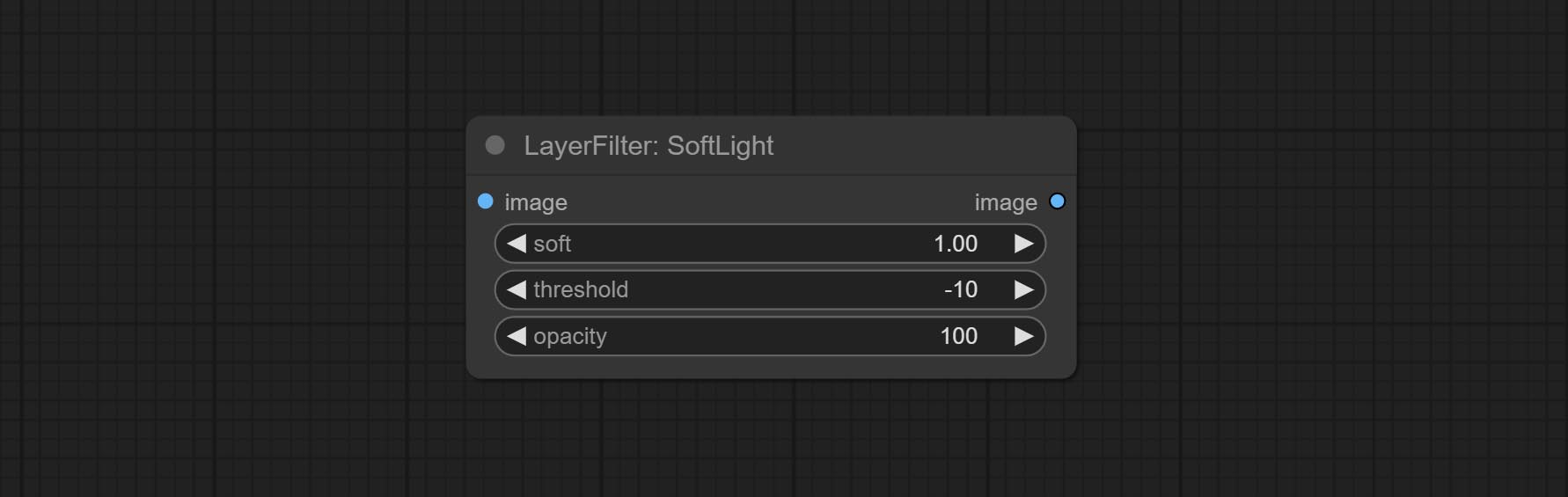

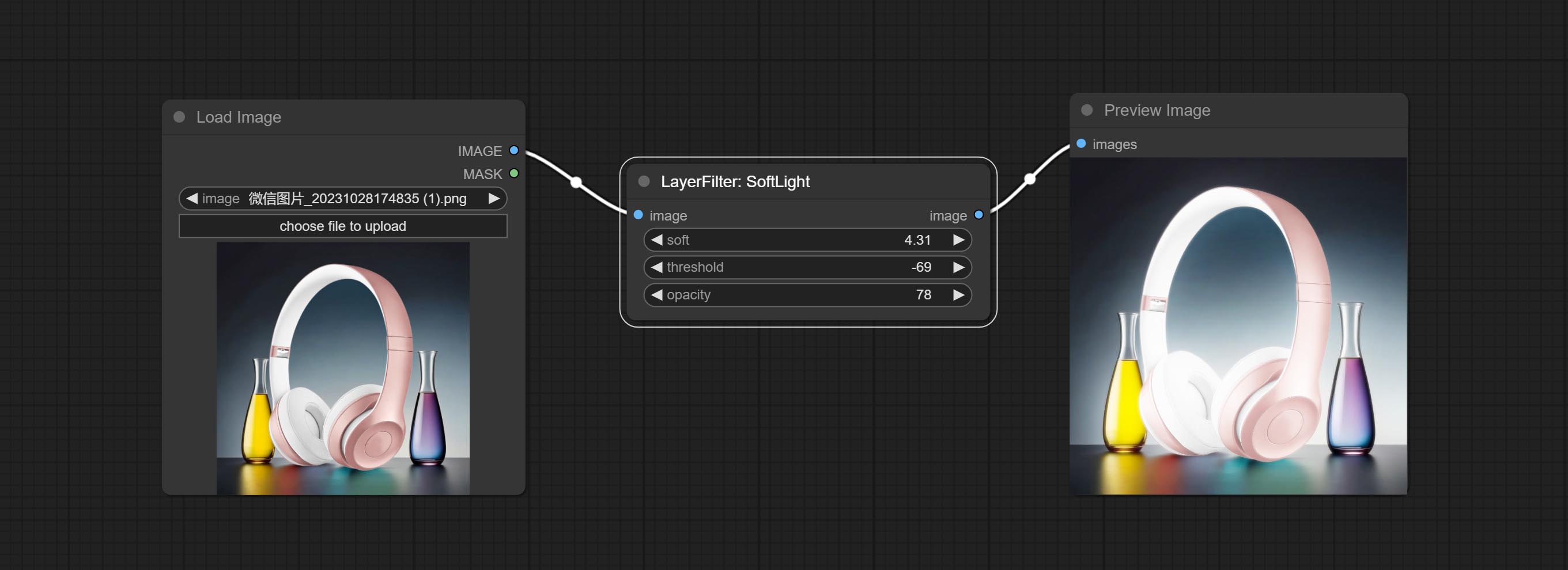

Commit the SoftLight node.

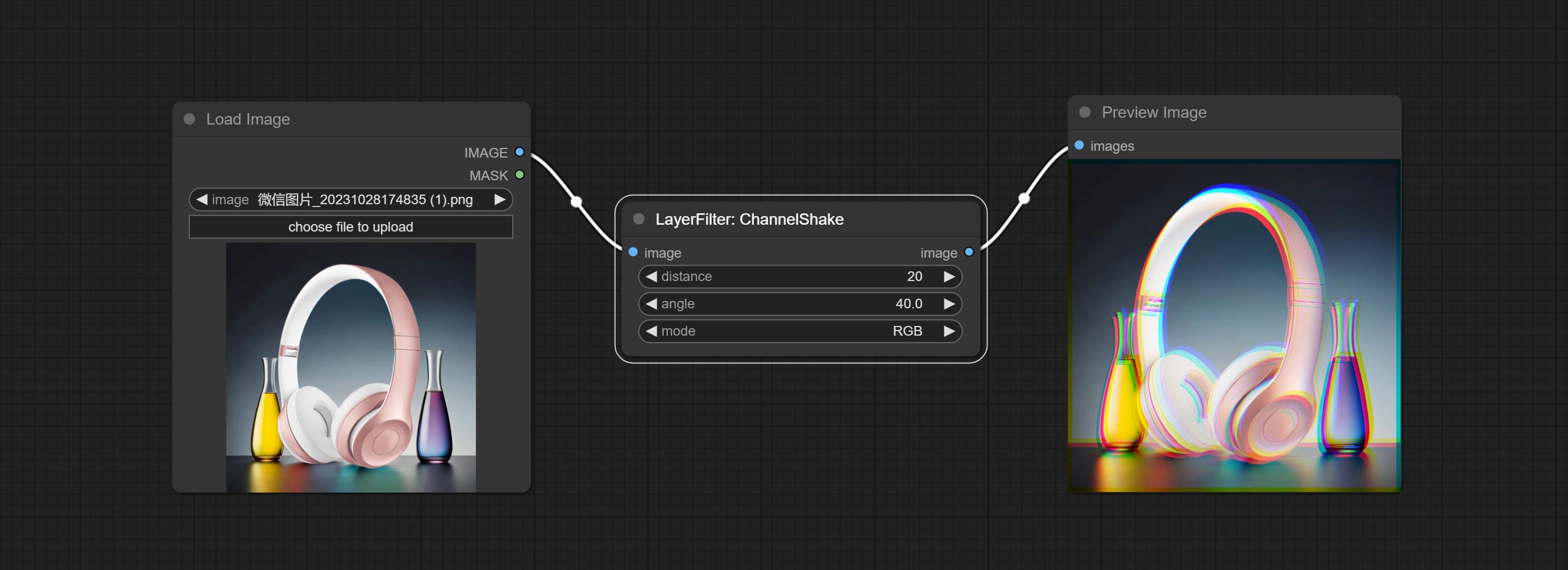

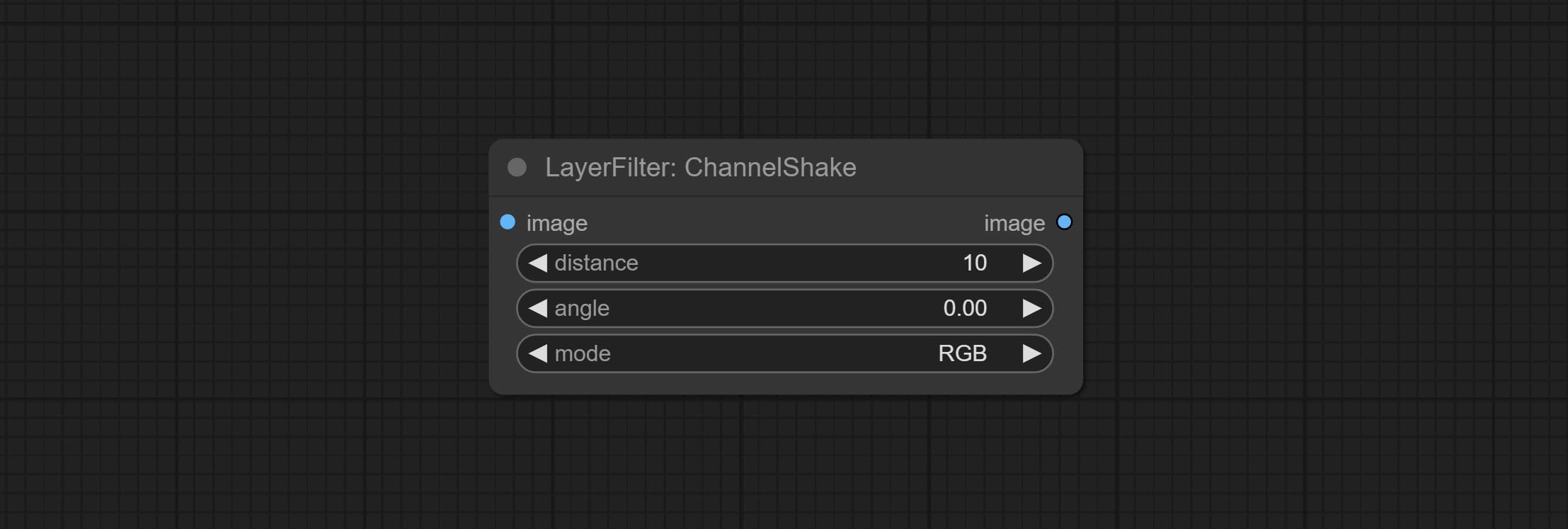

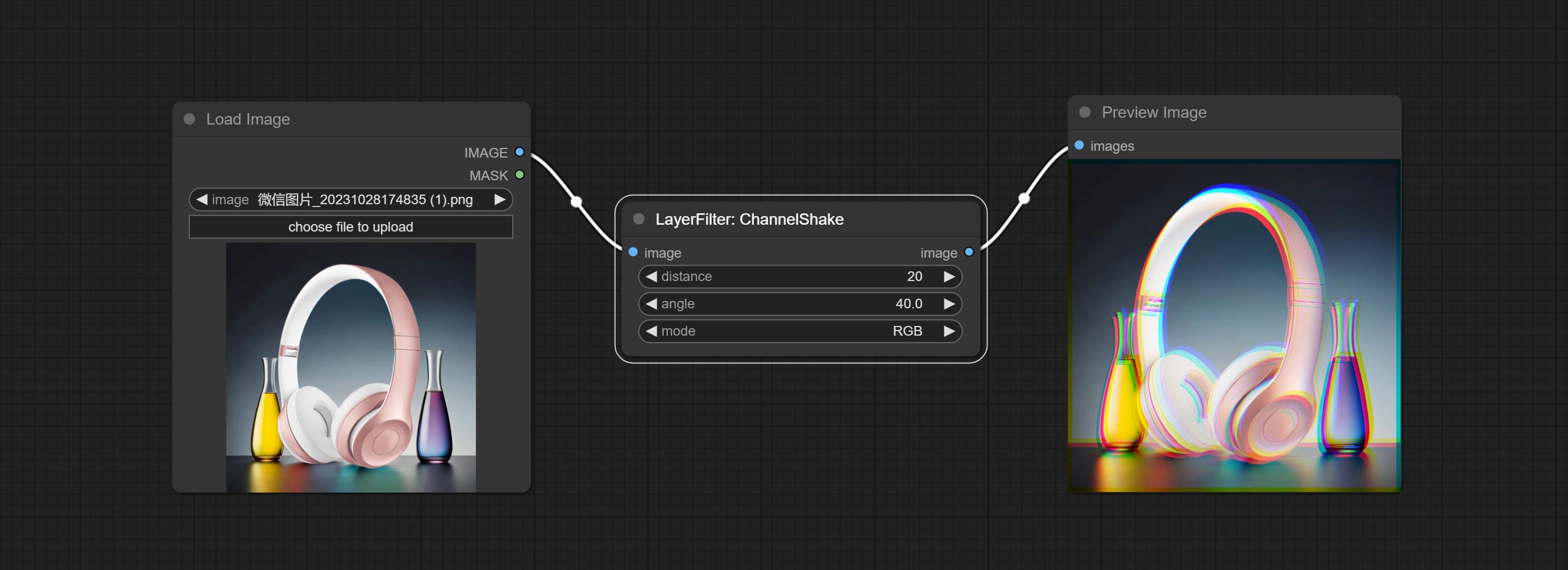

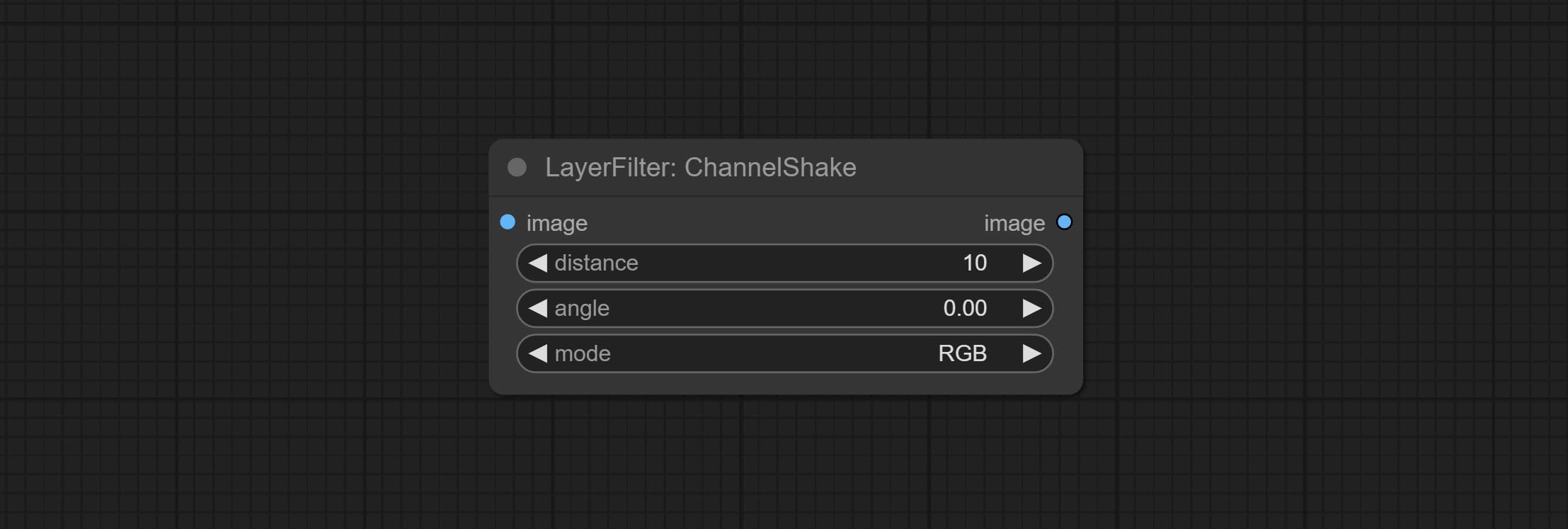

Commit the ChannelShake node, a filter that can produce a channel dislocation effect similar to the TikTok logo.

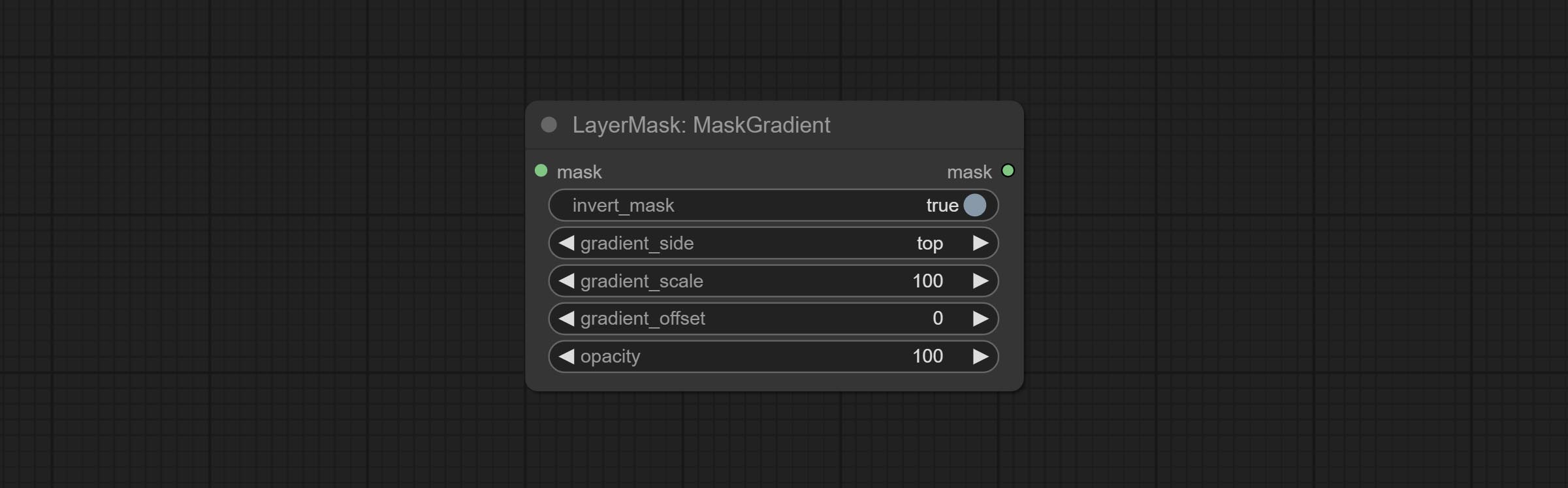

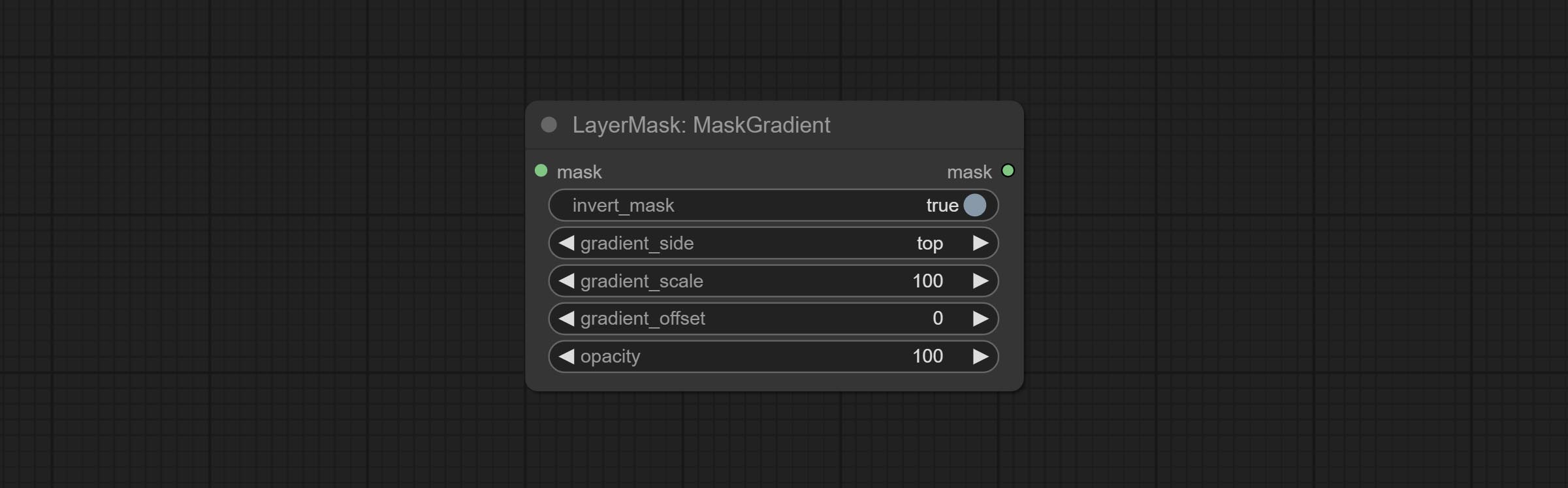

Commit the MaskGradient node, which can generate a gradient in a mask.

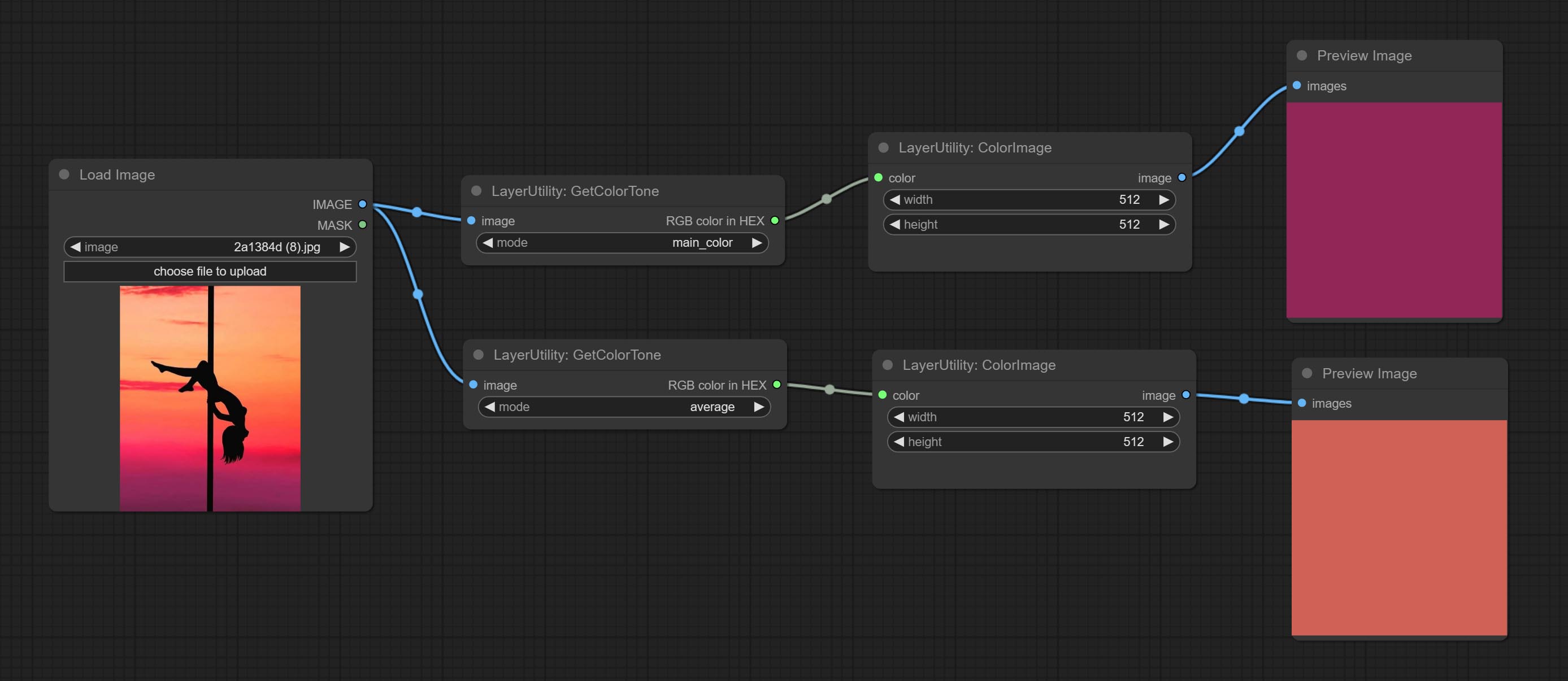

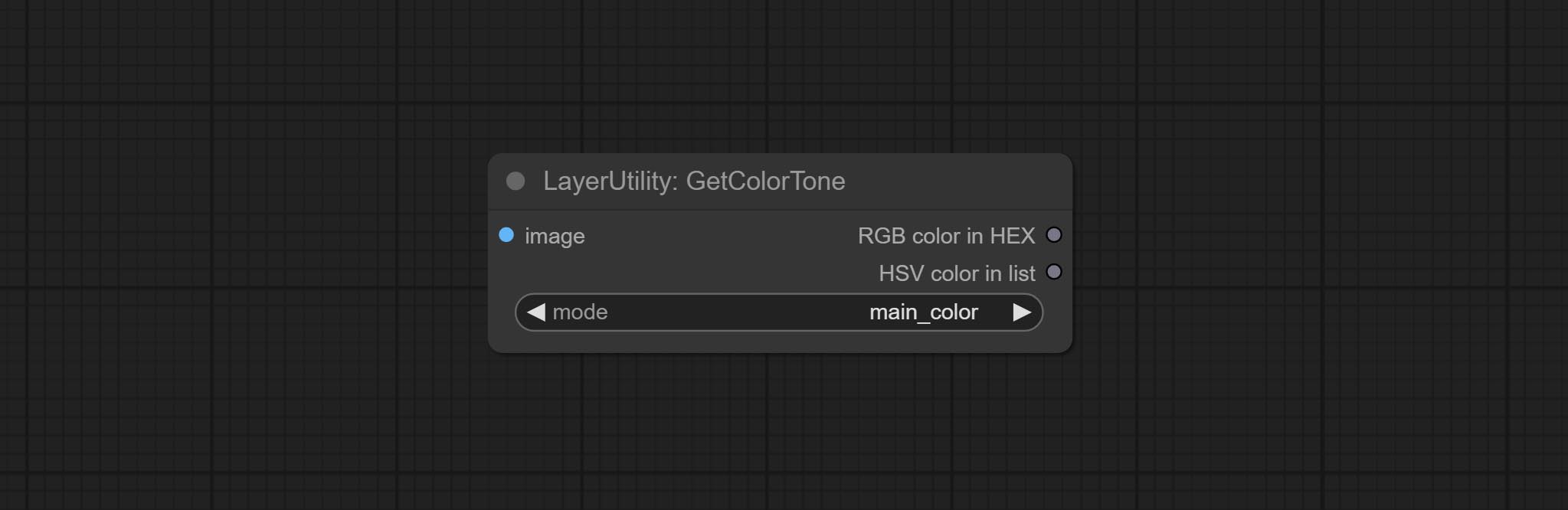

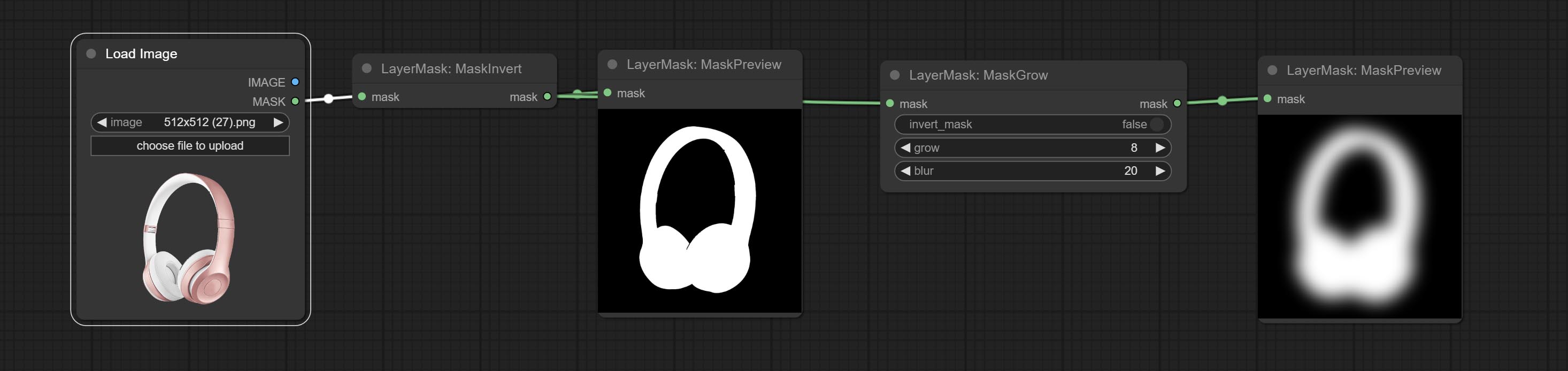

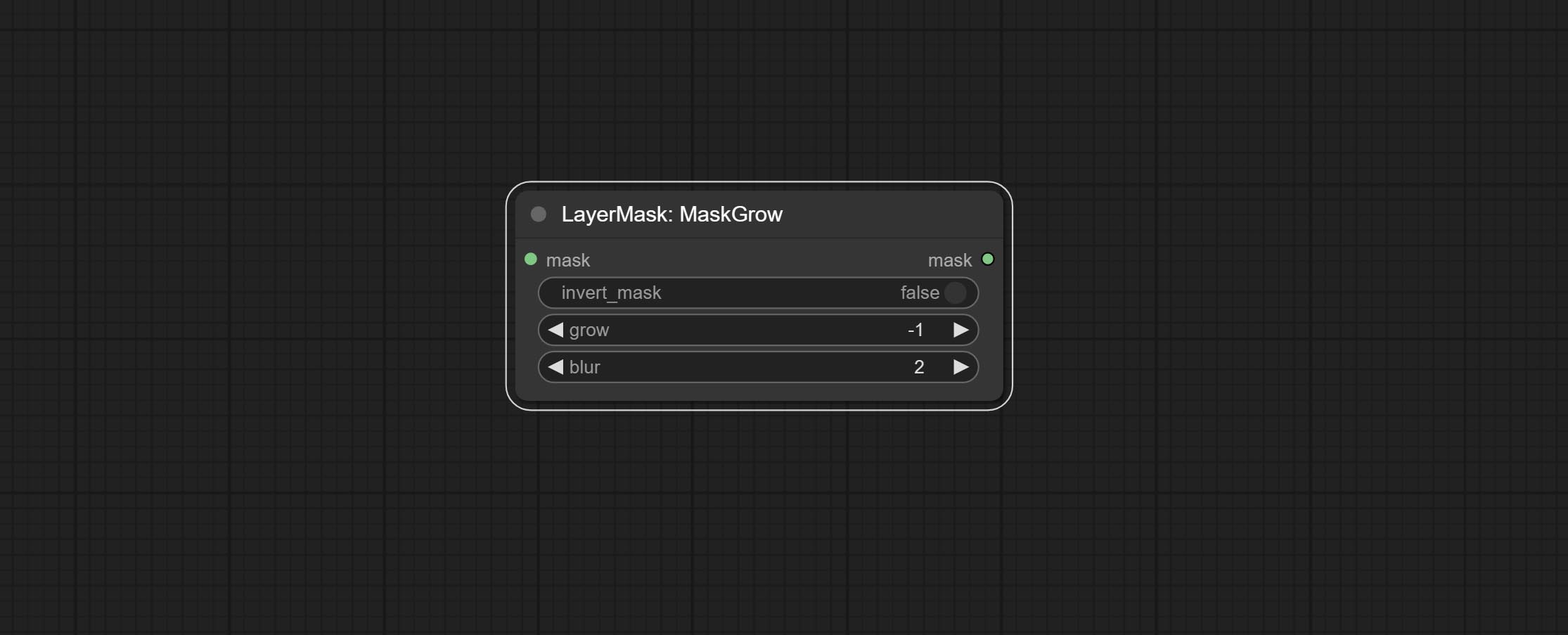

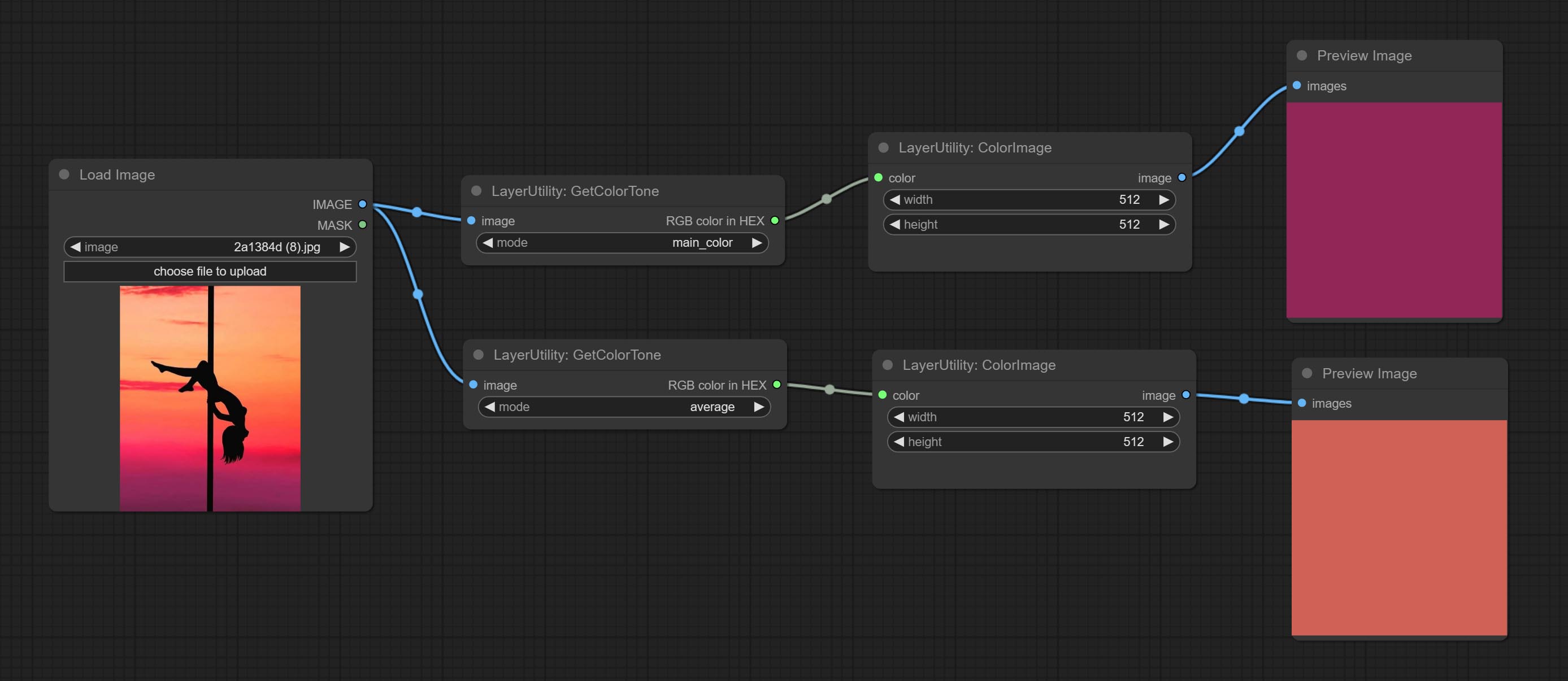

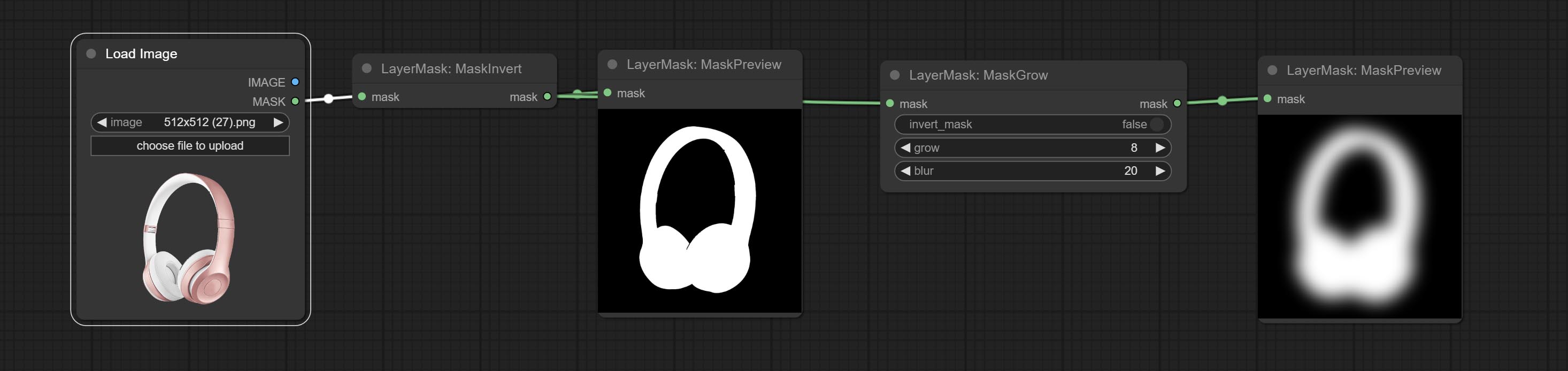

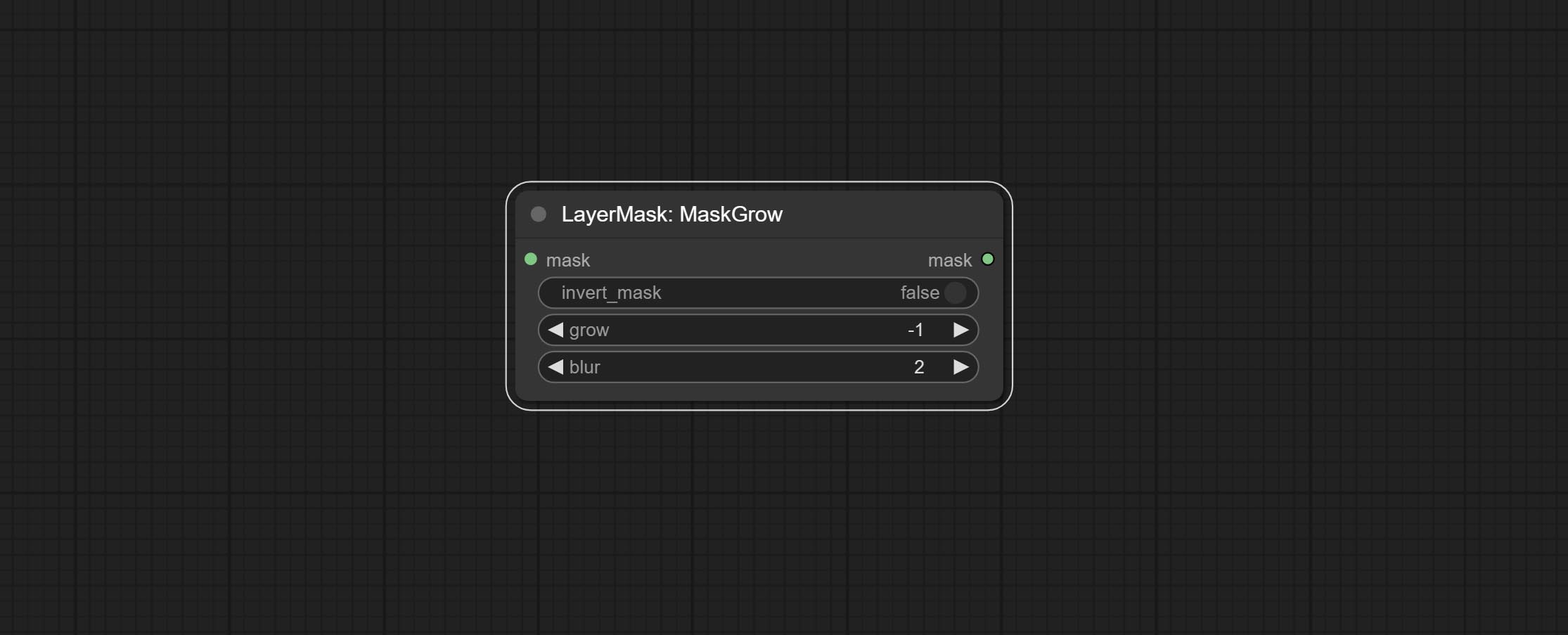

Commit the GetColorTone node, which can obtain the main color or average color of an image. Commit the MaskGrow and MaskEdgeShrink nodes.

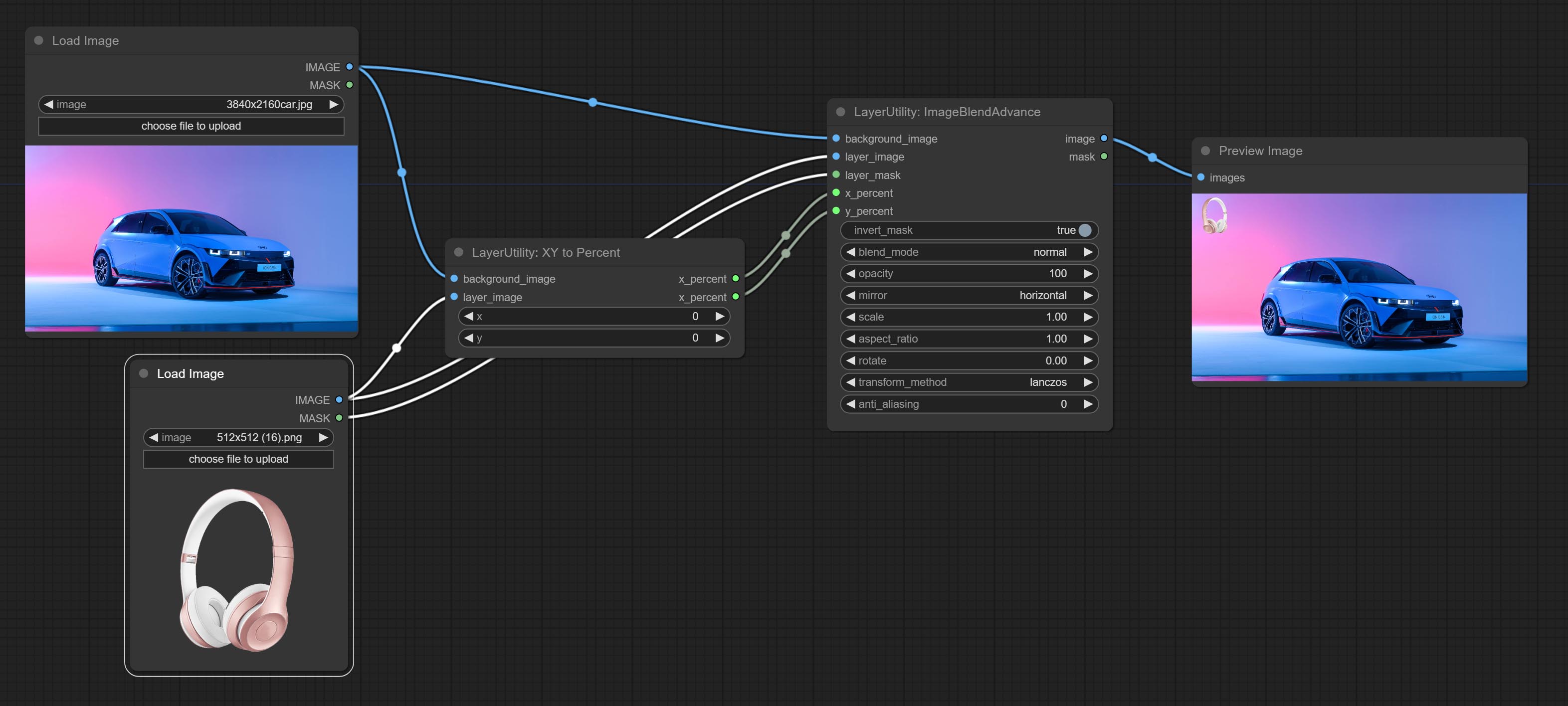

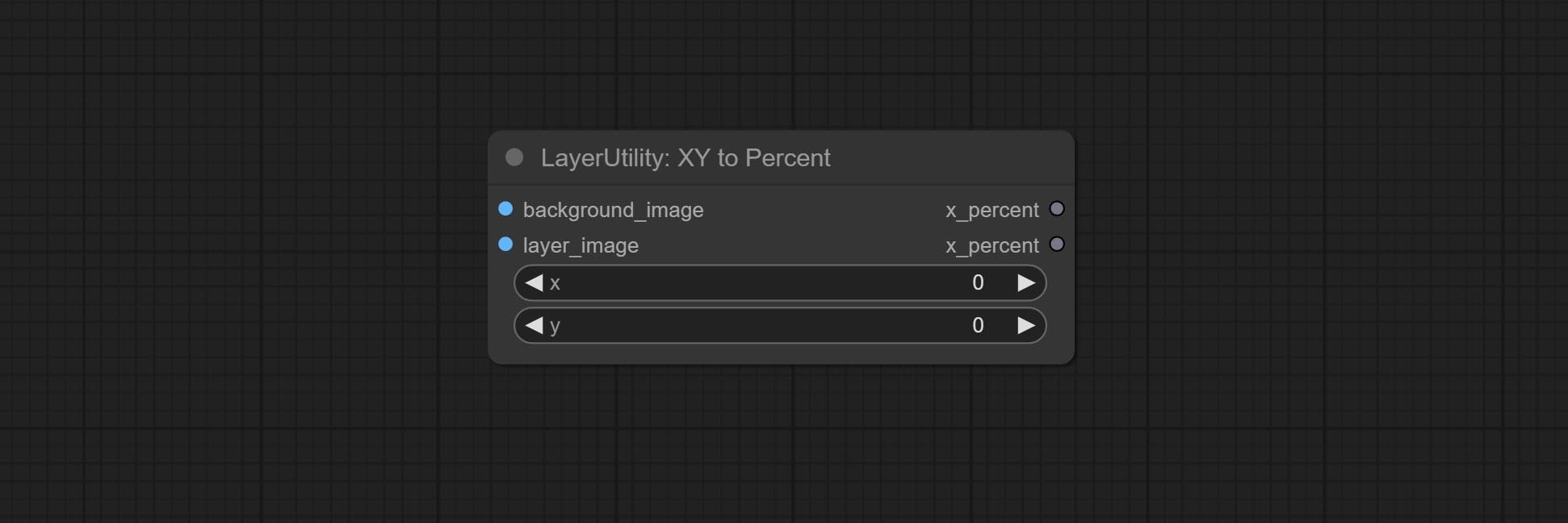

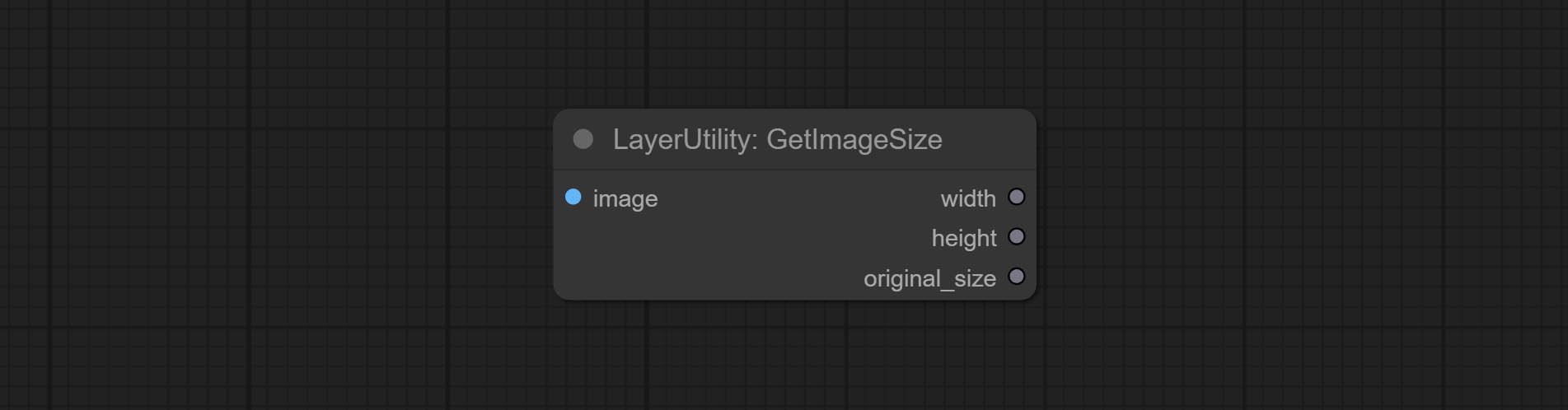

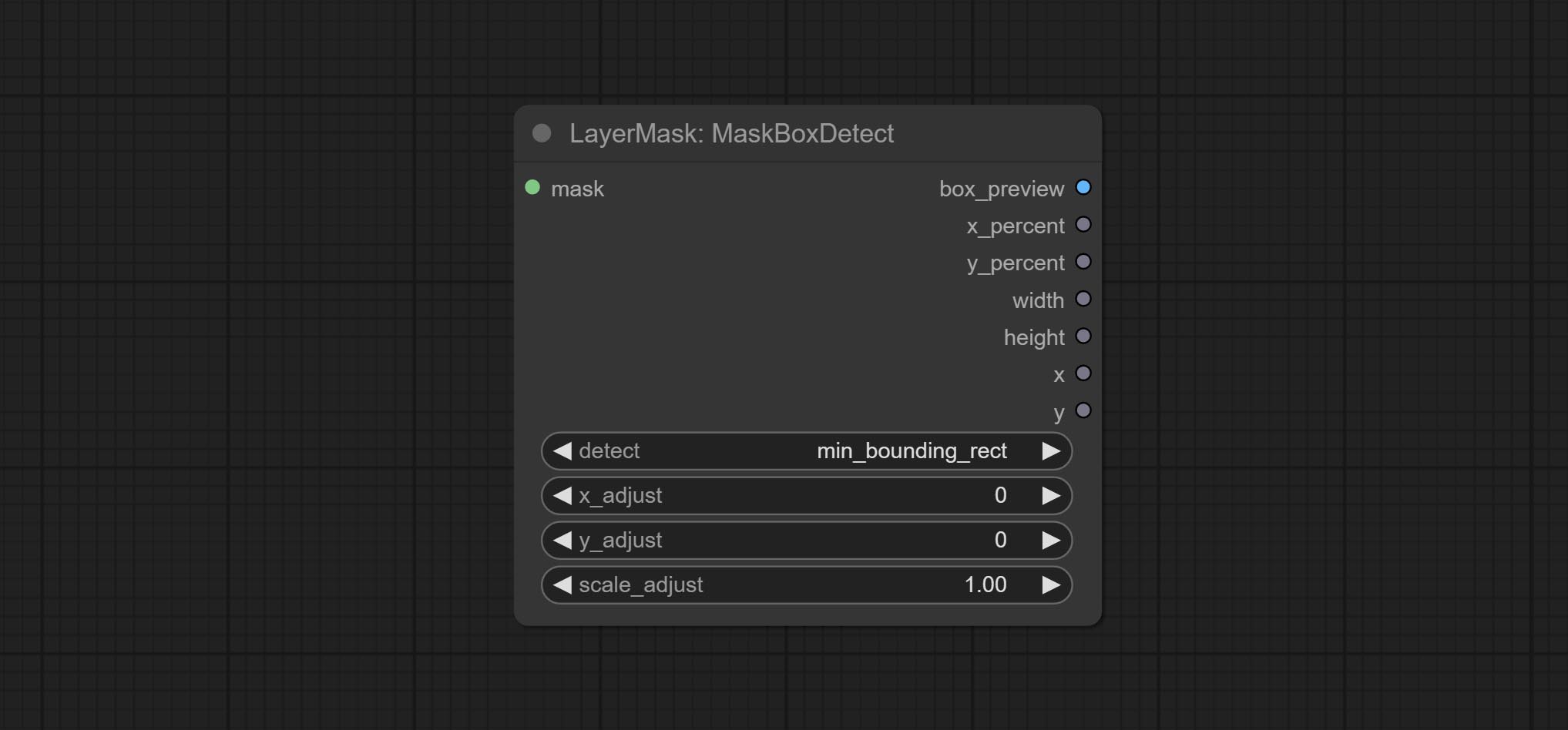

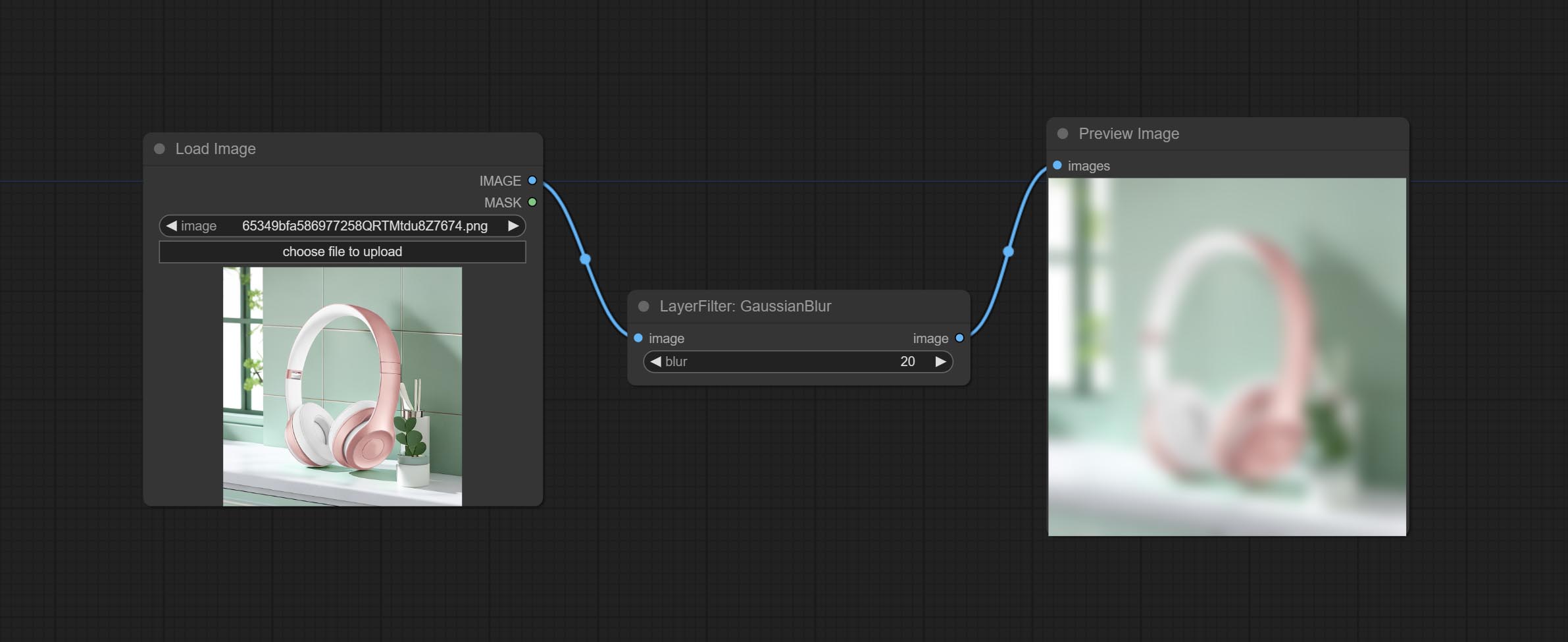

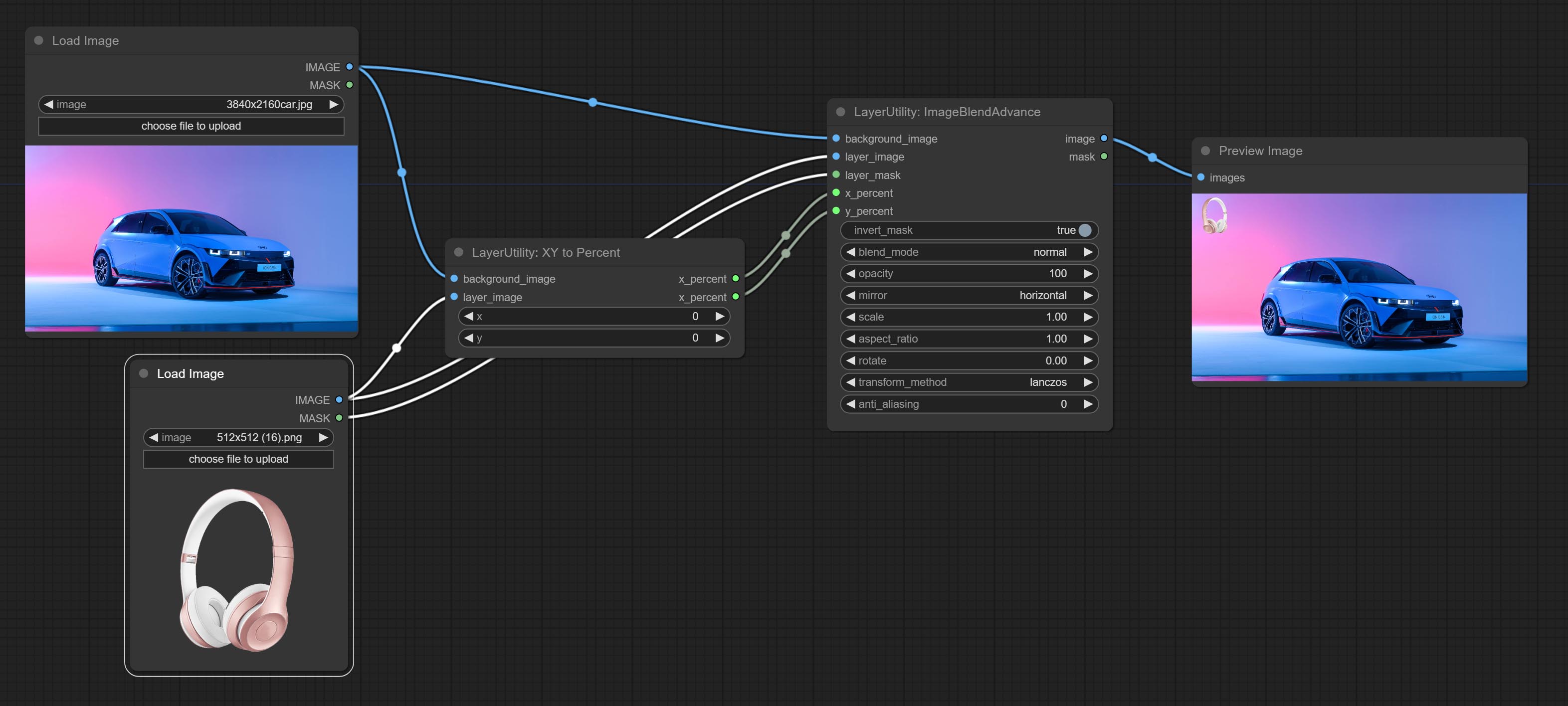

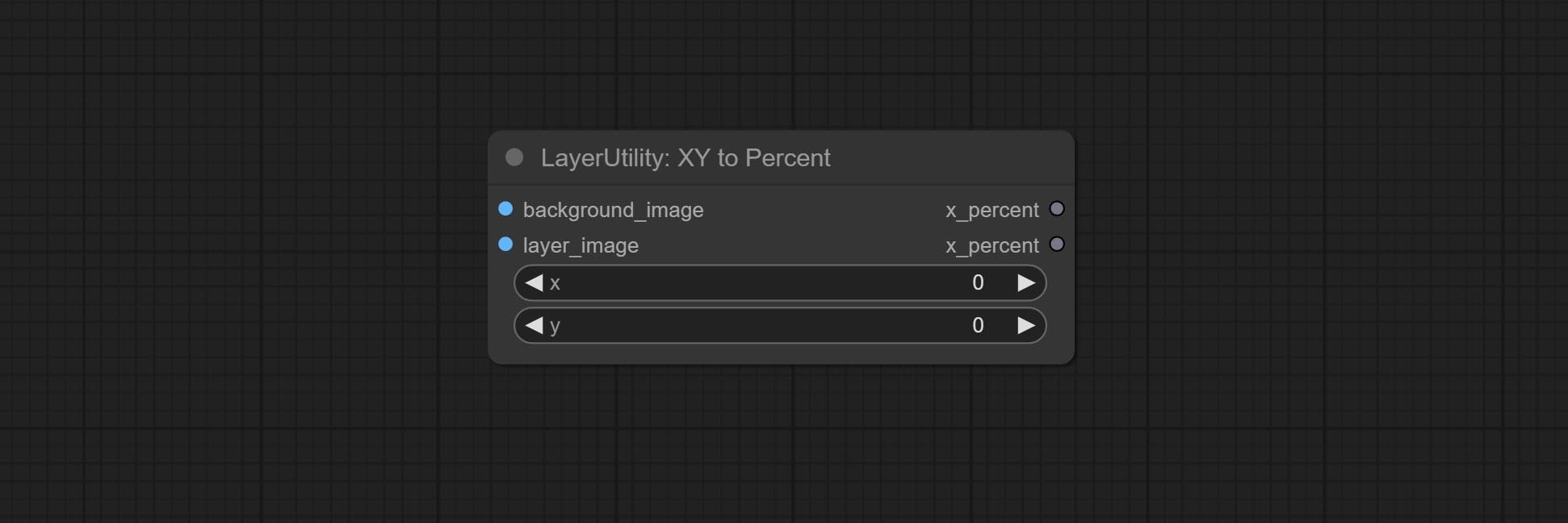

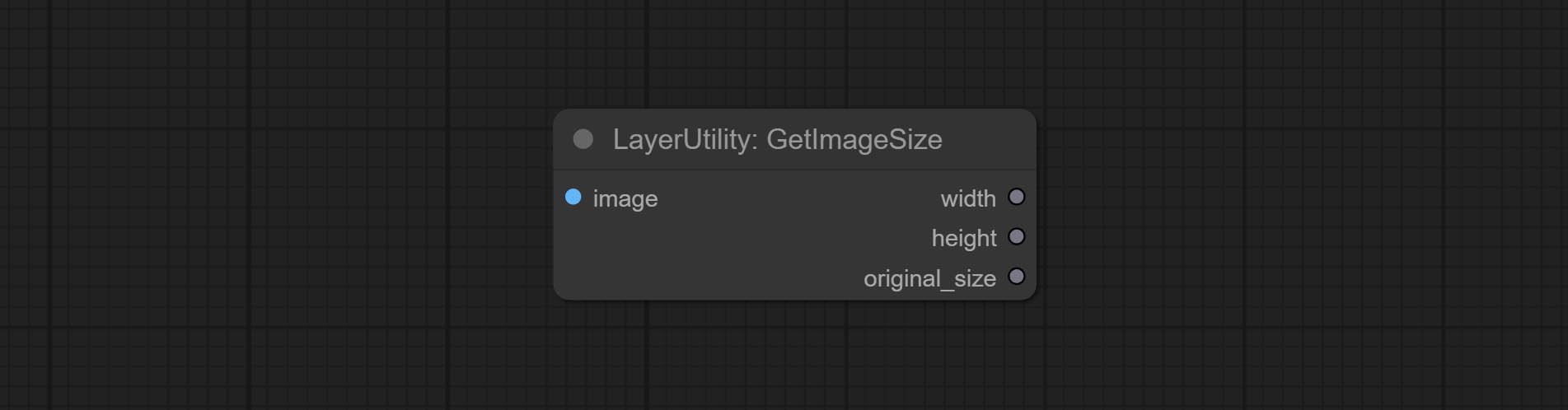

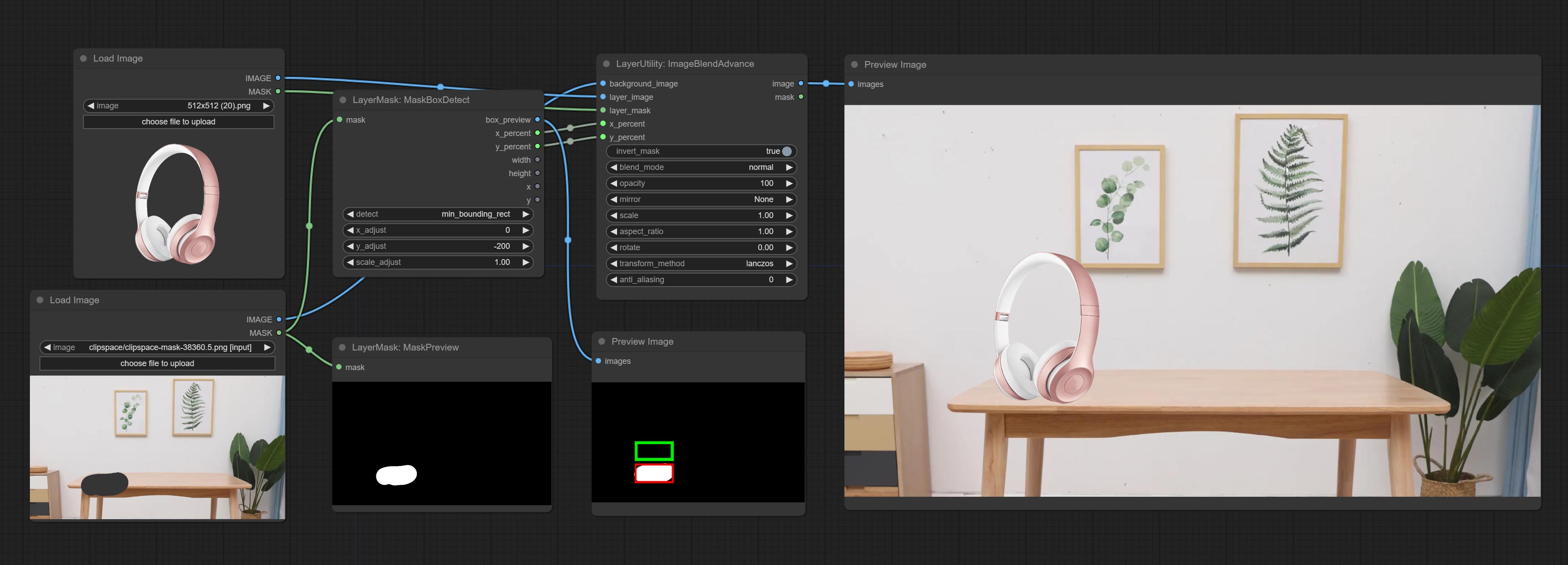

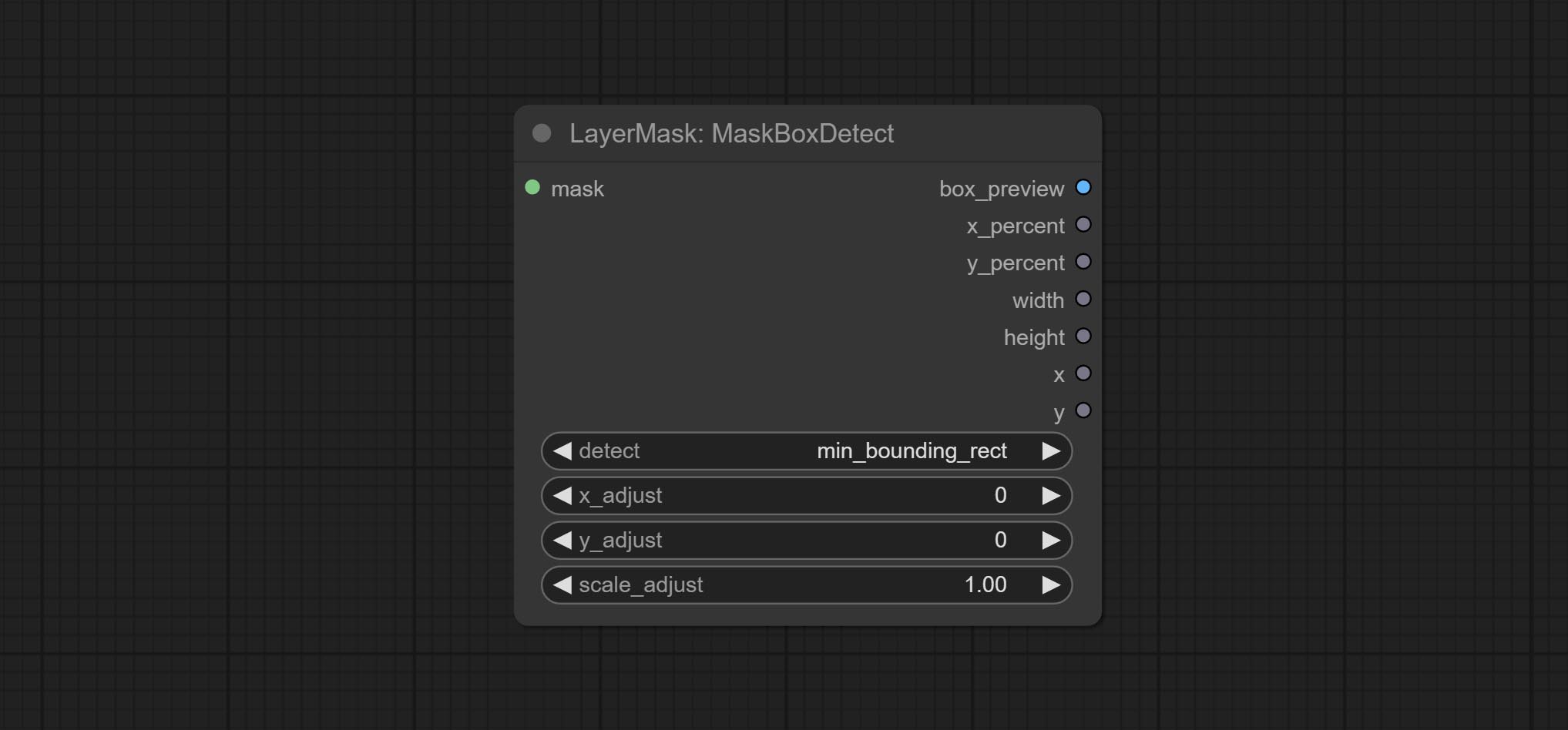

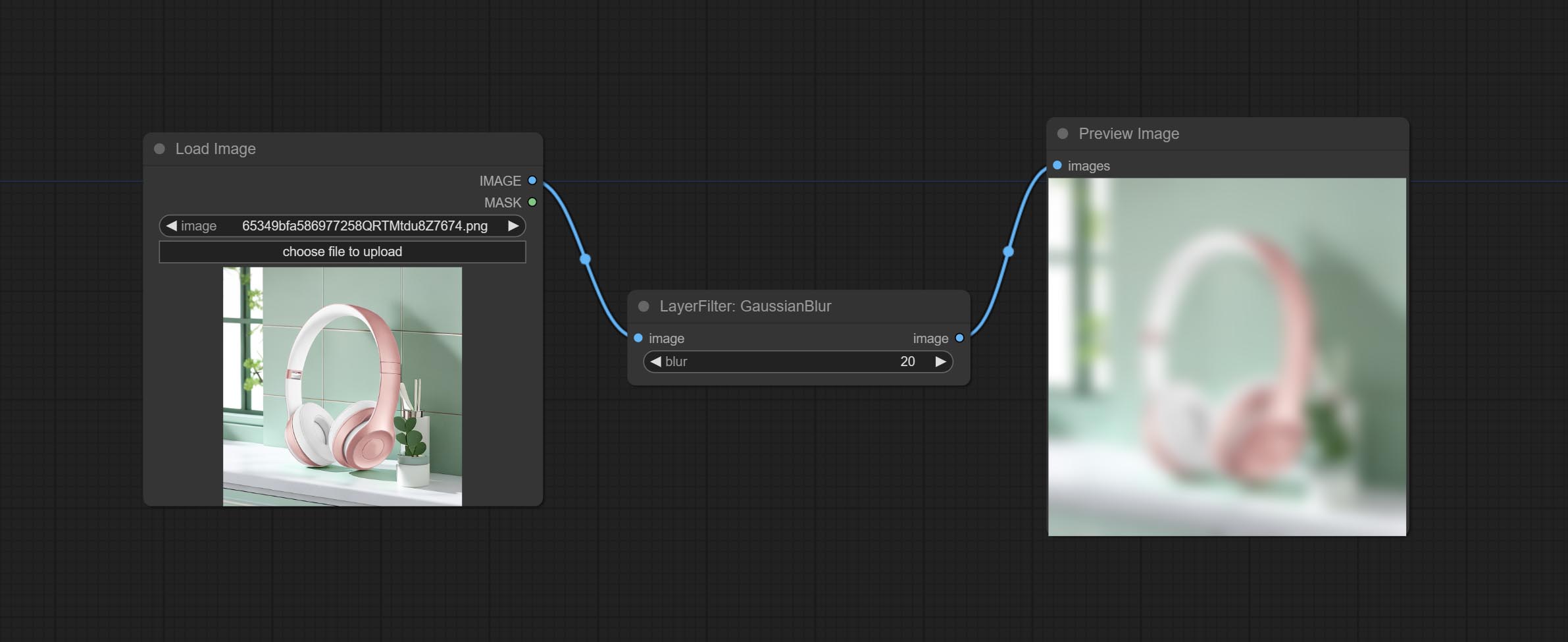

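Commit the MaskBoxDetect node, which automatically detects position through a mask and outputs it to the composite node. Commit the XY to Percent node, which converts absolute coordinates to percent coordinates. Commit the GaussianBlur node. Commit the GetImageSize node.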
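Converting absolute coordinates to percent coordinates is a simple ratio against the image size. The sketch below shows the arithmetic; the function name and the choice to measure from the top-left corner are assumptions, and the node may define its reference point differently.

```python
def xy_to_percent(x, y, width, height):
    """Express a pixel position as percentages of the image width and height."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return x / width * 100.0, y / height * 100.0
```

A point at (256, 128) in a 512 by 512 image therefore sits at 50 percent horizontally and 25 percent vertically.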

Commit the ExtendCanvas node.

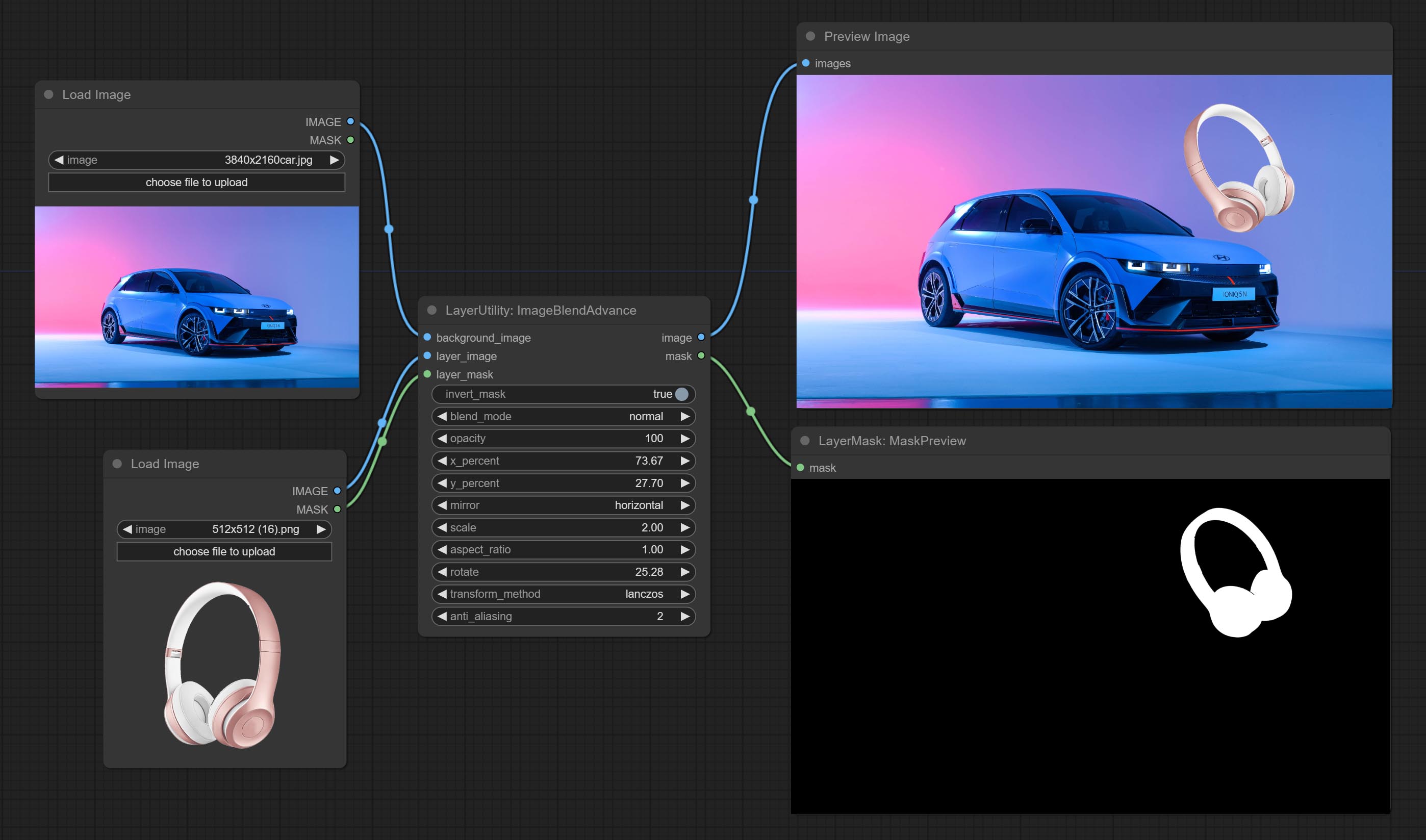

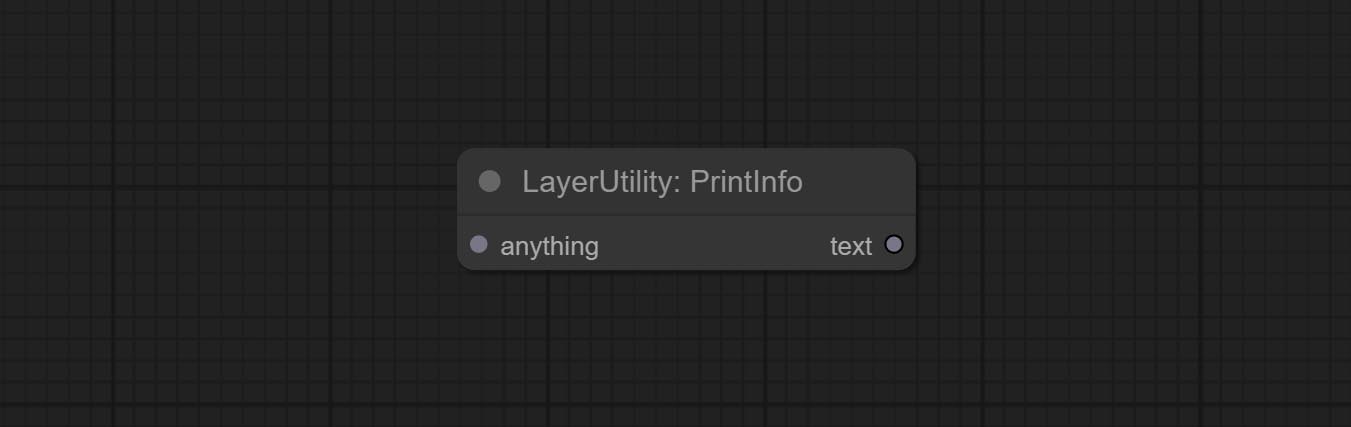

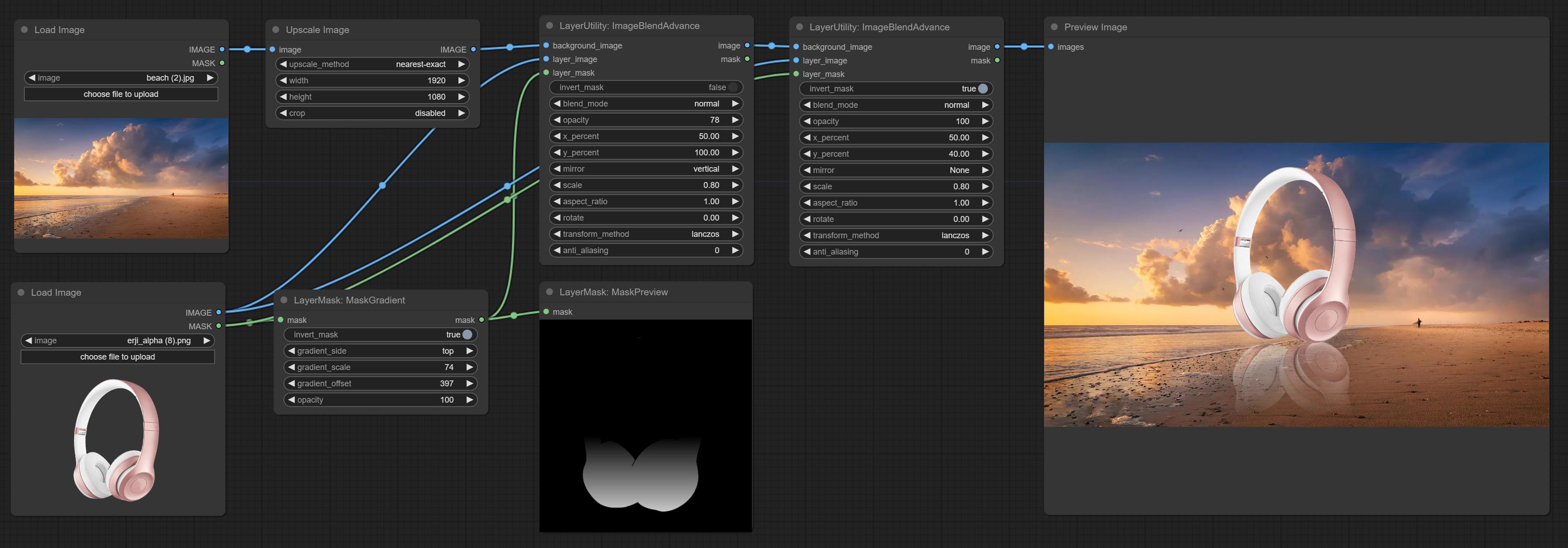

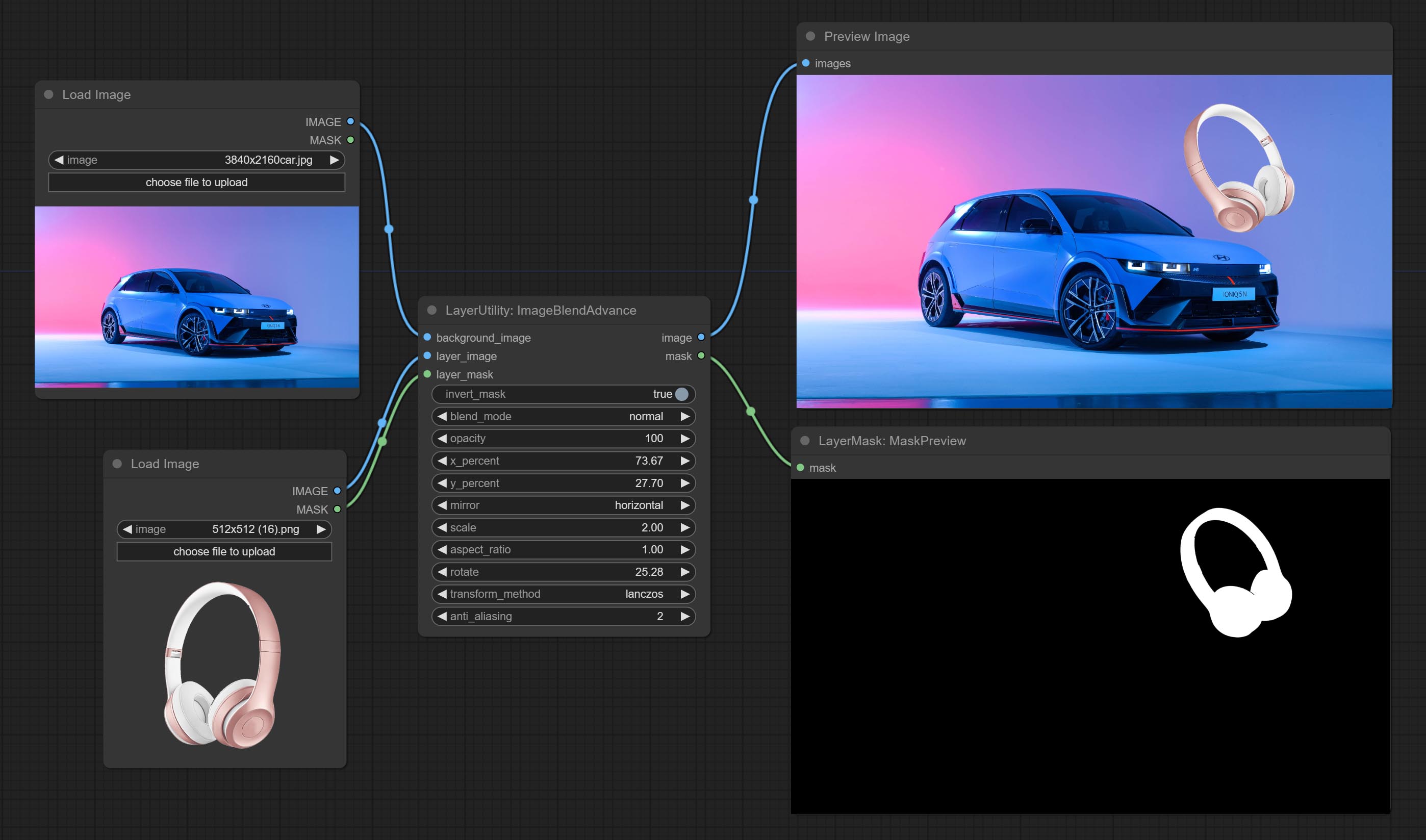

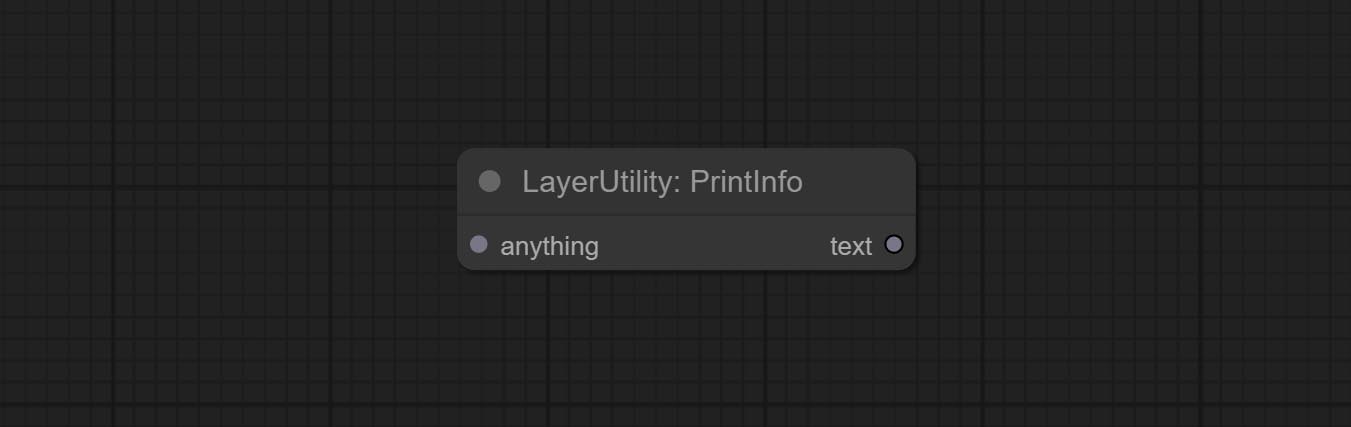

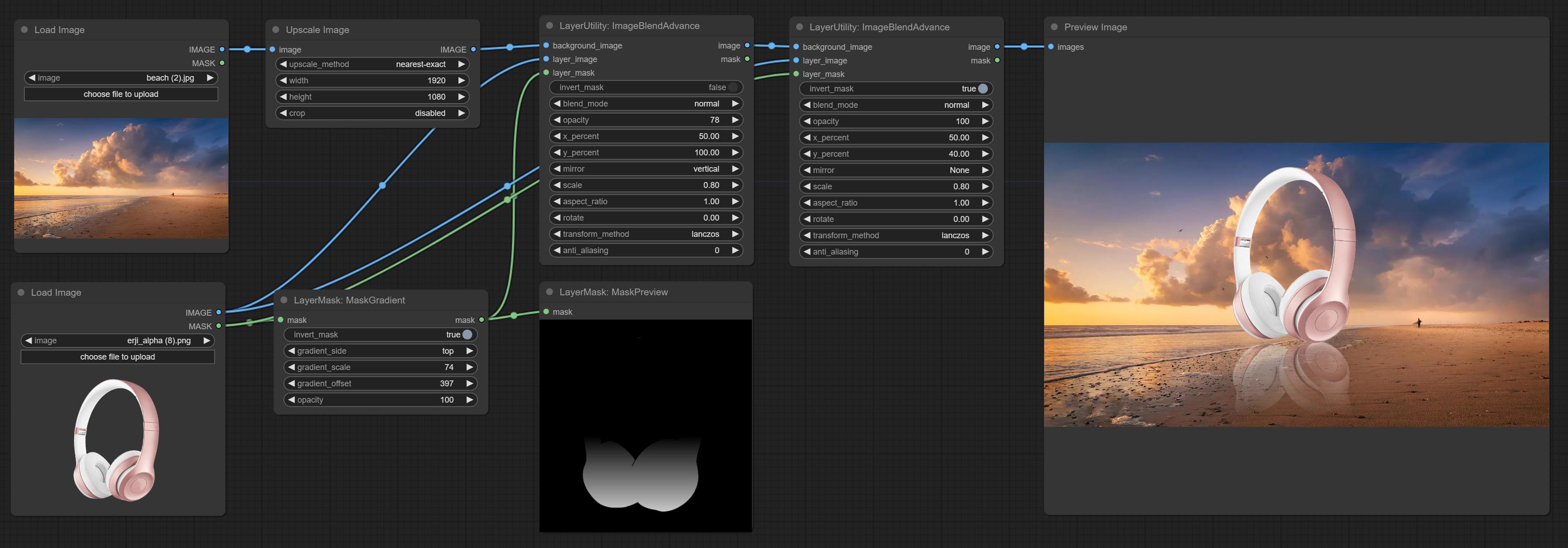

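Commit the ImageBlendAdvance node. This node allows compositing of background images and layers of different sizes, providing a freer compositing experience. Commit the PrintInfo node as a workflow debugging aid.

Commit the ColorImage and GradientImage nodes, used to generate solid and gradient color images.

Commit the GradientOverlay and ColorOverlay nodes. Add a validity check for the mask input; an invalid mask is ignored when input.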

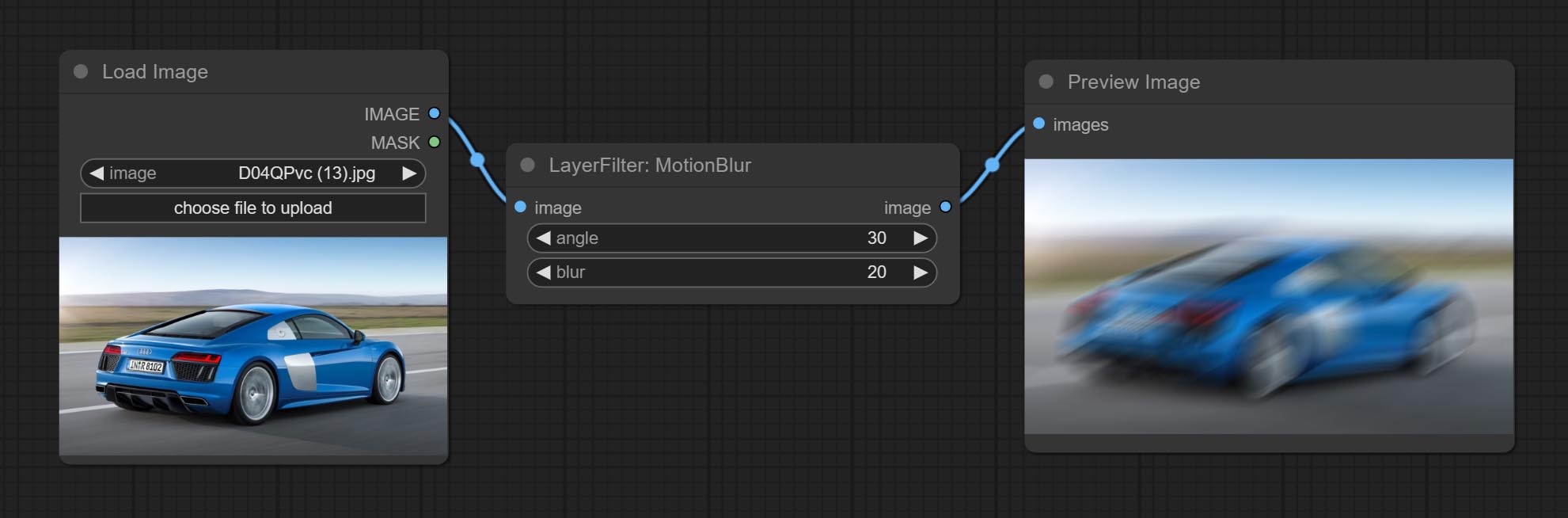

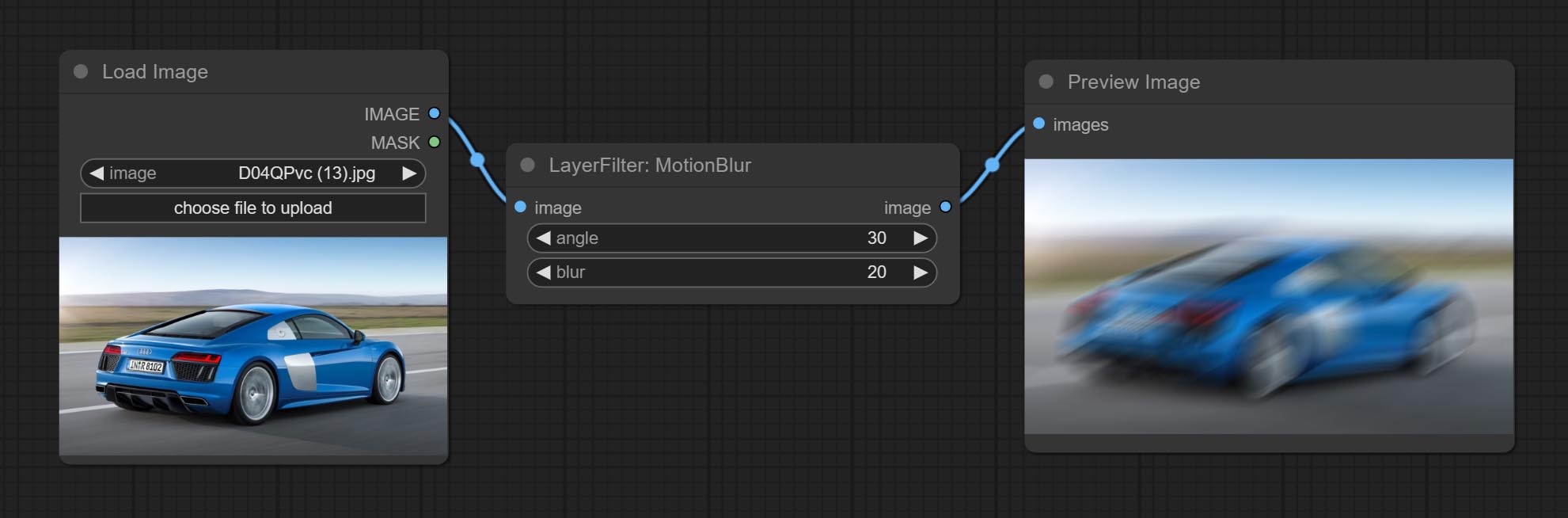

Commit the InnerGlow, InnerShadow, and MotionBlur nodes.

Rename all completed nodes; the nodes are divided into 4 groups: LayerStyle, LayerMask, LayerUtility, and LayerFilter. Workflows containing old version nodes need to be manually replaced with the new version nodes.

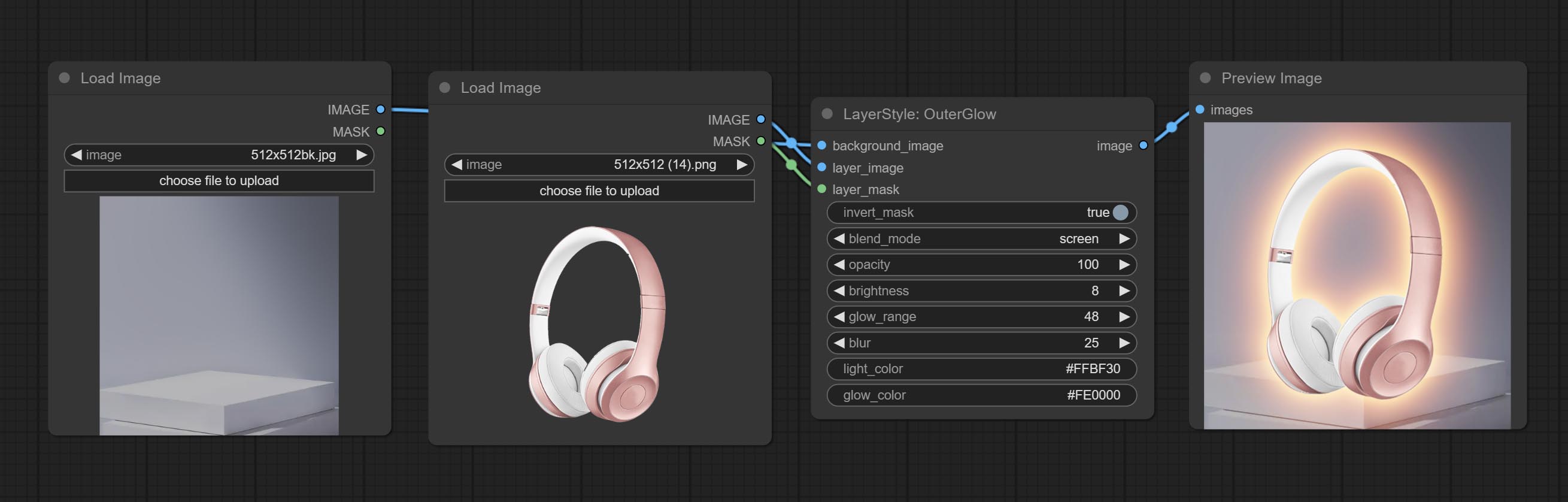

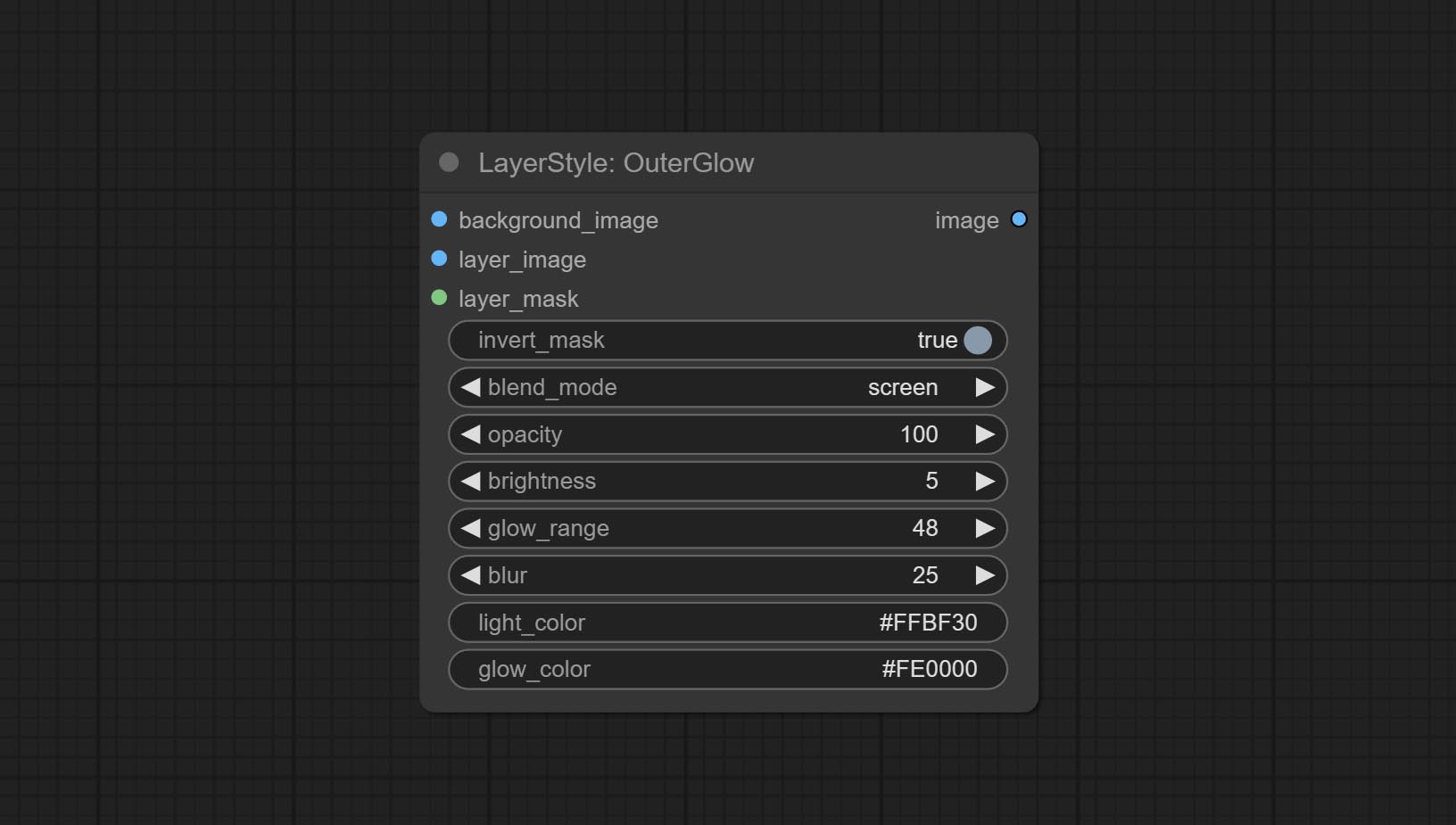

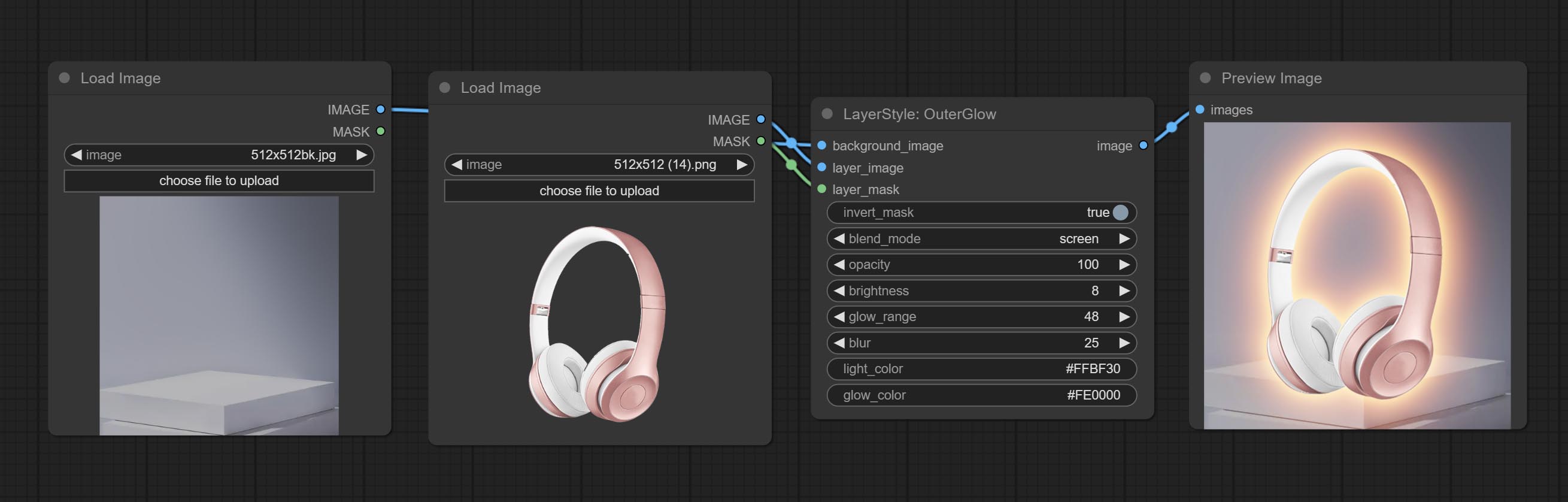

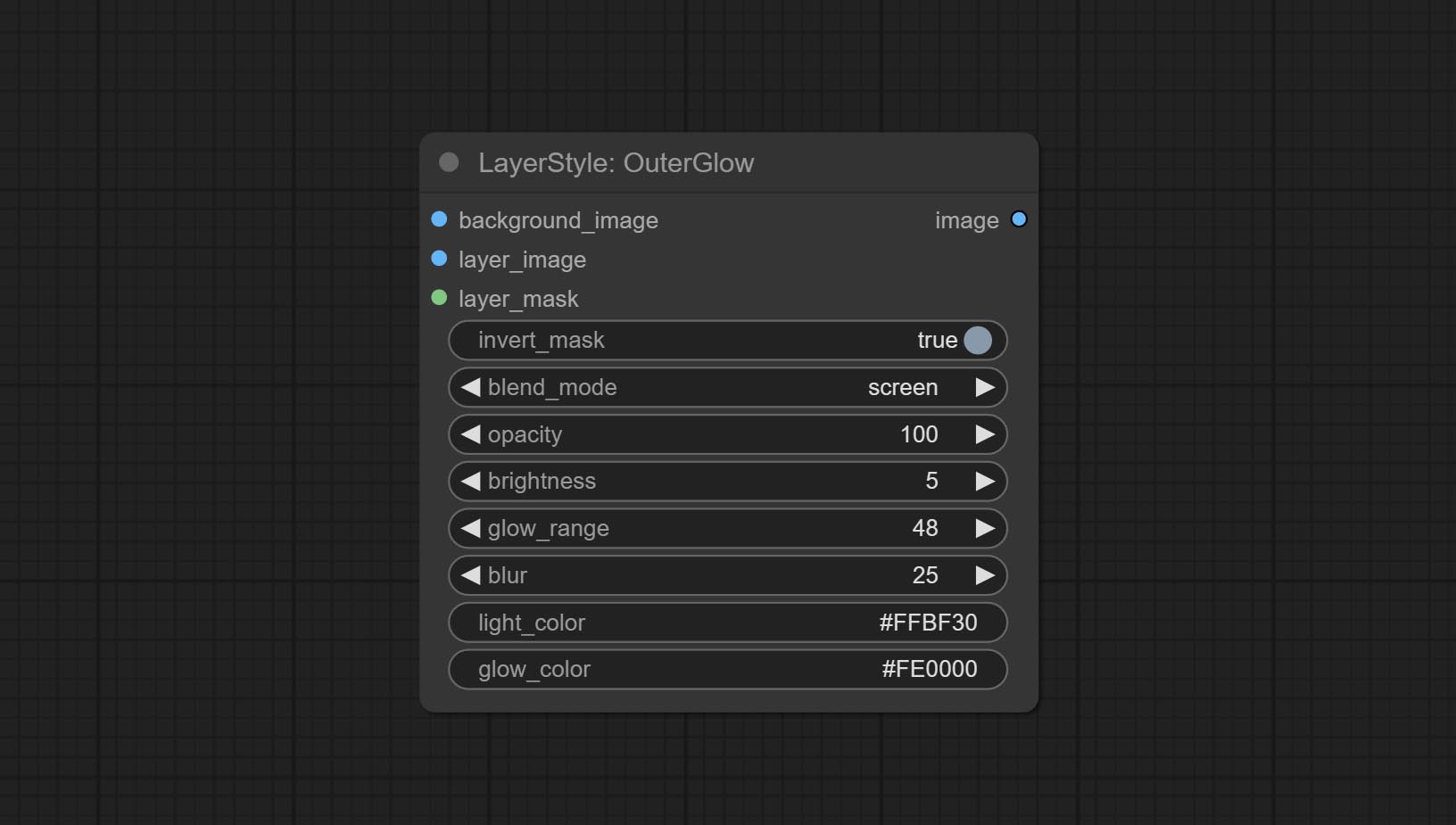

The OuterGlow node has been significantly modified, adding options for brightness, light_color, and glow_color.

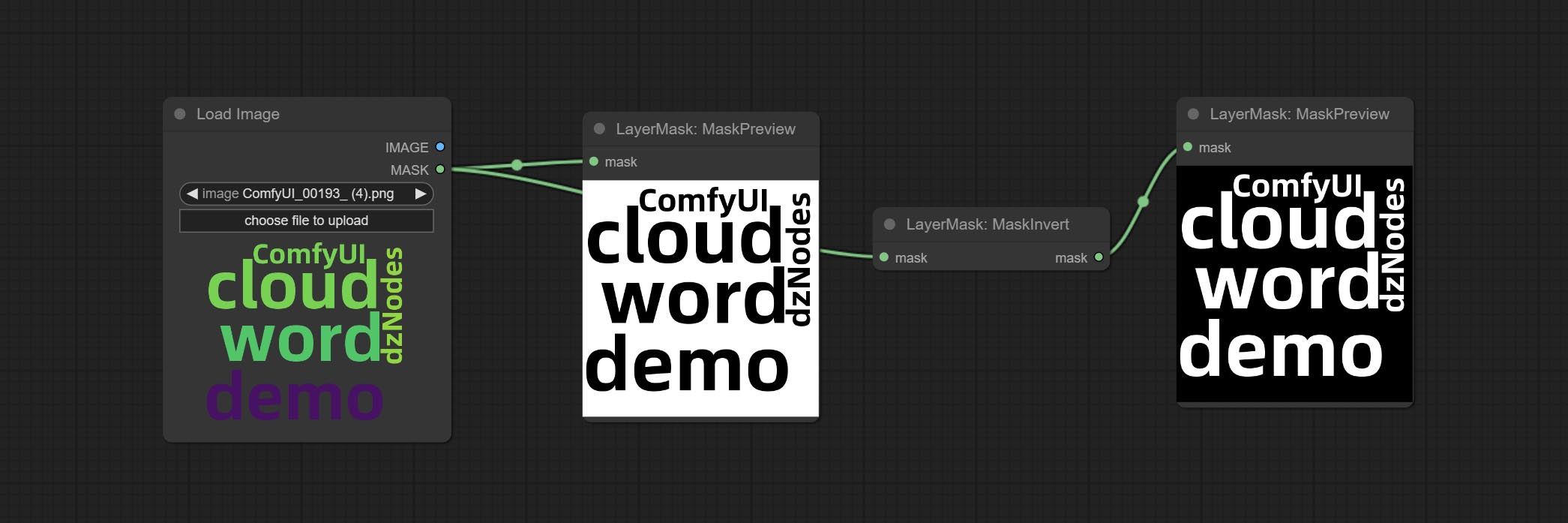

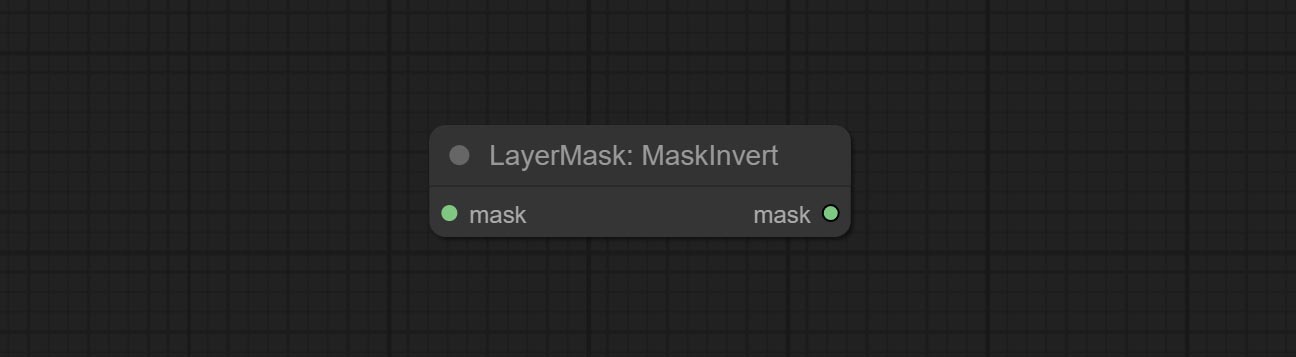

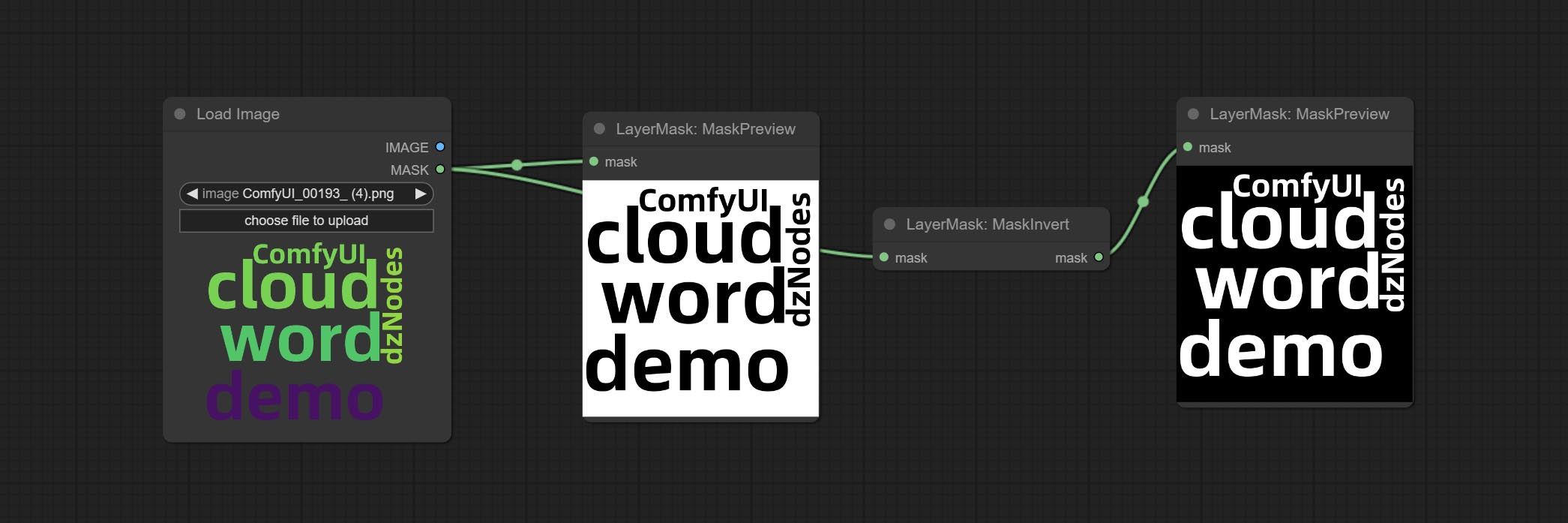

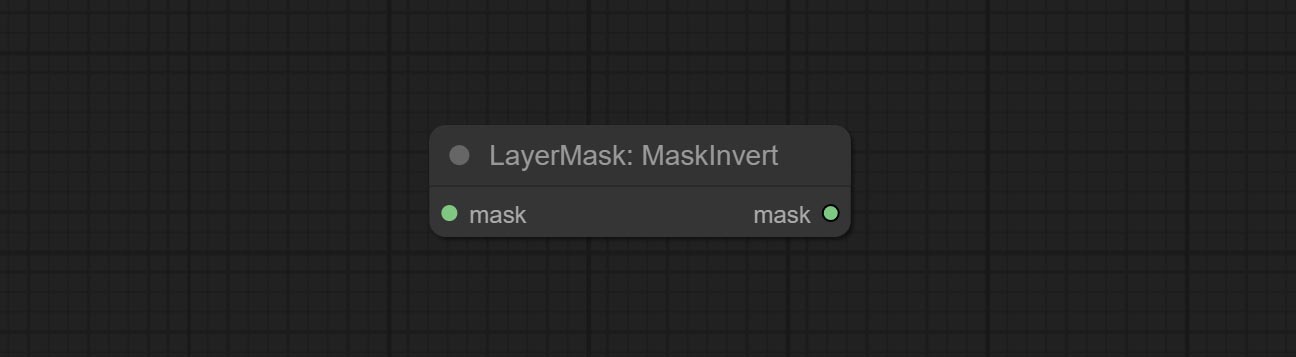

Commit MaskInvert node.

Commit ColorPick node.

Commit Stroke node.

Commit MaskPreview node.

Commit ImageOpacity node.

layer_mask is no longer a required input. Layers and masks with different shapes can be used, but their sizes must be consistent.

Commit ImageBlend node.

Commit OuterGlow node.

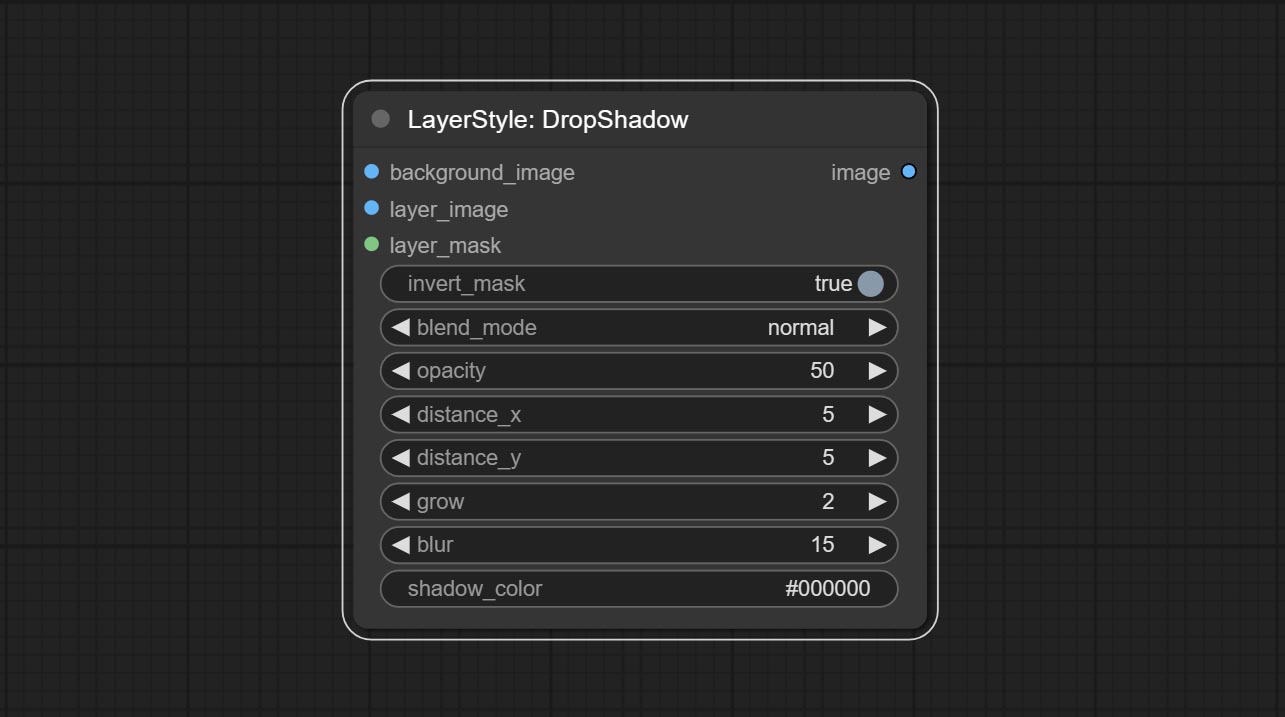

Commit DropShadow node.

Description

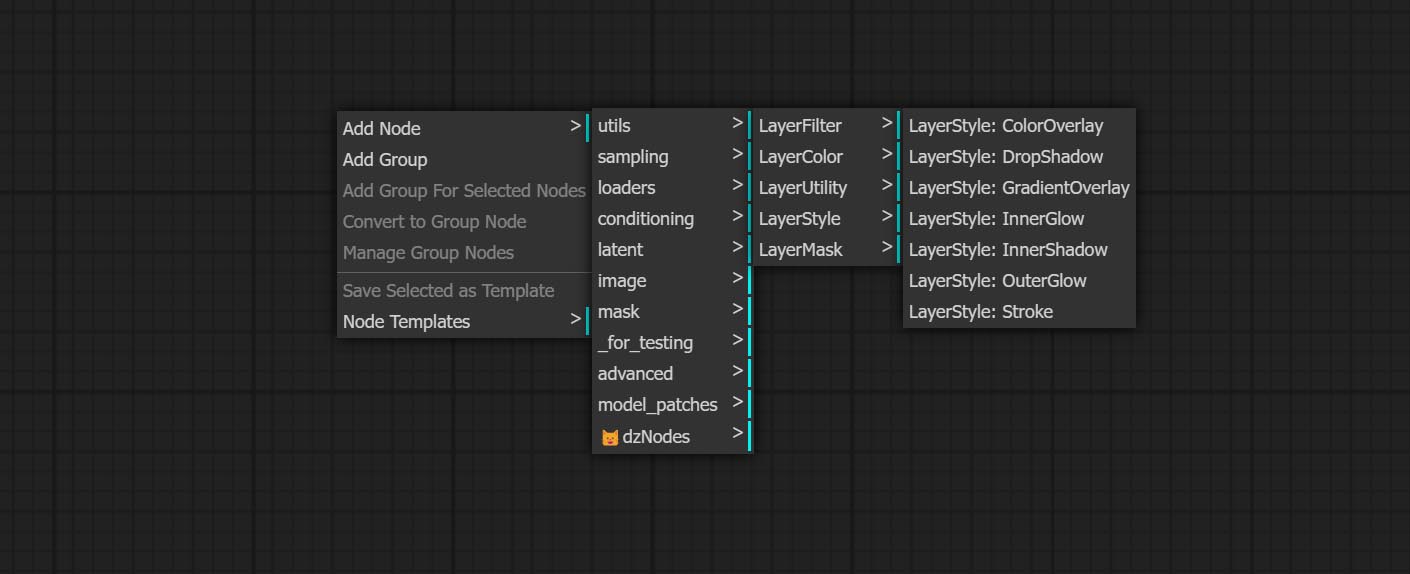

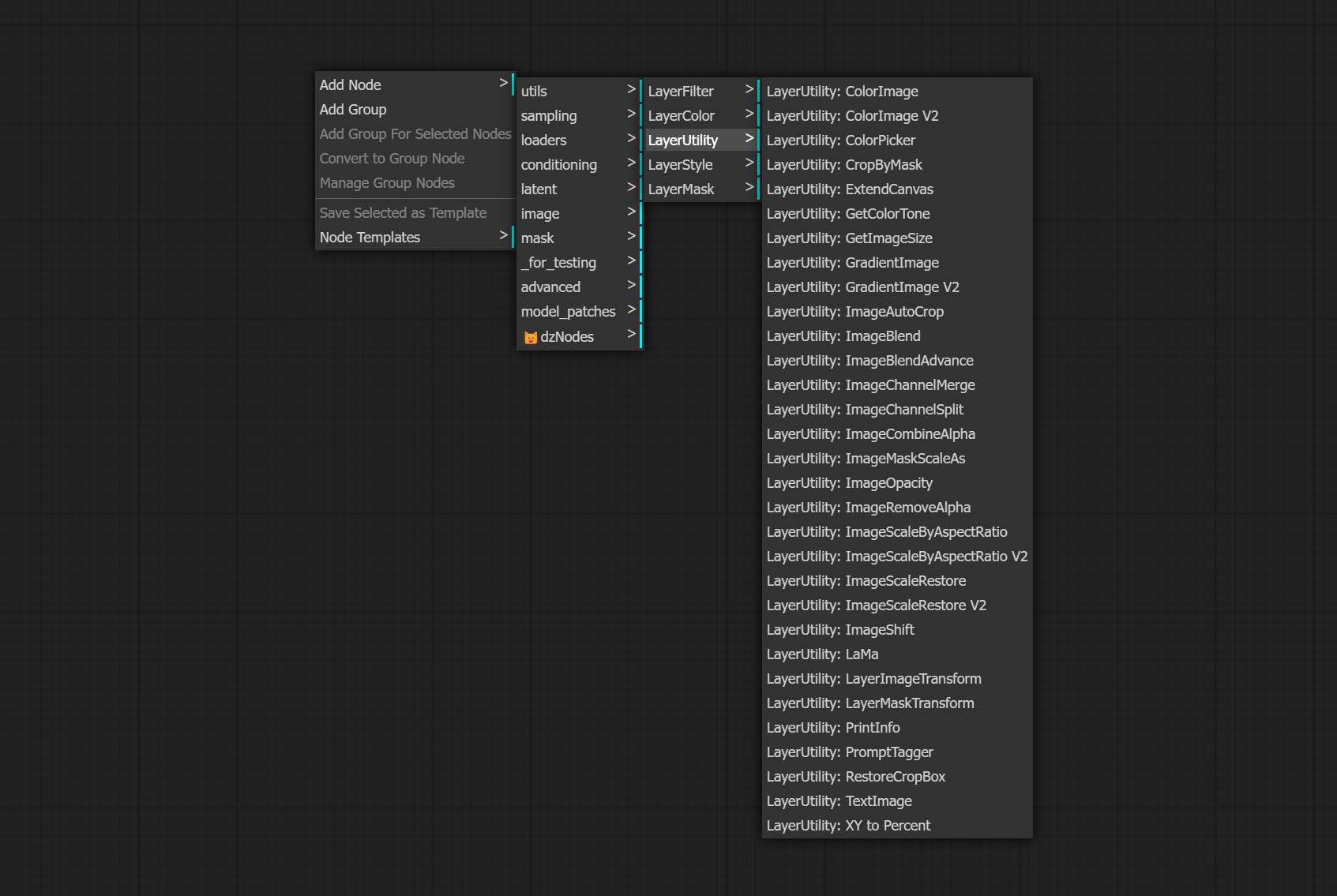

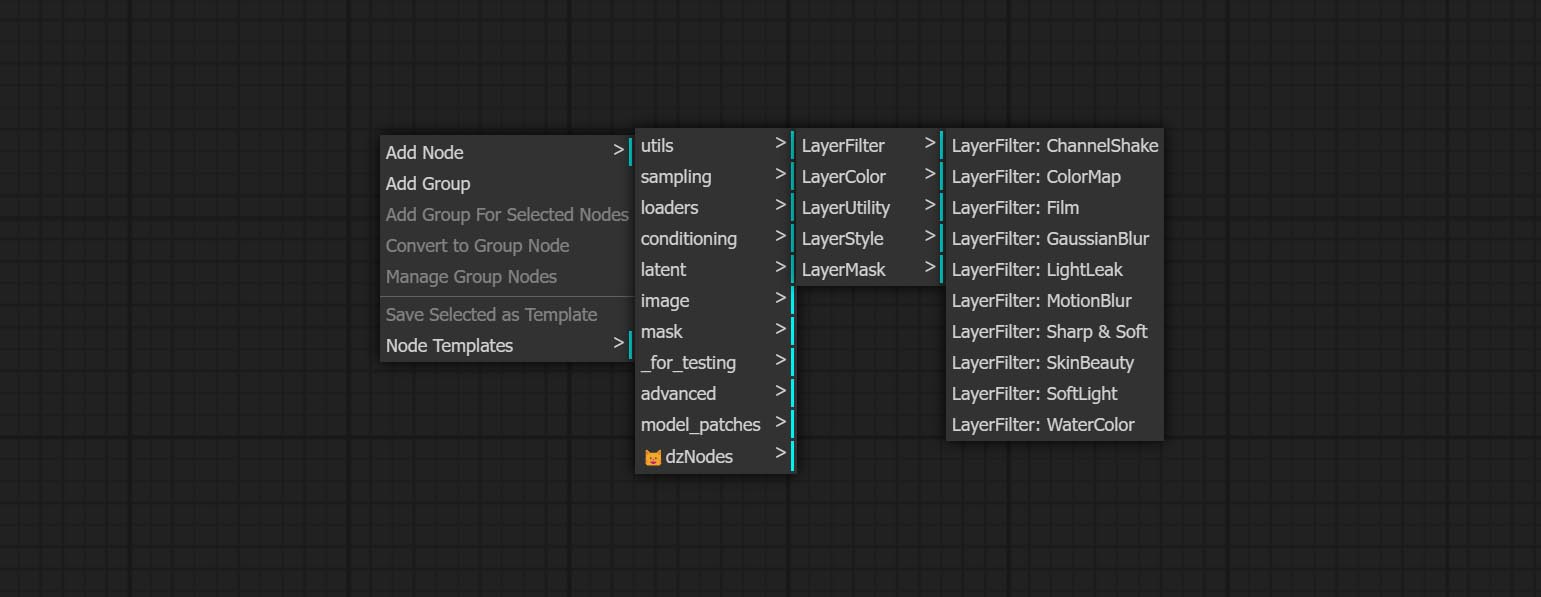

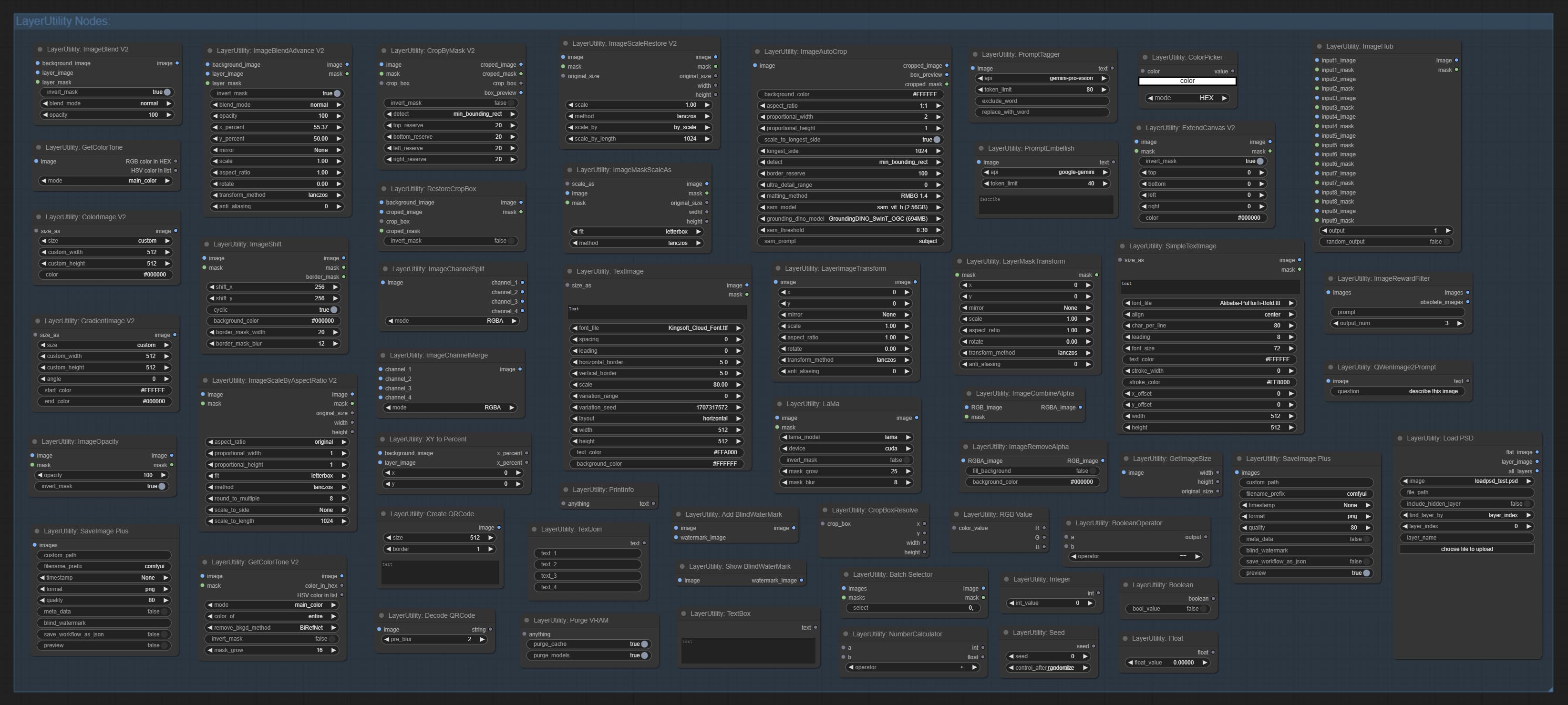

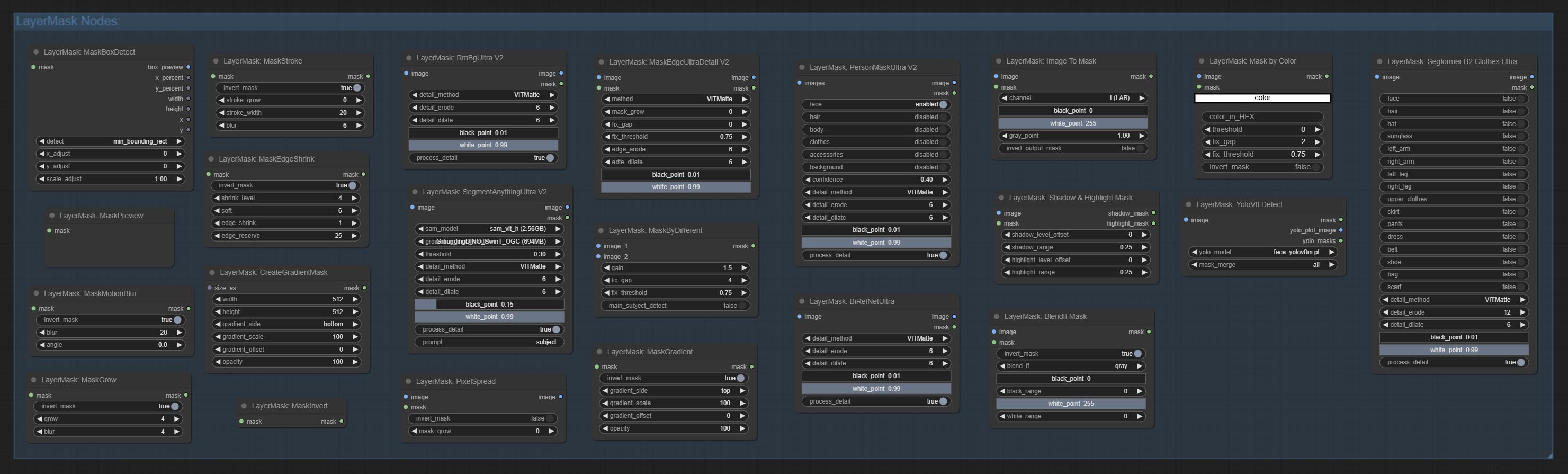

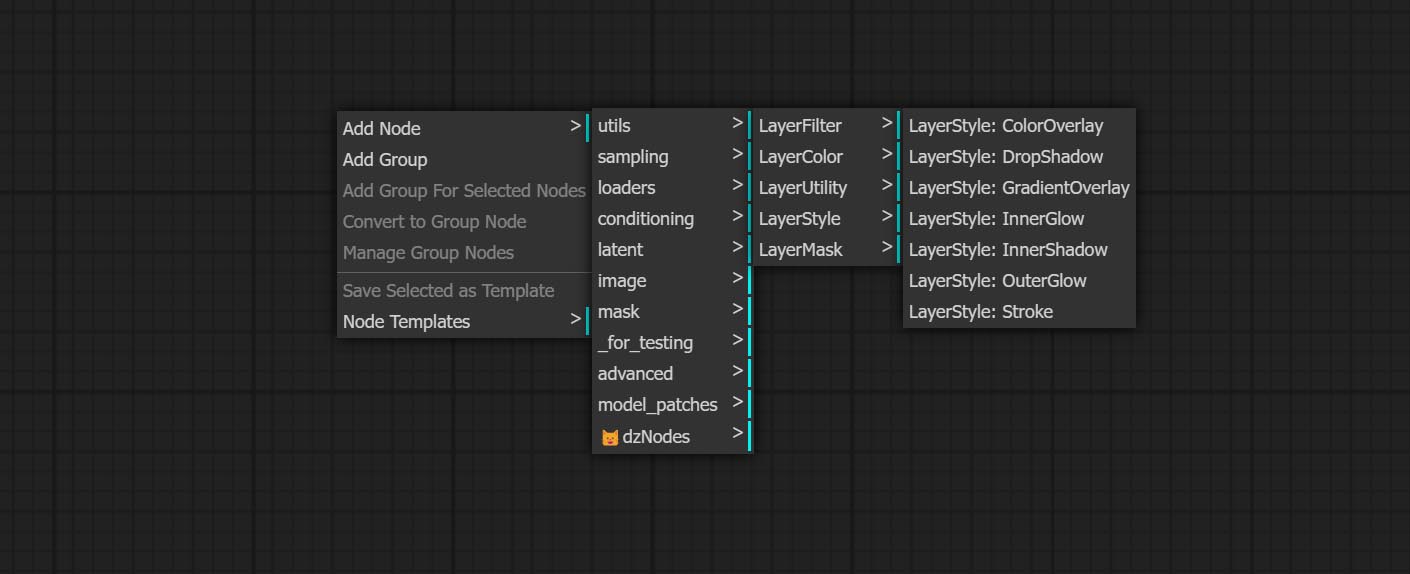

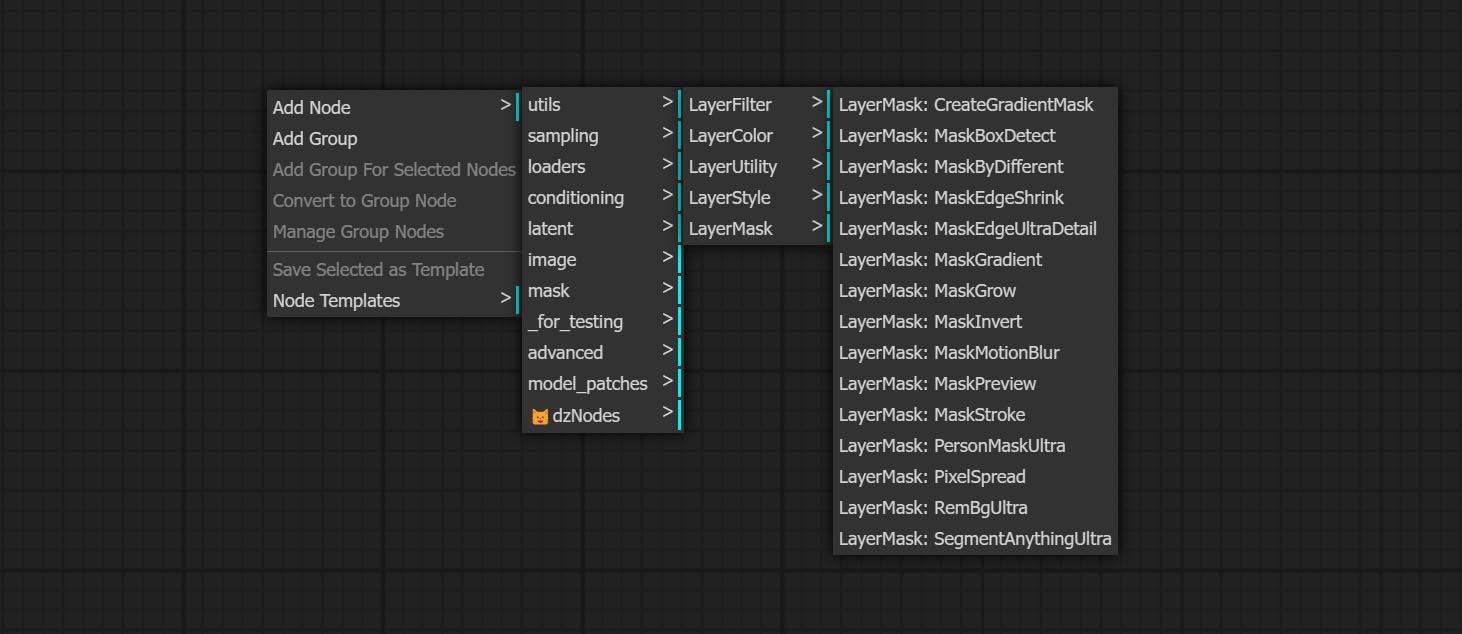

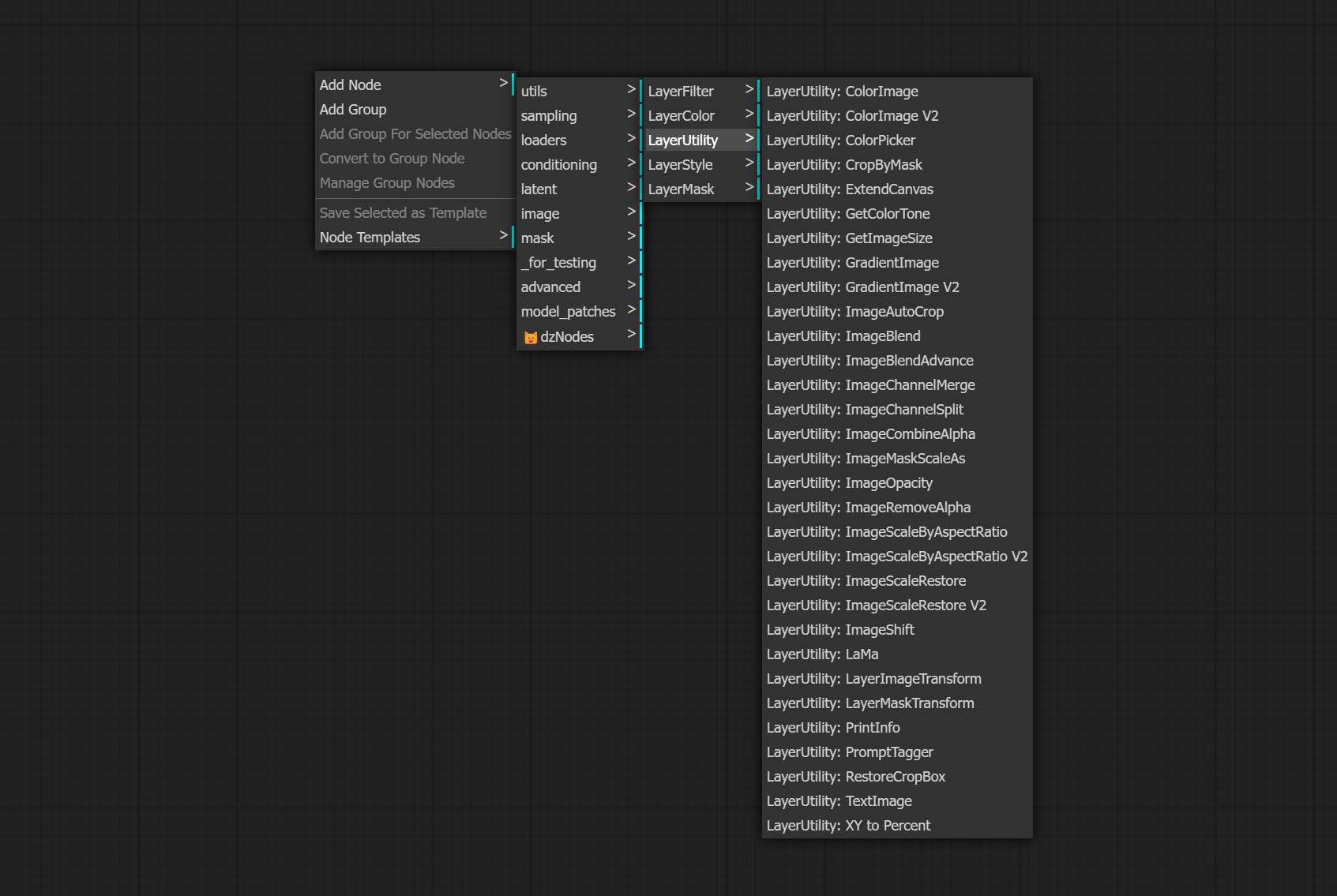

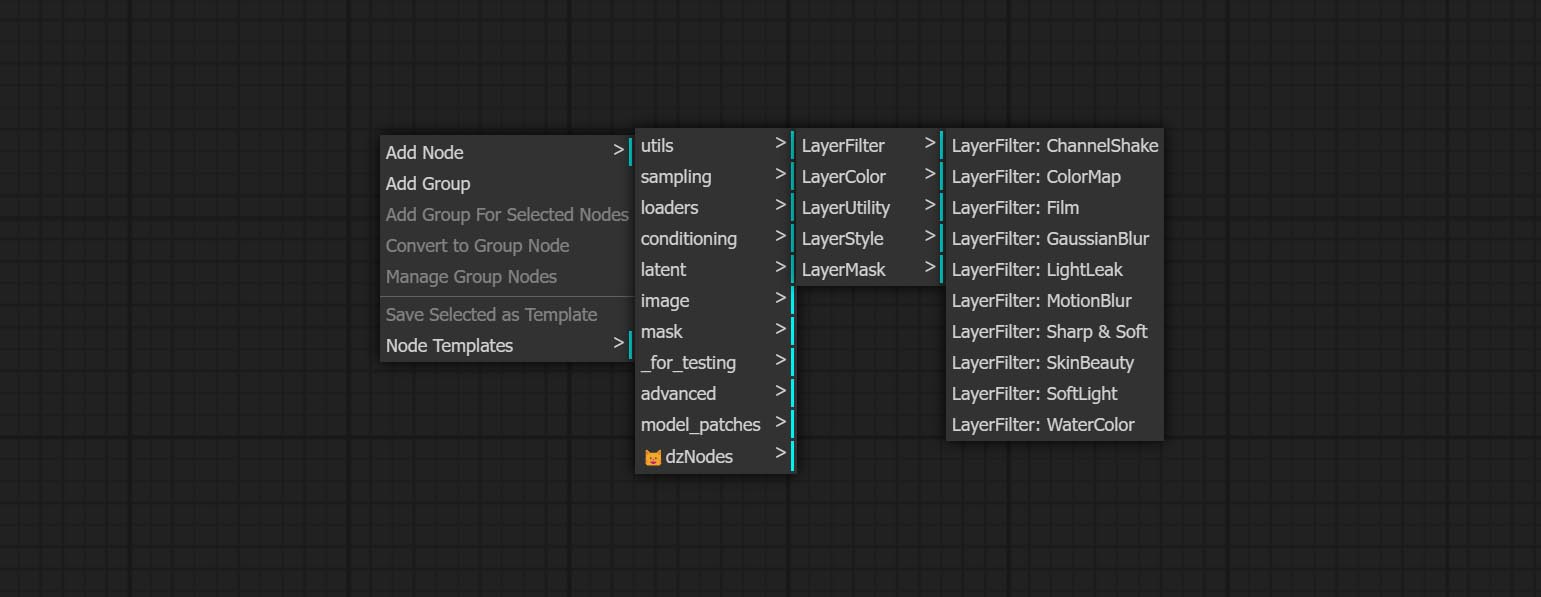

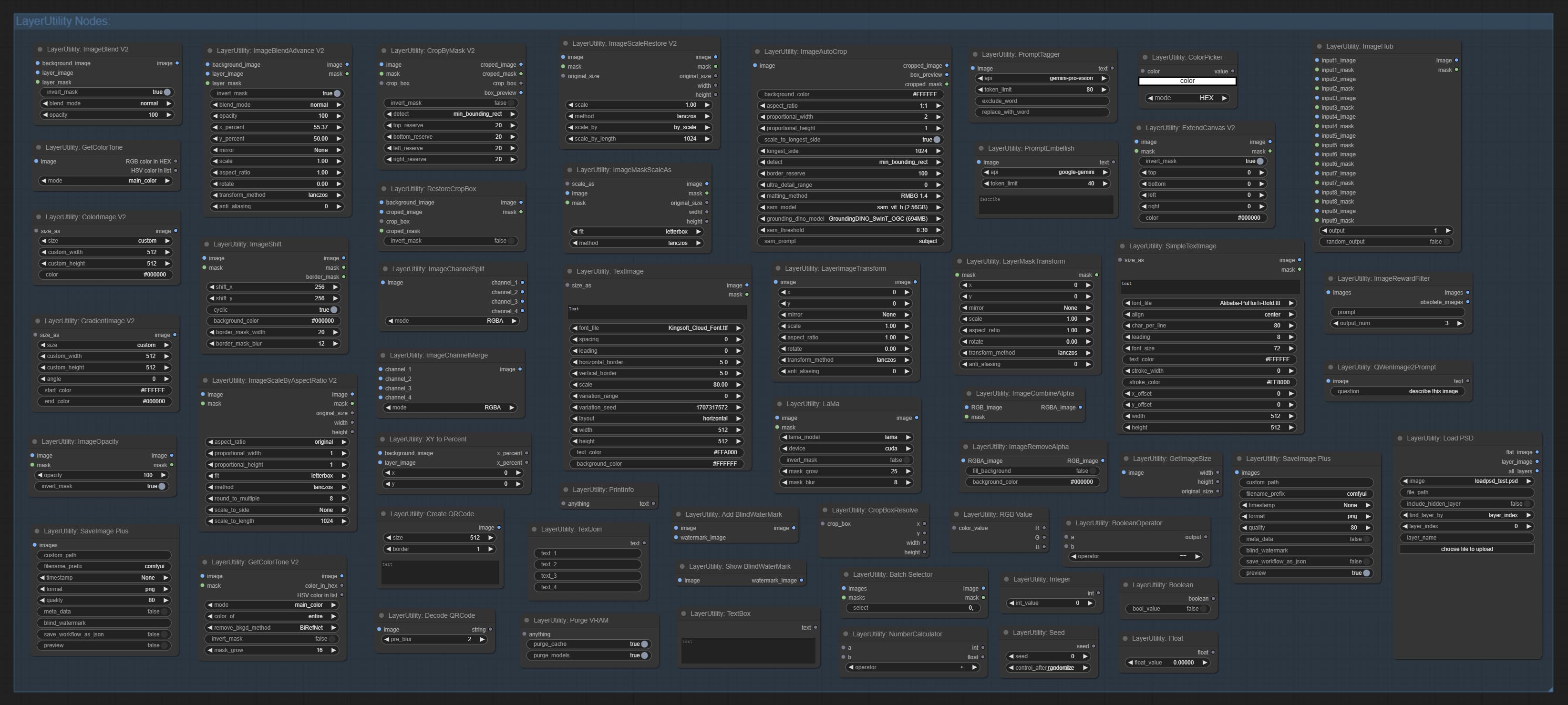

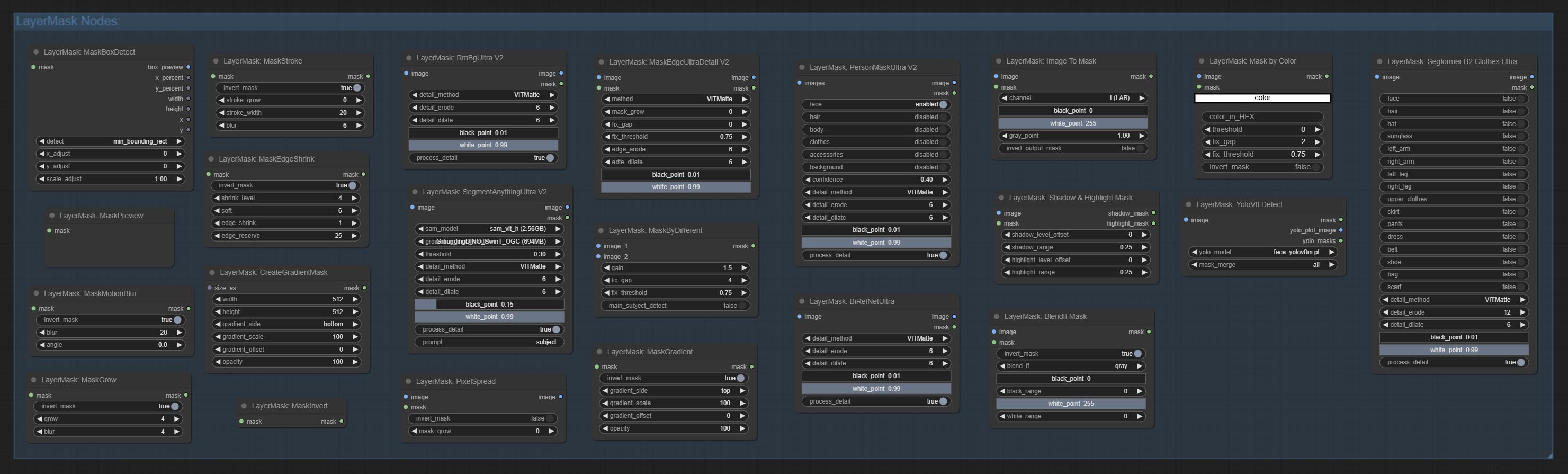

Nodes are divided into 5 groups according to their function: LayerStyle, LayerColor, LayerMask, LayerUtility and LayerFilter.

- LayerStyle nodes provide layer styles that mimic Adobe Photoshop.

- LayerColor nodes provide color adjustment functions.

- LayerMask nodes provide mask assistance tools.

- LayerUtility nodes provide layer composition tools and auxiliary nodes related to workflows.

- LayerFilter nodes provide image effect filters.

LayerStyle

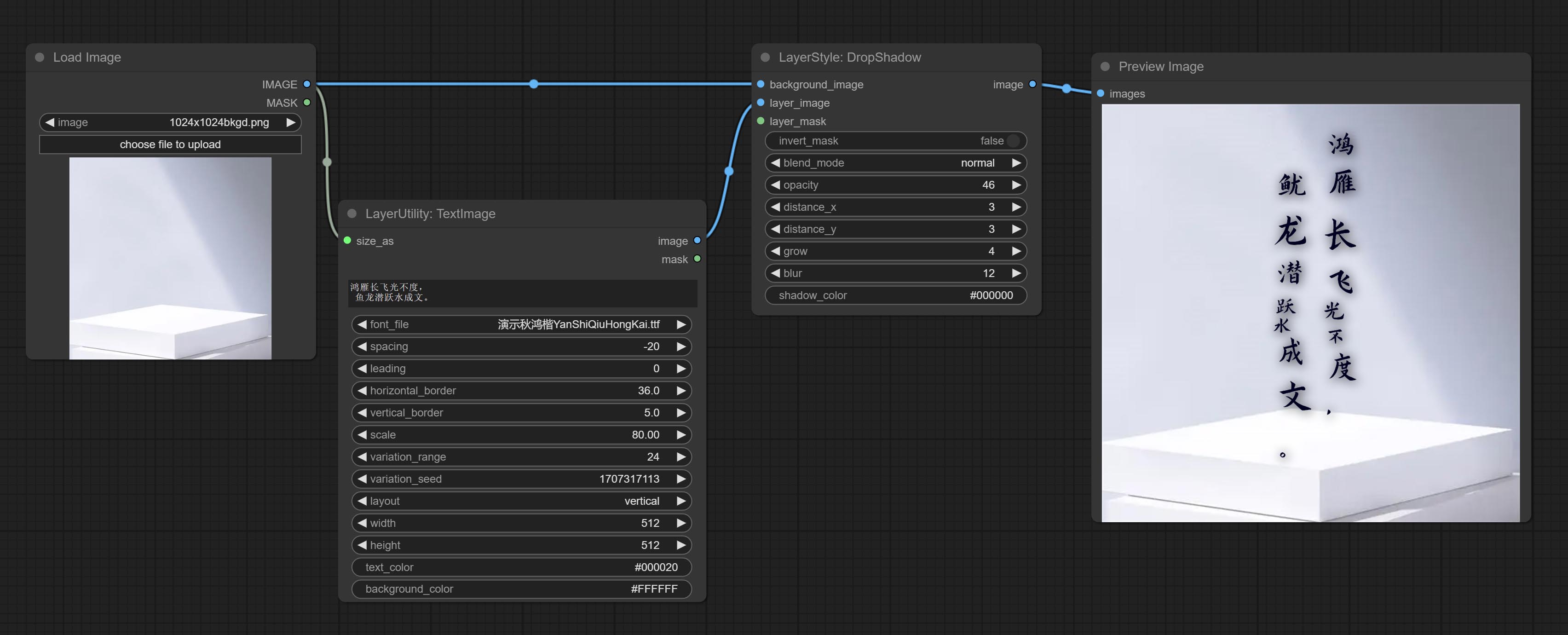

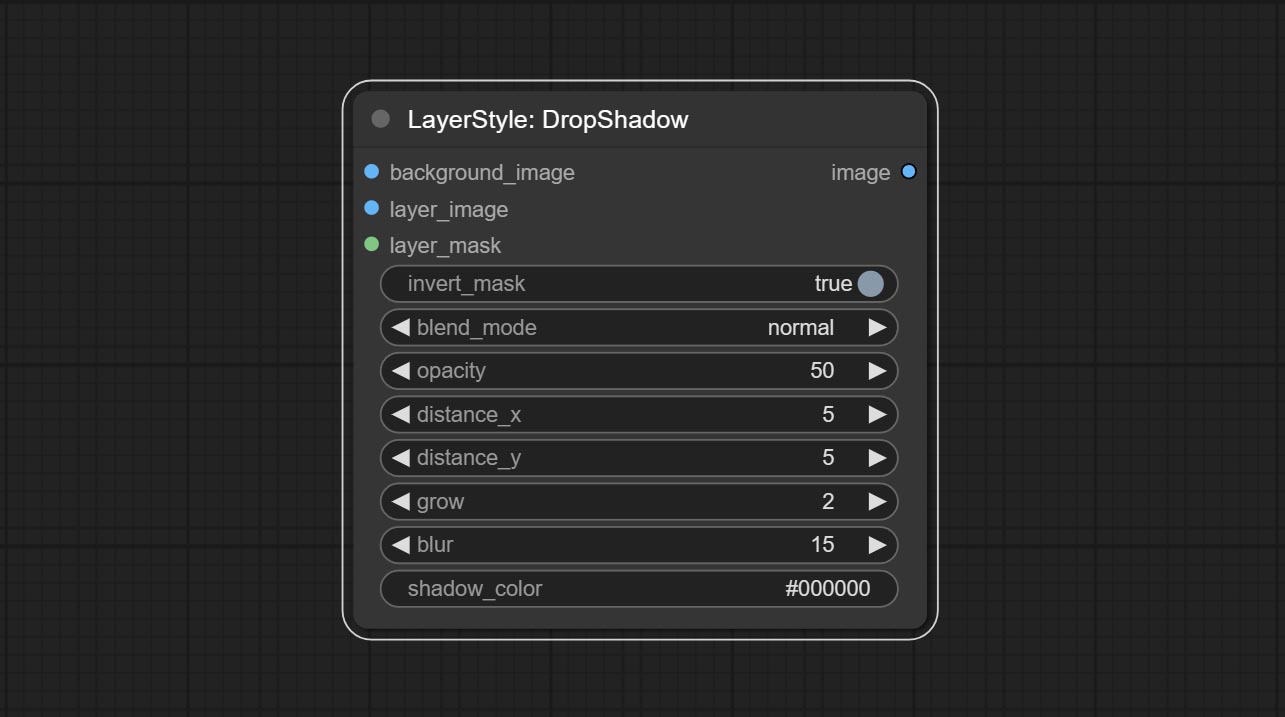

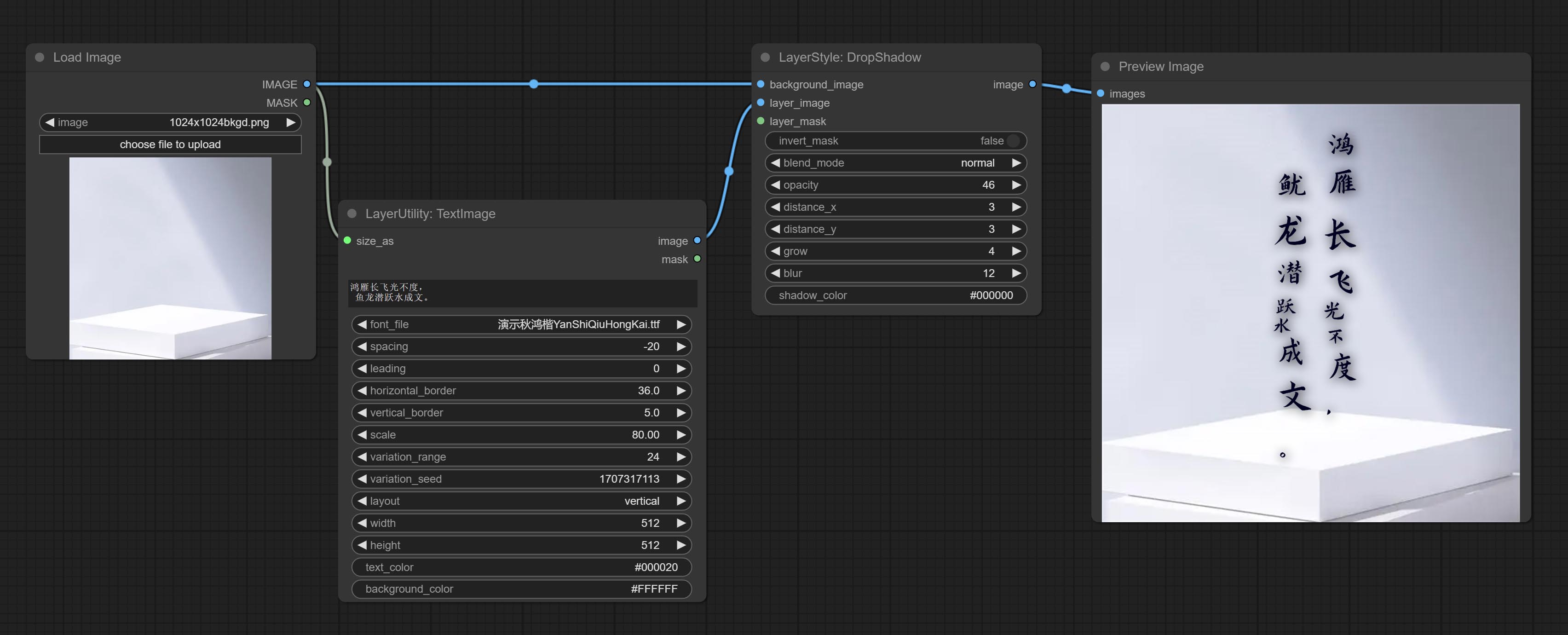

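DropShadow

Generate shadow.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image; the shadow is generated according to its shape.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the shadow.

- opacity: Opacity of the shadow.

- distance_x: Horizontal offset of the shadow.

- distance_y: Vertical offset of the shadow.

- grow: Shadow expansion amplitude.

- blur: Shadow blur level.

- shadow_color 4 : Shadow color.

- Notes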

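OuterGlow

Generate outer glow.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image; the glow is generated according to its shape.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the glow.

- opacity: Opacity of the glow.

- brightness: Luminance of the glow.

- glow_range: Range of the glow.

- blur: Blur of the glow.

- light_color 4 : Color of the center part of the glow.

- glow_color 4 : Color of the edge part of the glow.

- Notes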

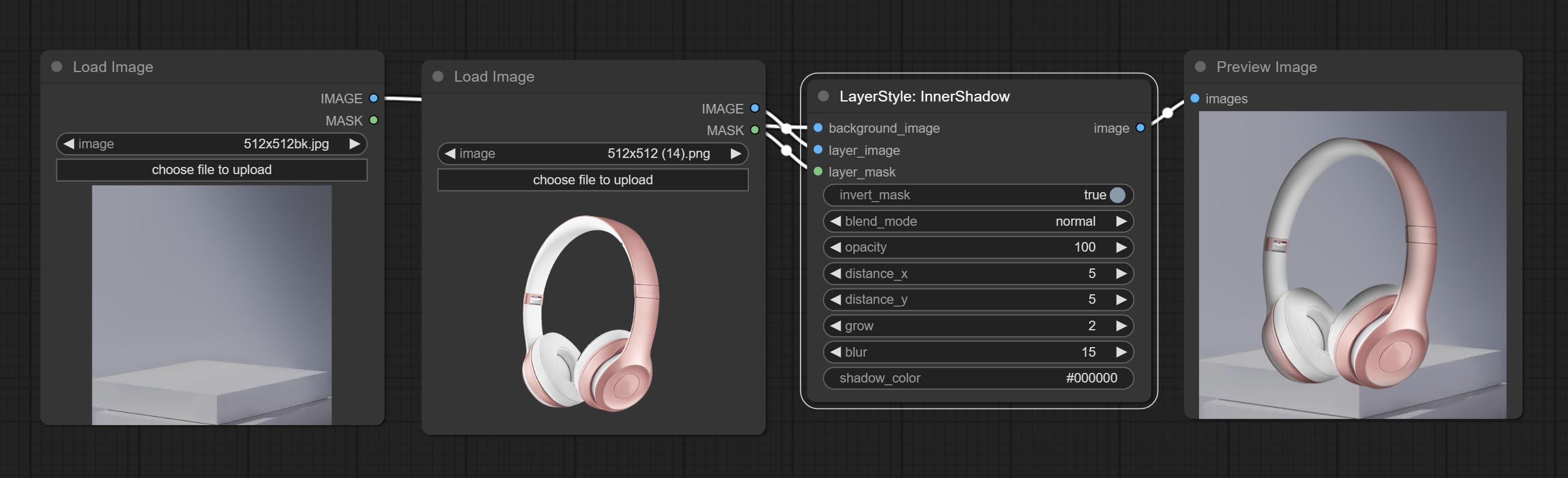

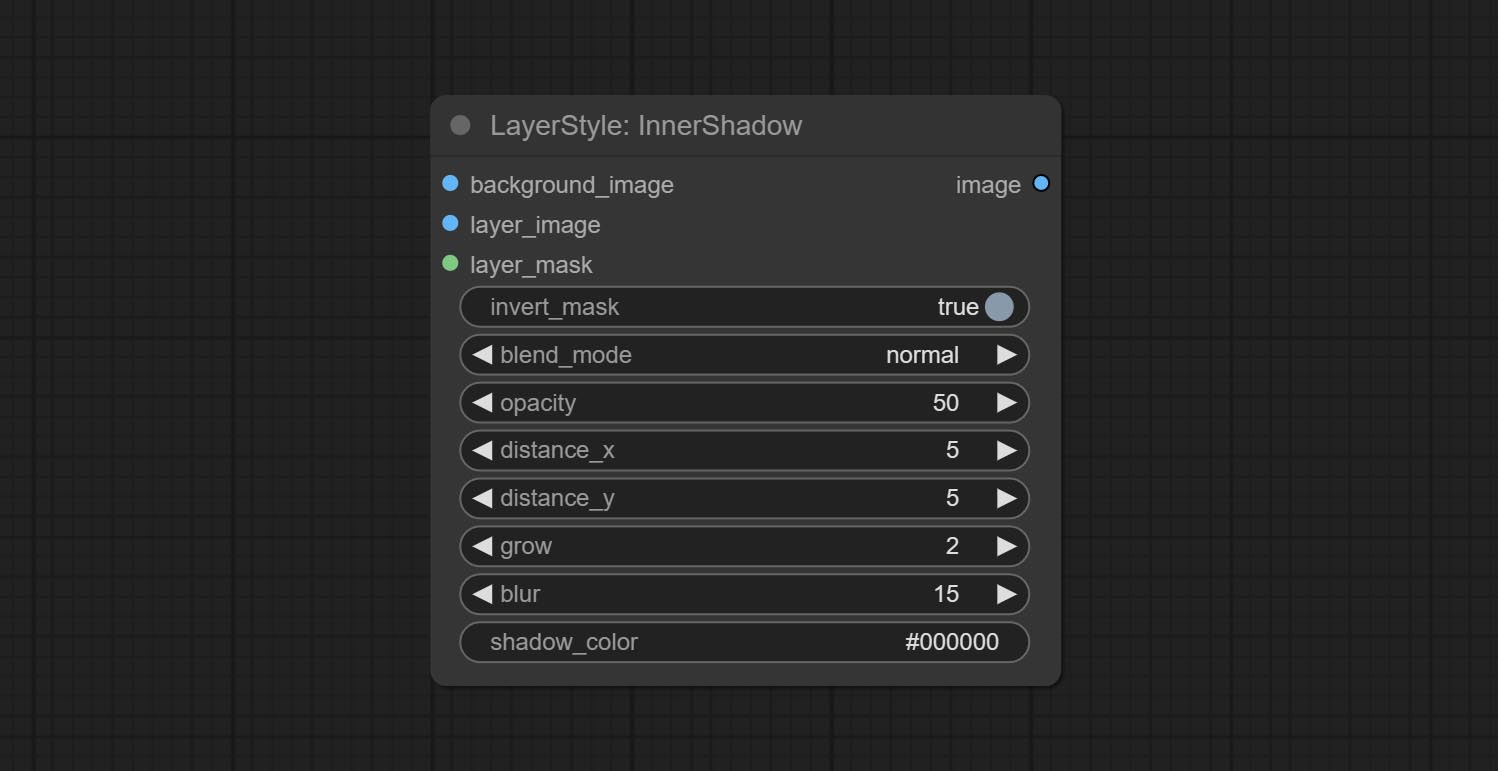

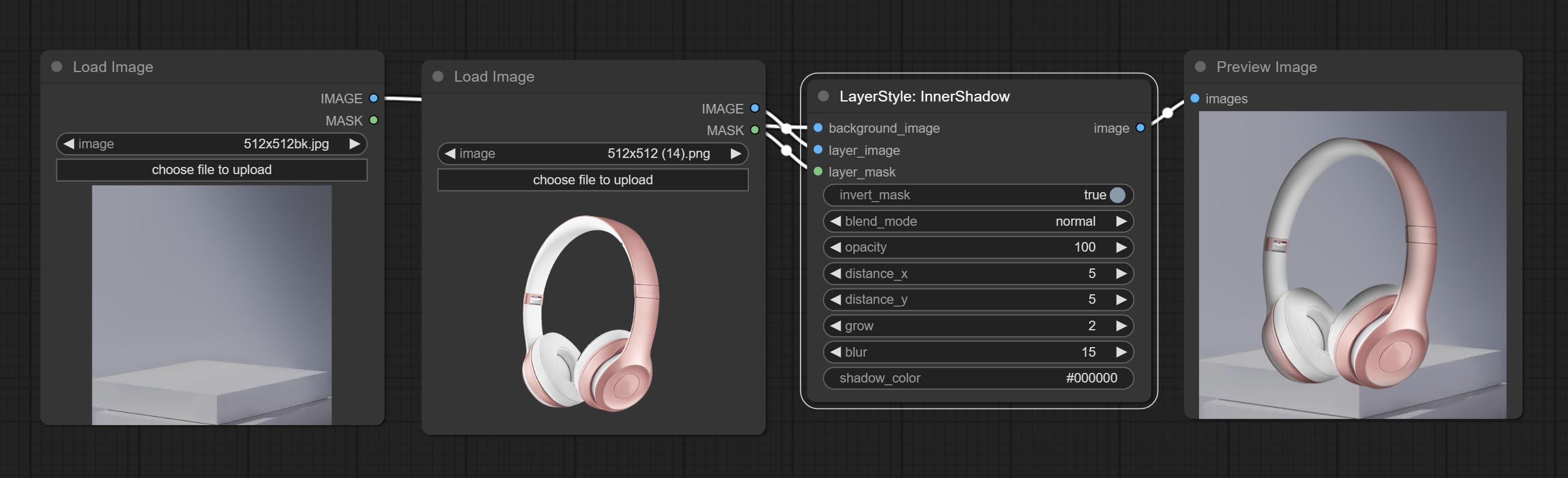

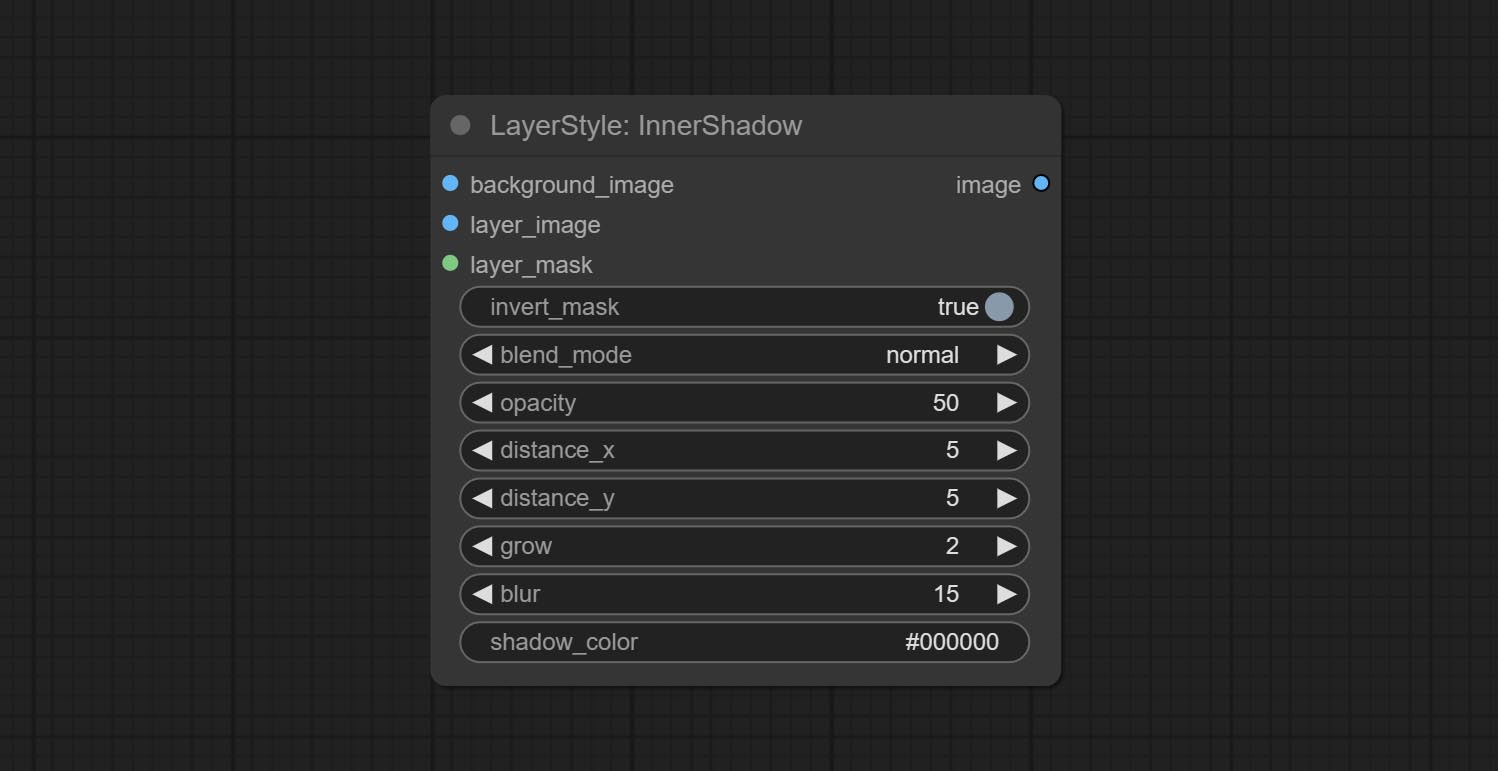

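InnerShadow

Generate inner shadow.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image; the shadow is generated according to its shape.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the shadow.

- opacity: Opacity of the shadow.

- distance_x: Horizontal offset of the shadow.

- distance_y: Vertical offset of the shadow.

- grow: Shadow expansion amplitude.

- blur: Shadow blur level.

- shadow_color 4 : Shadow color.

- Notes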

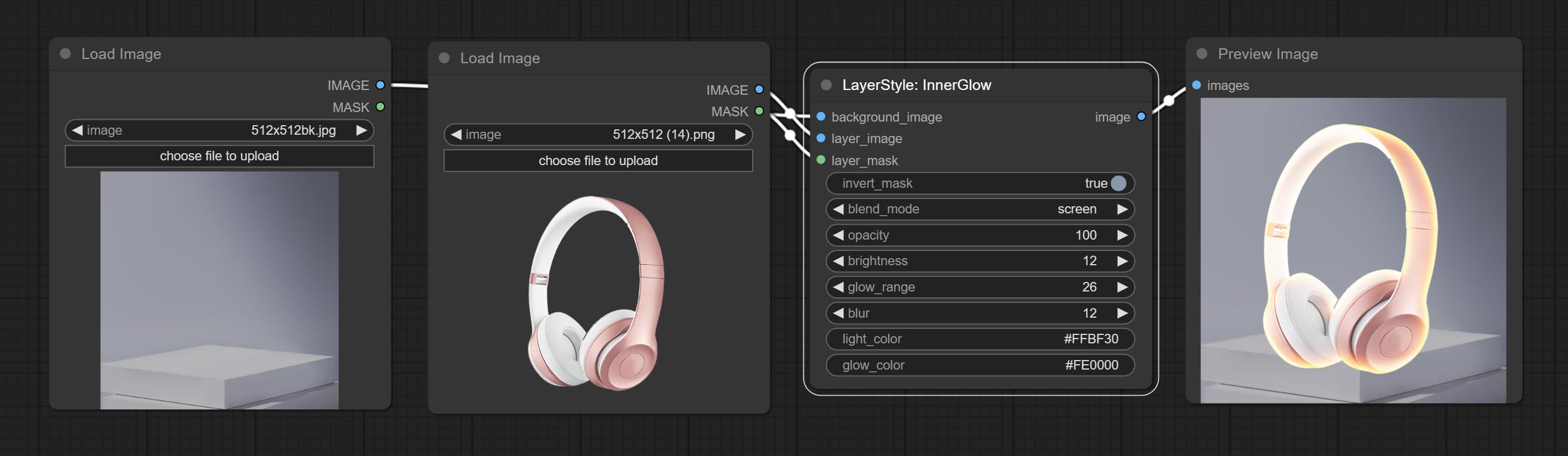

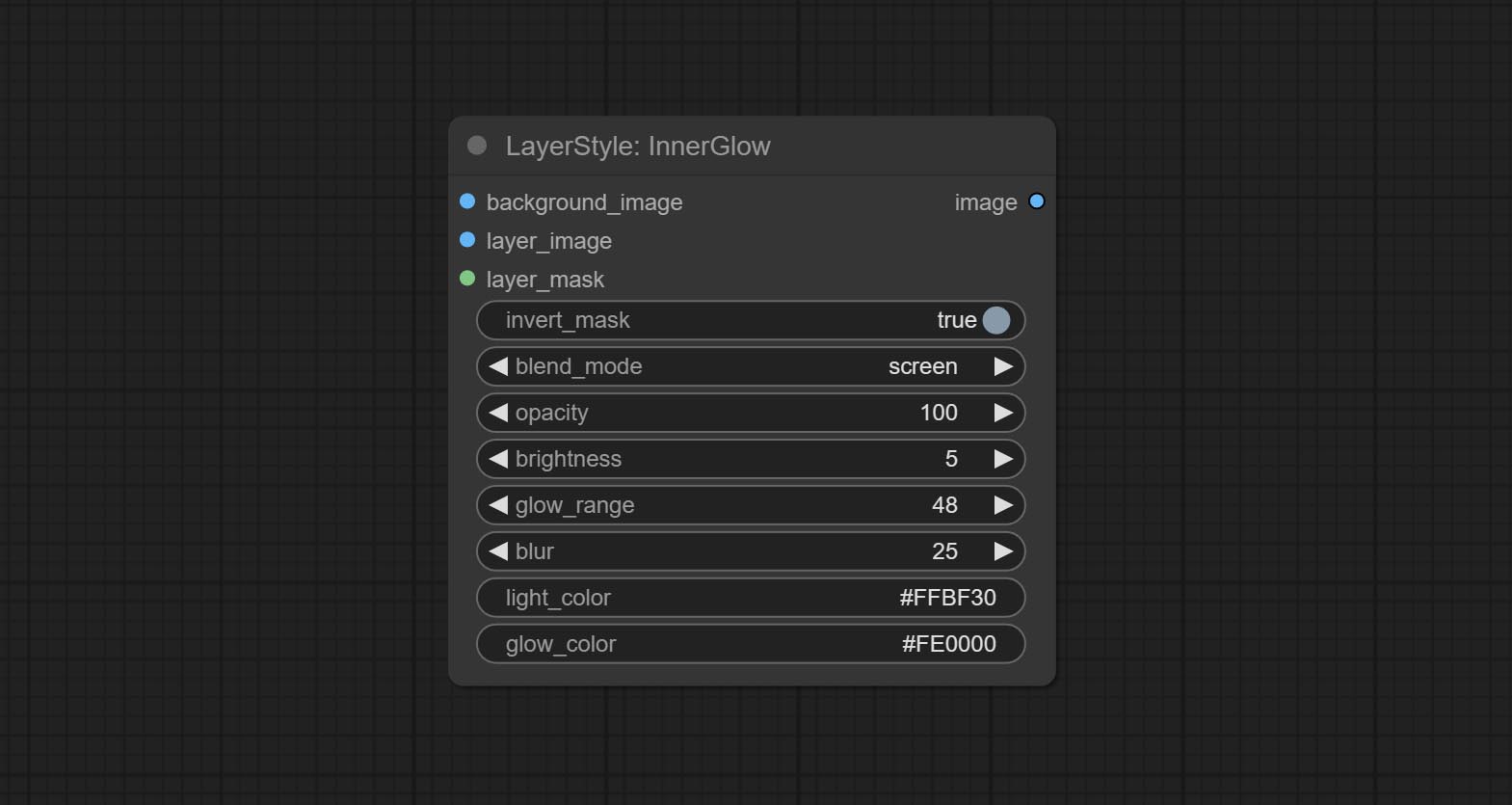

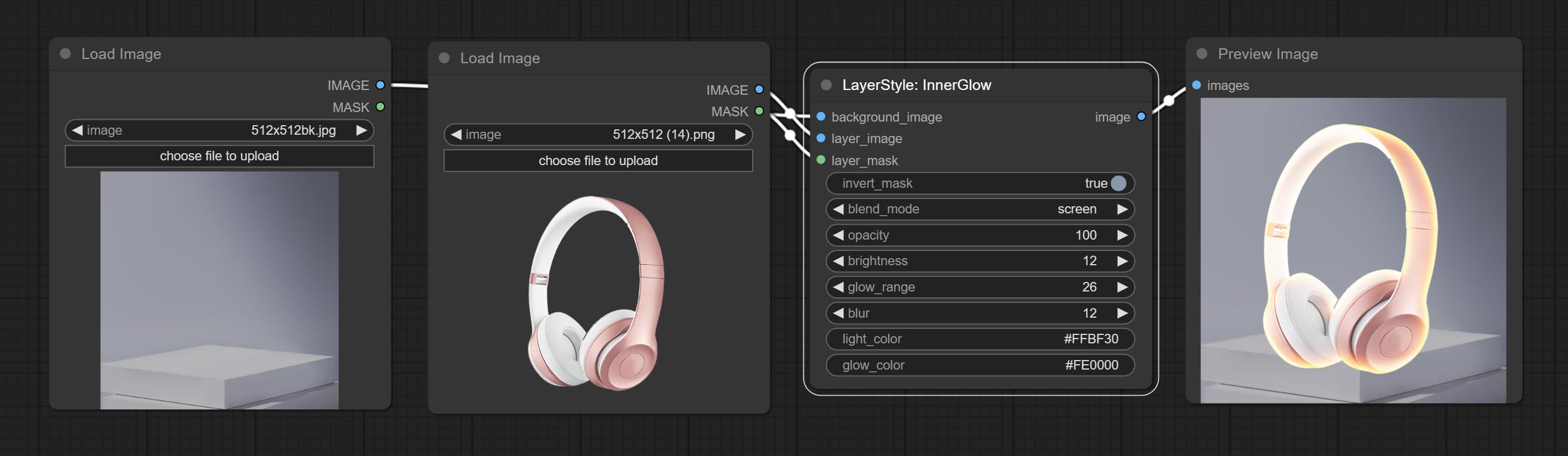

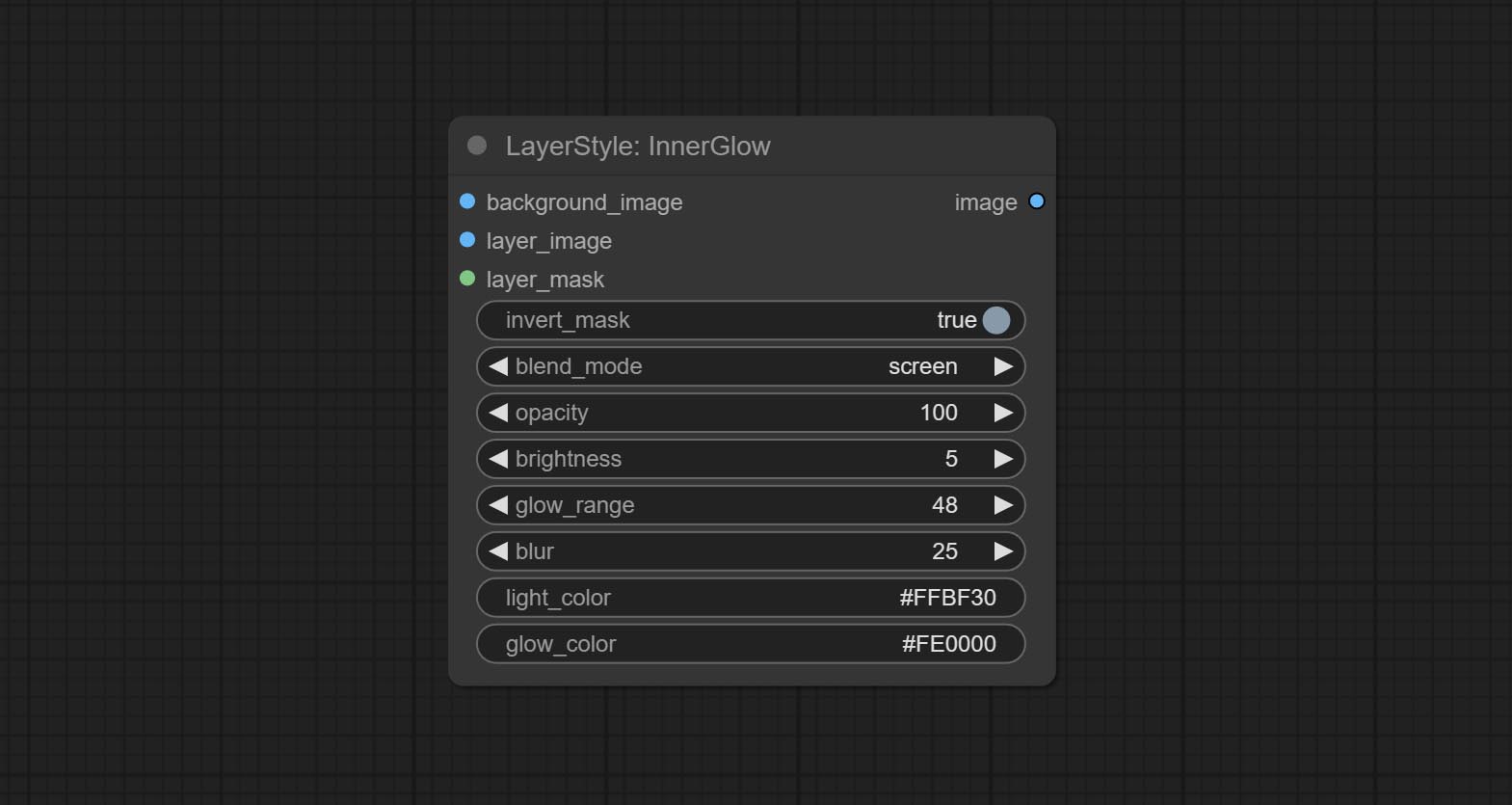

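InnerGlow

Generate inner glow.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image; the glow is generated according to its shape.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the glow.

- opacity: Opacity of the glow.

- brightness: Luminance of the glow.

- glow_range: Range of the glow.

- blur: Blur of the glow.

- light_color 4 : Color of the center part of the glow.

- glow_color 4 : Color of the edge part of the glow.

- Notes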

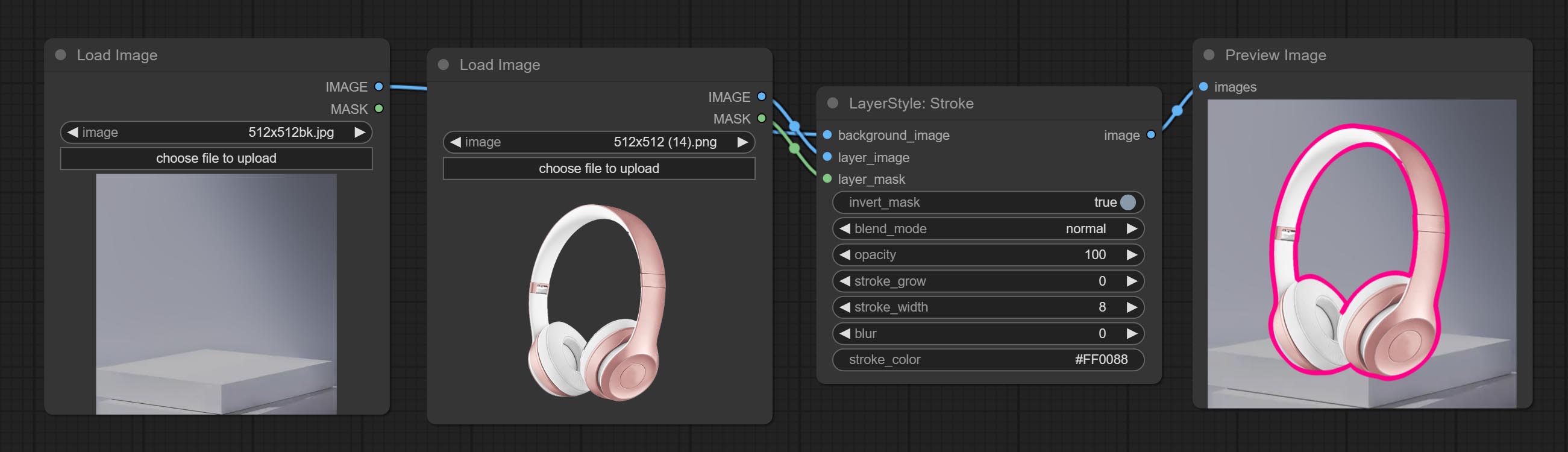

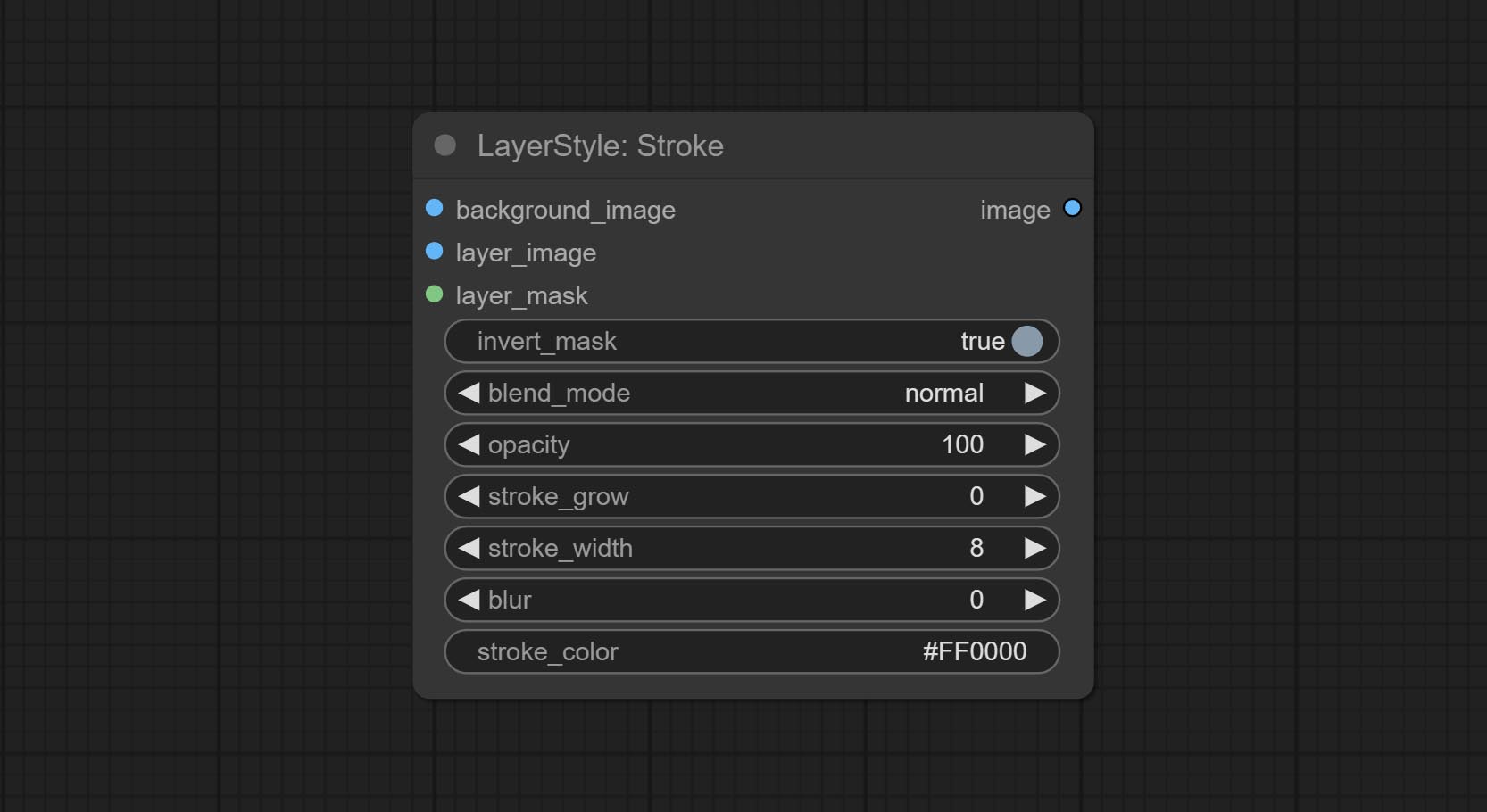

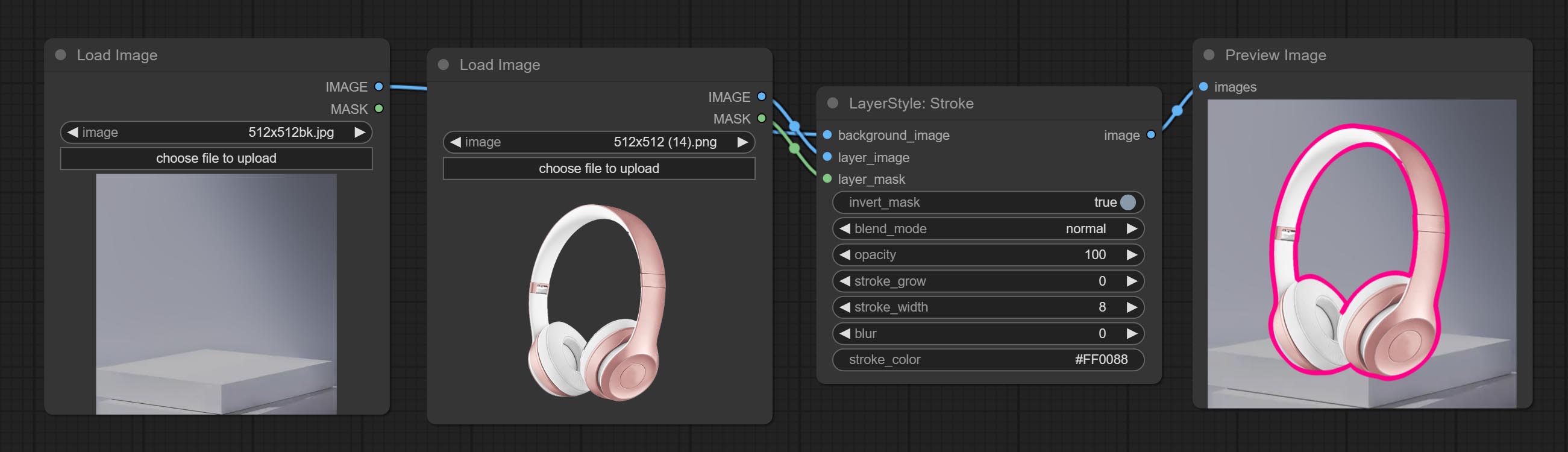

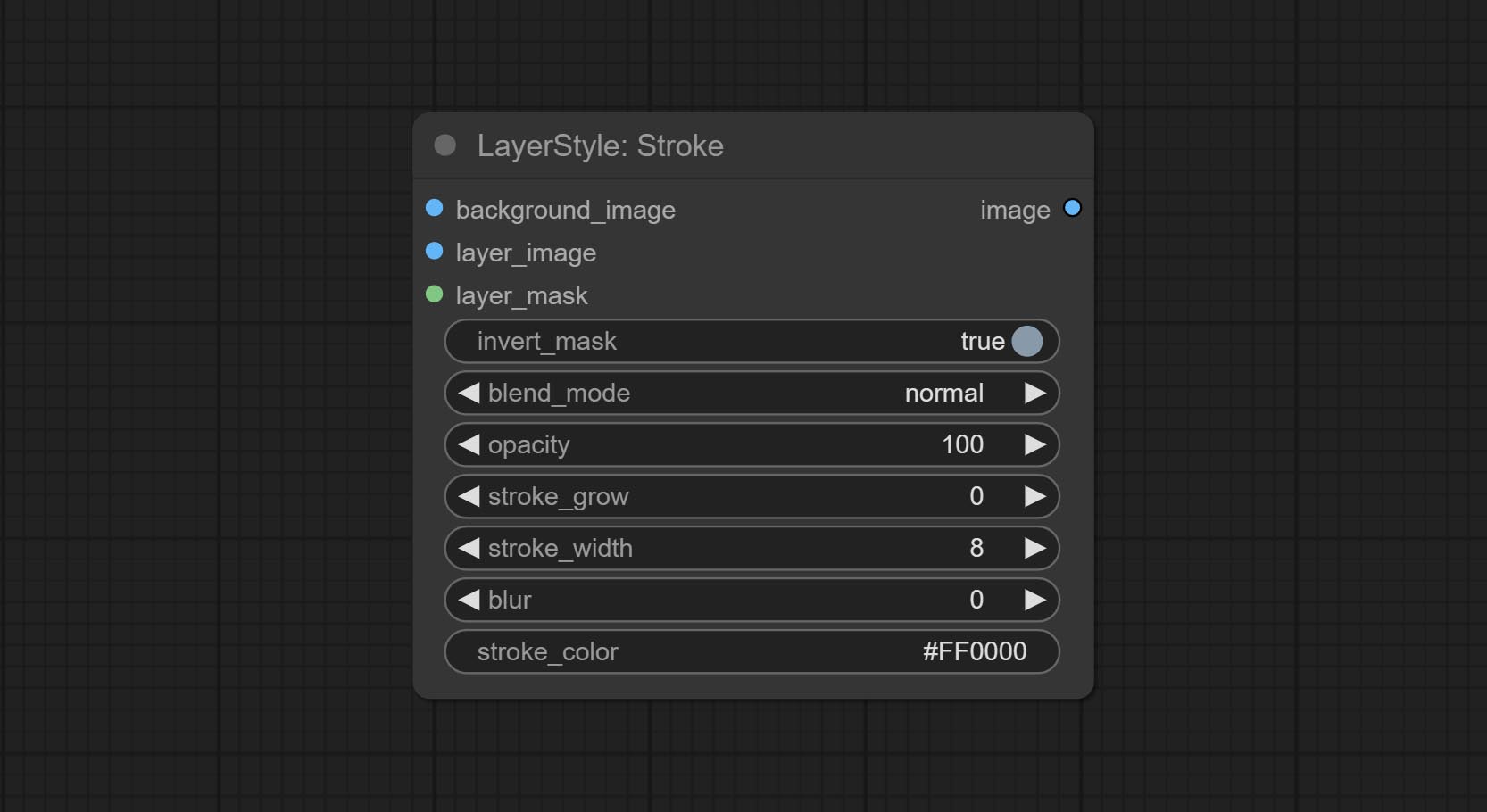

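Stroke

Generate a stroke for the layer.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image; the stroke is generated according to its shape.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the stroke.

- opacity: Opacity of the stroke.

- stroke_grow: Stroke expansion/contraction amplitude. Positive values expand the stroke and negative values contract it.

- stroke_width: Stroke width.

- blur: Blur of the stroke.

- stroke_color 4 : Stroke color, described in hexadecimal RGB format.

- Notes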

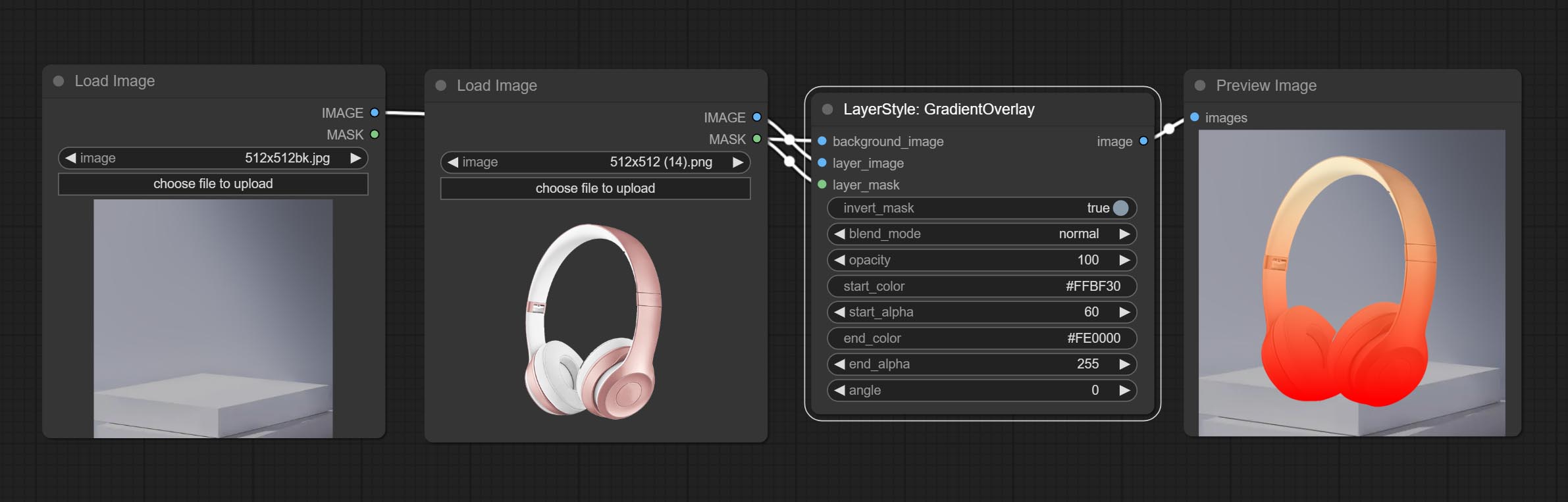

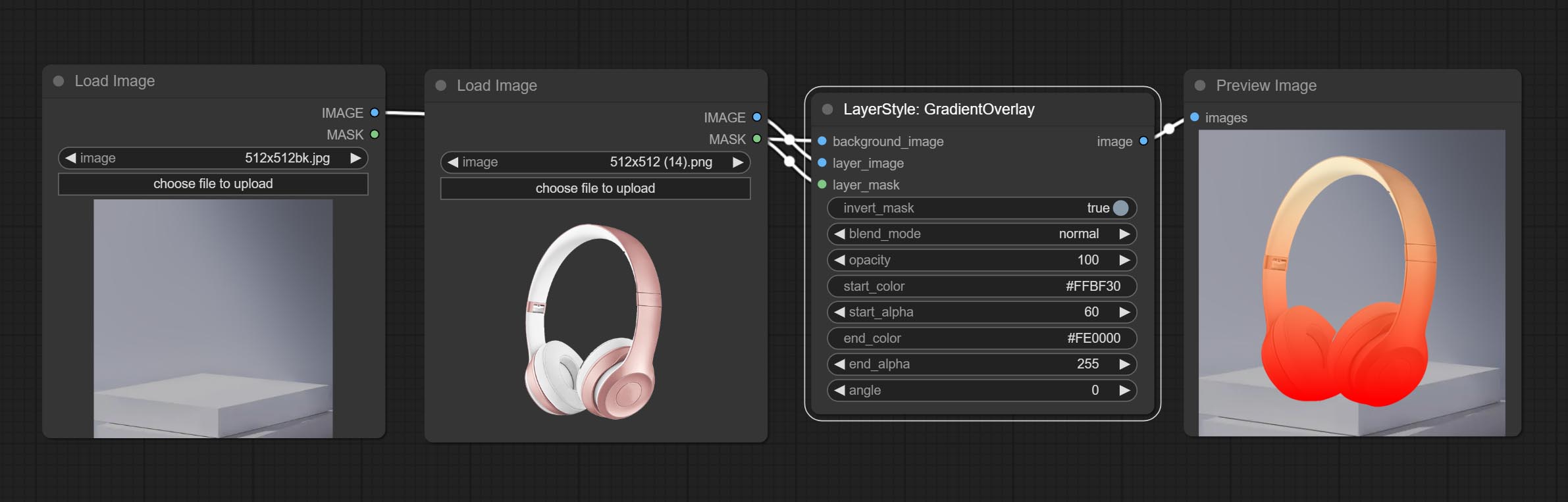

GradientOverlay

Generate gradient overlay.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of the gradient.

- opacity: Opacity of the gradient overlay.

- start_color: Color at the beginning of the gradient.

- start_alpha: Transparency at the beginning of the gradient.

- end_color: Color at the end of the gradient.

- end_alpha: Transparency at the end of the gradient.

- angle: Gradient rotation angle.

- Notes

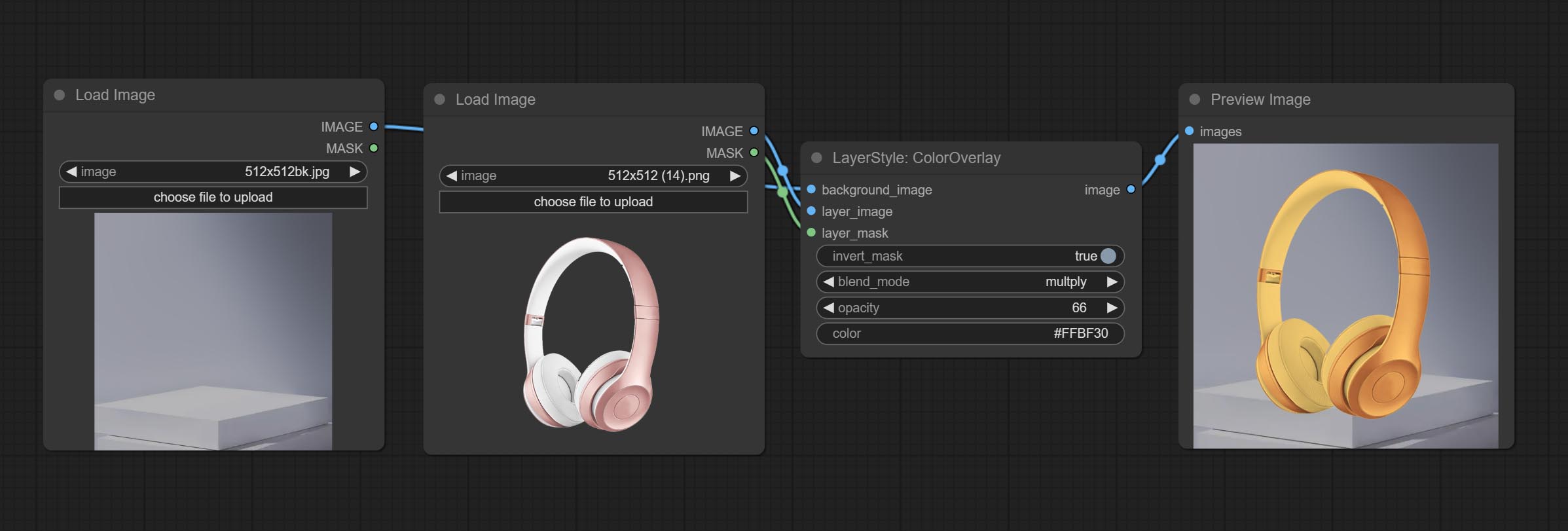

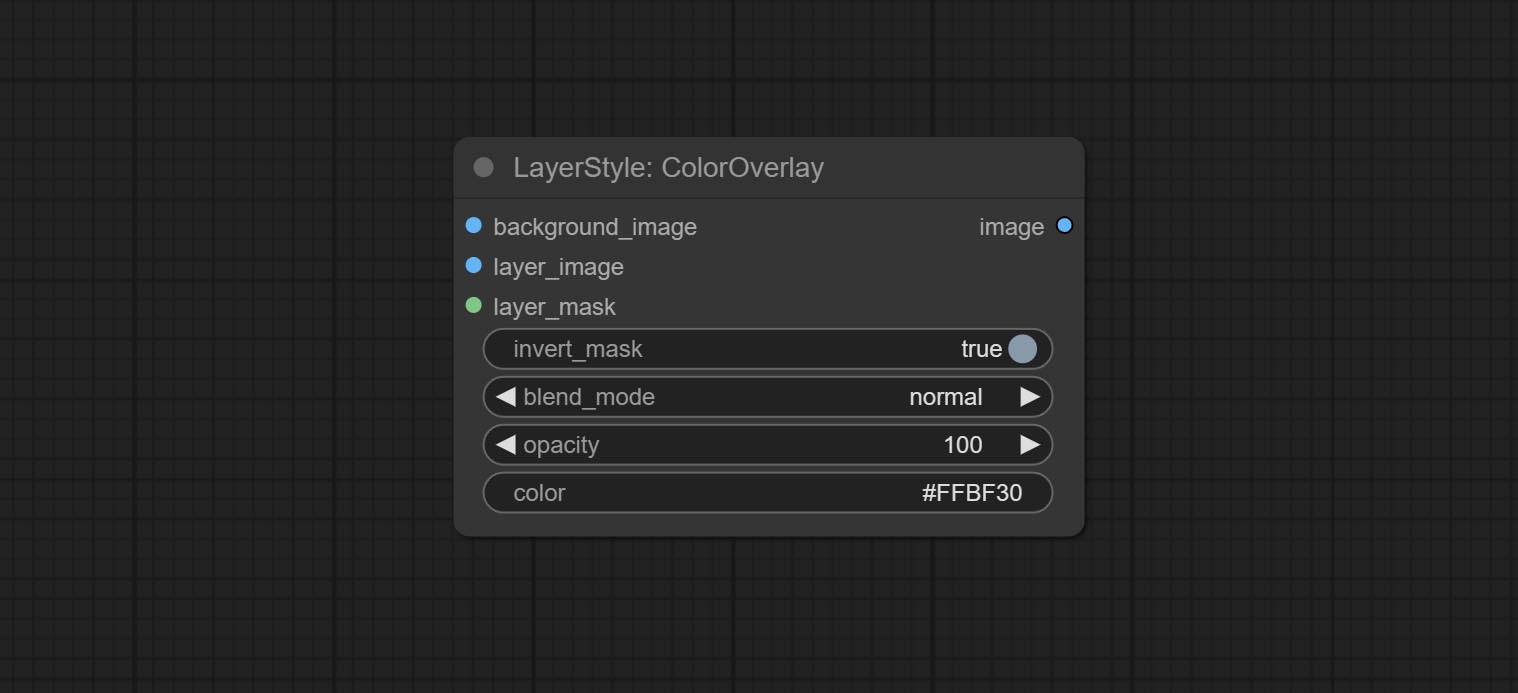

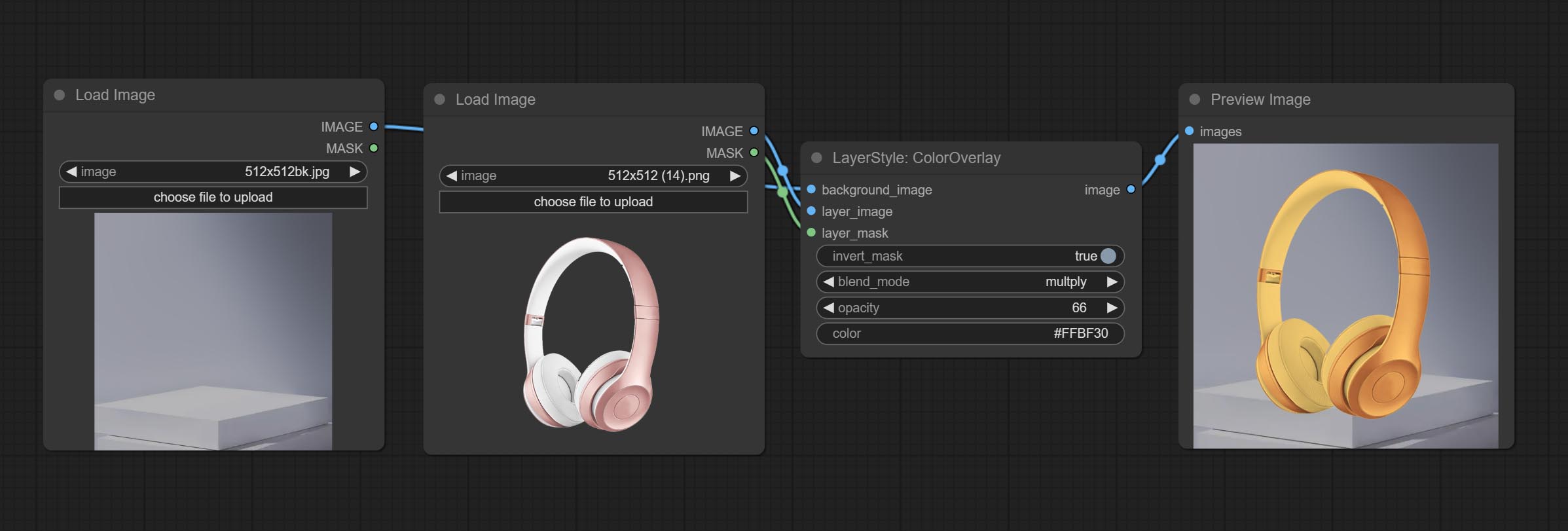

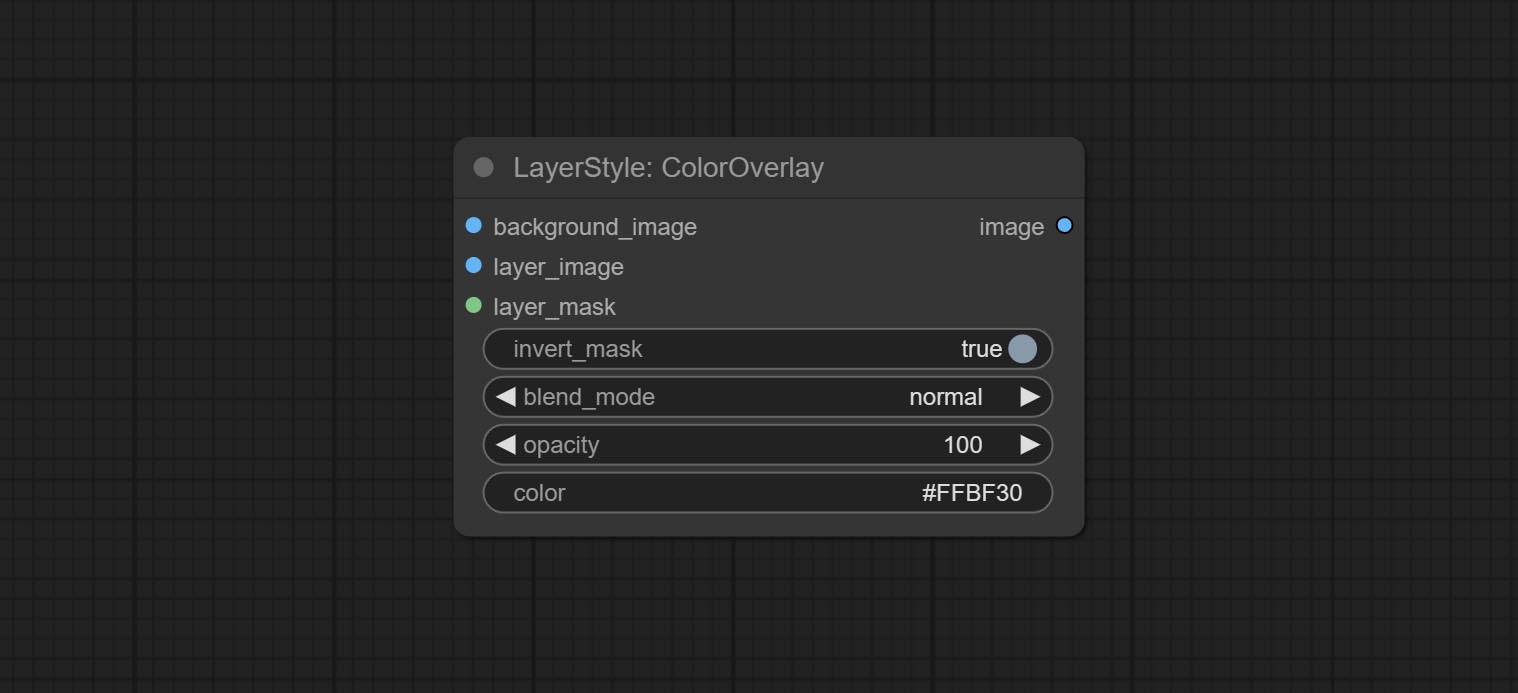

ColorOverlay

Generate color overlay

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode of color.

- opacity: Opacity of the color overlay.

- color: Color of overlay.

- Notes

LayerColor

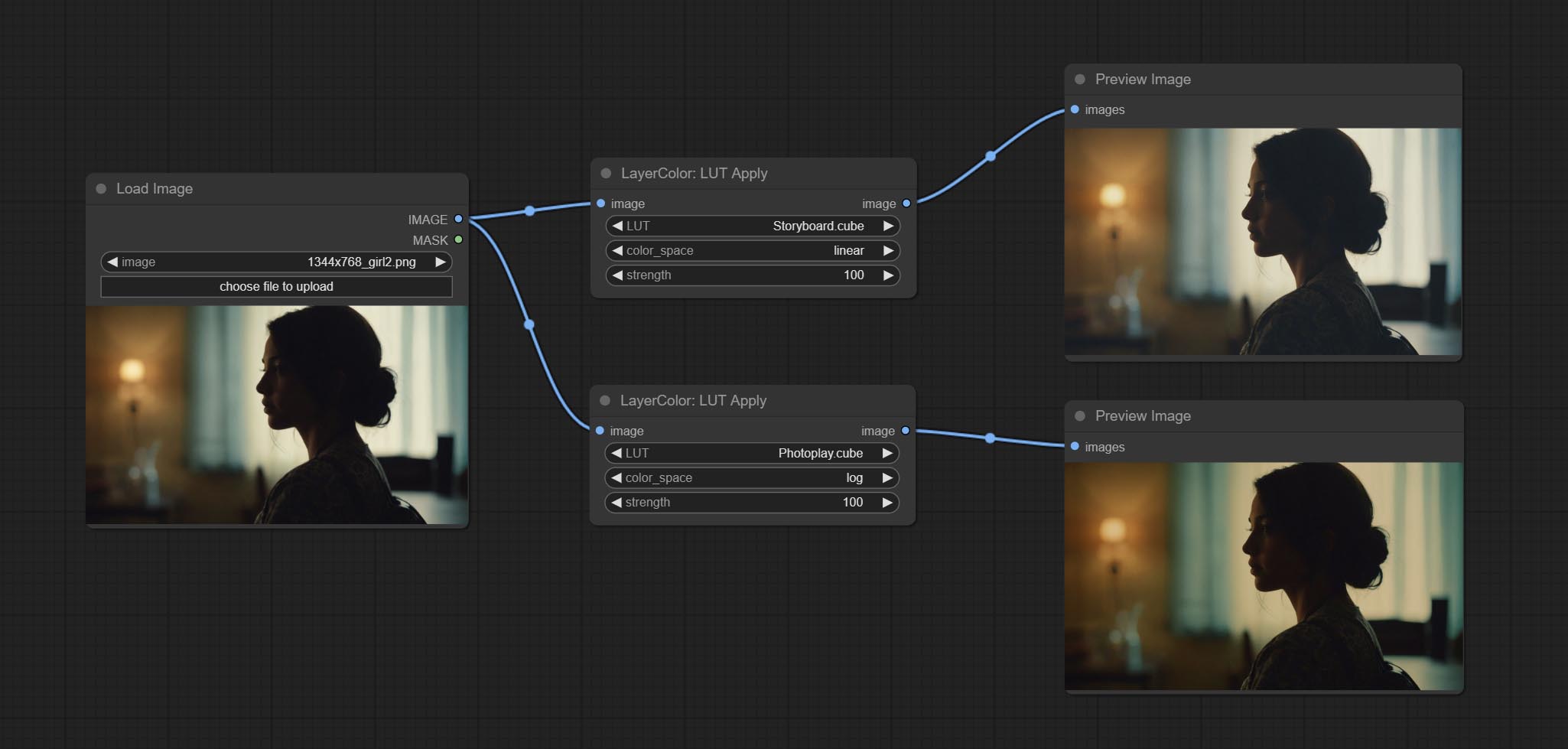

LUT Apply

Apply a LUT to the image. Only the .cube format is supported.

Node options:

- LUT * : A list of the available .cube files in the LUT folder; the selected LUT file will be applied to the image.

- color_space: For a regular image, select linear; for an image in the log color space, select log.

- strength: Range 0~100, LUT application strength. The larger the value, the greater the difference from the original image; the smaller the value, the closer it is to the original image.

* The LUT folder is defined in resource_dir.ini. This file is located in the root directory of the plug-in, and its default name is resource_dir.ini.example. To use this file for the first time, change the file suffix to .ini. Open it with a text editor, find the line starting with "LUT_dir=", and enter the custom folder path after "=". Multiple folders can be defined in resource_dir.ini, separated by commas, semicolons, or spaces. All .cube files in these folders are collected and displayed in the node list during ComfyUI initialization. If the folder set in the ini file is invalid, the LUT folder that comes with the plugin is used.
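
The separator rule for the "LUT_dir=" line can be illustrated with a minimal sketch. The function name `parse_lut_dirs` and the example paths are hypothetical and are not taken from the plugin's code; the sketch only shows one way to split the value on commas, semicolons, or spaces.

```python
import re


def parse_lut_dirs(ini_text):
    """Return the folder paths listed on the LUT_dir= line of ini_text.

    Hypothetical helper for illustration; not the plugin's own parser.
    """
    for line in ini_text.splitlines():
        line = line.strip()
        if line.startswith("LUT_dir="):
            value = line.split("=", 1)[1]
            # Folders may be separated by commas, semicolons, or spaces.
            return [part for part in re.split(r"[,;\s]+", value) if part]
    # No LUT_dir line found: the caller would fall back to the bundled folder.
    return []


print(parse_lut_dirs("LUT_dir=D:/luts, E:/film_luts;F:/more"))
```

Because spaces count as separators, a folder path that itself contains a space would be split in this sketch.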

AutoAdjust

Automatically adjust the brightness, contrast, and white balance of the image. Provide some manual adjustment options to compensate for the shortcomings of automatic adjustment.

Node Options:

- strength: Strength of adjust. The larger the value, the greater the difference from the original image.

- brightness: Manual adjustment of brightness.

- contrast: Manual adjustment of contrast.

- saturation: Manual adjustment of saturation.

- red: Manual adjustment of the red channel.

- green: Manual adjustment of the green channel.

- blue: Manual adjustment of the blue channel.

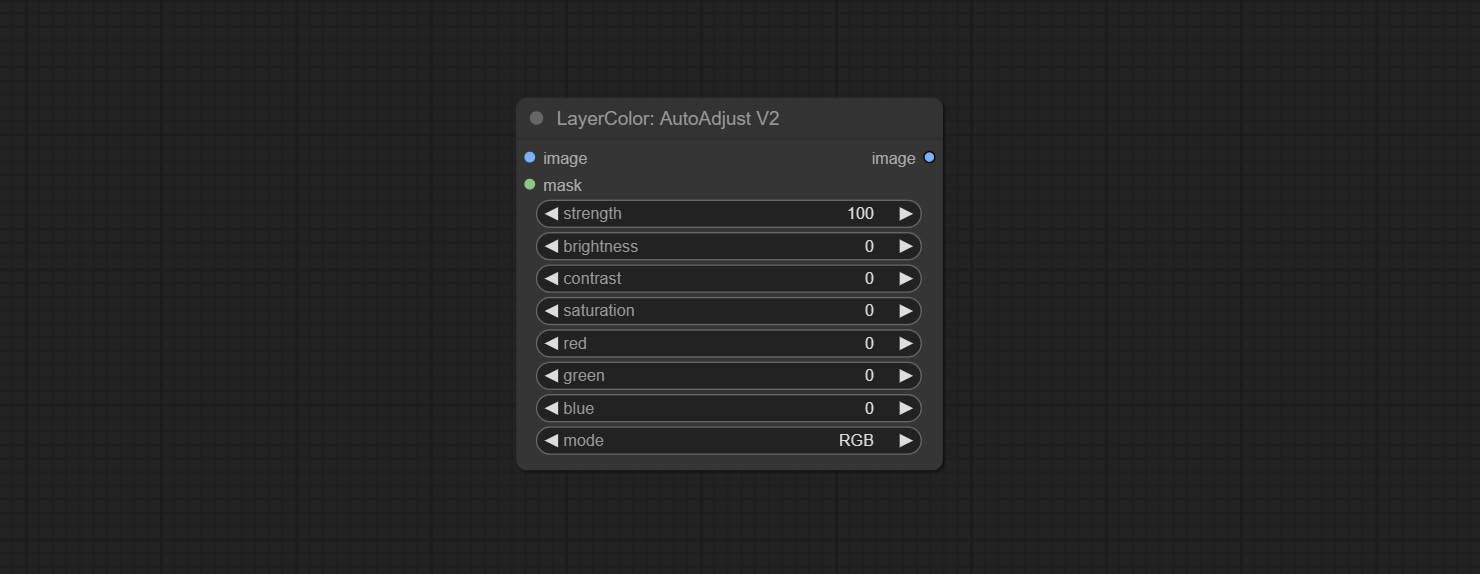

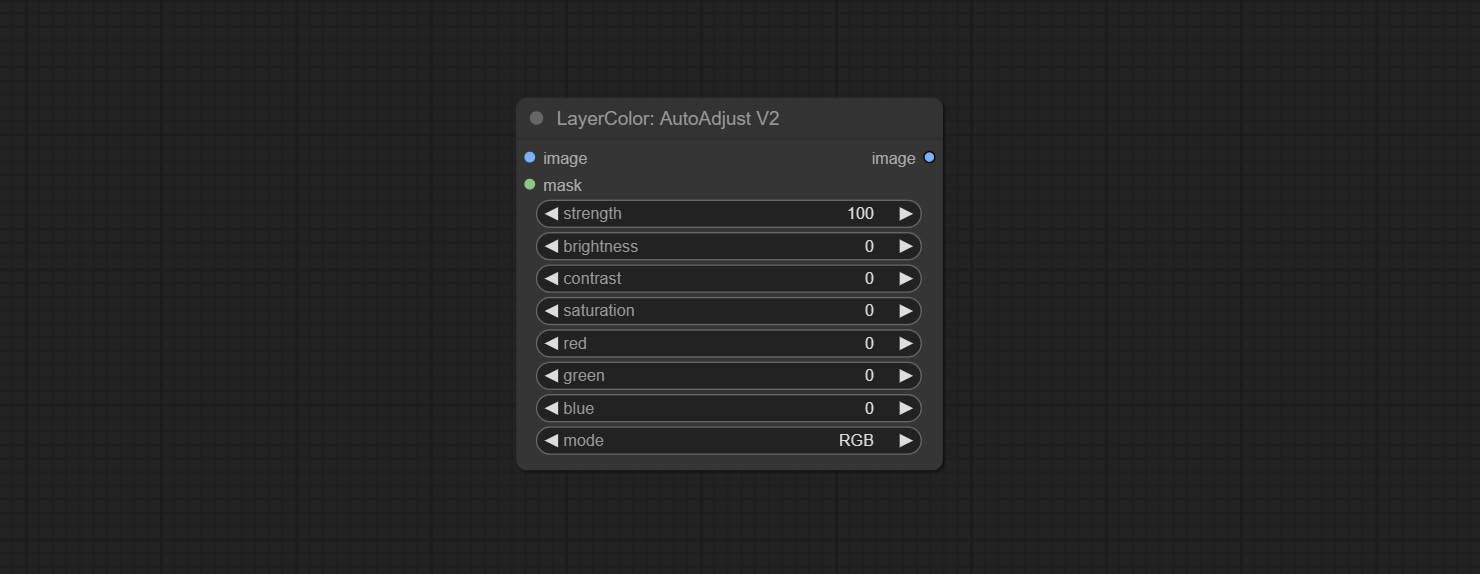

AutoAdjustV2

On the basis of AutoAdjust, add mask input and only calculate the content inside the mask for automatic color adjustment. Add multiple automatic adjustment modes.

The following changes have been made based on AutoAdjust:

- mask: Optional mask input.

- mode: Automatic adjustment mode. "RGB" adjusts automatically according to the three RGB channels, "lum + sat" adjusts according to luminance and saturation, "luminance" adjusts according to luminance, "saturation" adjusts according to saturation, and "mono" adjusts according to grayscale and outputs a monochrome image.

AutoBrightness

Automatically adjust an image that is too dark or too bright to a moderate brightness. Mask input is supported: when a mask is input, only the masked content is used as the data source for the automatic brightness. The output is still the whole adjusted image.

Node options:

- strength: Automatically adjust the intensity of the brightness. The larger the value, the more biased towards the middle value, the greater the difference from the original picture.

- saturation: Color saturation. Changes in brightness usually result in changes in color saturation, where appropriate compensation can be adjusted.

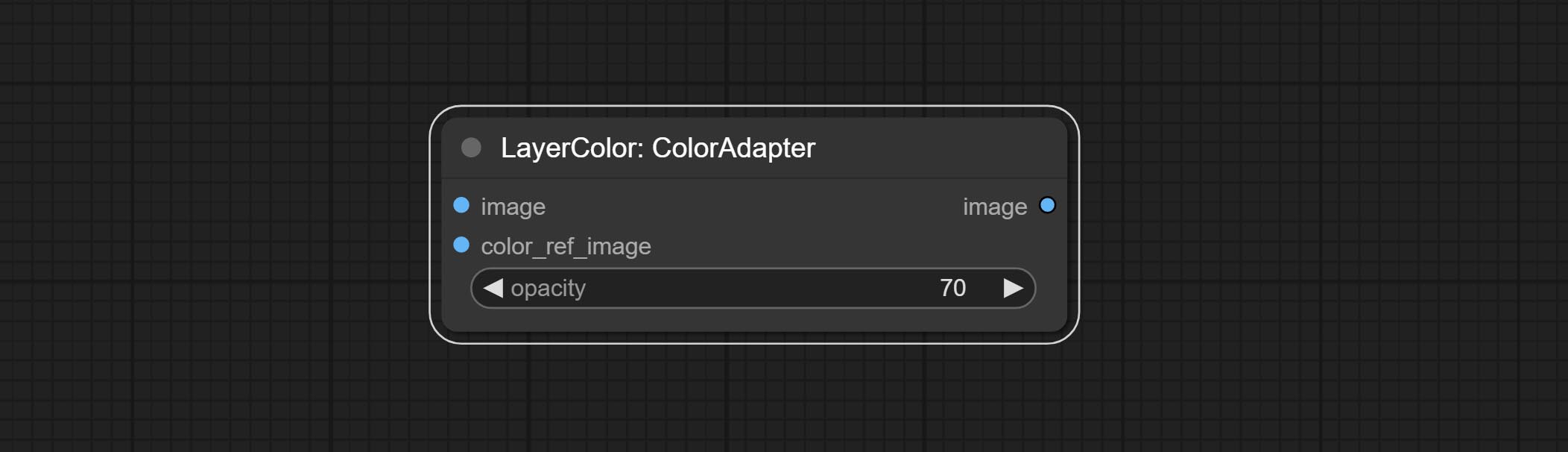

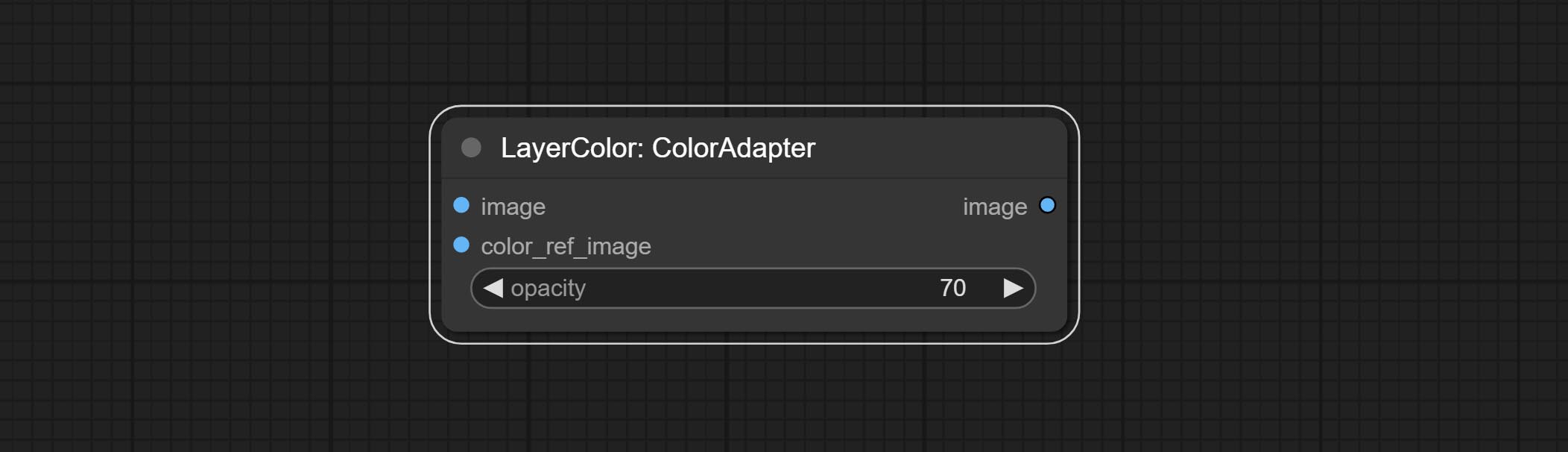

ColorAdapter

Auto adjust the color tone of the image to resemble the reference image.

Node options:

- opacity: The opacity of an image after adjusting its color tone.

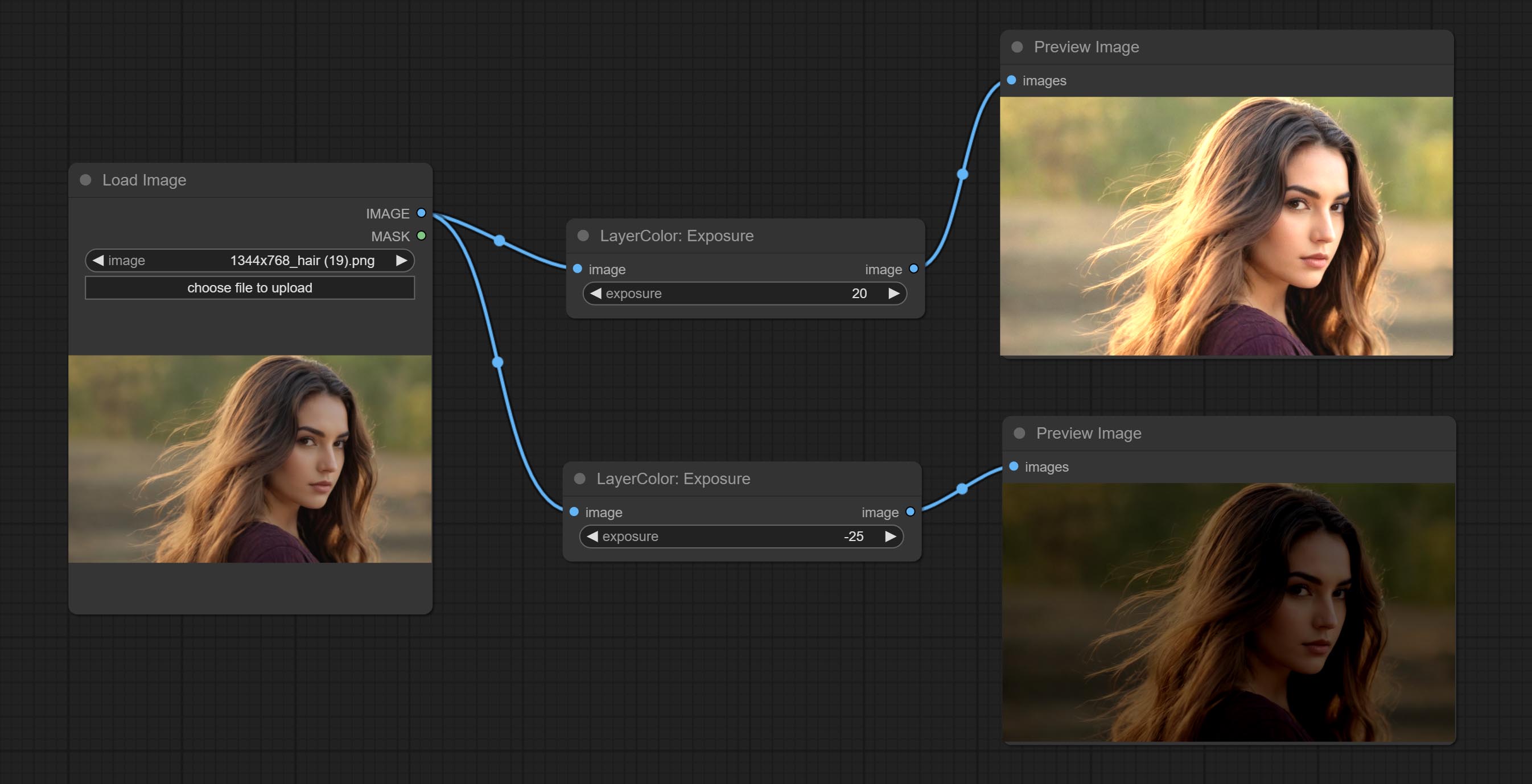

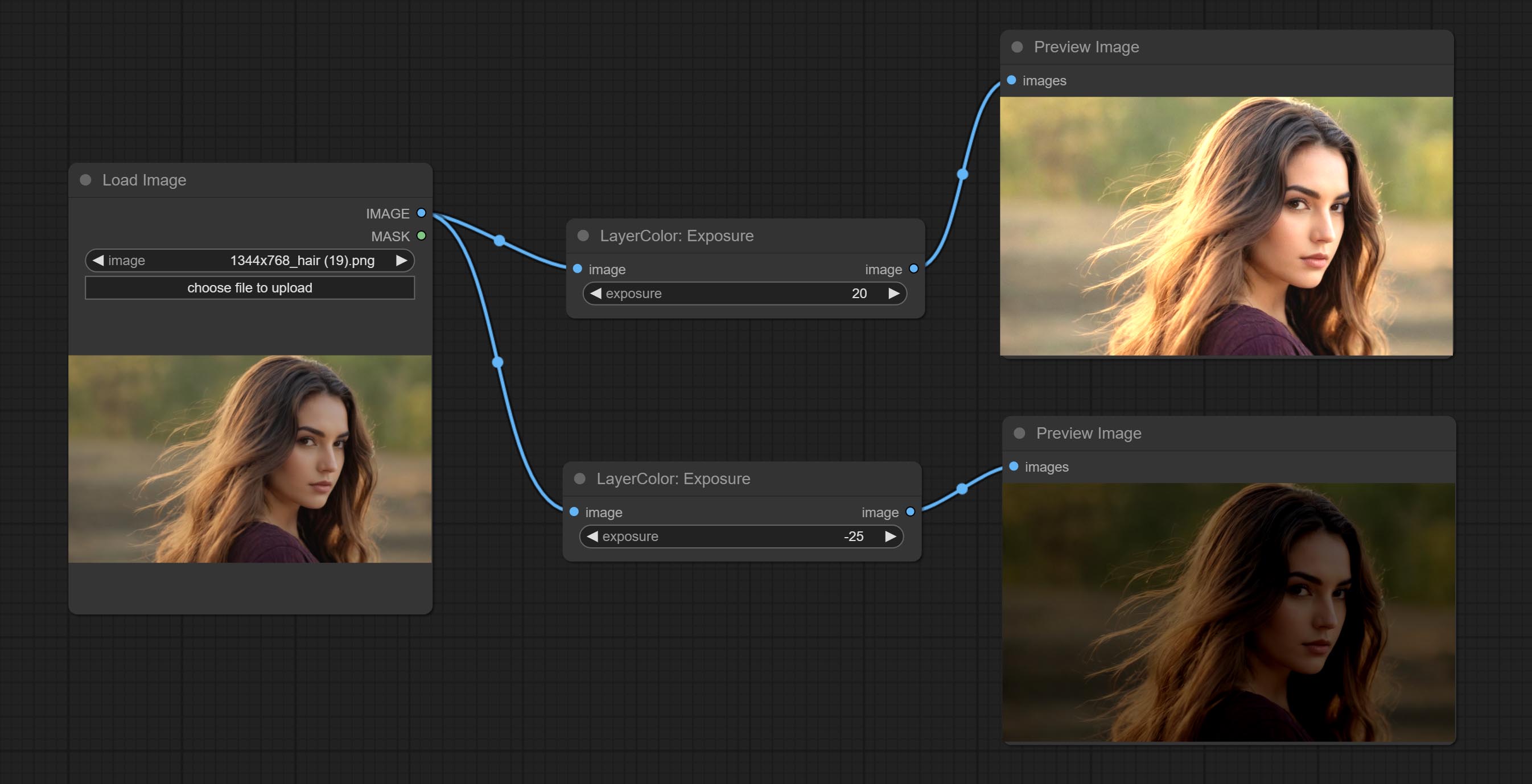

노출

Change the exposure of the image.

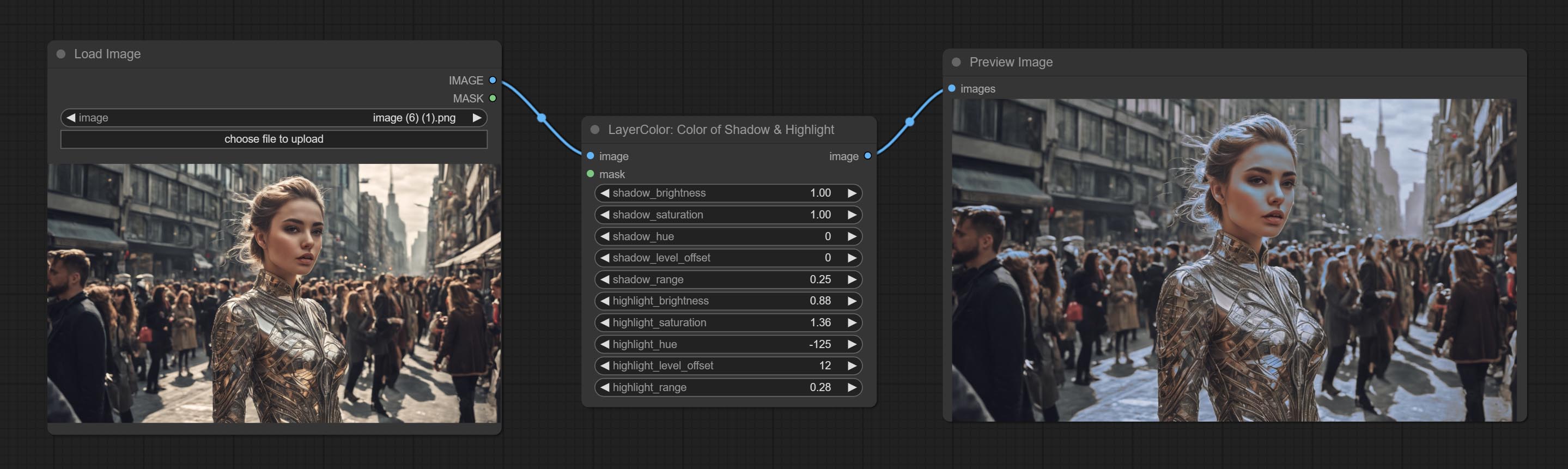

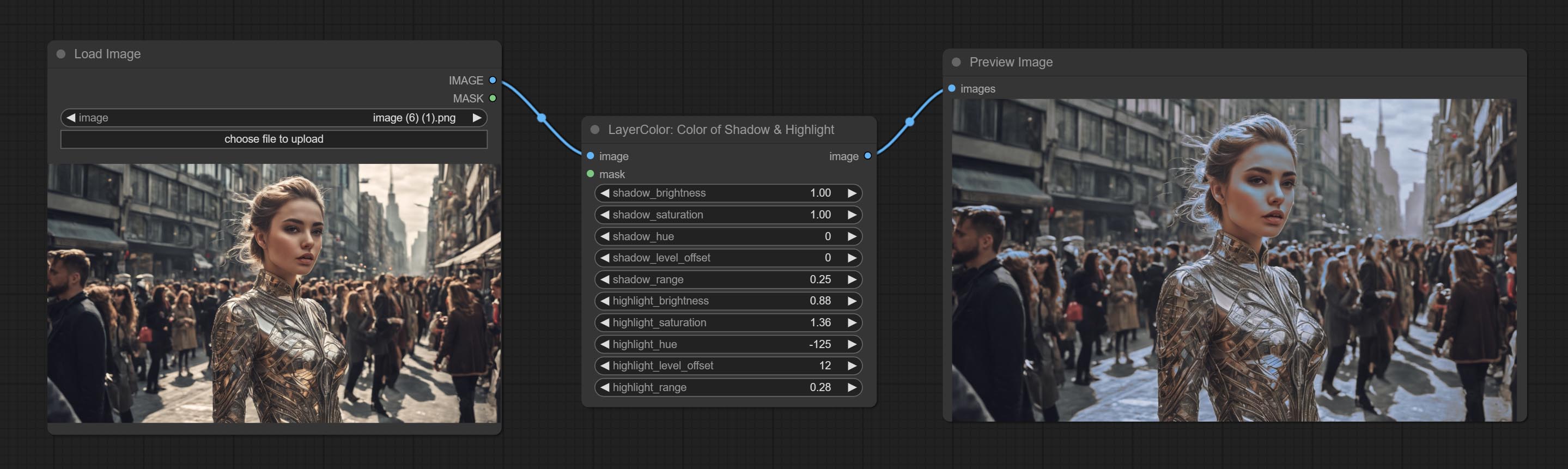

Color of Shadow & Highlight

Adjust the color of the dark and bright parts of the image.

Node options:

- image: The input image.

- mask: Optional input. if there is input, only the colors within the mask range will be adjusted.

- shadow_brightness: The brightness of the dark area.

- shadow_saturation: The color saturation in the dark area.

- shadow_hue: The color hue in the dark area.

- shadow_level_offset: The offset of values in the dark area, where larger values bring more areas closer to the bright into the dark area.

- shadow_range: The transitional range of the dark area.

- highlight_brightness: The brightness of the highlight area.

- highlight_saturation: The color saturation in the highlight area.

- highlight_hue: The color hue in the highlight area.

- highlight_level_offset: The offset of values in the highlight area, where larger values bring more areas closer to the dark into the highlight area.

- highlight_range: The transitional range of the highlight area.

Node option:

- exposure: Exposure value. Higher values indicate brighter image.

Color of Shadow HighlightV2

A replica of the Color of Shadow & Highlight node, with the "&" character removed from the node name to avoid ComfyUI workflow parsing errors.

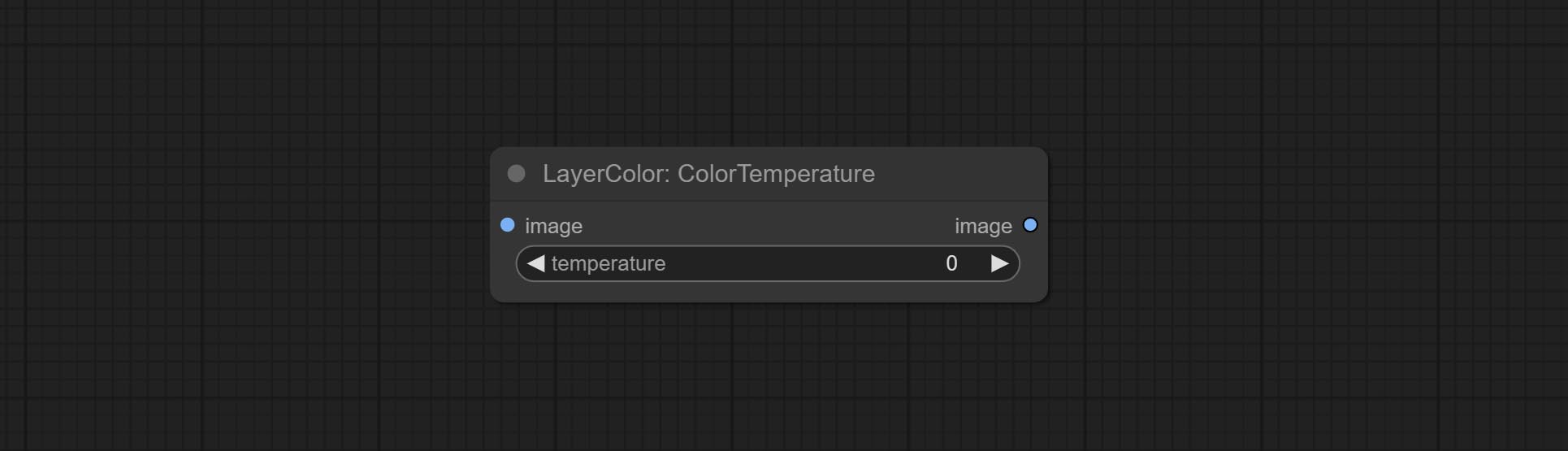

ColorTemperature

Change the color temperature of the image.

Node Options:

- temperature: Color temperature value, ranging from -100 to 100. The higher the value, the higher the color temperature (bluer); the lower the value, the lower the color temperature (yellower).

Levels

Change the levels of the image.

Node Options:

- channel: Select the channel you want to adjust. Available in RGB, red, green, blue.

- black_point * : Input black point value. Value range 0-255, default 0.

- white_point * : Input white point value. Value range 0-255, default 255.

- gray_point: Input grey point values. Value range 0.01-9.99, default 1.

- output_black_point * : Output black point value. Value range 0-255, default 0.

- output_white_point * : Output white point value. Value range 0-255, default 255.

* If the black_point or output_black_point value is greater than white_point or output_white_point, the two values are swapped, with the larger value used as white_point and the smaller value used as black_point.
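
The swap rule above, together with the input and output points, can be sketched for a single channel value. This is a minimal illustration under stated assumptions: the function `apply_levels` is hypothetical, and the way gray_point is applied (as an exponent of 1 / gray_point) is an assumed Photoshop-like convention, not something confirmed by the node's code.

```python
def apply_levels(value, black_point=0, white_point=255, gray_point=1.0,
                 output_black_point=0, output_white_point=255):
    """Map one 0-255 channel value through a levels curve (illustrative only)."""
    # Swap reversed points so the larger value is always used as the white point.
    if black_point > white_point:
        black_point, white_point = white_point, black_point
    if output_black_point > output_white_point:
        output_black_point, output_white_point = output_white_point, output_black_point

    span = max(white_point - black_point, 1)  # avoid division by zero
    t = min(max((value - black_point) / span, 0.0), 1.0)
    t = t ** (1.0 / gray_point)  # assumed gamma convention for gray_point
    return round(output_black_point + t * (output_white_point - output_black_point))


# Reversed input points give the same result as the ordered ones.
print(apply_levels(100, black_point=200, white_point=50))
print(apply_levels(100, black_point=50, white_point=200))
```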

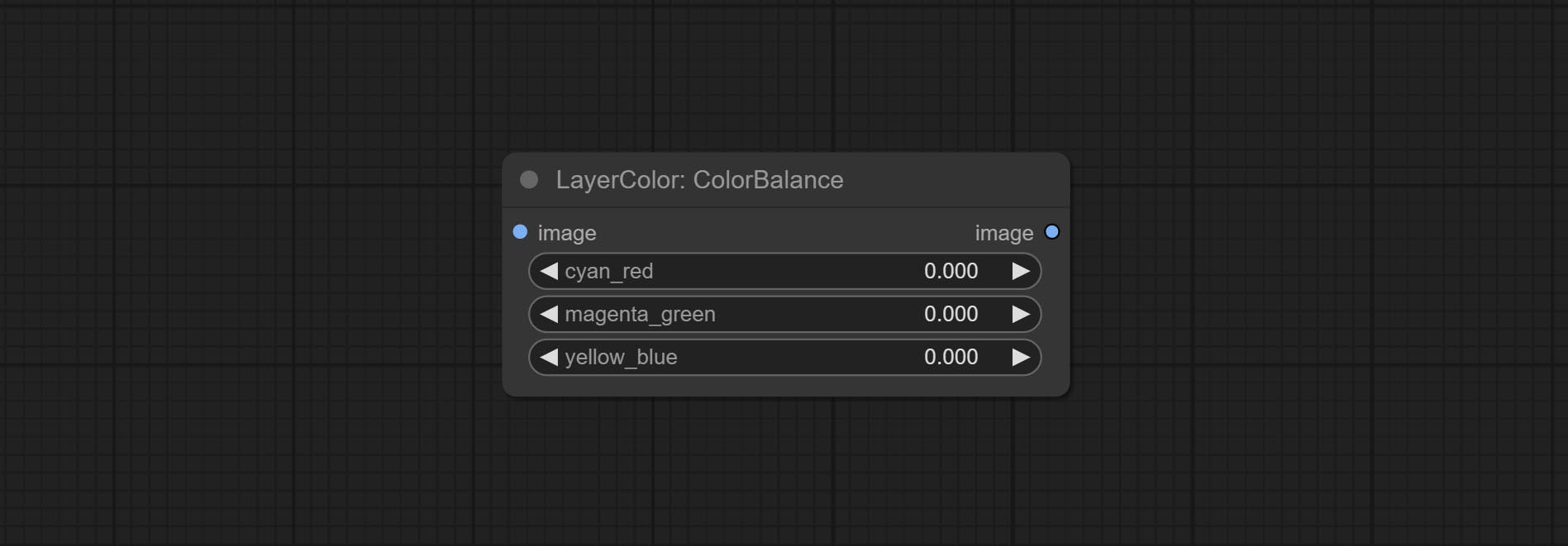

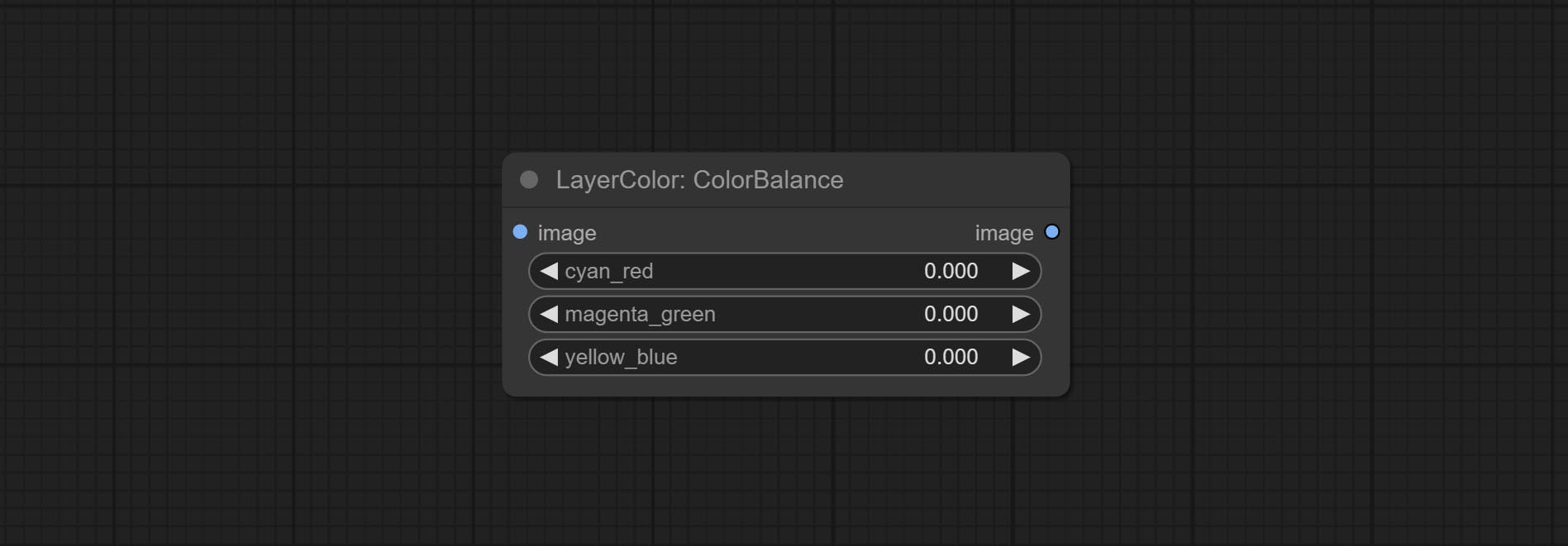

ColorBalance

Change the color balance of an image.

Node Options:

- cyan_red: Cyan-Red balance. Negative values lean cyan, positive values lean red.

- magenta_green: Magenta-Green balance. Negative values lean magenta, positive values lean green.

- yellow_blue: Yellow-Blue balance. Negative values lean yellow, positive values lean blue.

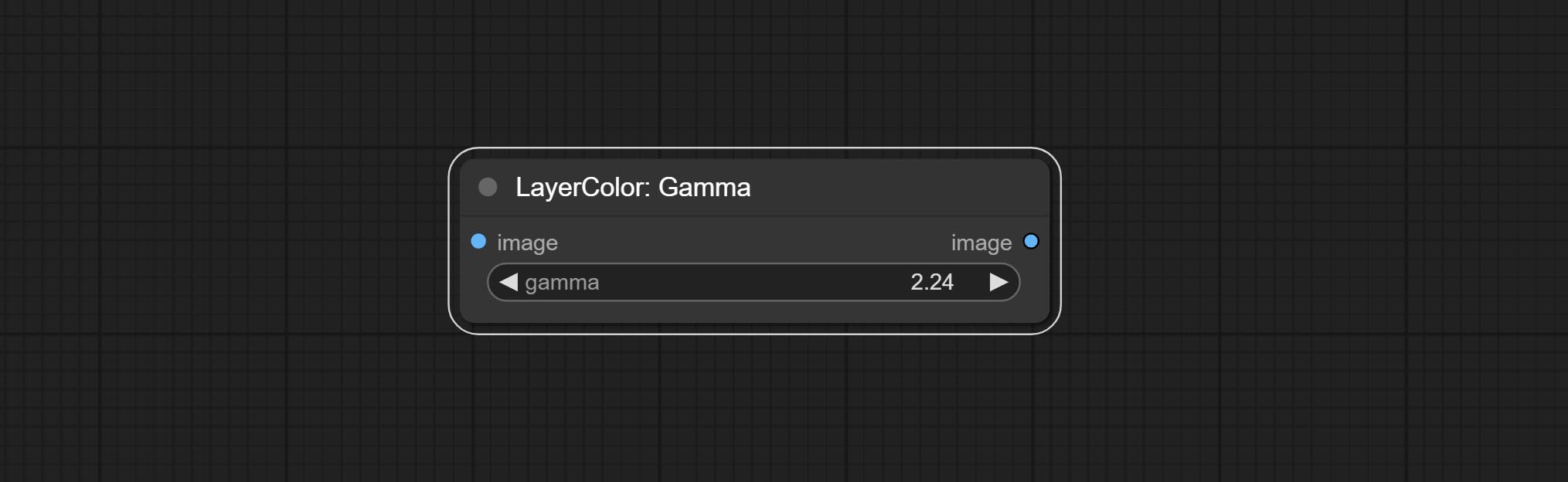

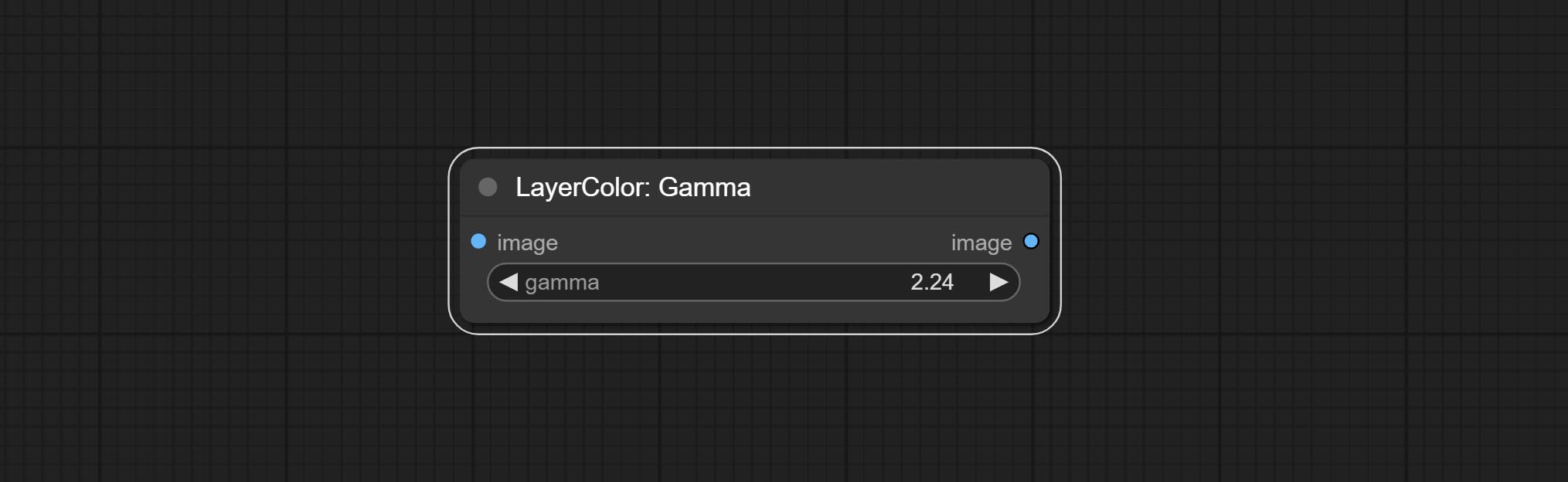

Gamma

Change the gamma value of the image.

Node options:

- gamma: Value of the Gamma.

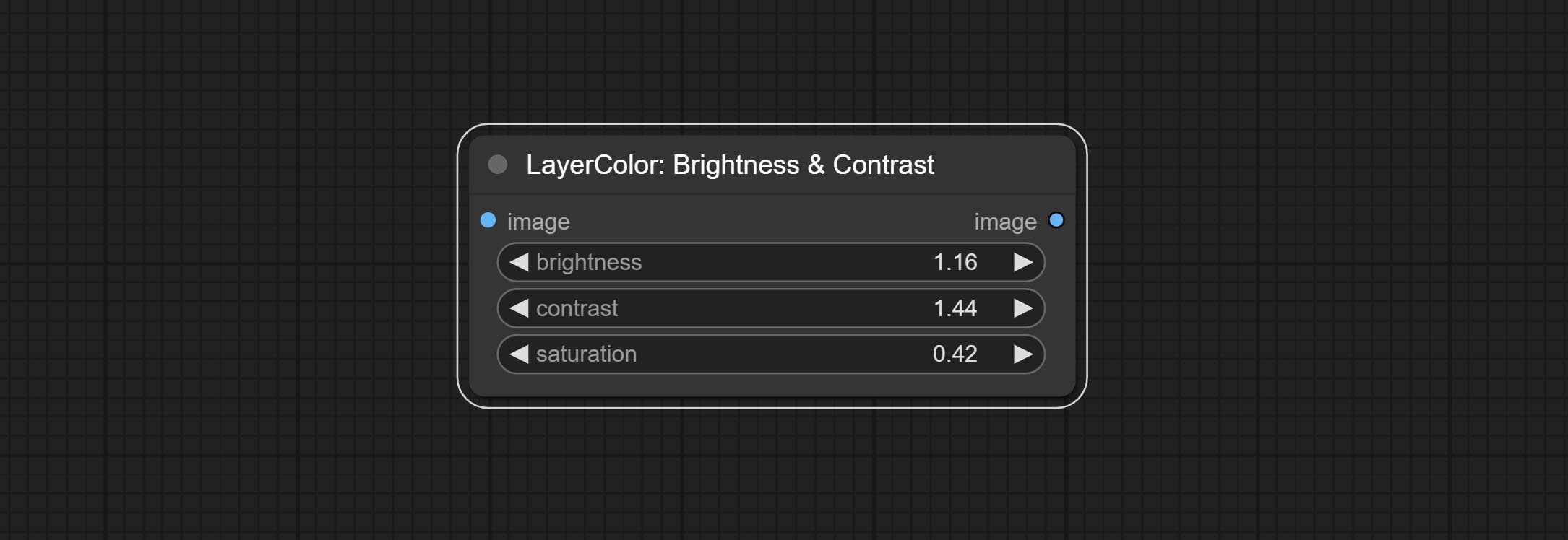

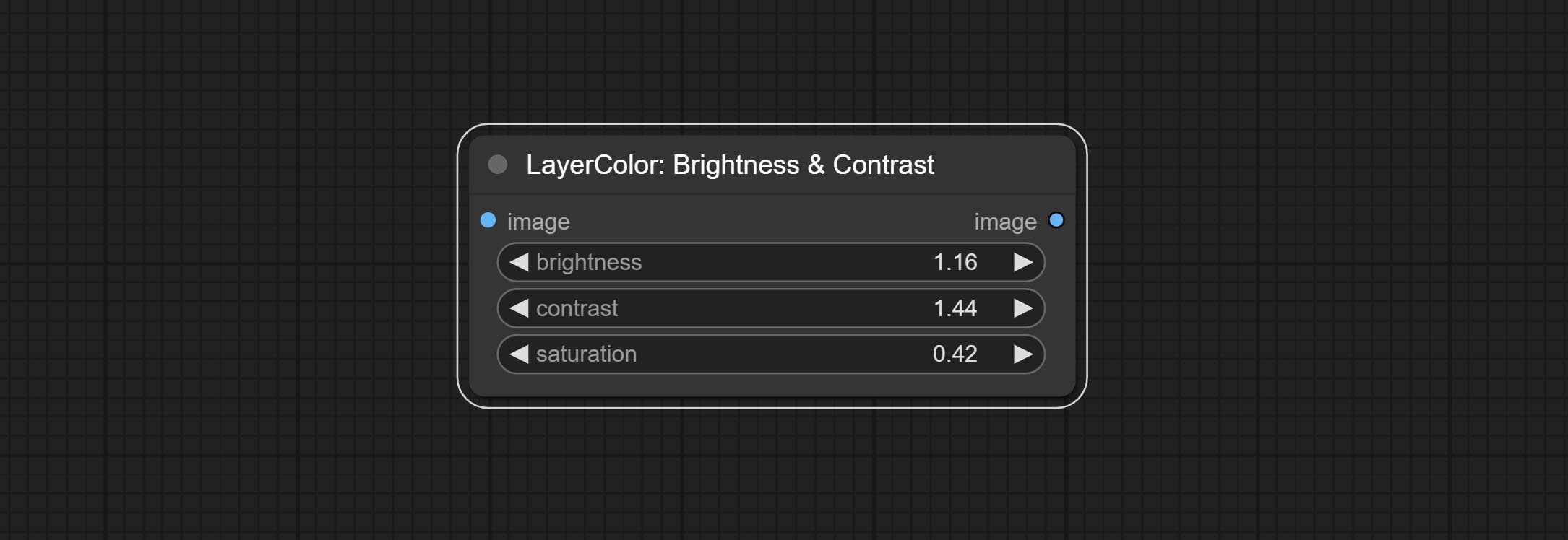

Brightness & Contrast

Change the brightness, contrast, and saturation of the image.

Node options:

- brightness: Value of brightness.

- contrast: Value of contrast.

- saturation: Value of saturation.

BrightnessContrastV2

A replica of the Brightness & Contrast node, with the "&" character removed from the node name to avoid ComfyUI workflow parsing errors.

RGB

Adjust the RGB channels of the image.

Node options:

- R: R channel.

- G: G channel.

- B: B channel.

YUV

Adjust the YUV channels of the image.

Node options:

- Y: Y channel.

- U: U channel.

- V: V channel.

LAB

Adjust the LAB channels of the image.

Node options:

- L: L channel.

- A: A channel.

- B: B channel.

HSV

Adjust the HSV channels of the image.

Node options:

- H: H channel.

- S: S channel.

- V: V channel.

LayerUtility

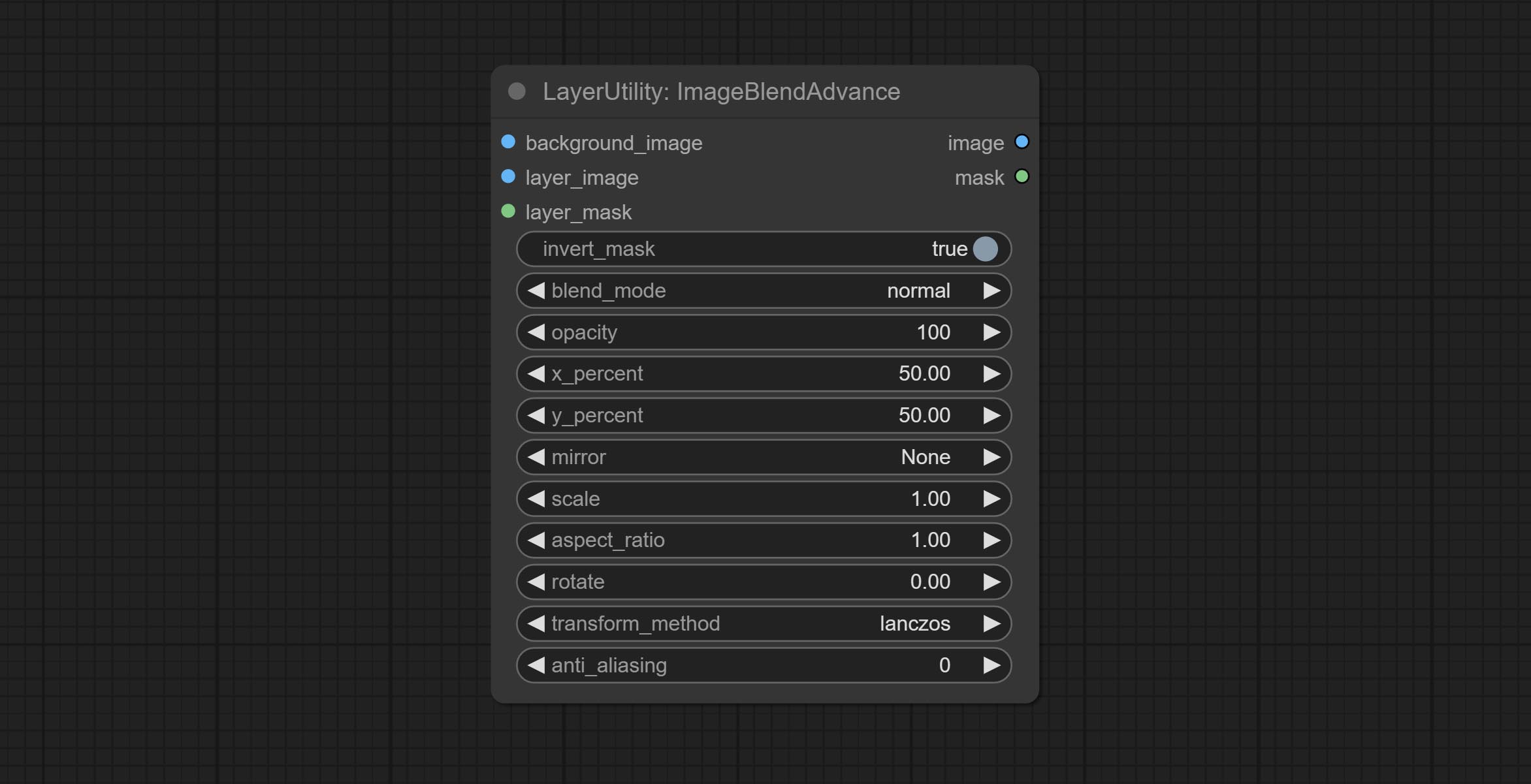

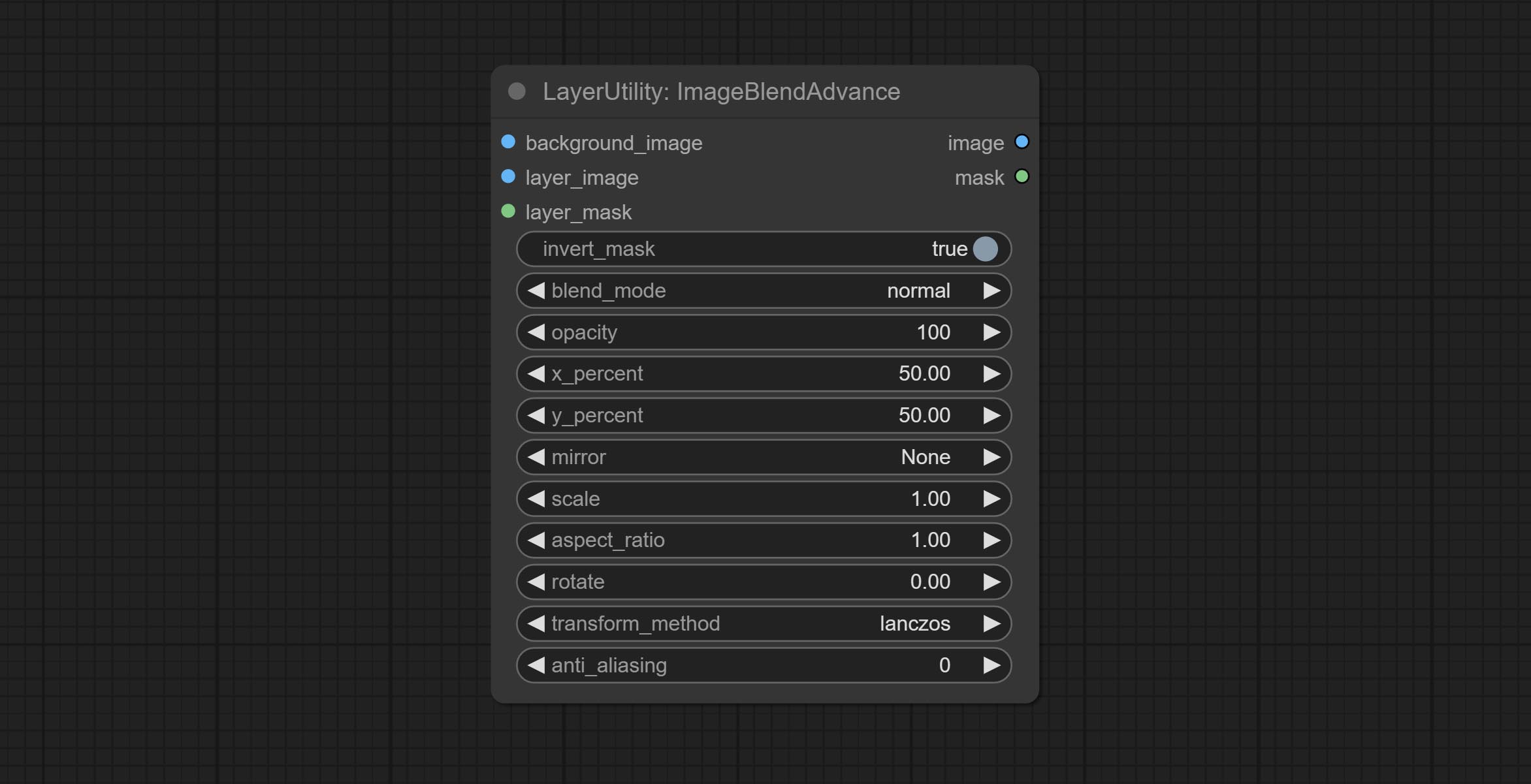

ImageBlendAdvance

Used for compositing layers. It allows layer images of different sizes to be composited onto the background image, with position and transformation settings. Multiple blend modes are available, and transparency can be set.

The node provides layer transformation methods and anti_aliasing options, which help improve the quality of composited images.

The node provides mask output that can be used for subsequent workflows.

Node options:

- background_image: The background image.

- layer_image 5 : Layer image for composite.

- layer_mask 2,5 : Mask for layer_image.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode.

- opacity: Opacity of blend.

- x_percent: Horizontal position of the layer on the background image, expressed as a percentage, with 0 on the far left and 100 on the far right. It can be less than 0 or more than 100, indicating that some of the layer's content is outside the screen.

- y_percent: Vertical position of the layer on the background image, expressed as a percentage, with 0 on the top and 100 on the bottom. For example, setting it to 50 indicates vertical center, 20 indicates upper center, and 80 indicates lower center.

- mirror: Mirror flipping. Provide two flipping modes, horizontal flipping and vertical flipping.

- scale: Layer magnification, 1.0 represents the original size.

- aspect_ratio: Layer aspect ratio. 1.0 is the original ratio, a value greater than this indicates elongation, and a value less than this indicates flattening.

- rotate: Layer rotation degree.

- transform_method: Sampling method for layer enlargement and rotation, including lanczos, bicubic, hamming, bilinear, box and nearest. Different sampling methods affect the image quality and processing time of the composited image.

- anti_aliasing: Anti aliasing, ranging from 0 to 16, the larger the value, the less obvious the aliasing. An excessively high value will significantly reduce the processing speed of the node.

- Notes
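
How x_percent and y_percent could translate into a pixel position is sketched below. This is an assumption-laden illustration: the helper `layer_top_left` is hypothetical, and it assumes the percentages place the center of the layer on the background, which is one reading of the option descriptions rather than a confirmed detail of the node.

```python
def layer_top_left(bg_size, layer_size, x_percent=50.0, y_percent=50.0):
    """Return the (x, y) pixel position of the layer's top-left corner.

    Assumes the percentages locate the layer's center on the background.
    """
    bg_w, bg_h = bg_size
    layer_w, layer_h = layer_size
    center_x = bg_w * x_percent / 100.0
    center_y = bg_h * y_percent / 100.0
    return round(center_x - layer_w / 2), round(center_y - layer_h / 2)


# A 200x100 layer centered on a 1000x800 background.
print(layer_top_left((1000, 800), (200, 100)))
# Values below 0 or above 100 push part of the layer off the canvas.
print(layer_top_left((1000, 800), (200, 100), x_percent=-10))
```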

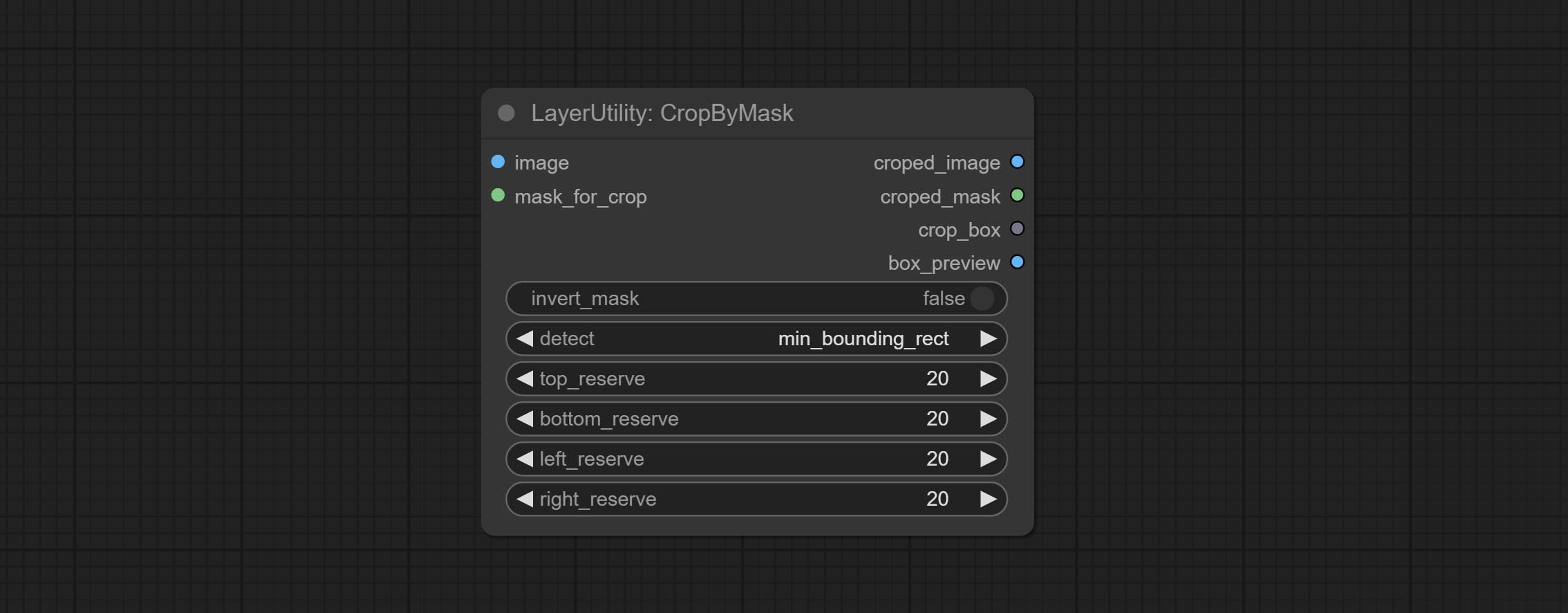

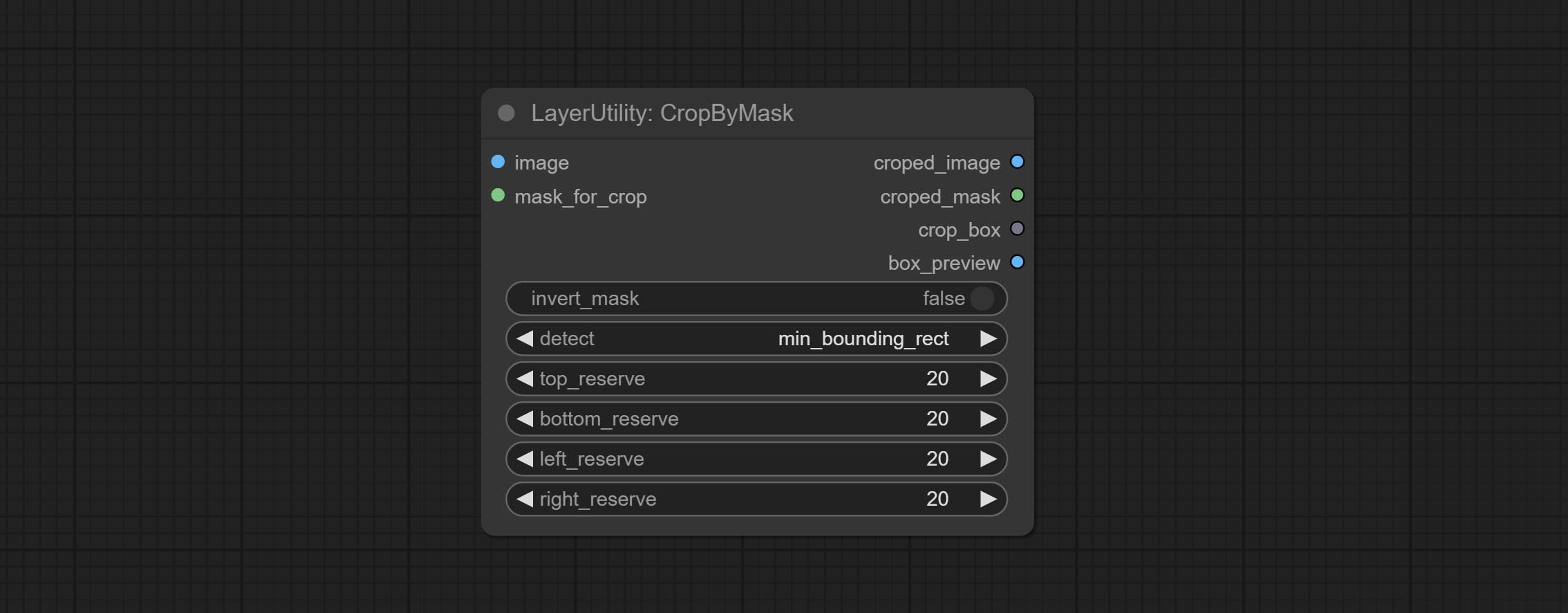

CropByMask

Crop the image according to the mask range, and set the size of the surrounding borders to be retained. This node can be used in conjunction with the RestoreCropBox and ImageScaleRestore nodes to crop and modify upscale parts of image, and then paste them back in place.

Node options:

- image 5 : The input image.

- mask_for_crop 5 : Mask of the image, it will automatically be cut according to the mask range.

- invert_mask: Whether to reverse the mask.

- detect: Detection method. min_bounding_rect is the minimum bounding rectangle of the block shape, max_inscribed_rect is the maximum inscribed rectangle of the block shape, and mask_area is the effective area of the mask pixels.

- top_reserve: Border size to keep above the detected range.

- bottom_reserve: Border size to keep below the detected range.

- left_reserve: Border size to keep to the left of the detected range.

- right_reserve: Border size to keep to the right of the detected range.

- Notes

Outputs:

- croped_image: The image after cropping.

- croped_mask: The mask after cropping.

- crop_box: The crop box data, used by the RestoreCropBox node when restoring.

- box_preview: Preview image of cutting position, red represents the detected range, and green represents the cutting range after adding the reserved border.
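
The relationship between the detected range and the reserved borders can be sketched in plain Python. The function `crop_box_from_mask` is hypothetical, it covers only a min_bounding_rect style detection on a mask given as nested lists, and the (x1, y1, x2, y2) layout with exclusive right and bottom edges is an assumption rather than the plugin's actual crop_box format.

```python
def crop_box_from_mask(mask, top_reserve=0, bottom_reserve=0,
                       left_reserve=0, right_reserve=0):
    """Return (x1, y1, x2, y2) around the non-zero mask pixels plus reserves.

    mask is a non-empty list of equal-length rows; x2 and y2 are exclusive.
    Returns None when the mask has no non-zero pixel.
    """
    height, width = len(mask), len(mask[0])
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for x in range(width) if any(row[x] for row in mask)]
    if not rows:
        return None
    # Detected range (the red box in box_preview) grown by the reserved
    # borders (the green box), clamped to the image bounds.
    x1 = max(cols[0] - left_reserve, 0)
    y1 = max(rows[0] - top_reserve, 0)
    x2 = min(cols[-1] + 1 + right_reserve, width)
    y2 = min(rows[-1] + 1 + bottom_reserve, height)
    return x1, y1, x2, y2


mask = [[0] * 5 for _ in range(5)]
mask[2][2] = 1
print(crop_box_from_mask(mask, 1, 1, 1, 1))
```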

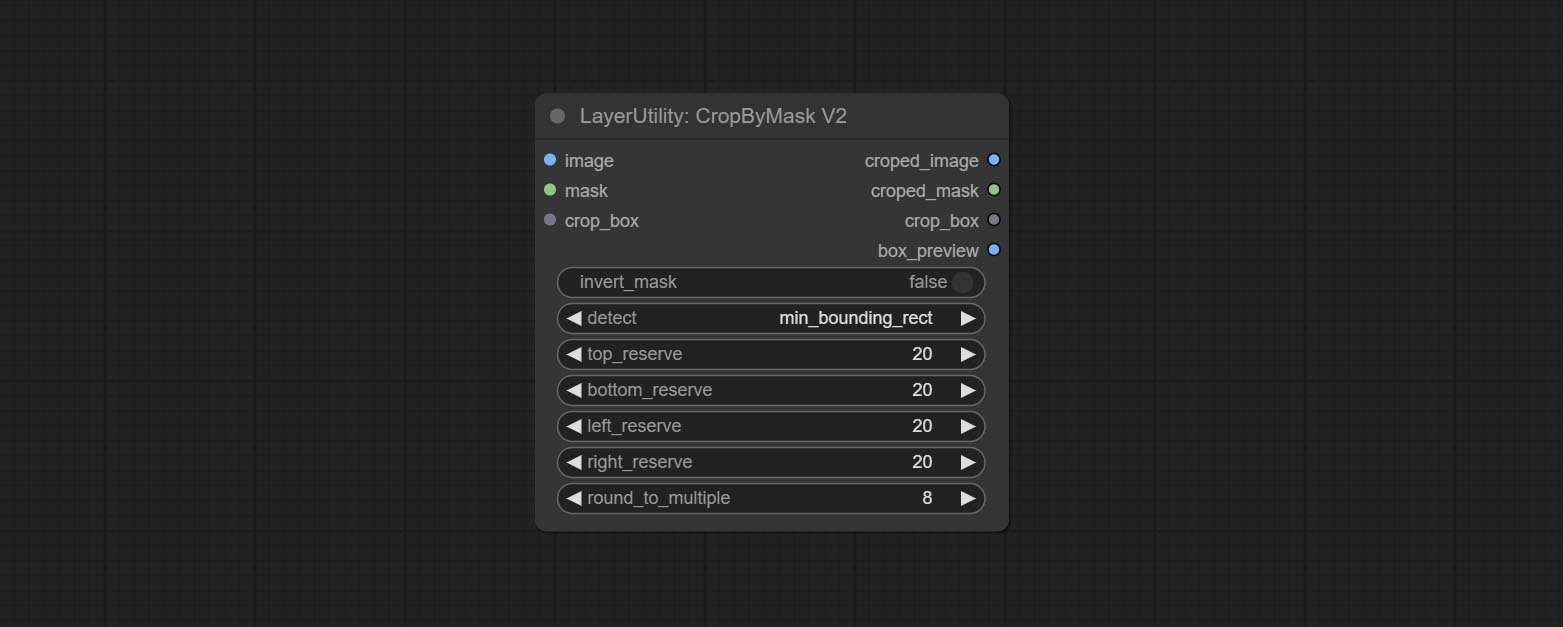

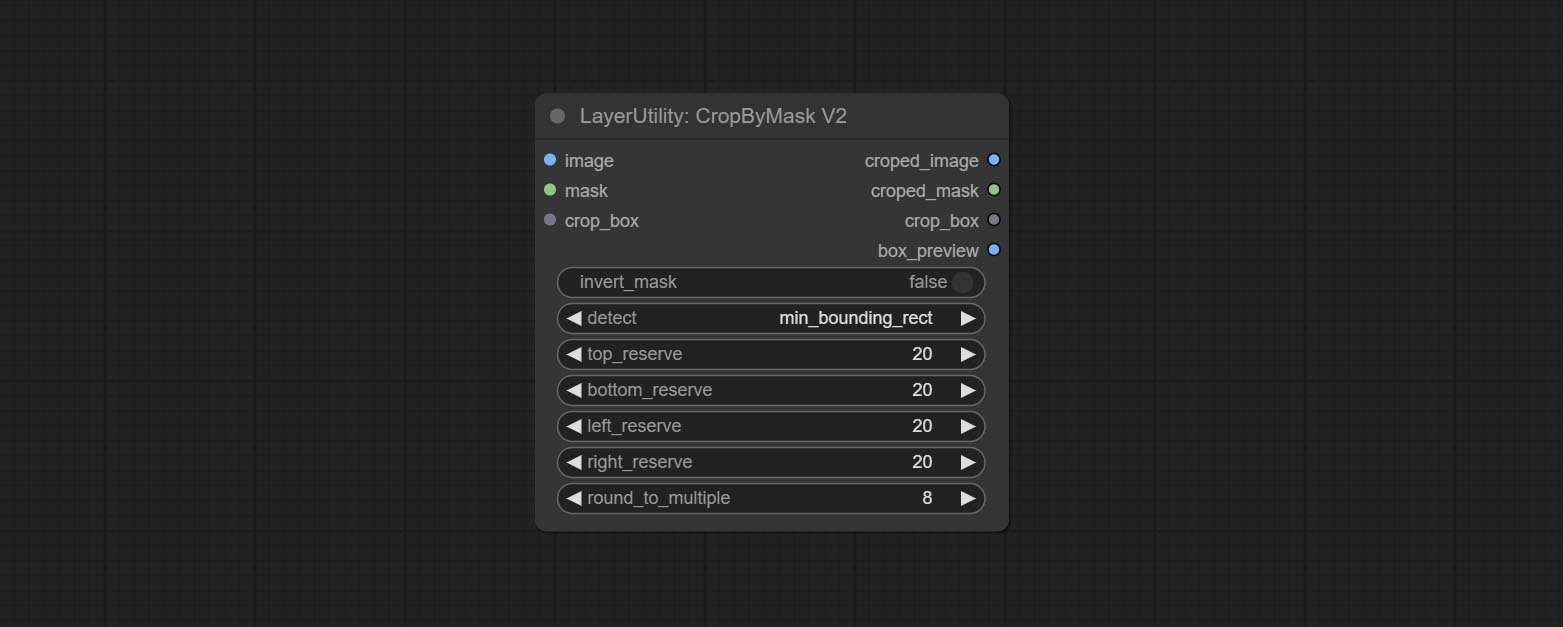

CropByMaskV2

The V2 upgraded version of CropByMask. Supports crop_box input, making it easy to cut layers of the same size.

The following changes have been made based on CropByMask:

- The input mask_for_crop is renamed to mask.

- Add the optional input crop_box. If there is input here, mask detection is ignored and this data is used directly for cropping.

- Add the option round_to_multiple to round the crop edge length to a multiple. For example, setting it to 8 will force the width and height to be multiples of 8.

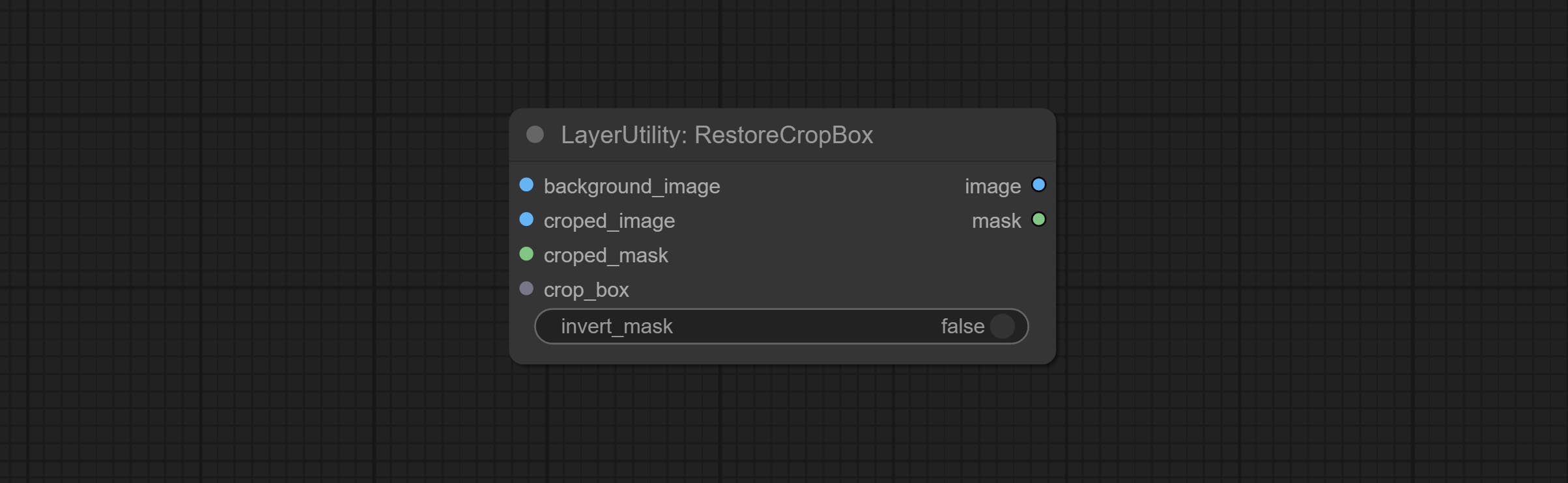

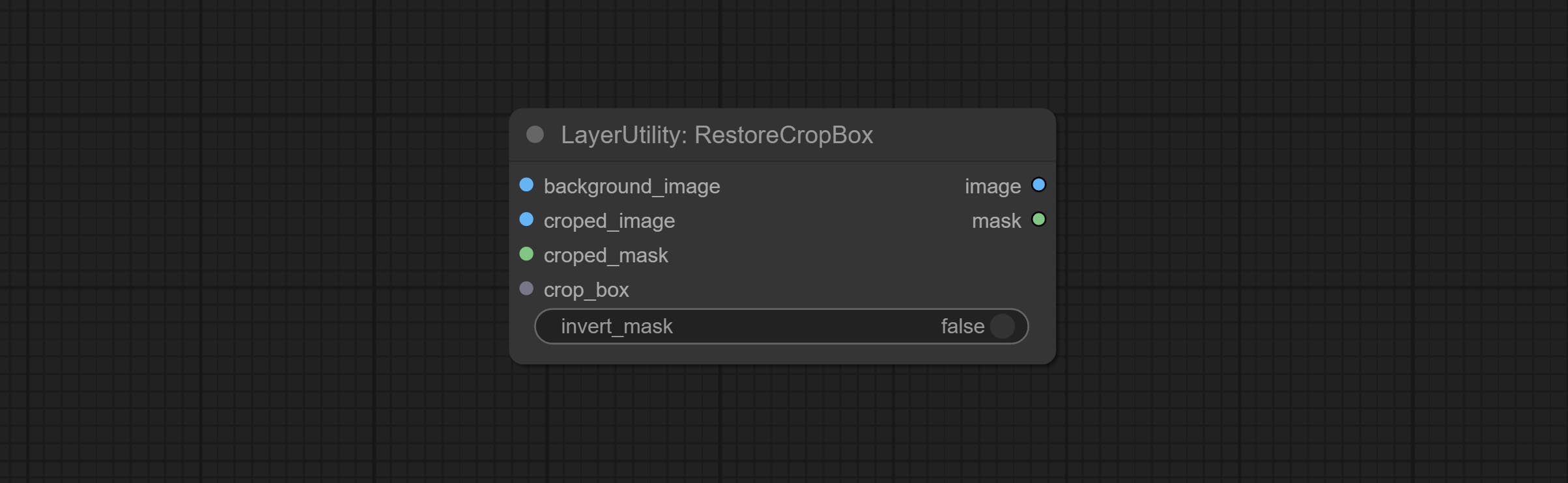

RestoreCropBox

Restore the image cropped by CropByMask to the original image.

Node options:

- background_image: The original image before cropping.

- croped_image 5 : The cropped image. If it was enlarged in between, its size needs to be restored before restoration.

- croped_mask 5 : The cropped mask.

- crop_box: Box data from the cropping step.

- invert_mask: Whether to reverse the mask.

- Notes

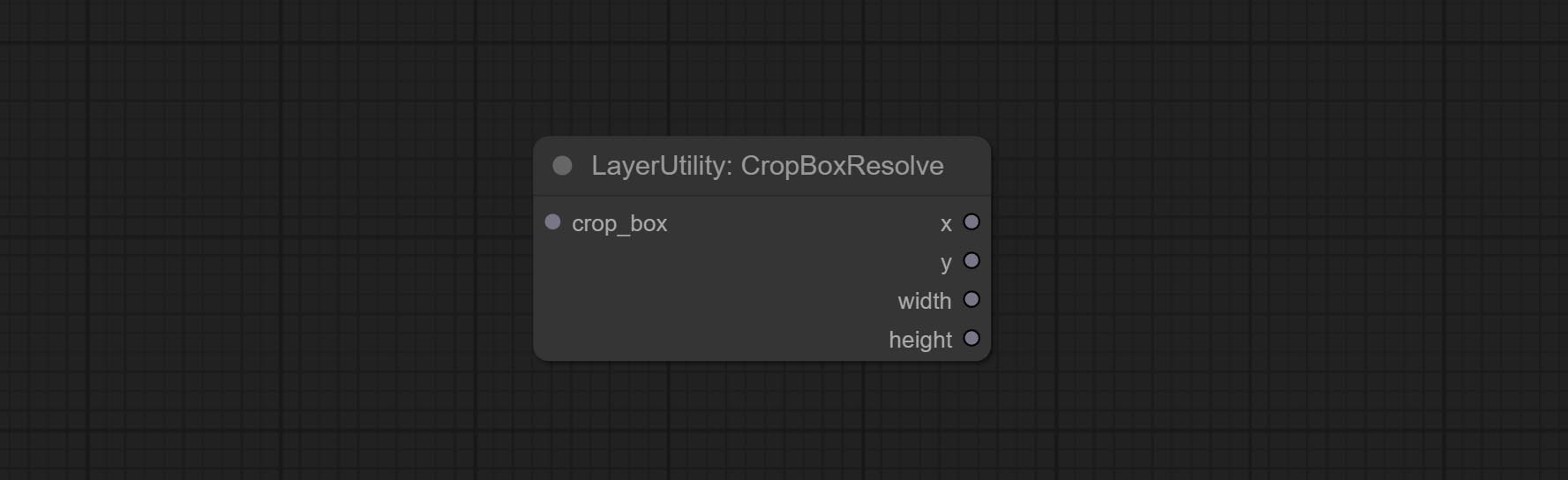

CropBoxResolve

Parse the crop_box into x, y, width, height.

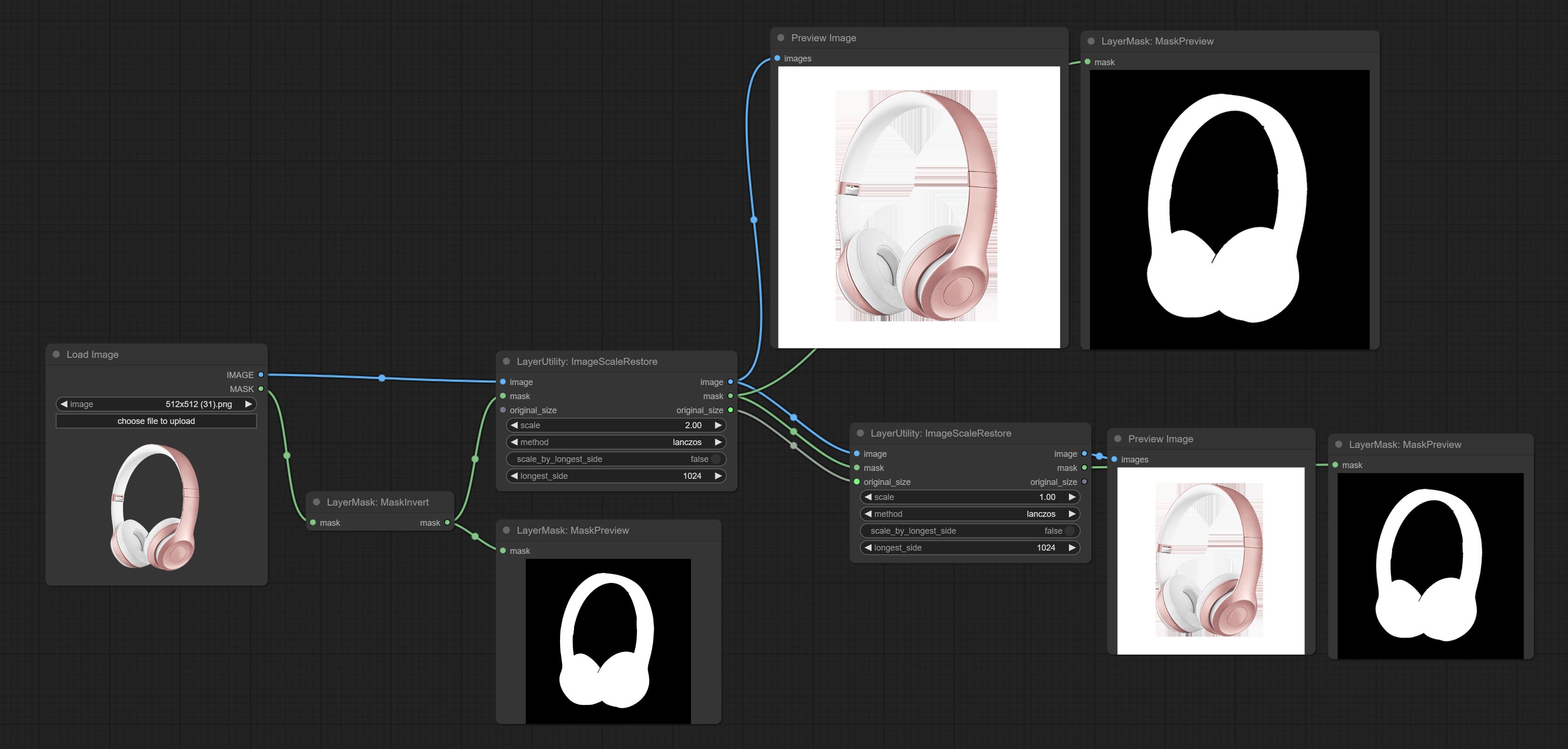

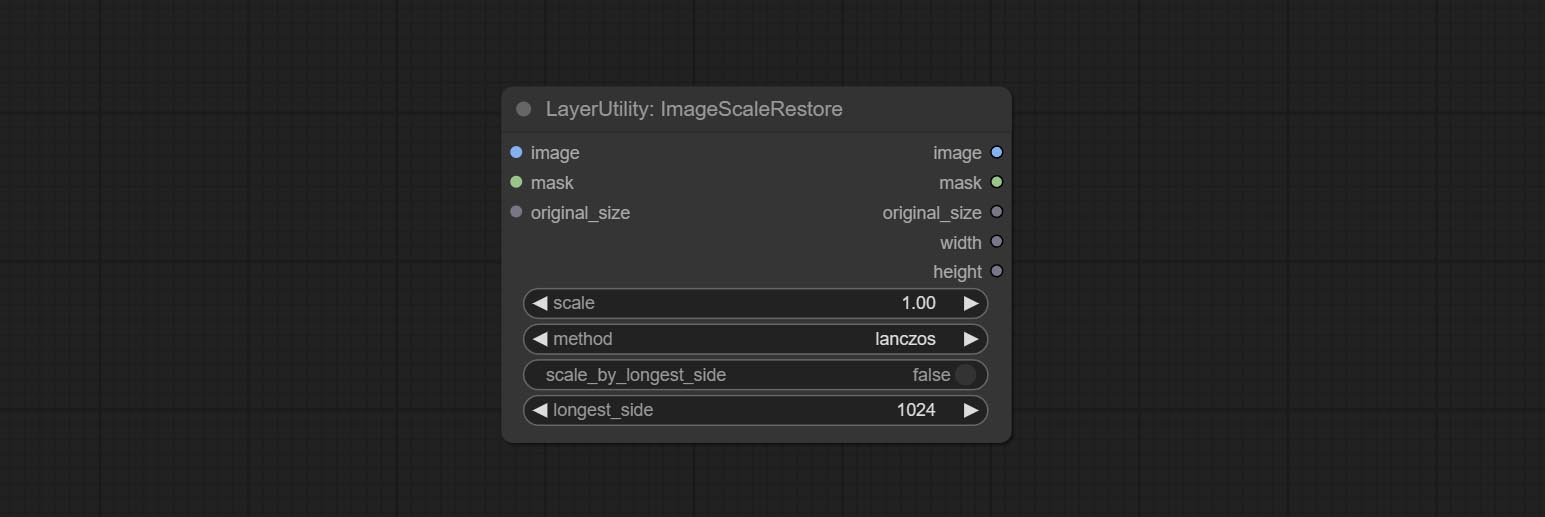

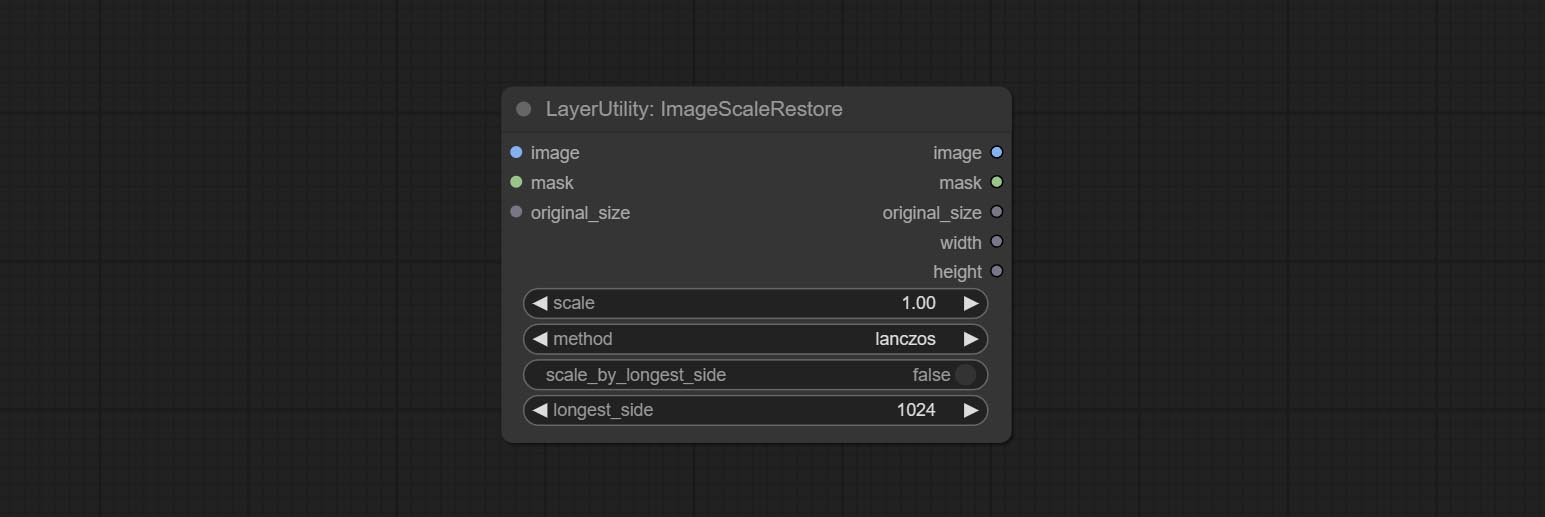

ImageScaleRestore

Image scaling. When this node is used in pairs, the second node can automatically restore the image to its original size.

Node options:

- image 5 : The input image.

- mask 2,5 : Mask of image.

- original_size: Optional input, used to restore the image to its original size.

- scale: Scale ratio. When original_size has input, or scale_by_longest_side is set to True, this setting is ignored.

- scale_by_longest_side: Allow scaling by long edge size.

- longest_side: When scale_by_longest_side is set to True, this value is used as the length of the long edge of the image. When original_size has input, this setting is ignored.

Outputs:

- image: The scaled image.

- mask: If there is a mask input, the scaled mask will be output.

- original_size: The original size data of the image is used for subsequent node recovery.

- width: The output image's width.

- height: The output image's height.
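
The precedence between original_size, scale_by_longest_side and scale described above can be sketched as follows. The function `target_size` is hypothetical and only illustrates the documented precedence; the default value of longest_side and the rounding of the result are assumptions.

```python
def target_size(width, height, scale=1.0, scale_by_longest_side=False,
                longest_side=1024, original_size=None):
    """Return the (width, height) an image would be scaled to (illustrative)."""
    if original_size is not None:
        # Restoring: the recorded size overrides every other setting.
        return tuple(original_size)
    if scale_by_longest_side:
        ratio = longest_side / max(width, height)
    else:
        ratio = scale
    return max(1, round(width * ratio)), max(1, round(height * ratio))


# First node of the pair scales up and records the original size.
original = (800, 600)
scaled = target_size(*original, scale=2.0)
# Second node of the pair receives original_size and restores it.
restored = target_size(*scaled, original_size=original)
print(scaled, restored)
```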

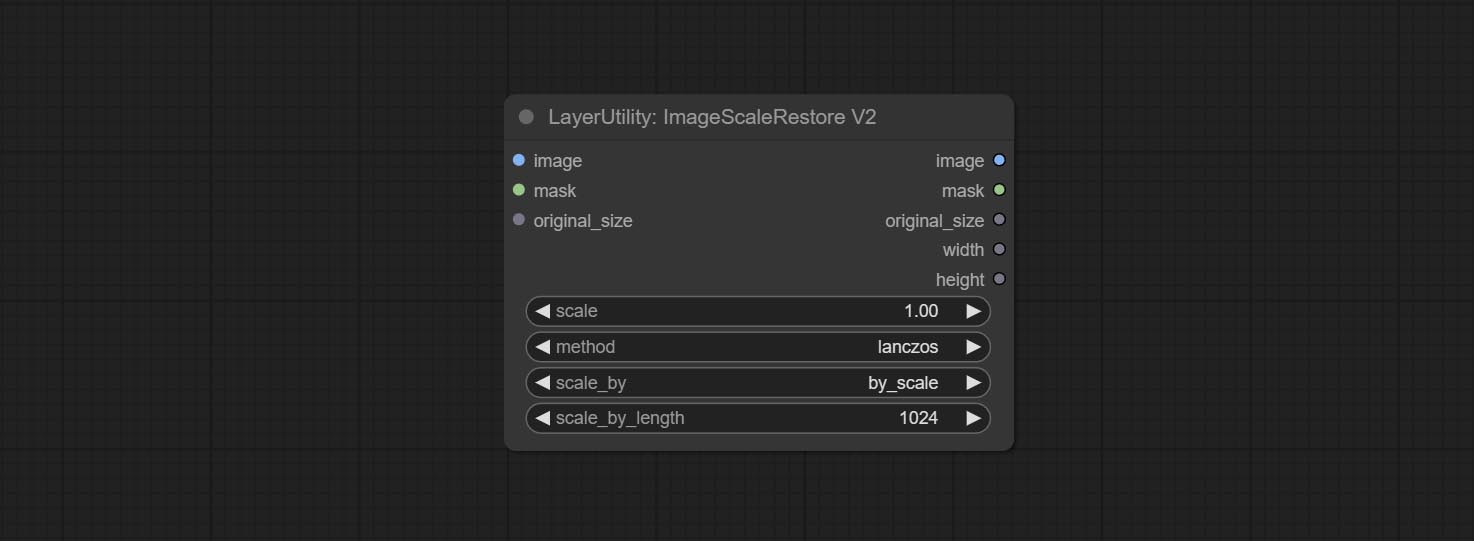

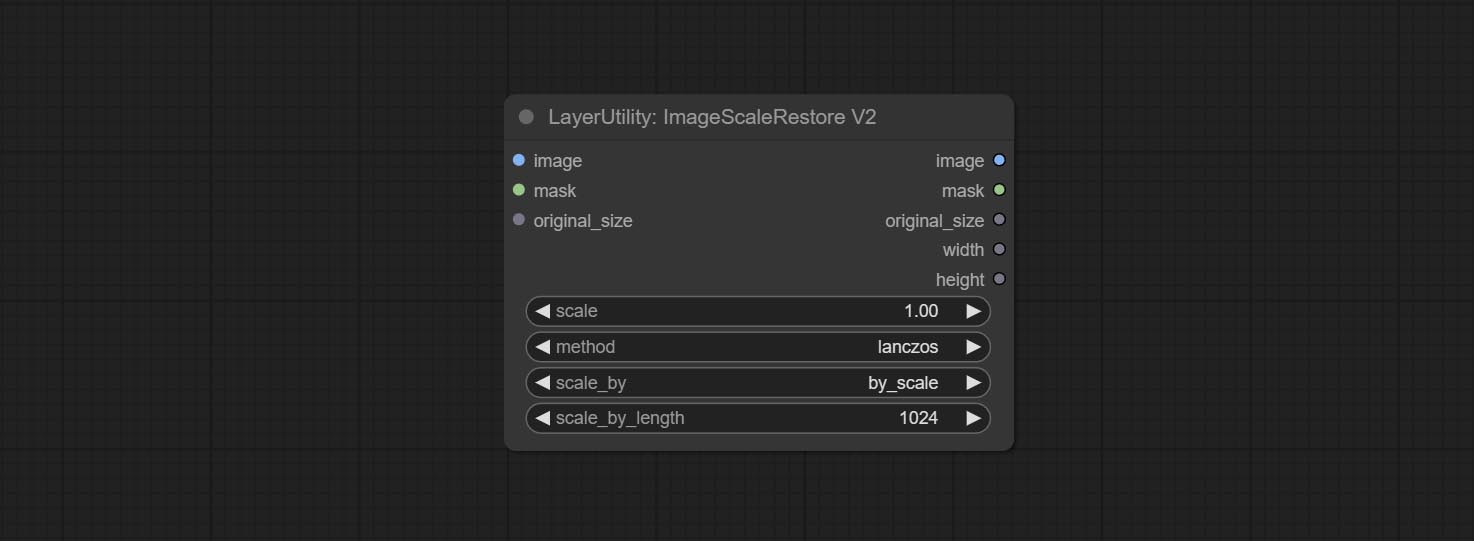

ImageScaleRestoreV2

The V2 upgraded version of ImageScaleRestore.

Node options:

The following changes have been made based on ImageScaleRestore:

- scale_by: Allow scaling by specified dimensions for long, short, width, height, or total pixels. When this option is set to by_scale, use the scale value, and for other options, use the scale_by_length value.

- scale_by_length: The value here is used by scale_by to specify the length of the edge.

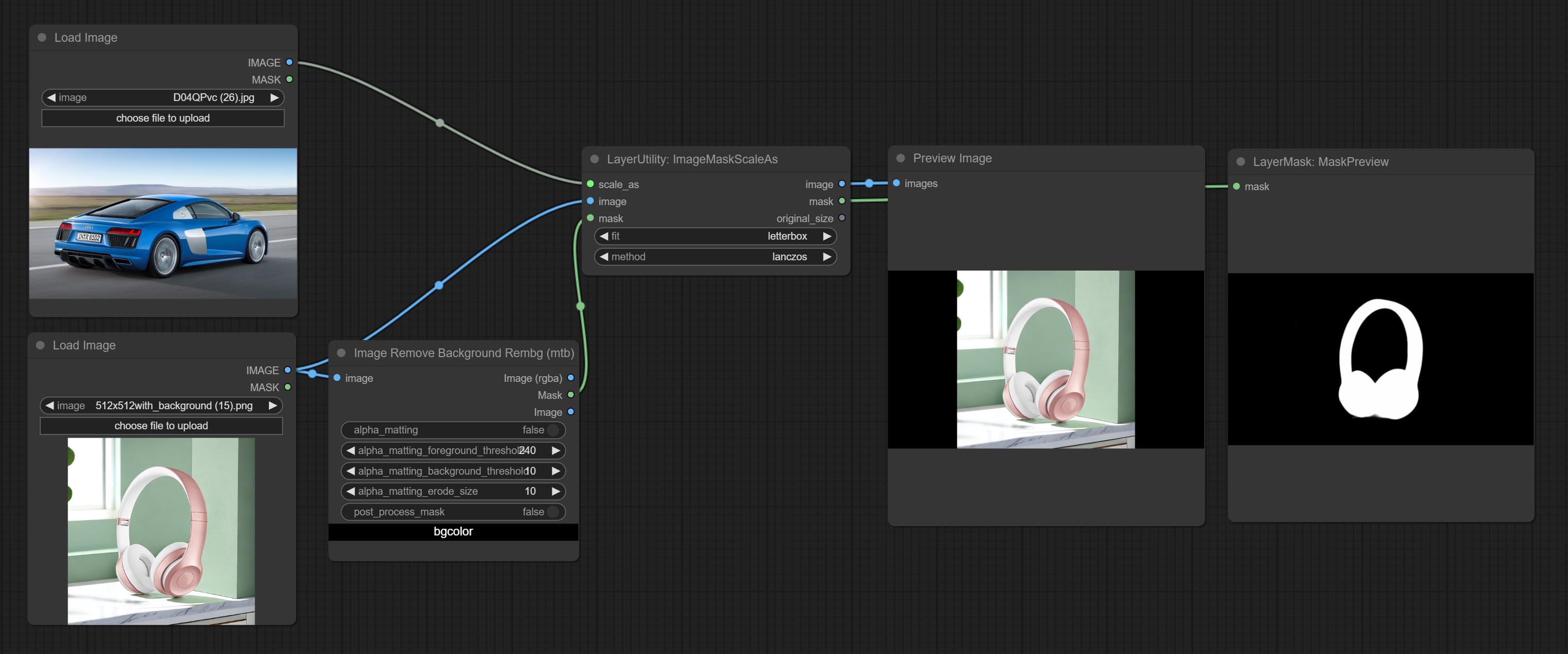

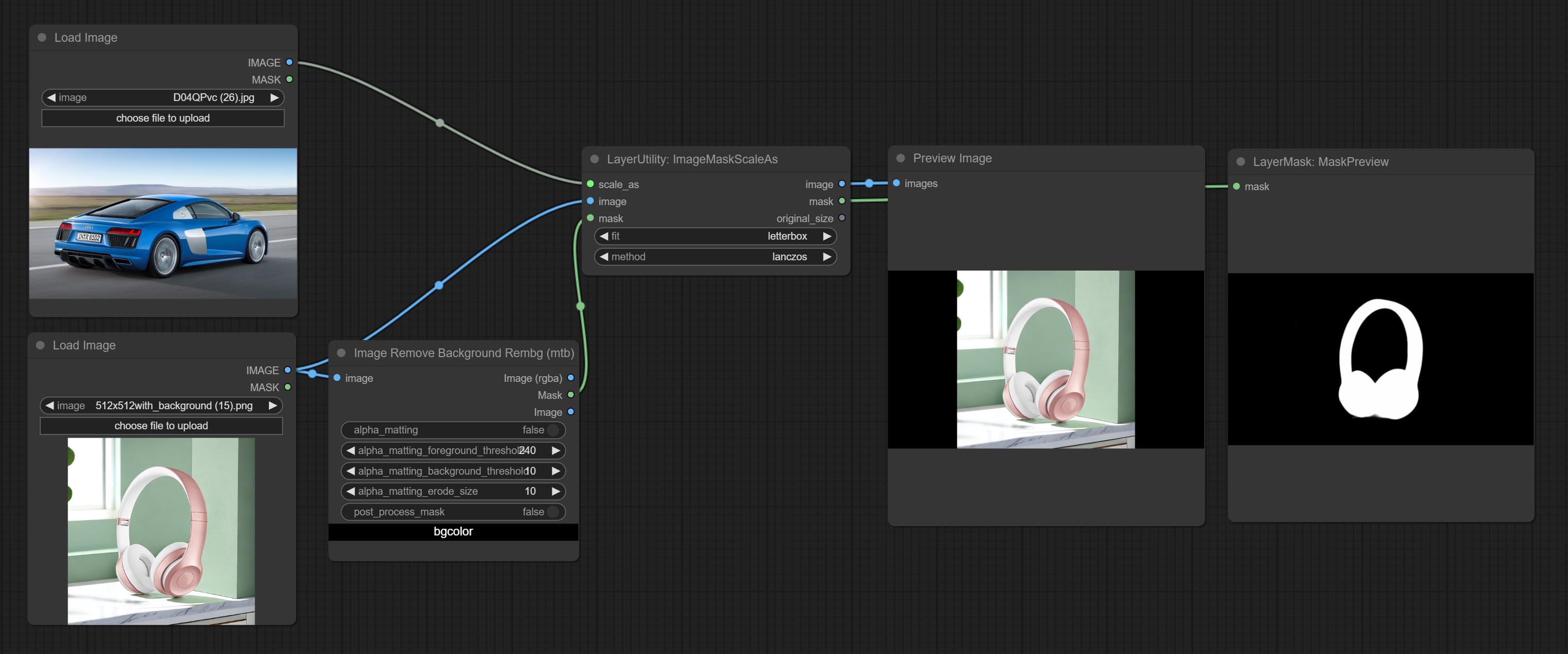

ImageMaskScaleAs

Scale the image or mask to the size of the reference image (or reference mask).

Node options:

- scale_as * : Reference size. It can be an image or a mask.

- image: Image to be scaled. This is an optional input. If there is no input, a black image will be output.

- mask: Mask to be scaled. This is an optional input. If there is no input, a black mask will be output.

- fit: Scale aspect ratio mode. When the aspect ratio of the original image does not match the scaled size, there are three modes to choose from. The letterbox mode retains the complete frame and fills the blank spaces with black. The crop mode retains the complete short edge, and any excess of the long edge is cut off. The fill mode does not maintain the frame ratio and fills the target width and height.

- method: Scaling sampling methods, including lanczos, bicubic, hamming, bilinear, box, and nearest.

* Only images and masks are accepted as input. Forcing other types of input will result in node errors.

Outputs:

- image: If there is an image input, the scaled image will be output.

- mask: If there is a mask input, the scaled mask will be output.

- original_size: The original size data of the image is used for subsequent node recovery.

- width: The output image's width.

- height: The output image's height.
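
The three fit modes can be compared with a small size calculation. The helper `fit_size` is hypothetical; it returns only the size the source would be resized to before any padding (letterbox) or trimming (crop), and it makes no claim about how the node performs the actual resampling.

```python
def fit_size(src_size, target_size, fit="letterbox"):
    """Return the resized (width, height) of the source for a given fit mode."""
    src_w, src_h = src_size
    tgt_w, tgt_h = target_size
    if fit == "fill":
        # Aspect ratio is not preserved; the source is stretched to the target.
        return tgt_w, tgt_h
    if fit == "letterbox":
        # The whole frame fits inside the target; the rest is padded with black.
        ratio = min(tgt_w / src_w, tgt_h / src_h)
    elif fit == "crop":
        # The short edge fits the target; the long edge overflows and is cut off.
        ratio = max(tgt_w / src_w, tgt_h / src_h)
    else:
        raise ValueError(f"unknown fit mode: {fit}")
    return round(src_w * ratio), round(src_h * ratio)


for mode in ("letterbox", "crop", "fill"):
    print(mode, fit_size((1000, 500), (400, 400), mode))
```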

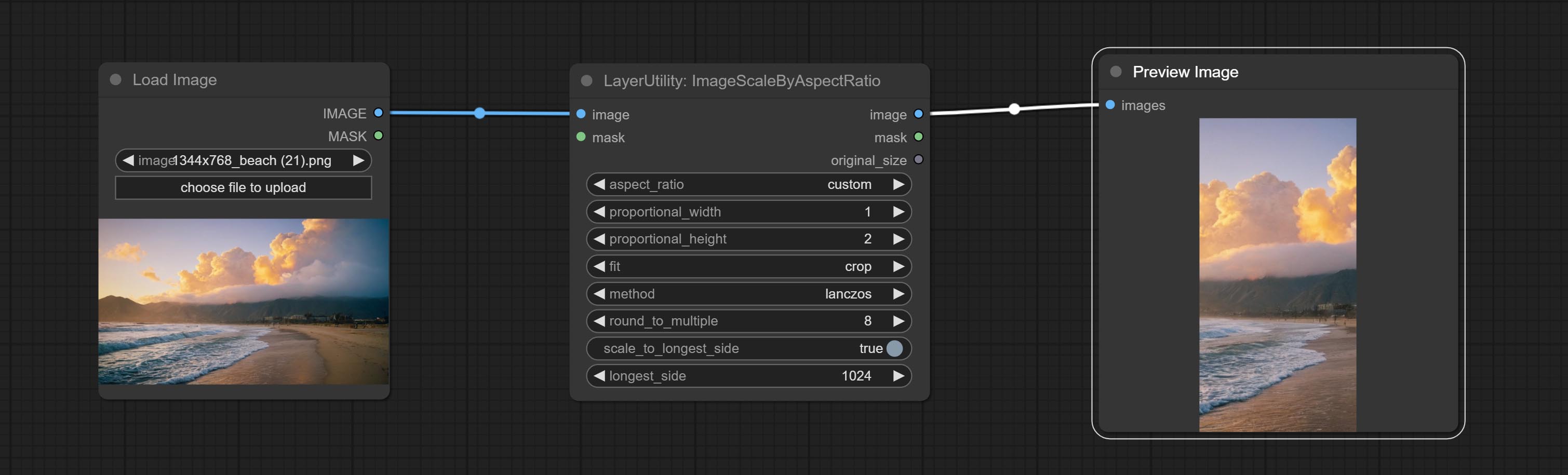

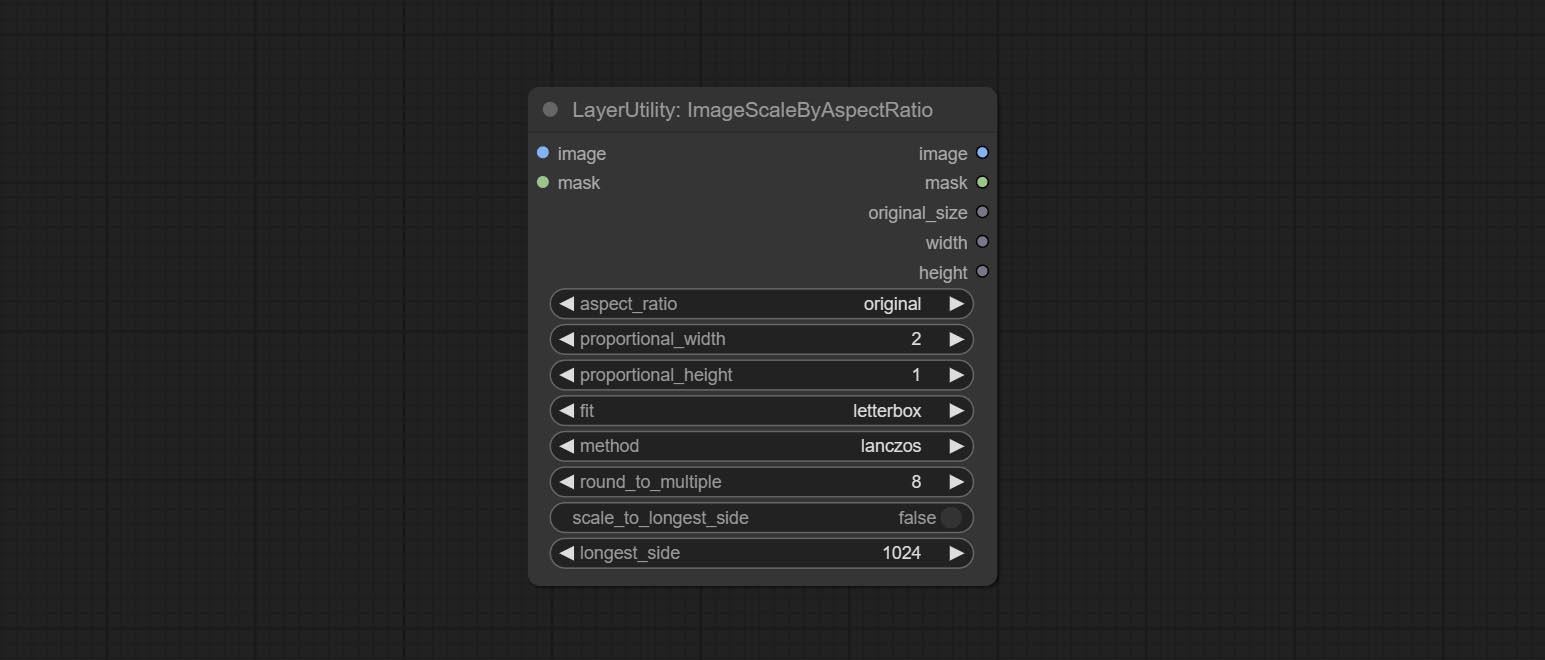

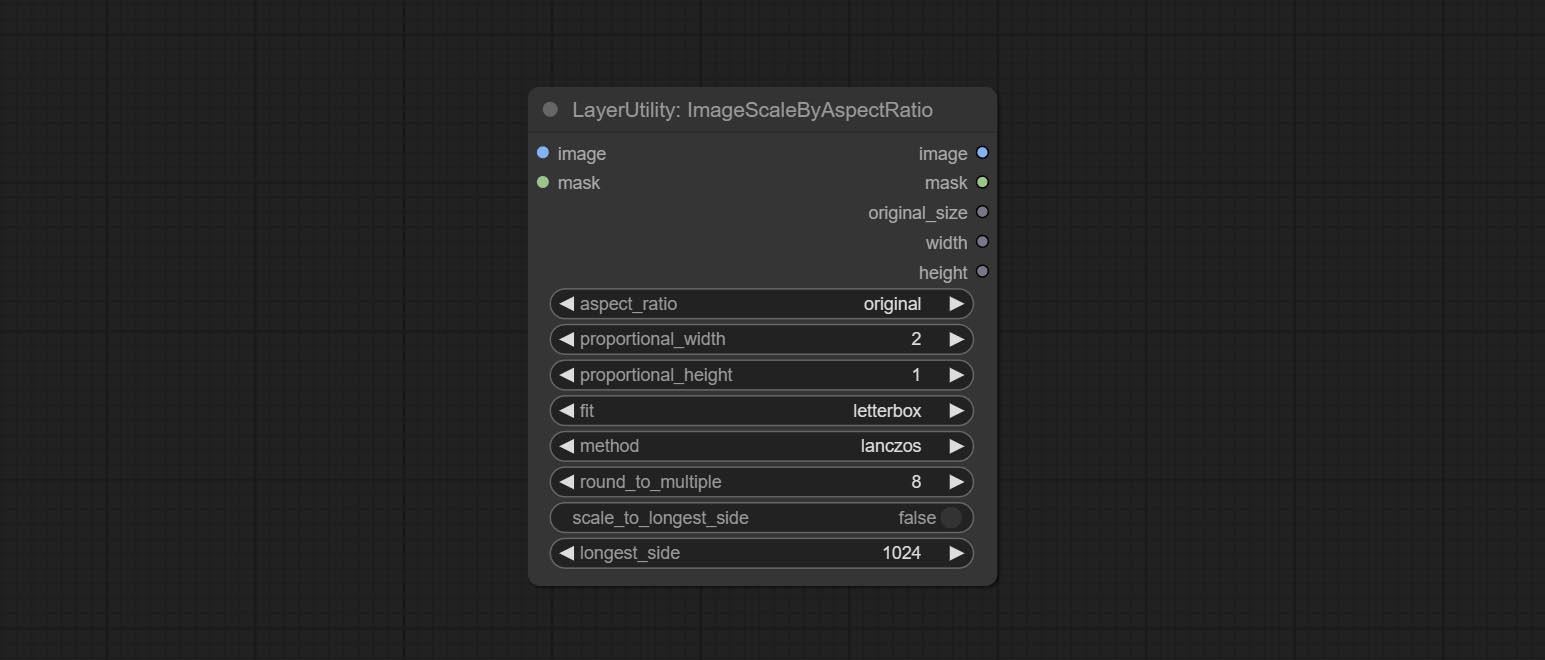

ImageScaleByAspectRatio

Scale the image or mask by aspect ratio. The scaled size can be rounded to a multiple of 8 or 16, and can be scaled to the long side size.

Node options:

- aspect_ratio: Several common frame ratios are provided. Alternatively, choose "original" to keep the original ratio, or customize the ratio using "custom".

- proportional_width: Proportional width. If the aspect_ratio option is not "custom", this setting is ignored.

- proportional_height: Proportional height. If the aspect_ratio option is not "custom", this setting is ignored.

- fit: Scale aspect ratio mode. When the aspect ratio of the original image does not match the scaled size, there are three modes to choose from. The letterbox mode retains the complete frame and fills the blank spaces with black. The crop mode retains the complete short edge, and any excess of the long edge is cut off. The fill mode does not maintain the frame ratio and fills the target width and height.

- method: Scaling sampling methods, including lanczos, bicubic, hamming, bilinear, box, and nearest.

- round_to_multiple: Round to a multiple. For example, setting it to 8 will force the width and height to be multiples of 8.

- scale_by_longest_side: Allow scaling by long edge size.

- longest_side: When scale_by_longest_side is set to True, this value is used as the length of the long edge of the image. When original_size has input, this setting is ignored.

Outputs:

- image: If there is an image input, the scaled image will be output.

- mask: If there is a mask input, the scaled mask will be output.

- original_size: The original size data of the image is used for subsequent node recovery.

- width: The output image's width.

- height: The output image's height.
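
A custom ratio combined with round_to_multiple can be sketched as a size calculation. The function `size_for_aspect_ratio` is hypothetical, and rounding to the nearest multiple is an assumption: the text above states only that the width and height are forced to multiples, not in which direction they are rounded.

```python
def size_for_aspect_ratio(longest_side, proportional_width, proportional_height,
                          round_to_multiple=8):
    """Return (width, height) for a ratio, snapped to a multiple (illustrative)."""
    if proportional_width >= proportional_height:
        width = longest_side
        height = longest_side * proportional_height / proportional_width
    else:
        height = longest_side
        width = longest_side * proportional_width / proportional_height

    def snap(value):
        # Nearest multiple, never smaller than one multiple (assumed behavior).
        return max(round_to_multiple, round(value / round_to_multiple) * round_to_multiple)

    return snap(width), snap(height)


print(size_for_aspect_ratio(1024, 16, 9))   # landscape 16:9
print(size_for_aspect_ratio(1000, 3, 4))    # portrait 3:4
```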

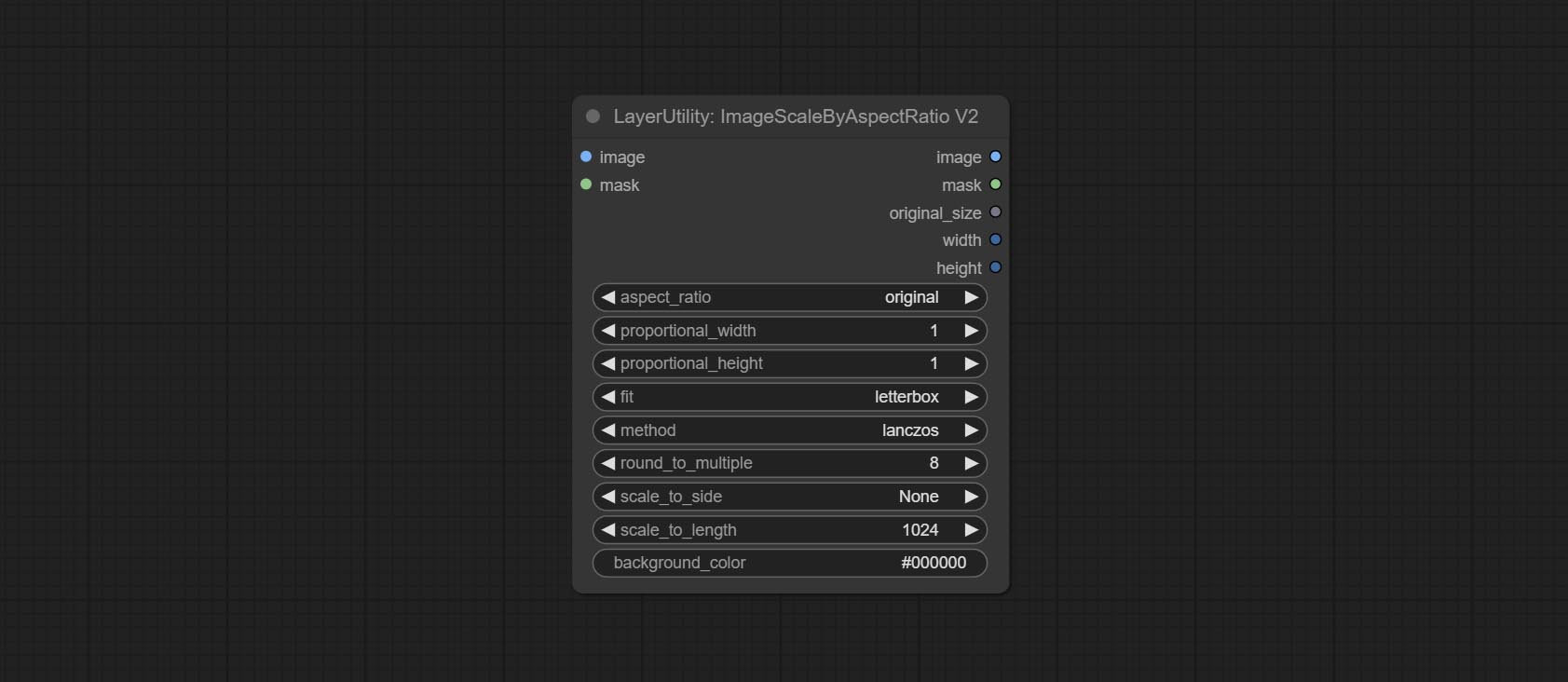

ImageScaleByAspectRatioV2

V2 Upgraded Version of ImageScaleByAspectRatio

Node options:

The following changes have been made based on ImageScaleByAspectRatio:

- scale_to_side: Allow scaling by specified dimensions for long, short, width, height, or total pixels.

- scale_to_length: The numerical value here serves as the length of the specified edge or the total pixels (kilo pixels) for scale_to_side.

- background_color 4 : The color of the background.
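
When scaling to a total pixel count, the output size follows from the source aspect ratio. The sketch below is an illustration under assumptions: the function `size_for_total_pixels` is hypothetical, one kilo pixel is taken to mean 1000 pixels, and the source aspect ratio is assumed to be preserved.

```python
import math


def size_for_total_pixels(width, height, kilo_pixels):
    """Return (width, height) with about kilo_pixels * 1000 pixels in total."""
    # Both edges are scaled by the same ratio, so the area scales by ratio ** 2.
    ratio = math.sqrt(kilo_pixels * 1000 / (width * height))
    return round(width * ratio), round(height * ratio)


print(size_for_total_pixels(2000, 1000, 500))
```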

QWenImage2Prompt

Infer prompts from the image. This node is a repackage of the UForm-Gen2 Qwen Node from ComfyUI_VLM_nodes, thanks to the original author. Download the model files from huggingface or Baidu Netdisk to the ComfyUI/models/LLavacheckpoints/files_for_uform_gen2_qwen folder.

Node Options:

- question: Prompt of UForm-Gen-QWen model.

LlamaVision

Use the Llama 3.2 vision model for local inference. Can be used to generate prompt words. Part of the code for this node comes from ComfyUI-PixtralLlamaMolmoVision, thanks to the original author. To use this node, transformers needs to be upgraded to 4.45.0 or higher. Download the models from BaiduNetdisk or huggingface/SeanScripts, and copy them to ComfyUI/models/LLM.

Node Options:

- image: Image input.

- model: Currently, only the "Llama-3.2-11B-Vision-Instruct-nf4" is available.

- system_prompt: System prompt words for LLM model.

- user_prompt: User prompt words for LLM model.

- max_new_tokens: max_new_tokens for LLM model.

- do_sample: do_sample for LLM model.

- top_p: top_p for LLM model.

- top_k: top_k for LLM model.

- stop_strings: The stop strings.

- seed: The seed of random number.

- control_after_generate: Seed change options. If this option is fixed, the generated random number will always be the same.

- include_prompt_in_output: Whether the output contains the prompt words.

- cache_model: Whether to cache the model.

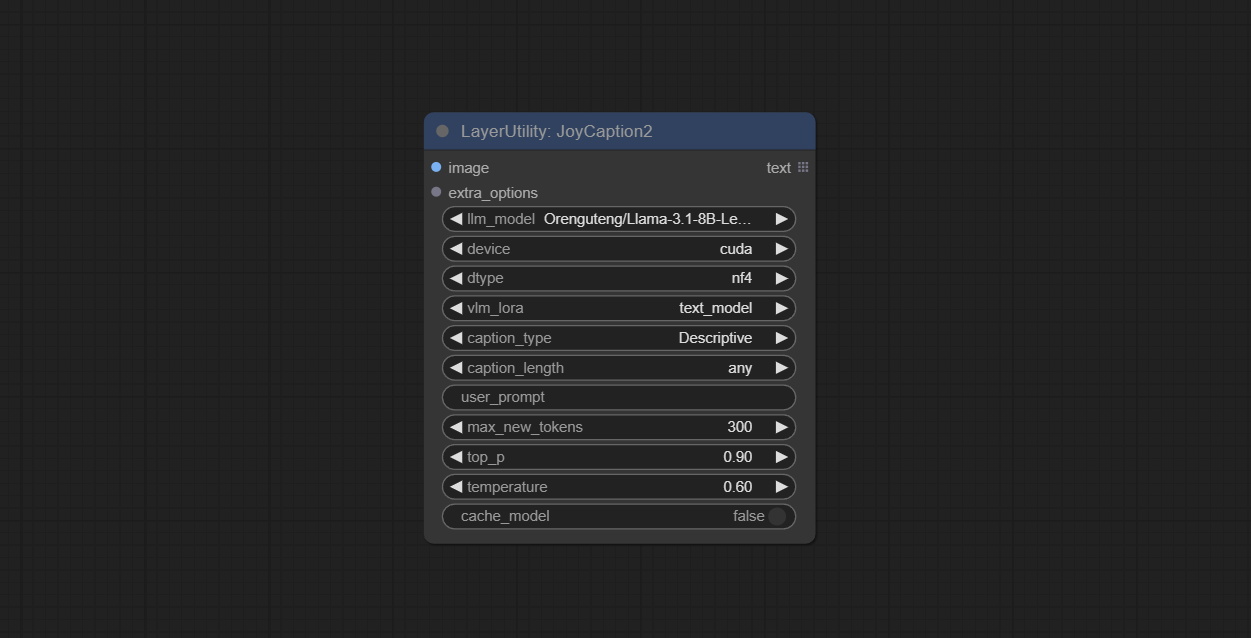

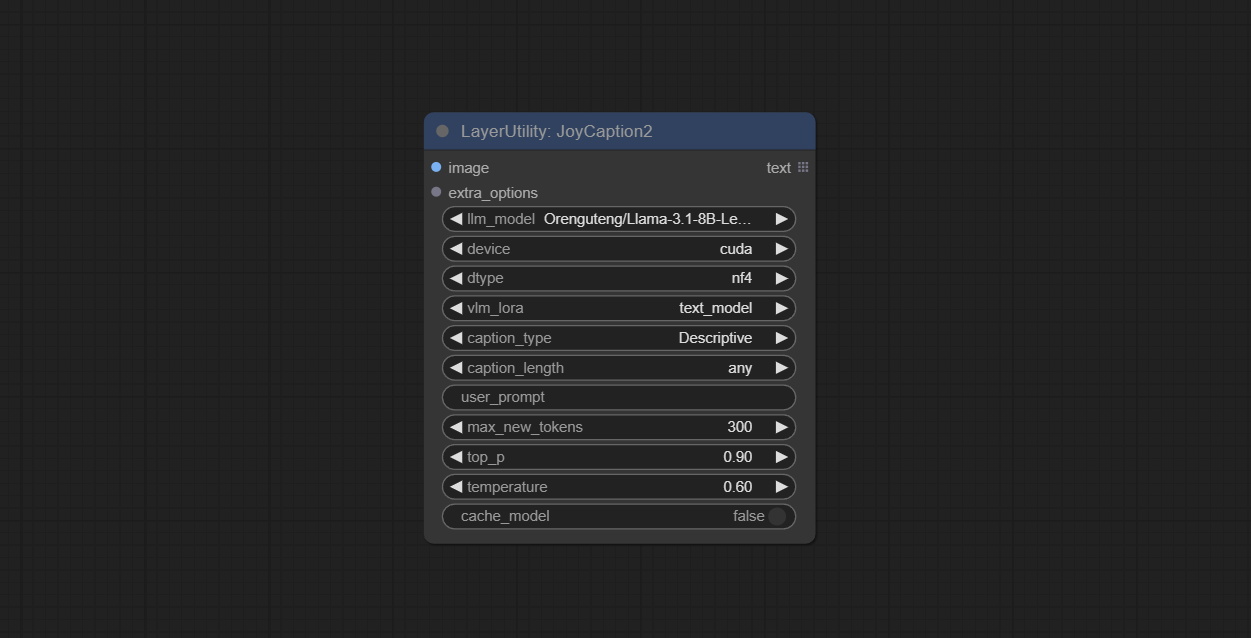

JoyCaption2

Use the JoyCaption-alpha-two model for local inference. Can be used to generate prompt words. This node is an implementation of https://huggingface.co/John6666/joy-caption-alpha-two-cli-mod in ComfyUI, thanks to the original author. Download the models from BaiduNetdisk and BaiduNetdisk, or huggingface/Orenguteng and huggingface/unsloth, then copy them to ComfyUI/models/LLM. Download the models from BaiduNetdisk or huggingface/google, and copy them to ComfyUI/models/clip. Download the cgrkzexw-599808 folder from BaiduNetdisk or huggingface/John6666, and copy it to ComfyUI/models/Joy_caption.

Node Options:

- image: Image input.

- extra_options: Input the extra_options.

- llm_model: There are two LLM models to choose, Orenguteng/Llama-3.1-8B-Lexi-Uncensored-V2 and unsloth/Meta-Llama-3.1-8B-Instruct.

- device: Model loading device. Currently, only CUDA is supported.

- dtype: Model precision, nf4 and bf16.

- vlm_lora: Whether to load text_model.

- caption_type: Caption type options, including: "Descriptive", "Descriptive (Informal)", "Training Prompt", "MidJourney", "Booru tag list", "Booru-like tag list", "Art Critic", "Product Listing", "Social Media Post".

- caption_length: The length of caption.

- user_prompt: User prompt words for LLM model. If there is content here, it will overwrite all the settings for caption_type and extra_options.

- max_new_tokens: The max_new_tokens parameter of LLM.

- do_sample: The do_sample parameter of LLM.

- top_p: The top_p parameter of LLM.

- temperature: The temperature parameter of LLM.

- cache_model: Whether to cache the model.

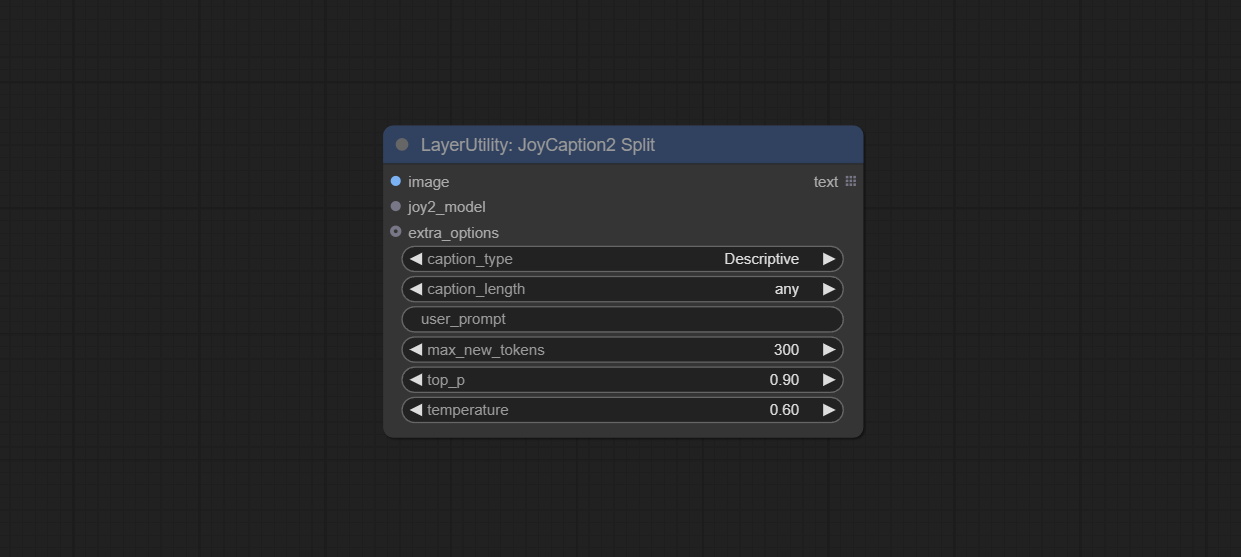

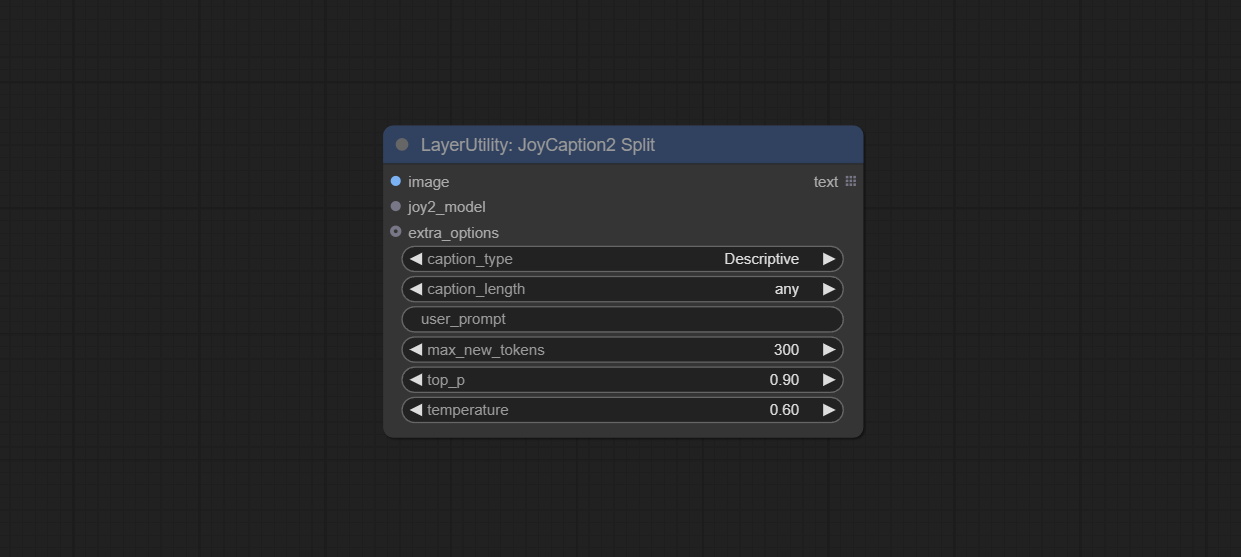

JoyCaption2Split

This node separates JoyCaption2 model loading from inference. When multiple JoyCaption2 nodes are used, the model can be shared to improve efficiency.

Node Options:

- image: Image input.

- joy2_model: The JoyCaption model input.

- extra_options: Input the extra_options.

- caption_type: Caption type options, including: "Descriptive", "Descriptive (Informal)", "Training Prompt", "MidJourney", "Booru tag list", "Booru-like tag list", "Art Critic", "Product Listing", "Social Media Post".

- caption_length: The length of caption.

- user_prompt: User prompt words for LLM model. If there is content here, it will overwrite all the settings for caption_type and extra_options.

- max_new_tokens: The max_new_tokens parameter of LLM.

- do_sample: The do_sample parameter of LLM.

- top_p: The top_p parameter of LLM.

- temperature: The temperature parameter of LLM.

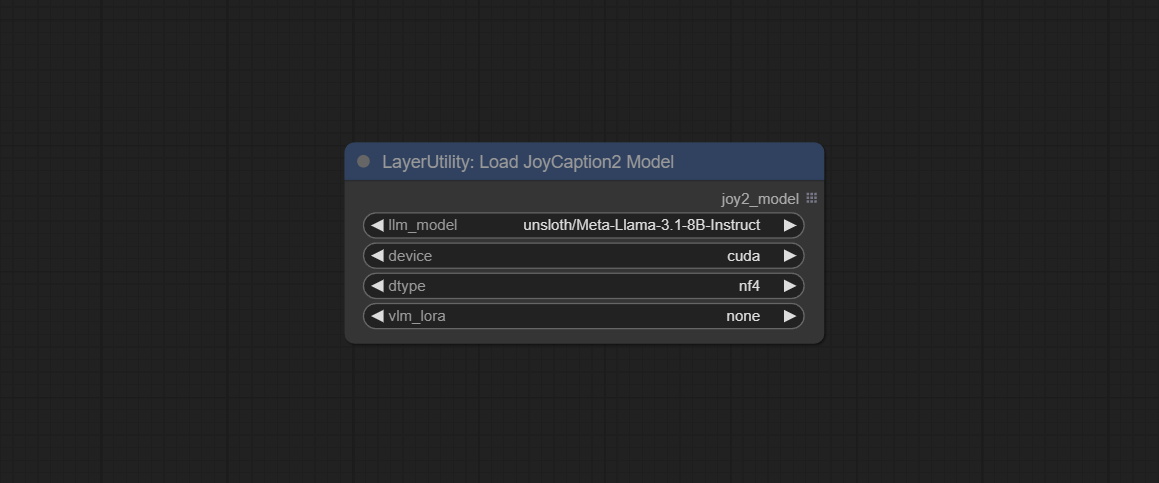

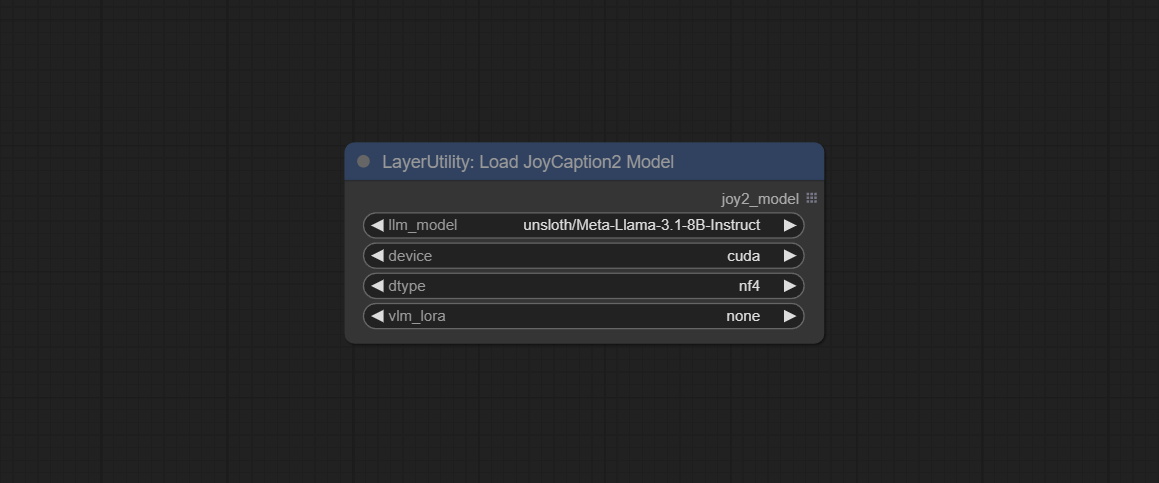

LoadJoyCaption2Model

JoyCaption2's model loading node, used in conjunction with JoyCaption2Split.

Node Options:

- llm_model: There are two LLM models to choose, Orenguteng/Llama-3.1-8B-Lexi-Uncensored-V2 and unsloth/Meta-Llama-3.1-8B-Instruct.

- device: Model loading device. Currently, only CUDA is supported.

- dtype: Model precision, nf4 and bf16.

- vlm_lora: Whether to load text_model.

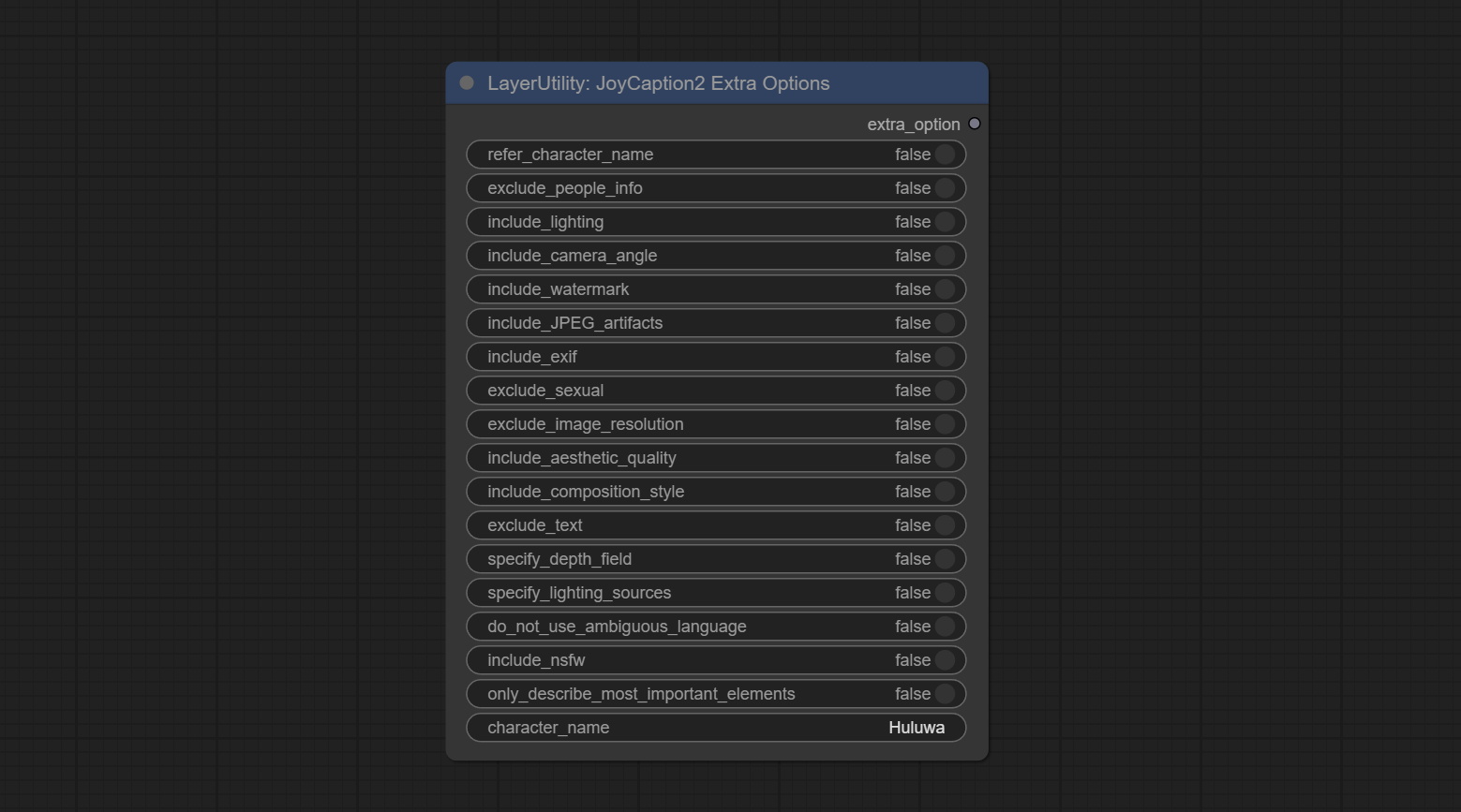

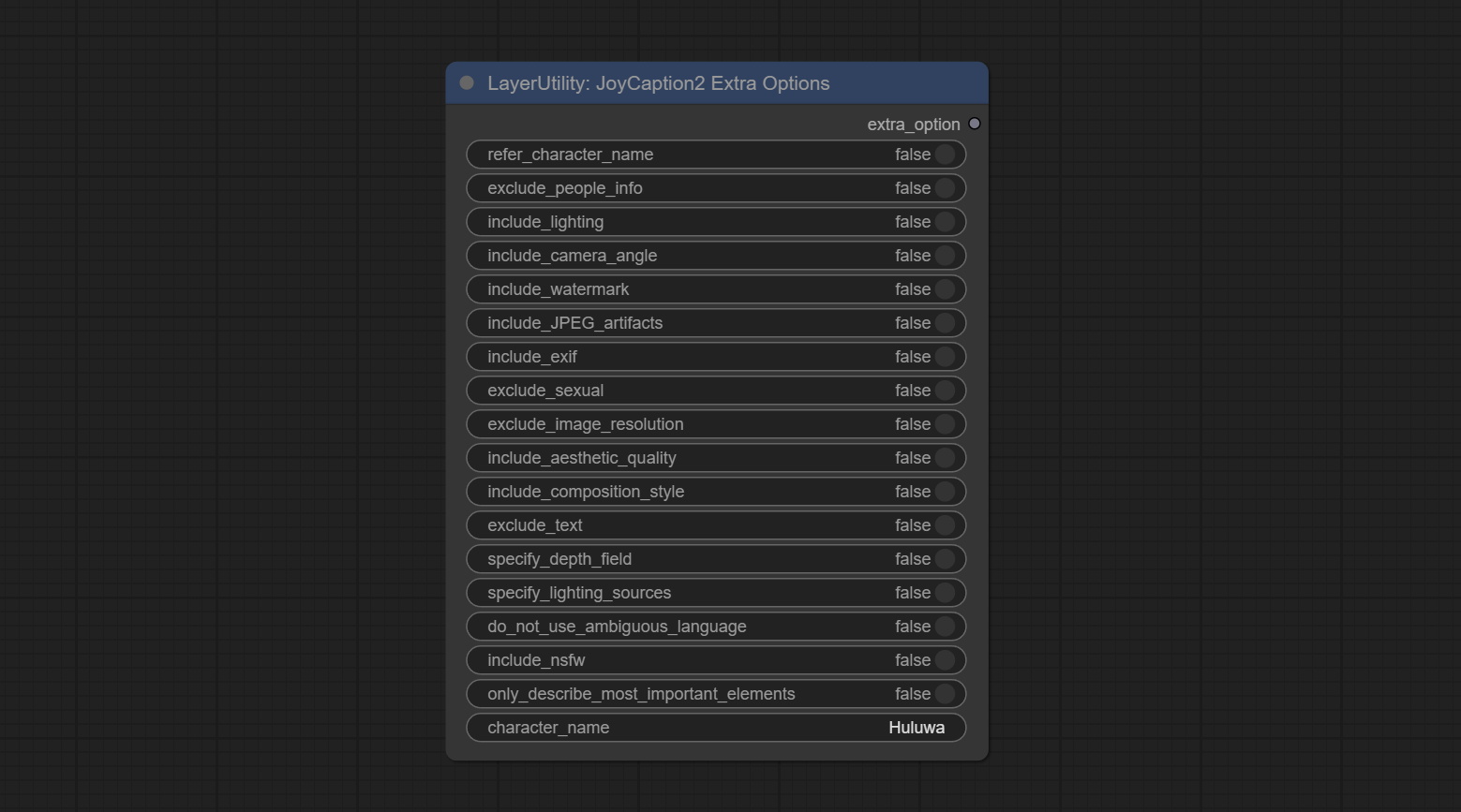

JoyCaption2ExtraOptions

The extra_options parameter node of JoyCaption2.

Node Options:

- refer_character_name: If there is a person/character in the image you must refer to them as {name}.

- exclude_people_info: Do NOT include information about people/characters that cannot be changed (like ethnicity, gender, etc), but do still include changeable attributes (like hair style).

- include_lighting: Include information about lighting.

- include_camera_angle: Include information about camera angle.

- include_watermark: Include information about whether there is a watermark or not.

- include_JPEG_artifacts: Include information about whether there are JPEG artifacts or not.

- include_exif: If it is a photo you MUST include information about what camera was likely used and details such as aperture, shutter speed, ISO, etc.

- exclude_sexual: Do NOT include anything sexual; keep it PG.

- exclude_image_resolution: Do NOT mention the image's resolution.

- include_aesthetic_quality: You MUST include information about the subjective aesthetic quality of the image from low to very high.

- include_composition_style: Include information on the image's composition style, such as leading lines, rule of thirds, or symmetry.

- exclude_text: Do NOT mention any text that is in the image.

- specify_depth_field: Specify the depth of field and whether the background is in focus or blurred.

- specify_lighting_sources: If applicable, mention the likely use of artificial or natural lighting sources.

- do_not_use_ambiguous_language: Do NOT use any ambiguous language.

- include_nsfw: Include whether the image is sfw, suggestive, or nsfw.

- only_describe_most_important_elements: ONLY describe the most important elements of the image.

- character_name: Person/character name, used if refer_character_name is selected.

PhiPrompt

Use the Microsoft Phi 3.5 text and visual models for local inference. Can be used to generate prompt words, process prompt words, or infer prompt words from images. Running this model requires at least 16GB of video memory. Download the model files from BaiduNetdisk or huggingface.co/microsoft/Phi-3.5-vision-instruct and huggingface.co/microsoft/Phi-3.5-mini-instruct and copy them to the ComfyUI/models/LLM folder.

Node Options:

- image: Optional input. The input image will serve as the input for Phi-3.5-vision-instruct.

- model: Selectable to load Phi-3.5-vision-instruct or Phi-3.5-mini-instruct model. The default value of auto will automatically load the corresponding model based on whether there is image input.

- device: Model loading device. Supports CPU and CUDA.

- dtype: The model loading accuracy has three options: fp16, bf16, and fp32.

- cache_model: Whether to cache the model.

- system_prompt: The system prompt of Phi-3.5-mini-instruct.

- user_prompt: User prompt words for LLM model.

- do_sample: The do_sample parameter of LLM; defaults to True.

- temperature: The temperature parameter of LLM; defaults to 0.5.

- max_new_tokens: The max_new_tokens parameter of LLM; defaults to 512.

UserPromptGeneratorTxtImg

UserPrompt preset for generating SD text to image prompt words.

Node options:

- template: Prompt word template. Currently, only the 'SD txt2img prompt' is available.

- describe: Prompt word description. Enter a simple description here.

- limit_word: Maximum length limit for output prompt words. For example, 200 means that the output text will be limited to 200 words.

UserPromptGeneratorTxtImgWithReference

UserPrompt preset for generating SD text to image prompt words based on input content.

Node options:

- reference_text: Reference text input. Usually it is a style description of the image.

- template: Prompt word template. Currently, only the 'SD txt2img prompt' is available.

- describe: Prompt word description. Enter a simple description here.

- limit_word: Maximum length limit for output prompt words. For example, 200 means that the output text will be limited to 200 words.

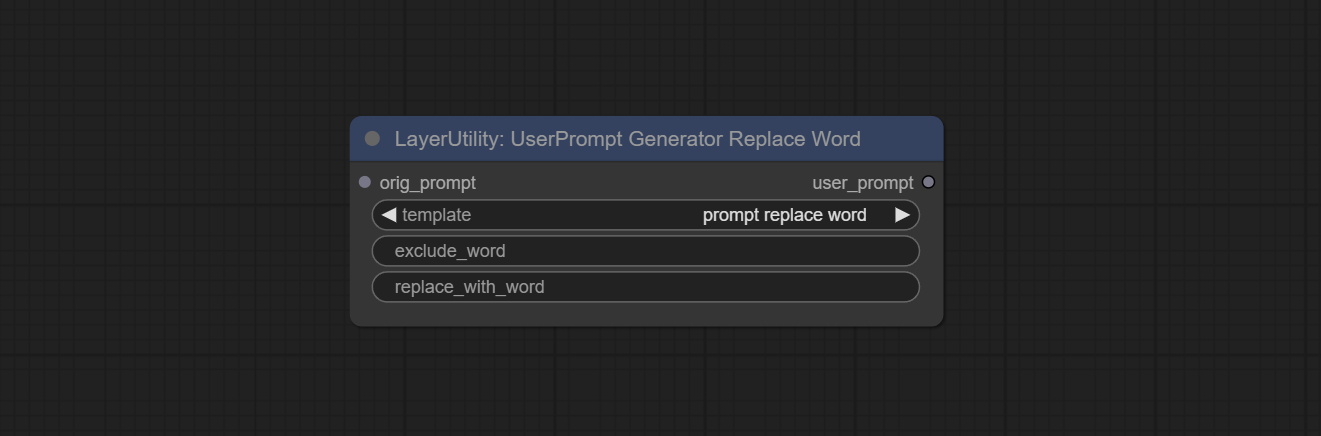

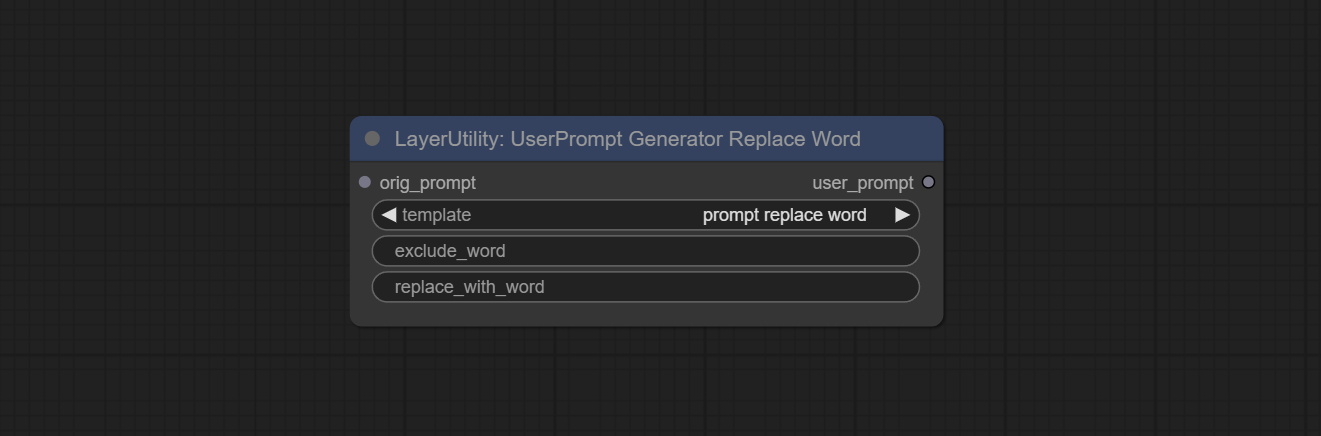

UserPromptGeneratorReplaceWord

UserPrompt preset used to replace a keyword in text with different content. This is not only a simple replacement, but also a logical sorting of the text based on the context of the prompt words to achieve the rationality of the output content.

Node options:

- orig_prompt: Original prompt word input.

- template: Prompt word template. Currently, only 'prompt replace word' is available.

- exclude_word: Keywords that need to be excluded.

- replace_with_word: That word will replace the exclude_word.

PromptTagger

Infer prompts from the image. It can also replace keywords in the prompt. This node currently uses the Google Gemini API as the backend service. Please ensure that the network environment can use Gemini normally. Apply for your API key on Google AI Studio and fill it in api_key.ini. This file is located in the root directory of the plug-in, and its default name is api_key.ini.example. To use this file for the first time, change the file suffix to .ini. Open it with a text editor, fill in your API key after google_api_key= and save it.

Node options:

- api: The API used. At present, there are two options: "gemini-1.5-flash" and "google-gemini".

- token_limit: The maximum token limit for generating prompt words.

- exclude_word: Keywords that need to be excluded.

- replace_with_word: That word will replace the exclude_word.

PromptEmbellish

Enter simple prompt words and output polished prompt words. Images can be input as references, and Chinese input is supported. This node currently uses the Google Gemini API as the backend service. Please ensure that the network environment can use Gemini normally. Apply for your API key on Google AI Studio and fill it in api_key.ini. This file is located in the root directory of the plug-in, and its default name is api_key.ini.example. To use this file for the first time, change the file suffix to .ini. Open it with a text editor, fill in your API key after google_api_key= and save it.

Node options:

- image: Optional. Input image as a reference for the prompt words.

- api: The API used. At present, there are two options: "gemini-1.5-flash" and "google-gemini".

- token_limit: The maximum token limit for generating prompt words.

- discribe: Enter a simple description here. Chinese text input is supported.

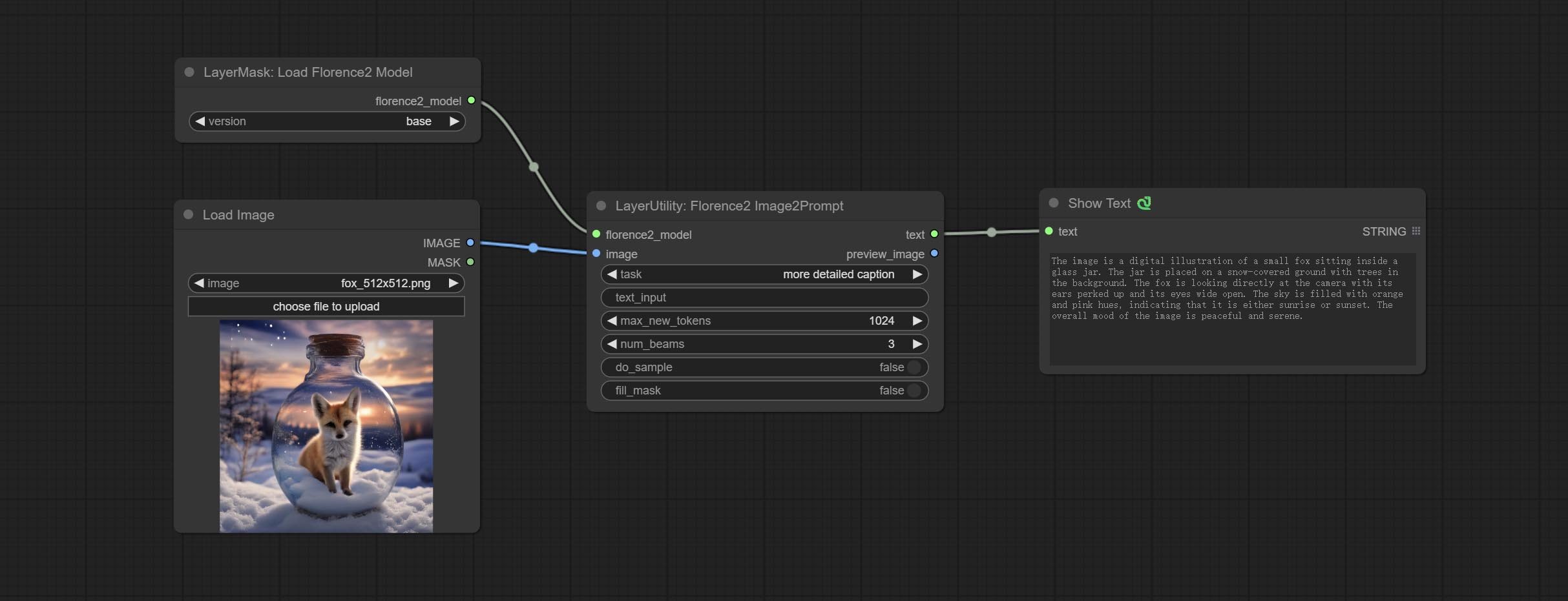

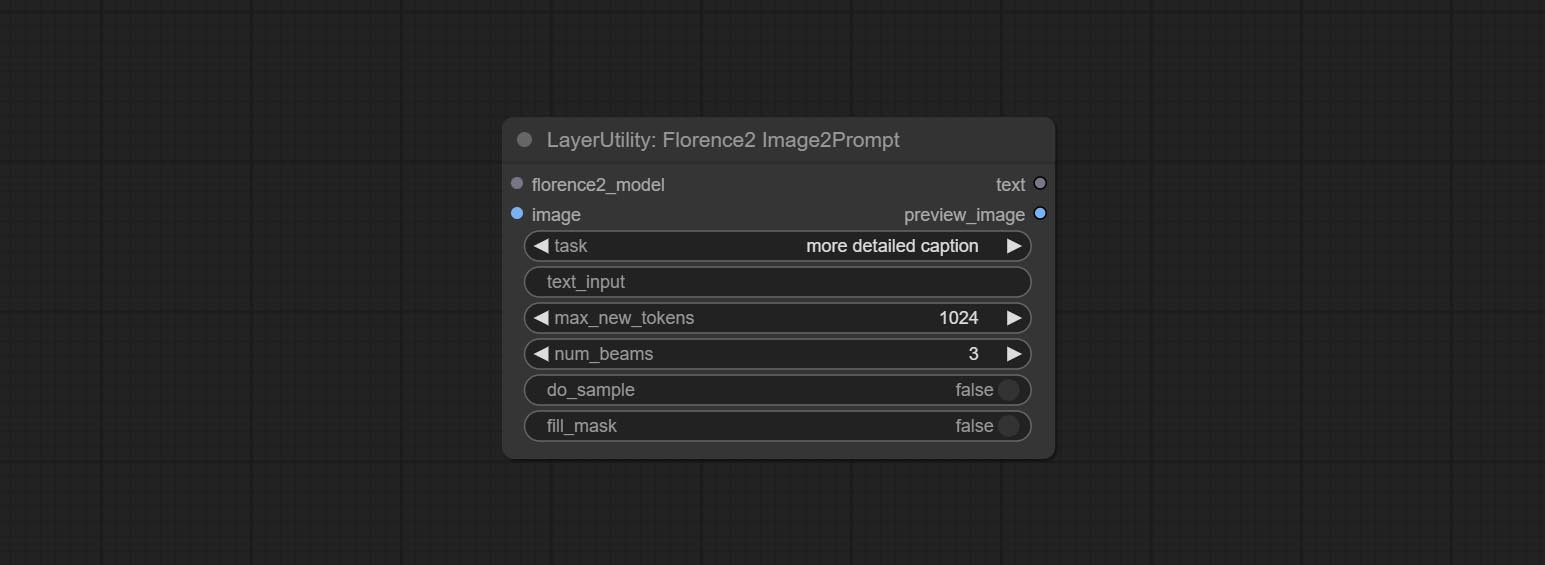

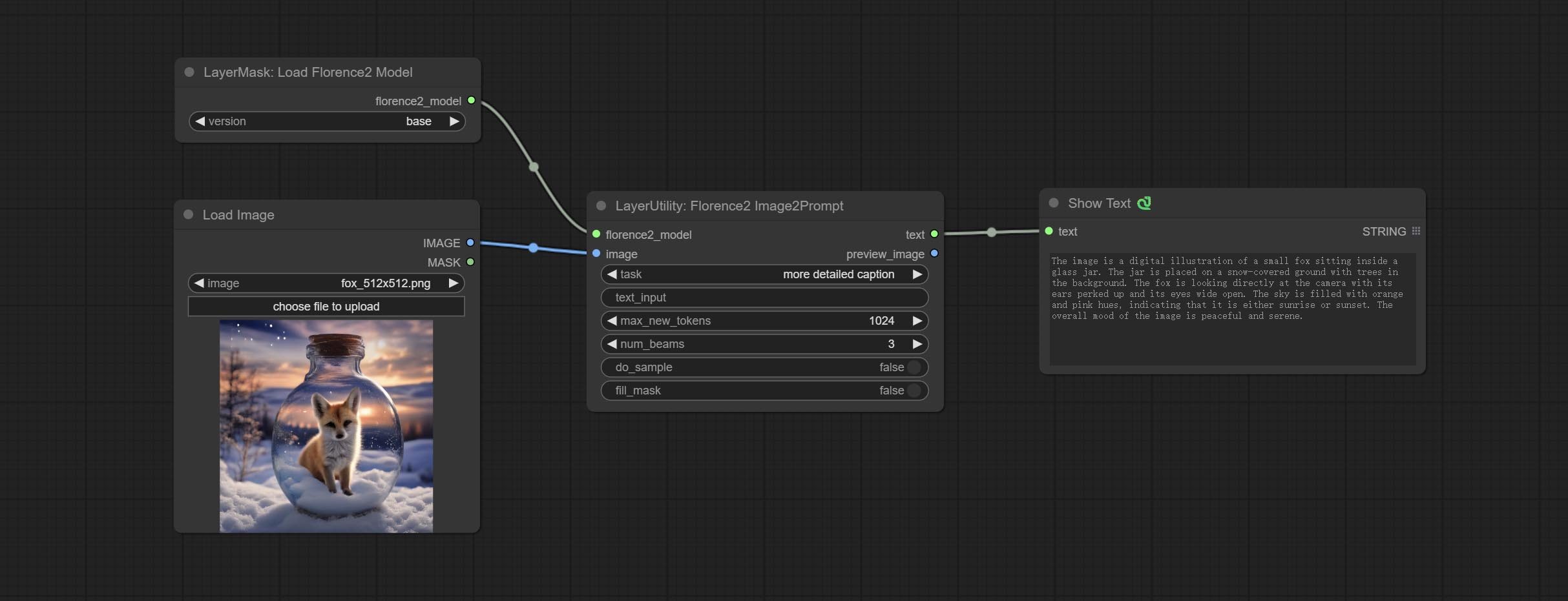

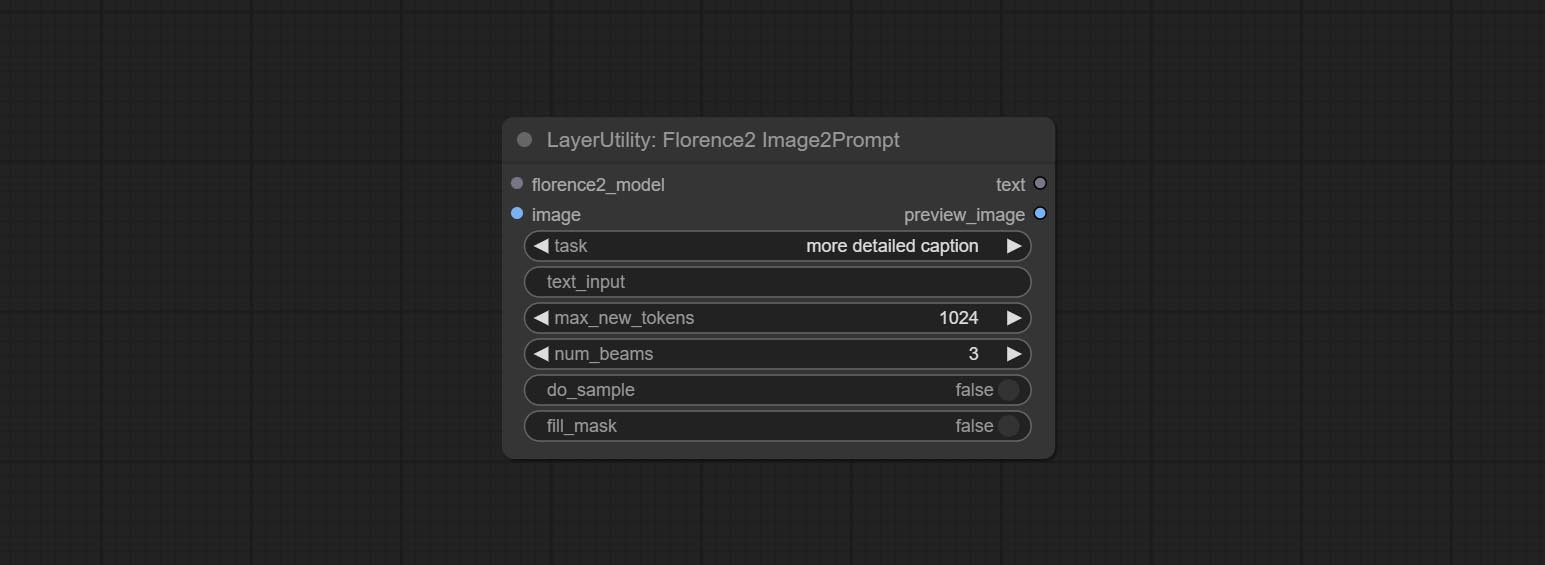

Florence2Image2Prompt

Use the Florence 2 model to infer prompt words. The code for this node is from yiwangsimple/florence_dw, thanks to the original author. *The model is downloaded automatically on first use. You can also download the model file from BaiduNetdisk to the ComfyUI/models/florence2 folder.

Node Options:

- florence2_model: Florence2 model input.

- image: Image input.

- task: Select the task for florence2.

- text_input: Text input for florence2.

- max_new_tokens: The maximum number of tokens for generating text.

- num_beams: The number of beams used by beam search when generating text.

- do_sample: Whether to use sampling when generating text.

- fill_mask: Whether to use text marker mask filling.

VQAPrompt

Use the blip-vqa model for visual question answering. Part of the code for this node is referenced from celoron/ComfyUI-VisualQueryTemplate, thanks to the original author.

*Download the model files from BaiduNetdisk or huggingface.co/Salesforce/blip-vqa-capfilt-large and huggingface.co/Salesforce/blip-vqa-base and copy them to the ComfyUI/models/VQA folder.

Node Options:

- image: The image input.

- vqa_model: The VQA model input, provided by the LoadVQAModel node.

- question: Task text input. Each question is enclosed in curly braces "{}", and its answer replaces it at the same position in the text output. Multiple questions can be defined with curly braces in a single Q&A. For example, for a picture of an item placed in a scene, the question is: "{object color} {object} on the {scene}".
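The curly-brace substitution can be sketched as follows. The function fill_questions and the canned answer table are hypothetical stand-ins: in the node the answers come from the blip-vqa model, which is not called here.

```python
import re
from typing import Callable


def fill_questions(template: str, answer: Callable[[str], str]) -> str:
    """Replace every {question} in the template with answer(question)."""
    return re.sub(r"\{([^{}]*)\}", lambda m: answer(m.group(1).strip()), template)


# Hypothetical stand-in for the model: a lookup table of canned answers.
canned = {"object color": "red", "object": "cup", "scene": "table"}
result = fill_questions("{object color} {object} on the {scene}", canned.__getitem__)
print(result)  # red cup on the table
```

Text outside the braces ("on the") is passed through unchanged, which is why the template can double as the final prompt layout.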

LoadVQAModel

Load the blip-vqa model.

Node Options:

- model: There are currently two models to choose from: "blip-vqa-base" and "blip-vqa-capfilt-large".

- precision: Model precision. There are two options: "fp16" and "fp32".

- device: The device that runs the model. There are two options: "cuda" and "cpu".

ImageShift

Shift the image. This node can also output a mask of the displacement seam, which makes it convenient to create continuous (tileable) textures.

Node options:

- image 5 : The input image.

- mask 2,5 : The mask of image.

- shift_x: Horizontal distance of shift.

- shift_y: Vertical distance of shift.

- cyclic: Whether the part shifted out of bounds wraps around to the opposite side.

- background_color 4 : Background color. If cyclic is set to False, this setting is used as the background color.

- border_mask_width: Border mask width.

- border_mask_blur: Border mask blur.

- Note
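The difference between a cyclic and a non-cyclic shift can be sketched on a tiny grid of pixel values. This is an illustration only: the function shift_grid is hypothetical, and the sign convention (positive values move content right and down) is an assumption rather than documented behaviour of the node.

```python
def shift_grid(pixels, shift_x, shift_y, cyclic=True, fill=0):
    """Shift a 2D grid; wrap around when cyclic, otherwise pad with `fill`."""
    height, width = len(pixels), len(pixels[0])
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            nx, ny = x + shift_x, y + shift_y
            if cyclic:
                # Out-of-bounds content re-enters from the opposite edge.
                nx, ny = nx % width, ny % height
            elif not (0 <= nx < width and 0 <= ny < height):
                continue  # dropped; the fill (background) value stays visible
            out[ny][nx] = pixels[y][x]
    return out


grid = [[1, 2, 3],
        [4, 5, 6]]
print(shift_grid(grid, 1, 0, cyclic=True))   # [[3, 1, 2], [6, 4, 5]]
print(shift_grid(grid, 1, 0, cyclic=False))  # [[0, 1, 2], [0, 4, 5]]
```

In the cyclic case the former image edges meet inside the frame; that meeting line is the seam that the border mask is meant to cover.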

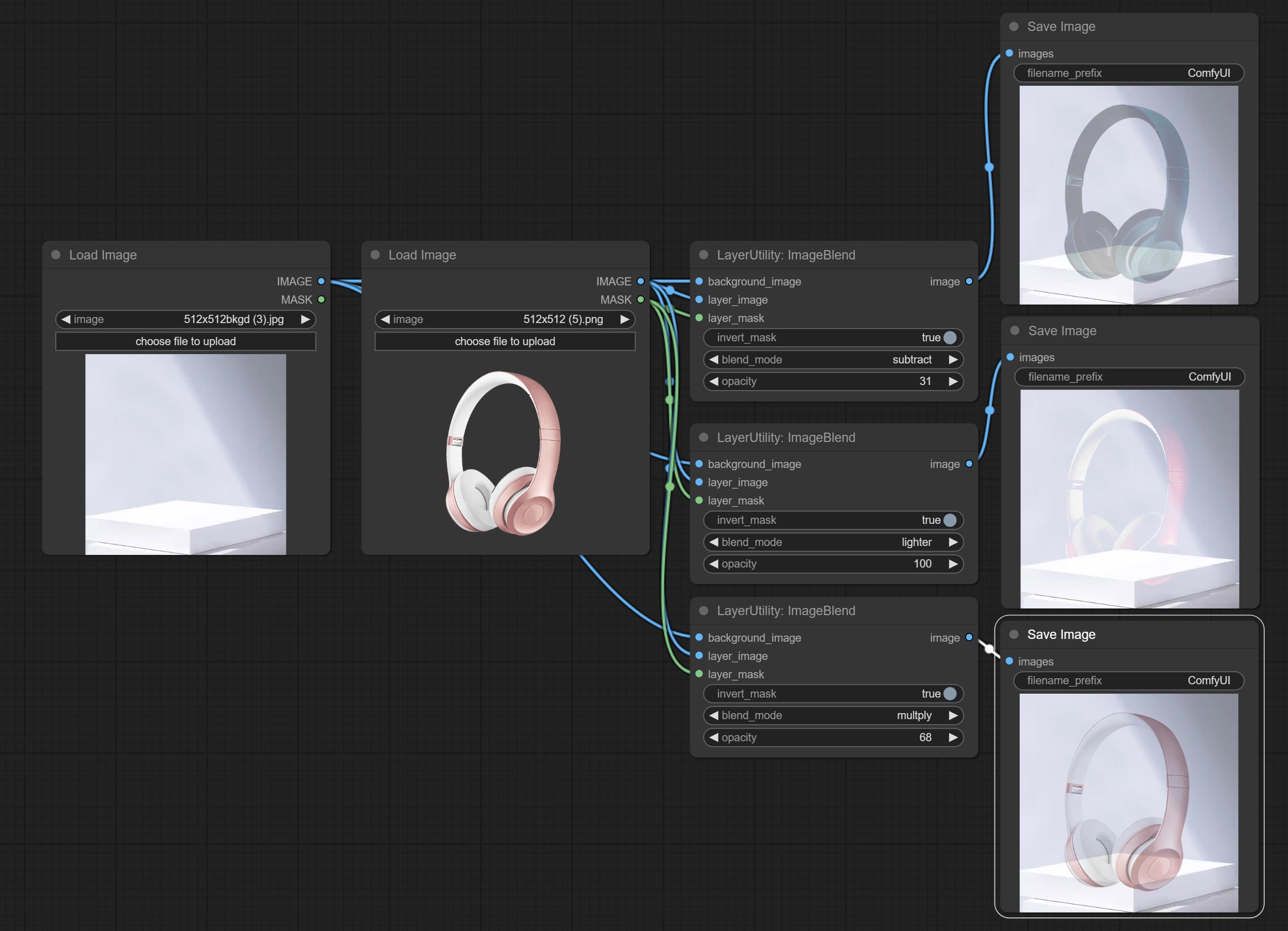

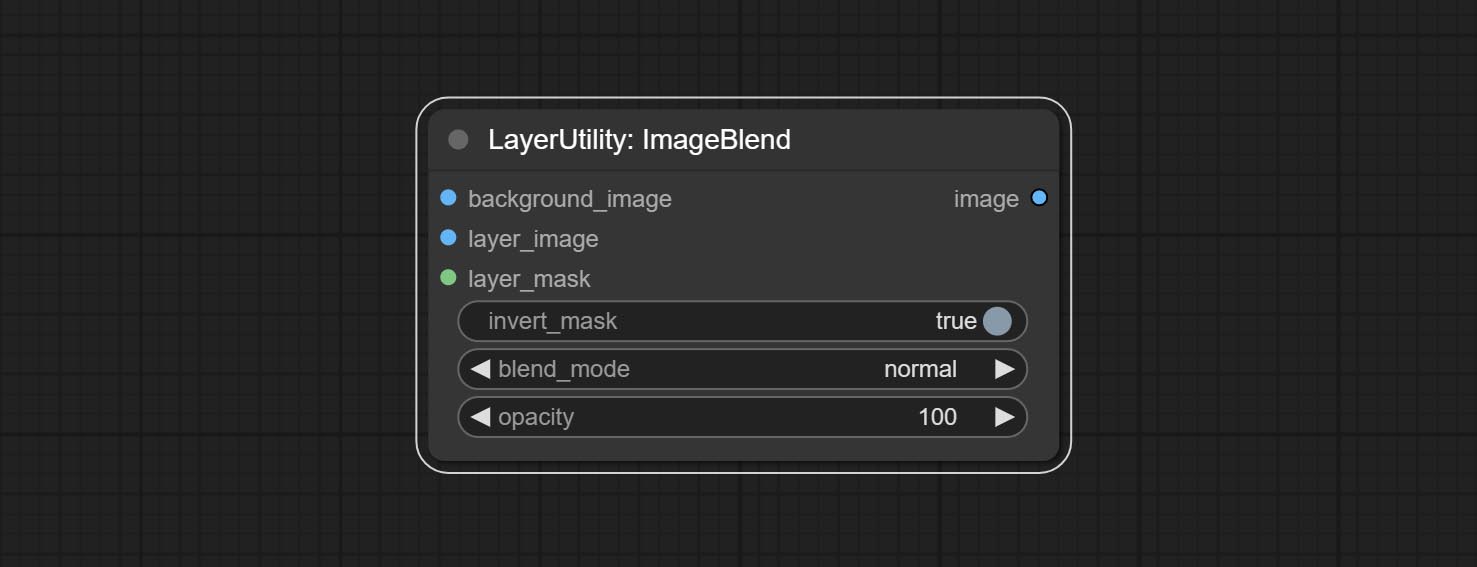

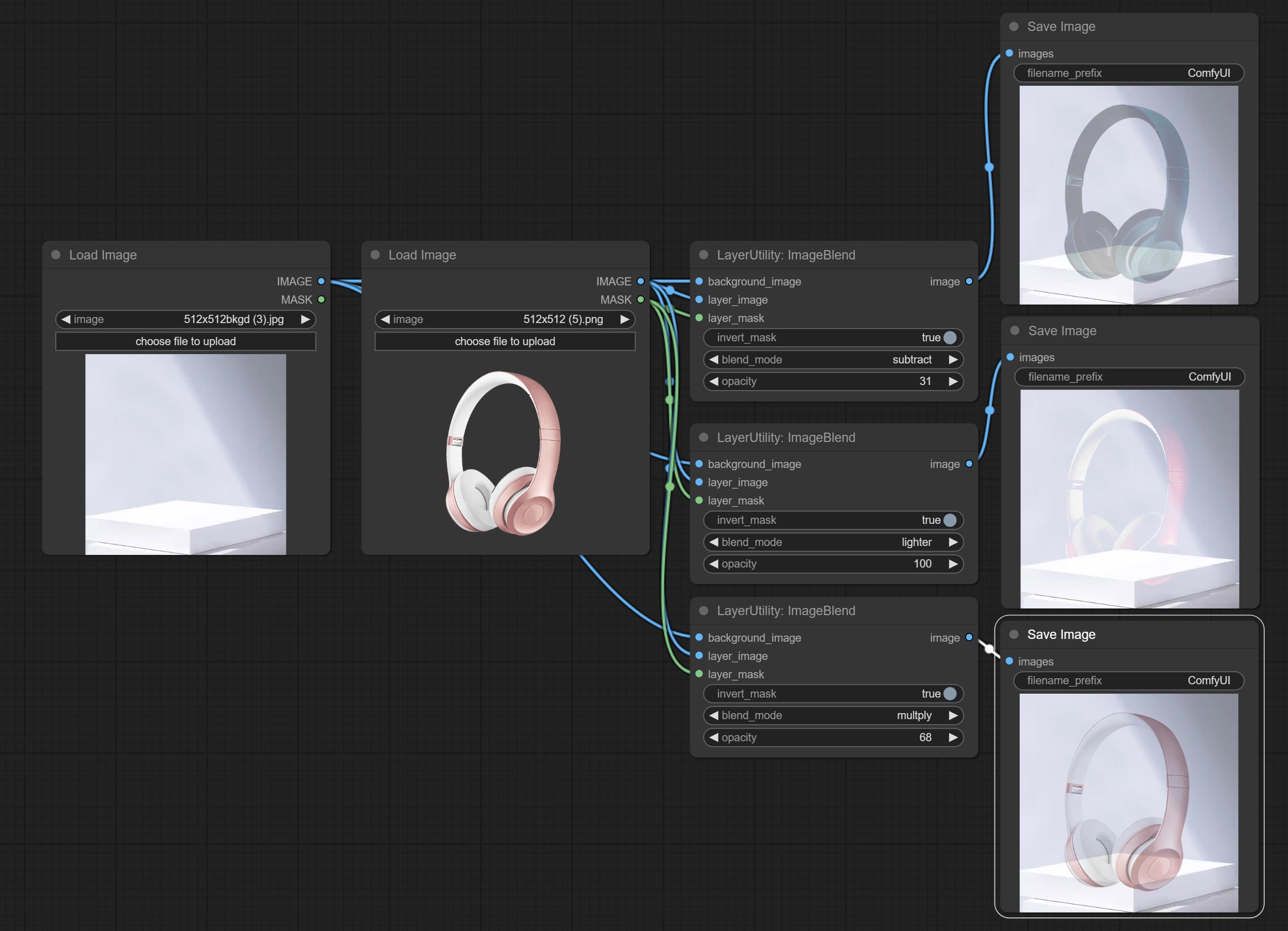

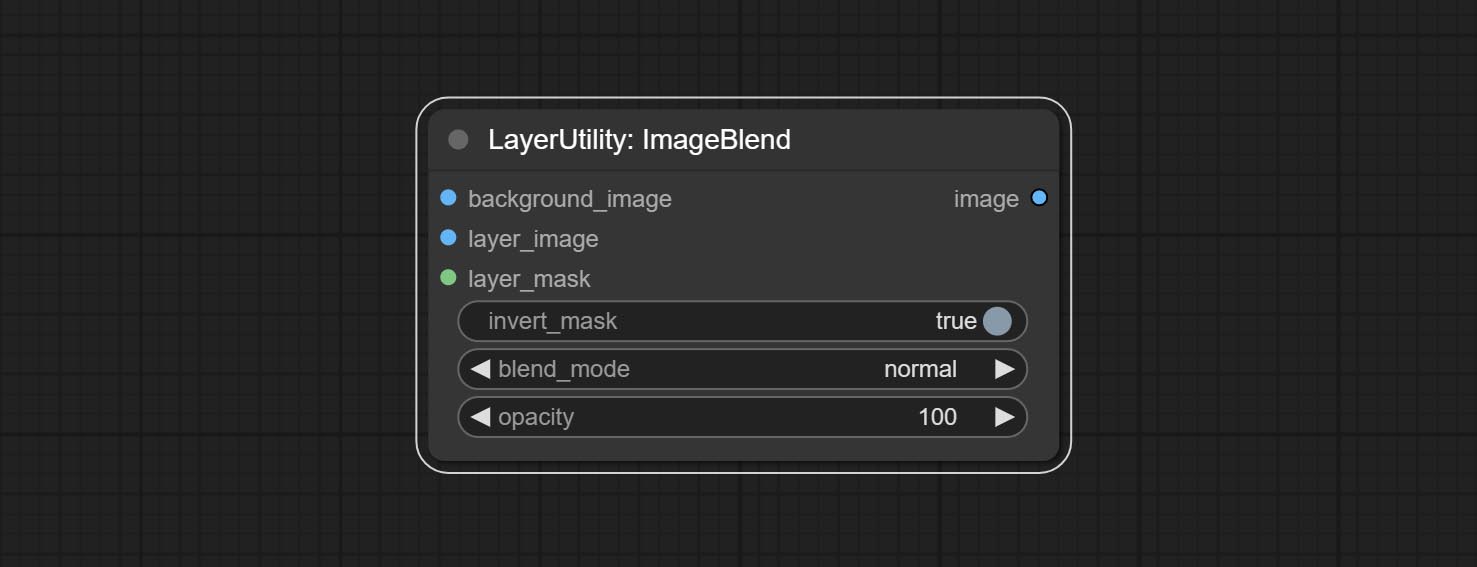

ImageBlend

A simple node for compositing a layer image onto a background image. Multiple blend modes are available, and the opacity can be set.

Node options:

- background_image 1 : The background image.

- layer_image 1 : Layer image for composite.

- layer_mask 1,2 : Mask for layer_image.

- invert_mask: Whether to reverse the mask.

- blend_mode 3 : Blending mode.

- opacity: Opacity of blend.

- Note
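How mask, blend mode and opacity might combine for a single pixel is sketched below. The function blend_pixel, the 0 to 100 opacity range, and the three mode formulas are assumptions for illustration (they are the conventional definitions of these modes), not the node's actual implementation or mode list.

```python
def blend_pixel(background, layer, mask=1.0, opacity=100, mode="normal"):
    """Blend one RGB pixel (channel values in 0..1) over a background pixel."""
    modes = {
        "normal": lambda b, l: l,
        "multiply": lambda b, l: b * l,
        "screen": lambda b, l: 1 - (1 - b) * (1 - l),
    }
    mix = modes[mode]
    # Effective coverage: mask value scaled by opacity (assumed to be a percentage).
    alpha = mask * opacity / 100.0
    return tuple(b * (1 - alpha) + mix(b, l) * alpha
                 for b, l in zip(background, layer))


print(blend_pixel((0.0, 0.0, 0.0), (1.0, 1.0, 1.0), opacity=50))  # (0.5, 0.5, 0.5)
```

With mask=0 or opacity=0 the background is returned unchanged, which matches the intuition that an empty mask hides the layer entirely.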

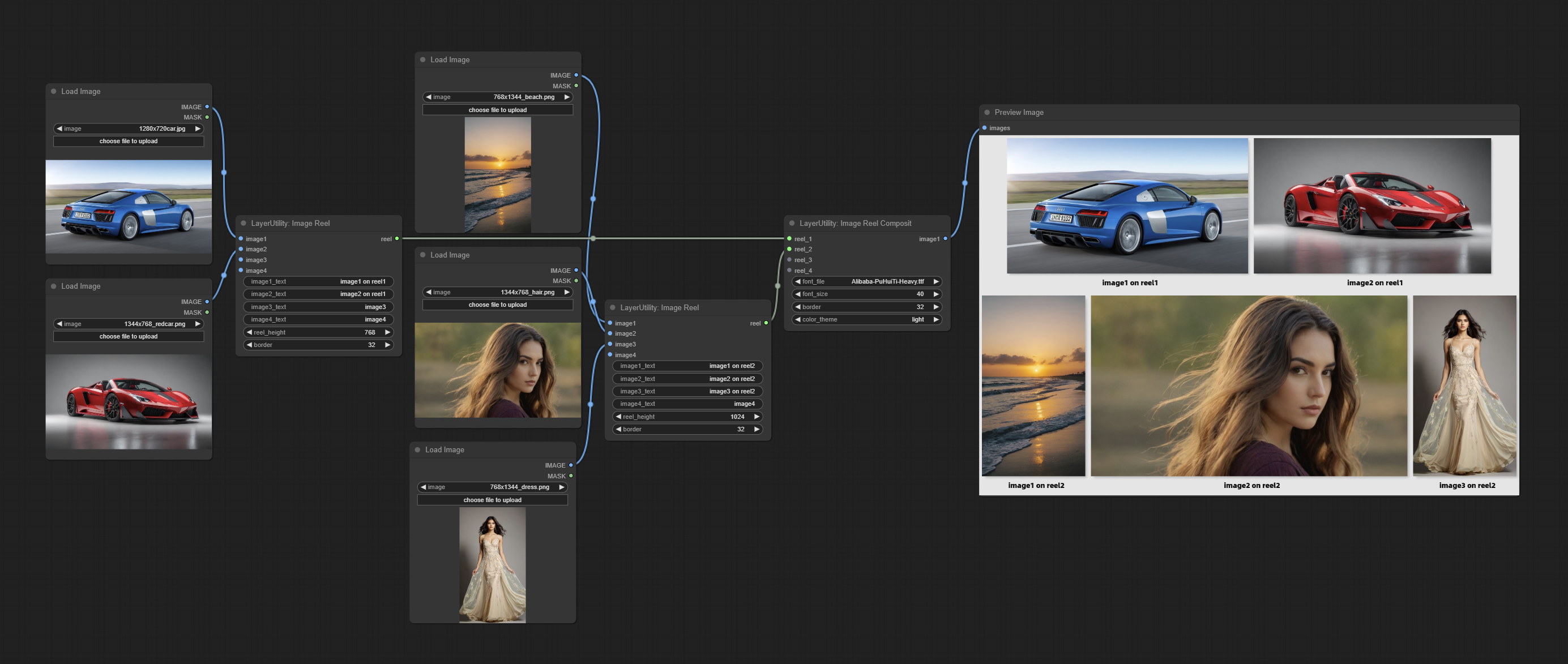

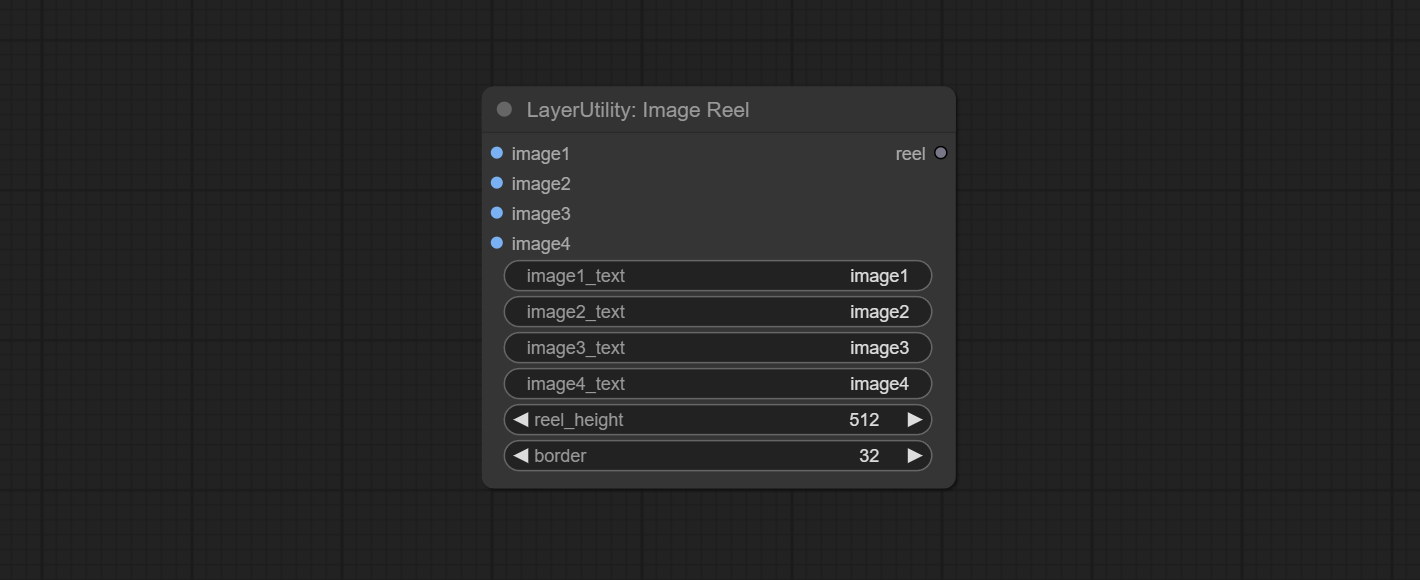

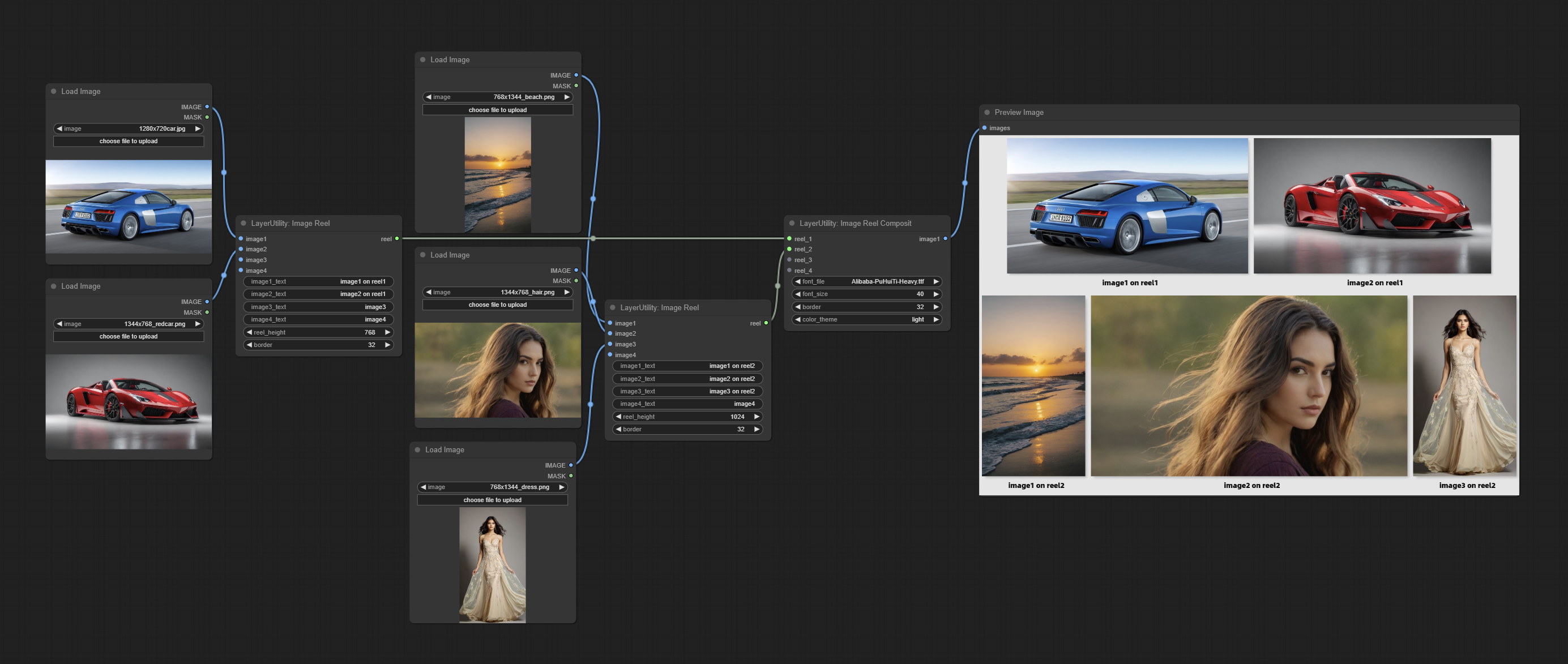

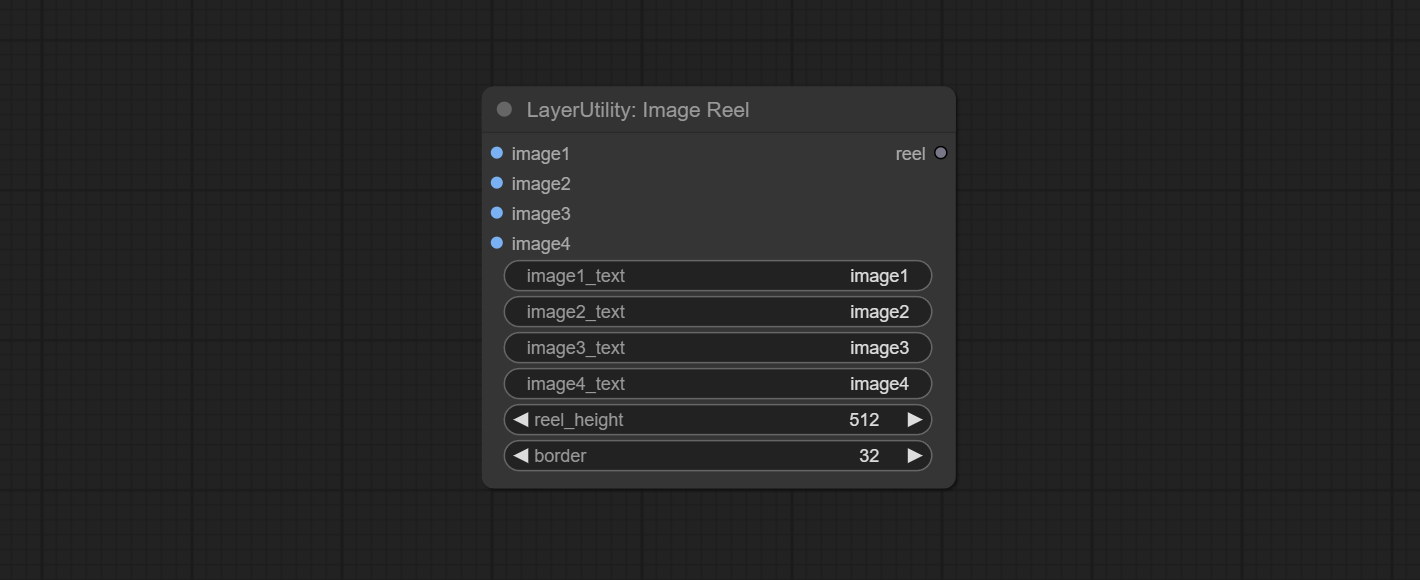

ImageReel

Display multiple images in one reel. Text annotations can be added to each image in the reel. With the ImageReelComposite node, multiple reels can be combined into one image.

Node Options:

- image1: The first image. Required.

- image2: The second image. Optional.

- image3: The third image. Optional.

- image4: The fourth image. Optional.

- image1_text: Text annotation for the first image.

- image2_text: Text annotation for the second image.

- image3_text: Text annotation for the third image.

- image4_text: Text annotation for the fourth image.

- reel_height: The height of reel.

- border: The border width of the image in the reel.

Outputs:

- reel: The reel, to be connected to an input of the ImageReelComposite node.

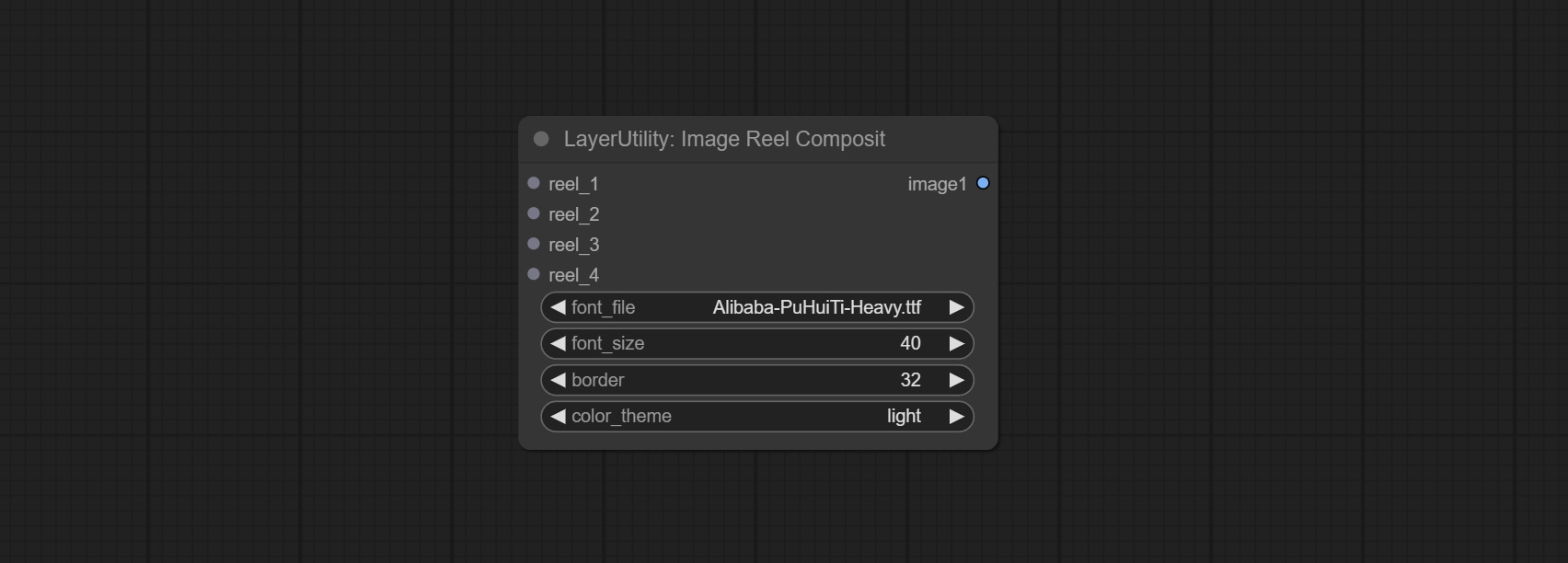

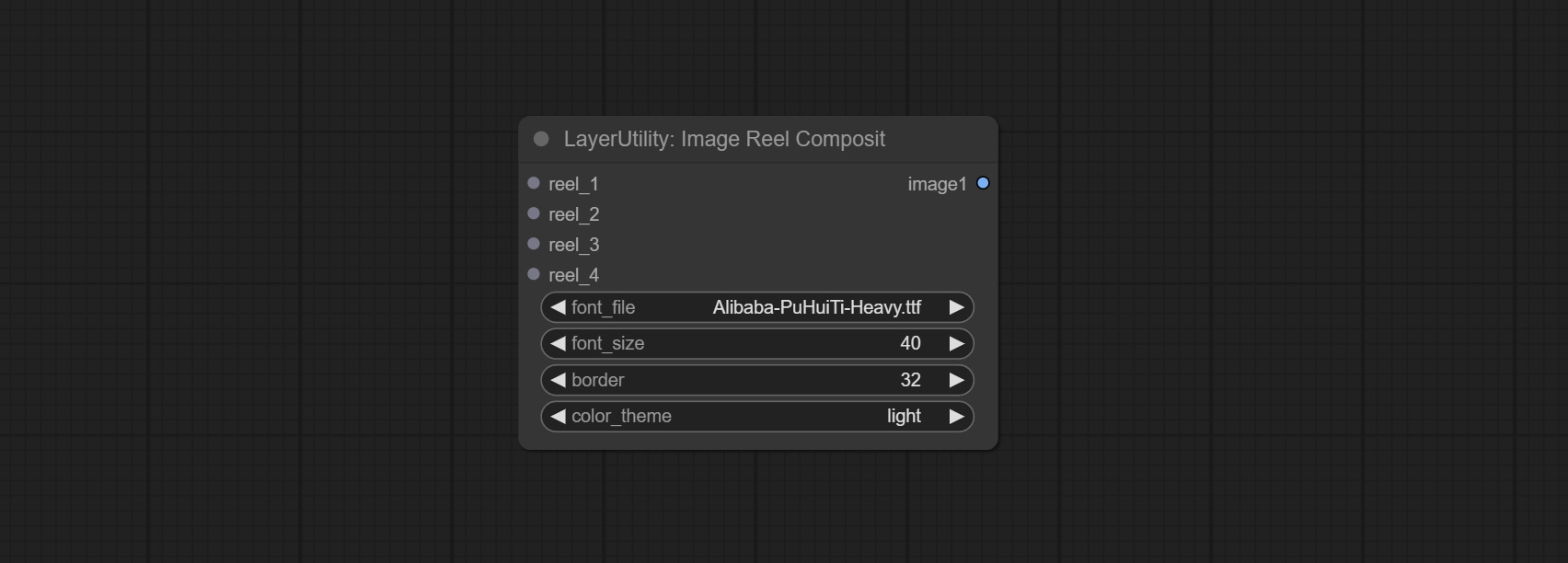

ImageReelComposite

Combine multiple reels into one image.

Node Options:

- reel_1: The first reel. Required.

- reel_2: The second reel. Optional.

- reel_3: The third reel. Optional.

- reel_4: The fourth reel. Optional.

- font_file ** : Here is a list of available font files in the font folder, and the selected font files will be used to generate images.

- border: The border width of the reel.

- color_theme: Theme color for the output image.

** The font folder is defined in resource_dir.ini. This file is located in the root directory of the plug-in, and its default name is resource_dir.ini.example; before first use, change the file suffix to .ini. Open it with a text editor, find the line starting with "FONT_dir=", and enter the custom folder path after "=". Multiple folders can be defined in resource_dir.ini, separated by commas, semicolons, or spaces. All font files in these folders are collected and shown in the node list during ComfyUI initialization. If the folder set in the ini file is invalid, the font folder that comes with the plug-in is used.
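Splitting a "FONT_dir=" line on commas, semicolons, or spaces can be sketched like this. The helper name parse_dir_list and the example paths are hypothetical; the plug-in's own parser may differ in detail.

```python
import re
from typing import List


def parse_dir_list(line: str) -> List[str]:
    """Split the value after '=' on commas, semicolons, or whitespace."""
    _, _, value = line.partition("=")
    return [part for part in re.split(r"[,;\s]+", value.strip()) if part]


# Example paths are made up for illustration.
print(parse_dir_list("FONT_dir=/fonts/a, /fonts/b;/fonts/c"))
```

One consequence of treating spaces as separators is that a folder path containing a space would be split in two, so such paths are best avoided in this setting.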

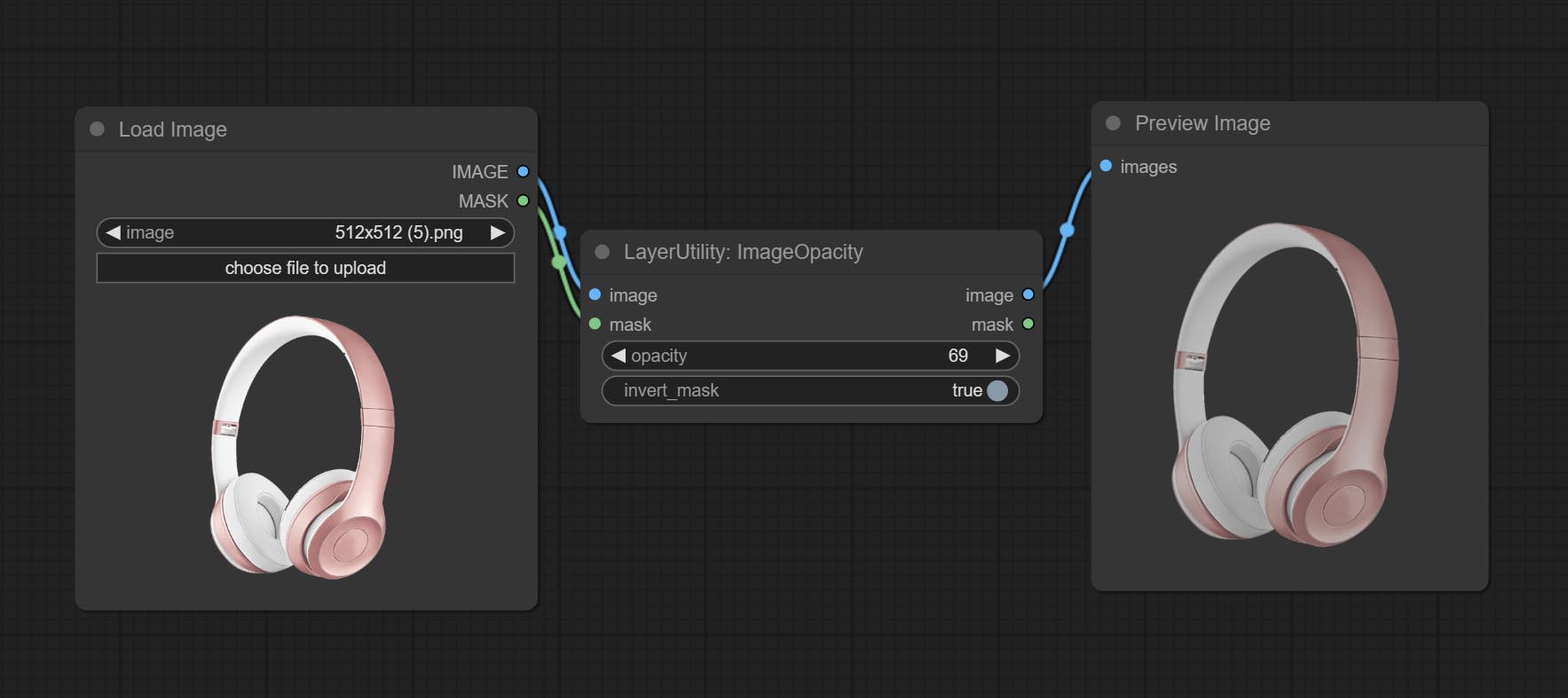

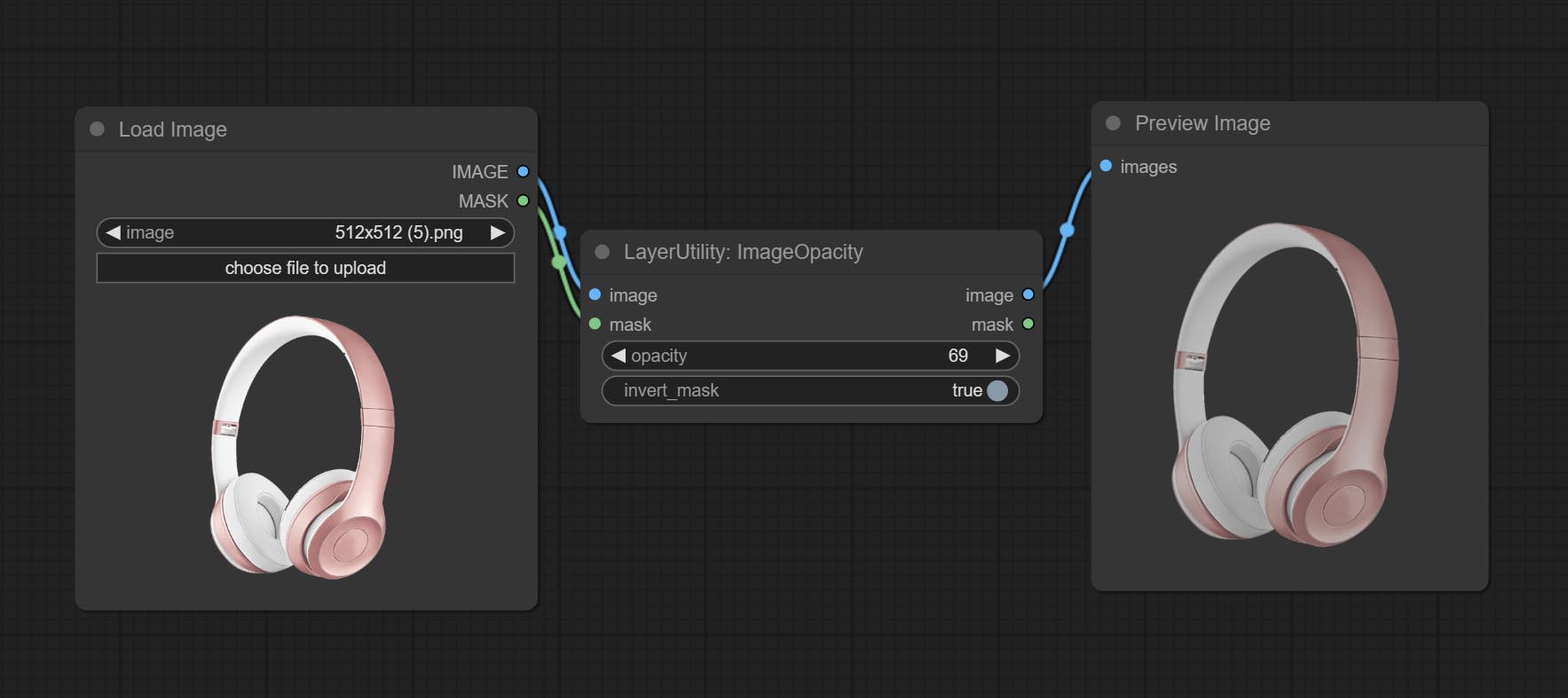

ImageOpacity

Adjust image opacity.

Node options:

- image 5 : Image input. RGB and RGBA are supported. If the image is RGB, an alpha channel covering the entire image is added automatically.

- mask 2,5 : Mask input.

- invert_mask: Whether to reverse the mask.

- opacity: Opacity of image.

- Note

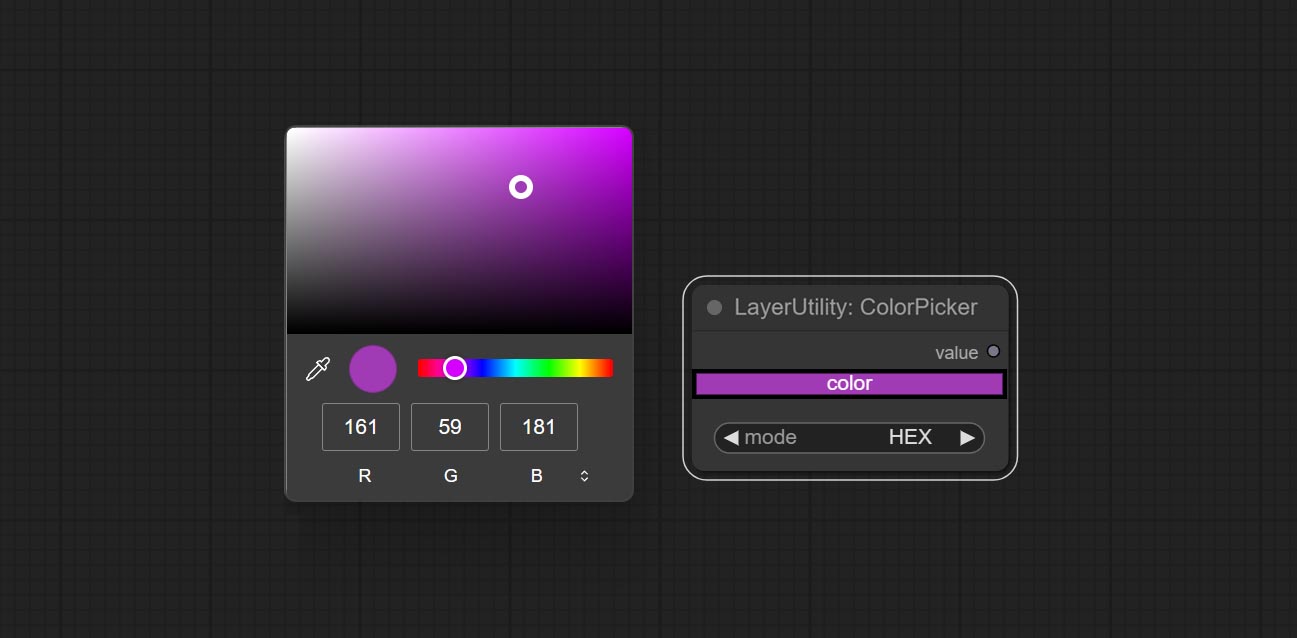

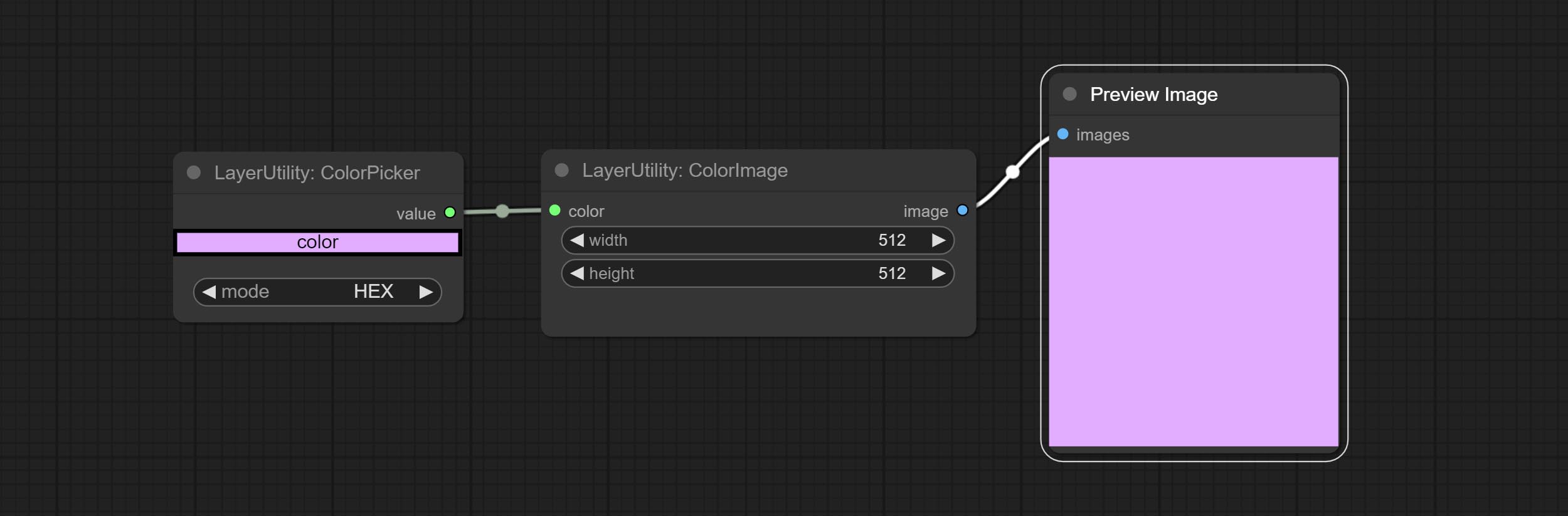

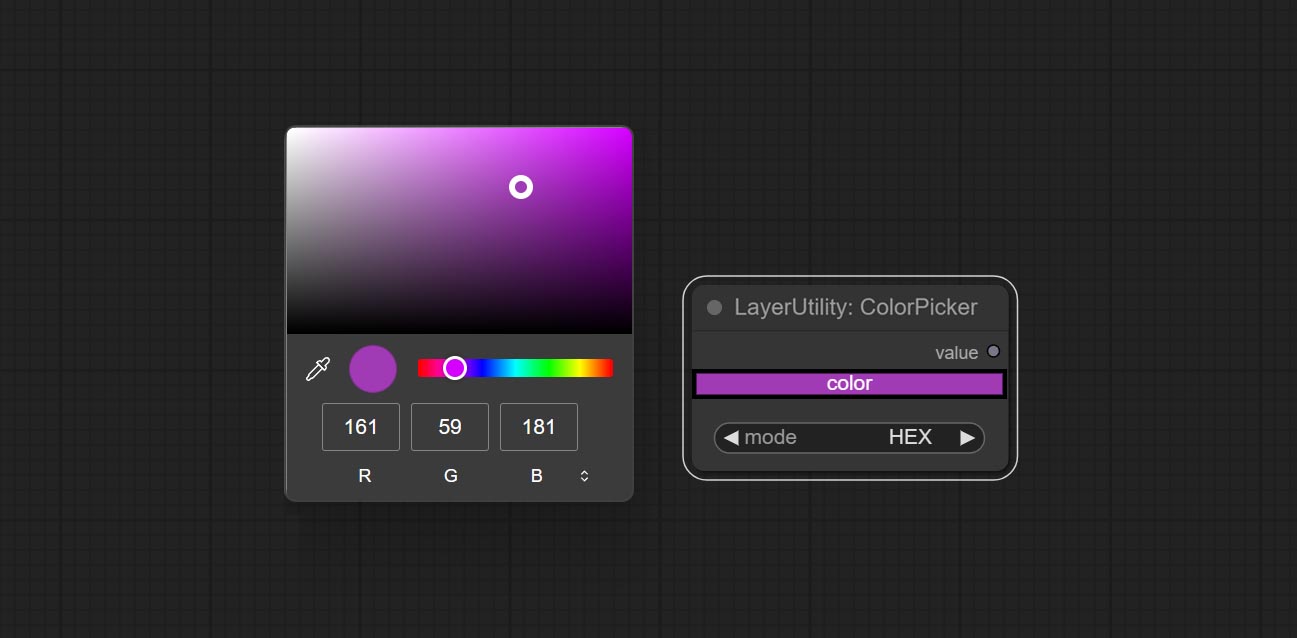

ColorPicker

Modified from the web extension of mtb nodes, thanks to the original author. Select a color on the color palette and output its RGB value.

Node options:

- mode: The output format is available in hexadecimal (HEX) and decimal (DEC).

Output type:

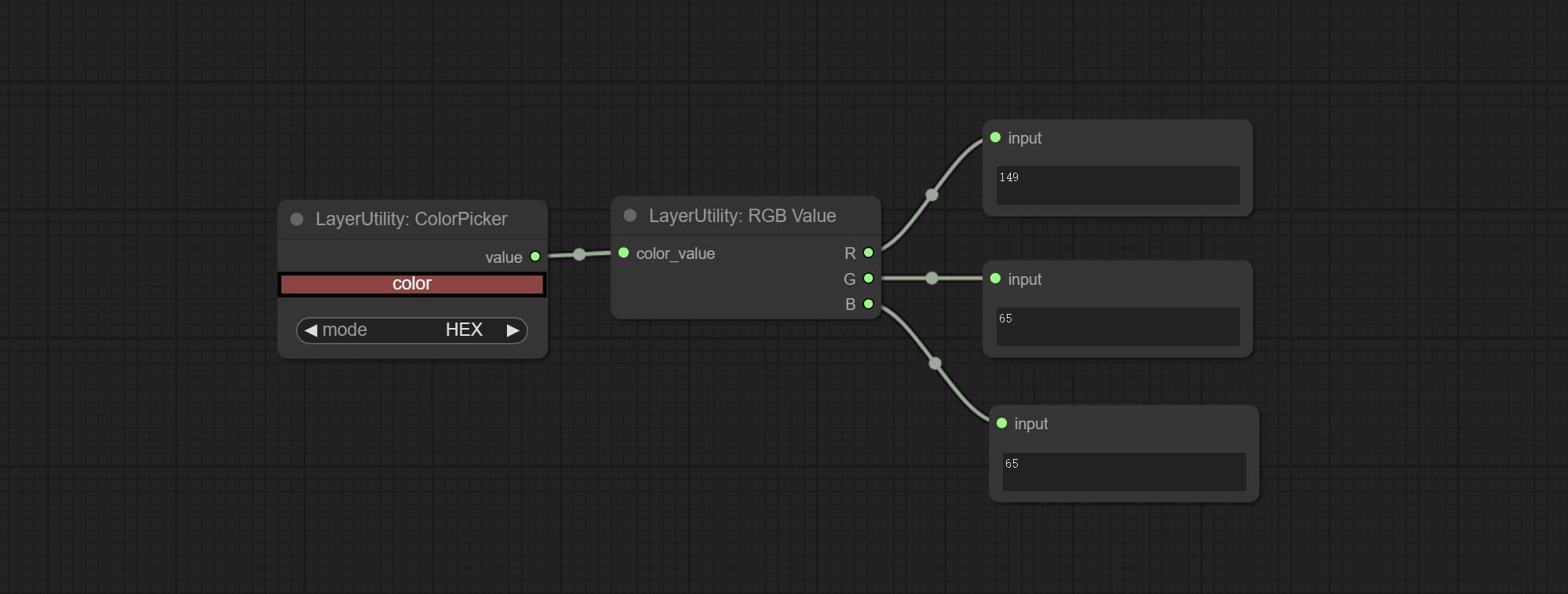

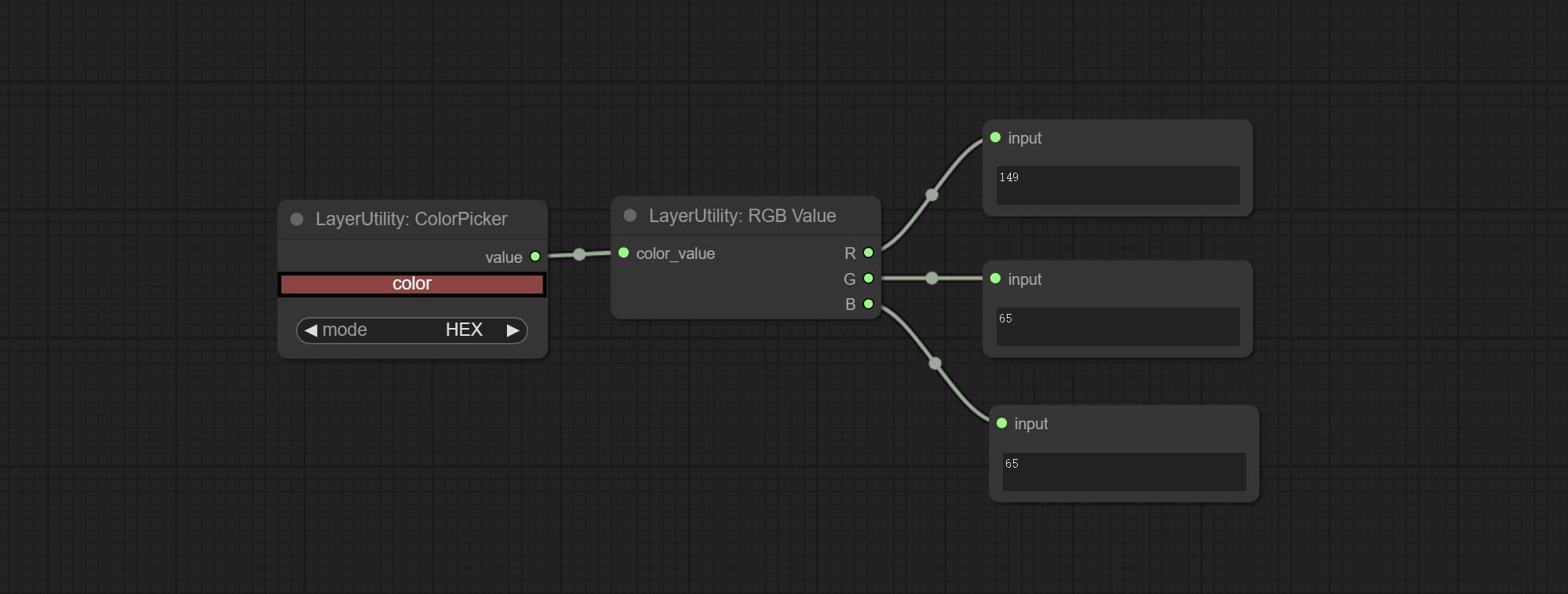

RGBValue

Output the color value as three separate decimal values: R, G, and B. Supports the HEX and DEC formats output by the ColorPicker node.

Node Options:

- color_value: Supports hexadecimal (HEX) or decimal (DEC) color values, given as a string or tuple. Connecting any other type will result in an error.
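A minimal sketch of the conversion, assuming the HEX form is a string such as '#FA3D86' and the tuple form already holds three decimal channels. The function to_rgb is illustrative; the exact layout of a DEC string is not specified in this document, so it is not handled here.

```python
def to_rgb(color_value):
    """Return (R, G, B) integers from a '#RRGGBB' string or a 3-tuple."""
    if isinstance(color_value, str):
        text = color_value.strip().lstrip("#")
        if len(text) != 6:
            raise ValueError("expected a 6-digit hexadecimal color")
        # Two hex digits per channel.
        return tuple(int(text[i:i + 2], 16) for i in (0, 2, 4))
    if isinstance(color_value, tuple) and len(color_value) == 3:
        return tuple(int(channel) for channel in color_value)
    raise TypeError("color_value must be a string or a 3-tuple")


print(to_rgb("#FA3D86"))  # (250, 61, 134)
```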

HSVValue

Output the color value as three separate decimal values: H, S, and V (each with a maximum of 255). Supports the HEX and DEC formats output by the ColorPicker node.

Node Options:

- color_value: Supports hexadecimal (HEX) or decimal (DEC) color values, given as a string or tuple. Connecting any other type will result in an error.
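One way to obtain H, S and V on a 0 to 255 scale is to convert with the standard library and rescale, as sketched below. Scaling all three channels (including hue) by 255 and rounding is an assumption made for this example; the node may scale or round differently.

```python
import colorsys


def rgb_to_hsv255(r, g, b):
    """Convert 0..255 RGB to HSV with each component rescaled to 0..255."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return tuple(round(component * 255) for component in (h, s, v))


print(rgb_to_hsv255(255, 0, 0))  # (0, 255, 255)
print(rgb_to_hsv255(0, 255, 0))  # (85, 255, 255)
```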

GrayValue

Output grayscale values based on the color value. Both 256-level and 100-level grayscale values are output.

Node Options:

- color_value: Supports hexadecimal (HEX) or decimal (DEC) color values, given as a string or tuple. Connecting any other type will result in an error.

Outputs:

- gray(256_level): 256 level grayscale value. Integer type, range 0~255.

- gray(100_level): 100 level grayscale value. Integer type, range 0~100.
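The two output scales can be illustrated with a weighted sum of the channels. The Rec. 601 luma weights used here are an assumption; this document does not state which grayscale formula the node applies.

```python
def gray_levels(r, g, b):
    """Return (256-level gray, 100-level gray) for a 0..255 RGB color."""
    # Rec. 601 luma weights (assumed for illustration).
    luma = 0.299 * r + 0.587 * g + 0.114 * b
    return round(luma), round(luma / 255 * 100)


print(gray_levels(255, 255, 255))  # (255, 100)
print(gray_levels(128, 128, 128))  # (128, 50)
```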

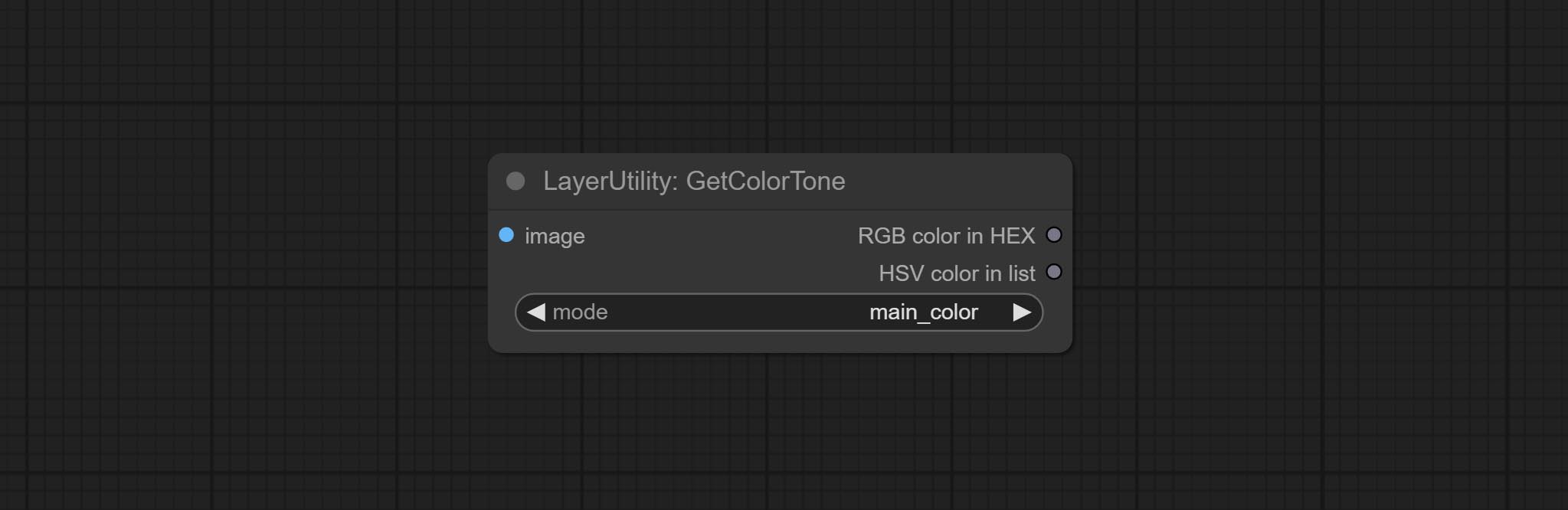

GetColorTone

Obtain the main color or average color from the image and output RGB values.

Node options:

- mode: Two modes are available: main color and average color.

Output type:

- RGB color in HEX: The RGB color described by hexadecimal RGB format, like '#FA3D86'.

- HSV color in list: The HSV color described by python's list data format.

GetColorToneV2

V2 upgrade of GetColorTone. You can obtain the dominant or average color of the subject or of the background.

The following changes have been made on the basis of GetColorTone:

- color_of: Provides 4 options, mask, entire, background, and subject, to select the color of the mask area, entire picture, background, or subject, respectively.

- remove_background_method: There are two methods of background recognition: BiRefNet and RMBG V1.4.

- invert_mask: Whether to reverse the mask.

- mask_grow: Mask expansion. For subject, a larger value brings the obtained color closer to the color at the center of the subject.

Outputs:

- image: Solid color picture output, the size is the same as the input picture.

- mask: Mask output.

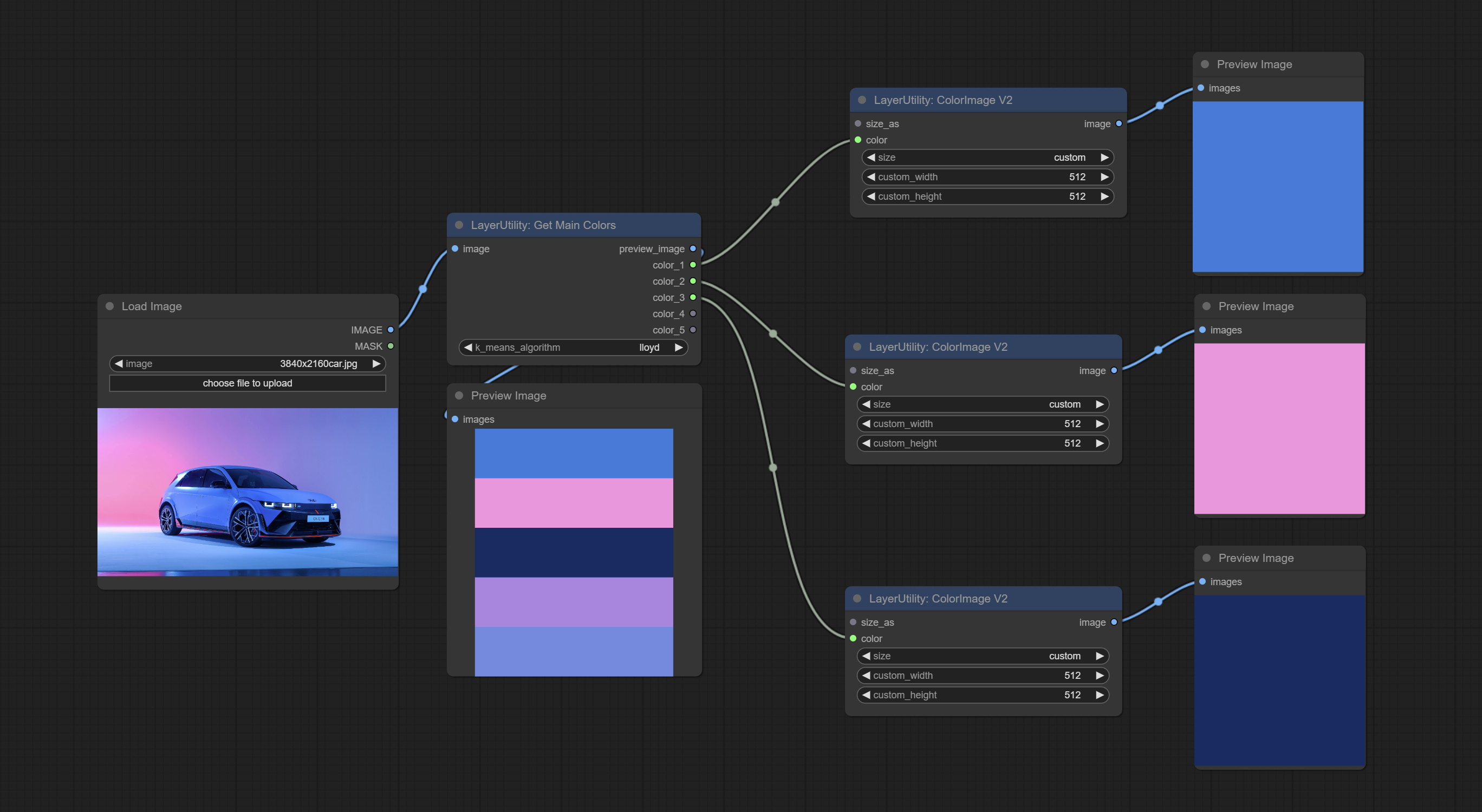

GetMainColors

Obtain the main colors of the image. Five colors are returned.

Node Options:

- image: The image input.

- k_means_algorithm: K-Means algorithm options. "lloyd" is the standard K-Means algorithm, while "elkan" uses the triangle inequality to speed things up and is suitable for larger images.

Outputs:

- preview_image: 5 main color preview images.

- color_1~color_5: Color value output. Output an RGB string in HEX format.

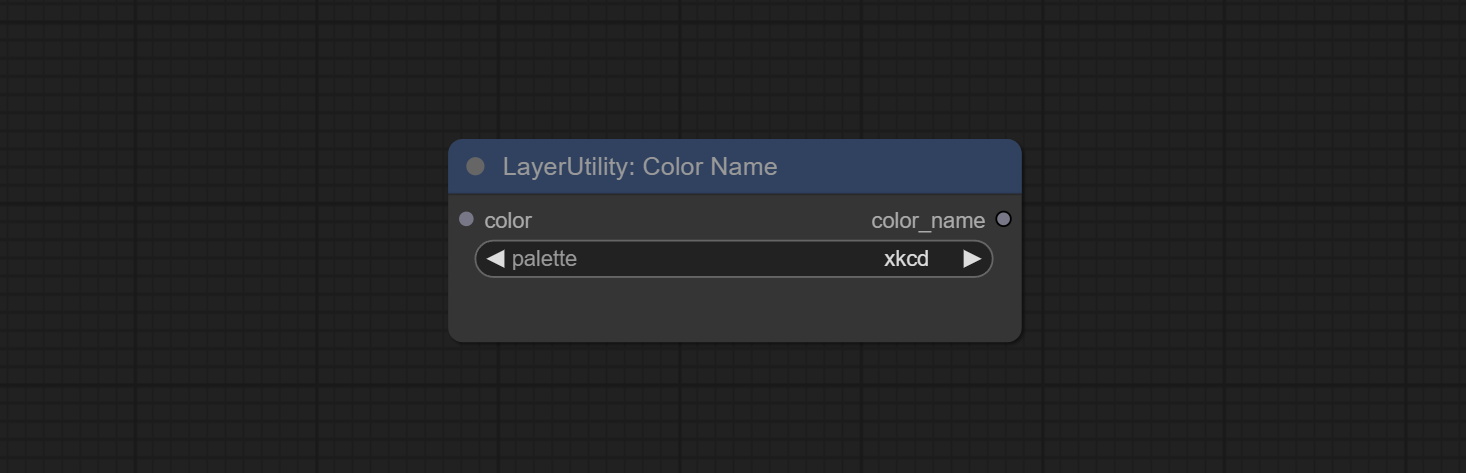

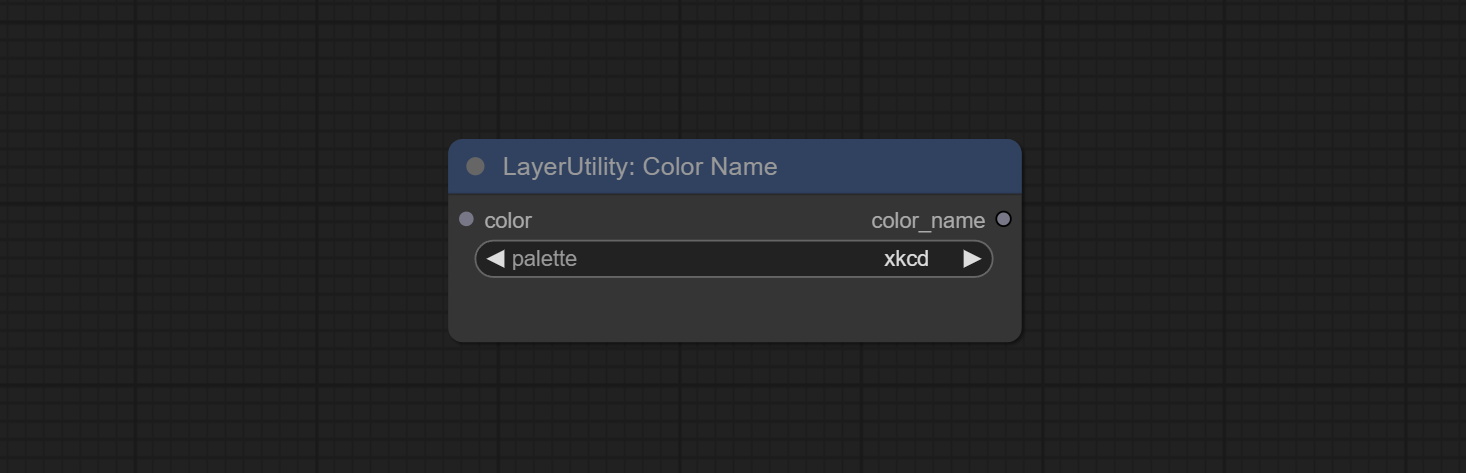

ColorName

Output the most similar color name in the color palette based on the color value.

Node Options:

- color: Color value input, as an RGB string in HEX format.

- palette: Color palette. xkcd includes 949 colors, css3 includes 147 colors, and html4 includes 16 colors.

Output:

- color_name: Color name in string.
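Finding the "most similar" name amounts to a nearest-neighbour search over the palette. The sketch below uses the 16 html4 colors and squared Euclidean distance in RGB; that metric and the function name nearest_color_name are assumptions, since this document does not say how the node measures similarity.

```python
# The 16 HTML 4 named colors.
HTML4 = {
    "black": (0, 0, 0), "silver": (192, 192, 192), "gray": (128, 128, 128),
    "white": (255, 255, 255), "maroon": (128, 0, 0), "red": (255, 0, 0),
    "purple": (128, 0, 128), "fuchsia": (255, 0, 255), "green": (0, 128, 0),
    "lime": (0, 255, 0), "olive": (128, 128, 0), "yellow": (255, 255, 0),
    "navy": (0, 0, 128), "blue": (0, 0, 255), "teal": (0, 128, 128),
    "aqua": (0, 255, 255),
}


def nearest_color_name(hex_color, palette=HTML4):
    """Return the palette name closest to '#RRGGBB' by squared RGB distance."""
    text = hex_color.lstrip("#")
    rgb = tuple(int(text[i:i + 2], 16) for i in (0, 2, 4))

    def distance(name):
        return sum((a - b) ** 2 for a, b in zip(rgb, palette[name]))

    return min(palette, key=distance)


print(nearest_color_name("#FE0000"))  # red
```

A larger palette such as xkcd would simply be a bigger dictionary passed as the palette argument.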

ExtendCanvas

Extend the canvas.

Node options:

- invert_mask: Whether to reverse the mask.

- top: Top extension value.

- bottom: Bottom extension value.

- left: Left extension value.

- right: Right extension value.

- color: Color of canvas.

ExtendCanvasV2

V2 upgrade to ExtendCanvas.

Based on ExtendCanvas, color is changed to a string type so that it accepts input from an external ColorPicker. Negative values are also supported; a negative value crops the image instead of extending it.

XY to Percent

Convert absolute coordinates to percentage coordinates.

Node options:

- x: Value of X.

- y: Value of Y.
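The conversion itself is a ratio against a reference size. In this sketch the reference width and height are passed explicitly, which is an assumption: the option list above names only x and y, so where the node takes its reference size from is not shown here.

```python
def xy_to_percent(x, y, width, height):
    """Express absolute pixel coordinates as percentages of a reference size."""
    return x / width * 100.0, y / height * 100.0


print(xy_to_percent(256, 128, 1024, 512))  # (25.0, 25.0)
```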

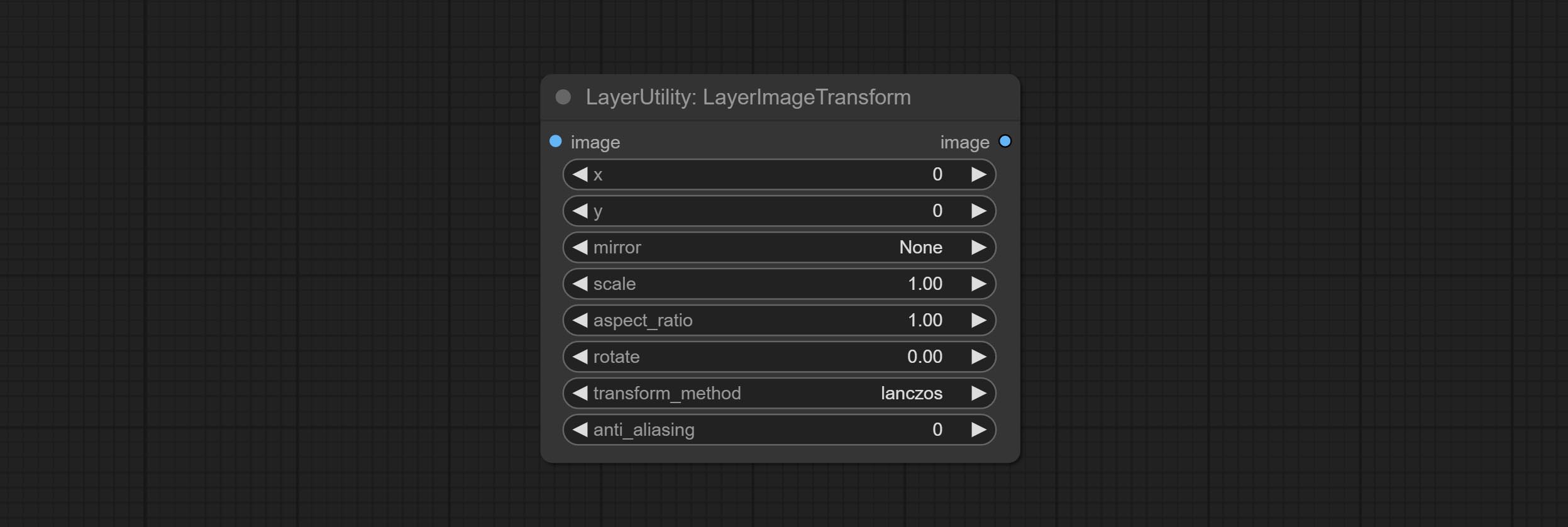

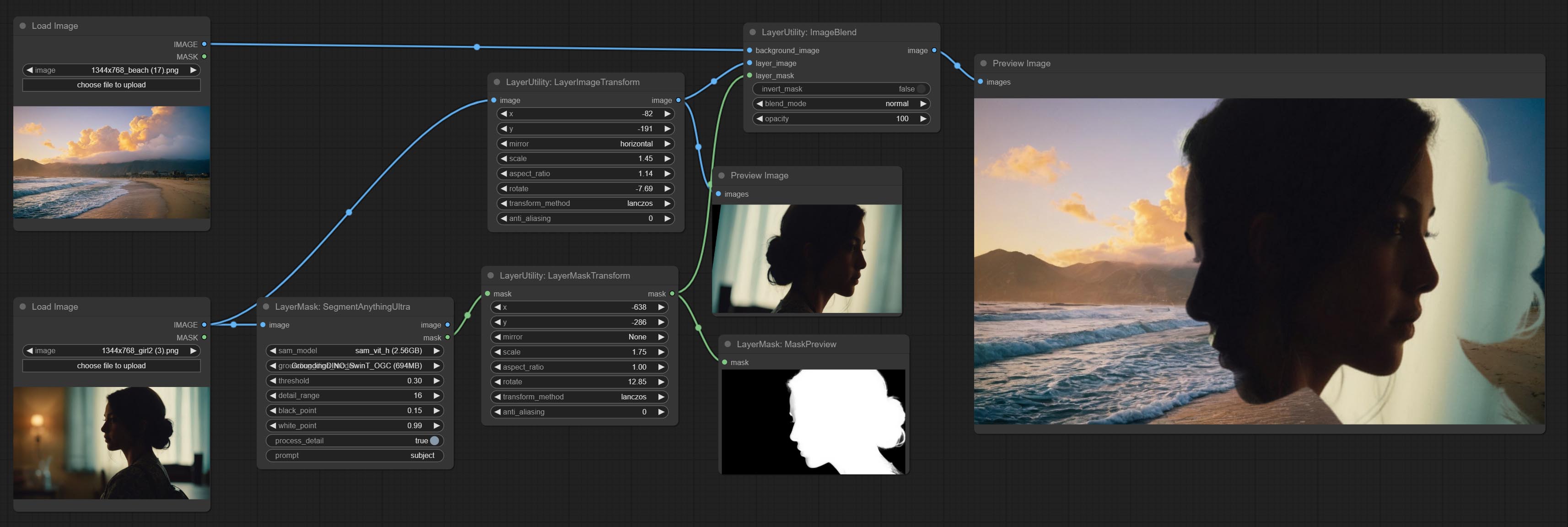

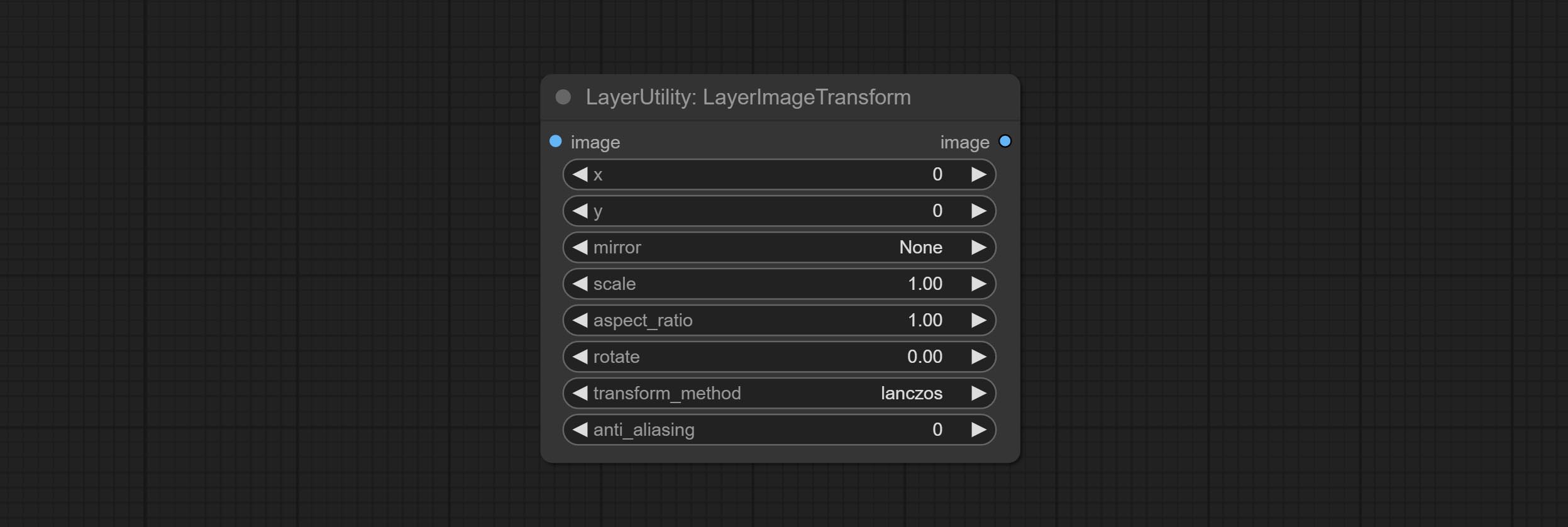

LayerImageTransform

This node is used to transform layer_image separately, which can change size, rotation, aspect ratio, and mirror flip without changing the image size.

Node options:

- x: Value of X.

- y: Value of Y.

- mirror: Mirror flipping. Provide two flipping modes, horizontal flipping and vertical flipping.

- scale: Layer magnification, 1.0 represents the original size.

- aspect_ratio: Layer aspect ratio. 1.0 is the original ratio, a value greater than this indicates elongation, and a value less than this indicates flattening.

- rotate: Layer rotation degree.

- Sampling methods for layer enlargement and rotation, including lanczos, bicubic, hamming, bilinear, box and nearest. Different sampling methods can affect the image quality and processing time of the synthesized image.

- anti_aliasing: Anti aliasing, ranging from 0 to 16, the larger the value, the less obvious the aliasing. An excessively high value will significantly reduce the processing speed of the node.

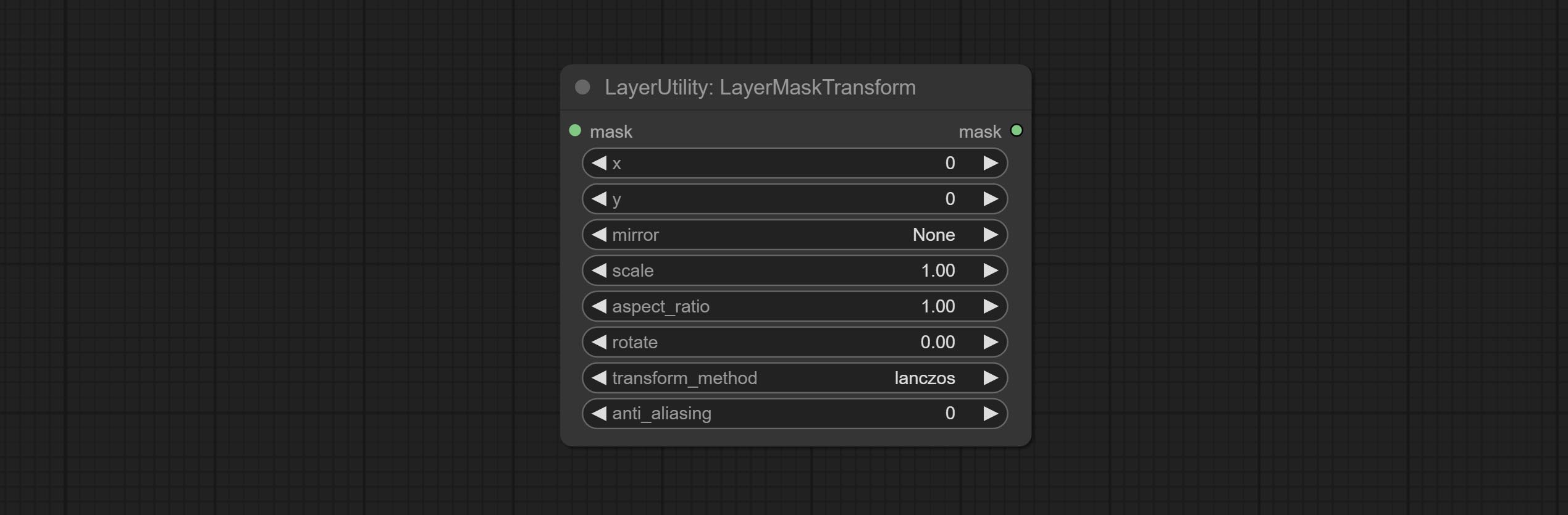

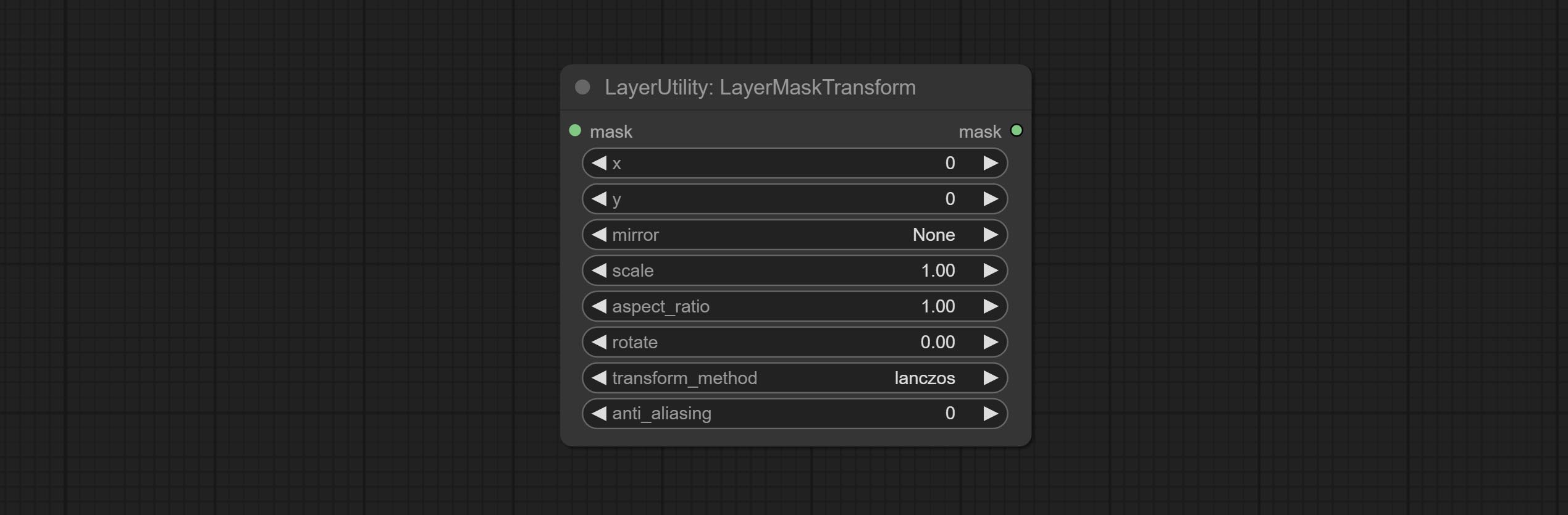

LayerMaskTransform

Similar to LayerImageTransform node, this node is used to transform the layer_mask separately, which can scale, rotate, change aspect ratio, and mirror flip without changing the mask size.

Node options:

- x: Value of X.

- y: Value of Y.

- mirror: Mirror flipping. Provide two flipping modes, horizontal flipping and vertical flipping.

- scale: Layer magnification, 1.0 represents the original size.

- aspect_ratio: Layer aspect ratio. 1.0 is the original ratio, a value greater than this indicates elongation, and a value less than this indicates flattening.

- rotate: Layer rotation degree.

- Sampling methods for layer enlargement and rotation, including lanczos, bicubic, hamming, bilinear, box and nearest. Different sampling methods can affect the image quality and processing time of the synthesized image.

- anti_aliasing: Anti aliasing, ranging from 0 to 16, the larger the value, the less obvious the aliasing. An excessively high value will significantly reduce the processing speed of the node.

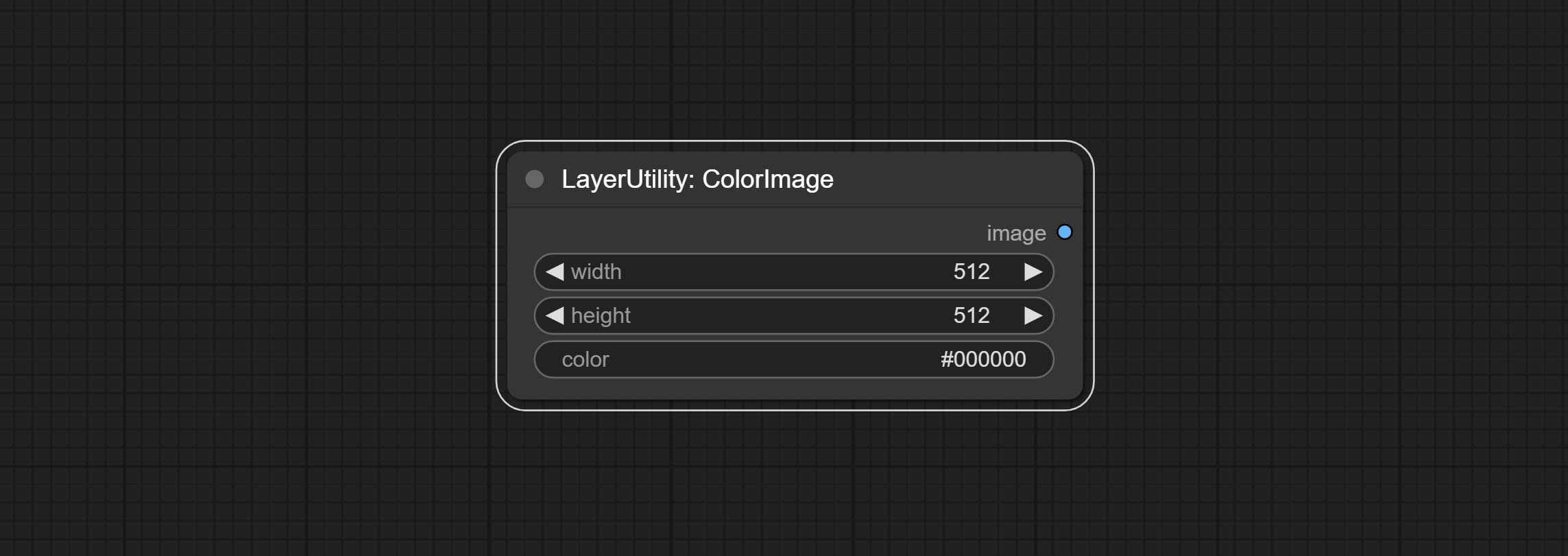

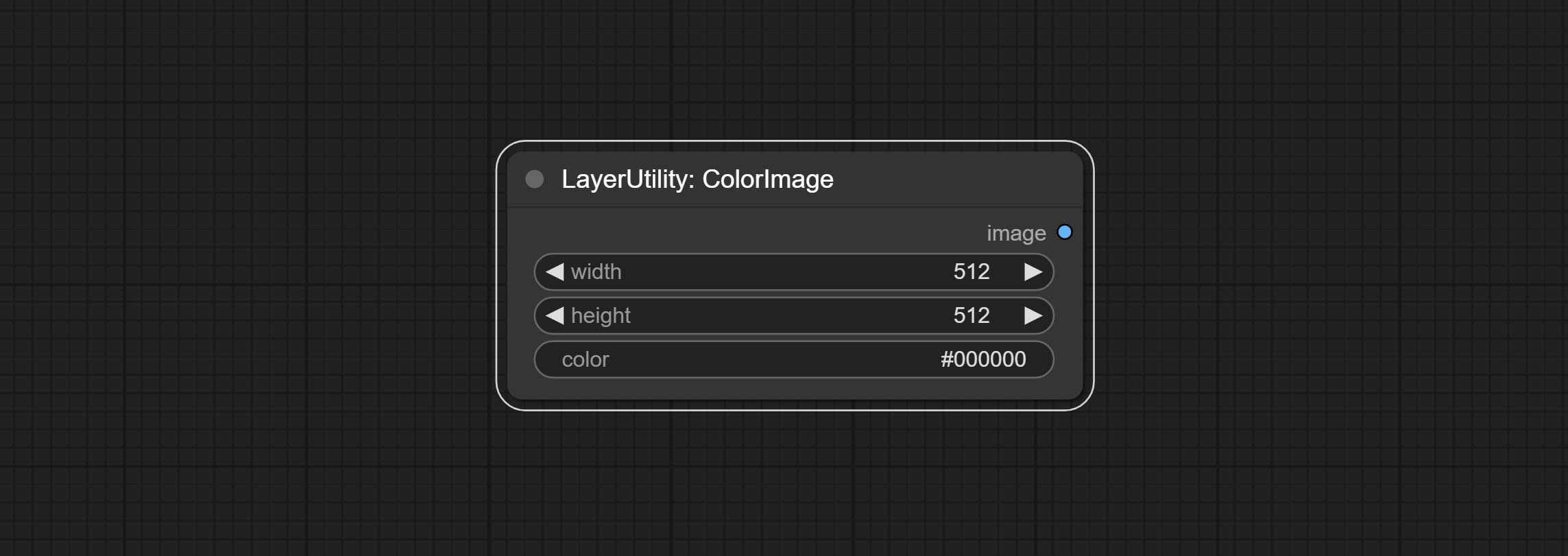

ColorImage

Generate an image of a specified color and size.

Node options:

- width: Width of the image.

- height: Height of the image.

- color 4 : Color of the image.

ColorImageV2

The V2 upgraded version of ColorImage.

The following changes have been made based on ColorImage:

- size_as * : Connect an image or mask here to generate the image at its size. This input takes priority over the other size settings.

- size ** : Size preset. Presets can be customized by the user. If size_as is connected, this option is ignored.

- custom_width: Image width. Valid only when size is set to "custom". If size_as is connected, this option is ignored.

- custom_height: Image height. Valid only when size is set to "custom". If size_as is connected, this option is ignored.

* Only images and masks are accepted. Connecting other input types will result in node errors.

** The preset sizes are defined in custom_size.ini. This file is located in the root directory of the plug-in, and its default name is custom_size.ini.example; before first use, change the file suffix to .ini. Open it with a text editor. Each row represents one size: the width first, then the height, separated by a lowercase "x". To avoid errors, do not enter extra characters.
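The row format of custom_size.ini (width, a lowercase "x", height) can be read with a few lines of Python. The function parse_sizes and the sample rows are hypothetical and only illustrate the format; the plug-in's own reader may be stricter or more lenient.

```python
def parse_sizes(text):
    """Parse rows such as '1024 x 768' into (width, height) tuples."""
    sizes = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        width, sep, height = line.partition("x")
        if not sep:
            raise ValueError("missing 'x' separator in row: " + repr(raw))
        # int() tolerates surrounding spaces but rejects any other extra characters.
        sizes.append((int(width), int(height)))
    return sizes


print(parse_sizes("1024 x 1024\n768 x 512\n"))  # [(1024, 1024), (768, 512)]
```

The ValueError raised on stray characters illustrates why extra characters in the file should be avoided.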

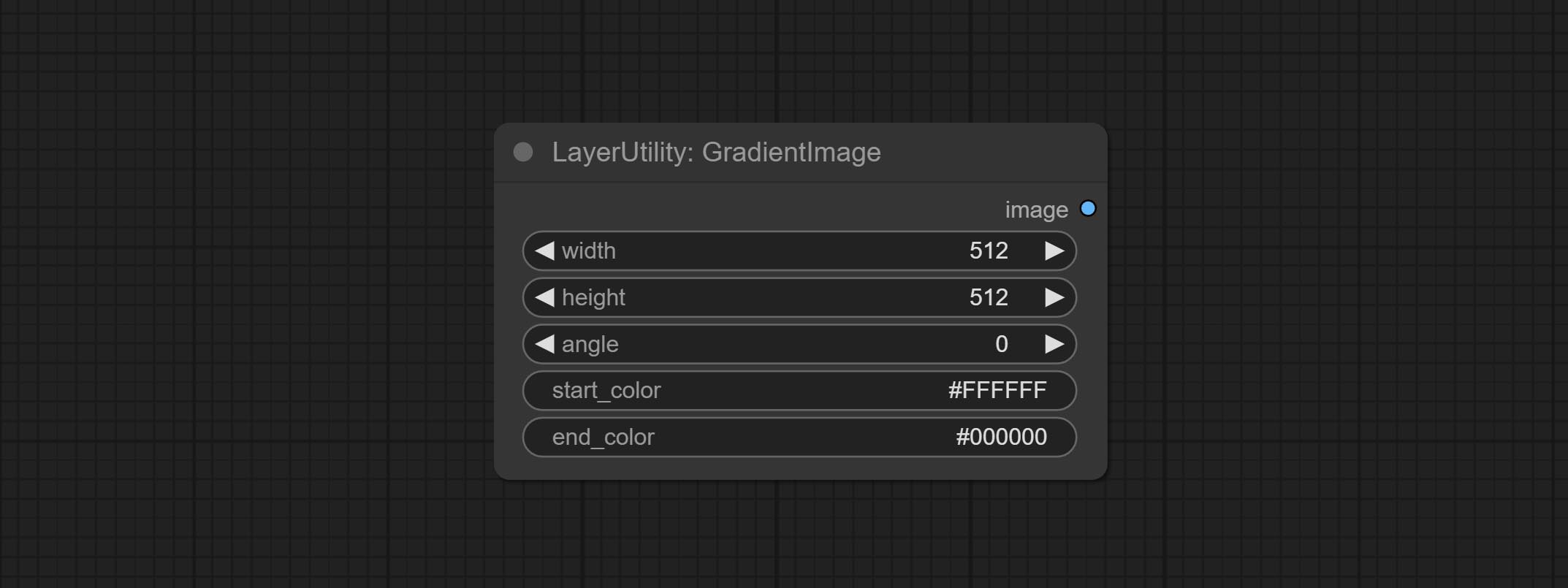

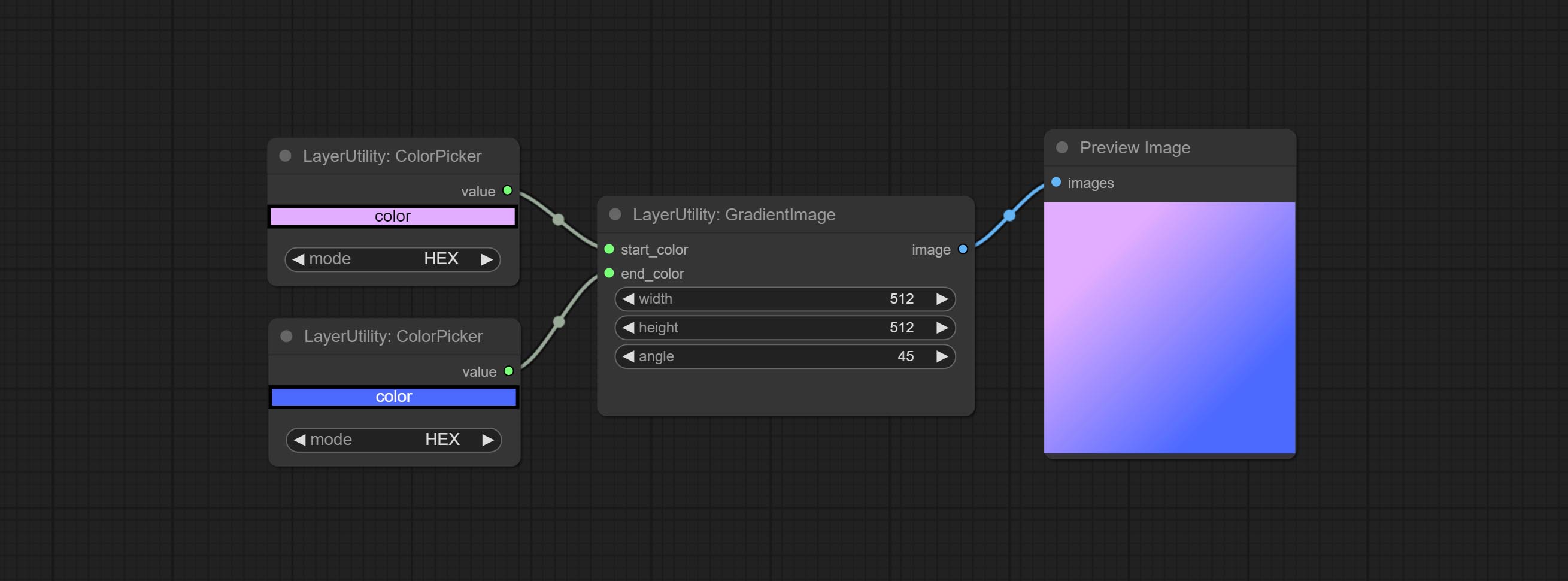

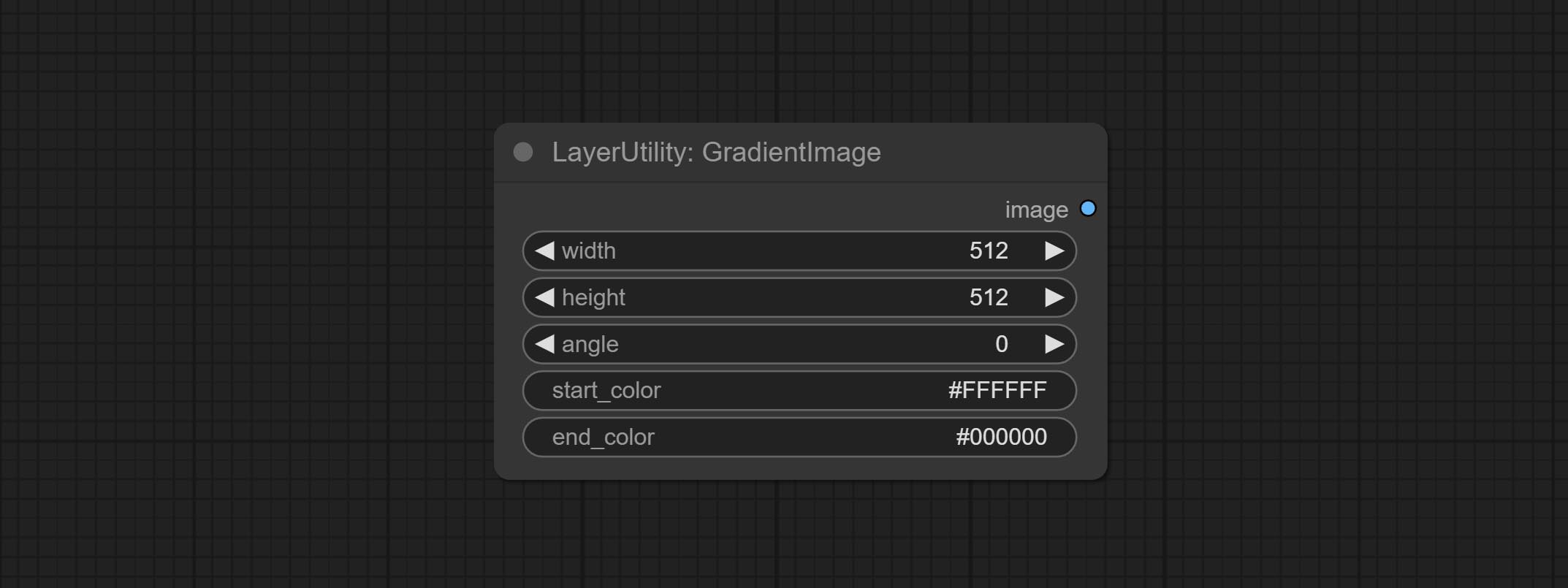

GradientImage

Generate an image with a specified size and color gradient.

Node options:

- width: Width of the image.

- height: Height of the image.

- angle: Angle of gradient.

- start_color 4 : Color at the start of the gradient.

- end_color 4 : Color at the end of the gradient.

GradientImageV2

The V2 upgraded version of GradientImage.

The following changes have been made based on GradientImage:

- size_as * : Connect an image or mask here to generate the image at its size. This input takes priority over the other size settings.

- size ** : Size preset. Presets can be customized by the user. If size_as is connected, this option is ignored.

- custom_width: Image width. Valid only when size is set to "custom". If size_as is connected, this option is ignored.

- custom_height: Image height. Valid only when size is set to "custom". If size_as is connected, this option is ignored.

* Only images and masks are accepted. Connecting other input types will result in node errors.

** The preset sizes are defined in custom_size.ini. This file is located in the root directory of the plug-in, and its default name is custom_size.ini.example; before first use, change the file suffix to .ini. Open it with a text editor. Each row represents one size: the width first, then the height, separated by a lowercase "x". To avoid errors, do not enter extra characters.

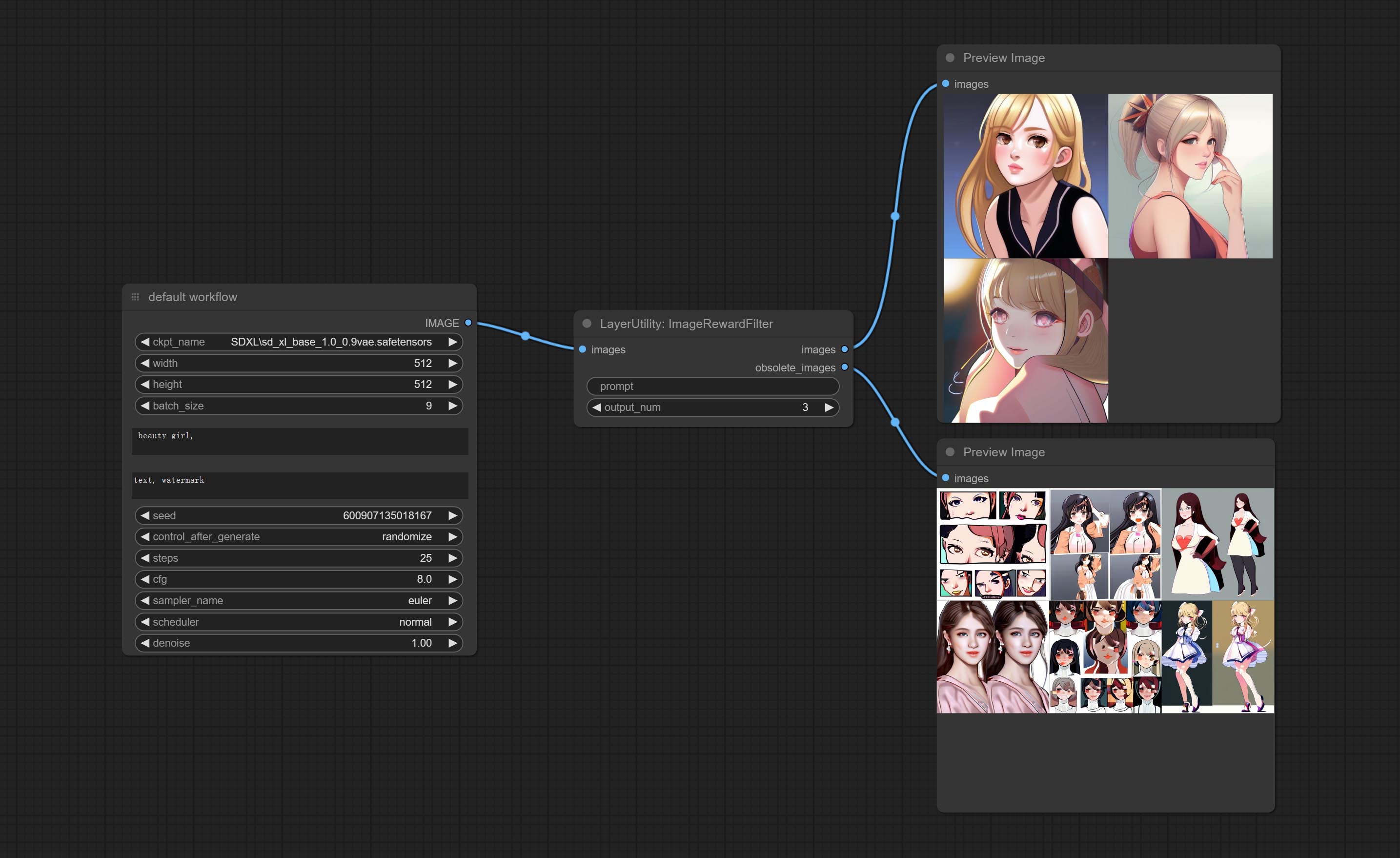

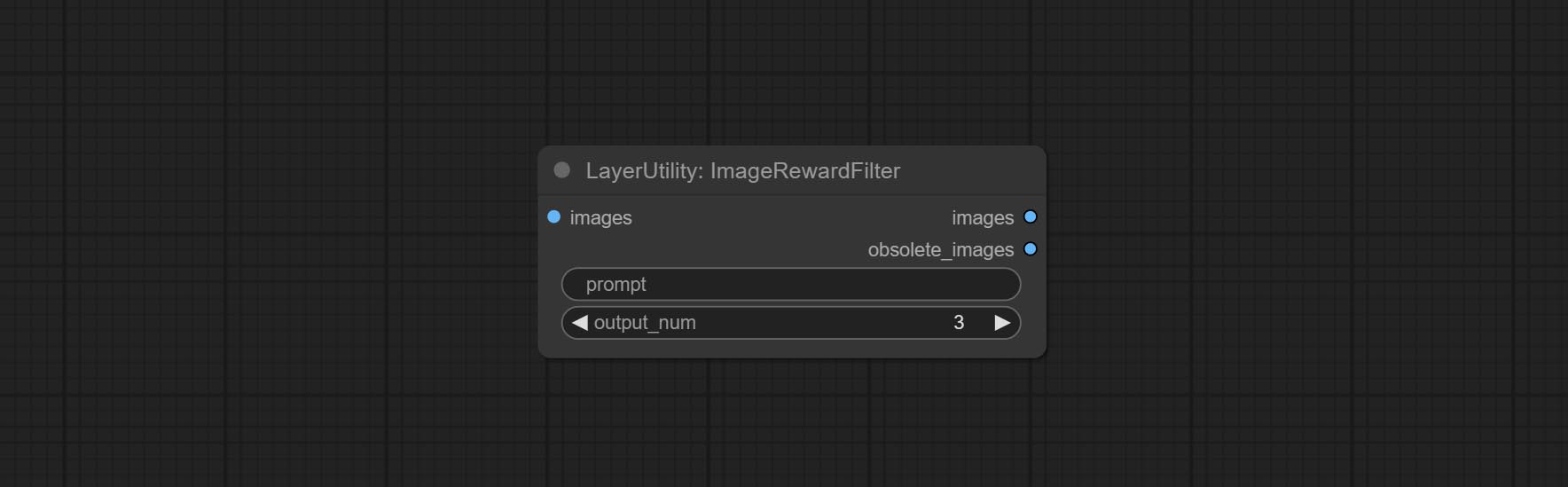

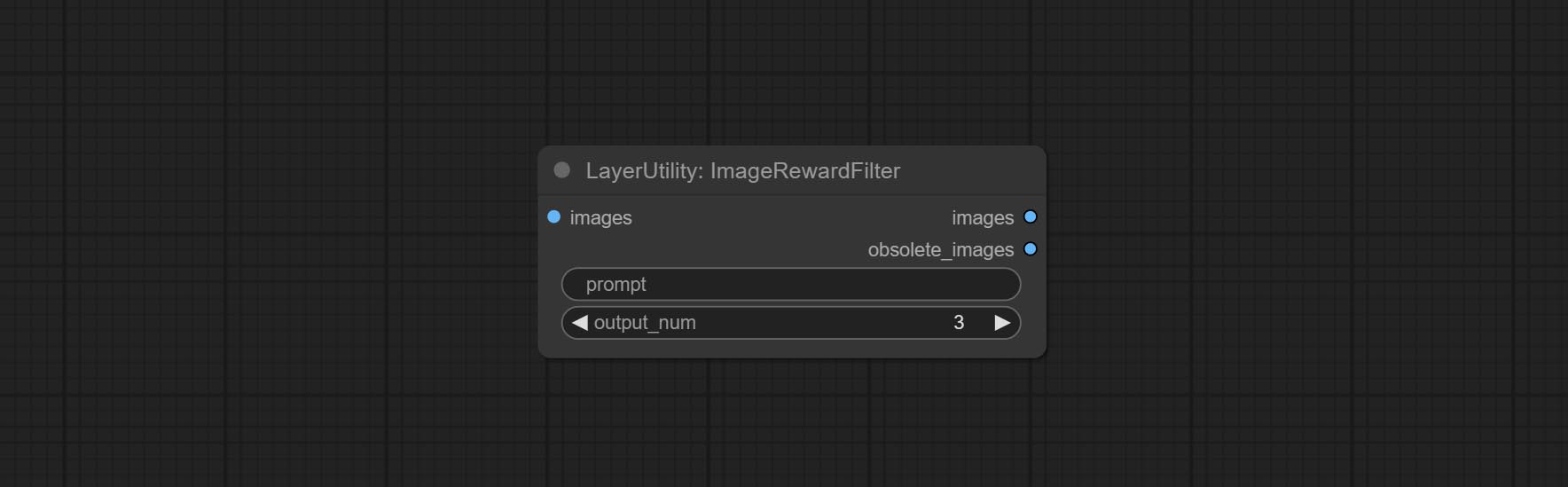

ImageRewardFilter

Rate a batch of pictures and output the top-ranked ones. It uses [ImageReward](https://github.com/THUDM/ImageReward) for image scoring, thanks to the original authors.

Node options:

- prompt: Optional input. A prompt entered here is used as the basis for judging how well each picture matches it.

- output_nun: Number of pictures to output. This value should be less than the number of pictures in the batch.

Outputs:

- images: The selected pictures, output in order of rating from high to low.

- obsolete_images: The eliminated pictures, also output in order of rating from high to low.
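The split into kept and eliminated pictures can be sketched as a sort by score followed by a cut. The scores below are made-up numbers standing in for ImageReward ratings, and split_by_score is a hypothetical helper that returns picture indices rather than image data.

```python
def split_by_score(scores, output_num):
    """Return (kept, eliminated) index lists, each ordered from high to low score."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:output_num], order[output_num:]


# Made-up ratings for a batch of four pictures.
kept, eliminated = split_by_score([0.2, 1.5, -0.3, 0.9], output_num=2)
print(kept, eliminated)  # [1, 3] [0, 2]
```

If output_num were equal to the batch size, the eliminated list would be empty, which is why a smaller value is recommended.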

SimpleTextImage

Generate simple typesetting images and masks from text. This node references some of the functionalities and code of ZHO-ZHO-ZHO/ComfyUI-Text_Image-Composite, thanks to the original author.

Node options:

- size_as * : Connect an image or mask here to generate the output image and mask at its size. This input takes priority over the width and height below.

- font_file ** : A list of the font files available in the font folder. The selected font file is used to generate the image.

- align: Alignment. There are three options: center, left, and right.

- char_per_line: The number of characters per line. Any excess is wrapped automatically.

- leading: Line spacing.

- font_size: The size of font.

- text_color: The color of text.

- stroke_width: The width of stroke.

- stroke_color: The color of stroke.

- x_offset: The horizontal offset of the text position.

- y_offset: The vertical offset of the text position.

- width: Width of the image. If there is a size_as input, this setting will be ignored.

- height: Height of the image. If there is a size_as input, this setting will be ignored.

* Only images and masks are accepted. Connecting other input types will result in node errors.

** The font folder is defined in resource_dir.ini. This file is located in the root directory of the plug-in, and its default name is resource_dir.ini.example; before first use, change the file suffix to .ini. Open it with a text editor, find the line starting with "FONT_dir=", and enter the custom folder path after "=". Multiple folders can be defined in resource_dir.ini, separated by commas, semicolons, or spaces. All font files in these folders are collected and shown in the node list during ComfyUI initialization. If the folder set in the ini file is invalid, the font folder that comes with the plug-in is used.

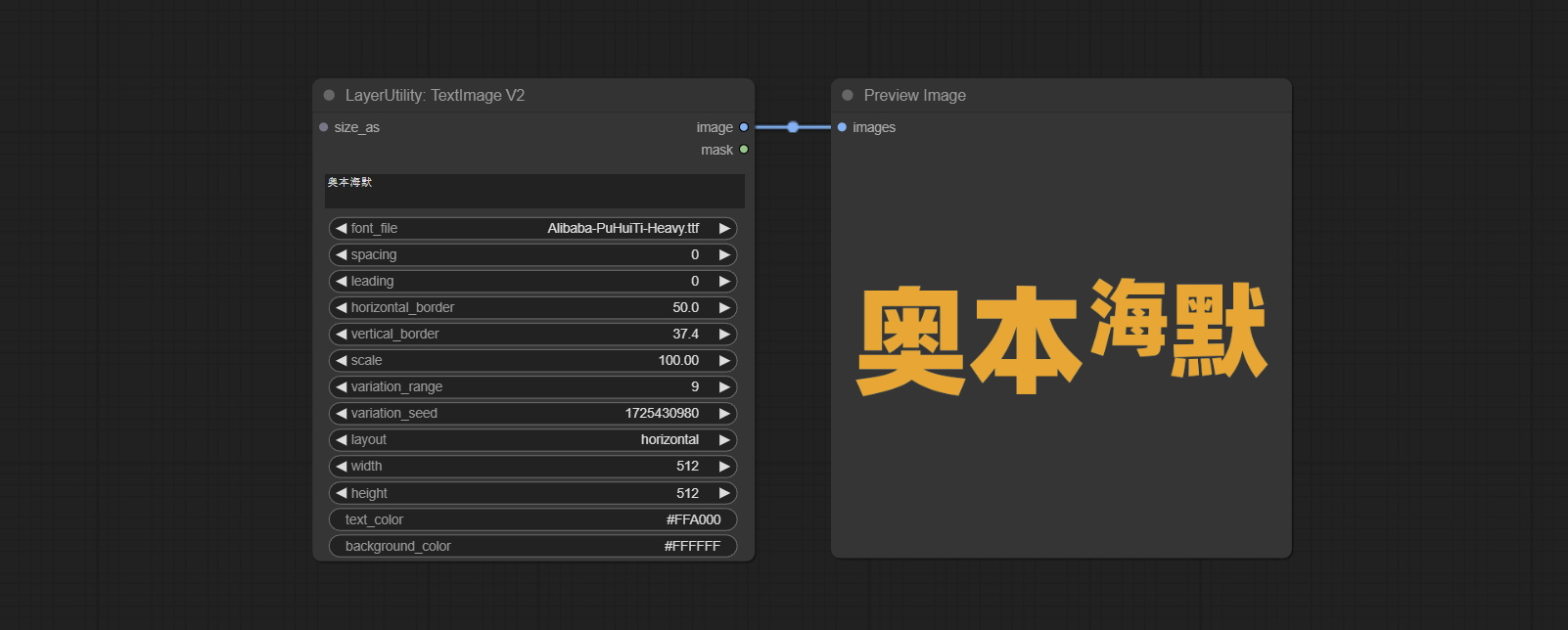

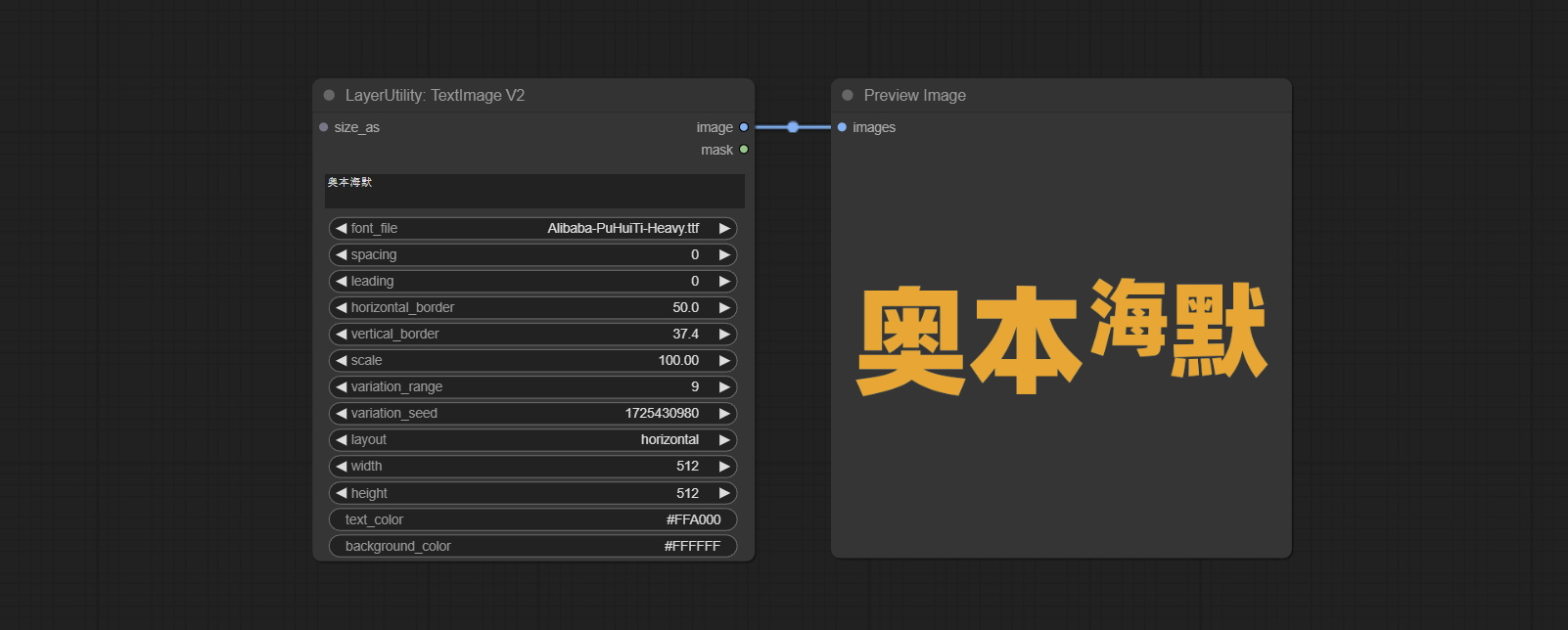

TextImage

Generate images and masks from text. Word spacing, line spacing, and horizontal or vertical layout can be adjusted, and each character can be given random variation in size and position.

Node options:

- size_as * : Connect an image or mask here to generate the output image and mask at its size. This input takes priority over the width and height below.

- font_file ** : A list of the font files available in the font folder. The selected font file is used to generate the image.

- spacing: Word spacing, in pixels.

- leading: Line spacing, in pixels.

- horizontal_border: Side margin. For horizontal text it is the left margin; for vertical text it is the right margin. The value is a percentage: for example, 50 places the starting point at the horizontal center.

- vertical_border: Top margin. The value is a percentage: for example, 10 places the starting point 10% from the top.

- scale: The overall size of the text. The initial size is calculated automatically from the image size and the text content, so that by default the longest row or column fits the image width or height. Adjusting this value scales the text as a whole. The value is a percentage: for example, 60 scales the text to 60%.

- variation_range: The range of random variation per character. When this value is greater than 0, each character varies randomly in size and position; the larger the value, the greater the variation.

- variation_seed: The random seed. With a fixed seed, the per-character variation is the same on every run.

- layout: Text layout. Horizontal and vertical options are available.

- width: Width of the image. If there is a size_as input, this setting will be ignored.

- height: Height of the image. If there is a size_as input, this setting will be ignored.

- text_color: The color of text.

- background_color 4 : The color of background.

* Only images and masks are accepted. Connecting other input types will result in node errors.

** The font folder is defined in resource_dir.ini. This file is located in the root directory of the plug-in, and its default name is resource_dir.ini.example; before first use, change the file suffix to .ini. Open it with a text editor, find the line starting with "FONT_dir=", and enter the custom folder path after "=". Multiple folders can be defined in resource_dir.ini, separated by commas, semicolons, or spaces. All font files in these folders are collected and shown in the node list during ComfyUI initialization. If the folder set in the ini file is invalid, the font folder that comes with the plug-in is used.

TextImageV2

This node is merged from a PR by heshengtao. Based on the TextImage node, the PR changes how the text is scaled: the font spacing now follows the scaling, and the coordinates are no longer based on the top left corner of the text but on the center point of the entire line of text. Thanks to the author for the contribution.

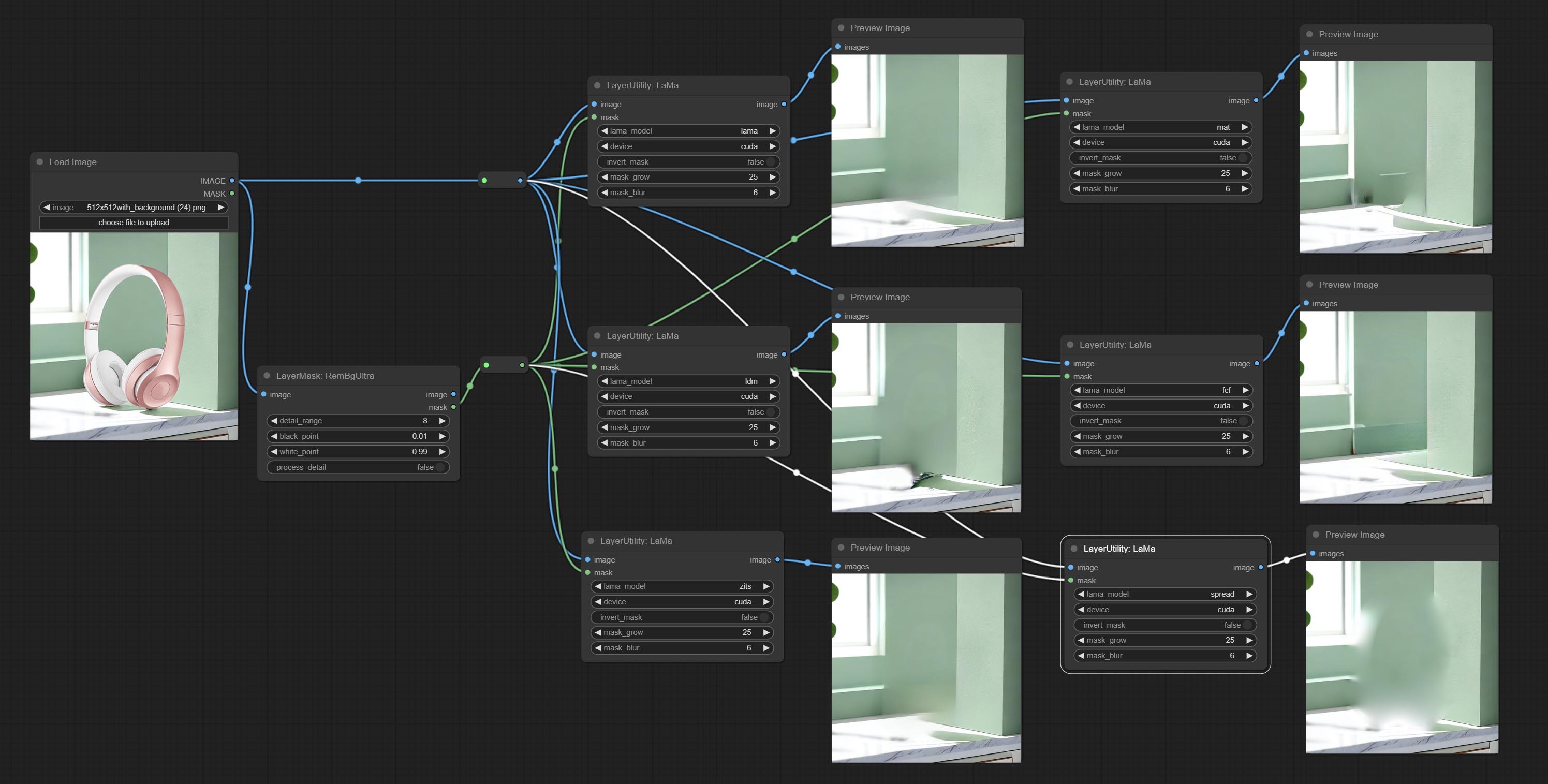

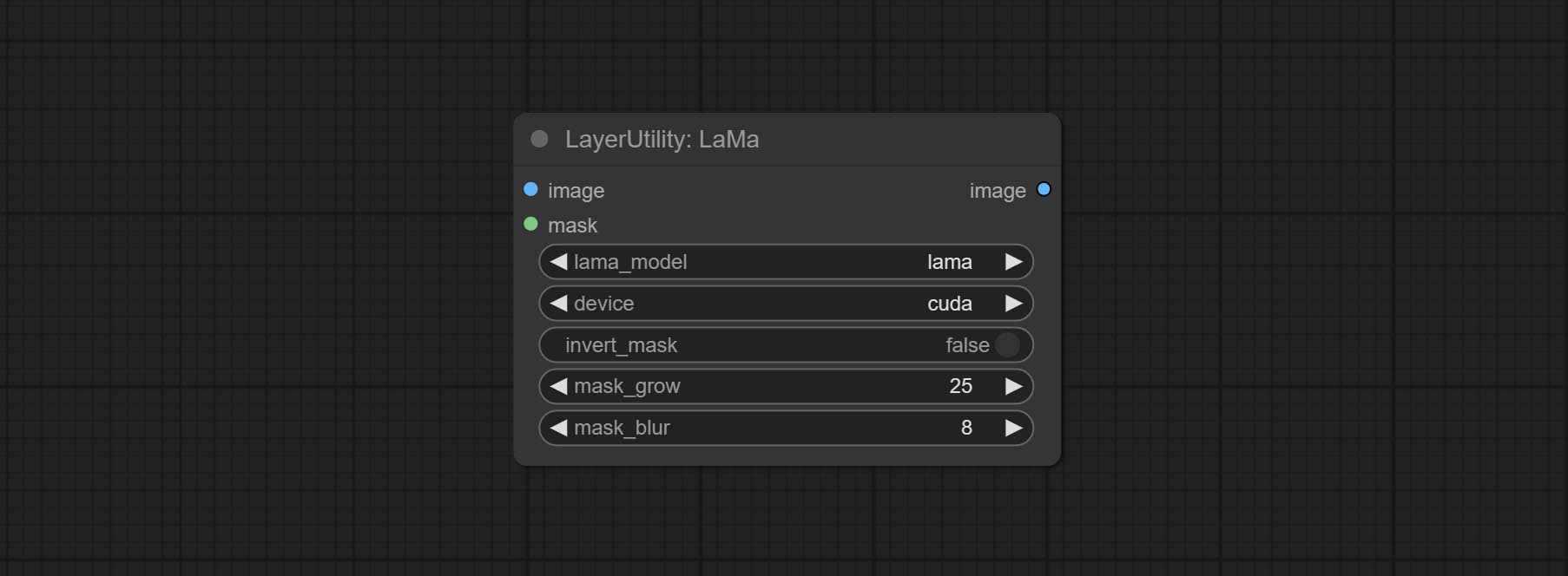

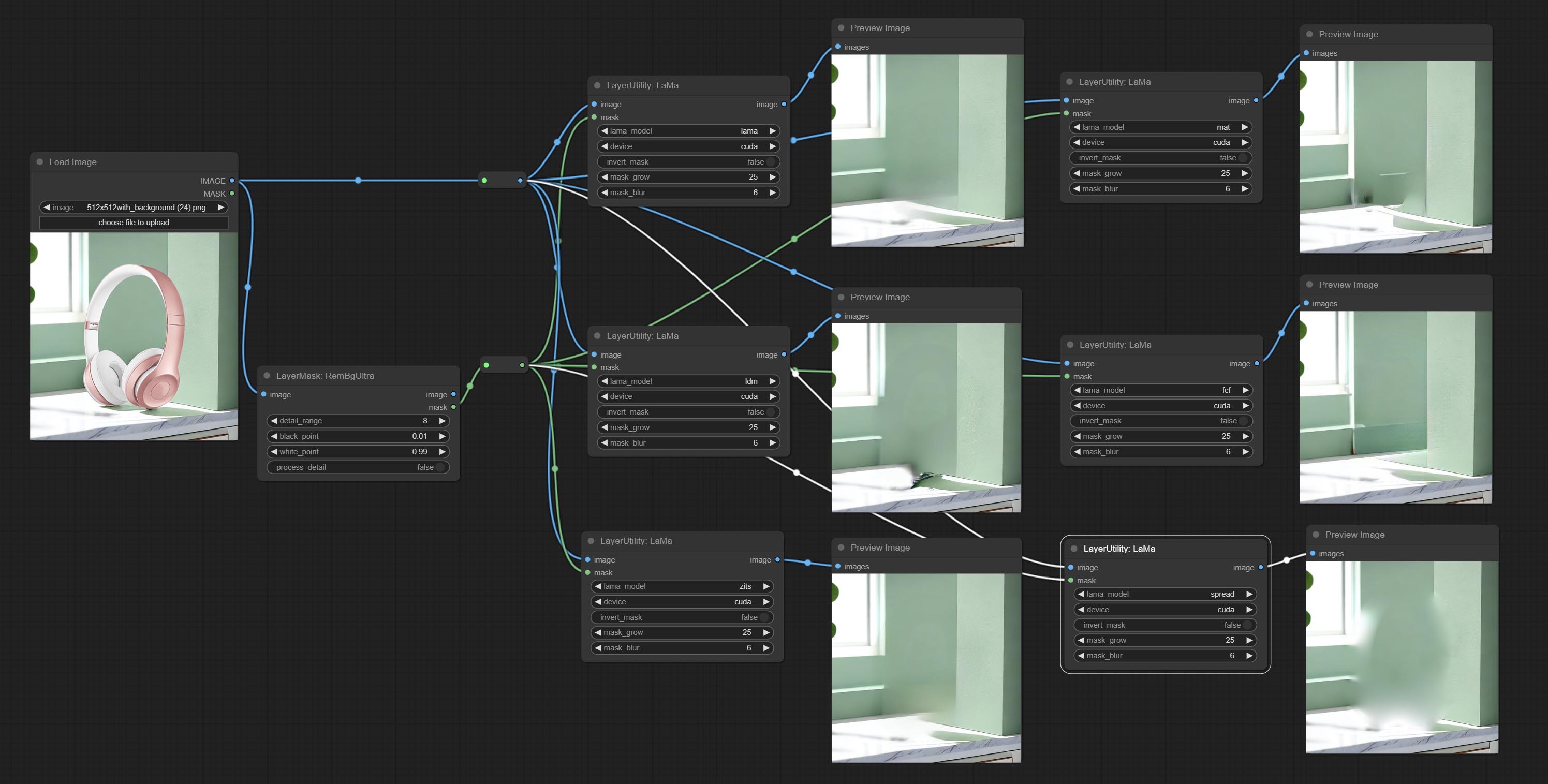

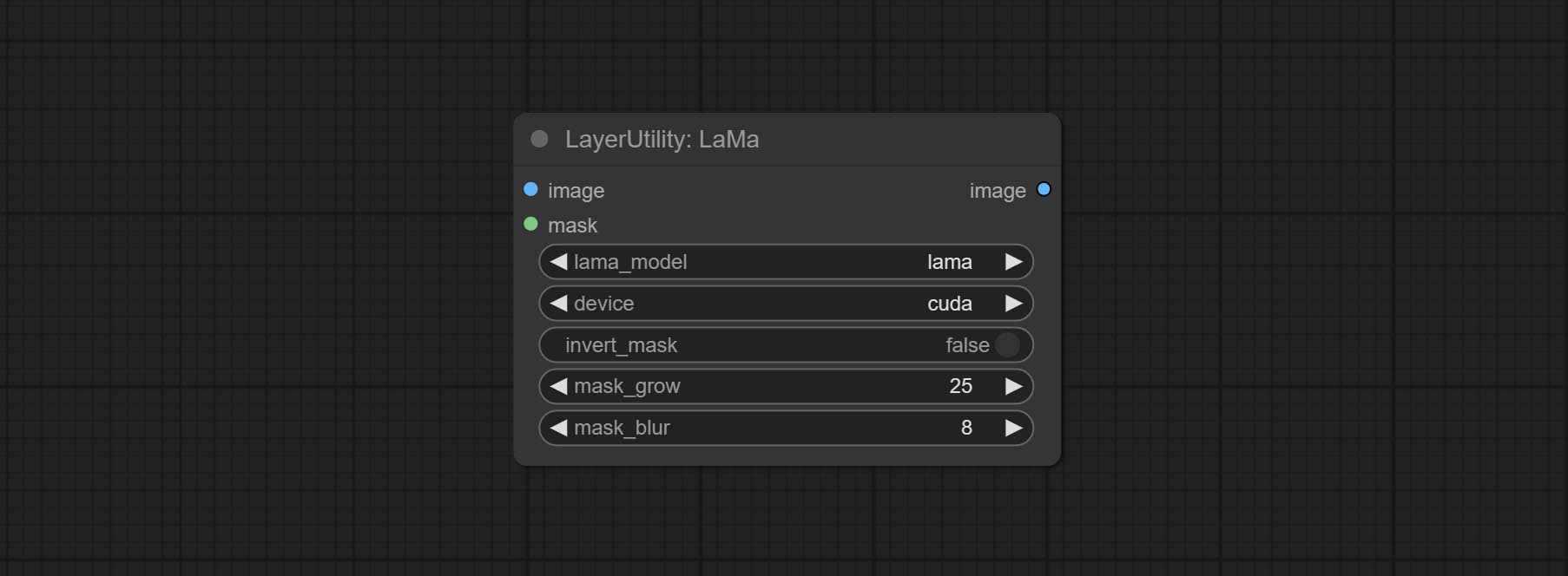

LaMa

Erase objects from the image based on the mask. This node is a repackage of IOPaint, which is powered by state-of-the-art AI models. Thanks to the original author.

It provides the LaMa, LDM, ZITS, MAT, FcF, and Manga models, plus the SPREAD method, for erasing. Please refer to the original link for an introduction to each model.

Please download the model files from lama models(BaiduNetdisk) or lama models(Google Drive) to the ComfyUI/models/lama folder.

Node options:

- lama_model: Choose a model or method.

- device: After correctly installing Torch and Nvidia CUDA drivers, using cuda will significantly improve running speed.

- invert_mask: Whether to reverse the mask.

- grow: Positive values expand outward, while negative values contract inward.

- blur: Blur the edge.

ImageChannelSplit

Split the image channel into individual images.

Node options:

- mode: Channel mode. Options include RGBA, YCbCr, LAB, and HSV.

ImageChannelMerge

Merge each channel image into one image.

Node options:

- mode: Channel mode. Options include RGBA, YCbCr, LAB, and HSV.

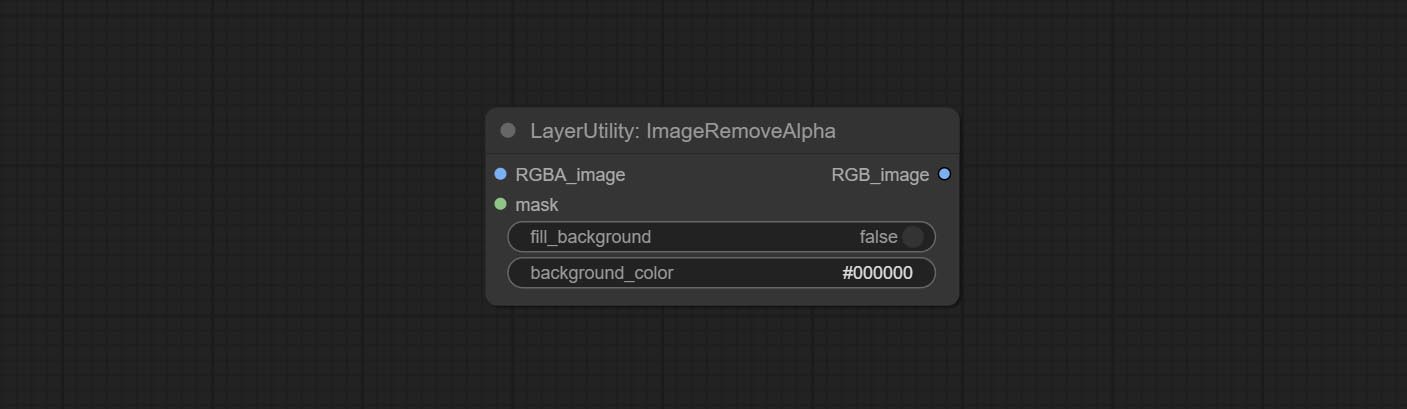

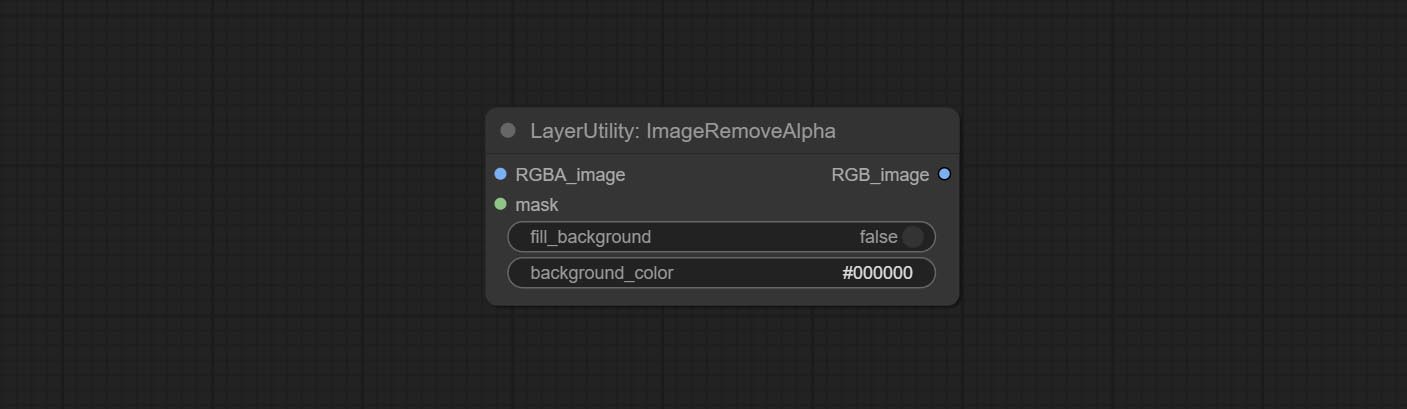

ImageRemoveAlpha

Remove the alpha channel from the image and convert it to RGB mode. You can choose to fill the background and set the background color.

Node options:

- RGBA_image: The input image supports RGBA or RGB modes.

- mask: Optional input mask. If there is an input mask, it will be used first, ignoring the alpha that comes with RGBA_image.

- fill_background: Whether to fill the background.

- background_color 4 : Color of background.

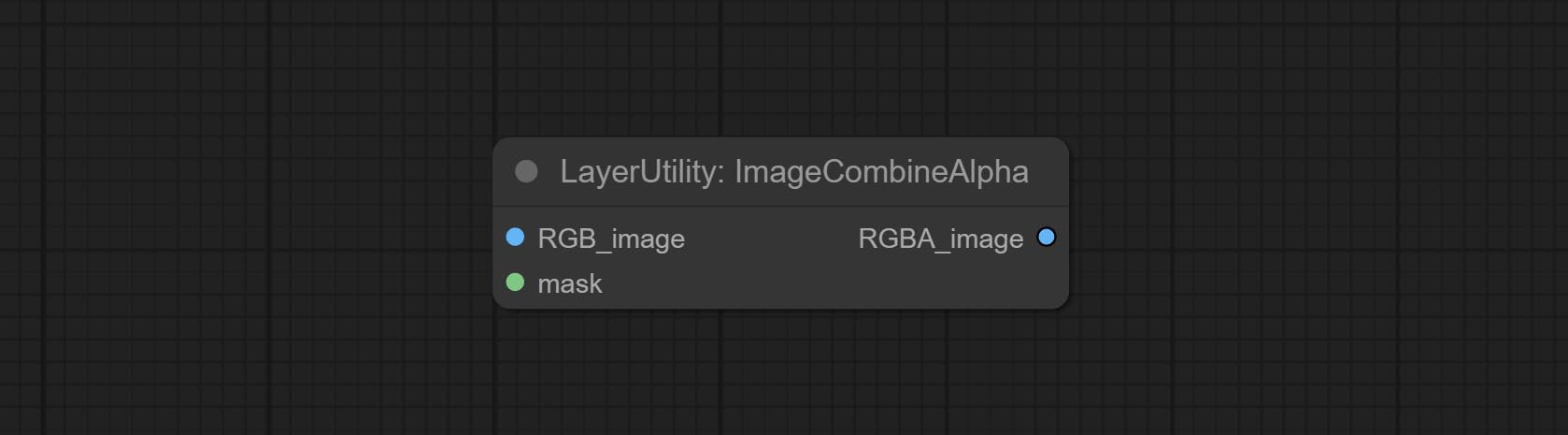

ImageCombineAlpha

Merge the image and mask into an RGBA mode image containing an alpha channel.

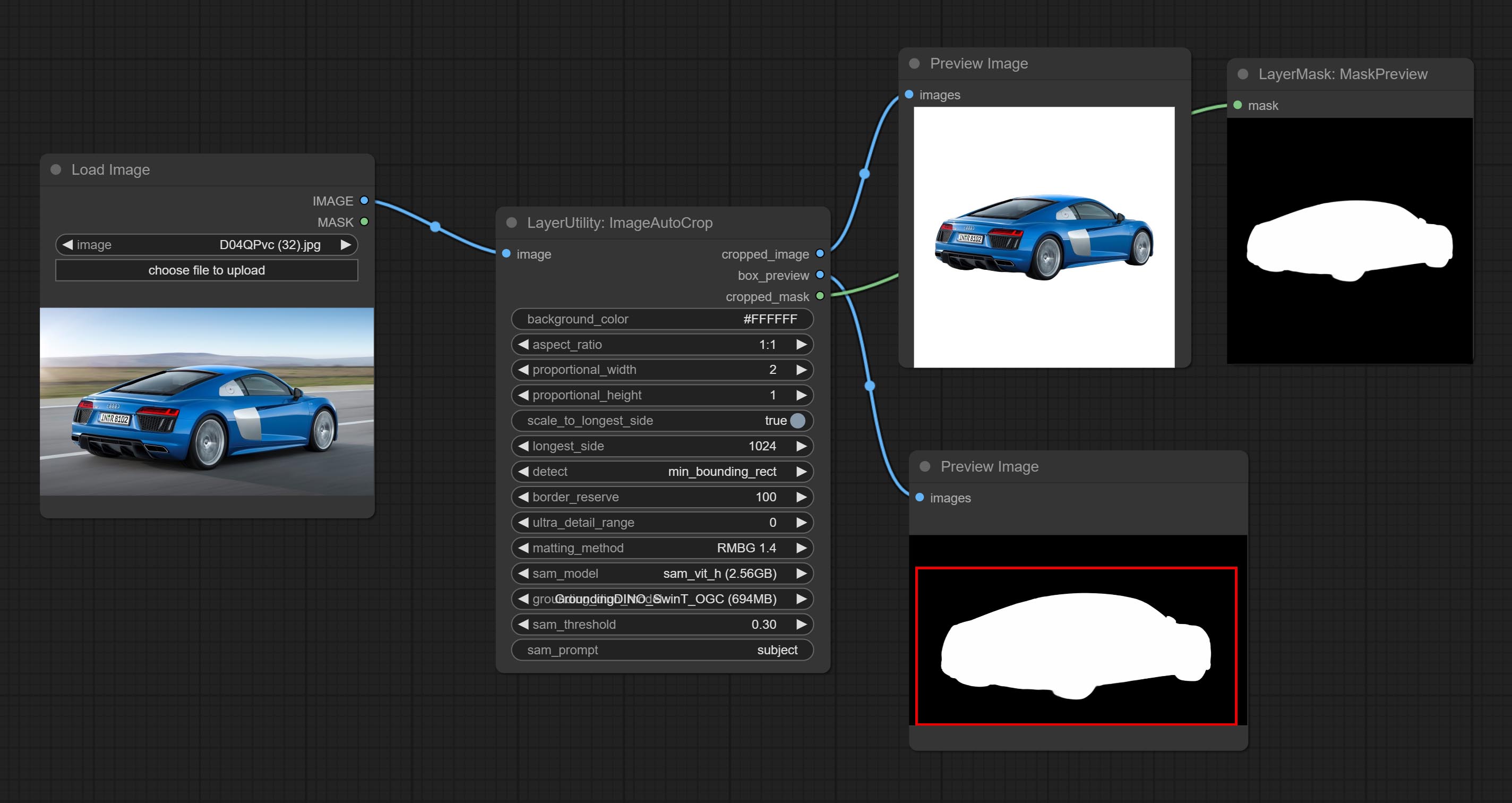

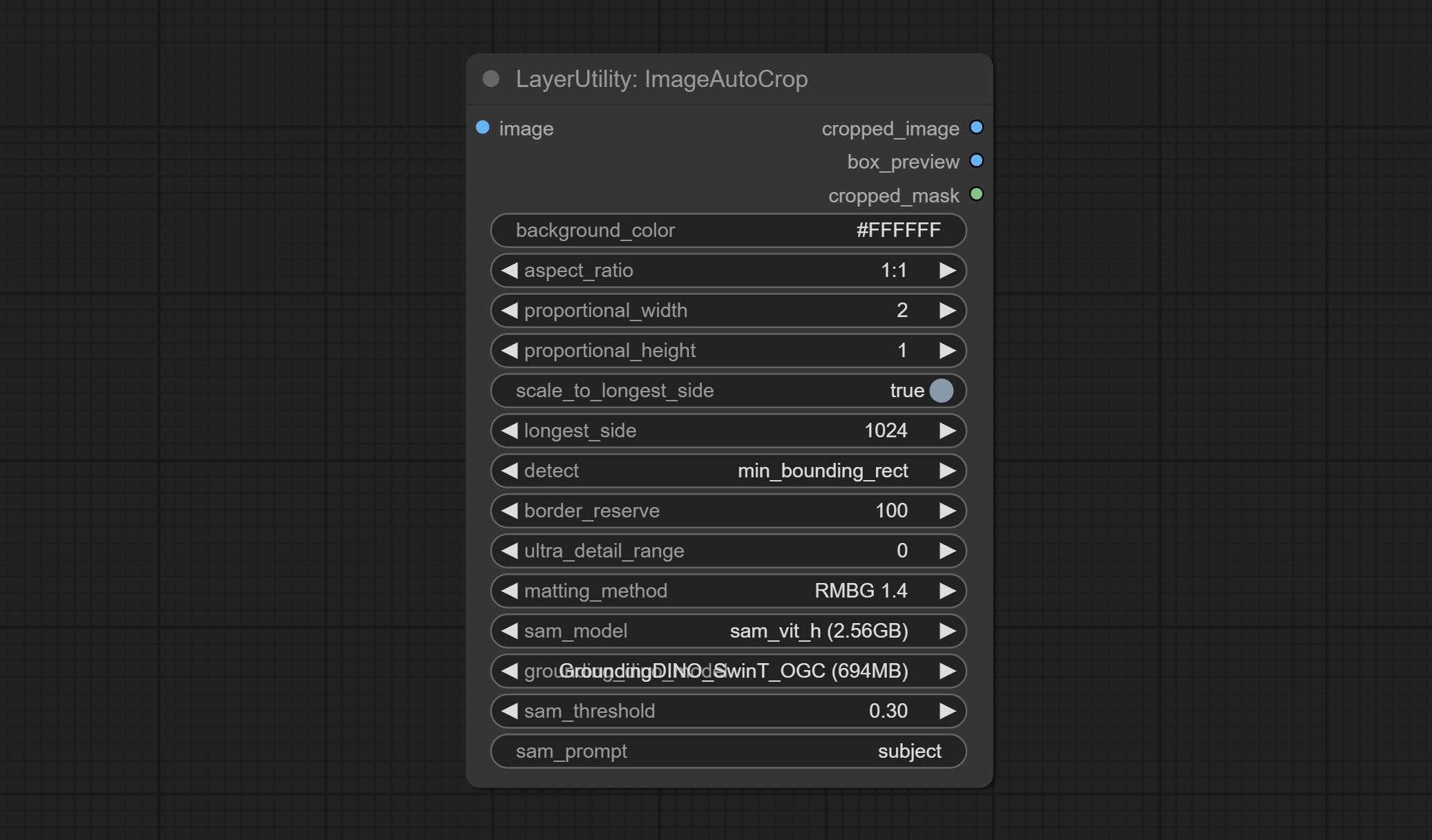

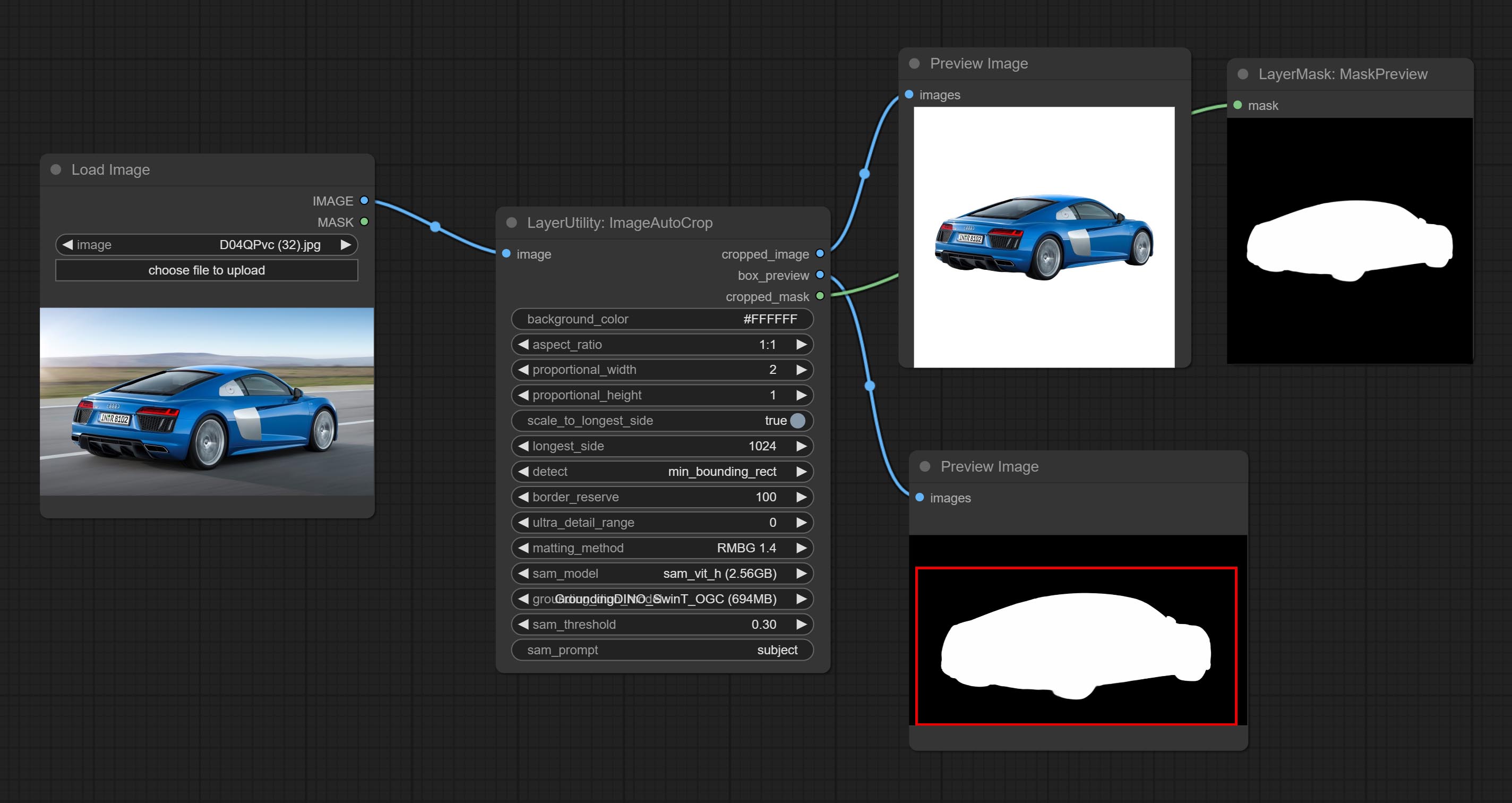

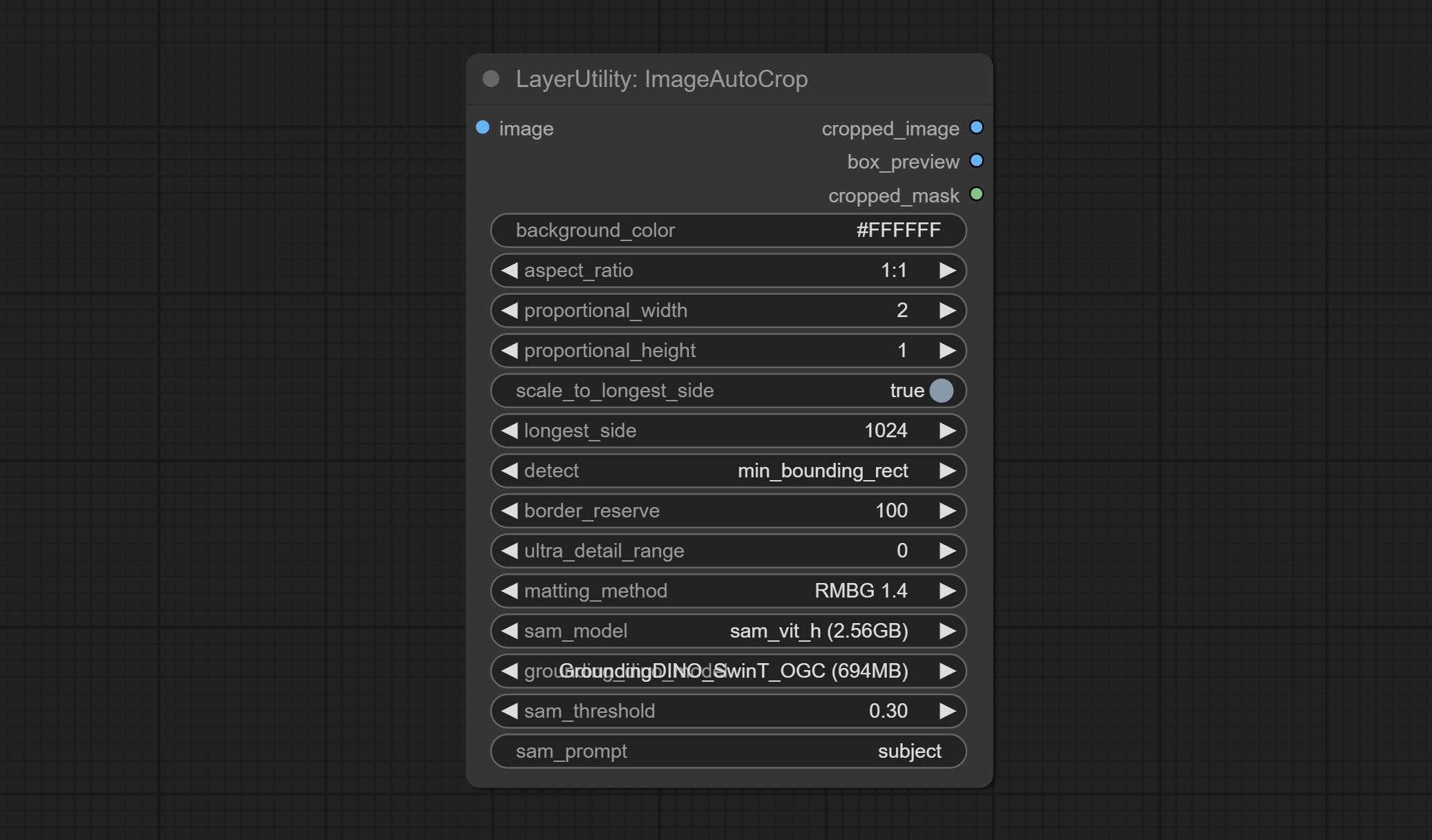

ImageAutoCrop

Automatically cut out and crop the image according to the mask. The background color, aspect ratio, and size of the output image can be specified. This node is designed to generate image materials for training models.

*Please refer to the model installation methods for SegmentAnythingUltra and RemBgUltra.

Node options:

- background_color 4 : The background color.

- aspect_ratio: Several common frame ratios are provided. Alternatively, choose "original" to keep the original ratio, or "custom" to define your own ratio.

- proportional_width: Proportional width. If the aspect_ratio option is not "custom", this setting is ignored.

- proportional_height: Proportional height. If the aspect_ratio option is not "custom", this setting is ignored.

- scale_by_longest_side: Allow scaling by the length of the longest side.

- longest_side: When scale_by_longest_side is set to True, this value is used as the length of the longest side of the image. If original_size is connected, this setting is ignored.

- detect: Detection method, min_bounding_rect is the minimum bounding rectangle, max_inscribed_rect is the maximum inscribed rectangle.

- border_reserve: Keep a border. Expands the crop range beyond the detected mask body area.

- ultra_detail_range: The range of ultra-fine processing at the mask edge. 0 disables this processing, which saves generation time.

- matting_method: The method used to generate the mask. Two methods are available: Segment Anything and RMBG 1.4. RMBG 1.4 runs faster.

- sam_model: Select the SAM model used by Segment Anything here.

- grounding_dino_model: Select the Grounding_Dino model used by Segment Anything here.

- sam_threshold: The threshold for Segment Anything.

- sam_prompt: The prompt for Segment Anything.

Outputs:

- cropped_image: The cropped image with the background replaced.

- box_preview: Preview of the crop position.

- cropped_mask: The cropped mask.

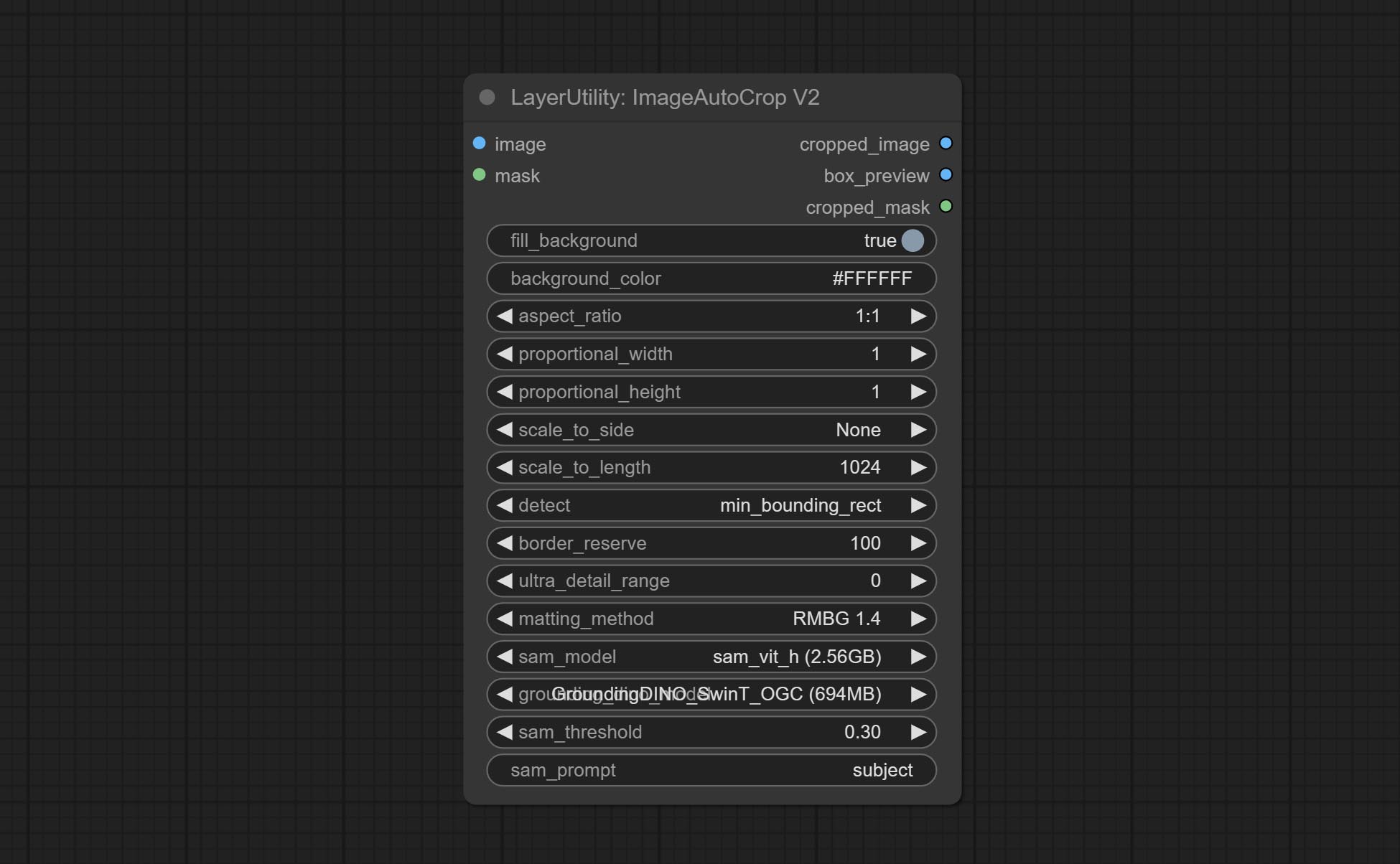

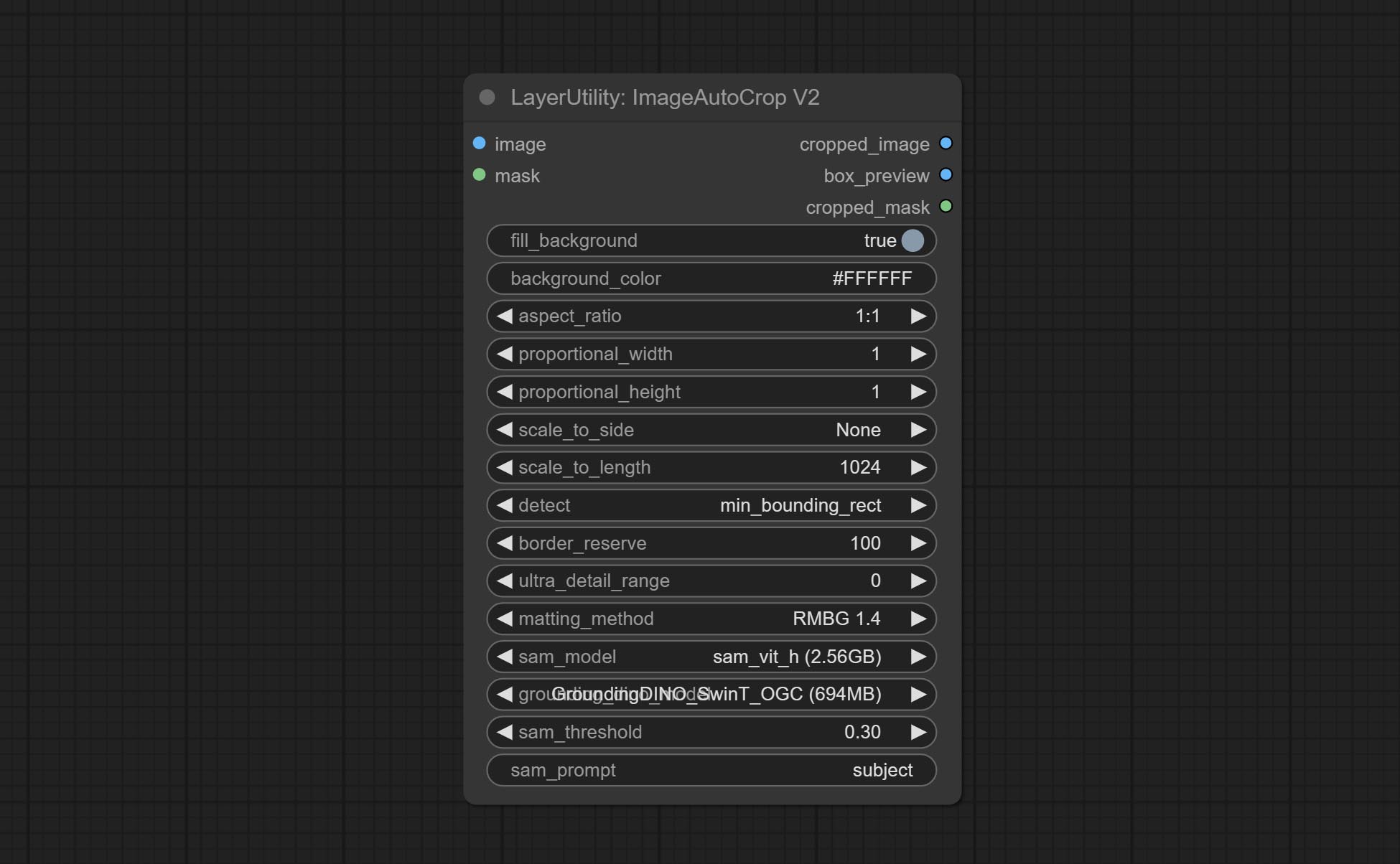

ImageAutoCropV2

The V2 upgraded version of ImageAutoCrop. It makes the following changes to the previous version:

- Add an optional mask input. When a mask is connected, it is used directly and the built-in mask generation is skipped.

- Add fill_background. When set to False, the background is not processed and any parts beyond the frame are not included in the output range.

- aspect_ratio adds the original option.

- scale_by: Allow scaling by a specified dimension: longest, shortest, width, or height.

- scale_by_length: The value here is used as the length of the edge selected by scale_by.

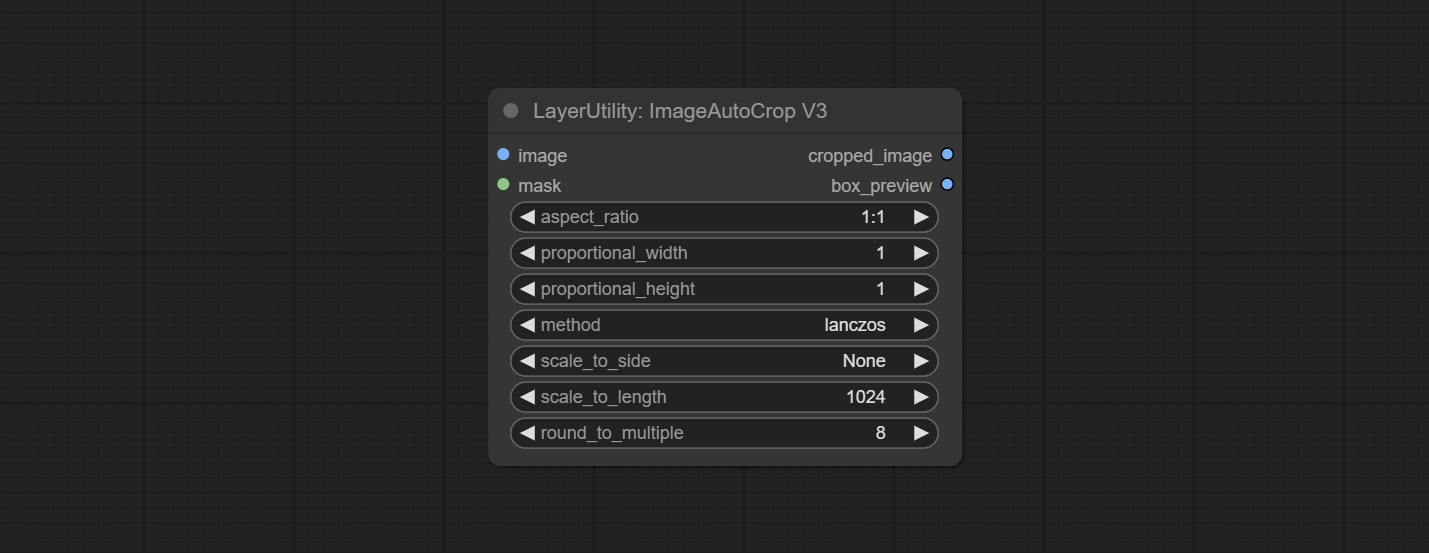

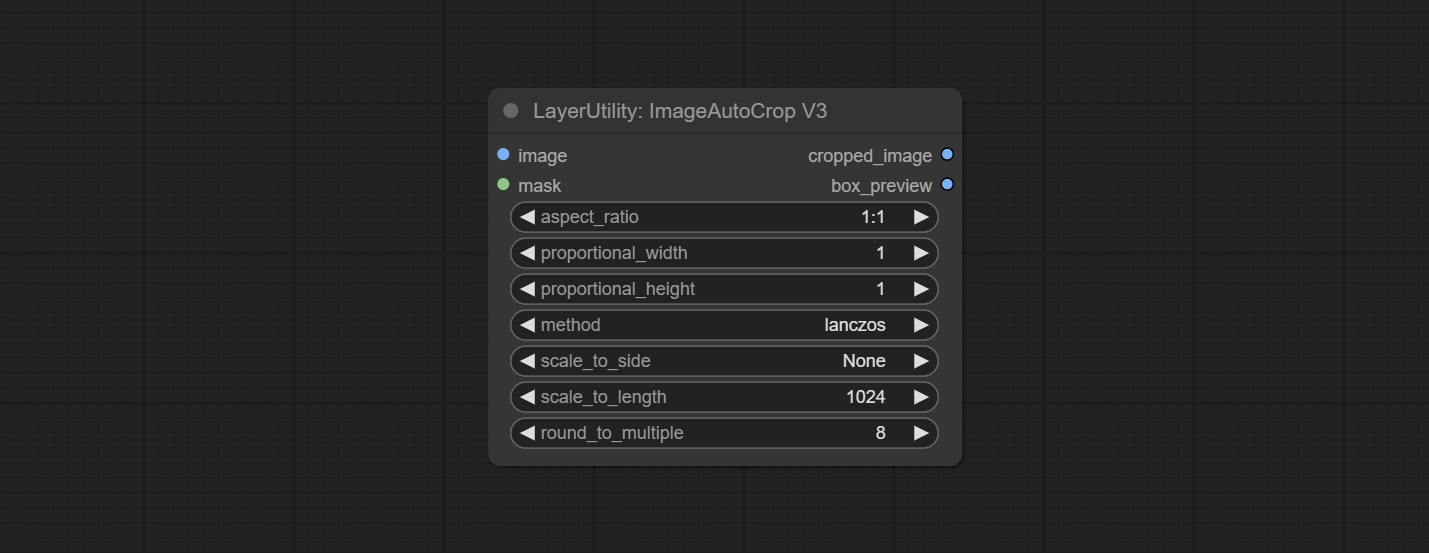

ImageAutoCropV3

Automatically crop the image to the specified size. You can input a mask to preserve the specified area of the mask. This node is designed to generate image materials for training the model.

Node Options:

- image: The input image.

- mask: Optional input mask. The masking part will be preserved within the range of the cutting aspect ratio.

- aspect_ratio: The aspect ratio of the output. Here are common frame ratios provided, with "custom" being the custom ratio and "original" being the original frame ratio.

- proportional_width: Proportional width. If the aspect_ratio option is not 'custom', this setting is ignored.

- proportional_height: Proportional height. If the aspect_ratio option is not 'custom', this setting is ignored.

- method: Scaling sampling methods include Lanczos, Bicubic, Hamming, Bilinear, Box, and Nearest.

- scale_to_side: Allow scaling to be specified by long side, short side, width, height, or total pixels.

- scale_to_length: The value here is used with scale_to_side to specify the length of the edge or the total number of pixels (in kilo pixels).

- round_to_multiple: Round to the nearest multiple. For example, if set to 8, the width and height are forced to multiples of 8.

Outputs:

- cropped_image: The cropped image.

- box_preview: Preview of the crop position.
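How aspect ratio, scale_to_side, scale_to_length and round_to_multiple might interact can be sketched as below. The function target_size, the option strings ("longest", "shortest", "width", "height", "total_pixel"), and the reading of one kilo pixel as 1000 pixels are all assumptions made for this example, not the node's actual code.

```python
import math


def target_size(aspect_w, aspect_h, scale_to_side, scale_to_length, round_to_multiple=8):
    """Compute an output (width, height) snapped to a multiple of round_to_multiple."""
    ratio = aspect_w / aspect_h  # width divided by height
    landscape = ratio >= 1
    if scale_to_side == "total_pixel":
        # width * height = total and width / height = ratio
        width = math.sqrt(scale_to_length * 1000 * ratio)
    elif scale_to_side == "width":
        width = scale_to_length
    elif scale_to_side == "height":
        width = scale_to_length * ratio
    elif scale_to_side == "longest":
        width = scale_to_length if landscape else scale_to_length * ratio
    elif scale_to_side == "shortest":
        width = scale_to_length * ratio if landscape else scale_to_length
    else:
        raise ValueError("unknown scale_to_side: " + repr(scale_to_side))
    height = width / ratio

    def snap(value):
        # Round to the nearest multiple, never below one multiple.
        return max(round_to_multiple, round(value / round_to_multiple) * round_to_multiple)

    return snap(width), snap(height)


print(target_size(16, 9, "longest", 1024))  # (1024, 576)
```

Because each side is snapped independently, the final ratio can drift slightly from the requested one when the multiple is large, for example with round_to_multiple set to 64.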

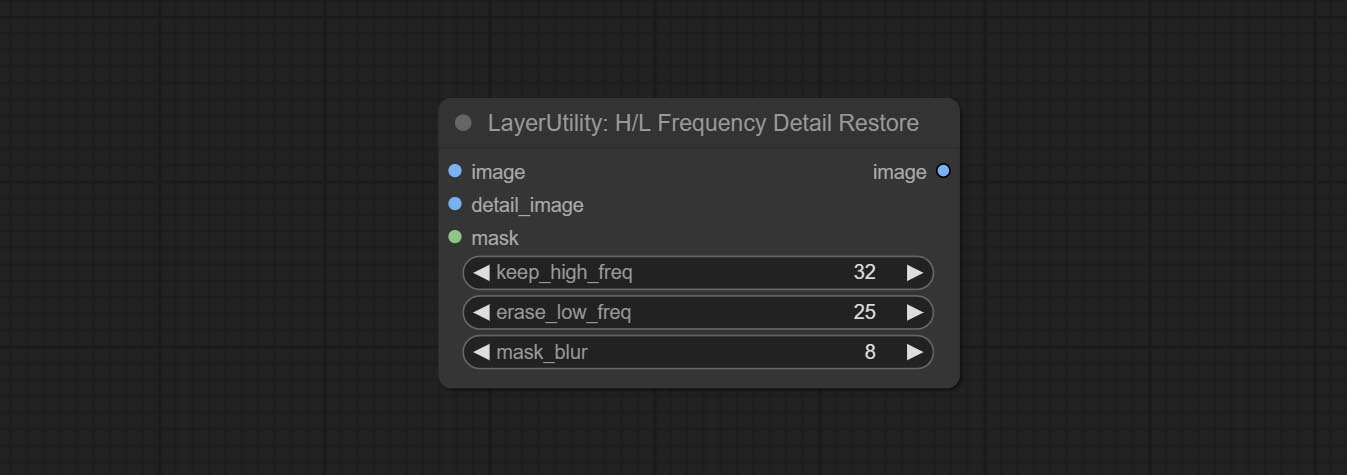

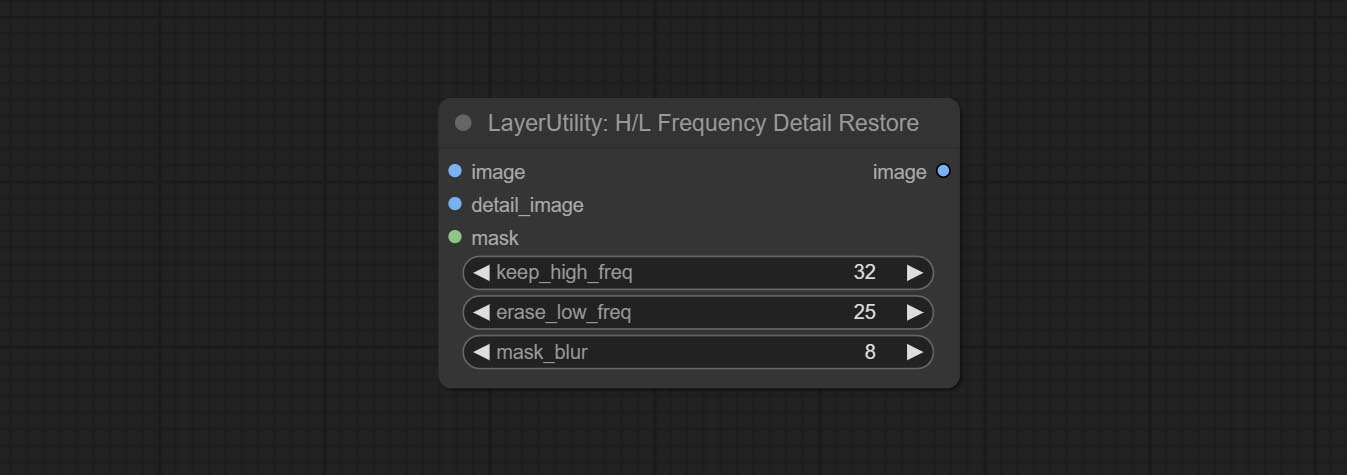

HLFrequencyDetailRestore

Restore image details by filtering out the low frequencies and retaining the high frequencies. Compared to kijai's DetailTransfer, this node blends better with the surrounding image while retaining details.

Node Options:

- image: Background image input.

- detail_image: Detail image input.

- mask: Optional input. If a mask is connected, only the details inside the masked area are restored.

- keep_high_freq: The range of high-frequency content to keep. The larger the value, the richer the retained high-frequency details.

- erase_low_freq: The range of low-frequency content to erase. The larger the value, the wider the low-frequency range that is erased.

- mask_blur: Mask edge blur. Valid only if a mask is connected.