A Java library to use the OpenAI API in the simplest possible way.

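Simple-OpenAI is a Java HTTP client library for sending requests to and receiving responses from the OpenAI API. It exposes a consistent interface across all the services, yet it is as simple as what you can find in other languages such as Python or Node.js. It is an unofficial library.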

Simple-OpenAI uses the CleverClient library for HTTP communication, Jackson for JSON parsing, and Lombok to minimize boilerplate code, among other libraries.

Simple-OpenAI seeks to stay up to date with the most recent changes in OpenAI. Currently, it supports most of the existing features and will continue to be updated with future changes.

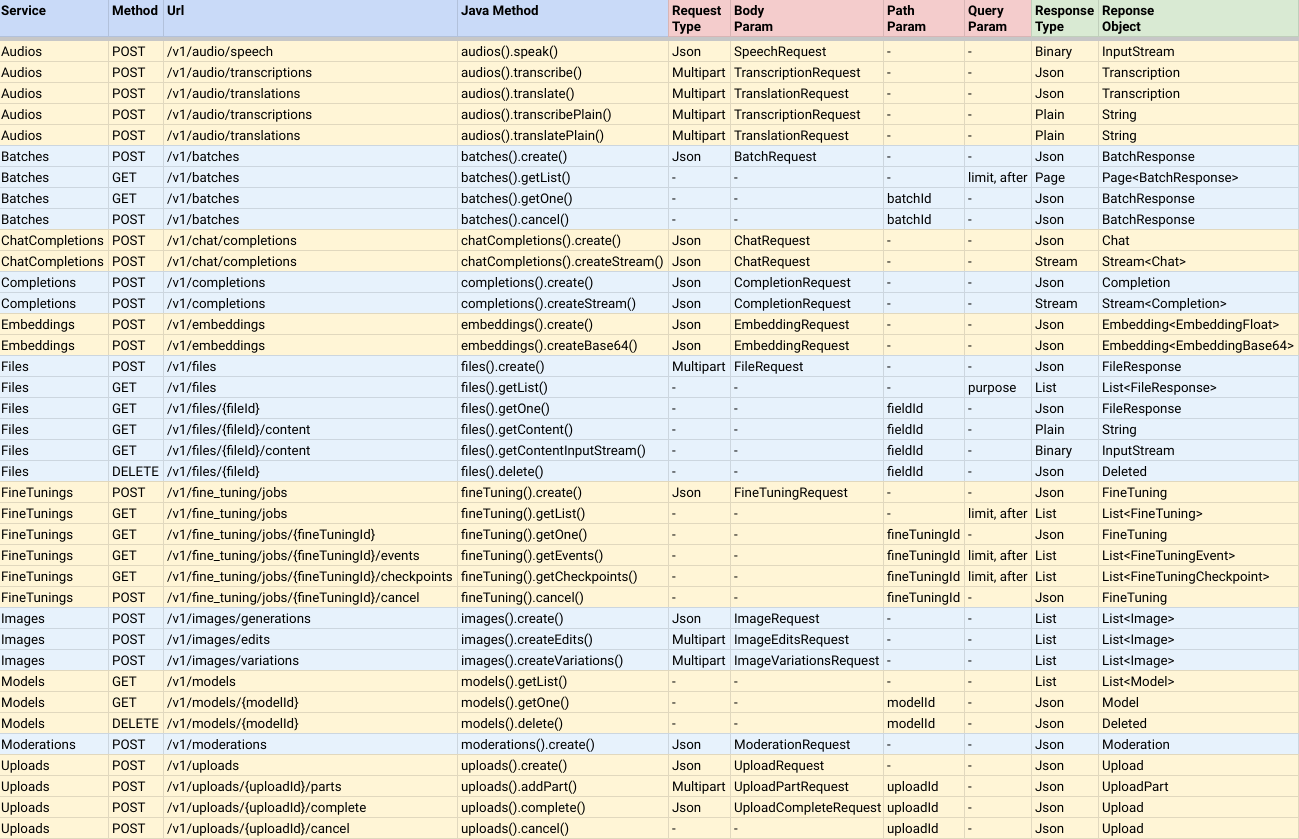

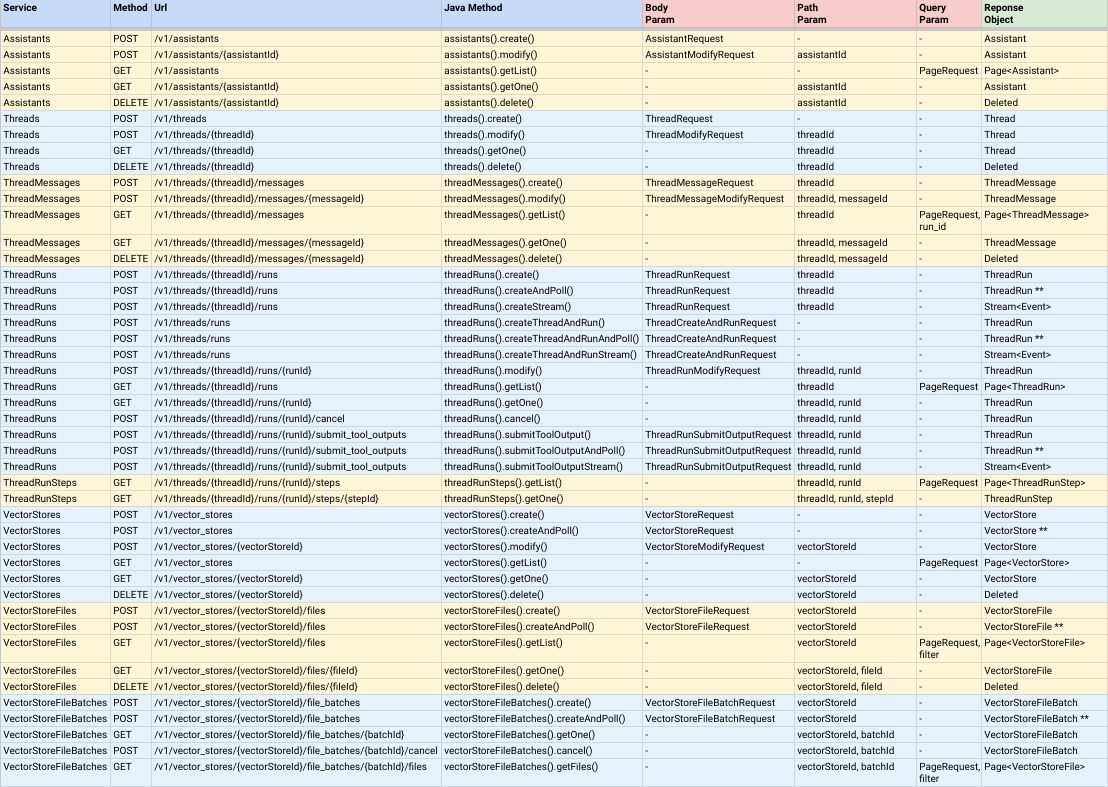

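Simple-OpenAI offers full support for most of the OpenAI services.

Notes:

- Method responses are CompletableFuture<ResponseObject>, which means they are asynchronous, but you can call the join() method to return the result value once it completes.
- The exceptions are the methods whose names end with AndPoll(). These methods are synchronous and block until a predicate function that you provide returns false.

You can install Simple-OpenAI by adding the following dependency to your Maven project:

<dependency>
    <groupId>io.github.sashirestela</groupId>
    <artifactId>simple-openai</artifactId>
    <version>[latest version]</version>
</dependency>

Or alternatively using Gradle: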

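dependencies {
    implementation 'io.github.sashirestela:simple-openai:[latest version]'
}

This is the first step you need to take before using the services. You must provide at least your OpenAI API key (see here for more details). In the following example we get the API key from an environment variable called OPENAI_API_KEY: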

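var openAI = SimpleOpenAI.builder()
        .apiKey(System.getenv("OPENAI_API_KEY"))
        .build();

Optionally, you can pass your OpenAI organization ID if you have multiple organizations and want to identify usage by organization, and/or your OpenAI project ID if you want to provide access to a single project. In the following example we use environment variables for those IDs: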

var openAI = SimpleOpenAI.builder()
        .apiKey(System.getenv("OPENAI_API_KEY"))
        .organizationId(System.getenv("OPENAI_ORGANIZATION_ID"))
        .projectId(System.getenv("OPENAI_PROJECT_ID"))
        .build();

Optionally, as well, you can provide a custom Java HttpClient object if you want more options for the HTTP connection, such as executor, proxy, timeout, cookies, etc. (see here for more details). In the following example we provide a custom HttpClient:

var httpClient = HttpClient.newBuilder()
        .version(Version.HTTP_1_1)
        .followRedirects(Redirect.NORMAL)
        .connectTimeout(Duration.ofSeconds(20))
        .executor(Executors.newFixedThreadPool(3))
        .proxy(ProxySelector.of(new InetSocketAddress("proxy.example.com", 80)))
        .build();

var openAI = SimpleOpenAI.builder()
        .apiKey(System.getenv("OPENAI_API_KEY"))
        .httpClient(httpClient)
        .build();

After you have created a SimpleOpenAI object, you are ready to call its services in order to communicate with the OpenAI API. Let's see some examples.

Example to call the Audio service to transform text to audio. We are requesting to receive the audio in binary format (InputStream):

var speechRequest = SpeechRequest.builder()
        .model("tts-1")
        .input("Hello world, welcome to the AI universe!")
        .voice(Voice.ALLOY)
        .responseFormat(SpeechResponseFormat.MP3)
        .speed(1.0)
        .build();
var futureSpeech = openAI.audios().speak(speechRequest);
var speechResponse = futureSpeech.join();
// speechFileName is the path of the output file, defined elsewhere.
// try-with-resources closes the file even if writing fails.
try (var audioFile = new FileOutputStream(speechFileName)) {
    audioFile.write(speechResponse.readAllBytes());
    System.out.println(audioFile.getChannel().size() + " bytes");
} catch (Exception e) {
    e.printStackTrace();
}

Example to call the Audio service to transcribe an audio file to text. We are requesting to receive the transcription in verbose JSON format with word-level and segment-level timestamps (see the responseFormat and timestampGranularity settings):

var audioRequest = TranscriptionRequest.builder()
        .file(Paths.get("hello_audio.mp3"))
        .model("whisper-1")
        .responseFormat(AudioResponseFormat.VERBOSE_JSON)
        .temperature(0.2)
        .timestampGranularity(TimestampGranularity.WORD)
        .timestampGranularity(TimestampGranularity.SEGMENT)
        .build();
var futureAudio = openAI.audios().transcribe(audioRequest);
var audioResponse = futureAudio.join();
System.out.println(audioResponse);

Example to call the Image service to generate two images in response to our prompt. We are requesting to receive the images' URLs and we are printing them out in the console:

var imageRequest = ImageRequest.builder()
        .prompt("A cartoon of a hummingbird that is flying around a flower.")
        .n(2)
        .size(Size.X256)
        .responseFormat(ImageResponseFormat.URL)
        .model("dall-e-2")
        .build();
var futureImage = openAI.images().create(imageRequest);
var imageResponse = futureImage.join();
imageResponse.stream().forEach(img -> System.out.println("\n" + img.getUrl()));

Example to call the Chat Completion service to ask a question and wait for a full answer. We are printing it out in the console:

var chatRequest = ChatRequest.builder()
        .model("gpt-4o-mini")
        .message(SystemMessage.of("You are an expert in AI."))
        .message(UserMessage.of("Write a technical article about ChatGPT, no more than 100 words."))
        .temperature(0.0)
        .maxCompletionTokens(300)
        .build();
var futureChat = openAI.chatCompletions().create(chatRequest);
var chatResponse = futureChat.join();
System.out.println(chatResponse.firstContent());

Example to call the Chat Completion service to ask a question and wait for an answer in partial message deltas. We are printing each delta out in the console as soon as it arrives:

var chatRequest = ChatRequest.builder()
        .model("gpt-4o-mini")
        .message(SystemMessage.of("You are an expert in AI."))
        .message(UserMessage.of("Write a technical article about ChatGPT, no more than 100 words."))
        .temperature(0.0)
        .maxCompletionTokens(300)
        .build();
var futureChat = openAI.chatCompletions().createStream(chatRequest);
var chatResponse = futureChat.join();
// Skip chunks that carry no choices or no content, then print each delta.
chatResponse.filter(chatResp -> chatResp.getChoices().size() > 0 && chatResp.firstContent() != null)
        .map(Chat::firstContent)
        .forEach(System.out::print);
System.out.println();

This functionality empowers the Chat Completion service to solve specific problems in our own context. In this example we are setting up three functions and entering a prompt that requires calling one of them (the function product). To set up the functions we use additional classes that implement the interface Functional. Those classes define one field per function argument, annotated to describe it, and each class must override the execute method with the function's logic. Note that we use the FunctionExecutor utility class to enroll the functions and to execute the function selected by the openai.chatCompletions() call.
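The enroll-then-execute idea described above can be illustrated without the library. The following is a minimal, hypothetical sketch in plain Java: FunctionRegistrySketch, enroll and execute are illustrative names, the arguments are assumed to be already parsed into a Map, and nothing here reflects how FunctionExecutor is actually implemented.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical, library-free illustration of "enroll functions, then execute
// the one the model selected". This is NOT Simple-OpenAI's FunctionExecutor.
final class FunctionRegistrySketch {

    // Maps a function name to the logic that consumes its parsed arguments.
    private final Map<String, Function<Map<String, Object>, Object>> functions = new LinkedHashMap<>();

    void enroll(String name, Function<Map<String, Object>, Object> logic) {
        functions.put(name, logic);
    }

    Object execute(String name, Map<String, Object> arguments) {
        var logic = functions.get(name);
        if (logic == null) {
            throw new IllegalArgumentException("Unknown function: " + name);
        }
        return logic.apply(arguments);
    }

    // Builds a registry with the three function names used in the example.
    static FunctionRegistrySketch demoRegistry() {
        var registry = new FunctionRegistrySketch();
        registry.enroll("get_weather", args -> Math.random() * 45);
        registry.enroll("product", args ->
                ((Number) args.get("multiplicand")).doubleValue()
                        * ((Number) args.get("multiplier")).doubleValue());
        registry.enroll("run_alarm", args -> "DONE");
        return registry;
    }

    public static void main(String[] argv) {
        var registry = demoRegistry();
        // Pretend the model selected "product" with these arguments.
        Object result = registry.execute("product", Map.of("multiplicand", 123, "multiplier", 456));
        System.out.println(result); // prints 56088.0
    }
}
```

In the library example, the name and arguments come from the tool call returned by the model, and the result is sent back in a ToolMessage so the model can compose its final answer.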

public void demoCallChatWithFunctions() {
    // Enroll the three functions the model may choose from.
    var functionExecutor = new FunctionExecutor();
    functionExecutor.enrollFunction(
            FunctionDef.builder()
                    .name("get_weather")
                    .description("Get the current weather of a location")
                    .functionalClass(Weather.class)
                    .strict(Boolean.TRUE)
                    .build());
    functionExecutor.enrollFunction(
            FunctionDef.builder()
                    .name("product")
                    .description("Get the product of two numbers")
                    .functionalClass(Product.class)
                    .strict(Boolean.TRUE)
                    .build());
    functionExecutor.enrollFunction(
            FunctionDef.builder()
                    .name("run_alarm")
                    .description("Run an alarm")
                    .functionalClass(RunAlarm.class)
                    .strict(Boolean.TRUE)
                    .build());
    var messages = new ArrayList<ChatMessage>();
    messages.add(UserMessage.of("What is the product of 123 and 456?"));
    var chatRequest = ChatRequest.builder()
            .model("gpt-4o-mini")
            .messages(messages)
            .tools(functionExecutor.getToolFunctions())
            .build();
    var futureChat = openAI.chatCompletions().create(chatRequest);
    var chatResponse = futureChat.join();
    // Execute the function selected by the model and send its result back.
    var chatMessage = chatResponse.firstMessage();
    var chatToolCall = chatMessage.getToolCalls().get(0);
    var result = functionExecutor.execute(chatToolCall.getFunction());
    messages.add(chatMessage);
    messages.add(ToolMessage.of(result.toString(), chatToolCall.getId()));
    chatRequest = ChatRequest.builder()
            .model("gpt-4o-mini")
            .messages(messages)
            .tools(functionExecutor.getToolFunctions())
            .build();
    futureChat = openAI.chatCompletions().create(chatRequest);
    chatResponse = futureChat.join();
    System.out.println(chatResponse.firstContent());
}

public static class Weather implements Functional {

    @JsonPropertyDescription("City and state, for example: León, Guanajuato")
    @JsonProperty(required = true)
    public String location;

    @JsonPropertyDescription("The temperature unit, can be 'celsius' or 'fahrenheit'")
    @JsonProperty(required = true)
    public String unit;

    @Override
    public Object execute() {
        return Math.random() * 45;
    }

}

public static class Product implements Functional {

    @JsonPropertyDescription("The multiplicand part of a product")
    @JsonProperty(required = true)
    public double multiplicand;

    @JsonPropertyDescription("The multiplier part of a product")
    @JsonProperty(required = true)
    public double multiplier;

    @Override
    public Object execute() {
        return multiplicand * multiplier;
    }

}

public static class RunAlarm implements Functional {

    @Override
    public Object execute() {
        return "DONE";
    }

}

Example to call the Chat Completion service to allow the model to take in external images and answer questions about them:

var chatRequest = ChatRequest.builder()
        .model("gpt-4o-mini")
        .messages(List.of(
                UserMessage.of(List.of(
                        ContentPartText.of(
                                "What do you see in the image? Give in details in no more than 100 words."),
                        ContentPartImageUrl.of(ImageUrl.of(
                                "https://upload.wikimedia.org/wikipedia/commons/e/eb/Machu_Picchu%2C_Peru.jpg"))))))
        .temperature(0.0)
        .maxCompletionTokens(500)
        .build();
var chatResponse = openAI.chatCompletions().createStream(chatRequest).join();
chatResponse.filter(chatResp -> chatResp.getChoices().size() > 0 && chatResp.firstContent() != null)
        .map(Chat::firstContent)
        .forEach(System.out::print);
System.out.println();

Example to call the Chat Completion service to allow the model to take in local images and answer questions about them (check Base64Util's code in this repository):

var chatRequest = ChatRequest.builder()
        .model("gpt-4o-mini")
        .messages(List.of(
                UserMessage.of(List.of(
                        ContentPartText.of(
                                "What do you see in the image? Give in details in no more than 100 words."),
                        ContentPartImageUrl.of(ImageUrl.of(
                                Base64Util.encode("src/demo/resources/machupicchu.jpg", MediaType.IMAGE)))))))
        .temperature(0.0)
        .maxCompletionTokens(500)
        .build();
var chatResponse = openAI.chatCompletions().createStream(chatRequest).join();
chatResponse.filter(chatResp -> chatResp.getChoices().size() > 0 && chatResp.firstContent() != null)
        .map(Chat::firstContent)
        .forEach(System.out::print);
System.out.println();

Example to call the Chat Completion service to generate a spoken audio response to a prompt, and to use audio inputs to prompt the model (check Base64Util's code in this repository):

var messages = new ArrayList<ChatMessage>();
messages.add(SystemMessage.of("Respond in a short and concise way."));
messages.add(UserMessage.of(List.of(ContentPartInputAudio.of(InputAudio.of(
        Base64Util.encode("src/demo/resources/question1.mp3", null), InputAudioFormat.MP3)))));
var chatRequest = ChatRequest.builder()
        .model("gpt-4o-audio-preview")
        .modality(Modality.TEXT)
        .modality(Modality.AUDIO)
        .audio(Audio.of(Voice.ALLOY, AudioFormat.MP3))
        .messages(messages)
        .build();
var chatResponse = openAI.chatCompletions().create(chatRequest).join();
var audio = chatResponse.firstMessage().getAudio();
Base64Util.decode(audio.getData(), "src/demo/resources/answer1.mp3");
System.out.println("Answer 1: " + audio.getTranscript());

// Reference the previous audio answer by id and ask a follow-up question.
messages.add(AssistantMessage.builder().audioId(audio.getId()).build());
messages.add(UserMessage.of(List.of(ContentPartInputAudio.of(InputAudio.of(
        Base64Util.encode("src/demo/resources/question2.mp3", null), InputAudioFormat.MP3)))));
chatRequest = ChatRequest.builder()
        .model("gpt-4o-audio-preview")
        .modality(Modality.TEXT)
        .modality(Modality.AUDIO)
        .audio(Audio.of(Voice.ALLOY, AudioFormat.MP3))
        .messages(messages)
        .build();
chatResponse = openAI.chatCompletions().create(chatRequest).join();
audio = chatResponse.firstMessage().getAudio();
Base64Util.decode(audio.getData(), "src/demo/resources/answer2.mp3");
System.out.println("Answer 2: " + audio.getTranscript());

Example to call the Chat Completion service to ensure the model always generates responses that adhere to a JSON Schema defined through Java classes:

public void demoCallChatWithStructuredOutputs() {
    var chatRequest = ChatRequest.builder()
            .model("gpt-4o-mini")
            .message(SystemMessage
                    .of("You are a helpful math tutor. Guide the user through the solution step by step."))
            .message(UserMessage.of("How can I solve 8x + 7 = -23"))
            .responseFormat(ResponseFormat.jsonSchema(JsonSchema.builder()
                    .name("MathReasoning")
                    .schemaClass(MathReasoning.class)
                    .build()))
            .build();
    var chatResponse = openAI.chatCompletions().createStream(chatRequest).join();
    chatResponse.filter(chatResp -> chatResp.getChoices().size() > 0 && chatResp.firstContent() != null)
            .map(Chat::firstContent)
            .forEach(System.out::print);
    System.out.println();
}

public static class MathReasoning {

    public List<Step> steps;
    public String finalAnswer;

    public static class Step {

        public String explanation;
        public String output;

    }

}

This example simulates a conversation chat in the command console and demonstrates the usage of ChatCompletion with streaming and function calling.
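When streaming is combined with function calling, the arguments of each tool call arrive split across several deltas and have to be concatenated per call index before the function can run. The sketch below shows that bookkeeping in isolation. It is a minimal illustration under stated assumptions: Fragment and ToolCall are hypothetical classes (not Simple-OpenAI types), and the function name is assumed to arrive only on the first fragment of each call.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: merges streamed tool-call fragments into complete calls.
final class ToolCallAccumulatorSketch {

    // One streamed piece: the call index, the function name (null except on the
    // first fragment of a call) and a slice of the JSON arguments.
    static final class Fragment {
        final int index;
        final String name;
        final String argumentsPart;

        Fragment(int index, String name, String argumentsPart) {
            this.index = index;
            this.name = name;
            this.argumentsPart = argumentsPart;
        }
    }

    static final class ToolCall {
        final String name;
        final String arguments;

        ToolCall(String name, String arguments) {
            this.name = name;
            this.arguments = arguments;
        }

        @Override
        public String toString() {
            return name + " " + arguments;
        }
    }

    static List<ToolCall> accumulate(List<Fragment> fragments) {
        List<String> names = new ArrayList<>();
        List<StringBuilder> args = new ArrayList<>();
        for (Fragment f : fragments) {
            // An index not seen before means a new tool call starts.
            while (names.size() <= f.index) {
                names.add(null);
                args.add(new StringBuilder());
            }
            if (f.name != null) {
                names.set(f.index, f.name);
            }
            if (f.argumentsPart != null) {
                args.get(f.index).append(f.argumentsPart);
            }
        }
        List<ToolCall> calls = new ArrayList<>();
        for (int i = 0; i < names.size(); i++) {
            calls.add(new ToolCall(names.get(i), args.get(i).toString()));
        }
        return calls;
    }

    public static void main(String[] argv) {
        var calls = accumulate(List.of(
                new Fragment(0, "getCurrentTemperature", ""),
                new Fragment(0, null, "{\"location\":"),
                new Fragment(0, null, "\"Lima, Peru\",\"unit\":\"C\"}"),
                new Fragment(1, "getRainProbability", ""),
                new Fragment(1, null, "{\"location\":\"Lima, Peru\"}")));
        calls.forEach(System.out::println);
    }
}
```

The demo code keeps the same kind of state in its indexTool and functionArgs fields while it consumes the stream.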

You can see the full demo code as well as the results from running the demo code:

package io.github.sashirestela.openai.demo;

import com.fasterxml.jackson.annotation.JsonProperty;
import com.fasterxml.jackson.annotation.JsonPropertyDescription;
import io.github.sashirestela.openai.SimpleOpenAI;
import io.github.sashirestela.openai.common.function.FunctionDef;
import io.github.sashirestela.openai.common.function.FunctionExecutor;
import io.github.sashirestela.openai.common.function.Functional;
import io.github.sashirestela.openai.common.tool.ToolCall;
import io.github.sashirestela.openai.domain.chat.Chat;
import io.github.sashirestela.openai.domain.chat.Chat.Choice;
import io.github.sashirestela.openai.domain.chat.ChatMessage;
import io.github.sashirestela.openai.domain.chat.ChatMessage.AssistantMessage;
import io.github.sashirestela.openai.domain.chat.ChatMessage.ResponseMessage;
import io.github.sashirestela.openai.domain.chat.ChatMessage.ToolMessage;
import io.github.sashirestela.openai.domain.chat.ChatMessage.UserMessage;
import io.github.sashirestela.openai.domain.chat.ChatRequest;

import java.util.ArrayList;
import java.util.List;
import java.util.stream.Stream;

public class ConversationDemo {

    private SimpleOpenAI openAI;
    private FunctionExecutor functionExecutor;

    private int indexTool;
    private StringBuilder content;
    private StringBuilder functionArgs;

    public ConversationDemo() {
        openAI = SimpleOpenAI.builder().apiKey(System.getenv("OPENAI_API_KEY")).build();
    }

    public void prepareConversation() {
        List<FunctionDef> functionList = new ArrayList<>();
        functionList.add(FunctionDef.builder()
                .name("getCurrentTemperature")
                .description("Get the current temperature for a specific location")
                .functionalClass(CurrentTemperature.class)
                .strict(Boolean.TRUE)
                .build());
        functionList.add(FunctionDef.builder()
                .name("getRainProbability")
                .description("Get the probability of rain for a specific location")
                .functionalClass(RainProbability.class)
                .strict(Boolean.TRUE)
                .build());
        functionExecutor = new FunctionExecutor(functionList);
    }

    public void runConversation() {
        List<ChatMessage> messages = new ArrayList<>();
        var myMessage = System.console().readLine("\nWelcome! Write any message: ");
        messages.add(UserMessage.of(myMessage));
        while (!myMessage.toLowerCase().equals("exit")) {
            var chatStream = openAI.chatCompletions()
                    .createStream(ChatRequest.builder()
                            .model("gpt-4o-mini")
                            .messages(messages)
                            .tools(functionExecutor.getToolFunctions())
                            .temperature(0.2)
                            .stream(true)
                            .build())
                    .join();

            // Reset the accumulation state before consuming a new stream.
            indexTool = -1;
            content = new StringBuilder();
            functionArgs = new StringBuilder();

            var response = getResponse(chatStream);

            if (response.getMessage().getContent() != null) {
                messages.add(AssistantMessage.of(response.getMessage().getContent()));
            }
            if (response.getFinishReason().equals("tool_calls")) {
                // Run the requested functions and feed their results back to the model.
                messages.add(response.getMessage());
                var toolCalls = response.getMessage().getToolCalls();
                var toolMessages = functionExecutor.executeAll(toolCalls,
                        (toolCallId, result) -> ToolMessage.of(result, toolCallId));
                messages.addAll(toolMessages);
            } else {
                myMessage = System.console().readLine("\n\nWrite any message (or write 'exit' to finish): ");
                messages.add(UserMessage.of(myMessage));
            }
        }
    }

    // Rebuilds a full Choice (content and tool calls) from the streamed deltas.
    private Choice getResponse(Stream<Chat> chatStream) {
        var choice = new Choice();
        choice.setIndex(0);
        var chatMsgResponse = new ResponseMessage();
        List<ToolCall> toolCalls = new ArrayList<>();

        chatStream.forEach(responseChunk -> {
            var choices = responseChunk.getChoices();
            if (choices.size() > 0) {
                var innerChoice = choices.get(0);
                var delta = innerChoice.getMessage();
                if (delta.getRole() != null) {
                    chatMsgResponse.setRole(delta.getRole());
                }
                if (delta.getContent() != null && !delta.getContent().isEmpty()) {
                    content.append(delta.getContent());
                    System.out.print(delta.getContent());
                }
                if (delta.getToolCalls() != null) {
                    var toolCall = delta.getToolCalls().get(0);
                    if (toolCall.getIndex() != indexTool) {
                        // A new tool call starts: close the arguments of the previous one.
                        if (toolCalls.size() > 0) {
                            toolCalls.get(toolCalls.size() - 1).getFunction().setArguments(functionArgs.toString());
                            functionArgs = new StringBuilder();
                        }
                        toolCalls.add(toolCall);
                        indexTool++;
                    } else {
                        functionArgs.append(toolCall.getFunction().getArguments());
                    }
                }
                if (innerChoice.getFinishReason() != null) {
                    if (content.length() > 0) {
                        chatMsgResponse.setContent(content.toString());
                    }
                    if (toolCalls.size() > 0) {
                        toolCalls.get(toolCalls.size() - 1).getFunction().setArguments(functionArgs.toString());
                        chatMsgResponse.setToolCalls(toolCalls);
                    }
                    choice.setMessage(chatMsgResponse);
                    choice.setFinishReason(innerChoice.getFinishReason());
                }
            }
        });
        return choice;
    }

    public static void main(String[] args) {
        var demo = new ConversationDemo();
        demo.prepareConversation();
        demo.runConversation();
    }

    public static class CurrentTemperature implements Functional {

        @JsonPropertyDescription("The city and state, e.g., San Francisco, CA")
        @JsonProperty(required = true)
        public String location;

        @JsonPropertyDescription("The temperature unit to use. Infer this from the user's location.")
        @JsonProperty(required = true)
        public String unit;

        @Override
        public Object execute() {
            double centigrades = Math.random() * (40.0 - 10.0) + 10.0;
            double fahrenheit = centigrades * 9.0 / 5.0 + 32.0;
            String shortUnit = unit.substring(0, 1).toUpperCase();
            return shortUnit.equals("C") ? centigrades : (shortUnit.equals("F") ? fahrenheit : 0.0);
        }

    }

    public static class RainProbability implements Functional {

        @JsonPropertyDescription("The city and state, e.g., San Francisco, CA")
        @JsonProperty(required = true)
        public String location;

        @Override
        public Object execute() {
            return Math.random() * 100;
        }

    }

}

Welcome! Write any message: Hi, can you help me with some quetions about Lima, Peru?

Of course! What would you like to know about Lima, Peru?

Write any message (or write 'exit' to finish): Tell me something brief about Lima Peru, then tell me how's the weather there right now. Finally give me three tips to travel there.

### Brief About Lima, Peru

Lima, the capital city of Peru, is a bustling metropolis that blends modernity with rich historical heritage. Founded by Spanish conquistador Francisco Pizarro in 1535, Lima is known for its colonial architecture, vibrant culture, and delicious cuisine, particularly its world-renowned ceviche. The city is also a gateway to exploring Peru's diverse landscapes, from the coastal deserts to the Andean highlands and the Amazon rainforest.

### Current Weather in Lima, Peru

I'll check the current temperature and the probability of rain in Lima for you.

### Current Weather in Lima, Peru

- **Temperature:** Approximately 11.8°C
- **Probability of Rain:** Approximately 97.8%

### Three Tips for Traveling to Lima, Peru

1. **Explore the Historic Center:**
   - Visit the Plaza Mayor, the Government Palace, and the Cathedral of Lima. These landmarks offer a glimpse into Lima's colonial past and are UNESCO World Heritage Sites.

2. **Savor the Local Cuisine:**
   - Don't miss out on trying ceviche, a traditional Peruvian dish made from fresh raw fish marinated in citrus juices. Also, explore the local markets and try other Peruvian delicacies.

3. **Visit the Coastal Districts:**
   - Head to Miraflores and Barranco for stunning ocean views, vibrant nightlife, and cultural experiences. These districts are known for their beautiful parks, cliffs, and bohemian atmosphere.

Enjoy your trip to Lima! If you have any more questions, feel free to ask.

Write any message (or write 'exit' to finish): exit

This example simulates a conversation chat in the command console and demonstrates the usage of the latest Assistants API v2 features.
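The Assistants demo reacts to a stream of named events (run created, message delta, run completed, and so on). As a library-free illustration of that dispatch pattern, here is a minimal sketch. The Event class is hypothetical and carries plain strings; the event names are those of the OpenAI Assistants streaming API, whereas the real demo uses the EventName constants and typed payloads such as ThreadRun and ThreadMessageDelta.

```java
import java.util.List;

// Hypothetical sketch of dispatching streamed run events by name.
final class RunEventDispatchSketch {

    // Simplified event: a name plus a string payload (assumption for brevity).
    static final class Event {
        final String name;
        final String data;

        Event(String name, String data) {
            this.name = name;
            this.data = data;
        }
    }

    // Renders the events roughly the way the demo prints them to the console.
    static String handle(List<Event> events) {
        var out = new StringBuilder();
        for (var event : events) {
            switch (event.name) {
                case "thread.run.created":
                case "thread.run.completed":
                    // Run lifecycle events: report the status.
                    out.append("[run ").append(event.data).append("]\n");
                    break;
                case "thread.message.delta":
                    // Partial text of the assistant's answer.
                    out.append(event.data);
                    break;
                case "thread.message.completed":
                    out.append("\n");
                    break;
                default:
                    // Ignore events this sketch does not care about.
                    break;
            }
        }
        return out.toString();
    }

    public static void main(String[] argv) {
        System.out.print(handle(List.of(
                new Event("thread.run.created", "queued"),
                new Event("thread.message.delta", "Hel"),
                new Event("thread.message.delta", "lo"),
                new Event("thread.message.completed", ""),
                new Event("thread.run.completed", "completed"))));
    }
}
```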

You can see the full demo code as well as the results from running the demo code:

package io.github.sashirestela.openai.demo;

import com.fasterxml.jackson.annotation.JsonProperty;
import com.fasterxml.jackson.annotation.JsonPropertyDescription;
import io.github.sashirestela.cleverclient.Event;
import io.github.sashirestela.openai.SimpleOpenAI;
import io.github.sashirestela.openai.common.content.ContentPart.ContentPartTextAnnotation;
import io.github.sashirestela.openai.common.function.FunctionDef;
import io.github.sashirestela.openai.common.function.FunctionExecutor;
import io.github.sashirestela.openai.common.function.Functional;
import io.github.sashirestela.openai.domain.assistant.AssistantRequest;
import io.github.sashirestela.openai.domain.assistant.AssistantTool;
import io.github.sashirestela.openai.domain.assistant.ThreadMessageDelta;
import io.github.sashirestela.openai.domain.assistant.ThreadMessageRequest;
import io.github.sashirestela.openai.domain.assistant.ThreadMessageRole;
import io.github.sashirestela.openai.domain.assistant.ThreadRequest;
import io.github.sashirestela.openai.domain.assistant.ThreadRun;
import io.github.sashirestela.openai.domain.assistant.ThreadRun.RunStatus;
import io.github.sashirestela.openai.domain.assistant.ThreadRunRequest;
import io.github.sashirestela.openai.domain.assistant.ThreadRunSubmitOutputRequest;
import io.github.sashirestela.openai.domain.assistant.ThreadRunSubmitOutputRequest.ToolOutput;
import io.github.sashirestela.openai.domain.assistant.ToolResourceFull;
import io.github.sashirestela.openai.domain.assistant.ToolResourceFull.FileSearch;
import io.github.sashirestela.openai.domain.assistant.VectorStoreRequest;
import io.github.sashirestela.openai.domain.assistant.events.EventName;
import io.github.sashirestela.openai.domain.file.FileRequest;
import io.github.sashirestela.openai.domain.file.FileRequest.PurposeType;

import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Stream;

public class ConversationV2Demo {

    private SimpleOpenAI openAI;
    private String fileId;
    private String vectorStoreId;
    private FunctionExecutor functionExecutor;
    private String assistantId;
    private String threadId;

    public ConversationV2Demo() {
        openAI = SimpleOpenAI.builder().apiKey(System.getenv("OPENAI_API_KEY")).build();
    }

    public void prepareConversation() {
        List<FunctionDef> functionList = new ArrayList<>();
        functionList.add(FunctionDef.builder()
                .name("getCurrentTemperature")
                .description("Get the current temperature for a specific location")
                .functionalClass(CurrentTemperature.class)
                .strict(Boolean.TRUE)
                .build());
        functionList.add(FunctionDef.builder()
                .name("getRainProbability")
                .description("Get the probability of rain for a specific location")
                .functionalClass(RainProbability.class)
                .strict(Boolean.TRUE)
                .build());
        functionExecutor = new FunctionExecutor(functionList);

        // Upload the file that the file search tool will use.
        var file = openAI.files()
                .create(FileRequest.builder()
                        .file(Paths.get("src/demo/resources/mistral-ai.txt"))
                        .purpose(PurposeType.ASSISTANTS)
                        .build())
                .join();
        fileId = file.getId();
        System.out.println("File was created with id: " + fileId);

        // createAndPoll is synchronous, so no join() is needed here.
        var vectorStore = openAI.vectorStores()
                .createAndPoll(VectorStoreRequest.builder()
                        .fileId(fileId)
                        .build());
        vectorStoreId = vectorStore.getId();
        System.out.println("Vector Store was created with id: " + vectorStoreId);

        var assistant = openAI.assistants()
                .create(AssistantRequest.builder()
                        .name("World Assistant")
                        .model("gpt-4o")
                        .instructions("You are a skilled tutor on geo-politic topics.")
                        .tools(functionExecutor.getToolFunctions())
                        .tool(AssistantTool.fileSearch())
                        .toolResources(ToolResourceFull.builder()
                                .fileSearch(FileSearch.builder()
                                        .vectorStoreId(vectorStoreId)
                                        .build())
                                .build())
                        .temperature(0.2)
                        .build())
                .join();
        assistantId = assistant.getId();
        System.out.println("Assistant was created with id: " + assistantId);

        var thread = openAI.threads().create(ThreadRequest.builder().build()).join();
        threadId = thread.getId();
        System.out.println("Thread was created with id: " + threadId);
        System.out.println();
    }

    public void runConversation() {
        var myMessage = System.console().readLine("\nWelcome! Write any message: ");
        while (!myMessage.toLowerCase().equals("exit")) {
            openAI.threadMessages()
                    .create(threadId, ThreadMessageRequest.builder()
                            .role(ThreadMessageRole.USER)
                            .content(myMessage)
                            .build())
                    .join();
            var runStream = openAI.threadRuns()
                    .createStream(threadId, ThreadRunRequest.builder()
                            .assistantId(assistantId)
                            .parallelToolCalls(Boolean.FALSE)
                            .build())
                    .join();
            handleRunEvents(runStream);
            myMessage = System.console().readLine("\nWrite any message (or write 'exit' to finish): ");
        }
    }

    private void handleRunEvents(Stream<Event> runStream) {
        runStream.forEach(event -> {
            switch (event.getName()) {
                case EventName.THREAD_RUN_CREATED:
                case EventName.THREAD_RUN_COMPLETED:
                case EventName.THREAD_RUN_REQUIRES_ACTION:
                    var run = (ThreadRun) event.getData();
                    System.out.println("=====>> Thread Run: id=" + run.getId() + ", status=" + run.getStatus());
                    if (run.getStatus().equals(RunStatus.REQUIRES_ACTION)) {
                        // Execute the requested functions and submit their outputs.
                        var toolCalls = run.getRequiredAction().getSubmitToolOutputs().getToolCalls();
                        var toolOutputs = functionExecutor.executeAll(toolCalls,
                                (toolCallId, result) -> ToolOutput.builder()
                                        .toolCallId(toolCallId)
                                        .output(result)
                                        .build());
                        var runSubmitToolStream = openAI.threadRuns()
                                .submitToolOutputStream(threadId, run.getId(), ThreadRunSubmitOutputRequest.builder()
                                        .toolOutputs(toolOutputs)
                                        .stream(true)
                                        .build())
                                .join();
                        handleRunEvents(runSubmitToolStream);
                    }
                    break;
                case EventName.THREAD_MESSAGE_DELTA:
                    var msgDelta = (ThreadMessageDelta) event.getData();
                    var content = msgDelta.getDelta().getContent().get(0);
                    if (content instanceof ContentPartTextAnnotation) {
                        var textContent = (ContentPartTextAnnotation) content;
                        System.out.print(textContent.getText().getValue());
                    }
                    break;
                case EventName.THREAD_MESSAGE_COMPLETED:
                    System.out.println();
                    break;
                default:
                    break;
            }
        });
    }

    public void cleanConversation() {
        var deletedFile = openAI.files().delete(fileId).join();
        var deletedVectorStore = openAI.vectorStores().delete(vectorStoreId).join();
        var deletedAssistant = openAI.assistants().delete(assistantId).join();
        var deletedThread = openAI.threads().delete(threadId).join();

        System.out.println("File was deleted: " + deletedFile.getDeleted());
        System.out.println("Vector Store was deleted: " + deletedVectorStore.getDeleted());
        System.out.println("Assistant was deleted: " + deletedAssistant.getDeleted());
        System.out.println("Thread was deleted: " + deletedThread.getDeleted());
    }

    public static void main(String[] args) {
        var demo = new ConversationV2Demo();
        demo.prepareConversation();
        demo.runConversation();
        demo.cleanConversation();
    }

    public static class CurrentTemperature implements Functional {

        @JsonPropertyDescription("The city and state, e.g., San Francisco, CA")
        @JsonProperty(required = true)
        public String location;

        @JsonPropertyDescription("The temperature unit to use. Infer this from the user's location.")
        @JsonProperty(required = true)
        public String unit;

        @Override
        public Object execute() {
            double centigrades = Math.random() * (40.0 - 10.0) + 10.0;
            double fahrenheit = centigrades * 9.0 / 5.0 + 32.0;
            String shortUnit = unit.substring(0, 1).toUpperCase();
            return shortUnit.equals("C") ? centigrades : (shortUnit.equals("F") ? fahrenheit : 0.0);
        }

    }

    public static class RainProbability implements Functional {

        @JsonPropertyDescription("The city and state, e.g., San Francisco, CA")
        @JsonProperty(required = true)
        public String location;

        @Override
        public Object execute() {
            return Math.random() * 100;
        }

    }

}

File was created with id: file-oDFIF7o4SwuhpwBNnFIILhMK

Vector Store was created with id: vs_lG1oJmF2s5wLhqHUSeJpELMr

Assistant was created with id: asst_TYS5cZ05697tyn3yuhDrCCIv

Thread was created with id: thread_33n258gFVhZVIp88sQKuqMvg

Welcome! Write any message: Hello

=====>> Thread Run: id=run_nihN6dY0uyudsORg4xyUvQ5l, status=QUEUED

Hello! How can I assist you today?

=====>> Thread Run: id=run_nihN6dY0uyudsORg4xyUvQ5l, status=COMPLETED

Write any message (or write 'exit' to finish): Tell me something brief about Lima Peru, then tell me how's the weather there right now. Finally give me three tips to travel there.

=====>> Thread Run: id=run_QheimPyP5UK6FtmH5obon0fB, status=QUEUED

Lima, the capital city of Peru, is located on the country's arid Pacific coast. It's known for its vibrant culinary scene, rich history, and as a cultural hub with numerous museums, colonial architecture, and remnants of pre-Columbian civilizations. This bustling metropolis serves as a key gateway to visiting Peru’s more famous attractions, such as Machu Picchu and the Amazon rainforest.

Let me find the current weather conditions in Lima for you, and then I'll provide three travel tips.

=====>> Thread Run: id=run_QheimPyP5UK6FtmH5obon0fB, status=REQUIRES_ACTION

### Current Weather in Lima, Peru:

- **Temperature:** 12.8°C
- **Rain Probability:** 82.7%

### Three Travel Tips for Lima, Peru:

1. **Best Time to Visit:** Plan your trip during the dry season, from May to September, which offers clearer skies and milder temperatures. This period is particularly suitable for outdoor activities and exploring the city comfortably.

2. **Local Cuisine:** Don't miss out on tasting the local Peruvian dishes, particularly the ceviche, which is renowned worldwide. Lima is also known as the gastronomic capital of South America, so indulge in the wide variety of dishes available.

3. **Cultural Attractions:** Allocate enough time to visit Lima's rich array of museums, such as the Larco Museum, which showcases pre-Columbian art, and the historical center which is a UNESCO World Heritage Site. Moreover, exploring districts like Miraflores and Barranco can provide insights into the modern and bohemian sides of the city.

Enjoy planning your trip to Lima! If you need more information or help, feel free to ask.

=====>> Thread Run: id=run_QheimPyP5UK6FtmH5obon0fB, status=COMPLETED

Write any message (or write 'exit' to finish): Tell me something about the Mistral company

=====>> Thread Run: id=run_5u0t8kDQy87p5ouaTRXsCG8m, status=QUEUED

Mistral AI is a French company that specializes in selling artificial intelligence products. It was established in April 2023 by former employees of Meta Platforms and Google DeepMind. Notably, the company secured a significant amount of funding, raising €385 million in October 2023, and achieved a valuation exceeding $2 billion by December of the same year.

The prime focus of Mistral AI is on developing and producing open-source large language models. This approach underscores the foundational role of open-source software as a counter to proprietary models. As of March 2024, Mistral AI has published two models, which are available in terms of weights, while three more models—categorized as Small, Medium, and Large—are accessible only through an API[1].

=====>> Thread Run: id=run_5u0t8kDQy87p5ouaTRXsCG8m, status=COMPLETED

Write any message (or write 'exit' to finish): exit

File was deleted: true

Vector Store was deleted: true

Assistant was deleted: true

Thread was deleted: true

In this example you can see the code that establishes a speech-to-speech conversation between you and the model, using your microphone and your speaker. See the full code in:

RealTimeDemo.java

Simple-OpenAI can be used with additional providers that are compatible with the OpenAI API. At this moment, the following additional providers are supported:

Azure OpenAI is supported by Simple-OpenAI. To start using this provider, use the class SimpleOpenAIAzure, which extends the class BaseSimpleOpenAI.

var openai = SimpleOpenAIAzure.builder()
    .apiKey(System.getenv("AZURE_OPENAI_API_KEY"))
    .baseUrl(System.getenv("AZURE_OPENAI_BASE_URL"))   // Including resourceName and deploymentId
    .apiVersion(System.getenv("AZURE_OPENAI_API_VERSION"))
    //.httpClient(customHttpClient)   Optionally you could pass a custom HttpClient
    .build();

Azure OpenAI is powered by a diverse set of models with different capabilities, and it requires a separate deployment for each model. Model availability varies by region and cloud. See more details about Azure OpenAI models.
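Because each model needs its own deployment, each deployment ends up with its own base URL. The following is a minimal sketch, assuming the URL pattern https://YOUR_RESOURCE_NAME.openai.azure.com/openai/deployments/YOUR_DEPLOYMENT_NAME that this README uses for AZURE_OPENAI_BASE_URL. The class AzureDeploymentUrls, its methods, and the resource and deployment names are hypothetical and are not part of Simple-OpenAI.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper, not part of Simple-OpenAI.
final class AzureDeploymentUrls {

    // Composes a base URL following the assumed pattern
    // https://RESOURCE.openai.azure.com/openai/deployments/DEPLOYMENT
    static String baseUrl(String resourceName, String deploymentName) {
        if (resourceName == null || resourceName.isBlank()
                || deploymentName == null || deploymentName.isBlank()) {
            throw new IllegalArgumentException("resourceName and deploymentName are required");
        }
        return "https://" + resourceName.trim() + ".openai.azure.com"
                + "/openai/deployments/" + deploymentName.trim();
    }

    // Builds one base URL per deployment, keeping the order of the input list.
    static Map<String, String> baseUrls(String resourceName, List<String> deploymentNames) {
        Map<String, String> urls = new LinkedHashMap<>();
        for (String deploymentName : deploymentNames) {
            urls.put(deploymentName, baseUrl(resourceName, deploymentName));
        }
        return urls;
    }

    public static void main(String[] args) {
        // Placeholder names; replace them with your own resource and deployments.
        baseUrls("my-resource", List.of("chat-deployment", "vision-deployment"))
                .forEach((name, url) -> System.out.println(name + " -> " + url));
    }
}
```

Each resulting URL could then be passed to baseUrl(...) on a separate SimpleOpenAIAzure builder, one client per deployment.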

Currently we support only the following services:

- chatCompletionService (text generation, streaming, function calling, vision, structured outputs)
- fileService (file upload)

Anyscale is supported by Simple-OpenAI. To start using this provider, use the class SimpleOpenAIAnyscale, which extends the class BaseSimpleOpenAI.

var openai = SimpleOpenAIAnyscale.builder()
    .apiKey(System.getenv("ANYSCALE_API_KEY"))
    //.baseUrl(customUrl)             Optionally you could pass a custom baseUrl
    //.httpClient(customHttpClient)   Optionally you could pass a custom HttpClient
    .build();

Currently we support only the chatCompletionService service. It was tested with the Mistral model.

Examples for each OpenAI service have been created in the demo folder, and you can follow these steps to run them:

Clone this repository:

git clone https://github.com/sashirestela/simple-openai.git

cd simple-openai

Build the project:

mvn clean install

Create an environment variable for your OpenAI API key:

export OPENAI_API_KEY=<here goes your api key>

Grant execution permission to the script file:

chmod +x rundemo.sh

Run the examples:

./rundemo.sh <demo> [debug]

Where:

<demo> is mandatory and must be one of the accepted values.

[debug] is optional and creates the demo.log file, where you can see log details for each execution.

For example, to run the chat demo with a log file: ./rundemo.sh Chat debug

Indications for Azure OpenAI demos

The recommended models to run this demo are:

See the Azure OpenAI docs for more details: Azure OpenAI documentation. Once you have the deployment URL and the API key, set the following environment variables:

export AZURE_OPENAI_BASE_URL=<https://YOUR_RESOURCE_NAME.openai.azure.com/openai/deployments/YOUR_DEPLOYMENT_NAME>

export AZURE_OPENAI_API_KEY=<here goes your regional API key>

export AZURE_OPENAI_API_VERSION=<for example: 2024-08-01-preview>

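Note that some models may not be available in all regions. If you have trouble finding a model, try a different region. The API keys are regional (per cognitive account). If you provision multiple models in the same region, they will share the same API key (actually, there are two keys per region to support alternate key rotation).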
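Before running an Azure demo, it can help to confirm that the three variables above are actually set. The following is a minimal sketch of such a check, assuming only the variable names shown in this section. The class AzureEnvCheck and its method are hypothetical and are not part of Simple-OpenAI or of the rundemo.sh script.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical pre-flight check, not part of Simple-OpenAI.
final class AzureEnvCheck {

    // Variable names taken from the export commands in this section.
    static final List<String> REQUIRED = List.of(
            "AZURE_OPENAI_BASE_URL",
            "AZURE_OPENAI_API_KEY",
            "AZURE_OPENAI_API_VERSION");

    // Returns the required variables that are absent or blank in the given environment.
    static List<String> missing(Map<String, String> env) {
        List<String> missing = new ArrayList<>();
        for (String name : REQUIRED) {
            String value = env.get(name);
            if (value == null || value.isBlank()) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        List<String> missing = missing(System.getenv());
        if (missing.isEmpty()) {
            System.out.println("All Azure OpenAI variables are set.");
        } else {
            System.out.println("Missing environment variables: " + String.join(", ", missing));
        }
    }
}
```

Passing the environment as a Map keeps the check easy to exercise without touching the real process environment.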

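Kindly read our Contributing guide to learn and understand how to contribute to this project.

Simple-OpenAI is licensed under the MIT License. See the LICENSE file for more information.

List of the main users of our library:

Thanks for using Simple-OpenAI. If you find this project valuable, there are a few ways you can show us your love, preferably all of them: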